Brahms: Byzantine-Resilient Random Membership Sampling

Bortnikov, Gurevich, Keidar, Kliot, and Shraer. April 2008

Edward (Eddie) Bortnikov, Maxim (Max) Gurevich, Idit Keidar, Gabriel (Gabi) Kliot, Alexander (Alex) Shraer

Why Random Node Sampling
- Gossip partners
  - Random choices make gossip protocols work
- Unstructured overlay networks
  - E.g., among super-peers
  - Random links provide robustness, expansion
- Gathering statistics
  - Probe random nodes
- Choosing cache locations

The Setting
- Many nodes: n
  - 10,000s, 100,000s, 1,000,000s, …
- Come and go
  - Churn
- Full network
  - Like the Internet
- Every joining node knows some others
  - (Initial) connectivity

Adversary Attacks
- Faulty nodes (portion f of ids)
  - Attack other nodes
  - Byzantine failures
- May want to bias samples
  - Isolate nodes, DoS nodes
  - Promote themselves, bias statistics

Previous Work
- Benign gossip membership
  - Small (logarithmic) views
  - Robust to churn and benign failures
  - Empirical study [Lpbcast, Scamp, Cyclon, PSS]
  - Analytical study [Allavena et al.]
  - Never proven uniform samples
  - Spatial correlation among neighbors' views [PSS]
- Byzantine-resilient gossip
  - Full views [MMR, MS, Fireflies, Drum, BAR]
  - Small views, some resilience [SPSS]
  - We are not aware of any analytical work

Our Contributions
1. Gossip-based attack-tolerant membership
  - Linear portion f of failures
  - $O(n^{1/3})$-size partial views
  - Correct nodes remain connected
  - Mathematically analyzed, validated in simulations
2. Random sampling gossip
  - Novel memory-efficient approach
  - Converges to proven independent uniform samples

Callouts: "The view is not all bad"; "Better than benign"

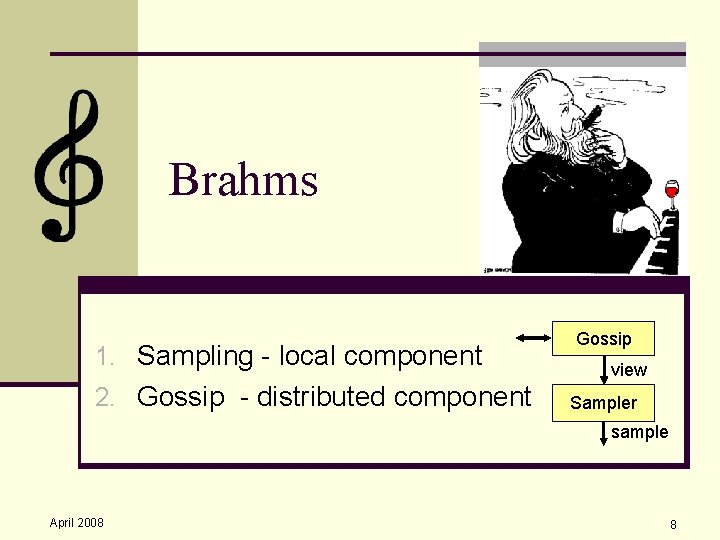

Brahms
1. Sampling: local component
2. Gossip: distributed component

Diagram labels: Gossip, view, Sampler, sample

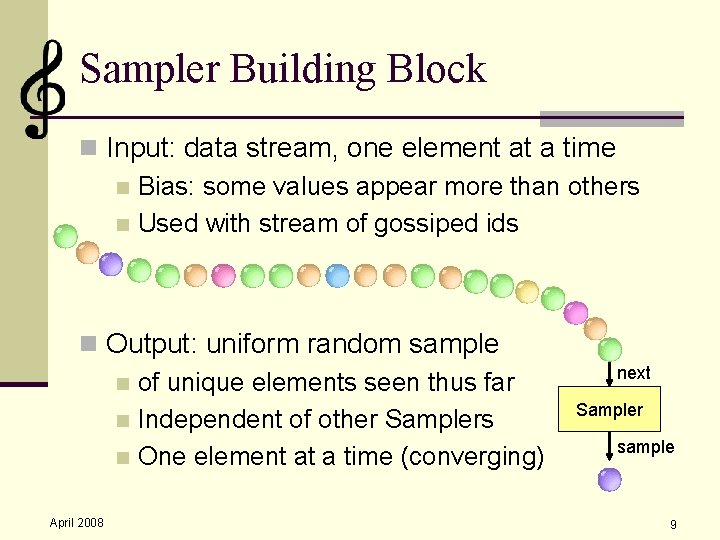

Sampler Building Block
- Input: data stream, one element at a time
  - Bias: some values appear more than others
  - Used with stream of gossiped ids
- Output: uniform random sample
  - of unique elements seen thus far
  - Independent of other Samplers
  - One element at a time (converging)

Diagram labels: next, Sampler, sample

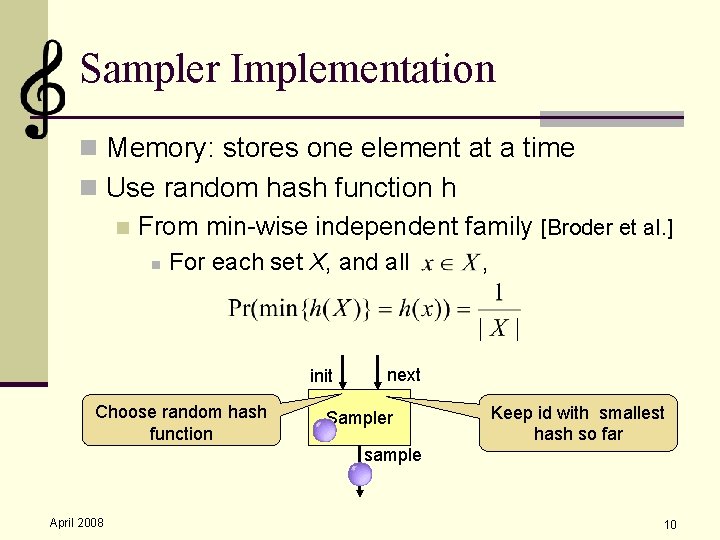

Sampler Implementation
- Memory: stores one element at a time
- Use random hash function h
  - From min-wise independent family [Broder et al.]
  - For each set X, and all $x \in X$: $\Pr[\min h(X) = h(x)] = 1/|X|$
- init: choose random hash function
- next: keep id with smallest hash so far
- sample: output of the Sampler
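The init / next / sample interface above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: a keyed BLAKE2b hash with a random key stands in for a function drawn from a min-wise independent family (an assumption; it only approximates that property), and the class and attribute names are hypothetical.

```python
import hashlib
import os


class Sampler:
    """Keeps the id with the smallest hash value seen so far."""

    def __init__(self):
        self.init()

    def init(self):
        # Choosing a fresh random key plays the role of choosing a random
        # hash function h (assumption: keyed BLAKE2b as a stand-in for a
        # min-wise independent family).
        self._salt = os.urandom(16)
        self._best_id = None
        self._best_hash = None

    def _h(self, node_id):
        return hashlib.blake2b(
            node_id.encode(), key=self._salt, digest_size=16
        ).digest()

    def next(self, node_id):
        # Store a single element: replace it only if the new id hashes lower.
        hv = self._h(node_id)
        if self._best_hash is None or hv < self._best_hash:
            self._best_id, self._best_hash = node_id, hv

    def sample(self):
        return self._best_id
```

Because the kept id depends only on the set of ids seen, not on how often or in what order each one appeared, repeating an id in the stream cannot increase its chance of being the sample.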

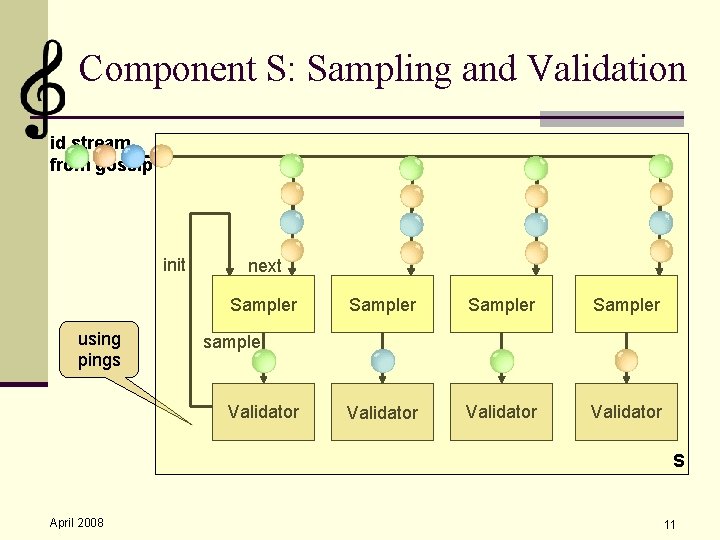

Component S: Sampling and Validation

Diagram: the id stream from gossip feeds each Sampler (init, next); a Validator checks each Sampler's sample using pings; the Sampler and Validator pairs together form S.

Gossip Process
- Provides the stream of ids for S
- Needs to ensure connectivity
  - Use a bag of tricks to overcome attacks

Gossip-Based Membership Primer
- Small (sub-linear) local view V
- V constantly changes: essential due to churn
- Typically, evolves in (unsynchronized) rounds
- Push: send my id to some node in V
  - Reinforce underrepresented nodes
- Pull: retrieve view from some node in V
  - Spread knowledge within the network
- [Allavena et al. '05]: both are essential
  - Low probability for partitions and star topologies

Brahms Gossip Rounds
- Each round:
  - Send pushes, pulls to random nodes from V
  - Wait to receive pulls, pushes
  - Update S with all received ids
  - (Sometimes) re-compute V
    - Tricky! Beware of adversary attacks

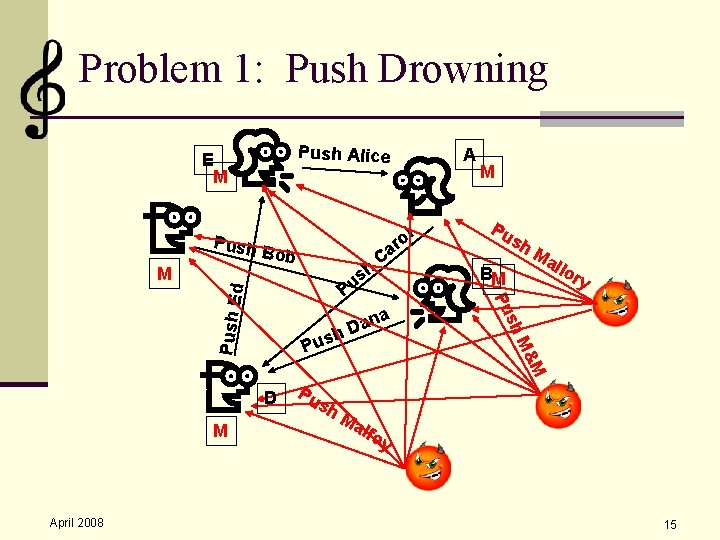

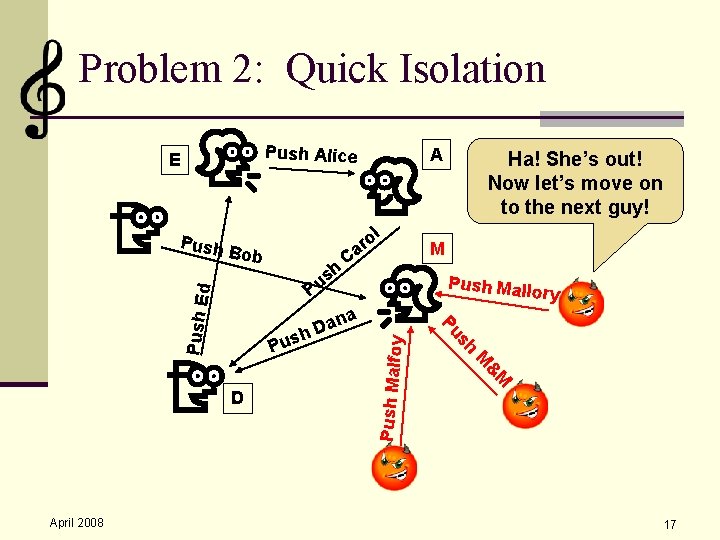

Problem 1: Push Drowning

Diagram: correct nodes (Alice, Bob, Carol, Dana, Ed) exchange pushes while faulty nodes (Mallory, M&M, Malfoy) flood them with pushes.

Trick 1: Rate-Limit Pushes
- Use limited messages to bound faulty pushes system-wide
  - Assume at most p of pushes are faulty
  - E.g., computational puzzles/virtual currency
- Faulty nodes can send portion p of them
- Views won't be all bad

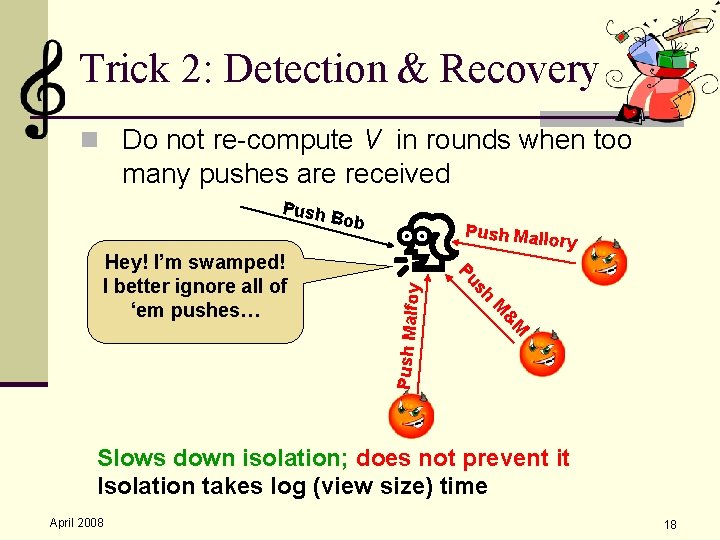

Problem 2: Quick Isolation

Diagram: the faulty nodes (Mallory, M&M, Malfoy) direct their pushes at one correct node. Speech bubble: "Ha! She's out! Now let's move on to the next guy!"

Trick 2: Detection & Recovery
- Do not re-compute V in rounds when too many pushes are received
- Slows down isolation; does not prevent it
- Isolation takes log (view size) time

Diagram: Bob receives a flood of pushes from Mallory, M&M, and Malfoy. Speech bubble: "Hey! I'm swamped! I better ignore all of 'em pushes…"

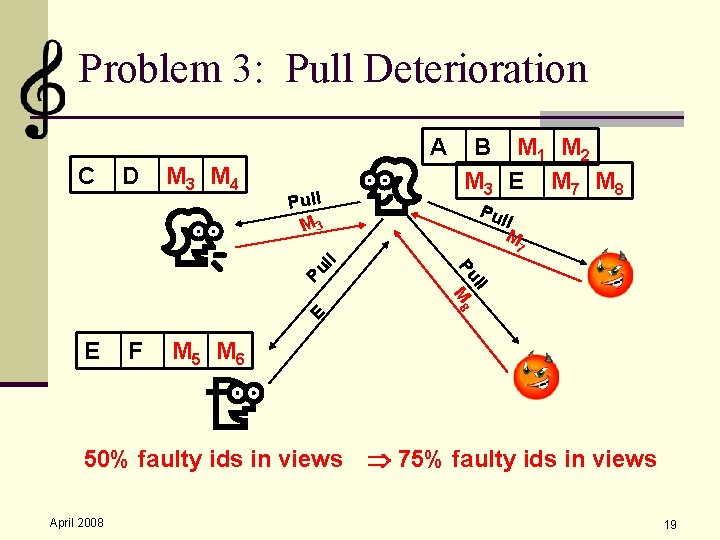

Problem 3: Pull Deterioration

Diagram: views hold a mix of correct ids (A to F) and faulty ids (M1 to M8); as nodes pull from one another, the share of faulty ids in views grows from 50% to 75%.

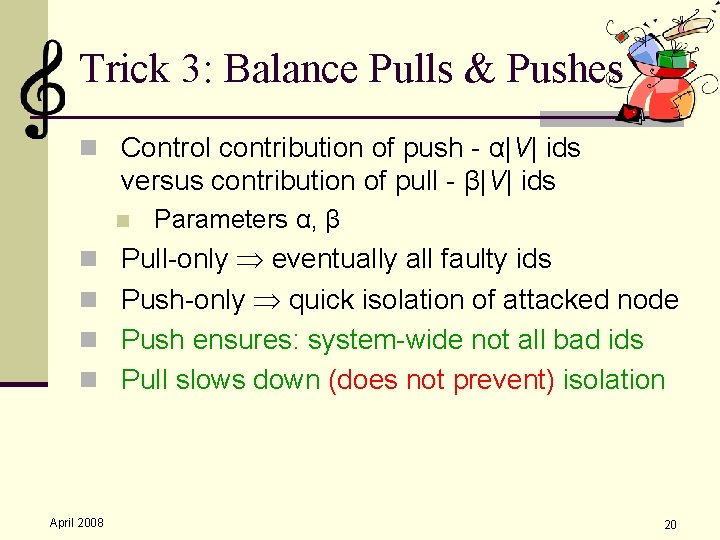

Trick 3: Balance Pulls & Pushes
- Control contribution of push (α|V| ids) versus contribution of pull (β|V| ids)
  - Parameters α, β
- Pull-only: eventually all faulty ids
- Push-only: quick isolation of attacked node
- Push ensures: system-wide not all bad ids
- Pull slows down (does not prevent) isolation

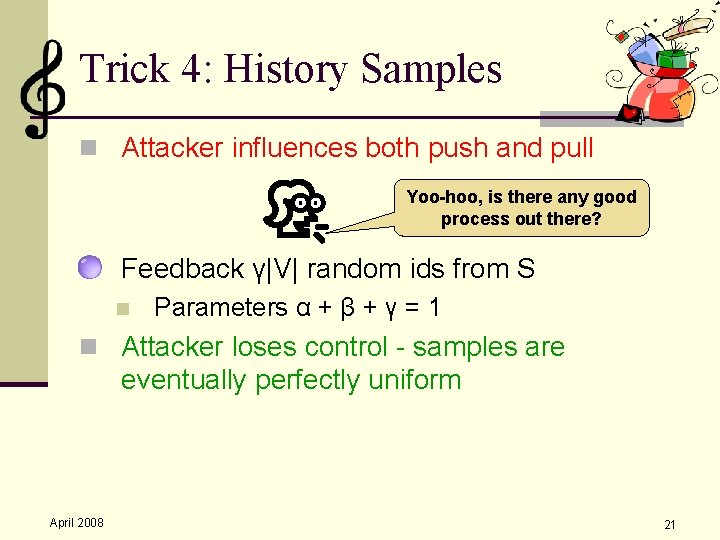

Trick 4: History Samples
- Attacker influences both push and pull
- Feedback γ|V| random ids from S
  - Parameters α + β + γ = 1
- Attacker loses control: samples are eventually perfectly uniform

Speech bubble: "Yoo-hoo, is there any good process out there?"

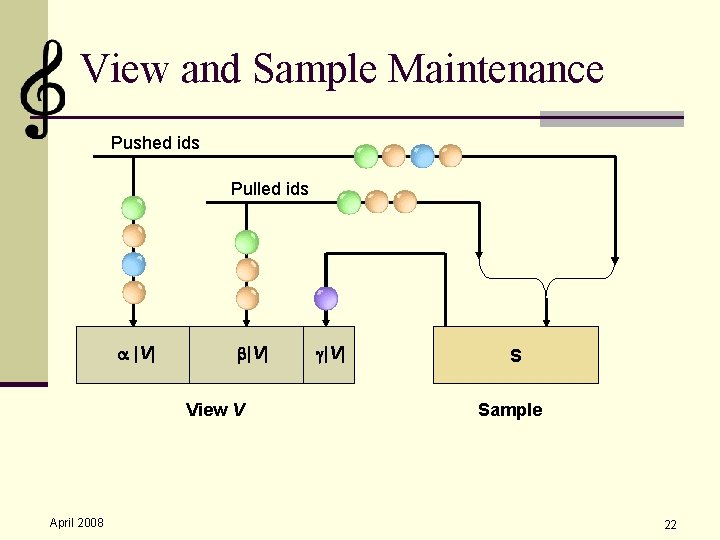

View and Sample Maintenance

Diagram: pushed ids (α|V|) and pulled ids (β|V|) feed the view V; ids from the sample S (γ|V|) are fed back into V; pushed and pulled ids also update S.
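The view maintenance described by Tricks 2 to 4 can be sketched as follows. This is a hedged illustration under stated assumptions: the function name `update_view`, the rounding of each quota, and the exact flood threshold (more than α|V| received pushes) are choices made here for the example and are not given on the slides.

```python
import random


def update_view(view, pushed, pulled, history, alpha, beta, gamma, rng=random):
    """Return the next view, mixing pushed, pulled, and history ids.

    view, pushed, pulled, history are lists of ids; alpha + beta + gamma = 1.
    """
    size = len(view)

    # Trick 2: keep the old view in rounds with too many pushes.
    # Assumption: "too many" means more than alpha * |V|; a round with no
    # pushes or no pulls is also skipped.
    if len(pushed) > alpha * size or not pushed or not pulled:
        return list(view)

    def pick(ids, quota):
        # Uniform choice without replacement, capped by what is available.
        return rng.sample(ids, min(quota, len(ids)))

    # Tricks 3 and 4: bounded contributions from push, pull, and history.
    return (
        pick(pushed, round(alpha * size))
        + pick(pulled, round(beta * size))
        + pick(history, round(gamma * size))
    )
```

For example, with |V| = 20, α = β = 0.45 and γ = 0.1, a round contributes at most 9 pushed ids, 9 pulled ids, and 2 history ids, while a round in which 50 pushes arrive leaves the view unchanged.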

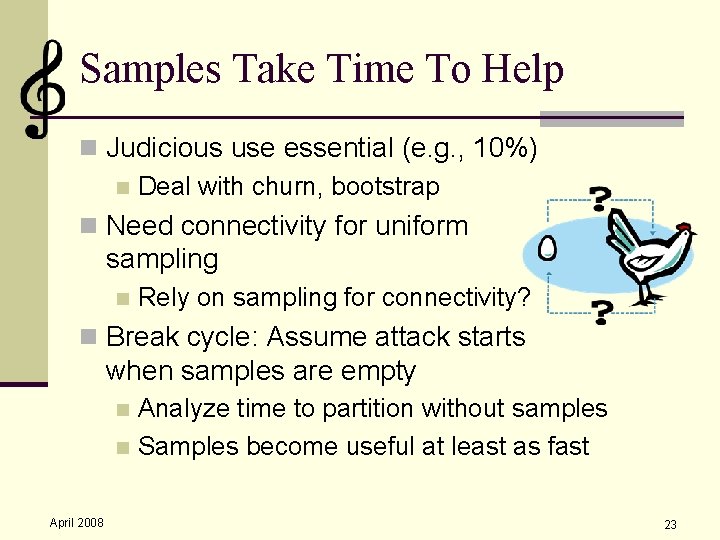

Samples Take Time To Help
- Judicious use essential (e.g., 10%)
  - Deal with churn, bootstrap
- Need connectivity for uniform sampling
  - Rely on sampling for connectivity?
- Break cycle:
  - Assume attack starts when samples are empty
  - Analyze time to partition without samples
  - Samples become useful at least as fast

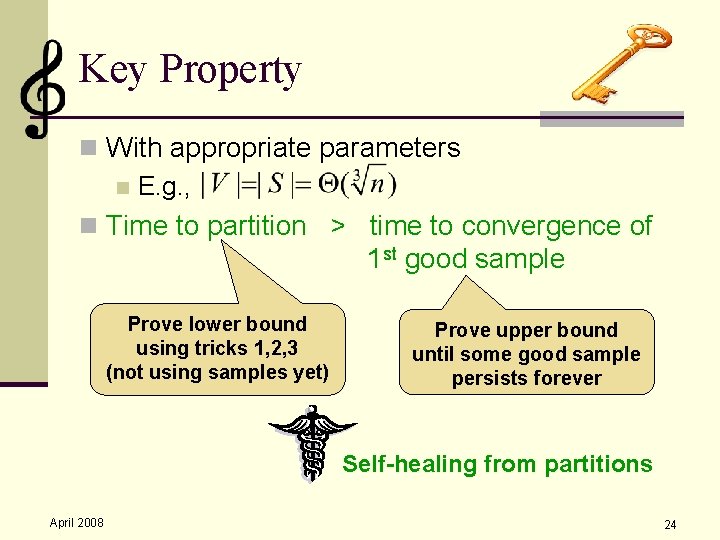

Key Property
- With appropriate parameters
  - E.g., …
- Time to partition > time to convergence of 1st good sample
  - Prove lower bound using tricks 1, 2, 3 (not using samples yet)
  - Prove upper bound until some good sample persists forever
- Self-healing from partitions

Time to Partition Analysis
- Easiest partition to cause: isolate one node
  - Targeted attack
- Analysis of targeted attack:
  - Assume unrealistically strong adversary
  - Analyze evolution of faulty ids in views as a random process
  - Show lower bound on time to isolation
    - Depends on p, |V|, α, β
    - Independent of n

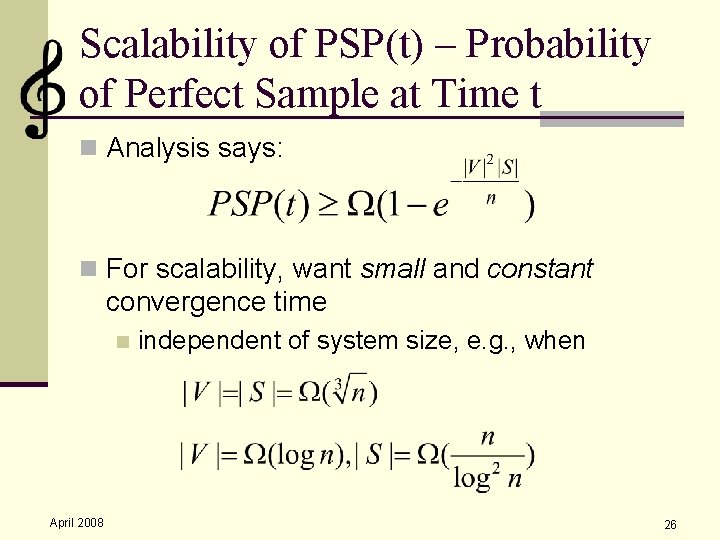

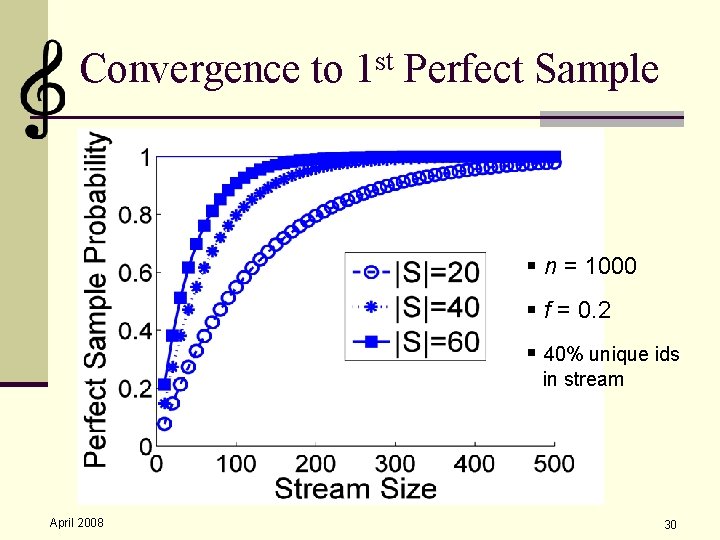

Scalability of PSP(t): Probability of Perfect Sample at Time t
- Analysis says: …
- For scalability, want small and constant convergence time
  - independent of system size, e.g., when …

Analysis
1. Sampling: mathematical analysis
2. Connectivity: analysis and simulation
3. Full system simulation

Connectivity ⇒ Sampling
- Theorem: If the overlay remains connected indefinitely, samples are eventually uniform

Sampling ⇒ Connectivity Ever After
- Perfect sample of a sampler with hash h: the id with the lowest h(id) system-wide
  - If correct, sticks once the sampler sees it
- Correct perfect sample ⇒ self-healing from partitions ever after

Convergence to 1st Perfect Sample
- n = 1000
- f = 0.2
- 40% unique ids in stream

Connectivity Analysis 1: Balanced Attacks
- Attack all nodes the same
- Maximizes faulty ids in views system-wide
  - in any single round
- If repeated, system converges to a fixed-point ratio of faulty ids in views, which is < 1 if
  - γ = 0 (no history) and p < 1/3, or
  - History samples are used, any p
- There are always good ids in views!

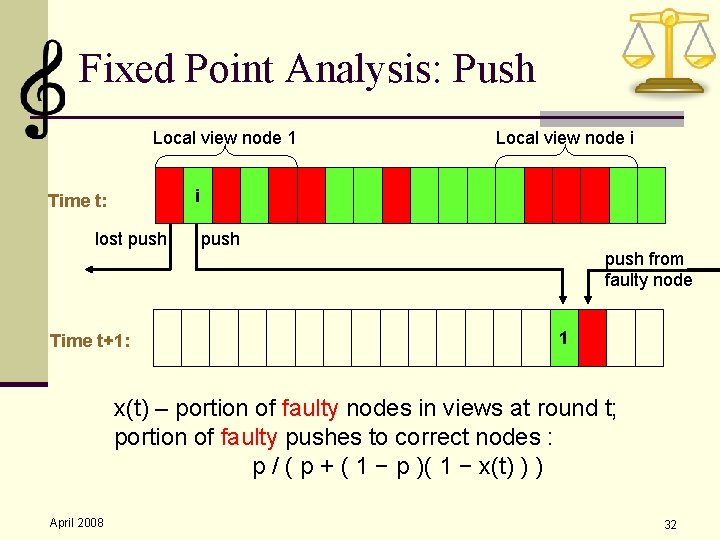

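Fixed Point Analysis: Push

Diagram: local views of node 1 and node i. Time t: a push sent to a faulty id is lost. Time t+1: push from a faulty node arrives.
- x(t): portion of faulty nodes in views at round t
- A correct node's push is lost when it targets a faulty id, which happens with probability x(t), so only a (1 − p)(1 − x(t)) share of pushes are correct pushes that reach correct nodes
- Portion of faulty pushes to correct nodes: $\frac{p}{p + (1-p)(1-x(t))}$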

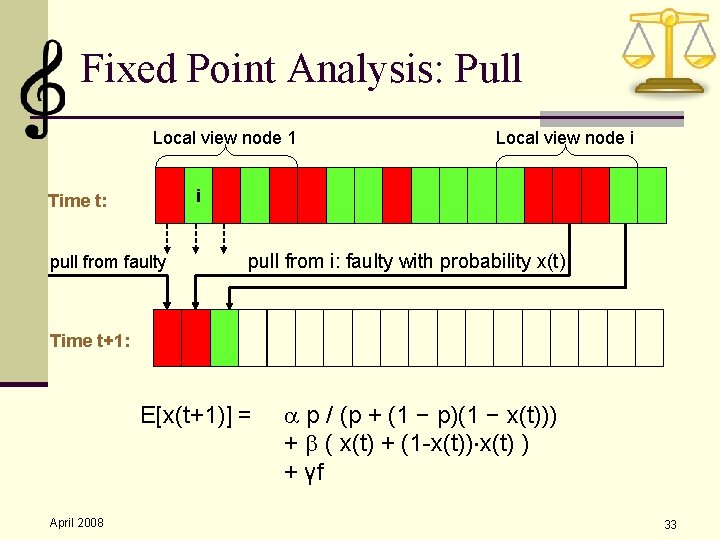

Fixed Point Analysis: Pull

Diagram: local views of node 1 and node i. Time t: a pull goes to a faulty node with probability x(t); a pull from a correct node i returns a faulty id with probability x(t).

Time t+1:

$$E[x(t+1)] = \alpha \frac{p}{p + (1-p)(1-x(t))} + \beta \bigl( x(t) + (1-x(t))\,x(t) \bigr) + \gamma f$$
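The fixed point can be explored numerically by iterating the expected-value recurrence. The sketch below reads the update as α times the faulty-push fraction, plus β times the faulty-pull fraction, plus γ·f; the α and β weights are an assumption taken from Trick 3, since the slide text itself only shows the three terms. Function names are hypothetical, and p > 0 is assumed to avoid a zero denominator at x = 1.

```python
def next_faulty_fraction(x, p, alpha, beta, gamma, f):
    """One step of the expected faulty-id fraction in views."""
    # Faulty share of the pushes that reach correct nodes.
    push = p / (p + (1 - p) * (1 - x))
    # A pull hits a faulty node (x), or a correct node that returns a
    # faulty id ((1 - x) * x).
    pull = x + (1 - x) * x
    # History samples are assumed perfect, so a fraction f of them is faulty.
    return alpha * push + beta * pull + gamma * f


def fixed_point(p, alpha, beta, gamma, f, x0=0.0, rounds=500):
    """Iterate the recurrence from x0 and return the value reached."""
    x = x0
    for _ in range(rounds):
        x = next_faulty_fraction(x, p, alpha, beta, gamma, f)
    return x
```

Under this reading, `fixed_point(0.2, 0.5, 0.5, 0.0, 0.2)` settles near 0.64, while with γ = 0 and p = 0.4 (above 1/3) the iteration climbs toward 1. With γ = 0.1, the value at x = 1 is α + β + γf < 1, so the fixed point stays below 1 for any p.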

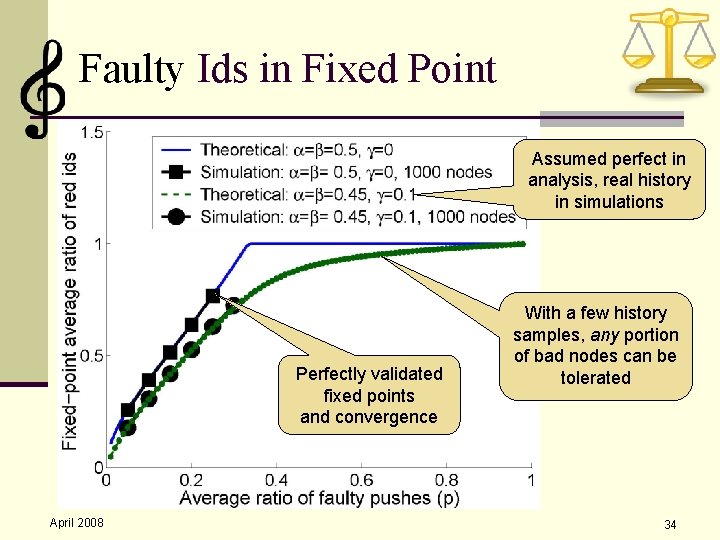

Faulty Ids in Fixed Point
- Assumed perfect in analysis, real history in simulations
- Perfectly validated fixed points and convergence
- With a few history samples, any portion of bad nodes can be tolerated

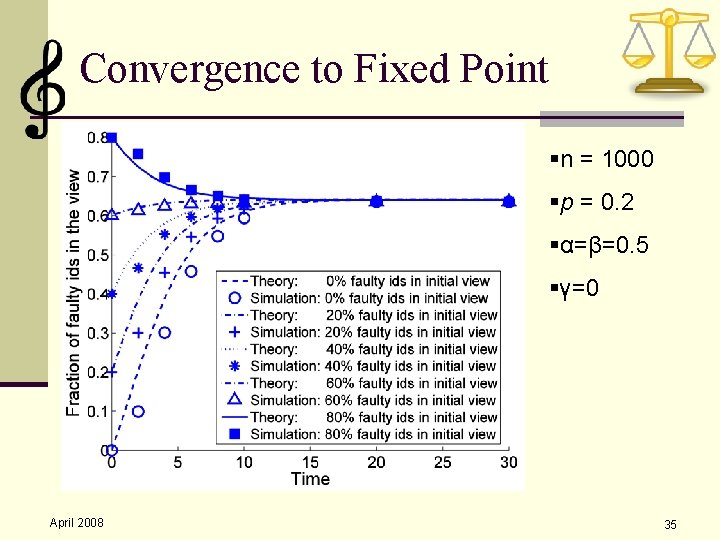

Convergence to Fixed Point
- n = 1000
- p = 0.2
- α = β = 0.5
- γ = 0

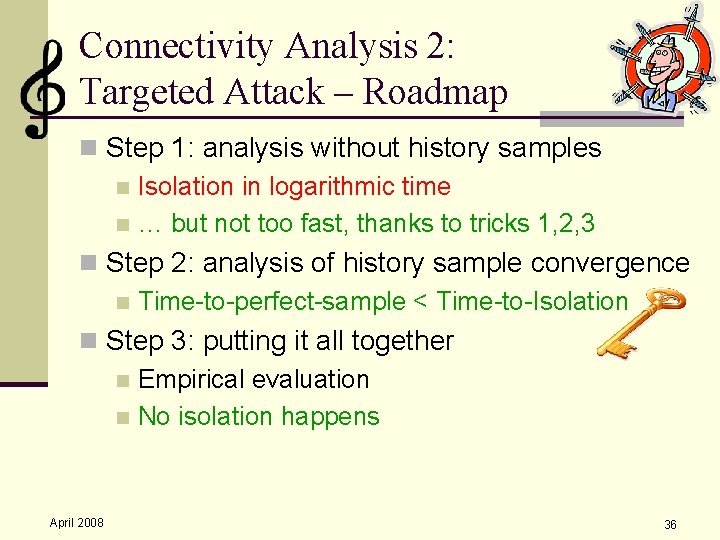

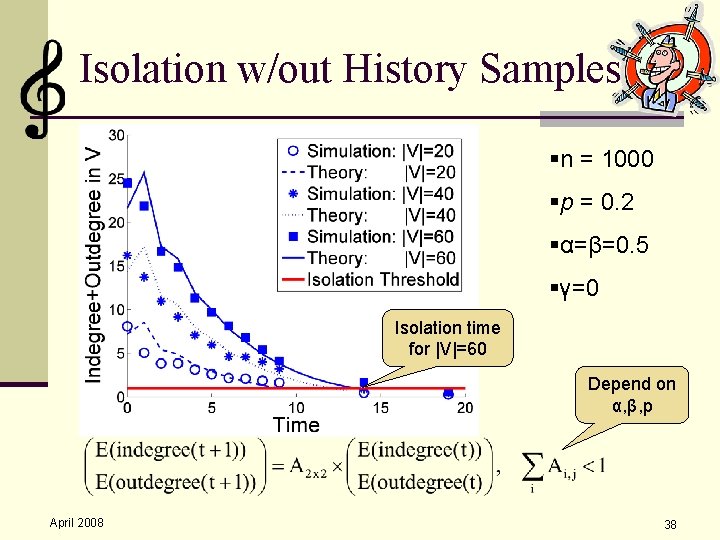

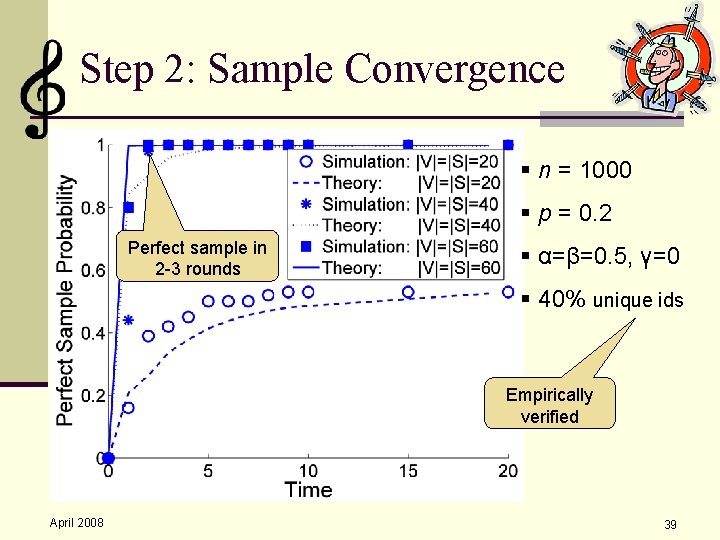

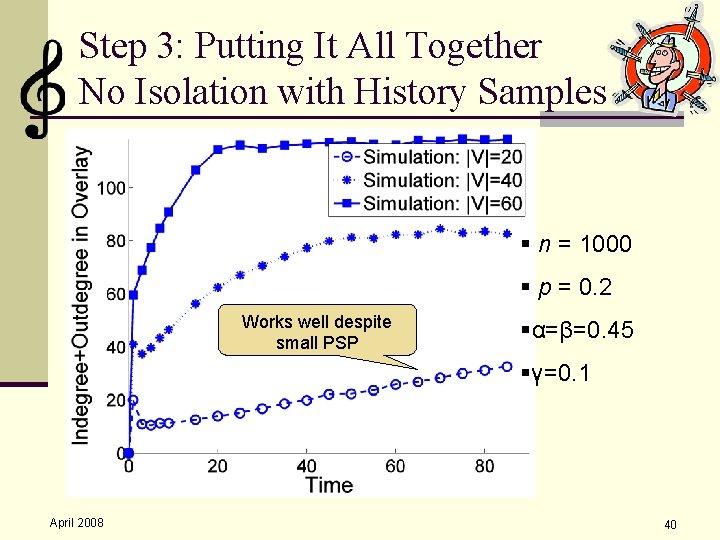

Connectivity Analysis 2: Targeted Attack – Roadmap
- Step 1: analysis without history samples
  - Isolation in logarithmic time
  - … but not too fast, thanks to tricks 1, 2, 3
- Step 2: analysis of history sample convergence
  - Time-to-perfect-sample < Time-to-Isolation
- Step 3: putting it all together
  - Empirical evaluation
  - No isolation happens

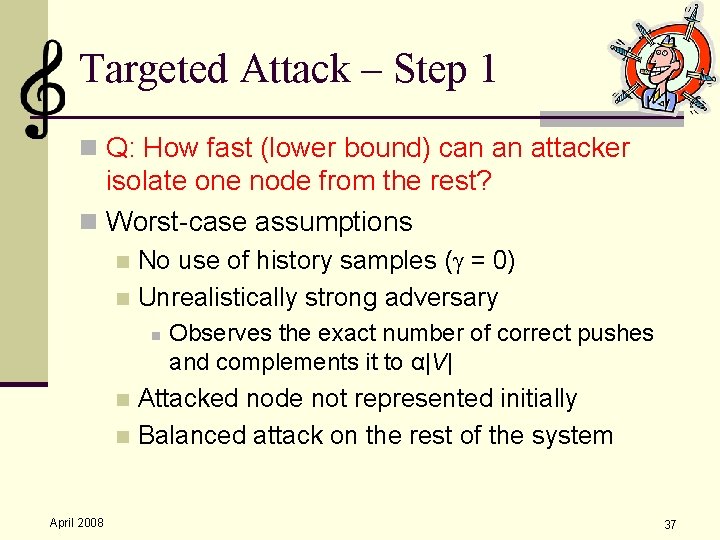

Targeted Attack – Step 1
- Q: How fast (lower bound) can an attacker isolate one node from the rest?
- Worst-case assumptions
  - No use of history samples (γ = 0)
  - Unrealistically strong adversary
    - Observes the exact number of correct pushes and complements it to α|V|
  - Attacked node not represented initially
  - Balanced attack on the rest of the system

Isolation w/out History Samples
- n = 1000
- p = 0.2
- α = β = 0.5
- γ = 0
- Isolation time for |V| = 60
- Depends on α, β, p

Step 2: Sample Convergence
- n = 1000
- p = 0.2
- α = β = 0.5, γ = 0
- 40% unique ids
- Perfect sample in 2-3 rounds
- Empirically verified

Step 3: Putting It All Together
No Isolation with History Samples
- n = 1000
- p = 0.2
- α = β = 0.45
- γ = 0.1
- Works well despite small PSP

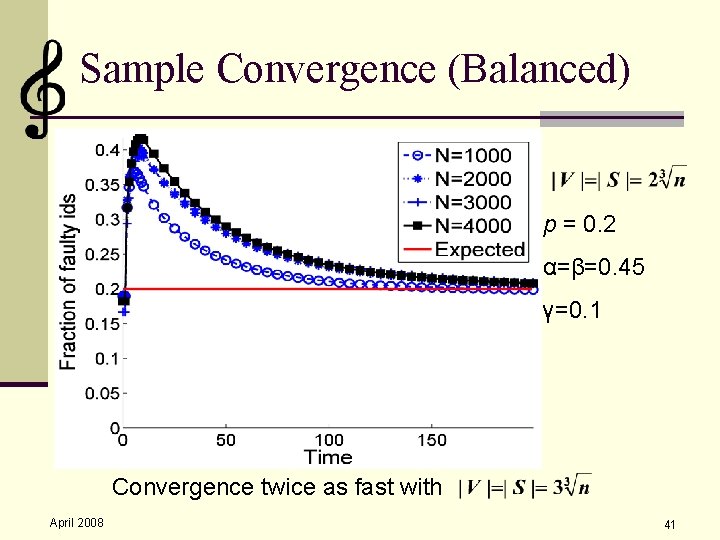

Sample Convergence (Balanced)
- p = 0.2
- α = β = 0.45
- γ = 0.1
- Convergence twice as fast with …

Summary
- $O(n^{1/3})$-size views
- Resist attacks / failures of linear portion
- Converge to proven uniform samples
- Precise analysis of impact of attacks
