
Benchmarking the interoperability of ontology development tools
Raúl García-Castro, Asunción Gómez-Pérez <rgarcia, asun@fi.upm.es>
April 7th 2005
Benchmarking the interoperability of ODTs. April 7th 2005. © Raúl García-Castro, Asunción Gómez-Pérez

Table of Contents
• The interoperability problem
• Benchmarking framework
• Experiment to perform
• Participating in the benchmarking

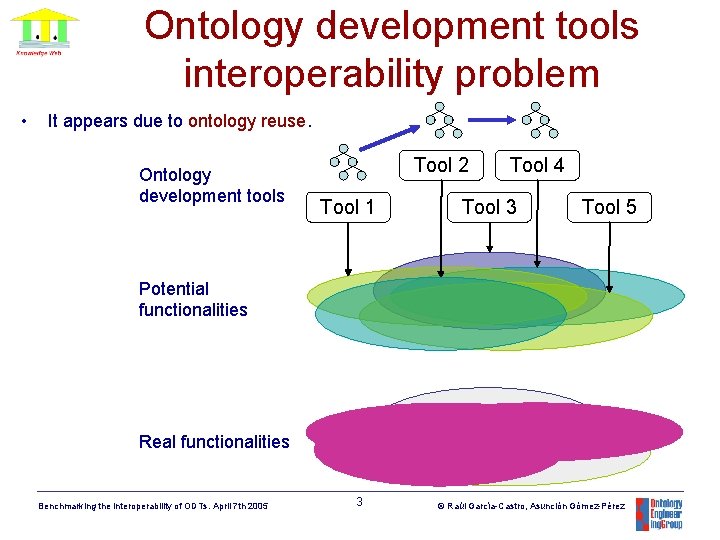

Ontology development tools interoperability problem
• It appears due to ontology reuse.
[Figure: ontology development tools (Tool 1 to Tool 5), contrasting potential functionalities with real functionalities]

Ontology development tools interoperability problem
• Why is it difficult?
– Different KR formalisms: frames, first order logic, description logic, semantic networks, conceptual graphs
– Different modelling components inside the same KR formalism
• Some results:
– It is difficult to preserve the semantics and the intended meaning of the ontology
– Interoperability decisions…
  • At many different levels
  • Usually hidden in the programming code of ontology exporters/importers
O. Corcho. A Layered Declarative Approach to Ontology Translation with Knowledge Preservation. Frontiers in Artificial Intelligence and Applications, Volume 116, January 2005.

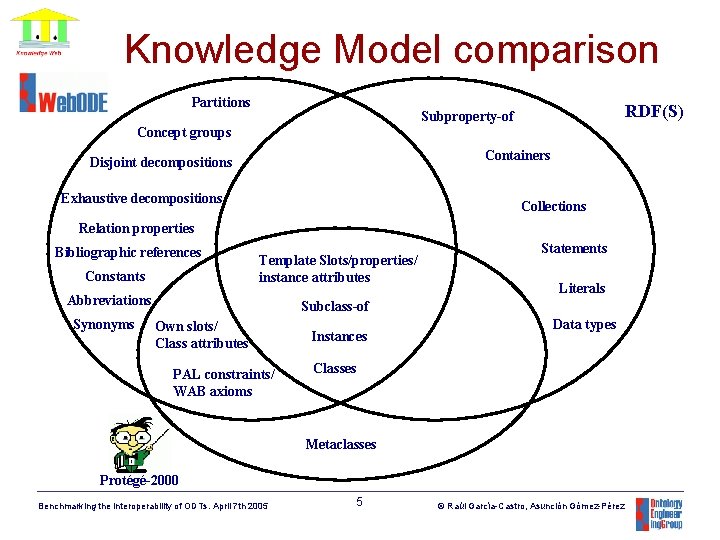

Knowledge Model comparison
[Figure: diagram comparing knowledge models; model labels shown: RDF(S), Protégé-2000. Component labels: Partitions; Subproperty-of; Concept groups; Containers; Disjoint decompositions; Exhaustive decompositions; Collections; Relation properties; Bibliographic references; Constants; Template slots/properties/instance attributes; Abbreviations; Synonyms; Statements; Literals; Subclass-of; Own slots/class attributes; PAL constraints/WAB axioms; Instances; Data types; Classes; Metaclasses]

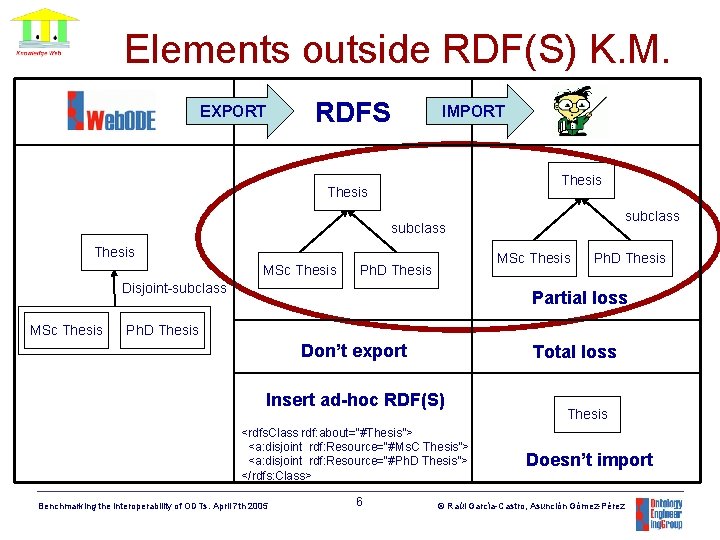

Elements outside the RDF(S) knowledge model
[Figure: EXPORT to RDFS and IMPORT of a Thesis concept with the subclasses MSc Thesis and PhD Thesis, related by subclass and disjoint-subclass. Labels: don't export, insert ad hoc RDF(S), doesn't import, partial loss, total loss]
Ad hoc RDF(S) inserted on export:

    <rdfs:Class rdf:about="#Thesis">
      <a:disjoint rdf:resource="#MScThesis"/>
      <a:disjoint rdf:resource="#PhDThesis"/>
    </rdfs:Class>

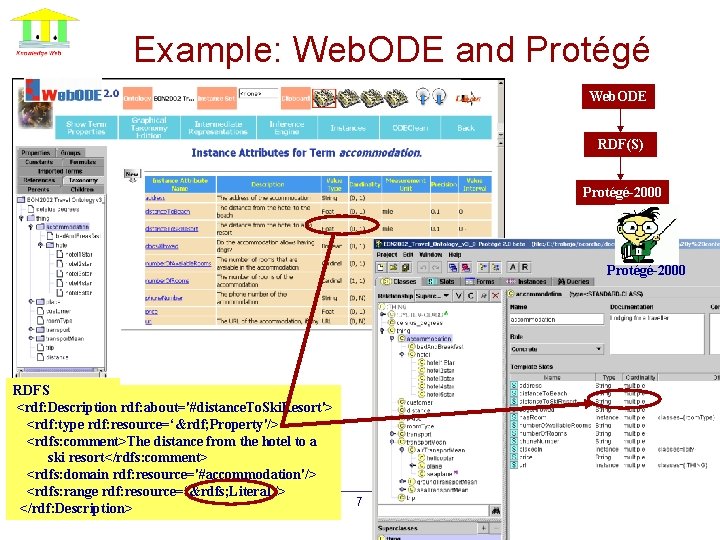

Example: WebODE and Protégé
[Figure labels: WebODE, RDF(S), Protégé-2000, RDFS]

    <rdf:Description rdf:about='#distanceToSkiResort'>
      <rdf:type rdf:resource='&rdf;Property'/>
      <rdfs:comment>The distance from the hotel to a ski resort</rdfs:comment>
      <rdfs:domain rdf:resource='#accommodation'/>
      <rdfs:range rdf:resource='&rdfs;Literal'/>
    </rdf:Description>

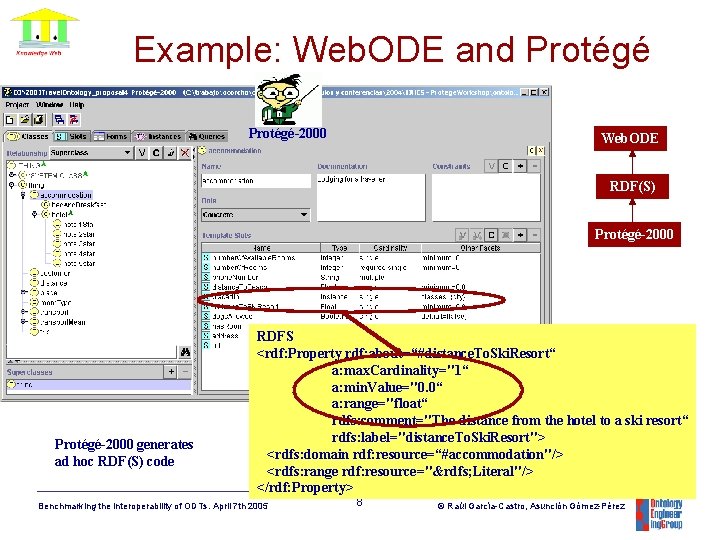

Example: WebODE and Protégé-2000
[Figure labels: WebODE, RDF(S), Protégé-2000, RDFS]
Protégé-2000 generates ad hoc RDF(S) code:

    <rdf:Property rdf:about="#distanceToSkiResort"
        a:maxCardinality="1"
        a:minValue="0.0"
        a:range="float"
        rdfs:comment="The distance from the hotel to a ski resort"
        rdfs:label="distanceToSkiResort">
      <rdfs:domain rdf:resource="#accommodation"/>
      <rdfs:range rdf:resource="&rdfs;Literal"/>
    </rdf:Property>


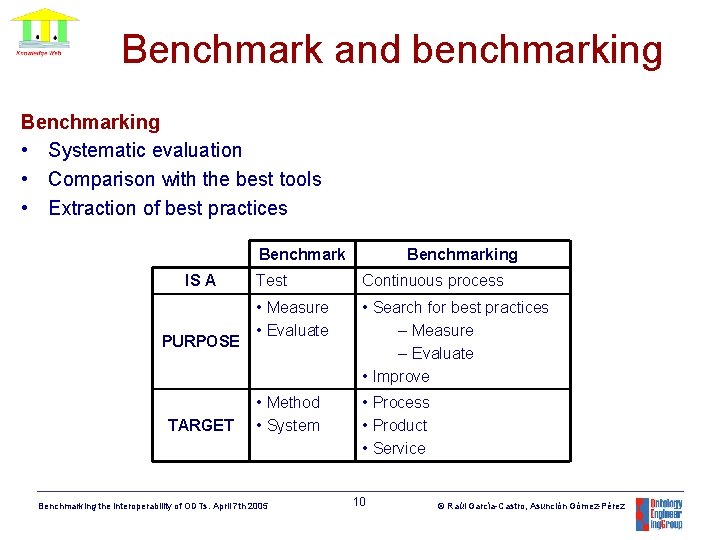

Benchmark and benchmarking
Benchmarking:
• Systematic evaluation
• Comparison with the best tools
• Extraction of best practices

| | Benchmark | Benchmarking |
|---|---|---|
| IS A | Test | Continuous process |
| PURPOSE | Measure; Evaluate | Search for best practices (measure, evaluate); Improve |
| TARGET | Method; System | Process; Product; Service |

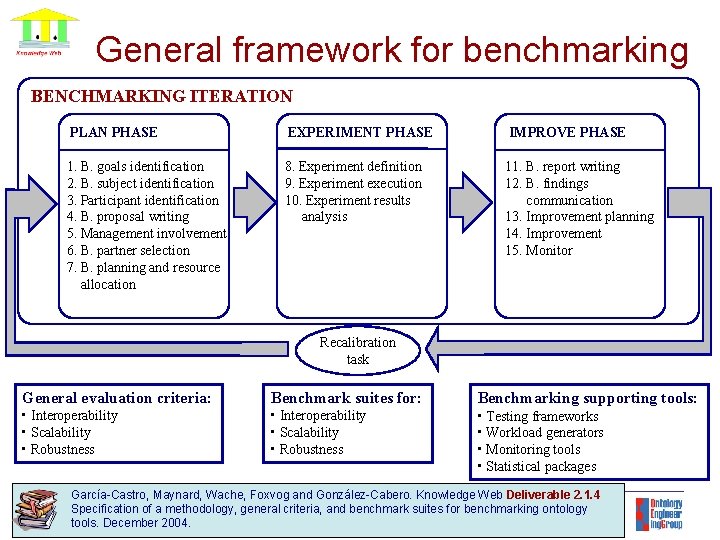

General framework for benchmarking
BENCHMARKING ITERATION
PLAN PHASE
1. Benchmarking goals identification
2. Benchmarking subject identification
3. Participant identification
4. Benchmarking proposal writing
5. Management involvement
6. Benchmarking partner selection
7. Benchmarking planning and resource allocation
EXPERIMENT PHASE
8. Experiment definition
9. Experiment execution
10. Experiment results analysis
IMPROVE PHASE
11. Benchmarking report writing
12. Benchmarking findings communication
13. Improvement planning
14. Improvement
15. Monitor
Recalibration task
General evaluation criteria:
Benchmark suites for:
• Interoperability
• Scalability
• Robustness
Benchmarking supporting tools:
• Testing frameworks
• Workload generators
• Monitoring tools
• Statistical packages
García-Castro, Maynard, Wache, Foxvog and González-Cabero. Knowledge Web Deliverable 2.1.4: Specification of a methodology, general criteria, and benchmark suites for benchmarking ontology tools. December 2004.

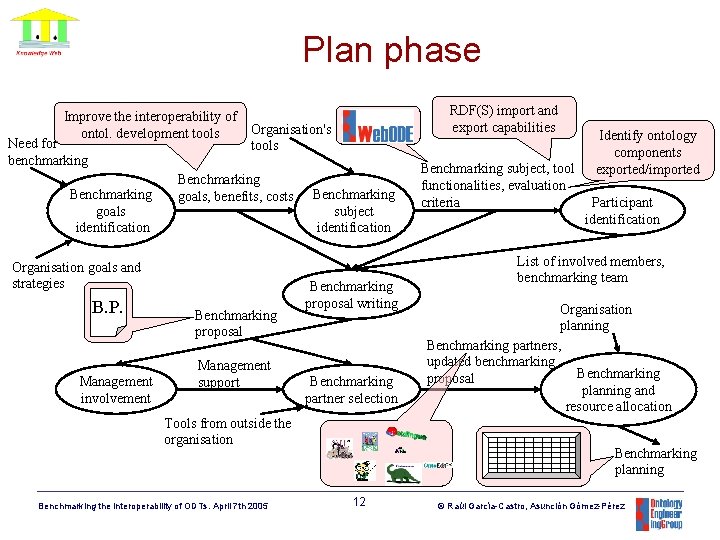

Plan phase
[Figure: flow of the plan phase tasks with their inputs and outputs]
Tasks: Benchmarking goals identification; Benchmarking subject identification; Participant identification; Benchmarking proposal writing; Management involvement; Benchmarking partner selection; Benchmarking planning and resource allocation.
Inputs and outputs shown: Need for benchmarking; Organisation goals and strategies; Organisation's tools; Tools from outside the organisation; Organisation planning; Benchmarking goals, benefits, costs; Benchmarking subject, tool functionalities, evaluation criteria; List of involved members, benchmarking team; Benchmarking proposal; Management support; Benchmarking partners, updated benchmarking proposal; Benchmarking planning.
Annotations for this benchmarking:
• Improve the interoperability of ontology development tools
• RDF(S) import and export capabilities
• Identify ontology components exported/imported

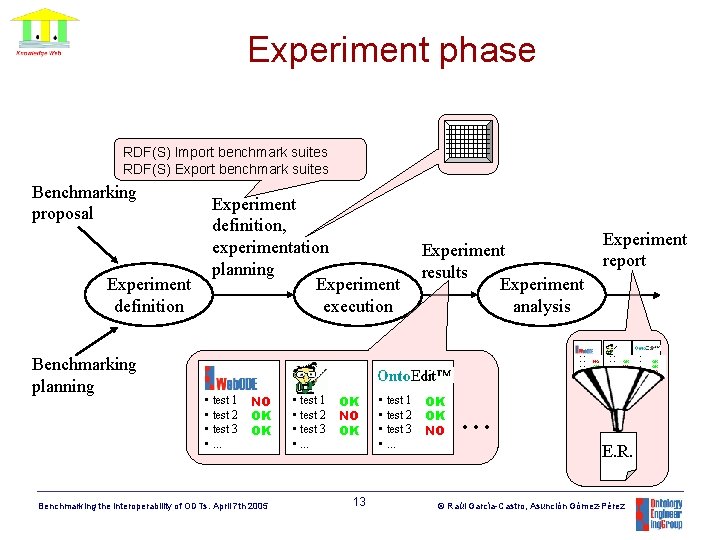

Experiment phase
[Figure: flow of the experiment phase tasks with their inputs and outputs]
Tasks: Experiment definition; Experiment execution; Experiment analysis.
Artefacts shown: Benchmarking proposal; Benchmarking planning; RDF(S) Import benchmark suites; RDF(S) Export benchmark suites; Experiment definition, experimentation planning; Experiment results; Experiment report (E. R.).
The experiment results appear as several lists of tests (test 1, test 2, test 3, ...), each test marked OK or NO.

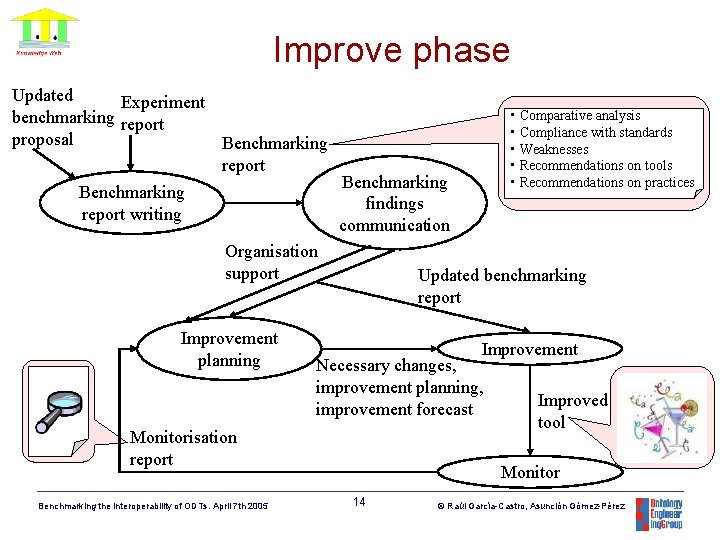

Improve phase
[Figure: flow of the improve phase tasks with their inputs and outputs]
Tasks: Benchmarking report writing; Benchmarking findings communication; Improvement planning; Improvement; Monitor.
Artefacts shown: Experiment report; Updated benchmarking proposal; Updated benchmarking report; Organisation support; Necessary changes, improvement planning, improvement forecast; Improved tool; Monitorisation report.
• Comparative analysis
• Compliance with standards
• Weaknesses
• Recommendations on tools
• Recommendations on practices


Benchmarking goals
Goal 1:
• To assess and improve the interoperability of ontology development tools using RDF(S) for ontology exchange.
Goal 2:
• To identify the subset of RDF(S) elements that ontology development tools can use to correctly interoperate.
Goal 3:
• Next step: OWL.

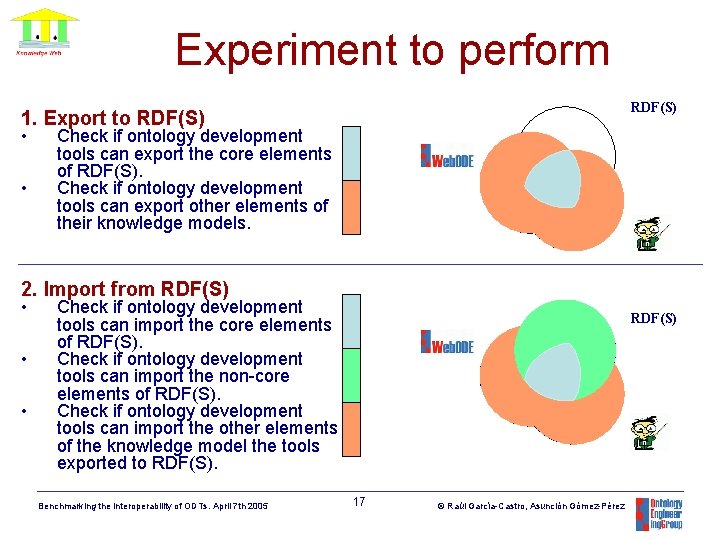

Experiment to perform
1. Export to RDF(S)
• Check if ontology development tools can export the core elements of RDF(S).
• Check if ontology development tools can export other elements of their knowledge models.
2. Import from RDF(S)
• Check if ontology development tools can import the core elements of RDF(S).
• Check if ontology development tools can import the non-core elements of RDF(S).
• Check if ontology development tools can import the other elements of the knowledge model the tools exported to RDF(S).

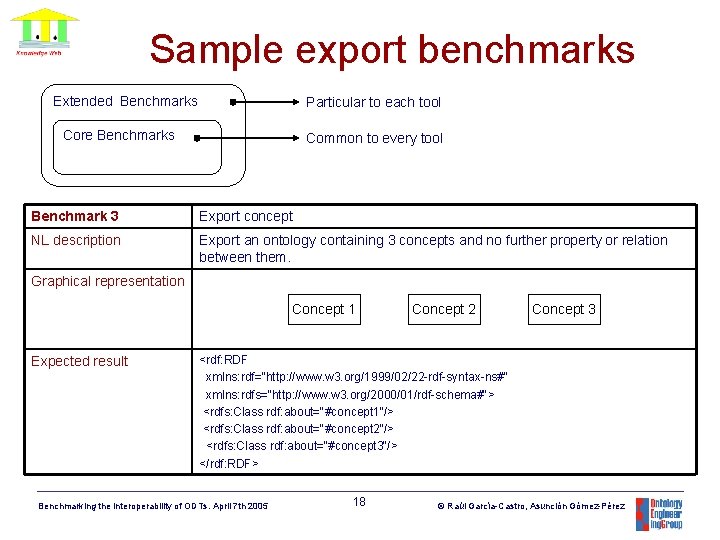

Sample export benchmarks
• Extended Benchmarks: particular to each tool
• Core Benchmarks: common to every tool
Benchmark 3: Export concept
NL description: Export an ontology containing 3 concepts and no further property or relation between them.
Graphical representation: [Figure: Concept 1, Concept 2, Concept 3]
Expected result:

    <rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
             xmlns:rdfs="http://www.w3.org/2000/01/rdf-schema#">
      <rdfs:Class rdf:about="#concept1"/>
      <rdfs:Class rdf:about="#concept2"/>
      <rdfs:Class rdf:about="#concept3"/>
    </rdf:RDF>

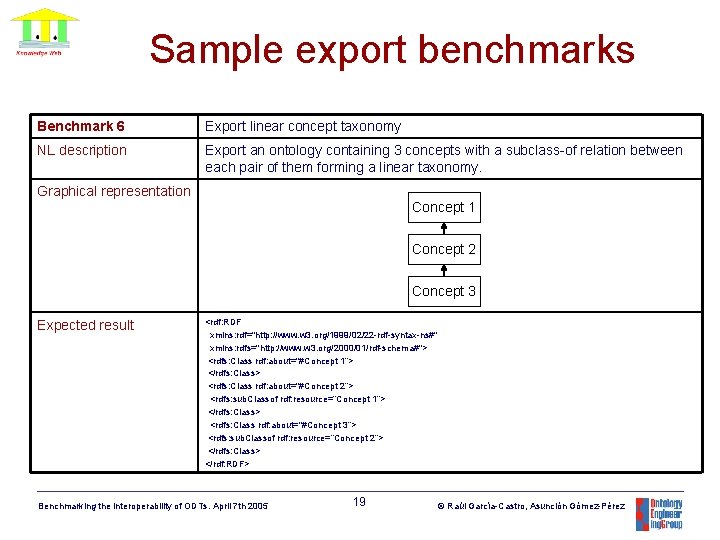

Sample export benchmarks
Benchmark 6: Export linear concept taxonomy
NL description: Export an ontology containing 3 concepts with a subclass-of relation between each pair of them forming a linear taxonomy.
Graphical representation: [Figure: Concept 1, Concept 2, Concept 3 in a linear taxonomy]
Expected result:

    <rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
             xmlns:rdfs="http://www.w3.org/2000/01/rdf-schema#">
      <rdfs:Class rdf:about="#Concept1">
      </rdfs:Class>
      <rdfs:Class rdf:about="#Concept2">
        <rdfs:subClassOf rdf:resource="#Concept1"/>
      </rdfs:Class>
      <rdfs:Class rdf:about="#Concept3">
        <rdfs:subClassOf rdf:resource="#Concept2"/>
      </rdfs:Class>
    </rdf:RDF>

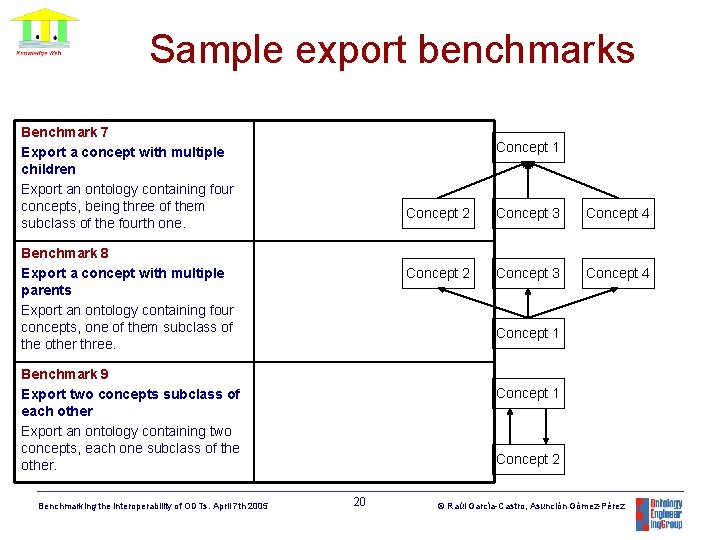

Sample export benchmarks
Benchmark 7: Export a concept with multiple children
Export an ontology containing four concepts, three of them subclasses of the fourth one.
Benchmark 8: Export a concept with multiple parents
Export an ontology containing four concepts, one of them a subclass of the other three.
Benchmark 9: Export two concepts that are subclasses of each other
Export an ontology containing two concepts, each one a subclass of the other.
[Figures: graphical representation of each taxonomy, with nodes Concept 1 to Concept 4 for Benchmarks 7 and 8 and Concept 1 and Concept 2 for Benchmark 9]
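The taxonomy benchmarks above differ only in their subclass-of pairs, so their expected RDF(S) could be generated from a small description. The following is a minimal sketch under that assumption; the function `expected_rdfs` and the concept identifiers are hypothetical and are not part of the benchmark suite definition.

```python
from xml.sax.saxutils import quoteattr

RDF_NS = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
RDFS_NS = "http://www.w3.org/2000/01/rdf-schema#"


def expected_rdfs(concepts, subclass_of=()):
    """Build RDF/XML for a taxonomy benchmark (hypothetical helper).

    concepts: concept names, in output order.
    subclass_of: (child, parent) pairs naming entries of `concepts`.
    """
    parents = {name: [] for name in concepts}
    for child, parent in subclass_of:
        if child not in parents or parent not in parents:
            raise ValueError(f"unknown concept in pair ({child}, {parent})")
        parents[child].append(parent)

    lines = [f'<rdf:RDF xmlns:rdf="{RDF_NS}"', f'         xmlns:rdfs="{RDFS_NS}">']
    for name in concepts:
        about = quoteattr("#" + name)  # quoted and escaped attribute value
        if not parents[name]:
            lines.append(f"  <rdfs:Class rdf:about={about}/>")
            continue
        lines.append(f"  <rdfs:Class rdf:about={about}>")
        for parent in parents[name]:
            resource = quoteattr("#" + parent)
            lines.append(f"    <rdfs:subClassOf rdf:resource={resource}/>")
        lines.append("  </rdfs:Class>")
    lines.append("</rdf:RDF>")
    return "\n".join(lines)


# Benchmark 7: three concepts that are subclasses of a fourth one.
benchmark_7 = expected_rdfs(
    ["Concept1", "Concept2", "Concept3", "Concept4"],
    [("Concept2", "Concept1"), ("Concept3", "Concept1"), ("Concept4", "Concept1")],
)

# Benchmark 9: two concepts, each one a subclass of the other.
benchmark_9 = expected_rdfs(
    ["Concept1", "Concept2"],
    [("Concept1", "Concept2"), ("Concept2", "Concept1")],
)
```

Benchmark 8 would follow the same pattern with one child and three parents. Which concept plays which role in each figure is an assumption here.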

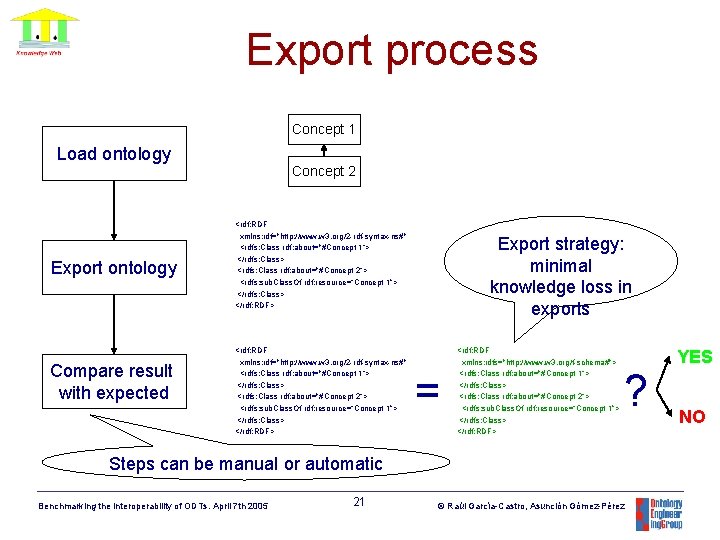

Export process
• Load ontology
• Export ontology
• Compare result with expected
[Figure: an ontology in which Concept 2 is a subclass of Concept 1 is loaded and exported; the exported RDF(S) is compared with the expected RDF(S), and the outcome is YES or NO]
Export strategy: minimal knowledge loss in exports
Steps can be manual or automatic
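When the comparison step is automated, comparing the two files as text would be fragile, because the same statements can be serialised in a different order or style. The sketch below compares them as sets of statements instead. It assumes that only the constructs from the sample benchmarks occur (`rdfs:Class` with `rdf:about`, nested `rdfs:subClassOf` with `rdf:resource`); it is not a general RDF parser, and the function names are hypothetical.

```python
import xml.etree.ElementTree as ET

RDF = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
RDFS = "http://www.w3.org/2000/01/rdf-schema#"


def class_triples(rdf_xml):
    """Return the class and subclass-of statements of an RDF/XML string.

    Handles only rdfs:Class elements with rdf:about and their nested
    rdfs:subClassOf elements with rdf:resource.
    """
    triples = set()
    root = ET.fromstring(rdf_xml)
    for cls in root.findall(f"{{{RDFS}}}Class"):
        subject = cls.get(f"{{{RDF}}}about")
        triples.add((subject, "rdf:type", "rdfs:Class"))
        for sub in cls.findall(f"{{{RDFS}}}subClassOf"):
            triples.add((subject, "rdfs:subClassOf", sub.get(f"{{{RDF}}}resource")))
    return triples


def export_passes(exported_xml, expected_xml):
    """YES/NO decision: do both files contain the same statements?"""
    return class_triples(exported_xml) == class_triples(expected_xml)


HEADER = f'<rdf:RDF xmlns:rdf="{RDF}" xmlns:rdfs="{RDFS}">'

expected = HEADER + """
  <rdfs:Class rdf:about="#Concept1"/>
  <rdfs:Class rdf:about="#Concept2">
    <rdfs:subClassOf rdf:resource="#Concept1"/>
  </rdfs:Class>
</rdf:RDF>"""

# Same statements, different element order and serialisation style.
exported_ok = HEADER + """
  <rdfs:Class rdf:about="#Concept2">
    <rdfs:subClassOf rdf:resource="#Concept1"/>
  </rdfs:Class>
  <rdfs:Class rdf:about="#Concept1"></rdfs:Class>
</rdf:RDF>"""

# The subclass-of relation was lost in the export.
exported_lossy = HEADER + """
  <rdfs:Class rdf:about="#Concept1"/>
  <rdfs:Class rdf:about="#Concept2"/>
</rdf:RDF>"""
```

Here `export_passes(exported_ok, expected)` is true and `export_passes(exported_lossy, expected)` is false. A real benchmark run would need a full RDF library to cover other serialisation forms.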

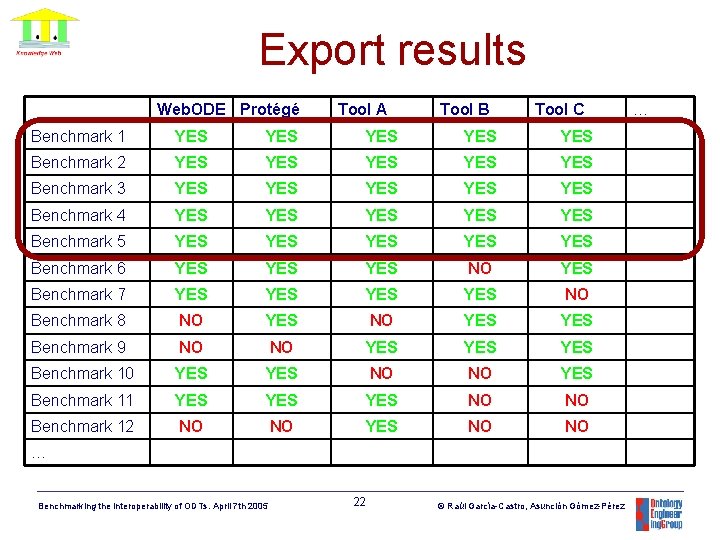

Export results
Tools: WebODE, Protégé, Tool A, Tool B, Tool C
[Table: one row per benchmark; empty cells did not survive, so each row lists its values in reading order without tool columns]
Benchmark 1: YES, YES, YES
Benchmark 2: YES, YES, YES
Benchmark 3: YES, YES, YES
Benchmark 4: YES, YES, YES
Benchmark 5: YES, YES, YES
Benchmark 6: YES, YES, NO, YES
Benchmark 7: YES, YES, NO
Benchmark 8: NO, YES, YES
Benchmark 9: NO, NO, YES, YES
Benchmark 10: YES, NO, NO, YES
Benchmark 11: YES, YES, NO, NO
Benchmark 12: NO, NO, YES, NO, NO
…
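One way to read a results table of this kind is to look for the benchmarks that every tool passes, since those point at the elements that can be exchanged safely. The sketch below illustrates the idea; the tool names and outcomes are hypothetical and are not taken from the table.

```python
def common_subset(results):
    """Return the benchmarks that every tool passes, in sorted order.

    results maps a tool name to a dict of benchmark id -> bool (True = YES).
    A benchmark missing from a tool's dict counts as not passed.
    """
    outcomes = list(results.values())
    if not outcomes:
        return []
    passed_by_all = set(outcomes[0])
    for outcome in outcomes:
        passed_by_all &= {bench for bench, ok in outcome.items() if ok}
    return sorted(passed_by_all)


# Hypothetical outcomes for three hypothetical tools.
results = {
    "tool_x": {1: True, 2: True, 3: True, 4: False},
    "tool_y": {1: True, 2: False, 3: True, 4: True},
    "tool_z": {1: True, 2: True, 3: True},
}
print(common_subset(results))  # prints [1, 3]
```

Treating a missing result as a failure is a conservative design choice; a benchmark that was never run for a tool gives no evidence that the tool handles it.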

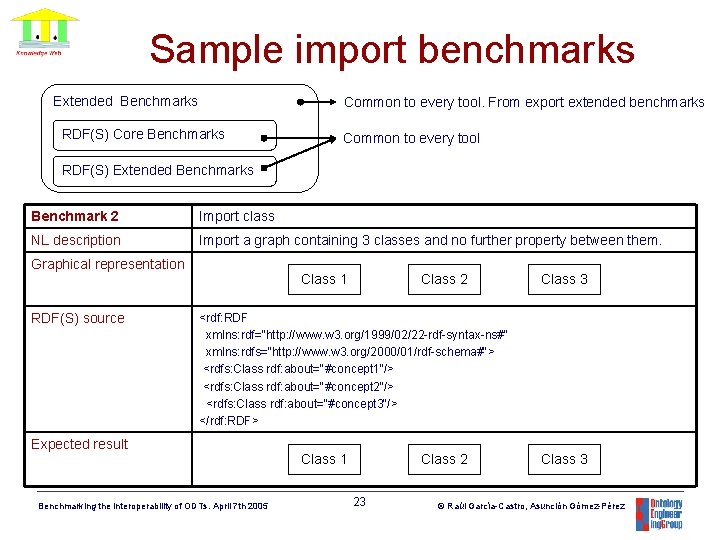

Sample import benchmarks
• RDF(S) Core Benchmarks: common to every tool
• RDF(S) Extended Benchmarks: common to every tool
• Extended Benchmarks: from export extended benchmarks
Benchmark 2: Import class
NL description: Import a graph containing 3 classes and no further property between them.
Graphical representation: [Figure: Class 1, Class 2, Class 3]
RDF(S) source:

    <rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
             xmlns:rdfs="http://www.w3.org/2000/01/rdf-schema#">
      <rdfs:Class rdf:about="#concept1"/>
      <rdfs:Class rdf:about="#concept2"/>
      <rdfs:Class rdf:about="#concept3"/>
    </rdf:RDF>

Expected result: [Figure: Class 1, Class 2, Class 3]

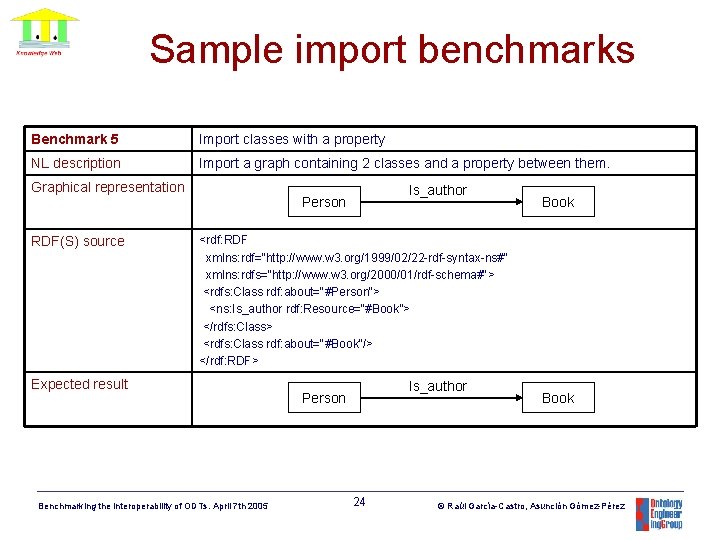

Sample import benchmarks
Benchmark 5: Import classes with a property
NL description: Import a graph containing 2 classes and a property between them.
Graphical representation: [Figure: Person and Book connected by Is_author]
RDF(S) source:

    <rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
             xmlns:rdfs="http://www.w3.org/2000/01/rdf-schema#">
      <rdfs:Class rdf:about="#Person">
        <ns:Is_author rdf:resource="#Book"/>
      </rdfs:Class>
      <rdfs:Class rdf:about="#Book"/>
    </rdf:RDF>

Expected result: [Figure: Person and Book connected by Is_author]

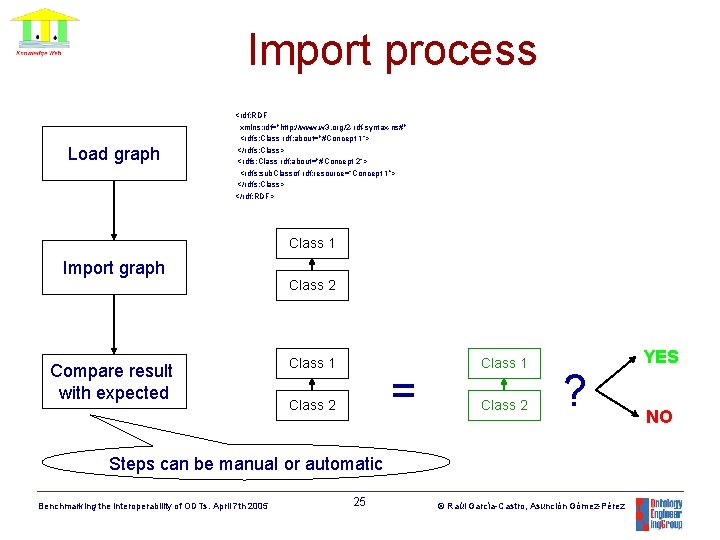

Import process
• Load graph
• Import graph
• Compare result with expected
[Figure: an RDF(S) graph in which Concept 2 is a subclass of Concept 1 is loaded and imported; the imported model (Class 1, Class 2) is compared with the expected model (Class 1, Class 2), and the outcome is YES or NO]
Steps can be manual or automatic
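For the import direction the comparison is between models inside the tool, not between files. A minimal sketch of that check follows, assuming the imported ontology can be read back as a set of classes and a set of subclass-of pairs; the `Model` type and `compare_import` function are hypothetical stand-ins for whatever access each tool offers.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Model:
    """Hypothetical minimal view of an ontology inside a tool."""
    classes: frozenset = field(default_factory=frozenset)
    subclass_of: frozenset = field(default_factory=frozenset)  # (child, parent) pairs


def compare_import(imported, expected):
    """Return (passed, missing, unexpected) for one import benchmark."""
    imported_items = {("class", c) for c in imported.classes} | {
        ("subclass-of", pair) for pair in imported.subclass_of
    }
    expected_items = {("class", c) for c in expected.classes} | {
        ("subclass-of", pair) for pair in expected.subclass_of
    }
    missing = expected_items - imported_items      # lost during import
    unexpected = imported_items - expected_items   # added during import
    return (not missing and not unexpected), missing, unexpected


expected = Model(frozenset({"Class1", "Class2"}), frozenset({("Class2", "Class1")}))

# A tool that imports both classes but drops the subclass-of relation.
imported = Model(frozenset({"Class1", "Class2"}), frozenset())

passed, missing, unexpected = compare_import(imported, expected)
```

In this example `passed` is false and `missing` holds the dropped subclass-of pair, which gives the benchmarking report more detail than a bare YES or NO.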

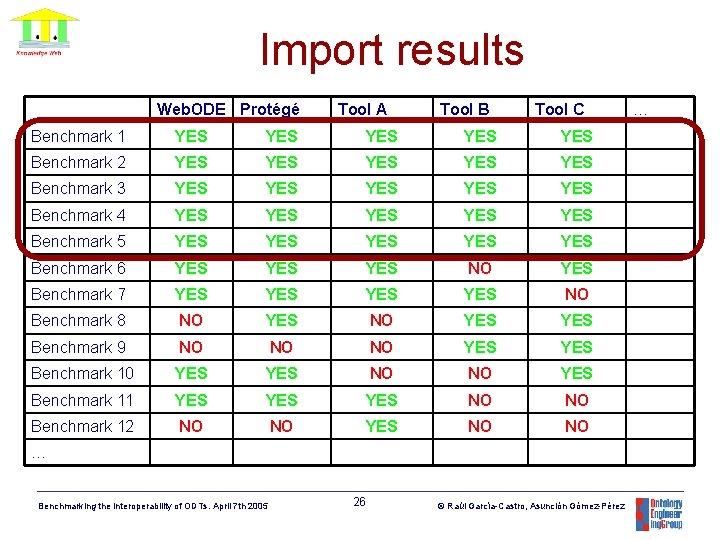

Import results
Tools: WebODE, Protégé, Tool A, Tool B, Tool C
[Table: one row per benchmark; empty cells did not survive, so each row lists its values in reading order without tool columns]
Benchmark 1: YES, YES, YES
Benchmark 2: YES, YES, YES
Benchmark 3: YES, YES, YES
Benchmark 4: YES, YES, YES
Benchmark 5: YES, YES, YES
Benchmark 6: YES, YES, NO, YES
Benchmark 7: YES, YES, NO
Benchmark 8: NO, YES, YES
Benchmark 9: NO, NO, NO, YES
Benchmark 10: YES, NO, NO, YES
Benchmark 11: YES, YES, NO, NO
Benchmark 12: NO, NO, YES, NO, NO
…


Benchmarking benefits
• For the participants:
– To know in detail the interoperability of their ODTs.
– To know the set of terms in which interoperability between their ODTs can be achieved.
– To show the rest of the world that their ODTs are able to interoperate and are among the best ODTs.
• For the Semantic Web community:
– To obtain a significant improvement in the interoperability of ODTs.
– To know the best practices that are performed when developing the interoperability of ontology development tools.
– To obtain instruments to assess the interoperability of ODTs.
– To know the best-in-class ODTs regarding interoperability.

Participating in the benchmarking
• Every organisation is invited to participate:
– If you are developers, with your own tool.
– If you are users, with your preferred tool.
• Supported by the Knowledge Web NoE.
• The results will be presented at the EON 2005 workshop.

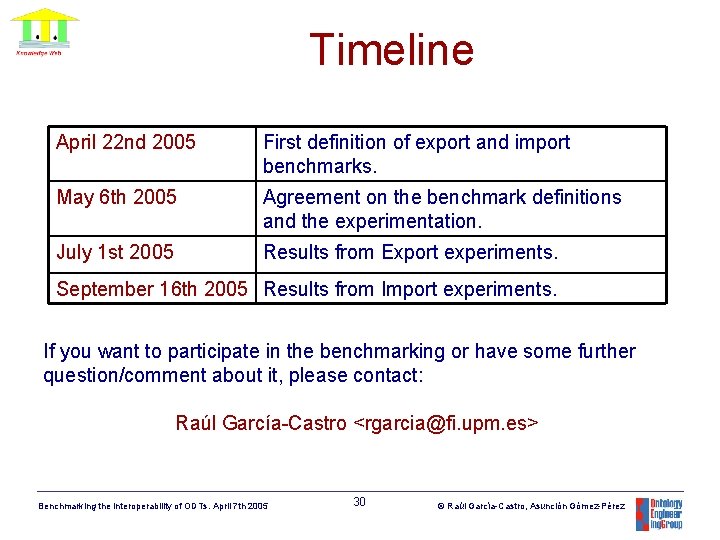

Timeline
• April 22nd 2005: First definition of export and import benchmarks.
• May 6th 2005: Agreement on the benchmark definitions and the experimentation.
• July 1st 2005: Results from Export experiments.
• September 16th 2005: Results from Import experiments.
If you want to participate in the benchmarking or have any further questions or comments about it, please contact: Raúl García-Castro <rgarcia@fi.upm.es>

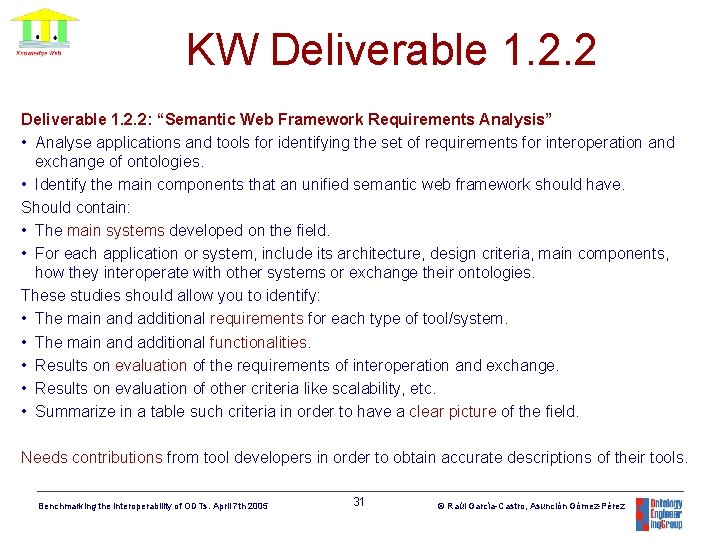

KW Deliverable 1.2.2: “Semantic Web Framework Requirements Analysis”
• Analyse applications and tools to identify the set of requirements for interoperation and exchange of ontologies.
• Identify the main components that a unified semantic web framework should have.
Should contain:
• The main systems developed in the field.
• For each application or system: its architecture, design criteria, main components, and how it interoperates with other systems or exchanges its ontologies.
These studies should allow you to identify:
• The main and additional requirements for each type of tool/system.
• The main and additional functionalities.
• Results on evaluation of the requirements of interoperation and exchange.
• Results on evaluation of other criteria like scalability, etc.
• Summarise these criteria in a table in order to have a clear picture of the field.
Needs contributions from tool developers in order to obtain accurate descriptions of their tools.

