
Benchmarking Ontology Technology

Raúl García-Castro, Asunción Gómez-Pérez <rgarcia, asun@fi.upm.es>

May 13th, 2004

20/01/2022 Benchmarking Ontology Technology. May 13th, 2004 © R. García-Castro, A. Gómez-Pérez

Table of contents

- Benchmarking
- Experimental Software Engineering
- Measurement
- Ontology Technology Evaluation
- Conclusions and Future Work

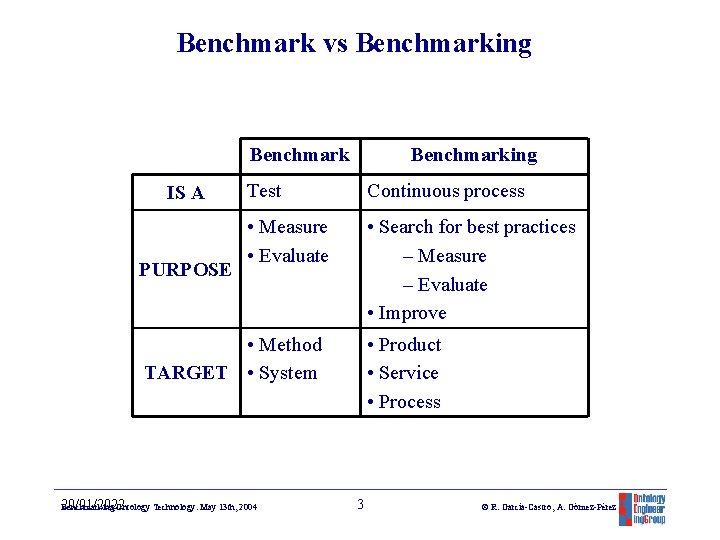

Benchmark vs Benchmarking

| | Benchmark | Benchmarking |
|---|---|---|
| IS A | Test | Continuous process |
| PURPOSE | Measure; Evaluate | Search for best practices (measure, evaluate); Improve |
| TARGET | Method; System | Product; Service; Process |

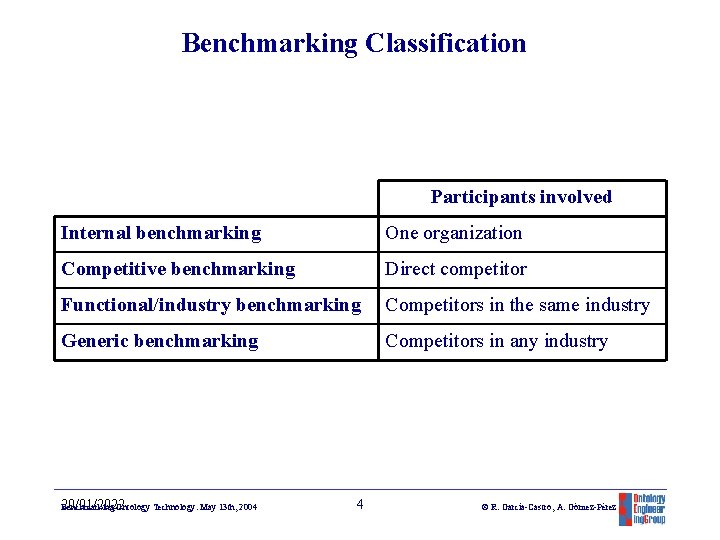

Benchmarking Classification

| Benchmarking type | Participants involved |
|---|---|
| Internal benchmarking | One organization |
| Competitive benchmarking | Direct competitor |
| Functional/industry benchmarking | Competitors in the same industry |
| Generic benchmarking | Competitors in any industry |

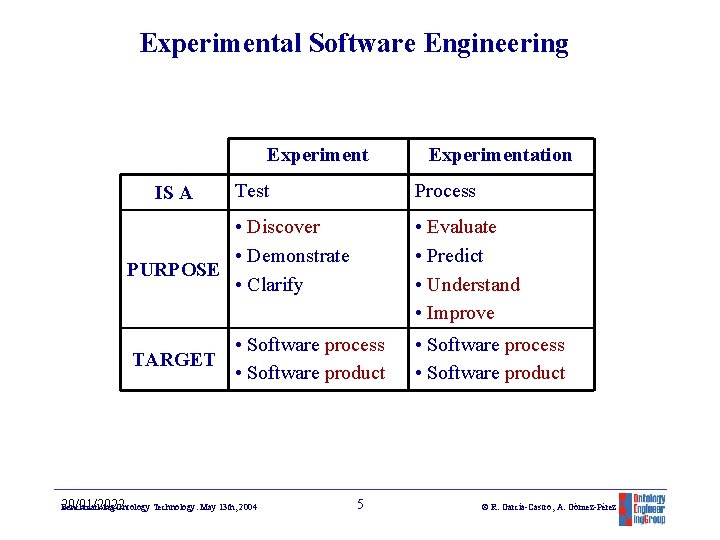

Experimental Software Engineering

| | Experiment | Experimentation |
|---|---|---|
| IS A | Test | Process |
| PURPOSE | Discover; Demonstrate; Clarify | Evaluate; Predict; Understand; Improve |
| TARGET | Software process; Software product | Software process; Software product |

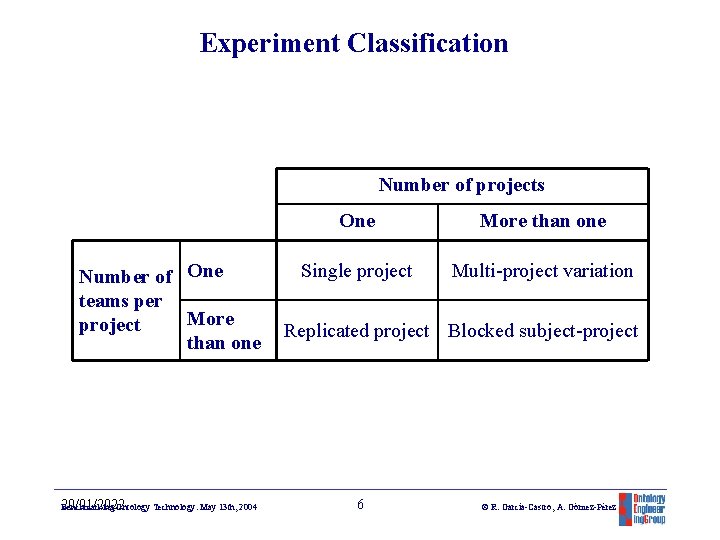

Experiment Classification

| Number of teams per project | One project | More than one project |
|---|---|---|
| One | Single project | Multi-project variation |
| More than one | Replicated project | Blocked subject-project |

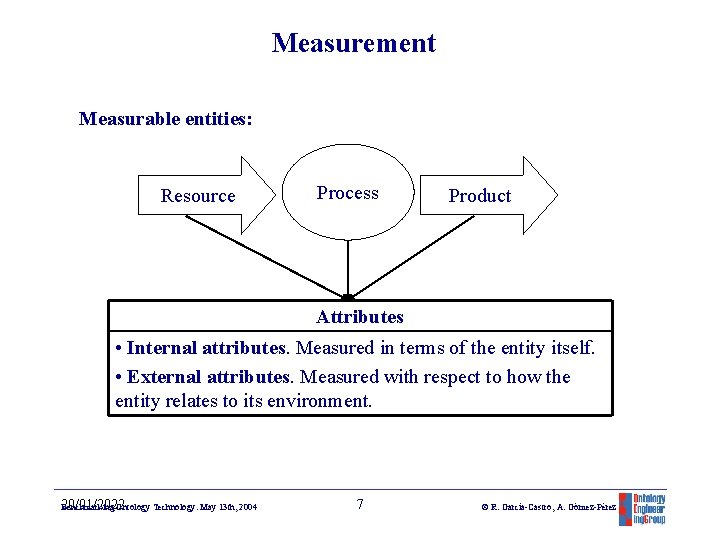
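The classification can be read as two yes/no questions. Does a project involve more than one team? Does the study span more than one project? The following is a minimal Python sketch of that lookup. The enum and function names are illustrative only and do not come from the slides.

```python
from enum import Enum


class ExperimentScope(Enum):
    """Cells of the teams-per-project x number-of-projects grid."""
    SINGLE_PROJECT = "Single project"
    MULTI_PROJECT_VARIATION = "Multi-project variation"
    REPLICATED_PROJECT = "Replicated project"
    BLOCKED_SUBJECT_PROJECT = "Blocked subject-project"


def classify_experiment(teams_per_project: int, projects: int) -> ExperimentScope:
    """Map the two counts onto the grid (one vs. more than one on each axis)."""
    if teams_per_project < 1 or projects < 1:
        raise ValueError("an experiment needs at least one team and one project")
    many_teams = teams_per_project > 1
    many_projects = projects > 1
    if not many_teams and not many_projects:
        return ExperimentScope.SINGLE_PROJECT
    if not many_teams:
        # One team, several projects.
        return ExperimentScope.MULTI_PROJECT_VARIATION
    if not many_projects:
        # Several teams, one project.
        return ExperimentScope.REPLICATED_PROJECT
    return ExperimentScope.BLOCKED_SUBJECT_PROJECT
```

For example, three teams working on the same project gives `ExperimentScope.REPLICATED_PROJECT`.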

Measurement

Measurable entities:

- Resource
- Process
- Product

Attributes:

- Internal attributes: measured in terms of the entity itself.
- External attributes: measured with respect to how the entity relates to its environment.

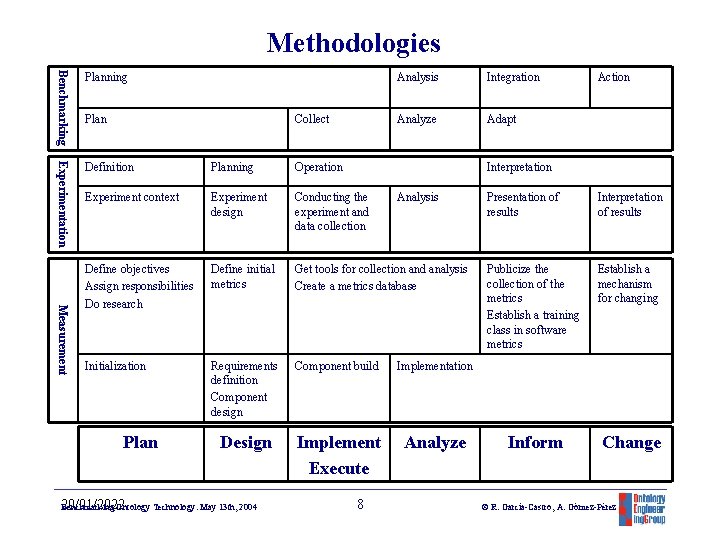

Methodologies

Experimentation:

- Definition, Planning, Operation, Interpretation
- Experiment context, Experiment design, Conducting the experiment and data collection, Analysis, Presentation of results, Interpretation of results

Measurement:

- Define objectives, Assign responsibilities, Do research, Define initial metrics, Get tools for collection and analysis, Create a metrics database, Publicize the collection of the metrics, Establish a training class in software metrics, Establish a mechanism for changing
- Initialization, Requirements definition, Component design, Component build, Implementation

Benchmarking:

- Planning, Analysis, Integration, Action
- Plan, Collect, Analyze, Adapt
- Plan, Design, Implement, Execute, Analyze, Inform, Change


General framework for ontology tool evaluation

OntoWeb deliverable D1.3: tool comparison according to different criteria for:

- Ontology building tools
- Ontology merge and integration tools
- Ontology evaluation tools
- Ontology-based annotation tools
- Ontology storage and querying tools

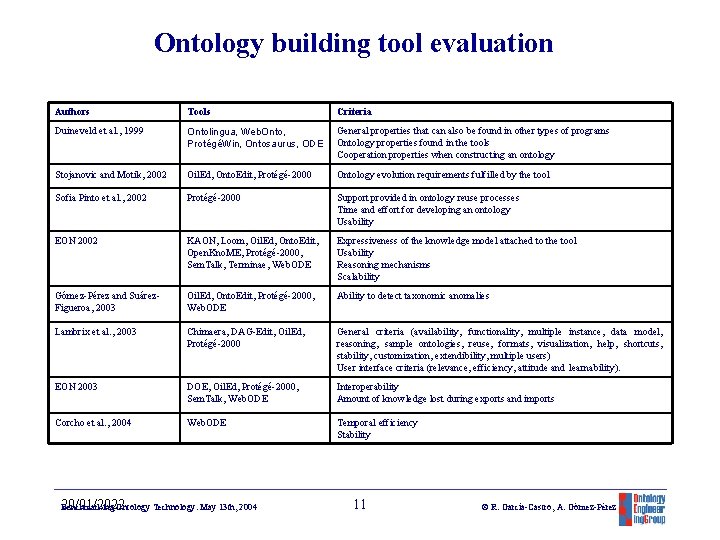

Ontology building tool evaluation

| Authors | Tools | Criteria |
|---|---|---|
| Duineveld et al., 1999 | Ontolingua, WebOnto, ProtégéWin, Ontosaurus, ODE | General properties that can also be found in other types of programs; ontology properties found in the tools; cooperation properties when constructing an ontology |
| Stojanovic and Motik, 2002 | OilEd, OntoEdit, Protégé-2000 | Ontology evolution requirements fulfilled by the tool |
| Sofia Pinto et al., 2002 | Protégé-2000 | Support provided in ontology reuse processes; time and effort for developing an ontology; usability |
| EON 2002 | KAON, Loom, OilEd, OntoEdit, OpenKnoME, Protégé-2000, SemTalk, Terminae, WebODE | Expressiveness of the knowledge model attached to the tool; usability; reasoning mechanisms; scalability |
| Gómez-Pérez and Suárez-Figueroa, 2003 | OilEd, OntoEdit, Protégé-2000, WebODE | Ability to detect taxonomic anomalies |
| Lambrix et al., 2003 | Chimaera, DAG-Edit, OilEd, Protégé-2000 | General criteria (availability, functionality, multiple instance, data model, reasoning, sample ontologies, reuse, formats, visualization, help, shortcuts, stability, customization, extendibility, multiple users); user interface criteria (relevance, efficiency, attitude and learnability) |
| EON 2003 | DOE, OilEd, Protégé-2000, SemTalk, WebODE | Interoperability; amount of knowledge lost during exports and imports |
| Corcho et al., 2004 | WebODE | Temporal efficiency; stability |

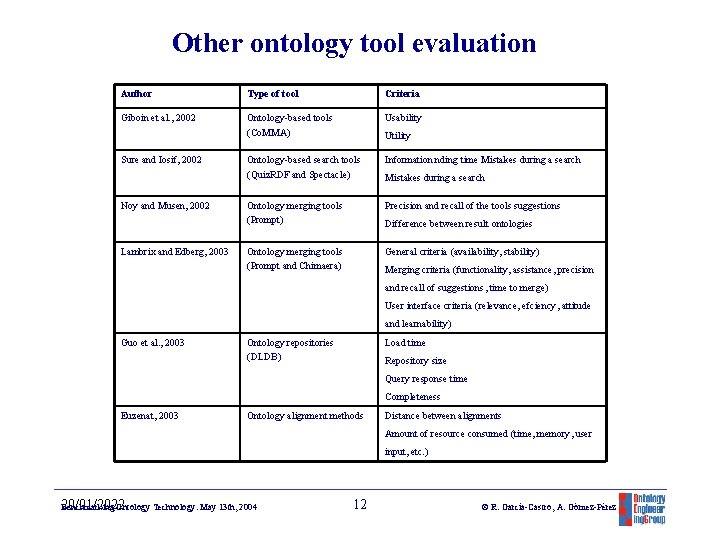
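The EON 2003 interoperability criterion, the amount of knowledge lost during exports and imports, can be made concrete. Treat an ontology as a set of statements and compare it with what comes back after a round trip through another tool. This sketch simplifies under stated assumptions. Statements are plain (subject, predicate, object) strings compared by exact equality. That ignores blank nodes, renamed identifiers, and knowledge preserved in a different but equivalent form. The function names are hypothetical.

```python
from typing import Set, Tuple

# A statement is a (subject, predicate, object) triple of plain identifiers.
Triple = Tuple[str, str, str]


def knowledge_lost(original: Set[Triple], round_tripped: Set[Triple]) -> Set[Triple]:
    """Statements in the source ontology that are missing after export and re-import."""
    return original - round_tripped


def loss_ratio(original: Set[Triple], round_tripped: Set[Triple]) -> float:
    """Fraction of the original statements that did not survive the round trip."""
    if not original:
        return 0.0  # nothing to lose
    return len(knowledge_lost(original, round_tripped)) / len(original)
```

Suppose a round trip drops one of four statements, for instance a disjointness axiom the target tool cannot represent. In that case, `loss_ratio` returns 0.25.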

Other ontology tool evaluation

| Author | Type of tool | Criteria |
|---|---|---|
| Giboin et al., 2002 | Ontology-based tools (CoMMA) | Usability; utility |
| Sure and Iosif, 2002 | Ontology-based search tools (QuizRDF and Spectacle) | Information finding time; mistakes during a search |
| Noy and Musen, 2002 | Ontology merging tools (Prompt) | Precision and recall of the tool's suggestions; difference between result ontologies |
| Lambrix and Edberg, 2003 | Ontology merging tools (Prompt and Chimaera) | General criteria (availability, stability); merging criteria (functionality, assistance, precision and recall of suggestions, time to merge); user interface criteria (relevance, efficiency, attitude and learnability) |
| Guo et al., 2003 | Ontology repositories (DLDB) | Load time; repository size; query response time; completeness |
| Euzenat, 2003 | Ontology alignment methods | Distance between alignments; amount of resources consumed (time, memory, user input, etc.) |

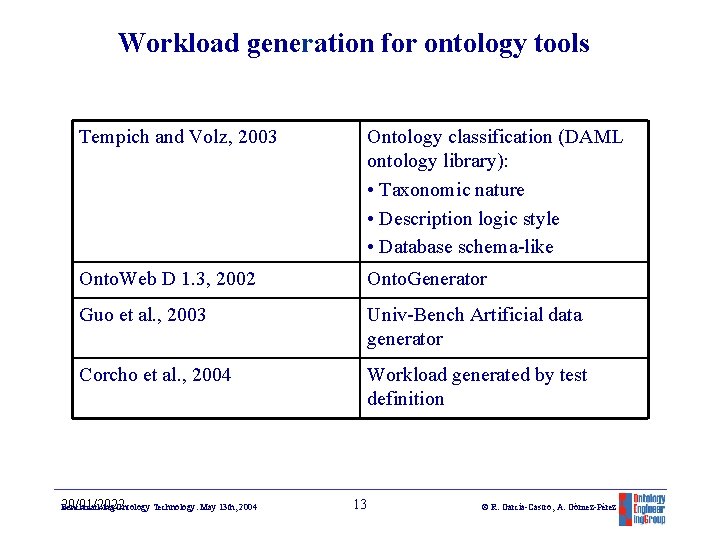
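Noy and Musen, and Lambrix and Edberg, measure the precision and recall of a merging tool's suggestions by comparing them against a reference set. The sketch assumes the reference is the set of merge operations a human expert accepts. Each suggestion is represented as a hypothetical (term in ontology A, term in ontology B) pair.

```python
from typing import Set, Tuple

# A merge suggestion pairs a term of ontology A with a term of ontology B.
Suggestion = Tuple[str, str]


def precision_recall(suggested: Set[Suggestion], reference: Set[Suggestion]) -> Tuple[float, float]:
    """Precision: share of the tool's suggestions that are correct.
    Recall: share of the reference merges that the tool suggested."""
    correct = suggested & reference
    precision = len(correct) / len(suggested) if suggested else 0.0
    recall = len(correct) / len(reference) if reference else 0.0
    return precision, recall
```

Take four suggestions, two of which appear in a three-item reference set. Precision is then 2/4 = 0.5 and recall is 2/3. Returning 0.0 for an empty set is a convention chosen here, not one taken from the cited studies.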

Workload generation for ontology tools

| Authors | Workload |
|---|---|
| Tempich and Volz, 2003 | Ontology classification (DAML ontology library): taxonomic nature; description logic style; database schema-like |
| OntoWeb D1.3, 2002 | OntoGenerator |
| Guo et al., 2003 | Univ-Bench; artificial data generator |
| Corcho et al., 2004 | Workload generated by test definition |

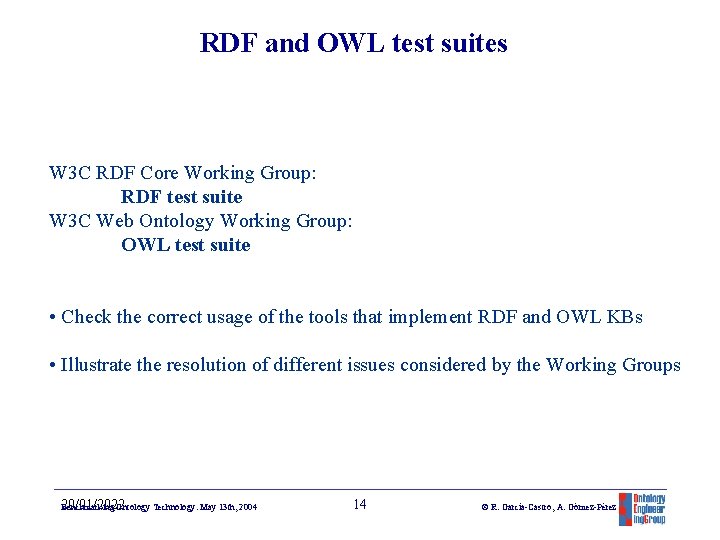
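Artificial workload generators like the ones listed above produce ontologies of controllable size. The sketch below is a hypothetical generator, not OntoGenerator or the Univ-Bench data generator. It builds a random tree-shaped class hierarchy plus instances. It also takes an explicit seed, so the same workload can be regenerated when a benchmark is repeated.

```python
import random
from typing import List, Tuple

Triple = Tuple[str, str, str]


def generate_taxonomy(n_classes: int, n_instances: int, seed: int = 0) -> List[Triple]:
    """Build a random tree-shaped class hierarchy plus instance assertions.

    The same (n_classes, n_instances, seed) always yields the same workload.
    """
    if n_classes < 1:
        raise ValueError("n_classes must be at least 1")
    rng = random.Random(seed)
    triples: List[Triple] = []
    for i in range(n_classes):
        cls = f"ex:C{i}"
        triples.append((cls, "rdf:type", "owl:Class"))
        if i > 0:
            # Parents are drawn only from earlier classes, so the hierarchy is acyclic.
            parent = f"ex:C{rng.randrange(i)}"
            triples.append((cls, "rdfs:subClassOf", parent))
    for j in range(n_instances):
        # Each instance is typed with a uniformly chosen class.
        cls = f"ex:C{rng.randrange(n_classes)}"
        triples.append((f"ex:i{j}", "rdf:type", cls))
    return triples
```

`generate_taxonomy(10, 5, seed=42)` yields 24 statements: 10 class declarations, 9 subclass links and 5 instance assertions.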

RDF and OWL test suites

- W3C RDF Core Working Group: RDF test suite
- W3C Web Ontology Working Group: OWL test suite

The test suites are intended to:

- Check the correct usage of the tools that implement RDF and OWL KBs
- Illustrate the resolution of different issues considered by the Working Groups

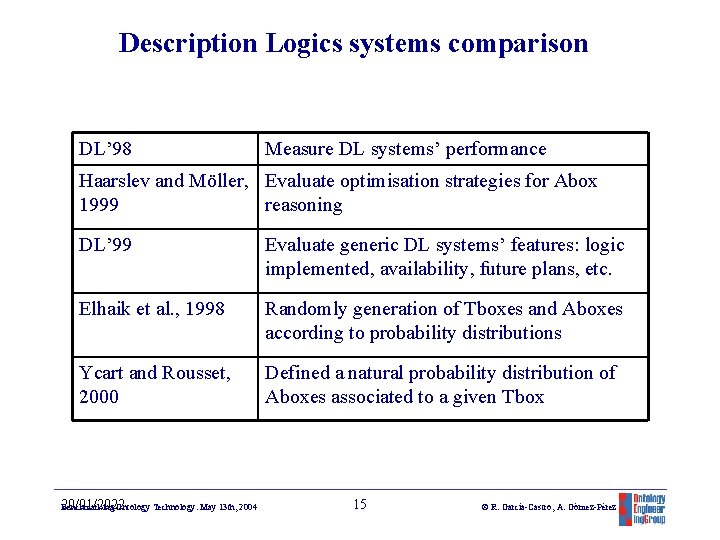
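In the RDF test suite, a positive parser test pairs an input document with the expected result in N-Triples. A tool passes if the graph it produces matches the expected one. The sketch below covers only the comparison step and rests on a strong assumption. The graphs contain no blank nodes, so matching reduces to set equality; with blank nodes, a graph isomorphism check is needed. The N-Triples reader is deliberately simplified, and the function names are hypothetical.

```python
import re
from typing import Set, Tuple

Triple = Tuple[str, str, str]

# Simplified N-Triples line: <s> <p> (<o> | "literal"[@lang | ^^<datatype>]) .
# Blank nodes, escaped quotes inside literals and trailing comments are NOT handled.
_LINE = re.compile(
    r'^(<[^>]*>)\s+(<[^>]*>)\s+(<[^>]*>|"[^"]*"(?:@[A-Za-z-]+|\^\^<[^>]*>)?)\s*\.\s*$'
)


def parse_ntriples(text: str) -> Set[Triple]:
    """Read the simplified N-Triples subset into a set of triples."""
    triples: Set[Triple] = set()
    for lineno, line in enumerate(text.splitlines(), start=1):
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comment lines
        match = _LINE.match(line)
        if match is None:
            raise ValueError(f"line {lineno}: unsupported N-Triples syntax: {line!r}")
        triples.add((match.group(1), match.group(2), match.group(3)))
    return triples


def passes_positive_parser_test(tool_output: str, expected_output: str) -> bool:
    """Pass if the tool produced exactly the expected triples (order is ignored)."""
    return parse_ntriples(tool_output) == parse_ntriples(expected_output)
```

Comparing sets rather than lines means a tool that emits the same triples in a different order still passes.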

Description Logics systems comparison

| Work | Goal |
|---|---|
| DL'98 | Measure DL systems' performance |
| Haarslev and Möller, 1999 | Evaluate optimisation strategies for ABox reasoning |
| DL'99 | Evaluate generic DL systems' features: logic implemented, availability, future plans, etc. |
| Elhaik et al., 1998 | Random generation of TBoxes and ABoxes according to probability distributions |
| Ycart and Rousset, 2000 | Definition of a natural probability distribution of ABoxes associated to a given TBox |
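Random generation of TBoxes and ABoxes can be sketched as sampling concept inclusions and assertions from simple distributions. This is not the distribution defined by Elhaik et al. or by Ycart and Rousset. The following choices are assumptions made for illustration:

- uniform selection of concepts, roles and individuals;
- the probability `p_exists` of adding an existential restriction;
- the probability `p_concept_assertion` of a concept (rather than role) assertion.

```python
import random
from dataclasses import dataclass, field
from typing import List


@dataclass
class KnowledgeBase:
    # Axioms and assertions are kept as readable DL-style strings.
    tbox: List[str] = field(default_factory=list)  # e.g. "C3 ⊑ C1 ⊓ ∃r0.C2"
    abox: List[str] = field(default_factory=list)  # e.g. "C2(a4)" or "r0(a1, a3)"


def random_kb(
    n_concepts: int,
    n_roles: int,
    n_axioms: int,
    n_individuals: int,
    n_assertions: int,
    p_exists: float = 0.5,
    p_concept_assertion: float = 0.5,
    seed: int = 0,
) -> KnowledgeBase:
    """Sample a TBox of inclusions and an ABox of assertions (illustrative distribution)."""
    if n_concepts < 2 or n_roles < 1 or n_individuals < 1:
        raise ValueError("need at least 2 concepts, 1 role and 1 individual")
    rng = random.Random(seed)
    concepts = [f"C{i}" for i in range(n_concepts)]
    roles = [f"r{i}" for i in range(n_roles)]
    individuals = [f"a{i}" for i in range(n_individuals)]
    kb = KnowledgeBase()

    for _ in range(n_axioms):
        # Two distinct atomic concepts, so no trivial "C ⊑ C" axioms.
        lhs, rhs = rng.sample(concepts, 2)
        if rng.random() < p_exists:
            rhs = f"{rhs} ⊓ ∃{rng.choice(roles)}.{rng.choice(concepts)}"
        kb.tbox.append(f"{lhs} ⊑ {rhs}")

    for _ in range(n_assertions):
        if rng.random() < p_concept_assertion:
            kb.abox.append(f"{rng.choice(concepts)}({rng.choice(individuals)})")
        else:
            subj, obj = rng.choice(individuals), rng.choice(individuals)
            kb.abox.append(f"{rng.choice(roles)}({subj}, {obj})")
    return kb
```

Every choice goes through one seeded `random.Random`. As a result, `random_kb(5, 2, 10, 4, 8, seed=1)` returns the same knowledge base each time it is called.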


Conclusions

- We present an overview of the main research areas involved in benchmarking.
- There is no common benchmarking methodology, although the existing methodologies are general and similar.
- Evaluation studies concerning ontology technology have been scarce.
- In recent years, the effort devoted to evaluating ontology technology has grown significantly.
- Most evaluation studies are qualitative; few involve quantitative data and controlled environments.

Future Work

Goals (18 months):

1. State of the art (month 6)
2. First draft of a methodology (month 12)
3. Identification of criteria (month 12)
4. Identification of metrics (month 12)
5. Definition of test beds for benchmarking (month 12)
6. Development of first versions of prototypes of tools (month 18)
7. Benchmarking of ontology development tools according to the criteria and test beds produced (month 18)


- Slides: 19