

15-213 Recitation 6 – 3/11/02

Outline

• Cache Organization
• Accessing Cache
• Replacement Policy

Mengzhi Wang
e-mail: mzwang@cs.cmu.edu
Office Hours: Thursday 1:30 – 3:00, Wean Hall 3108

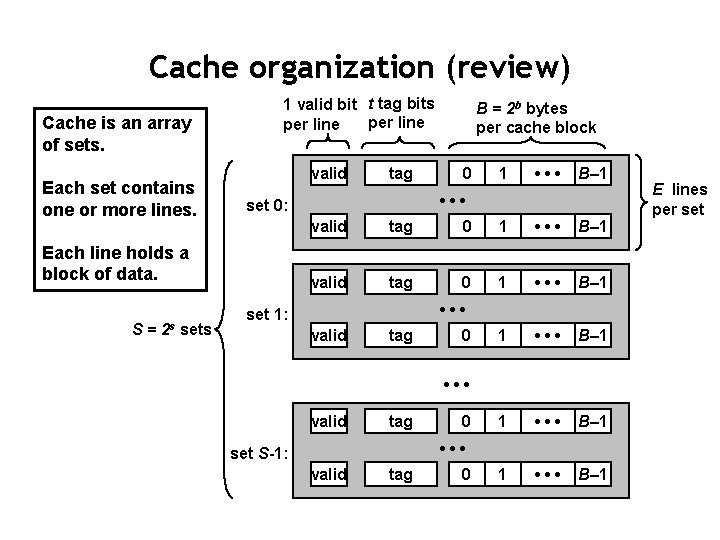

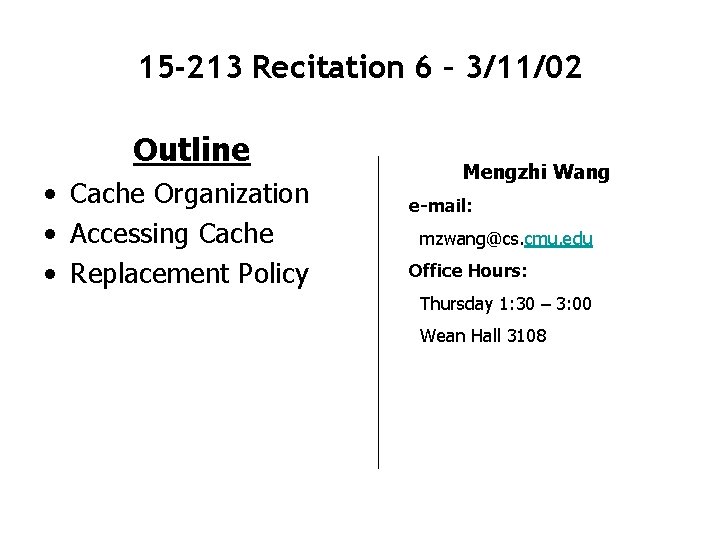

Cache organization (review)

Cache is an array of sets. Each set contains one or more lines. Each line holds a block of data.

• S = 2^s sets
• E lines per set
• B = 2^b bytes per cache block
• 1 valid bit and t tag bits per line

| Set | Contents of each of the E lines |
|---|---|
| set 0 | valid, tag, bytes 0 1 ••• B–1 |
| set 1 | valid, tag, bytes 0 1 ••• B–1 |
| ••• | ••• |
| set S-1 | valid, tag, bytes 0 1 ••• B–1 |
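The organization above can be sketched as a small data structure. This is only an illustration: the names `Line` and `make_cache` and the sizes in the usage line are hypothetical, not taken from the slides.

```python
from dataclasses import dataclass, field

@dataclass
class Line:
    valid: bool = False                                   # 1 valid bit
    tag: int = 0                                          # t tag bits
    block: bytearray = field(default_factory=bytearray)   # B = 2^b data bytes

def make_cache(s: int, E: int, b: int) -> list[list[Line]]:
    """Return S = 2^s sets, each a list of E invalid lines holding B = 2^b bytes."""
    S, B = 1 << s, 1 << b
    return [[Line(block=bytearray(B)) for _ in range(E)] for _ in range(S)]

# Hypothetical sizes: 4 sets, 2 lines per set, 8-byte blocks.
cache = make_cache(s=2, E=2, b=3)
print(len(cache), len(cache[0]), len(cache[0][0].block))   # 4 2 8
```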

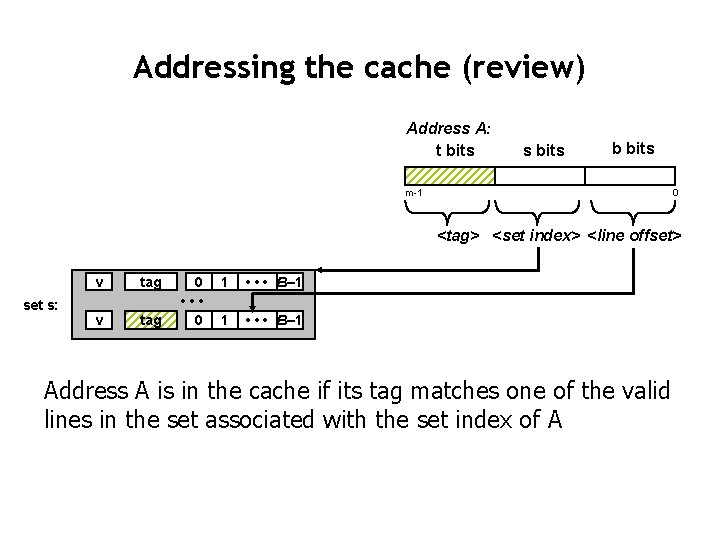

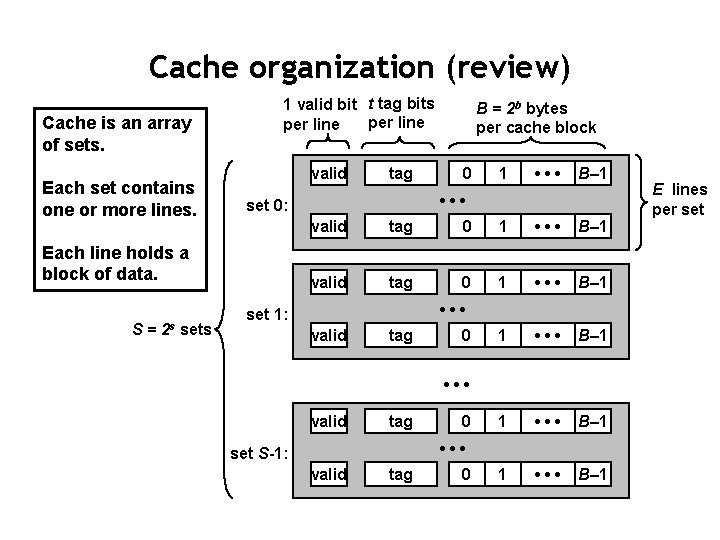

Addressing the cache (review)

Address A (bit m-1 on the left, bit 0 on the right):

| t bits | s bits | b bits |
|---|---|---|
| tag | set index | line offset |

Address A is in the cache if its tag matches one of the valid lines in the set associated with the set index of A.
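The field split can be expressed with shifts and masks. The following is a minimal sketch; the function name `split_address` is an assumption made for illustration.

```python
def split_address(addr: int, s: int, b: int) -> tuple[int, int, int]:
    """Split an address into (tag, set index, line offset) for given s and b."""
    offset = addr & ((1 << b) - 1)             # low b bits
    set_index = (addr >> b) & ((1 << s) - 1)   # next s bits
    tag = addr >> (s + b)                      # remaining high bits
    return tag, set_index, offset

# 6-bit address 43 = 0b101011 with t=2, s=2, b=2
print(split_address(43, s=2, b=2))   # (2, 2, 3)
```

With s = 0 the set index is always 0, and with b = 0 the offset is always 0, so the same function covers fully associative caches and one-byte blocks.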

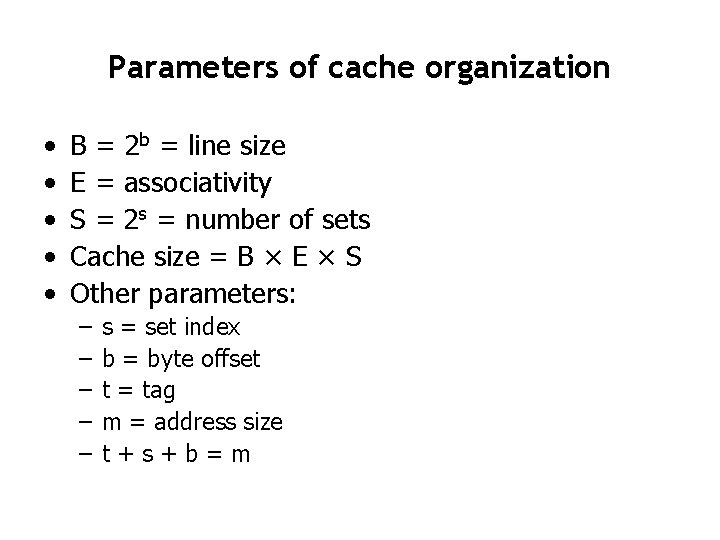

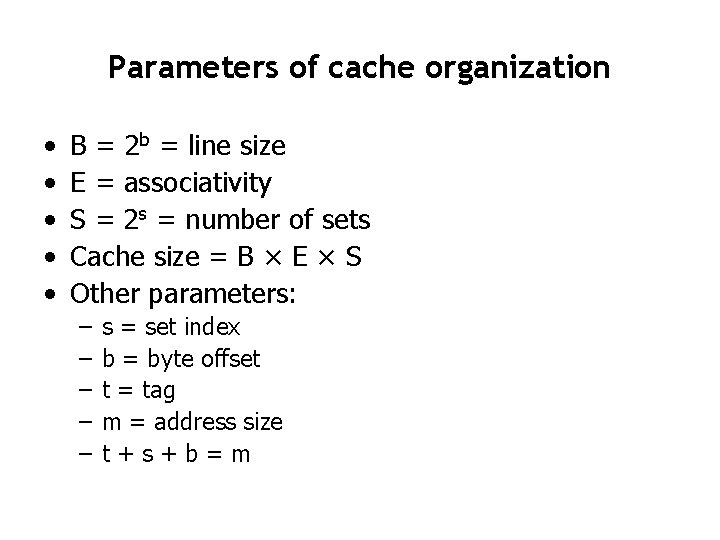

Parameters of cache organization

• B = 2^b = line size
• E = associativity
• S = 2^s = number of sets
• Cache size = B × E × S

Other parameters:

– s = number of set index bits
– b = number of byte offset bits
– t = number of tag bits
– m = address size in bits
– t + s + b = m

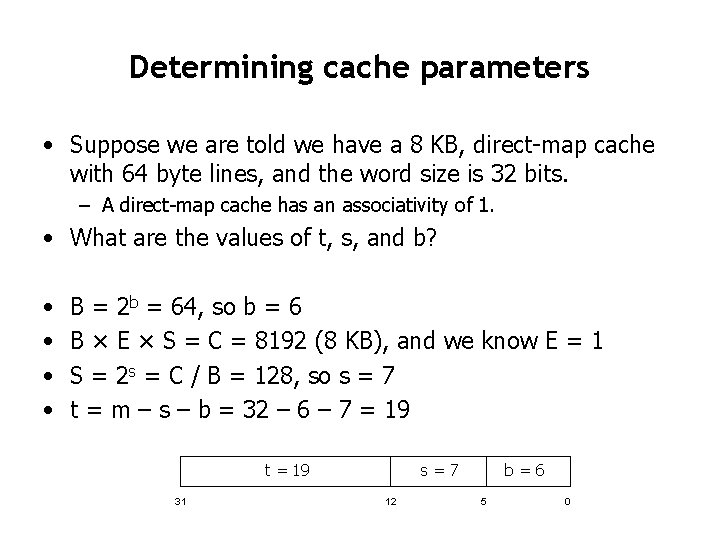

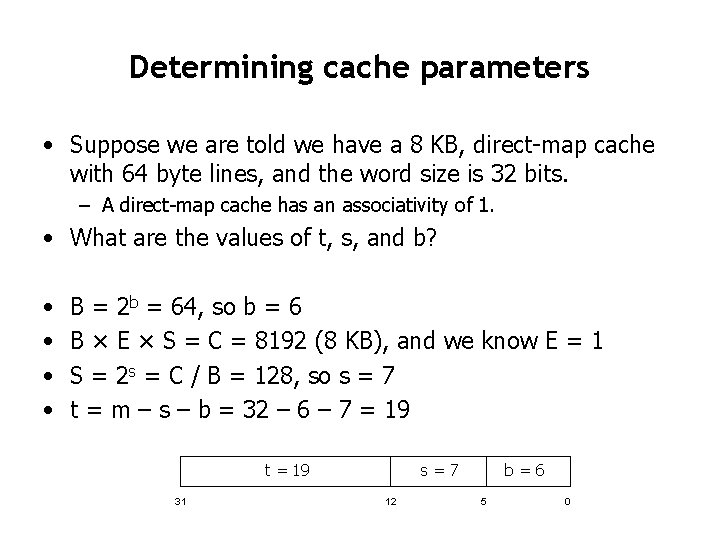

Determining cache parameters

• Suppose we are told we have an 8 KB, direct-mapped cache with 64-byte lines, and the word size is 32 bits (so addresses are m = 32 bits).
– A direct-mapped cache has an associativity of 1.
• What are the values of t, s, and b?
• B = 2^b = 64, so b = 6
• B × E × S = C = 8192 (8 KB), and we know E = 1
• S = 2^s = C / B = 128, so s = 7
• t = m – s – b = 32 – 7 – 6 = 19

| Field | t = 19 | s = 7 | b = 6 |
|---|---|---|---|
| Bits | 31 to 13 | 12 to 6 | 5 to 0 |

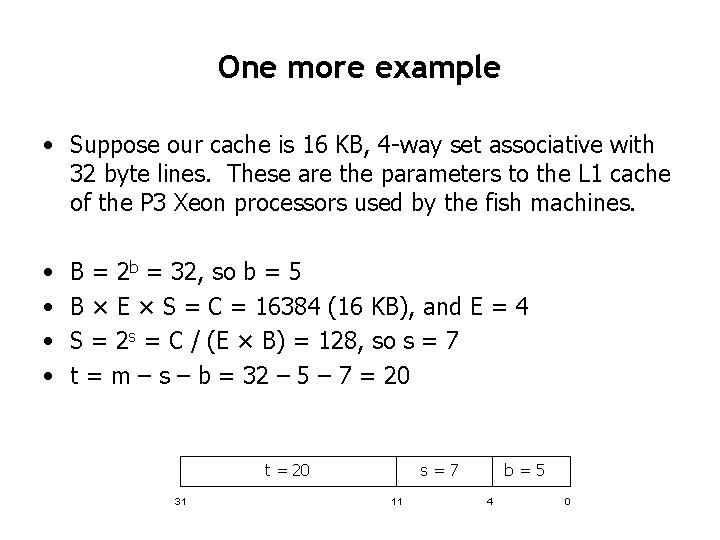

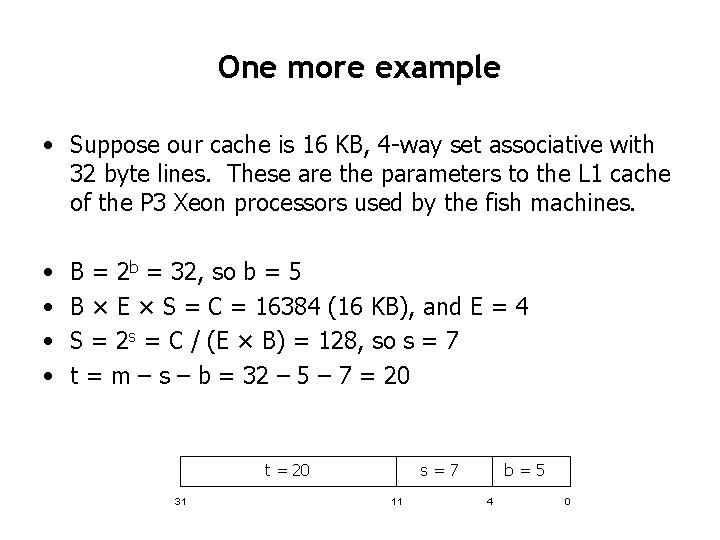

One more example

• Suppose our cache is 16 KB, 4-way set associative with 32-byte lines. These are the parameters of the L1 cache of the P3 Xeon processors used by the fish machines.
• B = 2^b = 32, so b = 5
• B × E × S = C = 16384 (16 KB), and E = 4
• S = 2^s = C / (E × B) = 128, so s = 7
• t = m – s – b = 32 – 7 – 5 = 20

| Field | t = 20 | s = 7 | b = 5 |
|---|---|---|---|
| Bits | 31 to 12 | 11 to 5 | 4 to 0 |
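The arithmetic in the two examples above can be checked mechanically. This is a minimal sketch that assumes B and S are powers of two and that addresses are 32 bits unless stated otherwise; the function name `cache_params` is hypothetical.

```python
def cache_params(C: int, E: int, B: int, m: int = 32) -> tuple[int, int, int]:
    """Return (t, s, b) for a cache of C bytes with E lines per set and B-byte lines."""
    S = C // (E * B)            # from C = B * E * S
    b = B.bit_length() - 1      # log2(B), assuming B is a power of two
    s = S.bit_length() - 1      # log2(S), assuming S is a power of two
    assert (1 << b) == B and (1 << s) == S and B * E * S == C
    return m - s - b, s, b      # t = m - s - b

print(cache_params(8 * 1024, E=1, B=64))    # (19, 7, 6)
print(cache_params(16 * 1024, E=4, B=32))   # (20, 7, 5)
```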

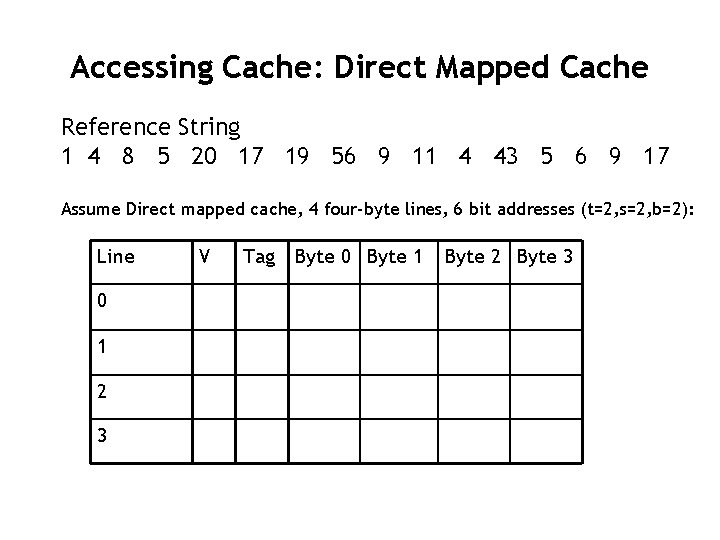

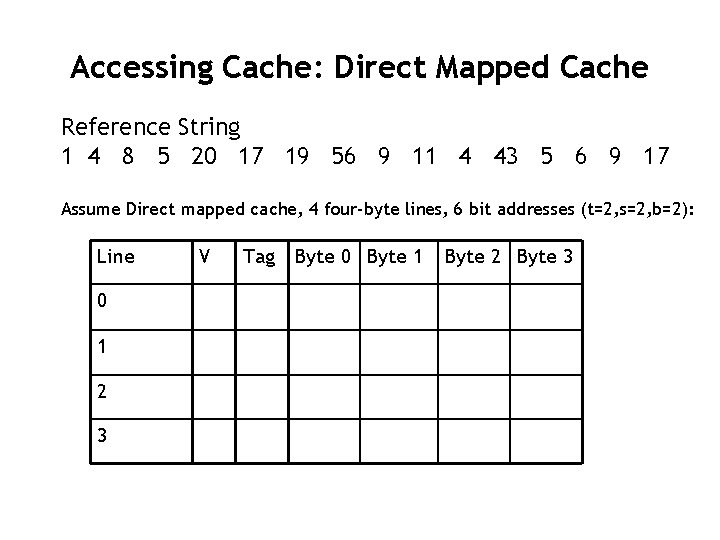

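Accessing Cache: Direct Mapped Cache

Reference String: 1 4 8 5 20 17 19 56 9 11 4 43 5 6 9 17

Assume a direct-mapped cache with 4 four-byte lines and 6-bit addresses (t=2, s=2, b=2):

| Line | V | Tag | Byte 0 | Byte 1 | Byte 2 | Byte 3 |
|---|---|---|---|---|---|---|
| 0 | | | | | | |
| 1 | | | | | | |
| 2 | | | | | | |
| 3 | | | | | | |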

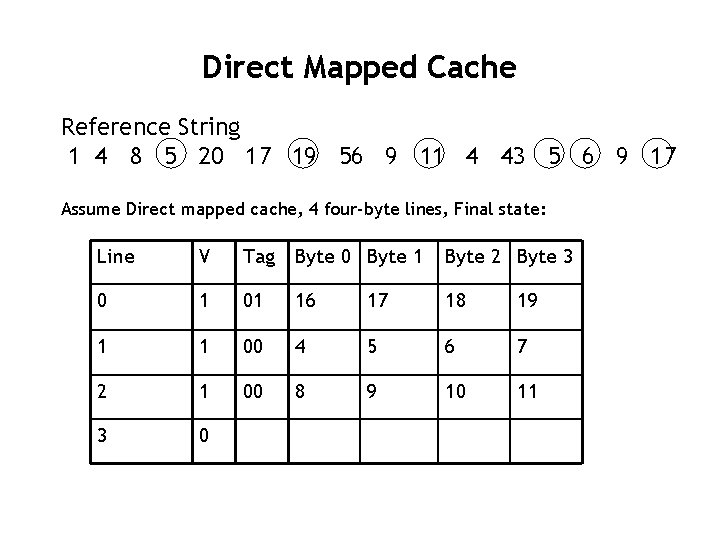

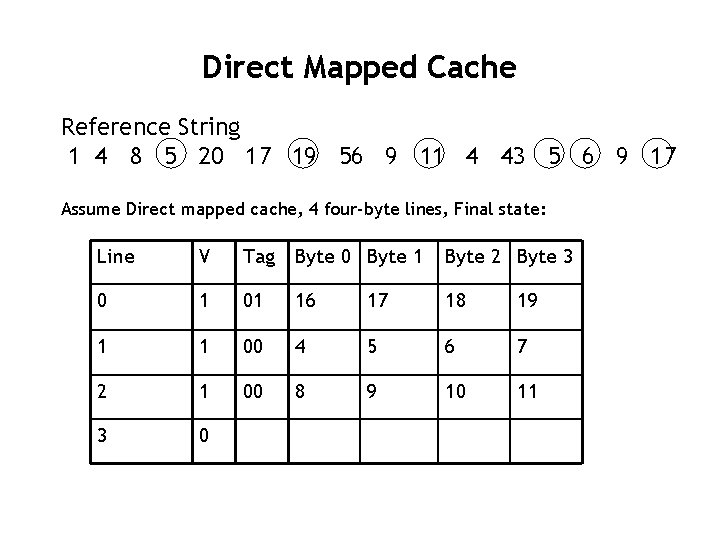

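Direct Mapped Cache

Reference String: 1 4 8 5 20 17 19 56 9 11 4 43 5 6 9 17

Assume a direct-mapped cache with 4 four-byte lines. Final state:

| Line | V | Tag | Byte 0 | Byte 1 | Byte 2 | Byte 3 |
|---|---|---|---|---|---|---|
| 0 | 1 | 01 | 16 | 17 | 18 | 19 |
| 1 | 1 | 00 | 4 | 5 | 6 | 7 |
| 2 | 1 | 00 | 8 | 9 | 10 | 11 |
| 3 | 0 | | | | | |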
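The final state can be reproduced with a short simulation. This is a sketch under the slide's parameters (s=2, b=2, 6-bit addresses); the function name and the use of `None` for an invalid line are choices made here for illustration.

```python
TRACE = [1, 4, 8, 5, 20, 17, 19, 56, 9, 11, 4, 43, 5, 6, 9, 17]

def simulate_direct_mapped(trace, s=2, b=2):
    """Return (lines, misses); lines[i] is the tag held by line i, or None if invalid."""
    lines = [None] * (1 << s)
    misses = 0
    for addr in trace:
        index = (addr >> b) & ((1 << s) - 1)
        tag = addr >> (s + b)
        if lines[index] != tag:     # invalid line or tag mismatch: miss
            lines[index] = tag      # load the block, replacing whatever was there
            misses += 1
    return lines, misses

lines, misses = simulate_direct_mapped(TRACE)
for i, tag in enumerate(lines):
    if tag is None:
        print(i, "invalid")
    else:
        base = (tag << 4) | (i << 2)    # first byte address of the cached block
        print(i, format(tag, "02b"), list(range(base, base + 4)))
print("misses:", misses)
```

Under these assumptions the simulation ends with tags 01, 00, 00 in lines 0 to 2, line 3 invalid, and 10 misses out of 16 references.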

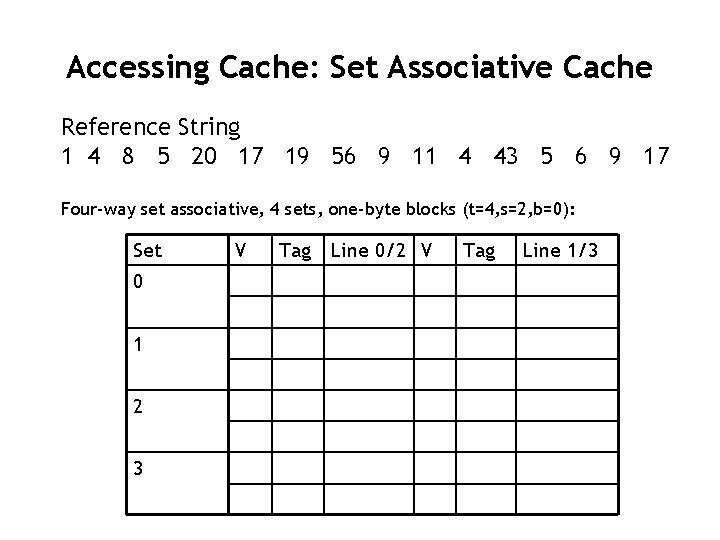

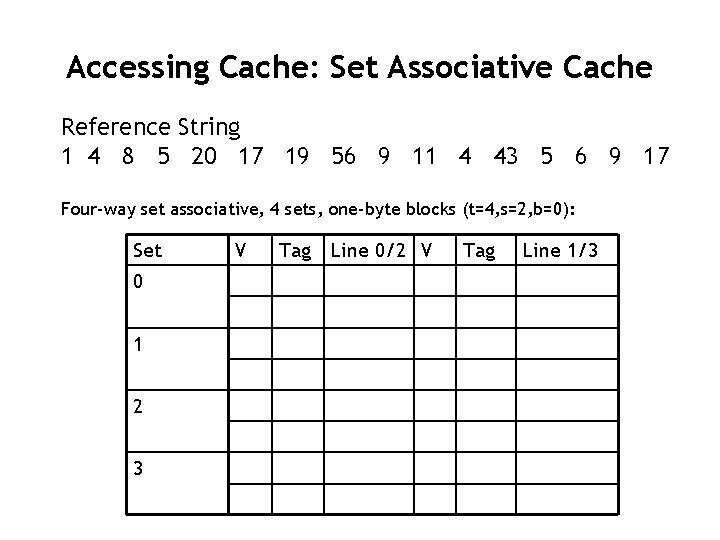

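Accessing Cache: Set Associative Cache

Reference String: 1 4 8 5 20 17 19 56 9 11 4 43 5 6 9 17

Four-way set associative, 4 sets, one-byte blocks (t=4, s=2, b=0). Each set occupies two rows (lines 0 and 1, then lines 2 and 3):

| Set | V | Tag | Line 0/2 | V | Tag | Line 1/3 |
|---|---|---|---|---|---|---|
| 0 | | | | | | |
| | | | | | | |
| 1 | | | | | | |
| | | | | | | |
| 2 | | | | | | |
| | | | | | | |
| 3 | | | | | | |
| | | | | | | |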

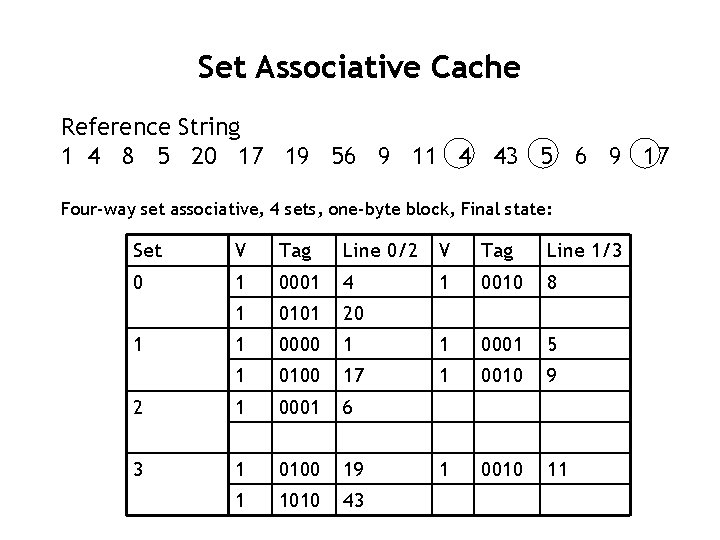

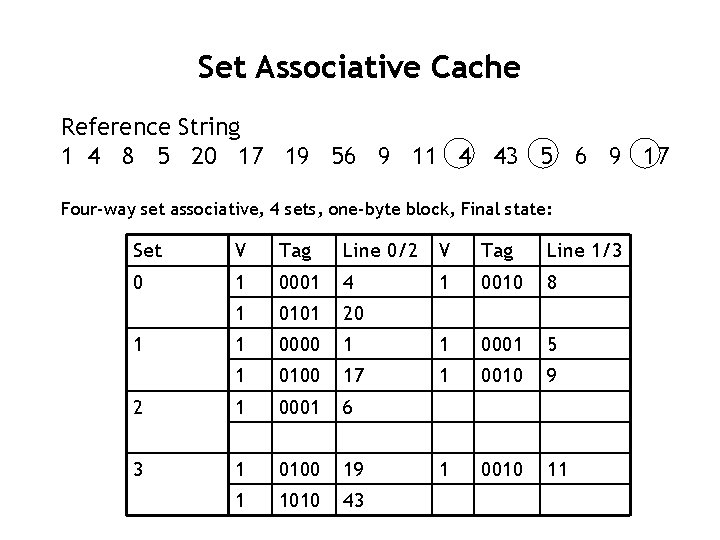

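Set Associative Cache

Reference String: 1 4 8 5 20 17 19 56 9 11 4 43 5 6 9 17

Four-way set associative, 4 sets, one-byte blocks. Final state (each set occupies two rows: lines 0 and 1, then lines 2 and 3):

| Set | V | Tag | Line 0/2 | V | Tag | Line 1/3 |
|---|---|---|---|---|---|---|
| 0 | 1 | 0001 | 4 | 1 | 0010 | 8 |
| | 1 | 0101 | 20 | 1 | 1110 | 56 |
| 1 | 1 | 0000 | 1 | 1 | 0001 | 5 |
| | 1 | 0100 | 17 | 1 | 0010 | 9 |
| 2 | 1 | 0001 | 6 | | | |
| | | | | | | |
| 3 | 1 | 0100 | 19 | 1 | 0010 | 11 |
| | 1 | 1010 | 43 | | | |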
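With one-byte blocks the set index is simply the low two address bits, so the final state follows from grouping the references by set. The sketch below (function name hypothetical) does that grouping and checks that no set ever needs more than four lines, which is why no replacement decision arises in this example.

```python
TRACE = [1, 4, 8, 5, 20, 17, 19, 56, 9, 11, 4, 43, 5, 6, 9, 17]
S_BITS, B_BITS, WAYS = 2, 0, 4      # parameters from the slide: s=2, b=0, E=4

def group_by_set(trace, s=S_BITS, b=B_BITS):
    """Map each set index to the distinct blocks that touch it, in first-use order."""
    sets = {i: [] for i in range(1 << s)}
    for addr in trace:
        block = addr >> b
        index = block & ((1 << s) - 1)
        if block not in sets[index]:
            sets[index].append(block)
    return sets

sets = group_by_set(TRACE)
for index, blocks in sets.items():
    print(index, [(format(blk >> S_BITS, "04b"), blk) for blk in blocks])

# No set sees more than E = 4 distinct blocks, so nothing is ever evicted.
assert all(len(blocks) <= WAYS for blocks in sets.values())
```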

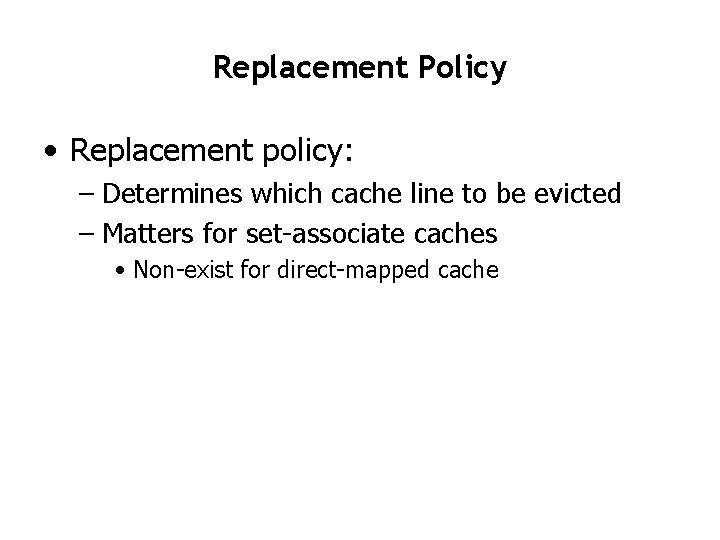

Replacement Policy

• Replacement policy:
– Determines which cache line is evicted when a set is full
– Matters for set-associative caches
• Does not apply to direct-mapped caches, where each set has only one line

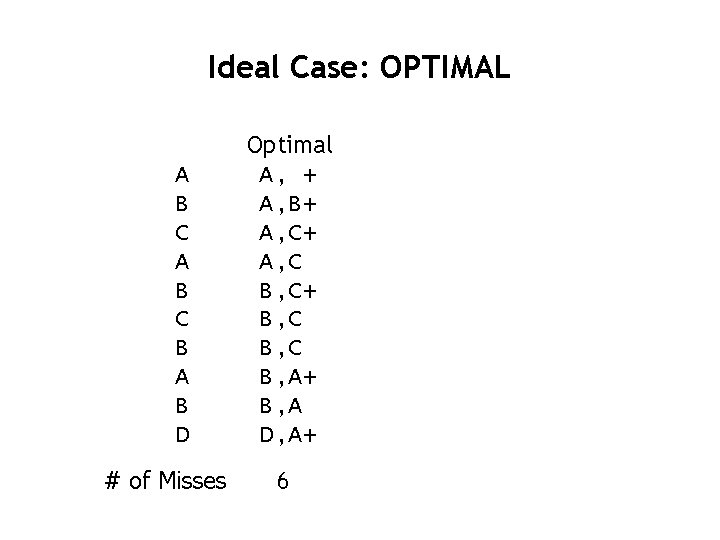

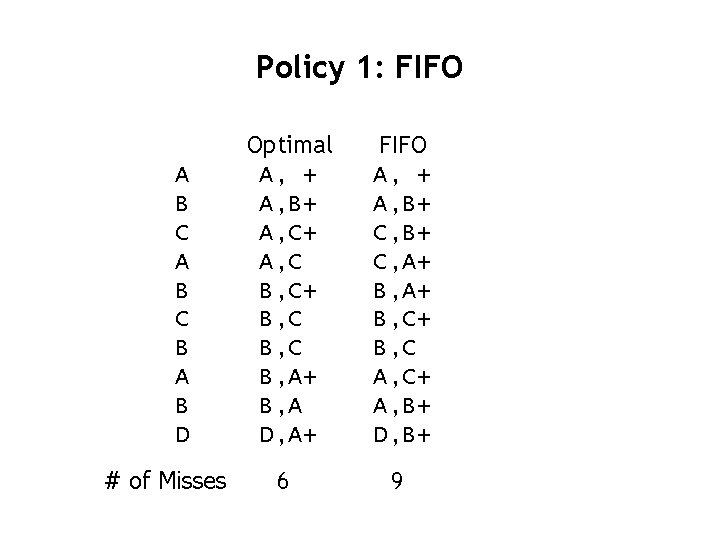

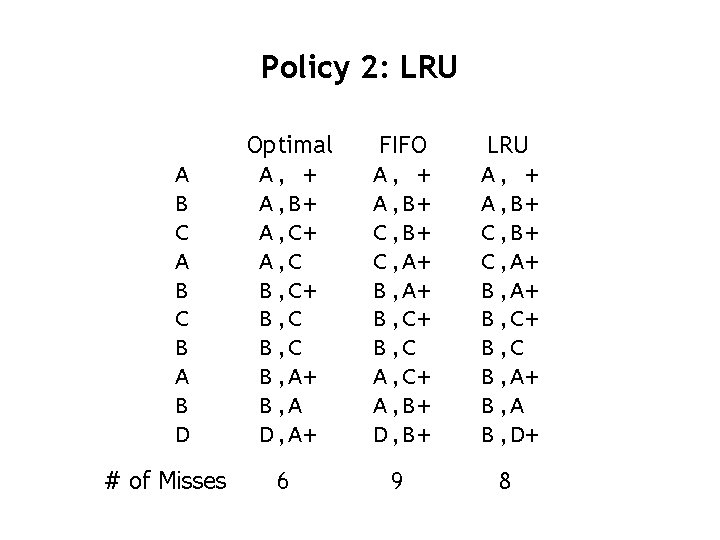

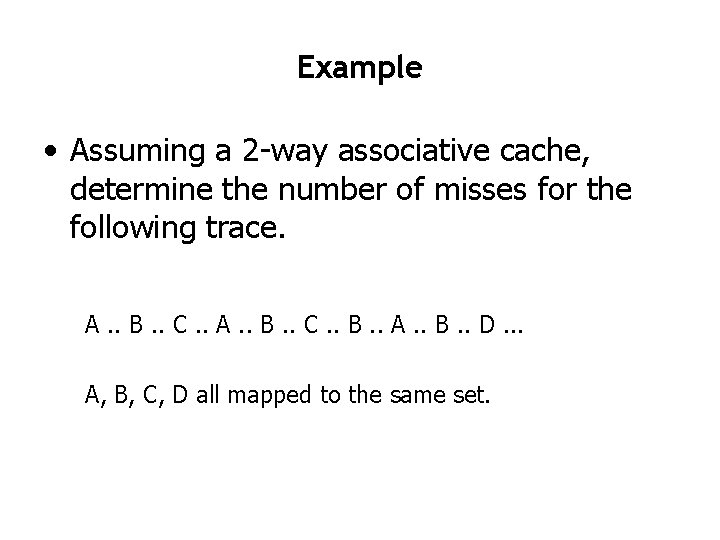

Example

• Assuming a 2-way associative cache, determine the number of misses for the following trace: A .. B .. C .. B .. A .. B .. D ...
• A, B, C, and D all map to the same set.

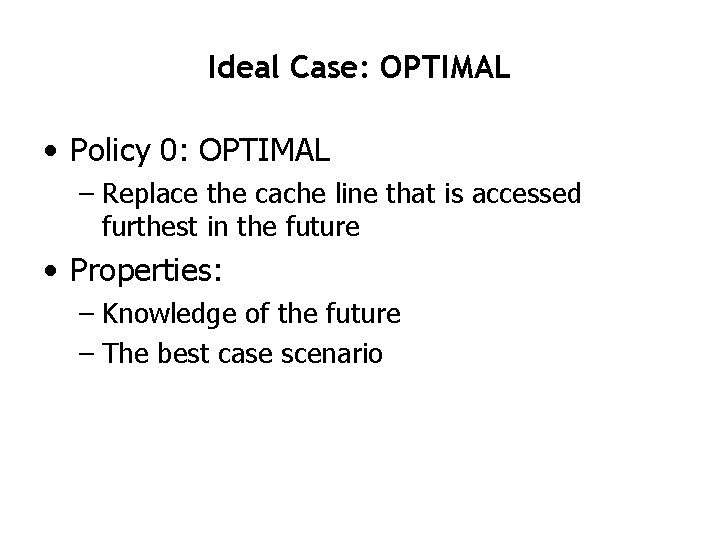

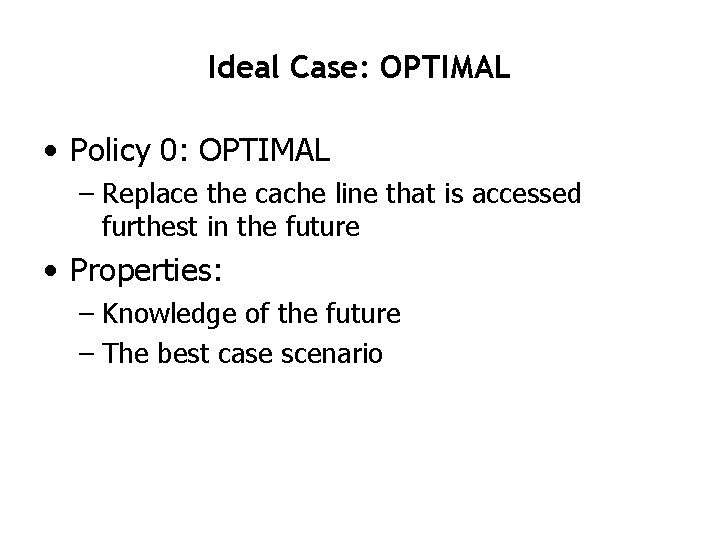

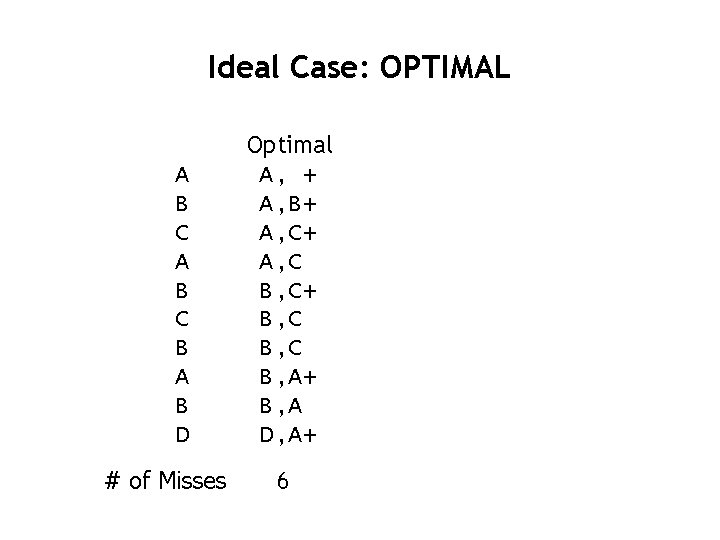

Ideal Case: OPTIMAL

• Policy 0: OPTIMAL
– Replace the cache line whose next access is furthest in the future
• Properties:
– Requires knowledge of the future
– The best-case scenario
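Because OPTIMAL needs the whole trace in advance, it can only be computed offline. The following is a minimal sketch for a single set; the trace used here is hypothetical and chosen only for illustration, and ties between lines that are never used again are broken by taking the first such line, which is an assumption.

```python
def optimal_misses(trace, ways=2):
    """Count misses for one set under the OPTIMAL policy."""
    cache, misses = [], 0
    for i, x in enumerate(trace):
        if x in cache:
            continue                    # hit: nothing changes
        misses += 1
        if len(cache) < ways:
            cache.append(x)             # a free line is available
            continue
        future = trace[i + 1:]

        def next_use(y):
            # Distance to the next access; never used again counts as infinitely far.
            return future.index(y) if y in future else float("inf")

        victim = max(cache, key=next_use)
        cache[cache.index(victim)] = x
    return misses

print(optimal_misses(list("ABACABDA")))   # hypothetical trace; prints 5
```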

Ideal Case: OPTIMAL

Trace: A B C B A B D

| Policy | Cache state | # of Misses |
|---|---|---|
| Optimal | A, + ; A, B+ ; A, C ; B, C+ ; B, C ; B, A+ ; B, A ; D, A+ | 6 |

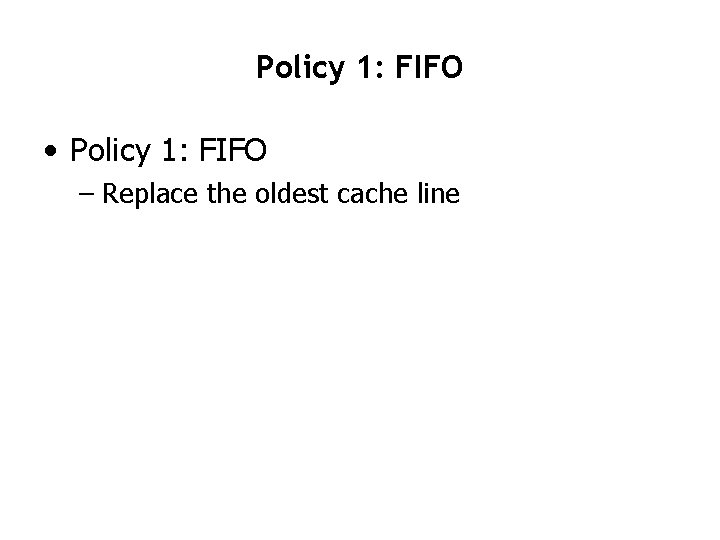

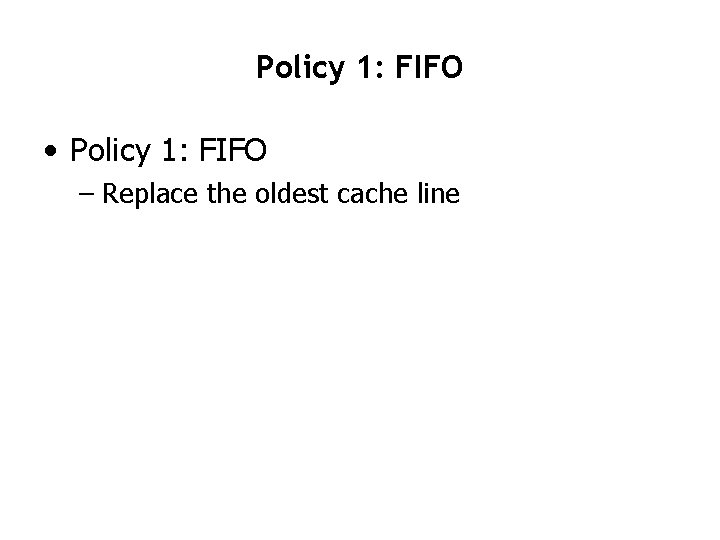

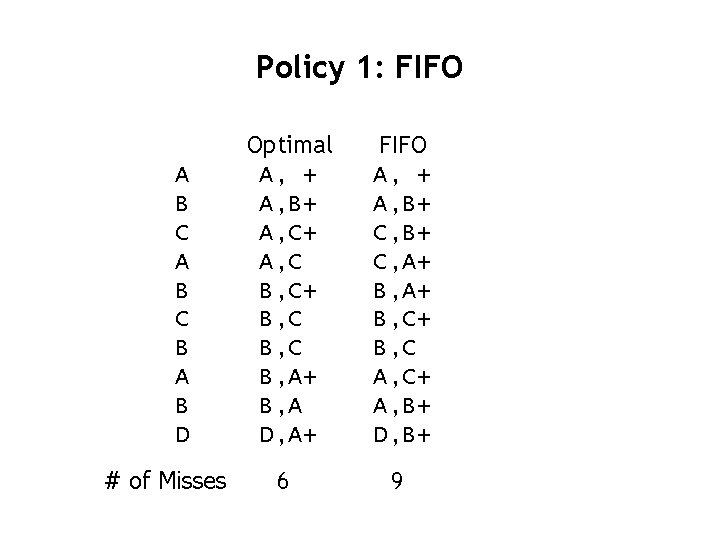

Policy 1: FIFO

• Policy 1: FIFO (first in, first out)
– Replace the oldest cache line, that is, the one loaded into the set earliest

Policy 1: FIFO

Trace: A B C B A B D

| Policy | Cache state | # of Misses |
|---|---|---|
| Optimal | A, + ; A, B+ ; A, C ; B, C+ ; B, C ; B, A+ ; B, A ; D, A+ | 6 |
| FIFO | A, + ; A, B+ ; C, A+ ; B, C+ ; B, C ; A, C+ ; A, B+ ; D, B+ | 9 |

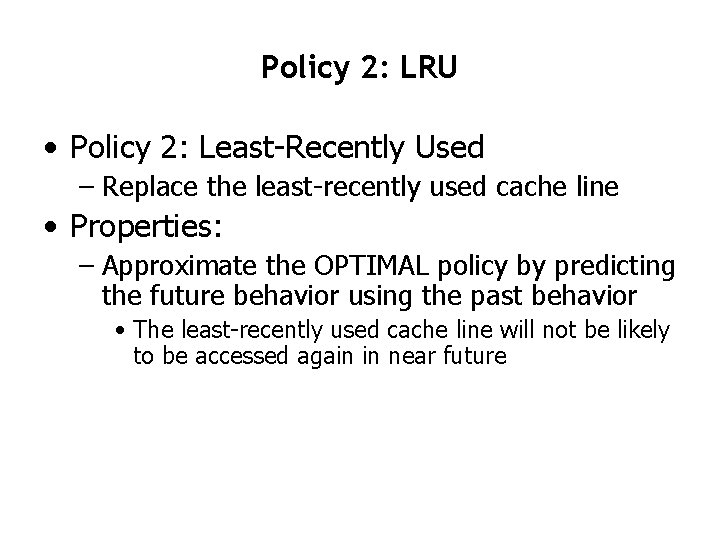

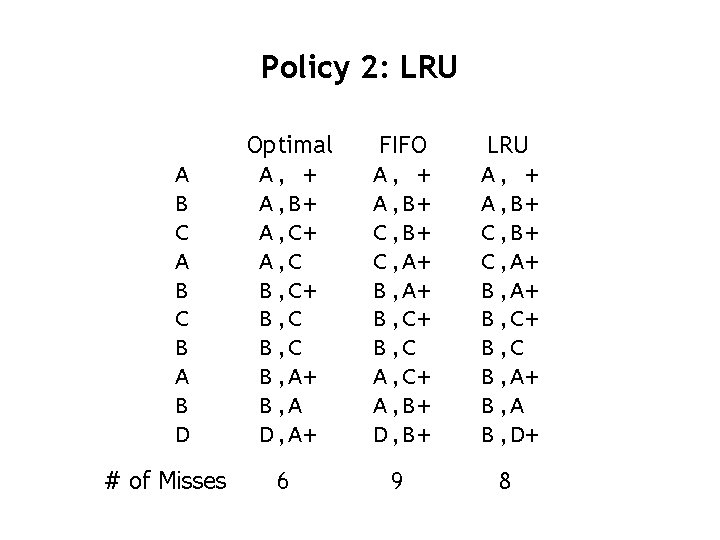

Policy 2: LRU

• Policy 2: Least-Recently Used
– Replace the least-recently used cache line
• Properties:
– Approximates the OPTIMAL policy by using past behavior to predict future behavior
• The least-recently used cache line is unlikely to be accessed again in the near future
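The difference between FIFO and LRU is whether a hit changes the eviction order. The sketch below counts misses for one set under each policy; the function names and the trace are hypothetical and serve only as an illustration.

```python
from collections import OrderedDict, deque

def fifo_misses(trace, ways=2):
    """Evict the line that was loaded earliest; hits do not change the order."""
    queue, misses = deque(), 0
    for x in trace:
        if x in queue:
            continue
        misses += 1
        if len(queue) == ways:
            queue.popleft()             # the oldest line leaves first
        queue.append(x)
    return misses

def lru_misses(trace, ways=2):
    """Evict the line whose last access is furthest in the past."""
    recency, misses = OrderedDict(), 0  # least recently used entry comes first
    for x in trace:
        if x in recency:
            recency.move_to_end(x)      # a hit makes the line most recently used
            continue
        misses += 1
        if len(recency) == ways:
            recency.popitem(last=False)
        recency[x] = True
    return misses

trace = list("ABACABDA")                # hypothetical trace, all mapped to one set
print(fifo_misses(trace), lru_misses(trace))   # 7 6
```

On this trace the repeated accesses to A keep it resident under LRU, while FIFO evicts it anyway because it was loaded first.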

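Policy 2: LRU

Trace: A B C B A B D

| Policy | Cache state | # of Misses |
|---|---|---|
| Optimal | A, + ; A, B+ ; A, C ; B, C+ ; B, C ; B, A+ ; B, A ; D, A+ | 6 |
| FIFO | A, + ; A, B+ ; C, A+ ; B, C+ ; B, C ; A, C+ ; A, B+ ; D, B+ | 9 |
| LRU | A, + ; A, B+ ; C, A+ ; B, C+ ; B, C ; B, A+ ; B, A ; B, D+ | 8 |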

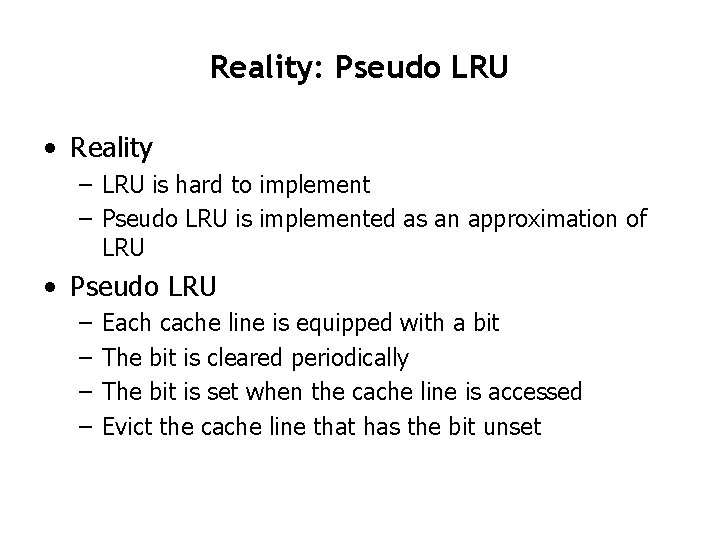

Reality: Pseudo LRU

• Reality
– LRU is hard to implement
– Pseudo LRU is implemented as an approximation of LRU
• Pseudo LRU
– Each cache line is equipped with a bit
– The bit is cleared periodically
– The bit is set when the cache line is accessed
– Evict a cache line whose bit is unset
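The bit-per-line scheme described above can be sketched as follows. The slide does not say which line to pick when several bits are unset, what to do when every bit is set, or when the periodic clearing happens; the choices below (first unset line, fall back to line 0, clearing triggered by the caller) are assumptions, and the class name is hypothetical.

```python
class PseudoLRUSet:
    """One cache set in which each line carries a single 'recently used' bit."""

    def __init__(self, ways=4):
        self.tags = [None] * ways
        self.used = [False] * ways

    def access(self, tag):
        """Return True on a hit, False on a miss (after loading the tag)."""
        if tag in self.tags:
            self.used[self.tags.index(tag)] = True   # the bit is set on access
            return True
        # Assumption: take the first line whose bit is unset; if every bit is
        # set, fall back to line 0.
        victim = self.used.index(False) if False in self.used else 0
        self.tags[victim] = tag
        self.used[victim] = True
        return False

    def clear_bits(self):
        """Periodic clearing; the caller decides when this runs (an assumption)."""
        self.used = [False] * len(self.used)

pset = PseudoLRUSet(ways=2)
results = [pset.access(t) for t in "ABA"]   # miss, miss, hit
pset.clear_bits()
pset.access("B")                            # hit: only B's bit is set again
pset.access("C")                            # miss: A's bit is unset, so A is evicted
print(results, pset.tags)
```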

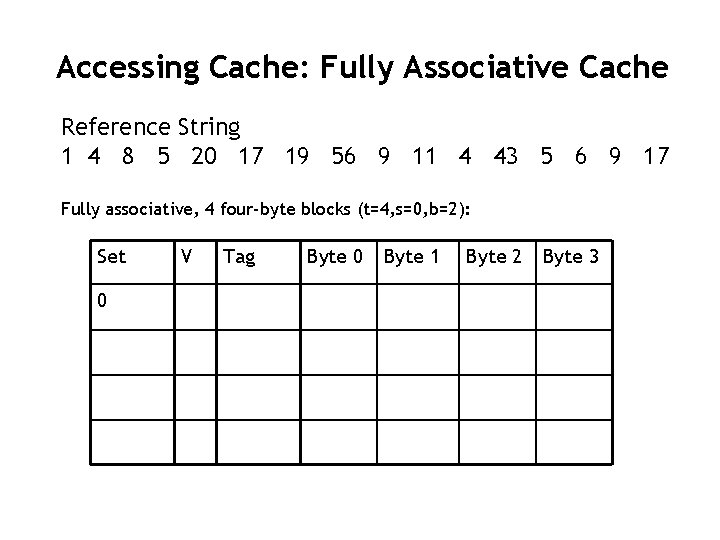

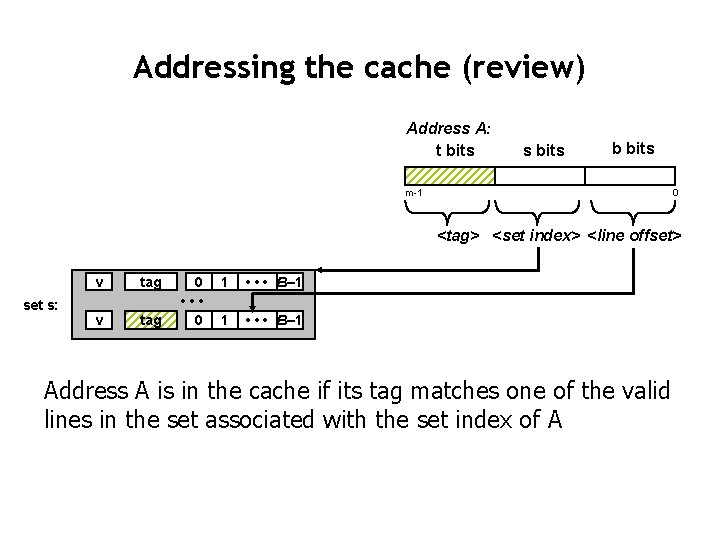

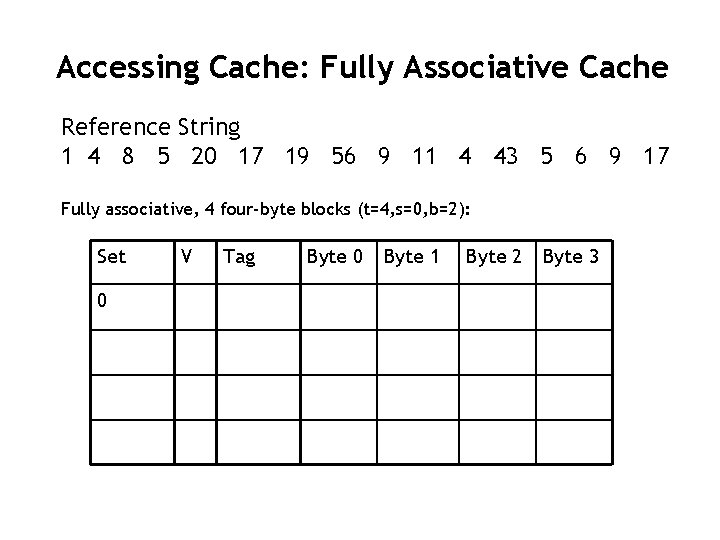

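Accessing Cache: Fully Associative Cache

Reference String: 1 4 8 5 20 17 19 56 9 11 4 43 5 6 9 17

Fully associative, 4 four-byte blocks (t=4, s=0, b=2):

| Set | V | Tag | Byte 0 | Byte 1 | Byte 2 | Byte 3 |
|---|---|---|---|---|---|---|
| 0 | | | | | | |
| | | | | | | |
| | | | | | | |
| | | | | | | |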

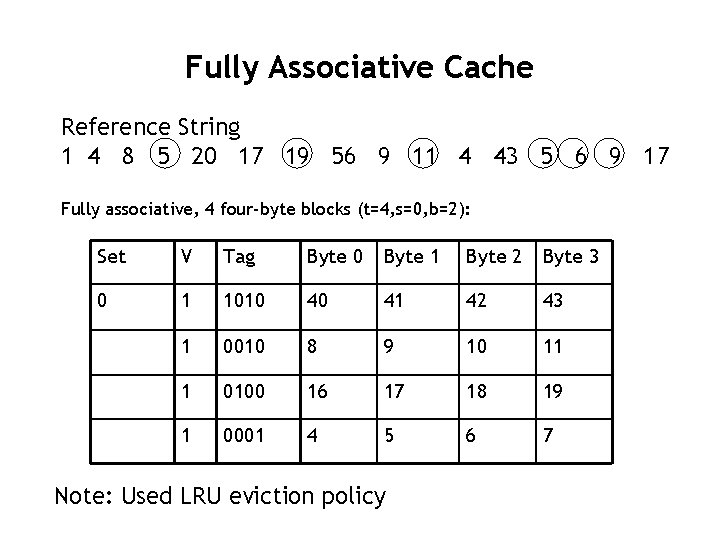

Fully Associative Cache

Reference String: 1 4 8 5 20 17 19 56 9 11 4 43 5 6 9 17

Fully associative, 4 four-byte blocks (t=4, s=0, b=2). Final state:

| Set | V | Tag | Byte 0 | Byte 1 | Byte 2 | Byte 3 |
|---|---|---|---|---|---|---|
| 0 | 1 | 1010 | 40 | 41 | 42 | 43 |
| | 1 | 0010 | 8 | 9 | 10 | 11 |
| | 1 | 0100 | 16 | 17 | 18 | 19 |
| | 1 | 0001 | 4 | 5 | 6 | 7 |

Note: Used LRU eviction policy
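The final state, including which block ends up in which line, can be reproduced with a small LRU simulation. This is a sketch: it assumes that empty lines are filled in order before any eviction happens, and the function name and timestamp bookkeeping are illustrative choices.

```python
TRACE = [1, 4, 8, 5, 20, 17, 19, 56, 9, 11, 4, 43, 5, 6, 9, 17]

def simulate_fully_associative(trace, lines=4, b=2):
    """Return (tags, misses); tags[i] is the tag in line i, or None if invalid."""
    tags = [None] * lines
    last_used = [-1] * lines        # time of last access; -1 means never used
    misses = 0
    for now, addr in enumerate(trace):
        tag = addr >> b             # s = 0, so every bit above the offset is tag
        if tag in tags:
            i = tags.index(tag)
        else:
            misses += 1
            i = last_used.index(min(last_used))   # an empty line first, else the LRU line
            tags[i] = tag
        last_used[i] = now
    return tags, misses

tags, misses = simulate_fully_associative(TRACE)
for tag in tags:
    print(format(tag, "04b"), list(range(tag << 2, (tag << 2) + 4)))
print("misses:", misses)
```

Under these assumptions the lines end up holding tags 1010, 0010, 0100, 0001 in that order, with 10 misses out of 16 references.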