
- Slides: 23

Cache Organization of Pentium

Instruction & Data Cache of Pentium

- Both caches are organized as 2-way set-associative caches with 128 sets (256 entries in total).
- Each line holds 32 bytes (8 KB / 256 lines).
- An LRU algorithm selects the victim line in each cache.
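
The geometry above fixes how an address maps onto the cache. The sketch below splits an address into tag, set index, and byte offset. The 32-bit address width and the function name `split_address` are assumptions made for illustration; they are not taken from the slides.

```python
# Cache geometry as stated on the slide.
LINE_SIZE = 32    # bytes per line
NUM_SETS = 128    # sets per cache
WAYS = 2          # lines per set
CACHE_SIZE = LINE_SIZE * NUM_SETS * WAYS  # 8192 bytes = 8 KB

OFFSET_BITS = LINE_SIZE.bit_length() - 1  # 5 bits select a byte in the line
INDEX_BITS = NUM_SETS.bit_length() - 1    # 7 bits select a set


def split_address(addr):
    """Split an address into (tag, set_index, offset).

    Assumes a 32-bit address, which leaves 32 - 7 - 5 = 20 tag bits.
    """
    offset = addr & (LINE_SIZE - 1)
    set_index = (addr >> OFFSET_BITS) & (NUM_SETS - 1)
    tag = addr >> (OFFSET_BITS + INDEX_BITS)
    return tag, set_index, offset


tag, set_index, offset = split_address(0x12345678)
print(hex(tag), set_index, offset)  # 0x12345 51 24
```

A lookup would compare the tag against the two tags stored in the selected set; a match on either way is a hit.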

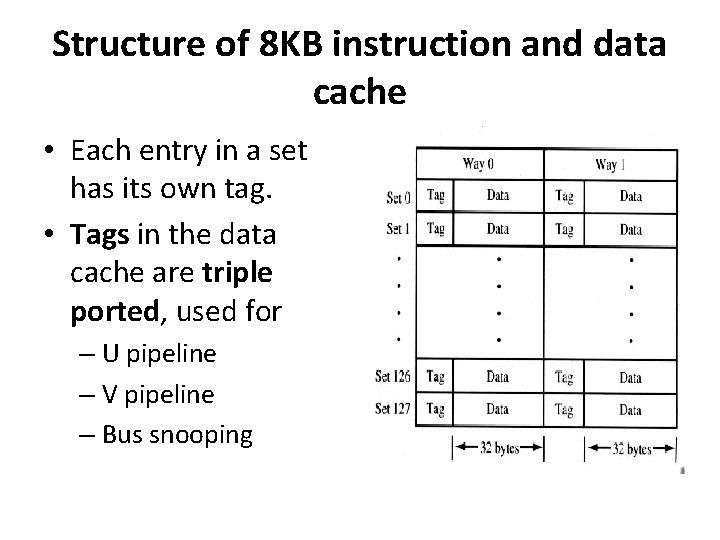

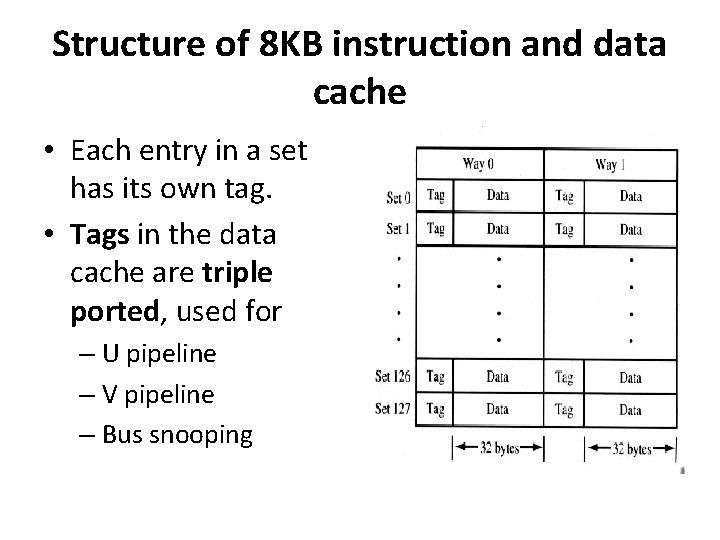

Structure of 8 KB instruction and data cache

- Each entry in a set has its own tag.
- Tags in the data cache are triple-ported, serving:
  - the U pipeline
  - the V pipeline
  - bus snooping

Data Cache of Pentium

- Bus snooping maintains consistent data in a multiprocessor system where each processor has a separate cache.
- Each entry in the data cache can be configured for write-through or write-back.

Instruction Cache of Pentium

- The instruction cache is write-protected to prevent self-modifying code from altering cached instructions.
- Tags in the instruction cache are also triple-ported:
  - two ports for split-line accesses
  - a third port for bus snooping

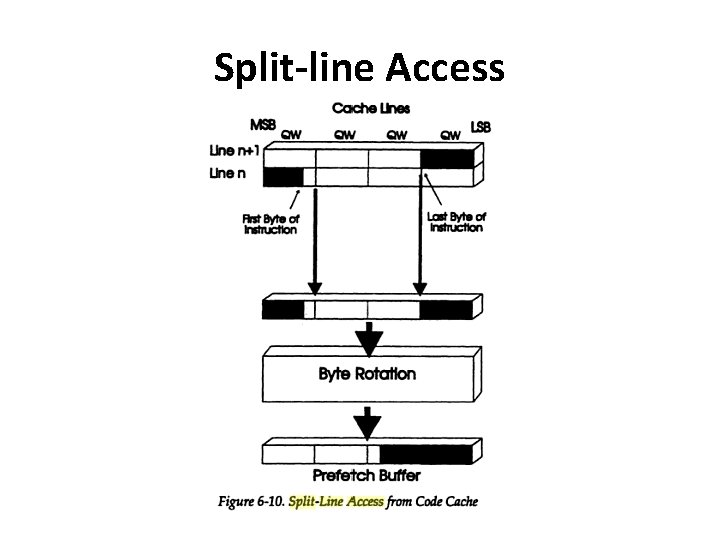

Split-line Access

- Because the Pentium is a CISC processor, its instructions are of variable length (1-15 bytes).
- A multibyte instruction may straddle two sequential lines stored in the code cache.
- Fetching such an instruction would then take two sequential accesses, which degrades performance.
- Solution: split-line access

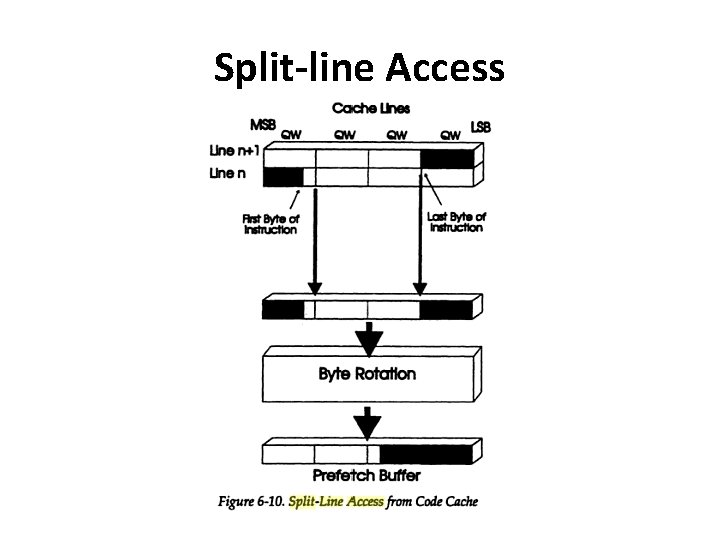

Split-line Access

Split-line Access

- It permits the upper half of one line and the lower half of the next line to be fetched from the code cache in one clock cycle.
- When a split line is read, the information is not correctly aligned.
- The bytes must be rotated so that the prefetch queue receives the instruction in the proper order.
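
The fetch-then-rotate idea can be sketched in software. This is a simplified model, not the actual hardware data path: it assumes each half-line arrives in its own position within the line (lower half from the next line, upper half from the current line), so the raw 32 bytes are out of program order until rotated. The function names are hypothetical.

```python
HALF = 16  # half of a 32-byte line


def split_line_read(cur_line, next_line):
    """Raw split-line read: each half keeps its position within the line.

    Assumed model: the lower half comes from the next line and the
    upper half from the current line, so the result is misaligned.
    """
    return next_line[:HALF] + cur_line[HALF:]


def rotate(raw, amount):
    """Rotate a byte string left by `amount` bytes."""
    return raw[amount:] + raw[:amount]


def fetch_straddling(cur_line, next_line):
    """Return 32 bytes in program order for the prefetch queue."""
    return rotate(split_line_read(cur_line, next_line), HALF)


cur_line = bytes(range(0, 32))    # line N holds byte values 0..31
next_line = bytes(range(32, 64))  # line N+1 holds byte values 32..63
fetched = fetch_straddling(cur_line, next_line)
print(list(fetched[:4]), list(fetched[-4:]))  # [16, 17, 18, 19] [44, 45, 46, 47]
```

An instruction that starts near the end of line N and ends in line N+1 is then available from a single access instead of two.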

Instruction & Data Cache of Pentium

- Parity bits are used to maintain data integrity.
- Each tag and every byte in the data cache has its own parity bit.
- There is one parity bit for every 8 bytes of data in the instruction cache.
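
The two parity granularities can be compared with a small sketch. Even parity is assumed here for illustration; the slides do not say which polarity the caches use, and the function names are hypothetical.

```python
def parity_bit(byte):
    """Even-parity bit for one byte (assumed polarity).

    The bit is 1 when the byte has an odd number of 1 bits, so that
    data plus parity always contains an even number of 1s.
    """
    return bin(byte & 0xFF).count("1") % 2


def data_cache_parity(line):
    """One parity bit per byte, as described for the data cache."""
    return [parity_bit(b) for b in line]


def code_cache_parity(line):
    """One parity bit per 8 bytes, as described for the instruction cache."""
    bits = []
    for i in range(0, len(line), 8):
        ones = sum(bin(b).count("1") for b in line[i:i + 8])
        bits.append(ones % 2)
    return bits


def byte_is_intact(byte, stored_parity):
    """Check a byte against its stored parity bit."""
    return parity_bit(byte) == stored_parity


line = bytes([0b1011] + [0] * 31)     # a 32-byte line with one nonzero byte
print(len(data_cache_parity(line)))   # 32 parity bits for the data cache
print(code_cache_parity(line))        # [1, 0, 0, 0] for the code cache
```

A single flipped bit changes the parity of its byte (or 8-byte group), which is how the error is detected; parity alone cannot correct it.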

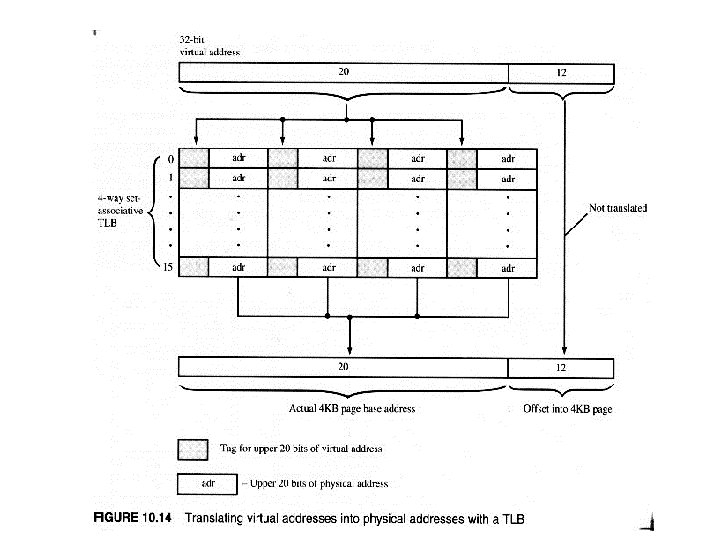

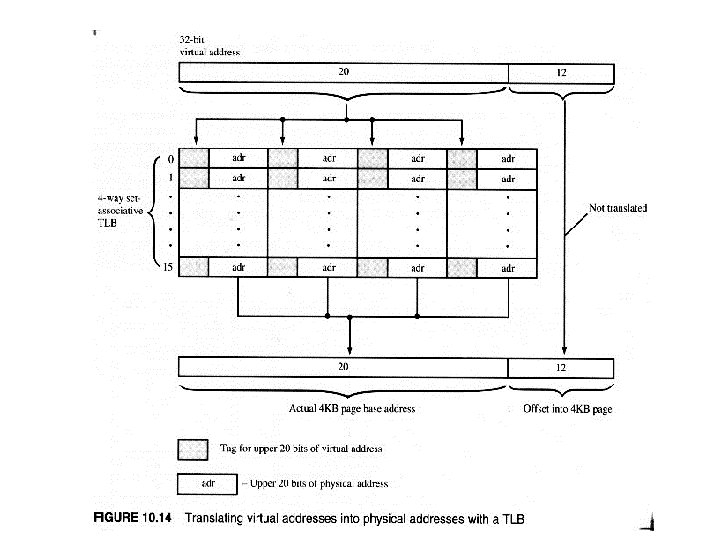

Translation Lookaside Buffers

- They translate virtual addresses to physical addresses.
- Data cache:
  - The data cache contains two TLBs.
- First TLB:
  - 4-way set-associative with 64 entries
  - Translates addresses for 4 KB pages of main memory

Translation Lookaside Buffers

- First TLB:
  - The lower 12 bits of the virtual and physical addresses are the same.
  - The upper 20 bits of the virtual address are checked against four tags and, on a hit, translated into the upper 20 bits of the physical address.
  - Since translation needs to be quick, the TLB is kept small.
- Second TLB:
  - 4-way set-associative with 8 entries
  - Used to handle 4 MB pages
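
A minimal sketch of the 4 KB-page lookup described above: the upper 20 bits are looked up, and the lower 12 bits pass through unchanged. Several details are assumptions made for illustration: the set is chosen by the low bits of the virtual page number, the full page number is stored as the tag, and replacement is simply oldest-first. The class name `Tlb` is hypothetical.

```python
PAGE_BITS = 12          # 4 KB pages: the low 12 bits are the page offset
WAYS = 4
ENTRIES = 64
SETS = ENTRIES // WAYS  # 16 sets of 4 entries


class Tlb:
    def __init__(self):
        # Each set holds up to WAYS (virtual_page, physical_frame) pairs.
        self.sets = [[] for _ in range(SETS)]

    def insert(self, vpn, pfn):
        ways = self.sets[vpn % SETS]   # assumed indexing: low bits of the page number
        if len(ways) == WAYS:
            ways.pop(0)                # placeholder policy: evict the oldest entry
        ways.append((vpn, pfn))

    def translate(self, vaddr):
        """Return the physical address, or None on a TLB miss."""
        vpn = vaddr >> PAGE_BITS
        offset = vaddr & ((1 << PAGE_BITS) - 1)
        for tag, pfn in self.sets[vpn % SETS]:   # compare against up to four tags
            if tag == vpn:
                return (pfn << PAGE_BITS) | offset
        return None


tlb = Tlb()
tlb.insert(0x12345, 0x00ABC)
print(hex(tlb.translate(0x12345678)))  # 0xabc678
print(tlb.translate(0x54321000))       # None (miss)
```

On a miss the processor would walk the page tables and then fill the TLB; that path is outside this sketch.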

Translation Lookaside Buffers

- Both data-cache TLBs are parity-protected and dual-ported.
- Instruction cache:
  - Uses a single 4-way set-associative TLB with 32 entries
  - Supports both 4 KB and 4 MB pages (4 MB pages are handled in 4 KB chunks)
- Parity bits are used on tags and data to maintain data integrity.
- Entries are placed in all three TLBs using a 3-bit LRU counter stored in each set.
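
The slides say only that each set keeps a 3-bit LRU counter. One common scheme that fits three bits for a 4-way set is tree-based pseudo-LRU, sketched below. Whether the Pentium's counter behaves exactly this way is an assumption, and the class name `PseudoLru4` is hypothetical.

```python
class PseudoLru4:
    """Tree pseudo-LRU for one 4-way set, using three bits.

    b0 picks the pair that holds the next victim (0 = ways 0-1, 1 = ways 2-3).
    b1 picks the victim inside ways 0-1; b2 picks it inside ways 2-3.
    """

    def __init__(self):
        self.b0 = 0
        self.b1 = 0
        self.b2 = 0

    def touch(self, way):
        """Record a use of `way` by pointing the bits away from it."""
        if way < 2:
            self.b0 = 1                       # next victim comes from ways 2-3
            self.b1 = 1 if way == 0 else 0    # within 0-1, point at the other way
        else:
            self.b0 = 0                       # next victim comes from ways 0-1
            self.b2 = 1 if way == 2 else 0    # within 2-3, point at the other way

    def victim(self):
        """Return the way to replace next."""
        if self.b0 == 0:
            return 0 if self.b1 == 0 else 1
        return 2 if self.b2 == 0 else 3


lru = PseudoLru4()
for way in (0, 2, 1, 3):
    lru.touch(way)
print(lru.victim())  # 0, the way touched longest ago
```

Three bits cannot record a full ordering of four ways, so this scheme approximates true LRU; it always avoids the most recently used way.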

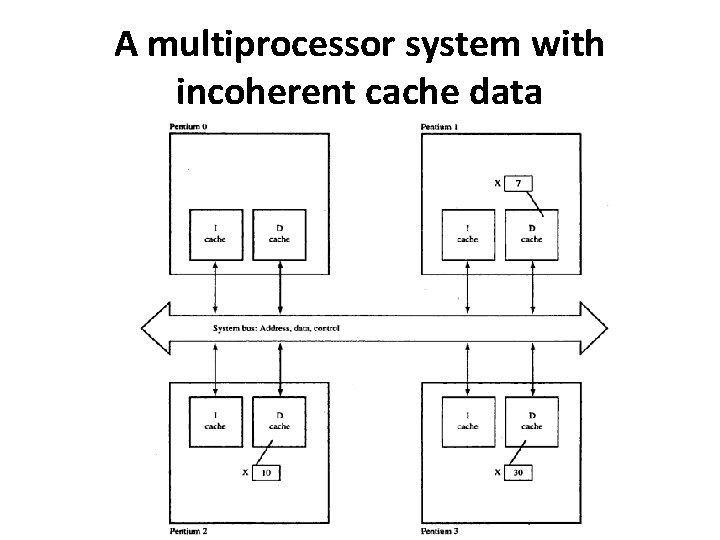

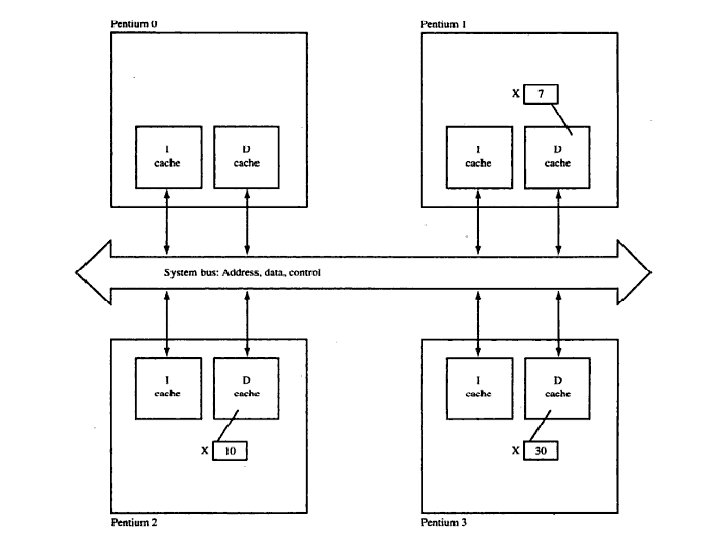

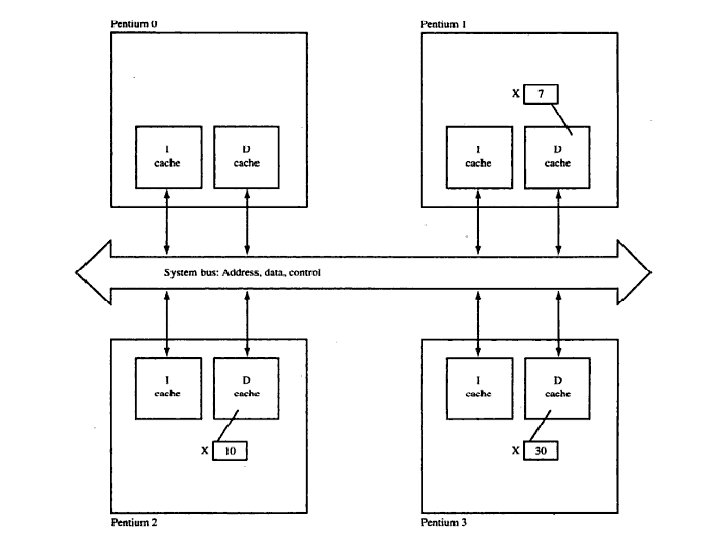

Cache Coherency in Multiprocessor Systems

- When multiple processors are used in a single system, there must be a mechanism by which all processors agree on the contents of shared cache information.
- For example, two or more processors may use data from the same memory location, X.
- Each processor may change the value of X, so which value of X should be treated as current?

Cache Coherency in Multiprocessor Systems

- If each processor changes the value of the data item, each cache holds a different (incoherent) value of X.
- Solution: a cache coherency mechanism

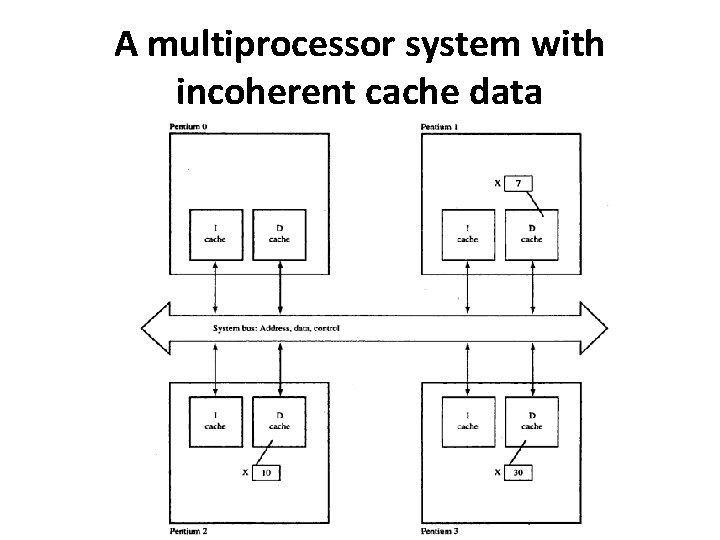

A multiprocessor system with incoherent cache data

Cache Coherency

- The Pentium’s mechanism is called the MESI (Modified/Exclusive/Shared/Invalid) protocol.
- This protocol uses two bits stored with each line of data to track the state of the cache line.

Cache Coherency

- The four states are defined as follows.
- Modified:
  - The current line has been modified and is available in only a single cache.
- Exclusive:
  - The current line has not been modified and is available in only a single cache.
  - Writing to this line changes its state to Modified.

Cache Coherency

- Shared:
  - Copies of the current line may exist in more than one cache.
  - A write to this line causes a write-through to main memory and may invalidate the copies in the other caches.
- Invalid:
  - The current line is empty.
  - A read from this line generates a miss.
  - A write causes a write-through to main memory.
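
The state definitions can be restated as a small sketch of what a local read or write does in each state. The two-bit encodings are arbitrary values chosen to show that four states fit in two bits; they are not the Pentium's actual encoding. The slides do not state the resulting state after a write to a Shared line, so the sketch leaves it unchanged and says so in a comment. The names `Mesi`, `read_hits`, and `local_write` are hypothetical.

```python
from enum import Enum


class Mesi(Enum):
    # Assumed encodings: any assignment of four states to two bits works.
    MODIFIED = 0b00
    EXCLUSIVE = 0b01
    SHARED = 0b10
    INVALID = 0b11


def read_hits(state):
    """A read hits in every state except Invalid."""
    return state is not Mesi.INVALID


def local_write(state):
    """Return (next_state, writes_through) for a write by the owning CPU."""
    if state in (Mesi.MODIFIED, Mesi.EXCLUSIVE):
        # The line is held by this cache only, so the write stays in the cache.
        return Mesi.MODIFIED, False
    # Shared and Invalid: the write goes through to main memory.
    # The state after a Shared write is not given in the slides,
    # so this sketch leaves the state unchanged.
    return state, True


for state in Mesi:
    print(state.name, read_hits(state), local_write(state))
```

Only the data cache needs all four rows of this table; the code cache is never written, so it has no use for Modified or Exclusive.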

Cache Coherency

- Only the Shared and Invalid states are used in the code cache.
- The MESI protocol requires the Pentium to monitor all accesses to main memory in a multiprocessor system. This is called bus snooping.

Cache Coherency

- Consider the example above.
- If Processor 3 writes its local copy of X (30) back to memory, the memory write cycle will be detected by the other three processors.
- Each processor will then run an internal inquire cycle to determine whether its data cache contains the address of X.
- Processors 1 and 2 then update their caches based on their individual MESI states.
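
The walk-through above can be modeled as a broadcast followed by one inquire cycle per snooping processor. The slides do not spell out how each cache updates itself, so this sketch assumes the simplest policy: a snooped write invalidates any valid copy, and a Modified copy must be written back first. The cache is modeled as a dictionary from line address to a state letter; all names are hypothetical.

```python
LINE_SIZE = 32


def inquire(cache, addr):
    """Run one inquire cycle. Returns (hit, needs_writeback).

    `cache` maps a line address to "M", "E", or "S"; a missing key means Invalid.
    Assumed policy: any valid copy is invalidated by the snooped write.
    """
    line = addr & ~(LINE_SIZE - 1)      # address of the line that holds addr
    state = cache.get(line, "I")
    if state == "I":
        return False, False             # this cache does not hold the line
    needs_writeback = state == "M"      # dirty data must reach memory first
    cache[line] = "I"
    return True, needs_writeback


def broadcast_write(caches, writer, addr):
    """Every processor except the writer snoops the memory write."""
    return {cpu: inquire(cache, addr)
            for cpu, cache in caches.items() if cpu != writer}


# Four processors; X lives in the line at 0x1000.
caches = {1: {0x1000: "S"}, 2: {0x1000: "S"}, 3: {0x1000: "M"}, 4: {}}
print(broadcast_write(caches, writer=3, addr=0x1010))
# {1: (True, False), 2: (True, False), 4: (False, False)}
print(caches[1], caches[2])  # both copies are now "I"
```

After the broadcast, Processors 1 and 2 will miss on their next read of X and fetch the value that Processor 3 wrote.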

Cache Coherency

- Inquire cycles examine the code cache as well, since the code cache supports bus snooping.
- The Pentium’s address lines are used as inputs during an inquire cycle to accomplish bus snooping.