VARIABLE/DIMENSION REDUCTION IN PYTHON
MAHUA DUTTA

DIMENSION REDUCTION
• Dimensionality reduction finds a lower-dimensional representation of a dataset that preserves as much information as possible about the original data.
• It assumes that the data effectively reside in a space of lower dimensionality.
• Essentially, we assume that some of the data is noise, and that the useful part can be approximated in a lower-dimensional space.
• Dimensionality reduction does not just reduce the amount of data; it often brings out the useful part of the data.
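To make the "signal plus noise" idea concrete, here is a minimal sketch on synthetic data (an assumption; this is not the loan data). Ten observed columns are generated from two hidden factors plus Gaussian noise. Projecting onto two principal components and mapping back with scikit-learn's `PCA.inverse_transform` gives a result closer to the noise-free signal than the raw observations are.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)
latent = rng.normal(size=(1000, 2))                       # 2 hidden factors (synthetic)
mixing = rng.normal(size=(2, 10))
clean = latent @ mixing                                   # useful part: a 2-D subspace of 10-D space
noisy = clean + rng.normal(scale=0.3, size=clean.shape)   # observed data = signal + noise

pca = PCA(n_components=2).fit(noisy)
denoised = pca.inverse_transform(pca.transform(noisy))    # project to 2-D, then map back to 10-D

err_noisy = np.mean((noisy - clean) ** 2)        # distance of raw data from the clean signal
err_denoised = np.mean((denoised - clean) ** 2)  # distance of the 2-D approximation from it
print(f"raw: {err_noisy:.3f}  2-D approximation: {err_denoised:.3f}")
```

Most of the noise lies outside the 2-D subspace, so discarding the remaining eight directions removes it while keeping nearly all of the signal.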

TECHNIQUES OF DIMENSION REDUCTION
1. High number of missing values
2. Low variance
3. High correlation with other data columns
4. Principal Component Analysis (PCA)
6. Backward feature elimination
7. Forward feature construction
The technique selected here is Principal Component Analysis (PCA).
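The first three techniques in the list are simple column filters. Below is a minimal pandas sketch of them. The helper name `filter_columns` and its thresholds (more than 50% missing, variance below 0.01, absolute correlation above 0.9) are hypothetical, illustrative choices, not values from the original analysis.

```python
import numpy as np
import pandas as pd

def filter_columns(df, max_missing=0.5, min_variance=0.01, max_corr=0.9):
    """Hypothetical helper: apply the three simple filters. Thresholds are illustrative."""
    # 1. High number of missing values: keep columns whose missing ratio is at most max_missing
    df = df.loc[:, df.isna().mean() <= max_missing]
    # 2. Low variance: drop numeric columns whose variance is below min_variance
    variances = df.var(numeric_only=True)
    df = df.drop(columns=variances[variances < min_variance].index)
    # 3. High correlation: for each highly correlated pair, drop the later column
    corr = df.corr(numeric_only=True).abs()
    upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))
    to_drop = [col for col in upper.columns if (upper[col] > max_corr).any()]
    return df.drop(columns=to_drop)

rng = np.random.default_rng(0)
a = rng.normal(size=200)
demo = pd.DataFrame({
    "a": a,
    "a_rescaled": a * 2.54 + rng.normal(scale=0.01, size=200),      # nearly a copy of "a"
    "constant": np.ones(200),                                        # zero variance
    "mostly_missing": np.where(np.arange(200) < 150, np.nan, 1.0),   # 75% missing
    "b": rng.normal(size=200),
})
print(filter_columns(demo).columns.tolist())
```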

PCA
Principal Component Analysis (PCA) is a statistical procedure that uses an orthogonal transformation to convert the original ‘n’ coordinates of a data set into a new set of ‘n’ coordinates called principal components.
a. The result of this transformation is that the first principal component accounts for the largest possible variance.
b. Each succeeding component has the highest possible variance under the constraint that it is orthogonal to (i.e., uncorrelated with) the preceding components.
c. The principal components are orthogonal because they are the eigenvectors of the symmetric covariance matrix.
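Points a to c can be checked numerically with NumPy on a small synthetic dataset (an assumption for illustration). The eigenvectors of the covariance matrix form an orthonormal set. The data projected onto them have a diagonal covariance matrix, so the components are uncorrelated. The variances along them are the eigenvalues, which sorting places in descending order.

```python
import numpy as np

rng = np.random.default_rng(42)
# Synthetic correlated 3-D data
X = rng.normal(size=(500, 3)) @ np.array([[3.0, 0.0, 0.0],
                                          [1.0, 1.0, 0.0],
                                          [0.5, 0.2, 0.3]])
Xc = X - X.mean(axis=0)                  # center the data
cov = np.cov(Xc, rowvar=False)           # 3x3 symmetric covariance matrix

# eigh is meant for symmetric matrices and returns eigenvalues in ascending order
eigvals, eigvecs = np.linalg.eigh(cov)
order = np.argsort(eigvals)[::-1]        # descending: PC1 first
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

scores = Xc @ eigvecs                    # coordinates on the principal components
print(np.allclose(eigvecs.T @ eigvecs, np.eye(3)))                  # orthonormal components
print(np.allclose(np.cov(scores, rowvar=False), np.diag(eigvals)))  # uncorrelated; variances = eigenvalues
print(eigvals)                                                      # largest variance first
```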

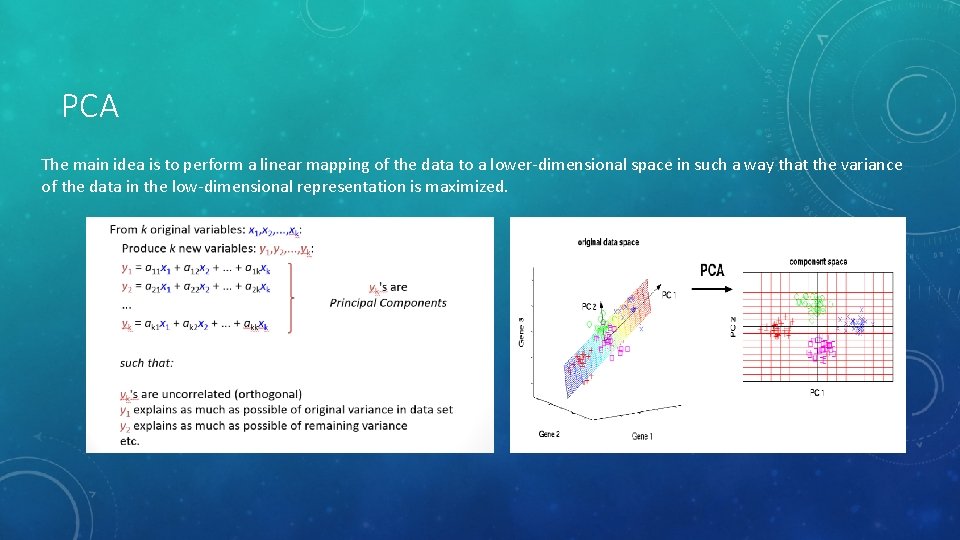

PCA
The main idea is to perform a linear mapping of the data to a lower-dimensional space in such a way that the variance of the data in the low-dimensional representation is maximized.
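The "maximize the variance" criterion can be illustrated by brute force on synthetic 2-D data (an assumption). Projecting onto the leading eigenvector gives a variance equal to the largest eigenvalue. None of 1,000 random unit directions does better, because the variance along a unit vector u is uᵀCu, which cannot exceed the largest eigenvalue of C.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(1000, 2)) @ np.array([[2.0, 0.0], [1.5, 0.5]])  # synthetic, elongated cloud
Xc = X - X.mean(axis=0)

eigvals, eigvecs = np.linalg.eigh(np.cov(Xc, rowvar=False))
pc1 = eigvecs[:, np.argmax(eigvals)]        # direction of maximum variance

def projected_variance(direction):
    """Variance of the data after projecting onto the unit vector along `direction`."""
    u = direction / np.linalg.norm(direction)
    return np.var(Xc @ u, ddof=1)

random_dirs = rng.normal(size=(1000, 2))    # random directions for comparison
best_random = max(projected_variance(d) for d in random_dirs)
print(projected_variance(pc1) >= best_random)               # no random direction beats PC1
print(np.isclose(projected_variance(pc1), eigvals.max()))   # equals the largest eigenvalue
```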

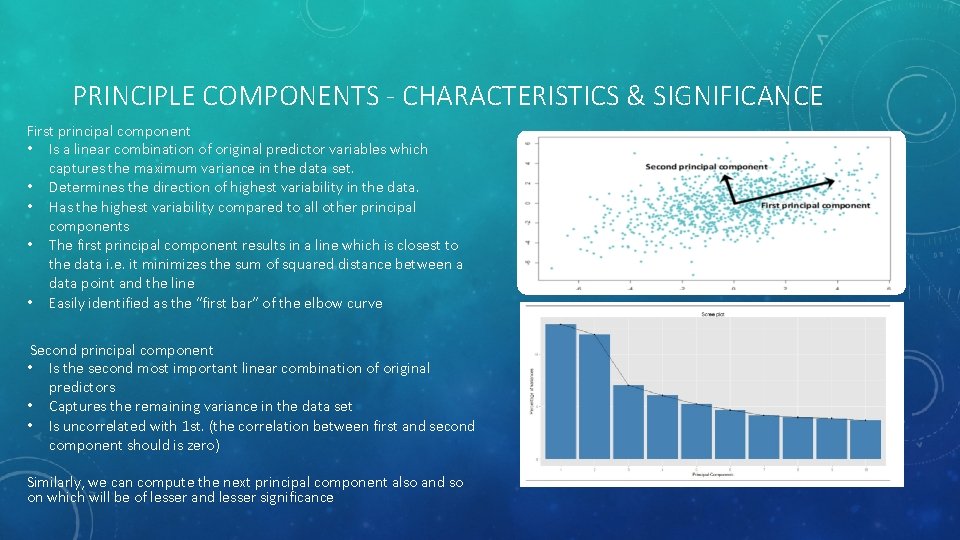

PRINCIPAL COMPONENTS: CHARACTERISTICS & SIGNIFICANCE
First principal component
• Is a linear combination of the original predictor variables that captures the maximum variance in the data set.
• Determines the direction of highest variability in the data.
• Has the highest variability of all the principal components.
• Defines the line that is closest to the data, i.e. it minimizes the sum of squared perpendicular distances between the data points and the line.
• Is easily identified as the "first bar" of the elbow curve (scree plot).
Second principal component
• Is the second most important linear combination of the original predictors.
• Captures the largest share of the variance remaining after the first component.
• Is uncorrelated with the first component (the correlation between the first and second components is zero).
Subsequent principal components are computed in the same way, each of lesser significance than the one before.
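Two of the properties above can be verified with scikit-learn's `PCA` on synthetic 2-D data (an assumption). The PC1 and PC2 scores have zero correlation. The line through the mean along PC1 has a smaller sum of squared perpendicular distances than any of 181 other lines tried. The helper `sq_distance_to_line` is hypothetical and written only for this check.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(1)
X = rng.normal(size=(300, 2)) @ np.array([[1.0, 0.8], [0.0, 0.4]])  # synthetic 2-D data
pca = PCA(n_components=2).fit(X)
Z = pca.transform(X)                       # column 0: PC1 scores, column 1: PC2 scores

corr_12 = np.corrcoef(Z[:, 0], Z[:, 1])[0, 1]
print(np.isclose(corr_12, 0.0))            # PC1 and PC2 are uncorrelated

def sq_distance_to_line(direction):
    """Sum of squared perpendicular distances from the points to a line through the mean."""
    u = direction / np.linalg.norm(direction)
    Xc = X - pca.mean_
    residual = Xc - np.outer(Xc @ u, u)    # remove each point's component along u
    return np.sum(residual ** 2)

angles = np.linspace(0, np.pi, 181)
other_lines = [sq_distance_to_line(np.array([np.cos(t), np.sin(t)])) for t in angles]
print(sq_distance_to_line(pca.components_[0]) <= min(other_lines))  # PC1 line is closest
print(pca.explained_variance_ratio_)       # descending: PC1 is the first bar of the scree plot
```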

WHY USE IT
• It compresses the data and reduces the storage space required.
• It reduces the time required to perform the same computations. Fewer dimensions mean less computation, and may allow the use of algorithms that are unsuitable for a large number of dimensions.
• It takes care of multicollinearity, which can improve model performance.
• It removes redundant features. For example, there is no point in storing the same value in two different units (meters and inches).
• It helps produce better visualizations of high-dimensional data. Reducing the data to 2D or 3D allows us to plot and visualize it, and then observe patterns more clearly.
• It can help remove noise, which in turn may improve model performance.
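The meters-and-inches example can be reproduced with synthetic data (an assumption). The same height is stored twice in different units next to an unrelated weight column. After standardization the two height columns are identical, so PCA finds that the last component explains essentially no variance, and two components carry all the information.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(7)
height_m = rng.normal(1.7, 0.1, size=500)   # synthetic heights in meters
height_in = height_m / 0.0254               # the same quantity in inches (1 in = 0.0254 m)
weight_kg = rng.normal(70, 10, size=500)    # an unrelated feature
X = StandardScaler().fit_transform(np.column_stack([height_m, height_in, weight_kg]))

pca = PCA().fit(X)
# The last ratio is ~0: the meters/inches pair contributes only one dimension of information
print(pca.explained_variance_ratio_.round(3))
```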

A SUMMARY OF THE PCA APPROACH: UNSUPERVISED TECHNIQUE
• The target variable is not used (PCA is an unsupervised process).
• Standardize the data.
• Obtain the eigenvectors and eigenvalues from the covariance matrix or correlation matrix, or perform Singular Value Decomposition.
• Sort the eigenvalues in descending order and choose the k eigenvectors that correspond to the k largest eigenvalues, where k is the number of dimensions of the new feature subspace (k ≤ d, with d the number of original dimensions).
• Construct the projection matrix W from the selected k eigenvectors.
• Transform the original dataset X via W to obtain a k-dimensional feature subspace Y (Y = XW).
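The summary steps translate almost line for line into NumPy. The sketch below follows them on synthetic data (an assumption), using the covariance-matrix route. The function name `pca_from_scratch` is hypothetical. It then compares the result with scikit-learn's `PCA` up to sign, because an eigenvector and its negative are equally valid.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

def pca_from_scratch(X, k):
    """Hypothetical helper following the summary steps (covariance-matrix route)."""
    # Standardize: zero mean, unit variance per column
    X_std = (X - X.mean(axis=0)) / X.std(axis=0)
    # Eigenvectors and eigenvalues of the covariance matrix
    eigvals, eigvecs = np.linalg.eigh(np.cov(X_std, rowvar=False))
    # Sort eigenvalues in descending order and keep the top k
    order = np.argsort(eigvals)[::-1][:k]
    # Projection matrix W (d x k) from the selected eigenvectors
    W = eigvecs[:, order]
    # Y = XW: the k-dimensional representation
    return X_std @ W

rng = np.random.default_rng(3)
X = rng.normal(size=(200, 5)) @ rng.normal(size=(5, 5))   # synthetic correlated data
Y = pca_from_scratch(X, k=2)

# Compare with scikit-learn; eigenvector signs are arbitrary, so compare absolute values
Y_sk = PCA(n_components=2).fit_transform(StandardScaler().fit_transform(X))
print(Y.shape)
print(np.allclose(np.abs(Y), np.abs(Y_sk)))
```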

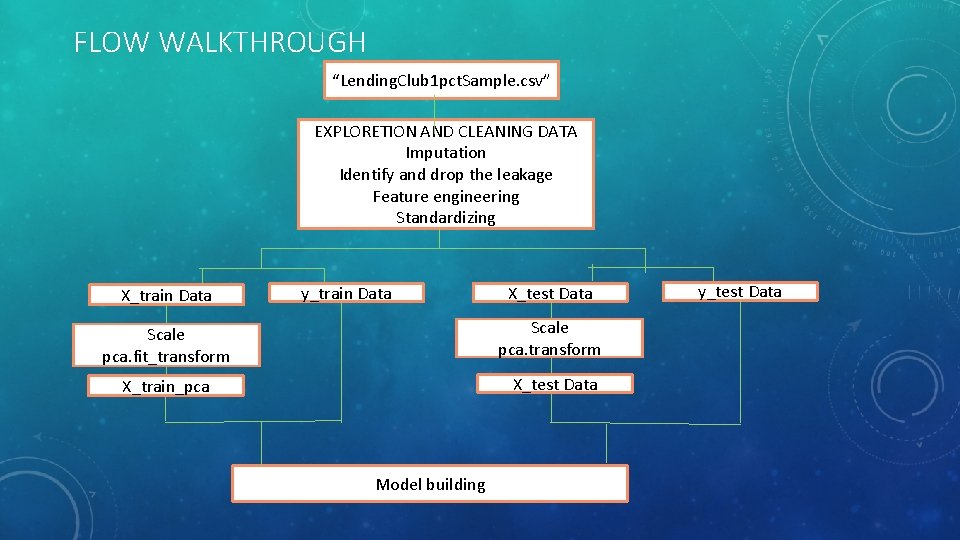

FLOW WALKTHROUGH
Dataset: “Lending.Club1pct.Sample.csv”
• Exploration and cleaning of the data
• Imputation
• Identify and drop the leakage variables
• Feature engineering
• Standardizing:
  X_train data → scale → pca.fit_transform → X_train_pca
  X_test data → scale → pca.transform → transformed X_test data
• Model building with X_train_pca and y_train data, evaluated on the transformed X_test data and y_test data
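The scale-then-PCA part of the flow can be sketched with scikit-learn as below. `make_classification` stands in for the cleaned, imputed and feature-engineered loan data, and `n_components=10` is an arbitrary illustrative value; both are assumptions. The key point is that `fit_transform` is called only on the training data, while the test data go through `transform` with what was learned from training.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the prepared loan data
X, y = make_classification(n_samples=2000, n_features=30, n_informative=10, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=0)

scaler = StandardScaler()
X_train_scaled = scaler.fit_transform(X_train)   # scaling statistics learned from training data only
X_test_scaled = scaler.transform(X_test)         # same statistics reused on the test data

pca = PCA(n_components=10)
X_train_pca = pca.fit_transform(X_train_scaled)  # components fitted on the training data
X_test_pca = pca.transform(X_test_scaled)        # test data projected onto the same components

print(X_train_pca.shape, X_test_pca.shape)       # (1400, 10) (600, 10)
```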

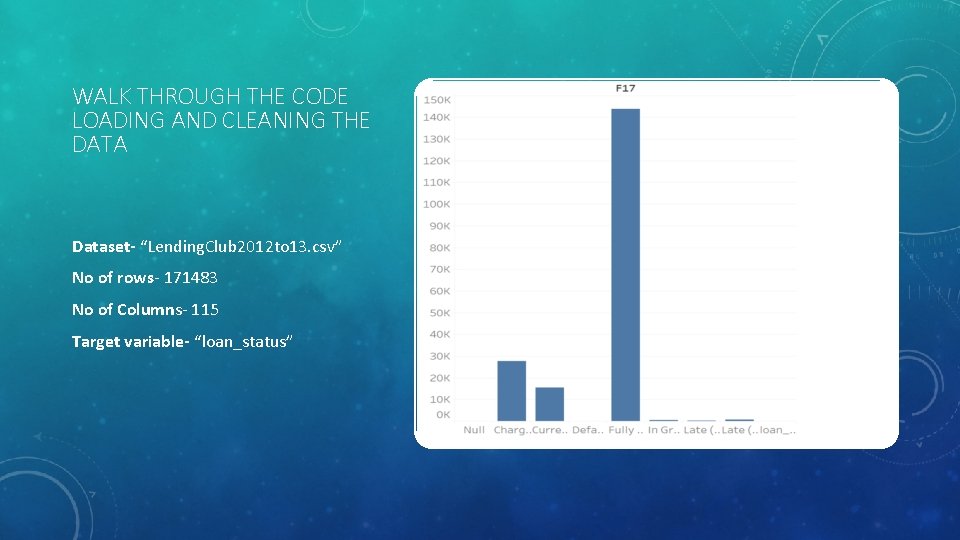

WALK THROUGH THE CODE: LOADING AND CLEANING THE DATA
Dataset: “Lending.Club2012to13.csv”
Number of rows: 171483
Number of columns: 115
Target variable: “loan_status”

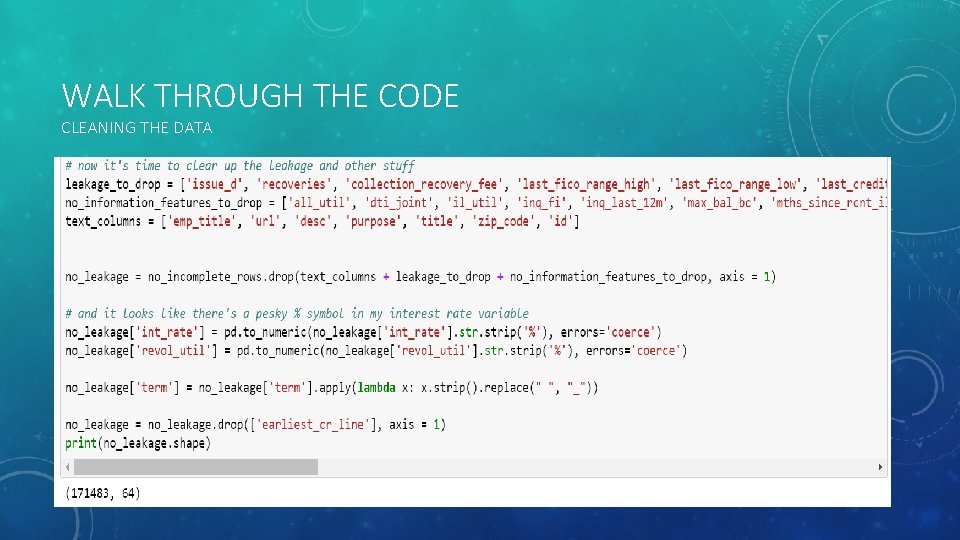

WALK THROUGH THE CODE: CLEANING THE DATA

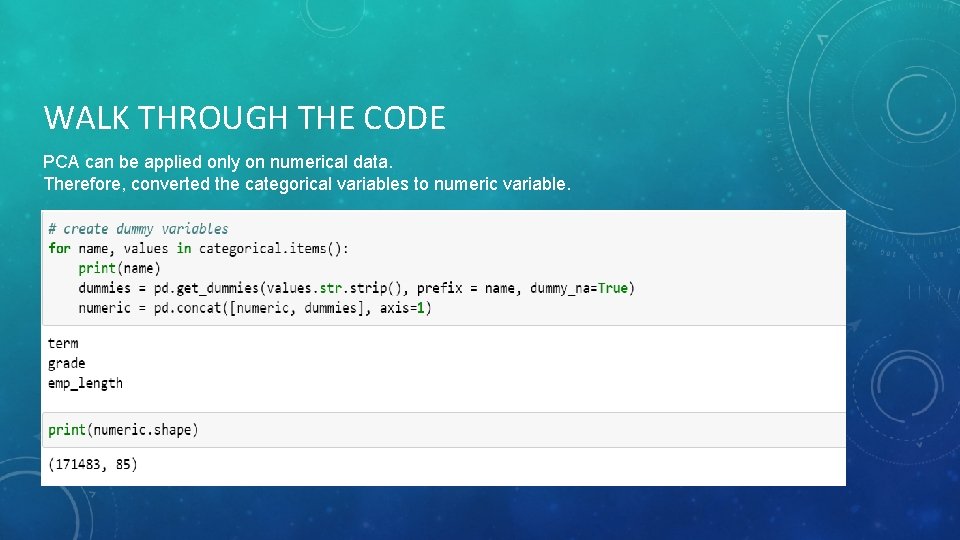

WALK THROUGH THE CODE
PCA can be applied only to numerical data, so the categorical variables were converted to numeric (dummy) variables.
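Converting categories to dummy variables is typically done with `pandas.get_dummies`. The sketch below uses a tiny hypothetical frame; the column names and values resemble loan data but are not taken from the actual dataset. Each category becomes its own 0/1 column, while numeric columns pass through unchanged.

```python
import pandas as pd

# Hypothetical columns for illustration only
loans = pd.DataFrame({
    "loan_amnt": [5000, 12000, 8000],
    "term": ["36 months", "60 months", "36 months"],
    "home_ownership": ["RENT", "OWN", "MORTGAGE"],
})
# One 0/1 column per category; the numeric column is left unchanged
encoded = pd.get_dummies(loans, columns=["term", "home_ownership"], dtype=int)
print(encoded.columns.tolist())
```

This is also why the column count grows after encoding: every distinct category becomes a separate feature.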

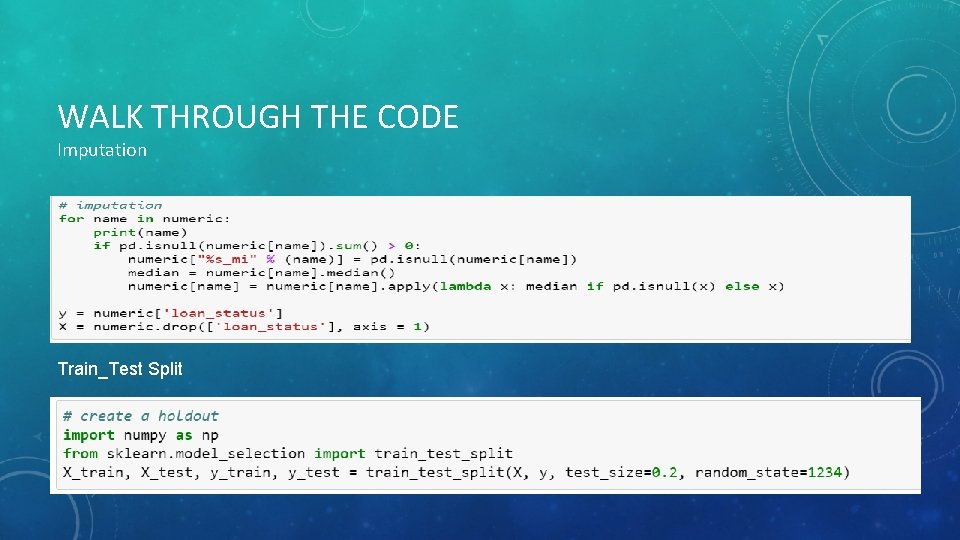

WALK THROUGH THE CODE: IMPUTATION AND TRAIN/TEST SPLIT
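A minimal sketch of these two steps with scikit-learn, assuming median imputation via `SimpleImputer` and a 75/25 stratified split. The data are synthetic, and the original notebook's exact choices are not shown. In this sketch the imputer is fitted on the training split only, so that test-set statistics do not leak into training. That ordering is a design choice of the sketch and may differ from the original code.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
# Synthetic numeric features with about 10% of values missing
X = pd.DataFrame(rng.normal(size=(1000, 4)), columns=["f1", "f2", "f3", "f4"])
X = X.mask(rng.random(X.shape) < 0.1)
y = rng.integers(0, 2, size=1000)   # hypothetical binary target (e.g. an encoded loan_status)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=42, stratify=y)

imputer = SimpleImputer(strategy="median")
X_train_imp = imputer.fit_transform(X_train)   # medians learned from the training split
X_test_imp = imputer.transform(X_test)         # same medians applied to the test split
print(np.isnan(X_train_imp).sum(), np.isnan(X_test_imp).sum())
```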

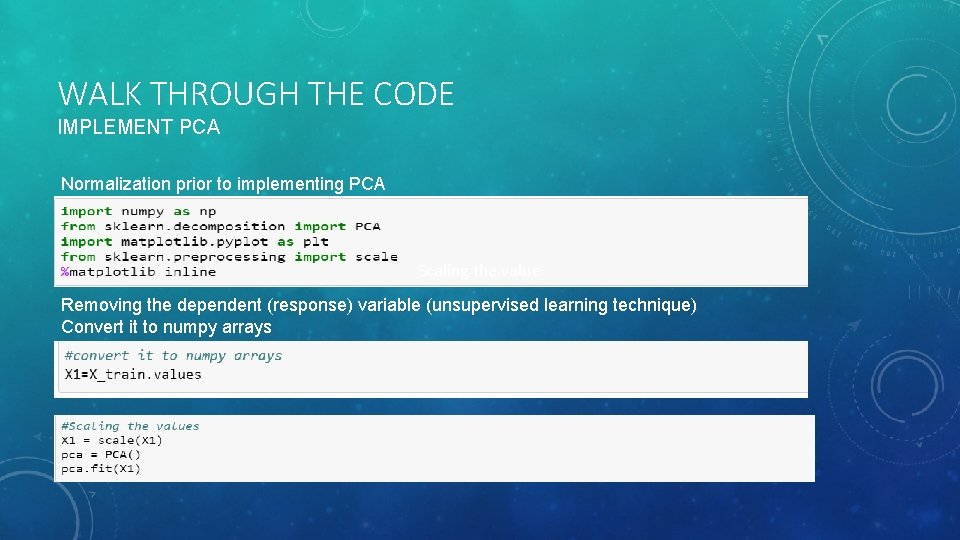

WALK THROUGH THE CODE: IMPLEMENT PCA
• Normalize the data before applying PCA by scaling the values.
• Remove the dependent (response) variable, since PCA is an unsupervised learning technique.
• Convert the data to NumPy arrays.
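The three preparation steps might look like this. The frame is hypothetical: only the target name `loan_status` comes from the deck, and the feature columns and values are made up. Scaling is shown with `StandardScaler` (zero mean, unit variance), which is an assumption about which normalization was used.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler

# Hypothetical cleaned frame; the feature names and values are illustrative
df = pd.DataFrame({
    "loan_amnt": [5000.0, 12000.0, 8000.0, 20000.0],
    "int_rate": [10.5, 15.2, 7.9, 22.1],
    "annual_inc": [40000.0, 85000.0, 60000.0, 120000.0],
    "loan_status": [0, 1, 0, 1],
})

# Remove the response variable: PCA is unsupervised and must not see it
X = df.drop(columns="loan_status").to_numpy()   # NumPy array of features
y = df["loan_status"].to_numpy()

# Scale each feature so large-valued columns (e.g. income) do not dominate the components
X_scaled = StandardScaler().fit_transform(X)
print(X_scaled.mean(axis=0).round(6), X_scaled.std(axis=0).round(6))
```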

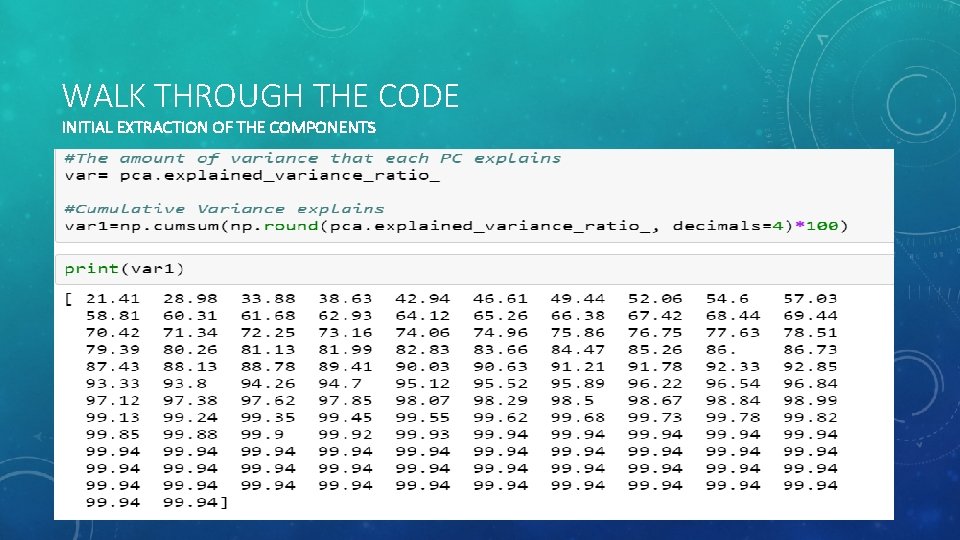

WALK THROUGH THE CODE: INITIAL EXTRACTION OF THE COMPONENTS

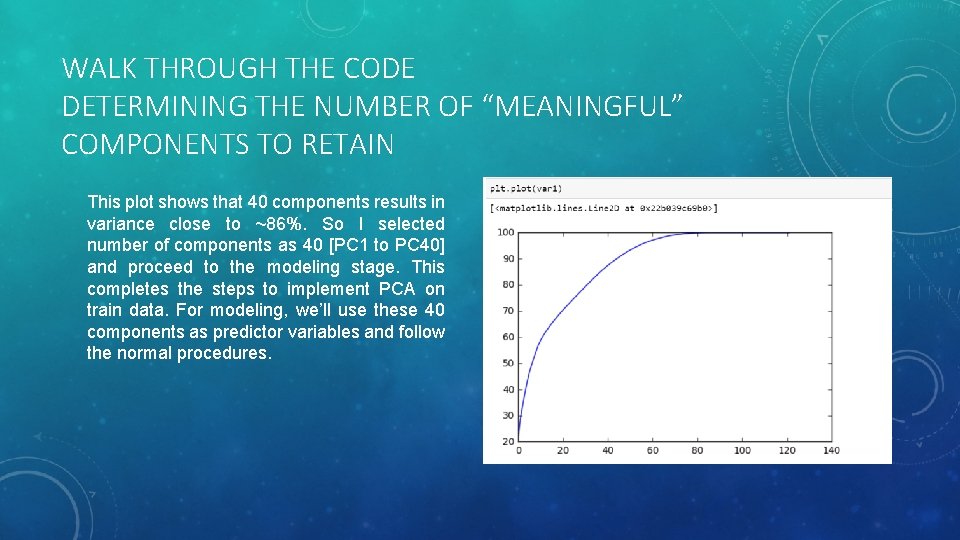

WALK THROUGH THE CODE: DETERMINING THE NUMBER OF “MEANINGFUL” COMPONENTS TO RETAIN
This plot shows that 40 components explain about 86% of the total variance. So I selected 40 components [PC1 to PC40] and proceeded to the modeling stage. This completes the steps to implement PCA on the training data. For modeling, these 40 components are used as the predictor variables, following the usual procedure.
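Reading the number of components off the cumulative explained-variance curve can also be done programmatically. The sketch uses synthetic data with 85 features, so the resulting k will not be 40; only the 86% threshold comes from the deck. scikit-learn can pick the same k directly when `n_components` is given as a fraction between 0 and 1.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in with 85 features (the column count after dummy encoding in the deck)
X, _ = make_classification(n_samples=3000, n_features=85, n_informative=30,
                           n_redundant=20, random_state=0)
X_scaled = StandardScaler().fit_transform(X)

pca = PCA().fit(X_scaled)                                # keep all components initially
cumulative = np.cumsum(pca.explained_variance_ratio_)
k = int(np.argmax(cumulative >= 0.86)) + 1               # smallest k reaching 86% of the variance
print(k, round(float(cumulative[k - 1]), 3))

# Equivalent shortcut: pass the variance fraction as n_components
pca_86 = PCA(n_components=0.86).fit(X_scaled)
print(pca_86.n_components_ == k)
```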

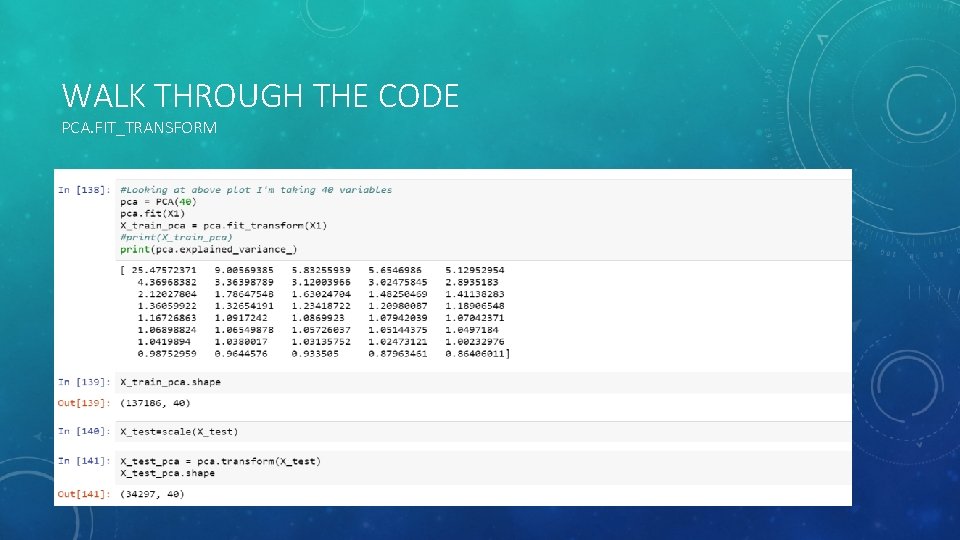

WALK THROUGH THE CODE: PCA.FIT_TRANSFORM
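The difference between `fit_transform` and `transform` is easiest to see by reproducing `transform` manually. With scikit-learn's default `whiten=False`, it subtracts the training mean `mean_` and projects onto the training `components_`. This is why the test data must not be refitted. The data here are synthetic.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(5)
mixing = rng.normal(size=(6, 6))
X_train = rng.normal(size=(500, 6)) @ mixing   # synthetic training data
X_test = rng.normal(size=(100, 6)) @ mixing    # synthetic test data from the same distribution

pca = PCA(n_components=3)
X_train_pca = pca.fit_transform(X_train)   # learns mean_ and components_ from X_train, then projects it

# transform() centres with the training mean and projects onto the training components
manual_test = (X_test - pca.mean_) @ pca.components_.T
print(np.allclose(pca.transform(X_test), manual_test))
print(np.allclose(pca.transform(X_train), X_train_pca))
```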

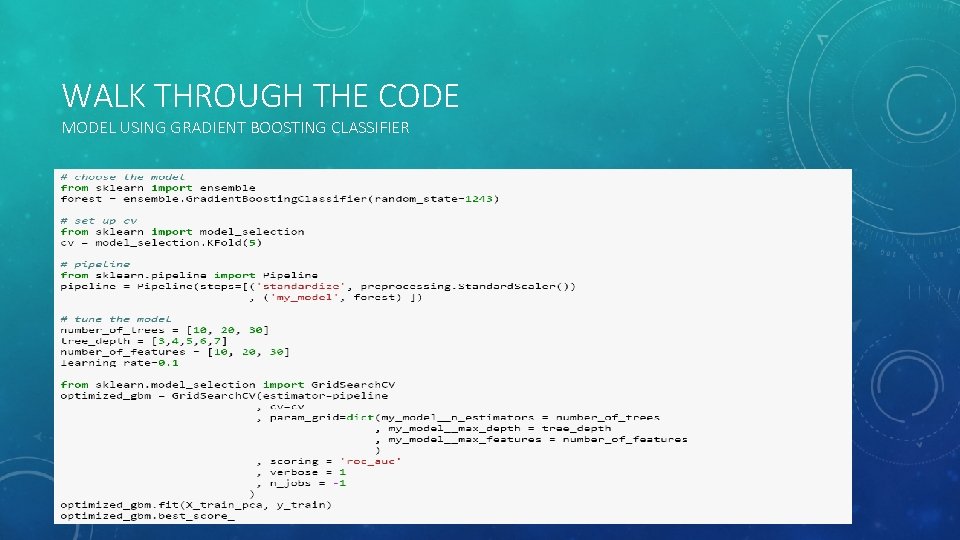

WALK THROUGH THE CODE: MODEL USING GRADIENT BOOSTING CLASSIFIER
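A compact way to train a gradient boosting model on principal components is to chain the steps in a scikit-learn `Pipeline`. This is swapped in here for brevity; it behaves like the manual `fit_transform`/`transform` calls. The data are synthetic, `n_components=15` is illustrative (the deck retains 40 of 85), and the classifier uses default hyperparameters because the deck's settings are not shown.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=2000, n_features=40, n_informative=12, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=1)

# Each step is fitted on the training data only when model.fit is called
model = make_pipeline(
    StandardScaler(),
    PCA(n_components=15),                         # illustrative number of components
    GradientBoostingClassifier(random_state=1),   # default hyperparameters (assumption)
)
model.fit(X_train, y_train)
print(model.score(X_test, y_test))                # accuracy on the held-out split
```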

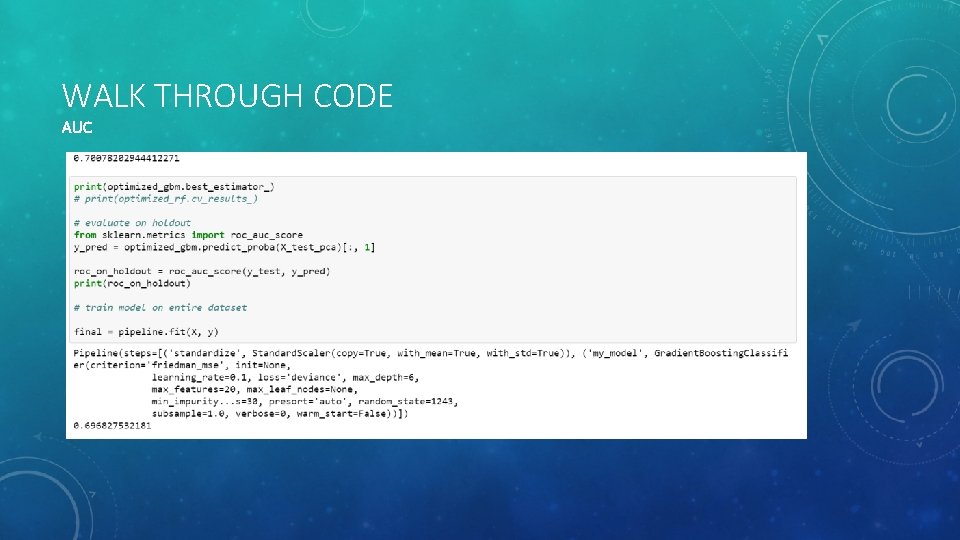

WALK THROUGH THE CODE: AUC
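AUC is computed from predicted probabilities, not from hard 0/1 predictions. The sketch below scores a gradient boosting model on principal components with `sklearn.metrics.roc_auc_score`, using the positive-class column of `predict_proba`. The data and the 15-component choice are synthetic and illustrative. The printed names mirror the table's column headers only for readability.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.preprocessing import StandardScaler

X, y = make_classification(n_samples=2000, n_features=40, n_informative=12, random_state=2)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=2)

scaler = StandardScaler().fit(X_train)
pca = PCA(n_components=15).fit(scaler.transform(X_train))
Z_train = pca.transform(scaler.transform(X_train))
Z_test = pca.transform(scaler.transform(X_test))

clf = GradientBoostingClassifier(random_state=2).fit(Z_train, y_train)
# Probability of the positive class (column 1) is what the ROC curve needs
auc_train = roc_auc_score(y_train, clf.predict_proba(Z_train)[:, 1])
auc_test = roc_auc_score(y_test, clf.predict_proba(Z_test)[:, 1])
print(f"AUC_Train_pca={auc_train:.4f}  AUC_Test_pca={auc_test:.4f}")
```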

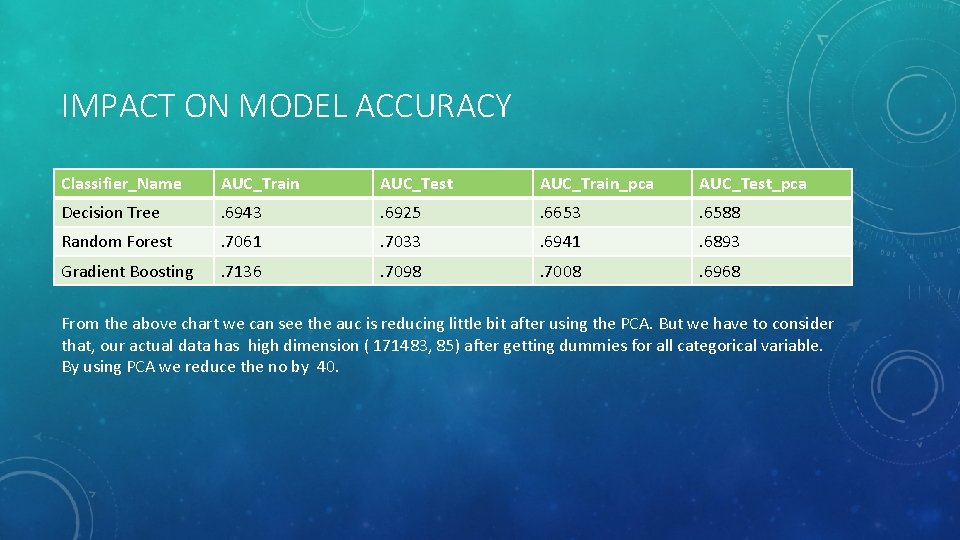

IMPACT ON MODEL ACCURACY

| Classifier_Name | AUC_Train | AUC_Test | AUC_Train_pca | AUC_Test_pca |
|---|---|---|---|---|
| Decision Tree | 0.6943 | 0.6925 | 0.6653 | 0.6588 |
| Random Forest | 0.7061 | 0.7033 | 0.6941 | 0.6893 |
| Gradient Boosting | 0.7136 | 0.7098 | 0.7008 | 0.6968 |

The table shows that AUC drops slightly after applying PCA. On the test set it falls by 0.0337 for the decision tree, 0.0140 for the random forest and 0.0130 for gradient boosting. However, we have to consider that the data has high dimensionality: its shape is (171483, 85) after creating dummies for all categorical variables. PCA reduces the 85 columns to 40 components.
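The size of the trade-off can be checked with plain arithmetic on the test-set AUC values reported in the comparison above. The values are copied by hand and nothing is re-estimated. The 85 and 40 are the column count after dummy encoding and the number of retained components.

```python
# Test AUC values copied from the comparison of models with and without PCA
auc_test = {"Decision Tree": 0.6925, "Random Forest": 0.7033, "Gradient Boosting": 0.7098}
auc_test_pca = {"Decision Tree": 0.6588, "Random Forest": 0.6893, "Gradient Boosting": 0.6968}

for name in auc_test:
    drop = auc_test[name] - auc_test_pca[name]
    print(f"{name}: AUC drop {drop:.4f} ({drop / auc_test[name]:.1%} relative)")

# Reduction in the number of predictors: 85 columns -> 40 components
print(f"features reduced by {1 - 40 / 85:.0%}")
```

In relative terms, the test AUC falls by about 2% to 5% while the number of predictors falls by roughly half.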

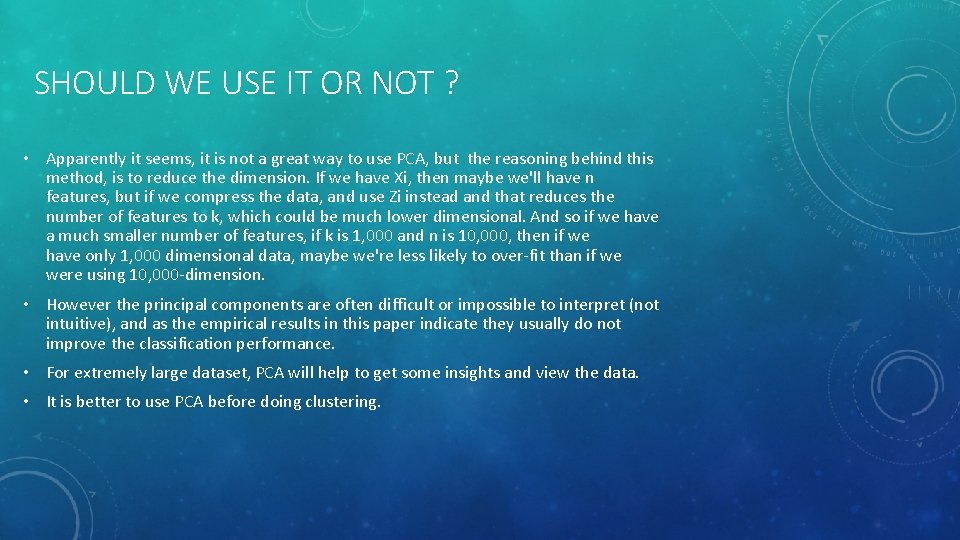

SHOULD WE USE IT OR NOT?
• At first glance PCA does not seem worthwhile here, but the reasoning behind the method is to reduce the dimension. If each example x_i has n features and we compress it to z_i with k features, the data can become much lower-dimensional. For example, if n is 10,000 and k is 1,000, we may be less likely to overfit with 1,000-dimensional data than with 10,000-dimensional data.
• However, the principal components are often difficult or impossible to interpret (not intuitive), and, as the empirical results here indicate, they usually do not improve classification performance.
• For extremely large datasets, PCA can help to gain insights and visualize the data.
• It is better to use PCA before doing clustering.
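Using PCA before clustering could look like the following minimal sketch. The synthetic data are 50-dimensional blobs with four true groups. The choices of 5 components and 4 clusters are illustrative assumptions, and k-means is used as one common clustering algorithm. The data are standardized, compressed, and then clustered in the reduced space.

```python
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic high-dimensional data with 4 true clusters
X, true_labels = make_blobs(n_samples=1000, n_features=50, centers=4, random_state=0)

# Standardize, compress 50 features to 5 components, then cluster in the reduced space
pipeline = make_pipeline(
    StandardScaler(),
    PCA(n_components=5),
    KMeans(n_clusters=4, n_init=10, random_state=0),
)
cluster_labels = pipeline.fit_predict(X)
print(cluster_labels[:10])
```

Clustering in 5 dimensions instead of 50 makes the distance computations cheaper, and the discarded low-variance directions are less likely to obscure the group structure.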

Thank You
