Three Papers: AUC, PFA and Bioinformatics

The three papers are posted online.

Learning Algorithms for Better Ranking

Jin Huang, Charles X. Ling: Using AUC and Accuracy in Evaluating Learning Algorithms. IEEE Trans. Knowl. Data Eng. 17(3): 299-310 (2005)

- Find the citations online (Google Scholar)
- Goal: accuracy vs. ranking
- Secondary goal: decision trees vs. Bayesian networks in ranking
- Design algorithms that directly optimize ranking

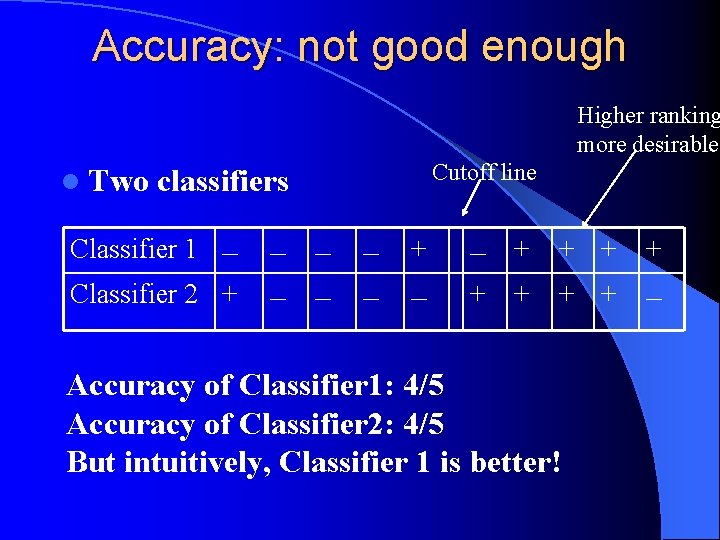

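Accuracy: not good enough

- A higher ranking is more desirable
- Two classifiers rank the same ten test examples; the cutoff line (|) sits in the middle of each ranking
  - Classifier 1: – – – – + | – + + + +
  - Classifier 2: + – – – – | + + + + –
- Accuracy of Classifier 1: 4/5
- Accuracy of Classifier 2: 4/5

But intuitively, Classifier 1 is better!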

Accuracy vs. ranking

- Accuracy-based evaluation makes two assumptions: a balanced class distribution and equal misclassification costs
- Ranking sets these assumptions aside
  - Problem: training examples are labeled, not ranked
- How to evaluate ranking?

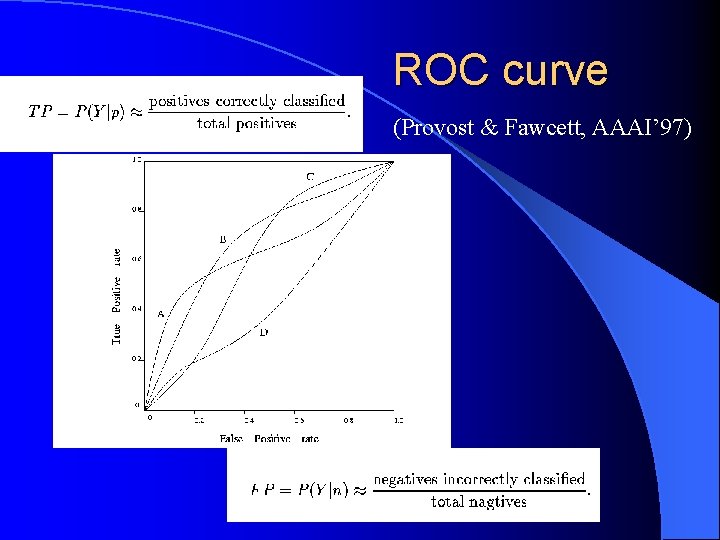

ROC curve (Provost & Fawcett, AAAI ’97)

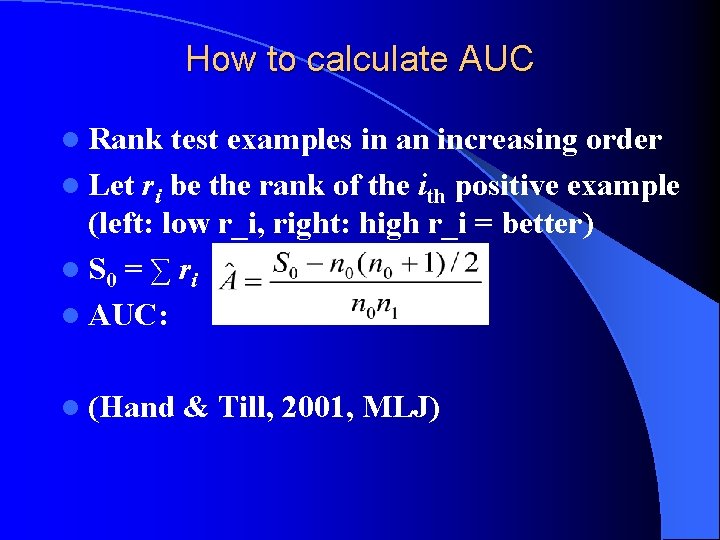

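How to calculate AUC

- Rank the test examples in increasing order
- Let $r_i$ be the rank of the $i$-th positive example (left: low $r_i$; right: high $r_i$ = better)
- $S_0 = \sum_i r_i$
- AUC (Hand & Till, 2001, MLJ), with $n_0$ positive and $n_1$ negative examples:

$$\mathrm{AUC} = \frac{S_0 - n_0 (n_0 + 1)/2}{n_0 n_1}$$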

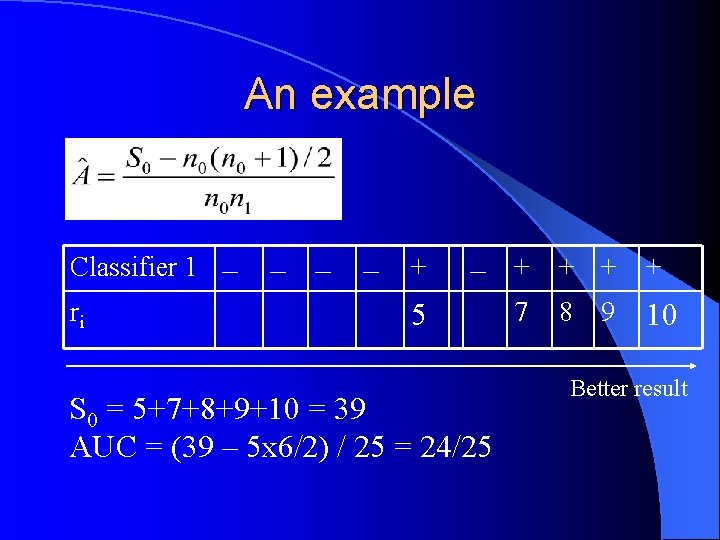

An example

Classifier 1: – – – – + – + + + +

- Ranks of the positive examples ($r_i$): 5, 7, 8, 9, 10
- $S_0 = 5 + 7 + 8 + 9 + 10 = 39$
- $\mathrm{AUC} = (39 - 5 \times 6 / 2) / 25 = 24/25$

Better result
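The arithmetic in this example can be checked with a short script. This is a minimal sketch, not code from the paper: the function name `auc_from_ranking` and the encoding of a ranking as a list of `"+"` and `"-"` labels in increasing rank order are assumptions made for illustration. It applies the rank-sum formula with $n_0$ positives and $n_1$ negatives.

```python
from fractions import Fraction


def auc_from_ranking(labels):
    """Rank-sum AUC for a ranking given in increasing order.

    `labels` is a sequence of "+" / "-" marks (a hypothetical encoding);
    position 1 is the lowest-ranked example.
    """
    # Ranks (1-based) of the positive examples.
    pos_ranks = [rank for rank, label in enumerate(labels, start=1) if label == "+"]
    n0 = len(pos_ranks)          # number of positive examples
    n1 = len(labels) - n0        # number of negative examples
    if n0 == 0 or n1 == 0:
        raise ValueError("AUC needs at least one positive and one negative example")
    s0 = sum(pos_ranks)          # S_0 = sum of the positive ranks
    return Fraction(s0 - n0 * (n0 + 1) // 2, n0 * n1)


# Classifier 1 from the example: positives at ranks 5, 7, 8, 9, 10.
classifier_1 = list("----+-++++")
print(auc_from_ranking(classifier_1))  # 24/25
```

Using `Fraction` keeps the result exact, so it can be compared directly with the 24/25 on the slide.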

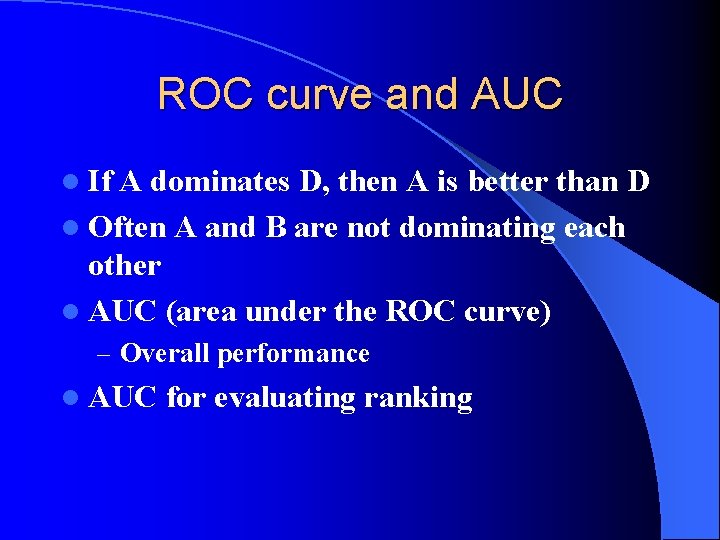

ROC curve and AUC

- If A dominates D, then A is better than D
- Often A and B do not dominate each other
- AUC (area under the ROC curve): overall performance
- AUC for evaluating ranking

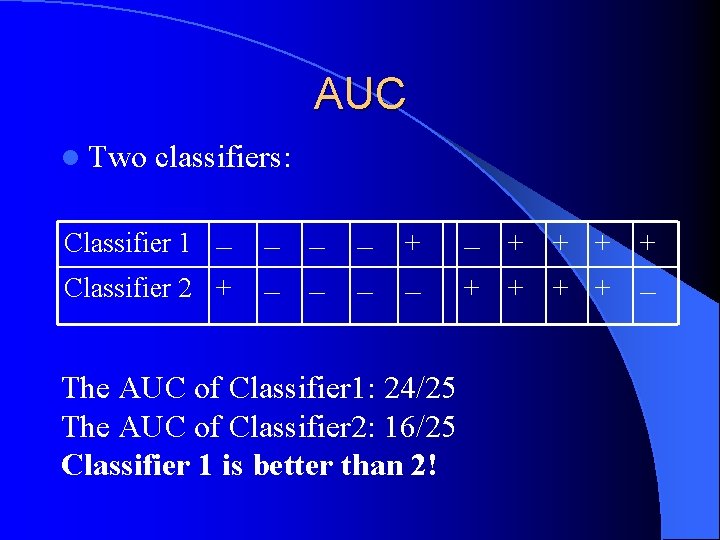

AUC

Two classifiers:

- Classifier 1: – – – – + | – + + + +
- Classifier 2: + – – – – | + + + + –
- The AUC of Classifier 1: 24/25
- The AUC of Classifier 2: 16/25

Classifier 1 is better than Classifier 2!
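The contrast between the two measures can be reproduced with a small sketch. The rankings below are assumptions: the one for Classifier 1 follows the rank-sum example, and the one for Classifier 2 is a ranking consistent with the stated accuracy of 4/5 and AUC of 16/25. The helper names are hypothetical. Here AUC is computed by pair counting, that is, as the fraction of (positive, negative) pairs in which the positive example is ranked higher, which gives the same value as the rank-sum formula when there are no ties.

```python
from fractions import Fraction


def accuracy_at_midpoint(labels):
    """Accuracy when the lower half is predicted "-" and the upper half "+"."""
    cutoff = len(labels) // 2
    correct = sum(
        1 for i, label in enumerate(labels) if (label == "+") == (i >= cutoff)
    )
    return Fraction(correct, len(labels))


def auc_by_pairs(labels):
    """Fraction of (positive, negative) pairs with the positive ranked higher."""
    pos = [i for i, label in enumerate(labels) if label == "+"]
    neg = [i for i, label in enumerate(labels) if label == "-"]
    wins = sum(1 for p in pos for n in neg if p > n)
    return Fraction(wins, len(pos) * len(neg))


# Rankings in increasing order (assumed encodings, see the lead-in).
classifier_1 = list("----+-++++")
classifier_2 = list("+----++++-")

for name, ranking in [("Classifier 1", classifier_1), ("Classifier 2", classifier_2)]:
    print(name, accuracy_at_midpoint(ranking), auc_by_pairs(ranking))
# Classifier 1 4/5 24/25
# Classifier 2 4/5 16/25
```

Both rankings give the same accuracy at the midpoint cutoff, while the pair count separates them.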

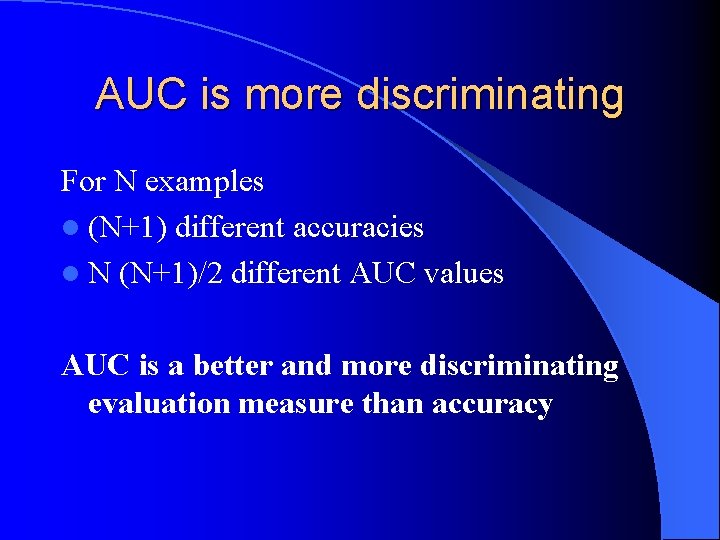

AUC is more discriminating

For N examples:

- (N+1) different accuracies
- N(N+1)/2 different AUC values

AUC is a better and more discriminating evaluation measure than accuracy.

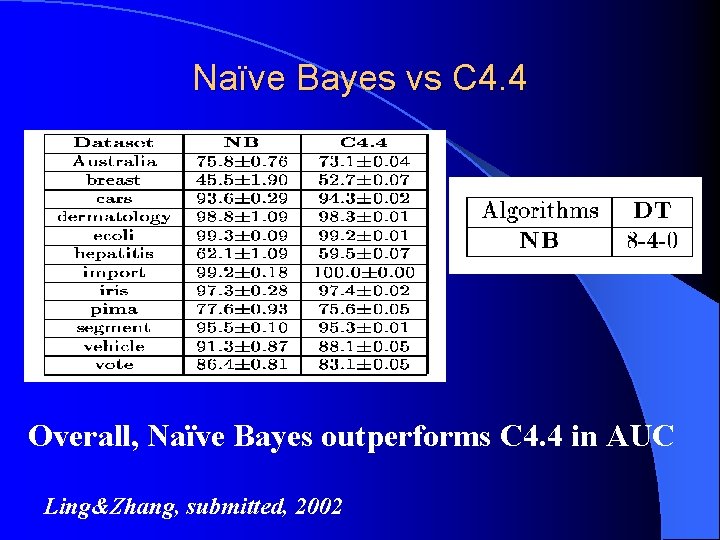

Naïve Bayes vs. C4.4

Overall, Naïve Bayes outperforms C4.4 in AUC (Ling & Zhang, submitted, 2002)

PCA in Face Recognition

Problem with PCA

- The features are principal components
  - Thus they do not correspond directly to the original features
  - Problem with face recognition: we wish to pick a subset of the original features rather than composed ones
- Principal Feature Analysis: pick the best uncorrelated subset of features of a data set
  - Equivalent to finding q dimensions of a random variable $X = [x_1, x_2, \dots, x_n]^T$

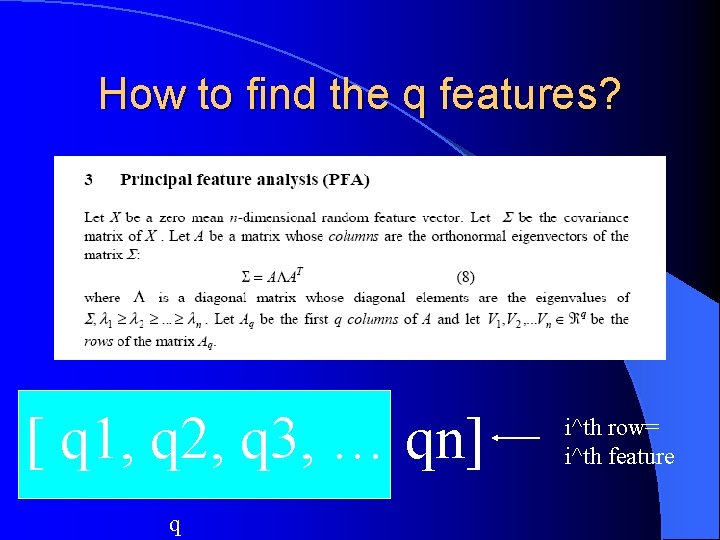

How to find the q features?

$[q_1, q_2, q_3, \dots, q_n]$, where the $i$-th row corresponds to the $i$-th feature

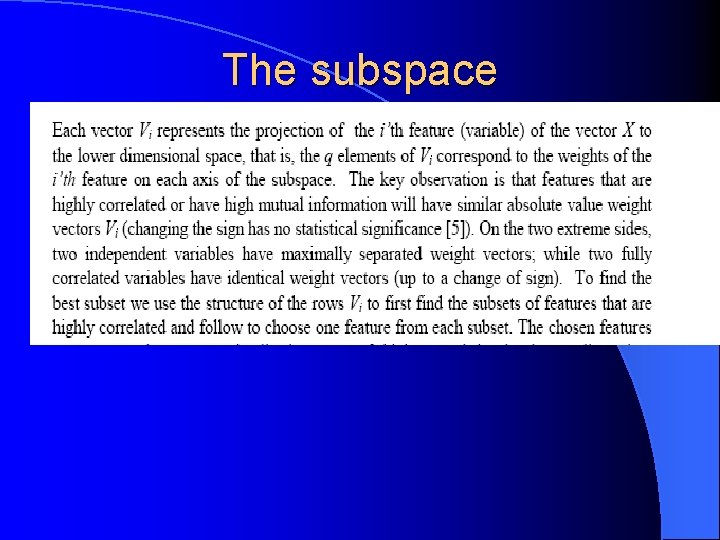

The subspace

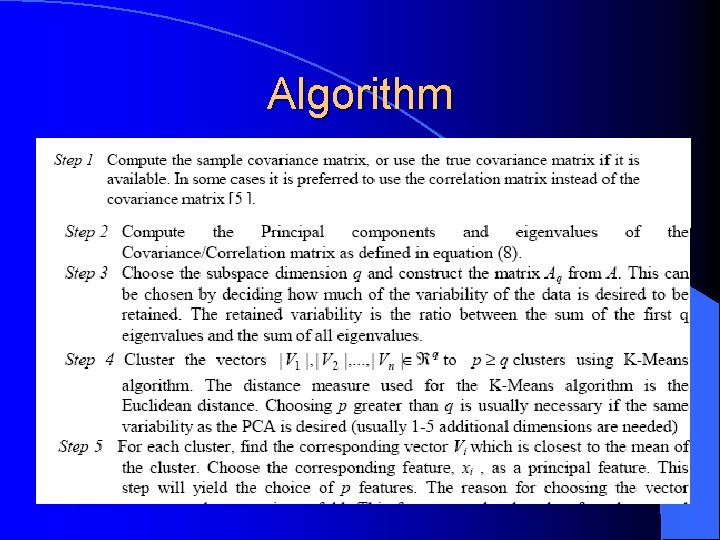

Algorithm

Result

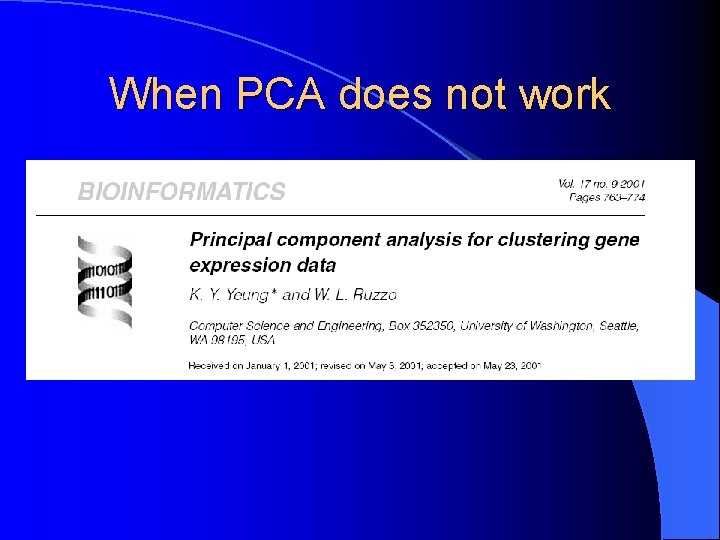

When PCA does not work

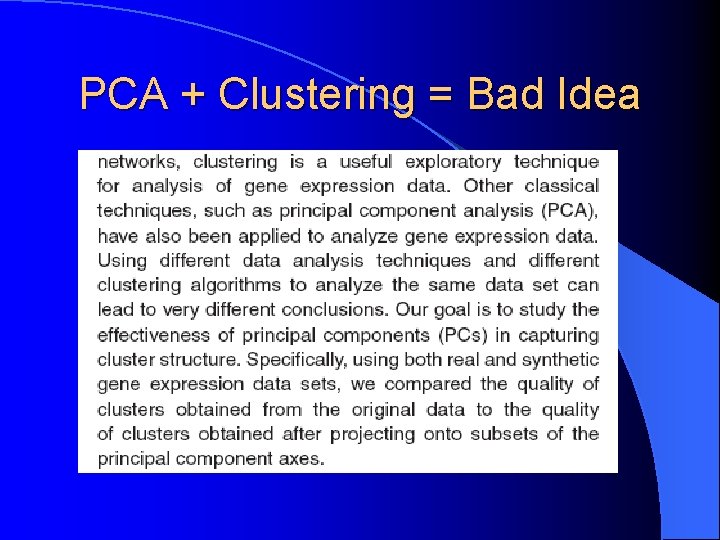

PCA + Clustering = Bad Idea

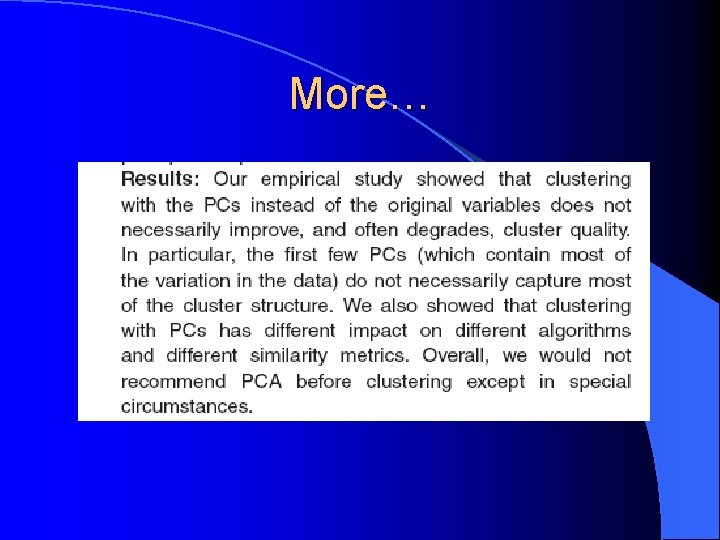

More…

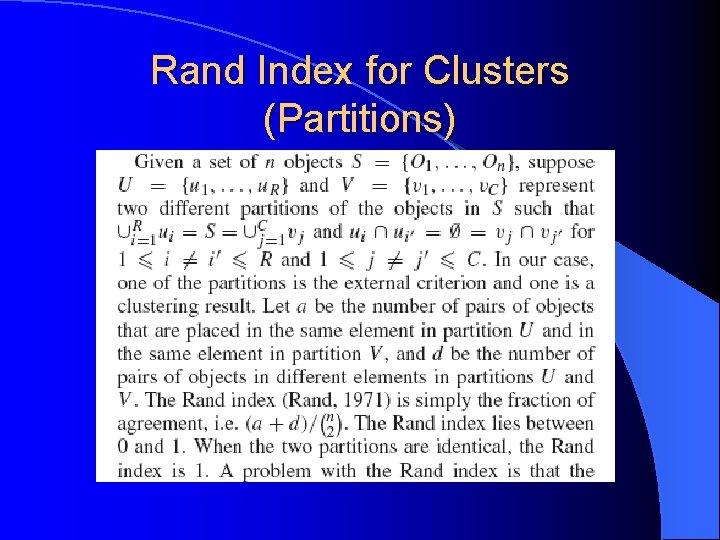

Rand Index for Clusters (Partitions)

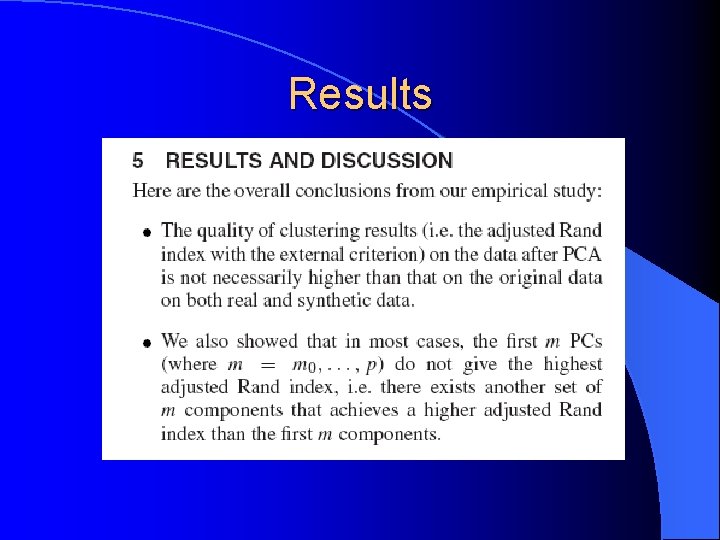
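The slide's definition is only in the image, so the following is a minimal sketch assuming the standard Rand index: the fraction of example pairs on which two partitions agree, meaning the pair is placed together in both or apart in both. The function name `rand_index` and the toy label lists are hypothetical.

```python
from fractions import Fraction
from itertools import combinations


def rand_index(labels_a, labels_b):
    """Standard Rand index between two partitions given as cluster labels."""
    if len(labels_a) != len(labels_b):
        raise ValueError("both partitions must label the same examples")
    pairs = list(combinations(range(len(labels_a)), 2))
    if not pairs:
        raise ValueError("need at least two examples")
    # A pair agrees if it is together in both partitions or apart in both.
    agree = sum(
        1
        for i, j in pairs
        if (labels_a[i] == labels_a[j]) == (labels_b[i] == labels_b[j])
    )
    return Fraction(agree, len(pairs))


# Toy partitions of six examples (illustrative data, not from the slides).
partition_a = [0, 0, 0, 1, 1, 1]
partition_b = [0, 0, 1, 1, 2, 2]
print(rand_index(partition_a, partition_b))  # 2/3
```

Because only pair co-membership is compared, the index does not depend on how the clusters are named.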

Results
