Statistical Analysis of Packet Buffer Architectures Gireesh Shrimali

Gireesh Shrimali, Isaac Keslassy, Nick McKeown
E-mail: gireesh@stanford.edu

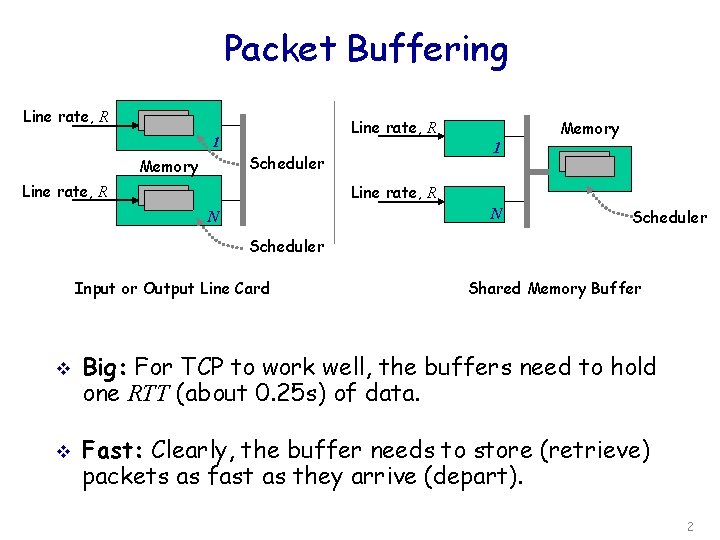

Packet Buffering

Figure labels: Input or Output Line Card (memory, scheduler, line rate R); Shared Memory Buffer (ports 1 to N, each at line rate R, each with a scheduler).

- Big: For TCP to work well, the buffers need to hold one RTT (about 0.25 s) of data.
- Fast: The buffer needs to store (retrieve) packets as fast as they arrive (depart).

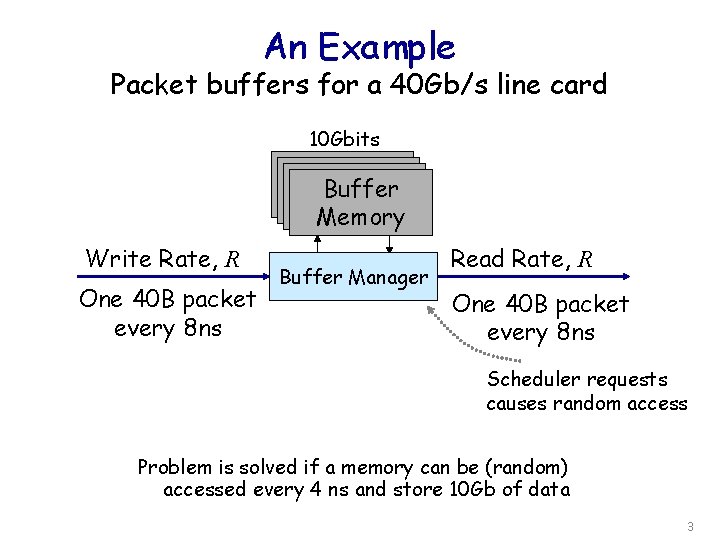

An Example

Packet buffers for a 40 Gb/s line card

Figure labels: a 10 Gbit buffer memory behind a buffer manager. Write rate R: one 40 B packet every 8 ns. Read rate R: one 40 B packet every 8 ns. Scheduler requests cause random access.

The problem is solved if a memory can be randomly accessed every 4 ns and can store 10 Gb of data.
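The figures in this example follow from simple arithmetic, sketched below. The variable names are illustrative, and the 0.25 s RTT is the value quoted on the Packet Buffering slide. The 4 ns access time assumes one write and one read must both complete within each 8 ns packet time.

```python
# Back-of-the-envelope check of the 40 Gb/s line card example.
LINE_RATE_BPS = 40e9   # line rate R = 40 Gb/s
PACKET_BYTES = 40      # minimum-size packet used in the example
RTT_SECONDS = 0.25     # round-trip time the buffer must cover

# Time to receive (or send) one 40 B packet at line rate.
packet_time_ns = PACKET_BYTES * 8 / LINE_RATE_BPS * 1e9

# Buffer size needed to hold one RTT of data at line rate.
buffer_gbits = LINE_RATE_BPS * RTT_SECONDS / 1e9

# Assumption: one write and one read share each packet time.
access_time_ns = packet_time_ns / 2

print(f"packet time : {packet_time_ns:.1f} ns")
print(f"buffer size : {buffer_gbits:.1f} Gb")
print(f"access time : {access_time_ns:.1f} ns")
```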

Key Question

How can we design high-speed packet buffers from commodity memories?

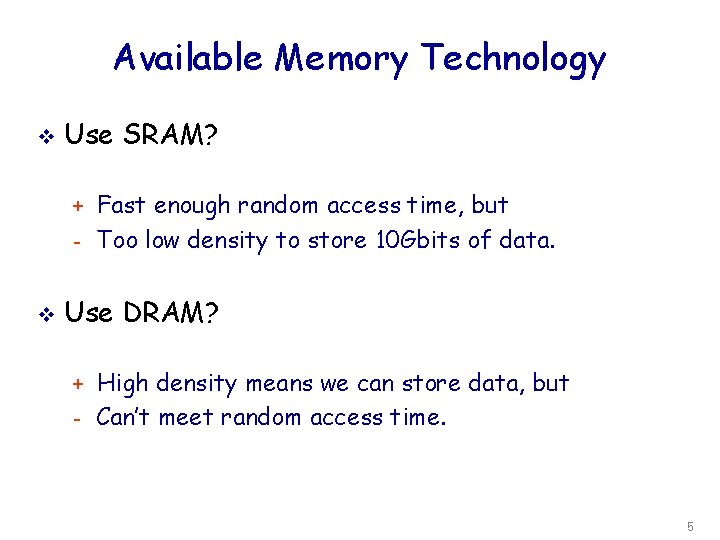

Available Memory Technology

- Use SRAM?
  + Fast enough random access time, but
  - Too low density to store 10 Gbits of data.
- Use DRAM?
  + High density means we can store data, but
  - Can't meet random access time.

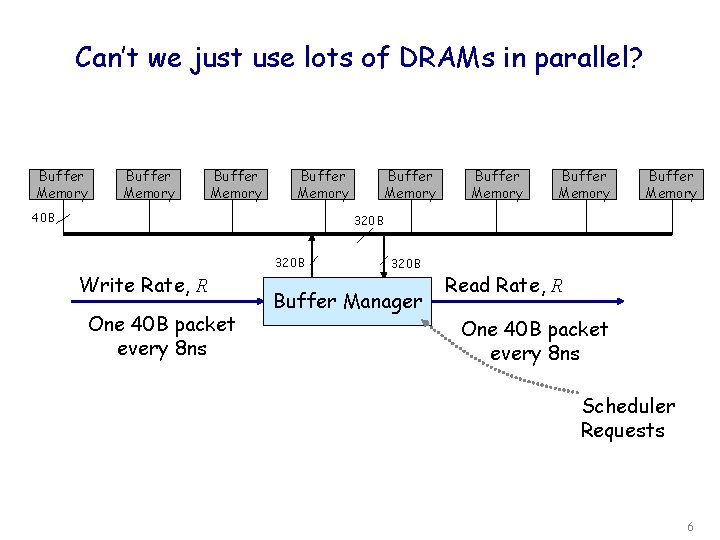

Can't we just use lots of DRAMs in parallel?

Figure: several buffer memories side by side behind the buffer manager. Labels: 40 B, 320 B; write rate R, one 40 B packet every 8 ns; read rate R, one 40 B packet every 8 ns; scheduler requests.

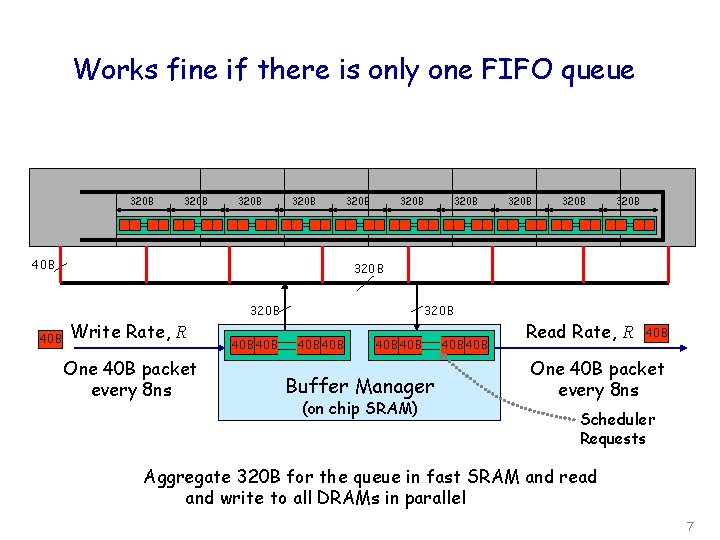

Works fine if there is only one FIFO queue

Figure labels: 40 B packets and 320 B blocks; buffer manager (on chip SRAM); write rate R, one 40 B packet every 8 ns; read rate R, one 40 B packet every 8 ns; scheduler requests.

Aggregate 320 B for the queue in fast SRAM, and read and write to all DRAMs in parallel.

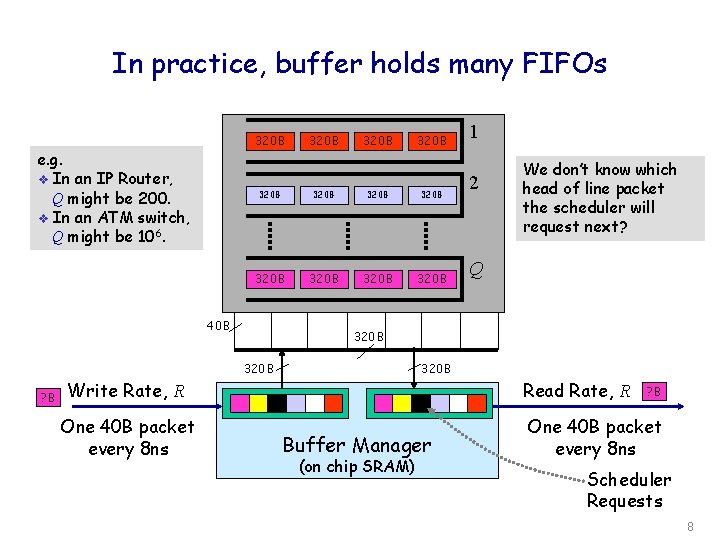

In practice, buffer holds many FIFOs

e.g.
- In an IP router, Q might be 200.
- In an ATM switch, Q might be 10^6.

Figure labels: FIFOs 1, 2, ..., Q stored as 320 B blocks, some only partly filled ("? B"); buffer manager (on chip SRAM); write rate R, one 40 B packet every 8 ns; read rate R, one 40 B packet every 8 ns; scheduler requests.

We don't know which head-of-line packet the scheduler will request next.

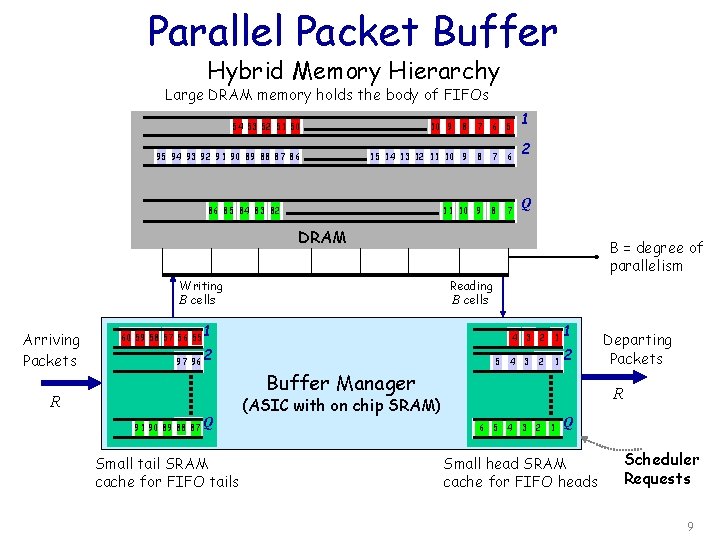

Parallel Packet Buffer Hybrid Memory Hierarchy

Figure labels:
- Large DRAM memory holds the body of FIFOs 1, 2, ..., Q
- Small tail SRAM cache for FIFO tails
- Small head SRAM cache for FIFO heads
- Buffer manager (ASIC with on chip SRAM), writing B cells to DRAM and reading B cells from DRAM
- Arriving packets at rate R; departing packets at rate R
- Scheduler requests
- B = degree of parallelism

Objective

- We would like to minimize the size of the SRAM while providing reasonable guarantees.
- So, we ask the following question: if the designer is willing to tolerate a certain drop probability, how small can the SRAM get?

Memory Management Algorithm

- Algorithm: At every service opportunity, serve a FIFO from the set of FIFOs with occupancy greater than or equal to B
  - B-work conserving, thus minimizes SRAM size
  - Round-robin performs as well as largest FIFO first
- Some definitions
  - FIFO occupancy counter: L(i, t)
  - Sum of occupancies: L(t)
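A minimal sketch of the round-robin variant of this algorithm follows. It is not the authors' implementation. The arrival model (at most one cell per slot, sent to a uniformly random FIFO), the choice of one service opportunity every B slots, and the names `pick_fifo` and `simulate_tail_sram` are all assumptions made for illustration.

```python
import random

def pick_fifo(occupancy, batch, start):
    """Round-robin from `start`: first FIFO with occupancy >= batch, else None."""
    n = len(occupancy)
    for step in range(n):
        i = (start + step) % n
        if occupancy[i] >= batch:
            return i
    return None

def simulate_tail_sram(num_fifos, batch, load, slots, seed=0):
    """Return (peak total occupancy L(t), final per-FIFO occupancies L(i, t))."""
    rng = random.Random(seed)
    occupancy = [0] * num_fifos   # L(i, t), in cells
    total = 0                     # L(t), sum of occupancies
    pointer = 0                   # round-robin position
    peak = 0
    for t in range(slots):
        # Assumed arrivals: one cell with probability `load`, random FIFO.
        if rng.random() < load:
            occupancy[rng.randrange(num_fifos)] += 1
            total += 1
        peak = max(peak, total)
        # Assumed service: one opportunity every `batch` slots, moving
        # `batch` cells at once (average service rate of 1 cell per slot).
        if t % batch == batch - 1:
            i = pick_fifo(occupancy, batch, pointer)
            if i is not None:
                occupancy[i] -= batch
                total -= batch
                pointer = (i + 1) % num_fifos
    return peak, occupancy

peak, final = simulate_tail_sram(num_fifos=8, batch=4, load=0.9, slots=10_000)
print("peak SRAM occupancy (cells):", peak)
```

The policy is B-work conserving in the sense of the slide: a service opportunity is wasted only when no FIFO holds at least B cells.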

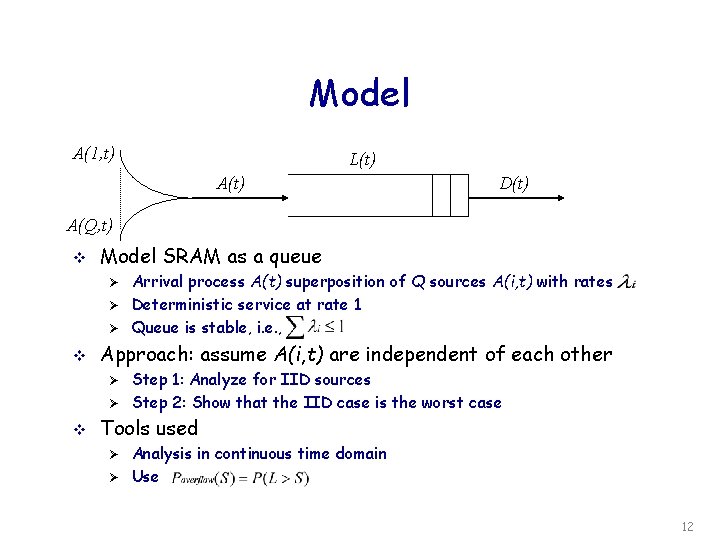

Model

Figure labels: sources A(1, t), ..., A(Q, t); aggregate arrivals A(t); occupancy L(t); departures D(t).

- Model SRAM as a queue
  - Arrival process A(t): superposition of Q sources A(i, t) with rates [...]
  - Deterministic service at rate 1
  - Queue is stable, i.e., [...]
- Approach: assume A(i, t) are independent of each other
  - Step 1: Analyze for IID sources
  - Step 2: Show that the IID case is the worst case
- Tools used
  - Analysis in continuous time domain
  - Use [...]

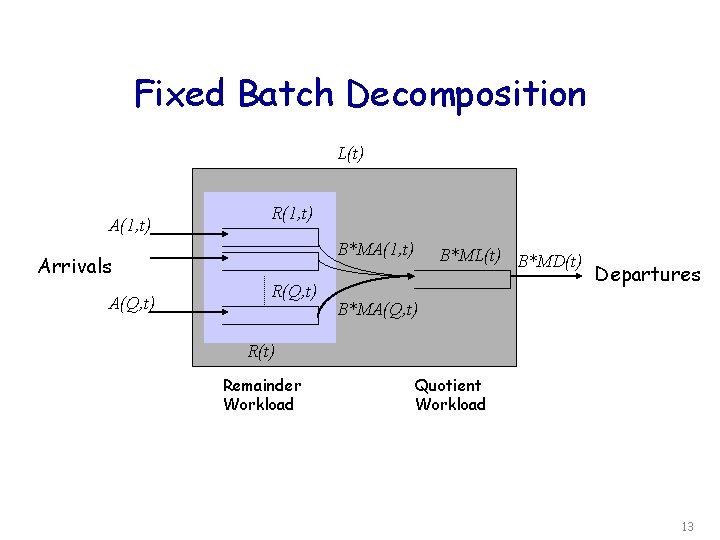

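Fixed Batch Decomposition

Figure labels:
- Arrivals: A(1, t), ..., A(Q, t)
- Per-source remainders: R(1, t), ..., R(Q, t)
- Per-source batch arrivals: B*M_A(1, t), ..., B*M_A(Q, t)
- Occupancy: L(t)
- Remainder workload: R(t)
- Quotient workload: B*M_L(t)
- Departures: B*M_D(t)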
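One way to read this decomposition is sketched below. It assumes the remainder is each FIFO's occupancy modulo B and the quotient workload is whatever is left in whole batches of B cells; the slide's figure does not spell this out. The function name and the sample occupancies are hypothetical.

```python
def decompose(occupancies, batch):
    """Split per-FIFO occupancies into (remainder workload, quotient workload)."""
    # Assumed reading: R(i, t) = L(i, t) mod B for each FIFO i.
    remainder_workload = sum(occ % batch for occ in occupancies)
    # Whole batches of B cells; only these can be served B cells at a time.
    quotient_workload = batch * sum(occ // batch for occ in occupancies)
    return remainder_workload, quotient_workload

occupancies = [5, 0, 9, 3]   # hypothetical L(i, t) values, in cells
remainder, quotient = decompose(occupancies, batch=4)
print(remainder, quotient)   # the two parts always add up to sum(occupancies)
```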

Assumptions

A(i, t) are
1. independent of each other
2. stationary and ergodic
3. simple point processes

PDF of SRAM Occupancy

- Theorem: The quotient workload and the remainder workload are independent of each other.
- Thus: The distribution of SRAM occupancy is the convolution of the distributions of the quotient and remainder workloads.
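The convolution step can be sketched as follows. Because the two workloads are independent, the distribution of their sum is the discrete convolution of their distributions. The two input distributions here are made-up placeholders, not results from the talk; both are indexed by occupancy in cells.

```python
def convolve(pmf_a, pmf_b):
    """Distribution of X + Y for independent X ~ pmf_a and Y ~ pmf_b."""
    out = [0.0] * (len(pmf_a) + len(pmf_b) - 1)
    for i, pa in enumerate(pmf_a):
        for j, pb in enumerate(pmf_b):
            out[i + j] += pa * pb
    return out

# Hypothetical remainder workload: uniform on 0..3 cells.
remainder_pmf = [0.25, 0.25, 0.25, 0.25]
# Hypothetical quotient workload: 0, 4, or 8 cells (multiples of B = 4).
quotient_pmf = [0.7, 0.0, 0.0, 0.0, 0.2, 0.0, 0.0, 0.0, 0.1]

occupancy_pmf = convolve(remainder_pmf, quotient_pmf)
print([round(p, 3) for p in occupancy_pmf])
```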

PDF of Remainder Workload

- Theorem: For large Q, the PDF of the remainder workload approaches a Gaussian distribution with mean Q(B-1)/2 and variance Q(B^2-1)/12.
- Intuition: Application of the central limit theorem.
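The stated mean and variance match what one gets by summing Q independent remainders, each uniform on {0, ..., B-1}. That uniformity is an assumption used here to illustrate the intuition; the slide states only the result. The sketch below checks the per-FIFO moments by direct enumeration and evaluates the formulas for Q = 1024 and B = 4, the values used later in the talk.

```python
import math

def uniform_moments(batch):
    """Mean and variance of a value uniform on {0, ..., batch - 1}."""
    values = range(batch)
    mean = sum(values) / batch
    variance = sum((v - mean) ** 2 for v in values) / batch
    return mean, variance

def remainder_gaussian(num_fifos, batch):
    """Gaussian parameters from the theorem: Q(B-1)/2 and Q(B^2-1)/12."""
    mean = num_fifos * (batch - 1) / 2
    variance = num_fifos * (batch ** 2 - 1) / 12
    return mean, variance

per_fifo_mean, per_fifo_var = uniform_moments(4)
mean, variance = remainder_gaussian(num_fifos=1024, batch=4)
print("per FIFO:", per_fifo_mean, per_fifo_var)
print("Q = 1024:", mean, variance, "std =", round(math.sqrt(variance), 2))
```

Multiplying the per-FIFO moments by Q reproduces the theorem's parameters, which is the central limit theorem argument in miniature.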

PDF of Quotient Workload

- Theorem [Cao, Ramanan INFOCOM 2002]: For large Q, the behavior of the quotient FIFO approaches the behavior of an M/D/1 queue with the same load.
  - Numerical solution through recurrence relations
- Depends only on load
  - Independent of Q and B
  - Close to impulse at low loads
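As an illustration of a numerical solution by recurrence, the sketch below uses the standard embedded-Markov-chain recurrence for the number of customers in an M/D/1 queue with unit service time. The slide does not say which recurrence the authors used, so treat this as one textbook option. The function name is hypothetical.

```python
import math

def md1_queue_length_pmf(load, n_max):
    """P(N = n) for n = 0..n_max in an M/D/1 queue with unit service time."""
    if not 0 < load < 1:
        raise ValueError("load must be strictly between 0 and 1")
    # a[k]: probability of k Poisson arrivals during one service time.
    a = [math.exp(-load) * load ** k / math.factorial(k)
         for k in range(n_max + 1)]
    pi = [1.0 - load]   # P(N = 0) = 1 - load
    for n in range(n_max):
        # Balance equation pi[n] = pi[0]*a[n] + sum_{k=1}^{n+1} pi[k]*a[n-k+1],
        # solved for the one unknown term pi[n+1].
        acc = pi[n] - pi[0] * a[n]
        for k in range(1, n + 1):
            acc -= pi[k] * a[n - k + 1]
        pi.append(acc / a[0])
    return pi

pmf = md1_queue_length_pmf(load=0.5, n_max=30)
mean_in_system = sum(n * p for n, p in enumerate(pmf))
print("P(N = 0):", pmf[0])
print("mean number in system:", round(mean_in_system, 6))
```

Since P(N = 0) = 1 - load, the distribution concentrates at zero as the load drops, consistent with the "close to impulse at low loads" remark.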

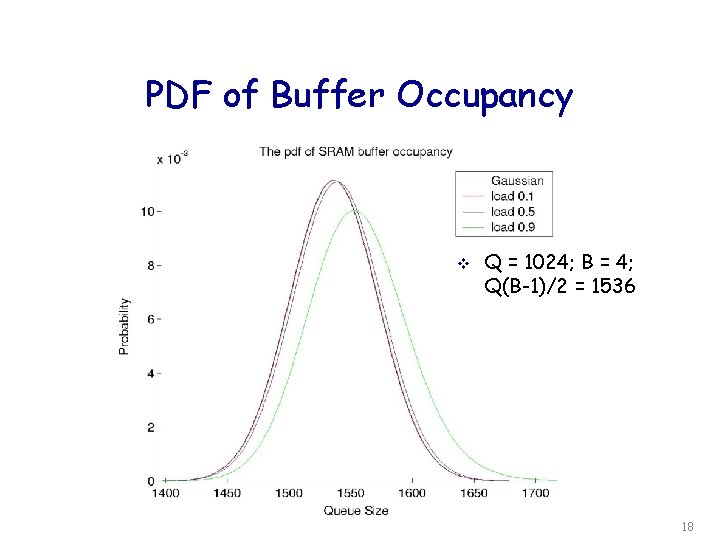

PDF of Buffer Occupancy

- Q = 1024; B = 4; Q(B-1)/2 = 1536

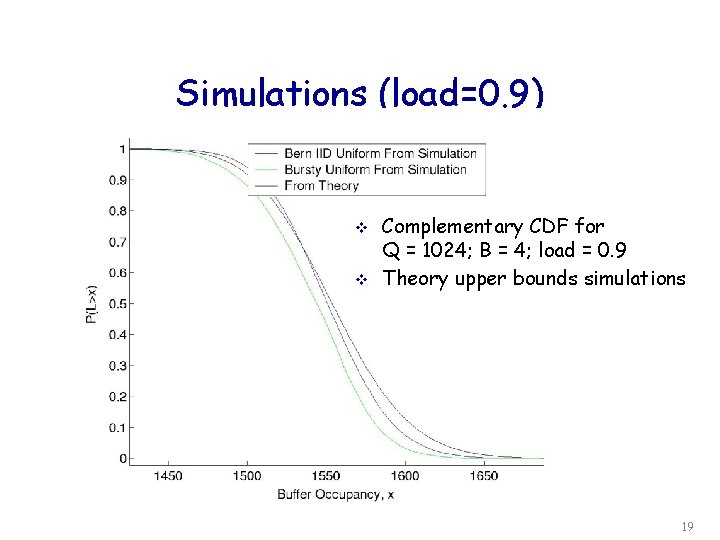

Simulations (load = 0.9)

- Complementary CDF for Q = 1024; B = 4; load = 0.9
- Theory upper bounds simulations

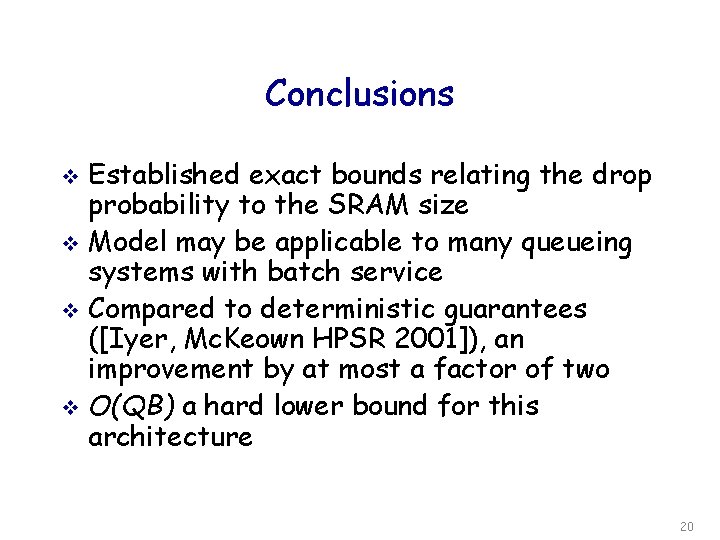

Conclusions

- Established exact bounds relating the drop probability to the SRAM size
- Model may be applicable to many queueing systems with batch service
- Compared to deterministic guarantees ([Iyer, McKeown HPSR 2001]), an improvement by at most a factor of two
- O(QB) is a hard lower bound for this architecture
