Static Analysis Willem Visser Stellenbosch University

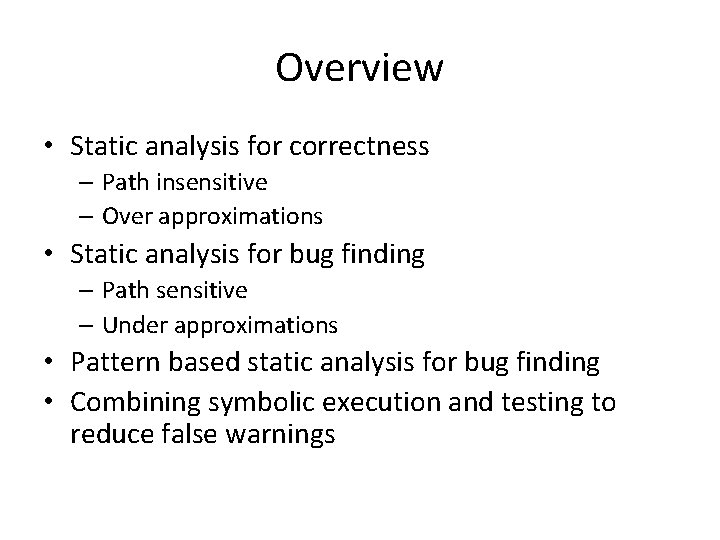

Overview

- Static analysis for correctness
  - Path insensitive
  - Over approximations
- Static analysis for bug finding
  - Path sensitive
  - Under approximations
- Pattern-based static analysis for bug finding
- Combining symbolic execution and testing to reduce false warnings

Static Analysis in General

- Used all over the place
  - Type checkers
  - Optimizers
  - …

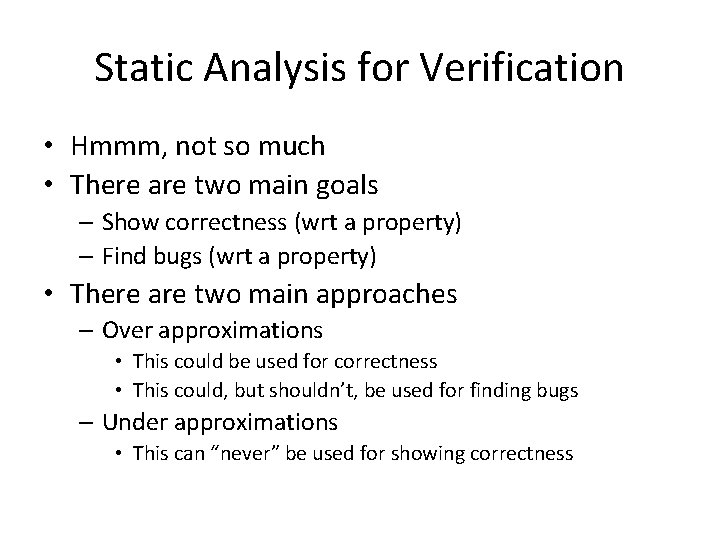

Static Analysis for Verification

- Hmmm, not so much
- There are two main goals
  - Show correctness (wrt a property)
  - Find bugs (wrt a property)
- There are two main approaches
  - Over approximations
    - This could be used for correctness
    - This could, but shouldn’t, be used for finding bugs
  - Under approximations
    - This can “never” be used for showing correctness

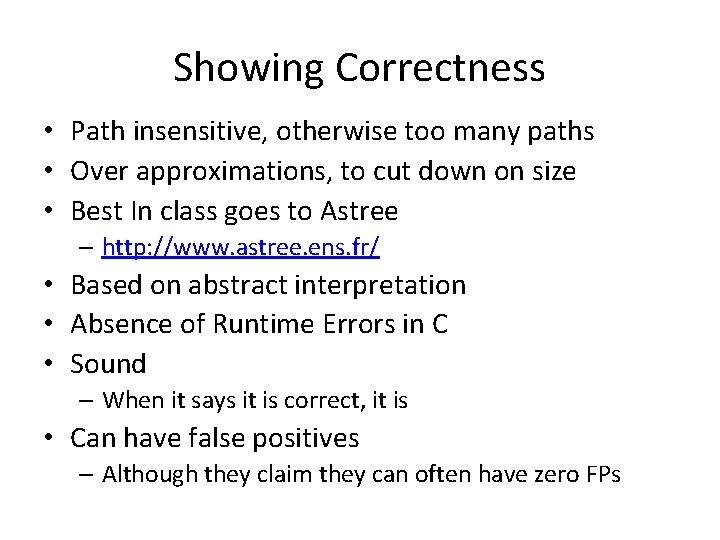

Showing Correctness

- Path insensitive, otherwise there are too many paths
- Over approximations, to cut down on size
- Best in class goes to Astrée
  - http://www.astree.ens.fr/
  - Based on abstract interpretation
  - Absence of runtime errors in C
  - Sound
    - When it says the code is correct, it is
  - Can have false positives
    - Although they claim they can often have zero FPs
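
To make “over approximation” concrete, here is a minimal sketch of an interval abstract domain, the textbook starting point for abstract interpretation. Astrée itself uses far richer domains, and the `Interval` and `DivisionCheck` classes are hypothetical illustrations. Because the branch on the unknown `x` is not tracked, the two possible divisors are joined into `[-1, 1]`. The result is sound, since every concrete divisor lies in the interval. However, the interval contains 0, so a division-by-zero warning is raised even though `y` is never 0. That is a false positive of exactly the kind discussed here.

```java
// Hypothetical interval domain for illustration only; real analyzers such as
// Astrée combine many richer abstract domains.
final class Interval {
    final long lo;
    final long hi;

    Interval(long lo, long hi) {
        if (lo > hi) throw new IllegalArgumentException("empty interval");
        this.lo = lo;
        this.hi = hi;
    }

    static Interval constant(long c) {
        return new Interval(c, c);
    }

    // Join (least upper bound): smallest interval containing both operands.
    Interval join(Interval other) {
        return new Interval(Math.min(lo, other.lo), Math.max(hi, other.hi));
    }

    boolean contains(long v) {
        return lo <= v && v <= hi;
    }

    @Override
    public String toString() {
        return "[" + lo + ", " + hi + "]";
    }
}

final class DivisionCheck {
    // Abstract run of:  int y = (x > 0) ? 1 : -1;  int z = 10 / y;
    // x is unknown, so both branches are explored and their results joined.
    static Interval divisorAfterBranches() {
        Interval thenBranch = Interval.constant(1);
        Interval elseBranch = Interval.constant(-1);
        return thenBranch.join(elseBranch);
    }

    // Sound check: warn whenever 0 may be in the divisor's interval.
    static boolean warnsDivisionByZero() {
        return divisorAfterBranches().contains(0);
    }

    public static void main(String[] args) {
        System.out.println("divisor interval: " + divisorAfterBranches()); // [-1, 1]
        System.out.println("warning issued: " + warnsDivisionByZero());    // true
    }
}
```

Tracking the branch condition (path sensitivity), or using a domain able to exclude 0, would remove this particular warning, at extra cost.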

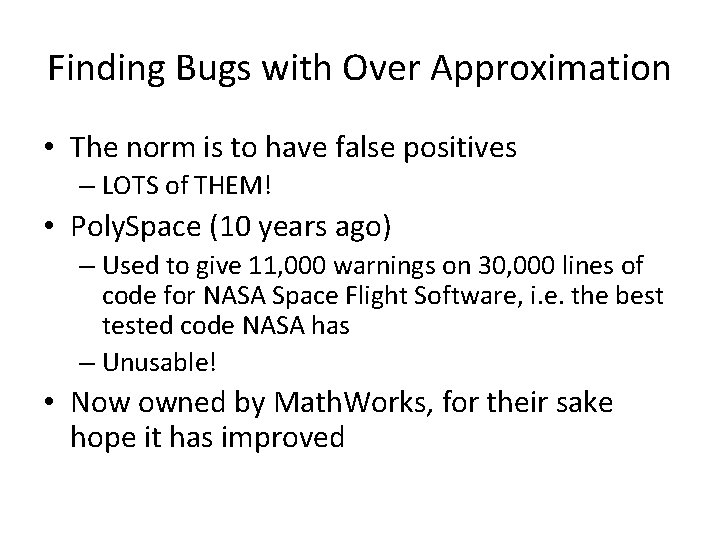

Finding Bugs with Over Approximation

- The norm is to have false positives
  - LOTS of THEM!
- PolySpace (10 years ago)
  - Used to give 11,000 warnings on 30,000 lines of code for NASA space flight software, i.e. the best-tested code NASA has
  - Unusable!
- Now owned by MathWorks; for their sake, I hope it has improved

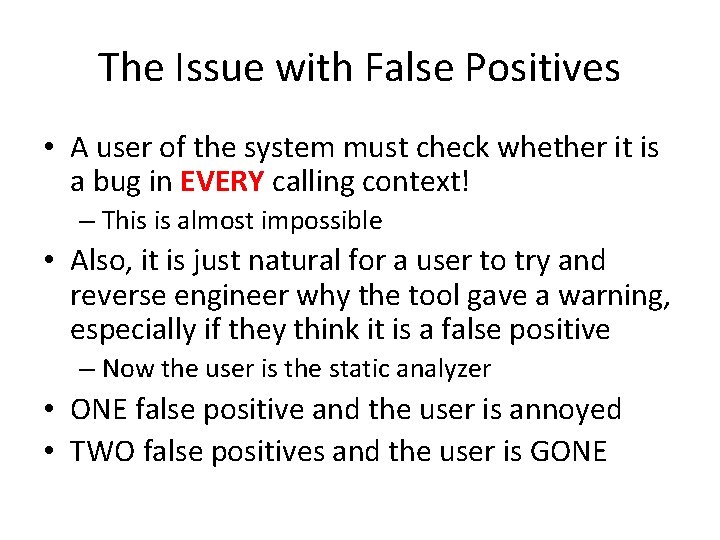

The Issue with False Positives

- A user of the system must check whether it is a bug in EVERY calling context!
  - This is almost impossible
- Also, it is only natural for a user to try to reverse engineer why the tool gave a warning, especially if they think it is a false positive
  - Now the user is the static analyzer
- ONE false positive and the user is annoyed
- TWO false positives and the user is GONE

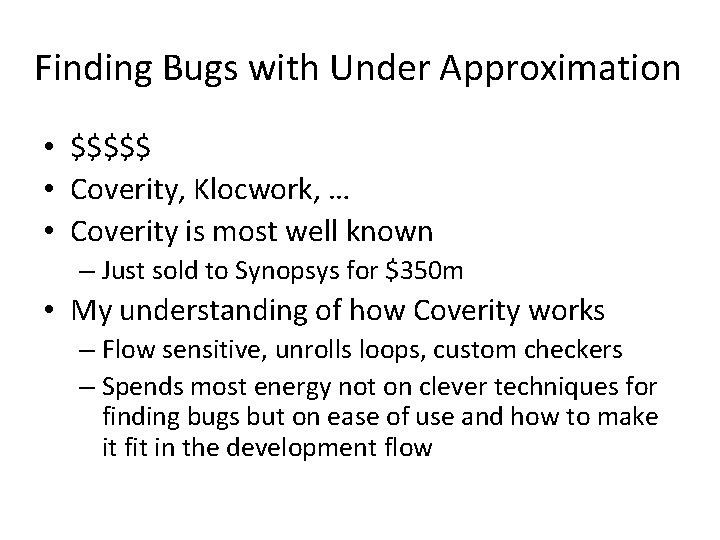

Finding Bugs with Under Approximation

- $$$$$
- Coverity, Klocwork, …
- Coverity is the best known
  - Just sold to Synopsys for $350m
- My understanding of how Coverity works
  - Flow sensitive, unrolls loops, custom checkers
  - Spends most of its energy not on clever bug-finding techniques but on ease of use and on fitting into the development flow

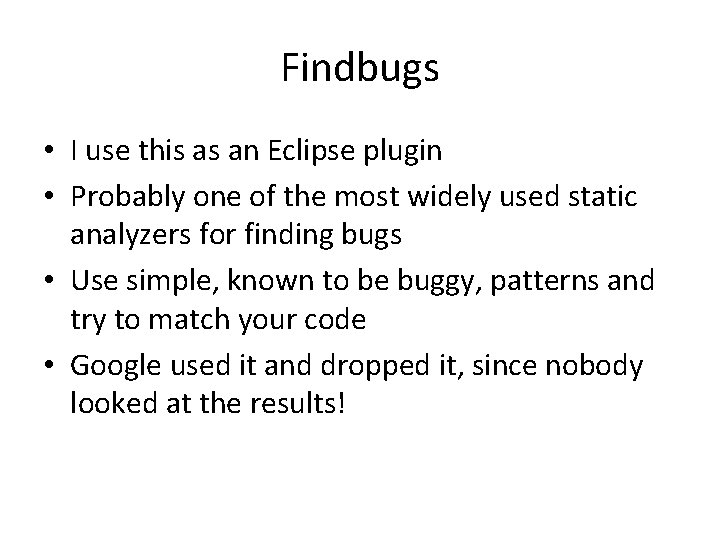

FindBugs

- I use this as an Eclipse plugin
- Probably one of the most widely used static analyzers for finding bugs
- Uses simple patterns known to be buggy and tries to match them against your code
- Google used it and dropped it, since nobody looked at the results!
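
To illustrate the pattern idea, here is a sketch that looks for one well-known buggy pattern: comparing a string against a literal with `==` or `!=`. FindBugs actually works on bytecode with many dedicated detectors. The `StringEqualityDetector` class and its regular expression are hypothetical and far cruder than a real detector, for example missing comparisons between two string variables.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

final class StringEqualityDetector {
    // Matches a string literal on either side of == or != (a crude stand-in
    // for a real bytecode-level detector).
    private static final Pattern LITERAL_COMPARISON =
            Pattern.compile("(==|!=)\\s*\"|\"\\s*(==|!=)");

    // Returns the 1-based line numbers whose text matches the pattern.
    static List<Integer> scan(List<String> sourceLines) {
        List<Integer> hits = new ArrayList<>();
        for (int i = 0; i < sourceLines.size(); i++) {
            if (LITERAL_COMPARISON.matcher(sourceLines.get(i)).find()) {
                hits.add(i + 1);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        List<String> code = List.of(
                "String cmd = readCommand();",
                "if (cmd == \"quit\") return;",       // buggy: reference comparison
                "if (cmd.equals(\"help\")) help();"); // fine
        System.out.println("suspicious lines: " + scan(code)); // [2]
    }
}
```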

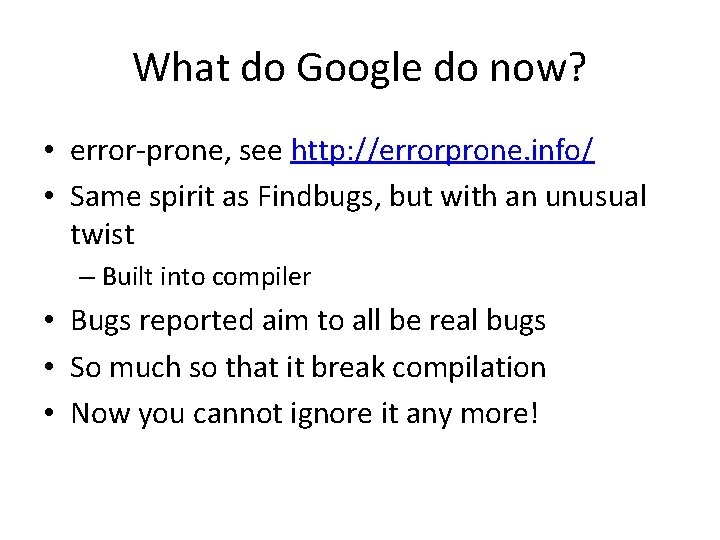

What do Google do now?

- error-prone, see http://errorprone.info/
- Same spirit as FindBugs, but with an unusual twist
  - Built into the compiler
- Bugs reported aim to all be real bugs
  - So much so that they break compilation
  - Now you cannot ignore them any more!

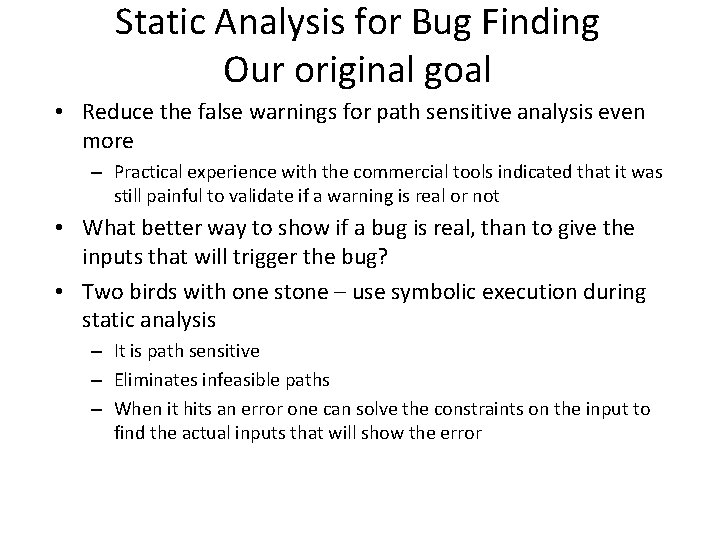

Static Analysis for Bug Finding: Our Original Goal

- Reduce the false warnings for path-sensitive analysis even more
  - Practical experience with the commercial tools indicated that it was still painful to validate whether a warning is real or not
- What better way to show that a bug is real than to give the inputs that will trigger it?
- Two birds with one stone: use symbolic execution during static analysis
  - It is path sensitive
  - It eliminates infeasible paths
  - When it hits an error, one can solve the constraints on the input to find actual inputs that will show the error

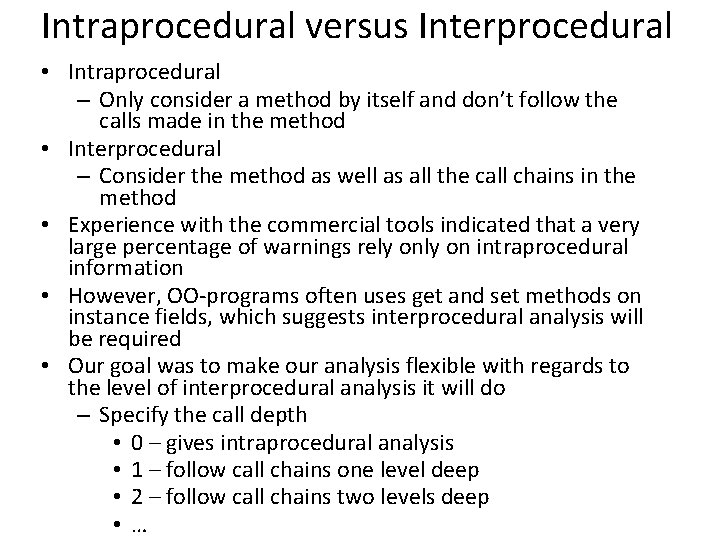

Intraprocedural versus Interprocedural

- Intraprocedural
  - Only consider a method by itself and don’t follow the calls made in the method
- Interprocedural
  - Consider the method as well as all the call chains in the method
- Experience with the commercial tools indicated that a very large percentage of warnings rely on intraprocedural information
- However, OO programs often use get and set methods on instance fields, which suggests interprocedural analysis will be required
- Our goal was to make our analysis flexible with regard to the level of interprocedural analysis it does
  - Specify the call depth
    - 0: intraprocedural analysis
    - 1: follow call chains one level deep
    - 2: follow call chains two levels deep
    - …
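
One way to picture the call-depth parameter is as a bound on how far call edges are followed from the method under analysis. Calls beyond the bound are not analyzed, and their return values stay unconstrained. The sketch below computes which method bodies would be analyzed for a given depth. The call graph and method names are made up for illustration, and the tool's real traversal may differ.

```java
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

final class CallDepth {
    // Methods whose bodies are analyzed when starting at `root` and
    // following call chains at most `depth` levels deep.
    static Set<String> analyzed(Map<String, List<String>> callGraph, String root, int depth) {
        Set<String> result = new LinkedHashSet<>();
        visit(callGraph, root, depth, result);
        return result;
    }

    private static void visit(Map<String, List<String>> graph, String method,
                              int remaining, Set<String> out) {
        out.add(method);
        if (remaining == 0) return; // deeper calls stay unanalyzed
        for (String callee : graph.getOrDefault(method, List.of())) {
            visit(graph, callee, remaining - 1, out);
        }
    }

    public static void main(String[] args) {
        // Hypothetical call graph: foo calls getX and answer; answer calls helper.
        Map<String, List<String>> g = Map.of(
                "foo", List.of("getX", "answer"),
                "answer", List.of("helper"));
        for (int d = 0; d <= 2; d++) {
            System.out.println("depth " + d + ": " + analyzed(g, "foo", d));
        }
    }
}
```

The depth bound alone guarantees termination, even for recursive call graphs.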

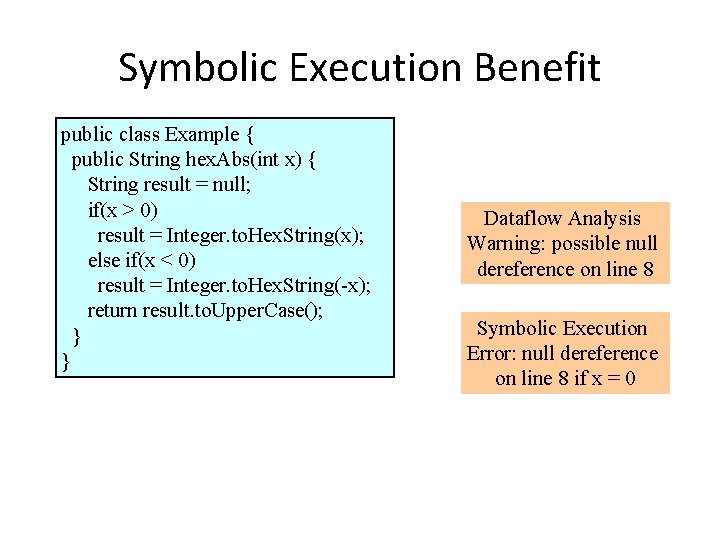

Symbolic Execution Benefit

```java
public class Example {
    public String hexAbs(int x) {
        String result = null;
        if (x > 0)
            result = Integer.toHexString(x);
        else if (x < 0)
            result = Integer.toHexString(-x);
        return result.toUpperCase();
    }
}
```

- Dataflow analysis warning: possible null dereference on line 8
- Symbolic execution error: null dereference on line 8 if x = 0
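
The difference between the two messages comes from tracking a path condition for each path. In the sketch below, the three paths of `hexAbs` are hand-encoded as predicates over `x`. A real tool derives them while executing the code symbolically and hands them to a decision procedure; here a bounded search stands in for the solver. Only the path where neither branch is taken leaves `result` null, and its path condition `!(x > 0) && !(x < 0)` has the single solution `x = 0`. `HexAbsPaths` and its members are hypothetical names.

```java
import java.util.List;
import java.util.OptionalInt;
import java.util.function.IntPredicate;

final class HexAbsPaths {
    // One path through hexAbs: its path condition over x, and whether
    // result is still null when line 8 dereferences it.
    static final class Path {
        final String condition;
        final IntPredicate holds;
        final boolean resultIsNull;

        Path(String condition, IntPredicate holds, boolean resultIsNull) {
            this.condition = condition;
            this.holds = holds;
            this.resultIsNull = resultIsNull;
        }
    }

    // Hand-written; a real tool derives these while executing the code symbolically.
    static final List<Path> PATHS = List.of(
            new Path("x > 0", x -> x > 0, false),
            new Path("!(x > 0) && x < 0", x -> !(x > 0) && x < 0, false),
            new Path("!(x > 0) && !(x < 0)", x -> !(x > 0) && !(x < 0), true));

    // Stand-in for a decision procedure: bounded search for a satisfying x.
    static OptionalInt solve(IntPredicate pathCondition, int bound) {
        for (int x = -bound; x <= bound; x++) {
            if (pathCondition.test(x)) return OptionalInt.of(x);
        }
        return OptionalInt.empty();
    }

    public static void main(String[] args) {
        for (Path p : PATHS) {
            if (!p.resultIsNull) continue; // only null-result paths matter here
            OptionalInt witness = solve(p.holds, 1000);
            if (witness.isPresent()) {
                System.out.println("[" + p.condition + "] null dereference on line 8 if x = "
                        + witness.getAsInt());
            }
        }
    }
}
```

A dataflow analysis that merges the three paths only knows that `result` may be null at line 8. Keeping the paths apart is what yields a concrete witness.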

Terminating the Symbolic Execution

- Set a maximum size the path condition can grow to
- Set a maximum number of times a specific instruction can be revisited during the analysis
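
The two termination criteria can be captured as a small guard consulted before exploration continues. This is a minimal sketch; the class and parameter names (`ExplorationBounds`, `maxPathConditionSize`, `maxRevisits`) are hypothetical, chosen to mirror the bullets. The first visit to an instruction does not count as a revisit, so with `maxRevisits = 3` a loop head is entered at most four times.

```java
import java.util.HashMap;
import java.util.Map;

final class ExplorationBounds {
    private final int maxPathConditionSize;
    private final int maxRevisits;
    // Visit counts per instruction (an int label here) over the whole analysis.
    private final Map<Integer, Integer> visits = new HashMap<>();

    ExplorationBounds(int maxPathConditionSize, int maxRevisits) {
        this.maxPathConditionSize = maxPathConditionSize;
        this.maxRevisits = maxRevisits;
    }

    // True if exploration may reach `instruction` with a path condition of
    // `pcSize` conjuncts; records the visit when the size check passes.
    boolean mayContinue(int instruction, int pcSize) {
        if (pcSize > maxPathConditionSize) return false;
        int seen = visits.merge(instruction, 1, Integer::sum);
        return seen - 1 <= maxRevisits; // the first visit is not a revisit
    }

    public static void main(String[] args) {
        ExplorationBounds bounds = new ExplorationBounds(10, 3);
        int iterations = 0;
        // Simulate unrolling a loop whose head is instruction 4; each
        // iteration adds one conjunct (the loop guard) to the path condition.
        while (bounds.mayContinue(4, iterations + 1)) {
            iterations++;
        }
        System.out.println("loop head explored " + iterations + " times"); // 4
    }
}
```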

Framework

Java classes → Symbolic Execution [SOOT + CVCL] → Warnings → Test Generation [POOC] → Test Cases → Test Execution [Reflection]

- Starts symbolic analysis at each method in the class file(s)
- Symbolic execution detects a possible error and passes it, with the symbolic state, to the test generator
- From the current state and the path condition, generate a test to try to cover the error
- Execute the test and check whether the expected error is triggered
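
The last stage runs a generated test via reflection and checks that the predicted exception occurs. It can be sketched with the standard `java.lang.reflect` API. `ArrayBound` is the class from the Small Example slide, and the inputs `n = 1, m = 0` are that slide's solution. The `ReflectiveReplay.confirms` helper is a hypothetical illustration, not the tool's actual code. Note that `Method.invoke` wraps any exception thrown by the called method in an `InvocationTargetException`, so the expected type is checked against its cause.

```java
import java.lang.reflect.InvocationTargetException;
import java.lang.reflect.Method;

// The class from the "Small Example" slide.
class ArrayBound {
    public void f(int n, int m) {
        int[] array = new int[m];
        for (int i = 0; i < n; i++) {
            array[i] = 0;
        }
    }
}

final class ReflectiveReplay {
    // Runs target.methodName(args) via reflection; returns true if the call
    // threw an exception of the expected type (the warning is real).
    static boolean confirms(Object target, String methodName, Class<?>[] paramTypes,
                            Object[] args, Class<? extends Throwable> expected) {
        try {
            Method method = target.getClass().getMethod(methodName, paramTypes);
            method.invoke(target, args);
            return false; // ran normally: warning not reproduced
        } catch (InvocationTargetException e) {
            // invoke() wraps whatever the called method threw.
            return expected.isInstance(e.getCause());
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("could not run generated test", e);
        }
    }

    public static void main(String[] args) {
        Class<?>[] signature = {int.class, int.class};
        // Inputs from the solver's solution: n = 1, m = 0.
        boolean real = confirms(new ArrayBound(), "f", signature, new Object[] {1, 0},
                ArrayIndexOutOfBoundsException.class);
        System.out.println(real ? "REAL: expected exception caught" : "not reproduced");
    }
}
```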

Small Example

```java
public class ArrayBound {
    public void f(int n, int m) {
        int[] array = new int[m];
        for (int i = 0; i < n; i++) {
            array[i] = 0;
        }
    }
}
```

```
WARNING: possible array upper bound violation (f: 5)
Symbolic state at time of warning:
Method: <ArrayBound: void f(int, int)>
Instruction: array[i] = 0
Line number: 5
Depth: instruction = 6, branch = 1, pc = 4
Path condition: [U1 >= 0, 0 < U0, U1 = len(A0), 0 >= len(A0), len(A0) >= 0]
Parameter values: [U0, U1]
This object: o0
Local vars: [i=0, m=U1, n=U0, this=o0, array=A0]
Solution (1): this = o0, param0 = 1, param1 = 0
Running 2 test(s)...
2) Solution (1)
Testing ArrayBound.f
REAL? Caught expected exception: java.lang.ArrayIndexOutOfBoundsException: 0
Occurred at ArrayBound.f:5
```

Small Example (same warning, simplified path condition)

```
WARNING: possible array upper bound violation (f: 5)
Symbolic state at time of warning:
Method: <ArrayBound: void f(int, int)>
Instruction: array[i] = 0
Line number: 5
Depth: instruction = 6, branch = 1, pc = 4
Path condition: [0 < U0, U1 = len(A0) = 0]
Parameter values: [U0, U1]
This object: o0
Local vars: [i=0, m=U1, n=U0, this=o0, array=A0]
Solution (1): this = o0, n = 1, m = 0
Running 2 test(s)...
2) Solution (1)
Testing ArrayBound.f
REAL? Caught expected exception: java.lang.ArrayIndexOutOfBoundsException: 0
Occurred at ArrayBound.f:5
```

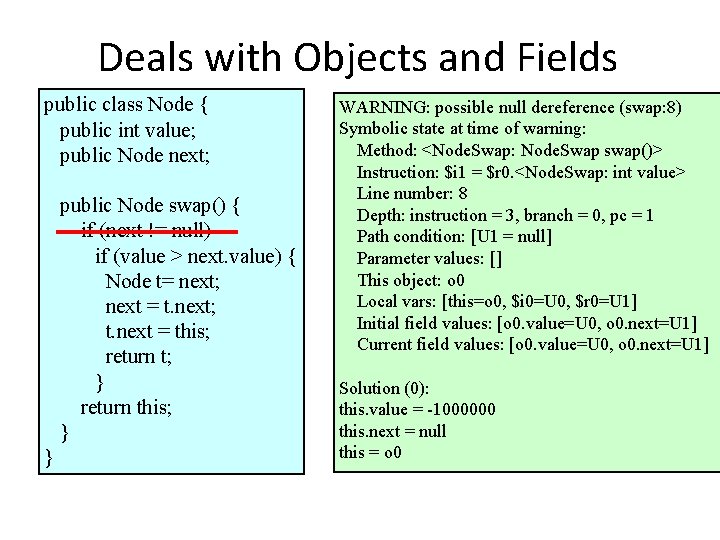

Deals with Objects and Fields

```java
public class Node {
    public int value;
    public Node next;

    public Node swap() {
        if (next != null)
            if (value > next.value) {
                Node t = next;
                next = t.next;
                t.next = this;
                return t;
            }
        return this;
    }
}
```

```
WARNING: possible null dereference (swap: 8)
Symbolic state at time of warning:
Method: <NodeSwap: NodeSwap swap()>
Instruction: $i1 = $r0.<NodeSwap: int value>
Line number: 8
Depth: instruction = 3, branch = 0, pc = 1
Path condition: [U1 = null]
Parameter values: []
This object: o0
Local vars: [this=o0, $i0=U0, $r0=U1]
Initial field values: [o0.value=U0, o0.next=U1]
Current field values: [o0.value=U0, o0.next=U1]
Solution (0): this.value = -1000000, this.next = null, this = o0
```

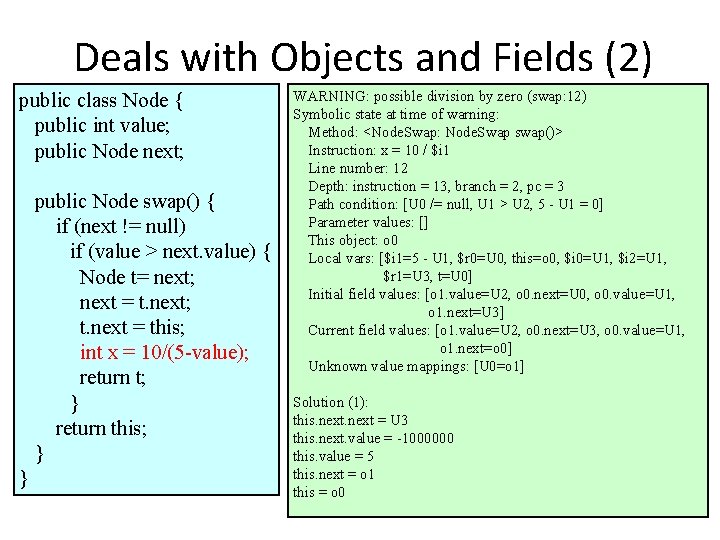

Deals with Objects and Fields (2)

```java
public class Node {
    public int value;
    public Node next;

    public Node swap() {
        if (next != null)
            if (value > next.value) {
                Node t = next;
                next = t.next;
                t.next = this;
                int x = 10 / (5 - value);
                return t;
            }
        return this;
    }
}
```

```
WARNING: possible division by zero (swap: 12)
Symbolic state at time of warning:
Method: <NodeSwap: NodeSwap swap()>
Instruction: x = 10 / $i1
Line number: 12
Depth: instruction = 13, branch = 2, pc = 3
Path condition: [U0 /= null, U1 > U2, 5 - U1 = 0]
Parameter values: []
This object: o0
Local vars: [$i1=5 - U1, $r0=U0, this=o0, $i0=U1, $i2=U1, $r1=U3, t=U0]
Initial field values: [o1.value=U2, o0.next=U0, o0.value=U1, o1.next=U3]
Current field values: [o1.value=U2, o0.next=U3, o0.value=U1, o1.next=o0]
Unknown value mappings: [U0=o1]
Solution (1): this.next = U3, this.next.value = -1000000, this.value = 5, this.next = o1, this = o0
```
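
Turning Solution (1) into a concrete test means building the two objects `o0` and `o1` and wiring their fields as the solver prescribes. Because the fields of this `Node` are public, the sketch below sets them directly. The tool uses reflection for the general case. `NodeSolutionReplay`, `buildSolution1` and `reproducesDivisionByZero` are hypothetical names, and `Node` is nested only to keep the example self-contained.

```java
final class NodeSolutionReplay {
    // The Node class from the slide, including the division.
    static class Node {
        public int value;
        public Node next;

        public Node swap() {
            if (next != null)
                if (value > next.value) {
                    Node t = next;
                    next = t.next;
                    t.next = this;
                    int x = 10 / (5 - value); // throws when value == 5
                    return t;
                }
            return this;
        }
    }

    // Heap described by Solution (1): this = o0, this.next = o1,
    // this.value = 5, this.next.value = -1000000.
    static Node buildSolution1() {
        Node o0 = new Node();
        Node o1 = new Node();
        o0.value = 5;
        o0.next = o1;
        o1.value = -1000000;
        // o1.next is left null: the path condition does not constrain it.
        return o0;
    }

    static boolean reproducesDivisionByZero() {
        try {
            buildSolution1().swap();
            return false;
        } catch (ArithmeticException e) {
            return true;
        }
    }

    public static void main(String[] args) {
        System.out.println(reproducesDivisionByZero() ? "REAL: division by zero" : "not reproduced");
    }
}
```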

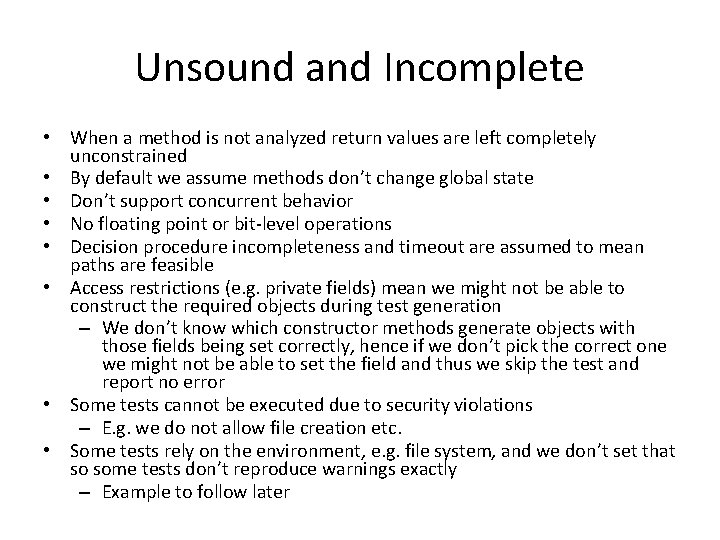

Unsound and Incomplete

- When a method is not analyzed, its return values are left completely unconstrained
- By default we assume methods don’t change global state
- We don’t support concurrent behavior
- No floating-point or bit-level operations
- Decision procedure incompleteness and timeouts are assumed to mean paths are feasible
- Access restrictions (e.g. private fields) mean we might not be able to construct the required objects during test generation
  - We don’t know which constructors generate objects with those fields set correctly; if we don’t pick the correct one, we might not be able to set the field, so we skip the test and report no error
- Some tests cannot be executed due to security violations
  - E.g. we do not allow file creation, etc.
- Some tests rely on the environment, e.g. the file system, which we don’t set up, so some tests don’t reproduce warnings exactly
  - Example to follow later

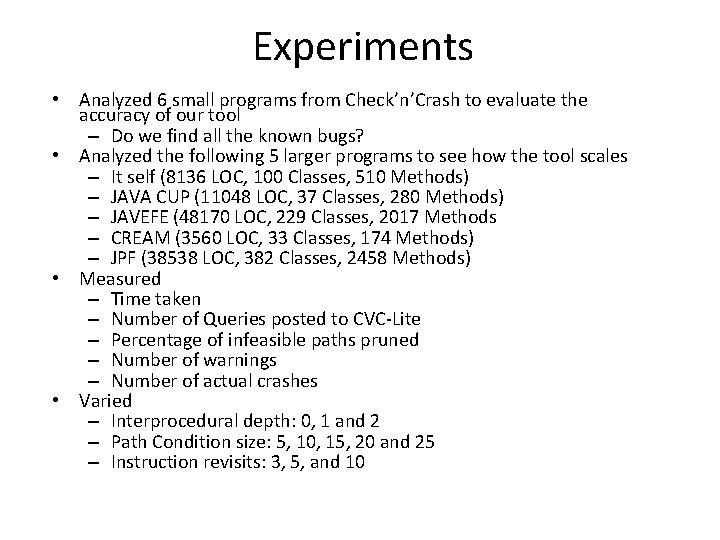

Experiments

- Analyzed 6 small programs from Check’n’Crash to evaluate the accuracy of our tool
  - Do we find all the known bugs?
- Analyzed the following 5 larger programs to see how the tool scales
  - Itself (8136 LOC, 100 classes, 510 methods)
  - JAVA CUP (11048 LOC, 37 classes, 280 methods)
  - JAVAFE (48170 LOC, 229 classes, 2017 methods)
  - CREAM (3560 LOC, 33 classes, 174 methods)
  - JPF (38538 LOC, 382 classes, 2458 methods)
- Measured
  - Time taken
  - Number of queries posted to CVC-Lite
  - Percentage of infeasible paths pruned
  - Number of warnings
  - Number of actual crashes
- Varied
  - Interprocedural depth: 0, 1 and 2
  - Path condition size: 5, 10, 15, 20 and 25
  - Instruction revisits: 3, 5 and 10

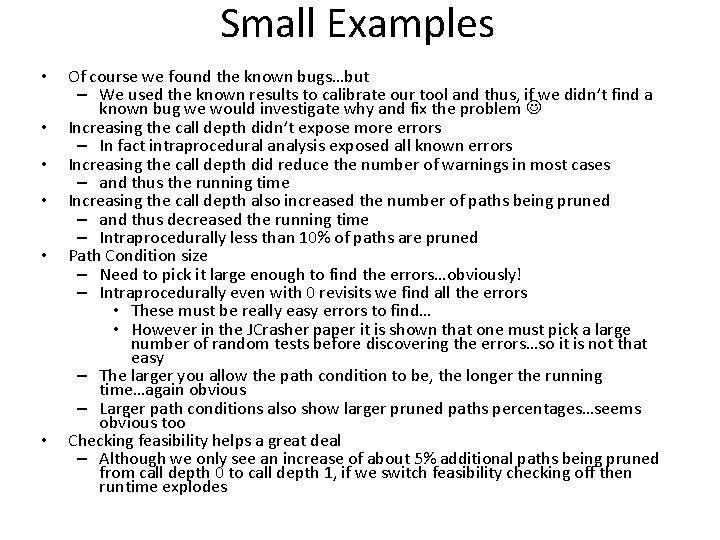

Small Examples

- Of course we found the known bugs…but
  - We used the known results to calibrate our tool, so if we didn’t find a known bug we would investigate why and fix the problem
- Increasing the call depth didn’t expose more errors
  - In fact, intraprocedural analysis exposed all known errors
- Increasing the call depth did reduce the number of warnings in most cases
  - and thus the running time
- Increasing the call depth also increased the number of paths being pruned
  - and thus decreased the running time
  - Intraprocedurally, less than 10% of paths are pruned
- Path condition size
  - Need to pick it large enough to find the errors…obviously!
  - Intraprocedurally, even with 0 revisits we find all the errors
    - These must be really easy errors to find…
    - However, the JCrasher paper shows that one must run a large number of random tests before discovering these errors…so it is not that easy
  - The larger you allow the path condition to be, the longer the running time…again obvious
  - Larger path conditions also show larger percentages of pruned paths…seems obvious too
- Checking feasibility helps a great deal
  - Although we only see about 5% additional paths being pruned from call depth 0 to call depth 1, if we switch feasibility checking off, the runtime explodes

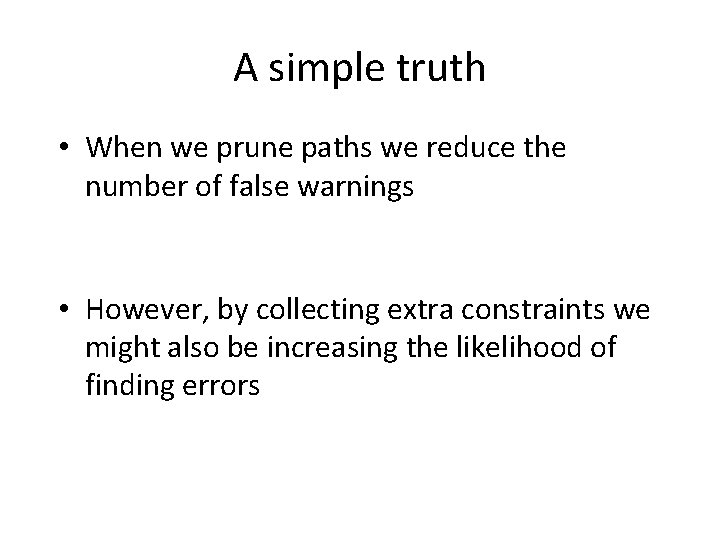

A simple truth

- When we prune paths we reduce the number of false warnings
- However, by collecting extra constraints we might also be increasing the likelihood of finding errors

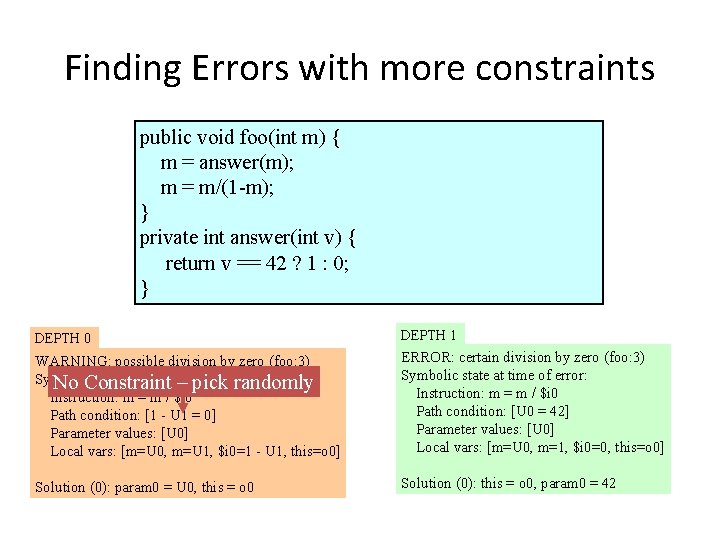

Finding Errors with More Constraints

```java
public void foo(int m) {
    m = answer(m);
    m = m / (1 - m);
}

private int answer(int v) {
    return v == 42 ? 1 : 0;
}
```

DEPTH 0 (no constraint on the parameter, so the test generator picks it randomly):

```
WARNING: possible division by zero (foo: 3)
Symbolic state at time of warning:
Instruction: m = m / $i0
Path condition: [1 - U1 = 0]
Parameter values: [U0]
Local vars: [m=U0, m=U1, $i0=1 - U1, this=o0]
Solution (0): param0 = U0, this = o0
```

DEPTH 1:

```
ERROR: certain division by zero (foo: 3)
Symbolic state at time of error:
Instruction: m = m / $i0
Path condition: [U0 = 42]
Parameter values: [U0]
Local vars: [m=U0, m=1, $i0=0, this=o0]
Solution (0): this = o0, param0 = 42
```
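
The effect of the extra constraint can be reproduced by hand. At depth 0 the return value of `answer` is an unknown `U1`, so the solver can satisfy `1 - U1 = 0` without saying anything about `m`, and the test generator must guess. At depth 1 the body of `answer` is taken into account and the error condition becomes `m = 42`. The sketch contrasts a search that uses `answer`'s body with blind random guessing. `MoreConstraints` and its helpers are hypothetical, and a bounded search stands in for the constraint solver.

```java
import java.util.Random;

final class MoreConstraints {
    static int answer(int v) {
        return v == 42 ? 1 : 0;
    }

    // Depth 1: answer's body is known, so search for an m whose divisor
    // 1 - answer(m) in foo is zero.
    static int solveWithCallee(int bound) {
        for (int m = -bound; m <= bound; m++) {
            if (1 - answer(m) == 0) return m;
        }
        throw new IllegalStateException("no solution within bound");
    }

    // Depth 0: answer is opaque, so m is unconstrained; count how many of
    // `trials` random choices happen to trigger the division by zero.
    static int randomHits(int trials, long seed) {
        Random rnd = new Random(seed);
        int hits = 0;
        for (int i = 0; i < trials; i++) {
            int m = rnd.nextInt();
            if (1 - answer(m) == 0) hits++;
        }
        return hits;
    }

    public static void main(String[] args) {
        System.out.println("depth 1 input: m = " + solveWithCallee(100)); // m = 42
        System.out.println("depth 0 random hits: " + randomHits(1_000_000, 1L) + " / 1000000");
    }
}
```

With about 2^32 possible `int` values and only one that triggers the error, random guessing is expected to find it roughly once in four billion tries.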

Larger Examples

- We don’t know what the actual bugs are in this code
  - Sorry!
- Runtime becomes an issue now
  - For example, for JPF, which seems to be the most complicated code for our tool to deal with, times range from 14 minutes (level 0) to 484 minutes (level 2)
- Call depth 1 now seems to be the sweet spot
  - Most errors for the fewest warnings
  - Conjecture: this is due to the OO programming style
- Drop-off in warnings at level 2
  - But no decrease in running time!
- Path pruning percentages are much higher than for the small examples
  - Even for level 0
  - The methods are obviously much more complex, and thus contain more contradictory branch conditions
  - Switching feasibility checking off now doesn’t work even at level 0
- Behavior is now much more application specific
  - Around 50% of the paths are pruned for Cream, a constraint solver, when using a path condition size limit. This almost seems intuitive…it takes constraints as inputs, so using the size of the constraints as a termination condition allows all constraints up to that size to be analyzed, and about 50% of them will fail.
  - However, for the same code, using revisits as the termination condition, pruned paths max out at 20% (depth 2), and the same number of errors is found in all cases. Now the structure of the code is as important as the inputs.

Larger Examples (2)

- Where are we spending the running time?
  - One would think on the infeasibility checks
  - This is mostly correct
  - For Cream and JPF, 40% of the time is spent doing constraint solving
  - For JAVA CUP, 90% of the time is spent executing the tests
  - Again, very application specific
- Error classes
  - Mostly NullPointerExceptions: around 90% on average
    - Any code without an explicit check for null parameters will cause an error
    - In JAVA CUP we found more ArrayIndexOutOfBounds errors, and it turned out that the code contained explicit checks for null parameters
  - Next category is ArrayIndexOutOfBounds: around 10% on average
  - The rest…
- Code quality
  - JAVA CUP is the most mature product, and we found only about 1 error per KLOC (1000 LOC)
  - For the other, more research-oriented tools, the error ratio ranged from 5 to 12 errors/KLOC
  - Note that the number of warnings may not be a good predictor of code quality
    - JAVA CUP and Cream had almost the same number of warnings/KLOC, but Cream had 5x more errors/KLOC
  - It would be interesting to investigate further how well the tool predicts code quality

Future Work

- Test generation
  - Achilles heel of the current system
  - Need a better approach to create objects
    - Currently we pick constructors randomly, starting with one that takes no parameters
  - Should produce code sequences to generate the tests, since that will allow standalone execution via JUnit
    - Currently we use reflection to set fields directly and run the tests during the analysis

(Stamped on the slide: “DONE! MSc thesis by Willem Bester at Stellenbosch”)
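
The current strategy is to pick a no-argument constructor and then force field values in via reflection. With `java.lang.reflect` it might look roughly like the sketch below. The `Account` class and the `instantiate` helper are hypothetical. The private field is set without any constructor or setter, which works during the analysis. However, the resulting object is not described by a sequence of ordinary calls that could be written out as a standalone JUnit test.

```java
import java.lang.reflect.Constructor;
import java.lang.reflect.Field;
import java.util.Map;

final class ReflectiveBuilder {
    // Hypothetical class under test: a private field with no setter.
    static final class Account {
        private int balance;

        Account() {}

        int balance() {
            return balance;
        }
    }

    // Creates an instance via the no-argument constructor, then forces each
    // requested field value in with reflection, ignoring access modifiers.
    static <T> T instantiate(Class<T> type, Map<String, ?> fieldValues) {
        try {
            Constructor<T> ctor = type.getDeclaredConstructor();
            ctor.setAccessible(true);
            T obj = ctor.newInstance();
            for (Map.Entry<String, ?> entry : fieldValues.entrySet()) {
                Field field = type.getDeclaredField(entry.getKey());
                field.setAccessible(true);        // bypasses 'private'
                field.set(obj, entry.getValue()); // unboxes Integer for an int field
            }
            return obj;
        } catch (ReflectiveOperationException e) {
            // e.g. no no-argument constructor: such a test would be skipped
            throw new IllegalStateException("cannot build " + type.getName(), e);
        }
    }

    public static void main(String[] args) {
        Account a = instantiate(Account.class, Map.of("balance", 250));
        System.out.println("balance = " + a.balance()); // balance = 250
    }
}
```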

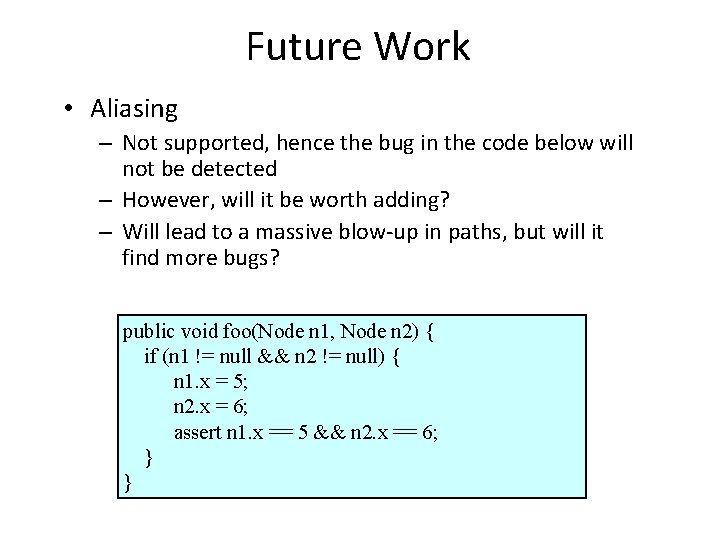

Future Work

- Aliasing
  - Not supported, hence the bug in the code below will not be detected
  - However, will it be worth adding?
  - It will lead to a massive blow-up in paths, but will it find more bugs?

```java
public void foo(Node n1, Node n2) {
    if (n1 != null && n2 != null) {
        n1.x = 5;
        n2.x = 6;
        assert n1.x == 5 && n2.x == 6;
    }
}
```
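
The missed bug only shows up when both parameters refer to the same object. An analysis that treats `n1` and `n2` as distinct symbolic objects never considers that case. The sketch below replaces the `assert` with a returned boolean so it behaves the same without the JVM's `-ea` flag. The nested `Node` with a single `int` field `x` is an assumption, since the slide does not show its declaration.

```java
final class AliasingDemo {
    // Assumed shape of Node from the slide: a single int field x.
    static final class Node {
        int x;
    }

    // Same body as foo, but returns whether the slide's assertion holds.
    static boolean fooAssertionHolds(Node n1, Node n2) {
        if (n1 != null && n2 != null) {
            n1.x = 5;
            n2.x = 6; // if n1 == n2, this overwrites n1.x
            return n1.x == 5 && n2.x == 6;
        }
        return true; // assertion not reached
    }

    public static void main(String[] args) {
        System.out.println("distinct: " + fooAssertionHolds(new Node(), new Node())); // true
        Node shared = new Node();
        System.out.println("aliased:  " + fooAssertionHolds(shared, shared));         // false
    }
}
```

Supporting aliasing would mean exploring, for every pair of same-typed references, both the "same object" and the "different objects" case. This is the source of the path blow-up mentioned above.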

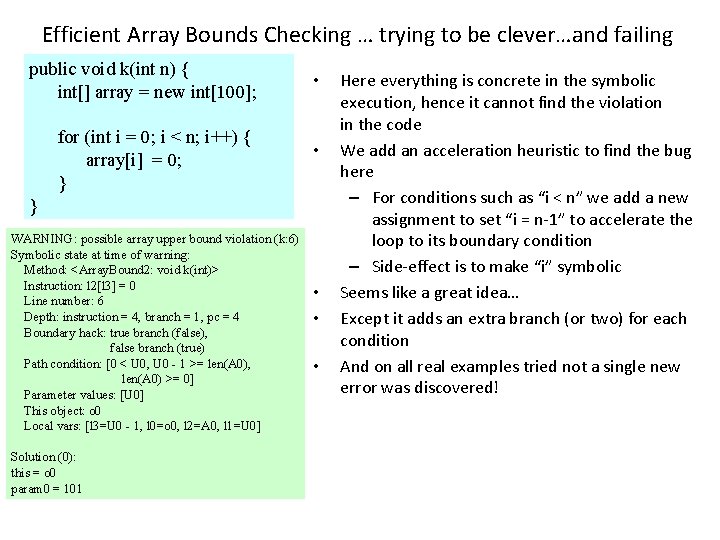

Efficient Array Bounds Checking … trying to be clever…and failing

```java
public void k(int n) {
    int[] array = new int[100];
    for (int i = 0; i < n; i++) {
        array[i] = 0;
    }
}
```

```
WARNING: possible array upper bound violation (k: 6)
Symbolic state at time of warning:
Method: <ArrayBound2: void k(int)>
Instruction: l2[l3] = 0
Line number: 6
Depth: instruction = 4, branch = 1, pc = 4
Boundary hack: true branch (false), false branch (true)
Path condition: [0 < U0, U0 - 1 >= len(A0), len(A0) >= 0]
Parameter values: [U0]
This object: o0
Local vars: [l3=U0 - 1, l0=o0, l2=A0, l1=U0]
Solution (0): this = o0, param0 = 101
```

- Here everything except n is concrete in the symbolic execution (the loop counter i and the array length), so within the revisit bound it never reaches i = 100 and cannot find the violation in the code
- We add an acceleration heuristic to find the bug here
  - For conditions such as “i < n” we add a new assignment setting “i = n-1” to accelerate the loop to its boundary condition
  - A side effect is to make “i” symbolic
- Seems like a great idea…
- Except it adds an extra branch (or two) for each condition
- And on all the real examples tried, not a single new error was discovered!
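
The heuristic replaces many concrete iterations with a jump to the last one: for a guard `i < n`, assume `i = n - 1` and ask whether that index can be out of bounds. For `k`, this gives the condition `0 < n && n - 1 >= 100`, whose smallest solution is `n = 101`, matching the solution in the output above. The sketch below checks the accelerated condition and then confirms it by running the loop concretely. `LoopAcceleration` and its methods are hypothetical names, and the upward search stands in for the solver.

```java
final class LoopAcceleration {
    static final int LENGTH = 100; // array length in k

    static void k(int n) {
        int[] array = new int[LENGTH];
        for (int i = 0; i < n; i++) {
            array[i] = 0;
        }
    }

    // Accelerated check: jump straight to the last iteration, i = n - 1,
    // and test the upper-bound violation condition there.
    static boolean acceleratedViolation(int n) {
        return 0 < n && n - 1 >= LENGTH;
    }

    // Smallest n (searching upward from 1) satisfying the accelerated condition.
    static int smallestViolatingN() {
        int n = 1;
        while (!acceleratedViolation(n)) n++;
        return n;
    }

    public static void main(String[] args) {
        int n = smallestViolatingN();
        System.out.println("n = " + n); // n = 101
        try {
            k(n);
        } catch (ArrayIndexOutOfBoundsException e) {
            System.out.println("confirmed by concrete run: " + e.getMessage());
        }
    }
}
```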

Future Work

```java
File dataDir = new File(dataDirName);
File[] conflicts = dataDir.listFiles();
TesterThread[] threadList = new TesterThread[10];
for (int i = 0; i < threadList.length; ++i) {
    FileInputStream fis = new FileInputStream(conflicts[i]);
    …
```

- Environment generation
  - Actual bug in NASA software
  - Could not be discovered since it involves interaction with the environment
    - It needs fewer than 10 files in the directory
- This problem also makes it hard to do whole-program symbolic analysis
  - Typically a program reads input from a file (etc.), so we can find potential errors symbolically but will never be able to run the program to find an actual execution that shows the error
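
Reproducing this bug needs an environment rather than just input values: a directory holding fewer than 10 files. The tool's own test runner forbids file creation, so it cannot build that environment. The sketch below does so with `java.nio.file` and runs a simplified version of the loop, in which `TesterThread` and the thread work are dropped. `EnvironmentGeneration` and its methods are hypothetical names.

```java
import java.io.File;
import java.io.FileInputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;

final class EnvironmentGeneration {
    static final int THREADS = 10; // size of the slide's threadList

    // Simplified slide loop (threads dropped): opens one file per slot,
    // implicitly assuming the directory holds at least THREADS files.
    static int openConflicts(File dataDir) throws IOException {
        File[] conflicts = dataDir.listFiles();
        int opened = 0;
        for (int i = 0; i < THREADS; ++i) {
            try (FileInputStream fis = new FileInputStream(conflicts[i])) {
                opened++;
            }
        }
        return opened;
    }

    // Generated environment: a fresh temporary directory with `count` empty files.
    static File makeDataDir(int count) throws IOException {
        Path dir = Files.createTempDirectory("conflicts");
        dir.toFile().deleteOnExit();
        for (int i = 0; i < count; i++) {
            Path file = Files.createFile(dir.resolve("file" + i));
            file.toFile().deleteOnExit(); // deleted before the directory (reverse order)
        }
        return dir.toFile();
    }

    // True if the loop fails with an out-of-bounds error in a directory of `count` files.
    static boolean crashesWith(int count) {
        try {
            openConflicts(makeDataDir(count));
            return false;
        } catch (ArrayIndexOutOfBoundsException e) {
            return true;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("3 files:  crash = " + crashesWith(3));  // true
        System.out.println("10 files: crash = " + crashesWith(10)); // false
    }
}
```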

Related Work

- There is a large amount of related work
  - We focus on the most closely related
- Check’n’Crash
  - Same basic idea, but uses ESC/Java for symbolic execution and thus is not as flexible as our framework
- Concolic testing
  - Also uses a combination of symbolic execution and concrete execution
  - However, there concrete execution drives the analysis, whereas for us symbolic execution drives the concrete execution
  - A good comparison is required here

Conclusions

- Showed a simple approach to eliminating false warnings during static analysis
  - Initial implementation took about 6 weeks
    - Symbolic execution was (almost) “trivial”
    - Refinements to the test generation took another 10 weeks!
- Interprocedural analysis didn’t seem to help uncover more bugs
  - But the error classes were very simple
  - Will it still hold if we look for more behavioral errors?
    - Will the symbolic execution scale to allow looking for deep behavioral errors? Unlikely.
- We have yet another data point that most of the work in these kinds of tools goes into presenting the errors!
  - We have no UI, just one BIG output file
  - We used about 10 different Perl and shell scripts to extract the relevant data
- I think this system, as it is now, is very good at measuring code quality, but it will need a lot of work to become a serious player in the commercial world

What happened in Industry?

- Tried to apply this to real code in a company
- Found 100s of “real” errors
  - API methods that dereference their inputs without a null check were the most common
- The only problem was that the developers had “hidden” preconditions; for example, they knew never to call the code with a null
  - So they didn’t consider it a bug
- The best we can thus do is to generate explicit preconditions
- A new version of the tool also generates JUnit tests, which developers find more useful, since they can use them for regression testing
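
Making a hidden precondition explicit turns a reported "bug" into documented behavior. The method states up front that `null` is not allowed, instead of failing with an unexplained `NullPointerException`. The sketch below uses the standard `Objects.requireNonNull`. The `formatName` methods are hypothetical, and this shows only one way to express such a precondition in plain Java. It is not necessarily the form the tool generates.

```java
import java.util.Locale;
import java.util.Objects;

final class ExplicitPreconditions {
    // Before: hidden precondition; a null argument fails with an undocumented NPE.
    static String formatNameUnchecked(String name) {
        return name.trim().toUpperCase(Locale.ROOT);
    }

    /**
     * After: the precondition is stated and checked on entry.
     *
     * @param name the name to format; must not be null
     * @throws NullPointerException if {@code name} is null
     */
    static String formatName(String name) {
        Objects.requireNonNull(name, "name must not be null");
        return name.trim().toUpperCase(Locale.ROOT);
    }

    public static void main(String[] args) {
        System.out.println(formatName("  visser ")); // VISSER
        try {
            formatName(null);
        } catch (NullPointerException e) {
            System.out.println("rejected: " + e.getMessage()); // rejected: name must not be null
        }
    }
}
```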
