Review of Probability

Probability Theory:
- Many techniques in speech processing require the manipulation of probabilities and statistics.
- The two principal application areas we will encounter are:
  - Statistical pattern recognition.
  - Modeling of linear systems.

Events:
- It is customary to refer to the probability of an event.
- An event is a certain set of possible outcomes of an experiment or trial.
- Outcomes are assumed to be mutually exclusive and, taken together, to cover all possibilities.

Axioms of Probability:
- To any event A we can assign a number, P(A), which satisfies the following axioms:
  - P(A) ≥ 0.
  - P(S) = 1, where S is the certain event (the set of all possible outcomes).
  - If A and B are mutually exclusive, then P(A+B) = P(A) + P(B).
- The number P(A) is called the probability of A.

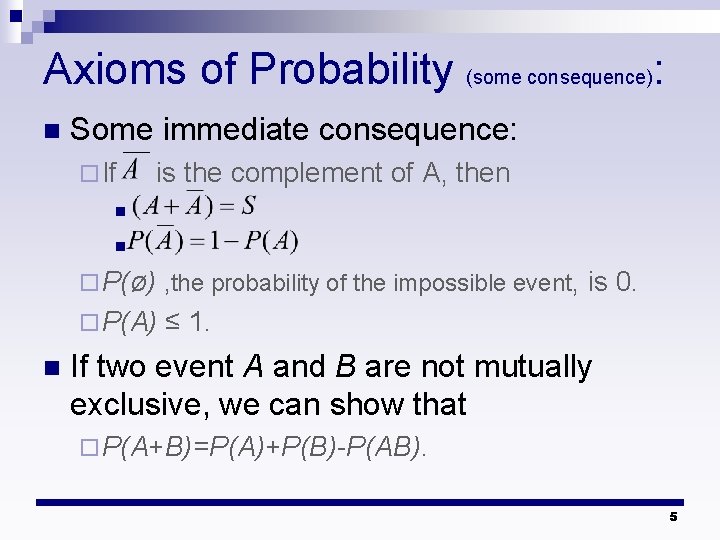

Axioms of Probability (some consequences):
- Some immediate consequences:
  - If Ā is the complement of A, then P(Ā) = 1 − P(A).
  - P(ø), the probability of the impossible event, is 0.
  - P(A) ≤ 1.
- If two events A and B are not mutually exclusive, we can show that
  - P(A+B) = P(A) + P(B) − P(AB).
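As a quick check of these consequences, the sketch below enumerates the outcomes of a fair six-sided die. The die, the events A and B, and the helper `prob` are illustrative assumptions, not part of the original slides; set union plays the role of A+B and set intersection the role of AB.

```python
from fractions import Fraction

# Sample space S: the six equally likely faces of a fair die (illustrative choice).
S = frozenset(range(1, 7))

def prob(event):
    """Probability of an event (a subset of S) when all outcomes are equally likely."""
    return Fraction(len(event & S), len(S))

A = frozenset({2, 4, 6})   # "the face is even"
B = frozenset({4, 5, 6})   # "the face is greater than 3"

p_union = prob(A | B)                              # P(A+B), computed directly
p_incl_excl = prob(A) + prob(B) - prob(A & B)      # P(A) + P(B) - P(AB)
p_complement = prob(S - A)                         # probability of the complement of A

print(p_union, p_incl_excl, p_complement)
```

Because A and B overlap in {4, 6}, simply adding P(A) and P(B) would count that overlap twice; subtracting P(AB) corrects for it.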

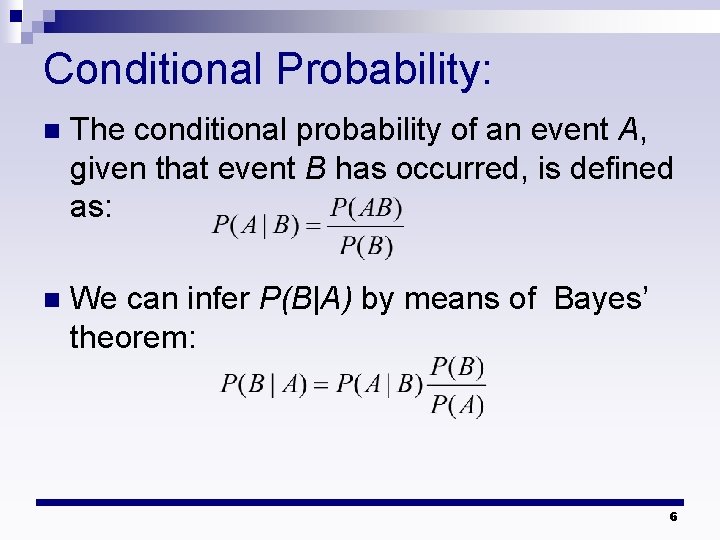

Conditional Probability:
- The conditional probability of an event A, given that event B has occurred, is defined as: P(A|B) = P(AB) / P(B), for P(B) > 0.
- We can infer P(B|A) by means of Bayes' theorem: P(B|A) = P(A|B) P(B) / P(A).
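A small numeric sketch of Bayes' theorem may help here. The function name `bayes_posterior` and the probabilities used are hypothetical values chosen for illustration; P(A) is obtained from the law of total probability over B and its complement.

```python
from fractions import Fraction

def bayes_posterior(p_a_given_b, p_b, p_a_given_not_b):
    """Return P(B|A) = P(A|B) P(B) / P(A).

    P(A) is expanded by total probability:
    P(A) = P(A|B) P(B) + P(A|not B) (1 - P(B)).
    """
    p_a = p_a_given_b * p_b + p_a_given_not_b * (1 - p_b)
    return p_a_given_b * p_b / p_a

# Hypothetical numbers: B occurs 20% of the time; A is observed
# 90% of the time when B holds and 10% of the time when it does not.
posterior = bayes_posterior(Fraction(9, 10), Fraction(1, 5), Fraction(1, 10))
print(posterior)
```

Exact fractions are used so the result can be compared with a hand calculation without rounding error.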

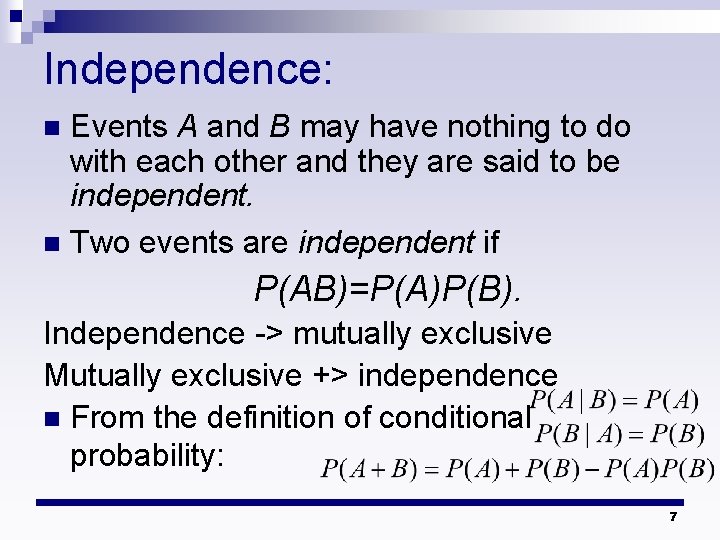

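Independence:
- Events A and B may have nothing to do with each other, in which case they are said to be independent.
- Two events are independent if
  - P(AB) = P(A)P(B).
- Independence and mutual exclusivity are different properties, and neither implies the other: if P(A) > 0 and P(B) > 0, mutually exclusive events have P(AB) = 0 ≠ P(A)P(B), so they cannot be independent.
- From the definition of conditional probability, if A and B are independent: P(A|B) = P(AB) / P(B) = P(A).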

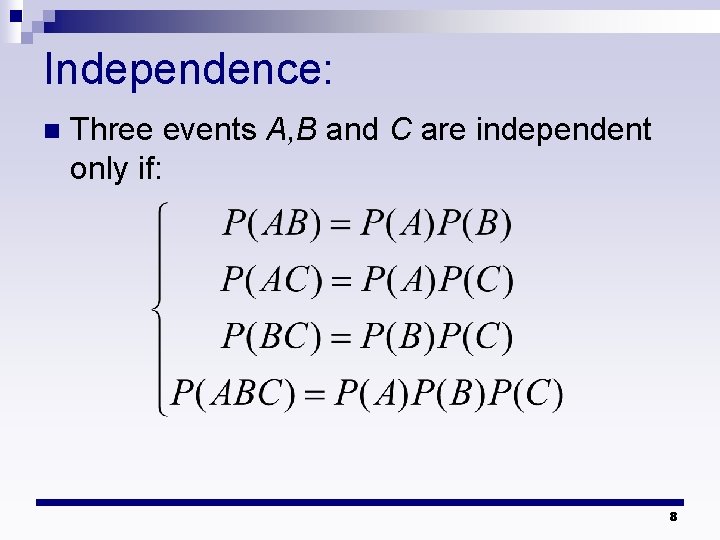

Independence:
- Three events A, B and C are independent only if:
  - P(AB) = P(A)P(B),
  - P(AC) = P(A)P(C),
  - P(BC) = P(B)P(C),
  - P(ABC) = P(A)P(B)P(C).
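For three events, the product rule must hold for every pair and for all three events jointly; pairwise independence alone is not enough. The sketch below uses a standard textbook counterexample (two fair coin flips), which is an illustrative assumption and not taken from the slides.

```python
from fractions import Fraction
from itertools import product

# Two fair coin flips: four equally likely outcomes.
outcomes = list(product("HT", repeat=2))

def prob(pred):
    """Probability that predicate `pred` holds over the equally likely outcomes."""
    return Fraction(sum(1 for o in outcomes if pred(o)), len(outcomes))

A = lambda o: o[0] == "H"     # first flip is heads
B = lambda o: o[1] == "H"     # second flip is heads
C = lambda o: o[0] == o[1]    # both flips agree

pairwise_independent = (
    prob(lambda o: A(o) and B(o)) == prob(A) * prob(B)
    and prob(lambda o: A(o) and C(o)) == prob(A) * prob(C)
    and prob(lambda o: B(o) and C(o)) == prob(B) * prob(C)
)
triple_product_holds = (
    prob(lambda o: A(o) and B(o) and C(o)) == prob(A) * prob(B) * prob(C)
)

print(pairwise_independent, triple_product_holds)
```

Here every pair satisfies the product rule, yet knowing A and B determines C completely, so the three events are not independent.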

Random Variables:
- A random variable is a number chosen at random as the outcome of an experiment.
- A random variable may be real or complex and may be discrete or continuous.
  - In S.P., the random variables encountered are most often real and discrete.
- We can characterize a random variable by its probability distribution or by its probability density function (pdf).

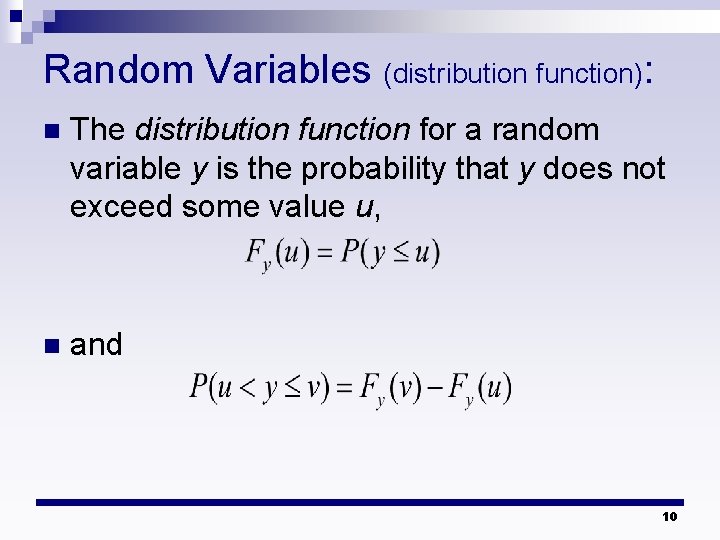

Random Variables (distribution function):
- The distribution function for a random variable y is the probability that y does not exceed some value u: $F_y(u) = P(y \le u)$,
- and $P(u < y \le v) = F_y(v) - F_y(u)$.

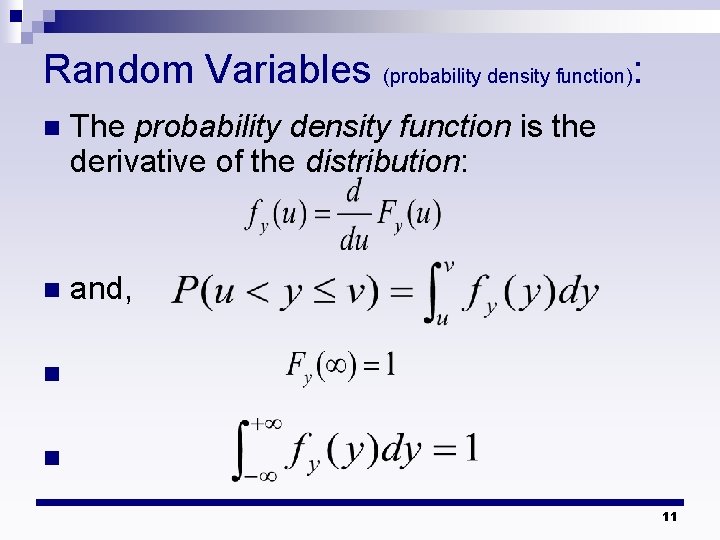

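Random Variables (probability density function):
- The probability density function is the derivative of the distribution: $f_y(u) = \dfrac{dF_y(u)}{du}$, where $F_y(u) = P(y \le u)$ is the distribution function,
- and,
  - $P(u < y \le v) = \int_u^v f_y(t)\,dt$,
  - $\int_{-\infty}^{\infty} f_y(t)\,dt = 1$.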

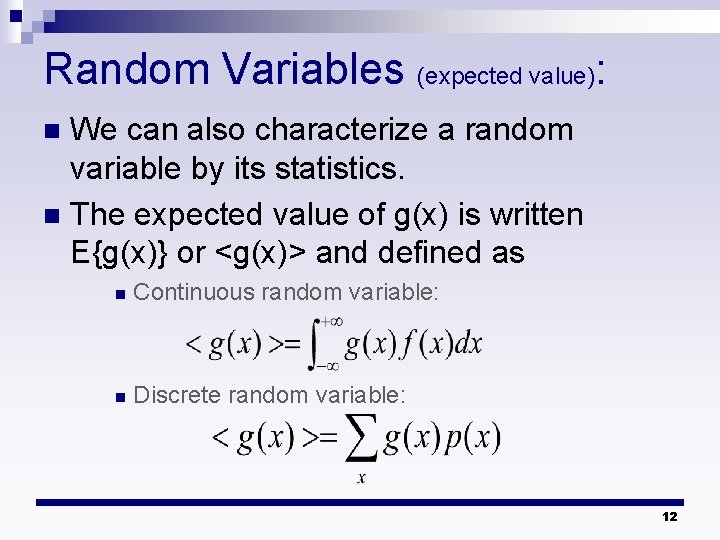

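Random Variables (expected value):
- We can also characterize a random variable by its statistics.
- The expected value of g(x) is written E{g(x)} or <g(x)> and defined as
  - Continuous random variable: $E\{g(x)\} = \int_{-\infty}^{\infty} g(x)\,f(x)\,dx$, where f(x) is the probability density of x.
  - Discrete random variable: $E\{g(x)\} = \sum_x g(x)\,p(x)$, where p(x) is the probability of the value x.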

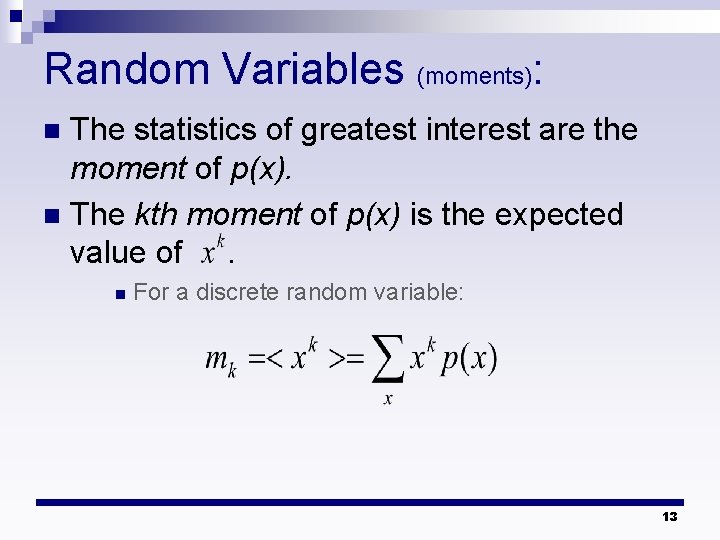

Random Variables (moments):
- The statistics of greatest interest are the moments of p(x).
- The kth moment of p(x) is the expected value of $x^k$: $m_k = E\{x^k\}$.
- For a discrete random variable: $m_k = \sum_x x^k\,p(x)$.

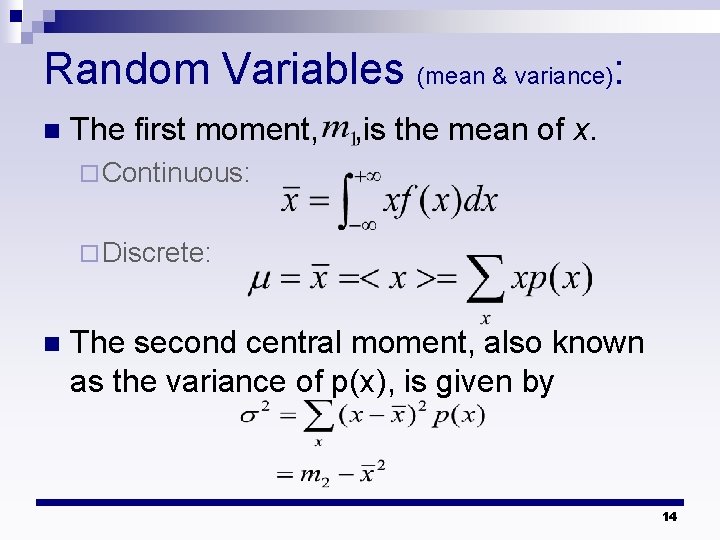

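Random Variables (mean & variance):
- The first moment, $m_1 = \bar{x}$, is the mean of x.
  - Continuous: $\bar{x} = \int_{-\infty}^{\infty} x\,f(x)\,dx$
  - Discrete: $\bar{x} = \sum_x x\,p(x)$
- The second central moment, also known as the variance of p(x), is given by $\sigma^2 = E\{(x - \bar{x})^2\} = E\{x^2\} - \bar{x}^2$.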
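The moment definitions for a discrete random variable can be sketched in a few lines. The helper `moment` and the fair-die distribution are illustrative assumptions; the variance is computed as the second moment minus the squared mean.

```python
from fractions import Fraction

def moment(pmf, k):
    """k-th moment of a discrete distribution given as {value: probability}."""
    return sum((x ** k) * p for x, p in pmf.items())

# Illustrative distribution: a fair six-sided die.
pmf = {x: Fraction(1, 6) for x in range(1, 7)}

mean = moment(pmf, 1)                      # first moment
variance = moment(pmf, 2) - mean ** 2      # second central moment

print(mean, variance)
```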

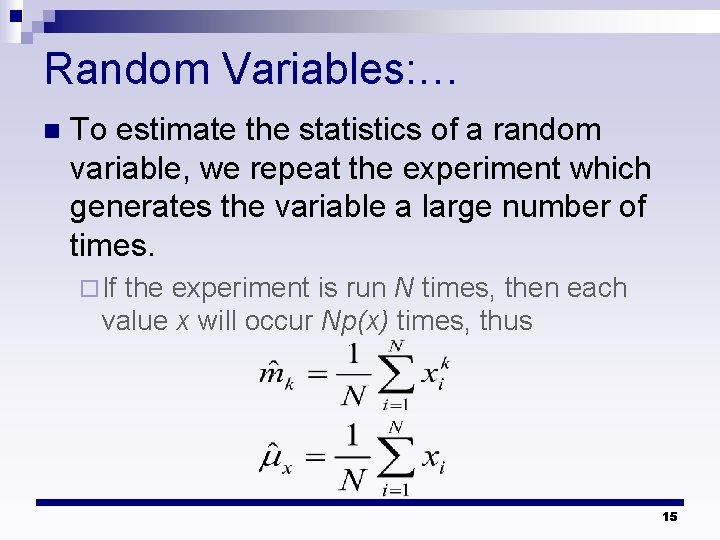

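Random Variables: …
- To estimate the statistics of a random variable, we repeat the experiment which generates the variable a large number of times.
  - If the experiment is run N times, then each value x will occur approximately Np(x) times, thus $\bar{x} \approx \frac{1}{N}\sum_{i=1}^{N} x_i$ and $m_k \approx \frac{1}{N}\sum_{i=1}^{N} x_i^k$, where $x_i$ is the value observed on the ith run.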
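Estimating statistics by repeating an experiment can be sketched with a simulated die. The seed, the number of runs N, and the die itself are arbitrary choices made for this illustration; the estimates should land near the exact mean 3.5 and variance 35/12, but only approximately.

```python
import random

rng = random.Random(0)     # fixed seed so the run is repeatable (arbitrary choice)
N = 100_000                # number of repetitions of the experiment

# Each run of the "experiment" is one roll of a fair six-sided die.
samples = [rng.randint(1, 6) for _ in range(N)]

# Averages over the N runs stand in for the expected values.
sample_mean = sum(samples) / N
sample_second_moment = sum(x * x for x in samples) / N
sample_variance = sample_second_moment - sample_mean ** 2

print(sample_mean, sample_variance)
```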

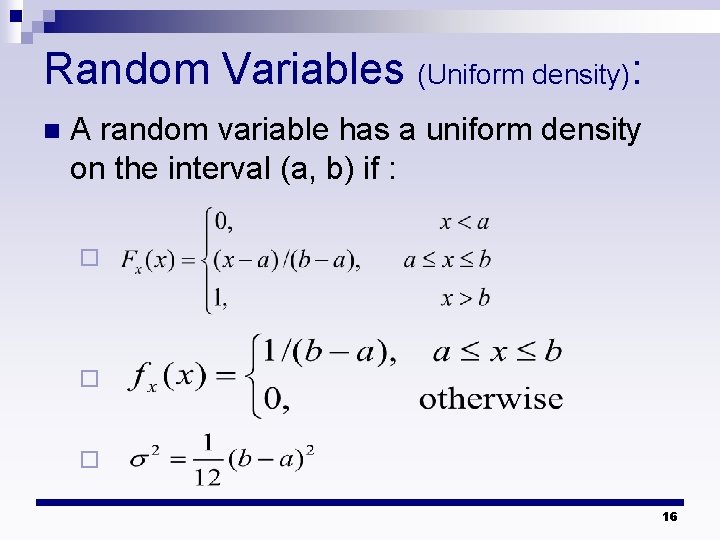

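Random Variables (Uniform density):
- A random variable has a uniform density on the interval (a, b) if:
  - $f(x) = \dfrac{1}{b - a}$ for $a < x < b$, and $f(x) = 0$ otherwise.
  - Its mean is then $\bar{x} = \dfrac{a + b}{2}$.
  - Its variance is then $\sigma^2 = \dfrac{(b - a)^2}{12}$.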

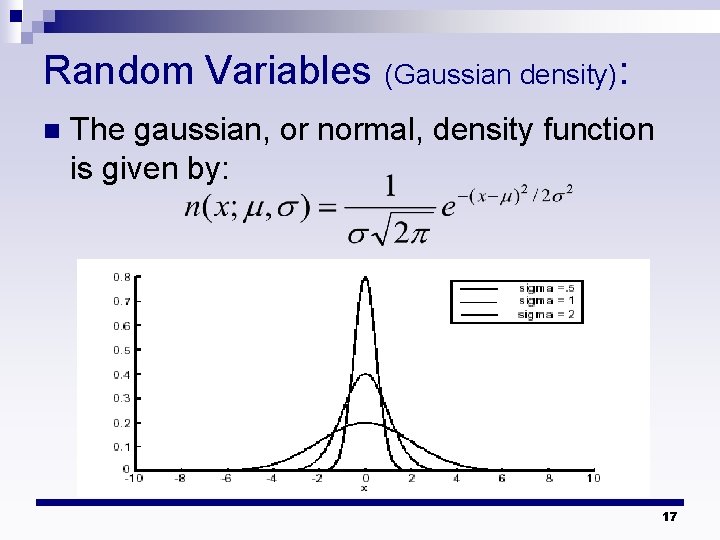

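Random Variables (Gaussian density):
- The gaussian, or normal, density function is given by: $f(x) = \dfrac{1}{\sigma\sqrt{2\pi}} \exp\!\left(-\dfrac{(x - \bar{x})^2}{2\sigma^2}\right)$, where $\bar{x}$ is the mean and $\sigma^2$ the variance.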

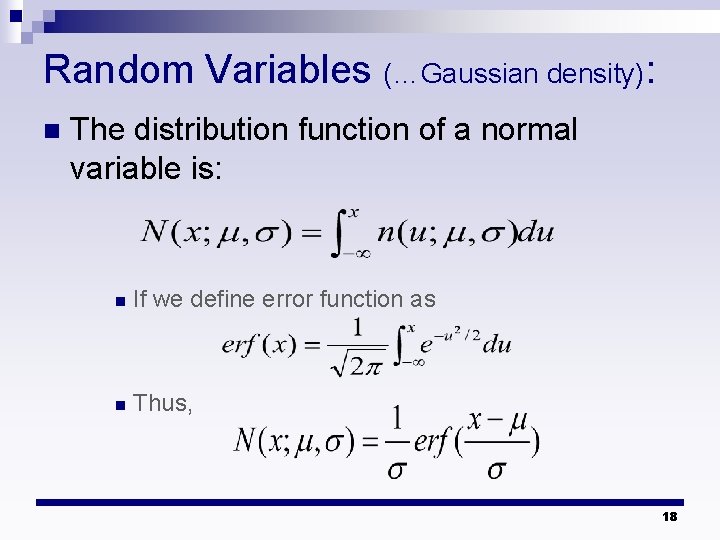

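Random Variables (…Gaussian density):
- The distribution function of a normal variable with mean $\bar{x}$ and variance $\sigma^2$ is: $F(u) = \dfrac{1}{\sigma\sqrt{2\pi}} \int_{-\infty}^{u} \exp\!\left(-\dfrac{(x - \bar{x})^2}{2\sigma^2}\right) dx$.
- If we define the error function (in its standard form) as $\mathrm{erf}(z) = \dfrac{2}{\sqrt{\pi}} \int_0^z e^{-t^2}\,dt$,
- Thus, $F(u) = \dfrac{1}{2}\left[1 + \mathrm{erf}\!\left(\dfrac{u - \bar{x}}{\sigma\sqrt{2}}\right)\right]$.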
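The normal distribution function can be evaluated through the error function. The sketch below assumes the standard definition of erf, as implemented by Python's `math.erf`; the function name `normal_cdf` and its parameter names are chosen for this example.

```python
import math

def normal_cdf(u, mean=0.0, sigma=1.0):
    """Distribution function of a normal variable, written with the standard erf.

    F(u) = 0.5 * (1 + erf((u - mean) / (sigma * sqrt(2))))
    """
    return 0.5 * (1.0 + math.erf((u - mean) / (sigma * math.sqrt(2.0))))

# Probability that a normal variable falls within one standard deviation of its mean.
within_one_sigma = normal_cdf(1.0) - normal_cdf(-1.0)
print(normal_cdf(0.0), within_one_sigma)
```

If a text defines its error function with a different scaling, the argument and the constant factors change accordingly, so it is worth checking the definition before reusing tabulated values.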

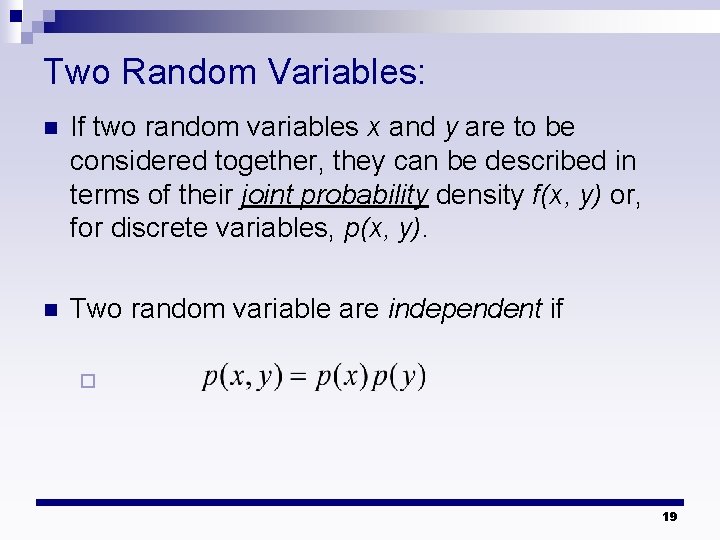

Two Random Variables:
- If two random variables x and y are to be considered together, they can be described in terms of their joint probability density f(x, y) or, for discrete variables, p(x, y).
- Two random variables are independent if
  - $f(x, y) = f_x(x)\,f_y(y)$, that is, the joint density is the product of the individual densities.

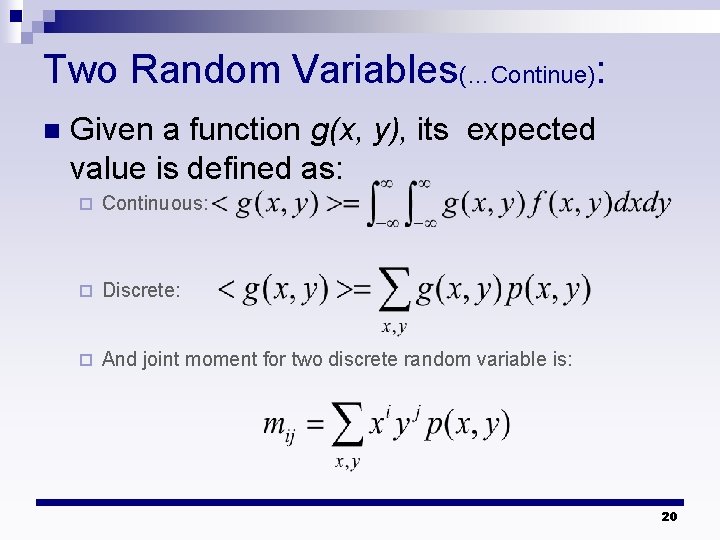

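Two Random Variables (…Continue):
- Given a function g(x, y), its expected value is defined as:
  - Continuous: $E\{g(x, y)\} = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} g(x, y)\,f(x, y)\,dx\,dy$
  - Discrete: $E\{g(x, y)\} = \sum_x \sum_y g(x, y)\,p(x, y)$
  - And the joint moment for two discrete random variables is: $m_{ij} = E\{x^i y^j\} = \sum_x \sum_y x^i y^j\,p(x, y)$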

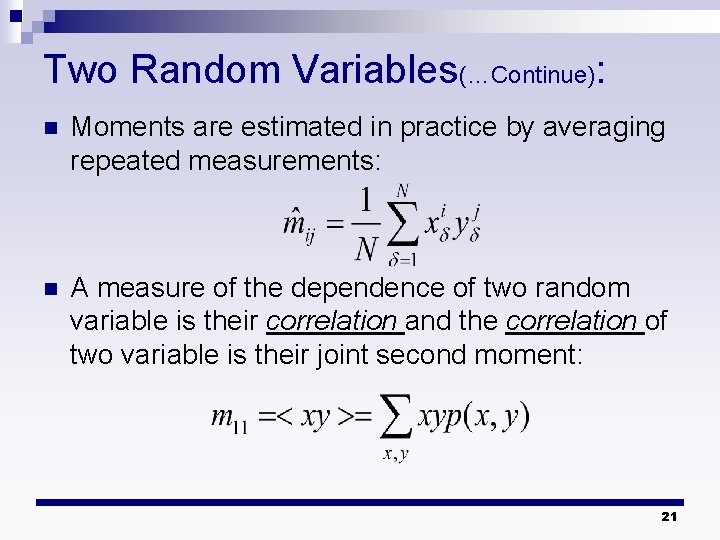

Two Random Variables (…Continue):
- Moments are estimated in practice by averaging repeated measurements: $m_{ij} \approx \frac{1}{N}\sum_{n=1}^{N} x_n^i\,y_n^j$.
- A measure of the dependence of two random variables is their correlation, and the correlation of two variables is their joint second moment: $m_{11} = E\{xy\}$.

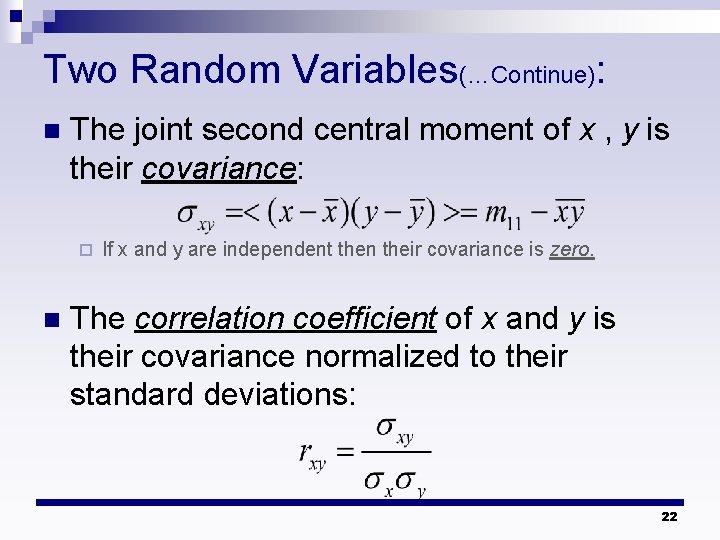

Two Random Variables (…Continue):
- The joint second central moment of x and y is their covariance:
  - $\sigma_{xy} = E\{(x - \bar{x})(y - \bar{y})\} = E\{xy\} - \bar{x}\,\bar{y}$
- If x and y are independent then their covariance is zero.
- The correlation coefficient of x and y is their covariance normalized to their standard deviations: $\rho = \dfrac{\sigma_{xy}}{\sigma_x\,\sigma_y}$.
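Covariance and the correlation coefficient can be estimated from paired measurements by replacing expected values with averages. The function names and the small data set below are illustrative assumptions; the data are chosen to be exactly linear so that the coefficient reaches its extreme values.

```python
import math

def covariance(xs, ys):
    """Average of (x - mean_x)(y - mean_y) over paired samples."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / n

def correlation_coefficient(xs, ys):
    """Covariance normalized by the two standard deviations."""
    return covariance(xs, ys) / math.sqrt(covariance(xs, xs) * covariance(ys, ys))

xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]        # y = 2x + 1, a perfectly linear relation
ys_neg = [-y for y in ys]        # same relation with the sign flipped

print(covariance(xs, ys),
      correlation_coefficient(xs, ys),
      correlation_coefficient(xs, ys_neg))
```

Note that `covariance(xs, xs)` is simply the variance of `xs`, which is why it can be reused for the normalization.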

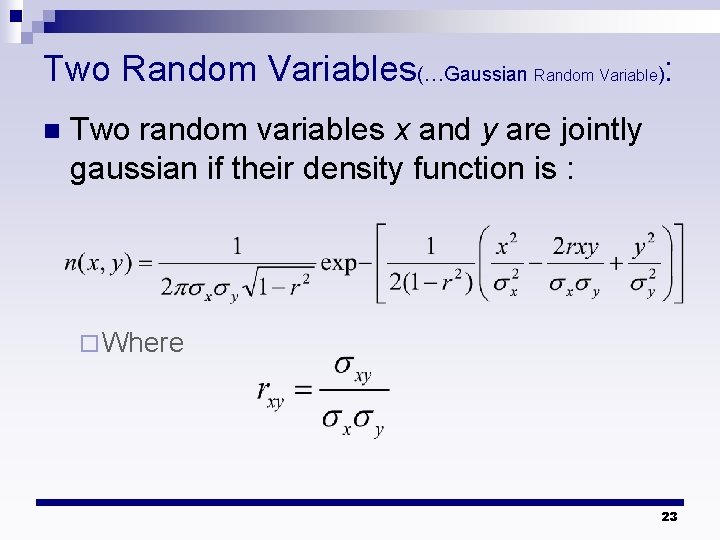

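Two Random Variables (…Gaussian Random Variable):
- Two random variables x and y are jointly gaussian if their density function is:
  - $f(x, y) = \dfrac{1}{2\pi\sigma_x\sigma_y\sqrt{1 - \rho^2}} \exp\!\left\{-\dfrac{1}{2(1 - \rho^2)}\left[\dfrac{(x - \bar{x})^2}{\sigma_x^2} - \dfrac{2\rho\,(x - \bar{x})(y - \bar{y})}{\sigma_x\sigma_y} + \dfrac{(y - \bar{y})^2}{\sigma_y^2}\right]\right\}$
  - Where $\rho$ is the correlation coefficient of x and y, and $\bar{x}$, $\bar{y}$, $\sigma_x$, $\sigma_y$ are their means and standard deviations.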

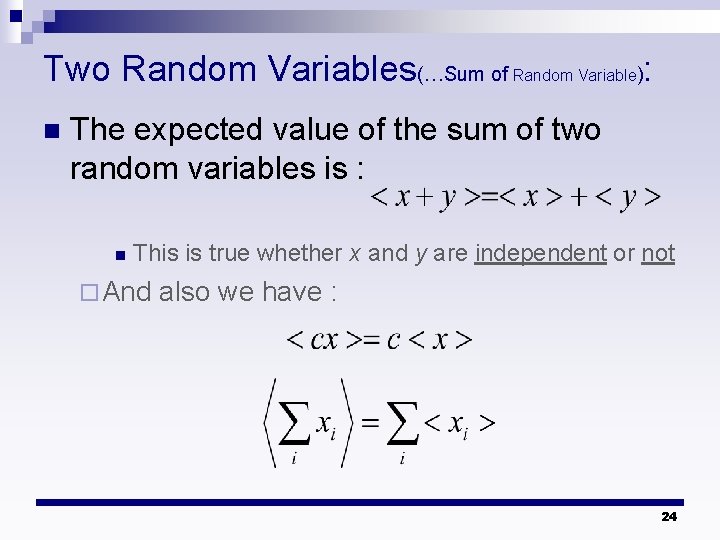

Two Random Variables (…Sum of Random Variables):
- The expected value of the sum of two random variables is: $E\{x + y\} = E\{x\} + E\{y\}$.
- This is true whether x and y are independent or not.
  - And also we have, for any constants a and b: $E\{ax + by\} = a\,E\{x\} + b\,E\{y\}$.

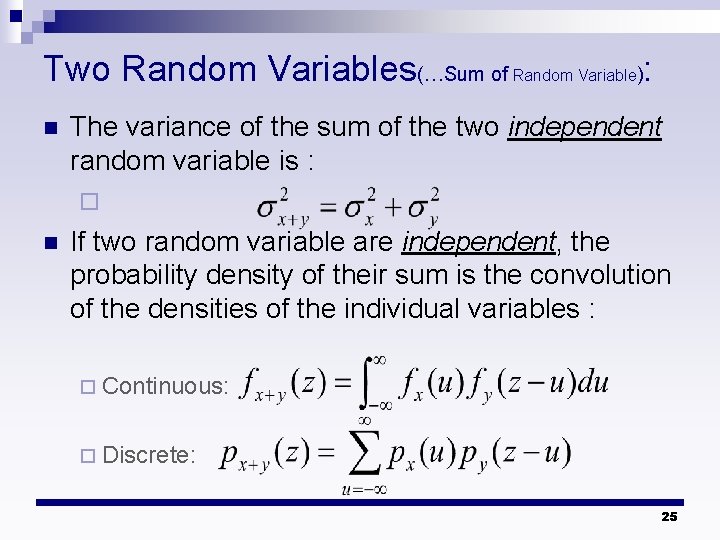

Two Random Variables (…Sum of Random Variables):
- The variance of the sum of two independent random variables is:
  - $\sigma_{x+y}^2 = \sigma_x^2 + \sigma_y^2$
- If two random variables are independent, the probability density of their sum z = x + y is the convolution of the densities of the individual variables:
  - Continuous: $f_z(z) = \int_{-\infty}^{\infty} f_x(u)\,f_y(z - u)\,du$
  - Discrete: $p_z(z) = \sum_u p_x(u)\,p_y(z - u)$
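The discrete convolution rule for the sum of two independent variables can be sketched directly. The helper names and the two-dice example are assumptions made for illustration; the last lines also check that the variances add.

```python
from fractions import Fraction

def convolve_pmf(px, py):
    """Distribution of z = x + y for independent x and y.

    px and py map values to probabilities; every pair (x, y) contributes
    px[x] * py[y] to the probability of the value x + y.
    """
    pz = {}
    for x, p in px.items():
        for y, q in py.items():
            pz[x + y] = pz.get(x + y, 0) + p * q
    return pz

def variance(pmf):
    """Second moment minus squared mean of a {value: probability} distribution."""
    mean = sum(x * p for x, p in pmf.items())
    return sum(x * x * p for x, p in pmf.items()) - mean ** 2

die = {x: Fraction(1, 6) for x in range(1, 7)}   # illustrative: a fair die
two_dice = convolve_pmf(die, die)

print(two_dice[7], variance(two_dice), 2 * variance(die))
```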

Central Limit Theorem
- Central Limit Theorem (informal paraphrase): If many independent random variables are summed, the probability density function (pdf) of the sum tends toward the gaussian density, no matter what their individual densities are.
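A rough numerical illustration of this tendency: sums of uniform random numbers, whose individual density is flat, already behave much like a gaussian variable. The choice of 12 terms, the seed, and the sample count are arbitrary assumptions for this sketch; it checks only one gaussian property (about 68% of the mass within one standard deviation), not convergence in any formal sense.

```python
import random

def standardized_uniform_sum(rng, n_terms=12):
    """Sum n_terms uniform(0, 1) draws, then shift and scale to zero mean, unit variance."""
    total = sum(rng.random() for _ in range(n_terms))
    mean = n_terms / 2.0                # each uniform(0, 1) term has mean 1/2
    std = (n_terms / 12.0) ** 0.5       # and variance 1/12; variances of independent terms add
    return (total - mean) / std

rng = random.Random(0)                  # fixed seed for repeatability (arbitrary choice)
samples = [standardized_uniform_sum(rng) for _ in range(20000)]

# For a gaussian variable, roughly 68.3% of values lie within one standard deviation.
fraction_within_one_sigma = sum(1 for z in samples if abs(z) < 1.0) / len(samples)
print(fraction_within_one_sigma)
```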

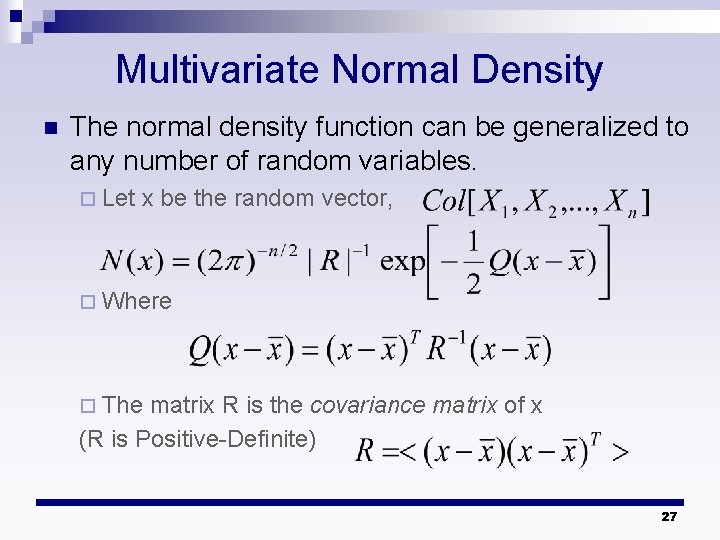

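Multivariate Normal Density
- The normal density function can be generalized to any number of random variables.
  - Let x be the random vector, $\mathbf{x} = [x_1, x_2, \ldots, x_n]^T$; then $f(\mathbf{x}) = (2\pi)^{-n/2}\,|R|^{-1/2} \exp\!\left[-\tfrac{1}{2}(\mathbf{x} - \bar{\mathbf{x}})^T R^{-1} (\mathbf{x} - \bar{\mathbf{x}})\right]$
  - Where $\bar{\mathbf{x}} = E\{\mathbf{x}\}$ is the mean vector.
  - The matrix $R = E\{(\mathbf{x} - \bar{\mathbf{x}})(\mathbf{x} - \bar{\mathbf{x}})^T\}$ is the covariance matrix of x (R is positive-definite).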

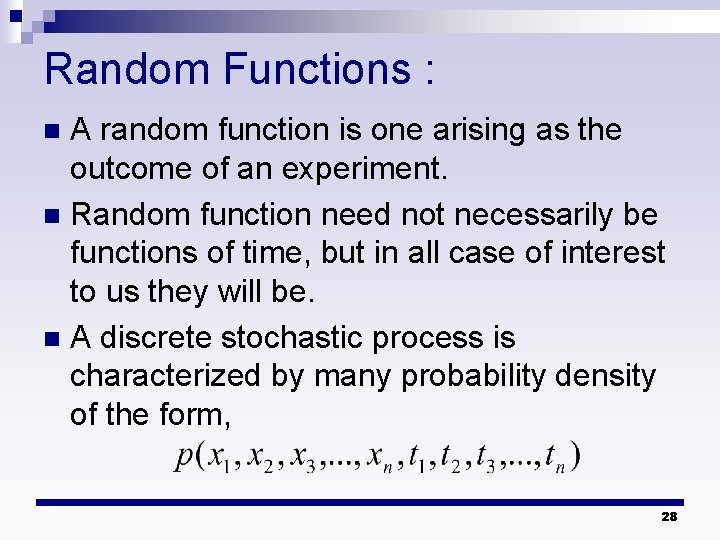

Random Functions:
- A random function is one arising as the outcome of an experiment.
- Random functions need not necessarily be functions of time, but in all cases of interest to us they will be.
- A discrete stochastic process is characterized by many probability densities of the form $p(x_1, x_2, \ldots, x_n;\ t_1, t_2, \ldots, t_n)$, where $x_i$ is the value of the process at time $t_i$.

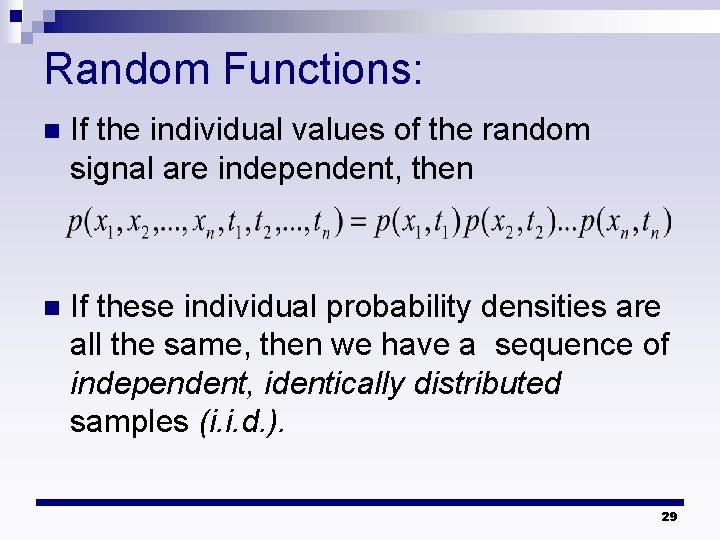

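Random Functions:
- If the individual values of the random signal are independent, then the joint density factors into a product: $p(x_1, x_2, \ldots, x_n;\ t_1, t_2, \ldots, t_n) = p(x_1; t_1)\,p(x_2; t_2) \cdots p(x_n; t_n)$.
- If these individual probability densities are all the same, then we have a sequence of independent, identically distributed (i.i.d.) samples.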

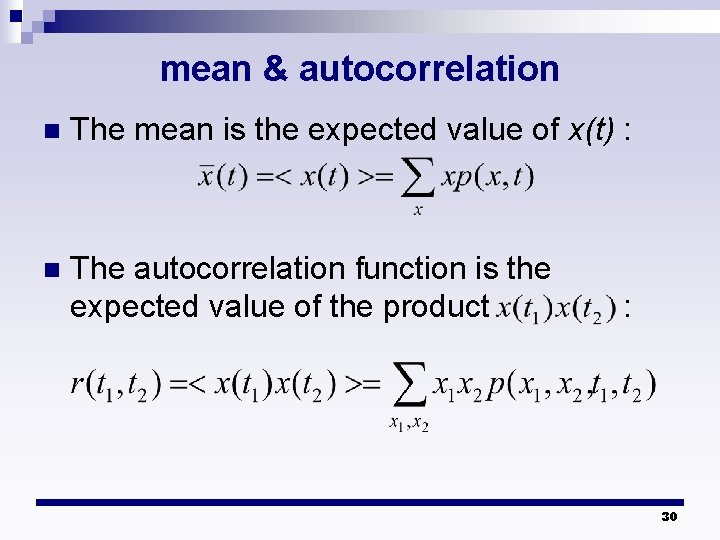

mean & autocorrelation
- The mean is the expected value of x(t): $\bar{x}(t) = E\{x(t)\}$.
- The autocorrelation function is the expected value of the product $x(t_1)\,x(t_2)$: $r(t_1, t_2) = E\{x(t_1)\,x(t_2)\}$.

ensemble & time average
- Mean and autocorrelation can be determined in two ways:
  - The experiment can be repeated many times and the average taken over all these functions. Such an average is called an ensemble average.
  - Take any one of these functions as being representative of the ensemble and find the average from a number of samples of this one function. This is called a time average.

ergodic & stationary
- If the time average and ensemble average of a random function are the same, it is said to be ergodic.
- A random function is said to be stationary if its statistics do not change as a function of time.
- Any ergodic function is also stationary.

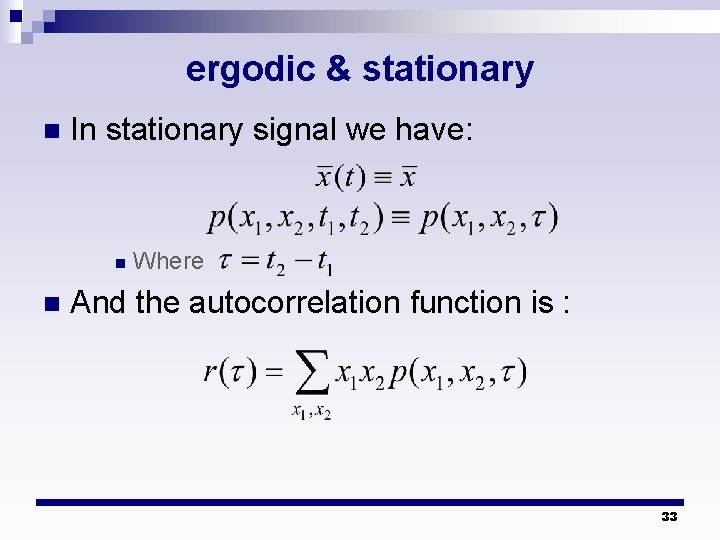

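ergodic & stationary
- In a stationary signal we have:
  - $E\{x(t)\} = \bar{x}$, a constant that does not depend on t.
  - $p(x_1, x_2;\ t_1, t_2) = p(x_1, x_2;\ \tau)$, where $\tau = t_2 - t_1$.
- And the autocorrelation function is: $r(\tau) = E\{x(t)\,x(t + \tau)\}$.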

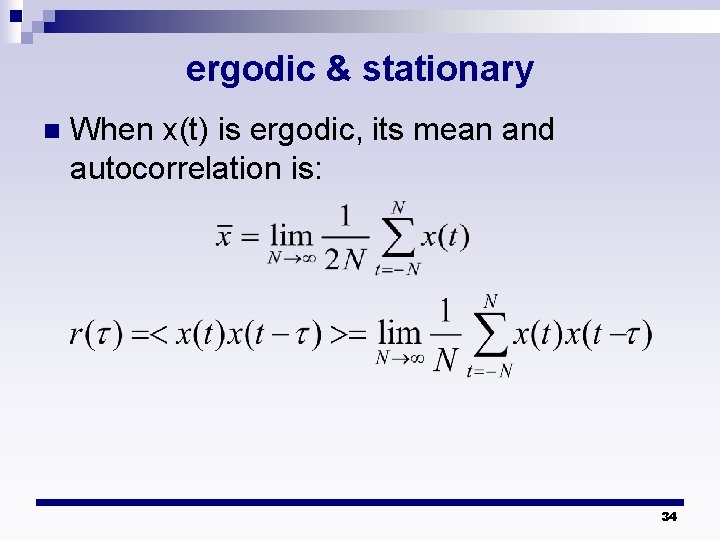

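ergodic & stationary
- When x(t) is ergodic, its mean and autocorrelation are given by time averages (written here for integer time t):
  - $\bar{x} = \lim_{N \to \infty} \dfrac{1}{2N + 1} \sum_{t=-N}^{N} x(t)$
  - $r(\tau) = \lim_{N \to \infty} \dfrac{1}{2N + 1} \sum_{t=-N}^{N} x(t)\,x(t + \tau)$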

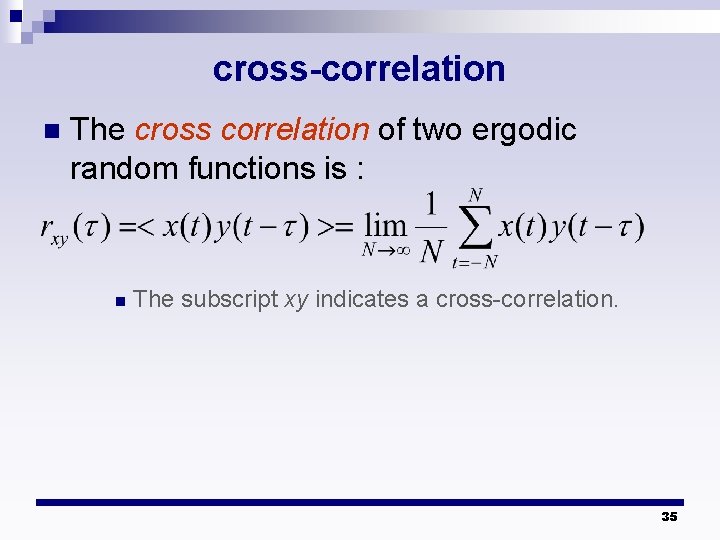

cross-correlation
- The cross-correlation of two ergodic random functions is: $r_{xy}(\tau) = \lim_{N \to \infty} \dfrac{1}{2N + 1} \sum_{t=-N}^{N} x(t)\,y(t + \tau)$.
- The subscript xy indicates a cross-correlation.

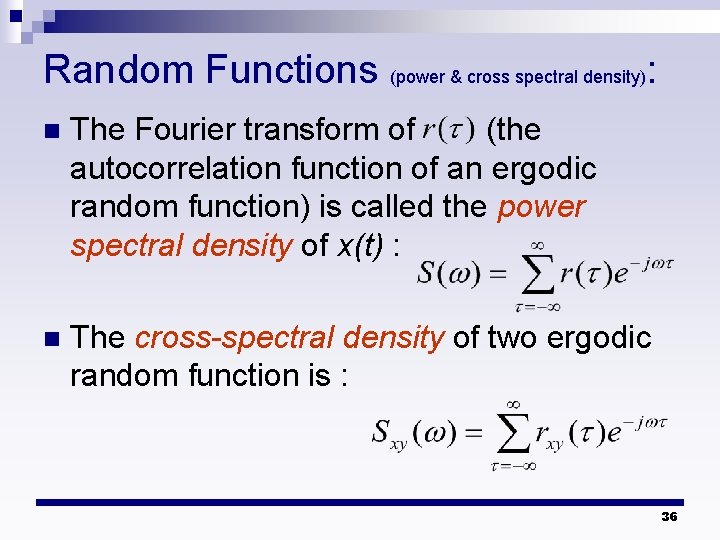

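Random Functions (power & cross spectral density):
- The Fourier transform of $r(\tau)$ (the autocorrelation function of an ergodic random function) is called the power spectral density of x(t): $S_x(\omega) = \sum_{\tau=-\infty}^{\infty} r(\tau)\,e^{-j\omega\tau}$.
- The cross-spectral density of two ergodic random functions is: $S_{xy}(\omega) = \sum_{\tau=-\infty}^{\infty} r_{xy}(\tau)\,e^{-j\omega\tau}$.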

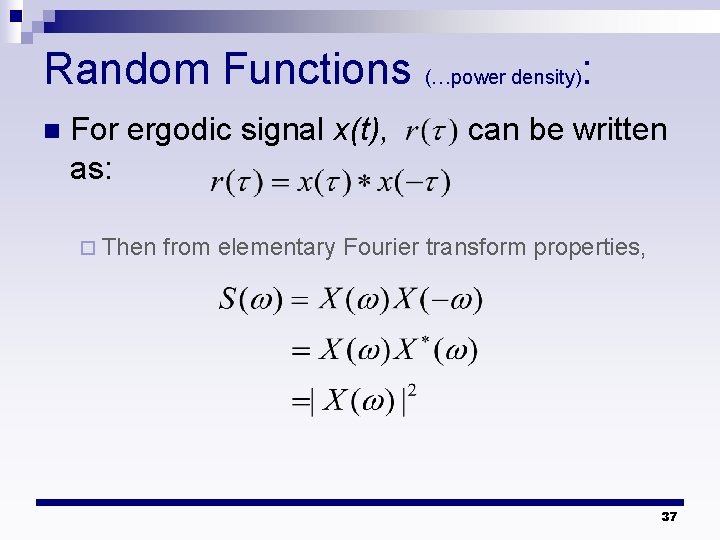

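Random Functions (…power density):
- For a real ergodic signal x(t), the autocorrelation can be written as a convolution (up to the normalization of the time average): $r(\tau) = x(\tau) * x(-\tau)$.
  - Then the power spectral density can be written, from elementary Fourier transform properties, as $S_x(\omega) = X(\omega)\,X^*(\omega) = |X(\omega)|^2$, where $X(\omega)$ is the Fourier transform of x(t).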

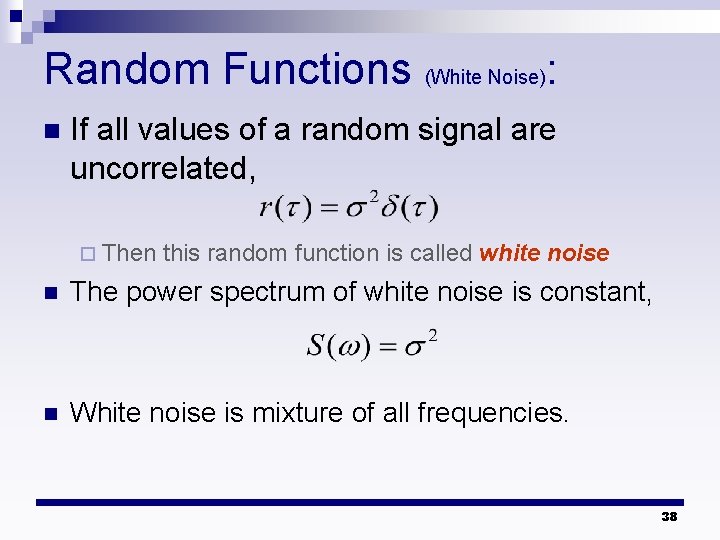

Random Functions (White Noise):
- If all values of a random signal are uncorrelated, $r(\tau) = \sigma^2\,\delta(\tau)$,
  - Then this random function is called white noise.
- The power spectrum of white noise is constant, $S(\omega) = \sigma^2$.
- White noise is a mixture of all frequencies.
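The defining property of white noise can be checked approximately with a time-average estimate of the autocorrelation. This sketch assumes zero-mean gaussian samples from Python's `random` module as a stand-in for white noise; the seed, the length of the sequence, and the function name are arbitrary choices. The estimate at lag 0 should be close to the variance, and the estimates at other lags close to zero.

```python
import random

def autocorr_time_average(x, lag):
    """Time-average estimate of r(lag): the mean of x[t] * x[t + lag] over one record."""
    n = len(x) - lag
    return sum(x[t] * x[t + lag] for t in range(n)) / n

rng = random.Random(0)                          # fixed seed (arbitrary choice)
sigma = 1.0
x = [rng.gauss(0.0, sigma) for _ in range(50000)]

r0 = autocorr_time_average(x, 0)    # should be near sigma**2
r1 = autocorr_time_average(x, 1)    # should be near 0
r5 = autocorr_time_average(x, 5)    # should be near 0

print(r0, r1, r5)
```

Using a single long record in place of many repeated experiments relies on the signal being ergodic, which holds for this simulated sequence.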

![Random Signal in Linear Systems](http://slidetodoc.com/presentation_image_h2/b71ef398a44d6138ec1cca18e4667962/image-39.jpg)

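Random Signal in Linear Systems:
- Let T[ ] represent the linear operation; then $E\{T[x(n)]\} = T[E\{x(n)\}]$.
- Given a system with impulse response h(n), $y(n) = \sum_k h(k)\,x(n - k)$ and $E\{y(n)\} = \sum_k h(k)\,E\{x(n - k)\}$.
- A stationary signal applied to a linear system yields a stationary output, with $\bar{y} = \bar{x} \sum_k h(k)$ and $S_y(\omega) = |H(\omega)|^2\,S_x(\omega)$, where $H(\omega)$ is the Fourier transform of h(n).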
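Because expectation commutes with a linear operation, the mean of a filtered stationary signal is the input mean multiplied by the sum of the impulse response. The sketch below checks this numerically; the three-tap impulse response, the input mean, the seed, and the function name `fir_filter` are hypothetical choices made for the example.

```python
import random

def fir_filter(x, h):
    """Linear system output y(n) = sum_k h(k) x(n - k), taking x(n) = 0 for n < 0."""
    return [
        sum(h[k] * x[n - k] for k in range(len(h)) if n - k >= 0)
        for n in range(len(x))
    ]

rng = random.Random(0)                      # fixed seed (arbitrary choice)
input_mean = 2.0
x = [input_mean + rng.gauss(0.0, 1.0) for _ in range(20000)]   # stationary input

h = [1.0, 0.5, 0.25]                        # hypothetical impulse response
y = fir_filter(x, h)

steady = y[len(h) - 1:]                     # skip the start-up samples, where x(n - k) is missing
output_mean = sum(steady) / len(steady)
predicted_mean = input_mean * sum(h)        # input mean times the sum of h(k)

print(output_mean, predicted_mean)
```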

- Slides: 39