Recurrent Neural Network

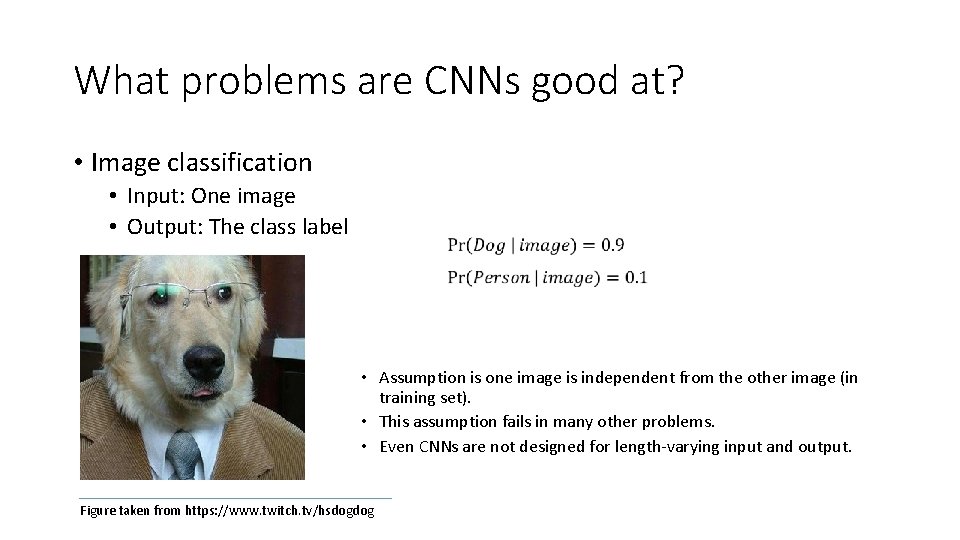

What problems are CNNs good at?
• Image classification
  • Input: one image
  • Output: the class label
• The assumption is that each image is independent of the other images (in the training set).
• This assumption fails in many other problems.
• In addition, CNNs are not designed for variable-length input and output.
Figure taken from https://www.twitch.tv/hsdogdog

What is sequence learning?
• Sequence learning is the study of machine learning algorithms designed for sequential data.
• Sequence learning is used for:
  • Time-series prediction: tasks where the history of a time series is used to predict the next point.
    • Applications: stock market prediction, weather forecasting, animal migration tracking, storm trajectory prediction, and so on.
  • Sequence labelling: tasks where a sequence of labels is applied to a sequence of data.
    • Applications: language modeling, speech recognition, gesture recognition, handwriting recognition, etc.

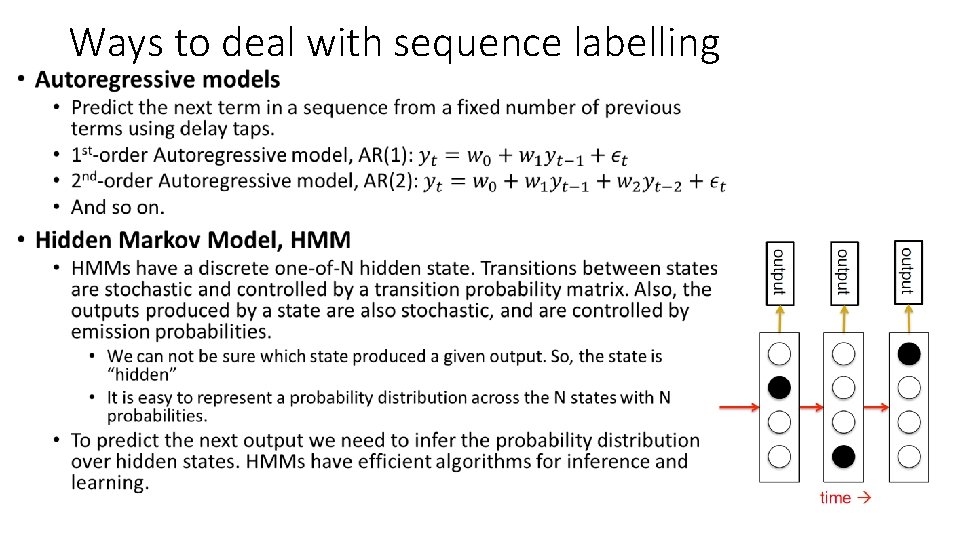

Ways to deal with sequence labelling

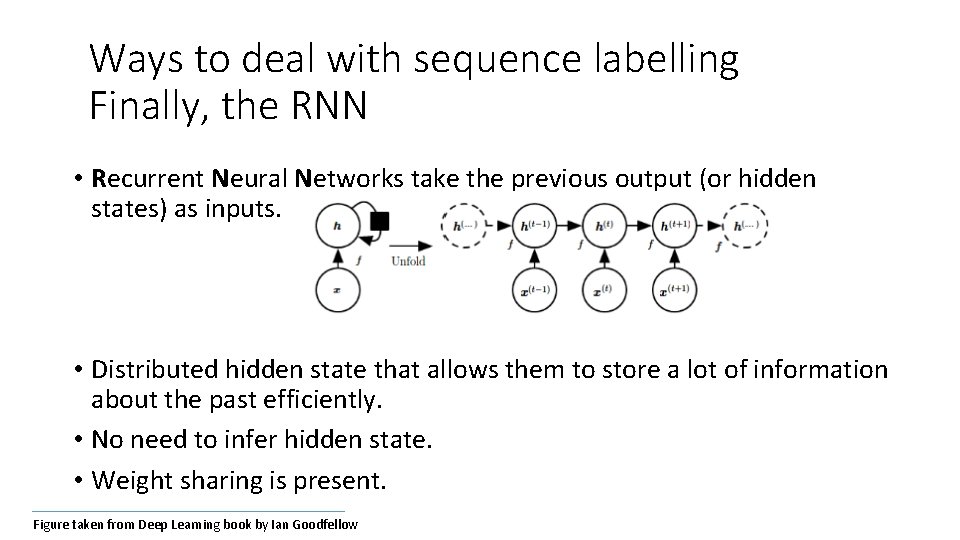

Ways to deal with sequence labelling: finally, the RNN
• Recurrent Neural Networks take the previous output (or hidden state) as an input.
• They have a distributed hidden state that allows them to store a lot of information about the past efficiently.
• There is no need to infer the hidden state.
• Weight sharing is present.
Figure taken from the Deep Learning book by Ian Goodfellow et al.

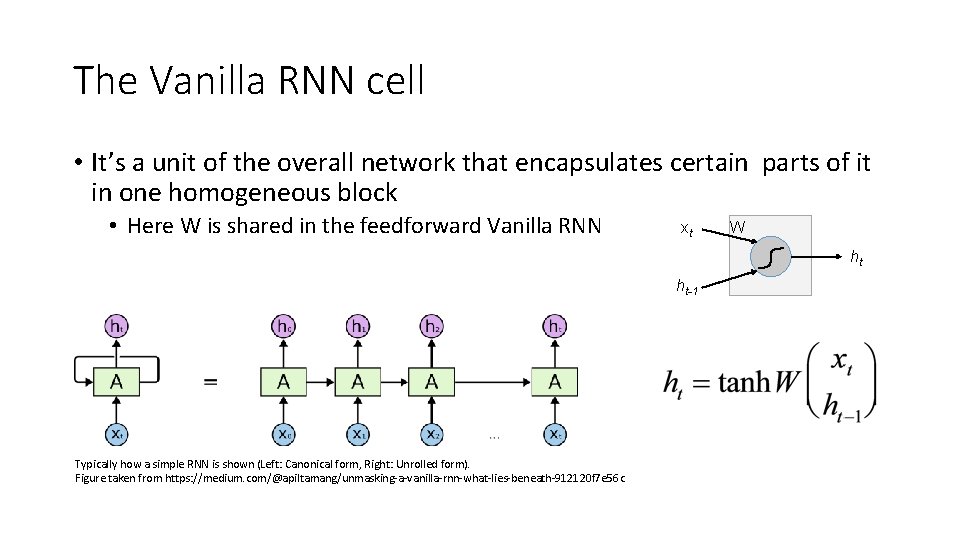

The Vanilla RNN cell
• It is a unit of the overall network that encapsulates certain parts of it in one homogeneous block.
• Here W is shared in the feed-forward (unrolled) network.
[Figure labels: Vanilla RNN; xt, W, ht, ht-1.] How a simple RNN is typically shown (left: canonical form, right: unrolled form). Figure taken from https://medium.com/@apiltamang/unmasking-a-vanilla-rnn-what-lies-beneath-912120f7e56c

Recurrent Neural Networks (RNNs)
• Note that the weights W are shared over time t.
• Essentially, copies of the RNN cell are made over time (unrolling), with different inputs at different time steps.
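The weight sharing described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the slides' own code: it assumes the common update rule h_t = tanh(W_xh x_t + W_hh h_{t-1} + b), and the names `W_xh`, `W_hh`, `b` and all numeric values are hypothetical. The same parameters are reused at every time step, so one set of weights handles sequences of different lengths.

```python
import math

def matvec(M, v):
    """Multiply matrix M (a list of rows) by vector v."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]

def rnn_step(x_t, h_prev, W_xh, W_hh, b):
    """One vanilla RNN step: h_t = tanh(W_xh x_t + W_hh h_prev + b)."""
    a = matvec(W_xh, x_t)
    r = matvec(W_hh, h_prev)
    return [math.tanh(a_i + r_i + b_i) for a_i, r_i, b_i in zip(a, r, b)]

def rnn_forward(xs, h0, W_xh, W_hh, b):
    """Unroll the cell over a sequence, reusing the same weights at every step."""
    hs, h = [], h0
    for x_t in xs:
        h = rnn_step(x_t, h, W_xh, W_hh, b)
        hs.append(h)
    return hs

# Toy parameters (hypothetical values): 2-d inputs, 3-d hidden state.
W_xh = [[0.5, -0.2], [0.1, 0.3], [-0.4, 0.2]]
W_hh = [[0.1, 0.0, 0.2], [0.0, 0.1, -0.1], [0.3, -0.2, 0.1]]
b = [0.0, 0.0, 0.0]

short_seq = [[1.0, 0.0], [0.0, 1.0]]
long_seq = short_seq + [[1.0, 1.0], [0.5, -0.5], [0.0, 0.0]]

hs_short = rnn_forward(short_seq, [0.0] * 3, W_xh, W_hh, b)
hs_long = rnn_forward(long_seq, [0.0] * 3, W_xh, W_hh, b)
print(len(hs_short), len(hs_long))  # one hidden state per time step
```

Because the two sequences share their first two inputs and the weights are identical, their first two hidden states coincide; only the number of unrolled copies differs.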

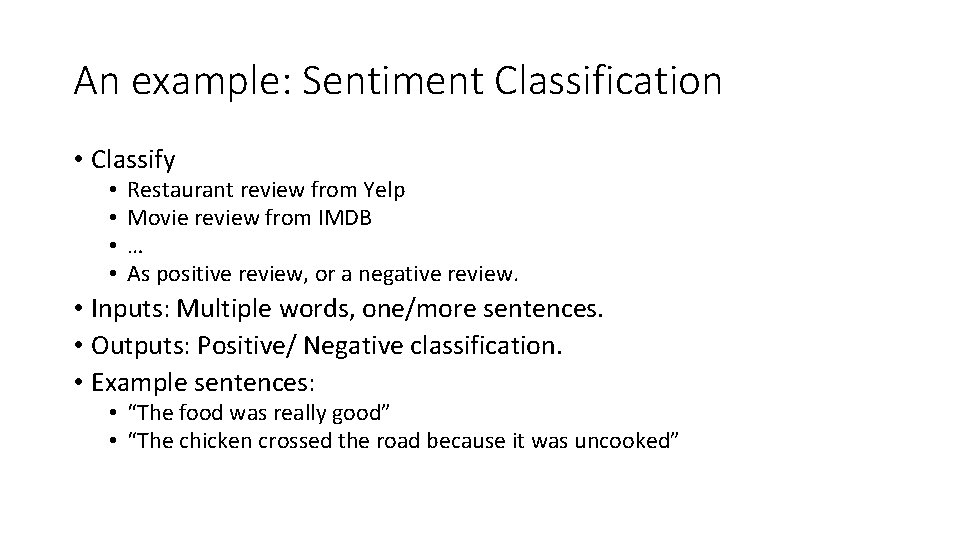

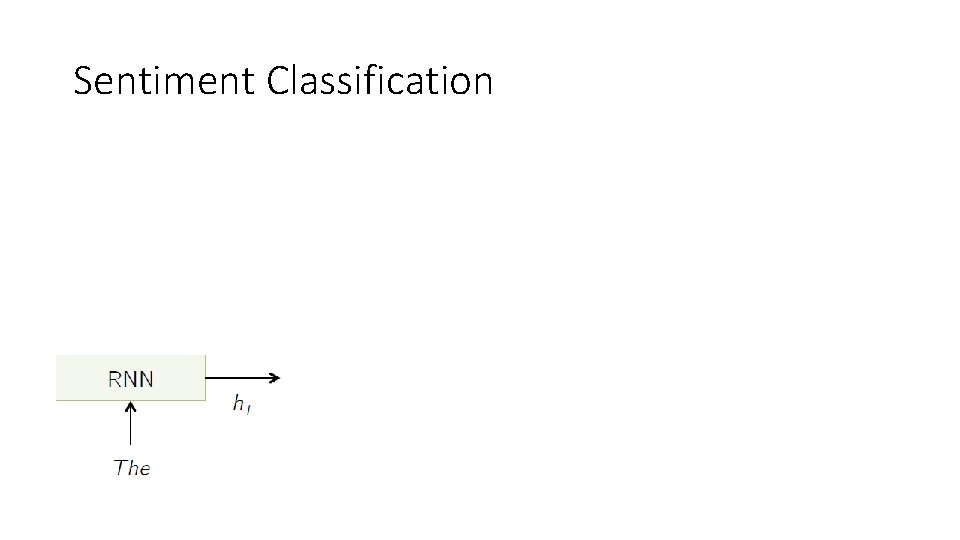

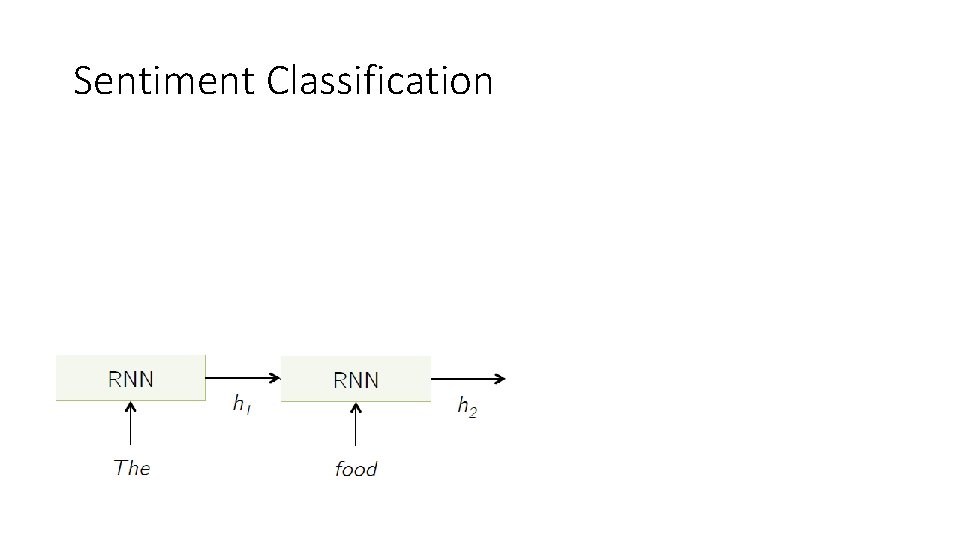

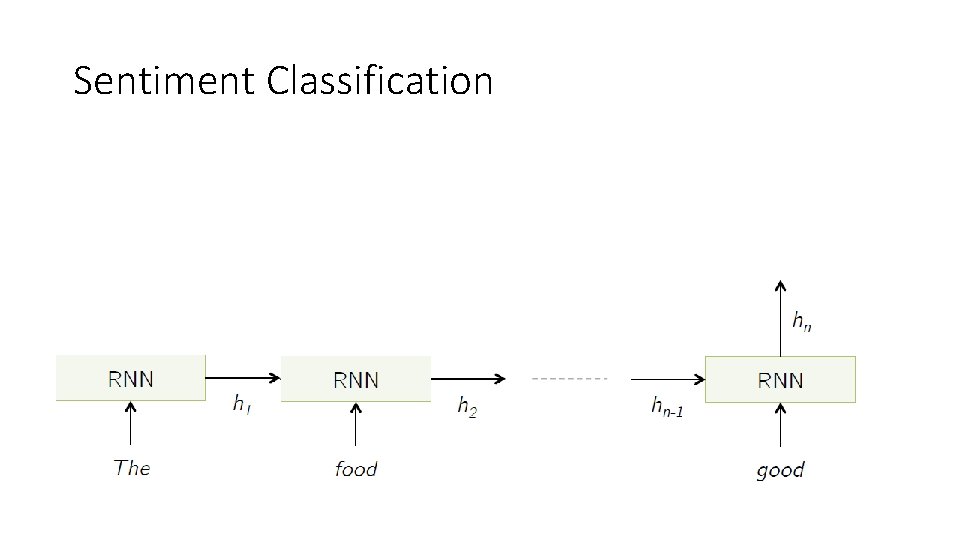

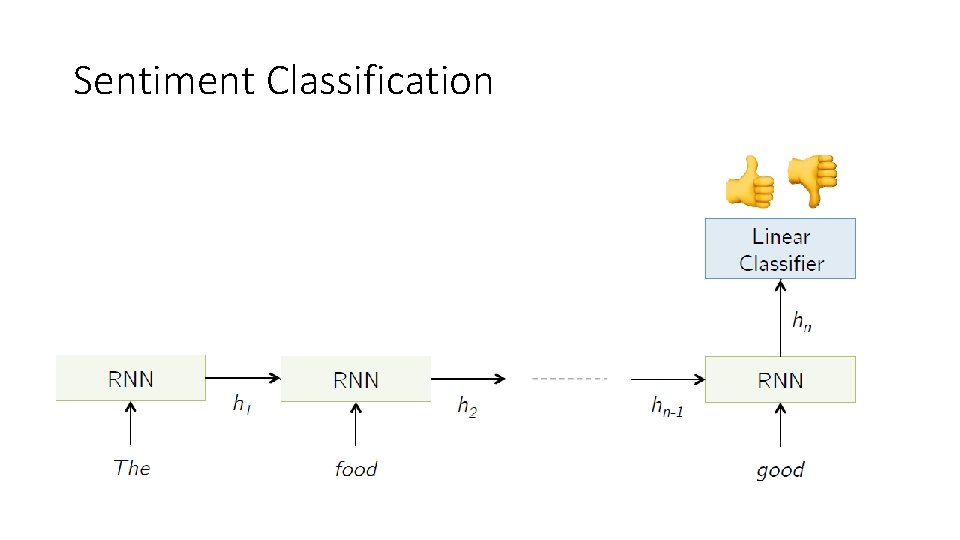

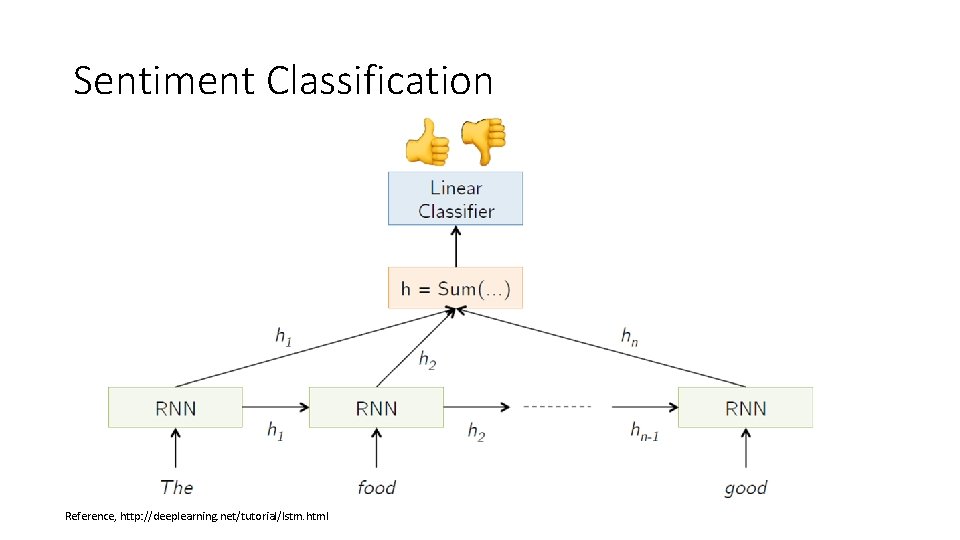

An example: Sentiment Classification
• Classify a restaurant review from Yelp, a movie review from IMDB, … as a positive review or a negative review.
• Inputs: multiple words, one or more sentences.
• Outputs: positive/negative classification.
• Example sentences:
  • “The food was really good”
  • “The chicken crossed the road because it was uncooked”

Sentiment Classification

Sentiment Classification (reference: http://deeplearning.net/tutorial/lstm.html)
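A many-to-one setup like this can be sketched as follows: the RNN reads one word per time step, and only the final hidden state is passed to a classifier. This is a hedged sketch with untrained random weights, so the printed probability is arbitrary. The tiny vocabulary, the embedding table, the layer sizes, and the function name `predict_positive_probability` are all assumptions made for illustration.

```python
import math
import random

random.seed(0)
HIDDEN, EMBED = 4, 3  # hypothetical sizes

def rand_matrix(rows, cols):
    """Small random matrix standing in for trained weights."""
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def matvec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]

# Hypothetical vocabulary and word embeddings (random, not learned).
vocab = ["the", "food", "was", "really", "good"]
embedding = {w: [random.uniform(-1, 1) for _ in range(EMBED)] for w in vocab}
W_xh, W_hh = rand_matrix(HIDDEN, EMBED), rand_matrix(HIDDEN, HIDDEN)
w_out = [random.uniform(-0.5, 0.5) for _ in range(HIDDEN)]

def predict_positive_probability(sentence):
    """Many-to-one: read all words, classify from the last hidden state."""
    h = [0.0] * HIDDEN
    for word in sentence.lower().split():
        x = embedding[word]
        pre = [a + r for a, r in zip(matvec(W_xh, x), matvec(W_hh, h))]
        h = [math.tanh(p) for p in pre]
    logit = sum(w * h_i for w, h_i in zip(w_out, h))
    return 1.0 / (1.0 + math.exp(-logit))  # sigmoid

p = predict_positive_probability("The food was really good")
print(f"P(positive) = {p:.3f} (untrained weights, so the value is arbitrary)")
```

In a real system the embeddings and weights would be learned from labelled reviews; the sketch only shows how a variable-length input is reduced to a single output.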

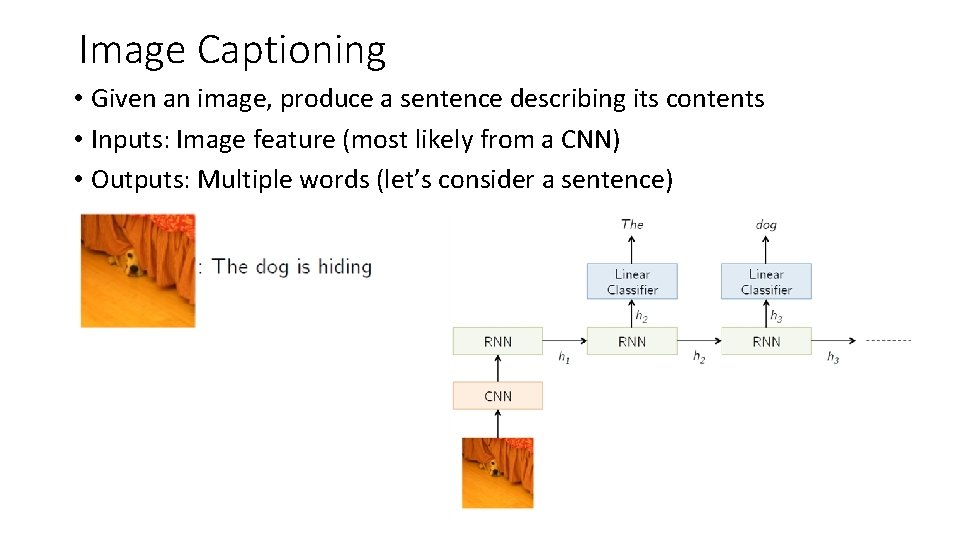

Image Captioning
• Given an image, produce a sentence describing its contents.
• Inputs: image feature (most likely from a CNN)
• Outputs: multiple words (let’s consider a sentence)

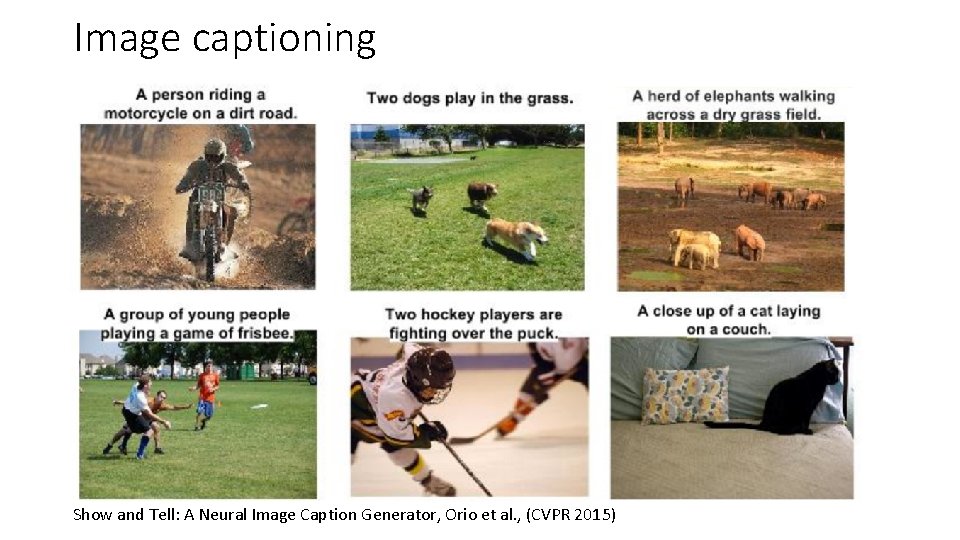

Image captioning. Show and Tell: A Neural Image Caption Generator, Vinyals et al. (CVPR 2015)

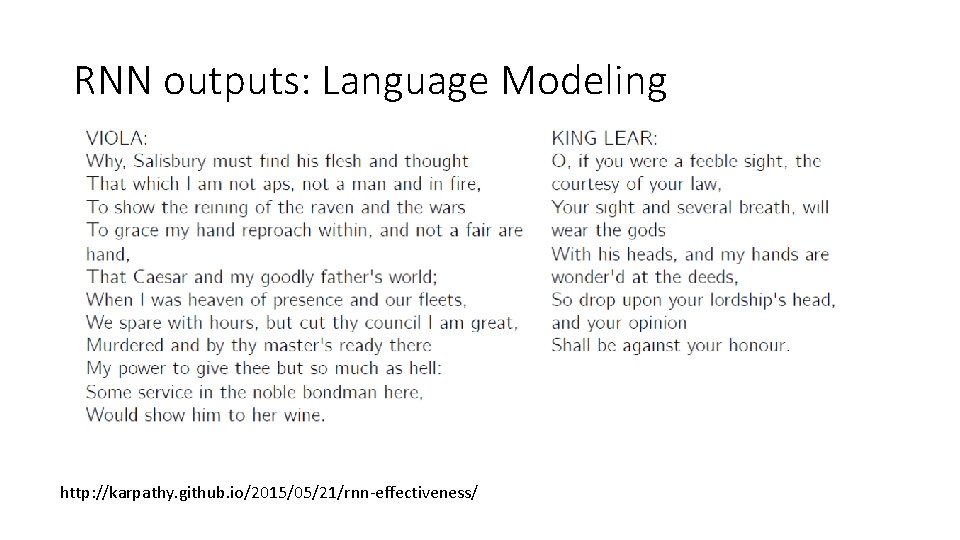

RNN outputs: Language Modeling. http://karpathy.github.io/2015/05/21/rnn-effectiveness/

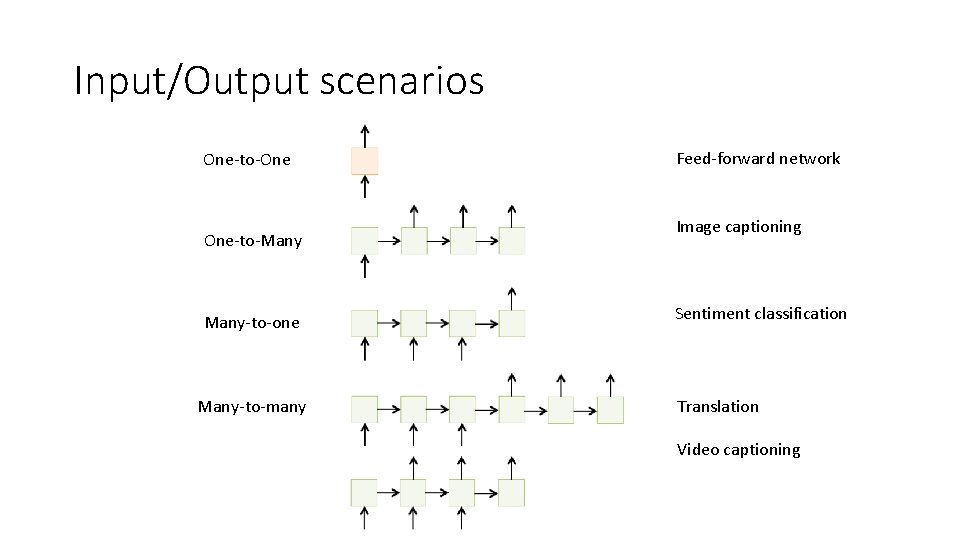

Input/Output scenarios
• One-to-one: feed-forward network
• One-to-many: image captioning
• Many-to-one: sentiment classification
• Many-to-many: translation, video captioning

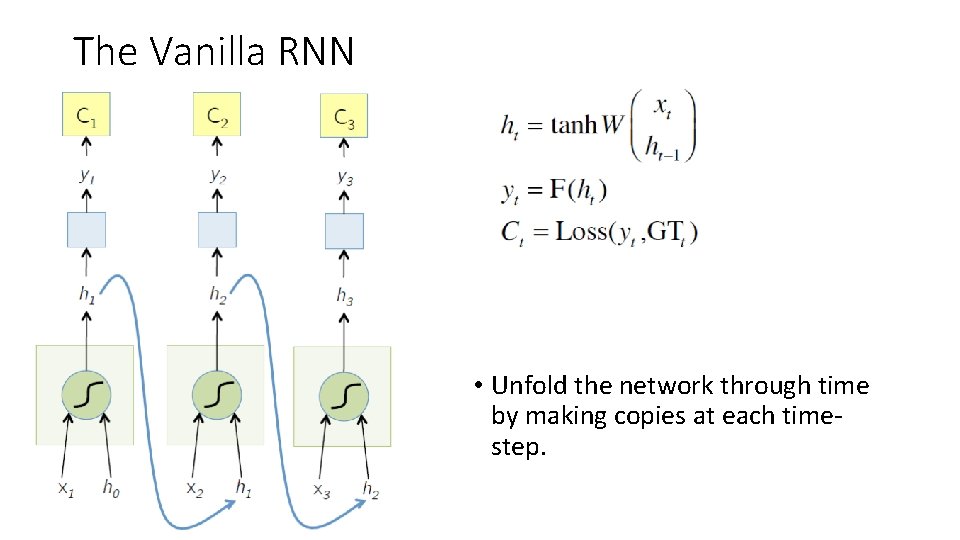

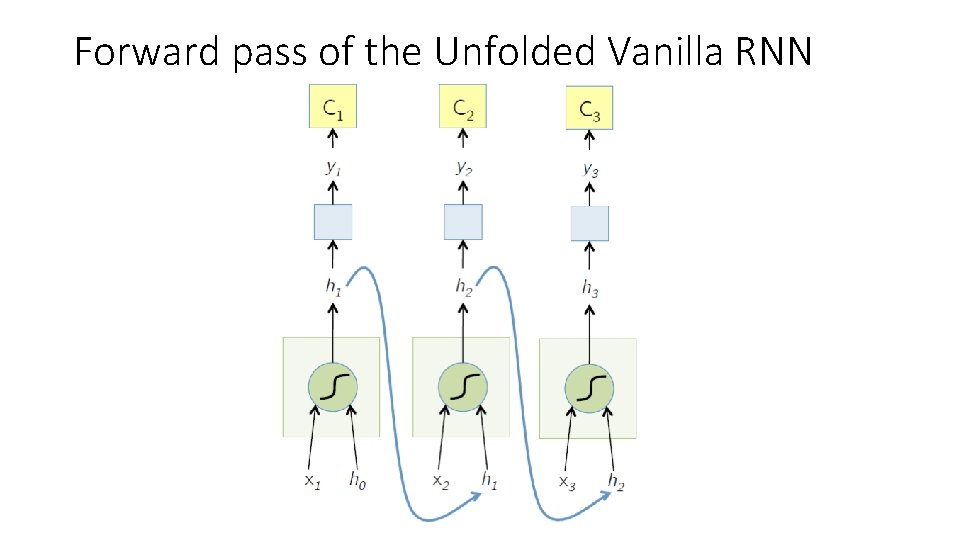

The Vanilla RNN
• Unfold the network through time by making copies at each time step.

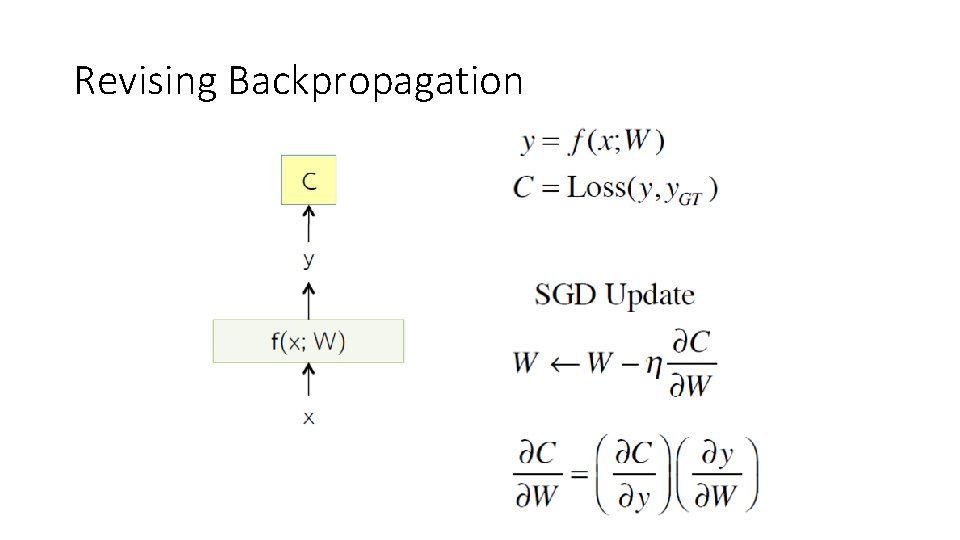

Revising Backpropagation

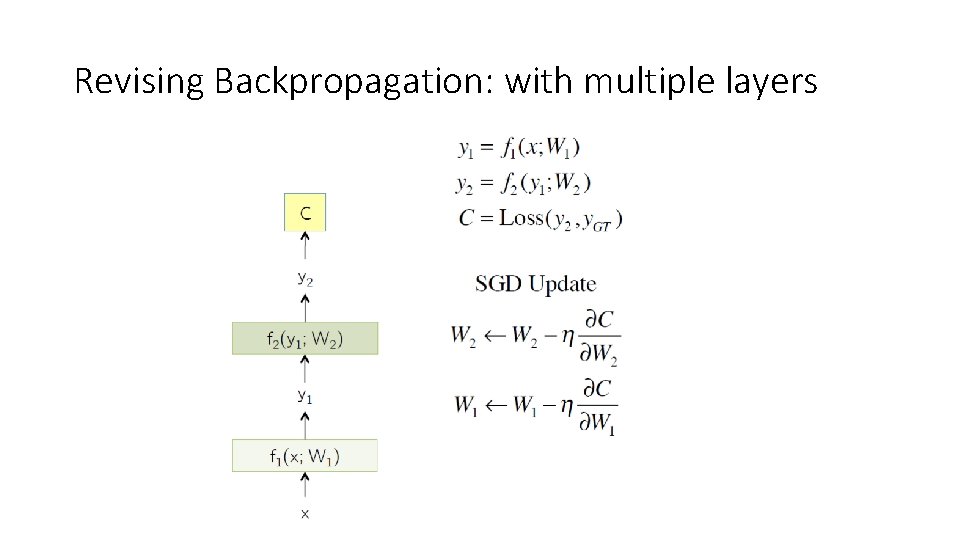

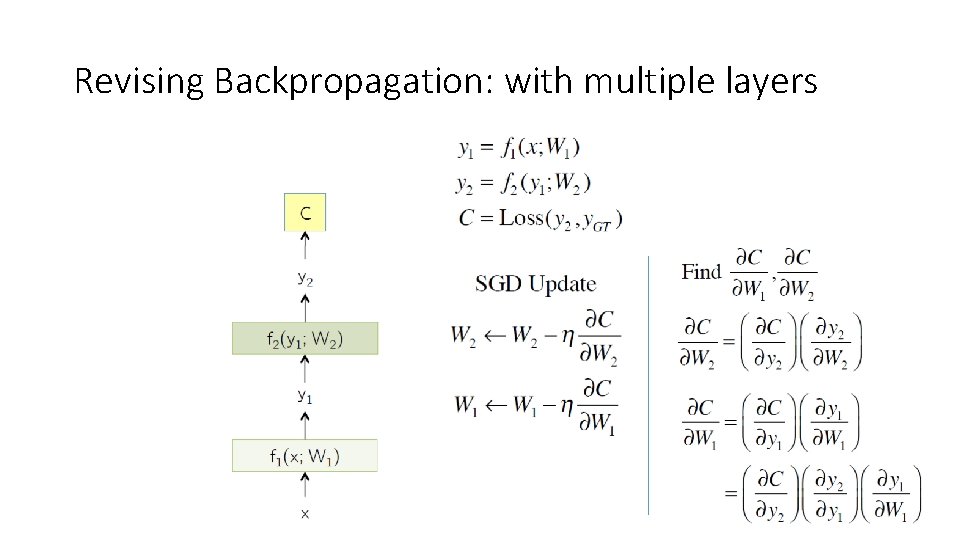

Revising Backpropagation: with multiple layers

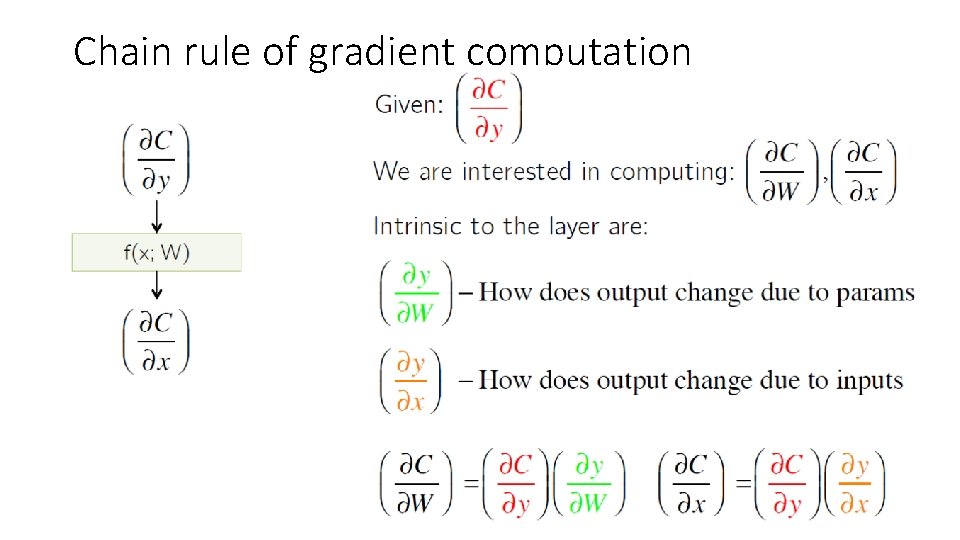

Chain rule of gradient computation
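As a reminder of what the chain rule looks like for a small network, here is a schematic worked example. The two-layer network, the activation σ, the loss L, and all symbols below are assumptions chosen for illustration, and every quantity is treated as a scalar for readability.

```latex
% Assumed network: z = W^{(1)} x, \quad a = \sigma(z), \quad \hat{y} = W^{(2)} a, \quad L = L(\hat{y}, y)
\frac{\partial L}{\partial W^{(2)}}
  = \frac{\partial L}{\partial \hat{y}} \cdot \frac{\partial \hat{y}}{\partial W^{(2)}}
  = \frac{\partial L}{\partial \hat{y}} \cdot a
\qquad
\frac{\partial L}{\partial W^{(1)}}
  = \frac{\partial L}{\partial \hat{y}} \cdot
    \frac{\partial \hat{y}}{\partial a} \cdot
    \frac{\partial a}{\partial z} \cdot
    \frac{\partial z}{\partial W^{(1)}}
  = \frac{\partial L}{\partial \hat{y}} \cdot W^{(2)} \cdot \sigma'(z) \cdot x
```

Each extra layer adds one more factor to the product, which is the pattern that backpropagation through time repeats once per time step.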

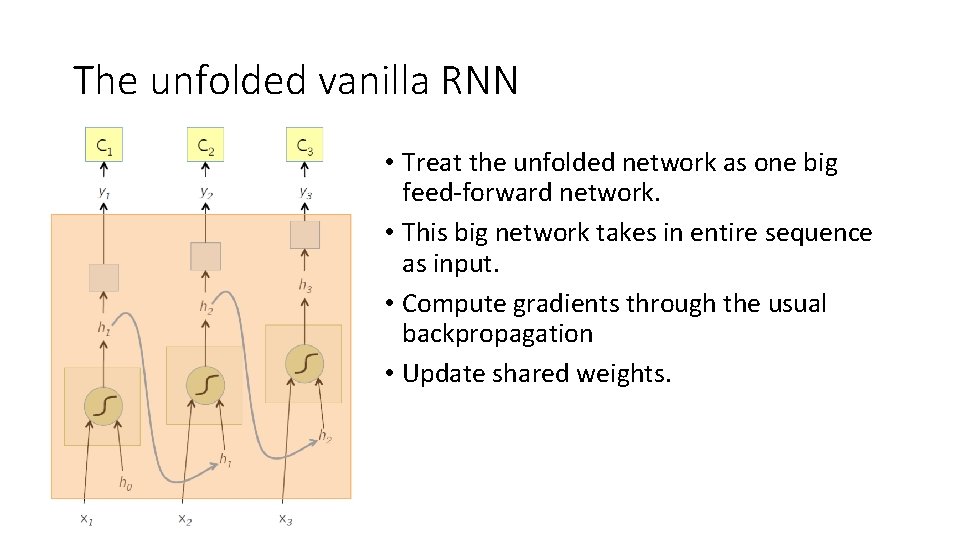

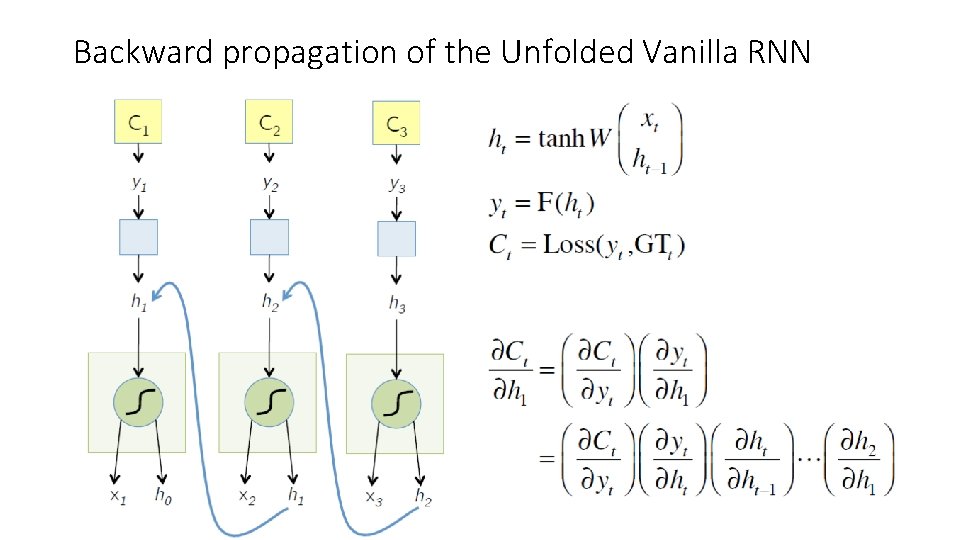

Backpropagation through time (BPTT)
• One of the methods used to train RNNs.
• The unfolded network (used during the forward pass) is treated as one big feed-forward network.
• This unfolded network accepts the whole time series as input.
• The weight updates are computed for each copy in the unfolded network, then summed (or averaged), and then applied to the RNN weights.

The unfolded vanilla RNN
• Treat the unfolded network as one big feed-forward network.
• This big network takes in the entire sequence as input.
• Compute gradients through the usual backpropagation.
• Update the shared weights.

Forward pass of the Unfolded Vanilla RNN

Backward propagation of the Unfolded Vanilla RNN
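The forward and backward passes of the unfolded network can be sketched on the smallest possible case. This is an illustrative sketch, not the slides' implementation: it assumes a scalar RNN h_t = tanh(w·h_{t-1} + u·x_t) with a squared-error loss on the last hidden state only, and the names `w`, `u`, `bptt`, the data, and the learning rate are all hypothetical. The per-copy gradient contributions are summed, as described for BPTT, and checked against finite differences.

```python
import math

def forward(xs, w, u, h0=0.0):
    """Forward pass of the unfolded scalar RNN; returns all hidden states."""
    hs = [h0]
    for x in xs:
        hs.append(math.tanh(w * hs[-1] + u * x))
    return hs

def loss_fn(xs, y, w, u):
    """Squared error between the last hidden state and the target y."""
    return 0.5 * (forward(xs, w, u)[-1] - y) ** 2

def bptt(xs, y, w, u):
    """Backward pass: sum the gradient contributions of every unrolled copy."""
    hs = forward(xs, w, u)
    dh = hs[-1] - y                      # dL/dh_T
    dw = du = 0.0
    for t in range(len(xs), 0, -1):
        da = dh * (1.0 - hs[t] ** 2)     # back through tanh at step t
        dw += da * hs[t - 1]             # this copy's contribution to dL/dw
        du += da * xs[t - 1]             # this copy's contribution to dL/du
        dh = da * w                      # pass the gradient on to h_{t-1}
    return dw, du

xs, y, w, u = [0.5, -0.1, 0.3, 0.8], 0.2, 0.7, -0.4  # toy data (hypothetical)
dw, du = bptt(xs, y, w, u)

# Finite-difference check of the analytic gradients.
eps = 1e-6
dw_num = (loss_fn(xs, y, w + eps, u) - loss_fn(xs, y, w - eps, u)) / (2 * eps)
du_num = (loss_fn(xs, y, w, u + eps) - loss_fn(xs, y, w, u - eps)) / (2 * eps)
print(abs(dw - dw_num) < 1e-6, abs(du - du_num) < 1e-6)

# One gradient-descent update of the shared weights (learning rate is arbitrary).
lr = 0.1
w_new, u_new = w - lr * dw, u - lr * du
```

Note that `dw` accumulates one term per time step even though there is only a single shared weight `w`; that summation is what distinguishes BPTT from backpropagation in a network with separate weights per layer.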

Issues with BPTT learning of the RNN parameters
• Vanishing gradient and exploding gradient issues.
• Since the gradient is a product of k real numbers, it can shrink to zero or explode to infinity.
• This issue can be addressed by using a special type of memory cell, called a Long Short-Term Memory (LSTM) cell.
• More on this is coming up in the “Deep Learning” course (Fall 2018).
• You are all invited!
• Reference: Long Short-Term Memory, by Sepp Hochreiter and Jürgen Schmidhuber (1997)
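The "product of k real numbers" can be made concrete with a toy calculation. The sketch below assumes the simplest possible case, a linear scalar recurrence h_t = w·h_{t-1}, for which the gradient of h_k with respect to h_0 is w multiplied by itself k times; the function name and the chosen values of w and k are hypothetical.

```python
def gradient_factor(w, k):
    """Product of k identical per-step factors, as in a linear scalar RNN."""
    prod = 1.0
    for _ in range(k):
        prod *= w
    return prod

for w in (0.5, 1.0, 1.5):
    factors = [gradient_factor(w, k) for k in (1, 10, 50)]
    print(w, factors)
```

With a factor below 1 the product collapses toward zero as k grows, and with a factor above 1 it grows without bound; only a factor of exactly 1 keeps the gradient stable in this toy setting.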

Acknowledgements
• Slides courtesy of Arun Mallya [http://arunmallya.github.io/]
