Recurrent Neural Network presented by 楊子顥

Outline

- Neuron
- Feed-forward NN
  - Forward propagation
  - Backpropagation
- Recurrent NN
  - Example: LSTM

What's inside the box?

- What should go into the box? Features? Parameters?
- What is the range of outputs? Target? Error?

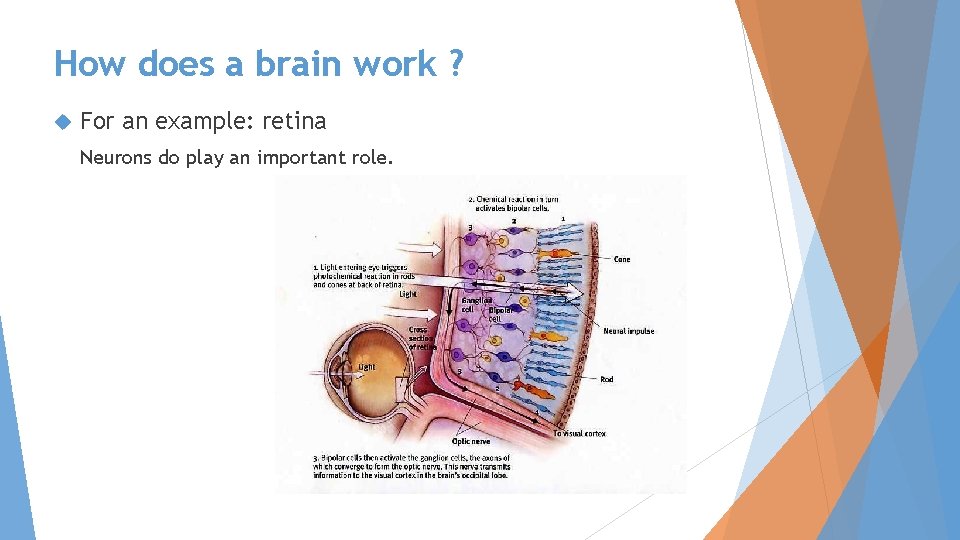

How does a brain work?

- For example: the retina
- Neurons play an important role.

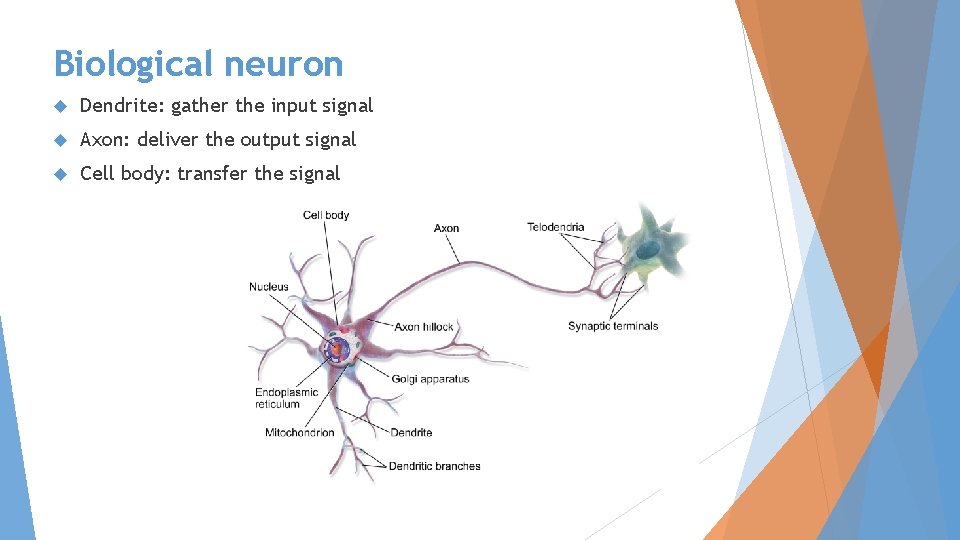

Biological neuron

- Dendrite: gathers the input signal
- Axon: delivers the output signal
- Cell body: transfers the signal

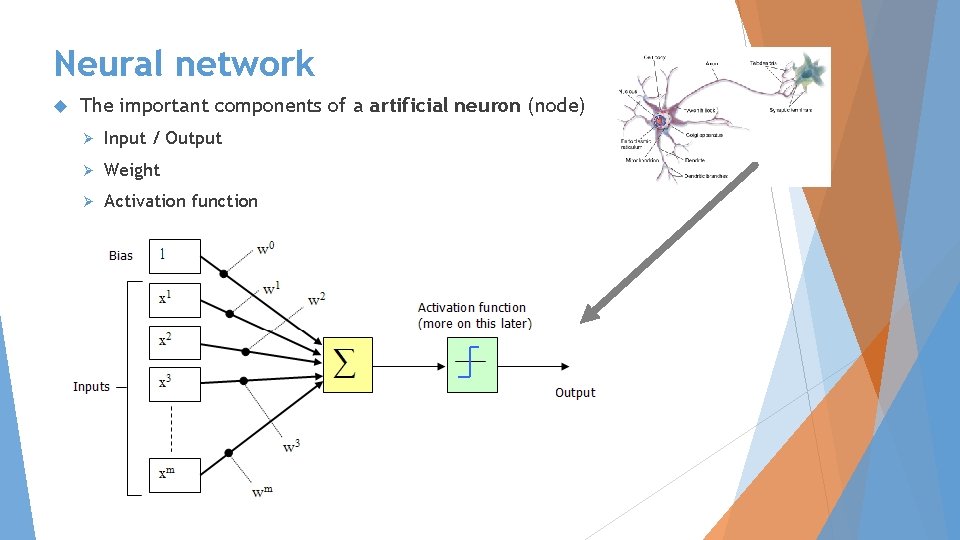

Neural network

The important components of an artificial neuron (node):

- Input / Output
- Weight
- Activation function
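The three components above can be sketched as a single function. This is a minimal illustration, not code from the slides: the name `neuron_output`, the `bias` term, and the example numbers are assumptions.

```python
def neuron_output(inputs, weights, bias, activation):
    """Weighted sum of the inputs, passed through an activation function."""
    # bias is an assumed extra parameter; set it to 0.0 to ignore it
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


def step(z):
    """Simple threshold activation, used here only as a placeholder."""
    return 1.0 if z >= 0.0 else 0.0


# Hypothetical example values
fires = neuron_output([1.0, 0.0], [0.5, -0.5], 0.0, step)
silent = neuron_output([0.0, 1.0], [0.5, -0.5], 0.0, step)
```

Any function of one number can be passed as `activation`, which keeps the weighted sum separate from the choice of nonlinearity.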

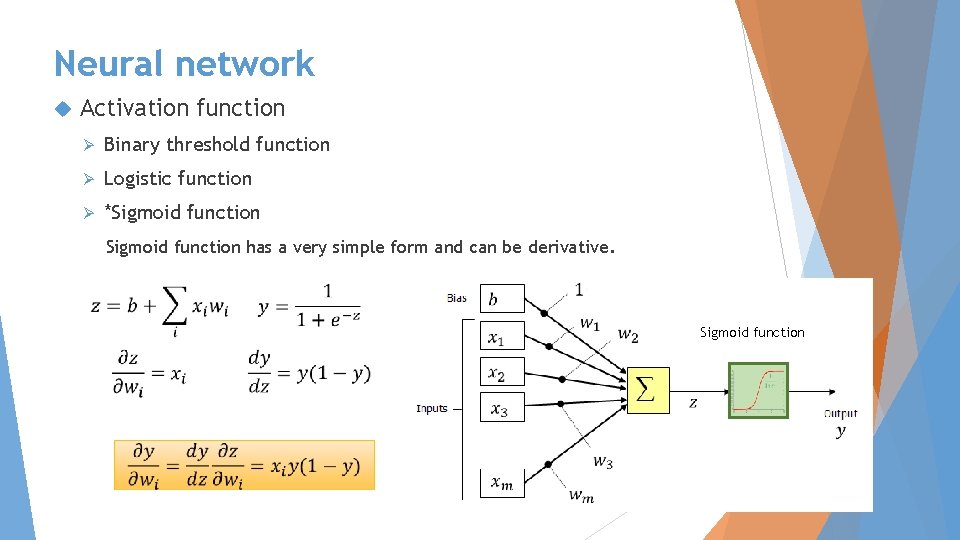

Neural network

Activation function:

- Binary threshold function
- Logistic function
- *The sigmoid function has a very simple form and is differentiable.

Figure label: sigmoid function.
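As a small sketch of the two activation functions named above (the function names and the default threshold `theta=0.0` are assumptions), the logistic sigmoid is convenient because its derivative can be written in terms of its own output:

```python
import math


def binary_threshold(z, theta=0.0):
    """Outputs 1 when the input reaches the threshold, otherwise 0."""
    return 1.0 if z >= theta else 0.0


def logistic(z):
    """Logistic sigmoid: smooth, with outputs strictly between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def logistic_derivative(z):
    """Derivative of the logistic function: s * (1 - s), where s = logistic(z)."""
    s = logistic(z)
    return s * (1.0 - s)
```

The binary threshold has no useful derivative (it is flat everywhere except at the jump), which is one reason gradient-based training typically uses the smooth version.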

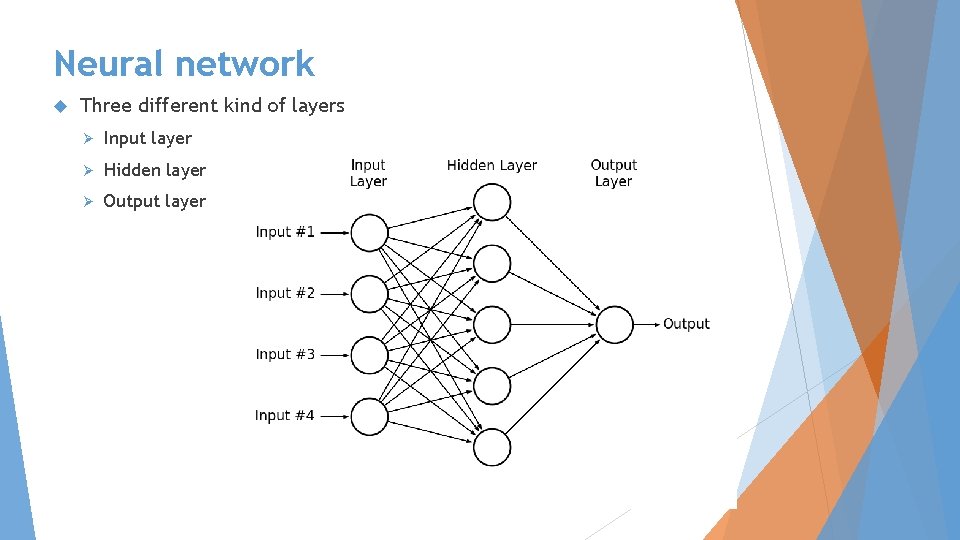

Neural network

Three different kinds of layers:

- Input layer
- Hidden layer
- Output layer

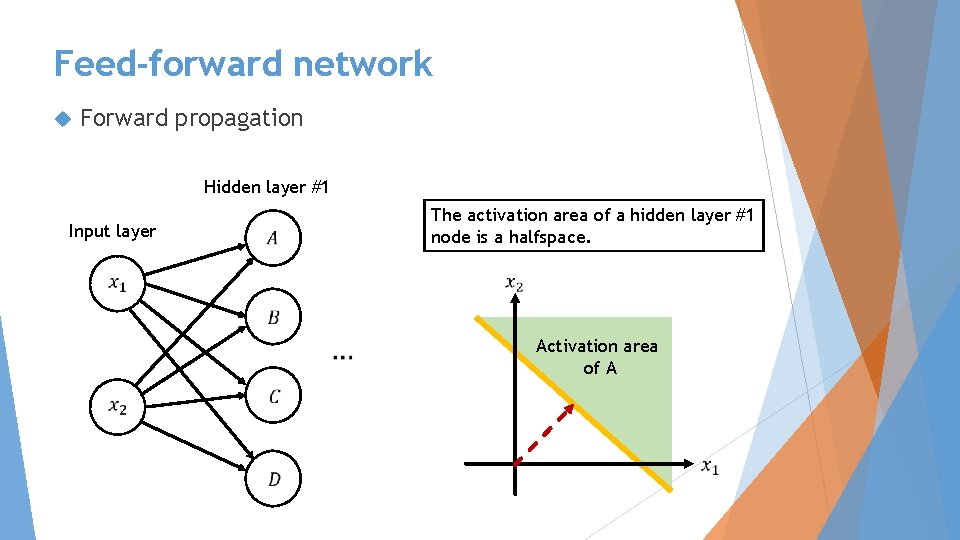

Feed-forward network: forward propagation

The activation area of a node in hidden layer #1 is a halfspace.

Figure labels: input layer, hidden layer #1, activation area of A.

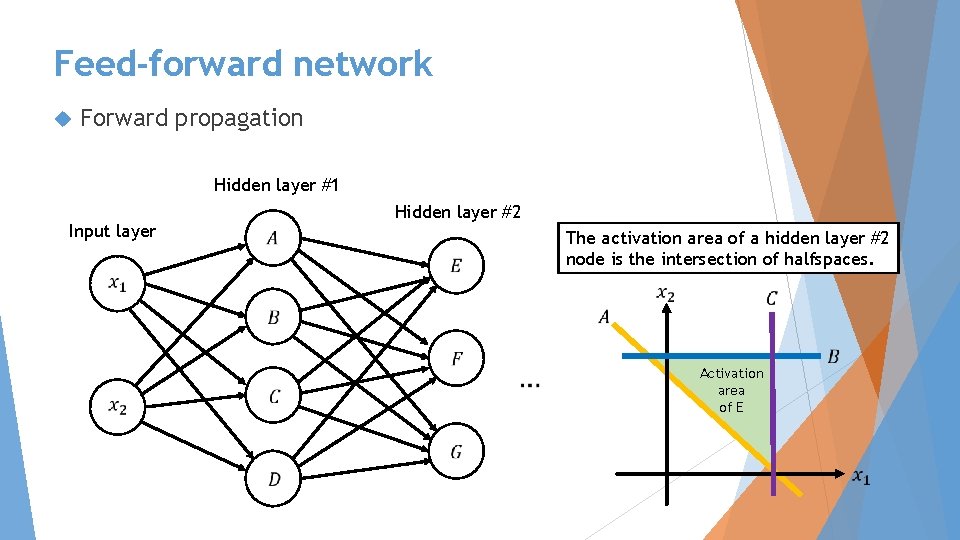

Feed-forward network: forward propagation

The activation area of a node in hidden layer #2 is the intersection of halfspaces.

Figure labels: input layer, hidden layer #1, hidden layer #2, activation area of E.
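The halfspace picture can be made concrete with threshold nodes. In this sketch (all names and the three example halfspaces are assumptions, not taken from the figures), each first-layer node is active on one side of a line, and a second-layer node that fires only when all of them are active is therefore active on the intersection:

```python
def halfspace_node(point, weights, bias):
    """Layer-1 threshold node: active iff w . x + b >= 0, i.e. on a halfspace."""
    z = sum(w * x for w, x in zip(weights, point)) + bias
    return 1 if z >= 0 else 0


def and_node(activations):
    """Layer-2 threshold node with unit weights and threshold n:
    active only when every layer-1 input is active."""
    return 1 if sum(activations) >= len(activations) else 0


# Hypothetical halfspaces: x >= 0, y >= 0, x + y <= 1 (together, a triangle)
HALFSPACES = [((1.0, 0.0), 0.0), ((0.0, 1.0), 0.0), ((-1.0, -1.0), 1.0)]


def in_region(point):
    """Returns 1 when the point lies in the intersection of all halfspaces."""
    first_layer = [halfspace_node(point, w, b) for w, b in HALFSPACES]
    return and_node(first_layer)
```

For instance, `in_region((0.25, 0.25))` is 1 because the point satisfies all three inequalities, while `in_region((1.0, 1.0))` is 0 because it violates `x + y <= 1`.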

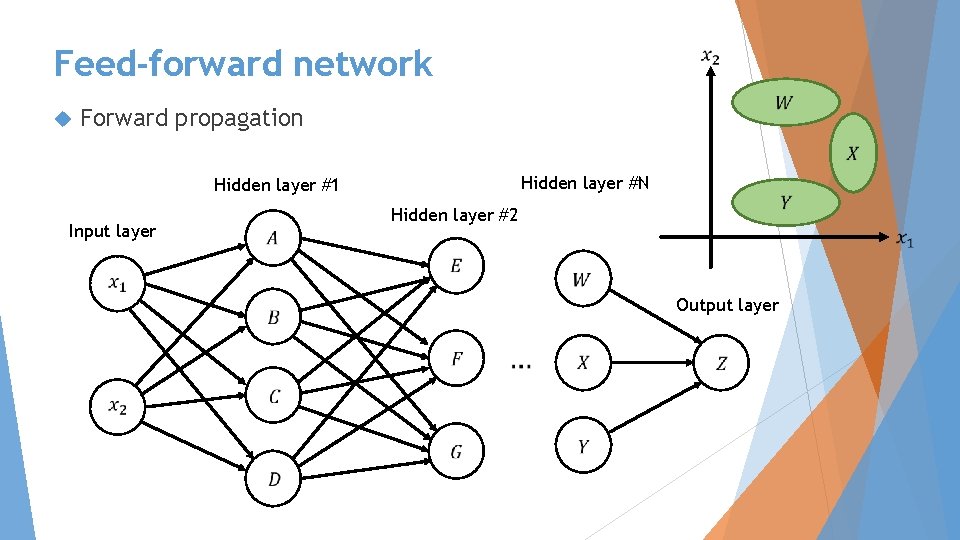

Feed-forward network: forward propagation

Figure labels: input layer, hidden layer #1, hidden layer #2, ..., hidden layer #N, output layer.

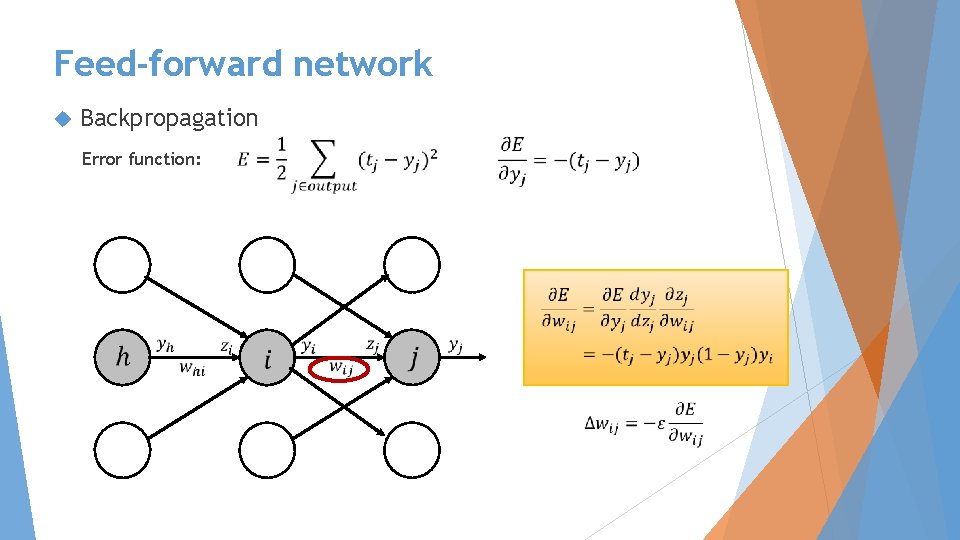

Feed-forward network Backpropagation Error function:

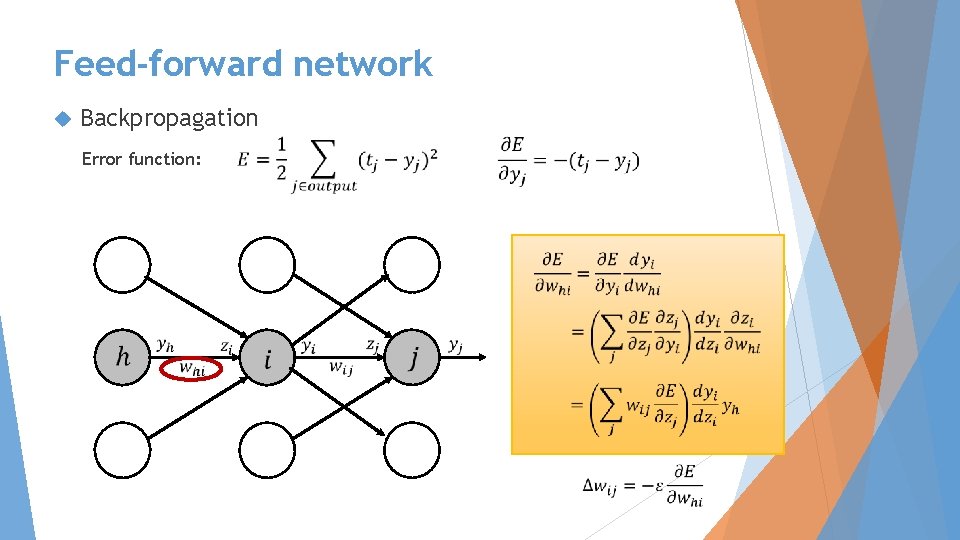

Feed-forward network Backpropagation Error function:
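The error function itself survives only as an image in these slides. As a hedged illustration, assuming the squared-error form commonly used with backpropagation (the symbols $t_j$, $y_j$, $w_{ij}$, and $\eta$ are assumptions, not recovered from the slides):

$$
E = \frac{1}{2} \sum_{j} (t_j - y_j)^2,
\qquad
\Delta w_{ij} = -\eta \, \frac{\partial E}{\partial w_{ij}}
$$

Here $t_j$ is the target for output node $j$, $y_j$ is the network's actual output, $w_{ij}$ is the weight from node $i$ to node $j$, and $\eta$ is the learning rate. Backpropagation applies the chain rule layer by layer to obtain each $\partial E / \partial w_{ij}$.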

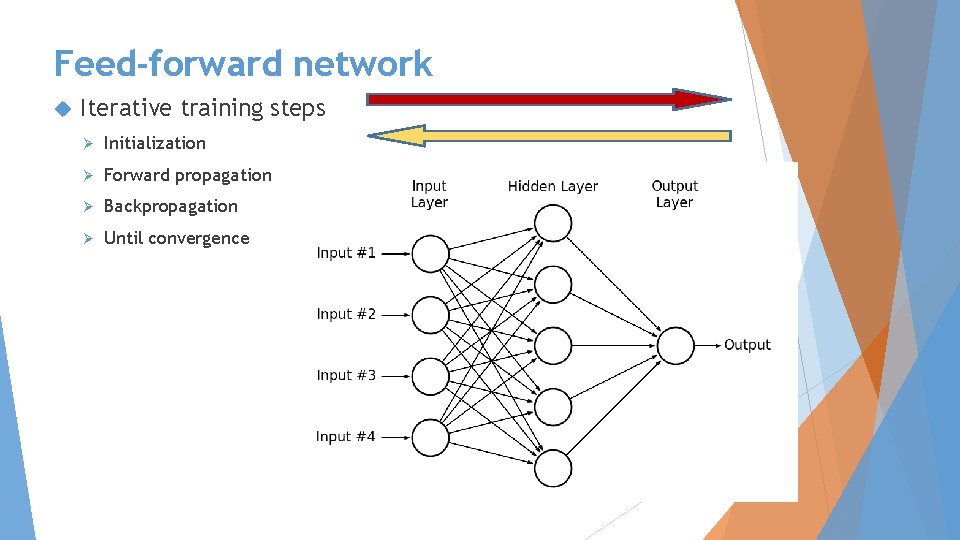

Feed-forward network

Iterative training steps:

- Initialization
- Forward propagation
- Backpropagation
- Repeat until convergence
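The training steps above can be sketched for the simplest possible case, a single logistic neuron. Everything specific here is an assumption made for illustration: the toy data (logical OR), the squared-error function, the learning rate, and the stopping tolerance.

```python
import math


def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))


# Toy data set (assumed for illustration): the logical OR function
DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 1.0)]


def forward(w, b, x):
    """Forward propagation for one neuron with two inputs."""
    return logistic(w[0] * x[0] + w[1] * x[1] + b)


def total_error(w, b):
    """Assumed squared error summed over the data set."""
    return 0.5 * sum((t - forward(w, b, x)) ** 2 for x, t in DATA)


def train(lr=0.5, tol=1e-3, max_epochs=5000):
    w, b = [0.0, 0.0], 0.0                   # 1. initialization
    history = [total_error(w, b)]
    for _ in range(max_epochs):
        for x, t in DATA:
            y = forward(w, b, x)             # 2. forward propagation
            delta = (y - t) * y * (1.0 - y)  # 3. backpropagation: dE/dz
            w[0] -= lr * delta * x[0]
            w[1] -= lr * delta * x[1]
            b -= lr * delta
        history.append(total_error(w, b))
        if history[-1] < tol:                # 4. stop at (approximate) convergence
            break
    return w, b, history


w, b, history = train()
```

A multilayer network follows the same loop; only step 3 grows, since the error signal `delta` has to be passed back through each hidden layer.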

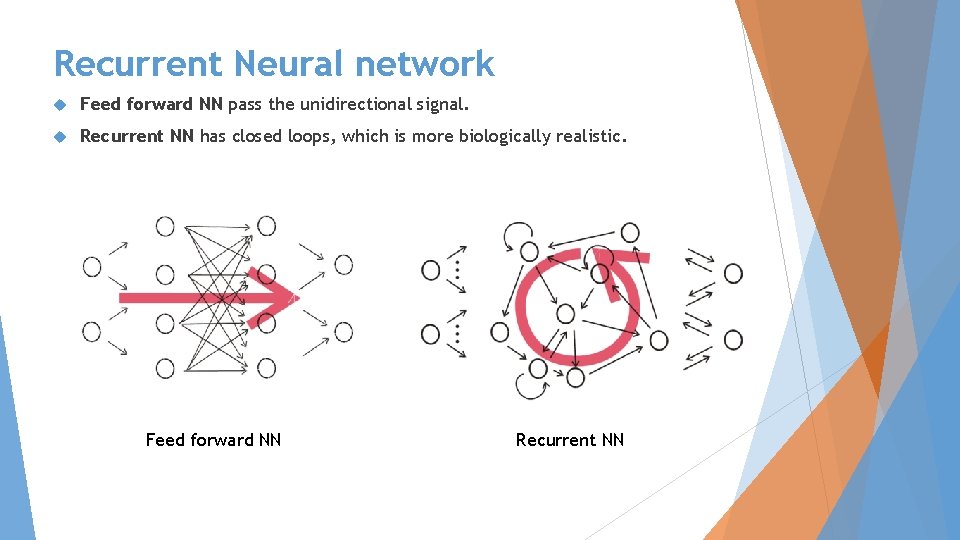

Recurrent neural network

- A feed-forward NN passes signals in one direction only.
- A recurrent NN has closed loops, which is more biologically realistic.

Figure labels: feed-forward NN, recurrent NN.

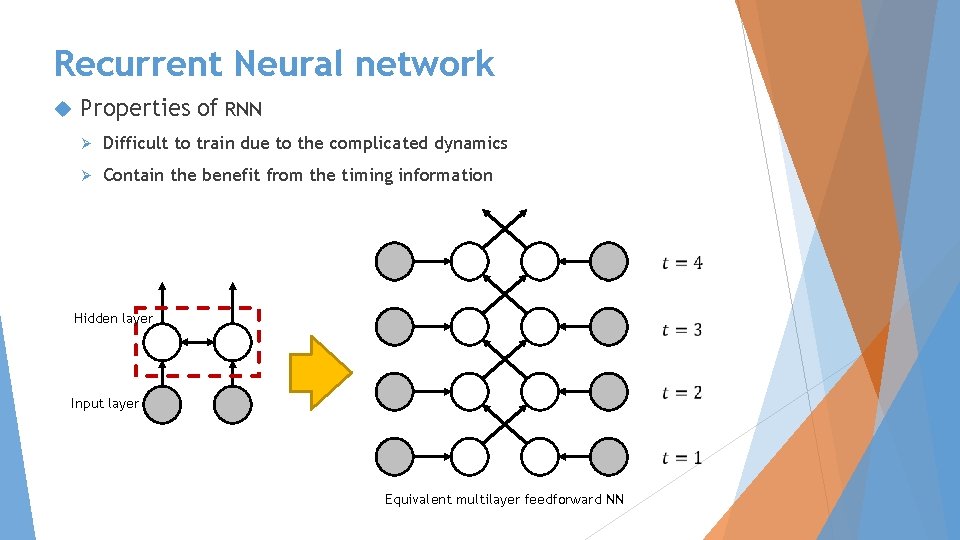

Recurrent neural network

Properties of RNN:

- Difficult to train because of the complicated dynamics
- Benefits from timing information

Figure labels: input layer, hidden layer, equivalent multilayer feedforward NN.
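The equivalence with a multilayer feedforward NN can be seen in a small sketch. Assuming a single hidden unit with a tanh activation (the function name, the parameter names, and the choice of tanh are all assumptions), each time step acts like one layer, and every "layer" reuses the same two weights:

```python
import math


def rnn_forward(xs, w_in, w_rec, h0=0.0):
    """Runs a one-unit recurrent network over the input sequence xs.

    Each loop iteration corresponds to one layer of the unrolled
    feedforward network; w_in and w_rec are shared by all of them.
    """
    h = h0
    states = []
    for x in xs:
        h = math.tanh(w_in * x + w_rec * h)  # new state depends on the old state
        states.append(h)
    return states


# Hypothetical example: the second state still carries information
# about the first input, even though the second input is zero.
states = rnn_forward([1.0, 0.0], w_in=0.5, w_rec=2.0)
```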

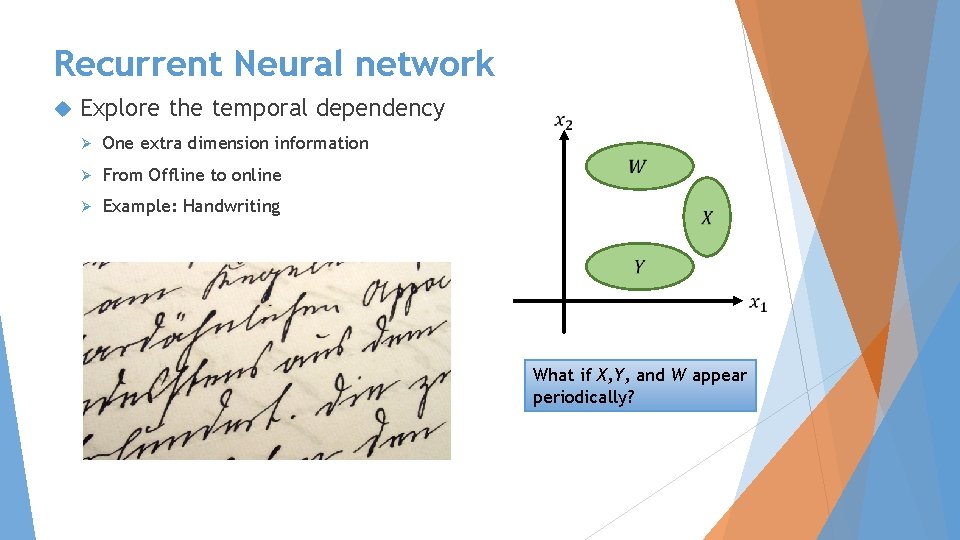

Recurrent neural network

Explore the temporal dependency:

- One extra dimension of information
- From offline to online
- Example: handwriting

What if X, Y, and W appear periodically?

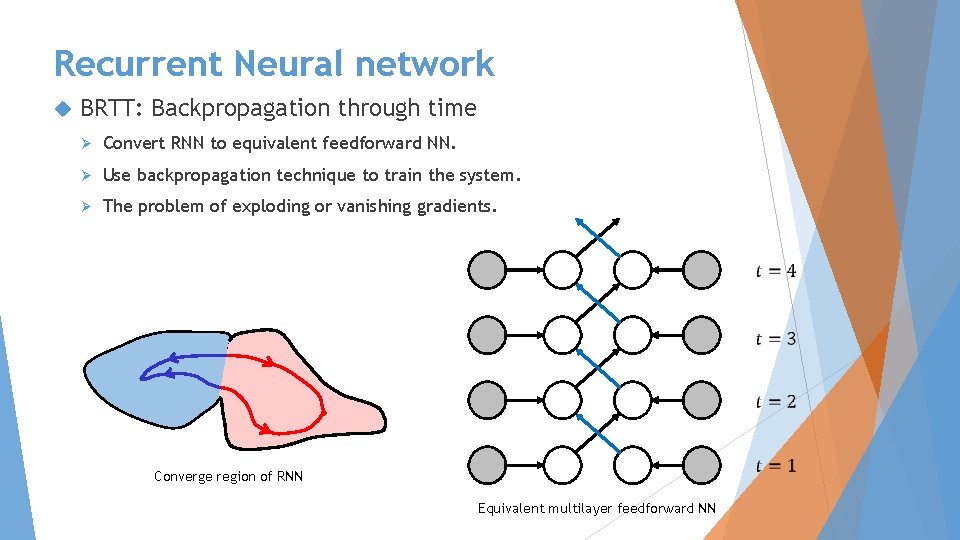

Recurrent neural network

BPTT: backpropagation through time

- Convert the RNN to an equivalent feedforward NN.
- Use the backpropagation technique to train the system.
- This runs into the problem of exploding or vanishing gradients.

Figure labels: convergence region of RNN, equivalent multilayer feedforward NN.
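The exploding or vanishing gradient problem can be illustrated with a deliberately simplified case. Assuming a linear one-unit recurrence `h_t = w_rec * h_(t-1)` (an assumption chosen to keep the arithmetic transparent, not the general RNN), backpropagating through `steps` time steps multiplies the gradient by `w_rec` once per step:

```python
def backprop_factor(w_rec, steps):
    """Factor d h_T / d h_0 for the linear recurrence h_t = w_rec * h_(t-1).

    Backpropagation through time applies the chain rule once per step,
    so the factor is w_rec multiplied by itself `steps` times.
    """
    grad = 1.0
    for _ in range(steps):
        grad *= w_rec
    return grad


vanishing = backprop_factor(0.5, 50)  # shrinks toward zero
exploding = backprop_factor(1.5, 50)  # grows very large
```

With a recurrent weight below 1 in magnitude the factor shrinks geometrically, and above 1 it grows geometrically, so long sequences leave little room between the two failure modes.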

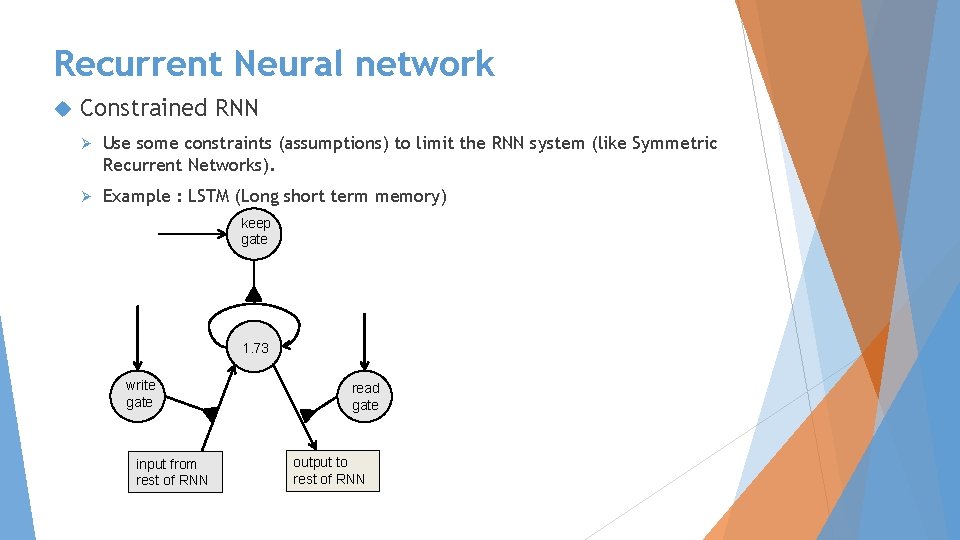

Recurrent neural network

Constrained RNN:

- Use some constraints (assumptions) to limit the RNN system (like symmetric recurrent networks).
- Example: LSTM (long short-term memory)

Figure labels: keep gate, write gate, read gate, stored value 1.73, input from rest of RNN, output to rest of RNN.

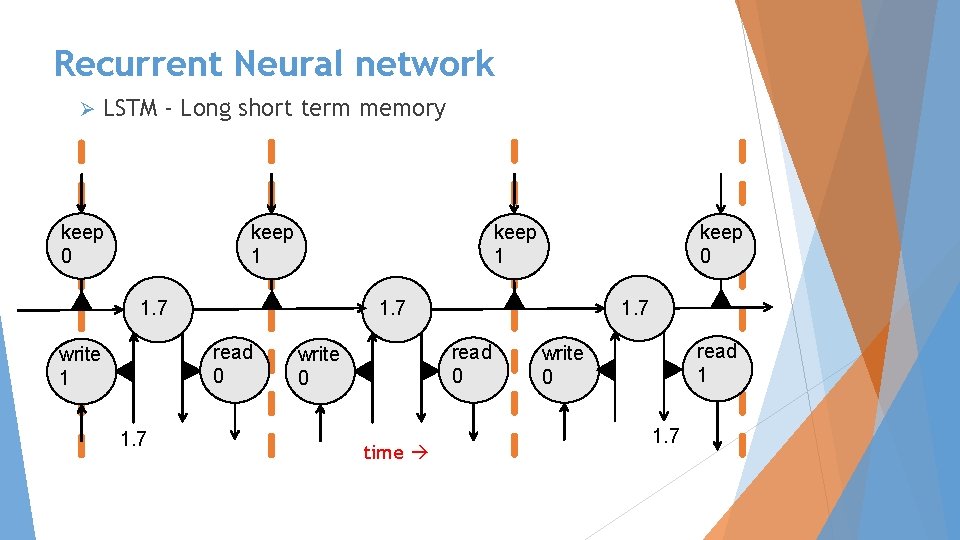

Recurrent neural network

- LSTM: long short-term memory

Figure: a memory cell over time, showing the keep, write, and read gate settings (0 or 1) at each step while the value 1.7 is written, kept, and read out.
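The gate behaviour can be sketched with a toy memory cell. This is a simplified illustration under stated assumptions: the gates are hard 0/1 values rather than learned logistic units, and the gate schedule below is hypothetical, chosen only to show a value of 1.7 being written, kept, read, and then erased.

```python
def memory_cell_step(stored, cell_input, keep, write, read):
    """One time step of a toy memory cell with three gates.

    keep  = 1 preserves the stored value, 0 erases it
    write = 1 adds the incoming value to the cell
    read  = 1 releases the stored value to the rest of the network
    """
    new_stored = keep * stored + write * cell_input
    output = read * new_stored
    return new_stored, output


# Hypothetical schedule: (input, keep, write, read) for each time step
SCHEDULE = [
    (1.7, 0, 1, 0),  # write 1.7 into the cell
    (0.0, 1, 0, 0),  # keep it, nothing is read
    (0.0, 1, 0, 1),  # keep it and read it out
    (0.0, 0, 0, 0),  # erase it
]

stored = 0.0
outputs = []
for cell_input, keep, write, read in SCHEDULE:
    stored, out = memory_cell_step(stored, cell_input, keep, write, read)
    outputs.append(out)
```

While the keep gate stays at 1, the stored value passes through time steps unchanged, which is how this kind of cell sidesteps the shrinking or growing gradient factors of an ordinary recurrent connection.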

Recurrent Neural network Ø LSTM - Long short term memory

Reference

- Geoffrey Hinton, Neural Networks for Machine Learning, lecture notes and videos, Coursera course, 2012.
- Herbert Jaeger, A tutorial on training recurrent neural networks, covering BPPT, RTRL, EKF and the echo state network approach, Tech. Rep., Fraunhofer Institute for Autonomous Intelligent Systems (AIS); since 2003: International University Bremen, 2005.
- Wikipedia, "Recurrent neural network", http://en.wikipedia.org/wiki/Recurrent_neural_network
- Wikipedia, "Backpropagation", http://en.wikipedia.org/wiki/Backpropagation