Probabilistic models for corpora and graphs Review some

Probabilistic models for corpora and graphs

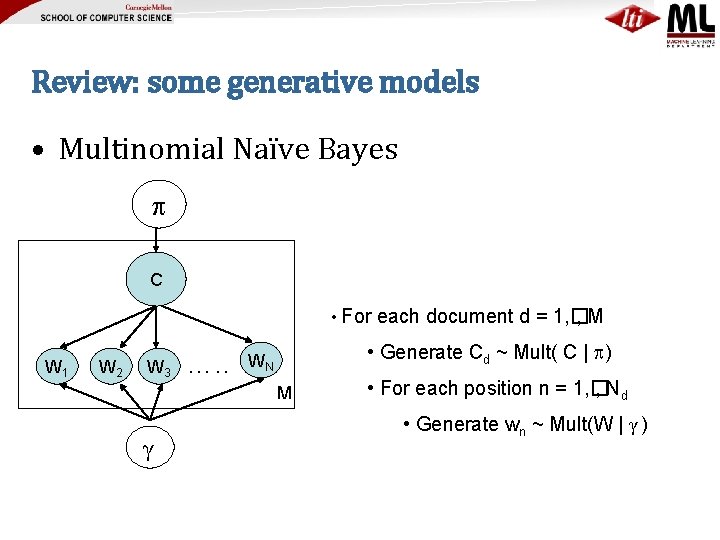

Review: some generative models • Multinomial Naïve Bayes C • For W 1 W 2 W 3 …. . • Generate Cd ~ Mult( C | ) WN M γ each document d = 1, � , M • For each position n = 1, � , Nd • Generate wn ~ Mult(W | γ )

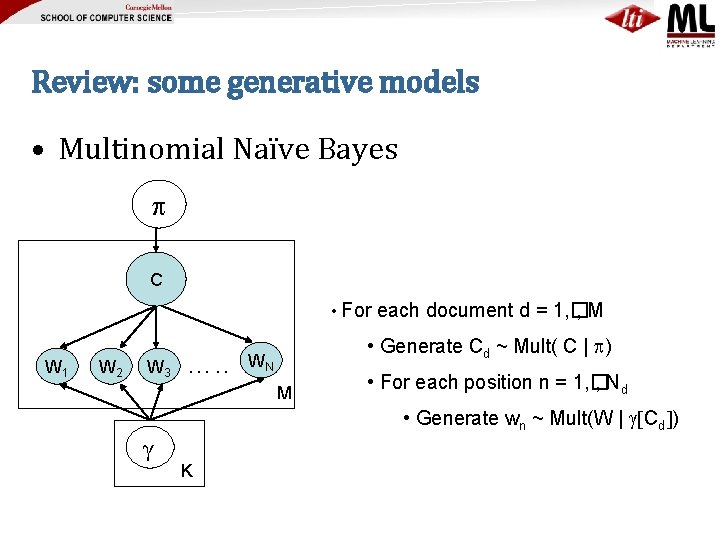

Review: some generative models • Multinomial Naïve Bayes C • For W 1 W 2 W 3 …. . each document d = 1, � , M • Generate Cd ~ Mult( C | ) WN M • For each position n = 1, � , Nd • Generate wn ~ Mult(W | g[Cd]) γ K

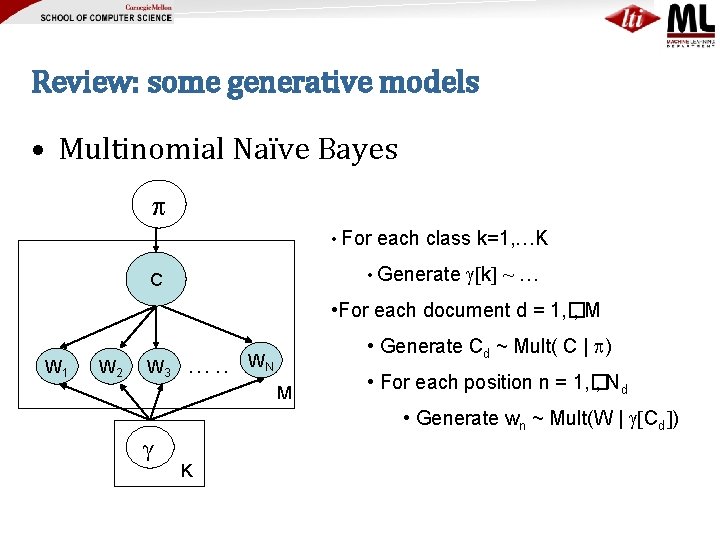

Review: some generative models • Multinomial Naïve Bayes • For each class k=1, …K • Generate C g[k] ~ … • For each document d = 1, � , M W 1 W 2 W 3 …. . • Generate Cd ~ Mult( C | ) WN M • For each position n = 1, � , Nd • Generate wn ~ Mult(W | g[Cd]) γ K

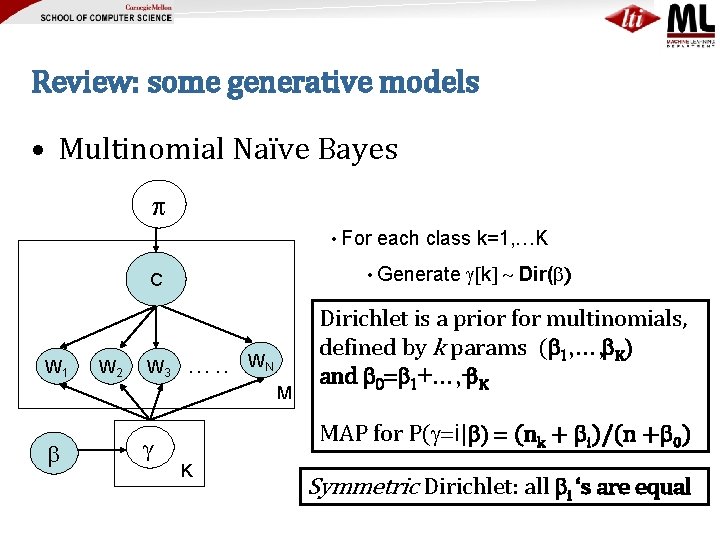

Review: some generative models • Multinomial Naïve Bayes • For • Generate C W 1 W 2 W 3 each class k=1, …K …. . WN M g[k] ~ Dir(b) • For eachisdocument d = multinomials, 1, � , M Dirichlet a prior for • Generate Cd ~ Mult( | ) defined by k params (b 1 C, …, b K) and b • 0 For =b 1 each +…, +position b. K n = 1, � , Nd • Generate wn ~ Mult(W | g[Cd]) b γ MAP for P(g=i|b) = (nk + bi)/(n +b 0) K Symmetric Dirichlet: all bi ‘s are equal

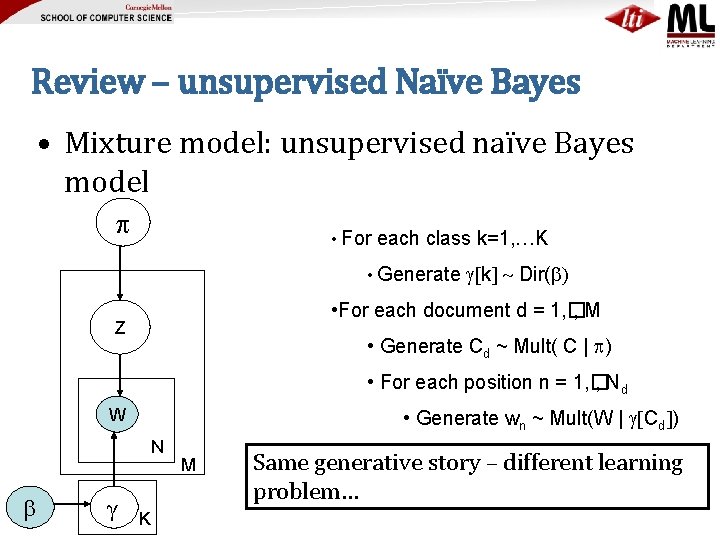

Review – unsupervised Naïve Bayes • Mixture model: unsupervised naïve Bayes model • For each class k=1, …K • Generate g[k] ~ Dir(b) • For each document d = 1, � , M C Z • Generate Cd ~ Mult( C | ) • For each position n = 1, � , Nd W • Generate wn ~ Mult(W | g[Cd]) N b γ K M Same generative story – different learning problem…

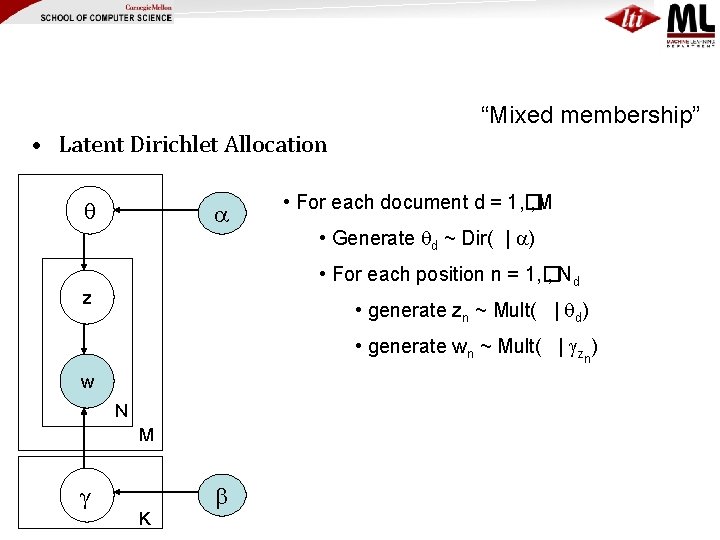

“Mixed membership” • Latent Dirichlet Allocation • For each document d = 1, � , M • Generate d ~ Dir( | ) • For each position n = 1, � , Nd z • generate zn ~ Mult( | d) • generate wn ~ Mult( | gzn) w N M γ K b

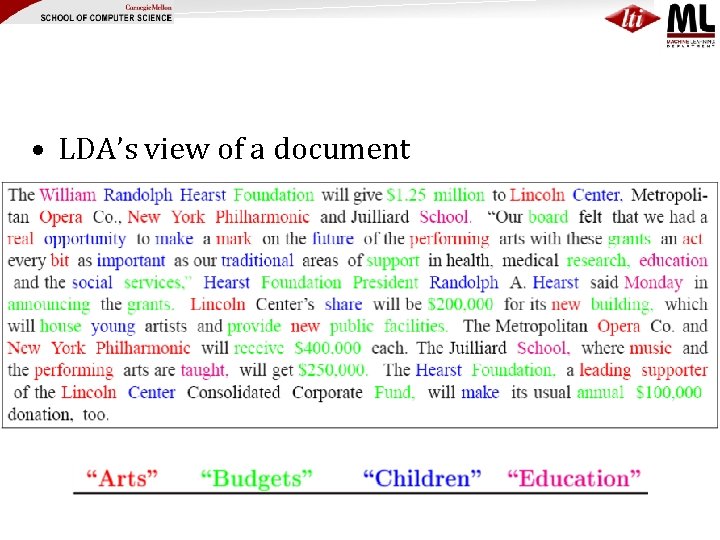

• LDA’s view of a document

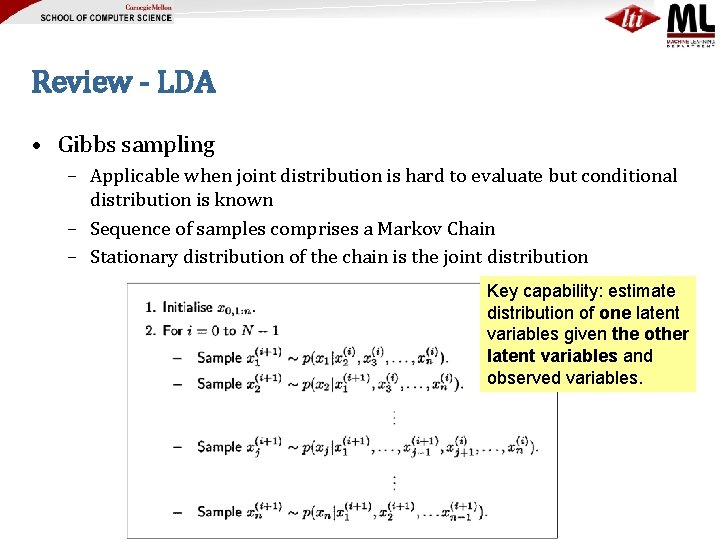

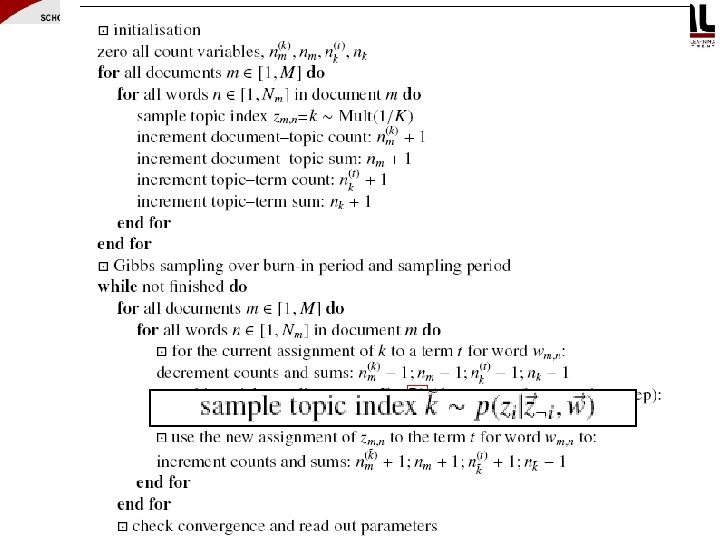

Review - LDA • Gibbs sampling – Applicable when joint distribution is hard to evaluate but conditional distribution is known – Sequence of samples comprises a Markov Chain – Stationary distribution of the chain is the joint distribution Key capability: estimate distribution of one latent variables given the other latent variables and observed variables.

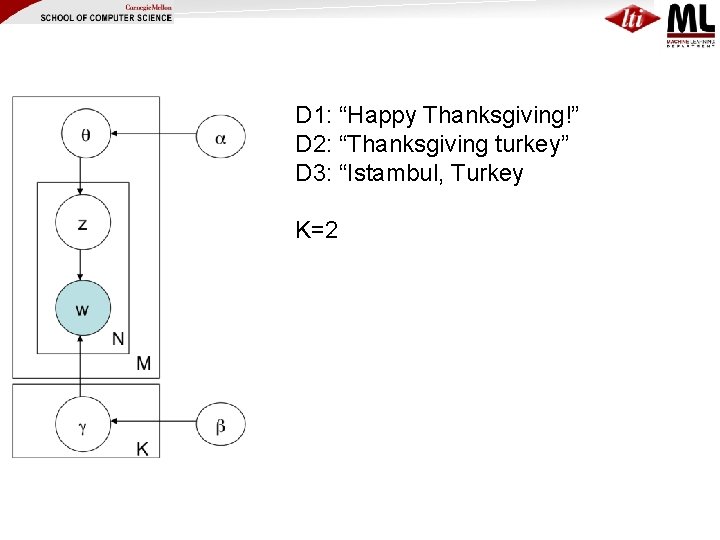

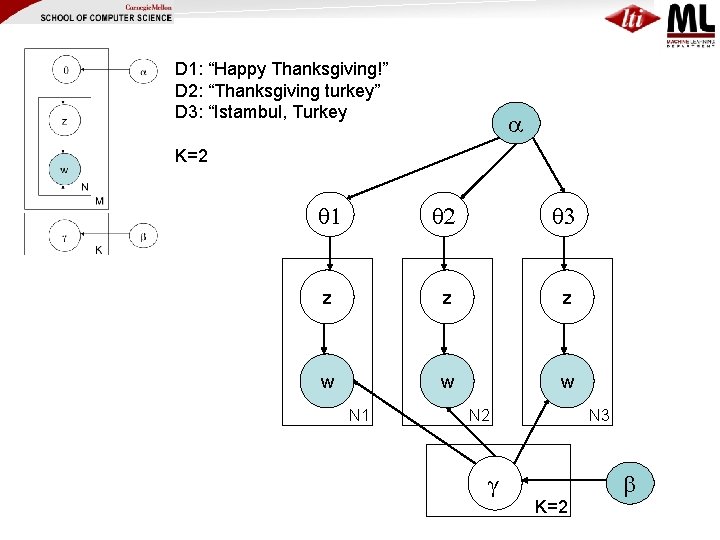

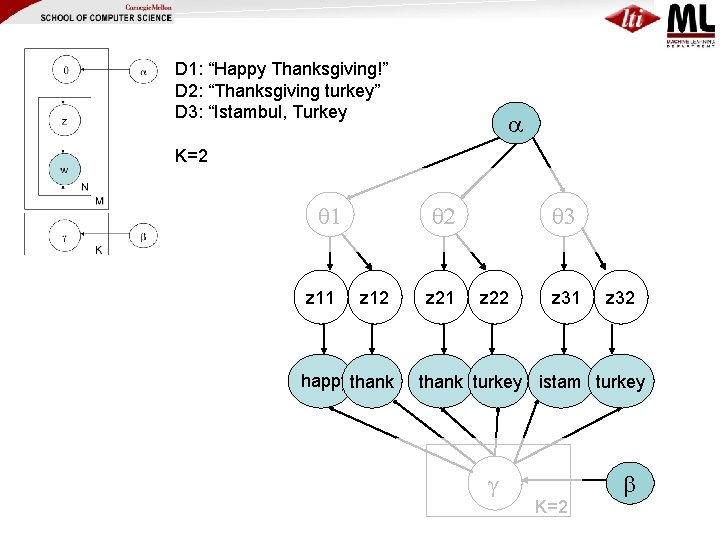

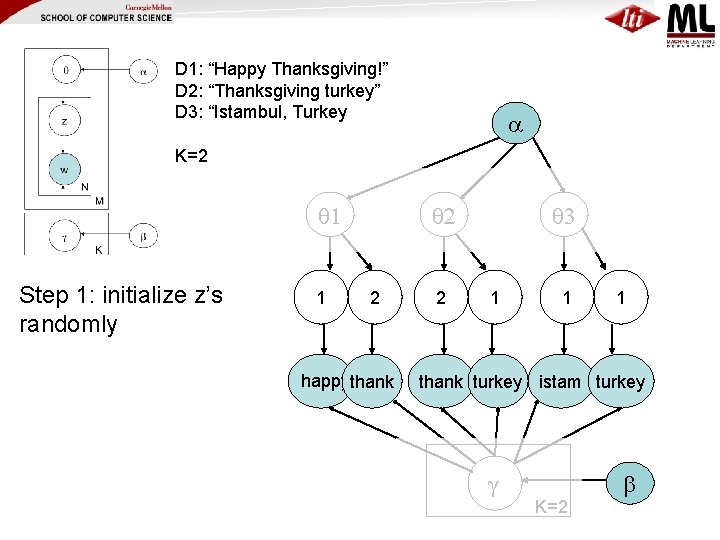

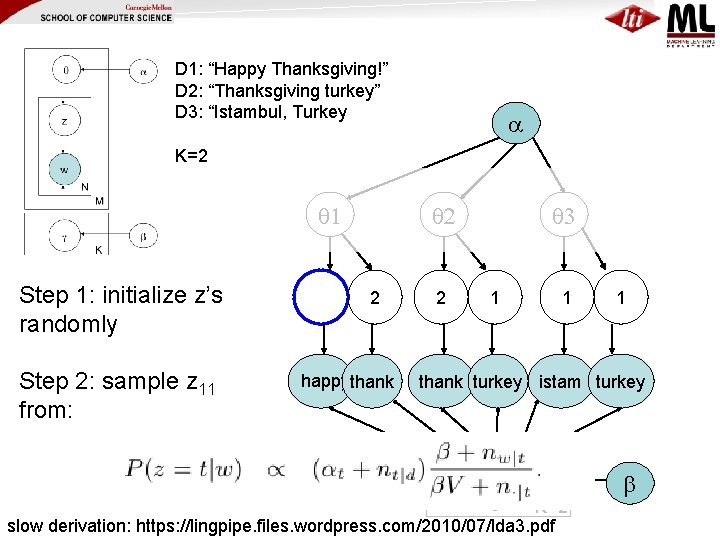

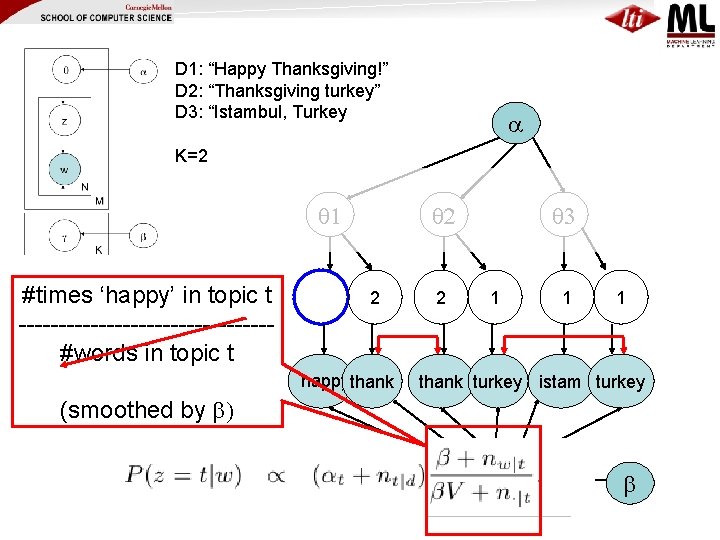

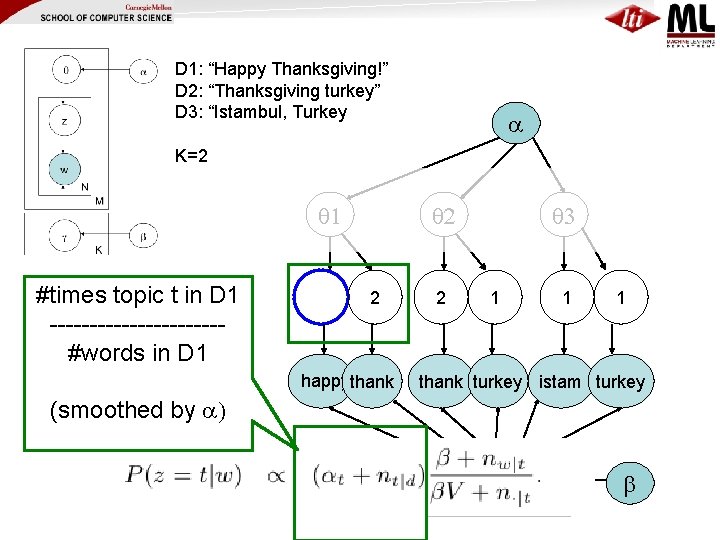

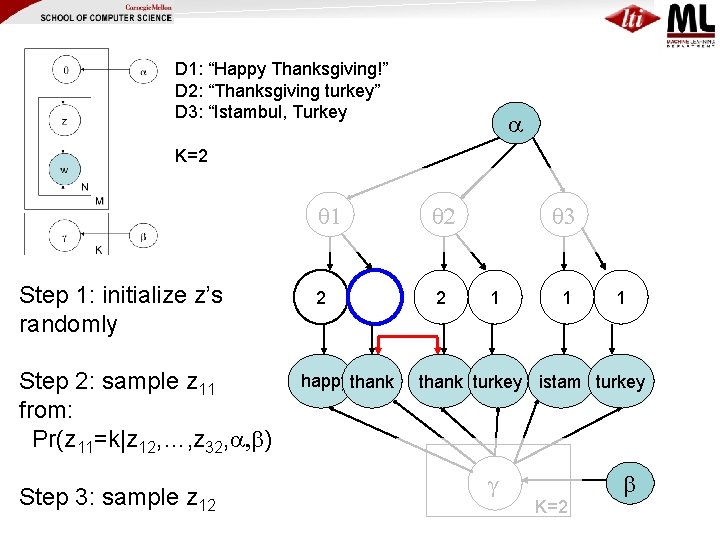

D 1: “Happy Thanksgiving!” D 2: “Thanksgiving turkey” D 3: “Istambul, Turkey K=2

D 1: “Happy Thanksgiving!” D 2: “Thanksgiving turkey” D 3: “Istambul, Turkey K=2 1 2 3 z z z w w w N 1 N 2 γ N 3 K=2 b

D 1: “Happy Thanksgiving!” D 2: “Thanksgiving turkey” D 3: “Istambul, Turkey K=2 1 z 11 2 z 12 happythank z 21 3 z 22 z 31 z 32 thank turkey istam turkey γ K=2 b

D 1: “Happy Thanksgiving!” D 2: “Thanksgiving turkey” D 3: “Istambul, Turkey K=2 1 Step 1: initialize z’s randomly 1 2 2 happythank 2 3 1 1 1 thank turkey istam turkey γ K=2 b

D 1: “Happy Thanksgiving!” D 2: “Thanksgiving turkey” D 3: “Istambul, Turkey K=2 1 Step 1: initialize z’s randomly Step 2: sample z 11 from: 1 2 2 happythank 2 3 1 1 1 thank turkey istam turkey γ K=2 slow derivation: https: //lingpipe. files. wordpress. com/2010/07/lda 3. pdf b

D 1: “Happy Thanksgiving!” D 2: “Thanksgiving turkey” D 3: “Istambul, Turkey K=2 1 #times ‘happy’ in topic t ----------------#words in topic t 2 2 happythank 2 3 1 1 1 thank turkey istam turkey (smoothed by b) γ K=2 b

D 1: “Happy Thanksgiving!” D 2: “Thanksgiving turkey” D 3: “Istambul, Turkey K=2 1 #times topic t in D 1 -----------#words in D 1 2 2 happythank 2 3 1 1 1 thank turkey istam turkey (smoothed by ) γ K=2 b

D 1: “Happy Thanksgiving!” D 2: “Thanksgiving turkey” D 3: “Istambul, Turkey K=2 Step 1: initialize z’s randomly Step 2: sample z 11 from: Pr(z 11=k|z 12, …, z 32, , b) Step 3: sample z 12 1 2 2 2 happythank 3 1 1 1 thank turkey istam turkey γ K=2 b

Models for corpora and graphs

21

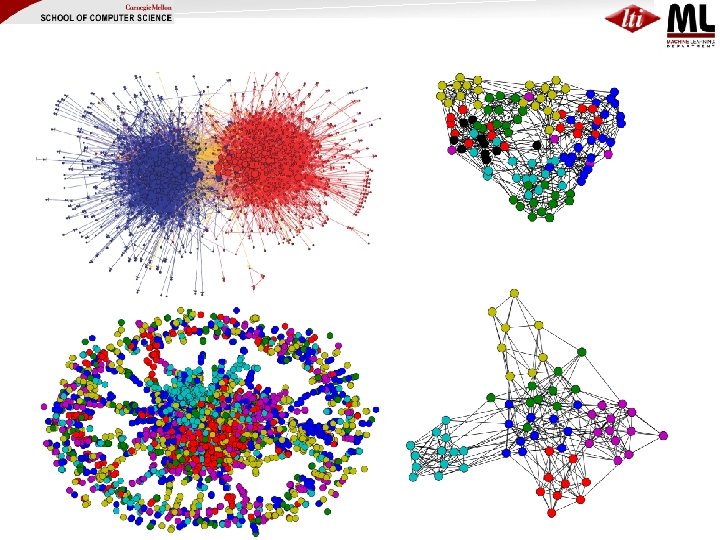

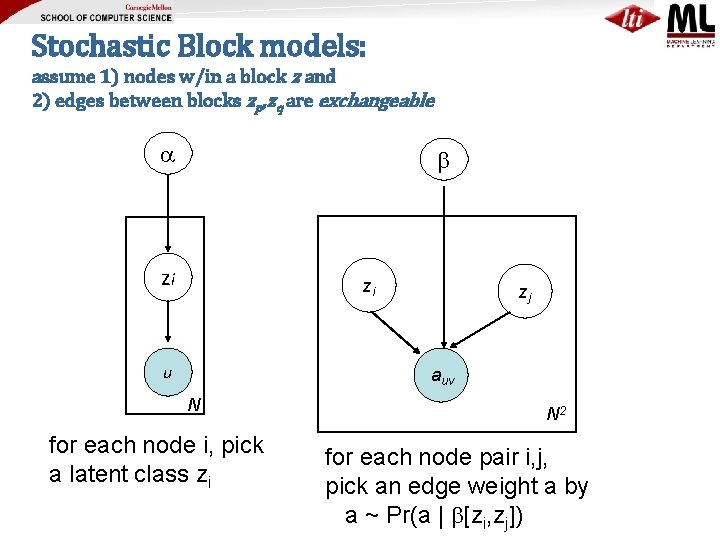

Stochastic Block models: assume 1) nodes w/in a block z and 2) edges between blocks zp, zq are exchangeable b zi zi u zj auv N for each node i, pick a latent class zi N 2 for each node pair i, j, pick an edge weight a by a ~ Pr(a | b[zi, zj])

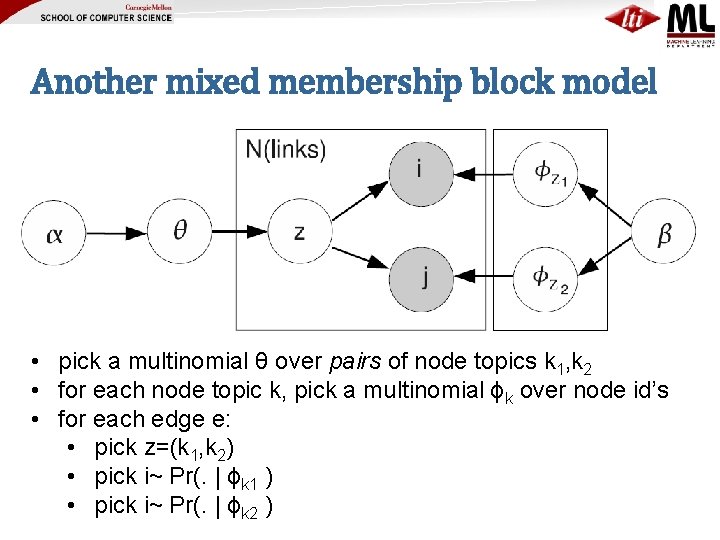

Another mixed membership block model • pick a multinomial θ over pairs of node topics k 1, k 2 • for each node topic k, pick a multinomial ϕk over node id’s • for each edge e: • pick z=(k 1, k 2) • pick i~ Pr(. | ϕk 1 ) • pick i~ Pr(. | ϕk 2 )

Speeding up modeling for corpora and graphs

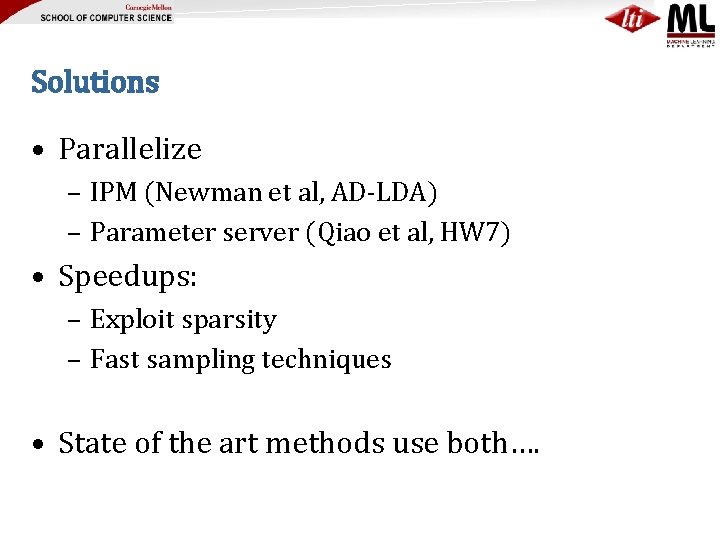

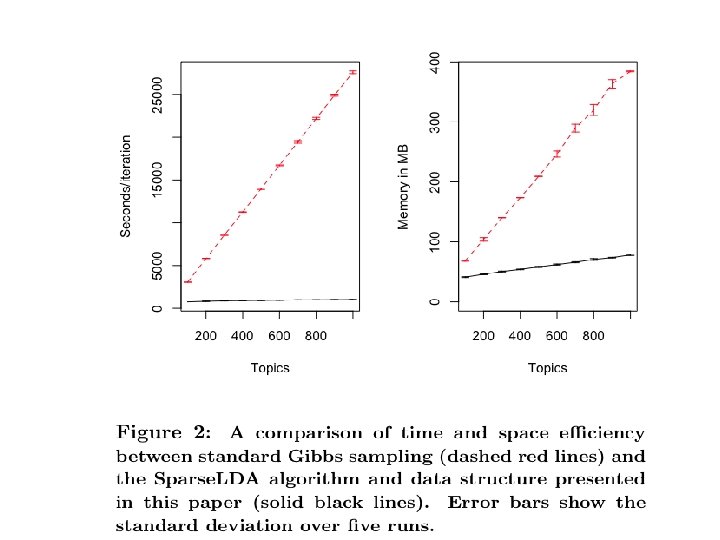

Solutions • Parallelize – IPM (Newman et al, AD-LDA) – Parameter server (Qiao et al, HW 7) • Speedups: – Exploit sparsity – Fast sampling techniques • State of the art methods use both….

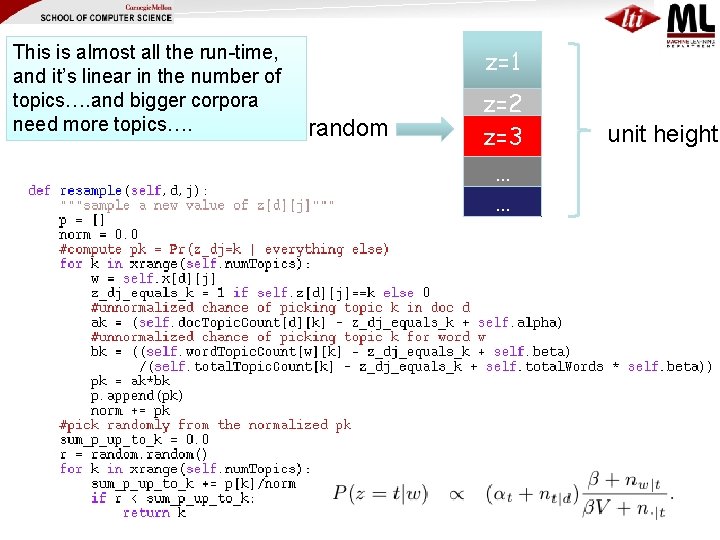

This is almost all the run-time, and it’s linear in the number of topics…. and bigger corpora need more topics…. z=1 random z=2 z=3 … … unit height

KDD 09

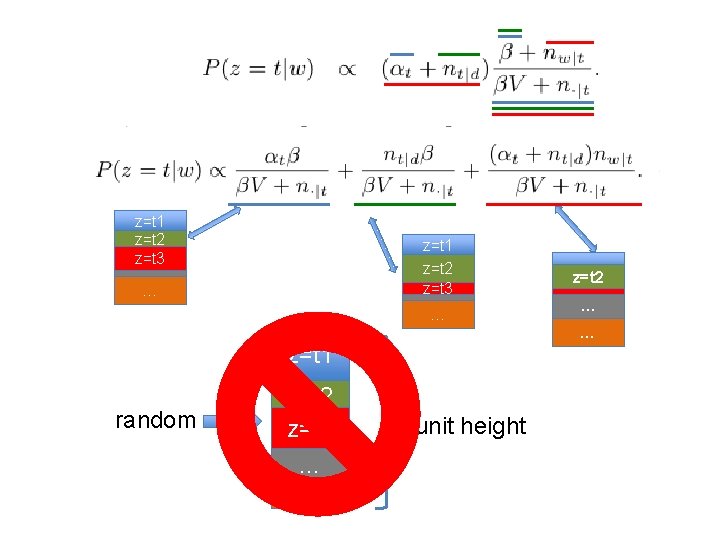

z=t 1 z=t 2 z=t 3 … … … z=t 1 random z=t 2 z=t 3 … … z=t 2 z=t 3 … unit height

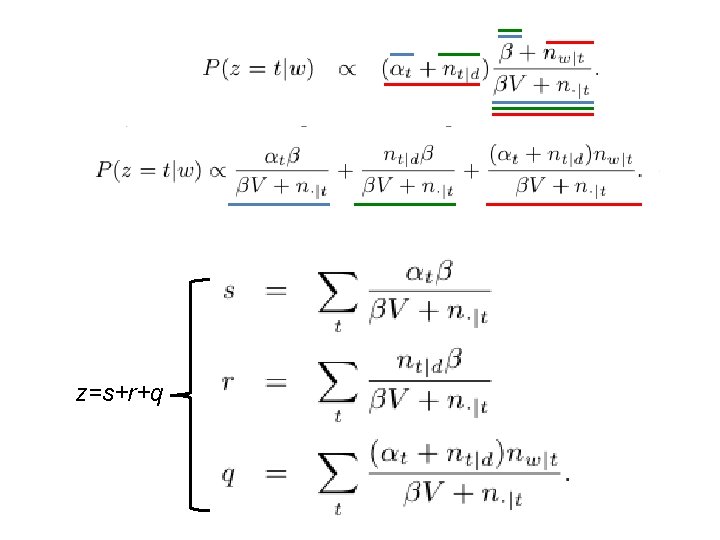

z=s+r+q

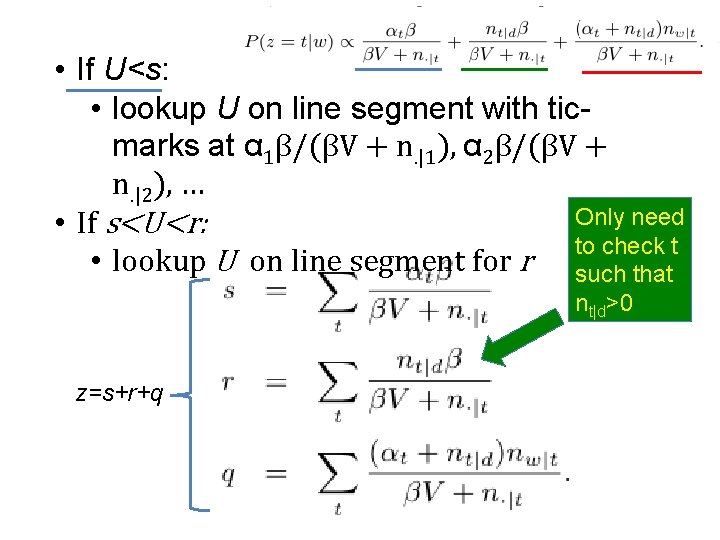

• If U<s: • lookup U on line segment with ticmarks at α 1β/(βV + n. |1), α 2β/(βV + n. |2), … Only need • If s<U<r: to check t • lookup U on line segment for r such that nt|d>0 z=s+r+q

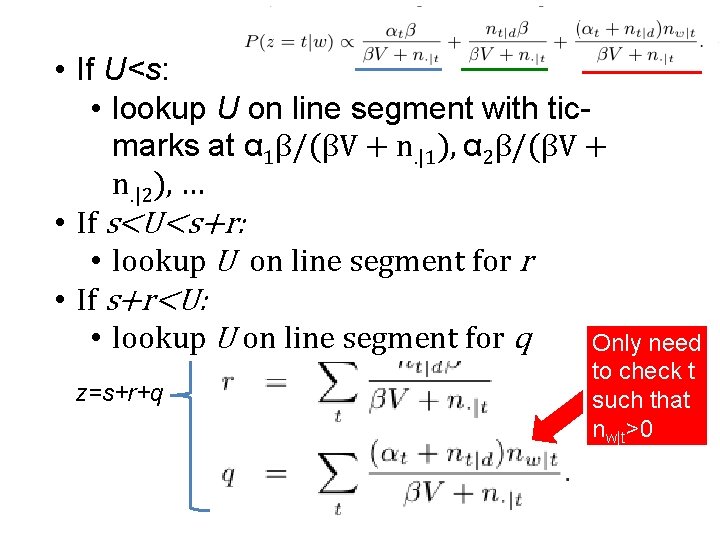

• If U<s: • lookup U on line segment with ticmarks at α 1β/(βV + n. |1), α 2β/(βV + n. |2), … • If s<U<s+r: • lookup U on line segment for r • If s+r<U: • lookup U on line segment for q Only need z=s+r+q to check t such that nw|t>0

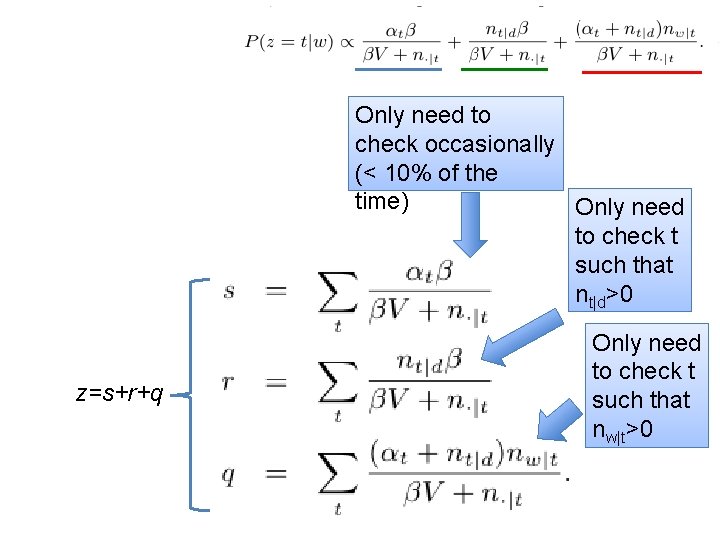

Only need to check occasionally (< 10% of the time) Only need to check t such that nt|d>0 z=s+r+q Only need to check t such that nw|t>0

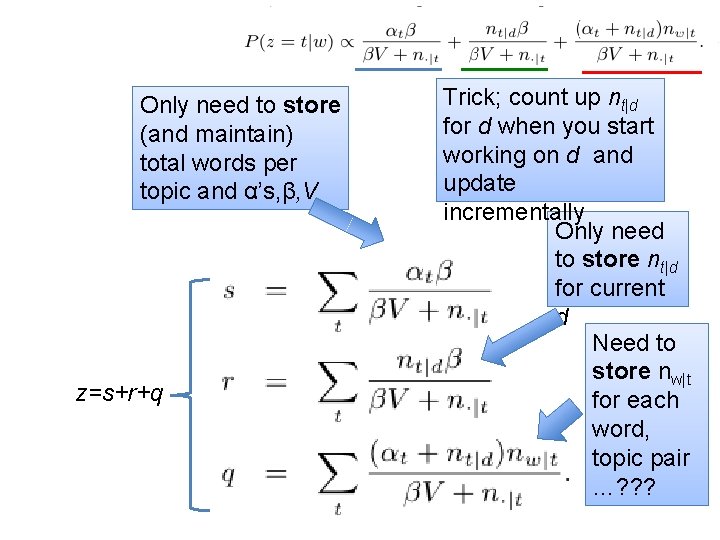

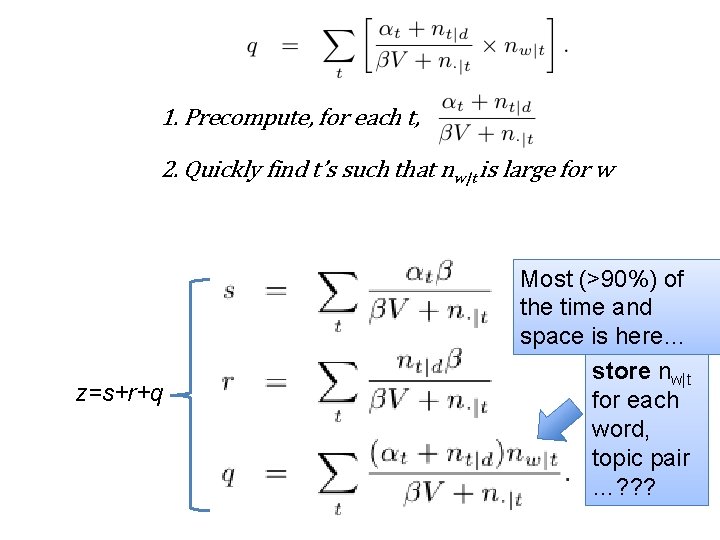

Only need to store (and maintain) total words per topic and α’s, β, V z=s+r+q Trick; count up nt|d for d when you start working on d and update incrementally Only need to store nt|d for current d Need to store nw|t for each word, topic pair …? ? ?

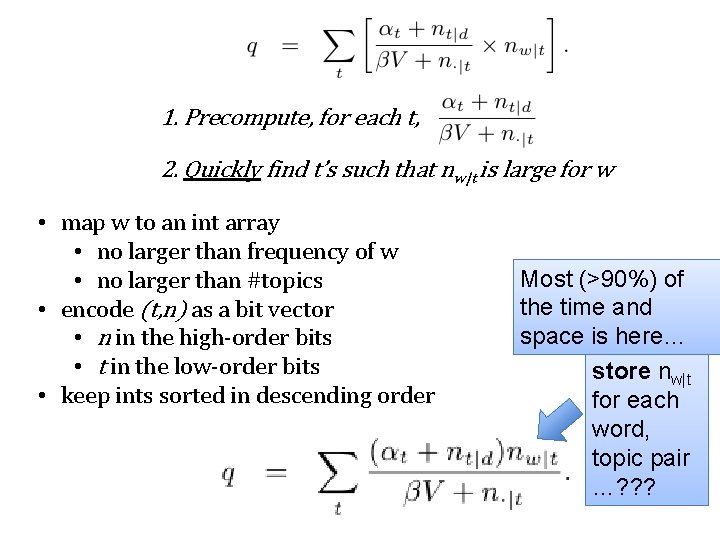

1. Precompute, for each t, 2. Quickly find t’s such that nw|t is large for w z=s+r+q Most (>90%) of the time and space is here… Need to store nw|t for each word, topic pair …? ? ?

1. Precompute, for each t, 2. Quickly find t’s such that nw|t is large for w • map w to an int array • no larger than frequency of w • no larger than #topics • encode (t, n) as a bit vector • n in the high-order bits • t in the low-order bits • keep ints sorted in descending order Most (>90%) of the time and space is here… Need to store nw|t for each word, topic pair …? ? ?

Outline • LDA/Gibbs algorithm details • How to speed it up by parallelizing • How to speed it up by faster sampling – Why sampling is key – Some sampling ideas for LDA • The Mimno/Mc. Callum decomposition (Sparse. LDA) • Alias tables (Walker 1977; Li, Ahmed, Ravi, Smola KDD 2014)

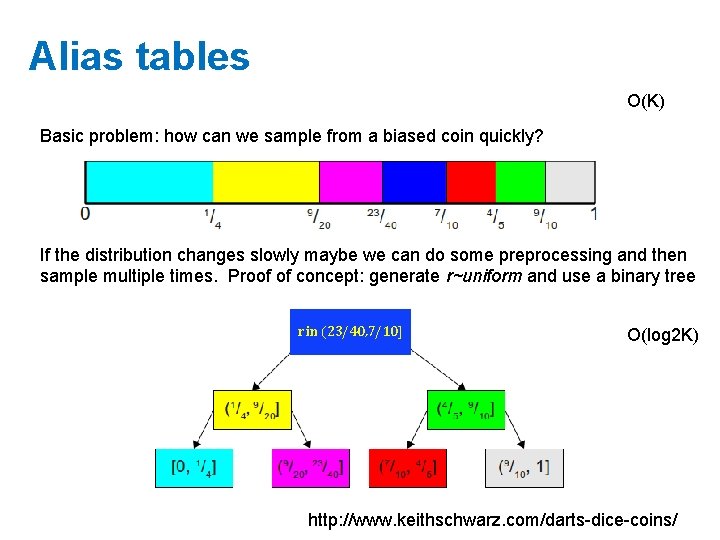

Alias tables O(K) Basic problem: how can we sample from a biased coin quickly? If the distribution changes slowly maybe we can do some preprocessing and then sample multiple times. Proof of concept: generate r~uniform and use a binary tree r in (23/40, 7/10] O(log 2 K) http: //www. keithschwarz. com/darts-dice-coins/

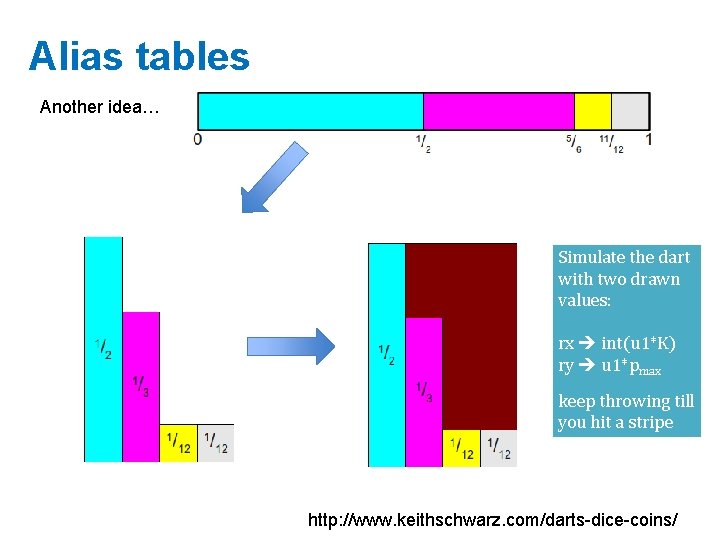

Alias tables Another idea… Simulate the dart with two drawn values: rx int(u 1*K) ry u 1*pmax keep throwing till you hit a stripe http: //www. keithschwarz. com/darts-dice-coins/

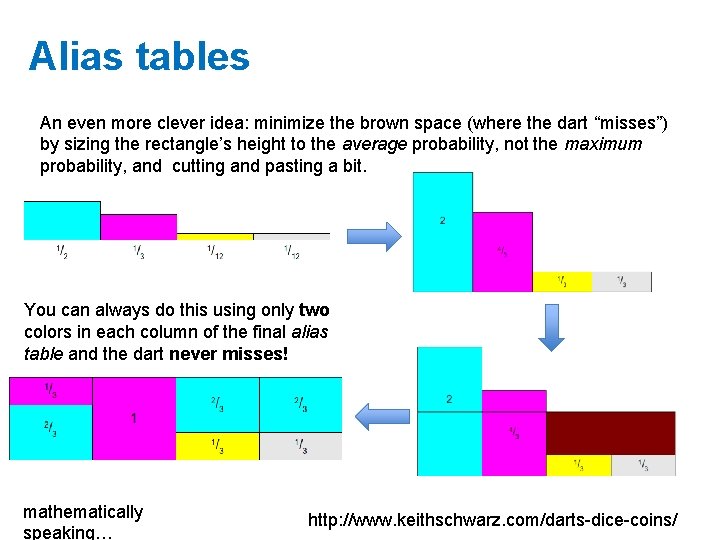

Alias tables An even more clever idea: minimize the brown space (where the dart “misses”) by sizing the rectangle’s height to the average probability, not the maximum probability, and cutting and pasting a bit. You can always do this using only two colors in each column of the final alias table and the dart never misses! mathematically speaking… http: //www. keithschwarz. com/darts-dice-coins/

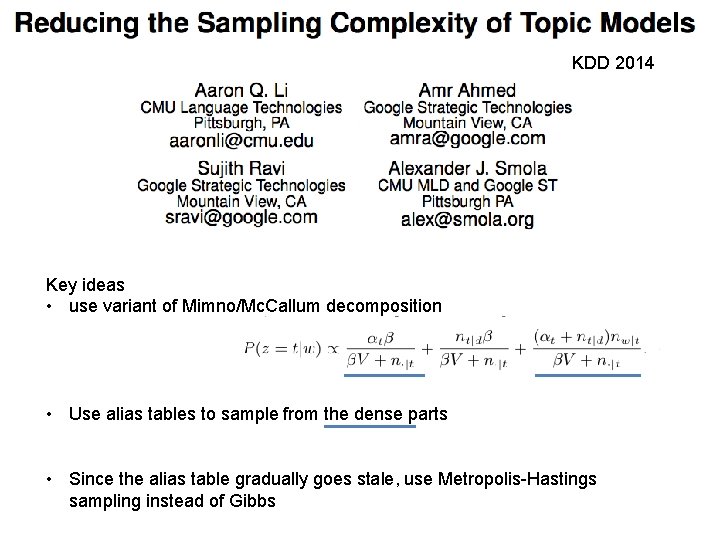

KDD 2014 Key ideas • use variant of Mimno/Mc. Callum decomposition • Use alias tables to sample from the dense parts • Since the alias table gradually goes stale, use Metropolis-Hastings sampling instead of Gibbs

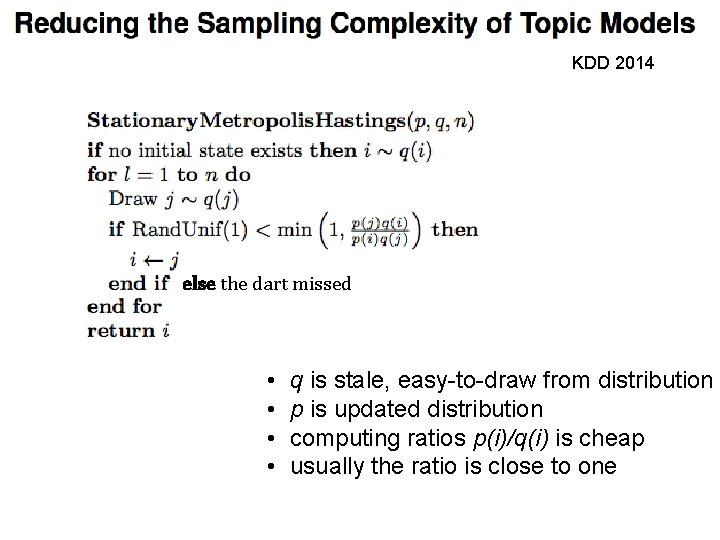

KDD 2014 else the dart missed • • q is stale, easy-to-draw from distribution p is updated distribution computing ratios p(i)/q(i) is cheap usually the ratio is close to one

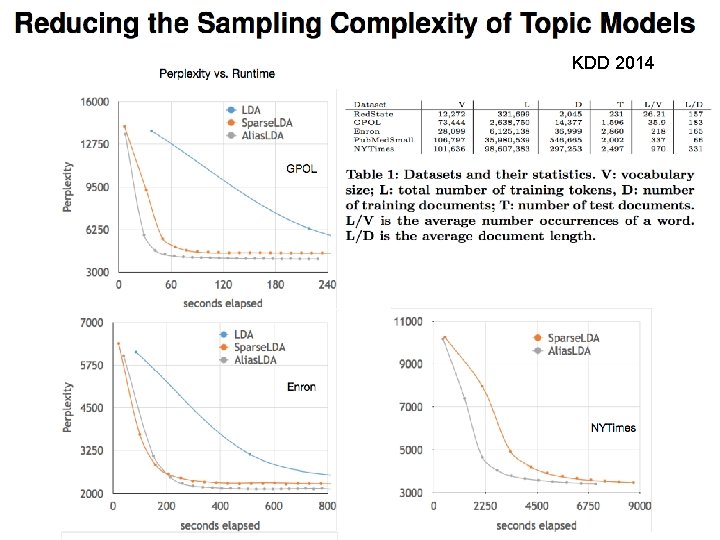

KDD 2014

- Slides: 42