POMDP Simple Example Andreyevich Markov

- Slides: 18


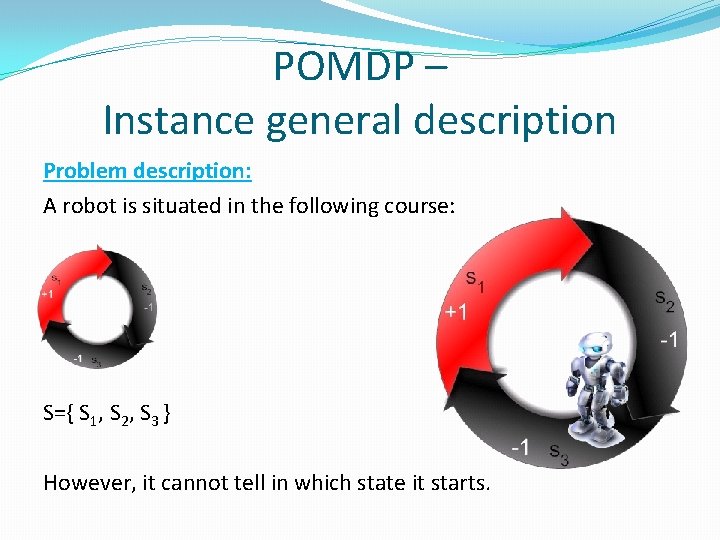

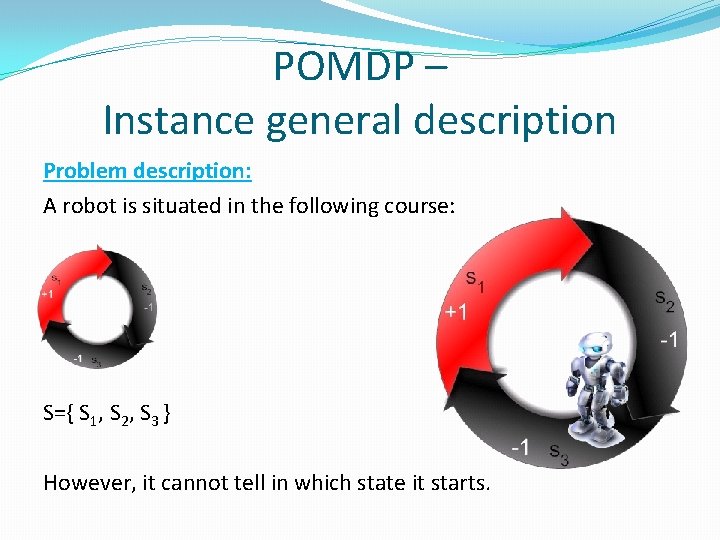

POMDP – Instance general description

Problem description: A robot is placed on the following course, whose states are $S=\{S_1, S_2, S_3\}$. However, it cannot tell in which state it starts.

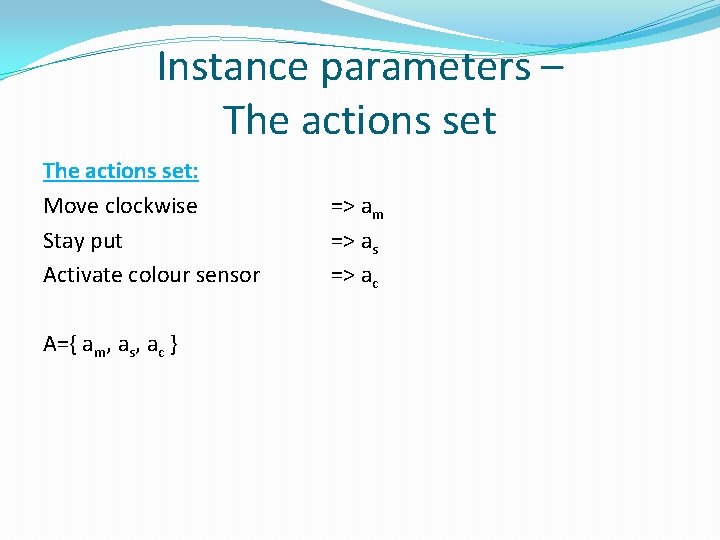

Instance parameters – The action set

The action set is $A=\{a_m, a_s, a_c\}$:
- $a_m$: move clockwise
- $a_s$: stay put
- $a_c$: activate the colour sensor

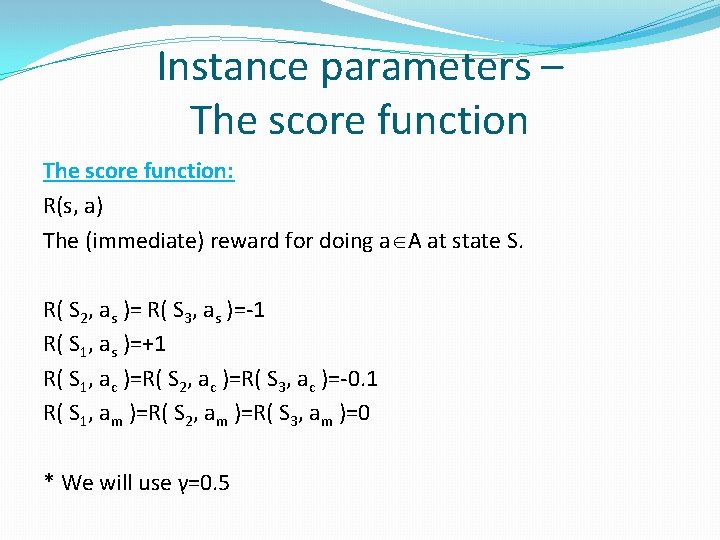

Instance parameters – The score function

The score function $R(s, a)$ is the (immediate) reward for doing $a \in A$ at state $s$:
- $R(S_1, a_s) = +1$ and $R(S_2, a_s) = R(S_3, a_s) = -1$
- $R(S_1, a_c) = R(S_2, a_c) = R(S_3, a_c) = -0.1$
- $R(S_1, a_m) = R(S_2, a_m) = R(S_3, a_m) = 0$

We will use a discount factor $\gamma = 0.5$.
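A minimal Python sketch of the reward table and of the belief-averaged reward $R(b, a) = \sum_s b(s) R(s, a)$, which is what a single action earns when the robot only has a belief over states. The names `REWARD` and `expected_reward` are our own (not from the slides), and states are assumed to be indexed 0, 1, 2 for $S_1, S_2, S_3$.

```python
# Hypothetical encoding of the slide's reward function R(s, a).
# Index 0, 1, 2 stands for S1, S2, S3; action keys follow the slide names.
REWARD = {
    "am": [0.0, 0.0, 0.0],     # moving clockwise is free everywhere
    "as": [1.0, -1.0, -1.0],   # staying put pays +1 only at S1
    "ac": [-0.1, -0.1, -0.1],  # using the colour sensor costs 0.1 everywhere
}


def expected_reward(belief, action):
    """R(b, a) = sum over s of b(s) * R(s, a)."""
    return sum(p * r for p, r in zip(belief, REWARD[action]))


b_i = [1 / 3, 1 / 3, 1 / 3]  # the uniform start belief
for a in ("am", "as", "ac"):
    print(a, round(expected_reward(b_i, a), 4))
```

With $b = (p, q, 1-p-q)$ the same sum gives $R(b, a_s) = p - q - (1-p-q) = 2p - 1$, so staying put only pays off in expectation when $p > 0.5$.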

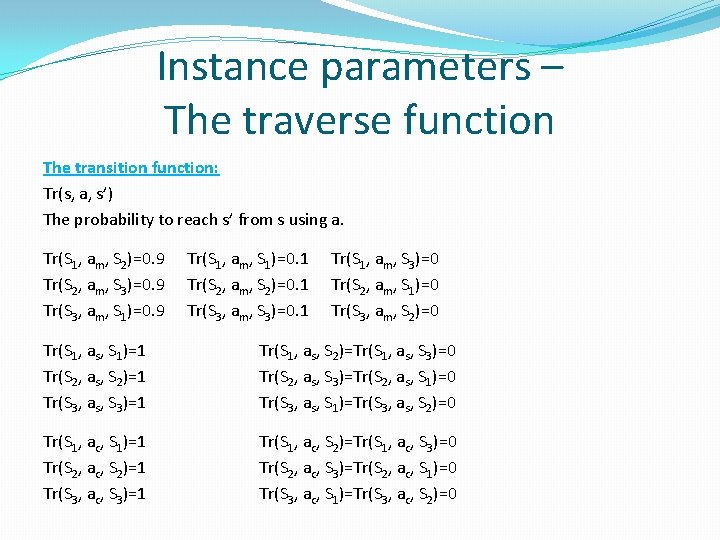

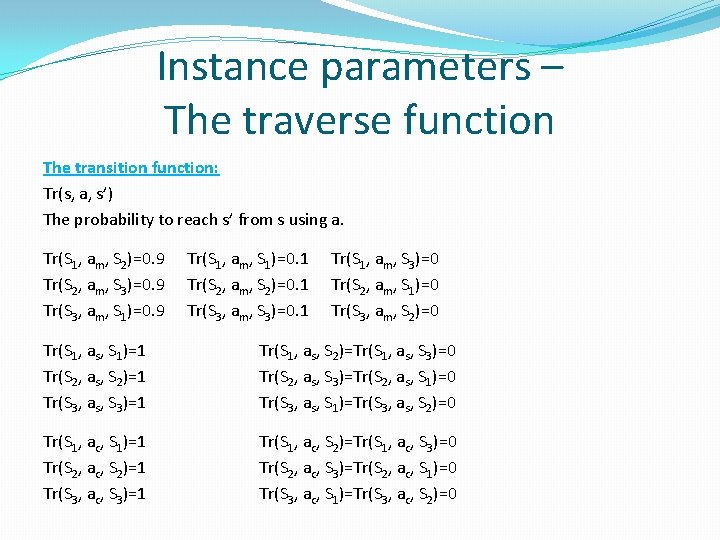

Instance parameters – The transition function

The transition function $Tr(s, a, s')$ is the probability of reaching $s'$ from $s$ using action $a$:
- Move ($a_m$): $Tr(S_1, a_m, S_2) = Tr(S_2, a_m, S_3) = Tr(S_3, a_m, S_1) = 0.9$; $Tr(S_1, a_m, S_1) = Tr(S_2, a_m, S_2) = Tr(S_3, a_m, S_3) = 0.1$; $Tr(S_1, a_m, S_3) = Tr(S_2, a_m, S_1) = Tr(S_3, a_m, S_2) = 0$.
- Stay put ($a_s$): $Tr(S_i, a_s, S_i) = 1$ and $Tr(S_i, a_s, S_j) = 0$ for $j \neq i$.
- Colour sensor ($a_c$): $Tr(S_i, a_c, S_i) = 1$ and $Tr(S_i, a_c, S_j) = 0$ for $j \neq i$.
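The transition function can be stored as one row-stochastic $3 \times 3$ matrix per action. The sketch below (names `TRANSITION` and `predict` are our own) checks that every row sums to 1 and computes the predicted state distribution $\sum_s b(s)\,Tr(s, a, s')$ before any observation arrives.

```python
# Hypothetical encoding of Tr(s, a, s') as one 3x3 matrix per action.
# Row = current state s, column = next state s', order S1, S2, S3.
TRANSITION = {
    "am": [[0.1, 0.9, 0.0],   # S1 -> S2 with 0.9, stays with 0.1
           [0.0, 0.1, 0.9],   # S2 -> S3 with 0.9, stays with 0.1
           [0.9, 0.0, 0.1]],  # S3 -> S1 with 0.9, stays with 0.1
    "as": [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]],  # staying never moves
    "ac": [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]],  # sensing never moves
}

# Every row must be a probability distribution over s'.
for action, matrix in TRANSITION.items():
    for row in matrix:
        assert abs(sum(row) - 1.0) < 1e-12, (action, row)


def predict(belief, action):
    """Distribution over s' before observing: sum_s b(s) * Tr(s, a, s')."""
    m = TRANSITION[action]
    return [sum(belief[s] * m[s][s2] for s in range(3)) for s2 in range(3)]


print(predict([1.0, 0.0, 0.0], "am"))  # [0.1, 0.9, 0.0]: certain of S1, then move
```

Because each column of the $a_m$ matrix also sums to 1, moving maps the uniform belief back to itself.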

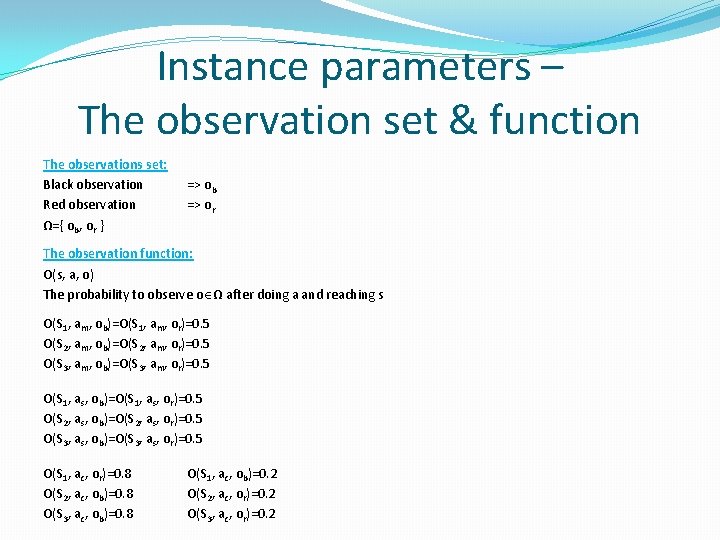

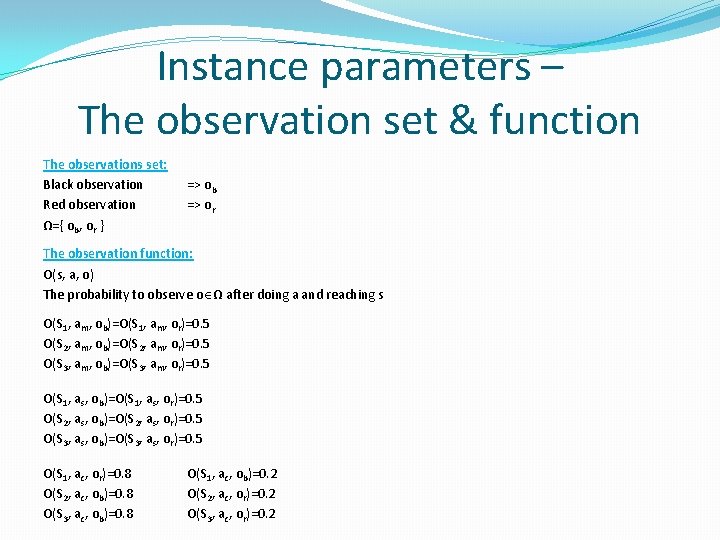

Instance parameters – The observation set & function

The observation set is $\Omega=\{o_b, o_r\}$, where $o_b$ is a black observation and $o_r$ a red one. The observation function $O(s, a, o)$ is the probability of observing $o \in \Omega$ after doing $a$ and reaching $s$:
- Move and stay put ($a \in \{a_m, a_s\}$): $O(S_i, a, o_b) = O(S_i, a, o_r) = 0.5$ for $i = 1, 2, 3$.
- Colour sensor ($a_c$): $O(S_1, a_c, o_r) = 0.8$ and $O(S_1, a_c, o_b) = 0.2$; $O(S_2, a_c, o_b) = O(S_3, a_c, o_b) = 0.8$ and $O(S_2, a_c, o_r) = O(S_3, a_c, o_r) = 0.2$.
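A small sketch of the observation function as per-state dictionaries (the names `OBSERVATION` and `is_informative` are hypothetical). It checks that each $O(s', a, \cdot)$ is a distribution and confirms that only $a_c$ yields observations that depend on the state, so only sensing can change the belief beyond the transition itself.

```python
# Hypothetical encoding of O(s', a, o); states ordered S1, S2, S3.
# "ob" = black observation, "or" = red observation, as on the slides.
COIN = {"ob": 0.5, "or": 0.5}  # shared (never mutated) uninformative distribution
OBSERVATION = {
    "am": [COIN, COIN, COIN],   # no sensor: a coin flip in every state
    "as": [COIN, COIN, COIN],
    "ac": [{"ob": 0.2, "or": 0.8},   # S1 reads red 80% of the time
           {"ob": 0.8, "or": 0.2},   # S2 reads black 80% of the time
           {"ob": 0.8, "or": 0.2}],  # S3 reads black 80% of the time
}

for action, rows in OBSERVATION.items():
    for dist in rows:
        assert abs(sum(dist.values()) - 1.0) < 1e-12, (action, dist)


def is_informative(action):
    """True if O(s', a, .) differs between at least two states."""
    rows = OBSERVATION[action]
    return any(row != rows[0] for row in rows)


print({a: is_informative(a) for a in OBSERVATION})
```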

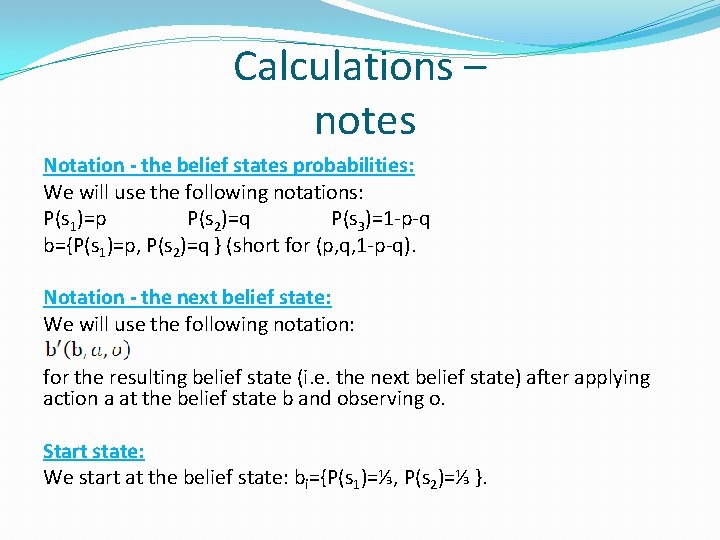

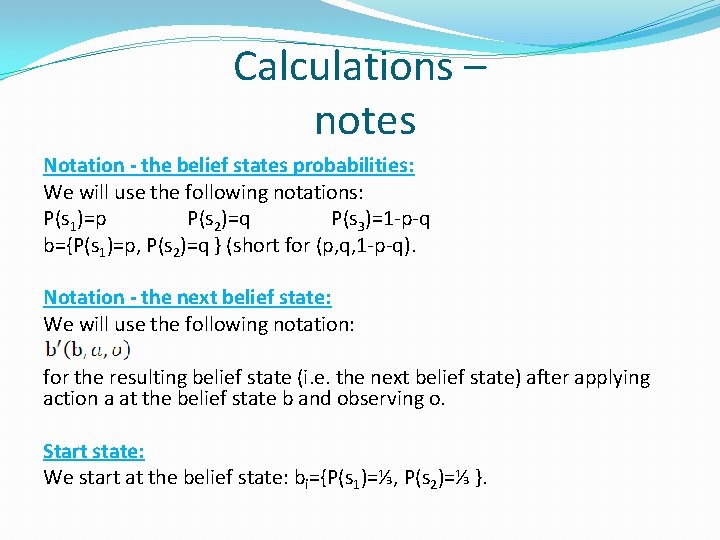

Calculations – notes

Notation for the belief state probabilities: $P(s_1)=p$, $P(s_2)=q$, $P(s_3)=1-p-q$. We write $b=\{P(s_1)=p, P(s_2)=q\}$ as short for $(p, q, 1-p-q)$.

Notation for the next belief state: we will use the following notation for the resulting belief state (i.e., the next belief state) after applying action $a$ at belief state $b$ and observing $o$.

Start state: we start at the belief state $b_i=\{P(s_1)=\tfrac13, P(s_2)=\tfrac13\}$.
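For reference, the next belief state is obtained with the standard Bayes-filter update. Here we write it as $b'$ (our own shorthand, not necessarily the slides' symbol), for action $a$ taken at belief $b$ followed by observation $o$:

```latex
P(o \mid a, b) = \sum_{s' \in S} O(s', a, o) \sum_{s \in S} Tr(s, a, s')\, b(s)

b'(s') = \frac{O(s', a, o) \sum_{s \in S} Tr(s, a, s')\, b(s)}{P(o \mid a, b)}
```

For example, $a_c$ does not move the robot, so at $b_i$ the predicted distribution stays uniform and $P(o_b \mid a_c, b_i) = \tfrac13(0.2 + 0.8 + 0.8) = 0.6$.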

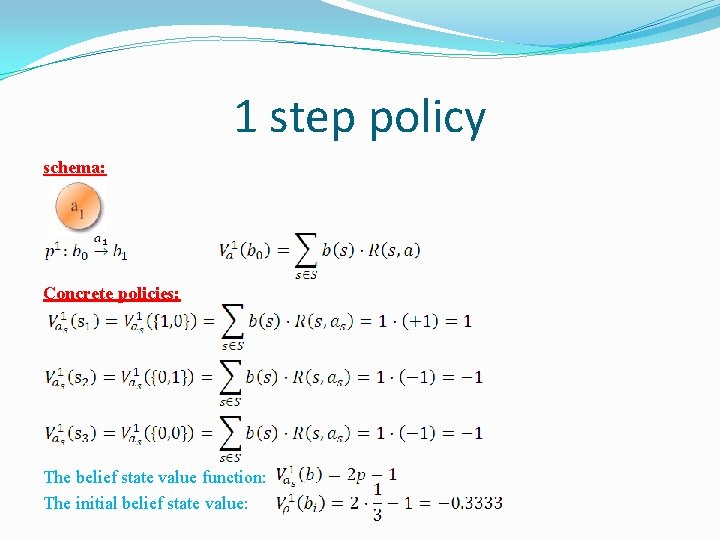

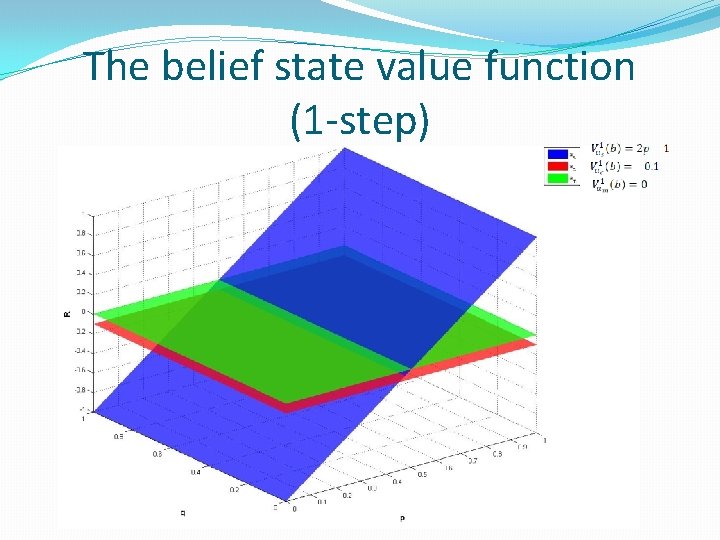

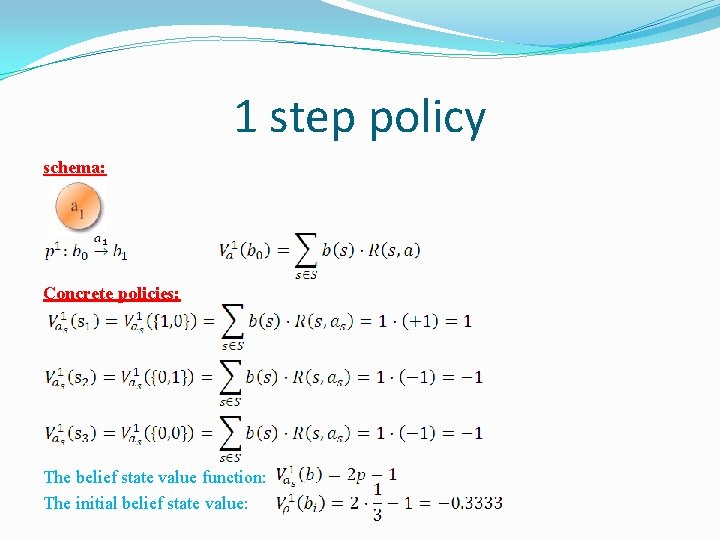

1-step policy: schema, concrete policies, the belief state value function, and the initial belief state value

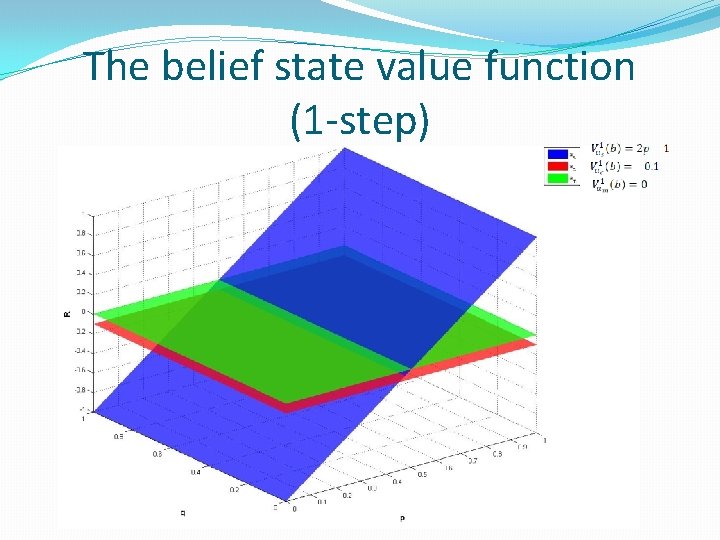

The belief state value function (1-step)
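A 1-step plan picks the action with the best belief-averaged reward, $V_1(b) = \max_a \sum_s b(s) R(s, a)$. With the reward table above this is $\max(0,\ 2p - 1,\ -0.1) = \max(0,\ 2p - 1)$: stay put when $p > 0.5$, move otherwise, and never use the sensor. The Python sketch below evaluates that rule; `best_one_step` and `belief` are hypothetical helper names.

```python
# Hypothetical sketch of the 1-step belief state value V1(b) = max_a R(b, a).
REWARD = {
    "am": [0.0, 0.0, 0.0],
    "as": [1.0, -1.0, -1.0],
    "ac": [-0.1, -0.1, -0.1],
}


def best_one_step(belief):
    """Return (value, action) of the best single action at this belief."""
    scores = {a: sum(p * r for p, r in zip(belief, rs)) for a, rs in REWARD.items()}
    action = max(scores, key=scores.get)  # ties go to the first action listed
    return scores[action], action


def belief(p, q):
    """Expand the slide's short form b = (p, q) into (p, q, 1 - p - q)."""
    return [p, q, 1 - p - q]


print(best_one_step(belief(1 / 3, 1 / 3)))  # the start belief b_i
print(best_one_step(belief(0.8, 0.1)))
```

At $b_i$ the best single action is $a_m$ with value 0.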

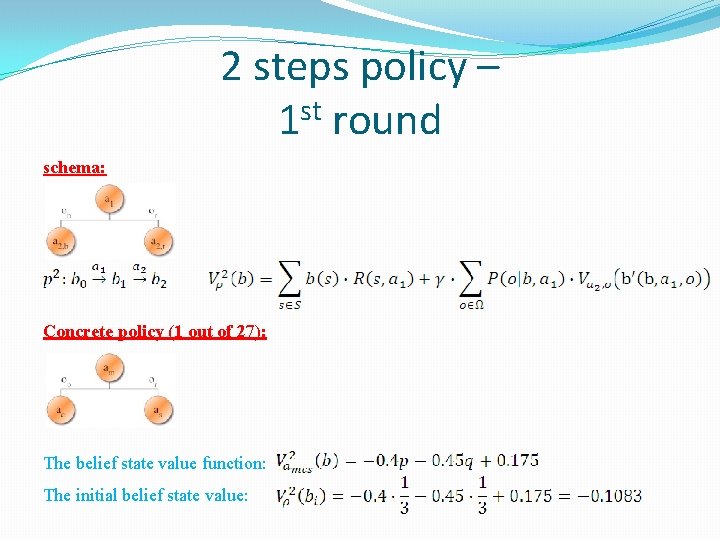

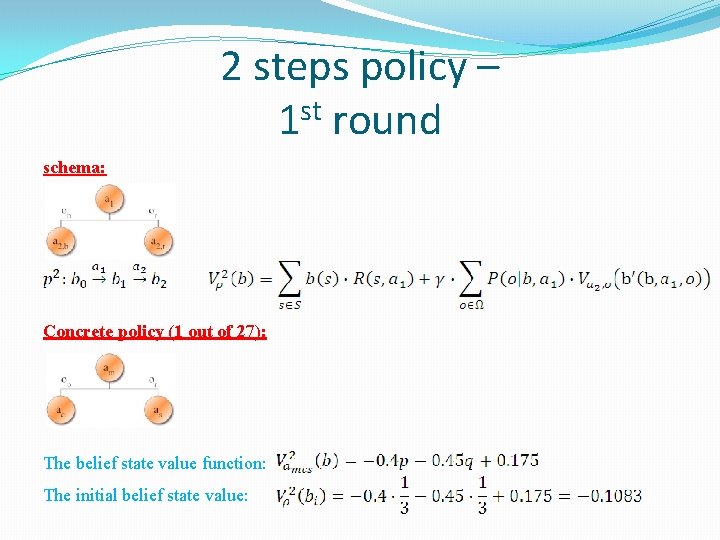

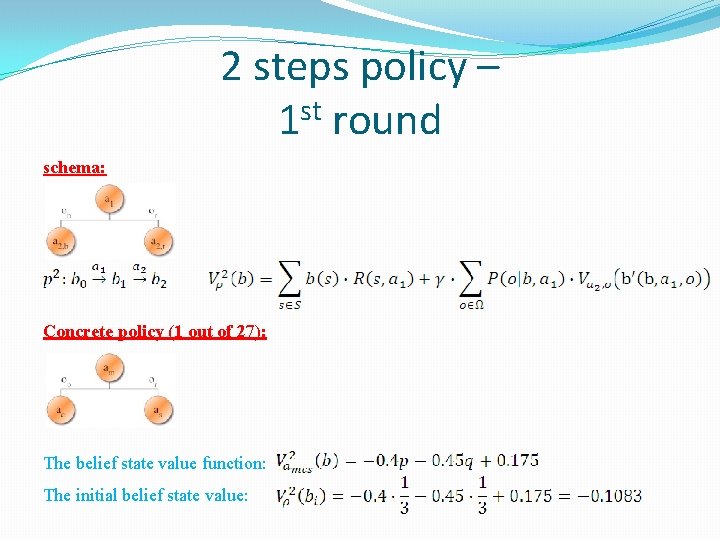

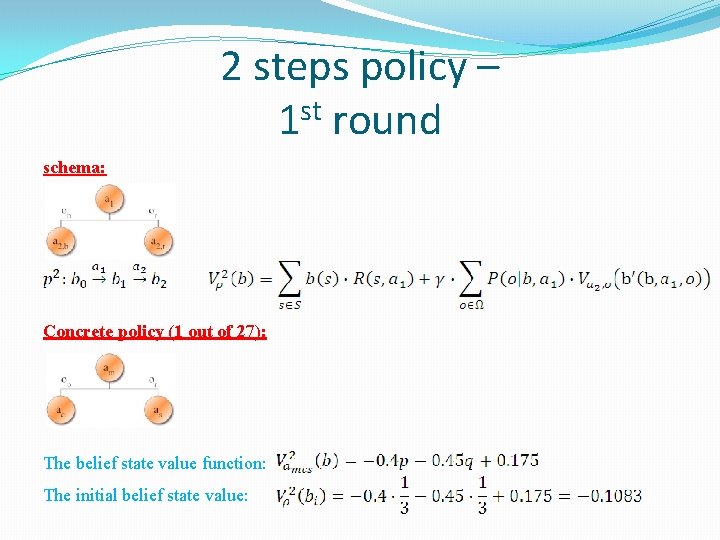

2-step policy – 1st round: schema, a concrete policy (1 out of $3 \cdot 3^2 = 27$: a first action, then a second action for each of the two observations), the belief state value function, and the initial belief state value
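The 27 two-step policies can be enumerated and scored mechanically. This is a sketch under our own encoding (all names are hypothetical): a policy is a first action plus a map from observation to second action, and its value is $R(b, a_1) + \gamma \sum_o P(o \mid a_1, b)\, R(b', \sigma(o))$, with $b'$ from the Bayes-filter update.

```python
from itertools import product

# Hypothetical full encoding of the example (states S1, S2, S3 -> indices 0, 1, 2).
ACTIONS = ("am", "as", "ac")
OBS = ("ob", "or")
GAMMA = 0.5
REWARD = {"am": [0.0, 0.0, 0.0], "as": [1.0, -1.0, -1.0], "ac": [-0.1, -0.1, -0.1]}
STAY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
TRANSITION = {
    "am": [[0.1, 0.9, 0.0], [0.0, 0.1, 0.9], [0.9, 0.0, 0.1]],
    "as": STAY,
    "ac": STAY,
}
COIN = {"ob": 0.5, "or": 0.5}  # uninformative observation, shared read-only
OBSERVATION = {
    "am": [COIN, COIN, COIN],
    "as": [COIN, COIN, COIN],
    "ac": [{"ob": 0.2, "or": 0.8}, {"ob": 0.8, "or": 0.2}, {"ob": 0.8, "or": 0.2}],
}


def reward(b, a):
    """Belief-averaged immediate reward R(b, a)."""
    return sum(p * r for p, r in zip(b, REWARD[a]))


def update(b, a, o):
    """Return (P(o | a, b), next belief); the belief is None if P(o | a, b) = 0."""
    pred = [sum(b[s] * TRANSITION[a][s][s2] for s in range(3)) for s2 in range(3)]
    joint = [OBSERVATION[a][s2][o] * pred[s2] for s2 in range(3)]
    prob = sum(joint)
    return prob, ([x / prob for x in joint] if prob > 0 else None)


def two_step_value(b, first, follow_up):
    """Value of: do `first`, then do follow_up[o] after observing o."""
    total = reward(b, first)
    for o in OBS:
        prob, nxt = update(b, first, o)
        if prob > 0:
            total += GAMMA * prob * reward(nxt, follow_up[o])
    return total


# First action x (second action after ob) x (second action after or) = 27 policies.
policies = [(a1, {"ob": x, "or": y}) for a1, x, y in product(ACTIONS, ACTIONS, ACTIONS)]
print(len(policies))

b_i = [1 / 3, 1 / 3, 1 / 3]
best = max(policies, key=lambda pol: two_step_value(b_i, *pol))
print(best, round(two_step_value(b_i, *best), 4))
```

Under these assumptions the best 2-step plan from $b_i$ is to move at both steps (value 0, since $R(s, a_m) = 0$ everywhere), while sensing first and then staying put only after a red reading is worth $-0.1 + 0.5 \cdot 0.4 \cdot \tfrac13 = -\tfrac{1}{30}$.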

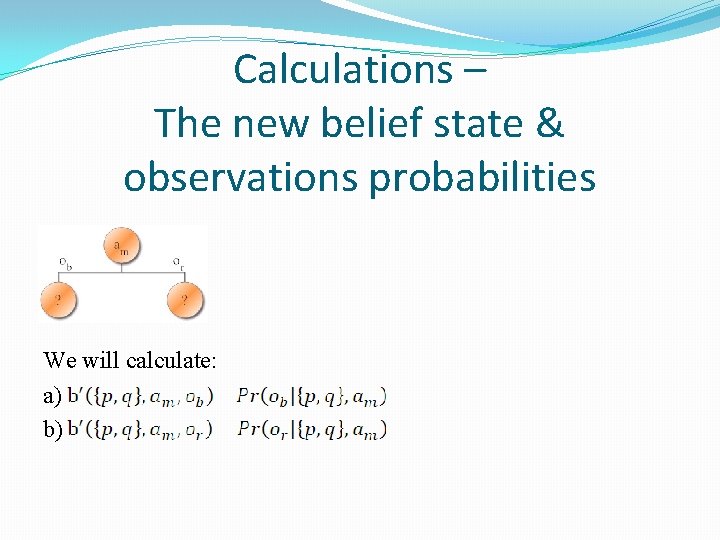

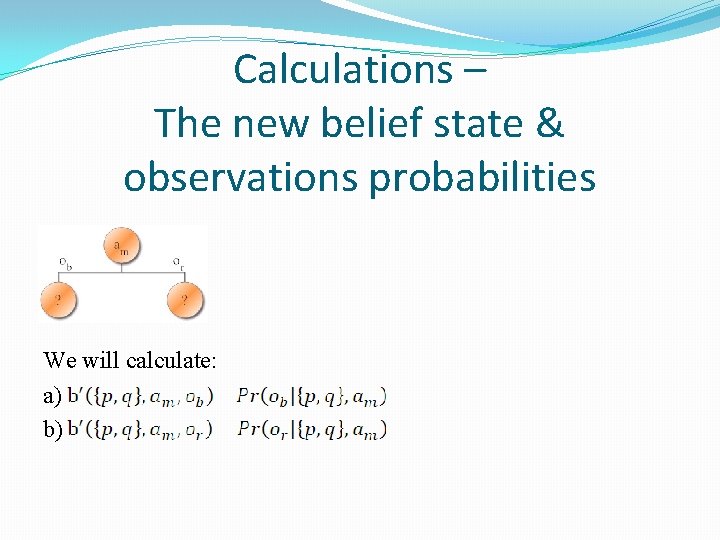

Calculations – The new belief state & observation probabilities

We will calculate: a) the new belief state; b) the probability of each observation.
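The two quantities on this slide, the observation probabilities and the next belief, can be computed for every action from the start belief $b_i$. A minimal sketch with hypothetical names (`observe`, `TRANSITION`, `OBSERVATION`), using Bayes' rule:

```python
# Hypothetical sketch: observation probabilities and next beliefs from b_i.
STAY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
TRANSITION = {
    "am": [[0.1, 0.9, 0.0], [0.0, 0.1, 0.9], [0.9, 0.0, 0.1]],
    "as": STAY,
    "ac": STAY,
}
COIN = {"ob": 0.5, "or": 0.5}
OBSERVATION = {
    "am": [COIN, COIN, COIN],
    "as": [COIN, COIN, COIN],
    "ac": [{"ob": 0.2, "or": 0.8}, {"ob": 0.8, "or": 0.2}, {"ob": 0.8, "or": 0.2}],
}


def observe(belief, action, obs):
    """Return (P(obs | action, belief), next belief); assumes P(obs) > 0."""
    predicted = [sum(belief[s] * TRANSITION[action][s][t] for s in range(3)) for t in range(3)]
    joint = [OBSERVATION[action][t][obs] * predicted[t] for t in range(3)]
    prob = sum(joint)
    return prob, [x / prob for x in joint]


b_i = [1 / 3, 1 / 3, 1 / 3]
for action in ("am", "as", "ac"):
    for obs in ("ob", "or"):
        prob, nxt = observe(b_i, action, obs)
        print(action, obs, round(prob, 4), [round(x, 4) for x in nxt])
```

Only $a_c$ changes the belief: from $b_i$ it gives $P(o_b) = 0.6$ with $b' = (\tfrac19, \tfrac49, \tfrac49)$ and $P(o_r) = 0.4$ with $b' = (\tfrac23, \tfrac16, \tfrac16)$, whereas $a_m$ and $a_s$ give each observation probability 0.5 and leave the belief uniform.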

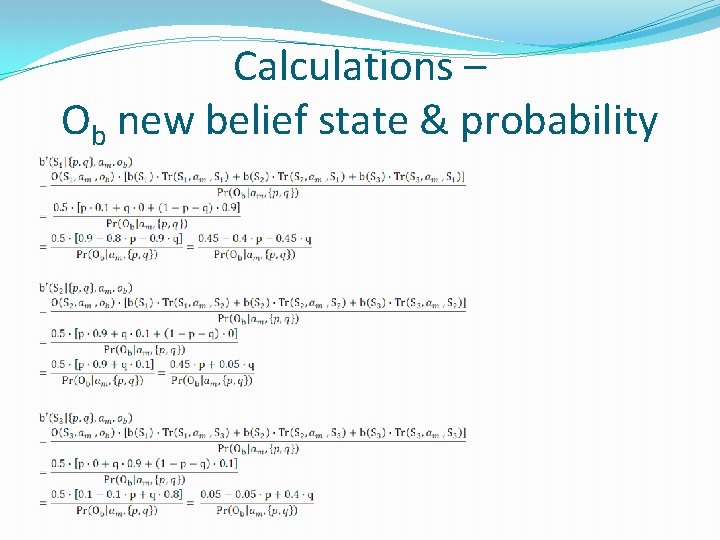

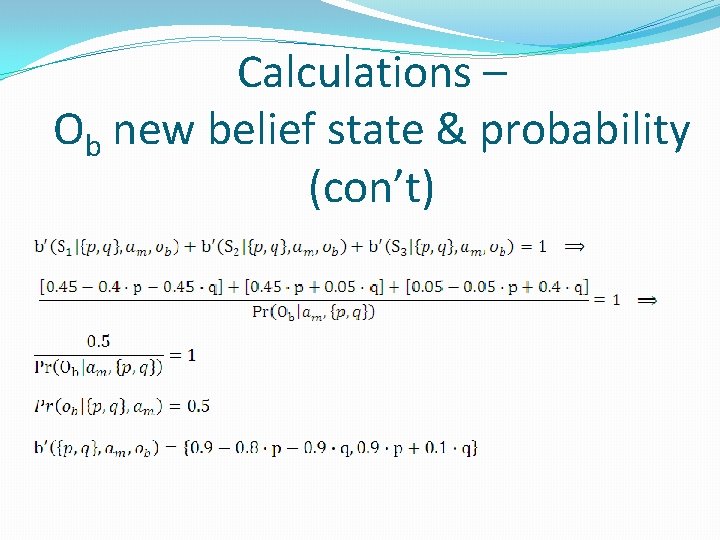

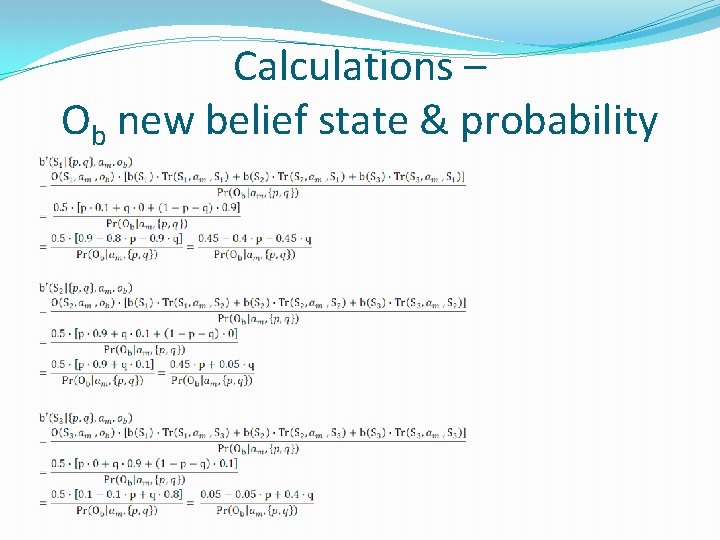

Calculations – $o_b$: new belief state & probability

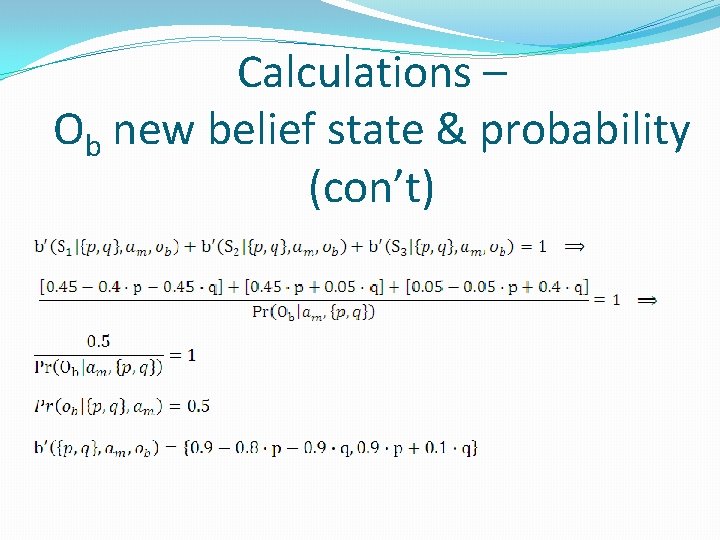

Calculations – $o_b$: new belief state & probability (cont.)
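Substituting the instance's $Tr$ and $O$ into Bayes' rule gives closed forms for observation $o_b$ in terms of $b = (p, q)$. This is our own derivation rather than a copy of the slide images; $p'$ and $q'$ denote the first two components of the next belief:

```latex
\begin{aligned}
a_m &: \quad P(o_b \mid a_m, b) = 0.5, \quad p' = 0.1p + 0.9(1-p-q), \quad q' = 0.9p + 0.1q \\
a_s &: \quad P(o_b \mid a_s, b) = 0.5, \quad p' = p, \quad q' = q \\
a_c &: \quad P(o_b \mid a_c, b) = 0.2p + 0.8q + 0.8(1-p-q) = 0.8 - 0.6p, \quad
      p' = \frac{0.2p}{0.8 - 0.6p}, \quad q' = \frac{0.8q}{0.8 - 0.6p}
\end{aligned}
```

At $p = q = \tfrac13$ the $a_c$ row gives $P(o_b) = 0.6$ and $b' = (\tfrac19, \tfrac49)$.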

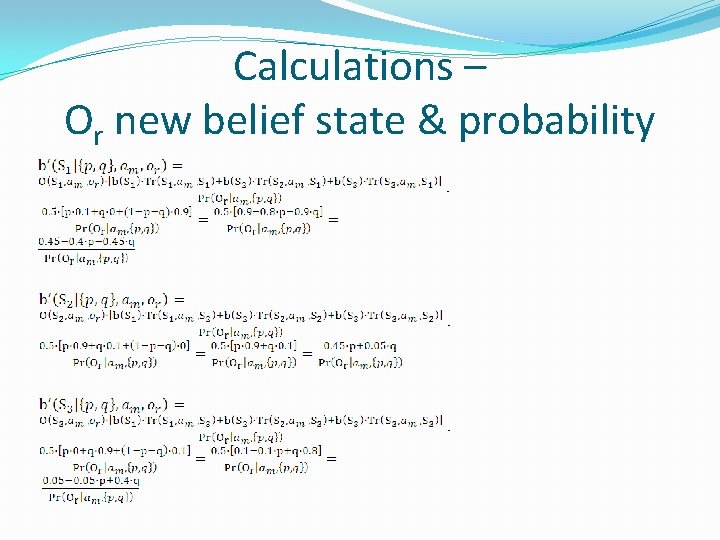

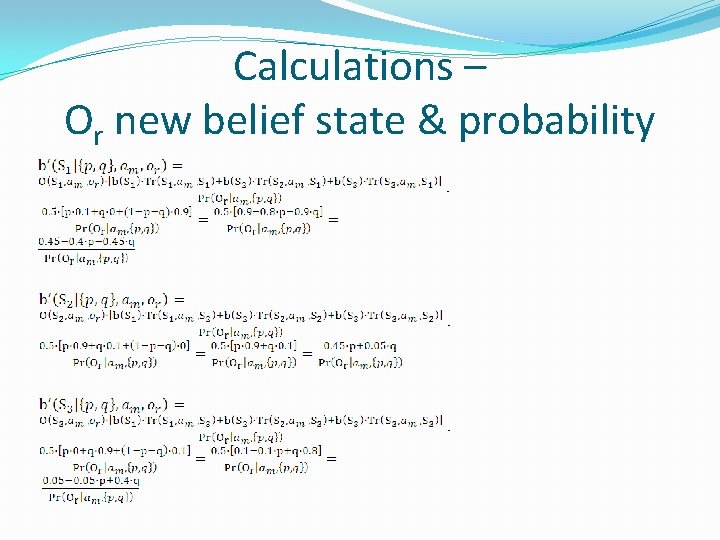

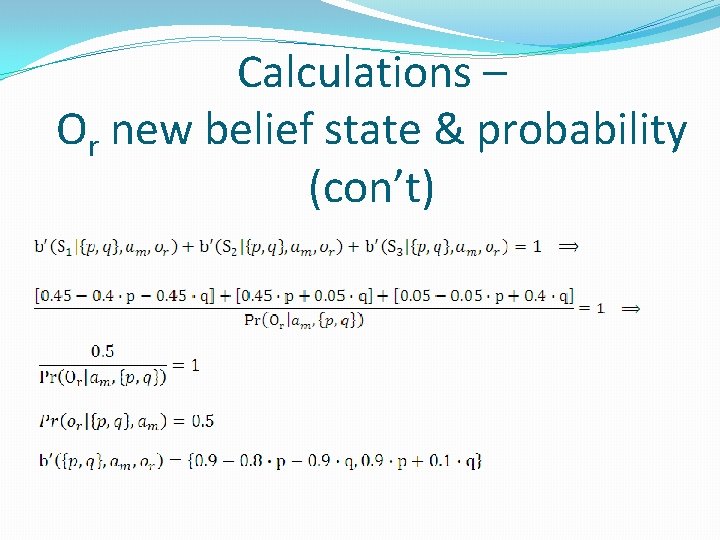

Calculations – $o_r$: new belief state & probability

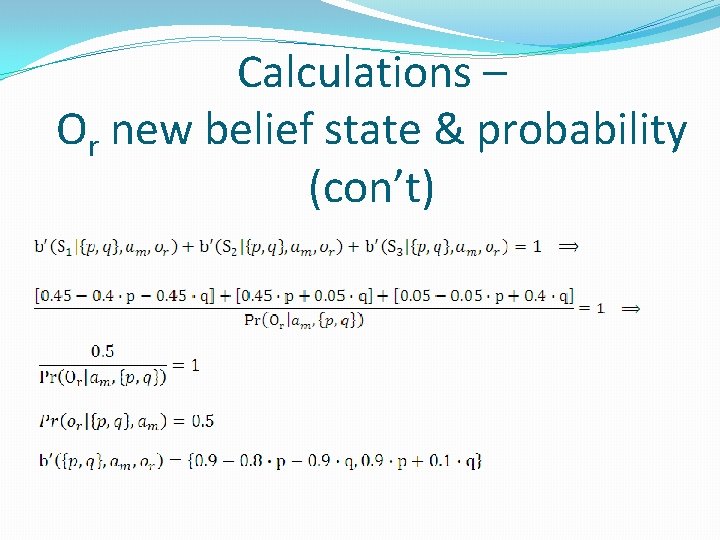

Calculations – $o_r$: new belief state & probability (cont.)
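The same substitution for observation $o_r$ (again our own derivation, with $p'$, $q'$ the first two components of the next belief):

```latex
\begin{aligned}
a_m &: \quad P(o_r \mid a_m, b) = 0.5, \quad p' = 0.1p + 0.9(1-p-q), \quad q' = 0.9p + 0.1q \\
a_s &: \quad P(o_r \mid a_s, b) = 0.5, \quad p' = p, \quad q' = q \\
a_c &: \quad P(o_r \mid a_c, b) = 0.8p + 0.2q + 0.2(1-p-q) = 0.2 + 0.6p, \quad
      p' = \frac{0.8p}{0.2 + 0.6p}, \quad q' = \frac{0.2q}{0.2 + 0.6p}
\end{aligned}
```

At $p = q = \tfrac13$ this gives $P(o_r) = 0.4$ and $b' = (\tfrac23, \tfrac16)$; for $a_c$ the two observation probabilities $0.8 - 0.6p$ and $0.2 + 0.6p$ sum to 1, as they must.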

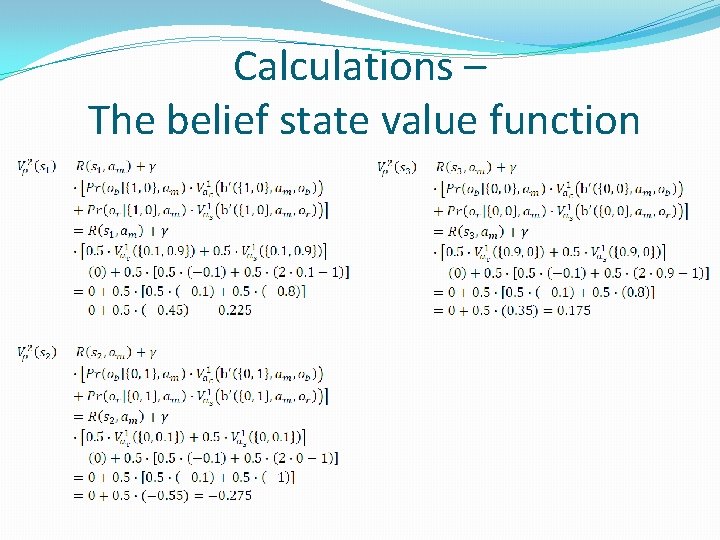

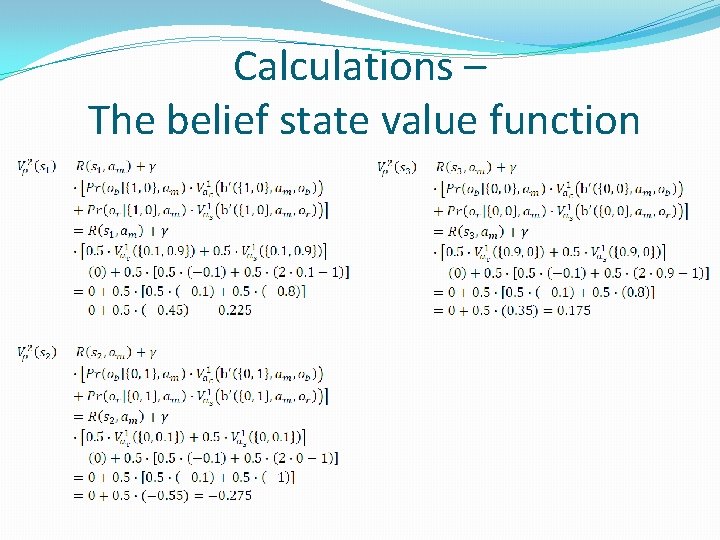

Calculations – The belief state value function


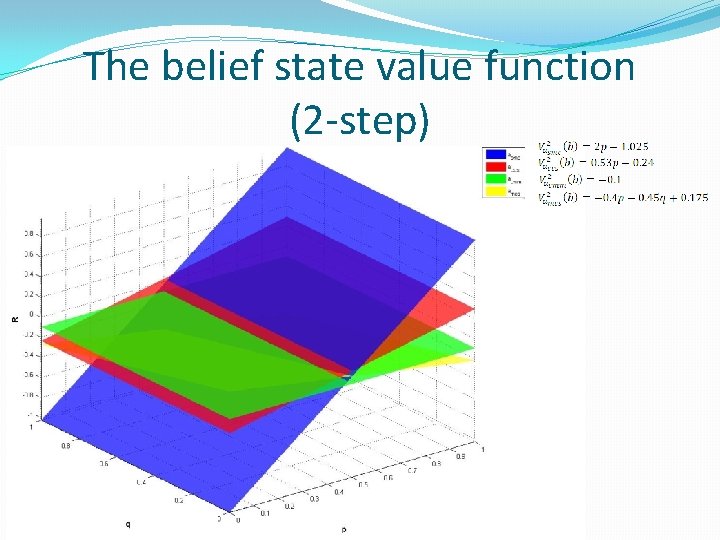

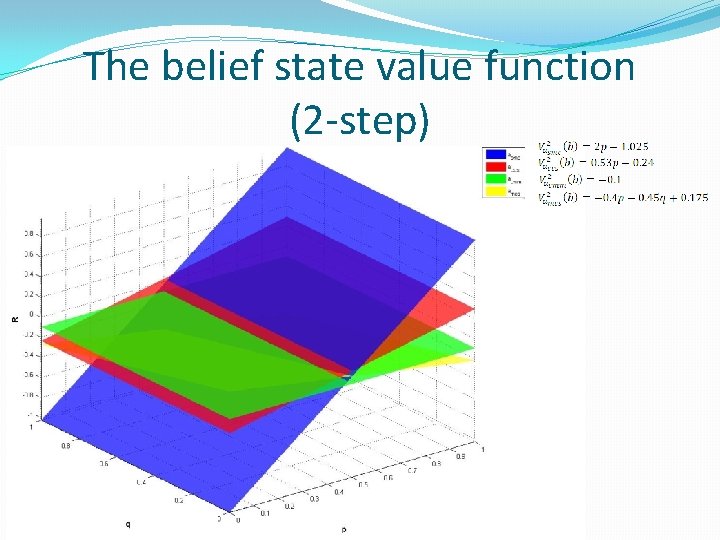

The belief state value function (2-step)
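As an illustration (the concrete policy on the slides is in the images, so this choice is ours), take the policy that first activates the sensor, then moves after $o_b$ and stays put after $o_r$. Writing $b'_{o}$ for the belief after $a_c$ and observation $o$, and using $R(b, a_m) = 0$ and $R(b, a_s) = 2p - 1$, its 2-step value as a function of $b = (p, q)$ is:

```latex
\begin{aligned}
V(b) &= R(b, a_c) + \gamma \left[ P(o_b \mid a_c, b)\, R(b'_{o_b}, a_m) + P(o_r \mid a_c, b)\, R(b'_{o_r}, a_s) \right] \\
     &= -0.1 + 0.5 \left[ 0 + (0.2 + 0.6p) \left( 2 \cdot \frac{0.8p}{0.2 + 0.6p} - 1 \right) \right] \\
     &= -0.1 + 0.5\,(p - 0.2) = 0.5p - 0.2
\end{aligned}
```

At the start belief ($p = \tfrac13$) this policy is worth $-\tfrac{1}{30}$, and its value is positive only when $p > 0.4$.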