Performance metrics

- Slides: 42

Performance metrics

- What is a performance metric?
- Characteristics of good metrics
- Standard processor and system metrics
- Speedup and relative change

Copyright 2004 David J. Lilja

What is a performance metric?

- Count
  - Of how many times an event occurs
- Duration
  - Of a time interval
- Size
  - Of some parameter
- A value derived from these fundamental measurements

Time-normalized metrics

- “Rate” metrics
- Normalize metric to common time basis
  - Transactions per second
  - Bytes per second
- (Number of events) ÷ (time interval over which events occurred)
- “Throughput”
- Useful for comparing measurements over different time intervals
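As a small illustration of the normalization above, the sketch below converts event counts observed over different intervals into events per second so they can be compared. The function name `rate` and the sample numbers are hypothetical, chosen only for illustration.

```python
def rate(event_count: float, interval_seconds: float) -> float:
    """Return events per second: (number of events) / (time interval)."""
    if interval_seconds <= 0:
        raise ValueError("interval must be positive")
    return event_count / interval_seconds


# Hypothetical measurements taken over different time intervals.
run_a = rate(event_count=12_000, interval_seconds=60)  # counted over 1 minute
run_b = rate(event_count=3_500, interval_seconds=10)   # counted over 10 seconds

# Once normalized to a common time basis, the two runs are directly comparable.
print(f"run A: {run_a:.1f} transactions/s")
print(f"run B: {run_b:.1f} transactions/s")
```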

What makes a “good” metric?

- Allows accurate and detailed comparisons
- Leads to correct conclusions
- Is well understood by everyone
- Has a quantitative basis
- A good metric helps avoid erroneous conclusions

Good metrics are …

- Linear
  - Fits with our intuition
  - If metric increases 2×, performance should increase 2×
  - Not an absolute requirement, but very appealing
    - The dB scale used to measure sound is nonlinear

Good metrics are …

- Reliable
  - If metric A > metric B
    - Then, Performance A > Performance B
  - Seems obvious!
  - However,
    - MIPS(A) > MIPS(B), but
    - Execution time (A) > Execution time (B)
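The unreliability noted above can be shown with a minimal sketch. The two systems and all of their numbers are hypothetical: both run the same program, but it compiles to a different number of instructions on each.

```python
def mips(instruction_count: int, exec_time_s: float) -> float:
    """MIPS = n / (Te * 1,000,000)."""
    return instruction_count / (exec_time_s * 1_000_000)


# Hypothetical measurements of the same program on two systems.
# System A executes many simple instructions; System B executes fewer.
time_a, time_b = 3.0, 2.0  # execution times in seconds
mips_a = mips(instruction_count=900_000_000, exec_time_s=time_a)
mips_b = mips(instruction_count=400_000_000, exec_time_s=time_b)

print(f"System A: {mips_a:.0f} MIPS, {time_a} s")
print(f"System B: {mips_b:.0f} MIPS, {time_b} s")

# A has the higher MIPS rating, yet it takes longer to finish the program.
print("MIPS(A) > MIPS(B):", mips_a > mips_b)
print("Time(A) > Time(B):", time_a > time_b)
```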

Good metrics are …

- Repeatable
  - Same value is measured each time an experiment is performed
  - Must be deterministic
  - Seems obvious, but not always true…
    - E.g. time-sharing changes measured execution time

Good metrics are …

- Easy to use
  - No one will use it if it is hard to measure
  - Hard to measure/derive
    - → less likely to be measured correctly
  - A wrong value is worse than a bad metric!

Good metrics are …

- Consistent
  - Units and definition are constant across systems
  - Seems obvious, but often not true…
    - E.g. MIPS, MFLOPS
  - Inconsistent → impossible to make comparisons

Good metrics are …

- Independent
  - A lot of $$$ riding on performance results
  - Pressure on manufacturers to optimize for a particular metric
  - Pressure to influence definition of a metric
  - But a good metric is independent of this pressure

Good metrics are …

- Linear: nice, but not necessary
- Reliable: required
- Repeatable: required
- Easy to use: nice, but not necessary
- Consistent: required
- Independent: required

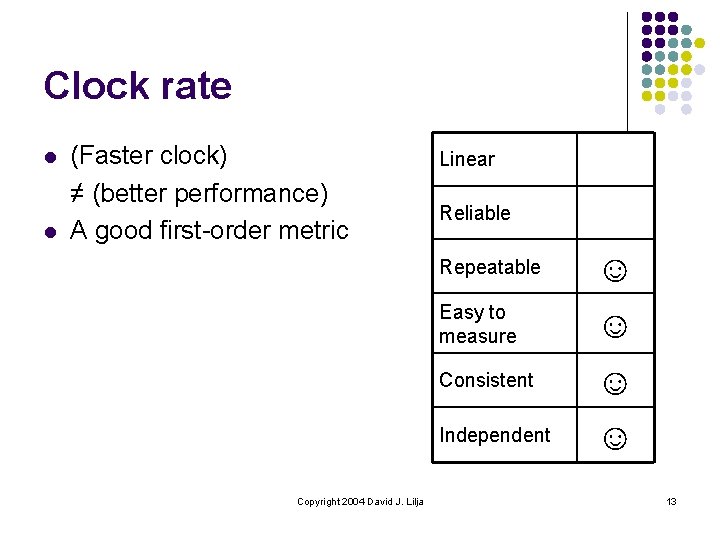

Clock rate

- Faster clock == higher performance
  - By this reasoning, a 2 GHz processor is always better than a 1 GHz processor
- But is it a proportional increase?
- What about architectural differences?
  - Actual operations performed per cycle
  - Clocks per instruction (CPI)
  - Penalty on branches due to pipeline depth
- What if the processor is not the bottleneck?
  - Memory and I/O delays

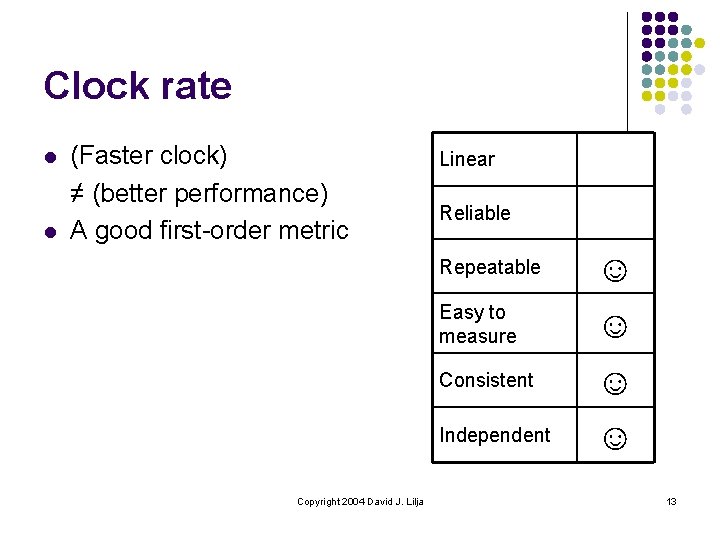

Clock rate

- (Faster clock) ≠ (better performance)
- A good first-order metric

| Linear | Reliable | Repeatable | Easy to measure | Consistent | Independent |
|---|---|---|---|---|---|
| | | ☺ | ☺ | ☺ | ☺ |

MIPS

- Measure of computation “speed”
- Millions of instructions executed per second
- $\text{MIPS} = n / (T_e \times 10^6)$
  - $n$ = number of instructions
  - $T_e$ = execution time
- Physical analog
  - Distance traveled per unit time

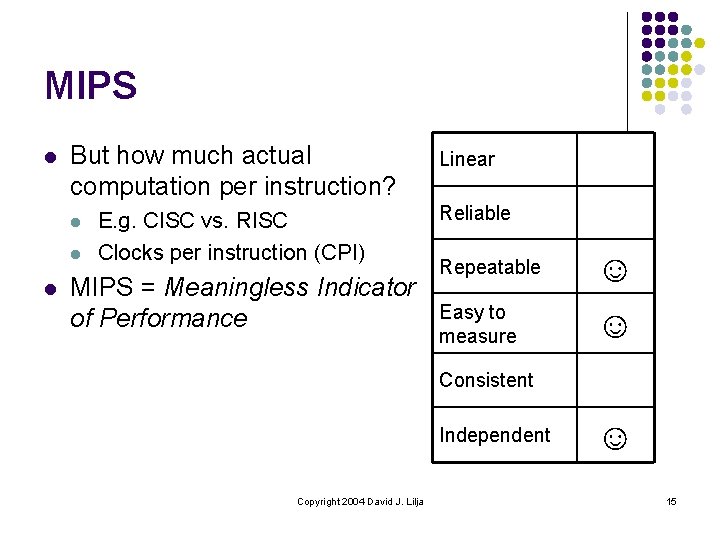

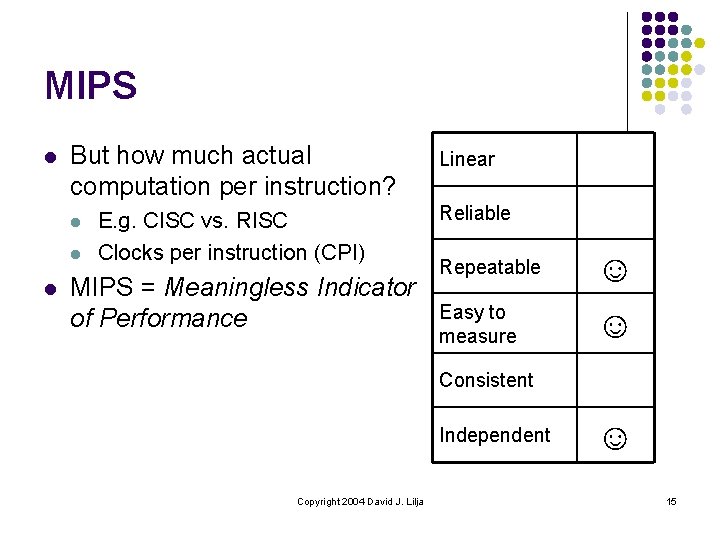

MIPS

- But how much actual computation per instruction?
  - E.g. CISC vs. RISC
  - Clocks per instruction (CPI)
- MIPS = Meaningless Indicator of Performance

| Linear | Reliable | Repeatable | Easy to measure | Consistent | Independent |
|---|---|---|---|---|---|
| | | ☺ | ☺ | | ☺ |
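One way to see why CPI matters is to rewrite MIPS in terms of clock rate. The symbol $f_{clk}$ (clock rate in Hz) is introduced here for illustration and does not appear on the slides. The derivation assumes execution time is instruction count times average CPI divided by clock rate:

$$
T_e = \frac{n \times \text{CPI}}{f_{clk}}
\quad\Longrightarrow\quad
\text{MIPS} = \frac{n}{T_e \times 10^6} = \frac{f_{clk}}{\text{CPI} \times 10^6}
$$

Under that assumption the instruction count cancels. MIPS depends only on clock rate and average CPI, so it carries no information about how much work each instruction performs.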

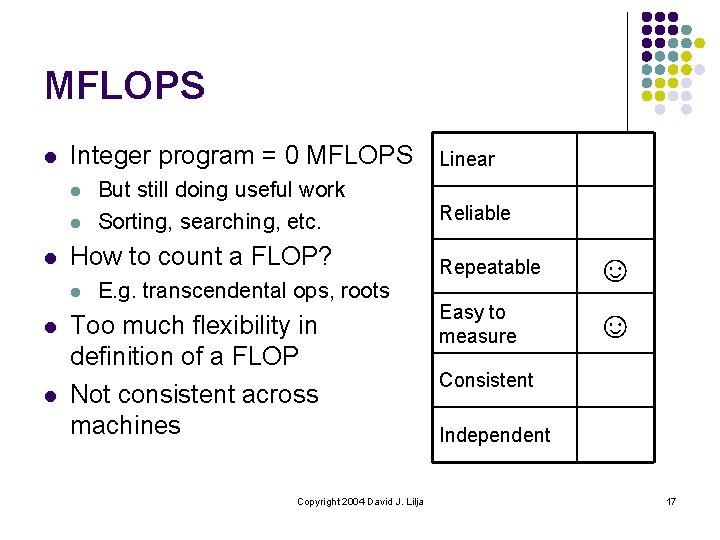

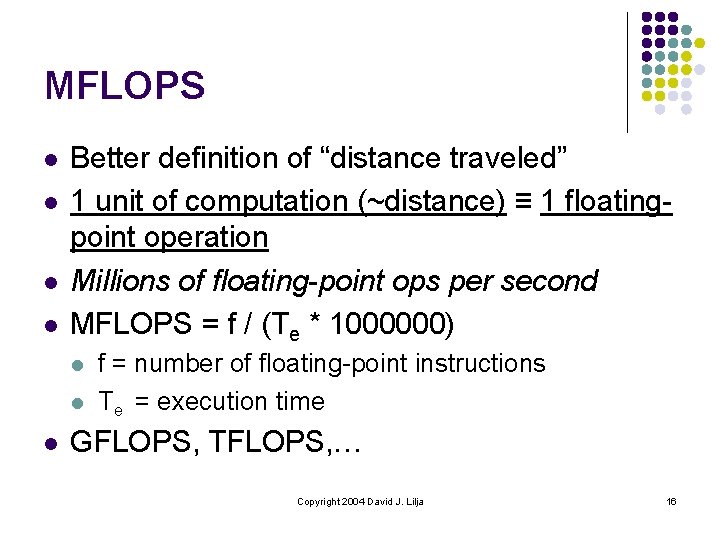

MFLOPS

- Better definition of “distance traveled”
- 1 unit of computation (~distance) ≡ 1 floating-point operation
- Millions of floating-point ops per second
- $\text{MFLOPS} = f / (T_e \times 10^6)$
  - $f$ = number of floating-point instructions
  - $T_e$ = execution time
- GFLOPS, TFLOPS, …

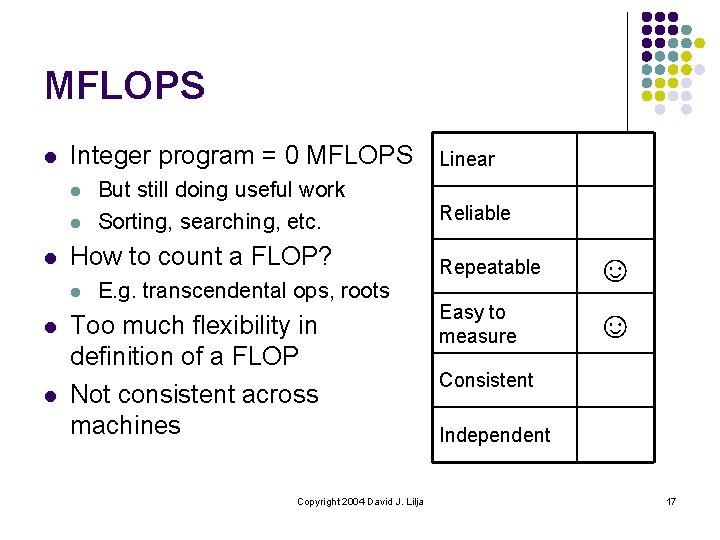

MFLOPS

- Integer program = 0 MFLOPS
  - But still doing useful work
  - Sorting, searching, etc.
- How to count a FLOP?
  - E.g. transcendental ops, roots
- Too much flexibility in definition of a FLOP
- Not consistent across machines

| Linear | Reliable | Repeatable | Easy to measure | Consistent | Independent |
|---|---|---|---|---|---|
| | | ☺ | ☺ | | |
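The counting ambiguity can be sketched as follows. The operation mix, the execution time, and especially the per-operation weights are hypothetical. They stand in for two vendors counting the same run differently and are not taken from any real benchmark rule.

```python
def mflops(flop_count: float, exec_time_s: float) -> float:
    """MFLOPS = f / (Te * 1,000,000)."""
    return flop_count / (exec_time_s * 1_000_000)


# Hypothetical operation mix for one program run.
op_counts = {"add": 40_000_000, "mul": 40_000_000, "sqrt": 5_000_000, "sin": 5_000_000}
exec_time_s = 0.5

# Convention 1: every floating-point instruction counts as one FLOP.
unit_weights = {op: 1 for op in op_counts}
# Convention 2 (assumed weights): roots and transcendentals count as several FLOPs.
scaled_weights = {"add": 1, "mul": 1, "sqrt": 4, "sin": 8}


def count_flops(weights: dict) -> int:
    """Total FLOPs under a given counting convention."""
    return sum(op_counts[op] * weights[op] for op in op_counts)


mflops_unit = mflops(count_flops(unit_weights), exec_time_s)
mflops_scaled = mflops(count_flops(scaled_weights), exec_time_s)

# Same program, same execution time, two different MFLOPS ratings.
print(f"one FLOP per instruction: {mflops_unit:.0f} MFLOPS")
print(f"weighted counting:        {mflops_scaled:.0f} MFLOPS")
```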

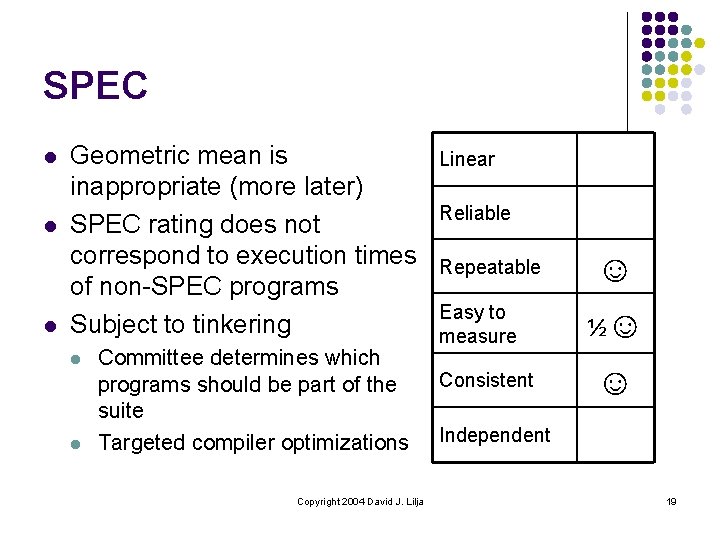

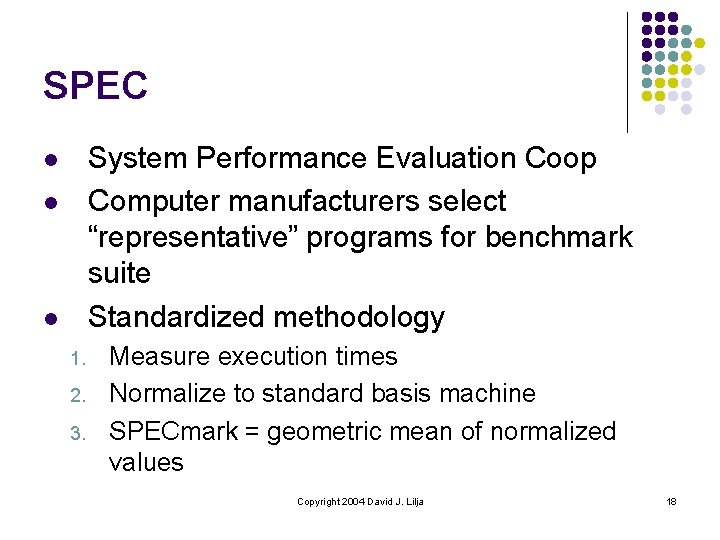

SPEC

- System Performance Evaluation Cooperative
- Computer manufacturers select “representative” programs for benchmark suite
- Standardized methodology
  1. Measure execution times
  2. Normalize to standard basis machine
  3. SPECmark = geometric mean of normalized values
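The three steps can be sketched as below. This is an illustration of the arithmetic only, not SPEC's actual tooling. The program names and times are hypothetical, and the normalization direction (reference time divided by measured time, so larger is better) is an assumption.

```python
import math


def geometric_mean(values):
    """Geometric mean of positive numbers, computed via logarithms."""
    values = list(values)
    if not values or any(v <= 0 for v in values):
        raise ValueError("geometric mean needs at least one positive value")
    return math.exp(sum(math.log(v) for v in values) / len(values))


def spec_style_rating(measured_times: dict, reference_times: dict) -> float:
    """Normalize each time to the basis machine, then take the geometric mean."""
    ratios = [reference_times[name] / measured_times[name] for name in measured_times]
    return geometric_mean(ratios)


# Step 1: hypothetical measured execution times (seconds) on the system under test.
measured = {"prog_a": 50.0, "prog_b": 50.0, "prog_c": 50.0}
# Step 2: hypothetical times for the same programs on the basis machine.
reference = {"prog_a": 100.0, "prog_b": 200.0, "prog_c": 400.0}

# Step 3: a single rating from the normalized values (ratios 2, 4 and 8 here).
rating = spec_style_rating(measured, reference)
print(f"rating: {rating:.2f}")
```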

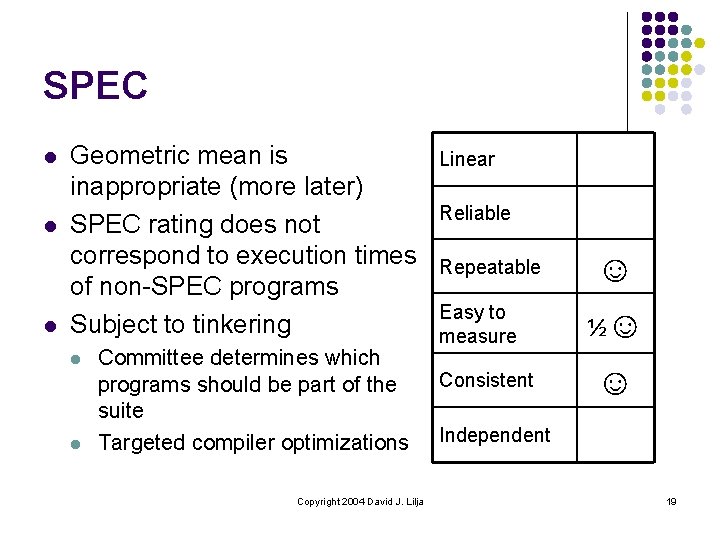

SPEC

- Geometric mean is inappropriate (more later)
- SPEC rating does not correspond to execution times of non-SPEC programs
- Subject to tinkering
  - Committee determines which programs should be part of the suite
  - Targeted compiler optimizations

| Linear | Reliable | Repeatable | Easy to measure | Consistent | Independent |
|---|---|---|---|---|---|
| | | ☺ | ½☺ | ☺ | |

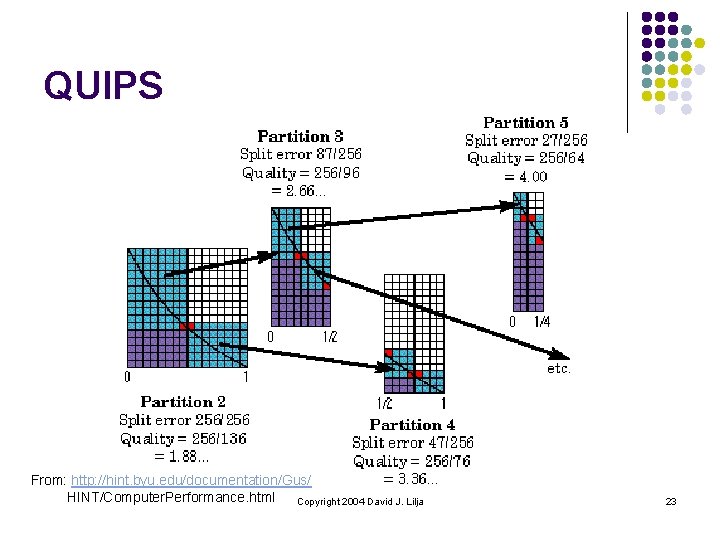

QUIPS

- Developed as part of HINT benchmark
- Instead of effort expended, use quality of solution
- Quality has rigorous mathematical definition
- QUIPS = Quality Improvements Per Second

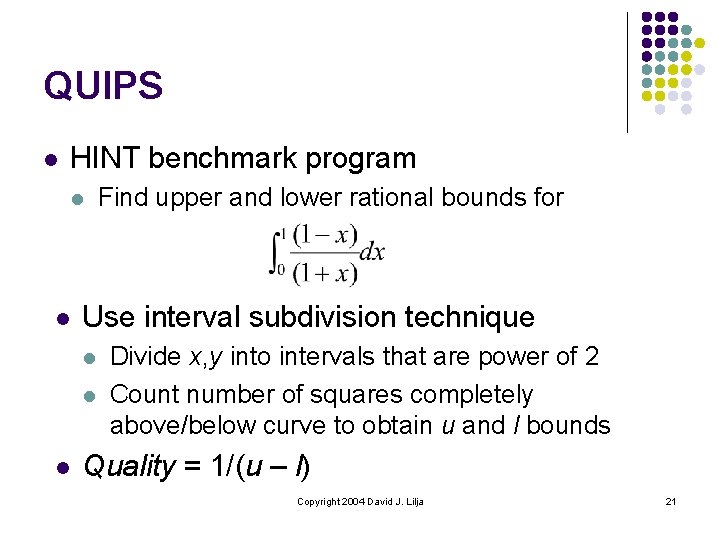

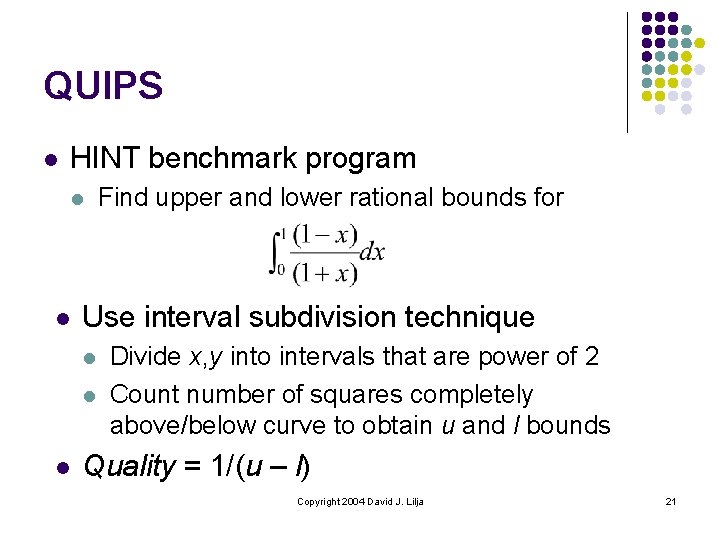

QUIPS

- HINT benchmark program
  - Find upper and lower rational bounds for [expression shown as an image on the slide]
- Use interval subdivision technique
  - Divide x, y into intervals that are power of 2
  - Count number of squares completely above/below curve to obtain u and l bounds
  - Quality = 1/(u – l)
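The bounding idea can be sketched with a uniform grid. This is a simplified illustration, not the HINT program itself, which refines intervals adaptively. The integrand `(1 - x) / (1 + x)` on the unit square is an assumption based on how HINT is commonly described, because the expression is not recoverable from the slide text. The function names are hypothetical.

```python
import math
from fractions import Fraction


def f(x: Fraction) -> Fraction:
    """Assumed curve: decreasing from f(0) = 1 to f(1) = 0."""
    return (1 - x) / (1 + x)


def hint_bounds(k: int):
    """Bound the area under f on [0, 1] using a 2**k by 2**k grid of squares."""
    n = 2 ** k
    below = 0  # squares lying completely below the curve
    above = 0  # squares lying completely above the curve
    for i in range(n):
        x_left, x_right = Fraction(i, n), Fraction(i + 1, n)
        # f is decreasing, so within a column its minimum is at the right
        # edge and its maximum is at the left edge.
        below += math.floor(f(x_right) * n)
        above += n - math.ceil(f(x_left) * n)
    lower = Fraction(below, n * n)
    upper = 1 - Fraction(above, n * n)
    return lower, upper


def quality(k: int) -> Fraction:
    """Quality = 1 / (u - l): a tighter bracket means higher quality."""
    lower, upper = hint_bounds(k)
    return 1 / (upper - lower)


for k in range(1, 6):
    lower, upper = hint_bounds(k)
    print(f"k={k}: l={float(lower):.4f} u={float(upper):.4f} quality={float(quality(k)):.2f}")
```

Exact rational arithmetic keeps the bounds free of rounding error. Dividing the quality reached by the time taken to reach it would give a QUIPS-style rate.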

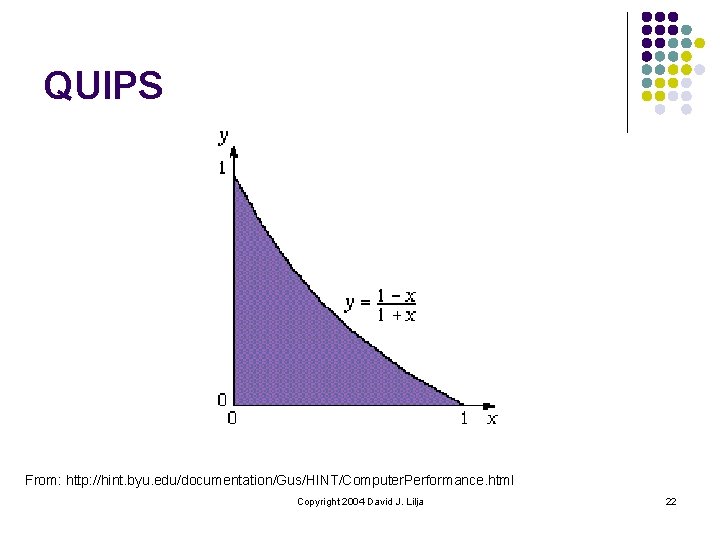

QUIPS

From: http://hint.byu.edu/documentation/Gus/HINT/ComputerPerformance.html

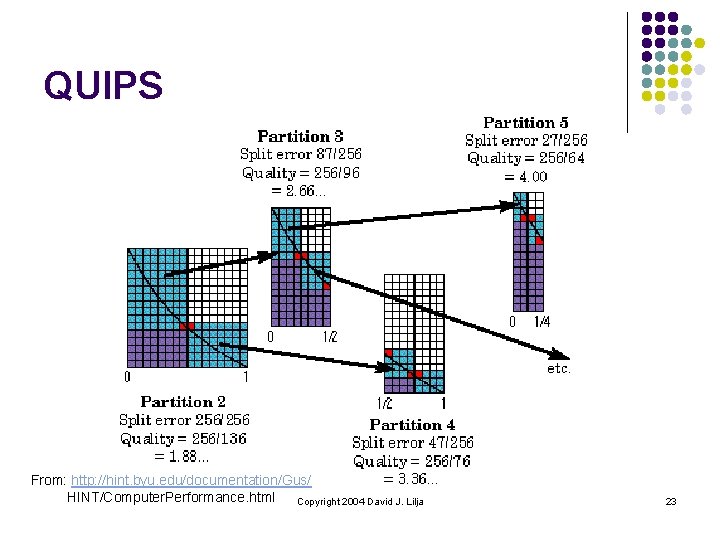

QUIPS

From: http://hint.byu.edu/documentation/Gus/HINT/ComputerPerformance.html

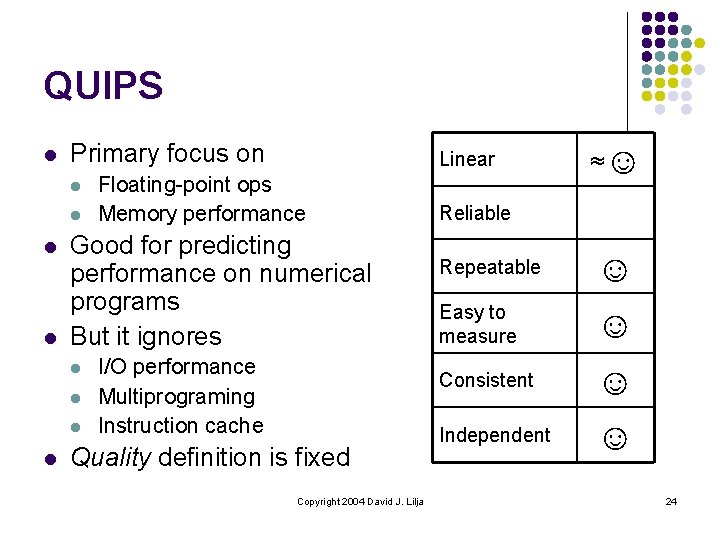

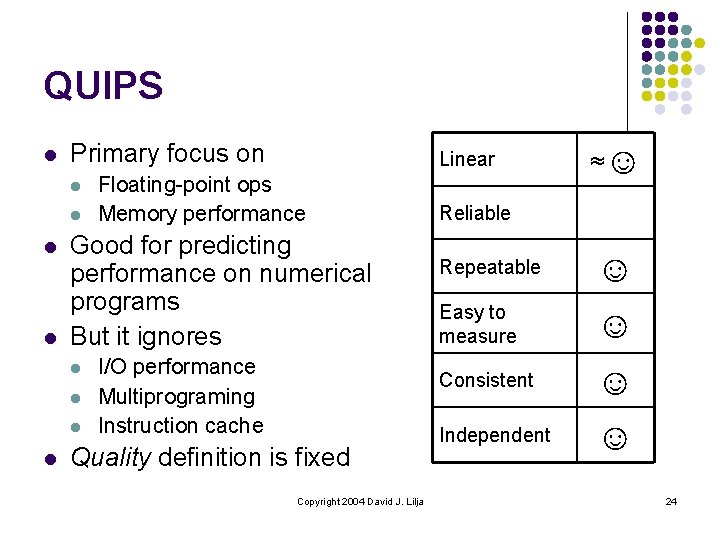

QUIPS

- Primary focus on
  - Floating-point ops
  - Memory performance
- Good for predicting performance on numerical programs
- But it ignores
  - I/O performance
  - Multiprogramming
  - Instruction cache
- Quality definition is fixed

| Linear | Reliable | Repeatable | Easy to measure | Consistent | Independent |
|---|---|---|---|---|---|
| ≈☺ | | ☺ | ☺ | ☺ | ☺ |

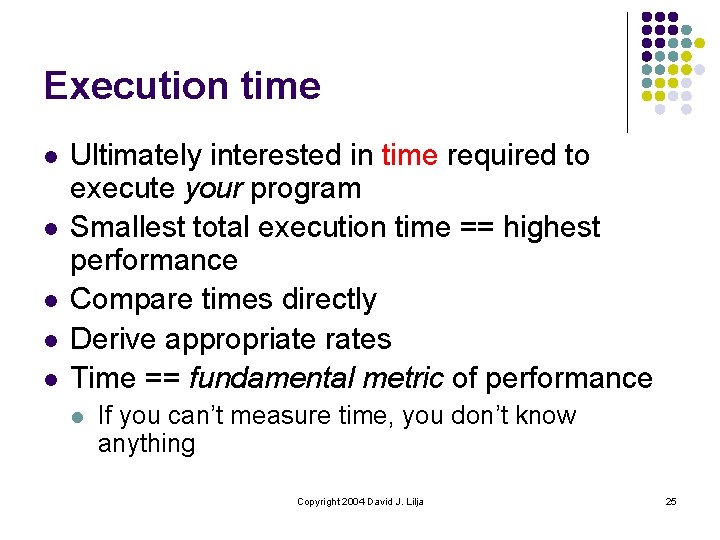

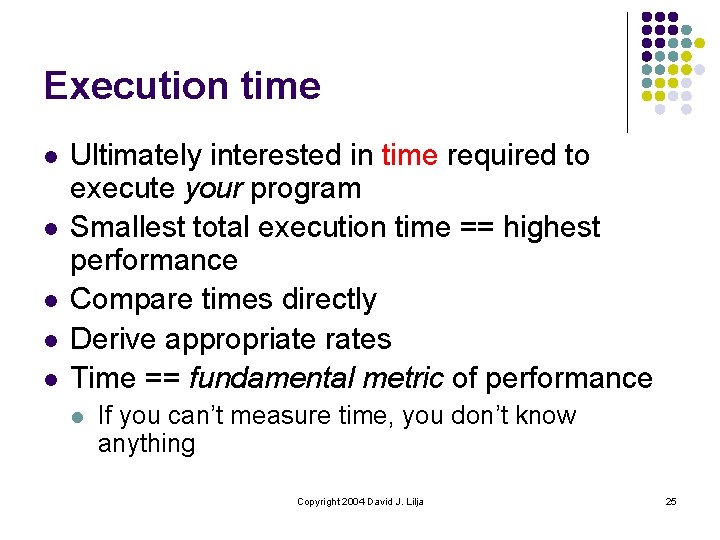

Execution time

- Ultimately interested in time required to execute your program
- Smallest total execution time == highest performance
- Compare times directly
- Derive appropriate rates
- Time == fundamental metric of performance
  - If you can’t measure time, you don’t know anything

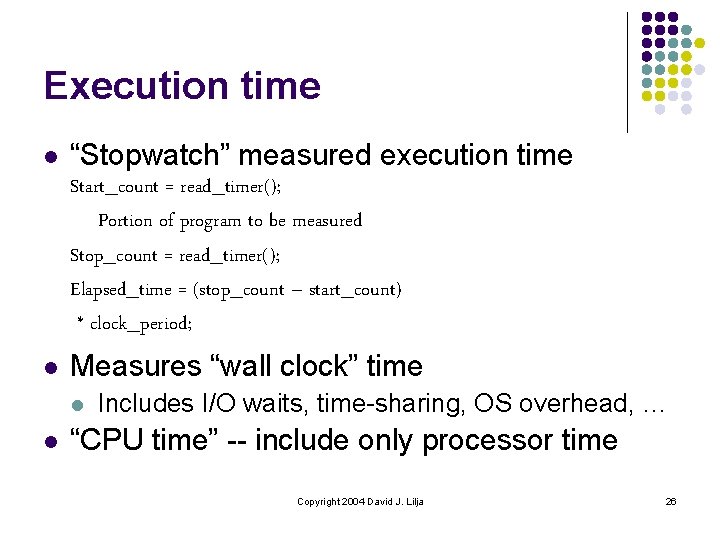

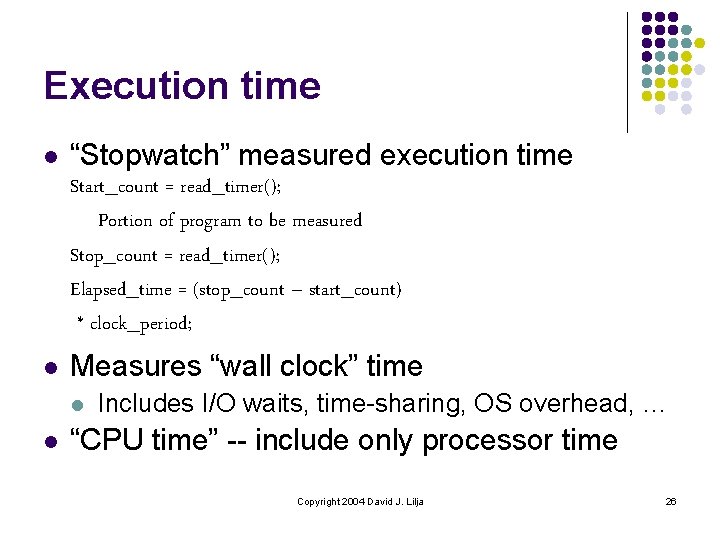

Execution time

- “Stopwatch” measured execution time

      start_count = read_timer();
      /* portion of program to be measured */
      stop_count = read_timer();
      elapsed_time = (stop_count - start_count) * clock_period;

- Measures “wall clock” time
  - Includes I/O waits, time-sharing, OS overhead, …
- “CPU time”: includes only processor time
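A runnable version of the stopwatch pattern might look like the sketch below. It uses Python's `time.perf_counter` for wall-clock time and `time.process_time` for CPU time. The workload and the helper name `measure` are hypothetical.

```python
import time


def measure(workload):
    """Return (wall_clock_seconds, cpu_seconds) for one call of workload()."""
    wall_start = time.perf_counter()   # monotonic wall-clock timer
    cpu_start = time.process_time()    # CPU time of this process; excludes sleep
    workload()                         # portion of program to be measured
    cpu_elapsed = time.process_time() - cpu_start
    wall_elapsed = time.perf_counter() - wall_start
    return wall_elapsed, cpu_elapsed


def example_workload():
    """Hypothetical workload: some computation followed by a wait."""
    sum(i * i for i in range(200_000))  # processor-bound work
    time.sleep(0.05)                    # waiting: counts toward wall clock only


wall, cpu = measure(example_workload)
print(f"wall clock: {wall:.4f} s")
print(f"CPU time:   {cpu:.4f} s")
```

The sleep stands in for an I/O wait. It adds to the wall-clock figure but not to the CPU figure, which is the distinction the slide draws.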

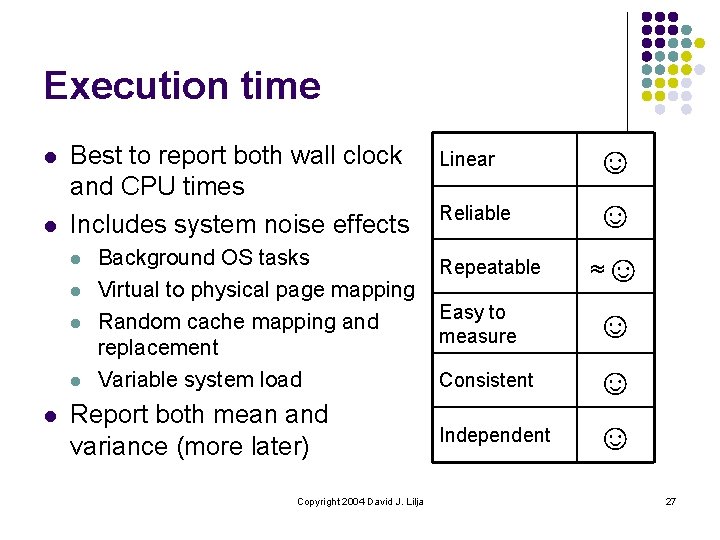

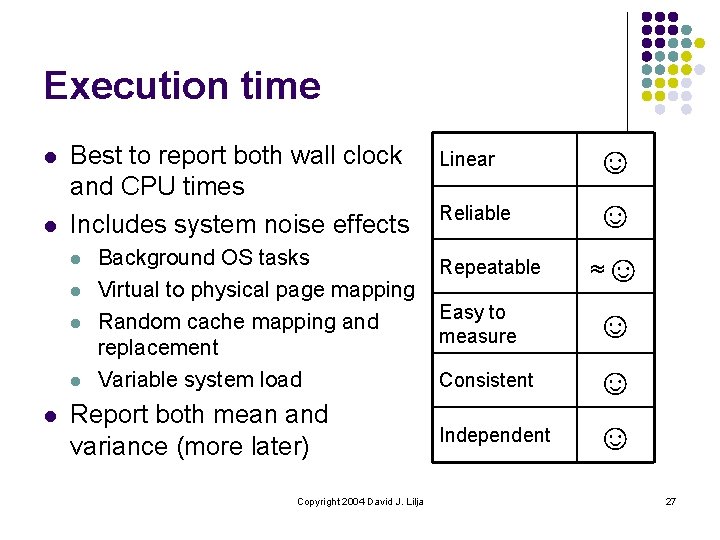

Execution time

- Best to report both wall clock and CPU times
- Includes system noise effects
  - Background OS tasks
  - Virtual to physical page mapping
  - Random cache mapping and replacement
  - Variable system load
- Report both mean and variance (more later)

| Linear | Reliable | Repeatable | Easy to measure | Consistent | Independent |
|---|---|---|---|---|---|
| ☺ | ☺ | ≈☺ | ☺ | ☺ | ☺ |
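Because of this noise, a single timing is rarely enough. The sketch below repeats a measurement and reports the mean and sample variance. The workload, the run count, and the helper names are hypothetical.

```python
import statistics
import time


def time_once(workload) -> float:
    """Wall-clock seconds for a single run of workload()."""
    start = time.perf_counter()
    workload()
    return time.perf_counter() - start


def summarize(samples):
    """Return (mean, sample variance) of the measured times."""
    return statistics.mean(samples), statistics.variance(samples)


def example_workload():
    """Hypothetical workload used only to produce timings."""
    sorted(range(50_000, 0, -1))


# Repeat the experiment; run-to-run differences reflect system noise.
samples = [time_once(example_workload) for _ in range(10)]
mean_s, var_s = summarize(samples)
print(f"mean = {mean_s:.6f} s, variance = {var_s:.3e} s^2 over {len(samples)} runs")
```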

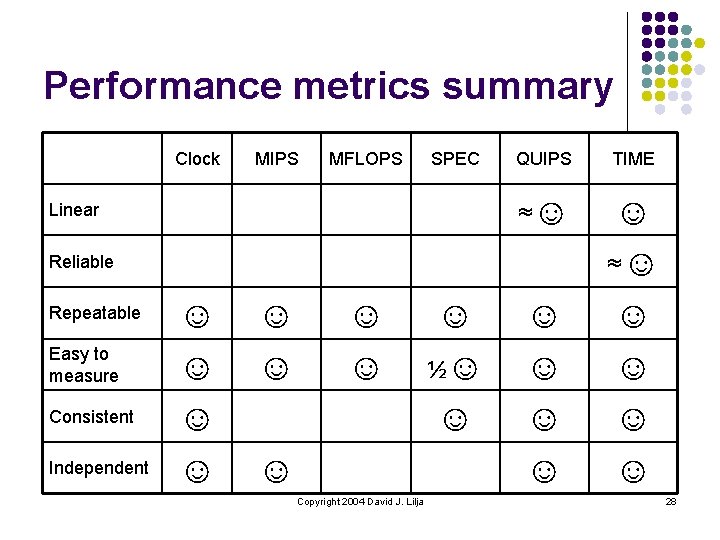

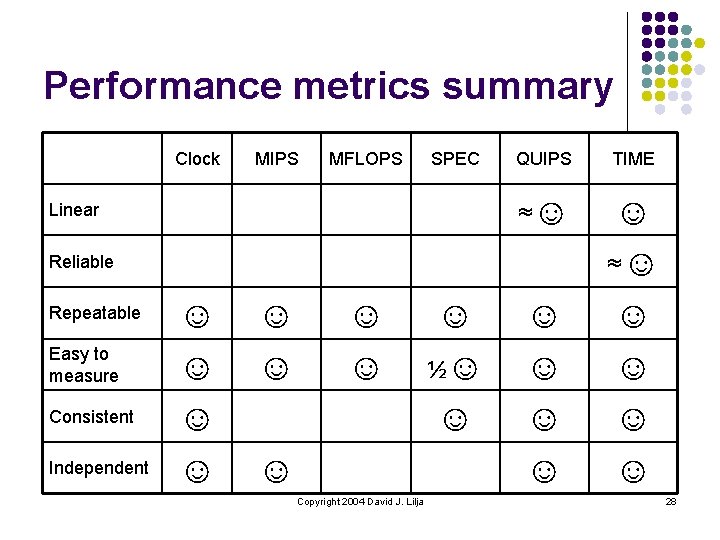

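Performance metrics summary

| | Clock | MIPS | MFLOPS | SPEC | QUIPS | TIME |
|---|---|---|---|---|---|---|
| Linear | | | | | ≈☺ | ☺ |
| Reliable | | | | | | ☺ |
| Repeatable | ☺ | ☺ | ☺ | ☺ | ☺ | ≈☺ |
| Easy to measure | ☺ | ☺ | ☺ | ½☺ | ☺ | ☺ |
| Consistent | ☺ | | | ☺ | ☺ | ☺ |
| Independent | ☺ | ☺ | | | ☺ | ☺ |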

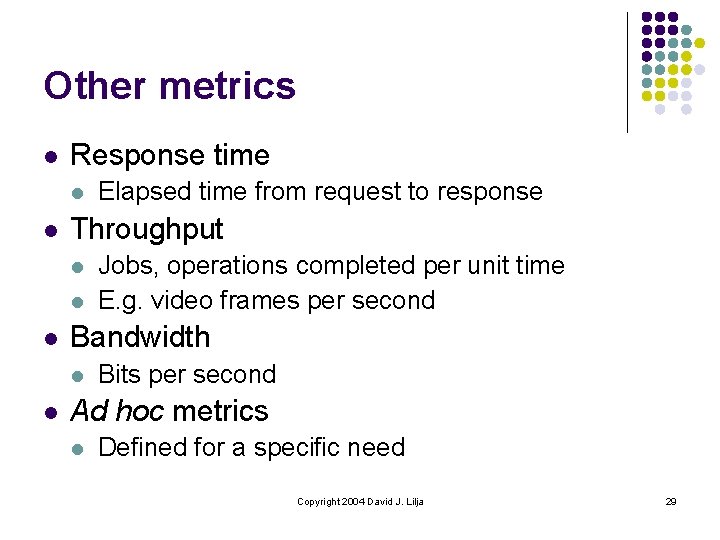

Other metrics

- Response time
  - Elapsed time from request to response
- Throughput
  - Jobs, operations completed per unit time
  - E.g. video frames per second
- Bandwidth
  - Bits per second
- Ad hoc metrics
  - Defined for a specific need

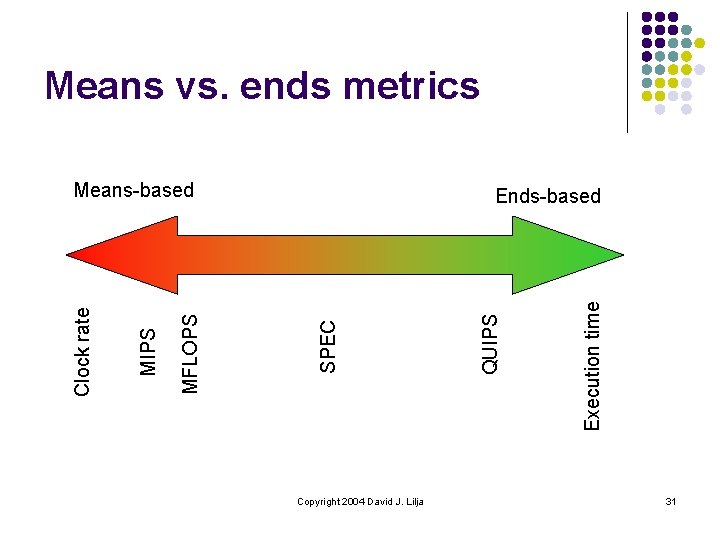

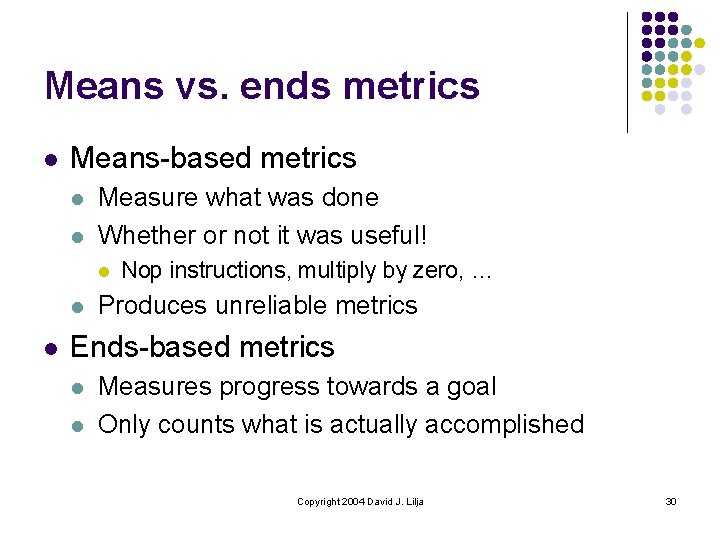

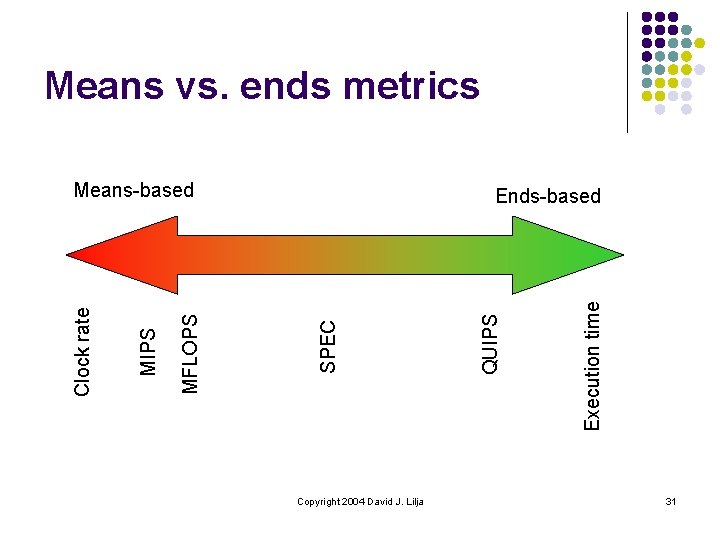

Means vs. ends metrics

- Means-based metrics
  - Measure what was done
  - Whether or not it was useful!
    - Nop instructions, multiply by zero, …
  - Produces unreliable metrics
- Ends-based metrics
  - Measures progress towards a goal
  - Only counts what is actually accomplished

Means vs. ends metrics

Ordered from ends-based to means-based:

- Execution time (ends-based)
- QUIPS
- SPEC
- MFLOPS
- MIPS
- Clock rate (means-based)

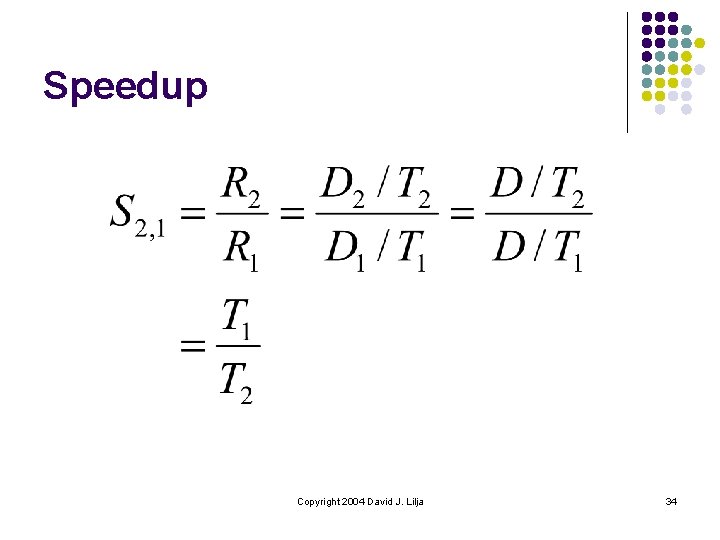

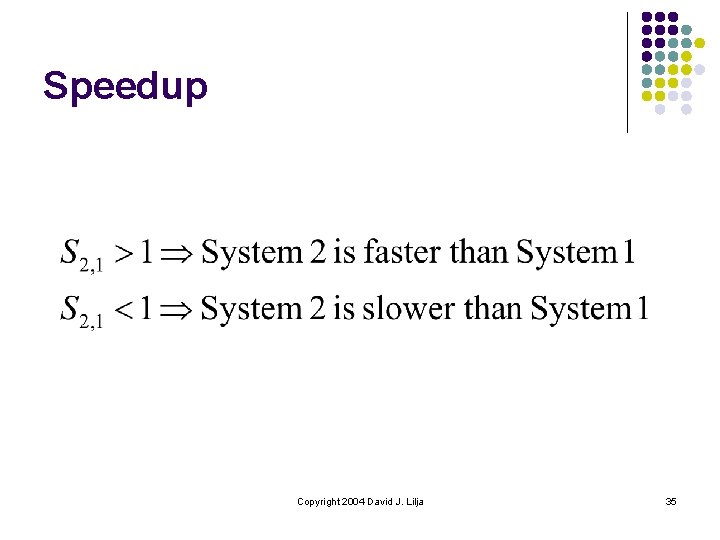

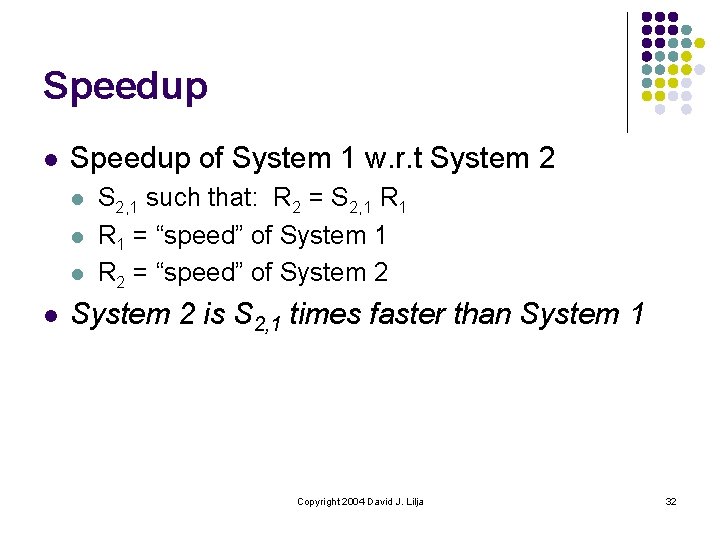

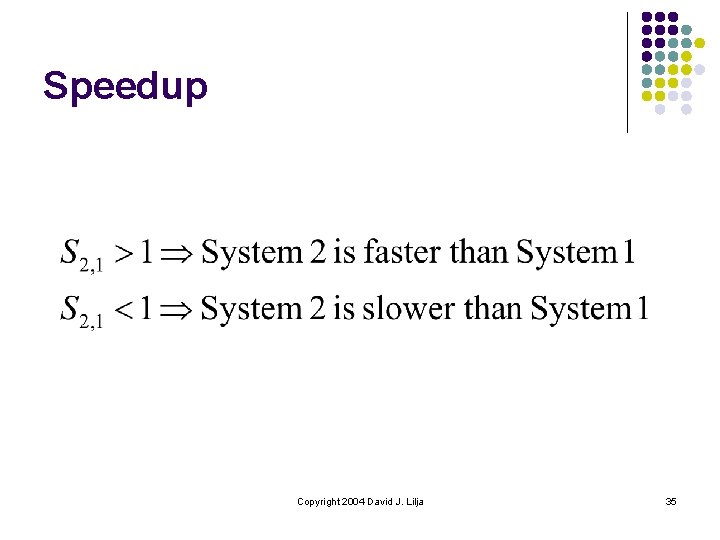

Speedup

- Speedup of System 2 w.r.t. System 1
  - $S_{2,1}$ such that: $R_2 = S_{2,1} R_1$
  - $R_1$ = “speed” of System 1
  - $R_2$ = “speed” of System 2
- System 2 is $S_{2,1}$ times faster than System 1

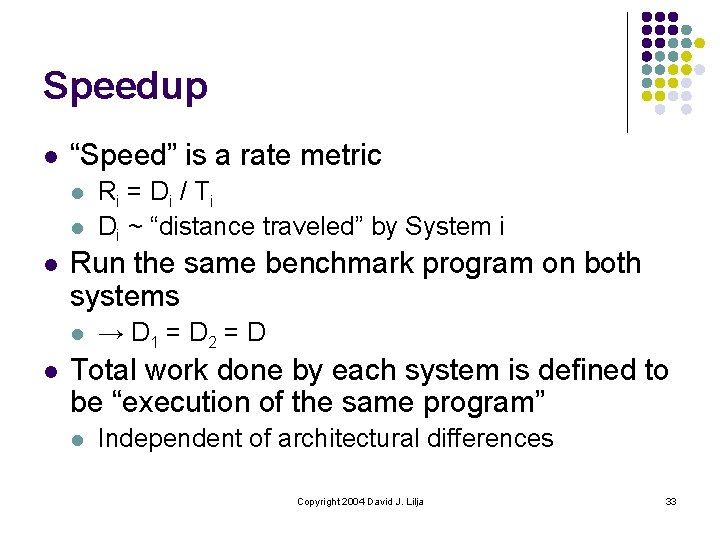

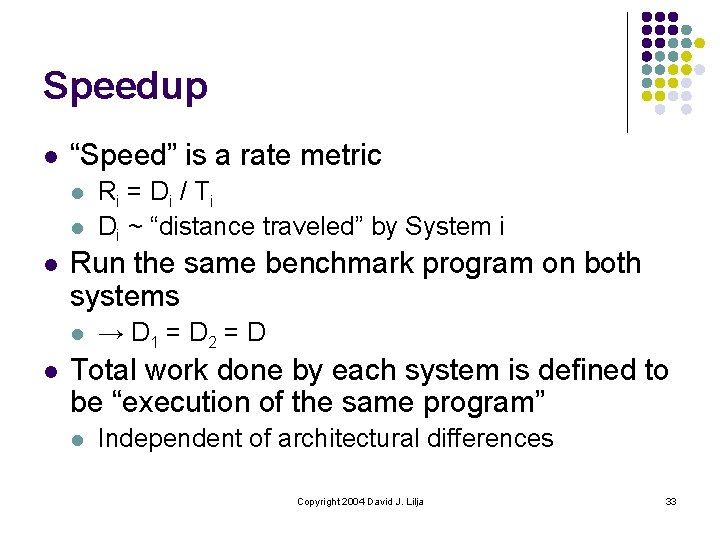

Speedup

- “Speed” is a rate metric
  - $R_i = D_i / T_i$
  - $D_i$ ~ “distance traveled” by System $i$
- Run the same benchmark program on both systems
  - → $D_1 = D_2 = D$
- Total work done by each system is defined to be “execution of the same program”
  - Independent of architectural differences

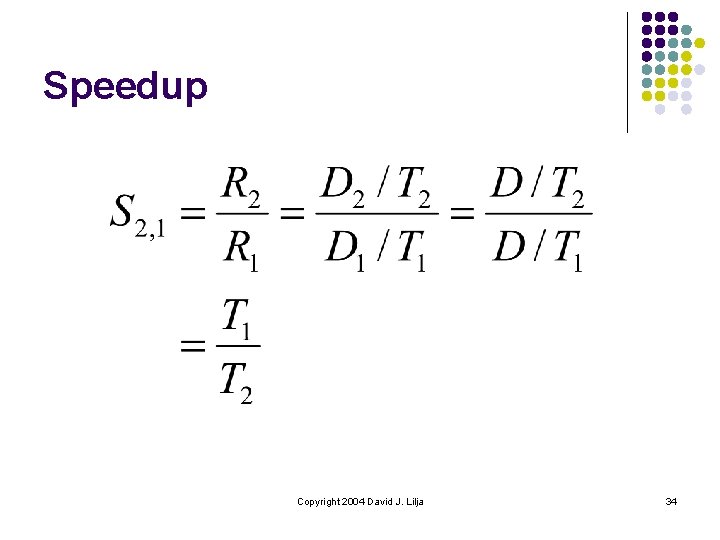

Speedup
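Combining the definitions above gives speedup in terms of execution times. The following is a reconstruction from those definitions ($R_2 = S_{2,1} R_1$, $R_i = D_i / T_i$, and $D_1 = D_2 = D$), not a transcription of the slide:

$$
S_{2,1} = \frac{R_2}{R_1} = \frac{D / T_2}{D / T_1} = \frac{T_1}{T_2}
$$

Under this definition, $S_{2,1} > 1$ means System 2 finishes the program in less time than System 1, and $S_{2,1} < 1$ means it takes longer.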

Speedup

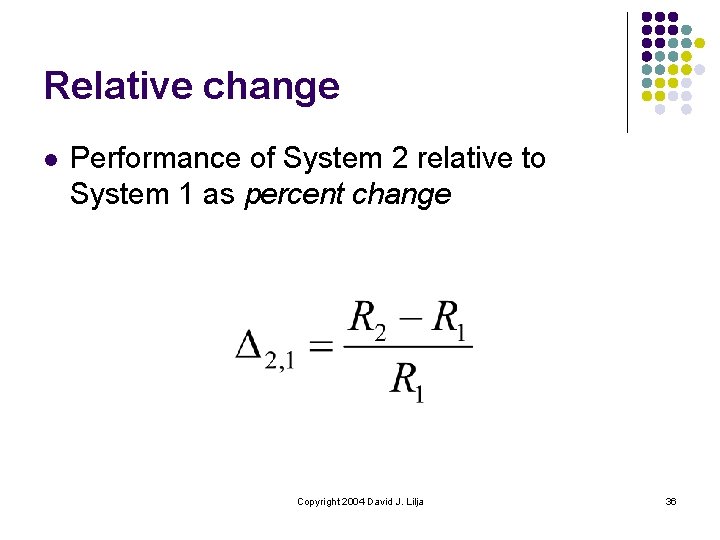

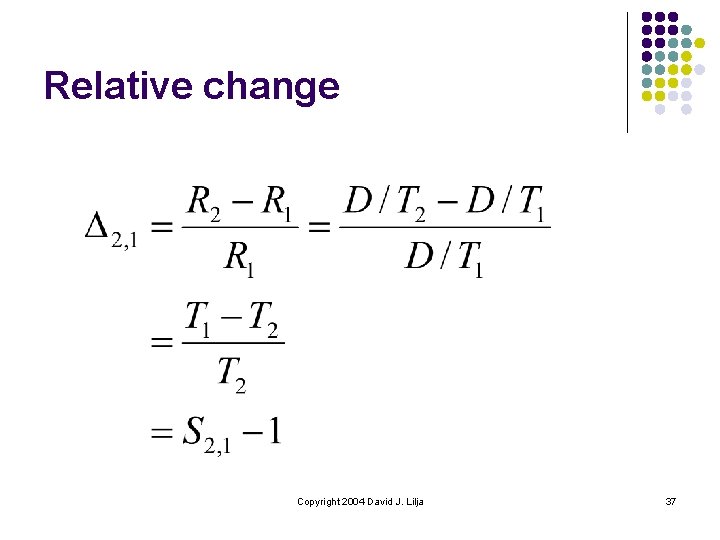

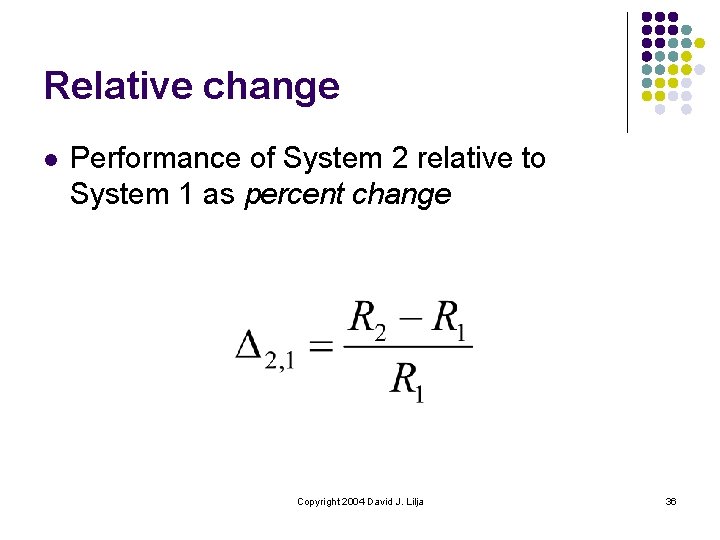

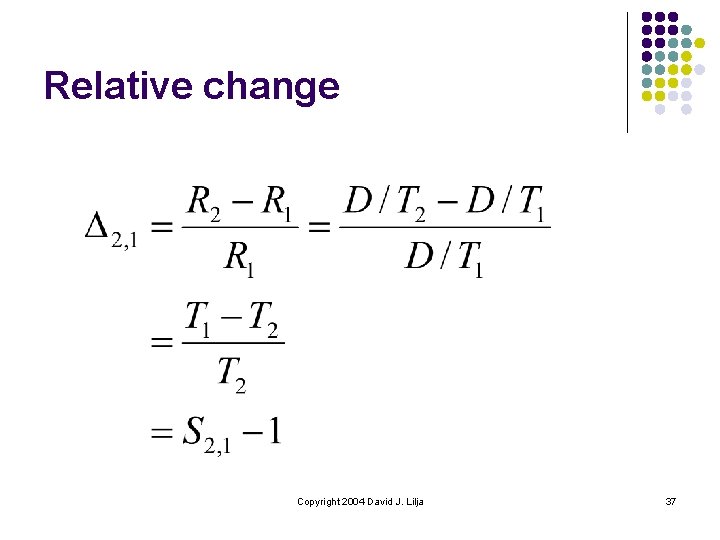

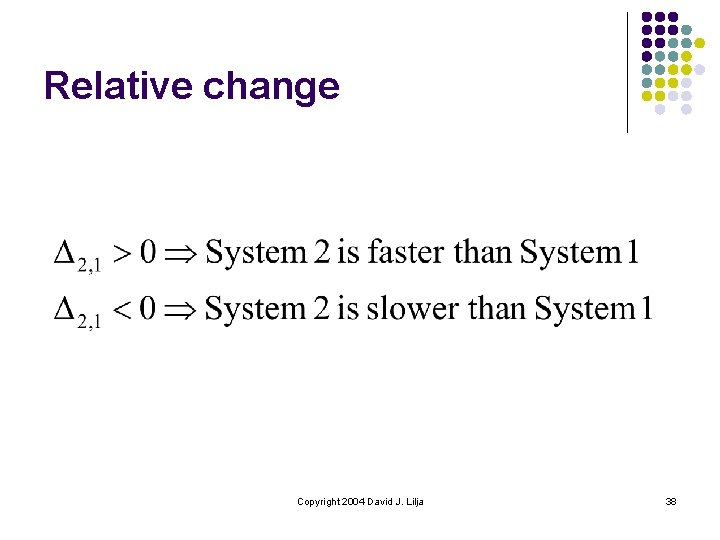

Relative change

- Performance of System 2 relative to System 1 as percent change
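A worked form of this definition, reconstructed from the rate definitions used for speedup ($R_i = D / T_i$) rather than transcribed from the slide, would be as follows. The symbol $\Delta_{2,1}$ is an assumed name for the relative change.

$$
\Delta_{2,1} = \frac{R_2 - R_1}{R_1} = \frac{D/T_2 - D/T_1}{D/T_1} = \frac{T_1 - T_2}{T_2} = S_{2,1} - 1
$$

Multiplying $\Delta_{2,1}$ by 100 expresses it as a percentage. A positive value means System 2 is faster than System 1, and a negative value means it is slower.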

Relative change

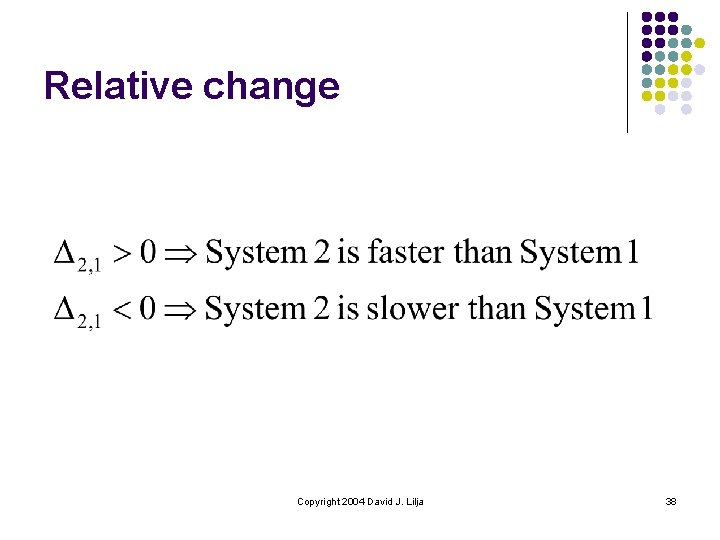

Relative change

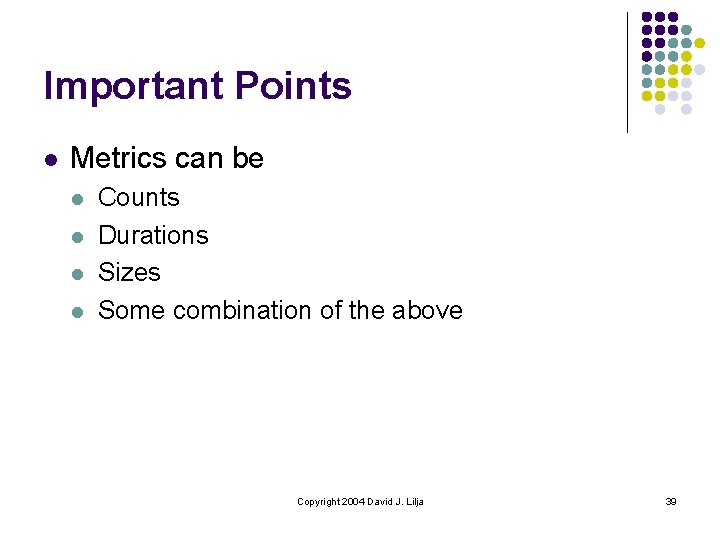

Important Points

- Metrics can be
  - Counts
  - Durations
  - Sizes
  - Some combination of the above

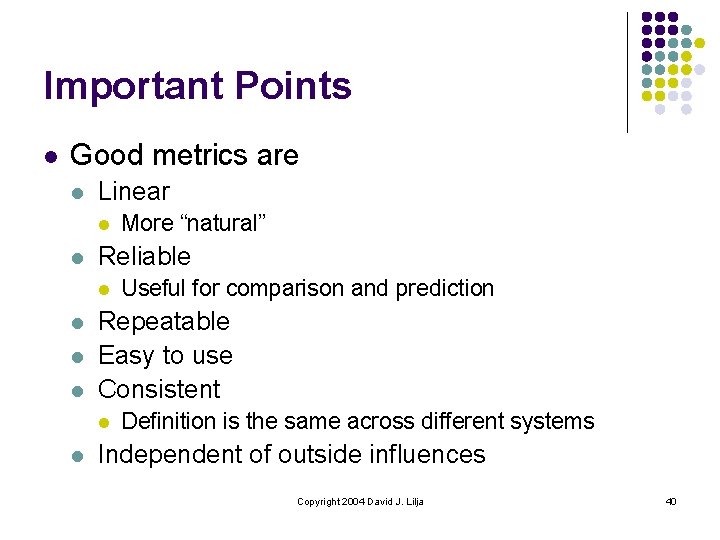

Important Points

- Good metrics are
  - Linear
    - More “natural”
  - Reliable
    - Useful for comparison and prediction
  - Repeatable
  - Easy to use
  - Consistent
    - Definition is the same across different systems
  - Independent of outside influences

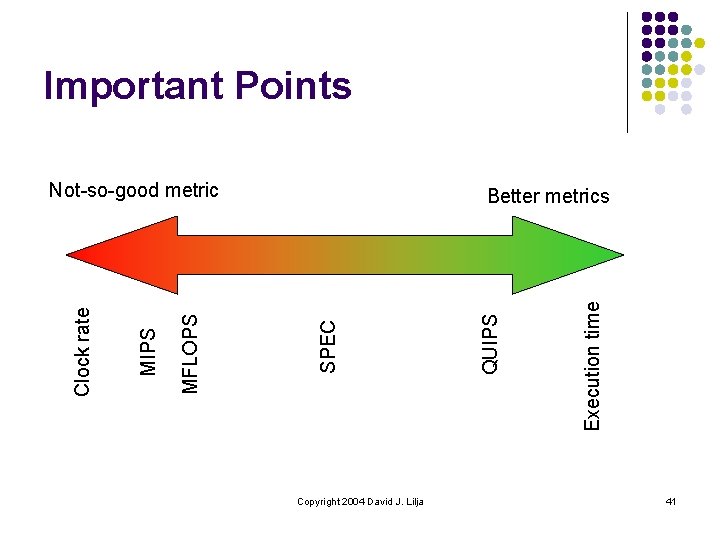

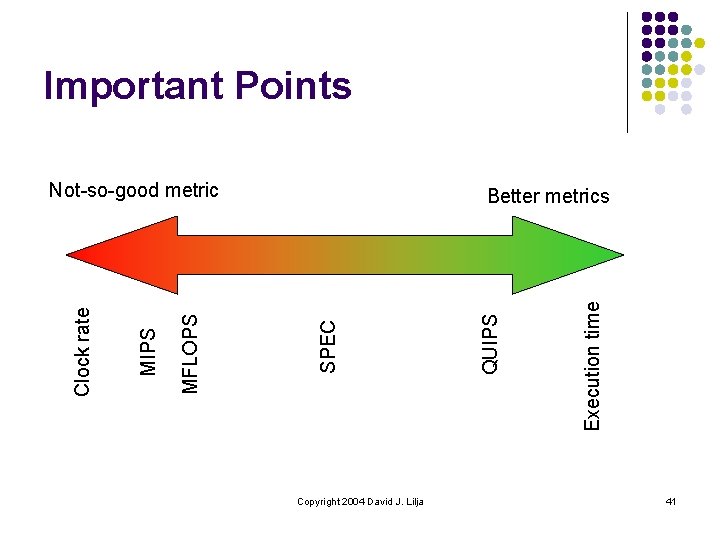

Important Points

Ordered from better metrics to not-so-good metrics:

- Execution time (better metric)
- QUIPS
- SPEC
- MFLOPS
- MIPS
- Clock rate (not-so-good metric)

Important Points

- Speedup of System 2 w.r.t. System 1: $S_{2,1} = R_2 / R_1 = T_1 / T_2$
- Relative change = Speedup − 1
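As a quick numeric check of these two formulas, here is a minimal sketch. It assumes speedup is defined for System 2 with respect to System 1, so that a value above 1 means System 2 is faster. The execution times are hypothetical.

```python
def speedup(t1: float, t2: float) -> float:
    """Speedup of System 2 w.r.t. System 1 on the same program: T1 / T2."""
    return t1 / t2


def relative_change(t1: float, t2: float) -> float:
    """Relative change of System 2 w.r.t. System 1: speedup - 1."""
    return speedup(t1, t2) - 1.0


# Hypothetical execution times (seconds) of the same benchmark program.
t1, t2 = 10.0, 8.0

s = speedup(t1, t2)
delta = relative_change(t1, t2)
print(f"speedup = {s:.2f}")
print(f"relative change = {delta * 100:.0f}%")
```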