NUMA Everywhere but oh so difficult to use

Adam Backman, White Star Software

About White Star Software
- The oldest and most respected independent Progress OpenEdge consulting firm
- 5 of the top OpenEdge DBAs in the world: Adam Backman, Tom Bascom, Dan Foreman, Paul Koufalis and Nectarios Daloglou
- Our performance, monitoring and alerting tool, ProTop: an incredibly powerful single-pane-of-glass view of your entire OpenEdge ecosystem
- Real-world mentoring from real-world DBAs
- info@wss.com | wss.com

Agenda
- What is NUMA?
- Why is there so much NUMA today?
- How does it work?
- What is the problem?
- How can I work in a NUMA environment?

What is NUMA?
- Non-Uniform Memory Architecture
- Tightly coupled CPU and memory cells connected by a high-speed interconnect
- All CPUs can directly address all memory
- Concept of near and far memory calls

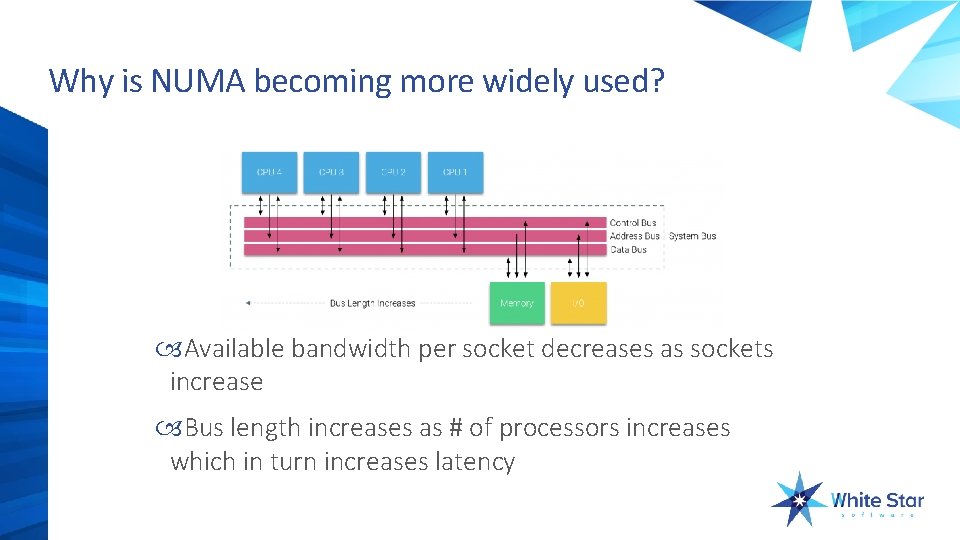

Why is NUMA becoming more widely used?
- Available bandwidth per socket decreases as the number of sockets increases
- Bus length increases as the number of processors increases, which in turn increases latency

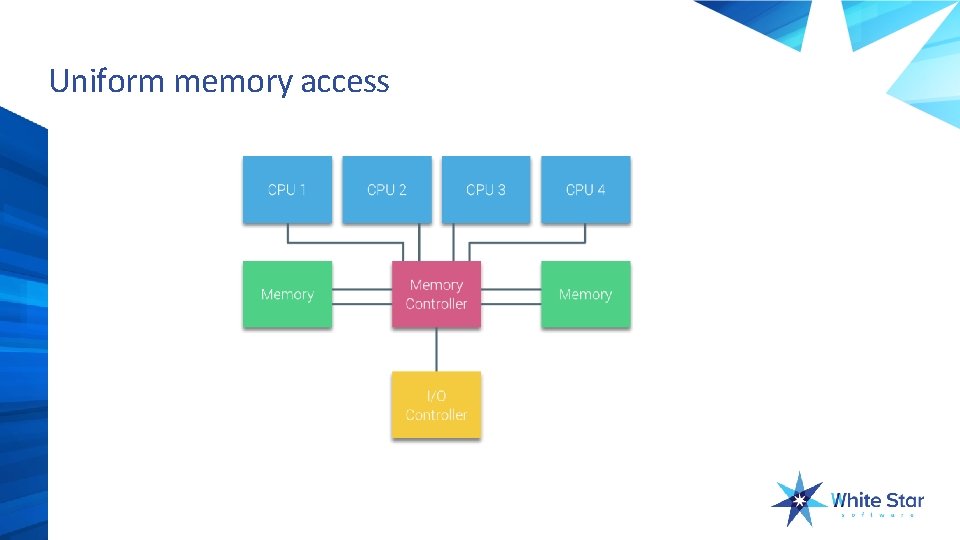

Uniform memory access

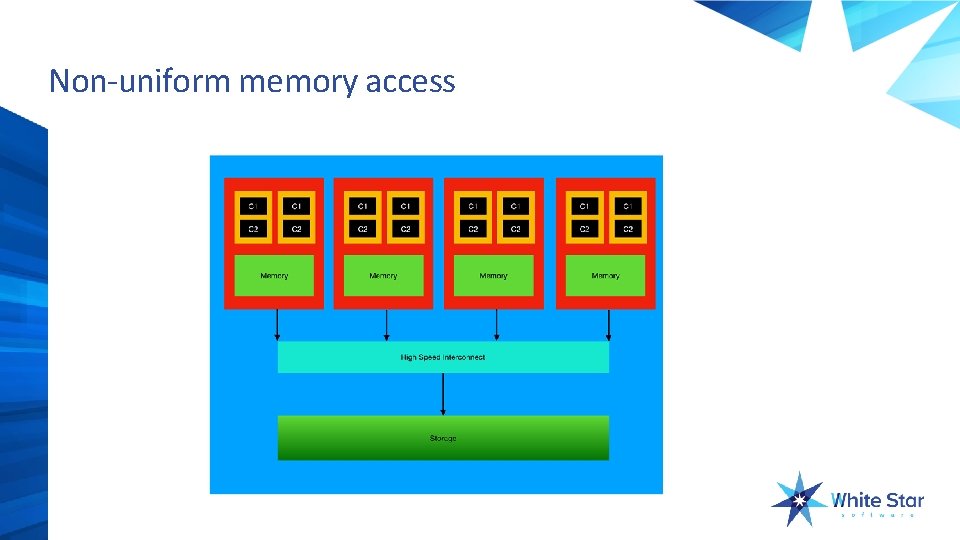

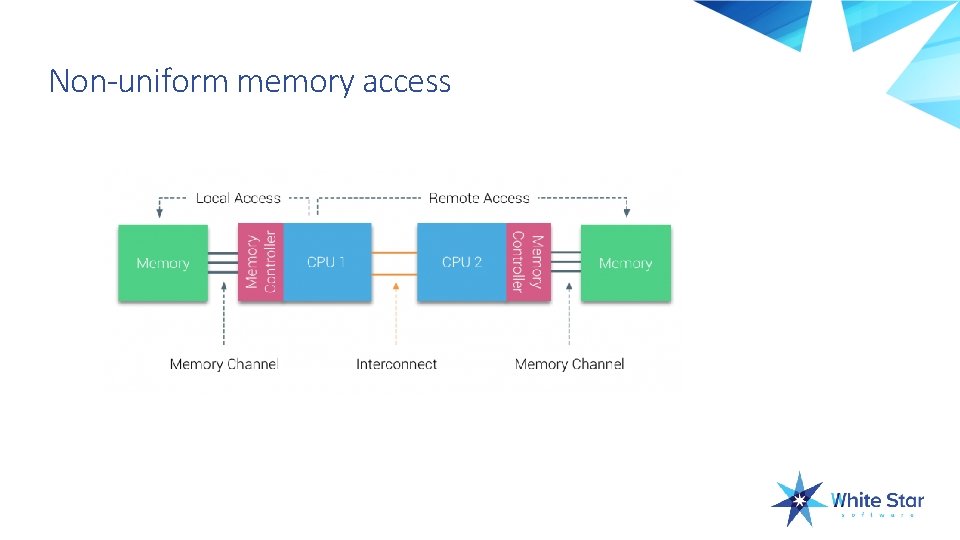

Non-uniform memory access

What is the problem with NUMA?
- Nothing, for applications that do not rely on shared memory
- OpenEdge, Oracle, SQL Server and others all rely on shared memory
- Near memory works like uniform memory access
- Far memory calls are at least 3 times less efficient than near memory calls

Words to watch for
- AMD: HyperTransport
- Intel: QuickPath
- SUMA: Sufficiently Uniform Memory Access
  - Uniform memory access for near and far access, but less efficient than all-near memory access
  - 30-40% reduction for SUMA vs. all local

What is the problem?
- NUMA systems try to maintain near memory calls if possible
- As load increases, the likelihood of far memory calls increases
- So as load increases, RDBMS overhead increases, making performance decrease at a much greater rate
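
To put rough numbers on this, here is a minimal sketch of a cost model. It assumes a near call costs 1 unit and a far call costs 3 units (the "at least 3 times" figure from the earlier slide), and it ignores interconnect contention, so real systems under load are likely to do worse than this. The function name and the sample fractions are illustrative only.

```python
def relative_memory_cost(far_fraction, far_penalty=3.0):
    """Average cost per memory call, relative to an all-near workload (1.0).

    far_fraction: share of memory calls that go to far memory (0.0 to 1.0).
    far_penalty:  assumed cost of a far call relative to a near call.
    """
    if not 0.0 <= far_fraction <= 1.0:
        raise ValueError("far_fraction must be between 0 and 1")
    return (1.0 - far_fraction) * 1.0 + far_fraction * far_penalty


# Illustrative fractions only; they are not measurements.
for far_fraction in (0.0, 0.1, 0.25, 0.5):
    cost = relative_memory_cost(far_fraction)
    print(f"{far_fraction:.0%} far calls -> {cost:.2f}x the all-near cost")
```

Even in this simple linear model, a workload where half the calls go far pays twice the memory cost of an all-near workload.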

How do we solve the problem?
- By setting processor and memory affinity
- Affinity determines which resources will be used in the machine
- By setting processor and memory affinity to a single cell (node, book, …) you eliminate far memory calls

Sounds easy, why should I be concerned?
- Your resources are now limited to the amount of resources in a single cell
- Example: 4-socket, 2-cell NUMA with 512 GB RAM
  - Each socket is 8-core
  - Maximum VM configuration would be 16-core with 256 GB RAM
- Please note: some current systems are 1 socket per cell
  - Then you could only have 8-core, 128 GB RAM per VM
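
The sizing arithmetic in this example can be sketched as a small helper. It assumes sockets and RAM are spread evenly across cells, which is what the slide's numbers imply. The function name is made up for illustration.

```python
def max_vm_per_cell(sockets, cells, cores_per_socket, total_ram_gb):
    """Return (cores, ram_gb) for the largest VM that fits in one NUMA cell.

    Assumes sockets and RAM are distributed evenly across the cells.
    """
    if cells <= 0 or sockets % cells != 0:
        raise ValueError("sockets must divide evenly across cells")
    sockets_per_cell = sockets // cells
    return sockets_per_cell * cores_per_socket, total_ram_gb // cells


# 4 sockets in 2 cells, 8 cores per socket, 512 GB RAM
print(max_vm_per_cell(4, 2, 8, 512))   # (16, 256)

# Same hardware with 1 socket per cell (4 cells)
print(max_vm_per_cell(4, 4, 8, 512))   # (8, 128)
```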

Seeing the issue
- Opvizor Performance Analyzer ($$, 30-day free trial)
- esxtop: www.yellow-bricks.com/esxtop

No NUMA?

    # numactl --show
    policy: default
    preferred node: current
    physcpubind: 0 1 2 3 4 5 6 7 8 9 10 11
    cpubind: 0
    nodebind: 0
    membind: 0

Still no NUMA?

    # numactl --hardware
    available: 1 nodes (0)
    node 0 cpus: 0 1 2 3 4 5 6 7 8 9 10 11
    node 0 size: 65525 MB
    node 0 free: 17419 MB
    node distances:
    node   0
      0:  10

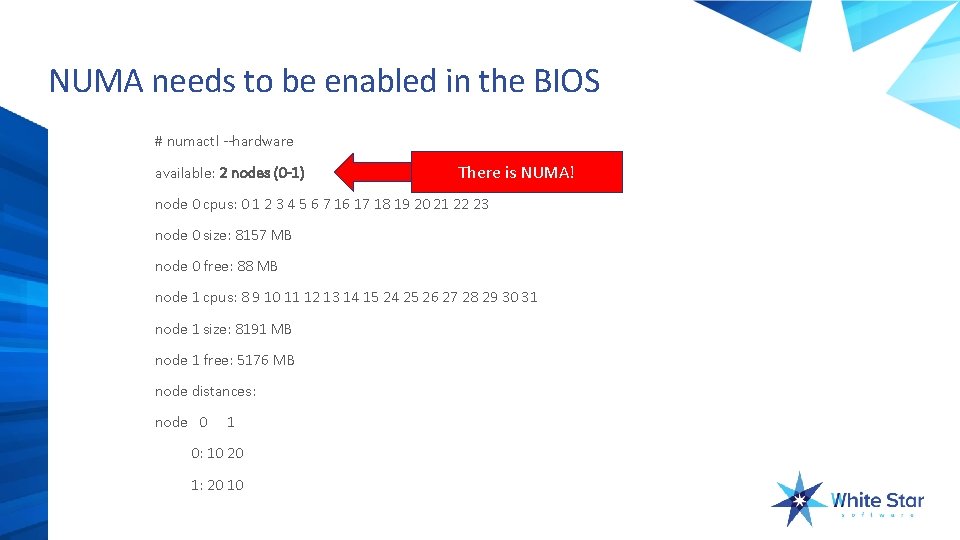

NUMA needs to be enabled in the BIOS

    # numactl --hardware
    available: 2 nodes (0-1)      <- There is NUMA!
    node 0 cpus: 0 1 2 3 4 5 6 7 16 17 18 19 20 21 22 23
    node 0 size: 8157 MB
    node 0 free: 88 MB
    node 1 cpus: 8 9 10 11 12 13 14 15 24 25 26 27 28 29 30 31
    node 1 size: 8191 MB
    node 1 free: 5176 MB
    node distances:
    node   0   1
      0:  10  20
      1:  20  10
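
If you want to check this from a script rather than by eye, a rough sketch follows. It parses text in the `numactl --hardware` layout shown on this slide (the sample is pasted in as a string, so nothing is executed on the host). The parser is an illustration, not part of numactl, and it only handles the `cpus`, `size` and `free` lines.

```python
import re

# Output copied from the slide; on a live system you would capture it from
# `numactl --hardware` instead.
SAMPLE = """\
available: 2 nodes (0-1)
node 0 cpus: 0 1 2 3 4 5 6 7 16 17 18 19 20 21 22 23
node 0 size: 8157 MB
node 0 free: 88 MB
node 1 cpus: 8 9 10 11 12 13 14 15 24 25 26 27 28 29 30 31
node 1 size: 8191 MB
node 1 free: 5176 MB
node distances:
node   0   1
  0:  10  20
  1:  20  10
"""


def parse_numactl_hardware(text):
    """Return {node_id: {"cpus": [...], "size_mb": int, "free_mb": int}}."""
    nodes = {}
    for line in text.splitlines():
        match = re.match(r"node (\d+) cpus:\s*(.*)", line)
        if match:
            cpus = [int(cpu) for cpu in match.group(2).split()]
            nodes.setdefault(int(match.group(1)), {})["cpus"] = cpus
            continue
        match = re.match(r"node (\d+) (size|free): (\d+) MB", line)
        if match:
            key = match.group(2) + "_mb"
            nodes.setdefault(int(match.group(1)), {})[key] = int(match.group(3))
    return nodes


nodes = parse_numactl_hardware(SAMPLE)
print(f"{len(nodes)} NUMA node(s) visible")
for node_id, info in sorted(nodes.items()):
    print(f"node {node_id}: {len(info['cpus'])} CPUs, "
          f"{info['free_mb']} of {info['size_mb']} MB free")
```

In this sample, node 0 has only 88 MB free while node 1 has 5176 MB free, so new allocations by processes running on node 0 are likely to land in far memory.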

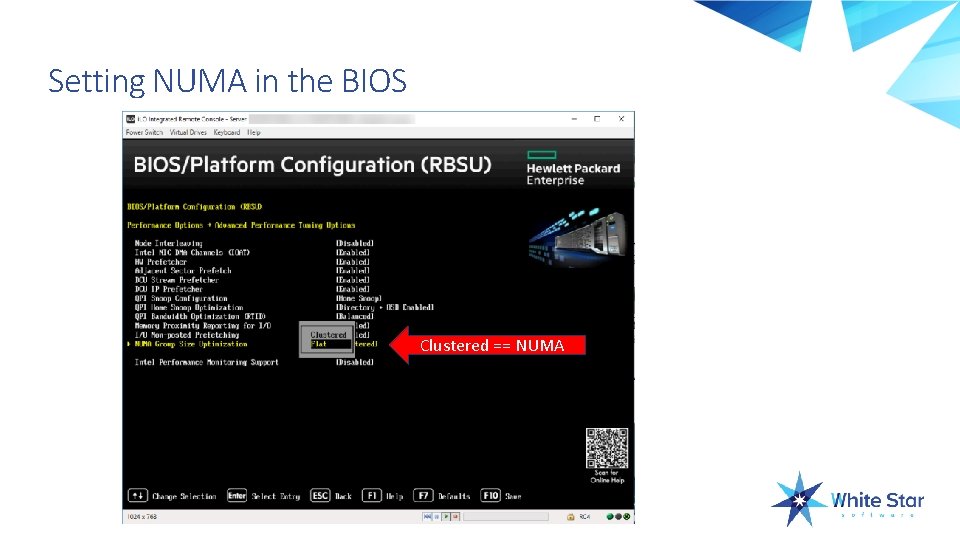

Setting NUMA in the BIOS
- Clustered == NUMA

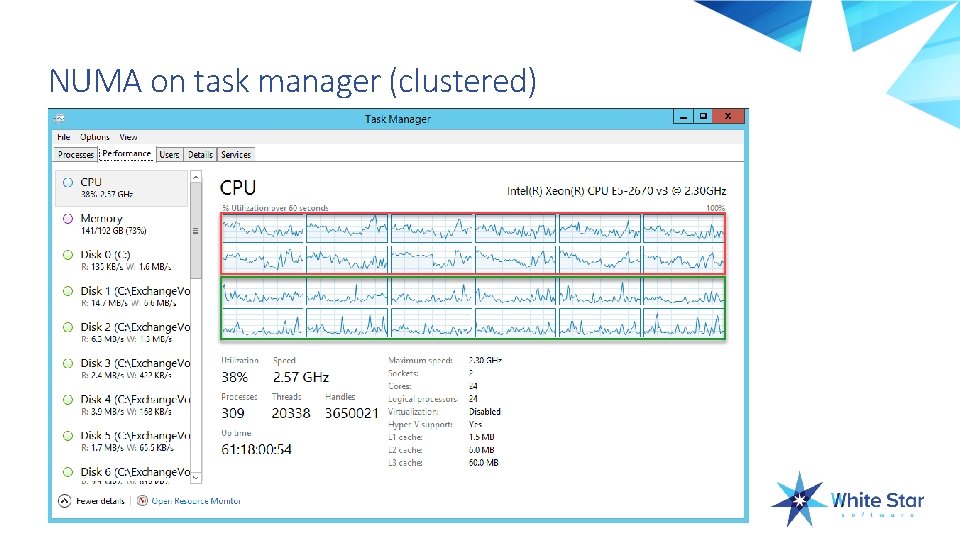

NUMA on task manager (clustered)

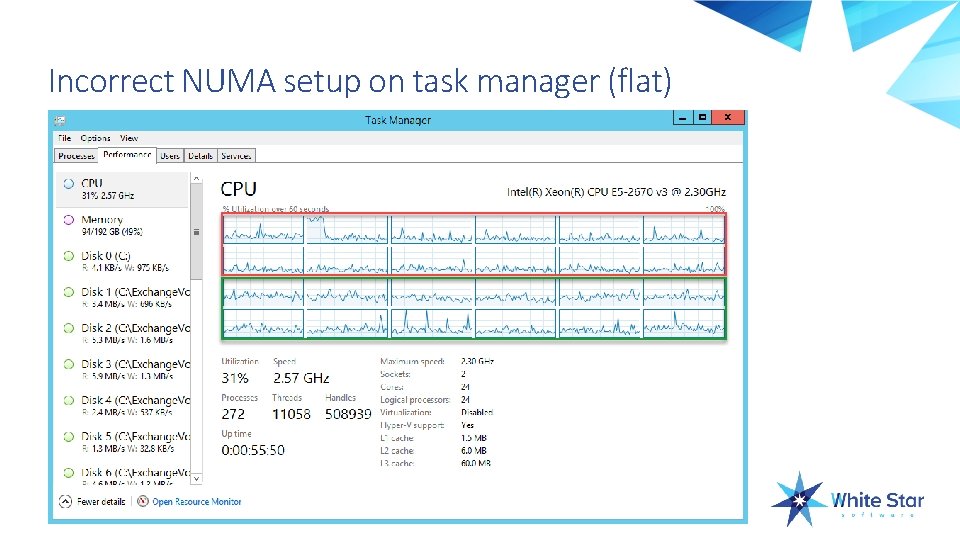

Incorrect NUMA setup on task manager (flat)

Fixing the problem
- Don’t buy NUMA
  - People try to buy increased core count rather than fewer cores with higher speed
  - OpenEdge benefits from greater speed more than higher core count in the vast majority of cases
- Evenly distribute the RAM across NUMA cells
- Never define a VM with more resources than a single node can support (both CPU and RAM)

Fixing the problem
- On properly configured VMs you can set the affinity for the VM (best method)
- It is possible to set the affinity for individual processes, but this is generally a bad idea that should only be considered as a short-term fix

VMware NUMA controls
- VMware node affinity: VMware can only schedule to the nodes listed in the affinity
- CPU affinity: VMware can only use the processors in the affinity
- Memory affinity: VMware can only use the memory in the affinity

Dynamic NUMA control - Linux

    numactl --physcpubind=cpus, -C cpus

Only execute process on cpus. This accepts CPU numbers as shown in the processor fields of /proc/cpuinfo, or relative CPUs as in relative to the current cpuset.
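
As a hedged illustration of per-process affinity, the sketch below only builds a `numactl` command line and prints it. It pairs `--physcpubind` from this slide with `--membind`, another `numactl` option, so that both processor and memory stay on one node. The CPU list is node 0 from the earlier `numactl --hardware` sample, and `./start_database.sh` is a hypothetical placeholder for whatever you actually launch. Keep in mind that per-process affinity was described earlier as a short-term fix at best.

```python
import shlex


def numactl_command(cpus, mem_node, program_args):
    """Build an argv list that pins a program to given CPUs and one memory node."""
    cpu_list = ",".join(str(cpu) for cpu in cpus)
    return [
        "numactl",
        f"--physcpubind={cpu_list}",  # run only on these CPUs
        f"--membind={mem_node}",      # allocate memory only from this node
        *program_args,
    ]


# Node 0 CPUs taken from the sample output; adjust to your own hardware.
node0_cpus = [0, 1, 2, 3, 4, 5, 6, 7, 16, 17, 18, 19, 20, 21, 22, 23]

# "./start_database.sh" is a made-up script name used only for illustration.
cmd = numactl_command(node0_cpus, 0, ["./start_database.sh"])
print(shlex.join(cmd))
```

Printing the command first, rather than running it, lets you review the binding before applying it to a production process.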

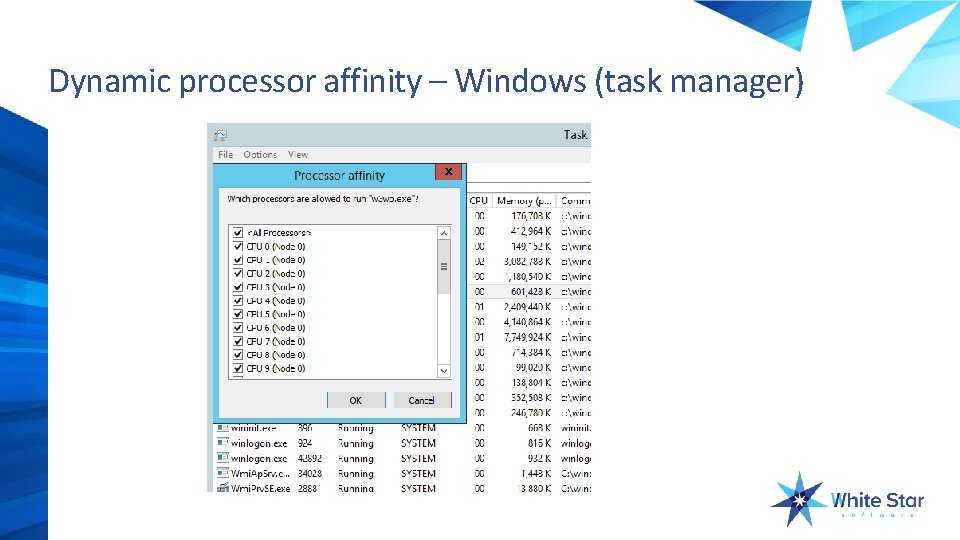

Dynamic processor affinity – Windows (task manager)

Other options
- Divide workload across machines
- Client/Server
- N-tier

Questions?
