Large-scale computerized adaptive progress testing: individual precision estimates

- Slides: 20

Large-scale computerized adaptive progress testing: individual precision estimates, test-retest reliability and developmental validity
Carlos Fernando Collares (1, 2), José Juan Góngora Cortés (3), Silvia Lizett Olivares (3), Jorge Eugenio Valdez García (3), Cees van der Vleuten (1, 2)
1. Maastricht University. 2. European Board of Medical Assessors. 3. Instituto Tecnológico y de Estudios Superiores de Monterrey
Barcelona, August 30th, 2016.

BACKGROUND

Progress test: an assessment tool with many purposes • Diagnosis • Prediction • Remediation • Improvement • Faculty Development • Curricular Governance • Quality Assurance • Assessment FOR learning

History of the International Progress Test • 2011: progress test specialists from several countries discussed the prospects of a collaborative effort. • 2013: progress test specialists developed guidelines for implementing an international progress testing programme. • Initially, the IPT was implemented by Maastricht University. • Since its first edition, there has been close cooperation between the IPT and the Dutch progress test consortium (iVTG). • Partners: Maastricht University (The Netherlands), Flinders University (Australia), Catholic University (Mozambique), Al Rajhi College (Saudi Arabia) and Monterrey Tec (Mexico). • 2015: the IPT was brought under the EBMA umbrella.

What is EBMA? • Non-profit organization formed by a group of European professionals who have expertise in assessment and/or hold leadership roles in universities or other bodies concerned with medical education and training. • Not a licensing body • Community of experts in assessment for learning in medical education

EBMA members • Belgium: Ghent University • Cyprus: University of Nicosia • Denmark: University of Copenhagen • Finland: University of Helsinki • Germany: University of Heidelberg • Italy: Catholic University of Rome • Netherlands: University Medical Center Groningen • Netherlands: Maastricht University • Poland: Jagiellonian University • Poland: Łódź University • Portugal: University of Minho • UK: Keele University • UK: University of Exeter • UK: Plymouth University

What is computerized adaptive testing? • A Computerized Adaptive Test (CAT) dynamically adjusts the difficulty of the items (questions) to the demonstrated performance level of each examinee while the test is being administered. • CAT is enabled by an algorithm. • A large calibrated item bank is used to feed the system. • A calibrated item is an item whose difficulty level in the population of interest is estimated beforehand. • The selection of items is similar to a video game: correct answers lead to more difficult items; incorrect answers lead to easier items. • After each answer, the algorithm recalculates the score and the measurement error, which tends to decrease after each item.
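The adaptive loop described above can be sketched in Python. This is a minimal illustration under stated assumptions, not the algorithm used for the IPT: it assumes a Rasch-calibrated bank given as a plain list of item difficulties, picks the unused item whose difficulty is closest to the current ability estimate (where Rasch item information is highest), and re-scores after each answer with an expected a posteriori (EAP) estimate on a grid under a standard-normal prior. The posterior standard deviation plays the role of the measurement error. The names `run_cat`, `eap_estimate`, `GRID` and the prior are hypothetical choices made for the sketch.

```python
import math

# Ability grid and standard-normal prior: illustrative assumptions only.
GRID = [-4.0 + 0.05 * k for k in range(161)]


def log_p_correct(theta, b):
    """log P(correct) under the Rasch model, via log1p for numerical stability."""
    return -math.log1p(math.exp(-(theta - b)))


def log_p_incorrect(theta, b):
    """log P(incorrect) = log(1 - P(correct)) under the Rasch model."""
    return -math.log1p(math.exp(theta - b))


def eap_estimate(responses):
    """EAP ability estimate and posterior SD from (difficulty, correct) pairs."""
    log_w = []
    for t in GRID:
        lw = -0.5 * t * t  # log of the unnormalised N(0, 1) prior density
        for b, correct in responses:
            lw += log_p_correct(t, b) if correct else log_p_incorrect(t, b)
        log_w.append(lw)
    peak = max(log_w)  # rescale before exponentiating to avoid underflow
    w = [math.exp(lw - peak) for lw in log_w]
    total = sum(w)
    mean = sum(t * wi for t, wi in zip(GRID, w)) / total
    var = sum((t - mean) ** 2 * wi for t, wi in zip(GRID, w)) / total
    return mean, math.sqrt(var)


def run_cat(bank, answer, test_length=50):
    """Administer up to `test_length` items adaptively from a list of difficulties.

    `answer(b)` stands in for the examinee and returns True if correct.
    Returns the final estimate, its SE, and the SE recorded after each item.
    """
    remaining = list(bank)
    responses = []
    theta, se = 0.0, 1.0  # start at the prior mean and prior SD
    se_history = []
    for _ in range(min(test_length, len(remaining))):
        # Rasch item information p(1 - p) peaks where b equals theta, so the
        # unused item closest in difficulty to the estimate is the most informative.
        b = min(remaining, key=lambda d: abs(d - theta))
        remaining.remove(b)
        responses.append((b, answer(b)))
        theta, se = eap_estimate(responses)  # re-score after every answer
        se_history.append(se)
    return theta, se, se_history


if __name__ == "__main__":
    # Hypothetical bank of 61 items and a simulated examinee who answers
    # correctly exactly when the item is easier than ability 1.0.
    bank = [-3.0 + 0.1 * k for k in range(61)]
    theta, se, history = run_cat(bank, answer=lambda b: b < 1.0, test_length=20)
    print(f"theta = {theta:.2f}, SE = {se:.2f}, SE after first item = {history[0]:.2f}")
```

With a simulated examinee, the recorded standard errors shrink as items accumulate, which is the behaviour the slide describes. Content constraints and exposure control are deliberately left out of this sketch.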

Benefits of CAT • Shorter tests and less testing time while maintaining optimal reliability • Each test is individually customized → increased test security and automatic adjustment of test difficulty to students’ knowledge (top students don’t waste time on easy questions; underachievers are less demotivated by tough questions) • Flexible test delivery → no need for large-scale simultaneous test administration; different testing dates can be scheduled • Cost-effectiveness & environmental friendliness → zero paper • Immediate score reporting → timely feedback • More item formats (audio, video, etc.) → questions can have greater relevance and professional authenticity

History of CAT in progress testing • Results from the first small-sample pilot CAT PT, delivered in São Paulo (Brazil), were presented in 2012 at the Ottawa Conference in Kuala Lumpur (Collares et al., 2012). • The interinstitutional CMIRA program of the Syrian-Lebanese Hospital in São Paulo used a CAT PT solution for a short period. • Monterrey Tec (ITESM) showed interest in the IPT but could not accommodate all students taking the test simultaneously. • A solution based on a CAT approach was offered and accepted. • The third edition of the test at ITESM was recently delivered. • A new edition of the test will be delivered next November. • Former Brazilian partners are to reinstate the CAT PT later this year.

METHOD

Method • Item bank size: determined through simulation studies. • Algorithm: content specification and “enemy items”. • Two editions of the computerized adaptive version of the IPT, each configured to contain 50 items, were administered at the same institution within a six-month interval (N = 1149 and N = 1217). • Individual precision estimates were calculated from Rasch model individual standard error estimates, raised (inflated) for misfit. • The correlation between the scores obtained in the two administrations was used as the test-retest reliability estimate. • A paired t-test and Cohen's d were used to describe the score difference for all students who took both tests (N = 882).
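The slides name content specification and “enemy items” as algorithm constraints without giving details. One way such constraints could enter item selection is sketched below, assuming a hypothetical blueprint of target proportions per content domain and a hypothetical `enemies` set per item (items that must not appear in the same test, for example because one cues the answer to the other). The order of filtering (eligibility, then most under-represented domain, then difficulty closest to the current estimate) is our assumption, not the documented IPT procedure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    item_id: str
    difficulty: float
    domain: str
    # Hypothetical: IDs of items that must not appear in the same test.
    enemies: frozenset = frozenset()


def select_next_item(bank, administered, theta, blueprint):
    """Return the next item, or None if no eligible item is left.

    blueprint: hypothetical mapping domain -> target share of the test (sums to 1).
    """
    given_ids = {it.item_id for it in administered}
    blocked = set().union(*(it.enemies for it in administered))
    eligible = [
        it for it in bank
        if it.item_id not in given_ids
        and it.item_id not in blocked      # enemy of an administered item
        and not (it.enemies & given_ids)   # lists an administered item as enemy
    ]
    if not eligible:
        return None

    n_after = len(administered) + 1
    counts = {}
    for it in administered:
        counts[it.domain] = counts.get(it.domain, 0) + 1

    def shortfall(domain):
        # How far this domain would lag behind its target after one more item.
        return blueprint.get(domain, 0.0) * n_after - counts.get(domain, 0)

    # Content specification: serve the domain with the largest shortfall
    # (ties broken alphabetically so the choice is deterministic).
    domains = sorted({it.domain for it in eligible})
    target = max(domains, key=shortfall)
    candidates = [it for it in eligible if it.domain == target]
    # Within that domain, take the most informative item under the Rasch model.
    return min(candidates, key=lambda it: abs(it.difficulty - theta))
```

Applying the content rule before the difficulty rule trades a little measurement efficiency for blueprint coverage; a production system would also need exposure control, which the slides list as future work.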

Students doing the EBMA IPT at ITESM

RESULTS

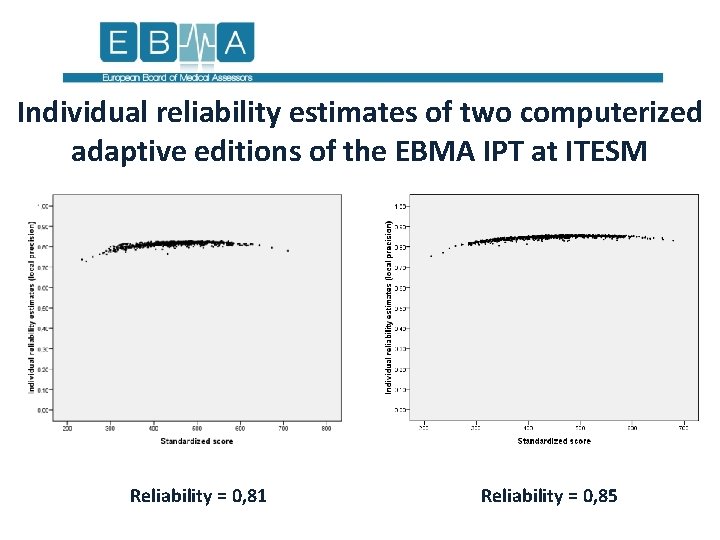

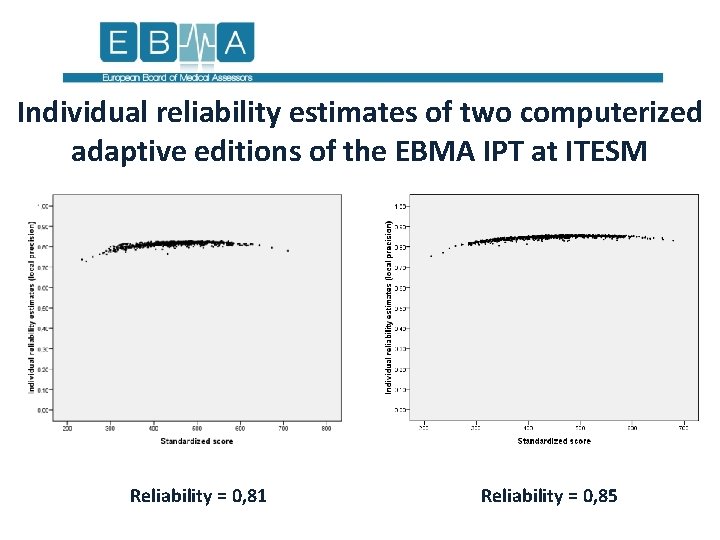

Individual reliability estimates of two computerized adaptive editions of the EBMA IPT at ITESM: Reliability = 0.81; Reliability = 0.85
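Individual precision in a Rasch-scored test can be sketched as follows. The conventions here are assumptions: the model SE is taken as 1 / sqrt(test information), “raised for misfit” is read as multiplying it by sqrt(max(1, infit mean-square)), and reliability is computed as 1 minus error variance over the observed (population) variance of the ability estimates, either averaged over students (overall) or per student (individual). The function names are hypothetical.

```python
import math
import statistics


def person_fit(theta, difficulties, responses):
    """Model SE and infit mean-square for one person under the Rasch model."""
    info = 0.0
    sq_resid = 0.0
    for b, x in zip(difficulties, responses):
        p = 1.0 / (1.0 + math.exp(-(theta - b)))
        info += p * (1.0 - p)        # item information, summed into test information
        sq_resid += (x - p) ** 2     # squared residual for the infit numerator
    model_se = 1.0 / math.sqrt(info)
    infit = sq_resid / info          # information-weighted mean-square
    return model_se, infit


def real_se(model_se, infit):
    """Inflate the model SE when the person misfits (infit > 1); never deflate it."""
    return model_se * math.sqrt(max(1.0, infit))


def reliabilities(thetas, ses):
    """Overall reliability and per-person reliabilities from estimates and SEs."""
    obs_var = statistics.pvariance(thetas)
    overall = 1.0 - statistics.fmean(se * se for se in ses) / obs_var
    individual = [1.0 - se * se / obs_var for se in ses]
    return overall, individual
```

Under these definitions the overall value is the mean of the individual values, so a histogram of individual reliabilities summarises how evenly precision is spread across students.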

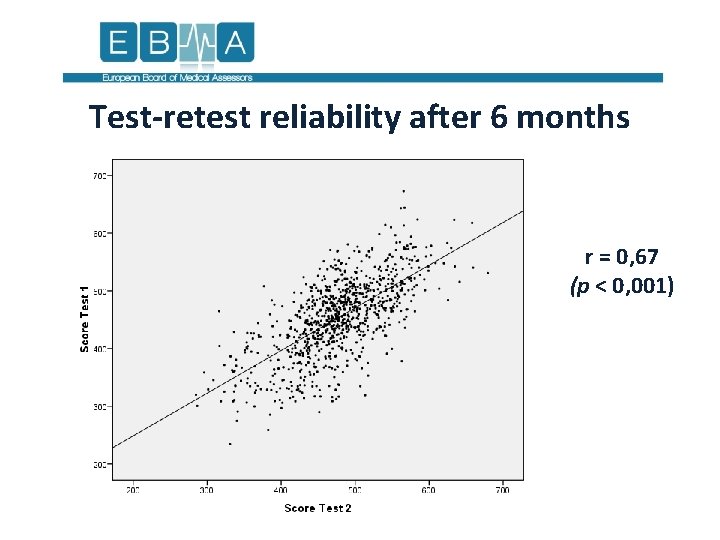

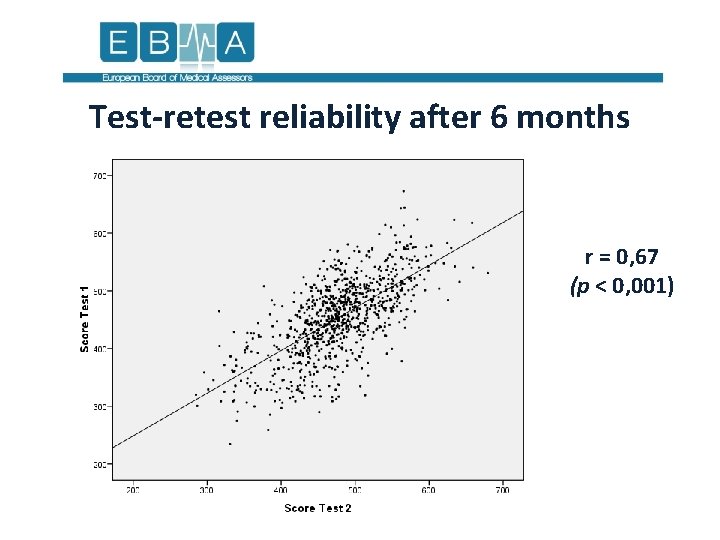

Test-retest reliability after 6 months: r = 0.67 (p < 0.001)

Developmental validity • ANOVAs on the cross-sectional data from each test separately showed a significant increase in full test scores and all subscores across the curriculum on both occasions (p < 0.001). • Post-hoc tests showed no significant increase after the fourth academic year. • The Jonckheere-Terpstra test, a non-parametric alternative for ordered groups, also showed significant differences for the full test scores and all subscores. • The score increase for students who took both editions of the test was significant on the paired t-test (t = 13.505; df = 881; p < 0.001), with a moderate effect size (Cohen's d = 0.37).
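The statistics on this slide can be sketched with the Python standard library. The paired t is the mean gain divided by its standard error. Cohen's d has several conventions for paired data and the slides do not say which was used, so the sketch shows two: d_av (gain over the average SD of the two occasions) and d_z (gain over the SD of the gains, equal to t / sqrt(n)). The Jonckheere-Terpstra function uses the large-sample normal approximation without a tie correction, a simplification. All function names are hypothetical.

```python
import math
import statistics


def paired_t(first, second):
    """Paired t statistic and df for gains (second - first) of the same students."""
    diffs = [b - a for a, b in zip(first, second)]
    n = len(diffs)
    t = statistics.fmean(diffs) / (statistics.stdev(diffs) / math.sqrt(n))
    return t, n - 1


def cohens_d_av(first, second):
    """Mean gain standardised by the average of the two occasions' SDs."""
    gain = statistics.fmean(second) - statistics.fmean(first)
    return gain / ((statistics.stdev(first) + statistics.stdev(second)) / 2)


def cohens_d_z(t, n):
    """Mean gain standardised by the SD of the gains, recovered from a paired t."""
    return t / math.sqrt(n)


def jonckheere_terpstra(groups):
    """One-sided test for an increasing trend across ordered groups.

    groups: lists of scores ordered by curriculum year. Ties count 1/2 in J;
    the variance below has no tie correction.
    """
    j = 0.0
    for i in range(len(groups)):
        for k in range(i + 1, len(groups)):
            for x in groups[i]:
                for y in groups[k]:
                    j += 1.0 if y > x else 0.5 if y == x else 0.0
    sizes = [len(g) for g in groups]
    n = sum(sizes)
    mean = (n * n - sum(s * s for s in sizes)) / 4
    var = (n * n * (2 * n + 3) - sum(s * s * (2 * s + 3) for s in sizes)) / 72
    z = (j - mean) / math.sqrt(var)
    p_one_sided = 0.5 * math.erfc(z / math.sqrt(2))  # upper-tail normal p-value
    return j, z, p_one_sided
```

The choice of standardiser matters: applying d_z to the reported values gives 13.505 / sqrt(882) ≈ 0.45, whereas the slides report d = 0.37, so the reported figure presumably uses a different standardiser, such as the SD of scores on one or both occasions.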

Conclusions The computerized adaptive version of the International Progress Test administered at ITESM showed: • adequate estimates of individual precision; • a reasonable degree of test-retest reliability (especially considering the short test length and the long retest interval); • a significant increase in scores after 6 months, with a moderate effect size, which is evidence of developmental validity.

Future perspectives • Increase in the number of items to improve reliability and content validity (50 → 100 items). • Repeated measures ANOVA for developmental validity. • Continuous content-wise maintenance of the item bank. • Control for item under- and overexposure. • Control for item parameter drift. • Strengthening the local customization of the test, with more items written by ITESM staff, to reflect local epidemiology, local health care laws and local codes of ethics. • International benchmarking. • Perceptions of test-takers, interpretation of scores.

Take-home message Computerized adaptive progress testing can be a solution with acceptable degrees of validity and reliability, making it especially attractive to schools that cannot administer a test to all students simultaneously.

Thank you! http://www.ebma.eu.com info@ebma.eu.com www.twitter.com/EBMAorg