Information Representation (Level ISA 3) Floating point numbers


Floating point storage • Two’s complement notation can’t be used to represent floating point numbers because there is no provision for the radix point • A form of scientific notation is used

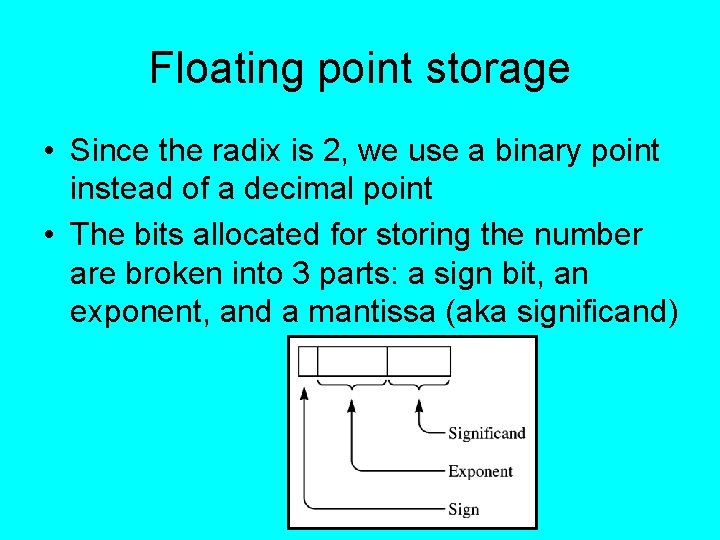

Floating point storage • Since the radix is 2, we use a binary point instead of a decimal point • The bits allocated for storing the number are broken into 3 parts: a sign bit, an exponent, and a mantissa (aka significand)

Floating point storage • The number of bits used for the exponent and significand depends on what we want to optimize for: – For greater range of magnitude, we would allocate more bits to the exponent – For greater precision, we would allocate more bits to the significand • For the next several slides, we’ll use a 14-bit model with a sign bit, a 5-bit exponent, and an 8-bit significand

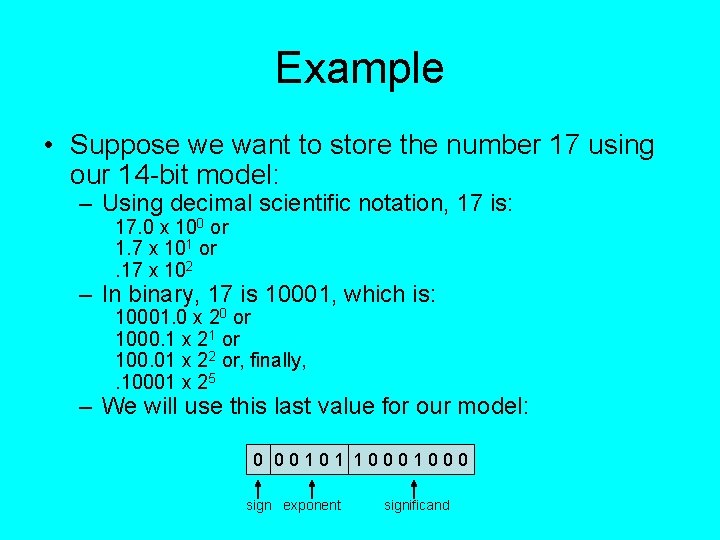

Example • Suppose we want to store the number 17 using our 14-bit model: – Using decimal scientific notation, 17 is: 17.0 × 10^0 or 1.7 × 10^1 or 0.17 × 10^2 – In binary, 17 is 10001, which is: 10001.0 × 2^0 or 1000.1 × 2^1 or 100.01 × 2^2 or, finally, 0.10001 × 2^5 – We will use this last value for our model, padding the significand with zeros to fill its 8 bits: 0 00101 10001000 (sign, exponent, significand)

Negative exponents • With the existing model, we can only represent numbers with non-negative exponents • Two possible solutions: – Reserve a sign bit for the exponent (with a resulting loss in range) – Use a biased exponent; explanation on next slide

Bias values • With bias values, every integer in a given range is converted into a non-negative integer by adding an offset (bias) to every value to be stored • The bias value should be at or near the middle of the range of numbers – Since we are using 5 bits for the exponent, the range of stored values is 0..31 – If we choose 16 as our bias value, every stored value less than 16 represents a negative exponent, every stored value greater than 16 represents a positive exponent, and 16 itself represents an exponent of 0 – This is called excess-16 representation, since we have to subtract 16 from the stored value to get the actual value – Note: exponents of all 0s or all 1s are usually reserved for special numbers (such as 0 or infinity)

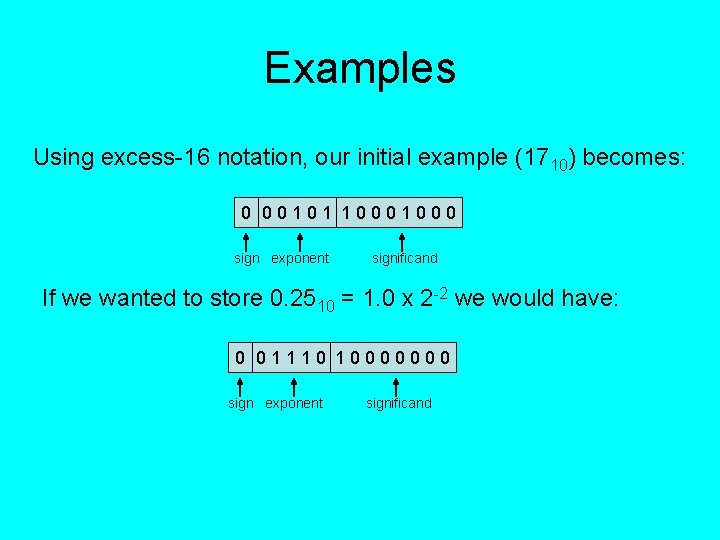

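Examples • Using excess-16 notation, our initial example (17₁₀ = 0.10001₂ × 2^5) has an exponent field of 5 + 16 = 21 = 10101: 0 10101 10001000 (sign, exponent, significand) • If we wanted to store 0.25₁₀ = 0.01₂ = 0.1₂ × 2^-1, the exponent field would be 16 – 1 = 15 = 01111: 0 01111 10000000 (sign, exponent, significand)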
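The encoding steps for this model can be sketched in a few lines of Python. This is a minimal illustration, not part of the original slides: the function name `encode14` is hypothetical, the significand is read as 0.xxxxxxxx with its leading 1 stored explicitly, and no check is made that the exponent fits in 5 bits.

```python
import math

BIAS = 16        # excess-16, as on the slides
EXP_BITS = 5     # 5-bit exponent field
SIG_BITS = 8     # 8-bit significand, read as 0.xxxxxxxx

def encode14(value):
    """Return (sign, exponent field, significand) as bit strings.

    Hypothetical helper: assumes the exponent is in range and
    truncates any significand bits beyond the eighth.
    """
    sign = "1" if value < 0 else "0"
    if value == 0:
        return sign, "0" * EXP_BITS, "0" * SIG_BITS
    # frexp gives abs(value) == m * 2**e with 0.5 <= m < 1,
    # which is exactly the 0.1xxxxxxx form used by the model.
    m, e = math.frexp(abs(value))
    significand = int(m * (1 << SIG_BITS))       # keep 8 fraction bits
    return sign, format(e + BIAS, "05b"), format(significand, "08b")

print(encode14(17))     # ('0', '10101', '10001000')
print(encode14(0.25))   # ('0', '01111', '10000000')
```

For 17 the unbiased exponent is 5, so the stored field is 5 + 16 = 21; for 0.25 it is -1, so the stored field is 15.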

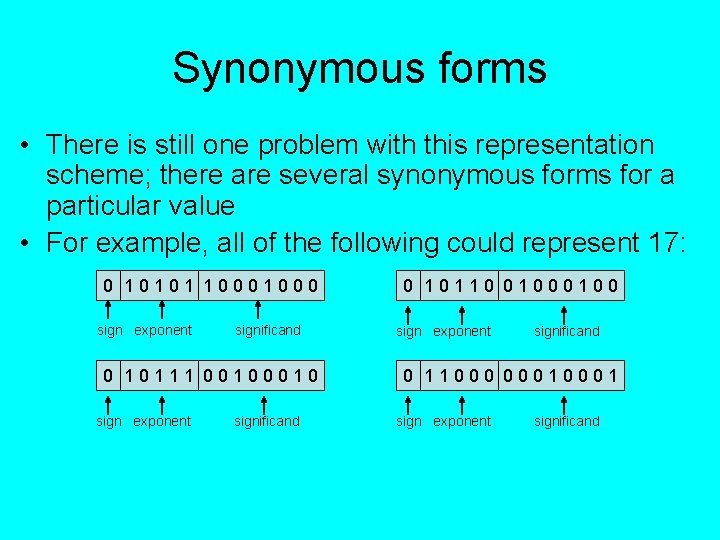

Synonymous forms • There is still one problem with this representation scheme: there are several synonymous forms for a particular value • For example, all of the following could represent 17 (each shown as sign, exponent, significand): – 0 10101 10001000 (0.10001 × 2^5) – 0 10111 00100010 (0.0010001 × 2^7) – 0 10110 01000100 (0.010001 × 2^6) – 0 11000 00010001 (0.00010001 × 2^8)
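To see that these bit patterns really are synonyms, one can decode each of them. The sketch below assumes the patterns are written with their full 8-bit significands (10001000, 00100010, 01000100, 00010001) and that the significand is read as 0.xxxxxxxx; `decode14` is a hypothetical helper name.

```python
def decode14(sign, exp_field, significand, bias=16):
    """Decode one pattern of the 14-bit model (no hidden bit)."""
    fraction = int(significand, 2) / (1 << len(significand))   # 0.xxxxxxxx
    value = fraction * 2.0 ** (int(exp_field, 2) - bias)
    return -value if sign == "1" else value

forms = [
    ("0", "10101", "10001000"),
    ("0", "10111", "00100010"),
    ("0", "10110", "01000100"),
    ("0", "11000", "00010001"),
]
values = [decode14(*form) for form in forms]
print(values)   # [17.0, 17.0, 17.0, 17.0]
```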

Normalization • In order to avoid the potential chaos of synonymous forms, a convention has been established that the leftmost bit of the significand will always be 1 • An additional advantage of this convention is that, since we always know the 1 is supposed to be present, we never need to actually store it; thus we get an extra bit of precision for the significand; your text refers to this as the hidden bit

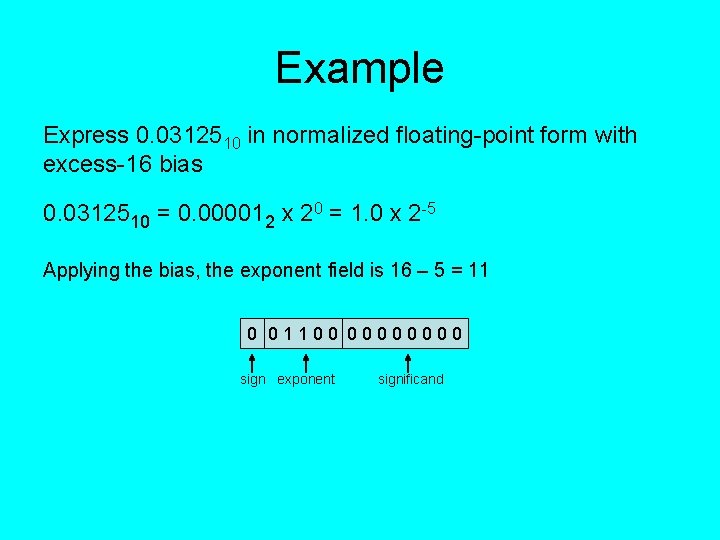

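Example • Express 0.03125₁₀ in normalized floating-point form with excess-16 bias • 0.03125₁₀ = 0.00001₂ × 2^0 = 1.0₂ × 2^-5 • Applying the bias, the exponent field is 16 – 5 = 11 = 01011 • The leading 1 is the hidden bit and is not stored, so the significand field holds only the fraction bits, which are all 0: 0 01011 00000000 (sign, exponent, significand)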
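A sketch of the same normalization with a hidden bit, again only as an illustration: `encode14_hidden` is a hypothetical name, the value is normalized to 1.xxxxxxxx × 2^e, and only the eight bits after the binary point are stored. Zero is rejected because it has no leading 1 to hide.

```python
import math

def encode14_hidden(value, bias=16, sig_bits=8):
    """Encode value as (sign, exponent field, stored fraction bits)."""
    if value == 0:
        raise ValueError("zero cannot be normalized")
    sign = "1" if value < 0 else "0"
    m, e = math.frexp(abs(value))      # abs(value) == m * 2**e, 0.5 <= m < 1
    m, e = m * 2, e - 1                # rescale so that 1 <= m < 2
    fraction = int((m - 1) * (1 << sig_bits))   # drop the hidden 1, truncate
    return sign, format(e + bias, "05b"), format(fraction, "08b")

print(encode14_hidden(0.03125))   # ('0', '01011', '00000000')
print(encode14_hidden(17))        # ('0', '10100', '00010000')
```

With the hidden bit, 17 is 1.0001 × 2^4, so its exponent field is 4 + 16 = 20 rather than 21.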

Floating-point arithmetic • If we wanted to add decimal numbers expressed in scientific notation, we would change one of the numbers so that both of them are expressed in the same power of the base • Example: 1.5 × 10^2 + 3.5 × 10^3 = 0.15 × 10^3 + 3.5 × 10^3 = 3.65 × 10^3

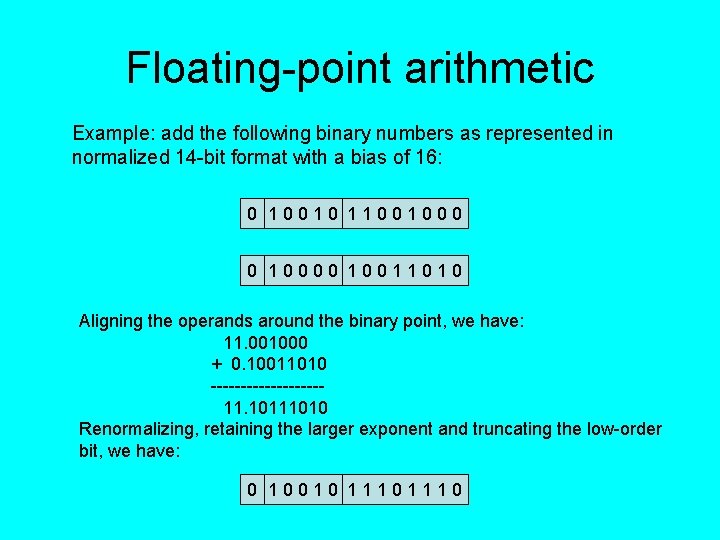

Floating-point arithmetic • Example: add the following binary numbers as represented in normalized 14-bit format with a bias of 16 (here the leading 1 of each significand is stored explicitly): 0 10010 11001000 (0.11001000 × 2^2) and 0 10000 10011010 (0.10011010 × 2^0) • Aligning the operands around the binary point, we have: 11.00100000 + 0.10011010 = 11.10111010 • Renormalizing gives 0.1110111010 × 2^2; retaining the larger exponent and truncating the two low-order bits that no longer fit in the significand, we have: 0 10010 11101110
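The align, add, renormalize, truncate sequence can be sketched with integer arithmetic. This is an assumption-laden illustration rather than a general adder: `add14` is a hypothetical name, both operands are taken to be positive, and significands are read as 0.xxxxxxxx with no hidden bit.

```python
def add14(a, b, bias=16, sig_bits=8):
    """Add two positive operands given as (exponent field, significand)."""
    exp_a, sig_a = int(a[0], 2) - bias, int(a[1], 2)
    exp_b, sig_b = int(b[0], 2) - bias, int(b[1], 2)
    if exp_a < exp_b:                       # make a the larger-exponent operand
        exp_a, sig_a, exp_b, sig_b = exp_b, sig_b, exp_a, sig_a
    shift = exp_a - exp_b
    # Align: widen a's significand instead of shifting b right,
    # so no bits are lost before the addition.
    total = (sig_a << shift) + sig_b
    width = sig_bits + shift                # total represents total / 2**width
    exponent = exp_a
    while total >> width:                   # sum reached 1.0: renormalize
        width += 1
        exponent += 1
    significand = total >> (width - sig_bits)   # truncate low-order bits
    return format(exponent + bias, "05b"), format(significand, "08b")

print(add14(("10010", "11001000"), ("10000", "10011010")))
# ('10010', '11101110')
```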

Floating-point errors • Any two real numbers have an infinite number of values between them • Computers have a finite amount of memory in which to represent real numbers • Modeling an infinite system using a finite system means the model is only an approximation – The more bits used, the more accurate the approximation – Always some element of error, no matter how many bits used

Floating-point errors • Errors can be blatant or subtle (or unnoticed) – Blatant errors: overflow or underflow; blatant because they cause crashes – Subtle errors: can lead to wildly erroneous results, but are often hard to detect (may go unnoticed until they cause real problems)

Example • In our simple model, we can express normalized numbers in the range -. 11112 x 215 …. 1111 x 215 – Certain limitations are clear; we know we can’t store 2 -19 or 2128 – Not so obvious that we can’t accurately store 256. 5: • Binary equivalent is 10000000. 1, which is 10 bytes wide • The low-order bit would be dropped (or rounded into next bit) • Either way, we have introduced an error

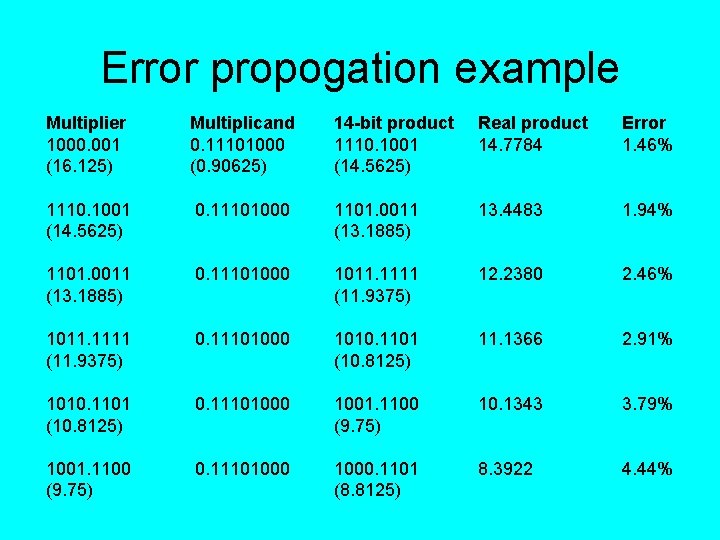

Error propogation • The relative error in the previous example can be found by taking the ratio of the absolute value of the error to the true value of the number: 256. 5 – 256 --------256 =. 003906 (about. 39%) • In a lengthy calculation, such errors can propogate, causing substantial loss of precision • The next slide illustrates such a situation; it shows the first five iterations of a floating-point product loop. Eventually the error will be 100%, since the product will go to 0.
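As a small check of the relative-error calculation, the sketch below truncates 256.5 to 8 significant bits and compares the result with the true value. The helper `store_8bit` is hypothetical and models truncation only (no rounding), for positive inputs.

```python
import math

def store_8bit(x):
    """Keep only the 8 most significant bits of a positive x (truncation)."""
    m, e = math.frexp(x)                          # x == m * 2**e, 0.5 <= m < 1
    return math.ldexp(math.floor(m * 256), e - 8)

true_value = 256.5
stored = store_8bit(true_value)                   # low-order bits are lost
rel_error = abs(true_value - stored) / true_value

print(stored, f"{rel_error:.6f}")                 # 256.0 0.001949
```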

Error propagation example

| Multiplier | Multiplicand | 14-bit product | Real product | Error |
| --- | --- | --- | --- | --- |
| 10000.001 (16.125) | 0.11101000 (0.90625) | 1110.1001 (14.5625) | 14.7784 | 1.46% |
| 1110.1001 (14.5625) | 0.11101000 | 1101.0011 (13.1875) | 13.4483 | 1.94% |
| 1101.0011 (13.1875) | 0.11101000 | 1011.1111 (11.9375) | 12.2380 | 2.46% |
| 1011.1111 (11.9375) | 0.11101000 | 1010.1101 (10.8125) | 11.1366 | 2.91% |
| 1010.1101 (10.8125) | 0.11101000 | 1001.1100 (9.75) | 10.1343 | 3.79% |
| 1001.1100 (9.75) | 0.11101000 | 1000.1101 (8.8125) | 8.3922 | 4.44% |
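The product loop can be simulated by truncating every intermediate result to 8 significant bits. This is a sketch under stated assumptions: `truncate_to_bits` is a hypothetical helper, truncation (not rounding) is used, and the "exact" value is the double-precision product of the binary operands 16.125 and 0.90625. The error figures it prints are therefore relative to that exact product and are not expected to match the last two columns of the table.

```python
import math

def truncate_to_bits(x, bits=8):
    """Keep only the `bits` most significant bits of a positive x."""
    m, e = math.frexp(x)                     # x == m * 2**e, 0.5 <= m < 1
    return math.ldexp(math.floor(m * 2**bits), e - bits)

multiplicand = 0.90625     # 0.11101000 in binary
approx = exact = 16.125    # 10000.001 in binary
products = []
for step in range(1, 7):
    approx = truncate_to_bits(approx * multiplicand)   # 8-bit significand
    exact *= multiplicand                              # full double precision
    products.append(approx)
    error = (exact - approx) / exact
    print(step, approx, f"{exact:.4f}", f"{error:.2%}")
```

Each truncation can only discard value, so the truncated product drifts steadily below the exact one as the loop runs.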

Special values • Zero is an example of a special floating-point value – Can’t be normalized; no 1 in its binary version – Usually represented as all 0s in both exponent and significand regions – It is common to have both positive and negative versions of 0 in floating-point representation

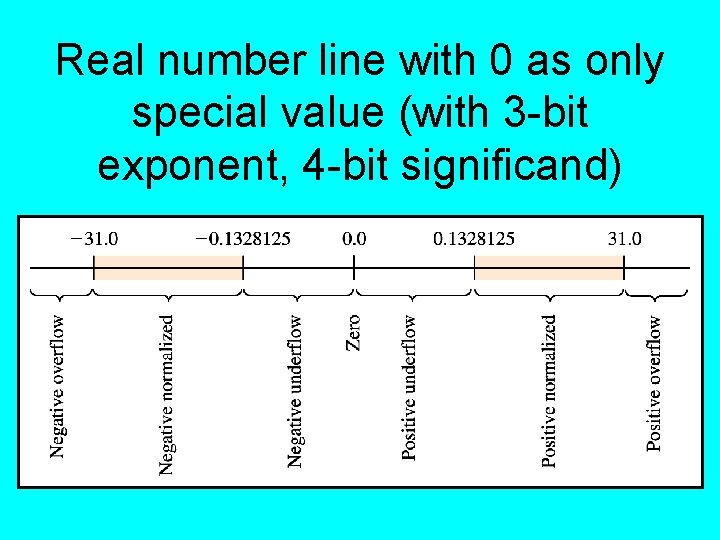

Real number line with 0 as only special value (with 3-bit exponent, 4-bit significand)

More special values • Infinity: bit pattern used when a result falls in the overflow region – Uses exponent field of all 1s, significand of all 0s • Not a number (NaN): used to indicate illegal floating-point operations – Uses exponent of all 1s, any nonzero significand • Use of these bit patterns for special values restricts the range of numbers that can be represented
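These bit patterns can be inspected directly. The sketch below uses IEEE 754 single precision as the concrete format, which is an assumption made for illustration since the slide describes the convention in general terms; `float32_fields` is a hypothetical helper built on Python's standard `struct` module.

```python
import math
import struct

def float32_fields(x):
    """Split x, stored as IEEE 754 single precision, into its three fields."""
    bits = struct.unpack(">I", struct.pack(">f", x))[0]
    sign = bits >> 31
    exponent = (bits >> 23) & 0xFF     # 8-bit exponent field
    fraction = bits & 0x7FFFFF         # 23-bit significand field
    return sign, exponent, fraction

print(float32_fields(math.inf))     # (0, 255, 0)
print(float32_fields(-math.inf))    # (1, 255, 0)
print(float32_fields(-0.0))         # (1, 0, 0)

_, nan_exp, nan_frac = float32_fields(math.nan)
print(nan_exp == 255 and nan_frac != 0)   # True
```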

Denormalized numbers • There is no special value for the underflow region analogous to infinity in the overflow region • Denormalized numbers exist in the underflow regions, shortening the gap between the smallest normalized values and 0

Denormalized numbers • When the exponent field of a number is all 0s and the significand contains at least a single 1, special rules apply to the representation: – The hidden bit is assumed to be 0 instead of 1 – The exponent is stored in excess-(n–1), where excess-n is used for normalized numbers
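The two rules can be sketched as a decoder. IEEE 754 single precision is assumed here as the concrete format (excess-127 for normalized numbers, so excess-126 for denormalized ones); `decode_float32_bits` is a hypothetical helper, and infinities and NaNs are deliberately left out.

```python
import struct

def decode_float32_bits(bits):
    """Decode a 32-bit pattern using the normalized/denormalized rules."""
    sign = -1.0 if bits >> 31 else 1.0
    exp_field = (bits >> 23) & 0xFF
    fraction = (bits & 0x7FFFFF) / 2**23
    if exp_field == 0xFF:
        raise ValueError("infinity and NaN are not handled in this sketch")
    if exp_field == 0:
        # Denormalized: hidden bit is 0, stored exponent 0 read in excess-126.
        return sign * fraction * 2.0 ** (0 - 126)
    # Normalized: hidden bit is 1, exponent stored in excess-127.
    return sign * (1 + fraction) * 2.0 ** (exp_field - 127)

smallest_denormal = decode_float32_bits(0x00000001)
smallest_normal = decode_float32_bits(0x00800000)

# Cross-check against the machine's own interpretation of the same bits.
hardware = struct.unpack(">f", struct.pack(">I", 0x00000001))[0]
print(smallest_denormal == hardware)   # True
```

The smallest denormalized value is 2^-149, well below the smallest normalized value of 2^-126, which is how denormalized numbers shorten the gap around 0.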

Floating point storage and C • In the C programming language, the standard output function, printf(), prints floating-point numbers with a default precision of 6 (7 digits, including the whole part, using either fixed or scientific notation) • We can reason backwards from this fun fact to discover C’s default size for floating point numbers
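The default precision is easy to observe. Python's `%` formatting follows the same conversion rules as C's printf() for `%f` and `%e`, so it stands in for C in this sketch; the value 3.14159265 is only an illustration.

```python
value = 3.14159265

fixed = "%f" % value         # default precision: 6 digits after the point
scientific = "%e" % value    # same default precision, scientific notation

print(fixed)        # 3.141593
print(scientific)   # 3.141593e+00
```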

Floating point values and C • To represent a number with d decimal digits, we need about log2(10) × d bits: – Let L₁₀ = log10(n); then 10^L₁₀ = n – So log2(10^L₁₀) = log2(n), and L₁₀ × log2(10) = log2(n) – which means log2(n) = log10(n) × log2(10) – The last factor above, log2(10), is a constant, about 3.322 • So, the number of bits needed to represent a decimal number with 7 significant digits is 3.322 × 7, or 23.254; 24 bits, in other words
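The arithmetic above can be checked in a couple of lines; `bits_for_digits` is a hypothetical helper name.

```python
import math

def bits_for_digits(d):
    """Bits needed to carry d decimal digits: d * log2(10)."""
    return d * math.log2(10)

needed = bits_for_digits(7)
print(f"{math.log2(10):.3f}")   # 3.322
print(math.ceil(needed))        # 24
```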

The IEEE 754 floating point standard • Specifies uniform standard for single- and double-precision floating-point numbers • Single-precision: 32 bits – 1 sign bit – 23-bit significand – 8-bit exponent using excess-127 bias • Double-precision: 64 bits – 1 sign bit – 52-bit significand – 11-bit exponent with excess-1023 bias
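Both layouts can be explored with one table-driven sketch. The names `FORMATS`, `fields`, and `rebuild` are hypothetical; the code relies only on Python's standard `struct` module, and `rebuild` covers normalized numbers only.

```python
import struct

# name: (float pack code, integer pack code, exponent bits, significand bits, bias)
FORMATS = {
    "single": (">f", ">I", 8, 23, 127),
    "double": (">d", ">Q", 11, 52, 1023),
}

def fields(x, fmt):
    """Return (sign, exponent field, significand field) of x in the given format."""
    pack_f, pack_i, exp_bits, frac_bits, _bias = FORMATS[fmt]
    bits = struct.unpack(pack_i, struct.pack(pack_f, x))[0]
    sign = bits >> (exp_bits + frac_bits)
    exponent = (bits >> frac_bits) & ((1 << exp_bits) - 1)
    fraction = bits & ((1 << frac_bits) - 1)
    return sign, exponent, fraction

def rebuild(sign, exponent, fraction, fmt):
    """Recompute a normalized value: (-1)^sign * 1.fraction * 2^(exponent - bias)."""
    *_, frac_bits, bias = FORMATS[fmt]
    return (-1) ** sign * (1 + fraction / 2**frac_bits) * 2.0 ** (exponent - bias)

print(fields(17.0, "single"))   # (0, 131, 524288)
print(fields(17.0, "double"))   # (0, 1027, 281474976710656)
print(rebuild(*fields(17.0, "single"), "single"))   # 17.0
```

In both formats 17 is 1.0001 × 2^4, so the exponent fields are 4 + 127 = 131 and 4 + 1023 = 1027.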

Binary-coded decimal representation • Numeric coding system used mainly in IBM mainframe & midrange systems • BCD encodes each digit of a decimal number in a 4-bit binary form • In an 8-bit byte, only the lower nibble represents the digit’s value; the upper nibble (called the zone) is used to hold the sign, which can be positive (1100), negative (1101), or unsigned (1111)
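A minimal sketch of this byte layout follows. The slide does not say which byte carries the sign, so the code assumes the IBM zoned-decimal convention in which only the last digit's zone holds the sign and every other zone is 1111; `to_zoned` is a hypothetical helper name.

```python
def to_zoned(n):
    """Encode an integer as zoned decimal, one digit per byte."""
    sign_zone = 0xC if n >= 0 else 0xD          # 1100 positive, 1101 negative
    digits = [int(ch) for ch in str(abs(n))]
    # Assumption: every zone is 1111 except the last, which carries the sign.
    zones = [0xF] * (len(digits) - 1) + [sign_zone]
    return bytes((zone << 4) | digit for zone, digit in zip(zones, digits))

print(to_zoned(-123).hex())   # f1f2d3
print(to_zoned(45).hex())     # f4c5
```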

BCD • Commonly used in banking and other financial settings where: – Most data is monetary – High level of I/O activity • BCD is easier to convert to decimal for printed reports • Circuitry for arithmetic operations usually slower than for unsigned binary
