Hubs and Authorities & Learning: Perceptrons Artificial Intelligence CMSC 25000 February 1, 2005

Roadmap • Problem: – Matching Topics and Documents • Methods: – Classic: Vector Space Model • Challenge I: Beyond literal matching – Expansion Strategies • Challenge II: Authoritative source – Hubs & Authorities – PageRank

Authoritative Sources • Based on vector space alone, what would you expect to get searching for “search engine”? – Would you expect to get Google?

Issue • Text isn’t always the best indicator of content • Example: “search engine” – Text search -> reviews of search engines – Term doesn’t appear on search engine pages – Term probably appears on many pages that point to many search engines

Hubs & Authorities • Not all sites are created equal – Finding “better” sites • Question: What defines a good site? – Authoritative – Not just content, but connections! • One that many other sites think is good • Site that is pointed to by many other sites – Authority

Conferring Authority • Authorities rarely link to each other – Competition • Hubs: – Relevant sites point to prominent sites on topic • Often not prominent themselves • Professional or amateur • Good Hubs <-> Good Authorities (each reinforces the other)

Computing HITS • Finding Hubs and Authorities • Two steps: – Sampling: • Find potential authorities – Weight-propagation: • Iteratively estimate best hubs and authorities

Sampling • Identify potential hubs and authorities – Connected subsections of web • Select root set with standard text query • Construct base set: – All nodes pointed to by root set – All nodes that point to root set • Drop within-domain links – 1000-5000 pages
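The sampling step above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `build_base_set`, the dictionaries `out_links` and `in_links`, and the use of the URL host name as the "domain" are all hypothetical choices, not part of the original slides.

```python
from urllib.parse import urlparse


def build_base_set(root_set, out_links, in_links):
    """Expand a root set into a base set and keep only cross-domain links.

    root_set:  iterable of page URLs returned by a text query (assumed given)
    out_links: dict mapping a URL to the URLs it points to (assumed given)
    in_links:  dict mapping a URL to the URLs that point to it (assumed given)
    """
    base = set(root_set)
    for page in root_set:
        base.update(out_links.get(page, ()))  # nodes pointed to by the root set
        base.update(in_links.get(page, ()))   # nodes that point to the root set

    edges = set()
    for src in base:
        for dst in out_links.get(src, ()):
            # Drop within-domain links: compare host names (an assumption).
            if dst in base and urlparse(src).netloc != urlparse(dst).netloc:
                edges.add((src, dst))
    return base, edges
```

The returned edge set is what a weight-propagation step would then iterate over.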

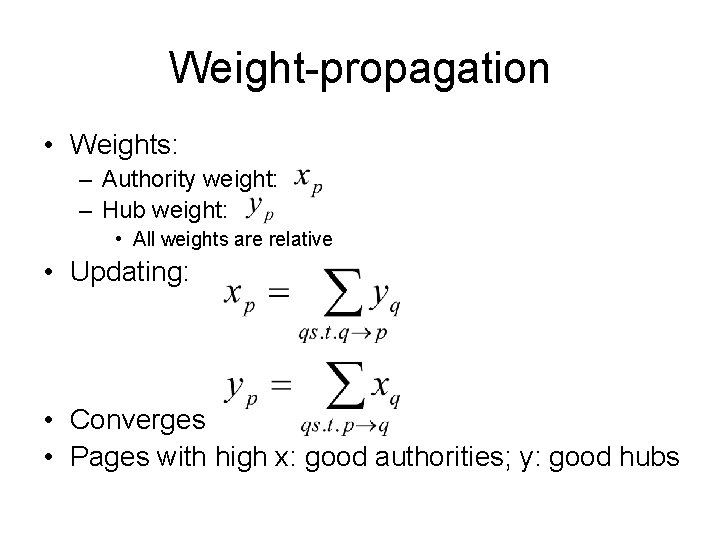

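Weight-propagation • Weights: – Authority weight: x_p – Hub weight: y_p • All weights are relative • Updating: – x_p = sum of y_q over all pages q that point to p – y_p = sum of x_q over all pages q that p points to • Converges • Pages with high x: good authorities; y: good hubs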
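A minimal sketch of weight propagation, assuming the standard HITS updates (authority weight x = sum of hub weights of pages pointing in; hub weight y = sum of authority weights of pages pointed to) with normalization after each round. The function names, the fixed iteration count, and the edge-list input format are illustrative assumptions.

```python
import math


def normalize(weights):
    """Scale weights to unit length; weights are only meaningful relative to each other."""
    norm = math.sqrt(sum(v * v for v in weights.values()))
    if norm == 0:
        return dict(weights)
    return {k: v / norm for k, v in weights.items()}


def hits(edges, iterations=50):
    """edges: iterable of (source, target) links. Returns (authority, hub) weight dicts."""
    edges = list(edges)
    nodes = {n for edge in edges for n in edge}
    auth = {n: 1.0 for n in nodes}
    hub = {n: 1.0 for n in nodes}
    for _ in range(iterations):
        # Authority update: sum hub weights of pages that point to the page.
        new_auth = {n: 0.0 for n in nodes}
        for src, dst in edges:
            new_auth[dst] += hub[src]
        # Hub update: sum (new) authority weights of pages the page points to.
        new_hub = {n: 0.0 for n in nodes}
        for src, dst in edges:
            new_hub[src] += new_auth[dst]
        auth, hub = normalize(new_auth), normalize(new_hub)
    return auth, hub
```

For example, with links h1->a, h2->a and h1->b, page a ends up with the largest authority weight and h1 with the largest hub weight.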

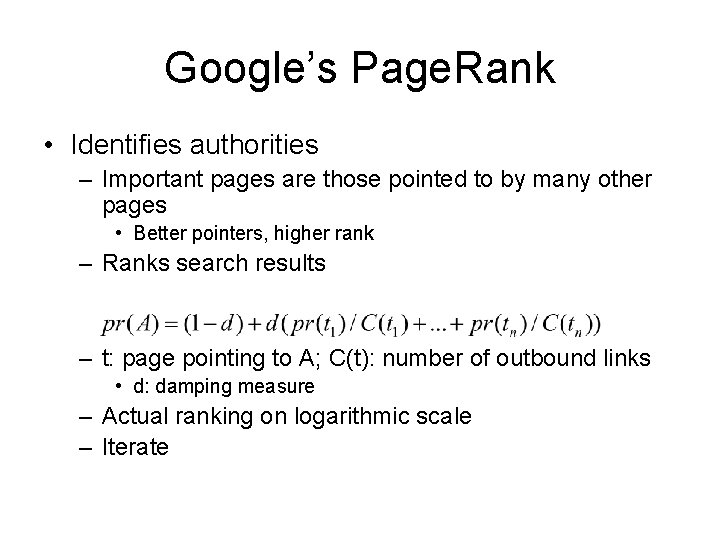

Google’s PageRank • Identifies authorities – Important pages are those pointed to by many other pages • Better pointers, higher rank – Ranks search results • PR(A) = (1-d) + d (PR(t1)/C(t1) + ... + PR(tn)/C(tn)) – t: page pointing to A; C(t): number of outbound links from t – d: damping measure – Actual ranking on logarithmic scale – Iterate
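The "iterate" step can be sketched as follows, assuming the commonly published form PR(A) = (1-d) + d * sum over pages t pointing to A of PR(t)/C(t). The value d = 0.85, the fixed iteration count, and the handling of pages without outbound links (they simply pass on nothing) are simplifying assumptions, not details from the slides.

```python
def pagerank(out_links, d=0.85, iterations=50):
    """out_links: dict mapping each page to the list of pages it links to."""
    pages = set(out_links)
    for targets in out_links.values():
        pages.update(targets)

    rank = {p: 1.0 for p in pages}
    for _ in range(iterations):
        new_rank = {p: 1.0 - d for p in pages}
        for t, targets in out_links.items():
            if not targets:
                continue  # assumption: pages with no outbound links pass on nothing
            share = d * rank[t] / len(targets)  # PR(t) / C(t), damped
            for a in targets:
                new_rank[a] += share
        rank = new_rank
    return rank
```

With links a->c, b->c and c->a, page c (pointed to by two pages) ranks above a, and b (pointed to by nobody) stays at the minimum value 1-d.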

Contrasts • Internal links – Large sites carry more weight • If well-designed – H&A ignores site-internals • Outbound links explicitly penalized • Lots of tweaks….

Web Search • Search by content – Vector space model • Word-based representation • “Aboutness” and “Surprise” • Enhancing matches • Simple learning model • Search by structure – Authorities identified by link structure of web • Hubs confer authority

Nearest Neighbor Summary

Nearest Neighbor: Issues • Prediction can be expensive if many features • Affected by classification, feature noise – One entry can change prediction • Definition of distance metric – How to combine different features • Different types, ranges of values • Sensitive to feature selection

Nearest Neighbor: Analysis • Issue: – What features should we use? • E.g. Credit rating: Many possible features – Tax bracket, debt burden, retirement savings, etc. – Nearest neighbor uses ALL – Irrelevant feature(s) could mislead • Fundamental problem with nearest neighbor

Nearest Neighbor: Advantages • Fast training: – Just record feature vector - output value set • Can model wide variety of functions – Complex decision boundaries – Weak inductive bias • Very generally applicable

Summary: Nearest Neighbor • Nearest neighbor: – Training: record input vectors + output value – Prediction: closest training instance to new data • Efficient implementations • Pros: fast training, very general, little bias • Cons: distance metric (scaling), sensitivity to noise & extraneous features
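A minimal sketch of the summary above, assuming Euclidean distance and a plain linear scan (no efficient index). The function name and the toy credit data are made up for illustration.

```python
import math


def nearest_neighbor_predict(training, query):
    """training: list of (feature_vector, output) pairs; returns the output of the closest one."""
    best_output, best_dist = None, math.inf
    for features, output in training:
        dist = math.dist(features, query)  # Euclidean distance over ALL features
        if dist < best_dist:
            best_output, best_dist = output, dist
    return best_output


# Hypothetical data: (debt burden on a 0-10 scale, income in dollars) -> rating.
training = [((1.0, 50000.0), "good"), ((9.0, 50100.0), "bad")]
prediction = nearest_neighbor_predict(training, (8.0, 50010.0))
```

Here the query's debt burden is close to the "bad" example, but the unscaled income feature dominates the distance, so the prediction is "good". This is the scaling issue listed under the cons.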

Learning: Perceptrons Artificial Intelligence CMSC 25000 February 1, 2005

Agenda • Neural Networks: – Biological analogy • Perceptrons: Single layer networks • Perceptron training • Perceptron convergence theorem • Perceptron limitations • Conclusions

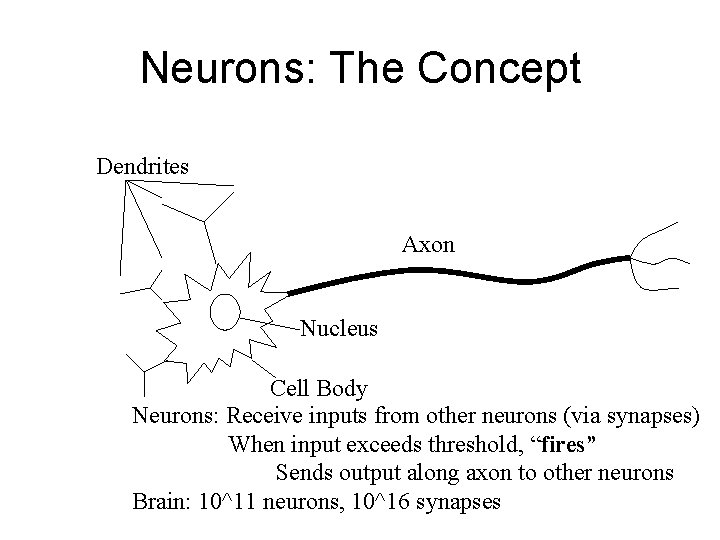

Neurons: The Concept • Figure labels: Dendrites, Axon, Nucleus, Cell Body • Neurons: – Receive inputs from other neurons (via synapses) – When input exceeds threshold, “fires” – Sends output along axon to other neurons • Brain: 10^11 neurons, 10^16 synapses

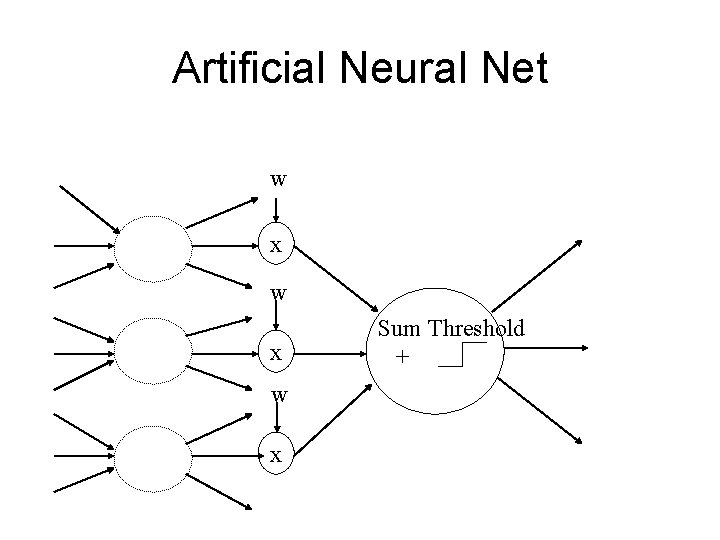

Artificial Neural Nets • Simulated Neuron: – Node connected to other nodes via links • Links = axon+synapse+dendrite • Links associated with weight (like synapse) – Multiplied by output of node – Node combines input via activation function • E.g. sum of weighted inputs passed through threshold • Simpler than real neuronal processes

Artificial Neural Net • Figure: inputs x multiplied by weights w, summed (+), then passed through a threshold

Perceptrons • Single neuron-like element – Binary inputs – Binary outputs • Weighted sum of inputs > threshold

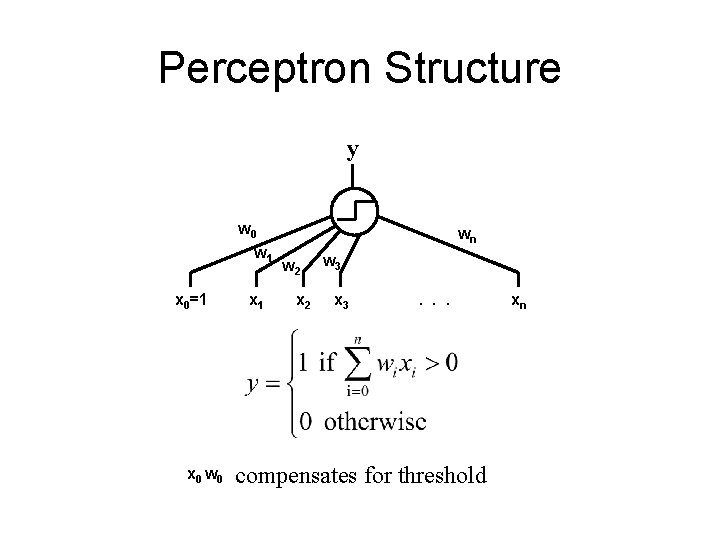

Perceptron Structure • Figure: inputs x0=1, x1, x2, x3, ..., xn with weights w0, w1, w2, w3, ..., wn feeding output y • x0=1 with weight w0 compensates for threshold
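A small sketch of why the constant input x0=1 "compensates for threshold": comparing the weighted sum against a threshold is the same as adding a weight w0 equal to minus the threshold and comparing against zero. The function names and the AND-style weights are illustrative assumptions.

```python
def fires(weights, inputs, threshold):
    """Output 1 when the weighted sum of the inputs exceeds the threshold."""
    return int(sum(w * x for w, x in zip(weights, inputs)) > threshold)


def fires_with_bias(weights_with_w0, inputs):
    """Same unit with the threshold folded into w0 on a constant input x0 = 1."""
    augmented = [1] + list(inputs)
    return int(sum(w * x for w, x in zip(weights_with_w0, augmented)) > 0)


# Example: weights (1, 1) with threshold 1.5 behave like logical AND;
# the equivalent augmented weight vector is (w0, w1, w2) = (-1.5, 1, 1).
outputs = [fires([1, 1], [a, b], 1.5) for a in (0, 1) for b in (0, 1)]
```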

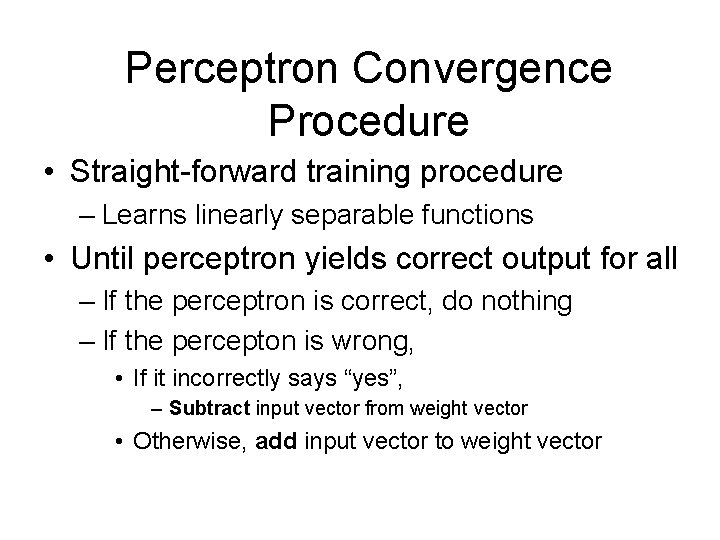

Perceptron Convergence Procedure • Straightforward training procedure – Learns linearly separable functions • Until perceptron yields correct output for all samples – If the perceptron is correct, do nothing – If the perceptron is wrong, • If it incorrectly says “yes”, – Subtract input vector from weight vector • Otherwise, add input vector to weight vector

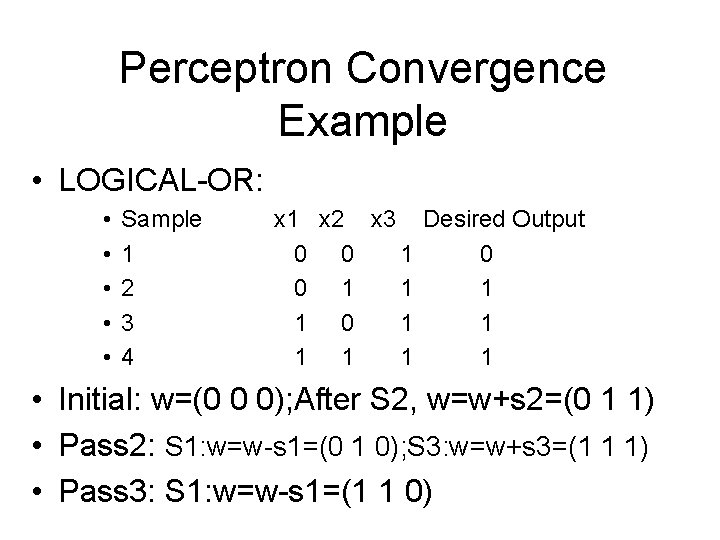

Perceptron Convergence Example • LOGICAL-OR: • Samples (x1 x2 x3 -> desired output): – S1: 0 0 1 -> 0 – S2: 0 1 1 -> 1 – S3: 1 0 1 -> 1 – S4: 1 1 1 -> 1 • Initial: w=(0 0 0); After S2, w=w+s2=(0 1 1) • Pass 2: S1: w=w-s1=(0 1 0); S3: w=w+s3=(1 1 1) • Pass 3: S1: w=w-s1=(1 1 0)
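The training procedure can be sketched in Python and run on this LOGICAL-OR example. The sketch assumes the unit outputs 1 when w.x > 0, that x3 is the constant input 1, and that the samples are (0 0 1)->0, (0 1 1)->1, (1 0 1)->1, (1 1 1)->1, which is consistent with the weight updates listed above. The function name and the `max_passes` cap are illustrative.

```python
def train_perceptron(samples, max_passes=100):
    """samples: list of (input_vector, desired_output). Returns (weights, update_history)."""
    w = [0] * len(samples[0][0])
    history = []
    for _ in range(max_passes):
        mistakes = 0
        for x, desired in samples:
            output = int(sum(wi * xi for wi, xi in zip(w, x)) > 0)
            if output == desired:
                continue  # correct: do nothing
            # Incorrect "yes": subtract the input vector; otherwise add it.
            sign = -1 if output == 1 else 1
            w = [wi + sign * xi for wi, xi in zip(w, x)]
            history.append(tuple(w))
            mistakes += 1
        if mistakes == 0:
            break  # a full pass with correct output for all samples
    return w, history


OR_SAMPLES = [((0, 0, 1), 0), ((0, 1, 1), 1), ((1, 0, 1), 1), ((1, 1, 1), 1)]
weights, history = train_perceptron(OR_SAMPLES)
# history is (0,1,1), (0,1,0), (1,1,1), (1,1,0): the same sequence as on the slide.
```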

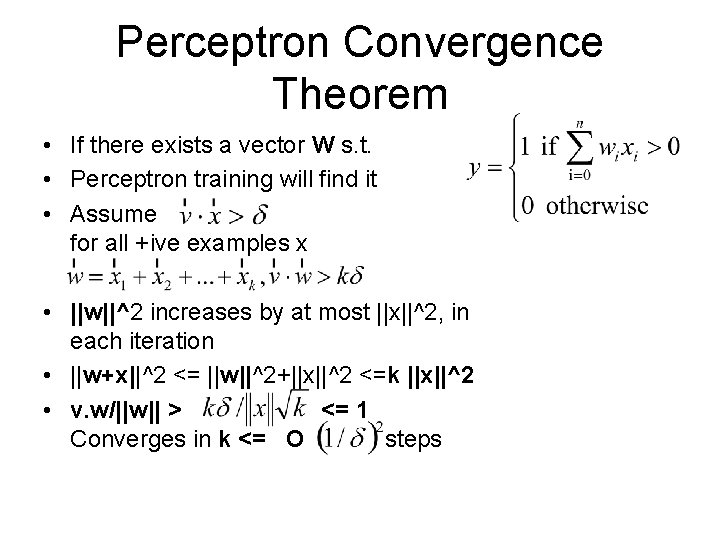

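Perceptron Convergence Theorem • If there exists a vector v s.t. v.x > 0 for all +ive examples x (negative examples negated) • Perceptron training will find it • Assume ||v|| = 1 and v.x >= δ > 0 for all +ive examples x • v.w increases by at least δ in each iteration, so after k updates v.w >= k δ • ||w||^2 increases by at most ||x||^2 in each iteration (updates happen only when w.x <= 0) • ||w+x||^2 <= ||w||^2 + ||x||^2, so after k updates ||w||^2 <= k ||x||^2 • v.w/||w|| >= k δ / (sqrt(k) ||x||) = sqrt(k) δ / ||x||, and v.w/||w|| <= 1 • Converges in k <= ||x||^2 / δ^2 = O(1/δ^2) steps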
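The bound can be checked numerically on a small case. This sketch assumes the usual statement of the theorem: a unit vector v with margin delta (v.x >= delta for every sign-normalized example), R^2 the largest squared example norm, and at most R^2 / delta^2 updates. The examples are LOGICAL-OR with the negative sample negated; the separating vector v = (2, 2, -1)/3 is a hand-picked assumption.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def count_updates(examples, max_passes=1000):
    """examples: sign-normalized vectors (negatives negated). Update whenever w.x <= 0."""
    w = [0.0] * len(examples[0])
    k = 0
    for _ in range(max_passes):
        changed = False
        for x in examples:
            if dot(w, x) <= 0:
                w = [wi + xi for wi, xi in zip(w, x)]
                k += 1
                changed = True
        if not changed:
            return k, w
    raise RuntimeError("did not converge within max_passes")


# LOGICAL-OR with constant third input; the negative sample (0, 0, 1) is negated.
examples = [(0, 0, -1), (0, 1, 1), (1, 0, 1), (1, 1, 1)]
v = (2 / 3, 2 / 3, -1 / 3)                     # unit-length separating vector (assumed)
delta = min(dot(v, x) for x in examples)       # margin
r_squared = max(dot(x, x) for x in examples)   # largest squared norm
k, w = count_updates(examples)
bound = r_squared / delta ** 2
```

The number of updates k stays below the bound, as the theorem predicts for linearly separable data.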

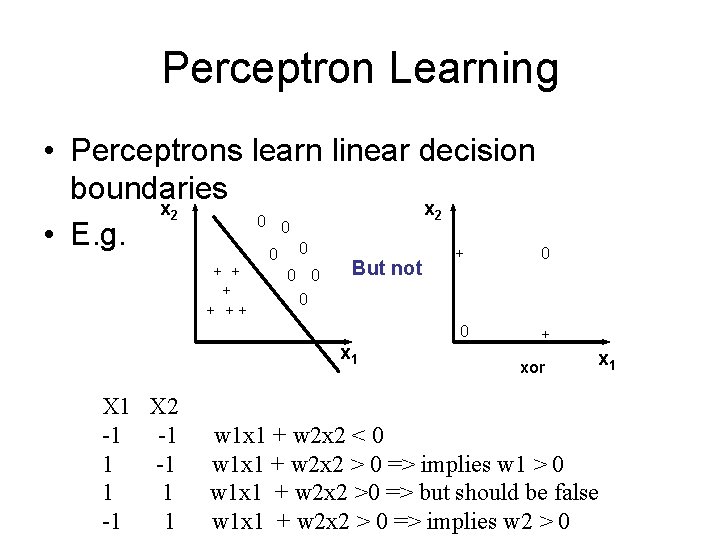

Perceptron Learning • Perceptrons learn linear decision boundaries – E.g. a line in the (x1, x2) plane separating the + points from the 0 points • But not xor (inputs x1, x2 in {-1, 1}): – x1=-1, x2=-1: w1 x1 + w2 x2 < 0 – x1=1, x2=-1: w1 x1 + w2 x2 > 0 => implies w1 > 0 – x1=-1, x2=1: w1 x1 + w2 x2 > 0 => implies w2 > 0 – x1=1, x2=1: w1 x1 + w2 x2 > 0 => but should be false
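The xor limitation can also be observed empirically. This sketch assumes inputs encoded as -1/1 with an extra constant input of 1, an output of 1 when w.x > 0, and a cap of 1000 passes; the function and variable names are illustrative. Hitting the cap does not prove non-separability by itself, but it is consistent with the argument above.

```python
def train_perceptron(samples, max_passes=1000):
    """Returns (weights, converged) after at most max_passes over the samples."""
    w = [0] * len(samples[0][0])
    for _ in range(max_passes):
        mistakes = 0
        for x, desired in samples:
            output = int(sum(wi * xi for wi, xi in zip(w, x)) > 0)
            if output != desired:
                sign = 1 if desired == 1 else -1  # add on a missed "yes", subtract on a wrong "yes"
                w = [wi + sign * xi for wi, xi in zip(w, x)]
                mistakes += 1
        if mistakes == 0:
            return w, True
    return w, False


# Each input is (1, x1, x2); the leading 1 is the constant threshold input.
XOR_SAMPLES = [((1, -1, -1), 0), ((1, 1, -1), 1), ((1, -1, 1), 1), ((1, 1, 1), 0)]
OR_SAMPLES = [((1, -1, -1), 0), ((1, 1, -1), 1), ((1, -1, 1), 1), ((1, 1, 1), 1)]

_, xor_converged = train_perceptron(XOR_SAMPLES)
_, or_converged = train_perceptron(OR_SAMPLES)
```

Training on or finishes after a few passes, while training on xor keeps making mistakes until the pass limit is reached.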

Perceptron Example • Digit recognition – Assume display = 8 lightable bars – Inputs: on/off + threshold – 65 steps to recognize “8”

Perceptron Summary • Motivated by neuron activation • Simple training procedure • Guaranteed to converge – IF linearly separable
