
Google’s Map-Reduce Presented by Ofir Gordon

References
• Based on Google’s article: MapReduce: Simplified Data Processing on Large Clusters, by Jeffrey Dean and Sanjay Ghemawat
• Google Developers online video lecture series, “Cluster Computing and MapReduce” (five lectures, YouTube course)

In This Lecture
• Functional Programming
• Map-Reduce – Intro:
  § Motivation
  § Basic Flow and Architecture
  § Example
• Google’s Map-Reduce:
  § Implementation
  § Properties and Refinements
• Usage Examples – Google Search

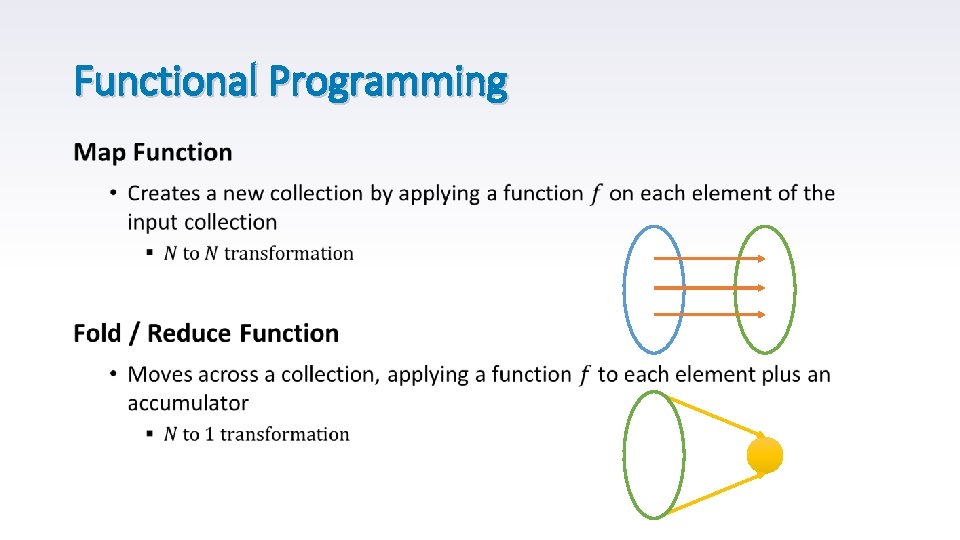

Functional Programming
• Map-Reduce drew its inspiration from functional languages, such as ML and LISP
• The main idea:
  § Computation is based on the evaluation of a sequence of functions
  § Operations do not modify data (mutable data is avoided)
  § Functions can be used as arguments (“First-Class Citizens”)


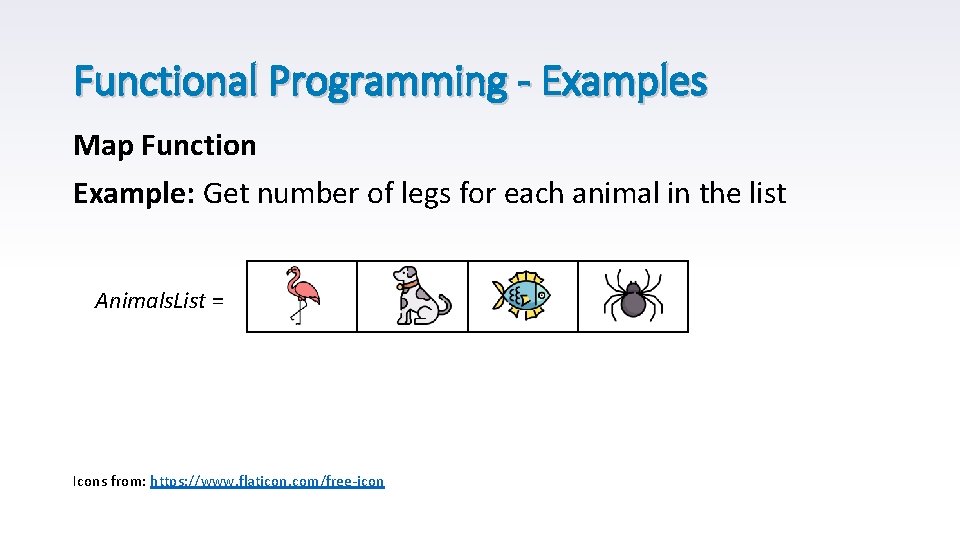

Functional Programming - Examples: Map Function
Example: Get the number of legs for each animal in the list
AnimalsList = [animal icons, see the image]
Icons from: https://www.flaticon.com/free-icon

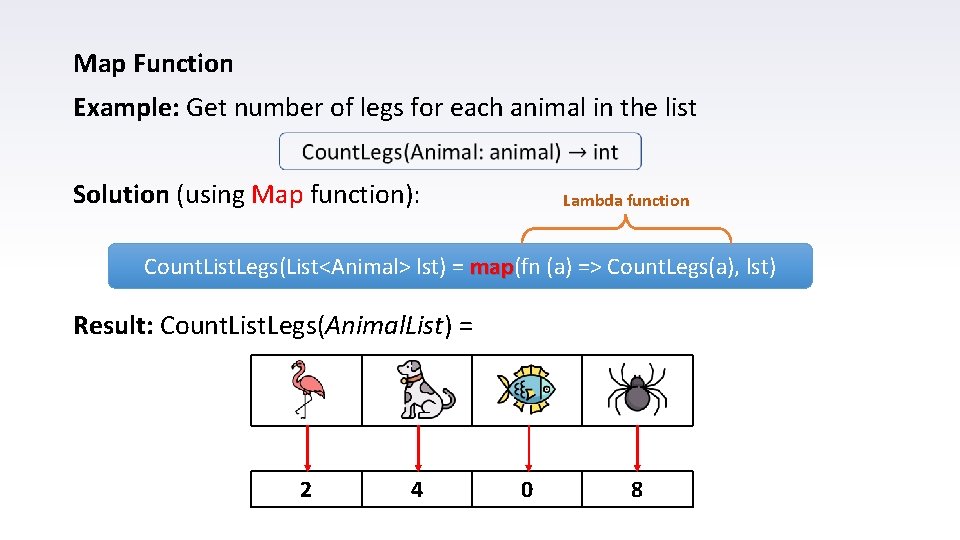

Map Function
Example: Get the number of legs for each animal in the list
Solution (using the map function, with a lambda function as its first argument):
CountListLegs(List<Animal> lst) = map(fn (a) => CountLegs(a), lst)
Result: CountListLegs(AnimalsList) = [2, 4, 0, 8]

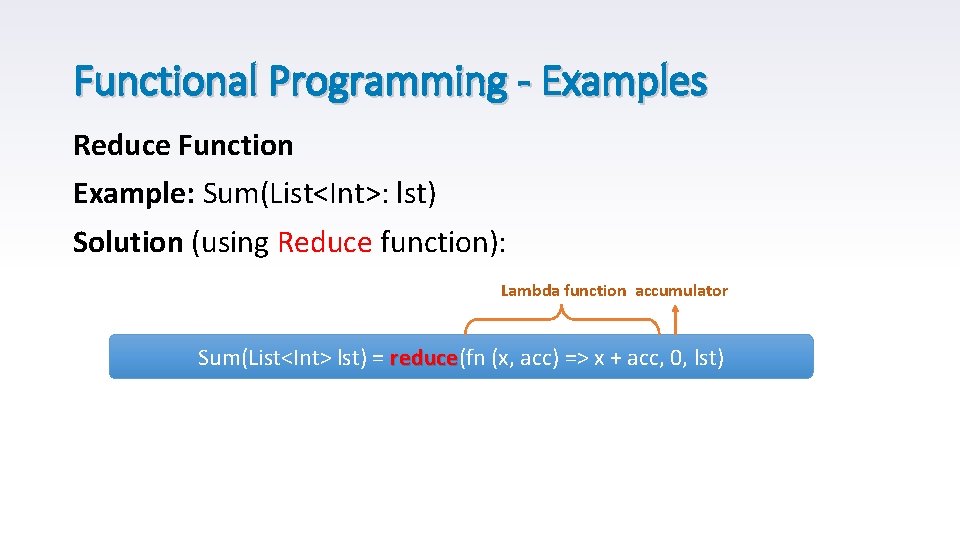

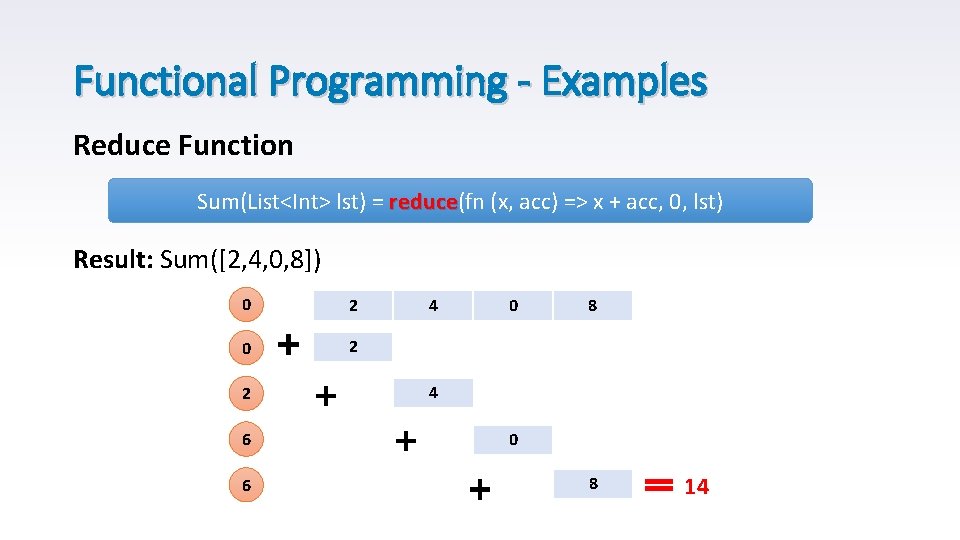

Functional Programming - Examples: Reduce Function
Example: Sum(List<Int> lst)
Solution (using the reduce function):
Sum(List<Int> lst) = reduce(fn (x, acc) => x + acc, 0, lst)
Here fn (x, acc) => x + acc is the lambda function, and acc is the accumulator, which starts at 0.

Functional Programming - Examples: Reduce Function
Sum(List<Int> lst) = reduce(fn (x, acc) => x + acc, 0, lst)
Result: Sum([2, 4, 0, 8]) = 14
Accumulator after each element: 0 → 2 → 6 → 6 → 14

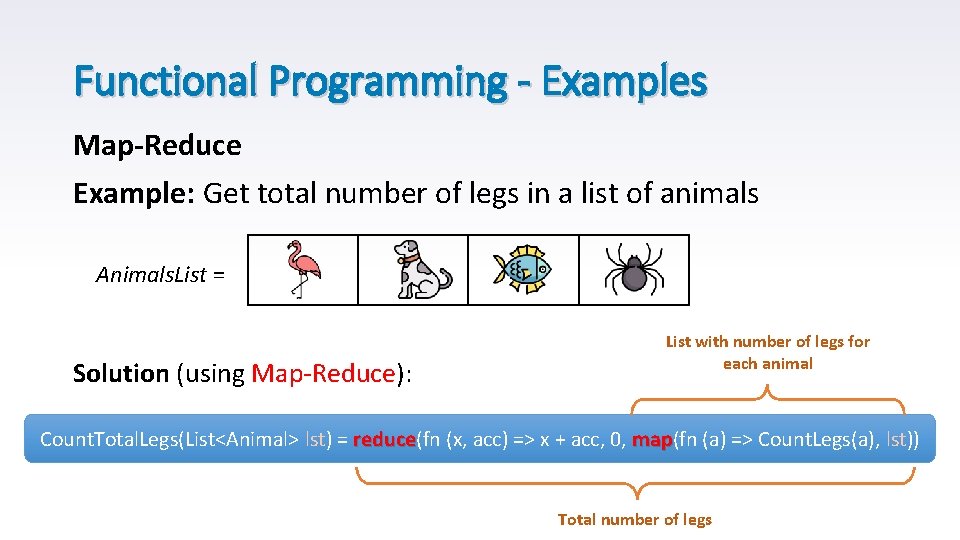

Functional Programming - Examples: Map-Reduce
Example: Get the total number of legs in a list of animals
AnimalsList = [animal icons, see the image]
Solution (using Map-Reduce):
CountTotalLegs(List<Animal> lst) = reduce(fn (x, acc) => x + acc, 0, map(fn (a) => CountLegs(a), lst))
The inner map produces a list with the number of legs for each animal; the outer reduce sums that list into the total number of legs.
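The three examples above can be tried out in Python. This is a minimal sketch, not the slides' own code: the animal names and the `LEGS` table are assumptions chosen to reproduce the result [2, 4, 0, 8], and `functools.reduce` passes its arguments as (accumulator, element), the reverse of the slides' `fn (x, acc)`.

```python
from functools import reduce

# Hypothetical leg counts, chosen to match the slide's result [2, 4, 0, 8].
LEGS = {"bird": 2, "dog": 4, "fish": 0, "spider": 8}

def count_legs(animal):
    """Return the number of legs of a single animal."""
    return LEGS[animal]

def count_list_legs(animals):
    """Map: apply count_legs to every element, producing a new list."""
    return list(map(lambda a: count_legs(a), animals))

def total(values):
    """Reduce: fold the list into one value, starting from accumulator 0."""
    # functools.reduce calls the lambda as (accumulator, element).
    return reduce(lambda acc, x: acc + x, values, 0)

def count_total_legs(animals):
    """Map-Reduce: reduce over the output of map."""
    return total(count_list_legs(animals))

animals = ["bird", "dog", "fish", "spider"]
print(count_list_legs(animals))   # [2, 4, 0, 8]
print(count_total_legs(animals))  # 14
```

Neither function modifies `animals`; each returns a new value, which is the property that makes this style easy to parallelize.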

Map-Reduce – Motivation
• Process lots of data
• Parallelize the processing across multiple servers
• A functional-style design makes it simpler to parallelize and execute on large clusters

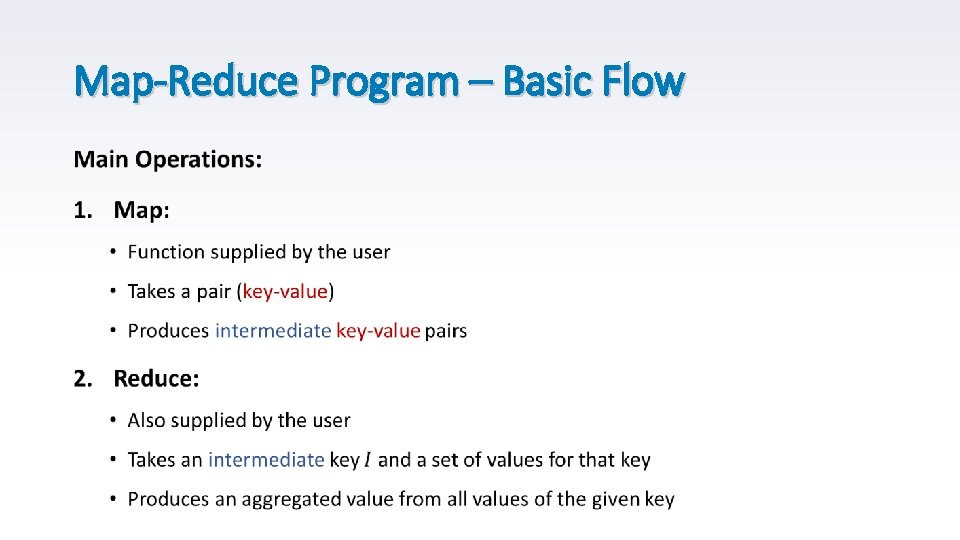

Map-Reduce Program – Basic Flow
• Input: a set of key-value pairs (records)
• Output: a set of different (usually much smaller) key-value pairs
  § Produced by performing some aggregation over the input records
  § Usually each Reduce call outputs 0 or 1 values, but this is not mandatory


Map-Reduce Program – Basic Flow
• All Map operations run in parallel
  § On different input datasets
• All Reduce operations also run in parallel
  § On different intermediate output keys
• All values are processed independently
• Bottleneck: the reduce phase can’t start until the map phase is complete

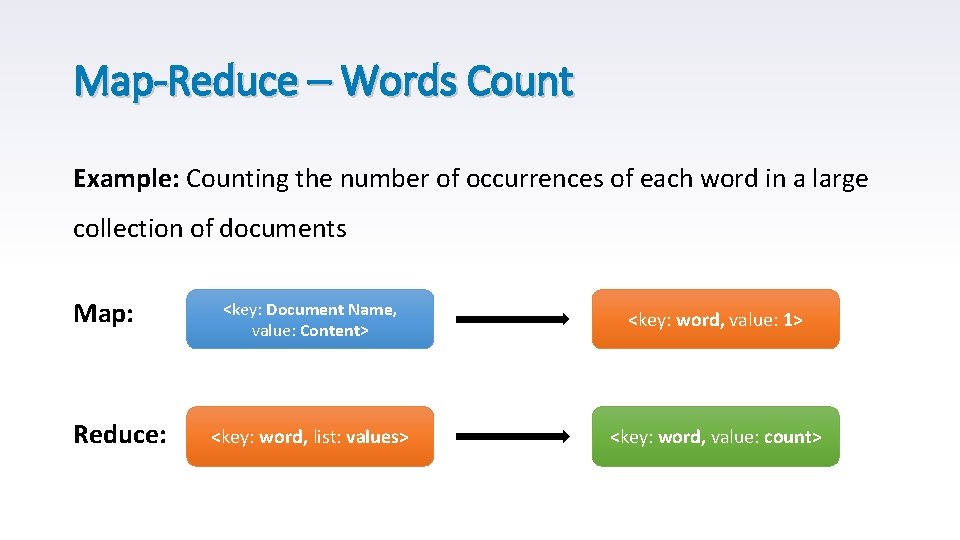

Map-Reduce – Word Count
Example: Counting the number of occurrences of each word in a large collection of documents
Map: <key: document name, value: content> → list of <key: word, value: 1>
Reduce: <key: word, list: values> → <key: word, value: count>
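The word-count flow can be simulated on a single machine. This is a minimal sketch under stated assumptions: the function names (`map_fn`, `reduce_fn`, `map_reduce`) and the sample documents are hypothetical, and the loops run sequentially where a real cluster would run map and reduce calls in parallel.

```python
from collections import defaultdict

def map_fn(doc_name, content):
    """Map: <document name, content> -> list of <word, 1>."""
    return [(word, 1) for word in content.lower().split()]

def reduce_fn(word, values):
    """Reduce: <word, list of values> -> <word, count>."""
    return (word, sum(values))

def map_reduce(documents):
    # Map phase: each document is processed independently.
    intermediate = []
    for name, content in documents.items():
        intermediate.extend(map_fn(name, content))

    # Shuffle: group all intermediate values by key.
    grouped = defaultdict(list)
    for key, value in intermediate:
        grouped[key].append(value)

    # Reduce phase: starts only after every map call has finished;
    # keys are processed in increasing order.
    return dict(reduce_fn(key, grouped[key]) for key in sorted(grouped))

# Hypothetical sample input.
docs = {
    "doc1.txt": "the quick fox",
    "doc2.txt": "the lazy dog and the fox",
}
counts = map_reduce(docs)
print(counts)
```

The grouping step in the middle is what the distributed implementation performs through the intermediate files read by the reduce workers.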

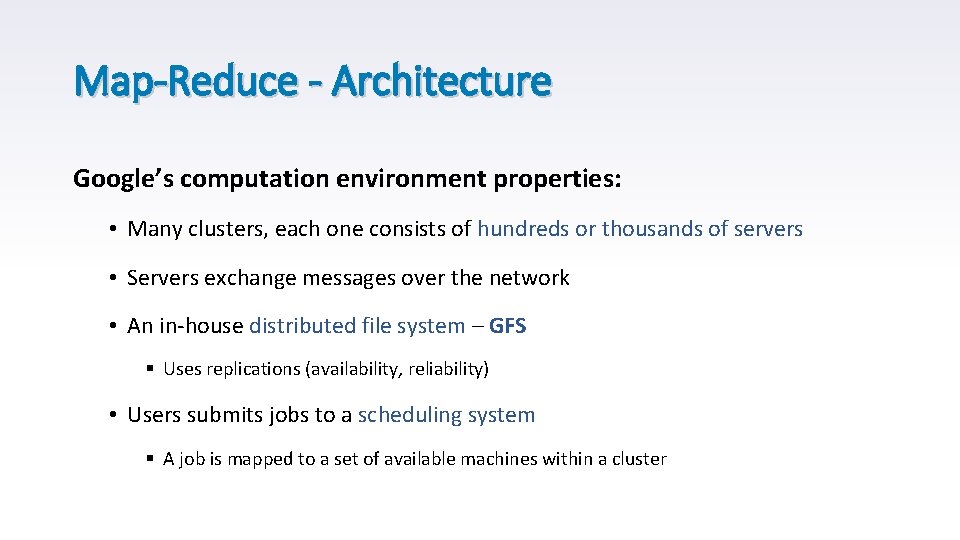

Map-Reduce - Architecture
Properties of Google’s computation environment:
• Many clusters, each consisting of hundreds or thousands of servers
• Servers exchange messages over the network
• An in-house distributed file system: GFS
  § Uses replication (availability, reliability)
• Users submit jobs to a scheduling system
  § A job is mapped to a set of available machines within a cluster

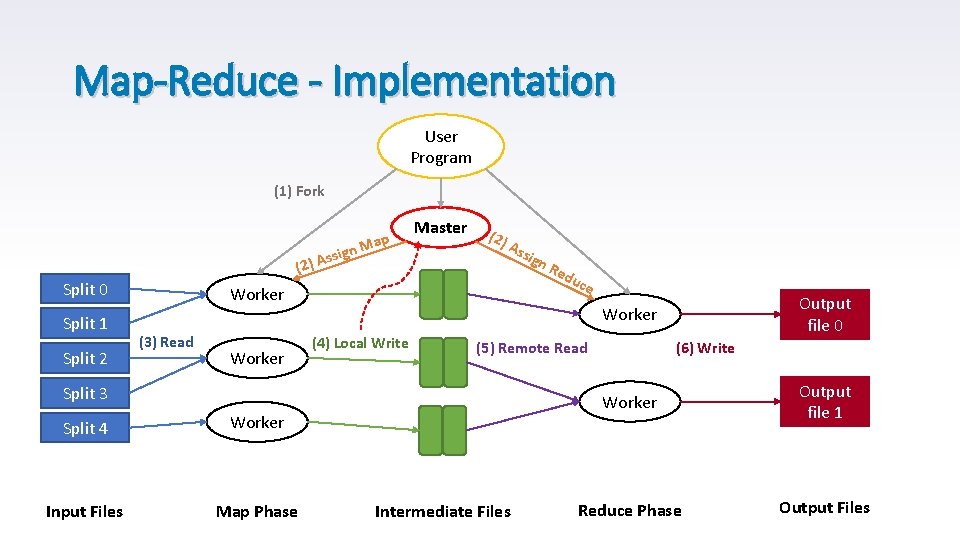

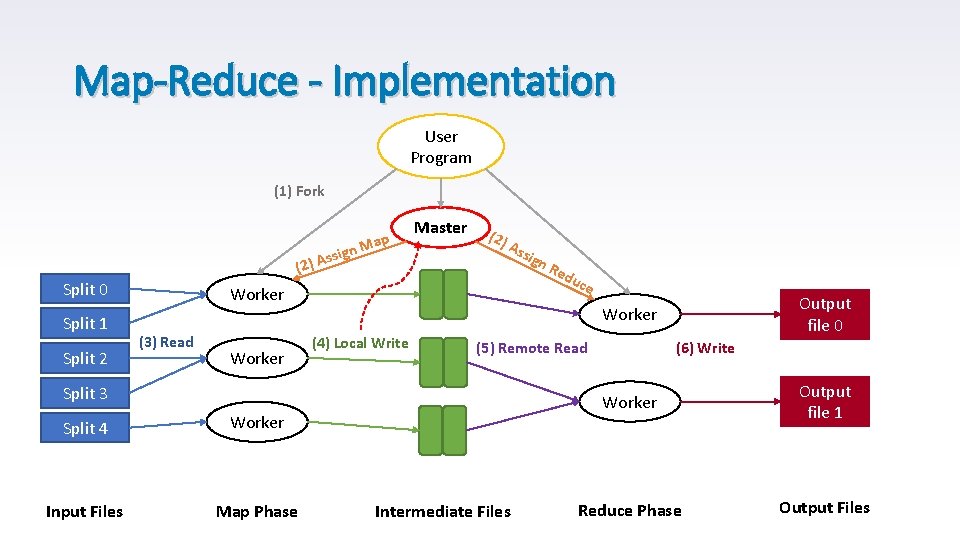

Map-Reduce - Implementation
[Figure: execution overview. The User Program (1) forks the Master and the Workers. The Master (2) assigns map tasks and reduce tasks to Workers. In the Map Phase, workers (3) read the input files (Split 0 to Split 4) and (4) write intermediate files to local disk. In the Reduce Phase, workers (5) read the intermediate files remotely and (6) write the output files (Output file 0, Output file 1).]


The Master Data Structures
• Stores a state for each map task and each reduce task
• Stores the identity of the worker machine for each task
• Stores the location and size of the output of each completed map task
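One way to picture this bookkeeping is the sketch below. It is an illustration only, not Google's implementation: the class and field names are hypothetical, and the three state values (idle, in-progress, completed) are taken from the paper rather than from the slide.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional

class TaskState(Enum):
    IDLE = "idle"
    IN_PROGRESS = "in-progress"
    COMPLETED = "completed"

@dataclass
class Task:
    task_id: int
    kind: str                               # "map" or "reduce"
    state: TaskState = TaskState.IDLE
    worker: Optional[str] = None            # identity of the assigned worker
    # Completed map tasks only: (location, size in bytes) of each output region.
    outputs: list = field(default_factory=list)

@dataclass
class Master:
    tasks: dict = field(default_factory=dict)   # task_id -> Task

    def add_task(self, task):
        self.tasks[task.task_id] = task

    def assign(self, task_id, worker):
        """Record that a worker has started running a task."""
        task = self.tasks[task_id]
        task.state = TaskState.IN_PROGRESS
        task.worker = worker

    def complete_map(self, task_id, outputs):
        """Record where a finished map task left its intermediate output."""
        task = self.tasks[task_id]
        task.state = TaskState.COMPLETED
        task.outputs = list(outputs)

master = Master()
master.add_task(Task(task_id=0, kind="map"))
master.assign(0, "worker-a")
master.complete_map(0, [("worker-a:/tmp/map-0-part-0", 1024)])
print(master.tasks[0].state, master.tasks[0].outputs)
```

Keeping the output locations in the master is what lets it forward them to the reduce workers that later read those files remotely.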

Locality
• The master attempts to schedule each map task on a machine that holds a replica of the corresponding input data
• Saves network bandwidth

Task Granularity
• The number of workers is defined by the user, and the master splits the tasks across the workers
  § Assume M map tasks and R reduce tasks
• Bounds on M and R are derived from the master’s implementation
  § They affect the number of scheduling decisions (run time)
  § They affect the number of states the master needs to keep in memory (space)
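The slide does not spell out the bounds. In the paper, the master makes O(M + R) scheduling decisions and keeps O(M × R) states, at roughly one byte per map/reduce task pair. The short calculation below plugs in the sample values the paper reports (M = 200,000 and R = 5,000); the function name and the exact one-byte constant are assumptions for illustration.

```python
def master_costs(m, r, bytes_per_pair=1):
    """Rough master overhead for m map tasks and r reduce tasks.

    decisions:   O(m + r) scheduling decisions
    state_bytes: O(m * r) states, assuming about one byte per task pair
    """
    decisions = m + r
    state_bytes = m * r * bytes_per_pair
    return decisions, state_bytes

decisions, state_bytes = master_costs(200_000, 5_000)
print(decisions)            # 205000
print(state_bytes / 10**9)  # 1.0 (about 1 GB)
```

Doubling M doubles the state, which is why M and R cannot grow without limit even though smaller tasks improve load balancing.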

Fault Tolerance
1. Worker Failure
• The master pings each worker periodically and marks non-responding workers as failed
• In-progress map or reduce tasks on a failed worker are reset and rescheduled on another worker
• Completed map tasks are also reset and rescheduled
  § Their output is stored on the failed worker’s local disk, so it is no longer reachable
  § Reduce workers are notified of the re-execution
• Completed reduce tasks do not need to be re-executed, because their output is stored in the global file system
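The reset rule can be sketched as a small function. This is a hedged illustration: the dictionary layout of a task and the function name `handle_worker_failure` are hypothetical, and the actual ping and notification mechanisms are left out.

```python
def handle_worker_failure(tasks, failed_worker):
    """Reset every task that must be re-executed after a worker fails.

    tasks: list of dicts with keys "id", "kind", "state", "worker"
           (a hypothetical layout used only for this sketch).
    Returns the ids of the tasks that were reset to idle.
    """
    reset = []
    for task in tasks:
        if task["worker"] != failed_worker:
            continue
        in_progress = task["state"] == "in-progress"
        # Completed map output lives on the failed machine's local disk.
        completed_map = task["state"] == "completed" and task["kind"] == "map"
        if in_progress or completed_map:
            task["state"] = "idle"   # eligible for rescheduling
            task["worker"] = None
            reset.append(task["id"])
    return reset

tasks = [
    {"id": 0, "kind": "map", "state": "completed", "worker": "w1"},
    {"id": 1, "kind": "map", "state": "in-progress", "worker": "w2"},
    {"id": 2, "kind": "reduce", "state": "in-progress", "worker": "w1"},
    {"id": 3, "kind": "reduce", "state": "completed", "worker": "w1"},
]
print(handle_worker_failure(tasks, "w1"))  # [0, 2]
```

Task 3 is left alone because its output is already in the global file system, while task 0 is reset even though it had finished.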

Fault Tolerance
2. Master Failure
• Master failure is rare
• The solution described for a master failure is to cancel the Map-Reduce operation
• However, the master can save checkpoints of its data structures and recover from the last checkpoint after a failure

Fault Tolerance
3. Semantics in the Presence of Failures
• The distributed execution produces the same output as a sequential one (when the map and reduce functions are deterministic)
• Relies on atomic operations in the file system layer
  § Duplicate task completions are filtered out by the master

Refinements – Backup Tasks
• Common problem: one slow machine takes an unusually long time to complete one of the last few map or reduce tasks. Possible causes:
  § Bad disk
  § Contention for cluster resources
  § Cache misses

Refinements – Backup Tasks
• Common problem: one slow machine takes an unusually long time to complete one of the last few map or reduce tasks
• Solution: when the Map-Reduce operation is close to completion, the master schedules backup executions for all remaining in-progress tasks
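The backup idea can be demonstrated with two threads racing on the same task. This is a sketch under stated assumptions: `run_task` and `run_with_backup` are hypothetical names, the artificial delays stand in for a slow and a healthy machine, and, as the paper describes, the task counts as done as soon as either execution finishes.

```python
import time
from concurrent.futures import ThreadPoolExecutor, wait, FIRST_COMPLETED

def run_task(data, delay):
    """Hypothetical task: sum the input after an artificial delay."""
    time.sleep(delay)
    return sum(data)

def run_with_backup(data, primary_delay, backup_delay):
    """Run a primary and a backup execution; return whichever finishes first."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        primary = pool.submit(run_task, data, primary_delay)
        backup = pool.submit(run_task, data, backup_delay)
        done, _ = wait([primary, backup], return_when=FIRST_COMPLETED)
        # Leaving the "with" block still waits for the slower thread;
        # a real master would simply ignore the late duplicate.
        return next(iter(done)).result()

# The primary plays the slow machine, the backup a healthy one.
result = run_with_backup([2, 4, 0, 8], primary_delay=0.3, backup_delay=0.01)
print(result)  # 14
```

Both executions compute the same value, so it does not matter which one wins; this is safe only because the task is deterministic and has no side effects.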

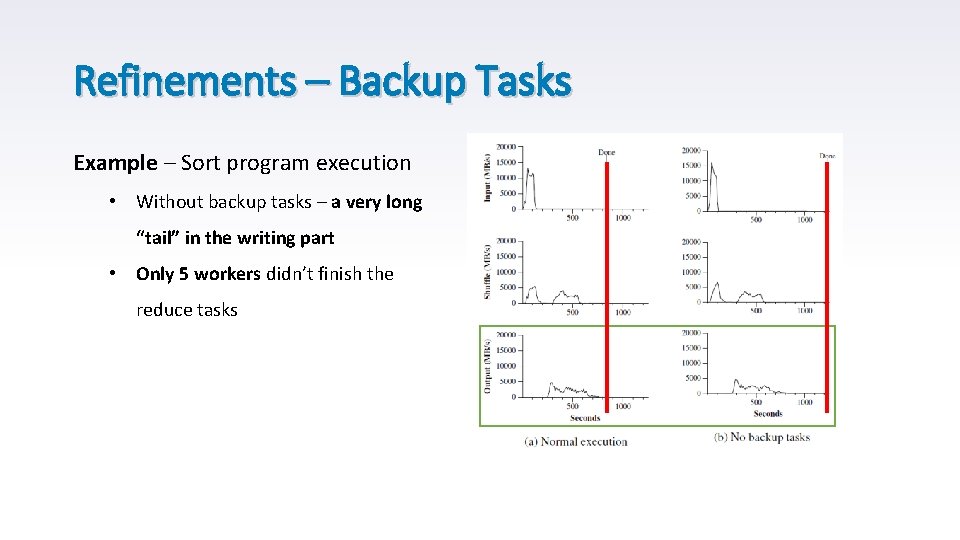

Refinements – Backup Tasks
Example: Sort program execution
• Without backup tasks, the output-writing part has a very long “tail”
• The tail is caused by only 5 workers that had not finished their reduce tasks

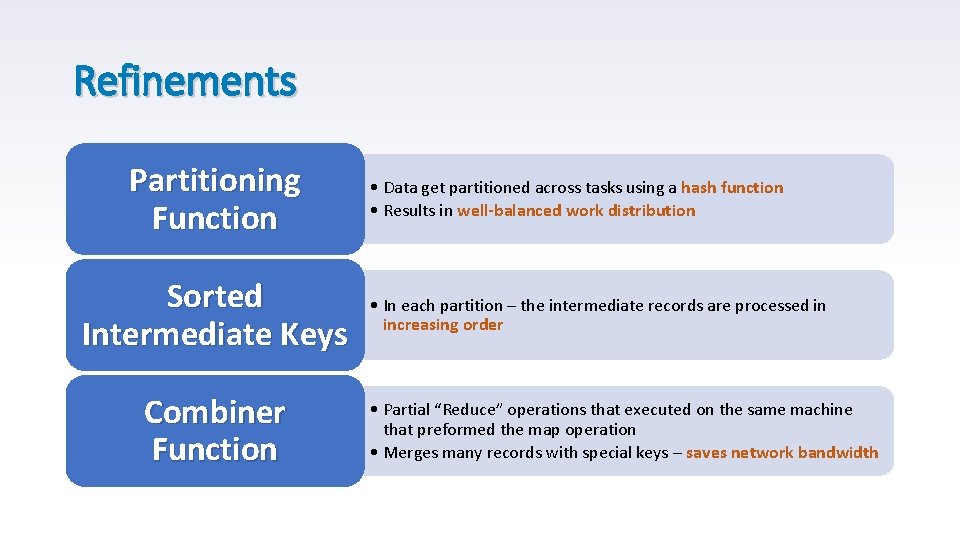

Refinements
• Partitioning Function
  § Data is partitioned across tasks using a hash function
  § Results in a well-balanced work distribution
• Sorted Intermediate Keys
  § Within each partition, the intermediate records are processed in increasing key order
• Combiner Function
  § A partial “Reduce” operation executed on the same machine that performed the map operation
  § Merges many records with the same key, which saves network bandwidth
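The partitioning and combiner refinements can be sketched together. The helper names, the sample map output, and the choice of `zlib.crc32` as the hash are assumptions for illustration; the slides only say that a hash function is used, and the paper gives hash(key) mod R as the default.

```python
import zlib
from collections import Counter

def partition(key, r):
    """Pick the reduce task for a key: hash(key) mod R."""
    # crc32 is stable across runs, unlike Python's built-in hash() for str.
    return zlib.crc32(key.encode("utf-8")) % r

def combine(pairs):
    """Combiner: partial reduce on the map machine, merging equal keys."""
    combined = Counter()
    for word, count in pairs:
        combined[word] += count
    # Emit records in increasing key order.
    return sorted(combined.items())

# Hypothetical output of a single map task.
map_output = [("the", 1), ("fox", 1), ("the", 1), ("the", 1)]
combined = combine(map_output)
print(combined)  # [('fox', 1), ('the', 3)]

R = 4  # number of reduce tasks, chosen arbitrarily for this sketch
buckets = {}
for key, value in combined:
    buckets.setdefault(partition(key, R), []).append((key, value))
print(buckets)
```

Four records shrink to two before anything crosses the network, and every record with the same key lands in the same partition, so one reduce task sees all values for that key.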

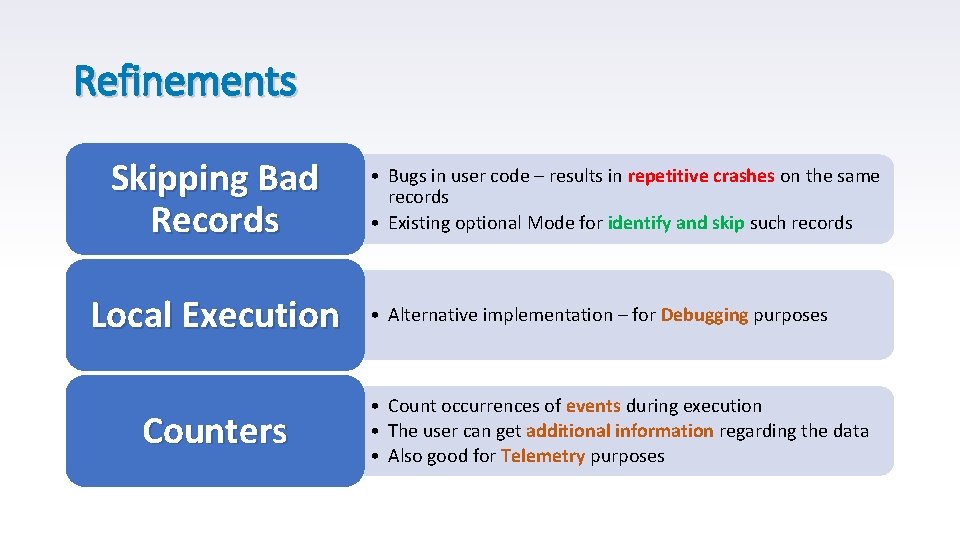

Refinements
• Skipping Bad Records
  § Bugs in user code can cause repeated crashes on the same records
  § An optional mode identifies and skips such records
• Local Execution
  § An alternative implementation, intended for debugging
• Counters
  § Count occurrences of events during execution
  § Give the user additional information about the data
  § Also useful for telemetry

Map-Reduce Applications - Google Search
• Large-scale indexing system
• Takes a large set of documents as input
• Runs a sequence of Map-Reduce iterations
• Simple code: fault tolerance, parallelization and distribution are hidden in the Map-Reduce layer
• Highly scalable: easy to maintain and to scale up for more performance

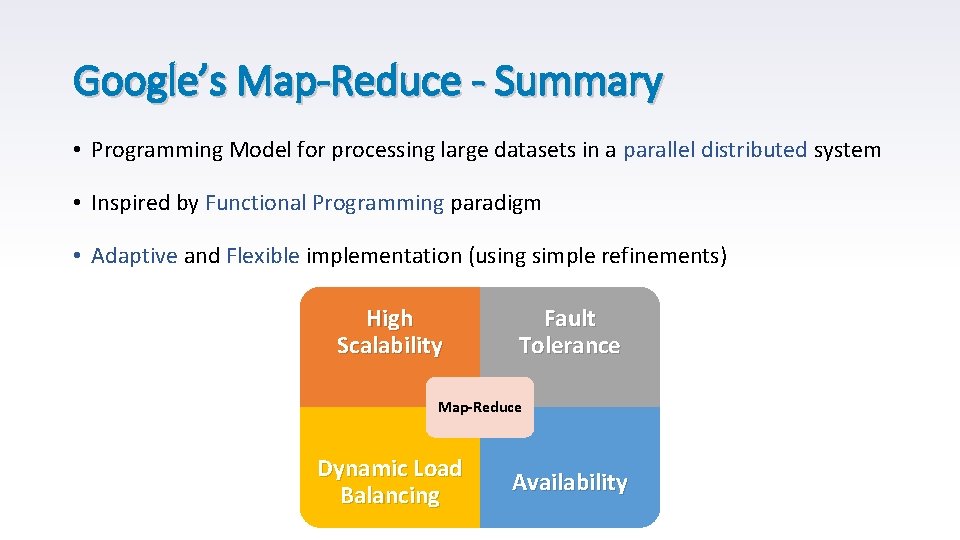

Google’s Map-Reduce - Summary
• Programming model for processing large datasets in a parallel, distributed system
• Inspired by the functional programming paradigm
• Adaptive and flexible implementation (using simple refinements)
• Map-Reduce properties: high scalability, fault tolerance, dynamic load balancing, availability
