Feature descriptors and matching

Basic correspondence • Image patch as descriptor, NCC (normalized cross-correlation) as similarity • Invariant to? • Photometric transformations? • Translation? • Rotation? • Scaling?
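A minimal sketch of NCC between two patches, assuming each patch is given as a flat list of intensities of equal length. The function name `ncc` and the sample values are illustrative only, not from the slides.

```python
import math

def ncc(patch_a, patch_b):
    """Normalized cross-correlation of two equal-size patches (flat lists)."""
    n = len(patch_a)
    mean_a = sum(patch_a) / n
    mean_b = sum(patch_b) / n
    # Subtracting the mean removes additive intensity changes.
    da = [a - mean_a for a in patch_a]
    db = [b - mean_b for b in patch_b]
    norm_a = math.sqrt(sum(x * x for x in da))
    norm_b = math.sqrt(sum(x * x for x in db))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # flat patch: correlation is undefined, treat as no match
    # Dividing by the norms removes multiplicative intensity changes.
    return sum(x * y for x, y in zip(da, db)) / (norm_a * norm_b)

patch = [10, 20, 30, 40, 50, 60, 70, 80, 95]
brighter = [2.0 * p + 15.0 for p in patch]  # gain and bias change
shifted = patch[1:] + patch[:1]             # crude stand-in for a spatial shift

print(ncc(patch, brighter))  # stays at (numerically) 1
print(ncc(patch, shifted))   # drops well below 1
```

The score survives the gain and bias change but not the shift, which suggests the answers to the questions above: a raw patch with NCC handles affine photometric changes, while rotation and scaling need extra work.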

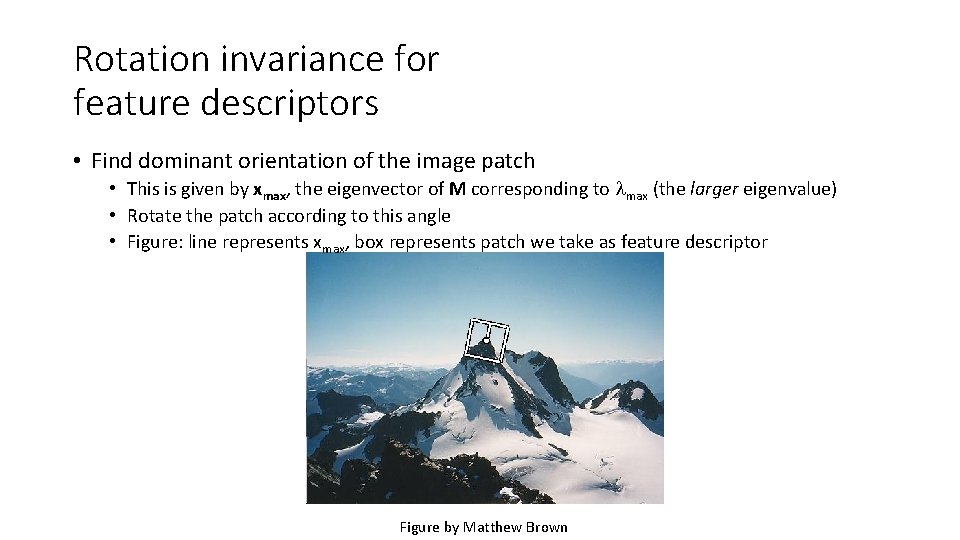

Rotation invariance for feature descriptors • Find dominant orientation of the image patch • This is given by $x_{max}$, the eigenvector of the second moment matrix $M$ corresponding to $\lambda_{max}$ (the larger eigenvalue) • Rotate the patch according to this angle • Figure: line represents $x_{max}$, box represents the patch we take as feature descriptor • Figure by Matthew Brown
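A sketch of how the dominant orientation could be computed, assuming the patch gradients are available as flat lists `grad_x` and `grad_y` (hypothetical names) and that $M$ is the unweighted sum of gradient outer products over the patch. For a symmetric 2x2 matrix the direction of the principal eigenvector has a closed form, so no general eigen-solver is needed.

```python
import math

def dominant_orientation(grad_x, grad_y):
    """Angle (radians) of the eigenvector of M with the larger eigenvalue.

    M = [[a, b], [b, c]] is the second moment matrix summed over the patch.
    An eigenvector has no sign, so the angle is only defined modulo pi.
    """
    a = sum(gx * gx for gx in grad_x)
    b = sum(gx * gy for gx, gy in zip(grad_x, grad_y))
    c = sum(gy * gy for gy in grad_y)
    # Closed form for the principal axis of a symmetric 2x2 matrix.
    return 0.5 * math.atan2(2.0 * b, a - c)

# Gradients that all point along the 45-degree diagonal.
theta = dominant_orientation([1.0, 2.0, 3.0], [1.0, 2.0, 3.0])
print(math.degrees(theta))
```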

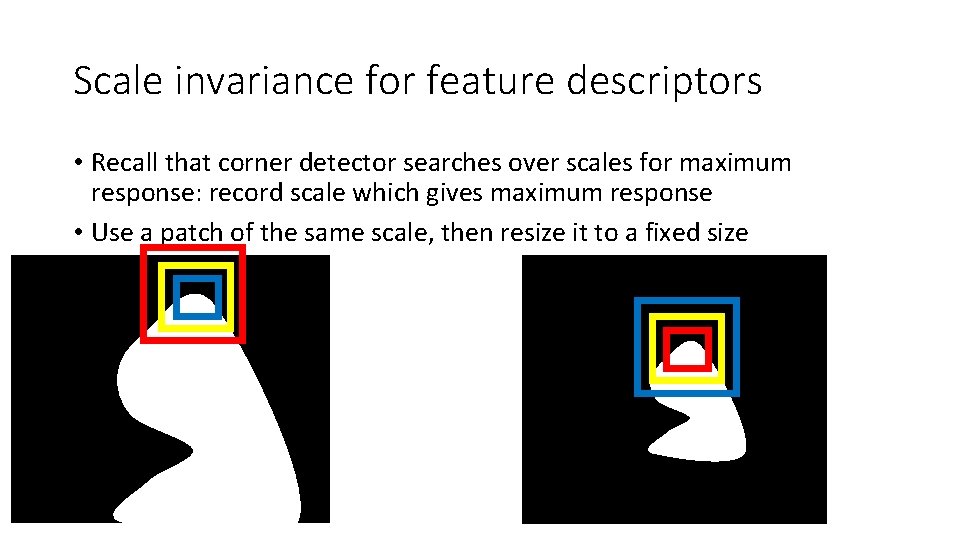

Scale invariance for feature descriptors • Recall that corner detector searches over scales for maximum response: record scale which gives maximum response • Use a patch of the same scale, then resize it to a fixed size

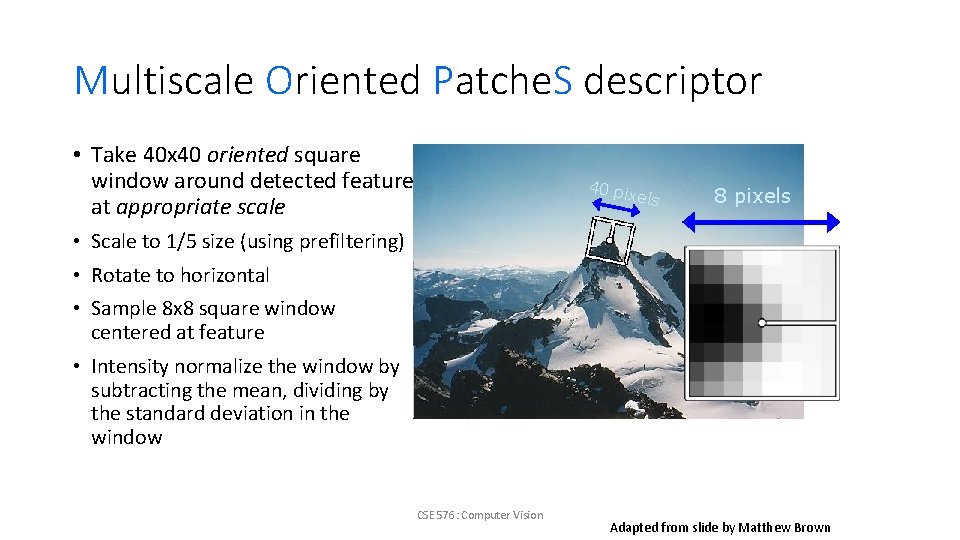

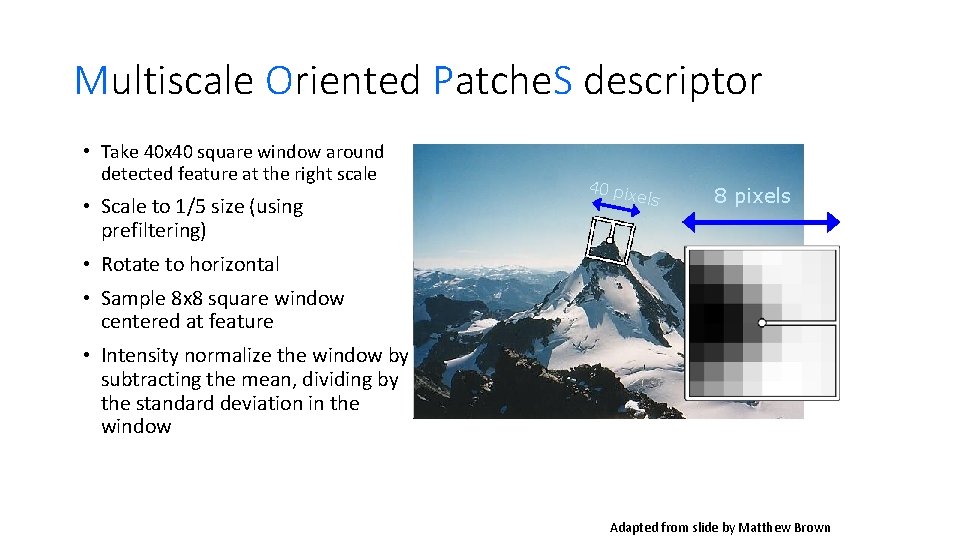

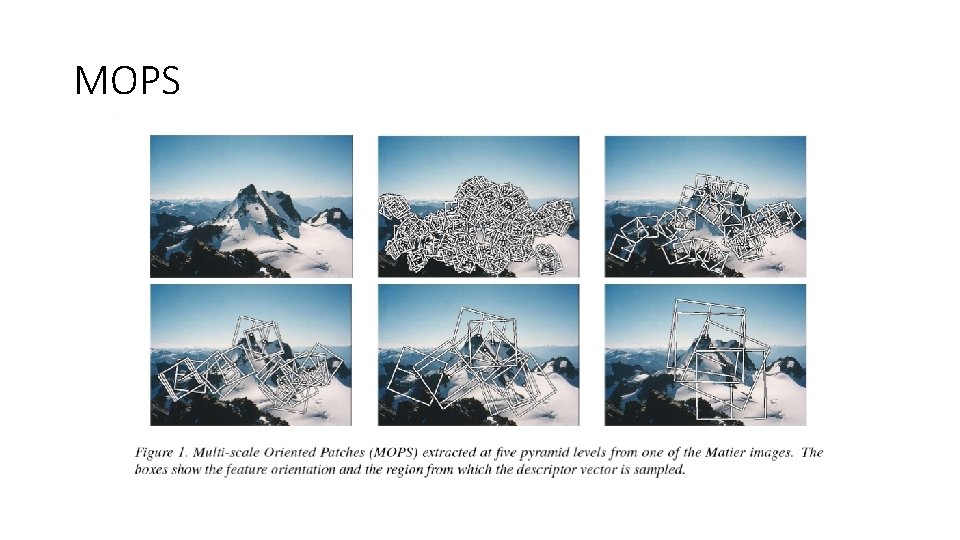

Multiscale Oriented PatcheS (MOPS) descriptor • Take 40 x 40 oriented square window around detected feature at appropriate scale • Scale to 1/5 size (using prefiltering), from 40 pixels to 8 pixels • Rotate to horizontal • Sample 8 x 8 square window centered at feature • Intensity normalize the window by subtracting the mean, dividing by the standard deviation in the window • CSE 576: Computer Vision • Adapted from slide by Matthew Brown

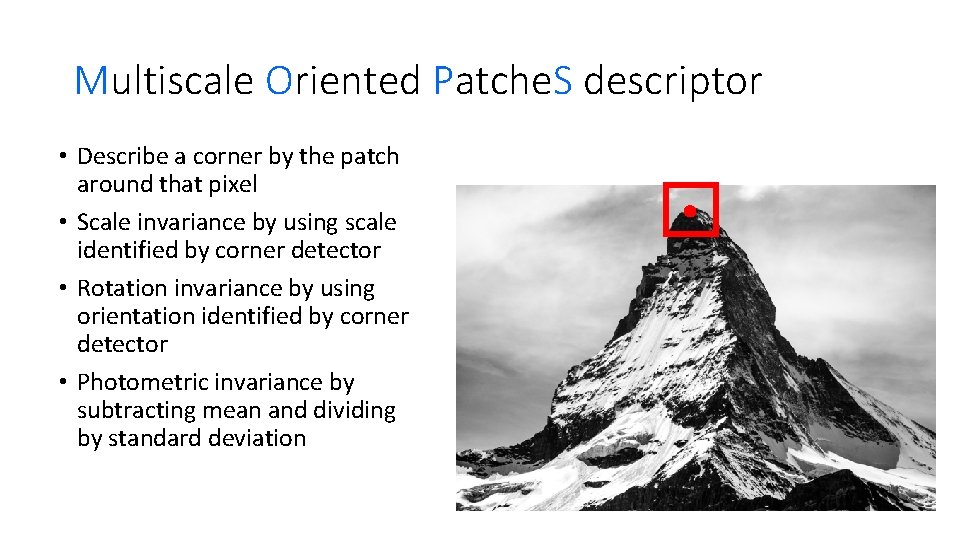

Multiscale Oriented PatcheS (MOPS) descriptor • Describe a corner by the patch around that pixel • Scale invariance by using scale identified by corner detector • Rotation invariance by using orientation identified by corner detector • Photometric invariance by subtracting mean and dividing by standard deviation
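A rough sketch of the last two MOPS steps, assuming the 40 x 40 window has already been cropped at the detected scale and rotated to horizontal. The slides only say "prefiltering"; the 5 x 5 box average used here is an assumption, and the function names are hypothetical.

```python
import math

def downsample_5x(window):
    """Average each 5x5 block (box prefilter, an assumption) of a square window."""
    size = len(window) // 5
    return [
        [
            sum(window[5 * r + i][5 * c + j] for i in range(5) for j in range(5)) / 25.0
            for c in range(size)
        ]
        for r in range(size)
    ]

def normalize_patch(values):
    """Subtract the mean and divide by the standard deviation."""
    n = len(values)
    mean = sum(values) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std == 0:
        return [0.0] * n  # constant patch carries no information
    return [(v - mean) / std for v in values]

def mops_descriptor(window40):
    """40x40 oriented window -> 64-dimensional normalized descriptor."""
    small = downsample_5x(window40)
    return normalize_patch([v for row in small for v in row])

window = [[float(r * c) for c in range(40)] for r in range(40)]
relit = [[2.5 * v + 10.0 for v in row] for row in window]  # gain and bias change
d1, d2 = mops_descriptor(window), mops_descriptor(relit)
print(len(d1), max(abs(a - b) for a, b in zip(d1, d2)))
```

The two descriptors agree up to floating-point error, which is the photometric invariance claimed above.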

Multiscale Oriented PatcheS (MOPS) descriptor • Take 40 x 40 square window around detected feature at the right scale • Scale to 1/5 size (using prefiltering), from 40 pixels to 8 pixels • Rotate to horizontal • Sample 8 x 8 square window centered at feature • Intensity normalize the window by subtracting the mean, dividing by the standard deviation in the window

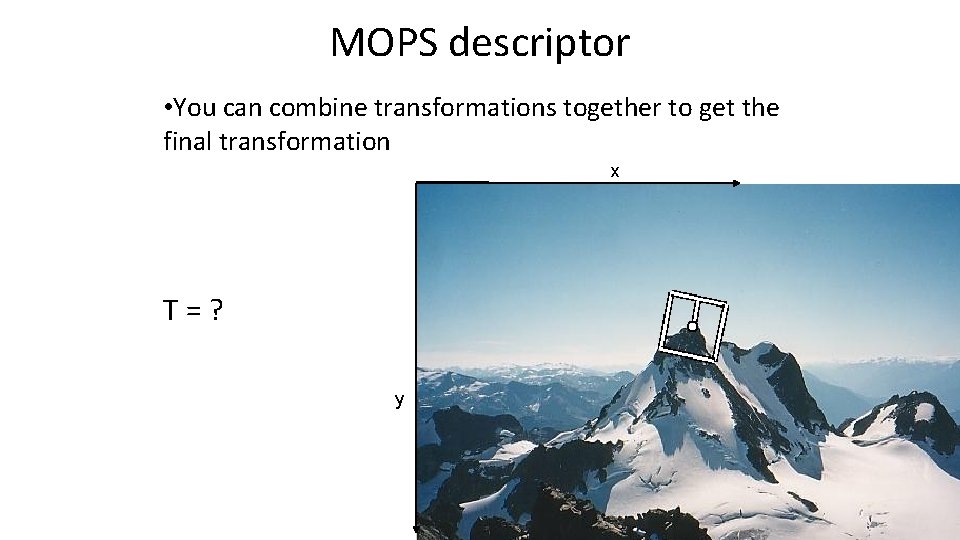

MOPS descriptor • You can combine transformations together to get the final transformation • $T = ?$

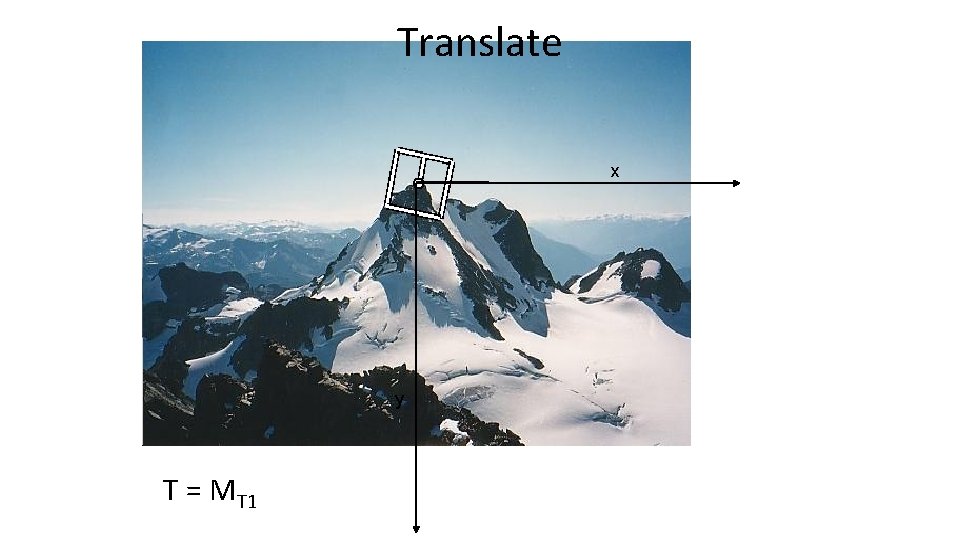

Translate • $T = M_{T_1}$

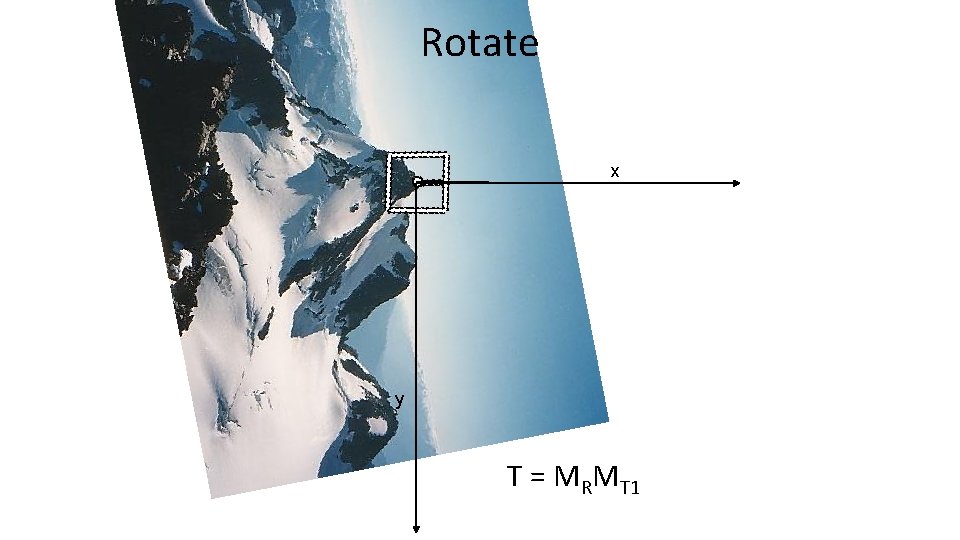

Rotate • $T = M_R M_{T_1}$

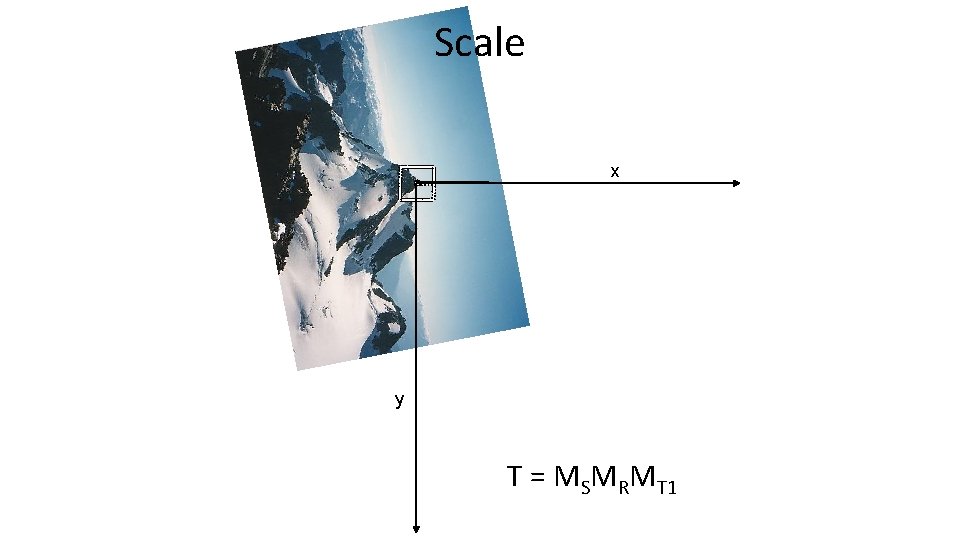

Scale • $T = M_S M_R M_{T_1}$

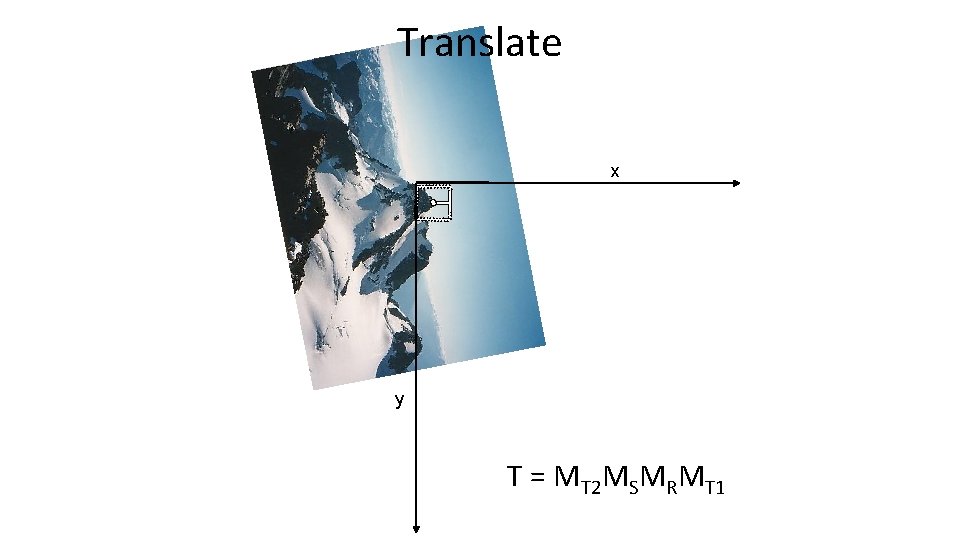

Translate • $T = M_{T_2} M_S M_R M_{T_1}$

Crop
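The chain of transformations above can be sketched with 3 x 3 homogeneous matrices. The sketch assumes the feature sits at `(x0, y0)` with dominant orientation `theta`, that the first translation moves the feature to the origin, and that the second moves it to the center of the 8 x 8 output window. All names here are hypothetical.

```python
import math

def matmul(a, b):
    """Product of two 3x3 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def translation(tx, ty):
    return [[1.0, 0.0, tx], [0.0, 1.0, ty], [0.0, 0.0, 1.0]]

def rotation(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def scaling(s):
    return [[s, 0.0, 0.0], [0.0, s, 0.0], [0.0, 0.0, 1.0]]

def mops_transform(x0, y0, theta, window=8, scale=1.0 / 5.0):
    """T = M_T2 * M_S * M_R * M_T1 (the rightmost matrix is applied first)."""
    m_t1 = translation(-x0, -y0)                 # feature to origin
    m_r = rotation(-theta)                       # dominant orientation to horizontal
    m_s = scaling(scale)                         # 40 pixels to 8 pixels
    m_t2 = translation(window / 2, window / 2)   # origin to window center
    return matmul(m_t2, matmul(m_s, matmul(m_r, m_t1)))

def apply(t, x, y):
    """Apply a homogeneous transform to the point (x, y)."""
    return (t[0][0] * x + t[0][1] * y + t[0][2], t[1][0] * x + t[1][1] * y + t[1][2])

theta = math.pi / 6
T = mops_transform(100.0, 50.0, theta)
print(apply(T, 100.0, 50.0))  # the feature itself lands at the window center
```

A point 20 pixels from the feature along the dominant orientation ends up on the right edge of the window, level with the center, which is a quick sanity check on the multiplication order.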

MOPS

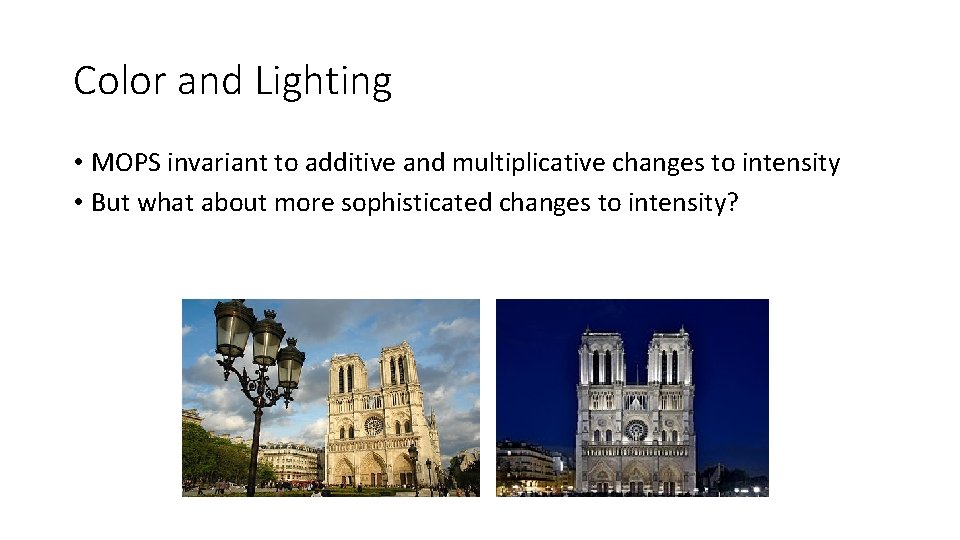

Color and Lighting • MOPS invariant to additive and multiplicative changes to intensity • But what about more sophisticated changes to intensity?

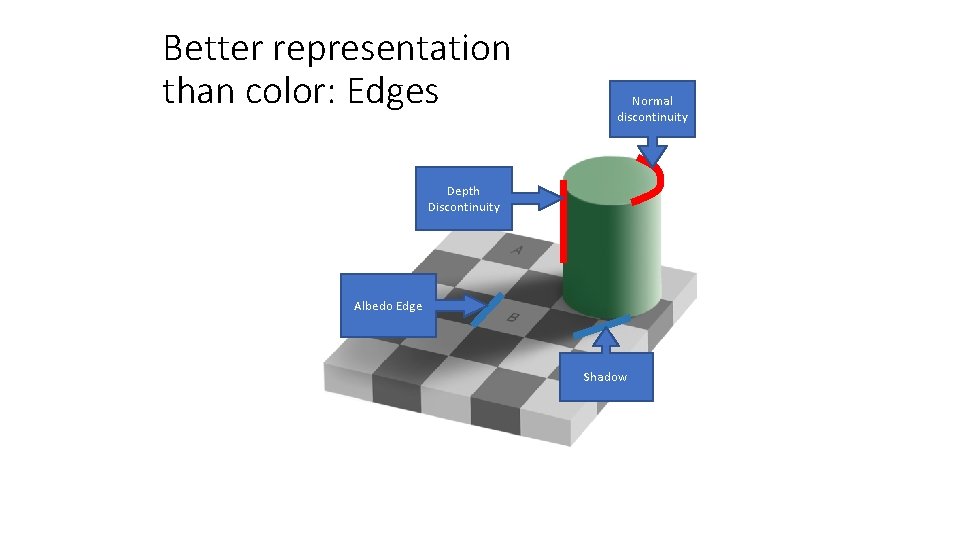

Better representation than color: Edges • Normal discontinuity • Depth discontinuity • Albedo edge • Shadow

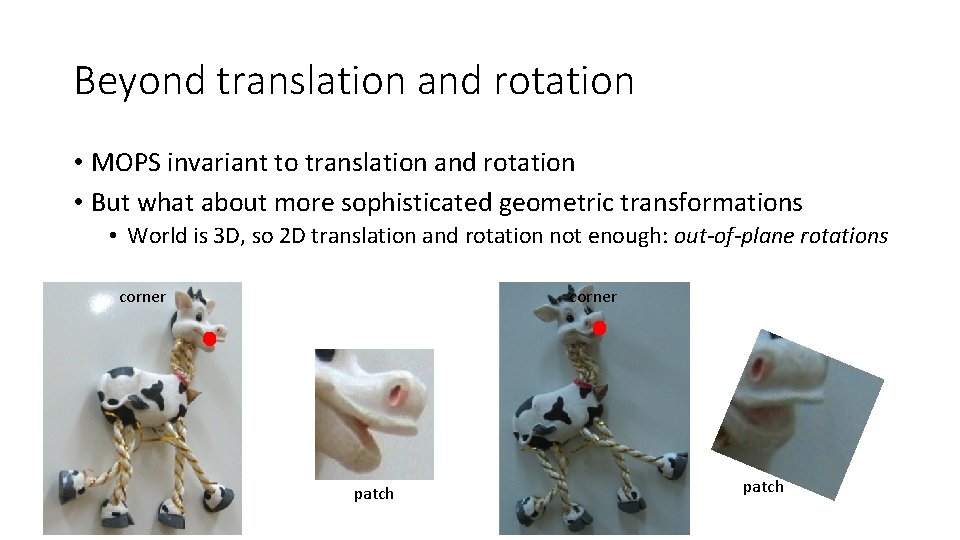

Beyond translation and rotation • MOPS invariant to translation and rotation • But what about more sophisticated geometric transformations? • World is 3D, so 2D translation and rotation are not enough: out-of-plane rotations • Figure: corner patch

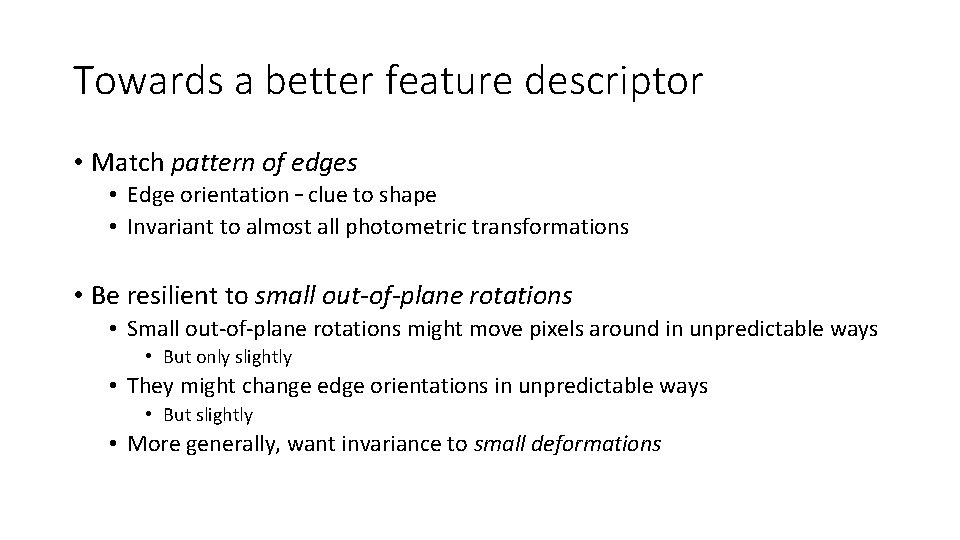

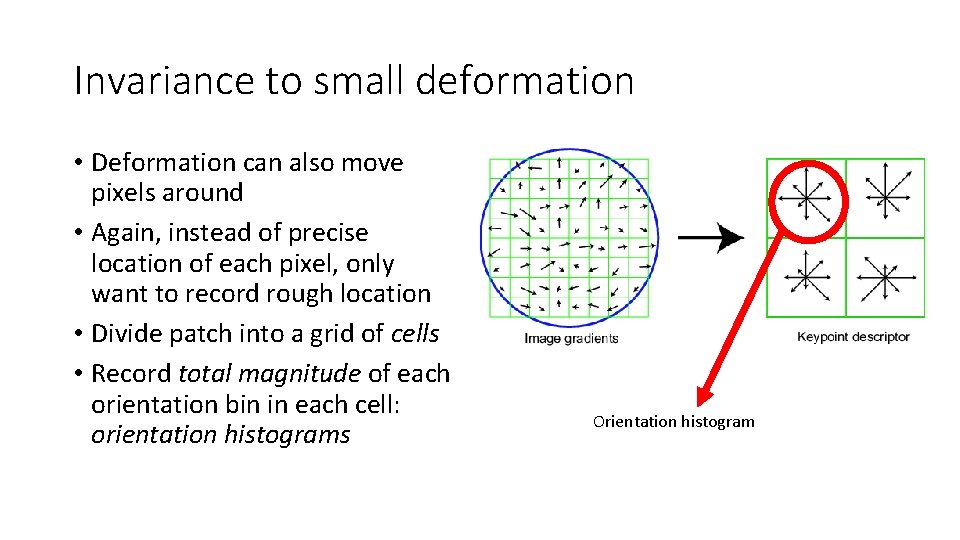

Towards a better feature descriptor • Match pattern of edges • Edge orientation – clue to shape • Invariant to almost all photometric transformations • Be resilient to small out-of-plane rotations • Small out-of-plane rotations might move pixels around in unpredictable ways • But only slightly • They might change edge orientations in unpredictable ways • But slightly • More generally, want invariance to small deformations

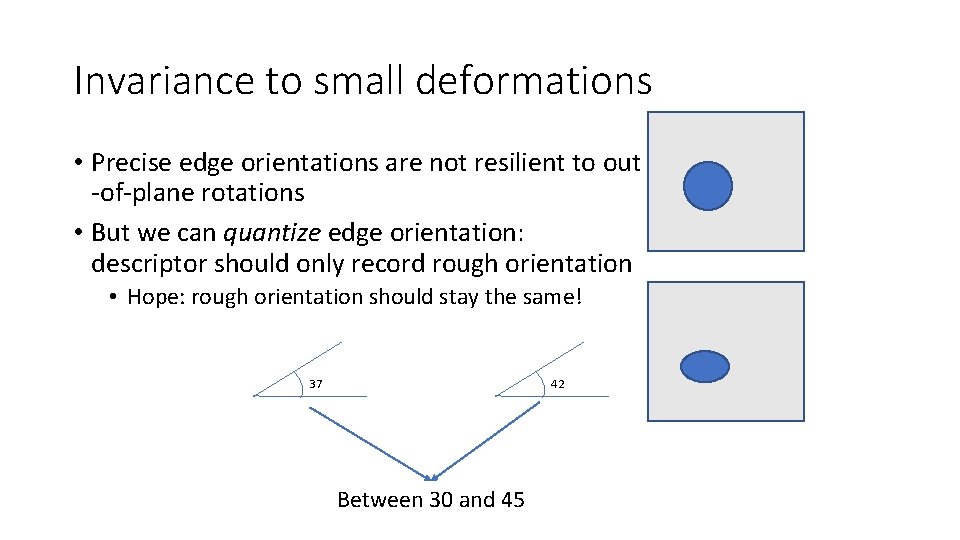

Invariance to small deformations • Precise edge orientations are not resilient to out-of-plane rotations • But we can quantize edge orientation: descriptor should only record rough orientation • Hope: rough orientation should stay the same! • Example: 37° and 42° are both recorded as "between 30° and 45°"

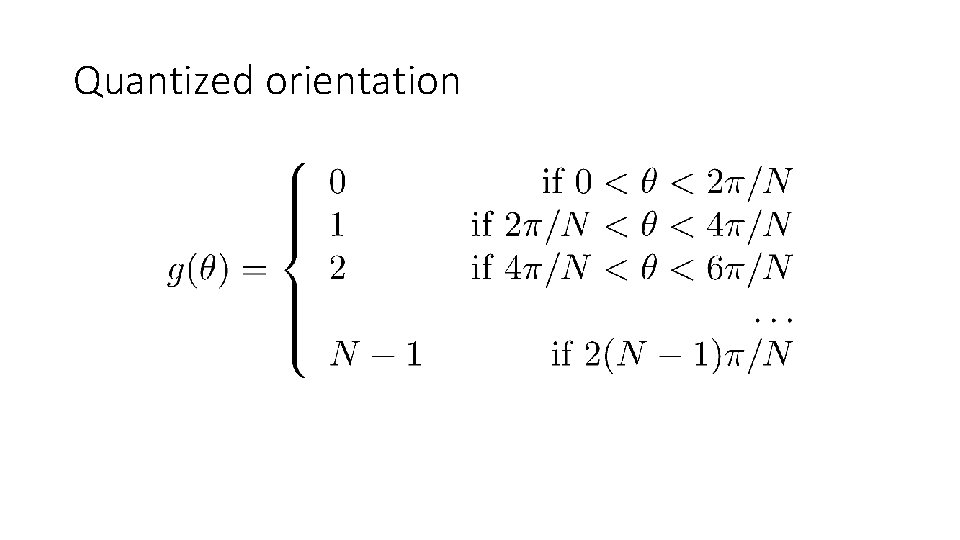

Quantized orientation

Quantization • Quantization is a general trick • Converts from continuous, real numbers to discrete choices
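As a small illustration, orientation quantization can be written as an integer division. The 15-degree bin width is an assumption taken from the "between 30 and 45" example; real descriptors typically use coarser bins.

```python
def quantize_orientation(angle_deg, bin_width=15.0):
    """Map a continuous angle in degrees to the index of its orientation bin."""
    return int((angle_deg % 360.0) // bin_width)

# 37 and 42 degrees fall in the same bin (30 to 45), 47 degrees does not.
print(quantize_orientation(37.0), quantize_orientation(42.0), quantize_orientation(47.0))
```

The example also shows the limit of the trick: a small change that crosses a bin boundary (42 to 47 degrees) still flips the recorded choice.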

Invariance to small deformation • Deformation can also move pixels around • Again, instead of precise location of each pixel, only want to record rough location • Divide patch into a grid of cells • Record total magnitude of each orientation bin in each cell: orientation histograms • Figure: orientation histogram

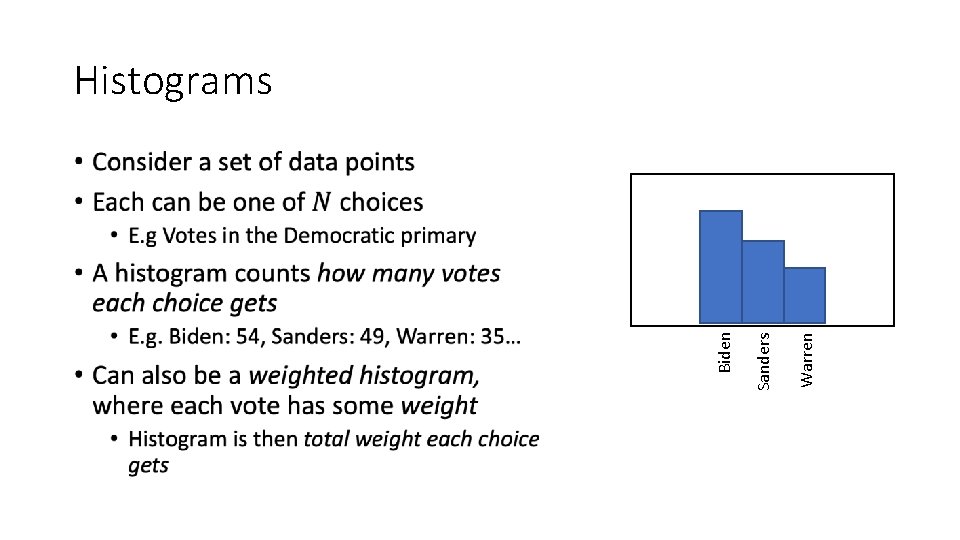

Histograms • Figure: example histogram over three discrete choices (Warren, Sanders, Biden)

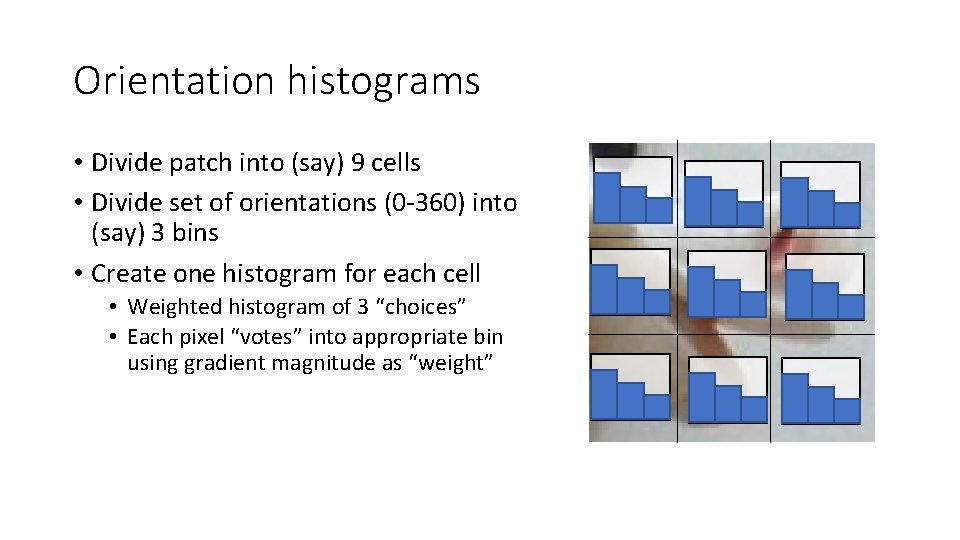

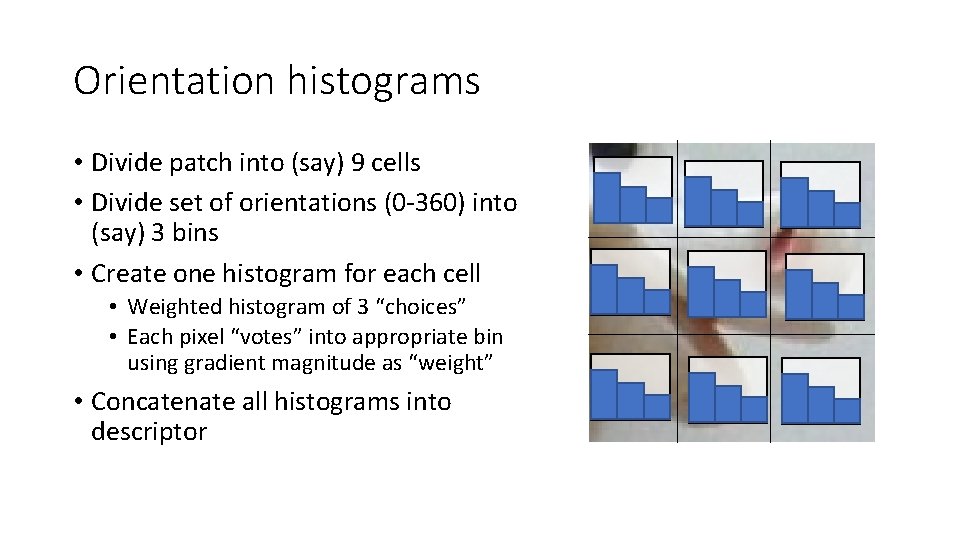

Orientation histograms • Divide patch into (say) 9 cells • Divide set of orientations (0 to 360 degrees) into (say) 3 bins • Create one histogram for each cell • Weighted histogram of 3 "choices" • Each pixel "votes" into appropriate bin using gradient magnitude as "weight" • Concatenate all histograms into descriptor
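A sketch of the recipe above, assuming a square patch whose gradients are given as 2D lists `grad_x` and `grad_y` (hypothetical names), with the 9 cells arranged as a 3 x 3 grid. Hard assignment is used for both cells and bins.

```python
import math

def orientation_histogram_descriptor(grad_x, grad_y, cells=3, bins=3):
    """Concatenate one magnitude-weighted orientation histogram per cell."""
    size = len(grad_x)
    bin_width = 360.0 / bins
    hist = [[[0.0] * bins for _ in range(cells)] for _ in range(cells)]
    for r in range(size):
        for c in range(size):
            gx, gy = grad_x[r][c], grad_y[r][c]
            magnitude = math.hypot(gx, gy)
            angle = math.degrees(math.atan2(gy, gx)) % 360.0
            # min() guards against rounding pushing an index to bins or cells.
            b = min(int(angle // bin_width), bins - 1)
            cell_r = min(r * cells // size, cells - 1)
            cell_c = min(c * cells // size, cells - 1)
            hist[cell_r][cell_c][b] += magnitude  # pixel votes with its magnitude
    return [v for row in hist for cell in row for v in cell]

# 9x9 patch whose gradients all point along +x with unit magnitude.
gx = [[1.0] * 9 for _ in range(9)]
gy = [[0.0] * 9 for _ in range(9)]
descriptor = orientation_histogram_descriptor(gx, gy)
print(len(descriptor), descriptor[:3])
```

With 9 cells and 3 bins the descriptor has 27 entries; in this toy patch every cell puts all of its 9 votes into the first bin.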

Orientation Histograms

The SIFT Descriptor Pipeline • Scale and rotation invariance as before: • Find dominant orientation and rotate till orientation is along X (say) • Use scale output by corner detector to crop patch of appropriate size • Divide into cells and construct orientation histograms per cell

![Rotation Invariance by Orientation Normalization [Lowe, SIFT, 1999]](http://slidetodoc.com/presentation_image_h2/0cf23e6c3b78e30ff3f4a52cfa16f162/image-28.jpg)

Rotation Invariance by Orientation Normalization [Lowe, SIFT, 1999] • Compute orientation histogram over entire patch • Select dominant orientation (mode of the histogram; alternative to eigenvector of second moment matrix) • Normalize: rotate patch to fixed orientation • Figure: orientation histogram over the range 0 to $2\pi$ • Slide credit: T. Tuytelaars, B. Leibe
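A minimal sketch of picking the dominant orientation as the mode of an orientation histogram. The default of 36 bins and the magnitude weighting are assumptions for illustration; the function name is hypothetical and the result is the center of the winning bin.

```python
import math

def dominant_orientation_mode(grad_x, grad_y, bins=36):
    """Return the center (radians) of the most-voted orientation bin."""
    width = 2.0 * math.pi / bins
    hist = [0.0] * bins
    for gx, gy in zip(grad_x, grad_y):
        angle = math.atan2(gy, gx) % (2.0 * math.pi)
        b = min(int(angle // width), bins - 1)  # guard against rounding at 2*pi
        hist[b] += math.hypot(gx, gy)           # vote weighted by gradient magnitude
    best = max(range(bins), key=hist.__getitem__)
    return (best + 0.5) * width

# Three strong gradients at 45 degrees and one weak outlier.
theta = dominant_orientation_mode([1.0, 2.0, 1.5, 0.1], [1.0, 2.0, 1.5, -0.3])
print(math.degrees(theta))
```

Unlike the eigenvector approach, the histogram mode gives a full 0 to $2\pi$ direction, at the cost of a resolution limited by the bin width.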

Tips and tricks 1: Weak edges • Lots of weak edge pixels with low gradient magnitude might dominate histogram • Orientation is unreliable for low gradient magnitude • Idea: Threshold gradient magnitude: only keep edges of high gradient magnitude

Tips and tricks 2: Soft voting • Small deformations can cause pixels on edge of cells to switch to new cell • If edge pixel, drastically different descriptor • Idea: soft voting • Pixel votes into 4 nearest cells based on distance • Similar to bilinear interpolation • Use same idea for orientation bins
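One way to sketch soft voting over cells: treat cell centers as grid points and split each pixel's vote bilinearly among the (up to) four nearest centers. The function name, the pixel-coordinate convention, and the choice to drop votes that fall outside the grid are assumptions.

```python
import math

def soft_cell_votes(x, y, cell_size, cells):
    """Return {(row, col): weight} for the cells nearest to pixel (x, y)."""
    # Continuous cell coordinates: integer values sit at cell centers.
    u = x / cell_size - 0.5
    v = y / cell_size - 0.5
    c0, r0 = math.floor(u), math.floor(v)
    fu, fv = u - c0, v - r0
    votes = {}
    for r, wr in ((r0, 1.0 - fv), (r0 + 1, fv)):
        for c, wc in ((c0, 1.0 - fu), (c0 + 1, fu)):
            # Skip zero weights and cells outside the grid.
            if 0 <= r < cells and 0 <= c < cells and wr * wc > 0:
                votes[(r, c)] = wr * wc
    return votes

print(soft_cell_votes(1.5, 1.5, cell_size=3, cells=3))  # at a cell center
print(soft_cell_votes(3.0, 1.5, cell_size=3, cells=3))  # on a cell boundary
```

A pixel at a cell center votes only for that cell, while a pixel on the boundary splits its vote evenly, so a small shift across the boundary changes the histograms gradually instead of abruptly.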

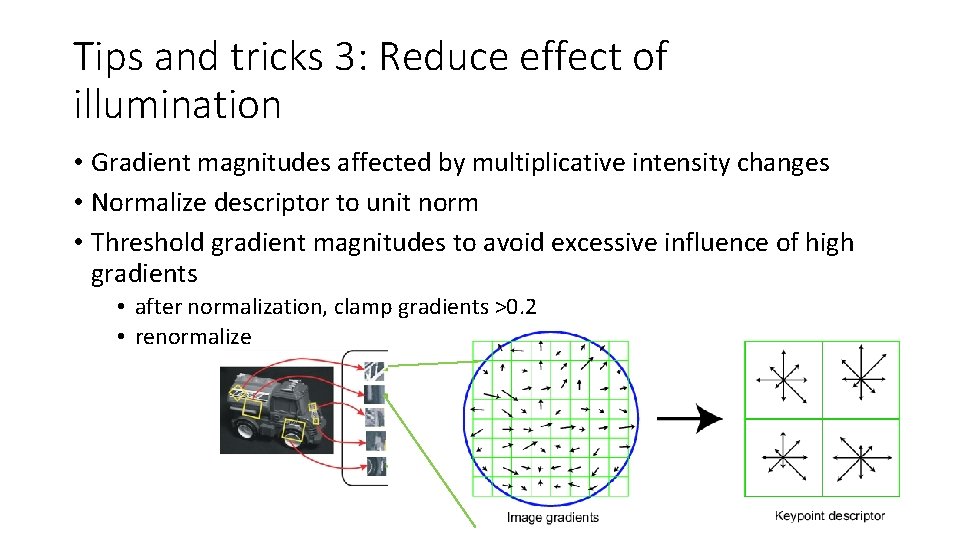

Tips and tricks 3: Reduce effect of illumination • Gradient magnitudes affected by multiplicative intensity changes • Normalize descriptor to unit norm • Threshold gradient magnitudes to avoid excessive influence of high gradients • After normalization, clamp values > 0.2 • Renormalize
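The normalize, clamp, renormalize sequence can be sketched as follows, assuming the descriptor is a flat list of non-negative histogram values. The function name is hypothetical; the 0.2 threshold is the one quoted above.

```python
import math

def normalize_descriptor(desc, clamp=0.2):
    """Unit-normalize, clamp large entries, then renormalize."""
    def unit(values):
        norm = math.sqrt(sum(v * v for v in values))
        return [v / norm for v in values] if norm > 0 else list(values)

    normalized = unit(desc)                         # removes multiplicative changes
    clamped = [min(v, clamp) for v in normalized]   # limits very strong gradients
    return unit(clamped)

desc = [10.0] + [1.0] * 15          # one dominant entry
out = normalize_descriptor(desc)
scaled = normalize_descriptor([3.0 * v for v in desc])
print(out[0], max(abs(a - b) for a, b in zip(out, scaled)))
```

Scaling every entry by the same factor leaves the result unchanged, and the dominant entry ends up with a noticeably smaller share than plain unit normalization would give it.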

Detail: Feature detection • We have talked about Harris corner detector • Other feature detectors available too • SIFT implementations usually use Difference-of-Gaussians (DoG) filters • Peaks in DoG response

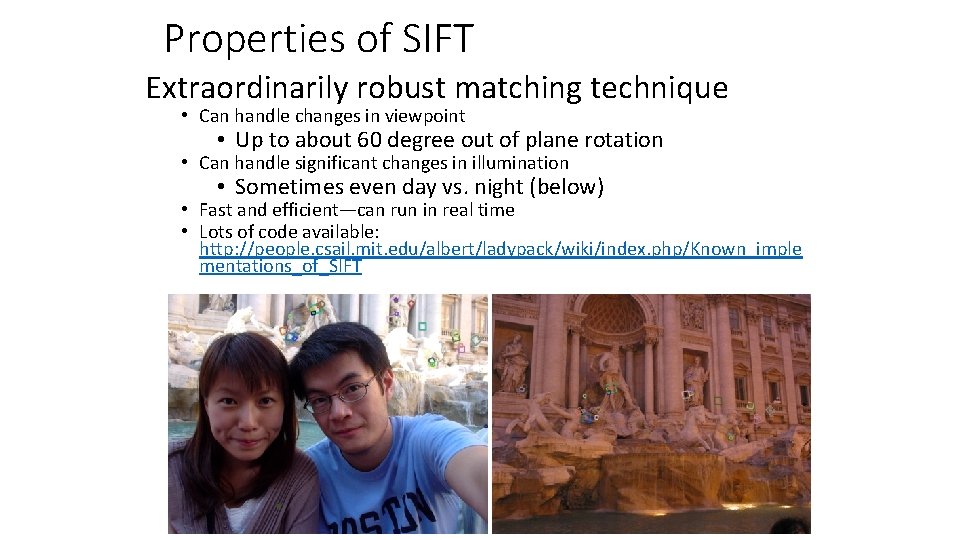

Properties of SIFT • Extraordinarily robust matching technique • Can handle changes in viewpoint • Up to about 60 degree out-of-plane rotation • Can handle significant changes in illumination • Sometimes even day vs. night (below) • Fast and efficient: can run in real time • Lots of code available: http://people.csail.mit.edu/albert/ladypack/wiki/index.php/Known_implementations_of_SIFT

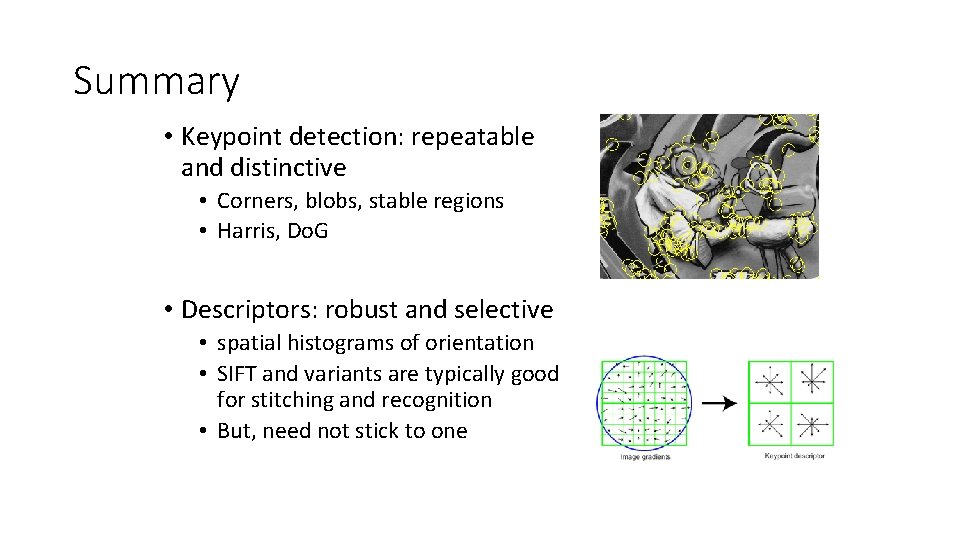

Summary • Keypoint detection: repeatable and distinctive • Corners, blobs, stable regions • Harris, DoG • Descriptors: robust and selective • Spatial histograms of orientation • SIFT and variants are typically good for stitching and recognition • But, need not stick to one

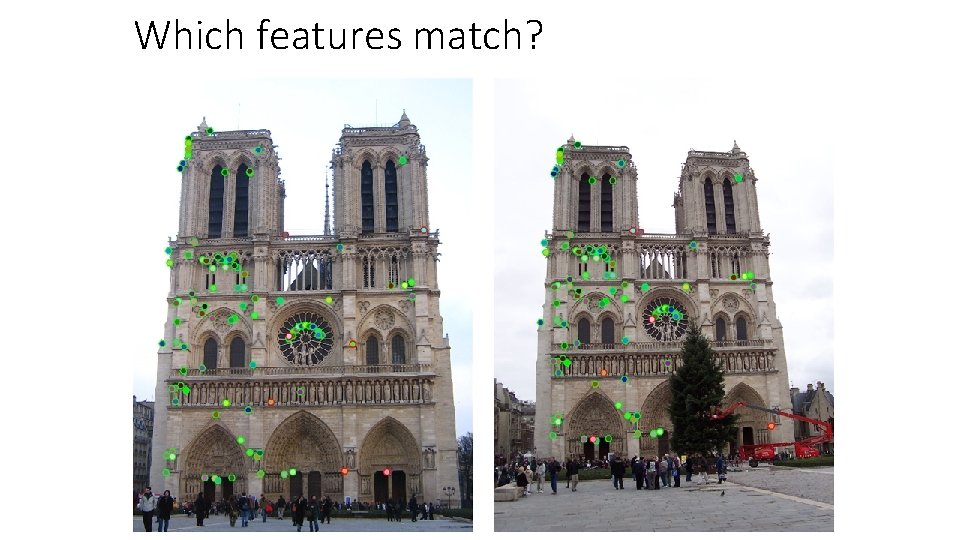

Which features match?

Feature matching • Given a feature in $I_1$, how to find the best match in $I_2$? • 1. Define distance function that compares two descriptors • 2. Test all the features in $I_2$, find the one with min distance
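The two steps can be sketched as a brute-force search. Euclidean (L2) distance is assumed as the distance function here, and descriptors are plain lists of floats; both function names are hypothetical.

```python
import math

def l2_distance(f1, f2):
    """Step 1: a distance function that compares two descriptors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(f1, f2)))

def best_match(feature, candidates):
    """Step 2: test every candidate, return (index, distance) of the closest."""
    distances = [l2_distance(feature, c) for c in candidates]
    index = min(range(len(candidates)), key=distances.__getitem__)
    return index, distances[index]

feature_in_i1 = [1.0, 0.0, 0.0]
features_in_i2 = [[0.0, 1.0, 0.0], [0.9, 0.1, 0.0], [0.0, 0.0, 1.0]]
print(best_match(feature_in_i1, features_in_i2))
```

This exhaustive search costs one distance evaluation per candidate, which is fine for a sketch; larger feature sets usually call for an approximate nearest-neighbor index.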

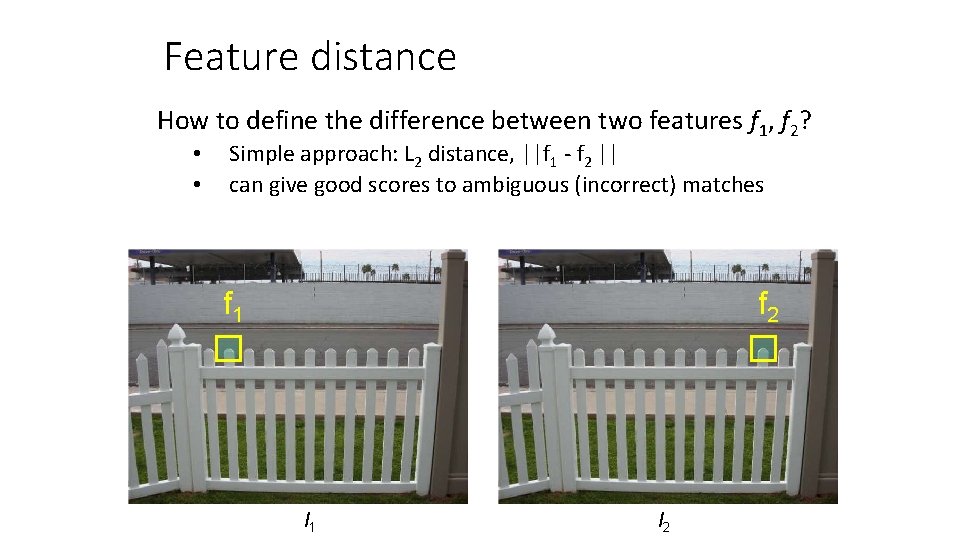

Feature distance • How to define the difference between two features $f_1$, $f_2$? • Simple approach: L2 distance, $\|f_1 - f_2\|$ • Can give good scores to ambiguous (incorrect) matches

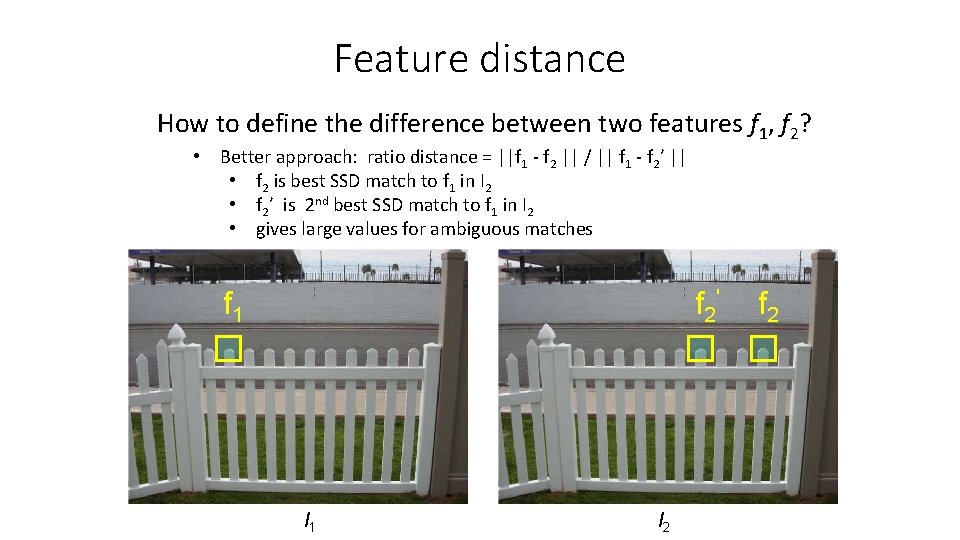

Feature distance • How to define the difference between two features $f_1$, $f_2$? • Better approach: ratio distance = $\|f_1 - f_2\| / \|f_1 - f_2'\|$ • $f_2$ is best SSD match to $f_1$ in $I_2$ • $f_2'$ is 2nd best SSD match to $f_1$ in $I_2$ • Gives large values (close to 1) for ambiguous matches
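A minimal sketch of the ratio distance, assuming at least two candidate features in $I_2$ and L2 distance between descriptors. The function names are hypothetical, and any acceptance threshold (a cutoff around 0.8 is a common choice) is not taken from the slides.

```python
import math

def l2_distance(f1, f2):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(f1, f2)))

def ratio_distance(feature, candidates):
    """Distance to the best match divided by distance to the 2nd best match."""
    distances = sorted(l2_distance(feature, c) for c in candidates)
    best, second = distances[0], distances[1]
    return best / second if second > 0 else 1.0  # identical candidates: ambiguous

f1 = [1.0, 0.0]
distinctive = [[1.0, 0.1], [0.0, 1.0], [-1.0, 0.0]]  # one clear winner
ambiguous = [[1.0, 0.1], [1.0, -0.1], [0.0, 1.0]]    # two equally good candidates
print(ratio_distance(f1, distinctive))
print(ratio_distance(f1, ambiguous))
```

Both cases have the same best L2 distance (0.1), so plain L2 cannot tell them apart, while the ratio is small for the distinctive match and 1 for the ambiguous one.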
