Ensemble Learning

Alexander Hefele, Rafael Pires

What? Definition: a combination of different machine learning algorithms to improve the overall result.

Outline: What? Why? How? Where & When?

What? Features & Labels

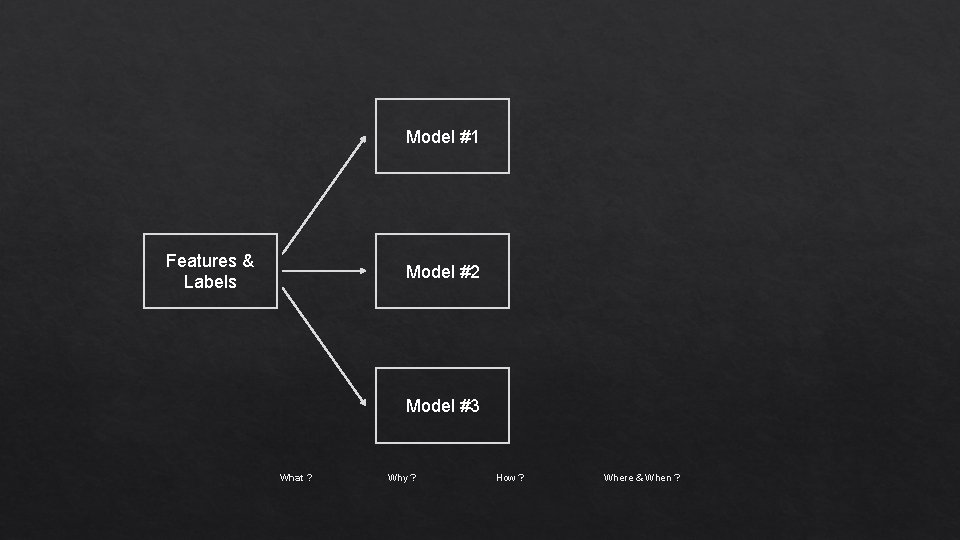

What? Features & Labels; Model #1, Model #2, Model #3

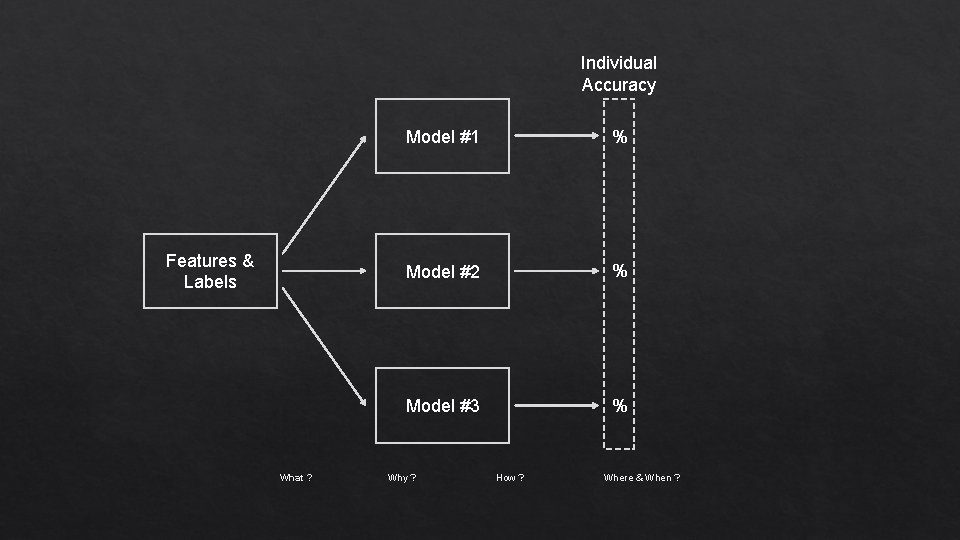

What? Features & Labels; Individual Accuracy: Model #1 %, Model #2 %, Model #3 %

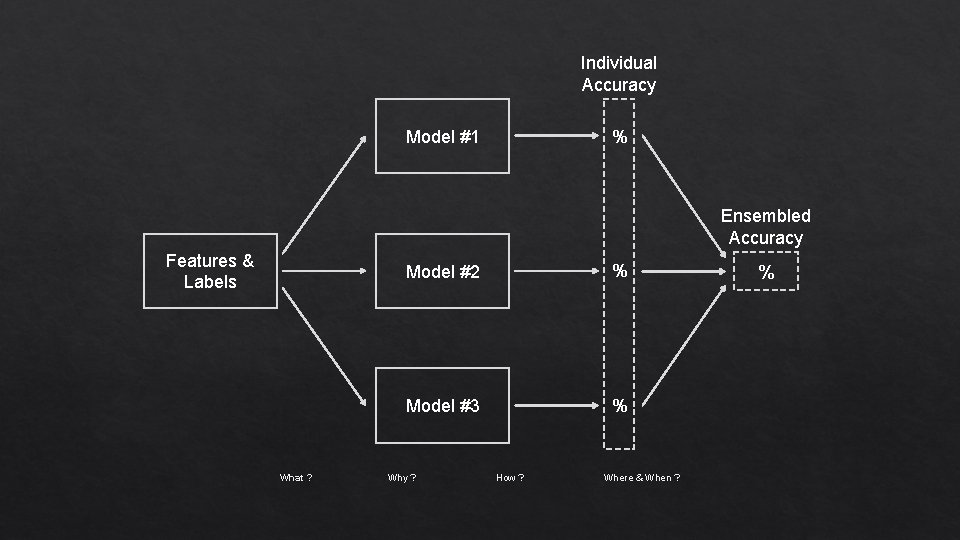

What? Features & Labels; Individual Accuracy: Model #1 %, Model #2 %, Model #3 %; Ensembled Accuracy: %

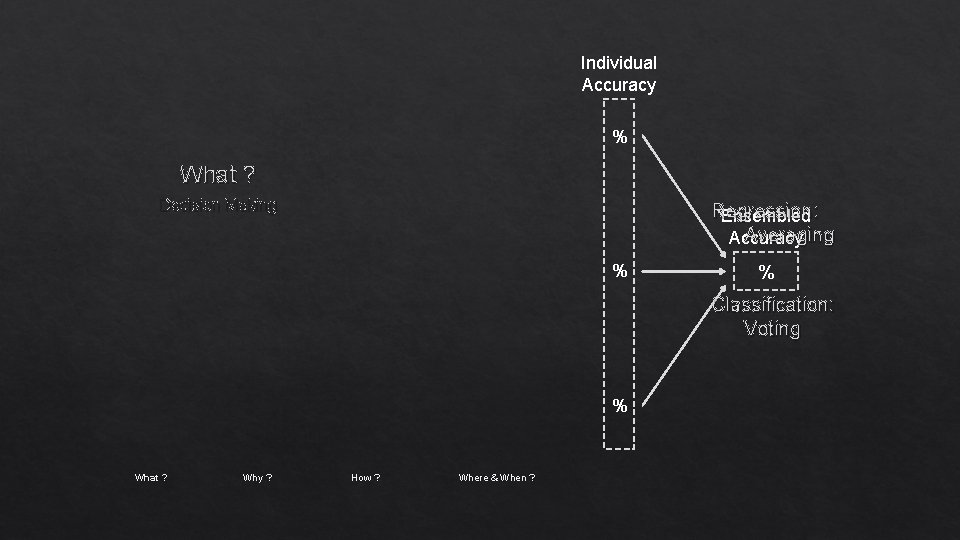

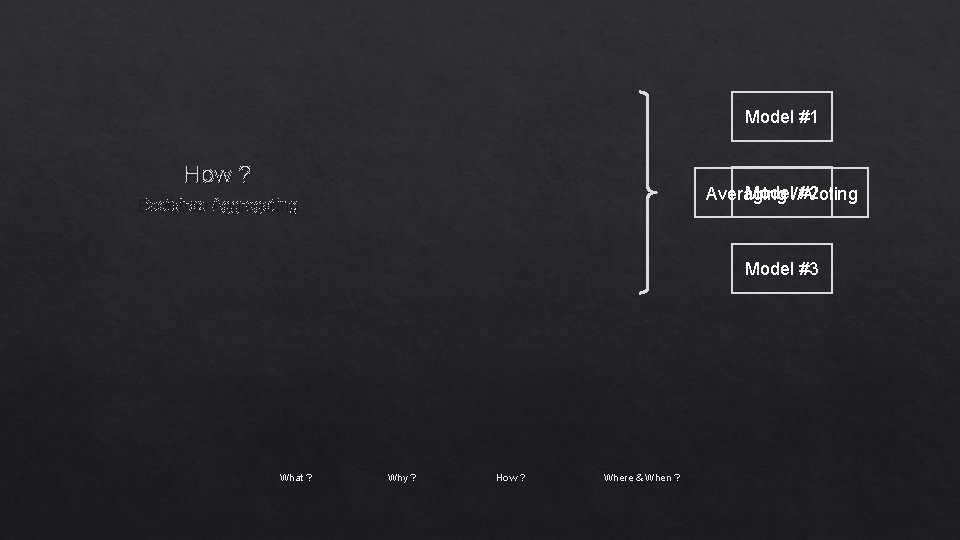

What? Decision Making: Averaging (regression), Voting (classification); Individual Accuracy: %, %, %; Ensembled Accuracy: %
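The two decision rules named on this slide can be sketched in a few lines. This is a minimal illustration, not the presenters' code; the function names `ensemble_average` and `ensemble_vote` and the sample predictions are hypothetical.

```python
from collections import Counter
from statistics import mean


def ensemble_average(predictions):
    """Regression: average the numeric predictions of all models."""
    return mean(predictions)


def ensemble_vote(predictions):
    """Classification: return the label predicted by the most models."""
    # most_common(1) returns [(label, count)]; on a tie, the label that
    # appears first in `predictions` wins.
    return Counter(predictions).most_common(1)[0][0]


# Hypothetical outputs of Model #1, Model #2, and Model #3 for one input.
print(ensemble_average([2.0, 3.0, 4.0]))     # 3.0
print(ensemble_vote(["cat", "dog", "cat"]))  # cat
```

Plain averaging and plain majority voting treat every model equally; weighting models by their individual accuracy is a common variation that is not shown here.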

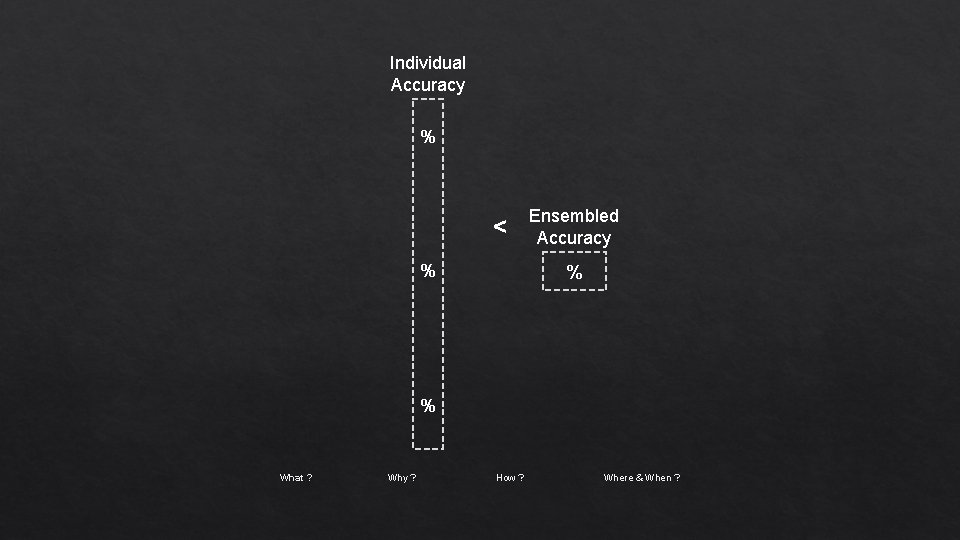

What? Individual Accuracy (%, %, %) < Ensembled Accuracy (%)
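One way to see why the ensembled accuracy can exceed every individual accuracy is to compute the probability that a majority of models is correct. The sketch below assumes a binary classification task and, importantly, that the models make their errors independently of each other, which real models rarely do exactly. The 70 % figure is a hypothetical value, not a number from the slides, and `majority_vote_accuracy` is an illustrative name.

```python
from itertools import product


def majority_vote_accuracy(accuracies):
    """Probability that more than half of the models are correct,
    assuming every model errs independently of the others."""
    total = 0.0
    # Enumerate every correct/wrong combination across the models.
    for outcome in product([True, False], repeat=len(accuracies)):
        prob = 1.0
        for correct, acc in zip(outcome, accuracies):
            prob *= acc if correct else 1.0 - acc
        # Count the combination only if a strict majority is correct.
        if sum(outcome) > len(accuracies) / 2:
            total += prob
    return total


# Three hypothetical models, each correct 70 % of the time.
print(round(majority_vote_accuracy([0.7, 0.7, 0.7]), 3))  # 0.784
```

Under these assumptions the vote is correct with probability 0.7³ + 3 · 0.7² · 0.3 = 0.784. The gain shrinks as the models' errors become more correlated.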

Why? Advantages
- lower error → better accuracy
- helps avoid overfitting → higher consistency
- reduces bias and variance errors

Why? Drawback: higher computational effort

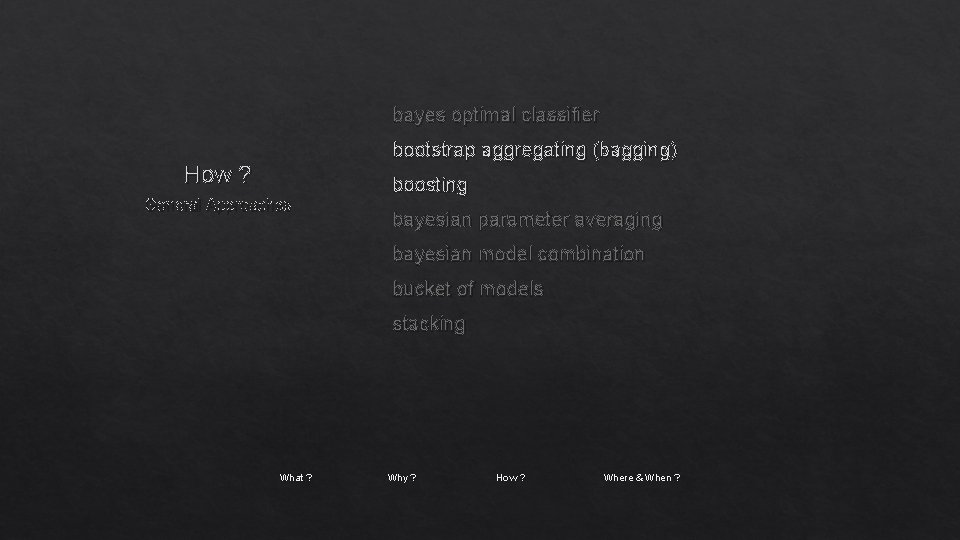

How? General Approaches
- Bayes optimal classifier
- bootstrap aggregating (bagging)
- boosting
- Bayesian parameter averaging
- Bayesian model combination
- bucket of models
- stacking

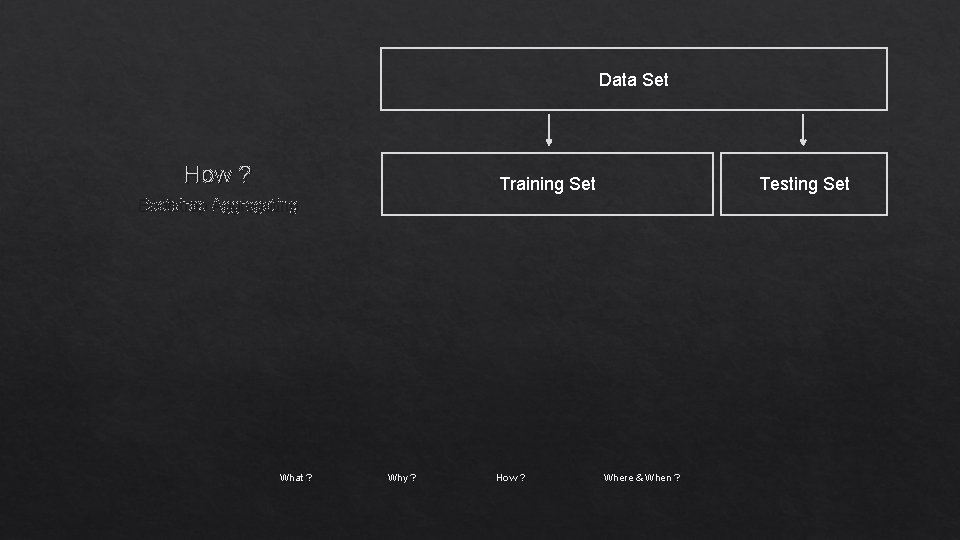

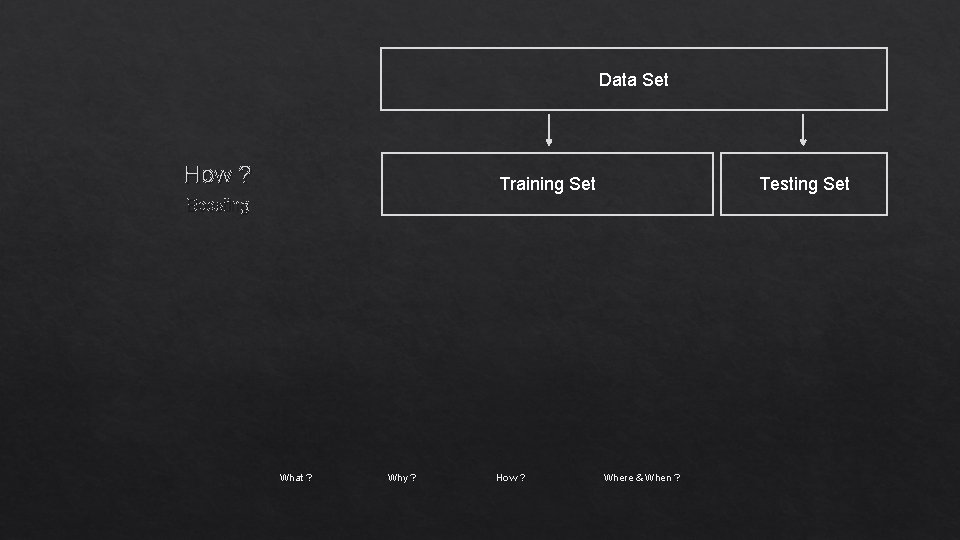

How? Bootstrap Aggregating: Data Set; Training Set, Testing Set

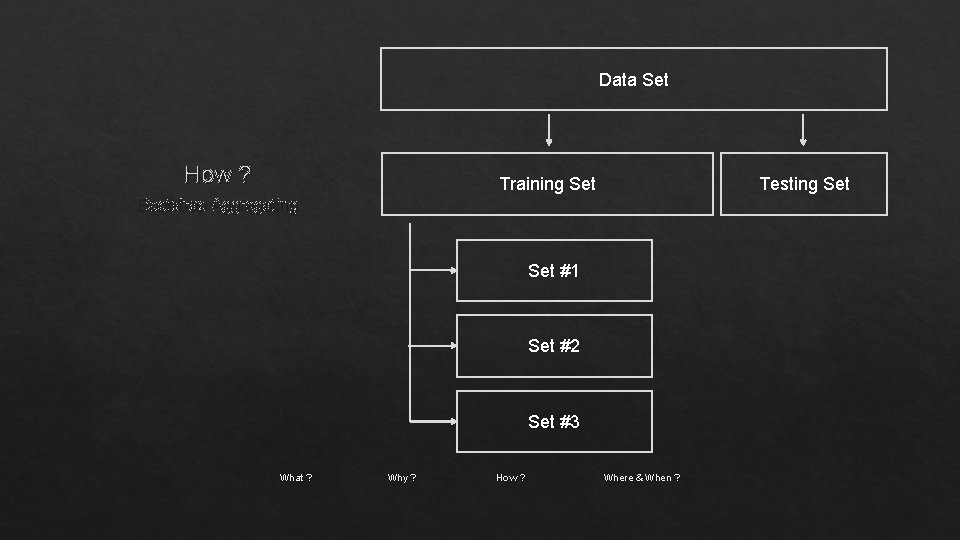

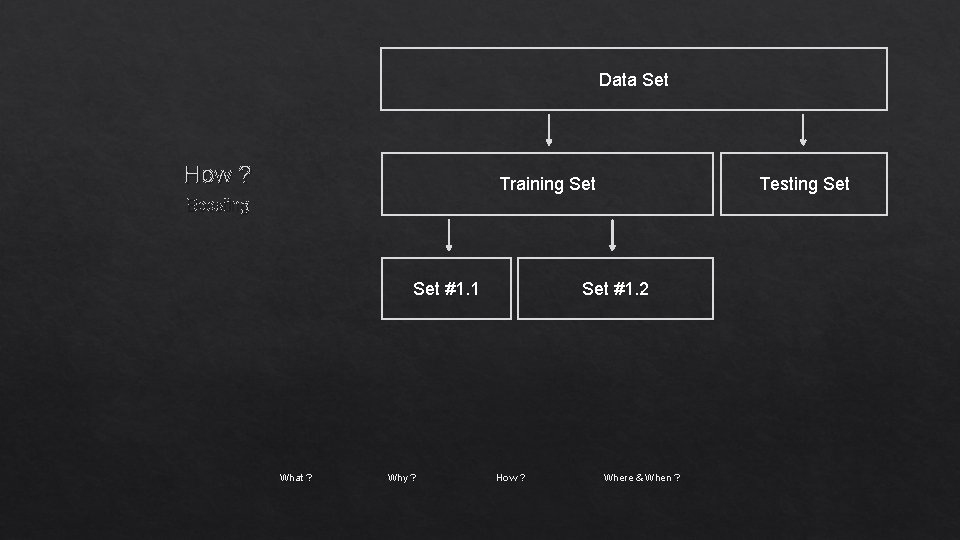

How? Bootstrap Aggregating: Data Set; Training Set, Testing Set; Set #1, Set #2, Set #3
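Creating Set #1 to Set #3 from the training set can be sketched as follows. Bootstrap sampling draws with replacement, so each set has the same size as the training set but may contain some examples several times and omit others. The helper name `bootstrap_sets` and the toy data are hypothetical.

```python
import random


def bootstrap_sets(training_set, n_sets, seed=0):
    """Draw n_sets samples, each the size of training_set, with replacement."""
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    return [
        rng.choices(training_set, k=len(training_set))
        for _ in range(n_sets)
    ]


# Toy training set of ten examples, identified by their index.
training_set = list(range(10))
for i, sample in enumerate(bootstrap_sets(training_set, n_sets=3), start=1):
    print(f"Set #{i}: {sorted(sample)}")
```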

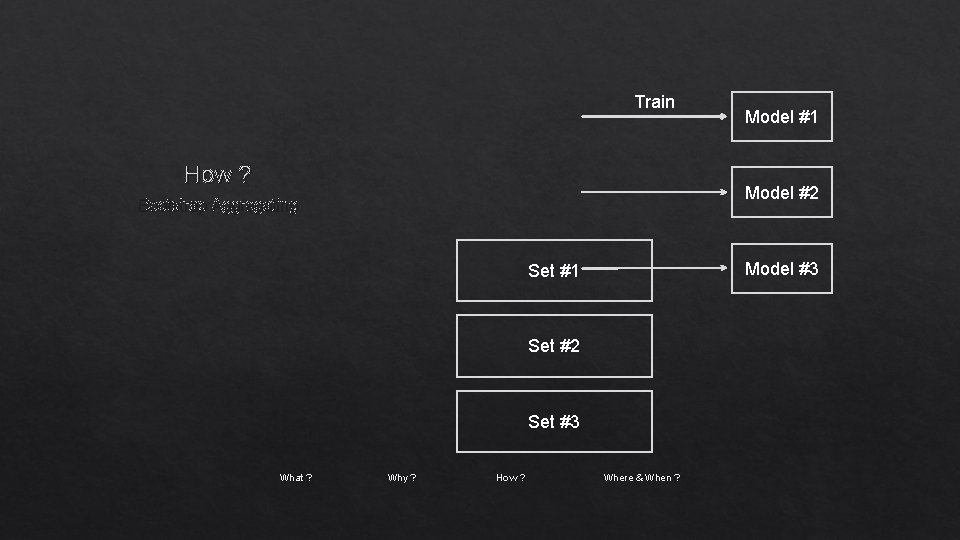

How? Bootstrap Aggregating: Train Model #1 on Set #1, Model #2 on Set #2, Model #3 on Set #3

How? Bootstrap Aggregating: Model #1, Model #2, Model #3; Averaging / Voting
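The bagging steps (bootstrap sets, one model per set, then voting) can be put together in a small end-to-end sketch. The base model here is a one-dimensional threshold classifier chosen only to keep the example short; the slides do not say which models were used, and all names (`train_stump`, `bagging_fit`, `bagging_predict`) and the toy data are hypothetical.

```python
import random
from collections import Counter


def train_stump(samples):
    """Fit a 1-D threshold classifier: predict 1 if x >= threshold, else 0.
    Picks the candidate threshold with the fewest training errors."""
    best_threshold, best_errors = None, None
    for threshold, _ in samples:
        errors = sum((x >= threshold) != bool(y) for x, y in samples)
        if best_errors is None or errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold


def predict(threshold, x):
    return int(x >= threshold)


def bagging_fit(training_set, n_models=3, seed=0):
    """Train one model per bootstrap set (sampled with replacement)."""
    rng = random.Random(seed)
    return [
        train_stump(rng.choices(training_set, k=len(training_set)))
        for _ in range(n_models)
    ]


def bagging_predict(models, x):
    """Classification: majority vote over all models."""
    votes = Counter(predict(threshold, x) for threshold in models)
    return votes.most_common(1)[0][0]


# Toy data: the label is 1 when x >= 5.
training_set = [(x, int(x >= 5)) for x in range(10)]
models = bagging_fit(training_set)
print(bagging_predict(models, 0), bagging_predict(models, 9))
```

For regression, the vote in `bagging_predict` would be replaced by the mean of the models' numeric predictions.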

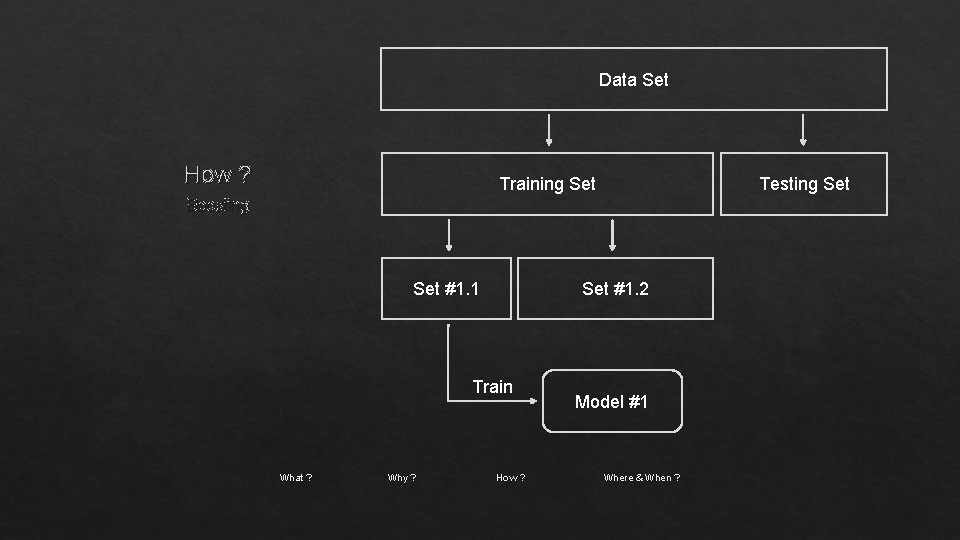

How? Boosting: Data Set; Training Set, Testing Set

How? Boosting: Data Set; Training Set, Testing Set; Set #1.1, Set #1.2

How? Boosting: Data Set; Training Set, Testing Set; Set #1.1, Set #1.2; Train Model #1

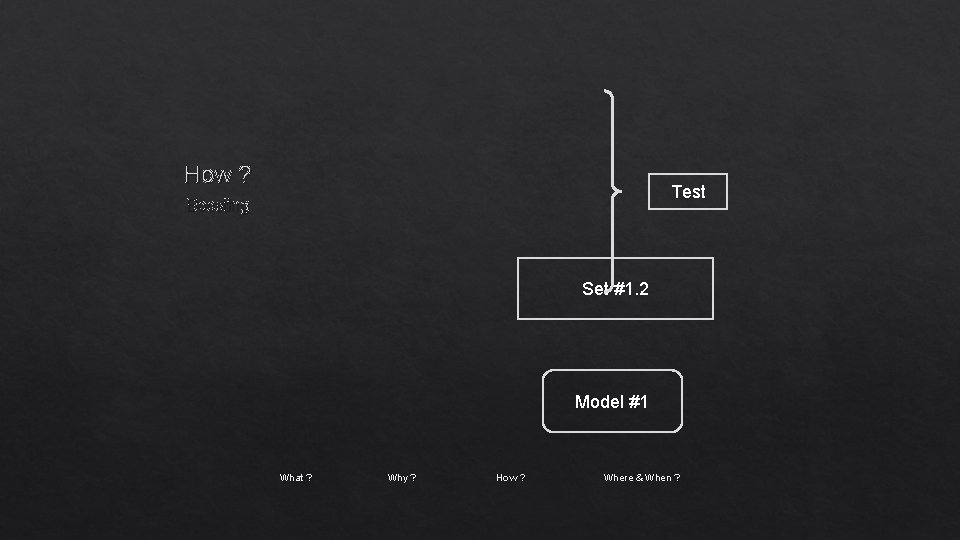

How? Boosting: Test Model #1 on Set #1.2

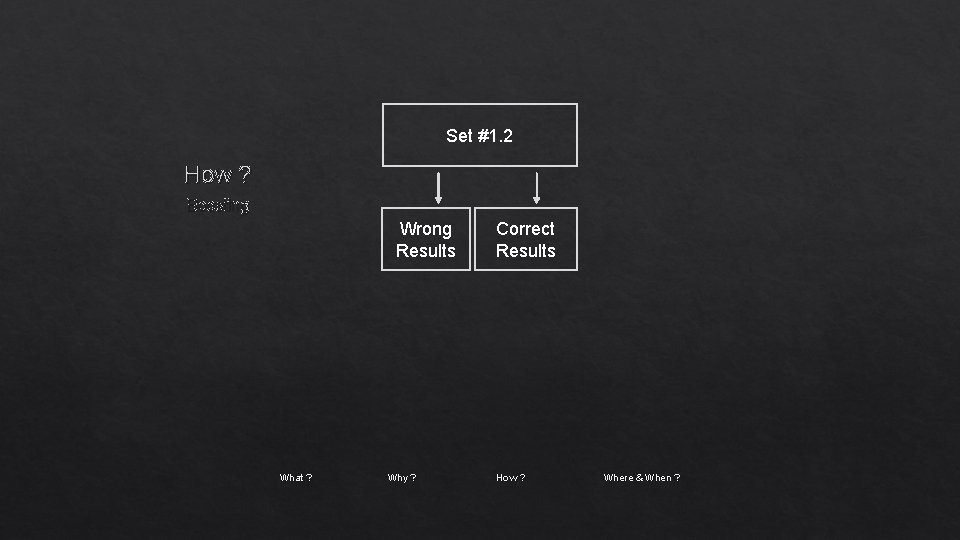

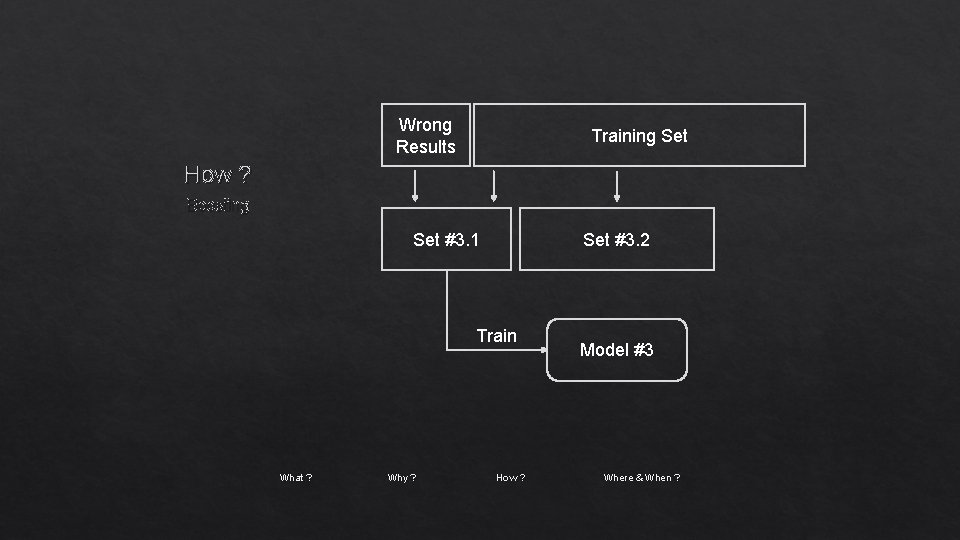

How? Boosting: Set #1.2; Wrong Results, Correct Results

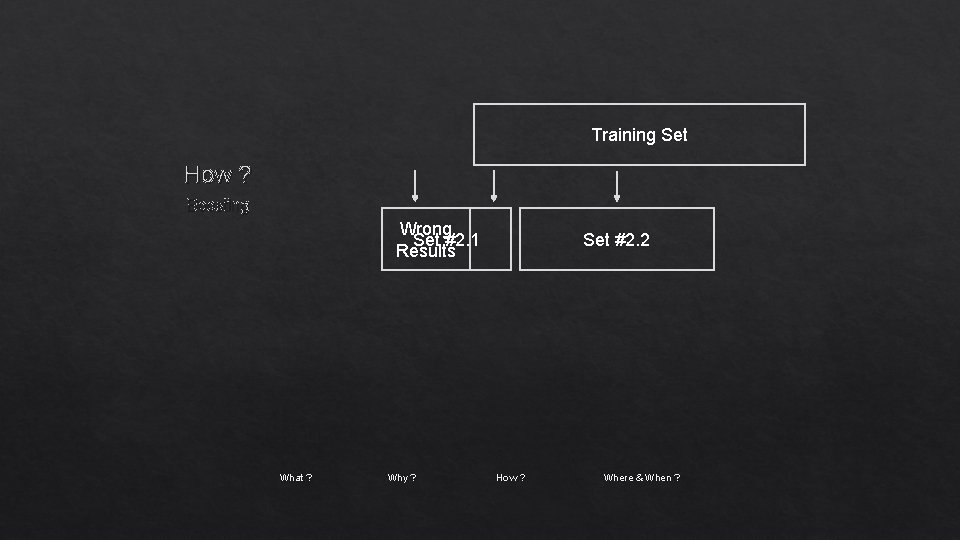

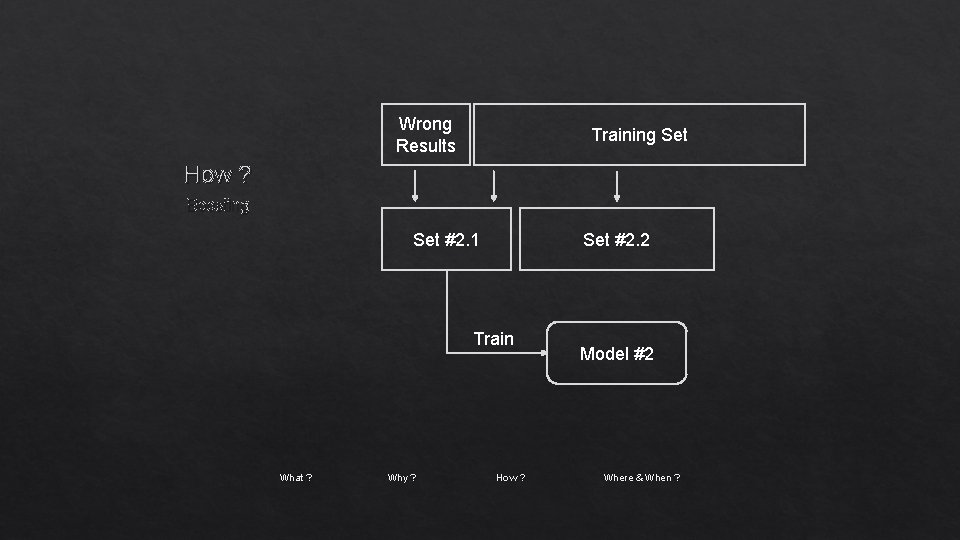

How? Boosting: Training Set, Wrong Results; Set #2.1, Set #2.2

How? Boosting: Training Set, Wrong Results; Set #2.1, Set #2.2; Train Model #2

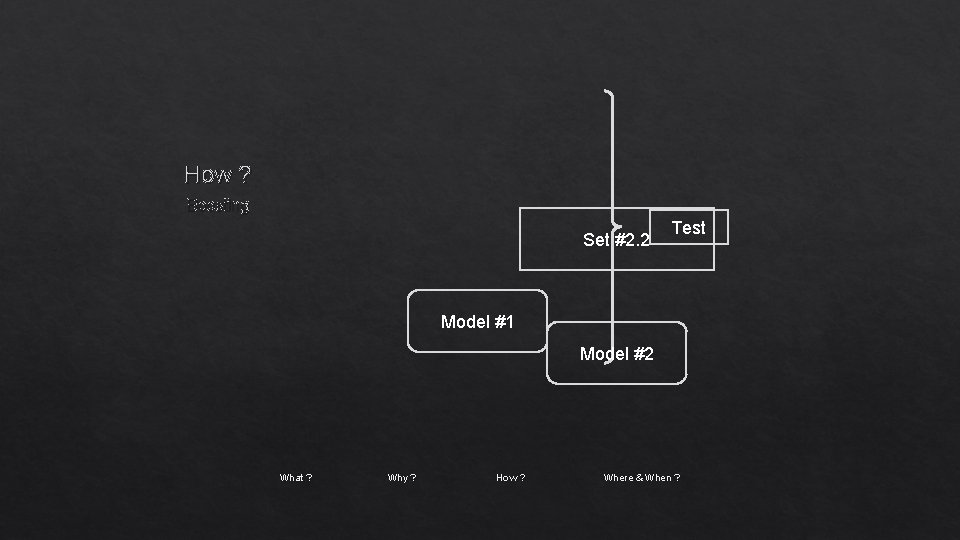

How? Boosting: Test Model #1 and Model #2 on Set #2.2

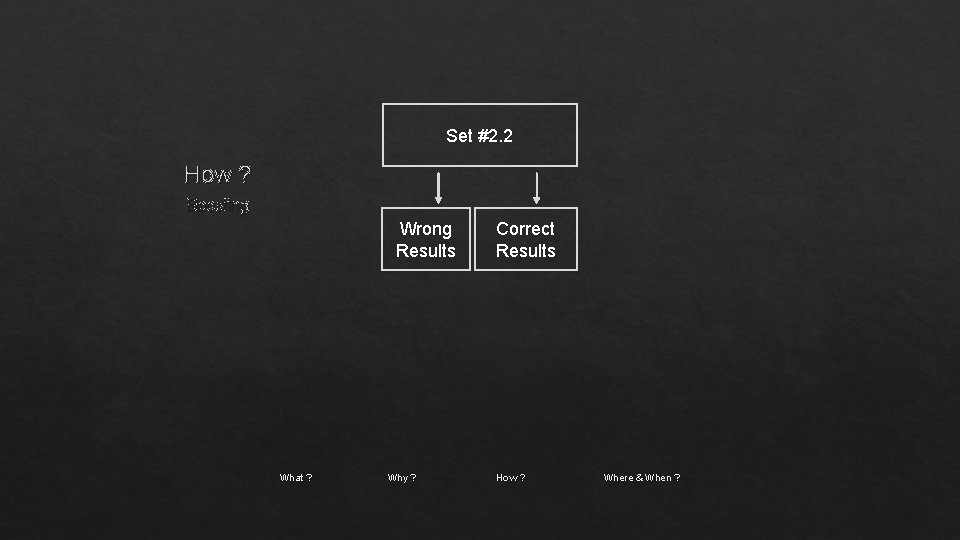

How? Boosting: Set #2.2; Wrong Results, Correct Results

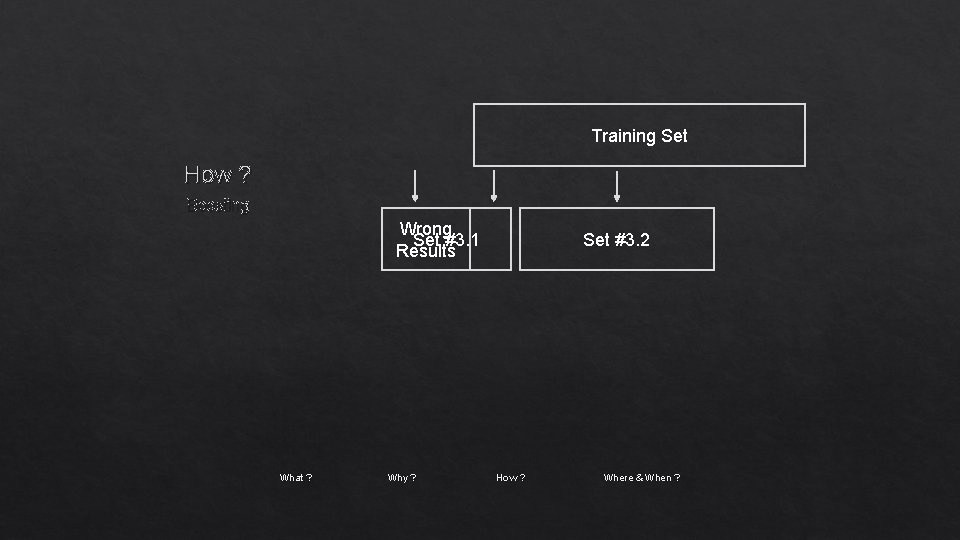

How? Boosting: Training Set, Wrong Results; Set #3.1, Set #3.2

How? Boosting: Training Set, Wrong Results; Set #3.1, Set #3.2; Train Model #3

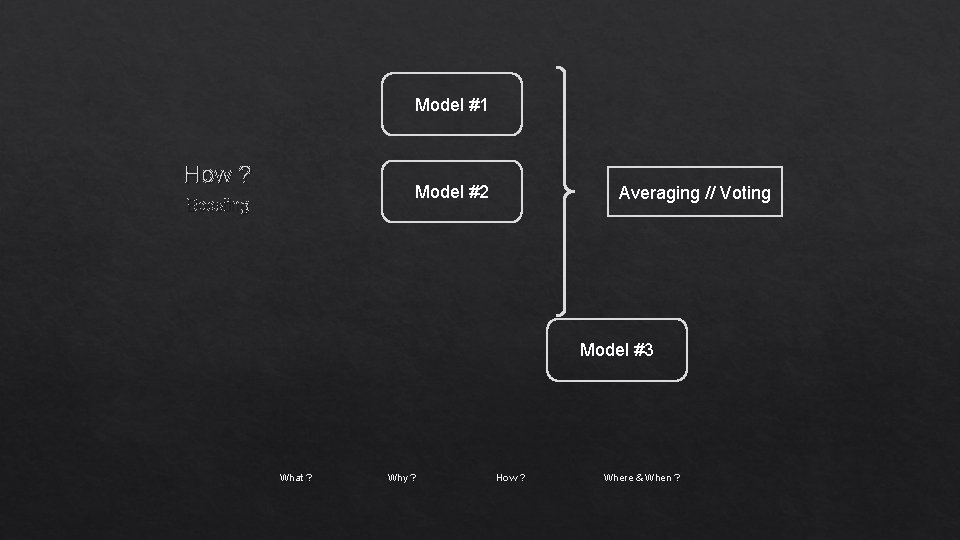

How? Boosting: Model #1, Model #2, Model #3; Averaging / Voting
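The boosting walkthrough can be read as the loop below: split the current pool in two, train a model on the first part, test the models so far on the second part, and add the wrongly classified examples back to the training set for the next round. This is one possible interpretation of the slides, not the presenters' implementation. Widely used algorithms such as AdaBoost instead reweight all examples and weight each model's vote. The base model, the function names, and the toy data are hypothetical.

```python
import random
from collections import Counter


def train_stump(samples):
    """Fit a 1-D threshold classifier: predict 1 if x >= threshold, else 0."""
    best_threshold, best_errors = None, None
    for threshold, _ in samples:
        errors = sum((x >= threshold) != bool(y) for x, y in samples)
        if best_errors is None or errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold


def vote(models, x):
    """Majority vote of all models trained so far."""
    votes = Counter(int(x >= threshold) for threshold in models)
    return votes.most_common(1)[0][0]


def boost(training_set, n_rounds=3, seed=0):
    rng = random.Random(seed)
    models, wrong = [], []
    for _ in range(n_rounds):
        # Assumption: misclassified examples are appended again, so they
        # are more likely to influence the next model.
        pool = training_set + wrong
        rng.shuffle(pool)
        half = len(pool) // 2
        fit_part, check_part = pool[:half], pool[half:]  # Set #k.1, Set #k.2
        models.append(train_stump(fit_part))             # Train Model #k
        # Test the models so far on Set #k.2 and keep the wrong results.
        wrong = [(x, y) for x, y in check_part if vote(models, x) != y]
    return models


# Toy data: the label is 1 when x >= 5.
training_set = [(x, int(x >= 5)) for x in range(10)]
models = boost(training_set)
print(vote(models, 0), vote(models, 9))
```

Unlike bagging, the models here are trained one after another, because each round depends on the errors of the previous ones.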

Where & When?
- when a single model overfits
- when the results are worth the extra training
- usable for both classification and regression

- Slides: 29