Distributed File Systems By Ryan Middleton


Outline

- Introduction
  - Definition
  - Example architecture
- Early implementations
  - NFS
  - AFS
- Later advancements
  - Journal articles
  - Patent applications
- Conclusion

(Slide annotation: a brace marks part of this outline as “In the Book”.)

Introduction: Definition

- Non-distributed file systems
  - Provide a “nice” interface for working with disks at the OS level, built on the concept of “files”
  - Also provide file locking, access control, file organization, and more
- Distributed file systems
  - Share files (possibly distributed) with multiple remote clients
  - Ideally provide
    - Transparency: access, location, mobility, performance, scaling
    - Concurrency control/consistency
    - Replication support
    - Hardware and OS heterogeneity
    - Fault tolerance
    - Security
    - Efficiency
  - Challenges: availability, load balancing, reliability, security, concurrency control

SOURCE: Coulouris, G. F., Dollimore, J., and Kindberg, T. 2005. Distributed Systems: Concepts and Design. Addison-Wesley Longman.

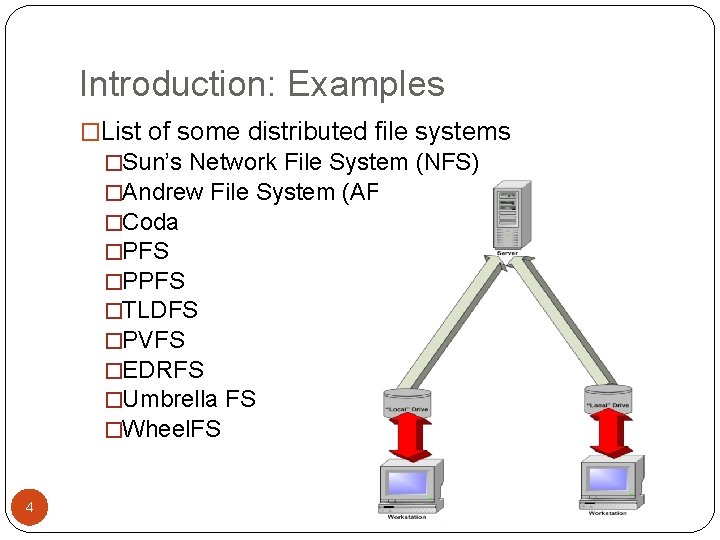

Introduction: Examples

- List of some distributed file systems
  - Sun’s Network File System (NFS)
  - Andrew File System (AFS)
  - Coda
  - PFS
  - PPFS
  - TLDFS
  - PVFS
  - EDRFS
  - Umbrella FS
  - WheelFS

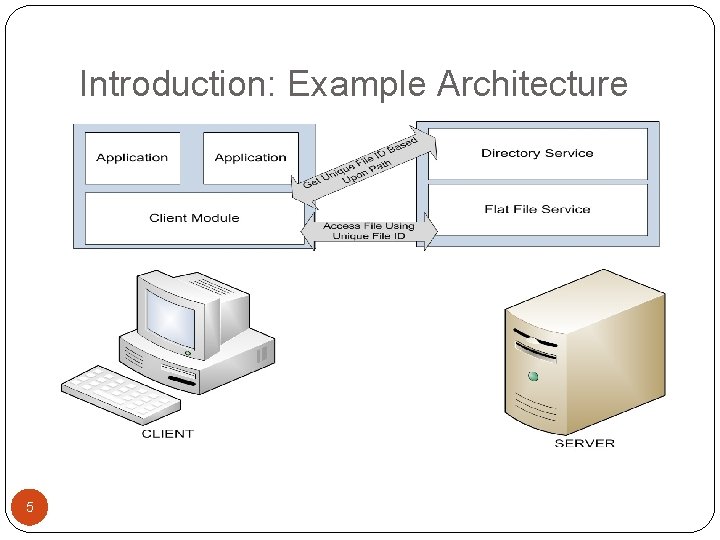

Introduction: Example Architecture

Early Implementations: Sun’s Network File System (NFS)

- First distributed file system to be developed as a commercial product (1985)
- Originally implemented using the UNIX 4.2 kernel (BSD)
- Design goals:
  - Architecture and OS independent
  - Crash recovery (server crashes)
  - Access transparency
  - UNIX file system semantics
  - “Reasonable performance” (1985): “about 80% as fast as a local disk.”

SOURCE: Sandberg, R., Goldberg, D., Kleiman, S., Walsh, D., and Lyon, B. 1985. Design and implementation of the Sun network filesystem. In Proceedings of the Summer 1985 USENIX Conference, 119–130.

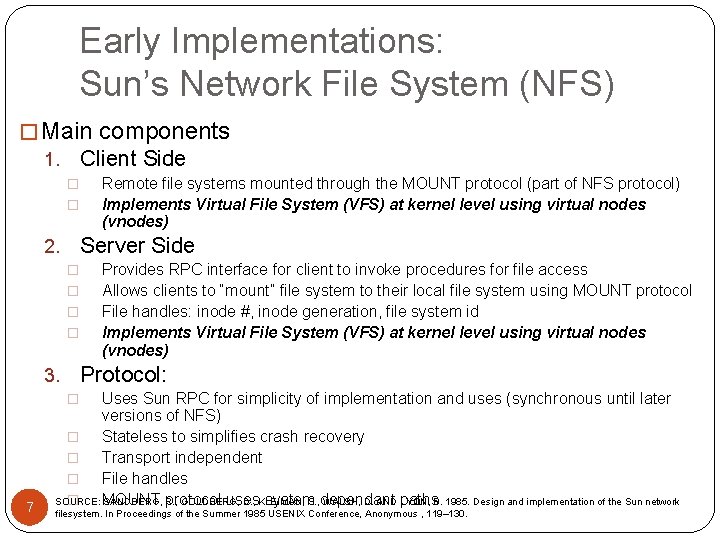

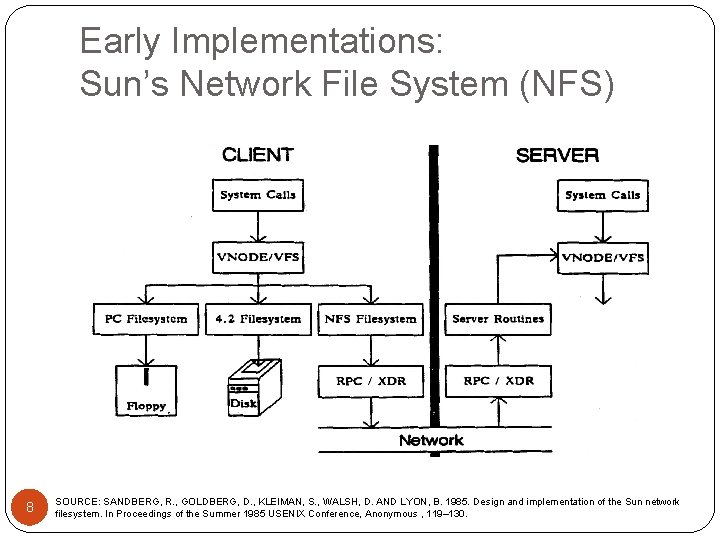

Early Implementations: Sun’s Network File System (NFS)

Main components

1. Client side
   - Remote file systems mounted through the MOUNT protocol (part of the NFS protocol)
   - Implements Virtual File System (VFS) at kernel level using virtual nodes (vnodes)
2. Server side
   - Provides an RPC interface for clients to invoke procedures for file access
   - Allows clients to “mount” a file system into their local file system using the MOUNT protocol
   - File handles: inode #, inode generation, file system id
   - Implements Virtual File System (VFS) at kernel level using virtual nodes (vnodes)
3. Protocol
   - Uses Sun RPC for simplicity of implementation and use (synchronous until later versions of NFS)
   - Stateless, to simplify crash recovery
   - Transport independent
   - File handles
   - MOUNT protocol uses system-dependent paths

SOURCE: Sandberg, R., Goldberg, D., Kleiman, S., Walsh, D., and Lyon, B. 1985. Design and implementation of the Sun network filesystem. In Proceedings of the Summer 1985 USENIX Conference, 119–130.
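To make the file-handle and statelessness points concrete, here is a minimal Python sketch. It is an illustration only, not the NFS wire format: the class names `FileHandle` and `StatelessServer`, the in-memory dictionary, and the `create` helper are all assumptions made for the example. The only things taken from the slide are the three handle fields and the idea that the server keeps no per-client state.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileHandle:
    """Hypothetical handle with the three fields named on the slide."""
    fs_id: int        # which exported file system
    inode: int        # inode number within that file system
    generation: int   # changes when the inode number is reused


class StatelessServer:
    """Toy server: no open-file table, no per-client state between calls."""

    def __init__(self):
        # (fs_id, inode) -> (generation, file contents)
        self.files = {}

    def create(self, fs_id, inode, data, generation=0):
        self.files[(fs_id, inode)] = (generation, data)
        return FileHandle(fs_id, inode, generation)

    def read(self, handle, offset, count):
        # Each request carries everything needed to serve it.
        entry = self.files.get((handle.fs_id, handle.inode))
        if entry is None or entry[0] != handle.generation:
            raise LookupError("stale file handle")
        return entry[1][offset:offset + count]


server = StatelessServer()
fh = server.create(fs_id=1, inode=42, data=b"hello world")
print(server.read(fh, 6, 5))
```

Because every `read` names the file, offset, and length explicitly, a server that crashes and restarts can serve the next request without reconstructing any session. The generation field lets it reject handles that refer to a since-reused inode.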

Early Implementations: Sun’s Network File System (NFS)

SOURCE: Sandberg, R., Goldberg, D., Kleiman, S., Walsh, D., and Lyon, B. 1985. Design and implementation of the Sun network filesystem. In Proceedings of the Summer 1985 USENIX Conference, 119–130.

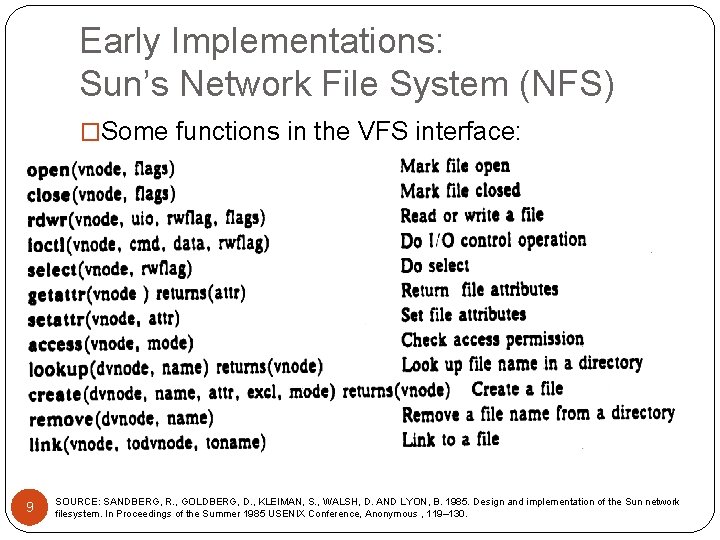

Early Implementations: Sun’s Network File System (NFS)

- Some functions in the VFS interface:

SOURCE: Sandberg, R., Goldberg, D., Kleiman, S., Walsh, D., and Lyon, B. 1985. Design and implementation of the Sun network filesystem. In Proceedings of the Summer 1985 USENIX Conference, 119–130.

Early Implementations: Sun’s Network File System (NFS)

- Later developments
  - Automounter
  - Client/server caching
    - Clients must poll server copies to validate cache using timestamps
  - Faster and more efficient local file systems
  - Better security than originally implemented, using the Kerberos authentication system
- Performance improvements
  - Very effective
  - Depends on hardware, network, dedicated OS

SOURCES:
Coulouris, G. F., Dollimore, J., and Kindberg, T. 2005. Distributed Systems: Concepts and Design. Addison-Wesley Longman.
Sandberg, R., Goldberg, D., Kleiman, S., Walsh, D., and Lyon, B. 1985. Design and implementation of the Sun network filesystem. In Proceedings of the Summer 1985 USENIX Conference, 119–130.
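The timestamp-polling idea can be sketched in a few lines of Python. This is a simplified model under stated assumptions: `Server`, `CachingClient`, and the logical `clock` counter are invented for the example, and the client polls on every read, whereas a real client would typically poll only after some freshness interval has elapsed.

```python
class Server:
    def __init__(self):
        self.data = {}   # path -> (mtime, contents)
        self.clock = 0   # logical clock standing in for wall-clock time

    def write(self, path, contents):
        self.clock += 1
        self.data[path] = (self.clock, contents)

    def getattr(self, path):
        """Cheap call: return only the modification timestamp."""
        return self.data[path][0]

    def read(self, path):
        """Expensive call: return (mtime, contents)."""
        return self.data[path]


class CachingClient:
    def __init__(self, server):
        self.server = server
        self.cache = {}      # path -> (mtime, contents)
        self.fetches = 0     # counts full reads, for illustration

    def read(self, path):
        cached = self.cache.get(path)
        # Poll the server: the cached copy is valid only if timestamps match.
        if cached is not None and cached[0] == self.server.getattr(path):
            return cached[1]
        self.fetches += 1
        self.cache[path] = self.server.read(path)
        return self.cache[path][1]
```

The client, not the server, is responsible for noticing staleness here, which is the main contrast with the callback approach used by AFS.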

Early Implementations: Sun’s Network File System (NFS)

- Characteristics
  - Access transparency: yes, through client module using VFS
  - Location transparency: yes, remote file systems mounted into local file system
  - Mobility transparency: mostly, remote mount tables must be updated with each move
  - Scalability: yes, can handle large loads efficiently (economic and performance)
  - File replication: limited, read-only replication is supported and multiple remote file systems may be given to the automounter
  - Security: yes, using Kerberos authentication
  - Fault tolerance, heterogeneity, efficiency, and consistency: yes

SOURCES:
Coulouris, G. F., Dollimore, J., and Kindberg, T. 2005. Distributed Systems: Concepts and Design. Addison-Wesley Longman.
Sandberg, R., Goldberg, D., Kleiman, S., Walsh, D., and Lyon, B. 1985. Design and implementation of the Sun network filesystem. In Proceedings of the Summer 1985 USENIX Conference, 119–130.

Early Implementations: Andrew File System (AFS)

- Provides transparent access for UNIX programs
  - Uses UNIX file primitives
- Compatible with NFS
  - Files referenced using handles like NFS
  - NFS client may access data from AFS “server”
- Design goals
  - Access transparency
  - Scalability (most important)
  - Performance requirements met by serving whole files and caching them at clients

SOURCE: Coulouris, G. F., Dollimore, J., and Kindberg, T. 2005. Distributed Systems: Concepts and Design. Addison-Wesley Longman.

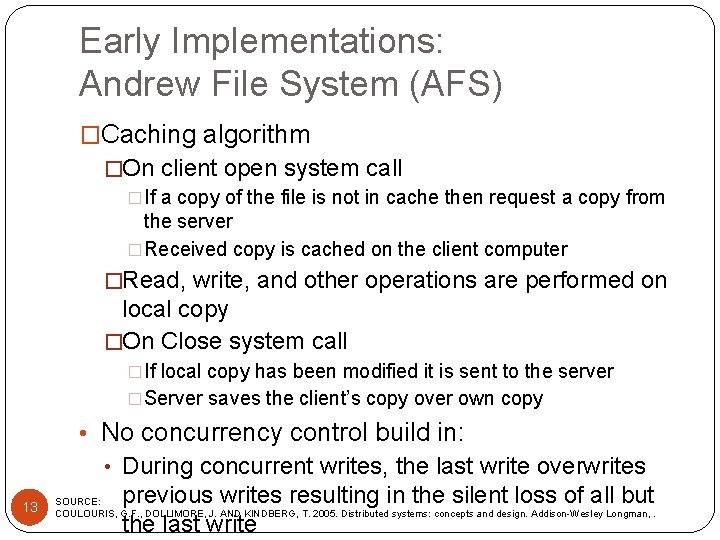

Early Implementations: Andrew File System (AFS)

- Caching algorithm
  - On client open system call
    - If a copy of the file is not in cache, then request a copy from the server
    - Received copy is cached on the client computer
  - Read, write, and other operations are performed on the local copy
  - On close system call
    - If the local copy has been modified, it is sent to the server
    - Server saves the client’s copy over its own copy
- No concurrency control built in:
  - During concurrent writes, the last write overwrites previous writes, resulting in the silent loss of all but the last write

SOURCE: Coulouris, G. F., Dollimore, J., and Kindberg, T. 2005. Distributed Systems: Concepts and Design. Addison-Wesley Longman.
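The open/close algorithm above, and the lost-update problem it creates, can be sketched as follows. This is a toy model, not AFS code: `Server`, `Client`, the `dirty` set, and the append-style `write` are assumptions chosen to keep the example short.

```python
class Server:
    def __init__(self):
        self.files = {}  # name -> bytes

    def fetch(self, name):
        return self.files.get(name, b"")

    def store(self, name, data):
        # The server simply saves the client's copy over its own.
        self.files[name] = data


class Client:
    def __init__(self, server):
        self.server = server
        self.cache = {}     # name -> bytearray (whole-file local copy)
        self.dirty = set()  # names modified since open

    def open(self, name):
        # Fetch the whole file only if it is not already cached.
        if name not in self.cache:
            self.cache[name] = bytearray(self.server.fetch(name))

    def write(self, name, data):
        # All reads and writes touch the local copy only.
        self.cache[name] += data
        self.dirty.add(name)

    def close(self, name):
        # Modified copies are shipped back to the server on close.
        if name in self.dirty:
            self.server.store(name, bytes(self.cache[name]))
            self.dirty.discard(name)


server = Server()
a, b = Client(server), Client(server)
a.open("notes"); b.open("notes")
a.write("notes", b"from A")
b.write("notes", b"from B")
a.close("notes")
b.close("notes")          # last close wins
print(server.files["notes"])
```

After both closes, only the second client's contents survive on the server. The first client's write is lost silently, which is the behaviour the slide warns about.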

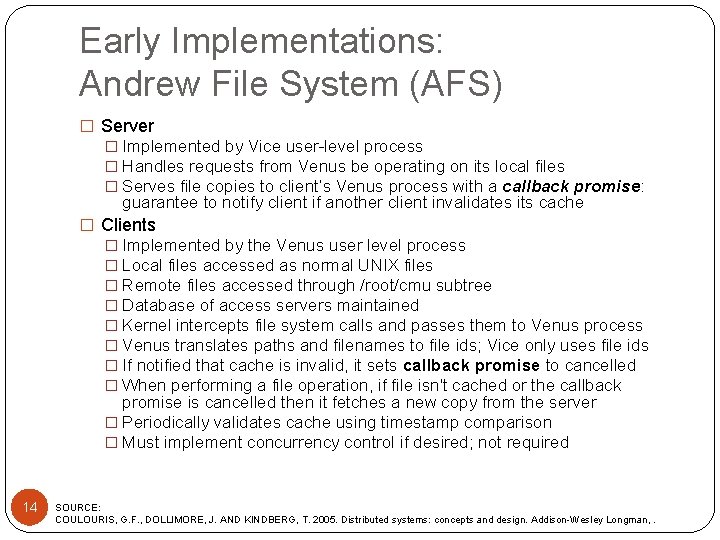

Early Implementations: Andrew File System (AFS)

- Server
  - Implemented by the Vice user-level process
  - Handles requests from Venus by operating on its local files
  - Serves file copies to the client’s Venus process with a callback promise: a guarantee to notify the client if another client invalidates its cache
- Clients
  - Implemented by the Venus user-level process
  - Local files accessed as normal UNIX files
  - Remote files accessed through /root/cmu subtree
  - Database of access servers maintained
  - Kernel intercepts file system calls and passes them to the Venus process
  - Venus translates paths and filenames to file ids; Vice only uses file ids
  - If notified that the cache is invalid, it sets the callback promise to cancelled
  - When performing a file operation, if the file isn't cached or the callback promise is cancelled, then it fetches a new copy from the server
  - Periodically validates cache using timestamp comparison
  - Must implement concurrency control if desired; not required

SOURCE: Coulouris, G. F., Dollimore, J., and Kindberg, T. 2005. Distributed Systems: Concepts and Design. Addison-Wesley Longman.
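A rough Python sketch of the callback-promise mechanism follows. The class names echo the Vice and Venus processes from the slide, but every method name and data structure here (`fetch`, `store`, `break_callback`, `write_and_close`, the two-element cache entry) is a hypothetical simplification, not the real interface.

```python
class Vice:
    """Toy server that remembers which clients hold a callback promise."""

    def __init__(self):
        self.files = {}      # name -> bytes
        self.callbacks = {}  # name -> set of clients holding a promise

    def fetch(self, name, client):
        # Handing out a copy also hands out a callback promise.
        self.callbacks.setdefault(name, set()).add(client)
        return self.files[name]

    def store(self, name, data, writer):
        self.files[name] = data
        # Notify every other client that its cached copy is now invalid.
        for client in self.callbacks.get(name, set()) - {writer}:
            client.break_callback(name)
        self.callbacks[name] = {writer}


class Venus:
    """Toy client cache: each entry is [data, promise_is_valid]."""

    def __init__(self, server):
        self.server = server
        self.cache = {}

    def break_callback(self, name):
        if name in self.cache:
            self.cache[name][1] = False  # promise set to cancelled

    def read(self, name):
        entry = self.cache.get(name)
        # Refetch if the file is not cached or the promise was cancelled.
        if entry is None or not entry[1]:
            entry = [self.server.fetch(name, self), True]
            self.cache[name] = entry
        return entry[0]

    def write_and_close(self, name, data):
        self.cache[name] = [data, True]
        self.server.store(name, data, self)
```

Compared with timestamp polling, the server does the notifying, so a client with a valid promise can read from its cache without contacting the server at all.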

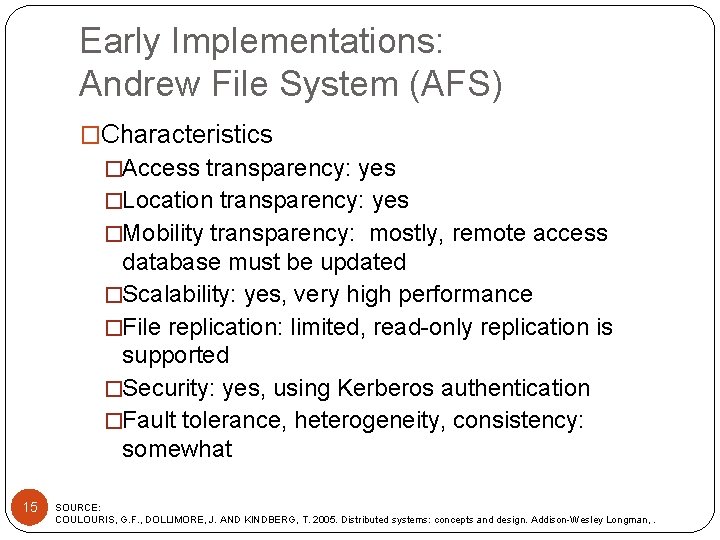

Early Implementations: Andrew File System (AFS)

- Characteristics
  - Access transparency: yes
  - Location transparency: yes
  - Mobility transparency: mostly, remote access database must be updated
  - Scalability: yes, very high performance
  - File replication: limited, read-only replication is supported
  - Security: yes, using Kerberos authentication
  - Fault tolerance, heterogeneity, consistency: somewhat

SOURCE: Coulouris, G. F., Dollimore, J., and Kindberg, T. 2005. Distributed Systems: Concepts and Design. Addison-Wesley Longman.

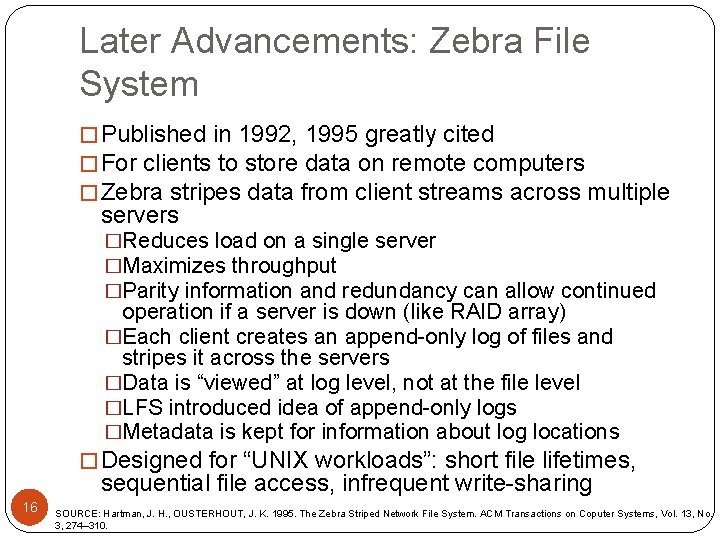

Later Advancements: Zebra File System

- Published in 1992 and 1995; widely cited
- For clients to store data on remote computers
- Zebra stripes data from client streams across multiple servers
  - Reduces load on a single server
  - Maximizes throughput
  - Parity information and redundancy can allow continued operation if a server is down (like a RAID array)
  - Each client creates an append-only log of files and stripes it across the servers
  - Data is “viewed” at the log level, not at the file level
  - LFS introduced the idea of append-only logs
  - Metadata is kept for information about log locations
- Designed for “UNIX workloads”: short file lifetimes, sequential file access, infrequent write-sharing

SOURCE: Hartman, J. H., and Ousterhout, J. K. 1995. The Zebra Striped Network File System. ACM Transactions on Computer Systems, Vol. 13, No. 3, 274–310.
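The RAID-like parity idea can be illustrated with a short Python sketch. It assumes simple XOR parity over equal-sized fragments with zero padding. The function names and the fragment layout are invented for the example and are not taken from the Zebra paper.

```python
from functools import reduce


def xor_bytes(a, b):
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def make_stripe(log_chunk, n_data_servers):
    """Split one chunk of a client's log into equal fragments plus parity."""
    frag_len = -(-len(log_chunk) // n_data_servers)  # ceiling division
    padded = log_chunk.ljust(frag_len * n_data_servers, b"\0")
    frags = [padded[i * frag_len:(i + 1) * frag_len]
             for i in range(n_data_servers)]
    parity = reduce(xor_bytes, frags)
    return frags, parity


def recover(frags, parity, lost):
    """Rebuild the fragment at index `lost` from the survivors and parity."""
    survivors = [f for i, f in enumerate(frags) if i != lost]
    return reduce(xor_bytes, survivors, parity)


frags, parity = make_stripe(b"append-only log data", 3)
print(recover(frags, parity, lost=1) == frags[1])
```

Each fragment would live on a different server, so losing any single server leaves enough information to rebuild its fragment from the others.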

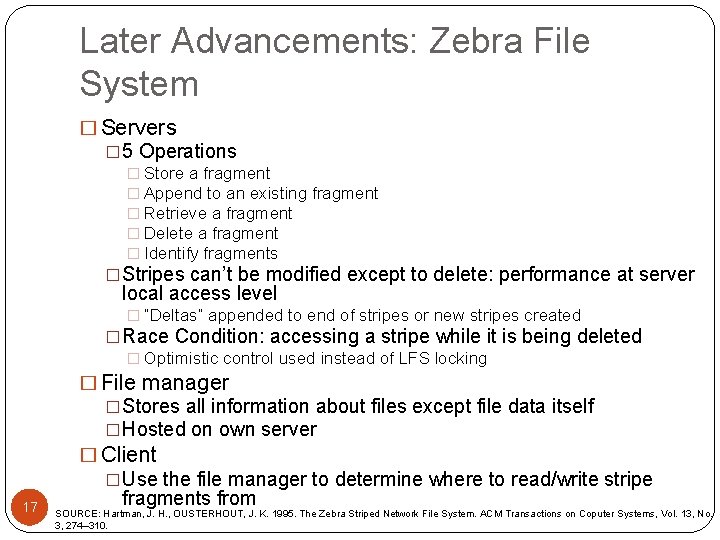

Later Advancements: Zebra File System

- Servers
  - 5 operations
    - Store a fragment
    - Append to an existing fragment
    - Retrieve a fragment
    - Delete a fragment
    - Identify fragments
  - Stripes can’t be modified except to delete: performance at server local access level
    - “Deltas” appended to end of stripes or new stripes created
  - Race condition: accessing a stripe while it is being deleted
    - Optimistic control used instead of LFS locking
- File manager
  - Stores all information about files except the file data itself
  - Hosted on its own server
- Client
  - Uses the file manager to determine where to read/write stripe fragments

SOURCE: Hartman, J. H., and Ousterhout, J. K. 1995. The Zebra Striped Network File System. ACM Transactions on Computer Systems, Vol. 13, No. 3, 274–310.

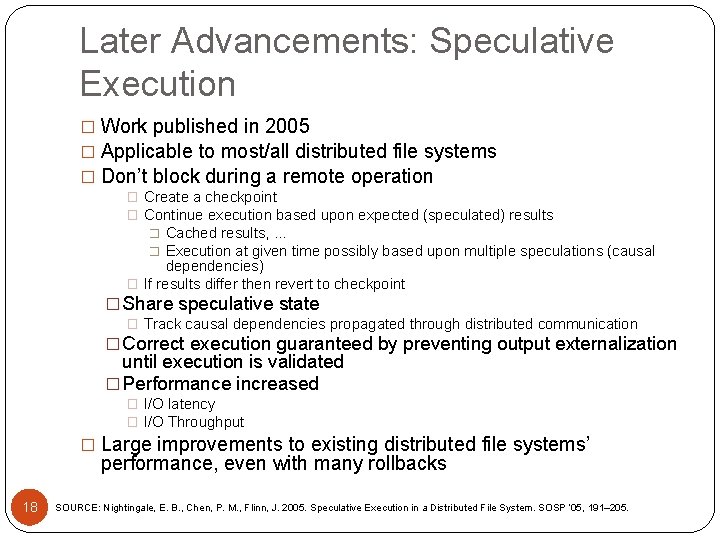

Later Advancements: Speculative Execution

- Work published in 2005
- Applicable to most/all distributed file systems
- Don’t block during a remote operation
  - Create a checkpoint
  - Continue execution based upon expected (speculated) results
    - Cached results, …
  - Execution at a given time possibly based upon multiple speculations (causal dependencies)
  - If results differ, then revert to checkpoint
- Share speculative state
  - Track causal dependencies propagated through distributed communication
- Correct execution guaranteed by preventing output externalization until execution is validated
- Performance increased
  - I/O latency
  - I/O throughput
- Large improvements to existing distributed file systems’ performance, even with many rollbacks

SOURCE: Nightingale, E. B., Chen, P. M., and Flinn, J. 2005. Speculative Execution in a Distributed File System. SOSP ’05, 191–205.
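The checkpoint, speculate, and validate cycle can be sketched in Python. This is a single-process, sequential illustration only: in the real system the speculative work overlaps with the remote call and checkpoints are taken at the process level. The function `run_speculatively`, its parameters, and the dictionary-based state are all assumptions made for the example.

```python
import copy


def run_speculatively(state, predicted, remote_call, continuation):
    """Continue on a predicted result; roll back if the real result differs."""
    checkpoint = copy.deepcopy(state)   # create a checkpoint
    pending_output = []                 # output is buffered, not externalized
    continuation(state, predicted, pending_output)

    actual = remote_call()              # would overlap with the work above
    if actual != predicted:
        state.clear()
        state.update(checkpoint)        # revert to the checkpoint
        pending_output = []
        continuation(state, actual, pending_output)
    return pending_output               # validated, safe to release


def use_attr(state, size, out):
    """Hypothetical application step that depends on a remote file size."""
    state["size"] = size
    out.append(f"file is {size} bytes")


state = {}
print(run_speculatively(state, 100, lambda: 100, use_attr))  # prediction holds
print(run_speculatively(state, 100, lambda: 250, use_attr))  # rolled back
```

Holding output in `pending_output` until validation is what keeps a wrong guess invisible to the outside world. Only the validated run's output is ever returned.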

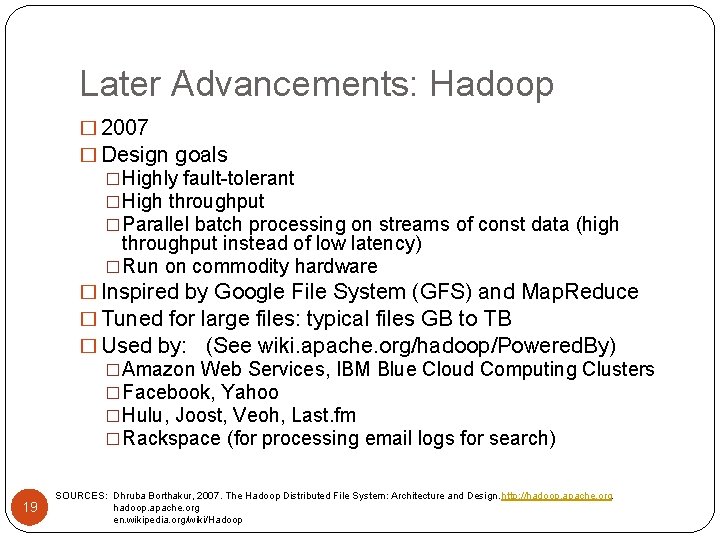

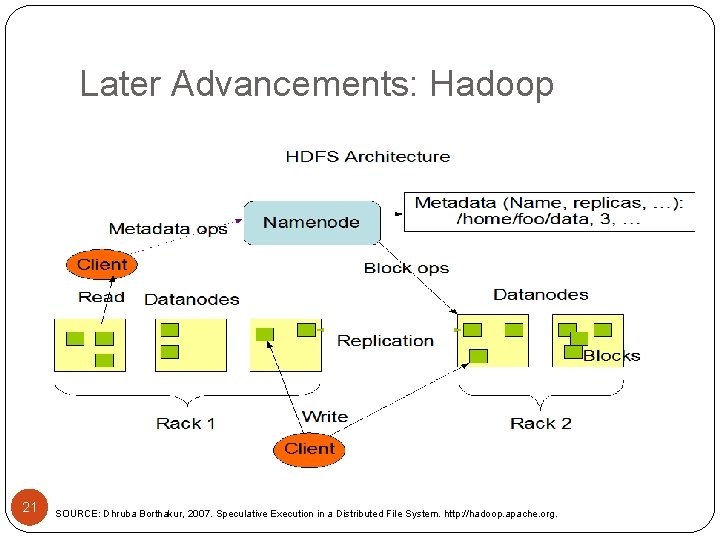

Later Advancements: Hadoop

- 2007
- Design goals
  - Highly fault-tolerant
  - High throughput
  - Parallel batch processing on streams of constant data (high throughput instead of low latency)
  - Run on commodity hardware
- Inspired by Google File System (GFS) and MapReduce
- Tuned for large files: typical files GB to TB
- Used by: (see wiki.apache.org/hadoop/PoweredBy)
  - Amazon Web Services, IBM Blue Cloud Computing Clusters
  - Facebook, Yahoo
  - Hulu, Joost, Veoh, Last.fm
  - Rackspace (for processing email logs for search)

SOURCES:
Dhruba Borthakur, 2007. The Hadoop Distributed File System: Architecture and Design. http://hadoop.apache.org
en.wikipedia.org/wiki/Hadoop

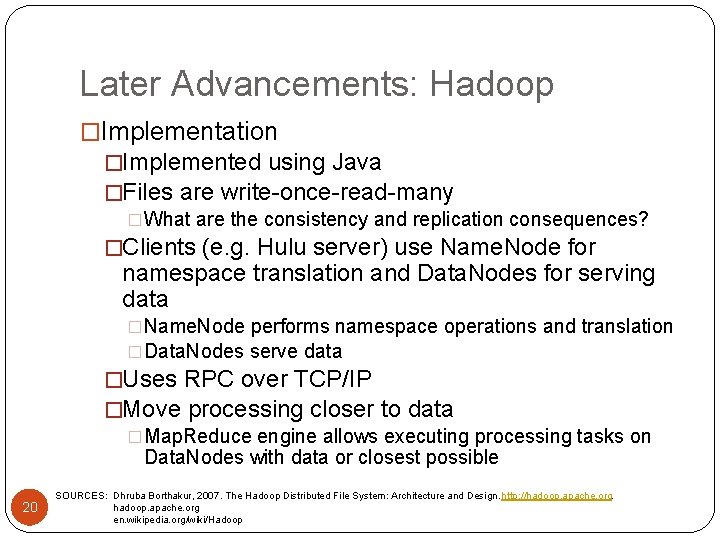

Later Advancements: Hadoop

- Implementation
  - Implemented using Java
  - Files are write-once-read-many
    - What are the consistency and replication consequences?
  - Clients (e.g. a Hulu server) use the NameNode for namespace translation and DataNodes for serving data
    - NameNode performs namespace operations and translation
    - DataNodes serve data
  - Uses RPC over TCP/IP
- Move processing closer to data
  - MapReduce engine allows executing processing tasks on the DataNodes holding the data, or the closest possible

SOURCES:
Dhruba Borthakur, 2007. The Hadoop Distributed File System: Architecture and Design. http://hadoop.apache.org
en.wikipedia.org/wiki/Hadoop
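The split between namespace lookups and data transfer can be sketched in Python. This is a toy model and not the Hadoop API: the classes, the `write_once` and `read` functions, the 4-byte block size, the round-robin placement, and the replica count of 2 are all assumptions chosen to keep the example small.

```python
class DataNode:
    def __init__(self):
        self.blocks = {}  # block id -> bytes


class NameNode:
    def __init__(self):
        # path -> list of (block id, [DataNodes holding a replica])
        self.namespace = {}


def write_once(namenode, datanodes, path, data, block_size=4, replicas=2):
    """Create a file; a second write to the same path is refused."""
    if path in namenode.namespace:
        raise PermissionError("files are write-once")
    entries = []
    for n, start in enumerate(range(0, len(data), block_size)):
        block_id = f"{path}#{n}"
        # Hypothetical placement: round-robin across the DataNodes.
        holders = [datanodes[(n + r) % len(datanodes)] for r in range(replicas)]
        for dn in holders:
            dn.blocks[block_id] = data[start:start + block_size]
        entries.append((block_id, holders))
    namenode.namespace[path] = entries


def read(namenode, path):
    """Ask the NameNode where the blocks are, then pull bytes from DataNodes."""
    return b"".join(holders[0].blocks[block_id]
                    for block_id, holders in namenode.namespace[path])


nn = NameNode()
dns = [DataNode() for _ in range(3)]
write_once(nn, dns, "/logs/a", b"0123456789")
print(read(nn, "/logs/a"))
```

One way to read the slide's question: because a file never changes after it is written, replicas cannot diverge, so copying blocks to several DataNodes needs no update protocol.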

Later Advancements: Hadoop

SOURCE: Dhruba Borthakur, 2007. The Hadoop Distributed File System: Architecture and Design. http://hadoop.apache.org

Later Advancements: WheelFS

- Articles published 2007–2009
- User-level wide-area file system
- POSIX interface
- Design goals:
  - Help nodes in a distributed application share data
  - Provide fault tolerance for distributed applications
  - Applications tune operations and performance
- Applications using WheelFS show similar performance to BitTorrent services
  - Distributed web cache
  - Email service
  - Large file distribution

SOURCE: Stribling, J., Sovran, Y., Zhang, I., Pretzer, X. 2009. Flexible, Wide Area Storage for Distributed Systems with WheelFS. NSDI ’09, 43–58.

Later Advancements: WheelFS

- Implemented using Filesystem in Userspace (FUSE)
  - User-level process providing a bridge between a user-created file system and kernel operations
  - Communication uses RPC over SSH
- Servers
  - Unified directory structure presented
  - Each file/directory object has a primary maintainer agreed upon by all nodes
  - Subdirectories can be maintained by other servers
  - Replication to non-primary servers supported
- Clients
  - Find primary maintainer (or backup) through a configuration service replicated to multiple sites (nodes cache a copy of the lookup table)
  - Cached copy is leased from maintainer
  - Writes buffered in cache until close operation for performance
  - Clients can read from other clients’ caches
  - File system entry through wfs directory in the root of the local file system

SOURCE: Stribling, J., Sovran, Y., Zhang, I., Pretzer, X. 2009. Flexible, Wide Area Storage for Distributed Systems with WheelFS. NSDI ’09, 43–58.

Later Advancements: WheelFS

- Semantic cues included in file or directory paths
  - Allow user applications to customize data access policies
  - /wfs/.cue/dir1/dir2/file
  - Apply to all sub-paths following the cue
  - Cues: Hotspot, MaxTime, EventualConsistency, …
- Ex: /wfs/.MaxTime=200/url causes operations accessing /wfs/url to fail after 200 ms
- Ex: EventualConsistency allows operations to access data through backups, caches, etc. instead of directly from the maintainer
  - Configuration service eventually reconciles differences
    - Version numbers
    - Resolves conflicts by choosing the latest version number (can result in lost writes)
  - Combined with MaxTime, a client will use the backup/cache version with the highest version number that is reachable in the specified time

SOURCE: Stribling, J., Sovran, Y., Zhang, I., Pretzer, X. 2009. Flexible, Wide Area Storage for Distributed Systems with WheelFS. NSDI ’09, 43–58.
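As an illustration of how cues embedded in a path might be separated from the path itself, here is a small Python sketch. It is not WheelFS code: `parse_cues` and `KNOWN_CUES` are hypothetical, and the cue set contains only the three names listed on the slide.

```python
# Only the cue names that appear on the slide; the real system has more.
KNOWN_CUES = {"Hotspot", "MaxTime", "EventualConsistency"}


def parse_cues(path):
    """Split a path into (plain path, dict of cues found in it)."""
    cues = {}
    plain = []
    for part in path.split("/"):
        name, _, value = part[1:].partition("=")
        if part.startswith(".") and name in KNOWN_CUES:
            # Cues without a value are stored as True; values stay strings.
            cues[name] = value if value else True
        else:
            plain.append(part)
    return "/".join(plain), cues


print(parse_cues("/wfs/.MaxTime=200/url"))
print(parse_cues("/wfs/.EventualConsistency/.MaxTime=200/url"))
```

Carrying the policy in the path means unmodified POSIX applications can opt into different consistency or timeout behaviour just by opening a different file name.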

Later Advancements: Patent Applications

- Patent No. US 7,406,473
  - July 2008
  - Brassow et al.
  - Meta-data servers, locking servers, and file servers each organized into a single layer
- Patent No. US 7,475,199 B1
  - January 2009
  - Bobbitt et al.
  - All files organized into virtual volumes
  - Virtualization layer intercepts file operations and maps them to a physical location
  - Client operations handled by “Venus”
    - Sound familiar?
- Others
  - Reserving space on remote storage locations
  - Distributed file system using NFS servers coupled to hosts to directly request data from NAS nodes, bypassing NFS clients

Conclusion

- Non-Distributed File Systems
  - Access files over a distributed system
  - Concurrency issues
  - Performance
  - Transparency
- New Advances in Distributed File Systems
  - Parallel processing and striping
  - Advanced caching
  - Tune file system performance on a per-application basis
  - Very large file support
- Future Work
  - Relational-database organization
  - Better concurrency control
  - Developments in non-distributed file systems can be applicable to distributed file systems
