Distributed File Systems: Introduction


Distributed File Systems
- Issues
  - Semantics
  - Naming
  - Sharing
  - Caching: where? Whole files or partial files?
  - Cache consistency
  - Replication
  - Transaction support
  - Security
  - Disconnected operation (partitions)

Distributed File System: Permanent Storage
- Permanent storage is a fundamental computing abstraction: a named collection of objects that are explicitly created and destroyed and that survive system failures.
- This abstraction is refined by concrete realizations, such as:
  - file systems
  - databases
  - complex-object-oriented repositories

Distributed File System
- A distributed file system is a distributed realization of the classic time-sharing file system.
- File systems can be classified into a hierarchy:
  - Single user, single process (MS-DOS): a file system at this level must address the following key issues:
    - Naming
    - Programming interface
    - Storage representation (mapping the file system to physical storage media)
    - Integrity (reliability) against power, hardware, media, and software failures
  - Single user, multiprocess (OS/2)
    - Concurrency becomes an important issue for the programming interface and the file system.
  - Multiuser, multiprocess (Unix)
    - Security now becomes important.
  - Distributed (NFS), where physically dispersed users share the same file system
    - Naming, location, and availability become important issues in addition to the other issues.

File Systems vs. Databases
- Design issues for both seem identical.
- Databases are assumed to have:
  - fine-granularity concurrency control
  - transactional support
  - record-level access
  - non-sequential access
  - high availability, etc.
- But many file systems provide these functions as well:
  - Alpine, Locus
  - VMS record-management services
  - MVS access methods
  - Coda

True Differences between File Systems and Databases
- Naming (specific vs. associative): files in a file system are accessed by name; data in a database is accessed by association (by content or attributes).
- Encapsulation (byte streams vs. typed data): data in a file system is an uninterpreted byte sequence; a database keeps information about the data types and their relationships.
- Distributed file systems bring data to the point of use; databases take the function (computation) to the site where the data is stored.

When to use file systems
- When access by name is adequate
  - e.g., small name spaces (in PCs): human cognitive ability limits the number of objects that users can know by name
- It depends on the search/usage ratio:
  - how long it takes to search for an item versus how long the item is used once found
- A low search/usage ratio means high locality: use a file system; otherwise, use a database.

When to use file systems
- Use file usage properties, based on empirical data from real file systems, to:
  - determine parameters, such as
    - block sizes
    - disk layout
    - disk capacity
  - decide on the architecture, such as
    - caching strategies
    - replication techniques
    - concurrency control techniques
  - decide on policies, such as
    - backup, migration, archiving
    - data placement

Difficulties of obtaining empirical data
- Large volumes of data need to be stored and processed.
- Data collection degrades performance.
- Is the observed behavior intrinsic, or is it an artifact of the system design?
- Strategies for empirical observation:
  - Static study
    - scans static file structures
    - does not involve O.S. modifications
  - Dynamic study
    - requires interception of system calls
    - higher overhead
    - much richer information
    - yields data weighted by use
    - the amount of information can be staggering
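
A dynamic study records each operation as it happens. Real studies intercept system calls inside the kernel; the sketch below is only a user-level stand-in, assuming a hypothetical `TracingFile` wrapper around any binary file object that logs every read as `(file name, offset, length)`.

```python
import io
from typing import BinaryIO, List, Tuple

Trace = List[Tuple[str, int, int]]  # (file name, offset, bytes returned)


class TracingFile:
    """Wraps a binary file object and records every read it serves."""

    def __init__(self, name: str, raw: BinaryIO, trace: Trace):
        self.name = name
        self.raw = raw
        self.trace = trace  # shared list, so one trace can cover many files

    def read(self, size: int = -1) -> bytes:
        offset = self.raw.tell()          # where this read starts
        data = self.raw.read(size)
        self.trace.append((self.name, offset, len(data)))
        return data

    def seek(self, offset: int, whence: int = io.SEEK_SET) -> int:
        return self.raw.seek(offset, whence)
```

Because every access is logged, the resulting data is weighted by use, and a long-running trace grows quickly, which is exactly the overhead the slide warns about.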

Results of various studies
- The results are surprisingly robust!
- Files tend to be small.
- Most files are read sequentially.
- Most files are read entirely.
- Functional lifetime is usually short.
- Sharing patterns:
  - most common: one user reads and writes
  - sometimes: one user writes, many read
  - rarely: multiple users read and write
- Substantial locality of reference
- File type significantly affects properties:
  - temporary files are short-lived
  - system files are read often and written rarely
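
Findings such as "most files are read sequentially" and "most files are read entirely" come from classifying traces. The sketch below assumes a trace of `(file name, offset, length)` reads and a table of file sizes (both hypothetical inputs), and treats a file as sequential when every read starts where the previous one ended, beginning at offset 0.

```python
from typing import Dict, List, Tuple


def classify_reads(trace: List[Tuple[str, int, int]],
                   sizes: Dict[str, int]) -> Dict[str, Tuple[bool, bool]]:
    """Return {file: (read_sequentially, read_entirely)} for every traced file."""
    by_file: Dict[str, List[Tuple[int, int]]] = {}
    for name, offset, length in trace:
        by_file.setdefault(name, []).append((offset, length))

    result: Dict[str, Tuple[bool, bool]] = {}
    for name, reads in by_file.items():
        expected = 0
        sequential = True
        covered = set()
        for offset, length in reads:
            if offset != expected:       # a jump breaks sequential access
                sequential = False
            expected = offset + length
            covered.update(range(offset, offset + length))
        result[name] = (sequential, len(covered) == sizes[name])
    return result
```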

Client Caching
- Indispensable for good performance; used by every modern distributed file system
- Disk caches survive reboots.
- Callback offers the best performance.
- Cache granularity is an important issue.
- Read-ahead exploits the bias towards sequential reading.
- Alternative methods: write-through, delayed-write, write-on-close, centralized control
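
The write policies listed above differ only in when a modified cache entry reaches the server. A minimal sketch, assuming the server is modeled as an in-memory dictionary and that delayed-write flushes are triggered externally (for example by a timer, not modeled here):

```python
from typing import Dict, Set


class ClientCache:
    """Client-side file cache with a selectable write policy (illustrative only)."""

    POLICIES = ("write-through", "delayed-write", "write-on-close")

    def __init__(self, server: Dict[str, bytes], policy: str):
        if policy not in self.POLICIES:
            raise ValueError(f"unknown policy: {policy}")
        self.server = server            # stand-in for the file server: name -> contents
        self.policy = policy
        self.cache: Dict[str, bytes] = {}
        self.dirty: Set[str] = set()    # entries newer than the server copy

    def read(self, name: str) -> bytes:
        if name not in self.cache:      # miss: fetch once, then serve locally
            self.cache[name] = self.server[name]
        return self.cache[name]

    def write(self, name: str, data: bytes) -> None:
        self.cache[name] = data
        if self.policy == "write-through":
            self.server[name] = data    # every write goes to the server at once
        else:
            self.dirty.add(name)        # defer propagation

    def close(self, name: str) -> None:
        if self.policy == "write-on-close" and name in self.dirty:
            self._flush_one(name)

    def flush(self) -> None:
        """Periodic flush used by delayed-write."""
        for name in list(self.dirty):
            self._flush_one(name)

    def _flush_one(self, name: str) -> None:
        self.server[name] = self.cache[name]
        self.dirty.discard(name)
```

Write-through keeps the server current at the cost of one message per write; the two deferred policies trade that traffic for a window in which the server copy is stale.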

Callback
- The server hands out tokens: read tokens or exclusive tokens.
- Cache entries can be read while holding a token and modified while holding an exclusive token.
- Fault tolerance is achieved through timeouts for revocation:
  - Tokens expire after a timeout, so they need to be refreshed periodically.
  - When a client crashes, its tokens time out.
  - When the server crashes, it loses its token information; after rebooting, it waits for the token-expiry time before providing service.
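
The token rules above can be sketched as time-limited leases. This is an illustration under assumptions: the class name `TokenServer`, the single lease length, and the injectable clock are hypothetical, and callback-based revocation messages are left out so that only the timeout path is shown. A freshly constructed server models one that has just rebooted: it refuses service until any token it might have granted before the crash has expired.

```python
import time
from typing import Callable, Dict, Tuple

READ, EXCLUSIVE = "read", "exclusive"


class TokenServer:
    def __init__(self, lease_seconds: float,
                 clock: Callable[[], float] = time.monotonic):
        self.lease = lease_seconds
        self.clock = clock
        # (path, client) -> (token kind, expiry time)
        self.tokens: Dict[Tuple[str, str], Tuple[str, float]] = {}
        # After a (re)boot the server knows nothing about outstanding tokens,
        # so it waits one full lease period before granting new ones.
        self.serve_after = clock() + lease_seconds

    def _live_holders(self, path: str) -> Dict[str, str]:
        now = self.clock()
        return {client: kind
                for (p, client), (kind, expiry) in self.tokens.items()
                if p == path and expiry > now}

    def acquire(self, path: str, client: str, kind: str) -> bool:
        if self.clock() < self.serve_after:
            return False
        holders = self._live_holders(path)
        holders.pop(client, None)                  # a client never conflicts with itself
        if kind == EXCLUSIVE and holders:
            return False                           # exclusive needs no other holders
        if kind == READ and EXCLUSIVE in holders.values():
            return False                           # readers wait for the writer
        self.tokens[(path, client)] = (kind, self.clock() + self.lease)
        return True

    def refresh(self, path: str, client: str) -> bool:
        entry = self.tokens.get((path, client))
        if entry is None or entry[1] <= self.clock():
            return False                           # expired tokens must be re-acquired
        self.tokens[(path, client)] = (entry[0], self.clock() + self.lease)
        return True
```

A crashed client needs no special handling: it simply stops refreshing, and its tokens stop counting once they expire.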

Bulk Data Transfer
- The delay caused by protocol processing can be substantial.
  - Bulk data transfer exploits spatial locality: if a page is referenced, the consecutive pages are highly likely to be accessed as well.
  - It amortizes protocol overhead over many consecutive pages of a file.
  - Pages are accumulated into one big chunk; a wide range of chunk sizes is possible.
- Replication
  - a fundamental technique for availability
  - strategies: pessimistic (restricts modifications to one site exclusively) or optimistic (allows modification at every site, but resolves conflicting updates when they happen)
  - can improve availability
  - can also improve performance (NFS)
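
The amortization argument is simple arithmetic. Assuming a fixed protocol overhead $o$ per request, a transfer cost $p$ per page, $N$ pages, and $k$ pages per request, the total time is $T = \lceil N/k \rceil \cdot o + N \cdot p$. The sketch below evaluates that model; the numbers in the usage note are hypothetical.

```python
import math


def transfer_time_ms(pages: int, pages_per_request: int,
                     overhead_ms: float, per_page_ms: float) -> float:
    """Time to fetch `pages` consecutive pages, `pages_per_request` at a time.

    Each request pays a fixed protocol overhead; each page pays its own transfer cost.
    """
    requests = math.ceil(pages / pages_per_request)
    return requests * overhead_ms + pages * per_page_ms
```

With 64 pages, a 2 ms overhead, and 0.5 ms per page, fetching one page per request costs 160 ms, while 16-page chunks cost 40 ms: the per-page cost is unchanged, but the overhead is paid 4 times instead of 64.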

Hints and Encryption
- Hints: a piece of information that can improve performance if correct, but has no negative consequence if erroneous.
  - Used for self-validating information, such as path-name prefixes that identify servers, cached locally
  - Avoids the need for a cache-coherence protocol, with no loss of consistency
- Encryption
  - Essential for security
  - The role of the file system in end-to-end encryption is to prevent unauthorized modification of information, or its unauthorized denial.
  - End-to-end authentication and end-to-end secure transmission are the requirements.
  - DES was the most popular private-key encryption scheme at the time.
  - It can be an economic obstacle for some, as the hardware involved is still expensive.
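
A path-prefix hint is harmless when wrong because its use is self-validating: the server named by the hint either holds the file or says it does not. A minimal sketch, assuming two hypothetical callables, a slow but authoritative `lookup(path)` and a `server_holds(server, path)` check that stands in for the server rejecting a misdirected request:

```python
from typing import Callable, Dict, Optional


class PrefixHintCache:
    """Client-side hints mapping path-name prefixes to servers."""

    def __init__(self, lookup: Callable[[str], str],
                 server_holds: Callable[[str, str], bool]):
        self.lookup = lookup                # authoritative, always correct, slow
        self.server_holds = server_holds    # the validation that makes hints safe
        self.hints: Dict[str, str] = {}
        self.misses = 0                     # how often the slow path was needed

    def _best_prefix(self, path: str) -> Optional[str]:
        matches = [p for p in self.hints if path.startswith(p)]
        return max(matches, key=len) if matches else None

    def locate(self, path: str) -> str:
        prefix = self._best_prefix(path)
        if prefix is not None:
            server = self.hints[prefix]
            if self.server_holds(server, path):
                return server               # correct hint: lookup avoided
            del self.hints[prefix]          # wrong hint: drop it, no harm done
        self.misses += 1
        server = self.lookup(path)
        directory = path.rsplit("/", 1)[0] + "/"
        self.hints[directory] = server      # remember the prefix for next time
        return server
```

No coherence protocol is needed: when a volume moves, the stale hint is detected on its next use and replaced.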

Mount Points
- mount allows gluing together different file systems to provide a single name space.
  - First used in Unix-based systems
  - Originally used for mountable devices, to direct a search to the appropriate device using a mount table; now it is more general.
  - The client-specific approach is simple, as in NFS, where each client mounts subtrees from servers; the servers are unaware of where the subtrees have been mounted.
  - Alternatively, mount information is embedded in the data stored on the file servers; in this way, all clients see the same file name space.
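
A mount table directs each path to the file system mounted at its longest matching mount point. The sketch below is a simplified model, assuming the table maps mount points to hypothetical file-system labels; it ignores symbolic links and `..` components.

```python
from typing import Dict, Tuple


def resolve(mount_table: Dict[str, str], path: str) -> Tuple[str, str]:
    """Return (file system, path relative to that file system's root)."""
    best = None
    for point in mount_table:
        prefix = point.rstrip("/") + "/"            # "/" -> "/", "/users" -> "/users/"
        if path == point or path.startswith(prefix):
            if best is None or len(point) > len(best):
                best = point                        # longest match wins
    if best is None:
        raise FileNotFoundError(path)
    rest = path[len(best):].lstrip("/")
    return mount_table[best], "/" + rest
```

For example, with `{"/": "local-disk", "/users": "serverhostname:/home"}`, the path `/users/alice/notes` resolves to `("serverhostname:/home", "/alice/notes")`, while `/etc/passwd` stays on the local disk. In the client-specific (NFS) approach each client holds its own table, which is why two clients may see different name spaces.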

Andrew File System
- Developed at CMU to integrate a huge number of workstations
- The distributed file system appears as a single large subtree of the local file system on each workstation.
- A process called Venus runs on each workstation. It finds files in Vice, caches them locally, and emulates the Unix file system.
- Vice is the backbone of AFS, running on a collection of file servers.
- AFS-1, the first prototype:
  - used for statistics gathering in preparation for AFS-2
  - one process per client is created
  - whole-file transfer and caching are supported
  - a cache-consistency check is made on each open, to maintain consistency
  - directory operations are carried out at the server
  - path-name prefixes are used as hints for locating the server (files are named by their full path)
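
AFS-1's check-on-open means that even a cache hit costs one round trip to the server. The sketch below counts those calls; the in-memory `VersionedServer` with per-file version numbers is a hypothetical stand-in, not the AFS-1 protocol.

```python
from typing import Dict, Tuple


class VersionedServer:
    def __init__(self, files: Dict[str, bytes]):
        self.files: Dict[str, Tuple[int, bytes]] = {p: (1, d) for p, d in files.items()}
        self.calls = 0                      # RPC counter, to compare strategies

    def version(self, path: str) -> int:
        self.calls += 1
        return self.files[path][0]

    def fetch(self, path: str) -> Tuple[int, bytes]:
        self.calls += 1
        return self.files[path]             # whole-file transfer

    def store(self, path: str, data: bytes) -> None:
        self.calls += 1
        version, _ = self.files[path]
        self.files[path] = (version + 1, data)


class CheckOnOpenClient:
    def __init__(self, server: VersionedServer):
        self.server = server
        self.cache: Dict[str, Tuple[int, bytes]] = {}

    def open(self, path: str) -> bytes:
        cached = self.cache.get(path)
        if cached is not None and self.server.version(path) == cached[0]:
            return cached[1]                # validated: cached copy is current
        self.cache[path] = self.server.fetch(path)
        return self.cache[path][1]
```

Every open contacts the server, so server load grows with the number of opens; this is the cost that AFS-2's callbacks were introduced to remove.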

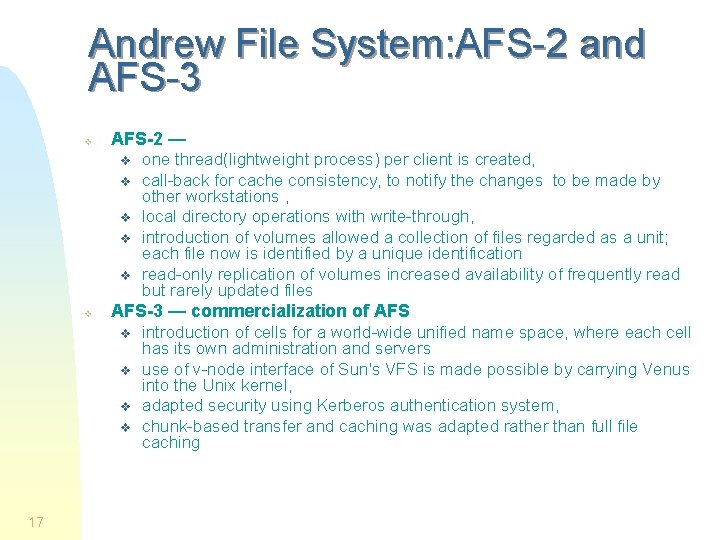

Andrew File System: AFS-2 and AFS-3
- AFS-2
  - one thread (lightweight process) per client is created
  - callbacks for cache consistency, to notify a workstation of changes made by other workstations
  - local directory operations with write-through
  - introduction of volumes, which allow a collection of files to be regarded as a unit; each file is now identified by a unique identifier
  - read-only replication of volumes increased the availability of frequently read but rarely updated files
- AFS-3: commercialization of AFS
  - introduction of cells for a worldwide unified name space, where each cell has its own administration and servers
  - use of the v-node interface of Sun's VFS, made possible by moving Venus into the Unix kernel
  - security adapted to use the Kerberos authentication system
  - chunk-based transfer and caching adopted instead of whole-file caching
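
With callbacks, the server remembers which clients hold a copy and notifies them when the file changes, so opens of a cached file need no server contact. A minimal sketch, assuming hypothetical in-memory classes and synchronous notification (real AFS sends callback breaks as RPCs):

```python
from typing import Dict, Set


class CallbackServer:
    def __init__(self, files: Dict[str, bytes]):
        self.files = dict(files)
        self.promises: Dict[str, Set["CallbackClient"]] = {}  # path -> clients to notify
        self.calls = 0

    def fetch(self, path: str, client: "CallbackClient") -> bytes:
        self.calls += 1
        self.promises.setdefault(path, set()).add(client)    # promise to notify on change
        return self.files[path]

    def store(self, path: str, data: bytes, writer: "CallbackClient") -> None:
        self.calls += 1
        self.files[path] = data
        for client in self.promises.pop(path, set()):
            if client is not writer:
                client.break_callback(path)   # tell other workstations their copy is stale
        self.promises[path] = {writer}        # the writer's own copy is current


class CallbackClient:
    def __init__(self, server: CallbackServer):
        self.server = server
        self.cache: Dict[str, bytes] = {}

    def open(self, path: str) -> bytes:
        if path not in self.cache:            # no valid callback: fetch and register
            self.cache[path] = self.server.fetch(path, self)
        return self.cache[path]

    def write(self, path: str, data: bytes) -> None:
        self.cache[path] = data
        self.server.store(path, data, self)

    def break_callback(self, path: str) -> None:
        self.cache.pop(path, None)
```

Repeated opens of an unchanged file now cost nothing at the server; the price is that the server must keep per-client state.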

Andrew File System: AFS-3
- Security in AFS
  - Protection
    - Principals are users and groups.
    - Users can be members of one or more groups.
    - The group rights of a user are the union of the rights from each of the groups.
    - System administrators form a distinguished group with unlimited access rights.
  - Authentication
    - Uses Kerberos: after presenting a password, users get an encrypted ticket from the authentication server.
    - The ticket expires after some hours.
    - Presenting the ticket proves who the client is and thus authenticates him/her.

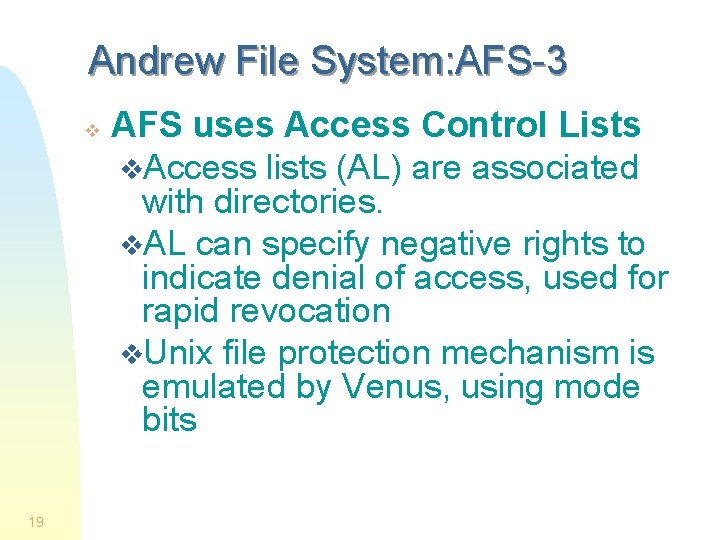

Andrew File System: AFS-3
- AFS uses access control lists.
  - Access lists (ALs) are associated with directories.
  - An AL can specify negative rights to indicate denial of access; these are used for rapid revocation.
  - The Unix file protection mechanism is emulated by Venus, using mode bits.
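
The protection rules from these two slides combine into one evaluation: union the positive rights of the user and all of the user's groups, then subtract any negative rights. The sketch below follows that reading. The rights letters follow AFS's `rlidwka` convention (read, lookup, insert, delete, write, lock, administer); the administrators group name `admins` and the "unlimited rights" shortcut are taken from the slide's description and are assumptions, not AFS's exact behavior.

```python
from typing import Dict, Iterable, Set

ALL_RIGHTS = frozenset("rlidwka")   # read, lookup, insert, delete, write, lock, administer
ADMIN_GROUP = "admins"              # hypothetical name for the distinguished group


def effective_rights(user: str, groups: Iterable[str],
                     positive: Dict[str, Set[str]],
                     negative: Dict[str, Set[str]]) -> Set[str]:
    """Rights of `user` under one directory's access list."""
    principals = {user, *groups}
    if ADMIN_GROUP in principals:
        return set(ALL_RIGHTS)                      # administrators: unlimited rights
    granted = set().union(*(positive.get(p, set()) for p in principals))
    denied = set().union(*(negative.get(p, set()) for p in principals))
    return granted - denied                         # negative rights override positive
```

Negative rights make revocation fast: adding one negative entry for a user takes effect even if that user still belongs to groups that grant the right.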

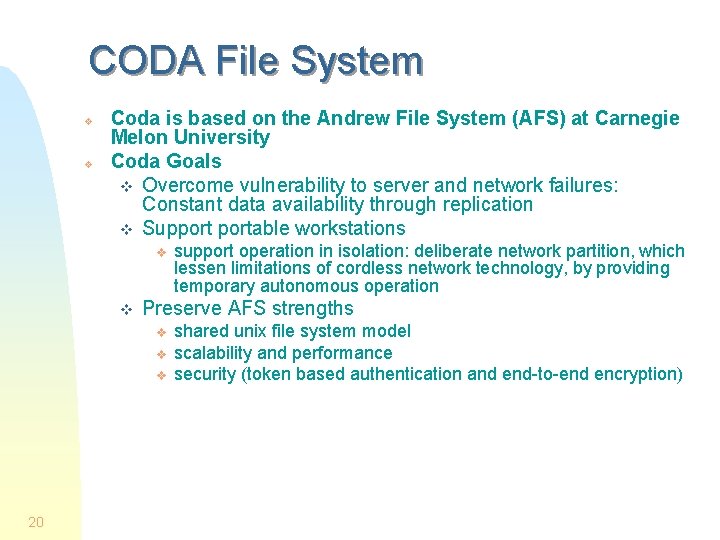

CODA File System
- Coda is based on the Andrew File System (AFS) at Carnegie Mellon University.
- Coda goals:
  - Overcome vulnerability to server and network failures: constant data availability through replication
  - Support portable workstations: support operation in isolation (deliberate network partition), which lessens the limitations of cordless network technology by providing temporary autonomous operation
  - Preserve AFS strengths:
    - shared Unix file system model
    - scalability and performance
    - security (token-based authentication and end-to-end encryption)

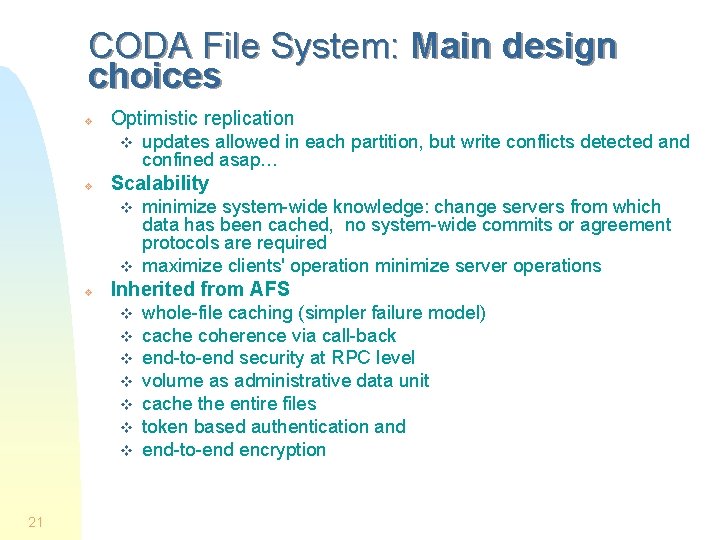

CODA File System: Main design choices
- Optimistic replication
  - updates are allowed in each partition, but write conflicts are detected and confined as soon as possible
  - whole-file caching (simpler failure model)
- Scalability
  - minimize system-wide knowledge: change servers from which data has been cached; no system-wide commits or agreement protocols are required
  - maximize clients' operation
  - minimize server operations
- Inherited from AFS
  - cache coherence via callback
  - end-to-end security at the RPC level
  - volume as the administrative data unit
  - caching of entire files
  - token-based authentication and end-to-end encryption

CODA File System: Consistency guarantees
- Coda guarantees:
  - open: the result of the last close in the accessible universe
  - close: immediate propagation to the accessible universe; eventual propagation everywhere
  - failure: cache miss when disconnected; updates are subject to conflict resolution for consistency
- AFS guarantees:
  - open: the result of the last close anywhere
  - close: immediate propagation everywhere
  - failure: server or network failure

CODA File System: Server replication
- The volume is the unit of replication: a replicated volume may include several physical volumes, or replicas.
- The set of servers holding replicas forms the volume storage group (VSG).
- Only a subset of the VSG may be accessible: the accessible volume storage group (AVSG).
- Venus at a client:
  - caches the latest data from the AVSG
  - propagates changes to the AVSG
  - detects AVSG membership changes
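
The AVSG is the part of the VSG that the client can currently reach. A minimal sketch, assuming a hypothetical `is_reachable(server)` probe (for example a periodic RPC ping) and a `probe()` method that reports whether membership changed:

```python
from typing import Callable, FrozenSet, Iterable


class AvsgTracker:
    def __init__(self, vsg: Iterable[str], is_reachable: Callable[[str], bool]):
        self.vsg: FrozenSet[str] = frozenset(vsg)   # all servers holding replicas
        self.is_reachable = is_reachable            # hypothetical reachability probe
        self.avsg: FrozenSet[str] = frozenset()

    def probe(self) -> bool:
        """Recompute the AVSG; return True if its membership changed."""
        new_avsg = frozenset(s for s in self.vsg if self.is_reachable(s))
        changed = new_avsg != self.avsg
        self.avsg = new_avsg
        return changed
```

A membership change is what tells Venus that some replicas may have missed updates, or that a previously unreachable server has returned.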

CODA File System: Access Protocol
- Open succeeds immediately on a cache hit with a valid callback: go ahead and use the cached data.
- On a cache miss at open: fetch the file from the preferred server (PS), fetch version information from the AVSG, place a callback on the PS, and notify stale servers.
- On close: propagate the modified file to the AVSG and update the version information at the AVSG.
- Directories are cached like files; mutations are propagated directly to the PS.
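
The open and close steps can be sketched with in-memory replicas. Two simplifications are assumed: each replica carries a single integer version (real Coda keeps version vectors), and if the PS itself turns out to be stale, the client switches to a server holding the latest version, a step the slide does not spell out. The class `CodaClientSketch` is hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass
class Replica:
    version: int      # simplification: one counter per replica
    data: bytes


class CodaClientSketch:
    def __init__(self, avsg: Dict[str, Dict[str, Replica]], preferred: str):
        self.avsg = avsg                     # server name -> {path: Replica}
        self.preferred = preferred           # preferred server (PS)
        self.cache: Dict[str, bytes] = {}
        self.callbacks: Set[str] = set()     # paths with a valid callback on the PS
        self.stale_notices: List[Tuple[str, str]] = []   # (server, path) reported stale

    def open(self, path: str) -> bytes:
        if path in self.cache and path in self.callbacks:
            return self.cache[path]          # hit with valid callback: no server traffic
        copy = self.avsg[self.preferred][path]                          # fetch from PS
        versions = {s: files[path].version for s, files in self.avsg.items()}
        latest = max(versions.values())                                 # version info from AVSG
        if copy.version < latest:
            # Assumption: a stale PS is replaced by a server holding the latest version.
            self.preferred = next(s for s, v in versions.items() if v == latest)
            copy = self.avsg[self.preferred][path]
        self.stale_notices.extend((s, path) for s, v in versions.items() if v < latest)
        self.callbacks.add(path)             # place callback on the PS
        self.cache[path] = copy.data
        return copy.data

    def close(self, path: str, data: bytes) -> None:
        """Propagate the modified file to every AVSG member and update versions."""
        new_version = max(files[path].version for files in self.avsg.values()) + 1
        for files in self.avsg.values():
            files[path] = Replica(new_version, data)
        self.cache[path] = data

    def break_callback(self, path: str) -> None:
        self.callbacks.discard(path)
```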

CODA File System: Conflict detection and resolution
- A conflict arises when replicas diverge.
- Sufficient information is logged (replay logs) to be able to replay updates. Replay logs are broadcast to the AVSG.
- Reintegration allows Venus to update its cache and pass the changes made during emulation to the servers. Reintegration is done one volume at a time.
- On a conflict, Coda marks all accessible replicas as inconsistent, to be repaired for consistency later.
- Directory maintenance is automated by the servers using a log-based strategy.
- Be optimistic in allowing use of data; be conservative in detecting conflicts.
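
Deciding whether two replicas have merely fallen behind or have truly diverged is commonly done with version vectors, which record how many updates each server has seen. Coda's own mechanism is a variant of this idea; the function below is a generic sketch of the comparison, not Coda's code.

```python
from typing import Mapping


def compare_version_vectors(a: Mapping[str, int], b: Mapping[str, int]) -> str:
    """Compare two replicas' version vectors (server -> number of updates seen)."""
    servers = set(a) | set(b)
    a_covers_b = all(a.get(s, 0) >= b.get(s, 0) for s in servers)
    b_covers_a = all(b.get(s, 0) >= a.get(s, 0) for s in servers)
    if a_covers_b and b_covers_a:
        return "equal"
    if a_covers_b:
        return "a dominates"   # b is merely stale: bring it up to date from a
    if b_covers_a:
        return "b dominates"   # a is merely stale
    return "conflict"          # concurrent updates in different partitions
```

Only the last case is a real conflict; the dominance cases are stale replicas that can be repaired automatically, which matches "be conservative in detecting conflicts".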

CODA File System: Disconnected operation
- Venus operates in one of three states: hoarding, emulating, and reintegrating.
- Hoarding
  - Simple demand caching is inadequate; in this phase, useful data is hoarded into a hoard database (HDB) in anticipation of disconnection.
  - The information to be hoarded is given either explicitly or implicitly.
  - The hoard list is prioritized; the highest-priority entries are attended to first.
- Emulation and reintegration
  - Emulation is the function of acting as a pseudo-server. Temporary file ids are given to new objects, to be changed later to permanent ids.
  - Venus records mutations in a replay log, to be reintegrated into the servers during the reintegration phase.
  - The reintegration phase replays the log at the servers.
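
The three Venus states can be sketched as a small state machine. Assumptions: the servers are one in-memory dictionary, the hoard list is a list of `(priority, object id)` pairs, the cache capacity is counted in objects, permanent ids come from a local counter standing in for server-side allocation, and conflict detection during replay is left out.

```python
import heapq
import itertools
from enum import Enum
from typing import Dict, List, Tuple


class State(Enum):
    HOARDING = "hoarding"
    EMULATING = "emulating"
    REINTEGRATING = "reintegrating"


class VenusSketch:
    def __init__(self, server: Dict[str, bytes]):
        self.server = server                    # stand-in for the servers
        self.state = State.HOARDING
        self.cache: Dict[str, bytes] = {}
        self.replay_log: List[Tuple[str, str, bytes]] = []   # (operation, object id, data)
        self._temp_ids = itertools.count(1)
        self._perm_ids = itertools.count(1)     # stand-in for server-side id allocation

    def hoard(self, hoard_list: List[Tuple[int, str]], capacity: int) -> None:
        """Fetch entries in priority order (highest first) until the cache is full."""
        heap = [(-priority, obj) for priority, obj in hoard_list]
        heapq.heapify(heap)
        while heap and len(self.cache) < capacity:
            _, obj = heapq.heappop(heap)
            self.cache[obj] = self.server[obj]

    def disconnect(self) -> None:
        self.state = State.EMULATING            # Venus now acts as a pseudo-server

    def write(self, obj: str, data: bytes) -> None:
        self.cache[obj] = data
        if self.state is State.EMULATING:
            self.replay_log.append(("store", obj, data))   # record for later replay
        else:
            self.server[obj] = data

    def create(self, data: bytes) -> str:
        """While emulating, new objects get temporary ids."""
        if self.state is not State.EMULATING:
            raise RuntimeError("this sketch only models creation while disconnected")
        temp = f"tmp-{next(self._temp_ids)}"
        self.cache[temp] = data
        self.replay_log.append(("create", temp, data))
        return temp

    def reconnect(self) -> Dict[str, str]:
        """Reintegrate: replay the log at the server; return temporary -> permanent ids."""
        self.state = State.REINTEGRATING
        renames: Dict[str, str] = {}
        for op, obj, data in self.replay_log:
            if op == "create":
                perm = f"obj-{next(self._perm_ids)}"
                renames[obj] = perm
                self.server[perm] = data
                self.cache[perm] = self.cache.pop(obj)
            else:
                self.server[renames.get(obj, obj)] = data
        self.replay_log.clear()
        self.state = State.HOARDING
        return renames
```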

Sun’s NFS - Network File System
- NFS has been in use since 1985; it was developed by Sun Microsystems.
- Any part of the file system hierarchy can exist on any computer in the network. That computer doesn't even have to be running UNIX!
  - This is accomplished with the network file system (NFS), an extension to the file system that transparently extends the local disk over the network.
  - With NFS, each client can define its own view of the file system.
- NFS is a stateless file system.
- NFS uses a network-wide v-node to identify individual files, as part of the VFS (virtual file system) under UNIX.

Sun’s NFS - Network File System: How NFS Works
- UNIX supports many file system types. The kernel accesses files via the virtual file system (VFS), which makes every file system type appear the same to the operating system. One VFS type is the network file system.
- When the kernel I/O routines access an inode on a file system of type NFS and the data is not in the system's memory cache, the request is shunted to the next available nfsd task (or thread).
- When the local nfsd task receives the request, it determines which system the partition resides on and forwards the request to a biod (or nfsd thread) on the remote system using UDP.
- The remote system accesses the file using the normal I/O procedures, just like any other process, and returns the requested information via UDP.
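
The point of the VFS layer is that the kernel issues the same call whatever the file system type, and only memory-cache misses reach the type-specific code. A minimal sketch, assuming hypothetical classes and a `remote_read` callable that stands in for the UDP request to the server:

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, Tuple


class FileSystemType(ABC):
    """Operations every file system type provides to the VFS layer."""

    @abstractmethod
    def read_block(self, inode: int, block: int) -> bytes: ...


class LocalDiskFS(FileSystemType):
    def __init__(self, blocks: Dict[Tuple[int, int], bytes]):
        self.blocks = blocks                      # stand-in for a local disk

    def read_block(self, inode: int, block: int) -> bytes:
        return self.blocks[(inode, block)]


class NfsFS(FileSystemType):
    def __init__(self, remote_read: Callable[[int, int], bytes]):
        self.remote_read = remote_read            # hypothetical network request

    def read_block(self, inode: int, block: int) -> bytes:
        return self.remote_read(inode, block)


class VFS:
    def __init__(self):
        self.mounted: Dict[str, FileSystemType] = {}
        self.memory_cache: Dict[Tuple[str, int, int], bytes] = {}

    def mount(self, fs_id: str, fs: FileSystemType) -> None:
        self.mounted[fs_id] = fs

    def read_block(self, fs_id: str, inode: int, block: int) -> bytes:
        key = (fs_id, inode, block)
        if key not in self.memory_cache:          # only misses reach the file system
            self.memory_cache[key] = self.mounted[fs_id].read_block(inode, block)
        return self.memory_cache[key]
```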

Sun’s NFS - Network File System: How NFS Works-2
- Since the biod just uses the normal I/O procedures, the data will be cached in the server's RAM for use by other local or biod tasks. If the block was recently accessed, the I/O request will be satisfied from the cache and no disk activity will be needed.
- NFS uses UDP to allow multiple transactions to occur in parallel. But with UDP, requests are unverified, so nfsd has a time-out mechanism to make sure it gets the data it asks for. When it cannot retrieve the data within its time-outs, it reports via a log message that it is waiting for the server to respond.
- To increase performance, client systems add disk blocks read via NFS to their local file system caches, together with attributes such as inodes, offsets, etc.
- To ensure that disk writes complete correctly, NFS performs them synchronously. This means that the writing process is blocked until the remote system acknowledges that the disk write is complete.
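
Because UDP gives no delivery guarantee, the requester must time out and retransmit. The sketch below shows one common scheme, doubling the timeout after each unanswered attempt and logging a "not responding" message after a round of retries. The parameter names loosely echo NFS mount options (`timeo`, `retrans`, `soft`), but the exact semantics here are assumptions, and `send(timeout)` is a hypothetical callable that returns the reply or `None` on timeout.

```python
import logging
from typing import Callable, Optional

log = logging.getLogger("nfs-sketch")


def call_with_retry(send: Callable[[float], Optional[bytes]],
                    initial_timeout: float = 1.0, retrans: int = 3,
                    max_timeout: float = 60.0, soft: bool = False) -> bytes:
    """Retransmit a request until it is answered (or give up, if `soft`)."""
    timeout = initial_timeout
    while True:
        for _ in range(retrans + 1):
            reply = send(timeout)
            if reply is not None:
                return reply
            timeout = min(timeout * 2, max_timeout)   # back off after each loss
        if soft:
            raise TimeoutError("server not responding")
        log.warning("server not responding, still trying")
```

With `soft=False` the client keeps retrying forever, which matches the behavior described on the slide: the process waits while a message reports that the server is not responding.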

Sun’s NFS - Network File System: How NFS Works-3
- Asynchronous writes are possible: the disk write is acknowledged to the process immediately, and the write occurs later. Although asynchronous writes are faster, they can lead to data inconsistency: the write may fail because the server is unreachable or because of a disk error.
  - The local process may already have been told that the write succeeded and can no longer handle the error status.
- NFS allows you to balance your disk requirements and your disk space availability across the entire network. Using the auto-mounter makes this transparent to users. Make generous use of both.
- NIS makes your life easier by allowing you to maintain a single copy of the system administration files.
- You have to troubleshoot the network when things go wrong; make use of the available tools (utilities such as ifconfig, netstat, route, etc.).
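
The danger of asynchronous writes is where the error ends up. In the sketch below (hypothetical names; `server_write` stands in for the remote disk write), a synchronous failure reaches the caller, while an asynchronous failure surfaces only after the caller has already been told the write succeeded.

```python
from typing import Callable, List


class WriteError(Exception):
    """Hypothetical error raised when the server cannot complete a write."""


def synchronous_write(server_write: Callable[[bytes], None], data: bytes) -> None:
    # The caller blocks until the server acknowledges; any failure propagates to it.
    server_write(data)


class AsynchronousWriter:
    def __init__(self, server_write: Callable[[bytes], None]):
        self.server_write = server_write
        self.pending: List[bytes] = []
        self.unreported_errors: List[WriteError] = []

    def write(self, data: bytes) -> bool:
        self.pending.append(data)
        return True              # acknowledged before the server has the data

    def flush(self) -> None:
        for data in self.pending:
            try:
                self.server_write(data)
            except WriteError as err:
                # The process already saw "success" and can no longer react.
                self.unreported_errors.append(err)
        self.pending.clear()
```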

Sun’s NFS - Network File System: facilities
- Data accessed by all users can be maintained on a central host, with clients mounting the directory at boot time. For example, all user accounts can be kept on one host in the /home directory, and all the other hosts can mount this directory on their local /home, so all users can use their own home directories.
- Administrative data can be kept on one host.
- Data consuming large amounts of disk space can be kept on a special host.
- Example of how to mount an NFS directory:

  % mount -t nfs serverhostname:/home /users

  mount will then try to connect to the mount daemon (mountd) on the workstation serverhostname via RPC. The server checks whether the local host is allowed to mount the directory in question and, if so, returns a file handle. This file handle is used for all subsequent requests to files on /users.
- When someone accesses a file over NFS, the kernel places an RPC to the NFS daemon on the server machine. This call takes the file handle, the name of the file to be accessed, and the user's user and group IDs as parameters; these are used to determine the access rights.
- How to mount an NFS volume:

  % mount -t nfs remote_host:remote_dir local_dir

  You can omit -t nfs, because mount recognizes an NFS source from the ":" in it, e.g.:

  % mount ccse:/home -o options

  The options can be put in /etc/fstab, which simplifies usage.
- Exporting files from the NFS server
  - To meet the requirements of the clients, the NFS server has to be configured for them. To permit one or more hosts to NFS-mount a directory, the directory must be exported. This is done by putting export privileges into the /etc/exports file on the server. For example, an exports file may look like:

        # export file for host1.net1, which is the NFS server
        /home       host2.net1(rw) host3.net1(rw)
        /usr/Tex    host2.net1(rw) host3.net1(rw)
        /home/ftp   (ro)   # mount read-only

  - To accelerate the job of volume mounting, an auto-mounter is used. It is implemented by a daemon (amd).
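
When a mount request arrives, the server consults its export list to decide whether the requesting host may mount the directory and with which rights. The sketch below parses a simplified exports file like the one on this slide and performs that check. It is an illustration only: real exports syntax also supports wildcards, netgroups, subnets, and many more options, and treating a missing option list as read-only is an assumption here.

```python
from typing import Dict, Set

Exports = Dict[str, Dict[str, Set[str]]]   # directory -> {client: options}


def parse_exports(text: str) -> Exports:
    """Map each exported directory to {client: options}; '*' means any host."""
    exports: Exports = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()         # drop comments and blank lines
        if not line:
            continue
        directory, *clients = line.split()
        table: Dict[str, Set[str]] = {}
        for entry in clients:
            host, _, opts = entry.partition("(")
            options = set(filter(None, opts.rstrip(")").split(",")))
            table[host or "*"] = options or {"ro"}  # assumed default: read-only
        exports[directory] = table
    return exports


def may_mount(exports: Exports, directory: str, host: str, write: bool = False) -> bool:
    table = exports.get(directory)
    if table is None:
        return False                                # directory is not exported
    options = table.get(host, table.get("*"))
    if options is None:
        return False                                # host is not listed
    return not write or "rw" in options
```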
