DB 2 Test data management using IBM OPTIM

DB 2 Test data management using IBM OPTIM TDM -- migration case study Frank Petersen, Bankdata - Denmark Session Code: F 12 Wednesday, 18 November 2015, 11: 00 - 12: 00 Platform: z/OS

Bullet points – “What’s in it for the audience” • Present the audience with a view of the challenges in managing test data in a modern complex environment. • Make the audience aware of both the advantages in using homegrown solutions and in using a vendor tool. And the disadvantages in both. • Present the audience with the decisions Bankdata made on managing test data in the different environments. • Give the audience a detailed understanding on how the vendor solution is implemented. • Give a status on pitfalls and problems that Bankdata has faced in this conversion project. 2

Agenda • Setting the stage • Anonymizing data in the past, the present and the future • Test systems • Other data sources than DB 2 z/OS ? • • • Bankdata’s homegrown solution IBM Optim TDM – how it works Bankdata’s implementation of Optim TDM Problems and challenges faced Anonymizing of data Why we have no “baseline concept” 3

Setting the stage • Many years ago production data was used for testing • Perhaps even directly in production • Many installations still have parts of this mantra living in the mind-sets • Today a typical standard is to anonymize selected columns • • Social security numbers, names, addresses But data in datasets are often neglected Data in datawarehousing is often neglected “No chain is stronger than the weakest link” • Denmark has had a number of “public scandals” • Not on test data but on real data • Still has put focus on the way we deal with access to data • Handling of credit cards enforces a strict rule set • EEC is expected to make legislation concerning test data 4

Anonymizing the data • There are 2 ways to anonymize data : • “Scrambling” or “randomization”– give (all) columns random values • “Pseudonymization” – change specific value to same “random” value • Is data recognizable ? • If data is pseudonymizated – someone can figure out the origin of the data. • If we have estate data and we search for the biggest house in Washington we might find : • In this way someone might be able to “reverse engineer” to the pseudonymizated owner’s accounts and in this way see the amount of money. • And find data that could be interesting for outsiders • EEC press release 2014: “Incidentally, the opinion clarifies that pseudonymization is not a method of anonymization, but merely a useful security measure to reduce the linkability of a dataset with the original identity 5 of a data subject. ”

Setting the stage • The need for test data is different for different tests : • Testing functionality will normally not require real nor anonymized data • Testing performance (in batch) will require huge amount of data • Testing datawarehousing and analytics will require correct distribution of values etc. • User acceptance test will require data with some kind of relationship to existing production data • Your setup of test environments can impact test data strategy : • How many environments ? • Do you run a batch flow on the environment during night ? • Can data be refreshed ? • How often ? • Initiated by the user ? • Can you allow a fenced environment to receive un-anonymized data • For troubleshooting with a limited number of staff with access ? 6

Bankdata’s homegrown solution • Has existed for decades • Very well designed • In our system we have an ”anchor” called ”Reference#” • Every (”most”) data is related from this number • More or less direct • The user ”books” test-data using ranges of these numbers • Data pulled out of production and anonymized • Every application system then feeds the next application system. • With more ”Reference-#”’s • Until all application areas are extracted anonymized 7

Bankdata’s homegrown solution • Consists of three parts : • Control system running at Web. Sphere (browser based) : • • Users places an order of ”Reference#”-ranges Can order, refresh and see status of orders Ensures that numbers are not ordered by multiple persons etc. In the early evening the orders are transformed to a dataset that enters the extracting system • Extracting system • • Running at production Anonymizes data COBOL programs Numerous iterations • Inserting system • • Handles all RI COBOL programs 8

Bankdata’s homegrown solution • Data extracted (COBOL-programs) • Delivered as part of the development and maintenance • Some system contains lots of special code • • • To compensate for shortcuts in the datamodel Speciel tests for speciel values for some columns Call to business logic to further enrich data • Anonymization coded directly in COBOL • • Good – no parameters that can be removed Bad – rigid approach • Data ending in LOTS of datasets • • Transported to receiving LPARS • Difficult to maintain overview (complex) Running in automated batch flow • Literally thousands of jobs • Has to complete before production batch flow starts 9

Bankdata’s homegrown solution • Anonymizing : • Names, addresses etc are anonymized • Keys, like Danish Social Security number, must adhere to conventions Modulus control Contains birthdates etc. Must be anonymized to the same ”random” value in all application systems Special modules on production that looks in a database. • If found, the anonymized value is returned • If not found, new random value generated and stored • Remember the EEC-words about ”pseudonymization” ? Special security environment for non-anonymized orders : • Totally fenced off – “production like” • Few users • Controlled under special change requests • For special end-users tests • For trouble shooting • • • 10

Bankdata’s homegrown solution • Inserting at receiving site : • Data is been deleted on keys before inserts are attempted • Inserts done by COBOL • • Programs contains logic to compensate for existing data Calls to a lot of local subroutines • Difficult to understand the code • Difficult to understand the need • If table definition changed at receiving site • • Abends in the INSERT flow • Programmer called in • Problem fixed by recoding program or bypassing specific data • Once the INSERTs start they have to finish ! Running in automated batch flow • Literally thousands of jobs • Batch flow starts immediately after data is insereted • Copy of the production batch-flow 11

The ”Project” • In 2014 Bankdata decided to look into using a tool instead : • To save development resources in coding and maintenance • We did NOT validate different tooling • We had a license for IBM OPTIM TDM through our outsourcer. • So the job was really : • • ”We have this thing we already pay for - make it work” Bankdata did not have any experience with OPTIM TDM • It was given a year timeframe for a conversion project • • • But unrealistic to convert thousands of programs And the project consisted of 1 person • Well - that kept discussions to a minimum And the receiving site consisted of up to 5 environments Minimum of changes to users ? ? I was hired for the task – which made me somehow motivated 12

Minimum of changes to users ? ? • A challenging sentence • • Remember most users saw a well-working system The ”fear of the unknown” factor Users had a browser based ordering system OPTIM TDM is a 3270 tool • At least the license we held was • Big-bang conversion ? ? : • Simply impossible because of the number of COBOL-programs • Simply impossible because of the number changes • The fabrication of test-data is vital • • • 450 developers An unknown number of customers for education and project-work. If application relationships breaks we would be in deep problems ! • To use both COBOL and tooling is NOT attractive • How to ensure that we get everything converted • To end with 50% converted will be worse than before ! • To do ”as we use to” is a human factor 13

What is OPTIM TDM? ? • Really consist of 3 elements (at least) • z/OS component driven from 3270 • GUI component (Eclipse based-separately charged) as front end • OPTIM Designer, Manager og Repository • Data Masking option (separately charged) with additional privacy functions • We only had license to the 3270 -component • The GUI component considered briefly • Needed if source is other than z/OS • Probably the GUI frontend is more appealing to modern users • Decided to start without it • Decided to “protect” users from seeing the tool • Data Privacy option was acquired • Specific functions needed 14

How does OPTIM TDM work • All experience is based upon outdated V 7. 1 • Currently being upgraded to 11. 1 • The key thing to understand is ”relations” • In 3270 you point a ”starting table” and a number of ”child tables” • For each child-table you indicate the traversing relation : • • • Can be a DB 2 RI-relations (”free”) Can be an existing OPTIM defined relation (”free”) Can be a new OPTIM defined relation – you have to define it in 3270 15

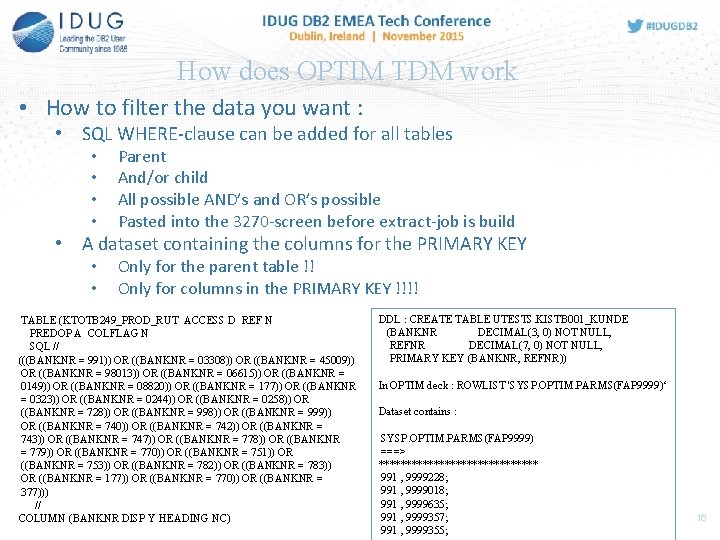

How does OPTIM TDM work • How to filter the data you want : • SQL WHERE-clause can be added for all tables • • Parent And/or child All possible AND’s and OR’s possible Pasted into the 3270 -screen before extract-job is build • A dataset containing the columns for the PRIMARY KEY • • Only for the parent table !! Only for columns in the PRIMARY KEY !!!! TABLE (KTOTB 249_PROD_RUT ACCESS D REF N PREDOP A COLFLAG N SQL // (((BANKNR = 991)) OR ((BANKNR = 03308)) OR ((BANKNR = 45009)) OR ((BANKNR = 98013)) OR ((BANKNR = 06615)) OR ((BANKNR = 0149)) OR ((BANKNR = 08820)) OR ((BANKNR = 177)) OR ((BANKNR = 0323)) OR ((BANKNR = 0244)) OR ((BANKNR = 0258)) OR ((BANKNR = 728)) OR ((BANKNR = 999)) OR ((BANKNR = 740)) OR ((BANKNR = 742)) OR ((BANKNR = 743)) OR ((BANKNR = 747)) OR ((BANKNR = 778)) OR ((BANKNR = 779)) OR ((BANKNR = 770)) OR ((BANKNR = 751)) OR ((BANKNR = 753)) OR ((BANKNR = 782)) OR ((BANKNR = 783)) OR ((BANKNR = 177)) OR ((BANKNR = 770)) OR ((BANKNR = 377))) // COLUMN (BANKNR DISP Y HEADING NC) DDL : CREATE TABLE UTESTS. KISTB 001_KUNDE (BANKNR DECIMAL(3, 0) NOT NULL, REFNR DECIMAL(7, 0) NOT NULL, PRIMARY KEY (BANKNR, REFNR)) In OPTIM deck : ROWLIST 'SYSP. OPTIM. PARMS(FAP 9999)‘ Dataset contains : SYSP. OPTIM. PARMS(FAP 9999) ===> *************** 991 , 9999228; 991 , 9999018; 991 , 9999635; 991 , 9999357; 991 , 9999355; 16

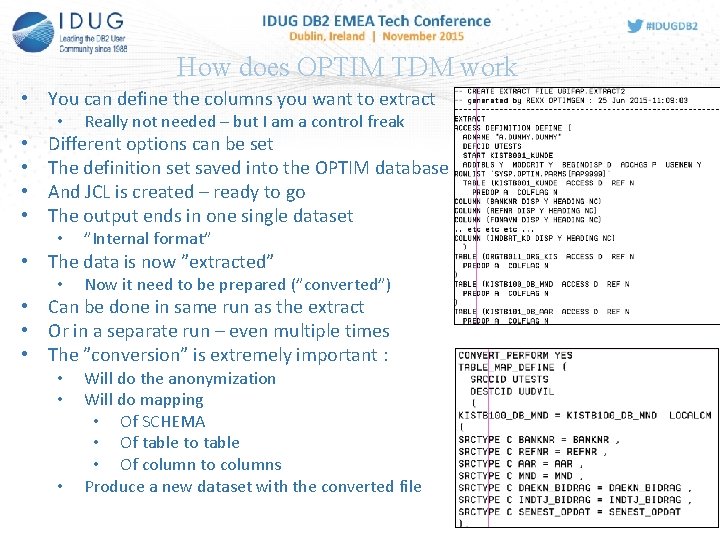

How does OPTIM TDM work • You can define the columns you want to extract • • • Really not needed – but I am a control freak Different options can be set The definition set saved into the OPTIM database And JCL is created – ready to go The output ends in one single dataset • ”Internal format” • The data is now ”extracted” • Now it need to be prepared (”converted”) • Can be done in same run as the extract • Or in a separate run – even multiple times • The ”conversion” is extremely important : • • • Will do the anonymization Will do mapping • Of SCHEMA • Of table to table • Of column to columns Produce a new dataset with the converted file 17

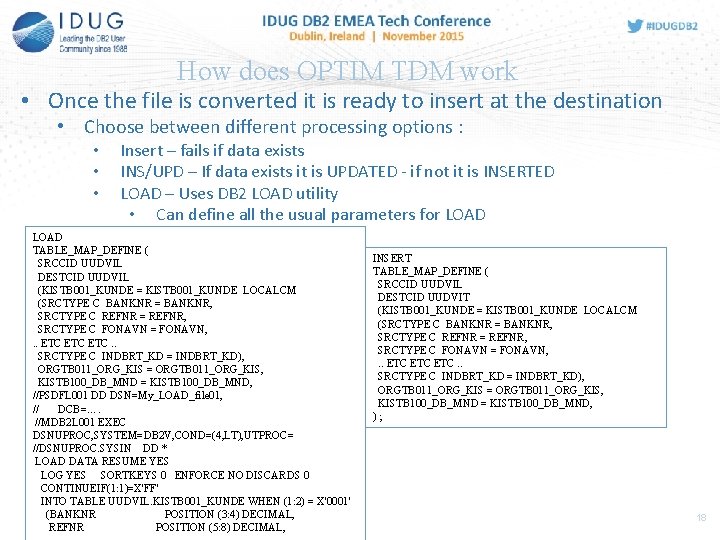

How does OPTIM TDM work • Once the file is converted it is ready to insert at the destination • Choose between different processing options : • • • Insert – fails if data exists INS/UPD – If data exists it is UPDATED - if not it is INSERTED LOAD – Uses DB 2 LOAD utility • Can define all the usual parameters for LOAD TABLE_MAP_DEFINE ( SRCCID UUDVIL DESTCID UUDVIL (KISTB 001_KUNDE = KISTB 001_KUNDE LOCALCM (SRCTYPE C BANKNR = BANKNR, SRCTYPE C REFNR = REFNR, SRCTYPE C FONAVN = FONAVN, . . ETC ETC. . SRCTYPE C INDBRT_KD = INDBRT_KD), ORGTB 011_ORG_KIS = ORGTB 011_ORG_KIS, KISTB 100_DB_MND = KISTB 100_DB_MND, //PSDFL 001 DD DSN=My_LOAD_file 01, // DCB=…. //MDB 2 L 001 EXEC DSNUPROC, SYSTEM=DB 2 V, COND=(4, LT), UTPROC= //DSNUPROC. SYSIN DD * LOAD DATA RESUME YES LOG YES SORTKEYS 0 ENFORCE NO DISCARDS 0 CONTINUEIF(1: 1)=X'FF' INTO TABLE UUDVIL. KISTB 001_KUNDE WHEN (1: 2) = X'0001' (BANKNR POSITION (3: 4) DECIMAL, REFNR POSITION (5: 8) DECIMAL, INSERT TABLE_MAP_DEFINE ( SRCCID UUDVIL DESTCID UUDVIT (KISTB 001_KUNDE = KISTB 001_KUNDE LOCALCM (SRCTYPE C BANKNR = BANKNR, SRCTYPE C REFNR = REFNR, SRCTYPE C FONAVN = FONAVN, . . ETC ETC. . SRCTYPE C INDBRT_KD = INDBRT_KD), ORGTB 011_ORG_KIS = ORGTB 011_ORG_KIS, KISTB 100_DB_MND = KISTB 100_DB_MND, ); 18

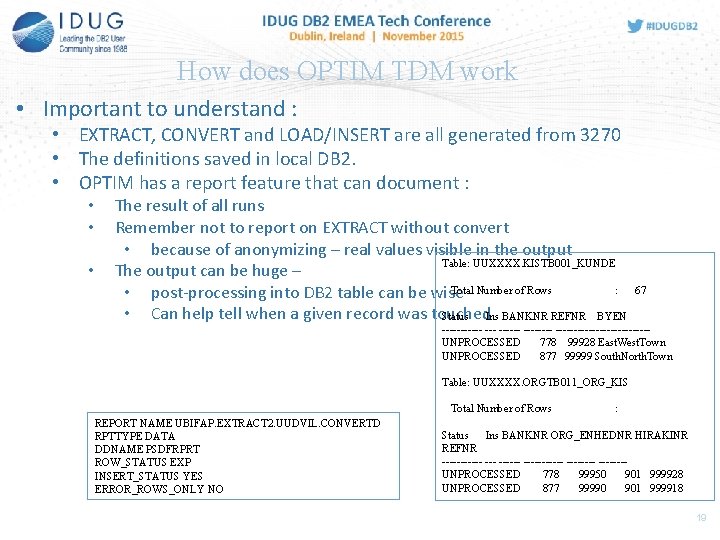

How does OPTIM TDM work • Important to understand : • EXTRACT, CONVERT and LOAD/INSERT are all generated from 3270 • The definitions saved in local DB 2. • OPTIM has a report feature that can document : • • • The result of all runs Remember not to report on EXTRACT without convert • because of anonymizing – real values visible in the output Table: UUXXXX. KISTB 001_KUNDE The output can be huge – Total Number of Rows : • post-processing into DB 2 table can be wise • Can help tell when a given record was touched Status Ins BANKNR REFNR BYEN 67 ------ ---------------UNPROCESSED 778 99928 East. West. Town UNPROCESSED 877 99999 South. North. Town Table: UUXXXX. ORGTB 011_ORG_KIS Total Number of Rows REPORT NAME UBIFAP. EXTRACT 2. UUDVIL. CONVERTD RPTTYPE DATA DDNAME PSDFRPRT ROW_STATUS EXP INSERT_STATUS YES ERROR_ROWS_ONLY NO : Status Ins BANKNR ORG_ENHEDNR HIRAKINR REFNR ------ --------UNPROCESSED 778 99950 901 999928 UNPROCESSED 877 99990 901 999918 19

Challenges that turned into problems • The most important problem : • The product is very much a “user at the terminal”-concept • We run everything automated from TWS (OPC) • DB 2 changes are flowing around the various subsystems and environments • We do not want users to go into 3270 because a table changes • • for generating new SQL for where-clauses For generating new JCL And sending this into TWS For every environment • Our entrances are these mentioned “Reference#” • • • How to get these into the OPTIM JCL ? Into a dataset if a suitable PRIMARY KEY exists Or into SQL syntax • We could modify the input deck manually • • Error prone Take the cost of generating manually – but every day ? ? 20

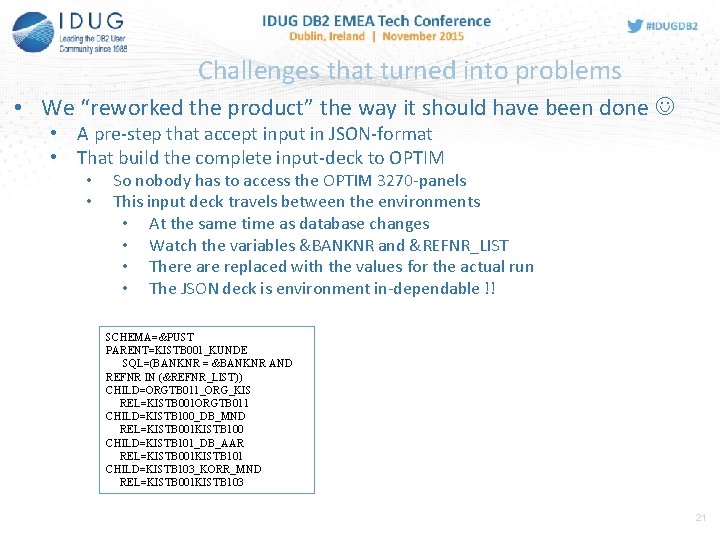

Challenges that turned into problems • We “reworked the product” the way it should have been done • A pre-step that accept input in JSON-format • That build the complete input-deck to OPTIM • • So nobody has to access the OPTIM 3270 -panels This input deck travels between the environments • At the same time as database changes • Watch the variables &BANKNR and &REFNR_LIST • There are replaced with the values for the actual run • The JSON deck is environment in-dependable !! SCHEMA=&PUST PARENT=KISTB 001_KUNDE SQL=(BANKNR = &BANKNR AND REFNR IN (&REFNR_LIST)) CHILD=ORGTB 011_ORG_KIS REL=KISTB 001 ORGTB 011 CHILD=KISTB 100_DB_MND REL=KISTB 001 KISTB 100 CHILD=KISTB 101_DB_AAR REL=KISTB 001 KISTB 101 CHILD=KISTB 103_KORR_MND REL=KISTB 001 KISTB 103 21

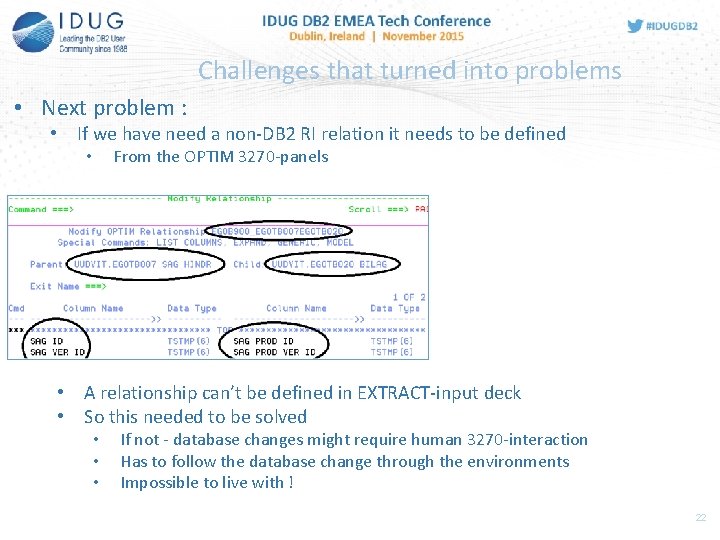

Challenges that turned into problems • Next problem : • If we have need a non-DB 2 RI relation it needs to be defined From the OPTIM 3270 -panels • • A relationship can’t be defined in EXTRACT-input deck • So this needed to be solved • • • If not - database changes might require human 3270 -interaction Has to follow the database change through the environments Impossible to live with ! 22

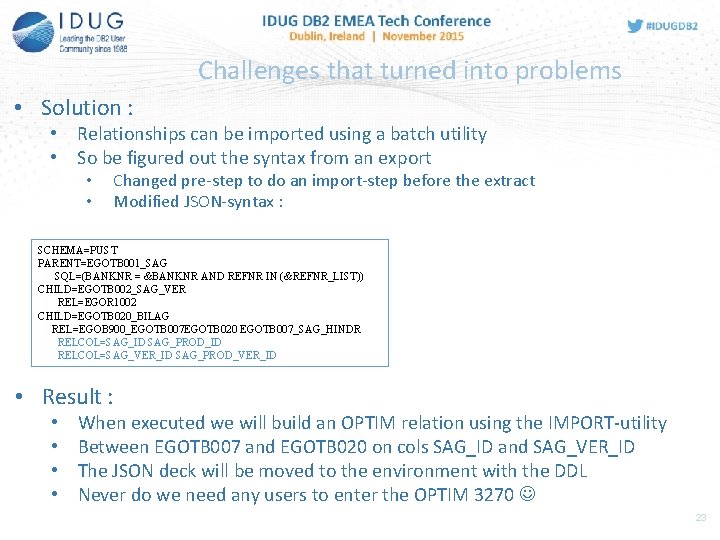

Challenges that turned into problems • Solution : • Relationships can be imported using a batch utility • So be figured out the syntax from an export • • Changed pre-step to do an import-step before the extract Modified JSON-syntax : SCHEMA=PUST PARENT=EGOTB 001_SAG SQL=(BANKNR = &BANKNR AND REFNR IN (&REFNR_LIST)) CHILD=EGOTB 002_SAG_VER REL=EGOR 1002 CHILD=EGOTB 020_BILAG REL=EGOB 900_EGOTB 007 EGOTB 020 EGOTB 007_SAG_HINDR RELCOL=SAG_ID SAG_PROD_ID RELCOL=SAG_VER_ID SAG_PROD_VER_ID • Result : • • When executed we will build an OPTIM relation using the IMPORT-utility Between EGOTB 007 and EGOTB 020 on cols SAG_ID and SAG_VER_ID The JSON deck will be moved to the environment with the DDL Never do we need any users to enter the OPTIM 3270 23

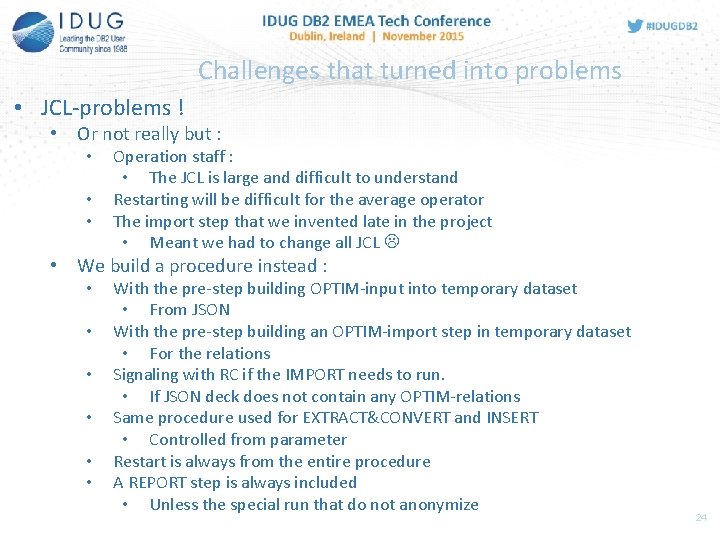

Challenges that turned into problems • JCL-problems ! • Or not really but : • • • Operation staff : • The JCL is large and difficult to understand Restarting will be difficult for the average operator The import step that we invented late in the project • Meant we had to change all JCL • We build a procedure instead : • • • With the pre-step building OPTIM-input into temporary dataset • From JSON With the pre-step building an OPTIM-import step in temporary dataset • For the relations Signaling with RC if the IMPORT needs to run. • If JSON deck does not contain any OPTIM-relations Same procedure used for EXTRACT&CONVERT and INSERT • Controlled from parameter Restart is always from the entire procedure A REPORT step is always included • Unless the special run that do not anonymize 24

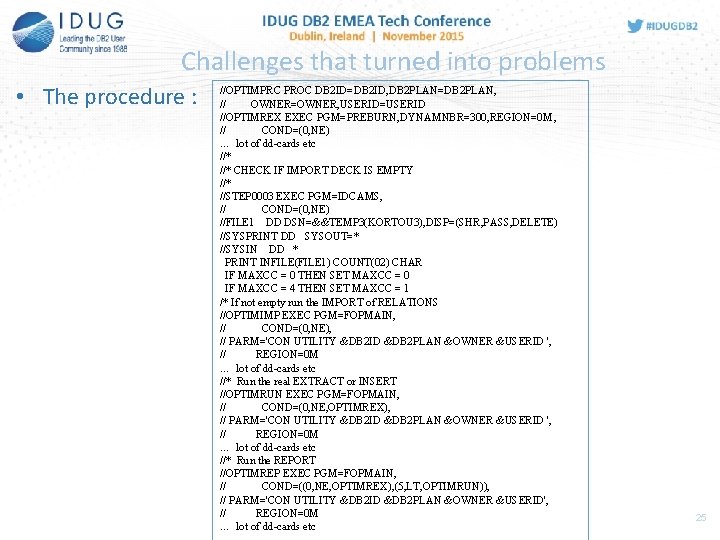

Challenges that turned into problems • The procedure : //OPTIMPRC PROC DB 2 ID=DB 2 ID, DB 2 PLAN=DB 2 PLAN, // OWNER=OWNER, USERID=USERID //OPTIMREX EXEC PGM=PREBURN, DYNAMNBR=300, REGION=0 M, // COND=(0, NE) … lot of dd-cards etc //* CHECK IF IMPORT DECK IS EMPTY //* //STEP 0003 EXEC PGM=IDCAMS, // COND=(0, NE) //FILE 1 DD DSN=&&TEMP 3(KORTOU 3), DISP=(SHR, PASS, DELETE) //SYSPRINT DD SYSOUT=* //SYSIN DD * PRINT INFILE(FILE 1) COUNT(02) CHAR IF MAXCC = 0 THEN SET MAXCC = 0 IF MAXCC = 4 THEN SET MAXCC = 1 /* If not empty run the IMPORT of RELATIONS //OPTIMIMP EXEC PGM=FOPMAIN, // COND=(0, NE), // PARM='CON UTILITY &DB 2 ID &DB 2 PLAN &OWNER &USERID ', // REGION=0 M … lot of dd-cards etc //* Run the real EXTRACT or INSERT //OPTIMRUN EXEC PGM=FOPMAIN, // COND=(0, NE, OPTIMREX), // PARM='CON UTILITY &DB 2 ID &DB 2 PLAN &OWNER &USERID ', // REGION=0 M … lot of dd-cards etc //* Run the REPORT //OPTIMREP EXEC PGM=FOPMAIN, // COND=((0, NE, OPTIMREX), (5, LT, OPTIMRUN)), // PARM='CON UTILITY &DB 2 ID &DB 2 PLAN &OWNER &USERID', // REGION=0 M … lot of dd-cards etc 25

Let’s catch our breath • What we (all) should have learned so far : • We can build a relatively simple JSON deck • • • That tells if this is a EXTRACT or INSERT Describes all tables that has to be included Describes all columns that has to be included Describes all relations between the tables Describes all relations between columns • We can let this deck follow database changes to ensure consistence • We can run a pre-step that builds the proper OPTIM decks • • • For import of relations Tell if this is an EXTRACT or an INSERT Include WHERE-clause or dataset to describe which rows to include • We can use one single procedure to run all OPTIM jobs • We don’t need any user to use the OPTIM TDM 3270 -screens 26

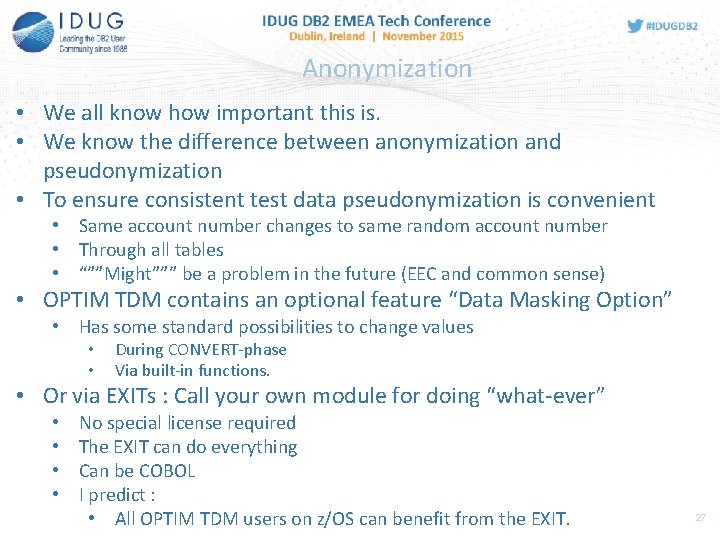

Anonymization • We all know how important this is. • We know the difference between anonymization and pseudonymization • To ensure consistent test data pseudonymization is convenient • Same account number changes to same random account number • Through all tables • “””Might””” be a problem in the future (EEC and common sense) • OPTIM TDM contains an optional feature “Data Masking Option” • Has some standard possibilities to change values • • During CONVERT-phase Via built-in functions. • Or via EXITs : Call your own module for doing “what-ever” • • No special license required The EXIT can do everything Can be COBOL I predict : • All OPTIM TDM users on z/OS can benefit from the EXIT. 27

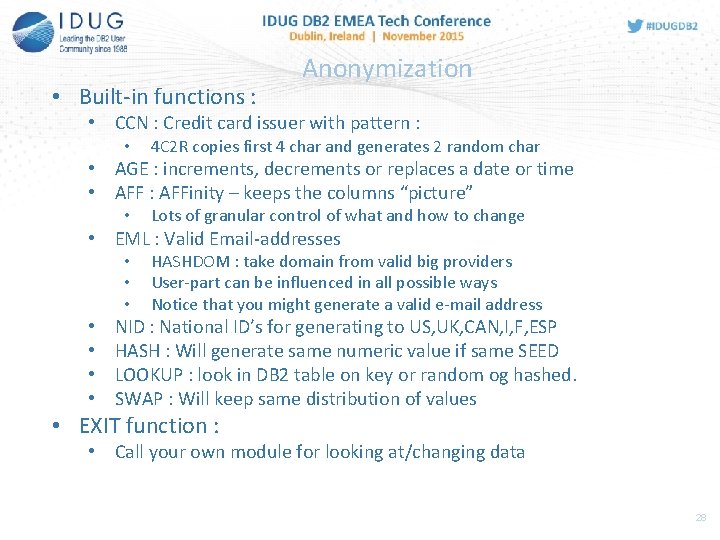

• Built-in functions : Anonymization • CCN : Credit card issuer with pattern : • 4 C 2 R copies first 4 char and generates 2 random char • AGE : increments, decrements or replaces a date or time • AFF : AFFinity – keeps the columns “picture” • Lots of granular control of what and how to change • EML : Valid Email-addresses • • HASHDOM : take domain from valid big providers User-part can be influenced in all possible ways Notice that you might generate a valid e-mail address NID : National ID’s for generating to US, UK, CAN, I, F, ESP HASH : Will generate same numeric value if same SEED LOOKUP : look in DB 2 table on key or random og hashed. SWAP : Will keep same distribution of values • EXIT function : • Call your own module for looking at/changing data 28

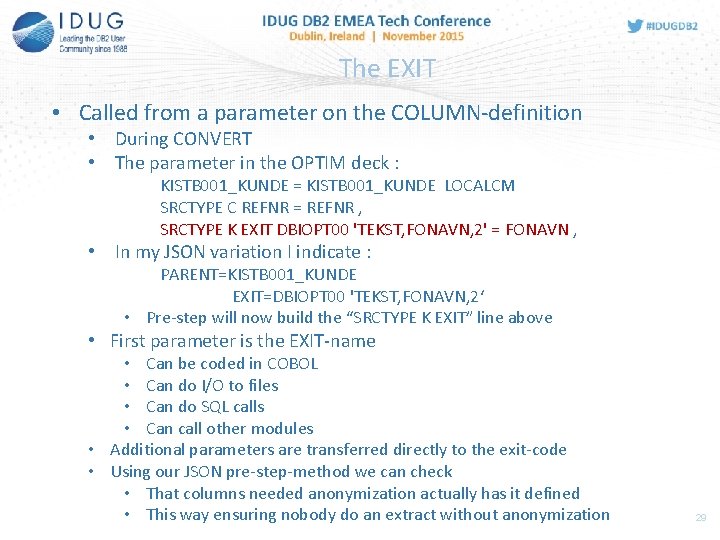

The EXIT • Called from a parameter on the COLUMN-definition • During CONVERT • The parameter in the OPTIM deck : KISTB 001_KUNDE = KISTB 001_KUNDE LOCALCM SRCTYPE C REFNR = REFNR , SRCTYPE K EXIT DBIOPT 00 'TEKST, FONAVN, 2' = FONAVN , • In my JSON variation I indicate : PARENT=KISTB 001_KUNDE EXIT=DBIOPT 00 'TEKST, FONAVN, 2‘ • Pre-step will now build the “SRCTYPE K EXIT” line above • First parameter is the EXIT-name • Can be coded in COBOL • Can do I/O to files • Can do SQL calls • Can call other modules • Additional parameters are transferred directly to the exit-code • Using our JSON pre-step-method we can check • That columns needed anonymization actually has it defined • This way ensuring nobody do an extract without anonymization 29

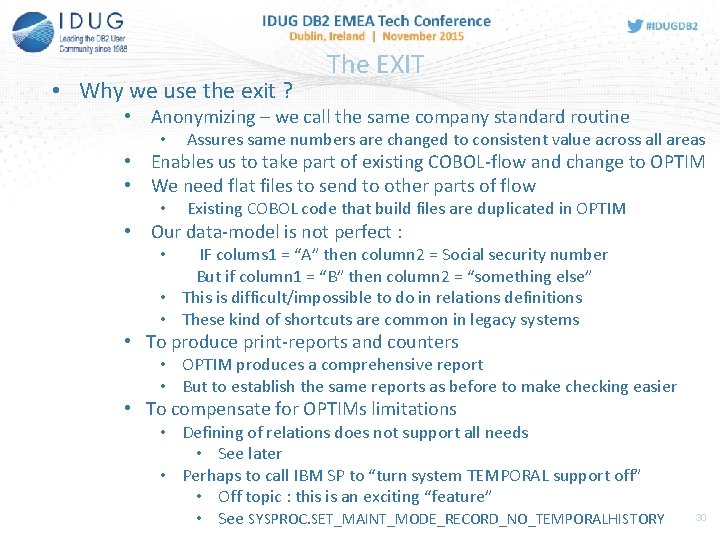

• Why we use the exit ? The EXIT • Anonymizing – we call the same company standard routine • Assures same numbers are changed to consistent value across all areas • Enables us to take part of existing COBOL-flow and change to OPTIM • We need flat files to send to other parts of flow • Existing COBOL code that build files are duplicated in OPTIM • Our data-model is not perfect : IF colums 1 = “A” then column 2 = Social security number But if column 1 = “B” then column 2 = “something else” • This is difficult/impossible to do in relations definitions • These kind of shortcuts are common in legacy systems • • To produce print-reports and counters • OPTIM produces a comprehensive report • But to establish the same reports as before to make checking easier • To compensate for OPTIMs limitations • Defining of relations does not support all needs • See later • Perhaps to call IBM SP to “turn system TEMPORAL support off” • Off topic : this is an exciting “feature” • See SYSPROC. SET_MAINT_MODE_RECORD_NO_TEMPORALHISTORY 30

Experiences in the project -converting !! • The challenge is debugging all the legacy code • To find abnormalities, special code • To find which datasets are produced and why • To test a legacy program complex after changing to OPTIM • 3 main application areas converted • Has taken around 6 months elapsed • Inclusive building the OPTIM “infrastructure” • • • The pre-step to transform JSON syntax to OPTIM Doing documentation Getting “someone” to test • 1 persons “ 50%-project” – guess who ! • Has reduced # of errors (0 real errors experienced) • Even solved known problem in the “old” system • Immune to object definition changes (more later) 31

Experiences in the project –new systems !! • With the infrastructure in place • How difficult is it to make a process for new areas ? • One complete new system called for help • Asked them to deliver a data model • • • With DB 2 RI relations With other application relations drawn With comments about shortcuts in the model • Like columns that can hold different data • 30 minutes meeting was held • The delivered data model showed no weaknesses • All relations beautiful described • The model was transferred to our JSON syntax • Extract run was scheduled • Data inserted on the receiving test-system • Meeting was held at 9 AM • Data was inserted at 10. 30 AM • Would have taken at least a month to build programs ! 32

Problems seen in OPTIM TDM • No automatic day 1 support for new DB 2 features • Missed support for temporal tables • Pressure needed to get it included in a product upgrade • Not tested yet : • Hopefully included at this very moment • • SYSPROC. SET_MAINT_MODE_RECORD_NO_TEMPORALHISTORY ? Might be possible to call from EXIT !!!! • Obviously not designed for batch-only execution !! • Dataset-names in SYSIN as parameters • If you type wrong ds-name : no JCLERR visible • Indicated in the output report - so invisible to operators • Not possible to merge several extracts to one accumulated • We do several extracts from production • These might have overlapping keys etc • Would have been nice to merge the extract-datasets • • To do only one INSERT-run • And thus get only one REPORT Would perhaps make baselines easier to maintain 33

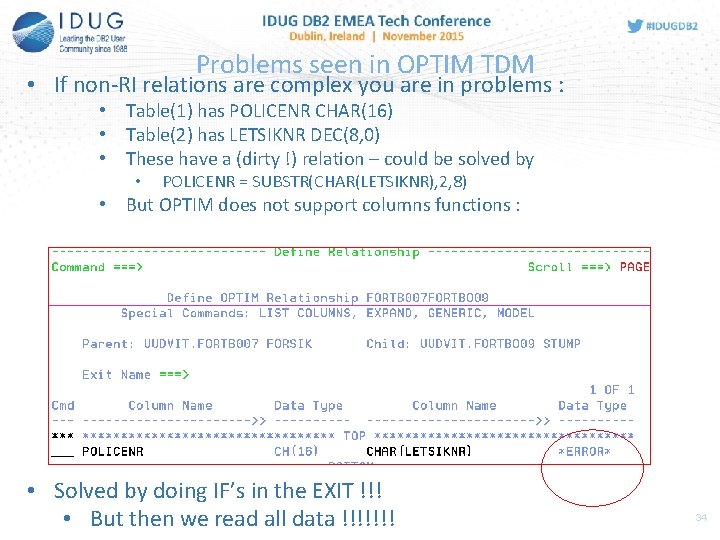

Problems seen in OPTIM TDM • If non-RI relations are complex you are in problems : • Table(1) has POLICENR CHAR(16) • Table(2) has LETSIKNR DEC(8, 0) • These have a (dirty !) relation – could be solved by • POLICENR = SUBSTR(CHAR(LETSIKNR), 2, 8) • But OPTIM does not support columns functions : • Solved by doing IF’s in the EXIT !!! • But then we read all data !!!!!!! 34

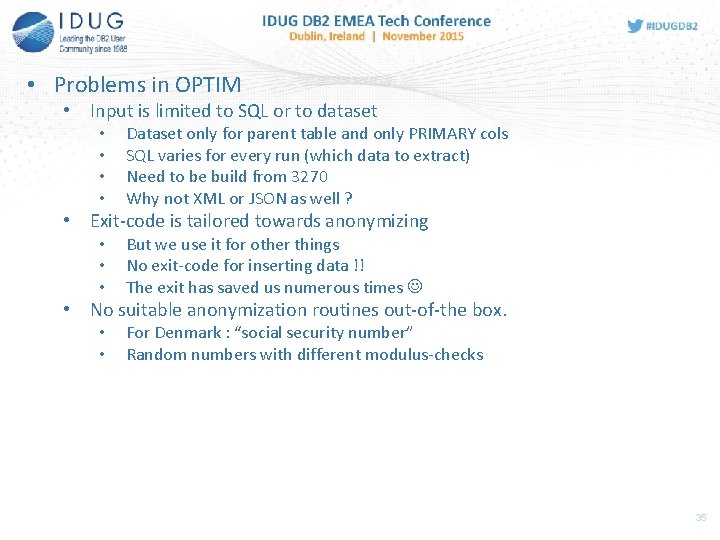

• Problems in OPTIM • Input is limited to SQL or to dataset • • Dataset only for parent table and only PRIMARY cols SQL varies for every run (which data to extract) Need to be build from 3270 Why not XML or JSON as well ? • Exit-code is tailored towards anonymizing • • • But we use it for other things No exit-code for inserting data !! The exit has saved us numerous times • No suitable anonymization routines out-of-the box. • • For Denmark : “social security number” Random numbers with different modulus-checks 35

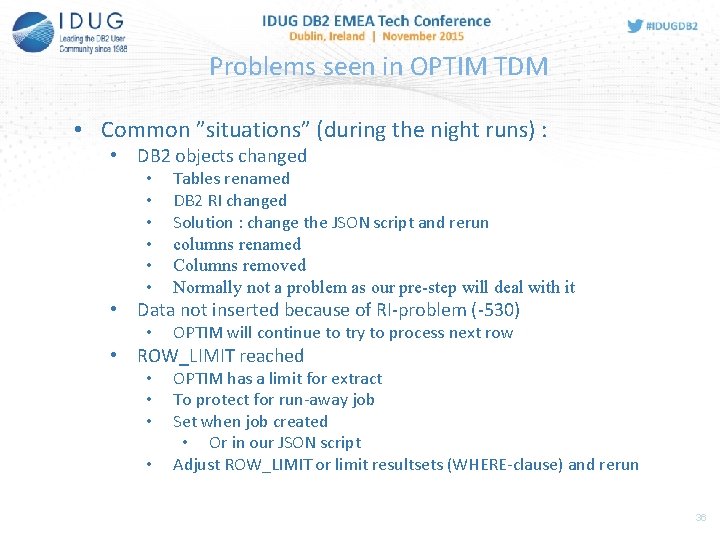

Problems seen in OPTIM TDM • Common ”situations” (during the night runs) : • DB 2 objects changed • • • Tables renamed DB 2 RI changed Solution : change the JSON script and rerun columns renamed Columns removed Normally not a problem as our pre-step will deal with it • OPTIM will continue to try to process next row • Data not inserted because of RI-problem (-530) • ROW_LIMIT reached • • OPTIM has a limit for extract To protect for run-away job Set when job created • Or in our JSON script Adjust ROW_LIMIT or limit resultsets (WHERE-clause) and rerun 36

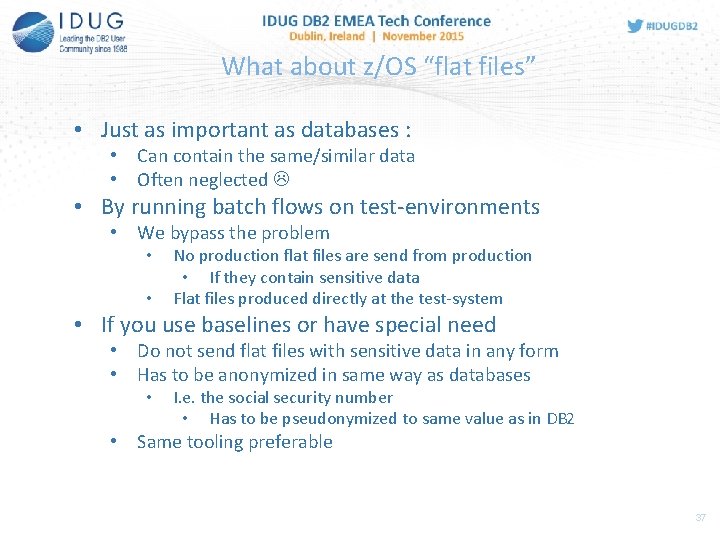

What about z/OS “flat files” • Just as important as databases : • Can contain the same/similar data • Often neglected • By running batch flows on test-environments • We bypass the problem • • No production flat files are send from production • If they contain sensitive data Flat files produced directly at the test-system • If you use baselines or have special need • Do not send flat files with sensitive data in any form • Has to be anonymized in same way as databases • I. e. the social security number • Has to be pseudonymized to same value as in DB 2 • Same tooling preferable 37

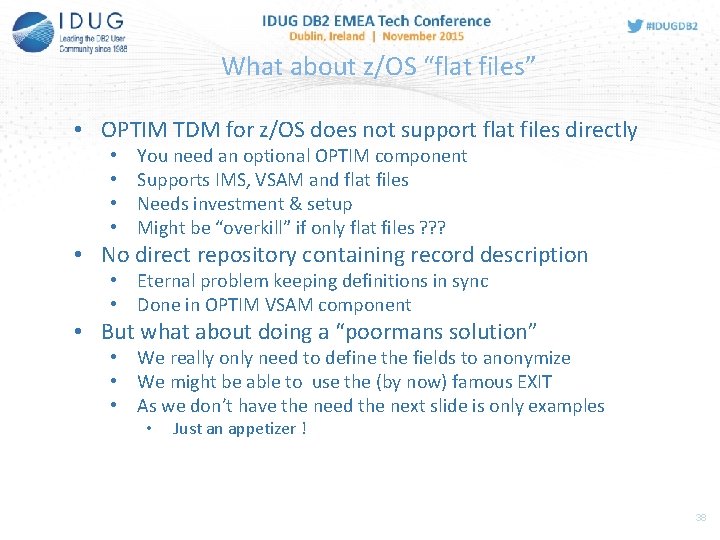

What about z/OS “flat files” • OPTIM TDM for z/OS does not support flat files directly • • You need an optional OPTIM component Supports IMS, VSAM and flat files Needs investment & setup Might be “overkill” if only flat files ? ? ? • No direct repository containing record description • Eternal problem keeping definitions in sync • Done in OPTIM VSAM component • But what about doing a “poormans solution” • We really only need to define the fields to anonymize • We might be able to use the (by now) famous EXIT • As we don’t have the need the next slide is only examples • Just an appetizer ! 38

What about z/OS “flat files” • Just for the fun of it : • Modified the pre-step code to accept JSON like this: • Meaning : anonymize offset 20, 42 and length 10 as Danish social security number • The table OPTIM thinks it is going to extract is SYSDUMMY 1 • Changed the EXIT to read/write the dataset • And do the calls to the security number • Result : 39

Baseline • Baselining are libraries of extracts that : • Are consistent • Users can pick from and “reset” a test environment to it • Easy for users to identify • OPTIM TDM are well designed for baselining : • The extracts are really baselines • But needs at least one CONVERT done to ensure anonymization • Probably consists of many extract datasets (customer, orders, etc …) • A merge feature of extracts would have been nice • A baseline can be re-converted to make it for a given test-environment • Or you can build baselines per environment by running converts in advance • If you consider using baselines : • What about data in other systems than z/OS • What about flat files on z/OS • Bankdata does not use baselines : • We run consistent batch flows on all test-environments • Right after data has been inserted • Resetting to baseline will require full batchflow to be redone !! 40

“ As birthday present I which “ • The EXIT receives control per columns, per row, per table • Difficult to test columns in other tables in the hierarchy • Nice if all columns in all related rows were available. • No combination of EXIT and standard function • For instance you can not both • • AGE a column And receive it in an EXIT • “Unclean” data model : • If table rows have to be selected if COLX in another table contains ”x” • • Tables can be addressed from 2 relations But SQL can only be set once ! • Upward compatibility missing • EXTRACT from version X can not be used in version X-1 • We can not migrate all LPARS at the same time • Problem when EXTRACTs are shipped in all directions 41

Conclusion • OPTIM TDM has full filled our expectations • Migration of existing homemade extracts/inserts difficult • Especially testing • All relations have to be 100% ok !! • If data model is “unclean” • Coding in exit probably needed ! • Makes the extract non-transparent ! • Anonymization is fairly easy ! • Using the built-in function and the exit • But only “Pseudonymization” • No product related errors experienced !! • The shell we use to make this automated (JSON) • Simply a prereq for this being a success • Product too 3270’ied • GUI version exists – not validated (price) !! 42

Frank Petersen Bankdata - Denmark fap@bankdata. dk DB 2 Test data management using IBM OPTIM TDM -- migration case study Session F 12 Please fill out your session evaluation before leaving!

- Slides: 43