Classification III

Lecturer: Dr. Bo Yuan

E-mail: yuanb@sz.tsinghua.edu.cn

Overview

- Artificial Neural Networks

Biological Motivation

- $10^{11}$: the number of neurons in the human brain
- $10^{4}$: the average number of connections of each neuron
- $10^{-3}$ s: the fastest switching times of neurons
- $10^{-10}$ s: the switching speeds of computers
- $10^{-1}$ s: the time required to visually recognize your mother

Biological Motivation

- The power of parallelism
- The information processing abilities of biological neural systems follow from highly parallel processes operating on representations that are distributed over many neurons.
- The motivation for ANNs is to capture this kind of highly parallel computation based on distributed representations.
- Sequential machines vs. parallel machines
- Group A
  - Using ANNs to study and model biological learning processes.
- Group B
  - Obtaining highly effective machine learning algorithms, regardless of how closely these algorithms mimic biological processes.

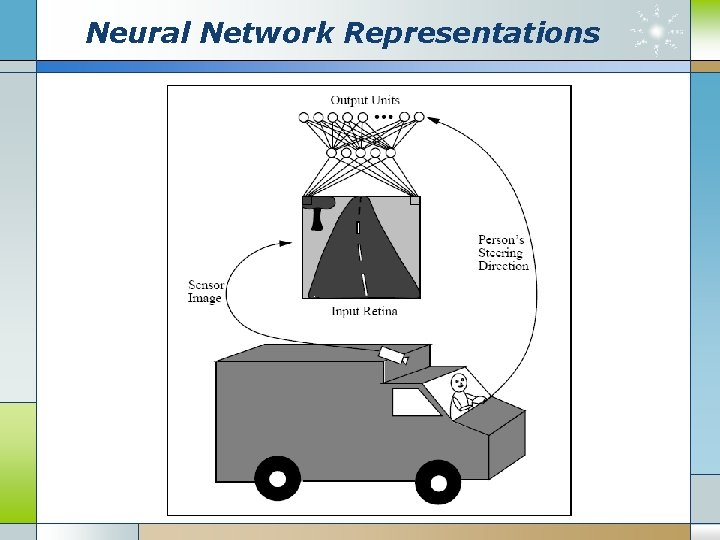

Neural Network Representations

Robot vs. Human

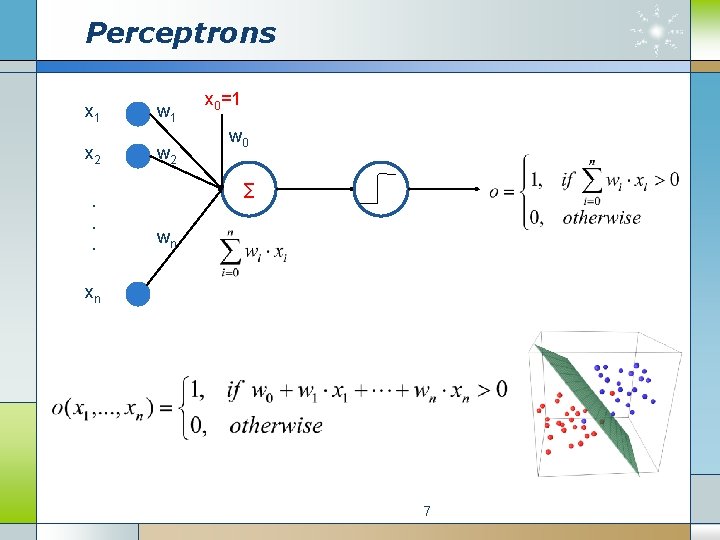

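Perceptrons

(Figure: a perceptron with inputs $x_1, \dots, x_n$, weights $w_1, \dots, w_n$, a bias input $x_0 = 1$ with weight $w_0$, and the weighted sum $\sum_{i=0}^{n} w_i x_i$.)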

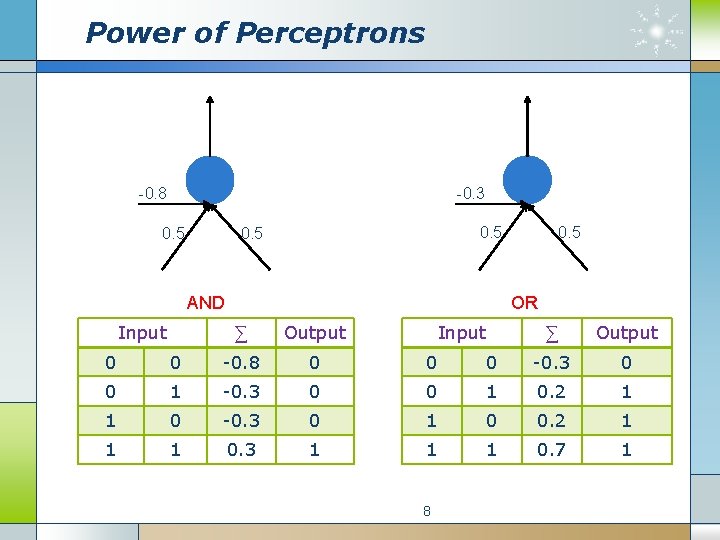

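Power of Perceptrons

AND: $w_0 = -0.8$, $w_1 = w_2 = 0.5$. OR: $w_0 = -0.3$, $w_1 = w_2 = 0.5$. Each unit outputs 1 if $\sum_{i=0}^{2} w_i x_i > 0$ and 0 otherwise.

| Input ($x_1$ $x_2$) | AND: ∑ | AND: Output | OR: ∑ | OR: Output |
|---|---|---|---|---|
| 0 0 | -0.8 | 0 | -0.3 | 0 |
| 0 1 | -0.3 | 0 | 0.2 | 1 |
| 1 0 | -0.3 | 0 | 0.2 | 1 |
| 1 1 | 0.2 | 1 | 0.7 | 1 |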
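The AND and OR weights on this slide can be checked mechanically. This is a minimal Python sketch assuming the 0/1 step output used in the tables (output 1 when the weighted sum is positive); the function name `perceptron` and the constant names are illustrative only.

```python
def perceptron(weights, inputs):
    """Threshold unit: weights[0] is the bias weight w0, paired with x0 = 1."""
    net = weights[0] + sum(w * x for w, x in zip(weights[1:], inputs))
    return 1 if net > 0 else 0


AND_WEIGHTS = (-0.8, 0.5, 0.5)  # w0, w1, w2 from the AND table
OR_WEIGHTS = (-0.3, 0.5, 0.5)   # w0, w1, w2 from the OR table

if __name__ == "__main__":
    for x1 in (0, 1):
        for x2 in (0, 1):
            # columns: x1, x2, AND output, OR output
            print(x1, x2, perceptron(AND_WEIGHTS, (x1, x2)), perceptron(OR_WEIGHTS, (x1, x2)))
```

Only the bias weight differs between the two units: moving $w_0$ from -0.8 to -0.3 shifts the decision line so that a single active input is already enough to make the sum positive.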

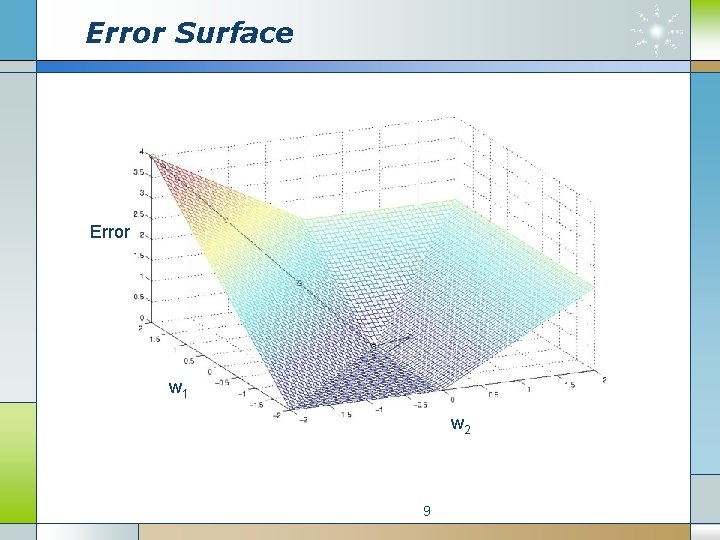

Error Surface

(Figure: the training error plotted as a surface over the weights $w_1$ and $w_2$.)

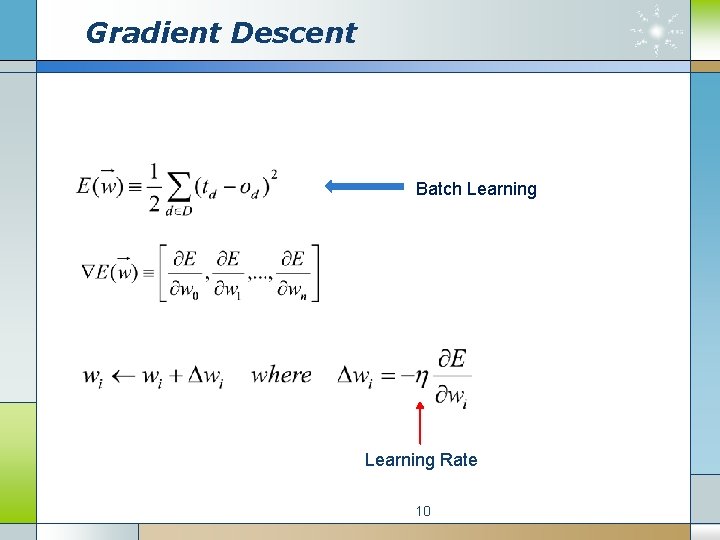

Gradient Descent

(Figure annotations: batch learning; learning rate.)

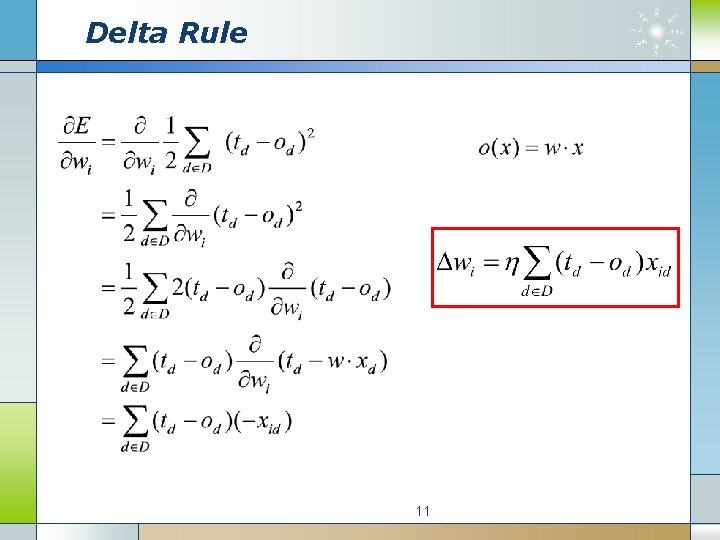

Delta Rule
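For a linear unit $o = \vec{w} \cdot \vec{x}$, the delta rule follows from gradient descent on the squared training error. The derivation below follows the standard treatment (e.g., Mitchell, Machine Learning, Chapter 4, listed under Reading Materials); $D$ denotes the set of training examples and $x_{id}$ the $i$th input of example $d$.

$$
\begin{aligned}
E(\vec{w}) &= \frac{1}{2} \sum_{d \in D} (t_d - o_d)^2, \qquad o_d = \vec{w} \cdot \vec{x}_d \\
\frac{\partial E}{\partial w_i} &= \frac{1}{2} \sum_{d \in D} 2 (t_d - o_d) \frac{\partial}{\partial w_i} (t_d - \vec{w} \cdot \vec{x}_d) = \sum_{d \in D} (t_d - o_d)(-x_{id}) \\
\Delta w_i &= -\eta \frac{\partial E}{\partial w_i} = \eta \sum_{d \in D} (t_d - o_d)\, x_{id}
\end{aligned}
$$

Accumulating $\eta (t - o) x_i$ over every example before changing the weights, as the batch procedure on the next slide does, implements exactly this update; applying $\Delta w_i = \eta (t - o) x_i$ after each single example gives the stochastic version.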

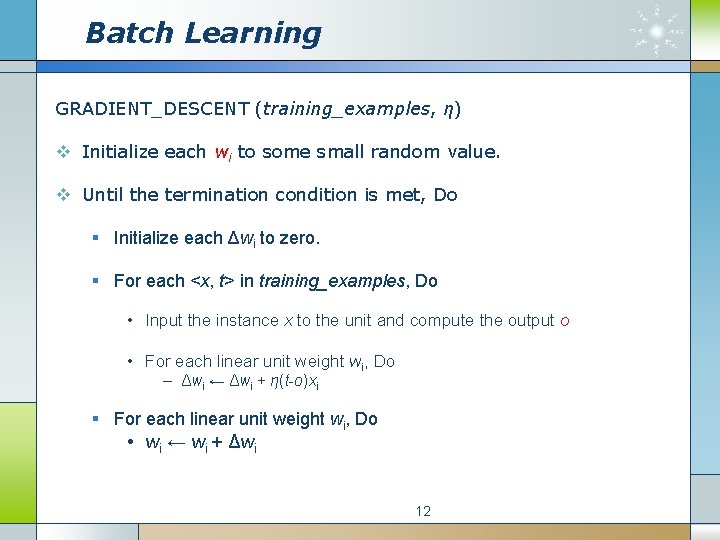

Batch Learning

`GRADIENT_DESCENT(training_examples, η)`

- Initialize each $w_i$ to some small random value.
- Until the termination condition is met, do:
  - Initialize each $\Delta w_i$ to zero.
  - For each $\langle \vec{x}, t \rangle$ in training_examples, do:
    - Input the instance $\vec{x}$ to the unit and compute the output $o$.
    - For each linear unit weight $w_i$, do: $\Delta w_i \leftarrow \Delta w_i + \eta (t - o) x_i$
  - For each linear unit weight $w_i$, do: $w_i \leftarrow w_i + \Delta w_i$
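The batch procedure above can be sketched in Python as follows. This is an illustration under stated assumptions, not the course's reference code: each input vector already contains the constant $x_0 = 1$, initial weights are drawn from the hypothetical range ±0.05, and a fixed number of epochs stands in for the unspecified termination condition.

```python
import random


def linear_output(weights, x):
    """Linear unit: o = sum_i w_i * x_i, where x[0] is the constant input x0 = 1."""
    return sum(w * xi for w, xi in zip(weights, x))


def gradient_descent(training_examples, eta, epochs=500, seed=0):
    """Batch delta-rule training of one linear unit.

    training_examples: list of (x, t) pairs; every x includes x0 = 1.
    A fixed epoch count is an assumed stand-in for the termination condition.
    """
    rng = random.Random(seed)
    n = len(training_examples[0][0])
    weights = [rng.uniform(-0.05, 0.05) for _ in range(n)]  # small random values
    for _ in range(epochs):
        delta = [0.0] * n                         # initialize each delta_w_i to zero
        for x, t in training_examples:
            o = linear_output(weights, x)
            for i in range(n):
                delta[i] += eta * (t - o) * x[i]  # accumulate over the whole batch
        for i in range(n):
            weights[i] += delta[i]                # one weight change per pass
    return weights


if __name__ == "__main__":
    data = [((1, 0.0), 1.0), ((1, 1.0), 3.0), ((1, 2.0), 5.0)]  # t = 1 + 2x
    print(gradient_descent(data, eta=0.05))  # approximately [1.0, 2.0]
```

The learning rate has to be small enough for the summed update to stay stable: because the batch step adds the contributions of all examples, a value that is safe for one example can overshoot when many are accumulated.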

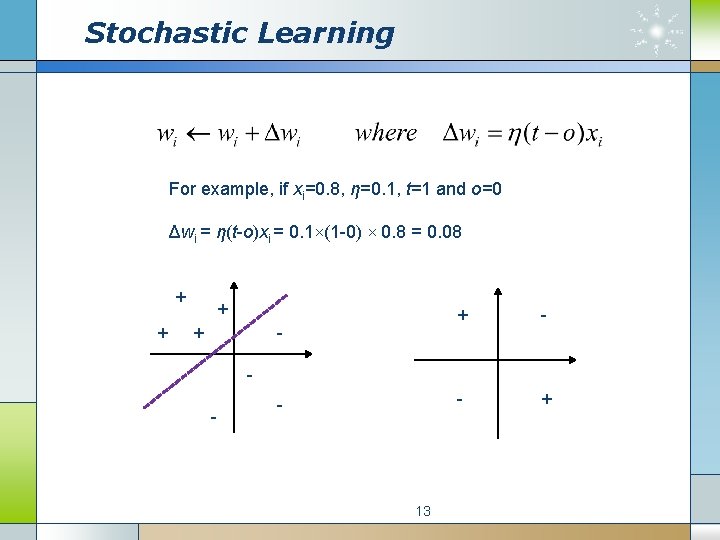

Stochastic Learning

For example, if $x_i = 0.8$, $\eta = 0.1$, $t = 1$ and $o = 0$, then

$$\Delta w_i = \eta (t - o) x_i = 0.1 \times (1 - 0) \times 0.8 = 0.08$$
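The worked example is one application of the delta rule to a single training example. A minimal Python sketch of the incremental (stochastic) variant, assuming a linear unit as in the batch procedure; the function names and the ±0.05 initial range are illustrative assumptions.

```python
import random


def stochastic_update(weights, x, t, o, eta):
    """Delta-rule step after a single example: w_i <- w_i + eta * (t - o) * x_i."""
    return [w + eta * (t - o) * xi for w, xi in zip(weights, x)]


def stochastic_gradient_descent(training_examples, eta, epochs=500, seed=0):
    """Incremental version: the weights change after every example, not once per pass."""
    rng = random.Random(seed)
    n = len(training_examples[0][0])
    weights = [rng.uniform(-0.05, 0.05) for _ in range(n)]
    for _ in range(epochs):
        for x, t in training_examples:
            o = sum(w * xi for w, xi in zip(weights, x))  # linear unit output
            weights = stochastic_update(weights, x, t, o, eta)
    return weights


if __name__ == "__main__":
    # The slide's numbers: x_i = 0.8, eta = 0.1, t = 1, o = 0 gives a change of about 0.08
    print(stochastic_update([0.0], [0.8], t=1, o=0, eta=0.1))
    print(stochastic_gradient_descent([((1, 0.0), 1.0), ((1, 1.0), 3.0), ((1, 2.0), 5.0)], eta=0.05))
```

With a small learning rate, the stochastic version approximates the batch gradient closely, while needing no accumulator for the per-example updates.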

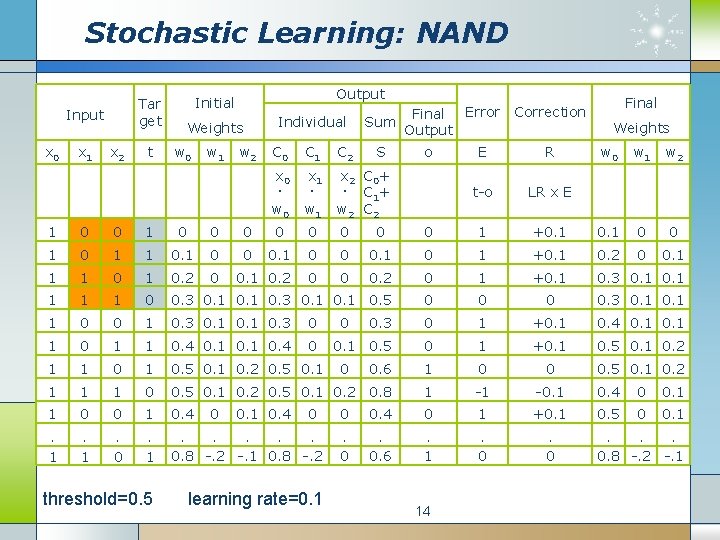

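Stochastic Learning: NAND

Threshold = 0.5, learning rate (LR) = 0.1. Each row presents one training example: $C_i = x_i \cdot w_i$, $S = C_0 + C_1 + C_2$, the output is $o = 1$ if $S > 0.5$ and 0 otherwise, the error is $E = t - o$, and the correction is $R = \text{LR} \times E$.

| Input ($x_0$, $x_1$, $x_2$) | Target $t$ | Initial weights ($w_0$, $w_1$, $w_2$) | Individual ($C_0$, $C_1$, $C_2$) | Sum $S$ | Output $o$ | Error $E$ | Correction $R$ | Final weights ($w_0$, $w_1$, $w_2$) |
|---|---|---|---|---|---|---|---|---|
| (1, 0, 0) | 1 | (0, 0, 0) | (0, 0, 0) | 0 | 0 | 1 | +0.1 | (0.1, 0, 0) |
| (1, 0, 1) | 1 | (0.1, 0, 0) | (0.1, 0, 0) | 0.1 | 0 | 1 | +0.1 | (0.2, 0, 0.1) |
| (1, 1, 0) | 1 | (0.2, 0, 0.1) | (0.2, 0, 0) | 0.2 | 0 | 1 | +0.1 | (0.3, 0.1, 0.1) |
| (1, 1, 1) | 0 | (0.3, 0.1, 0.1) | (0.3, 0.1, 0.1) | 0.5 | 0 | 0 | 0 | (0.3, 0.1, 0.1) |
| (1, 0, 0) | 1 | (0.3, 0.1, 0.1) | (0.3, 0, 0) | 0.3 | 0 | 1 | +0.1 | (0.4, 0.1, 0.1) |
| (1, 0, 1) | 1 | (0.4, 0.1, 0.1) | (0.4, 0, 0.1) | 0.5 | 0 | 1 | +0.1 | (0.5, 0.1, 0.2) |
| (1, 1, 0) | 1 | (0.5, 0.1, 0.2) | (0.5, 0.1, 0) | 0.6 | 1 | 0 | 0 | (0.5, 0.1, 0.2) |
| (1, 1, 1) | 0 | (0.5, 0.1, 0.2) | (0.5, 0.1, 0.2) | 0.8 | 1 | -1 | -0.1 | (0.4, 0, 0.1) |
| (1, 0, 0) | 1 | (0.4, 0, 0.1) | (0.4, 0, 0) | 0.4 | 0 | 1 | +0.1 | (0.5, 0, 0.1) |
| … | … | … | … | … | … | … | … | (0.8, -0.2, -0.1) |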
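The trace can be reproduced mechanically. A minimal Python sketch of the perceptron training rule with this slide's settings (threshold 0.5, learning rate 0.1, zero initial weights, examples presented in the order shown); the names are illustrative. Exact fractions are used on the assumption that the table was computed exactly: in binary floating point, sums that should equal exactly 0.5 can come out slightly above or below it, which would change the output in rows such as the fourth.

```python
from fractions import Fraction

THRESHOLD = Fraction(1, 2)       # threshold = 0.5
LEARNING_RATE = Fraction(1, 10)  # learning rate = 0.1

# Each example: ((x0, x1, x2), t) with bias input x0 = 1 and t = NAND(x1, x2)
NAND_EXAMPLES = [((1, 0, 0), 1), ((1, 0, 1), 1), ((1, 1, 0), 1), ((1, 1, 1), 0)]


def train_perceptron(examples, max_epochs=100):
    """Perceptron rule w_i <- w_i + LR * (t - o) * x_i, applied after every example.

    Returns the final weights and a trace with one row per presented example:
    (x, t, S, o, E, final weights).
    """
    weights = [Fraction(0)] * 3  # initial weights w0 = w1 = w2 = 0
    trace = []
    for _ in range(max_epochs):
        mistakes = 0
        for x, t in examples:
            s = sum(w * xi for w, xi in zip(weights, x))  # S = C0 + C1 + C2
            o = 1 if s > THRESHOLD else 0                  # network output
            e = t - o                                      # error E = t - o
            weights = [w + LEARNING_RATE * e * xi for w, xi in zip(weights, x)]
            trace.append((x, t, s, o, e, tuple(weights)))
            if e != 0:
                mistakes += 1
        if mistakes == 0:  # a full pass with no errors: training has converged
            break
    return weights, trace


if __name__ == "__main__":
    final, rows = train_perceptron(NAND_EXAMPLES)
    print([float(w) for w in final], len(rows))  # [0.8, -0.2, -0.1] 36
```

The first nine trace rows match the table, and the run stops after the ninth pass over the data (36 presented examples) with final weights 0.8, -0.2 and -0.1.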

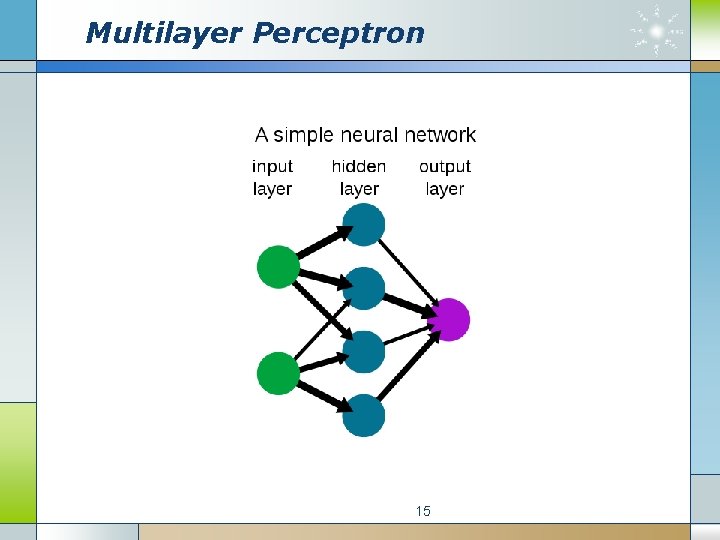

Multilayer Perceptron

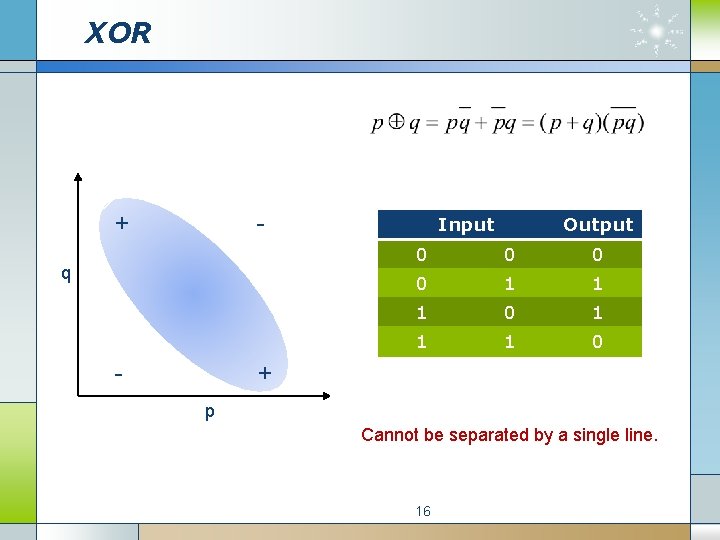

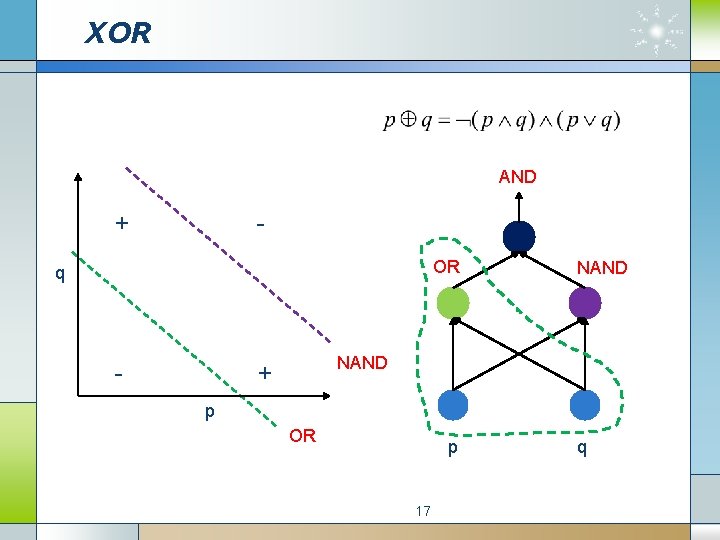

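XOR

| $p$ | $q$ | Output |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

Plotted in the $(p, q)$ plane, the positive (+) and negative (-) examples cannot be separated by a single line.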

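XOR

$p \oplus q = \mathrm{AND}(\mathrm{OR}(p, q), \mathrm{NAND}(p, q))$

(Figure: the OR and NAND decision lines in the $(p, q)$ plane, with the + and - examples.)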

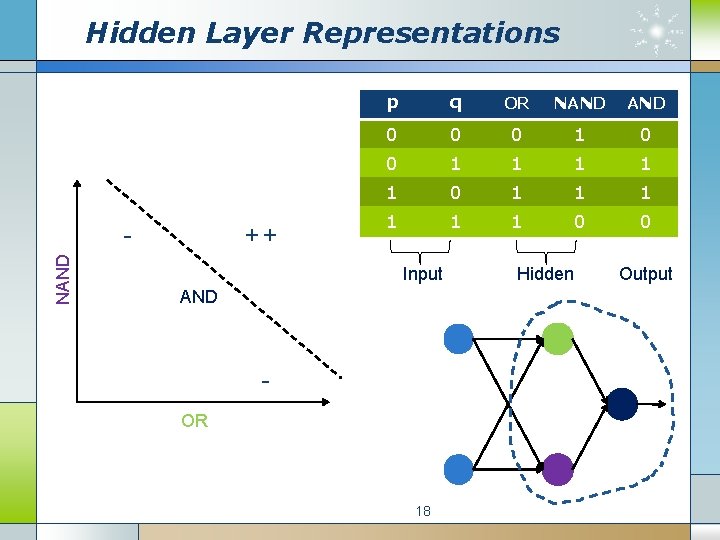

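Hidden Layer Representations

| Input ($p$ $q$) | Hidden: OR | Hidden: NAND | Output: AND |
|---|---|---|---|
| 0 0 | 0 | 1 | 0 |
| 0 1 | 1 | 1 | 1 |
| 1 0 | 1 | 1 | 1 |
| 1 1 | 1 | 0 | 0 |

In the hidden-unit space (OR, NAND), both positive examples map to the point (1, 1), so a single AND unit can separate them from the negative examples.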
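The two-layer construction can be written directly with threshold units. A minimal Python sketch: the OR and AND weights are the ones from the Power of Perceptrons slide, while the NAND weights (the AND weights negated) are an assumption chosen for illustration; all names are hypothetical.

```python
def step(net):
    """0/1 threshold at zero, as in the perceptron tables."""
    return 1 if net > 0 else 0


def threshold_unit(w0, w1, w2):
    """Return a unit computing step(w0 + w1 * p + w2 * q)."""
    def unit(p, q):
        return step(w0 + w1 * p + w2 * q)
    return unit


or_unit = threshold_unit(-0.3, 0.5, 0.5)    # OR weights from the perceptron slide
and_unit = threshold_unit(-0.8, 0.5, 0.5)   # AND weights from the perceptron slide
nand_unit = threshold_unit(0.8, -0.5, -0.5)  # assumption: AND weights negated


def xor(p, q):
    hidden = (or_unit(p, q), nand_unit(p, q))  # hidden-layer representation
    return and_unit(*hidden)                   # output unit


if __name__ == "__main__":
    for p in (0, 1):
        for q in (0, 1):
            print(p, q, or_unit(p, q), nand_unit(p, q), xor(p, q))
```

The printed rows reproduce the table: the hidden layer remaps the inputs, and only then does a single linear threshold suffice.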

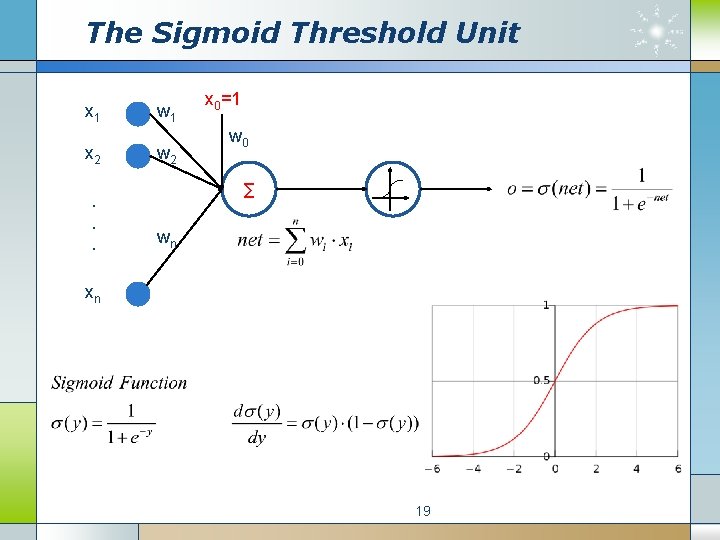

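The Sigmoid Threshold Unit

(Figure: a unit with inputs $x_1, \dots, x_n$, weights $w_1, \dots, w_n$, a bias input $x_0 = 1$ with weight $w_0$, and the weighted sum $\sum_{i=0}^{n} w_i x_i$ passed through a sigmoid.)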
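Unlike the step function, the sigmoid $\sigma(net) = 1 / (1 + e^{-net})$ is differentiable, and its derivative can be written through the unit's own output as $\sigma(net)(1 - \sigma(net))$; this is the property the backpropagation rules rely on. A minimal Python sketch, with illustrative function names; the branch on the sign of `net` is an implementation choice that avoids overflow in `math.exp` for large negative inputs.

```python
import math


def sigmoid(net):
    """Logistic function 1 / (1 + e^(-net)), written to avoid overflow."""
    if net >= 0:
        return 1.0 / (1.0 + math.exp(-net))
    e = math.exp(net)  # safe: net < 0, so e is in (0, 1)
    return e / (1.0 + e)


def sigmoid_unit(weights, inputs):
    """Sigmoid threshold unit: weights[0] is w0 for the constant input x0 = 1."""
    net = weights[0] + sum(w * x for w, x in zip(weights[1:], inputs))
    return sigmoid(net)


def sigmoid_derivative_from_output(o):
    """d(sigma)/d(net) expressed through the output o = sigma(net): o * (1 - o)."""
    return o * (1.0 - o)


if __name__ == "__main__":
    o = sigmoid_unit([0.0, 1.0, -1.0], [2.0, 2.0])  # net = 0, so o = 0.5
    print(o, sigmoid_derivative_from_output(o))
```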

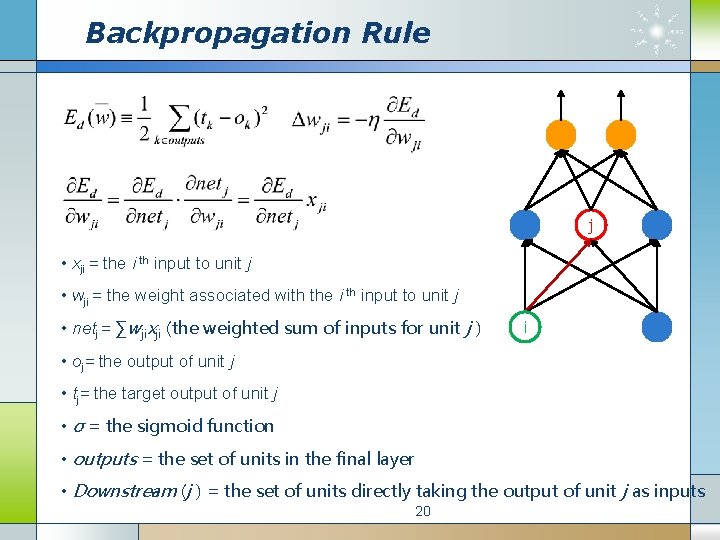

Backpropagation Rule

- $x_{ji}$ = the $i$th input to unit $j$
- $w_{ji}$ = the weight associated with the $i$th input to unit $j$
- $net_j = \sum_i w_{ji} x_{ji}$ (the weighted sum of inputs for unit $j$)
- $o_j$ = the output of unit $j$
- $t_j$ = the target output of unit $j$
- $\sigma$ = the sigmoid function
- $outputs$ = the set of units in the final layer
- $Downstream(j)$ = the set of units directly taking the output of unit $j$ as inputs

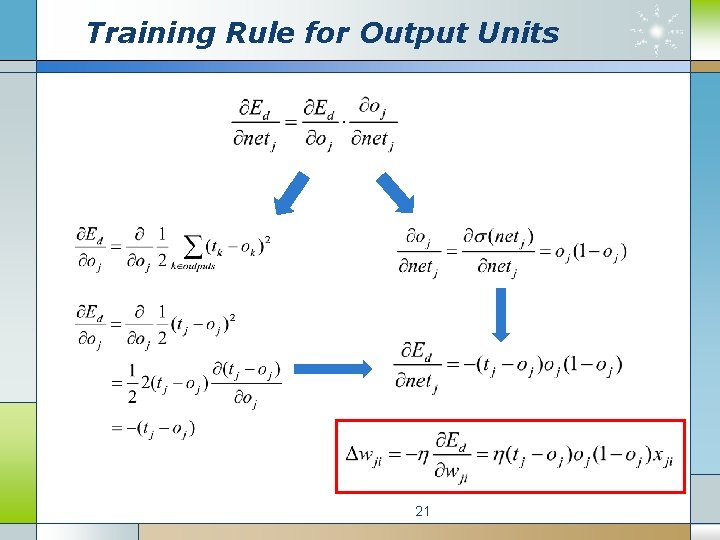

Training Rule for Output Units
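In the notation of the Backpropagation Rule slide, the output-unit rule follows from the chain rule applied to the per-example error $E_d$. This sketch of the derivation follows the standard treatment (e.g., Mitchell, Machine Learning, Chapter 4); the only network-specific fact used is $\partial o_j / \partial net_j = o_j (1 - o_j)$ for a sigmoid unit.

$$
\begin{aligned}
E_d(\vec{w}) &= \frac{1}{2} \sum_{k \in outputs} (t_k - o_k)^2 \\
\frac{\partial E_d}{\partial w_{ji}} &= \frac{\partial E_d}{\partial net_j}\, x_{ji} \\
\frac{\partial E_d}{\partial net_j} &= \frac{\partial E_d}{\partial o_j} \frac{\partial o_j}{\partial net_j} = -(t_j - o_j)\, o_j (1 - o_j) \\
\delta_j &= -\frac{\partial E_d}{\partial net_j} = (t_j - o_j)\, o_j (1 - o_j) \\
\Delta w_{ji} &= -\eta \frac{\partial E_d}{\partial w_{ji}} = \eta\, \delta_j\, x_{ji}
\end{aligned}
$$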

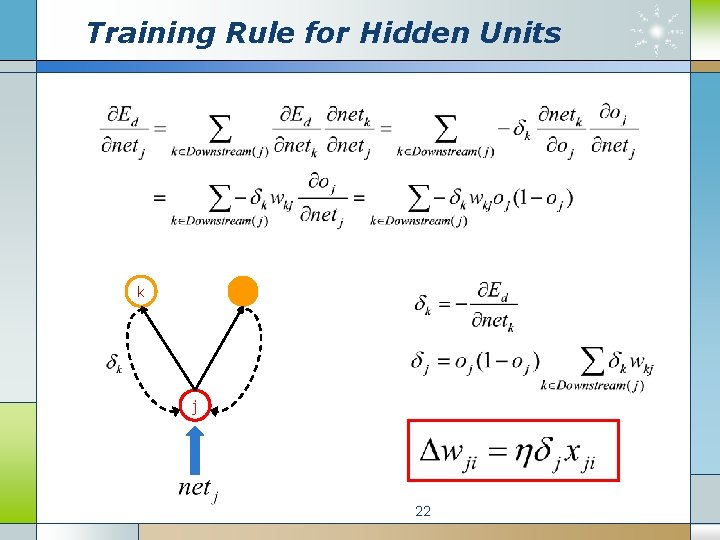

Training Rule for Hidden Units

(Figure: a hidden unit $j$ feeding a downstream unit $k$.)
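For a hidden unit $j$, the error reaches $net_j$ only through the units $k \in Downstream(j)$, each of which receives $o_j$ as an input weighted by $w_{kj}$. Applying the chain rule in the same notation (again following the standard derivation, e.g., Mitchell, Chapter 4) gives:

$$
\begin{aligned}
\frac{\partial E_d}{\partial net_j} &= \sum_{k \in Downstream(j)} \frac{\partial E_d}{\partial net_k} \frac{\partial net_k}{\partial o_j} \frac{\partial o_j}{\partial net_j} = \sum_{k \in Downstream(j)} -\delta_k\, w_{kj}\, o_j (1 - o_j) \\
\delta_j &= -\frac{\partial E_d}{\partial net_j} = o_j (1 - o_j) \sum_{k \in Downstream(j)} \delta_k\, w_{kj} \\
\Delta w_{ji} &= \eta\, \delta_j\, x_{ji}
\end{aligned}
$$

The weight update has the same form as for output units; only the error term changes, being assembled from the $\delta_k$ of the layer above.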

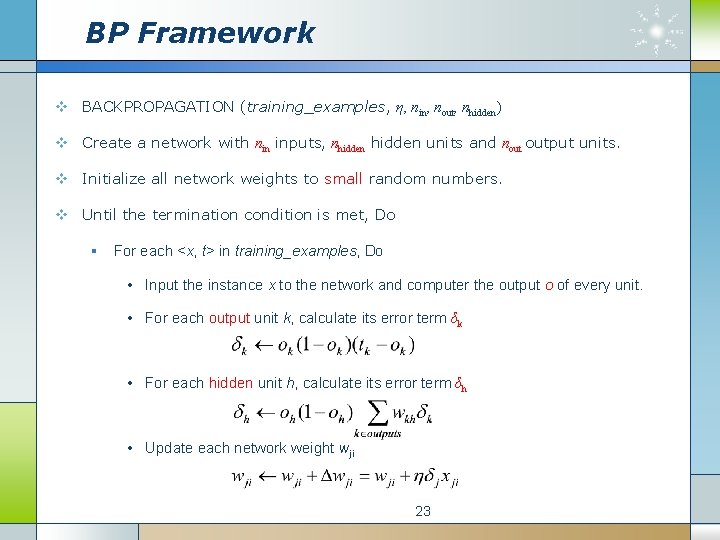

BP Framework

`BACKPROPAGATION(training_examples, η, n_in, n_out, n_hidden)`

- Create a network with $n_{in}$ inputs, $n_{hidden}$ hidden units and $n_{out}$ output units.
- Initialize all network weights to small random numbers.
- Until the termination condition is met, do:
  - For each $\langle \vec{x}, \vec{t} \rangle$ in training_examples, do:
    - Input the instance $\vec{x}$ to the network and compute the output $o$ of every unit.
    - For each output unit $k$, calculate its error term $\delta_k$.
    - For each hidden unit $h$, calculate its error term $\delta_h$.
    - Update each network weight $w_{ji}$.
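The framework above can be sketched in plain Python for one hidden layer of sigmoid units, updating the weights after every example. This is a minimal illustration, not the course's reference implementation: the ±0.05 initial range, the fixed epoch count (standing in for the termination condition), the bias handling and all names are assumptions.

```python
import math
import random


def sigmoid(net):
    """Numerically stable logistic function 1 / (1 + e^(-net))."""
    if net >= 0:
        return 1.0 / (1.0 + math.exp(-net))
    e = math.exp(net)
    return e / (1.0 + e)


def backpropagation(training_examples, eta, n_in, n_out, n_hidden, epochs=5000, seed=1):
    """Stochastic BP; index 0 of every weight row is a bias weight (constant input 1).

    training_examples: list of (x, t) pairs; x has n_in values, t has n_out targets.
    Returns a function mapping an input x to the list of output activations.
    """
    rng = random.Random(seed)

    def small():
        return rng.uniform(-0.05, 0.05)  # small random initial weight

    w_hidden = [[small() for _ in range(n_in + 1)] for _ in range(n_hidden)]
    w_out = [[small() for _ in range(n_hidden + 1)] for _ in range(n_out)]

    def forward(x):
        xb = [1.0] + list(x)  # inputs with bias
        hb = [1.0] + [sigmoid(sum(w * v for w, v in zip(row, xb))) for row in w_hidden]
        o = [sigmoid(sum(w * v for w, v in zip(row, hb))) for row in w_out]
        return xb, hb, o

    for _ in range(epochs):
        for x, t in training_examples:
            xb, hb, o = forward(x)
            # Output units: delta_k = o_k (1 - o_k) (t_k - o_k)
            delta_out = [ok * (1 - ok) * (tk - ok) for ok, tk in zip(o, t)]
            # Hidden units: delta_h = o_h (1 - o_h) * sum_k w_kh delta_k,
            # computed before any output weight is changed
            delta_hid = [
                hb[h + 1] * (1 - hb[h + 1])
                * sum(w_out[k][h + 1] * delta_out[k] for k in range(n_out))
                for h in range(n_hidden)
            ]
            # Weight updates: w_ji <- w_ji + eta * delta_j * x_ji
            for k in range(n_out):
                for i in range(n_hidden + 1):
                    w_out[k][i] += eta * delta_out[k] * hb[i]
            for h in range(n_hidden):
                for i in range(n_in + 1):
                    w_hidden[h][i] += eta * delta_hid[h] * xb[i]

    def predict(x):
        return forward(x)[2]

    return predict


if __name__ == "__main__":
    xor_data = [((0, 0), [0]), ((0, 1), [1]), ((1, 0), [1]), ((1, 1), [0])]
    net = backpropagation(xor_data, eta=0.5, n_in=2, n_out=1, n_hidden=3)
    for x, t in xor_data:
        print(x, t, net(x))
```

On XOR the outputs should move toward 0, 1, 1, 0, but a given seed can also end at a poor local solution (see the next slide), in which case another run with different initial weights is the usual remedy.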

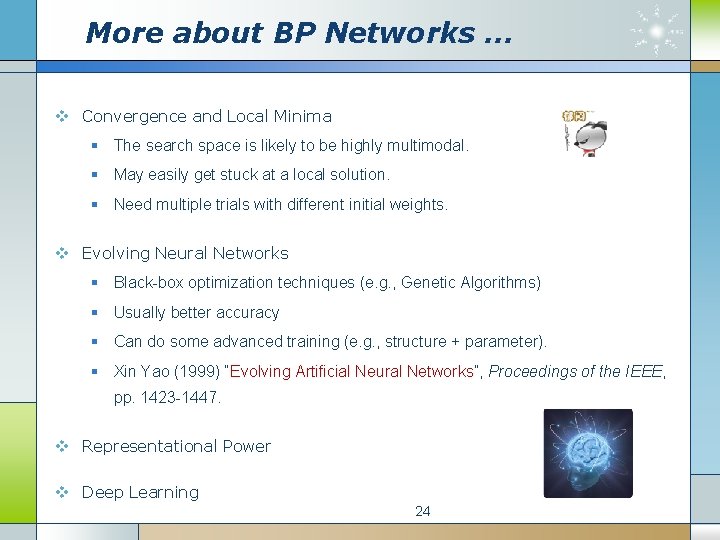

More about BP Networks …

- Convergence and Local Minima
  - The search space is likely to be highly multimodal.
  - Training may easily get stuck at a local solution.
  - Multiple trials with different initial weights are needed.
- Evolving Neural Networks
  - Black-box optimization techniques (e.g., Genetic Algorithms)
  - Usually better accuracy
  - Can do more advanced training (e.g., optimizing structure and parameters together).
  - Xin Yao (1999), "Evolving Artificial Neural Networks", Proceedings of the IEEE, pp. 1423-1447.
- Representational Power
- Deep Learning

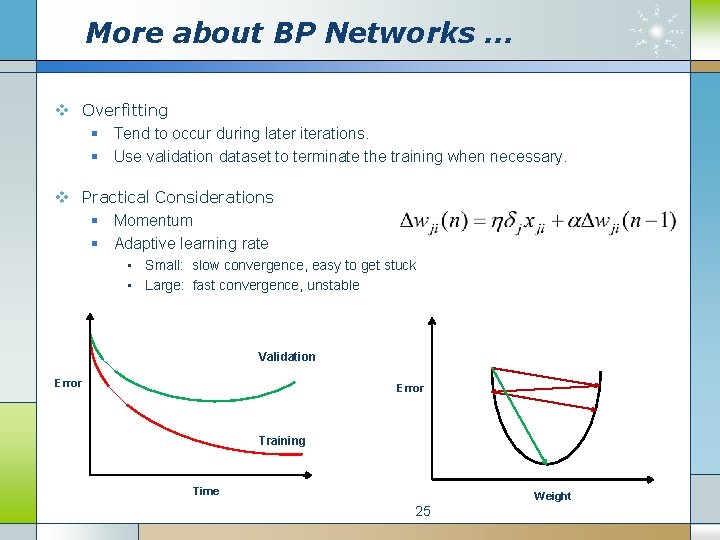

More about BP Networks …

- Overfitting
  - Tends to occur during later iterations.
  - Use a validation dataset to stop training when the validation error starts to rise.
- Practical Considerations
  - Momentum
  - Adaptive learning rate
    - Small: slow convergence, easy to get stuck
    - Large: fast convergence, unstable

(Figures: training and validation error versus training time; error versus weight.)
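Momentum is commonly written (e.g., Mitchell, Chapter 4) as $\Delta w_{ji}(n) = \eta\, \delta_j x_{ji} + \alpha\, \Delta w_{ji}(n-1)$ with $0 \le \alpha < 1$, so each step keeps a fraction of the previous one. A minimal Python sketch of that update; the function name and the values $\eta = 0.1$, $\alpha = 0.5$ are illustrative assumptions. Under a constant gradient term $g$, the step grows toward $\eta g / (1 - \alpha)$, which is how momentum speeds progress across flat regions of the error surface.

```python
def momentum_step(weight, gradient_term, previous_delta, eta, alpha):
    """One weight update with momentum.

    gradient_term stands for delta_j * x_ji (the usual BP quantity);
    alpha = 0 recovers the plain update eta * gradient_term.
    Returns the new weight and the delta to remember for the next step.
    """
    delta = eta * gradient_term + alpha * previous_delta
    return weight + delta, delta


if __name__ == "__main__":
    w, delta = 0.0, 0.0
    for n in range(10):
        # constant gradient term: the step size approaches 0.1 / (1 - 0.5) = 0.2
        w, delta = momentum_step(w, 1.0, delta, eta=0.1, alpha=0.5)
        print(n, delta)
```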

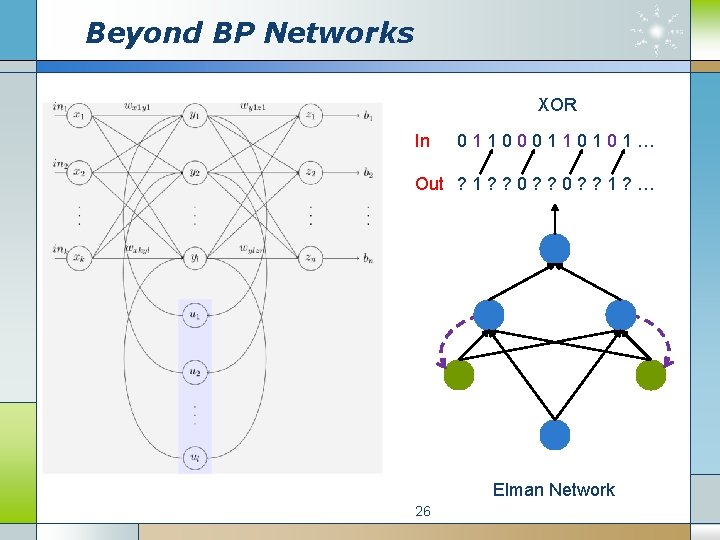

Beyond BP Networks

Elman Network (figure: a temporal XOR task; In: 011000110101…, Out: ? 1 ? ? 0 ? ? 1 ? …)
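What distinguishes the Elman network from a plain BP network is its context layer: a copy of the previous hidden activations that is fed back as extra input at the next time step, so the output can depend on earlier inputs in the sequence. A minimal Python sketch of the forward pass only, assuming one hidden layer of sigmoid units and random, untrained weights in a hypothetical ±0.5 range; training is not shown and the class and method names are illustrative.

```python
import math
import random


def sigmoid(net):
    """Numerically stable logistic function."""
    if net >= 0:
        return 1.0 / (1.0 + math.exp(-net))
    e = math.exp(net)
    return e / (1.0 + e)


class ElmanNetwork:
    """Forward pass of a simple recurrent (Elman) network with context units."""

    def __init__(self, n_in, n_hidden, n_out, seed=0):
        rng = random.Random(seed)

        def rand_row(size):
            return [rng.uniform(-0.5, 0.5) for _ in range(size)]

        # Each hidden unit sees: bias + current inputs + context units
        self.w_hidden = [rand_row(1 + n_in + n_hidden) for _ in range(n_hidden)]
        self.w_out = [rand_row(1 + n_hidden) for _ in range(n_out)]
        self.context = [0.0] * n_hidden  # context starts at zero

    def step(self, x):
        z = [1.0] + list(x) + self.context
        hidden = [sigmoid(sum(w * v for w, v in zip(row, z))) for row in self.w_hidden]
        self.context = hidden  # copy hidden activations into the context units
        hb = [1.0] + hidden
        return [sigmoid(sum(w * v for w, v in zip(row, hb))) for row in self.w_out]


if __name__ == "__main__":
    net = ElmanNetwork(n_in=1, n_hidden=4, n_out=1)
    for bit in "011000110101":  # the input stream from the slide, one bit per step
        print(bit, net.step([int(bit)]))
```

Because of the context units, the same input bit can produce different outputs at different points in the stream, which is what makes a sequence task such as the one on this slide learnable at all.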

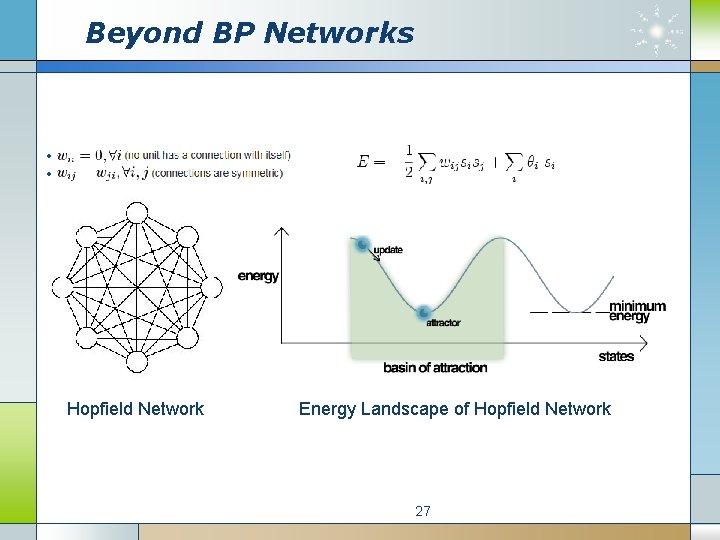

Beyond BP Networks

(Figures: Hopfield Network; Energy Landscape of Hopfield Network.)
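A Hopfield network stores patterns as minima of an energy function $E = -\frac{1}{2} \sum_{i,j} w_{ij} s_i s_j$ and recalls a pattern by letting a corrupted state slide downhill in that landscape. A minimal Python sketch under common textbook assumptions, none of which come from the slide: states are +1/-1, weights are set by the Hebbian rule $w_{ij} = \sum_p s_i^p s_j^p$ with $w_{ii} = 0$, units update asynchronously in a fixed order, and a zero net input is mapped to +1.

```python
def train_hopfield(patterns):
    """Hebbian storage: w_ij = sum over patterns of s_i * s_j, with w_ii = 0."""
    n = len(patterns[0])
    w = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j]
    return w


def energy(w, s):
    """E = -1/2 * sum_ij w_ij s_i s_j; asynchronous updates never increase it."""
    n = len(s)
    return -0.5 * sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def recall(w, state, max_sweeps=10):
    """Asynchronous updates in a fixed order until a sweep changes no unit."""
    s = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            h = sum(w[i][j] * s[j] for j in range(len(s)))
            new = 1 if h >= 0 else -1  # assumption: ties go to +1
            if new != s[i]:
                s[i] = new
                changed = True
        if not changed:
            break
    return s


if __name__ == "__main__":
    stored = [1, -1, 1, -1, 1, -1, 1, -1]
    w = train_hopfield([stored])
    noisy = [-1] + stored[1:]  # flip the first unit
    print(energy(w, noisy), energy(w, stored), recall(w, noisy) == stored)
```

With one stored pattern and one flipped unit, the corrupted state has higher energy than the stored one, and a single asynchronous sweep restores the pattern.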

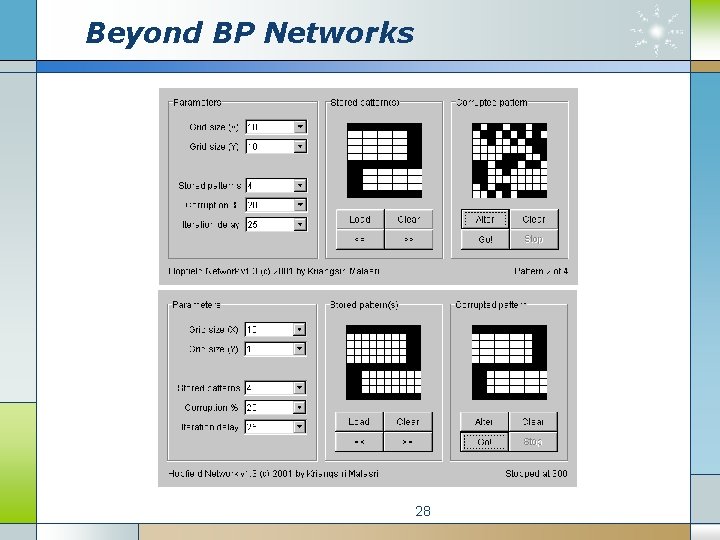

Beyond BP Networks

When does ANN work?

- Instances are represented by attribute-value pairs.
  - Input values can be any real values.
- The target output may be discrete-valued, real-valued, or a vector of several real- or discrete-valued attributes.
- The training samples may contain errors.
- Long training times are acceptable.
  - Can range from a few seconds to several hours.
- Fast evaluation of the learned target function may be required.
- The ability to understand the learned function is not important.
  - Weights are difficult for humans to interpret.

Reading Materials

- Textbook
  - Richard O. Duda et al., Pattern Classification, Chapter 6, John Wiley & Sons Inc.
  - Tom Mitchell, Machine Learning, Chapter 4, McGraw-Hill.
  - http://page.mi.fu-berlin.de/rojas/neural/index.html
- Online Demo
  - http://neuron.eng.wayne.edu/software.html
  - http://www.cbu.edu/~pong/ai/hopfield.html
- Online Tutorial
  - http://www.autonlab.org/tutorials/neural13.pdf
  - http://www.cs.cmu.edu/afs/cs.cmu.edu/user/mitchell/ftp/faces.html
- Wikipedia & Google

Review

- What is the biological motivation of ANN?
- When does ANN work?
- What is a perceptron?
- How do you train a perceptron?
- What is the limitation of perceptrons?
- How does ANN solve non-linearly separable problems?
- What is the key idea of the Backpropagation algorithm?
- What are the main issues of BP networks?
- What are some examples of other types of ANN?

Next Week’s Class Talk

- Volunteers are required for next week’s class talk.
- Topic 1: Applications of ANN
- Topic 2: Recurrent Neural Networks
- Hints:
  - Robot Driving
  - Character Recognition
  - Face Recognition
  - Hopfield Network
- Length: 20 minutes plus question time

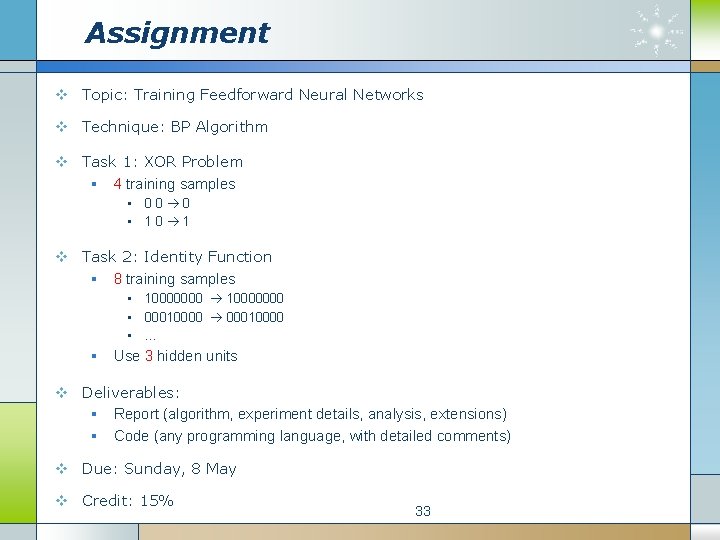

Assignment

- Topic: Training Feedforward Neural Networks
- Technique: BP Algorithm
- Task 1: XOR Problem
  - 4 training samples
    - 00 → 0
    - 01 → 1
    - 10 → 1
    - 11 → 0
- Task 2: Identity Function
  - 8 training samples
    - 10000000
    - 00010000
    - …
  - Use 3 hidden units
- Deliverables:
  - Report (algorithm, experiment details, analysis, extensions)
  - Code (any programming language, with detailed comments)
- Due: Sunday, 8 May
- Credit: 15%
