Building An Elastic Query Engine on Disaggregated Storage

Presented by Qianli Wang and Zevin King

Outline
- Introduction
- Design Overview
- System Design and Some Directions
- Other Problems
- Conclusion

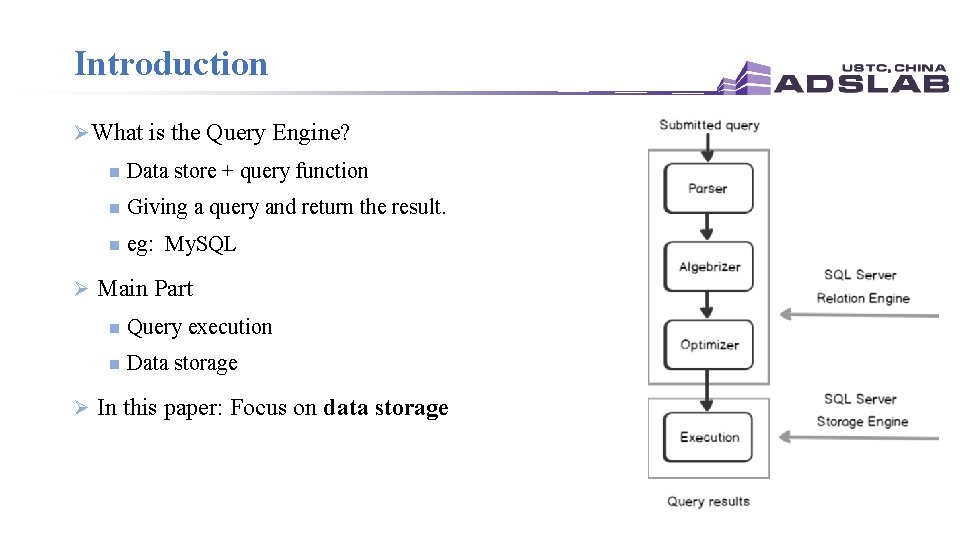

Introduction
- What is the Query Engine?
  - Data store + query function
  - Given a query, it returns the result.
  - e.g. MySQL
- Main parts
  - Query execution
  - Data storage
- In this paper: focus on data storage

Introduction
- Data store is very important for a query system -> Store what?
  - Persistent data
    - Customer data stored in the database.
    - Long-lived and needs strong durability and availability guarantees
  - Intermediate data
    - Generated by query operators, short-lived, and needs high throughput rather than good durability
    - On the critical path.
  - Metadata
    - Some object catalogs.

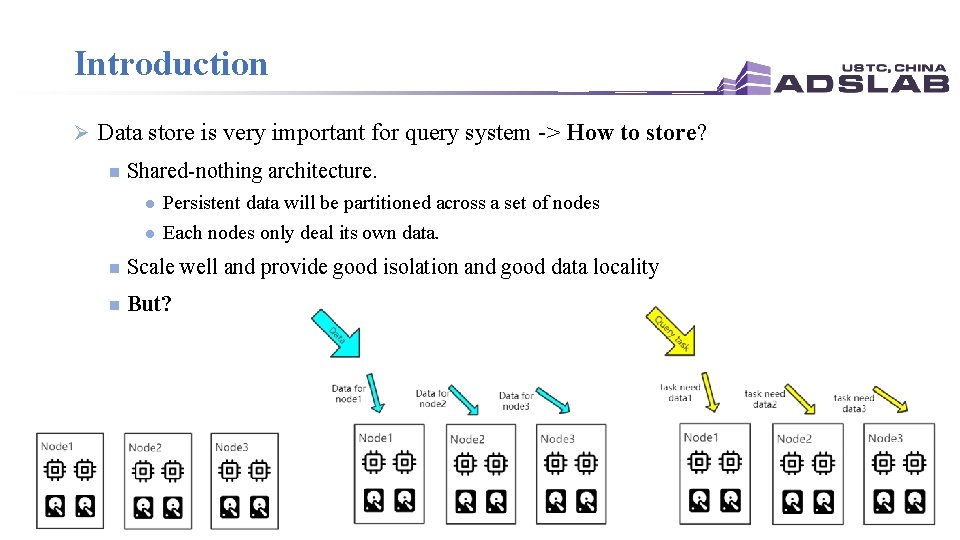

Introduction
- Data store is very important for a query system -> How to store?
  - Shared-nothing architecture.
    - Persistent data is partitioned across a set of nodes
    - Each node only deals with its own data.
  - Scales well and provides good isolation and good data locality
  - But?

Introduction: problems
- Intermediate data
  - Does not need strong availability guarantees, but current storage provides them anyway.
- Hardware-workload mismatch
  - The allocation is coarse-grained (by machine) -> low resource utilization.
    - If each VM has 4 CPUs + 2 disks and we need 20 CPUs and 80 disks, we have to allocate 40 VMs, which means 140 CPUs are wasted.
  - To meet performance goals, resources usually have to be overprovisioned.
- Lack of elasticity -> due to the workload
  - Skewed intermediate data size and CPU requirements.
  - Current solutions need to re-store (redistribute) too much data -> write amplification
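The VM arithmetic in the hardware-workload mismatch example can be checked with a few lines. This is only a sketch of the calculation on the slide; the function names `vms_needed` and `waste` are made up for illustration.

```python
import math

def vms_needed(cpu_demand, disk_demand, cpu_per_vm, disk_per_vm):
    """Identical VMs required so that BOTH the CPU and the disk demand are covered."""
    return max(math.ceil(cpu_demand / cpu_per_vm),
               math.ceil(disk_demand / disk_per_vm))

def waste(cpu_demand, disk_demand, cpu_per_vm, disk_per_vm):
    """Return (VM count, idle CPUs, idle disks) for a coarse-grained allocation."""
    n = vms_needed(cpu_demand, disk_demand, cpu_per_vm, disk_per_vm)
    return n, n * cpu_per_vm - cpu_demand, n * disk_per_vm - disk_demand

# Slide example: VMs with 4 CPUs + 2 disks, demand of 20 CPUs and 80 disks.
print(waste(20, 80, 4, 2))  # (40, 140, 0)
```

The disk demand alone forces 40 VMs, so 160 CPUs are allocated although only 20 are needed.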

![Workload](http://slidetodoc.com/presentation_image_h2/453fd79d389beda58f4a3043d34f0719/image-7.jpg)

Workload
- What workload -> SQL storage queries (open-source dataset [1])
- Characteristics
  - Read-only: 28% of all customer queries
    - Can vary over nine orders of magnitude
    - The number of queries submitted by customers spikes during daytime hours on weekdays.
  - Write-only: 13%
    - Huge variation (8 orders of magnitude)
    - No spikes
  - Read-write: 59%
    - R/W ratio close to 1
    - Huge variation

[1] https://github.com/resource-disaggregation/snowset

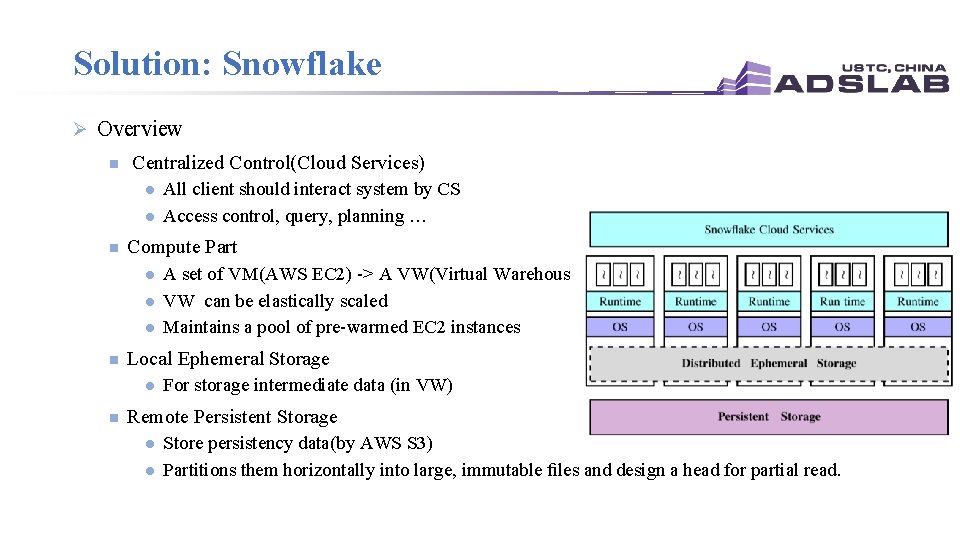

Solution: Snowflake
- Overview
  - Centralized Control (Cloud Services, CS)
    - All clients interact with the system through CS
    - Access control, query planning, …
  - Compute Part
    - A set of VMs (AWS EC2) -> a VW (Virtual Warehouse)
    - A VW can be elastically scaled
    - Maintains a pool of pre-warmed EC2 instances
  - Local Ephemeral Storage
    - For storing intermediate data (in the VW)
  - Remote Persistent Storage
    - Stores persistent data (in AWS S3)
    - Partitions it horizontally into large, immutable files and designs a header for partial reads.


1. Ephemeral Storage System
- Motivation
  - S3 is okay for persistent data
  - S3 is not okay for intermediate data:
    - latency is too high and throughput is too low
    - stronger availability and durability than needed

2021/5/21

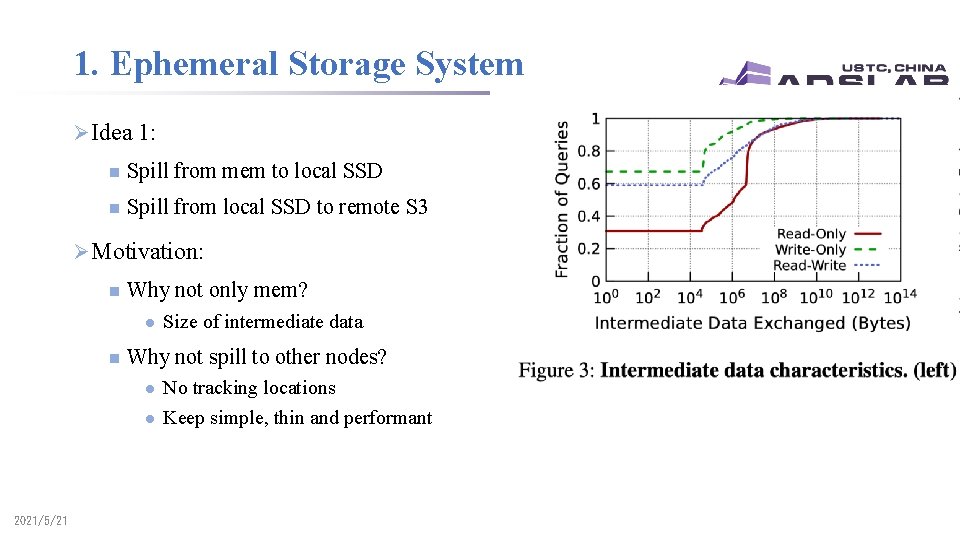

1. Ephemeral Storage System
- Idea 1:
  - Spill from mem to local SSD
  - Spill from local SSD to remote S3
- Motivation:
  - Why not only mem?
    - Size of intermediate data
  - Why not spill to other nodes?
    - No tracking of locations
    - Keep it simple, thin and performant
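The spill order can be sketched as a first-fit write across three tiers. This is a toy model, not Snowflake's implementation: the class `SpillingStore`, its byte-based capacities, and the use of plain dictionaries for memory, SSD and S3 are all assumptions made for illustration.

```python
class SpillingStore:
    """Toy node-local store: memory first, then local SSD, then remote S3."""

    def __init__(self, mem_capacity, ssd_capacity):
        self.mem_capacity = mem_capacity   # bytes (hypothetical limit)
        self.ssd_capacity = ssd_capacity   # bytes (hypothetical limit)
        self.mem, self.ssd, self.s3 = {}, {}, {}  # dicts stand in for real tiers

    @staticmethod
    def _used(tier):
        return sum(len(v) for v in tier.values())

    def write(self, key, data):
        """Write to the first tier with room; return the tier name used."""
        if self._used(self.mem) + len(data) <= self.mem_capacity:
            self.mem[key] = data
            return "mem"
        if self._used(self.ssd) + len(data) <= self.ssd_capacity:
            self.ssd[key] = data
            return "ssd"
        self.s3[key] = data                # S3 is treated as unbounded
        return "s3"

    def read(self, key):
        # Data is only ever local or in S3, so no cross-node location
        # tracking is needed.
        for tier in (self.mem, self.ssd, self.s3):
            if key in tier:
                return tier[key]
        raise KeyError(key)
```

Because a node never spills to another node, a lookup only has to check the local tiers and then S3, which is the "no tracking of locations" point above.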

1. Ephemeral Storage System
- Idea 2:
  - Caching persistent data
- Motivation:
  - S3 is too slow
  - Intermediate data is short-lived and its size varies
    - Peak demand is high, but average demand is low
    - Mem and SSDs are underutilized
  - Workload:
    - Query pattern for persistent data is highly skewed
- How:
  - Consistent hashing
  - Write-through
  - LRU
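A write-through cache with LRU eviction, two of the three mechanisms listed under "How", can be sketched as follows. The class name `WriteThroughLRUCache`, the capacity counted in files rather than bytes, and the dictionary standing in for S3 are assumptions for illustration only.

```python
from collections import OrderedDict

class WriteThroughLRUCache:
    """Toy node-local cache in front of a backing store (a dict standing in for S3)."""

    def __init__(self, capacity, backing_store):
        self.capacity = capacity        # max number of cached files (assumption)
        self.backing = backing_store
        self.cache = OrderedDict()      # oldest entry first, most recent last
        self.hits = 0
        self.misses = 0

    def _insert(self, name, data):
        self.cache[name] = data
        self.cache.move_to_end(name)
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)   # evict the least recently used file

    def write(self, name, data):
        self.backing[name] = data       # write-through: persistent copy first
        self._insert(name, data)

    def read(self, name):
        if name in self.cache:
            self.hits += 1
            self.cache.move_to_end(name)
            return self.cache[name]
        self.misses += 1
        data = self.backing[name]       # slow path: fetch from the backing store
        self._insert(name, data)
        return data
```

With a skewed access pattern, a small cache of this kind can serve most reads, which is the motivation given on the slide.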

2. Caching Persistent Data
- Strategy 1: Locality-aware task scheduling
- Situation 1:
  - A customer has 1 million persistent files in S3 and runs a 10-node VW.
  - Query A: operates on 100 files
  - Query B: operates on 100,000 files
  - Tasks of A and B run on all 10 nodes (with high likelihood)
- Ideas:
  - Consistent hashing
  - Write-through
  - LRU
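Locality-aware scheduling can be sketched by hashing file names onto a consistent-hash ring and sending each scan task to the node that owns the file. The ring below (MD5 hashes, 64 virtual nodes per node) and the names `HashRing` and `schedule` are illustrative assumptions, not details taken from the slides.

```python
import bisect
import hashlib

def _h(key):
    """Map a string to a large integer position on the ring."""
    return int(hashlib.md5(key.encode()).hexdigest(), 16)

class HashRing:
    def __init__(self, nodes, vnodes=64):
        self.vnodes = vnodes            # virtual nodes per physical node (assumption)
        self._ring = []                 # sorted list of (position, node)
        for node in nodes:
            self.add_node(node)

    def add_node(self, node):
        for i in range(self.vnodes):
            bisect.insort(self._ring, (_h(f"{node}#{i}"), node))

    def node_for(self, file_name):
        """First ring position at or after the file's hash, wrapping around."""
        idx = bisect.bisect(self._ring, (_h(file_name), "")) % len(self._ring)
        return self._ring[idx][1]

def schedule(files, ring):
    """Assign each file's scan task to the node whose cache owns that file."""
    plan = {}
    for f in files:
        plan.setdefault(ring.node_for(f), []).append(f)
    return plan

ring = HashRing([f"node{i}" for i in range(10)])
query_a = [f"file{i}" for i in range(100)]
plan = schedule(query_a, ring)
```

Because the mapping depends only on the file name, two queries that touch the same file send their tasks to the same node and can share its cached copy.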

2. Caching Persistent Data
- Strategy 2: Work stealing
- Situation 2:
  - Node A is overloaded
  - Node B is free
  - A new task t1 arrives that should run on Node A
  - But t1 is scheduled on Node B
  - For t1, Node B reads from S3 instead of from Node A
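Situation 2 can be simulated with per-node task queues. In this sketch an idle node steals from the tail of the longest queue and reads the file from S3 rather than from the overloaded node. The round-based loop and the function name `run_with_stealing` are assumptions, and the stealing node does not cache the file here, which is a simplification.

```python
from collections import deque

def run_with_stealing(queues, caches):
    """queues: node -> deque of files to scan; caches: node -> set of cached files.

    Each round, every node runs one task. Returns a log of (node, file, source).
    """
    log = []
    while any(queues.values()):
        for node in queues:
            if queues[node]:
                f = queues[node].popleft()          # run own task
            else:
                victim = max(queues, key=lambda n: len(queues[n]))
                if not queues[victim]:
                    continue                        # nothing left to steal
                f = queues[victim].pop()            # steal from the tail
            # A stolen file is not in the thief's cache, so it comes from S3;
            # the overloaded node is never asked to serve it.
            source = "cache" if f in caches[node] else "s3"
            log.append((node, f, source))
    return log

queues = {"A": deque(["f1", "f2", "f3", "f4"]), "B": deque()}
caches = {"A": {"f1", "f2", "f3", "f4"}, "B": set()}
log = run_with_stealing(queues, caches)
```

Node A finishes in two rounds instead of four, at the cost of two extra S3 reads by Node B.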

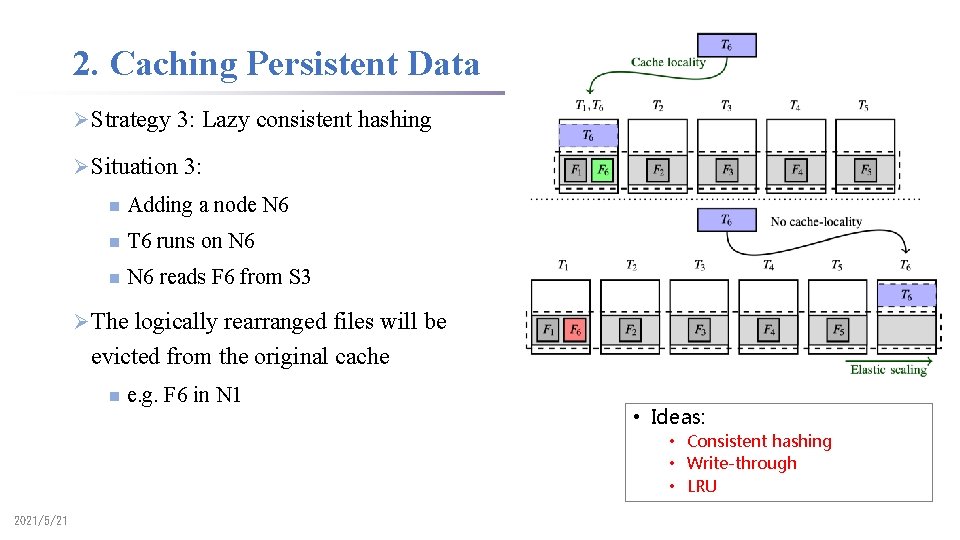

2. Caching Persistent Data
- Strategy 3: Lazy consistent hashing
- Situation 3:
  - Adding a node N6
  - T6 runs on N6 and reads F6 from S3
- The logically reassigned files will be evicted from the original cache
  - e.g. F6 in N1
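The lazy behaviour in Situation 3 can be sketched as follows: adding a node changes only the ownership mapping, no cached file is moved, and the new owner fetches a reassigned file from S3 on first use. The ring construction, the `Cluster` class and the unbounded per-node caches are assumptions for illustration; the eviction of stale copies by LRU is not modelled.

```python
import bisect
import hashlib

def _h(key):
    return int(hashlib.md5(key.encode()).hexdigest(), 16)

def owner(file_name, nodes, vnodes=64):
    """Consistent hashing: owner is the first ring position at or after the file's hash."""
    ring = sorted((_h(f"{n}#{i}"), n) for n in nodes for i in range(vnodes))
    idx = bisect.bisect(ring, (_h(file_name), "")) % len(ring)
    return ring[idx][1]

class Cluster:
    def __init__(self, nodes, s3):
        self.s3 = s3                               # dict standing in for S3
        self.caches = {n: {} for n in nodes}       # per-node cache (unbounded here)
        self.s3_reads = 0

    def add_node(self, node):
        # Lazy: only membership changes; nothing is copied between caches.
        self.caches[node] = {}

    def read(self, file_name):
        node = owner(file_name, list(self.caches))
        cache = self.caches[node]
        if file_name not in cache:                 # first access on the new owner
            cache[file_name] = self.s3[file_name]
            self.s3_reads += 1
        return node

s3 = {f"F{i}": b"data" for i in range(200)}
cluster = Cluster(["N1", "N2", "N3", "N4", "N5"], s3)
before = {f: cluster.read(f) for f in s3}          # warm all caches
cluster.add_node("N6")
after = {f: cluster.read(f) for f in s3}
moved = [f for f in s3 if before[f] != after[f]]
```

Only the files in `moved` are read from S3 again, and each of them now belongs to N6. The old copies stay in their original caches until a bounded cache would evict them.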

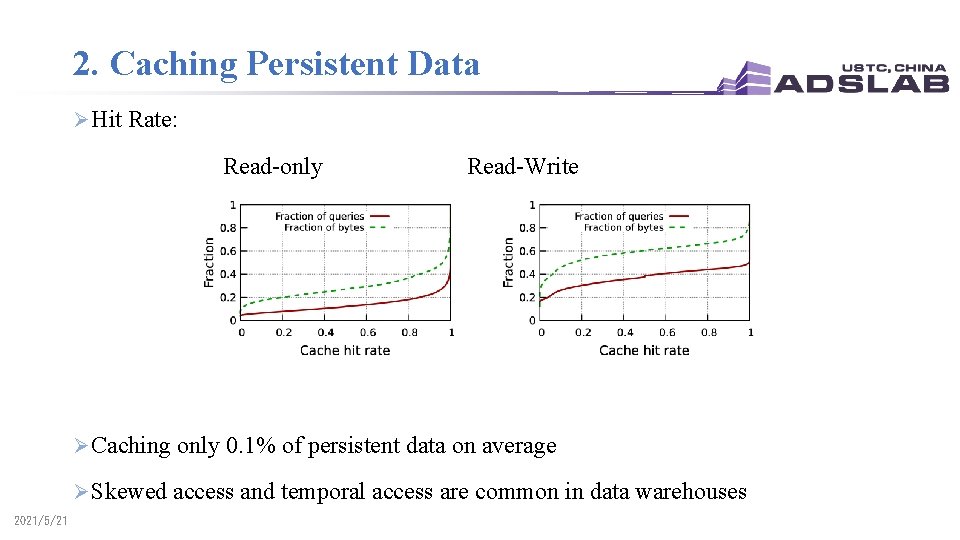

2. Caching Persistent Data
- Hit rate (figure panels: Read-only, Read-Write)
- Caching only 0.1% of persistent data on average
- Skewed access and temporal access are common in data warehouses

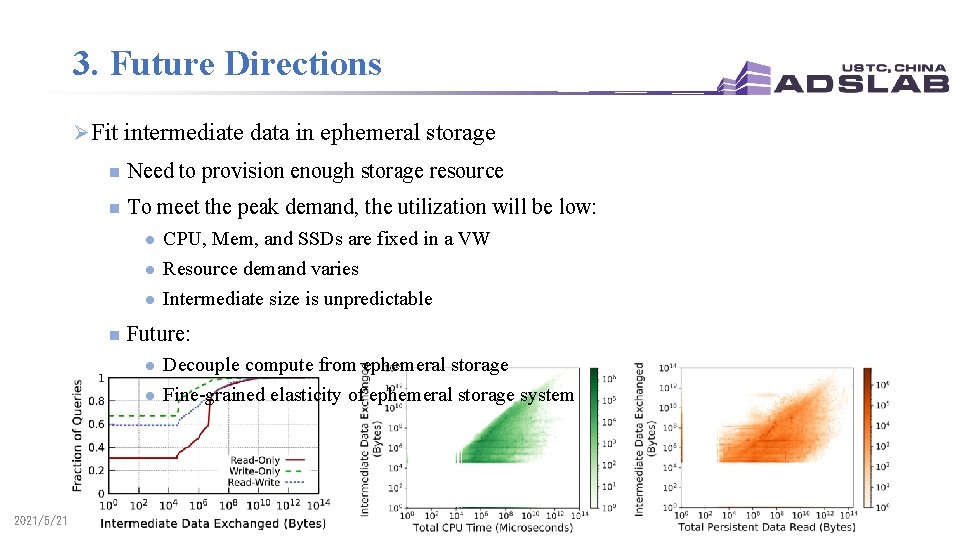

3. Future Directions
- Fit intermediate data in ephemeral storage
  - Need to provision enough storage resources
  - To meet the peak demand, the utilization will be low:
    - CPU, Mem, and SSDs are fixed in a VW
    - Resource demand varies
    - Intermediate data size is unpredictable
  - Future:
    - Decouple compute from ephemeral storage
    - Fine-grained elasticity of the ephemeral storage system

3. Future Directions
- End-to-end query performance depends on both:
  - Throughput of intermediate data
  - Hit rate of cached data
- Intermediate data vs. cached data
  - Current priority: intermediate data >> cached data
- Interesting to explore better policies to balance the two
  - e.g. a hot persistent file vs. short-lived intermediate data
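The current priority rule can be sketched as a fixed-capacity store in which intermediate data may evict cached files but never the other way round. The class `EphemeralStore`, its size units, and the LRU order among cached files are assumptions made for illustration.

```python
from collections import OrderedDict

class EphemeralStore:
    """Toy fixed-capacity store shared by intermediate data and cached files."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.intermediate = {}            # name -> size
        self.cached = OrderedDict()       # name -> size, least recently used first

    def used(self):
        return sum(self.intermediate.values()) + sum(self.cached.values())

    def put_intermediate(self, name, size):
        # Intermediate data has priority: evict cached files until it fits.
        while self.used() + size > self.capacity and self.cached:
            self.cached.popitem(last=False)
        if self.used() + size > self.capacity:
            return False                  # the caller would spill to S3
        self.intermediate[name] = size
        return True

    def put_cached(self, name, size):
        # Cached files only use the space left over by intermediate data.
        if size > self.capacity - sum(self.intermediate.values()):
            return False
        while self.used() + size > self.capacity:
            self.cached.popitem(last=False)   # may evict other cached files only
        self.cached[name] = size
        return True

store = EphemeralStore(capacity=10)
store.put_cached("hot_file", 6)
store.put_cached("cold_file", 4)
store.put_intermediate("shuffle_output", 5)   # evicts hot_file, the oldest entry
```

In this run the "hot" file is evicted to make room for short-lived intermediate data, which is the kind of trade-off the last bullet suggests revisiting.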

3. Future Directions
- Caching hierarchy:
  - Existing caching mechanisms are designed for 2 tiers: Mem to SSD
  - Now in Snowflake:
    - LRU from Mem to SSD
    - LRU from SSD to S3
  - New techniques: NVM devices and other remote ephemeral storage systems
  - The hierarchy is getting more complex
  - Interesting to explore new caching mechanisms

3. Future Directions
- Task scheduling:
  - One extreme: co-locate the tasks with the files (current)
    - network: intermediate data shuffling
  - The other extreme: locate the tasks on a single node
    - network: remote persistent reading
  - Interesting to explore how to pick the right set of nodes

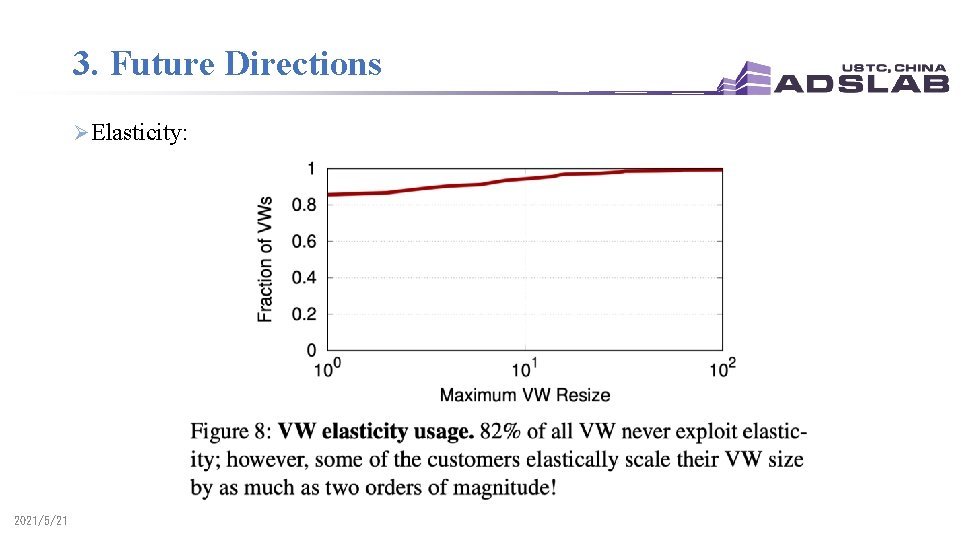

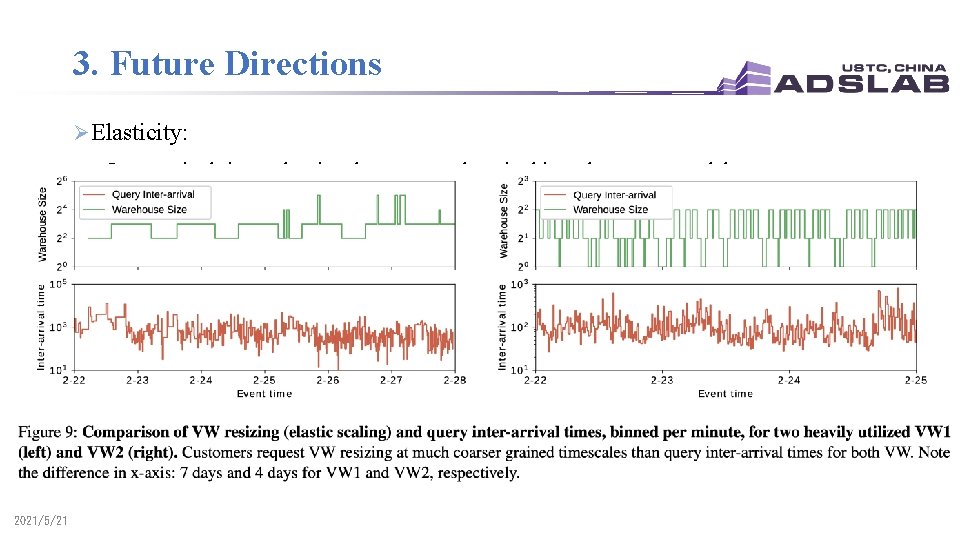

3. Future Directions
- Elasticity:

3. Future Directions
- Elasticity:
  - Inter-arrival time: the time between each arrival into the system and the next
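As a small worked example of the definition, inter-arrival times are the differences between consecutive arrival timestamps. The function name and the sample timestamps below are made up for illustration.

```python
def inter_arrival_times(arrivals):
    """Differences between consecutive arrival timestamps (same unit as the input)."""
    ts = sorted(arrivals)
    return [later - earlier for earlier, later in zip(ts, ts[1:])]

# Hypothetical query arrival times in seconds.
print(inter_arrival_times([0, 2, 3, 10]))  # [2, 1, 7]
```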

3. Future Directions
- Elasticity:
  - Scaling requested by customers is too coarse-grained
  - Explore auto-scaling at intra-query granularity
    - Queries vary
    - Auto-scale the VW with task-level elasticity during the execution of a query
  - Explore using serverless platforms
    - Some data are sensitive and confidential
    - Requires strong isolation guarantees.

4. Ephemeral Storage System
- Motivation
  - S3 is not okay for intermediate data
- Idea:
  - Spill to local SSDs + spill to S3
  - Caching persistent data
    - Locality-aware task scheduling
    - Work stealing
    - Lazy consistent hashing
- Future directions:
  - Fit intermediate data in ephemeral storage
  - Intermediate vs. caching
  - Caching hierarchy
  - Task scheduling
  - Elasticity


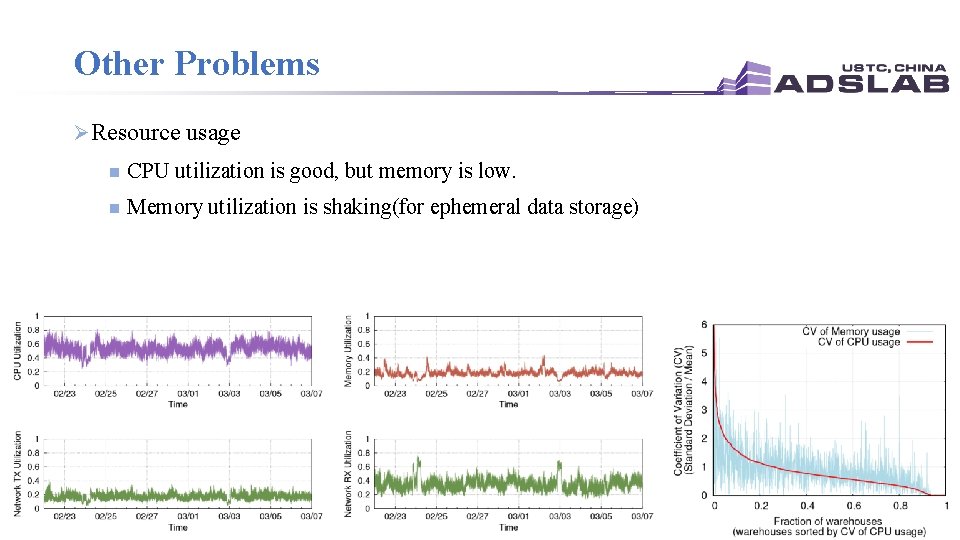

Other Problems
- Resource usage
  - CPU utilization is good, but memory utilization is low.
  - Memory utilization fluctuates (memory is used for ephemeral data storage)

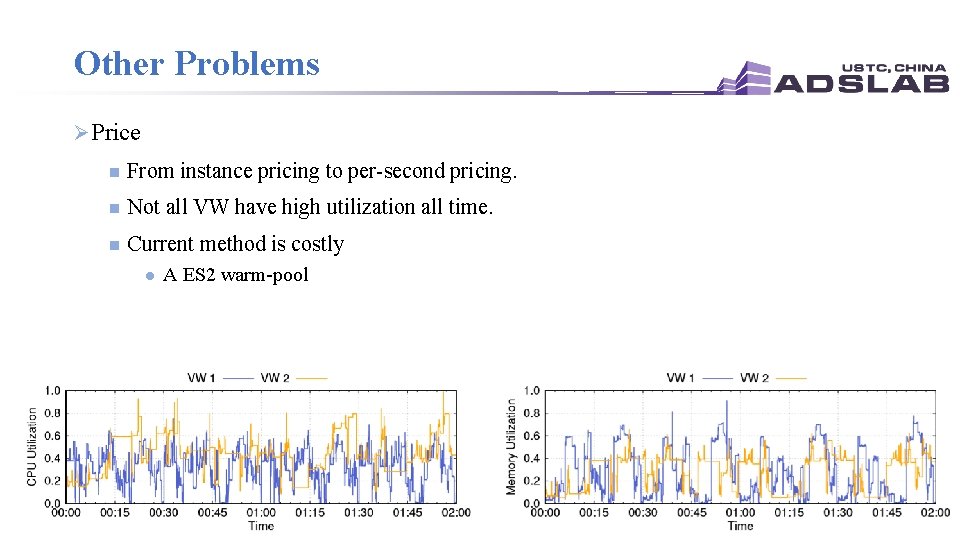

Other Problems
- Price
  - From per-instance pricing to per-second pricing.
  - Not all VWs have high utilization all the time.
  - The current method is costly
    - An EC2 warm pool

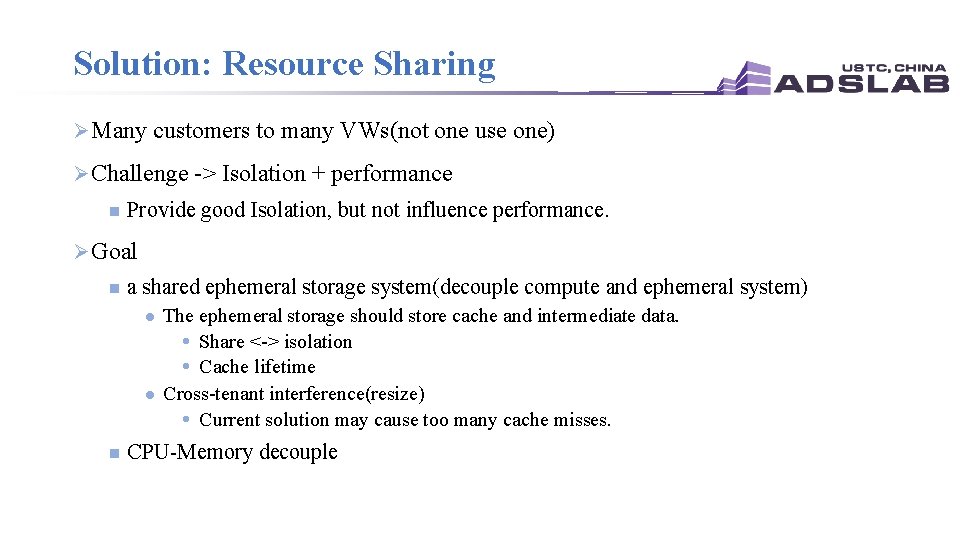

Solution: Resource Sharing
- Many customers to many VWs (not one VW per customer)
- Challenge -> isolation + performance
  - Provide good isolation without hurting performance.
- Goal
  - A shared ephemeral storage system (decouple compute and ephemeral storage)
    - The ephemeral storage should store cache and intermediate data.
    - Share <-> isolation
      - Cache lifetime
      - Cross-tenant interference (resize)
      - The current solution may cause too many cache misses.
  - CPU-Memory decoupling

Conclusion
- Shared-nothing architecture does not suit the current environment
- Query workload is highly skewed
  - In size, read-write ratio, …
- Snowflake
  - Decouples compute from persistent storage
  - Designs the ephemeral storage
  - Some directions
- Resource utilization and some goals

Thank You!
