
Benchmarking Cloud Serving Systems with YCSB
Brian F. Cooper, Adam Silberstein, Erwin Tam, Raghu Ramakrishnan, Russell Sears (Yahoo! Research), SoCC 2010
Presenter: Zhujun Xiao, Oct. 25

Motivation (1)
• New systems for data storage and management "in the cloud"
  • Open-source systems: Cassandra, HBase, …
  • Cloud services: Microsoft Azure, Amazon Web Services (S3, SimpleDB), …
  • Private systems: Yahoo!'s PNUTS, …
• Serving systems: provide online read/write access to data

Motivation (2)
• However, it is hard to compare their performance:
  • Different data models
    • BigTable model in Cassandra and HBase
    • Simple hashtable model in Voldemort
    • Document model in CouchDB
  • Different design decisions (trade-offs)
    • Read-optimized or write-optimized
    • Synchronous or asynchronous replication
    • Latency or durability: sync writes to disk at write time or not
    • Data partitioning: row-based or column-based storage
  • Evaluated on different workloads
    • These systems only report performance for the "sweet spot" workloads of their own design
• Hard to choose the appropriate system!

Goal
• Build a standard benchmark for cloud serving systems
• Evaluate different systems on different workloads
• A package of standard workloads
• A workload generator
• Evaluate both performance and scalability
• Future direction: availability and replication

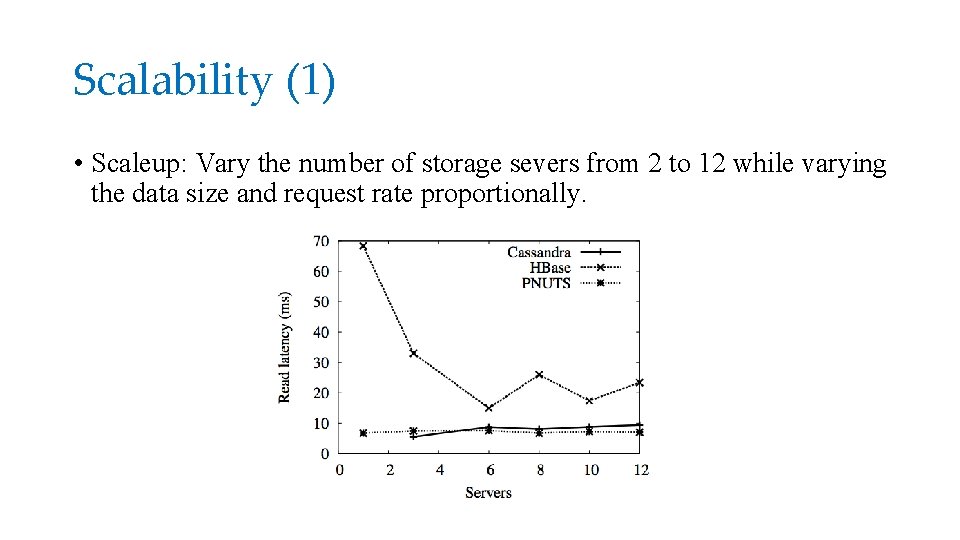

Benchmark Tiers
• Tier 1: performance
  • Better system = achieves the target latency and throughput with fewer servers
  • Sizeup: latency versus throughput as throughput increases
• Tier 2: scaling
  • Scaleup: latency should remain constant when the number of servers and the amount of data scale proportionally
  • Elastic speedup: how latency changes as more servers are added
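The Tier 1 sizeup measurement can be sketched as a sweep: for each target throughput, pace requests so they are issued at that rate, then record the throughput actually achieved and the mean latency. This is a minimal single-threaded sketch, not YCSB's code; `do_op` is a hypothetical callable standing in for one database request, and `run_paced` and `sizeup_curve` are illustrative names.

```python
import time


def run_paced(do_op, target_ops_per_sec, num_ops):
    """Issue num_ops calls to do_op, spaced to approximate a target rate.

    Returns (achieved_ops_per_sec, mean_latency_sec).
    """
    interval = 1.0 / target_ops_per_sec
    latencies = []
    start = time.perf_counter()
    for i in range(num_ops):
        # Op i is scheduled at start + i * interval; wait if we are early.
        delay = start + i * interval - time.perf_counter()
        if delay > 0:
            time.sleep(delay)
        t0 = time.perf_counter()
        do_op()  # hypothetical stand-in for one read or write
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start
    return num_ops / elapsed, sum(latencies) / len(latencies)


def sizeup_curve(do_op, targets, num_ops=100):
    """Return one (target, achieved, mean_latency) tuple per target rate."""
    return [(t, *run_paced(do_op, t, num_ops)) for t in targets]
```

Plotting mean latency against achieved throughput gives the sizeup curve; once the system saturates, achieved throughput stops tracking the target while latency climbs.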

YCSB client
• Define the dataset and load it into the database
• Data records
• A set of operations
• Execute the operations while measuring performance
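A minimal sketch of the load step, assuming the record shape of YCSB's core workload defaults (10 fields of 100 bytes each, about 1 KB per record) and a plain Python dict standing in for the database. The `user<N>` key format is a simplification (YCSB prefixes keys with `user` but can hash the sequence number), and `build_record` and `load` are hypothetical names.

```python
import random
import string

FIELD_COUNT = 10     # fields per record
FIELD_LENGTH = 100   # characters per field, so about 1 KB per record


def build_record(rng):
    """Return one record: field0..field9, each a random 100-character string."""
    return {
        f"field{i}": "".join(rng.choices(string.ascii_letters, k=FIELD_LENGTH))
        for i in range(FIELD_COUNT)
    }


def load(store, record_count, seed=0):
    """Load phase: insert record_count records under keys user0, user1, ..."""
    rng = random.Random(seed)
    for n in range(record_count):
        store[f"user{n}"] = build_record(rng)
    return store
```

For example, `load({}, 1000)` builds a roughly 1 MB toy table; the run phase then executes the workload's operations against it.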

Workload (1)

Workload (2)
• One workload is a mix of basic operations, with choices of:
  • Which operations to perform
  • Which record to read or write
  • How many records to scan
• Decisions are governed by random distributions:
  • Uniform
  • Zipfian
  • Latest
  • Multinomial
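The four distributions can be sketched in Python as follows. This is an illustrative approximation, not YCSB's generator code: the Zipfian chooser precomputes a cumulative table (YCSB uses a constant-memory algorithm and also scatters popular items across the key space), `theta=0.99` is assumed as the skew constant, and all class and function names are hypothetical. Here index 0 is the most popular item.

```python
import bisect
import itertools
import random


class ZipfianChooser:
    """Pick an index in [0, n) with P(i) proportional to 1 / (i + 1) ** theta."""

    def __init__(self, n, theta=0.99, rng=None):
        self.rng = rng or random.Random()
        weights = [1.0 / (i + 1) ** theta for i in range(n)]
        total = sum(weights)
        # Cumulative distribution: cdf[i] = P(index <= i)
        self.cdf = list(itertools.accumulate(w / total for w in weights))

    def next(self):
        u = self.rng.random()
        # Clamp guards against floating-point rounding leaving cdf[-1] < u
        return min(bisect.bisect_left(self.cdf, u), len(self.cdf) - 1)


def uniform_key(rng, n):
    """Every key in [0, n) is equally likely."""
    return rng.randrange(n)


def latest_key(zipf, newest_key):
    """Recently inserted keys are most popular: skew toward newest_key."""
    return max(newest_key - zipf.next(), 0)


def multinomial_op(rng, proportions):
    """Pick an operation name according to its proportion, e.g. 95% read."""
    ops = list(proportions)
    return rng.choices(ops, weights=[proportions[o] for o in ops])[0]
```

For example, `multinomial_op(rng, {"read": 0.95, "update": 0.05})` draws a read-heavy operation mix, while the key for each operation comes from one of the three key choosers.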

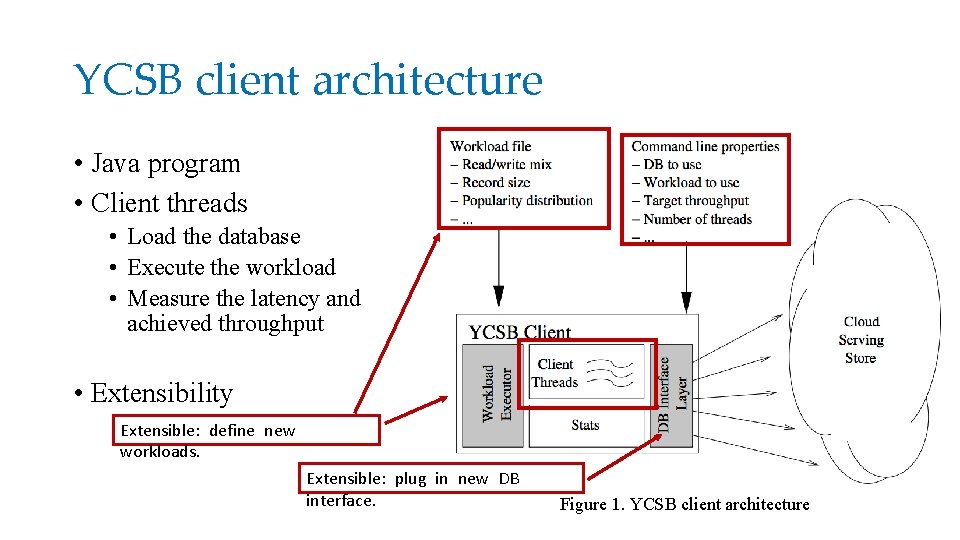

YCSB client architecture
• Java program
• Client threads
  • Load the database
  • Execute the workload
  • Measure the latency and achieved throughput
• Extensibility
  • Extensible: define new workloads
  • Extensible: plug in new DB interfaces
Figure 1. YCSB client architecture
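The DB-interface extension point and the client threads can be sketched as an abstract interface plus a threaded driver. The methods shown (read, insert, update) are a subset of the operations YCSB's Java `DB` class defines, but this is a simplified Python illustration: `DB`, `MemoryDB`, and `run_threads` are hypothetical names, and a real binding would call a network client instead of a dict.

```python
import abc
import threading
import time


class DB(abc.ABC):
    """Interface a new database binding would implement (simplified)."""

    @abc.abstractmethod
    def read(self, key): ...

    @abc.abstractmethod
    def insert(self, key, values): ...

    @abc.abstractmethod
    def update(self, key, values): ...


class MemoryDB(DB):
    """Toy binding backed by a dict, guarded by a lock for thread safety."""

    def __init__(self):
        self.data = {}
        self.lock = threading.Lock()

    def read(self, key):
        with self.lock:
            return self.data.get(key)

    def insert(self, key, values):
        with self.lock:
            self.data[key] = dict(values)

    def update(self, key, values):
        with self.lock:
            self.data.setdefault(key, {}).update(values)


def run_threads(db, op, num_threads, ops_per_thread):
    """Run op(db, thread_id, i) from several threads.

    Returns (throughput_ops_per_sec, list_of_latencies_sec).
    """
    latencies = []
    lat_lock = threading.Lock()

    def worker(tid):
        local = []  # per-thread latencies, merged once at the end
        for i in range(ops_per_thread):
            t0 = time.perf_counter()
            op(db, tid, i)
            local.append(time.perf_counter() - t0)
        with lat_lock:
            latencies.extend(local)

    threads = [threading.Thread(target=worker, args=(t,)) for t in range(num_threads)]
    start = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    elapsed = time.perf_counter() - start
    return len(latencies) / elapsed, latencies
```

Swapping `MemoryDB` for another `DB` subclass changes the system under test without touching the driver, which is the point of the pluggable interface.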

Experimental setup (1)
• Servers
  • Six storage servers
  • The YCSB client runs on a separate server
• Tested systems
  • Cassandra, HBase, PNUTS, and sharded MySQL
• Database
  • 120 million 1 KB records (120 GB total) = 20 GB per server
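The per-server figure follows from spreading the dataset over the six storage servers, on average, using decimal units (1 GB = 10^6 KB):

```latex
120 \times 10^{6}\ \text{records} \times 1\ \text{KB/record} = 120\ \text{GB},
\qquad
\frac{120\ \text{GB}}{6\ \text{servers}} = 20\ \text{GB per server}
```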

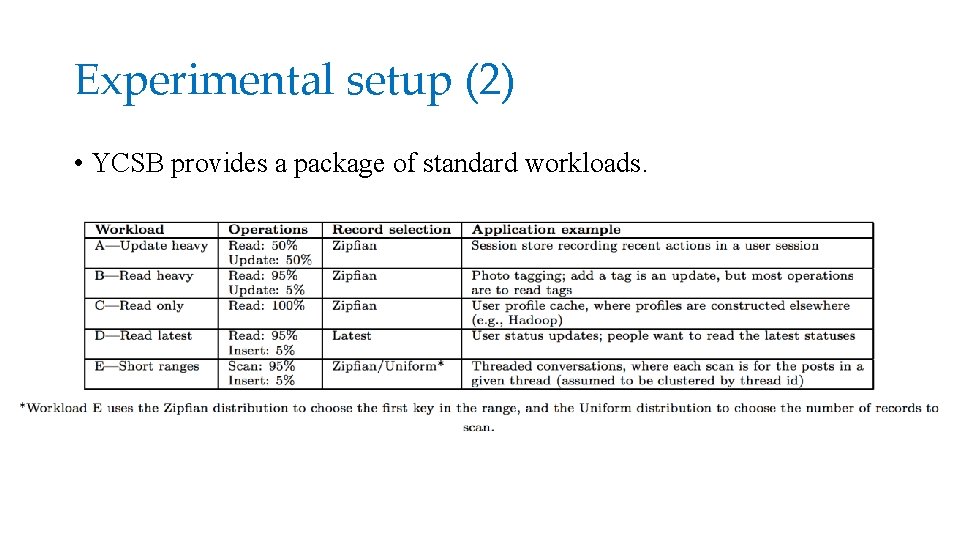

Experimental setup (2)
• YCSB provides a package of standard workloads

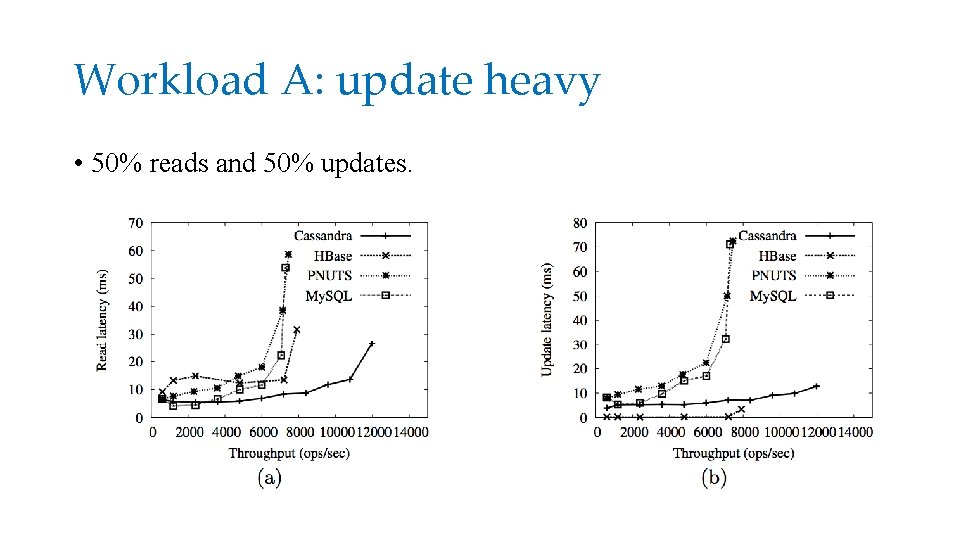
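The three workloads reported in these slides can be written down as operation mixes. The key names mirror YCSB's CoreWorkload property names (`readproportion`, `updateproportion`, `scanproportion`, `insertproportion`, `requestdistribution`, `maxscanlength`, `scanlengthdistribution`); the Python dict form and the `check_mix` helper are only an illustration, and the Zipfian request distribution is assumed as in the paper's workload table.

```python
import math

# Operation mixes for the workloads shown on these slides.
WORKLOADS = {
    "A": {  # update heavy
        "readproportion": 0.50,
        "updateproportion": 0.50,
        "requestdistribution": "zipfian",
    },
    "B": {  # read heavy
        "readproportion": 0.95,
        "updateproportion": 0.05,
        "requestdistribution": "zipfian",
    },
    "E": {  # short ranges
        "scanproportion": 0.95,
        "insertproportion": 0.05,
        "requestdistribution": "zipfian",
        "maxscanlength": 100,
        "scanlengthdistribution": "uniform",
    },
}


def check_mix(spec):
    """True if the operation proportions in a spec sum to 1."""
    total = sum(v for k, v in spec.items() if k.endswith("proportion"))
    return math.isclose(total, 1.0)
```

Keeping the mix as data rather than code is what lets one workload generator drive every workload in the package.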

Workload A: Update heavy
• 50% reads and 50% updates

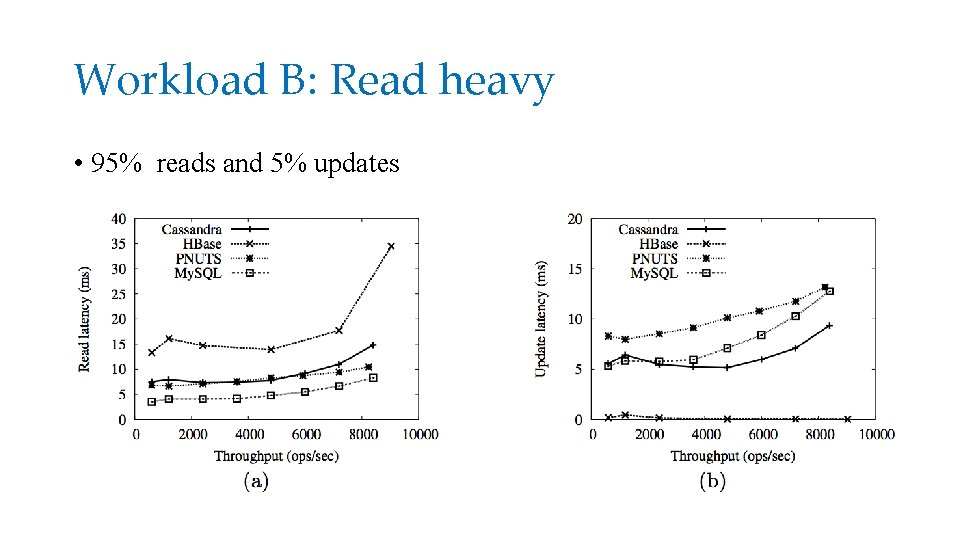

Workload B: Read heavy
• 95% reads and 5% updates

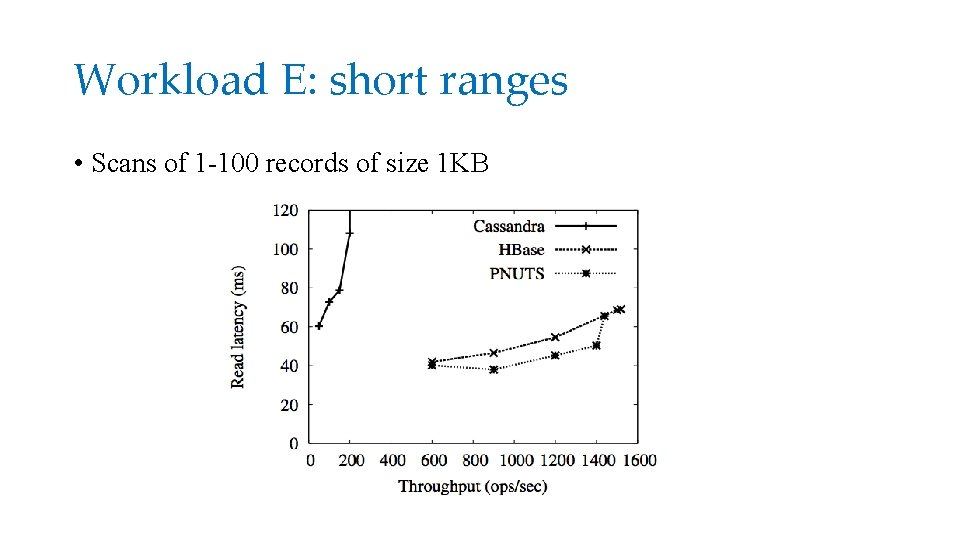

Workload E: Short ranges
• Scans of 1 to 100 records, each 1 KB
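A short-range scan reads a starting key plus the next few keys in sort order. A minimal sketch over a sorted in-memory key list, assuming scan lengths drawn uniformly from 1 to 100 as on the slide; `scan` and the zero-padded key format are hypothetical, chosen so that string order matches numeric order.

```python
import bisect
import random


def scan(sorted_keys, store, start_key, length):
    """Return up to `length` (key, record) pairs with key >= start_key, in order."""
    i = bisect.bisect_left(sorted_keys, start_key)
    return [(k, store[k]) for k in sorted_keys[i:i + length]]


# Toy table: zero-padded keys so lexicographic order equals numeric order.
store = {f"user{n:06d}": {"field0": n} for n in range(1000)}
sorted_keys = sorted(store)

rng = random.Random(7)
length = rng.randint(1, 100)  # scan length, uniform on [1, 100]
result = scan(sorted_keys, store, "user000990", length)
```

A scan near the end of the key range returns fewer records than requested, which is why the result is "up to" `length` pairs.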

Scalability (1)
• Scaleup: vary the number of storage servers from 2 to 12 while varying the data size and request rate proportionally

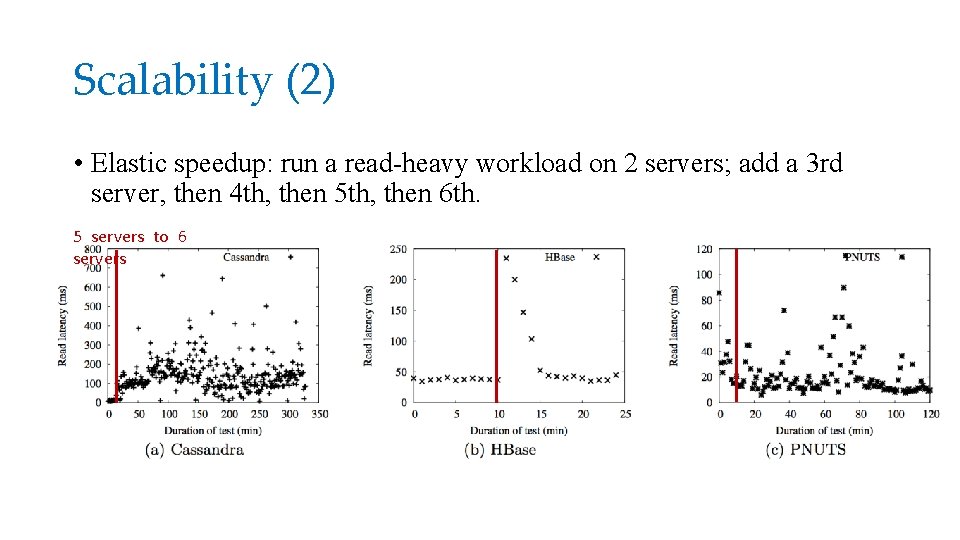
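Scaleup holds the per-server load fixed, so both the dataset and the offered request rate grow linearly with the server count. A sketch of the resulting experiment grid, assuming even server counts between 2 and 12 and hypothetical per-server values (20 GB of data, borrowed from the setup slide, and a placeholder request rate); `scaleup_plan` is a made-up helper.

```python
def scaleup_plan(server_counts, gb_per_server, ops_per_server):
    """Return (servers, total_gb, total_ops_per_sec) for each configuration.

    Per-server data and load stay constant, so ideally latency does too.
    """
    return [(s, s * gb_per_server, s * ops_per_server) for s in server_counts]


# Even counts from 2 to 12; 1000 ops/sec per server is a placeholder value.
plan = scaleup_plan(range(2, 13, 2), gb_per_server=20, ops_per_server=1000)
for servers, total_gb, total_ops in plan:
    print(f"{servers:2d} servers: {total_gb:3d} GB, {total_ops:5d} ops/sec")
```

A flat latency line across these configurations is the ideal scaleup result.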

Scalability (2)
• Elastic speedup: run a read-heavy workload on 2 servers; add a 3rd server, then a 4th, 5th, and 6th
Figure: 5 servers to 6 servers
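Elastic speedup is read off a latency-over-time trace: when a server is added, latency may rise while data moves to the new server and then settle. A minimal sketch for turning per-request samples into such a trace, assuming samples arrive as (timestamp_sec, latency_ms) pairs; `latency_timeline` and the 10-second window are hypothetical choices.

```python
from collections import defaultdict


def latency_timeline(samples, window_sec=10):
    """Average latency per time window.

    samples: iterable of (timestamp_sec, latency_ms) pairs.
    Returns a sorted list of (window_start_sec, mean_latency_ms).
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    for ts, lat in samples:
        bucket = int(ts // window_sec) * window_sec
        sums[bucket] += lat
        counts[bucket] += 1
    return [(b, sums[b] / counts[b]) for b in sorted(sums)]


# Toy trace with a latency bump in the 10-20 s window
print(latency_timeline([(0, 5.0), (4, 7.0), (12, 30.0), (15, 10.0), (25, 6.0)]))
# -> [(0, 6.0), (10, 20.0), (20, 6.0)]
```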

Discussion
• What is the challenging part of building a benchmark, compared with simply implementing several systems and reporting their performance metrics?
• All the design decisions contribute to the final performance. Could YCSB be used to break down how much each decision contributes?
• Is it reasonable to use the distributions listed in the paper? That is, do real request patterns follow these distributions? In some cases, there may be no regular pattern.
