Bayesian Networks
Chapter 2 (Duda et al.), Section 2.11
CS 479/679 Pattern Recognition
Dr. George Bebis

Bayesian Networks - Motivation
• High-dimensional densities are very challenging to model since they depend on many parameters (e.g., $k^n$ values for $n$ discrete variables with $k$ states each).
• When each variable depends directly on only a few others (e.g., through causal relationships), the resulting conditional independencies greatly simplify the underlying pdf.
• Bayesian Networks (or Belief Networks) are graphical models that represent a set of variables and their conditional dependencies.
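To make the parameter count concrete, the sketch below compares the size of a full joint table with an upper bound on the total size of the conditional tables in a factorized model. The values n = 10 and k = 3 and the limit of two parents per node are illustrative assumptions, not figures from the slides.

```python
def full_joint_size(n: int, k: int) -> int:
    """Entries in a full joint table over n variables with k states each."""
    return k ** n


def factorized_size(n: int, k: int, max_parents: int) -> int:
    """Upper bound on table entries when every node has at most max_parents parents.

    Each node stores one distribution over its k states for every
    configuration of its parents (at most k ** max_parents configurations).
    """
    return n * k ** (max_parents + 1)


n, k = 10, 3  # hypothetical problem size
print(full_joint_size(n, k))     # 59049
print(factorized_size(n, k, 2))  # 270
```

The gap widens quickly as n grows, which is the practical reason for exploiting the graph structure.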

Example of Dependencies
• Model the state of an automobile:
  – Engine temperature
  – Brake fluid pressure
  – Coolant temperature
  – Tire air pressure
  – Wire voltages
  – etc.
• Causally related variables
  – Engine temperature
  – Coolant temperature
• NOT causally related variables
  – Engine temperature
  – Tire air pressure

Causation
A causal relation between two events exists if the occurrence of the first causes the other. The first event is called the cause and the second event is called the effect.

Causation vs Correlation
A correlation between two variables does not imply causation. If there is a causal relationship between two variables, they will generally be correlated.

Applications
• Microsoft: Answer Wizard, Print Troubleshooter http://erichorvitz.com/ftp/x2xins.lo.pdf
• US Army: SAIP (Battalion Detection from SAR, IR, etc.)
• NASA: Vista (DSS for Space Shuttle)
• GE: Gems (real-time monitoring of utility generators)

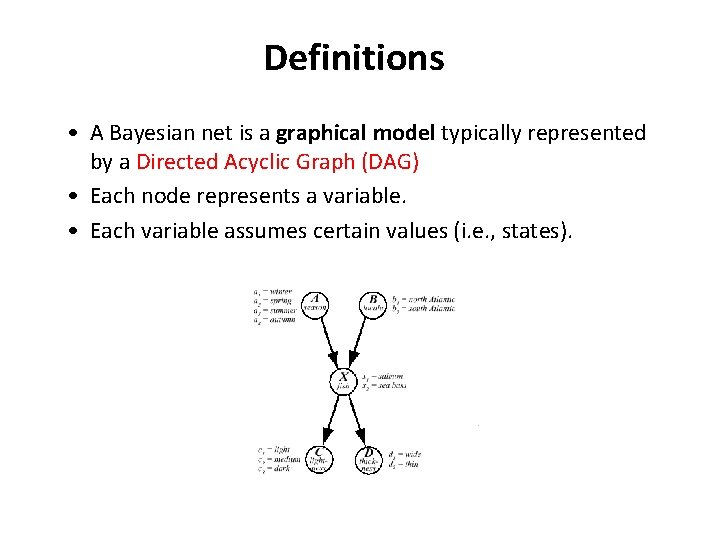

Definitions
• A Bayesian net is a graphical model typically represented by a Directed Acyclic Graph (DAG).
• Each node represents a variable.
• Each variable assumes certain values (i.e., states).

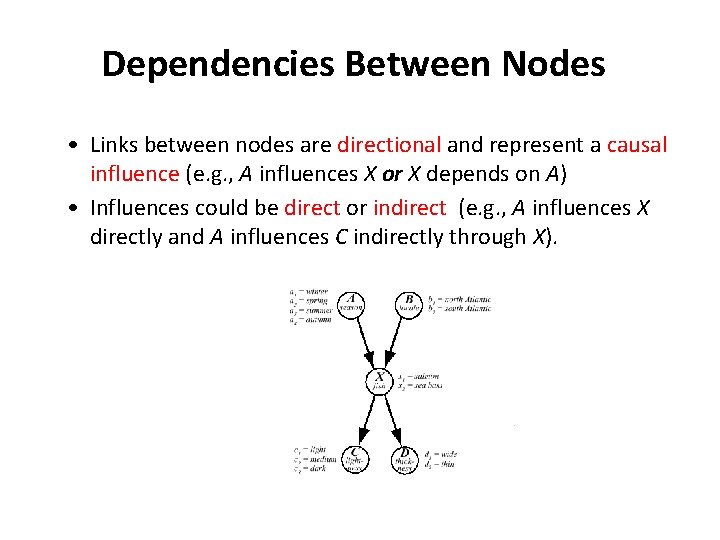

Dependencies Between Nodes
• Links between nodes are directional and represent a causal influence (e.g., A influences X, or X depends on A).
• Influences can be direct or indirect (e.g., A influences X directly and A influences C indirectly through X).

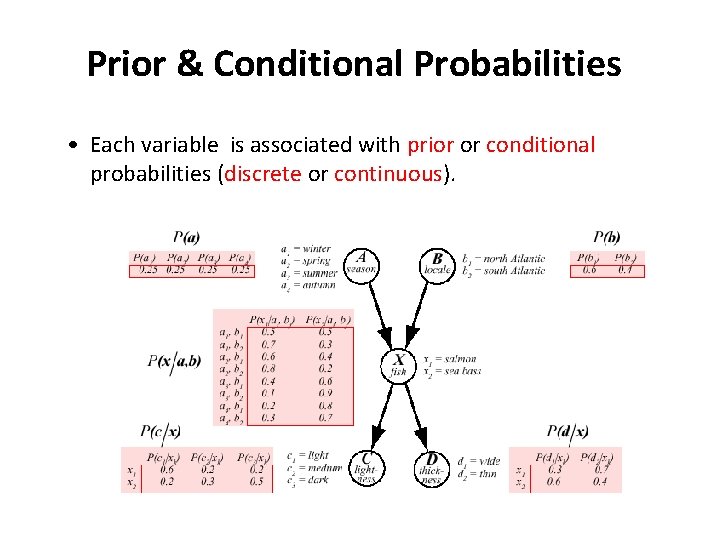

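Prior & Conditional Probabilities
• Each variable is associated with prior or conditional probabilities (discrete or continuous): a node without parents carries a prior distribution, and every other node carries a distribution conditioned on its parents.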

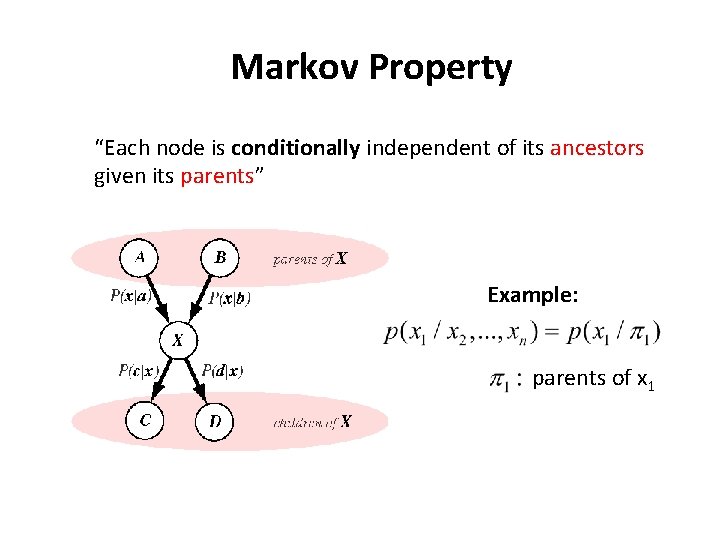

Markov Property
“Each node is conditionally independent of its ancestors given its parents”
Example: parents of $x_1$

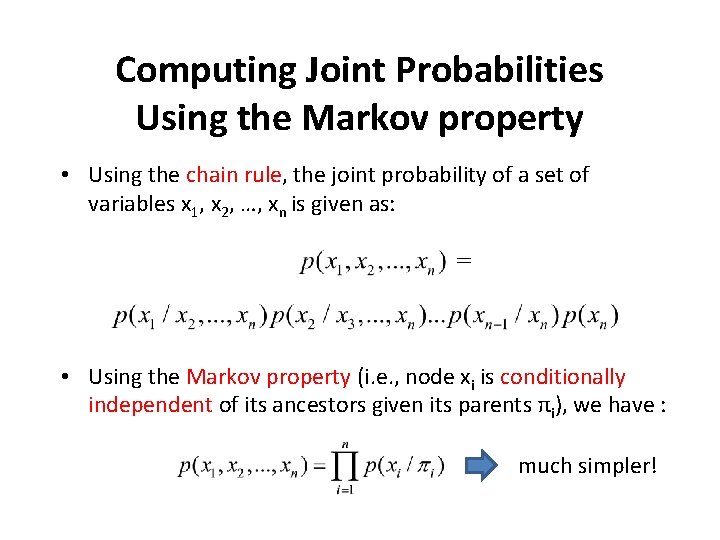

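Computing Joint Probabilities Using the Markov Property
• Using the chain rule (with the variables ordered so that parents precede their children), the joint probability of a set of variables $x_1, x_2, \ldots, x_n$ is given as:
$P(x_1, x_2, \ldots, x_n) = P(x_1)\,P(x_2 / x_1) \cdots P(x_n / x_1, \ldots, x_{n-1}) = \prod_{i=1}^{n} P(x_i / x_1, \ldots, x_{i-1})$
• Using the Markov property (i.e., node $x_i$ is conditionally independent of its ancestors given its parents $\pi_i$), we have:
$P(x_1, x_2, \ldots, x_n) = \prod_{i=1}^{n} P(x_i / \pi_i)$
which is much simpler!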

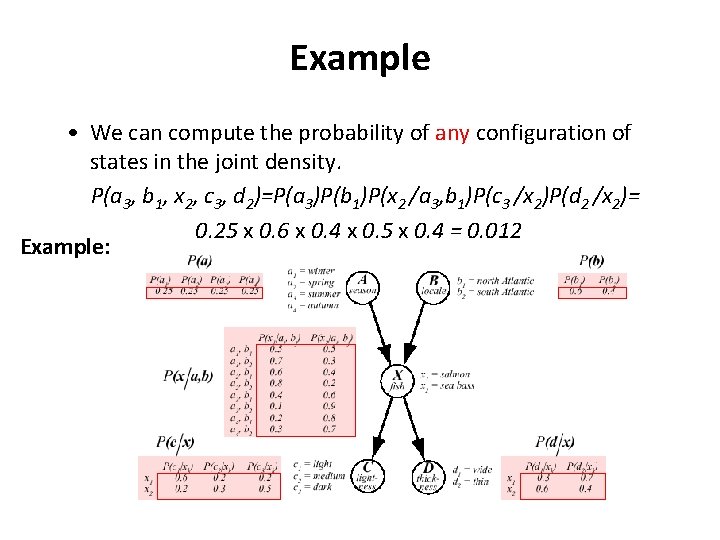

Example
• We can compute the probability of any configuration of states in the joint density. Example:
$P(a_3, b_1, x_2, c_3, d_2) = P(a_3)\,P(b_1)\,P(x_2 / a_3, b_1)\,P(c_3 / x_2)\,P(d_2 / x_2) = 0.25 \times 0.6 \times 0.4 \times 0.5 \times 0.4 = 0.012$
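The same product can be checked with a few lines of code. This is a minimal sketch that multiplies the five factors quoted in the example; the dictionary keys are only labels chosen here for readability.

```python
import math

# One factor per node, each conditioned on that node's parents.
# The numerical values are the ones quoted in the example.
factors = {
    "P(a3)": 0.25,
    "P(b1)": 0.6,
    "P(x2 / a3, b1)": 0.4,
    "P(c3 / x2)": 0.5,
    "P(d2 / x2)": 0.4,
}

# The joint probability of the configuration is the product of the factors.
joint = math.prod(factors.values())
print(round(joint, 6))
```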

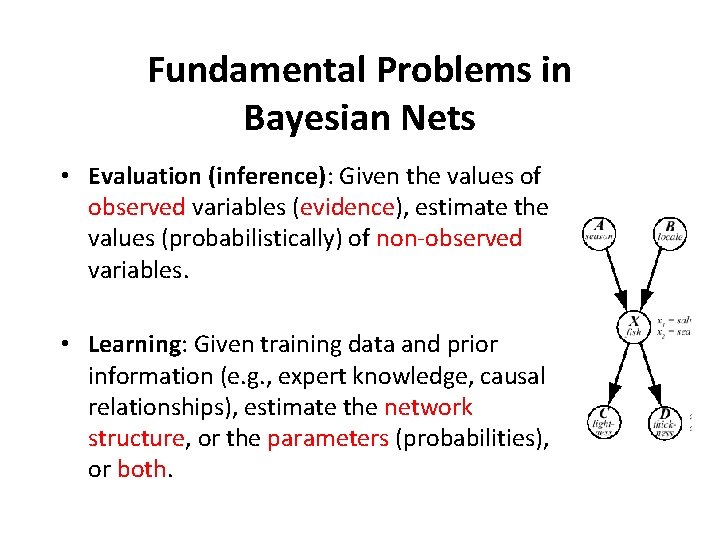

Fundamental Problems in Bayesian Nets
• Evaluation (inference): Given the values of observed variables (evidence), estimate the values (probabilistically) of non-observed variables.
• Learning: Given training data and prior information (e.g., expert knowledge, causal relationships), estimate the network structure, or the parameters (probabilities), or both.

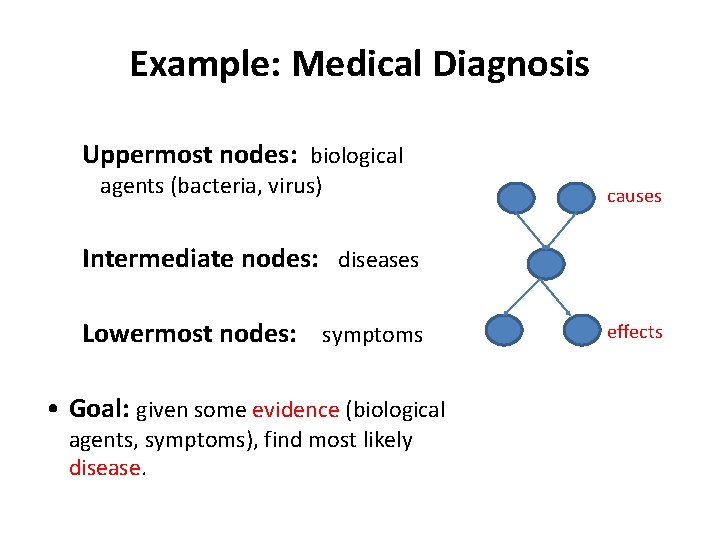

Example: Medical Diagnosis
• Uppermost nodes (causes): biological agents (bacteria, virus)
• Intermediate nodes: diseases
• Lowermost nodes (effects): symptoms
• Goal: given some evidence (biological agents, symptoms), find the most likely disease.

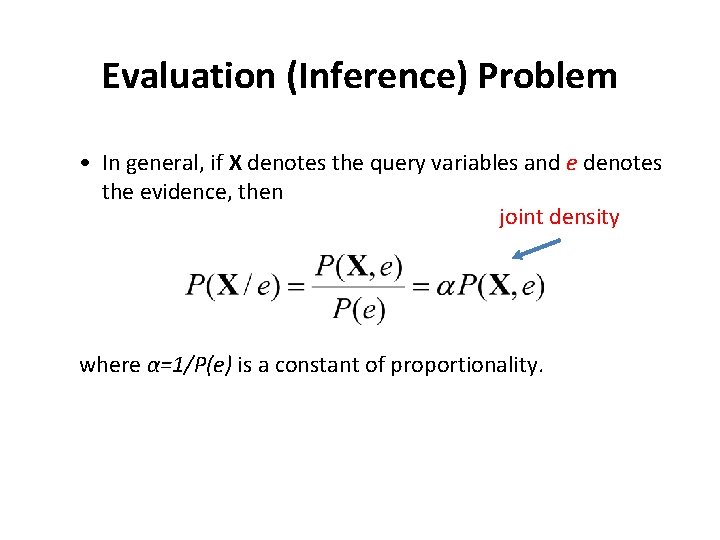

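Evaluation (Inference) Problem
• In general, if X denotes the query variables and e denotes the evidence, then
$P(X / e) = \frac{P(X, e)}{P(e)} = \alpha\,P(X, e)$
where $P(X, e)$ is the joint density of the query variables and the evidence, and $\alpha = 1/P(e)$ is a constant of proportionality.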

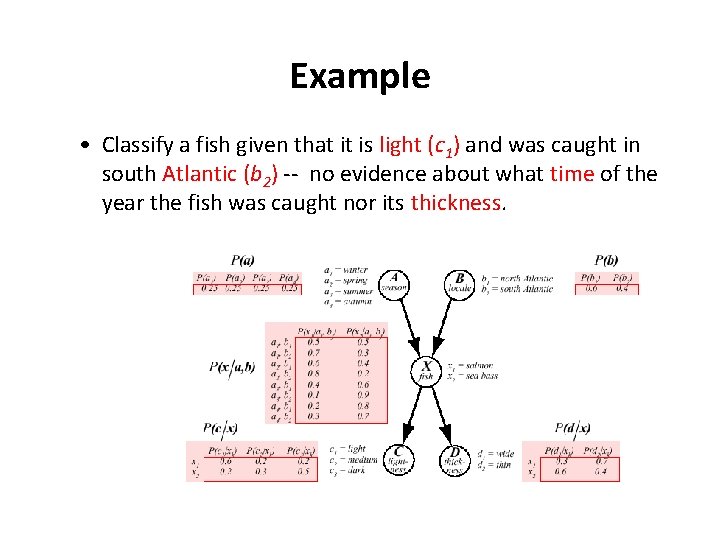

Example
• Classify a fish given that it is light ($c_1$) and was caught in the South Atlantic ($b_2$), with no evidence about the time of year the fish was caught or about its thickness.

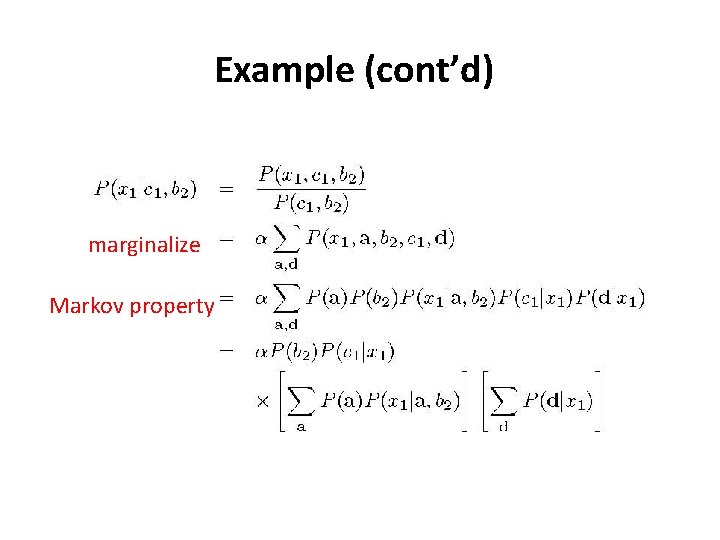

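Example (cont’d)
$P(x_1 / c_1, b_2) = \alpha\,P(x_1, c_1, b_2)$
$= \alpha \sum_{a} \sum_{d} P(x_1, a, b_2, c_1, d)$ (marginalize over the unobserved variables $a$ and $d$)
$= \alpha \sum_{a} \sum_{d} P(a)\,P(b_2)\,P(x_1 / a, b_2)\,P(c_1 / x_1)\,P(d / x_1)$ (Markov property)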

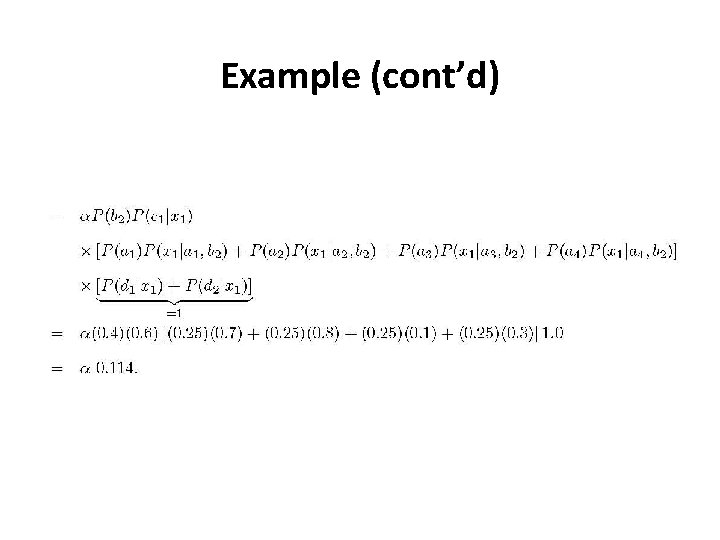


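Example (cont’d)
• Similarly, $P(x_2 / c_1, b_2) = \alpha\,0.066$
• Normalize probabilities (not necessary for classification): $P(x_1 / c_1, b_2) + P(x_2 / c_1, b_2) = 1$, so $\alpha = 1/0.18$, which implies $P(x_1 / c_1, b_2) = \alpha\,0.114$ before normalization.
  – $P(x_1 / c_1, b_2) = 0.63$
  – $P(x_2 / c_1, b_2) = 0.37$
• Since $P(x_1 / c_1, b_2) > P(x_2 / c_1, b_2)$, the fish is classified as salmon ($x_1$).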
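The normalization step can be sketched in a few lines. The unnormalized value 0.114 for $x_1$ is not stated on the slide; it is inferred here from $\alpha = 1/0.18$ and the value 0.066 for $x_2$, so treat it as an assumption.

```python
# Unnormalized posteriors alpha * P(x, c1, b2) with alpha factored out.
# 0.066 is quoted on the slide; 0.114 is inferred from alpha = 1/0.18.
unnormalized = {"x1": 0.114, "x2": 0.066}

# P(e) is the sum of the unnormalized terms, and alpha is its reciprocal.
evidence_prob = sum(unnormalized.values())
alpha = 1.0 / evidence_prob

# Dividing by P(e) makes the posteriors sum to one.
posterior = {state: alpha * value for state, value in unnormalized.items()}

# Classification only needs the argmax, which normalization does not change.
best = max(posterior, key=posterior.get)
print(posterior, best)
```

Because $\alpha$ is the same for every class, the argmax can be taken directly on the unnormalized values, which is why normalization is optional for classification.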

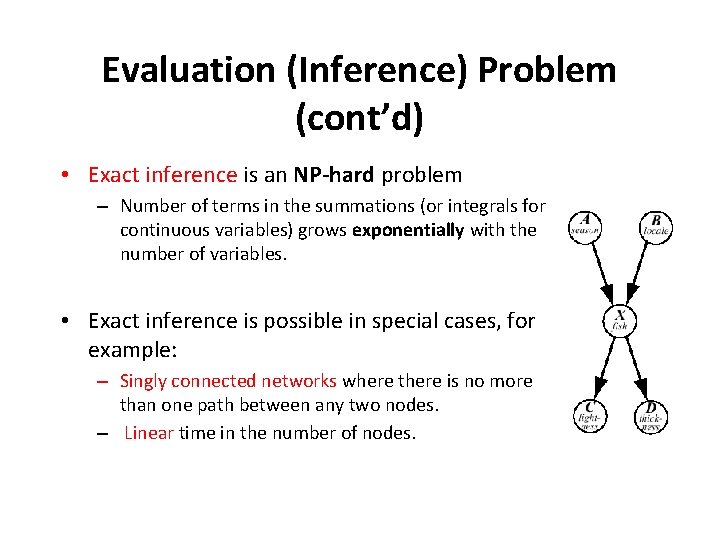

Evaluation (Inference) Problem (cont’d)
• Exact inference is an NP-hard problem in general.
  – The number of terms in the summations (or integrals for continuous variables) grows exponentially with the number of variables.
• Efficient exact inference is possible in special cases, for example:
  – Singly connected networks, where there is no more than one (undirected) path between any two nodes.
  – In that case, inference takes time linear in the number of nodes.

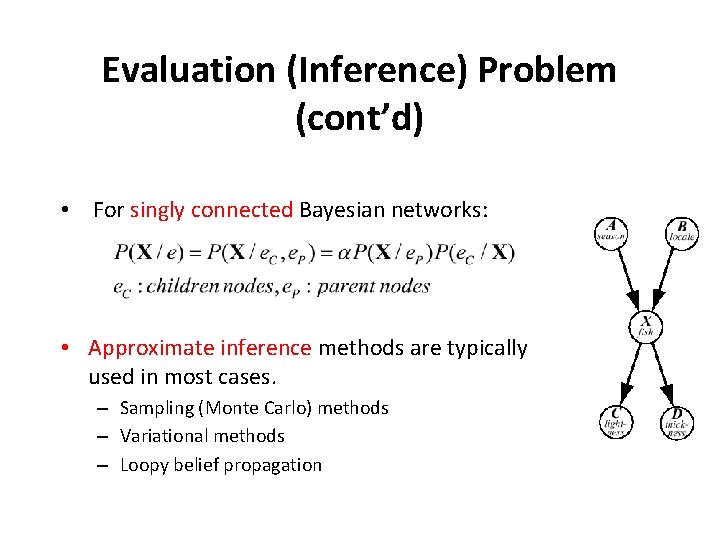

Evaluation (Inference) Problem (cont’d)
• For singly connected Bayesian networks:
• Approximate inference methods are used in most other cases.
  – Sampling (Monte Carlo) methods
  – Variational methods
  – Loopy belief propagation
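As an illustration of the sampling approach, here is a minimal rejection-sampling sketch. The two-node network (Cause → Effect) and all of its probabilities are hypothetical and chosen only to keep the example short; the estimate is compared with the exact answer from Bayes' rule.

```python
import random

# Hypothetical two-node network Cause -> Effect (all numbers are made up).
P_CAUSE = 0.2                             # P(cause = true)
P_EFFECT_GIVEN = {True: 0.9, False: 0.1}  # P(effect = true / cause)


def sample(rng):
    """Draw one joint sample by sampling each node after its parent."""
    cause = rng.random() < P_CAUSE
    effect = rng.random() < P_EFFECT_GIVEN[cause]
    return cause, effect


def rejection_estimate(n_samples, seed=0):
    """Estimate P(cause = true / effect = true) by discarding samples
    that disagree with the evidence (effect = true)."""
    rng = random.Random(seed)
    kept = hits = 0
    for _ in range(n_samples):
        cause, effect = sample(rng)
        if effect:          # keep only samples consistent with the evidence
            kept += 1
            hits += cause   # True counts as 1
    return hits / kept


# Exact posterior from Bayes' rule, for comparison.
exact = (P_CAUSE * P_EFFECT_GIVEN[True]) / (
    P_CAUSE * P_EFFECT_GIVEN[True] + (1 - P_CAUSE) * P_EFFECT_GIVEN[False]
)
print(rejection_estimate(100_000), exact)
```

Rejection sampling wastes every sample that contradicts the evidence, so it becomes inefficient when the evidence is unlikely; that is one reason more refined sampling schemes exist.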

Example: Design a Bayesian Network and Apply Inference
• You have a new burglar alarm installed at home.
• It is fairly reliable at detecting burglary, but also sometimes responds to earthquakes.
• You have two neighbors, Ali and Veli, who promised to call you at work when they hear the alarm.

Example (cont’d)
• Ali always calls when he hears the alarm, but sometimes confuses the telephone ringing with the alarm and calls then too.
• Veli likes loud music and sometimes misses the alarm.
• Design a Bayesian network to estimate $P(\text{burglary} / \text{evidence})$.

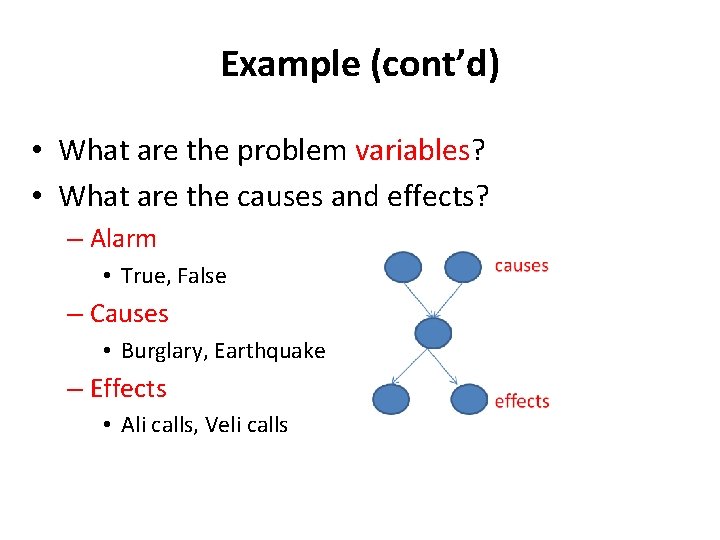

Example (cont’d)
• What are the problem variables?
• What are the causes and effects?
  – Alarm
    • True, False
  – Causes
    • Burglary, Earthquake
  – Effects
    • Ali calls, Veli calls

Example (cont’d)
• What are the conditional dependencies?
  – Burglary (B) and earthquake (E) directly affect the probability of the alarm (A) going off.
  – Whether or not Ali calls (AC) or Veli calls (VC) depends on the alarm.

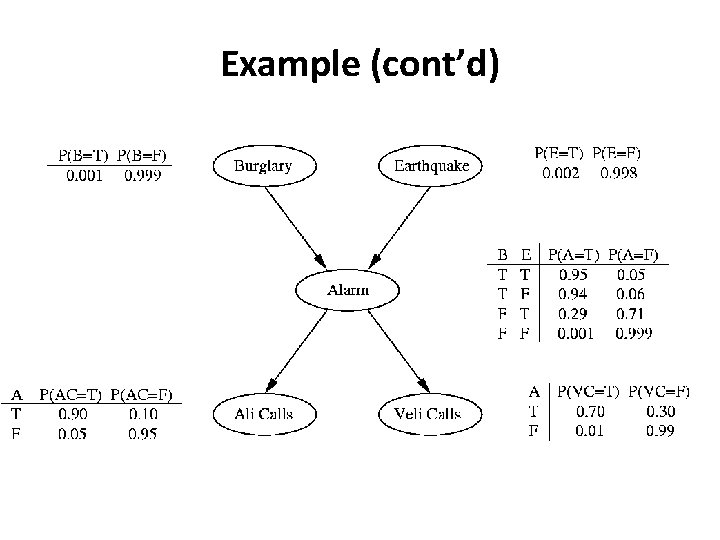

Example (cont’d)

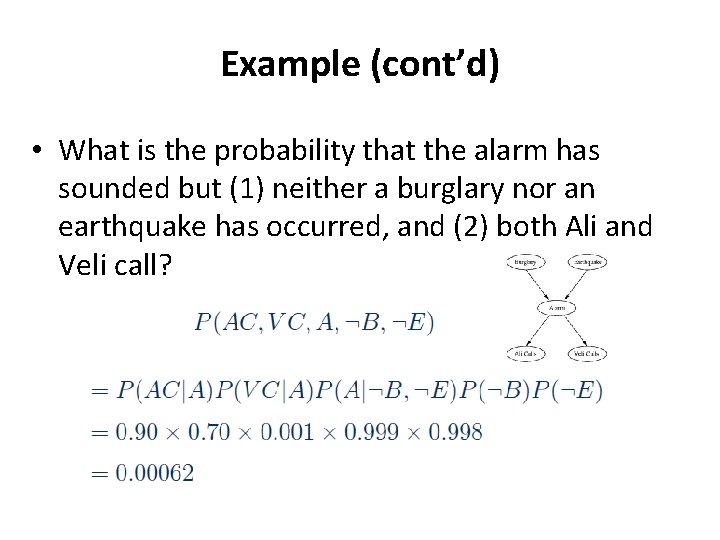

Example (cont’d)
• What is the probability that the alarm has sounded but (1) neither a burglary nor an earthquake has occurred, and (2) both Ali and Veli call?
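Given the structure described earlier (B and E are the parents of A, and A is the only parent of AC and VC), the requested probability factorizes into one term per node. The symbol $\neg$ for "does not occur" is notation assumed here; the numerical values come from the conditional probability tables in the figure.

$$P(AC, VC, A, \neg B, \neg E) = P(AC / A)\,P(VC / A)\,P(A / \neg B, \neg E)\,P(\neg B)\,P(\neg E)$$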

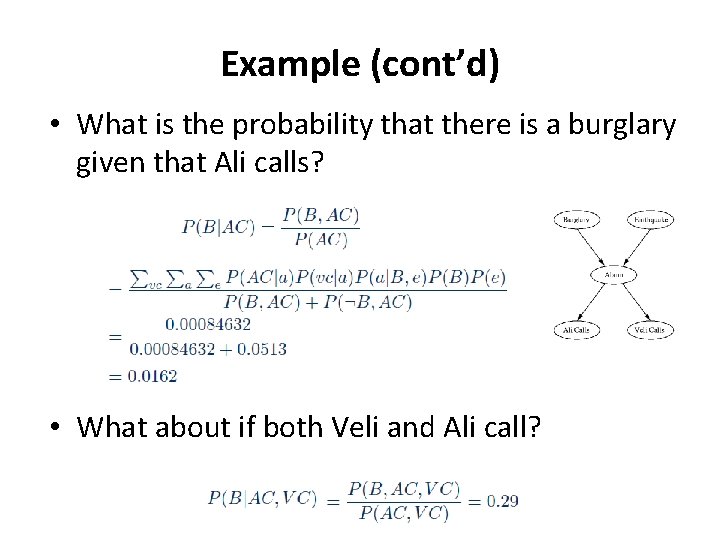

Example (cont’d)
• What is the probability that there is a burglary given that Ali calls?
• What if both Veli and Ali call?
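Queries like these can be answered by enumeration: sum the factorized joint over every unobserved variable, then normalize. The sketch below assumes illustrative probability values of the kind commonly used in textbook versions of this alarm example; they are not taken from the slide's figure, so substitute the slide's tables to reproduce its numbers. All function and variable names are hypothetical.

```python
from itertools import product

# ASSUMED probabilities (illustrative; not read from the slide's tables).
P_B = 0.001                      # P(burglary)
P_E = 0.002                      # P(earthquake)
P_A = {                          # P(alarm / burglary, earthquake)
    (True, True): 0.95, (True, False): 0.94,
    (False, True): 0.29, (False, False): 0.001,
}
P_AC = {True: 0.90, False: 0.05}  # P(Ali calls / alarm)
P_VC = {True: 0.70, False: 0.01}  # P(Veli calls / alarm)


def bern(p, value):
    """Probability that a binary variable with P(true) = p takes `value`."""
    return p if value else 1.0 - p


def joint(b, e, a, ac, vc):
    """Joint probability as a product of one factor per node."""
    return (bern(P_B, b) * bern(P_E, e) * bern(P_A[(b, e)], a)
            * bern(P_AC[a], ac) * bern(P_VC[a], vc))


def posterior_burglary(**evidence):
    """P(burglary = true / evidence), summing out all unobserved variables."""
    names = ["b", "e", "a", "ac", "vc"]
    totals = {True: 0.0, False: 0.0}
    for values in product([True, False], repeat=len(names)):
        world = dict(zip(names, values))
        if all(world[k] == v for k, v in evidence.items()):
            totals[world["b"]] += joint(**world)
    return totals[True] / (totals[True] + totals[False])


print(posterior_burglary(ac=True))           # Ali calls
print(posterior_burglary(ac=True, vc=True))  # both call
```

With these assumed numbers, the posterior is about 0.016 when only Ali calls and about 0.28 when both neighbors call. Enumeration visits all 2^5 configurations, which is fine here but is exactly the exponential cost that motivates the special-case and approximate methods.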

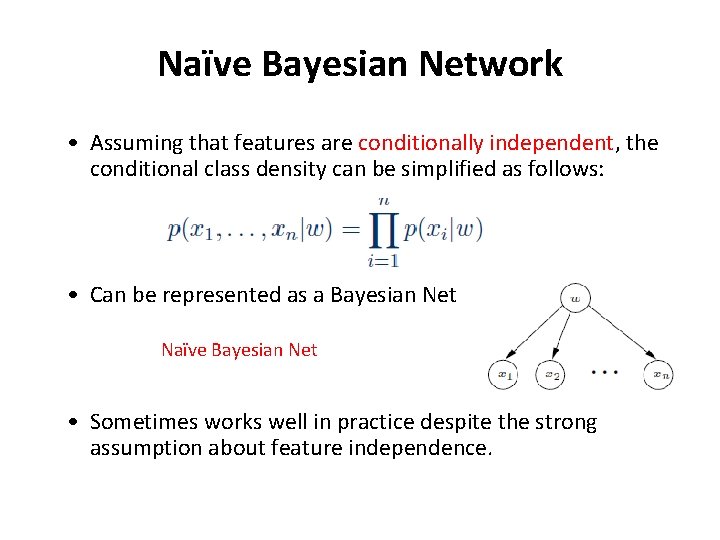

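Naïve Bayesian Network
• Assuming that the features are conditionally independent given the class, the class-conditional density can be simplified as follows:
$p(x_1, x_2, \ldots, x_n / \omega_j) = \prod_{i=1}^{n} p(x_i / \omega_j)$
• This model can be represented as a Bayesian net, called a Naïve Bayesian net, in which the class node is the only parent of every feature node.
• It sometimes works well in practice despite the strong assumption about feature independence.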
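A minimal sketch of classification under this assumption follows. The two classes, three binary features, and all probability values are hypothetical and serve only to show how the per-feature terms are multiplied and then normalized.

```python
import math

# Hypothetical toy model: two classes and three binary features.
priors = {"w1": 0.6, "w2": 0.4}
# likelihoods[c][i] = P(x_i = 1 / c), one independent term per feature.
likelihoods = {"w1": [0.8, 0.3, 0.5], "w2": [0.2, 0.7, 0.5]}


def class_conditional(x, c):
    """p(x / c) as a product of per-feature terms (the naive assumption)."""
    return math.prod(p if xi else 1.0 - p
                     for xi, p in zip(x, likelihoods[c]))


def posterior(x):
    """Class posteriors: prior times class-conditional, then normalized."""
    scores = {c: priors[c] * class_conditional(x, c) for c in priors}
    total = sum(scores.values())
    return {c: s / total for c, s in scores.items()}


post = posterior([1, 0, 1])
print(post)
```

Each class needs only one parameter per binary feature here, instead of a full table over all feature combinations.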
