Back-propagation Chih-yun Lin 10/30/2021

Agenda

- Perceptron vs. back-propagation network
  - Network structure
  - Learning rule
- Why a hidden layer?
- An example: Jets or Sharks
- Conclusions

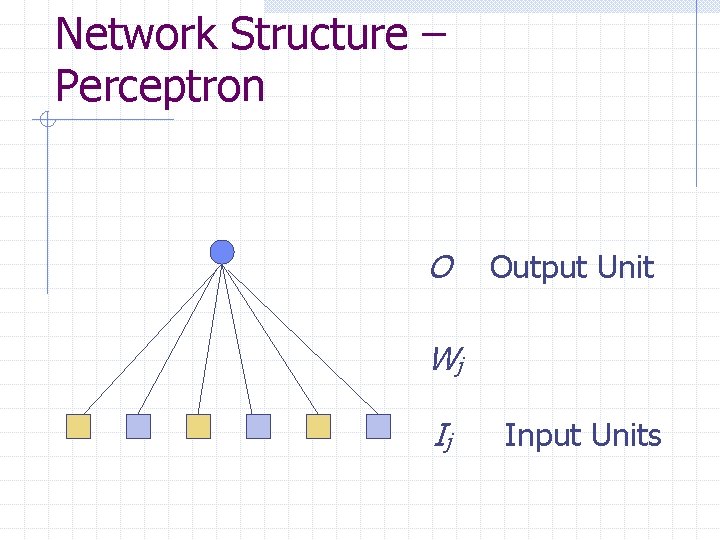

Network Structure – Perceptron

- Input units $I_j$
- Weights $W_j$
- Output unit $O$

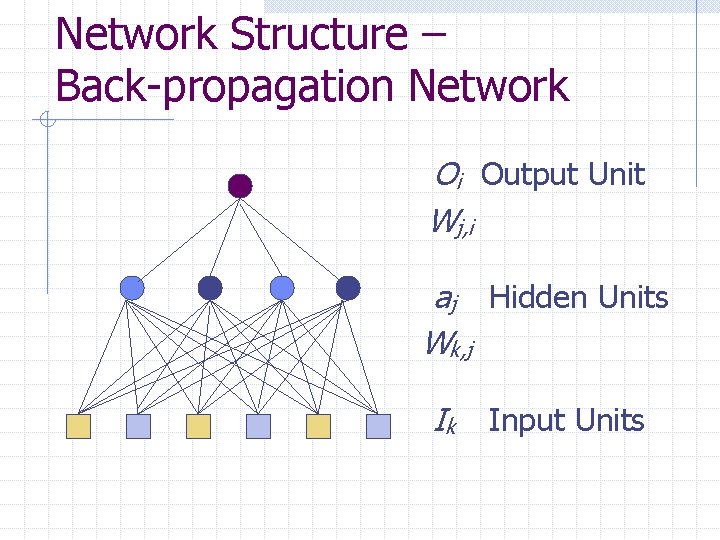

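Network Structure – Back-propagation Network

- Input units $I_k$
- Weights $W_{k,j}$ from input units to hidden units
- Hidden units $a_j$
- Weights $W_{j,i}$ from hidden units to output units
- Output units $O_i$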

Learning Rule

- Measure error
- Reduce that error
  - By appropriately adjusting each of the weights in the network

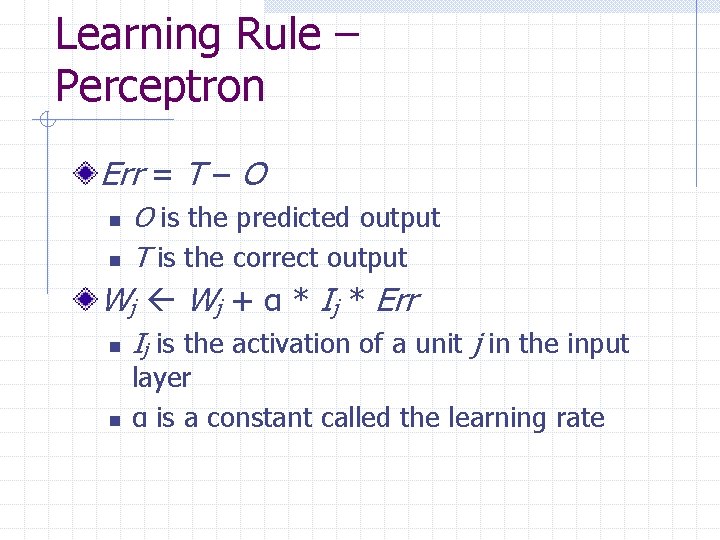

Learning Rule – Perceptron

- $Err = T - O$
  - $O$ is the predicted output
  - $T$ is the correct output
- $W_j \leftarrow W_j + \alpha \cdot I_j \cdot Err$
  - $I_j$ is the activation of unit $j$ in the input layer
  - $\alpha$ is a constant called the learning rate
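The perceptron rule can be sketched in a few lines of Python. This is a minimal illustration, not the presenter's code: the step activation, the extra constant input used to learn the threshold, the learning rate of 0.5, and the AND training set are all assumptions made for the example.

```python
def step(x):
    """Threshold activation: 1 if the weighted sum is positive, else 0."""
    return 1 if x > 0 else 0


def train_perceptron(samples, alpha=0.5, epochs=20):
    """Repeatedly apply W_j <- W_j + alpha * I_j * Err over all samples.

    A constant input of 1 is appended to each sample (an assumption of this
    sketch) so that the threshold is learned as an ordinary weight.
    """
    n_inputs = len(samples[0][0])
    weights = [0.0] * (n_inputs + 1)  # last weight plays the role of the bias
    for _ in range(epochs):
        for inputs, target in samples:
            extended = list(inputs) + [1.0]
            output = step(sum(w * i for w, i in zip(weights, extended)))
            err = target - output  # Err = T - O
            weights = [w + alpha * i * err for w, i in zip(weights, extended)]
    return weights


def predict(weights, inputs):
    """Run the trained unit on one input vector."""
    extended = list(inputs) + [1.0]
    return step(sum(w * i for w, i in zip(weights, extended)))


# Logical AND is linearly separable, so the rule settles on a solution.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
and_weights = train_perceptron(AND_SAMPLES)
```

Calling `predict(and_weights, (1, 1))` should then return 1, and the other three input pairs should return 0.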

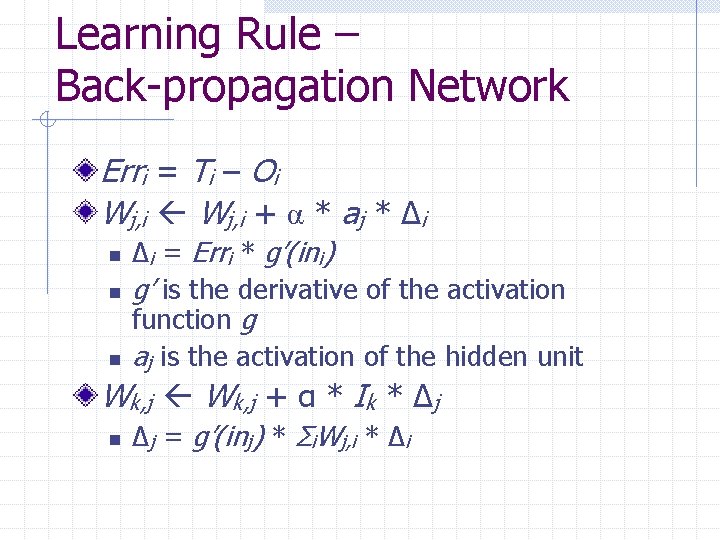

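Learning Rule – Back-propagation Network

- $Err_i = T_i - O_i$
- $W_{j,i} \leftarrow W_{j,i} + \alpha \cdot a_j \cdot \Delta_i$
  - $\Delta_i = Err_i \cdot g'(in_i)$
  - $g'$ is the derivative of the activation function $g$
  - $a_j$ is the activation of the hidden unit
- $W_{k,j} \leftarrow W_{k,j} + \alpha \cdot I_k \cdot \Delta_j$
  - $\Delta_j = g'(in_j) \cdot \sum_i W_{j,i} \cdot \Delta_i$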
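One update of both weight layers might look like the following sketch. It assumes a sigmoid activation for $g$, no bias terms, and hypothetical starting weights; none of these choices come from the slides. The helper names (`forward`, `backprop_step`, `squared_error`) are illustrative only.

```python
import math


def g(x):
    """Sigmoid activation (an assumed choice for g)."""
    return 1.0 / (1.0 + math.exp(-x))


def g_prime(x):
    """Derivative of the sigmoid: g(x) * (1 - g(x))."""
    s = g(x)
    return s * (1.0 - s)


def forward(w_kj, w_ji, inputs):
    """Return (in_j, a_j, in_i, o_i); w_kj[k][j] and w_ji[j][i] are weight tables."""
    n_hidden, n_out = len(w_ji), len(w_ji[0])
    in_j = [sum(inputs[k] * w_kj[k][j] for k in range(len(inputs)))
            for j in range(n_hidden)]
    a_j = [g(x) for x in in_j]
    in_i = [sum(a_j[j] * w_ji[j][i] for j in range(n_hidden))
            for i in range(n_out)]
    o_i = [g(x) for x in in_i]
    return in_j, a_j, in_i, o_i


def backprop_step(w_kj, w_ji, inputs, targets, alpha=0.5):
    """Compute one update for a single example and return the new weight tables."""
    in_j, a_j, in_i, o_i = forward(w_kj, w_ji, inputs)
    # Delta_i = Err_i * g'(in_i)
    delta_i = [(targets[i] - o_i[i]) * g_prime(in_i[i]) for i in range(len(o_i))]
    # Delta_j = g'(in_j) * sum_i W_ji * Delta_i, using the weights before the update
    delta_j = [g_prime(in_j[j]) * sum(w_ji[j][i] * delta_i[i] for i in range(len(delta_i)))
               for j in range(len(a_j))]
    new_w_ji = [[w_ji[j][i] + alpha * a_j[j] * delta_i[i] for i in range(len(delta_i))]
                for j in range(len(a_j))]
    new_w_kj = [[w_kj[k][j] + alpha * inputs[k] * delta_j[j] for j in range(len(delta_j))]
                for k in range(len(inputs))]
    return new_w_kj, new_w_ji


def squared_error(w_kj, w_ji, inputs, targets):
    """E = 1/2 * sum_i (T_i - O_i)^2 for one example."""
    o_i = forward(w_kj, w_ji, inputs)[3]
    return 0.5 * sum((t - o) ** 2 for t, o in zip(targets, o_i))


# Hypothetical 2-2-1 network and a single training example.
w_kj0 = [[0.1, -0.2], [0.3, 0.4]]
w_ji0 = [[0.5], [-0.3]]
x, t = [1.0, 0.0], [1.0]
error_before = squared_error(w_kj0, w_ji0, x, t)
w_kj1, w_ji1 = backprop_step(w_kj0, w_ji0, x, t)
error_after = squared_error(w_kj1, w_ji1, x, t)
```

For this example the error on the training pair should be smaller after the step than before it, which is the behaviour the learning rule is designed to produce.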

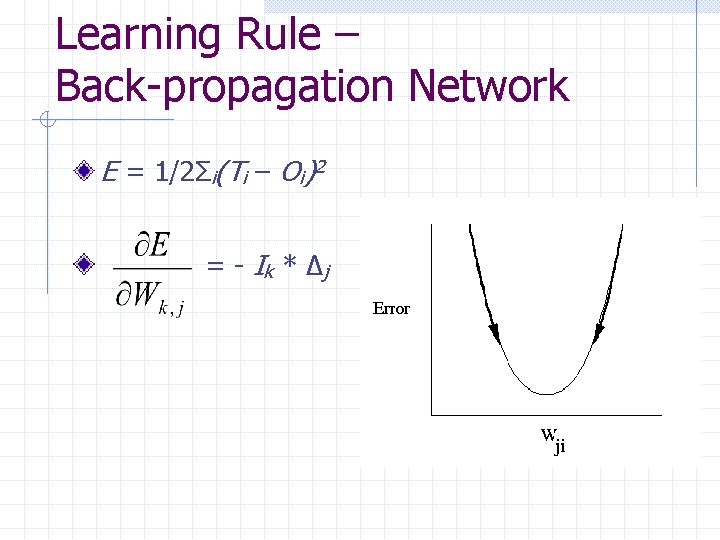

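Learning Rule – Back-propagation Network

- $E = \frac{1}{2} \sum_i (T_i - O_i)^2$
- $\frac{\partial E}{\partial W_{j,i}} = -a_j \cdot \Delta_i$
- $\frac{\partial E}{\partial W_{k,j}} = -I_k \cdot \Delta_j$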
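The gradient of the squared error can be worked out with the chain rule. The sketch below assumes that $O_i = g(in_i)$ with $in_i = \sum_j W_{j,i} a_j$, and that $a_j = g(in_j)$ with $in_j = \sum_k W_{k,j} I_k$, using the same $\Delta_i$ and $\Delta_j$ as in the update rules.

$$
\begin{aligned}
\frac{\partial E}{\partial W_{j,i}} &= -(T_i - O_i)\, g'(in_i)\, a_j = -a_j \Delta_i \\
\frac{\partial E}{\partial W_{k,j}} &= -\sum_i (T_i - O_i)\, g'(in_i)\, W_{j,i}\, g'(in_j)\, I_k = -I_k \Delta_j
\end{aligned}
$$

Moving each weight against its gradient, $W \leftarrow W - \alpha\, \partial E / \partial W$, then yields the two weight updates of the back-propagation learning rule.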

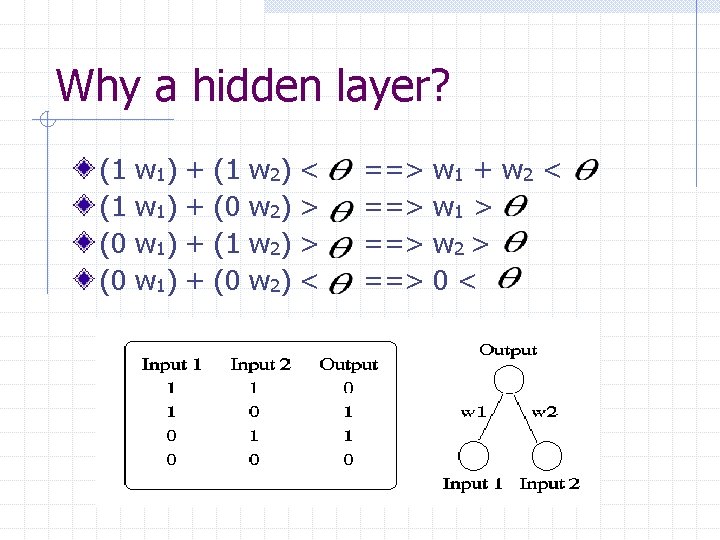

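Why a hidden layer?

A single threshold unit with weights $w_1$, $w_2$ and threshold $\theta$ would have to satisfy all four constraints (the XOR function):

- $(1 \cdot w_1) + (1 \cdot w_2) < \theta \Rightarrow w_1 + w_2 < \theta$
- $(1 \cdot w_1) + (0 \cdot w_2) > \theta \Rightarrow w_1 > \theta$
- $(0 \cdot w_1) + (1 \cdot w_2) > \theta \Rightarrow w_2 > \theta$
- $(0 \cdot w_1) + (0 \cdot w_2) < \theta \Rightarrow 0 < \theta$

These cannot all hold: $w_1 > \theta$, $w_2 > \theta$ and $\theta > 0$ together imply $w_1 + w_2 > \theta$.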
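The contradiction can also be checked by brute force. The sketch below is only an illustration: the search grid (values from -5.0 to 5.0 in steps of 0.5) is an arbitrary assumption, and a unit is taken to fire when its weighted sum strictly exceeds the threshold.

```python
from itertools import product

# (inputs, required output) for the four cases on the slide.
XOR_CASES = [((1, 1), 0), ((1, 0), 1), ((0, 1), 1), ((0, 0), 0)]


def fires(weights, inputs, theta):
    """Threshold unit: output 1 when the weighted input sum exceeds theta."""
    return 1 if sum(w * i for w, i in zip(weights, inputs)) > theta else 0


def solves_xor(w1, w2, theta):
    """True if a single unit with these parameters gets all four cases right."""
    return all(fires((w1, w2), inputs, theta) == target
               for inputs, target in XOR_CASES)


# Hypothetical search grid: -5.0, -4.5, ..., 5.0 for each of w1, w2, theta.
GRID = [x / 2 for x in range(-10, 11)]
solutions = [(w1, w2, theta)
             for w1, w2, theta in product(GRID, repeat=3)
             if solves_xor(w1, w2, theta)]
print(len(solutions))  # prints 0
```

An empty result on a finite grid is not a proof by itself, but it agrees with the algebraic argument: no choice of two weights and one threshold separates the four cases.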

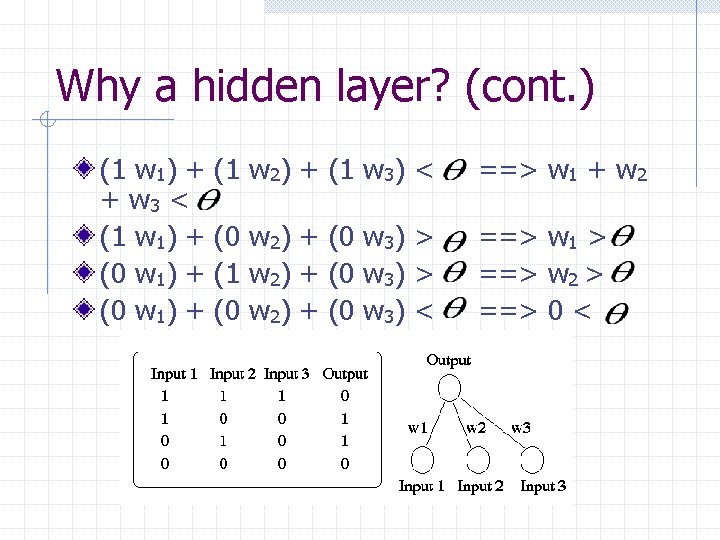

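Why a hidden layer? (cont.)

With a third input, weighted by $w_3$, that is 1 only when both original inputs are 1 (for example, the output of a hidden unit), the constraints become:

- $(1 \cdot w_1) + (1 \cdot w_2) + (1 \cdot w_3) < \theta \Rightarrow w_1 + w_2 + w_3 < \theta$
- $(1 \cdot w_1) + (0 \cdot w_2) + (0 \cdot w_3) > \theta \Rightarrow w_1 > \theta$
- $(0 \cdot w_1) + (1 \cdot w_2) + (0 \cdot w_3) > \theta \Rightarrow w_2 > \theta$
- $(0 \cdot w_1) + (0 \cdot w_2) + (0 \cdot w_3) < \theta \Rightarrow 0 < \theta$

These can be satisfied together, for example with a sufficiently negative $w_3$.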
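A concrete set of weights shows that the extra input makes the problem solvable. The values below ($w_1 = w_2 = 1$, $w_3 = -2$, threshold 0.5) and the hidden unit that computes a logical AND are assumptions chosen for illustration, not values taken from the slides.

```python
def fires(weights, inputs, theta):
    """Threshold unit: output 1 when the weighted input sum exceeds theta."""
    return 1 if sum(w * i for w, i in zip(weights, inputs)) > theta else 0


# Assumed illustrative parameters for the output unit.
W1, W2, W3, THETA = 1.0, 1.0, -2.0, 0.5


def xor_with_hidden(i1, i2):
    """Output unit fed by both inputs plus one hidden unit.

    The hidden unit is itself a threshold unit with weights (1, 1) and
    threshold 1.5, so it is 1 only when both inputs are 1.
    """
    hidden = fires((1.0, 1.0), (i1, i2), 1.5)
    return fires((W1, W2, W3), (i1, i2, hidden), THETA)


results = {(a, b): xor_with_hidden(a, b) for a in (0, 1) for b in (0, 1)}
```

Here `results` maps (0, 0) and (1, 1) to 0 and the two mixed cases to 1. The negative weight on the hidden unit cancels the contribution of the two active inputs in the (1, 1) case.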

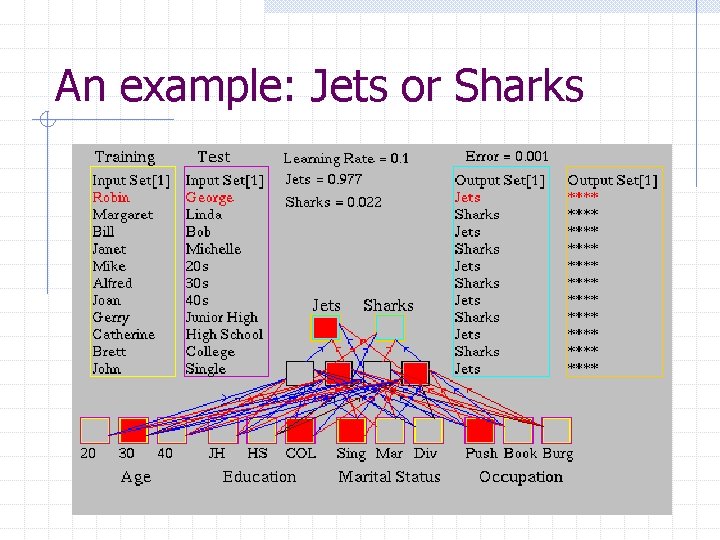

An example: Jets or Sharks

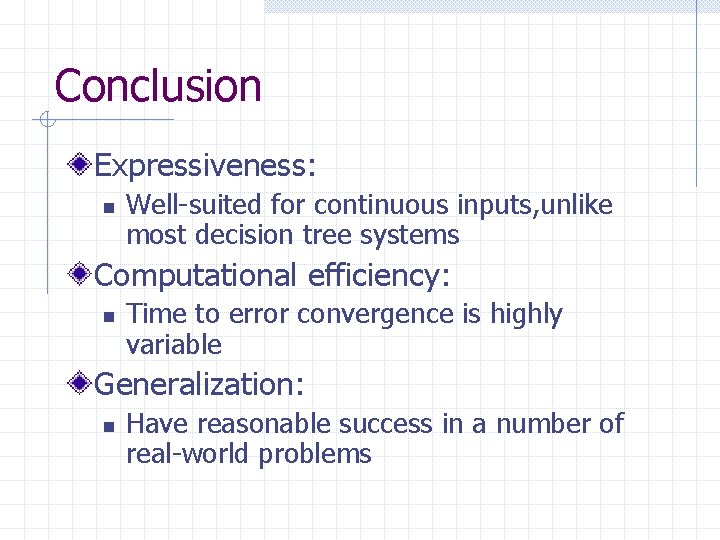

Conclusions

- Expressiveness:
  - Well-suited for continuous inputs, unlike most decision tree systems
- Computational efficiency:
  - Time to error convergence is highly variable
- Generalization:
  - Have had reasonable success in a number of real-world problems

Conclusions (cont.)

- Sensitivity to noise:
  - Very tolerant of noise in the input data
- Transparency:
  - Neural networks are essentially black boxes
- Prior knowledge:
  - Hard to use one’s knowledge to “prime” a network to learn better
