
Perceptron and Multilayer Perceptron
CS 109A Introduction to Data Science
Pavlos Protopapas, Kevin Rader and Chris Tanner

CS 109 A, PROTOPAPAS, RADER, TANNER 2

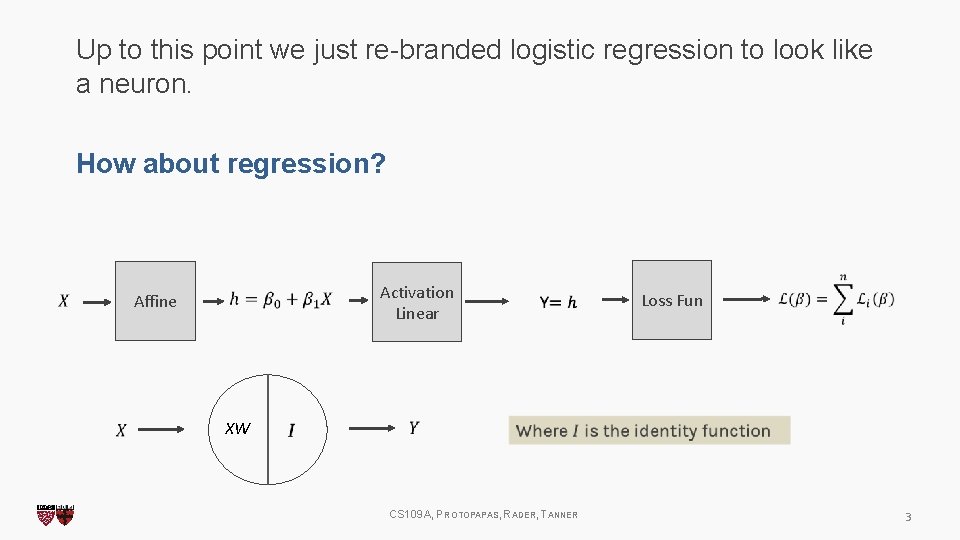

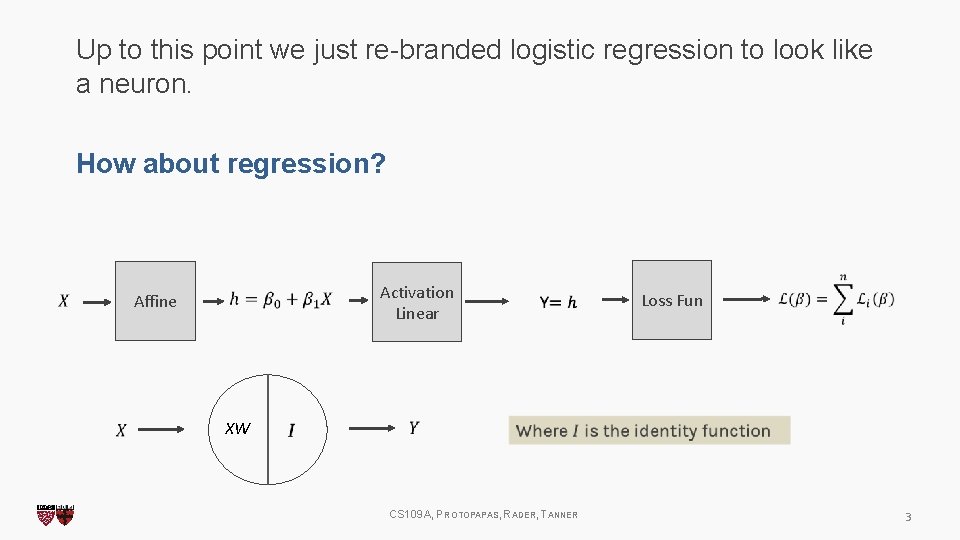

Up to this point we have just rebranded logistic regression to look like a neuron. What about regression?
(Figure: a neuron for regression: affine step XW, linear activation, then the loss function.)
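Here is a minimal plain-Python sketch of this point. The same affine step can feed a sigmoid, which gives a logistic-regression neuron. It can also feed an identity ("linear") activation, which gives a linear-regression neuron. The affine step is written here as x·w + b, with the bias kept separate from XW. The helper names and the numbers are hypothetical. The loss pairing (cross-entropy for the sigmoid unit, squared error for the linear unit) is the usual one. The slides' figure only says "loss function".

```python
import math


def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z)).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def identity(h):
    # "Linear" activation: pass the affine value through unchanged.
    return h


def neuron(x, w, b, activation):
    # Affine step h = x . w + b, followed by the chosen activation.
    h = sum(xi * wi for xi, wi in zip(x, w)) + b
    return activation(h)


def binary_cross_entropy(y, p):
    # Loss for a 0/1 label y and a predicted probability p.
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


def squared_error(y, y_hat):
    # Loss for a real-valued target y and a real-valued prediction y_hat.
    return (y - y_hat) ** 2


x, w, b = [1.0, 2.0], [0.5, -0.25], 0.1   # illustrative values only
p = neuron(x, w, b, sigmoid)       # classification neuron: output is a probability
y_hat = neuron(x, w, b, identity)  # regression neuron: output is any real number
print(p, binary_cross_entropy(1, p))
print(y_hat, squared_error(0.3, y_hat))
```

Only the activation and the loss change between the two neurons. The affine part is identical.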

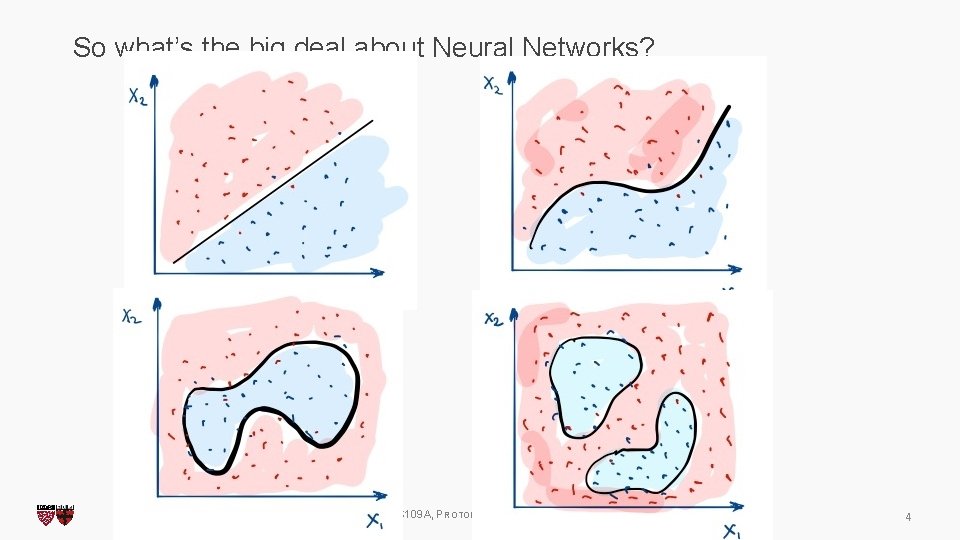

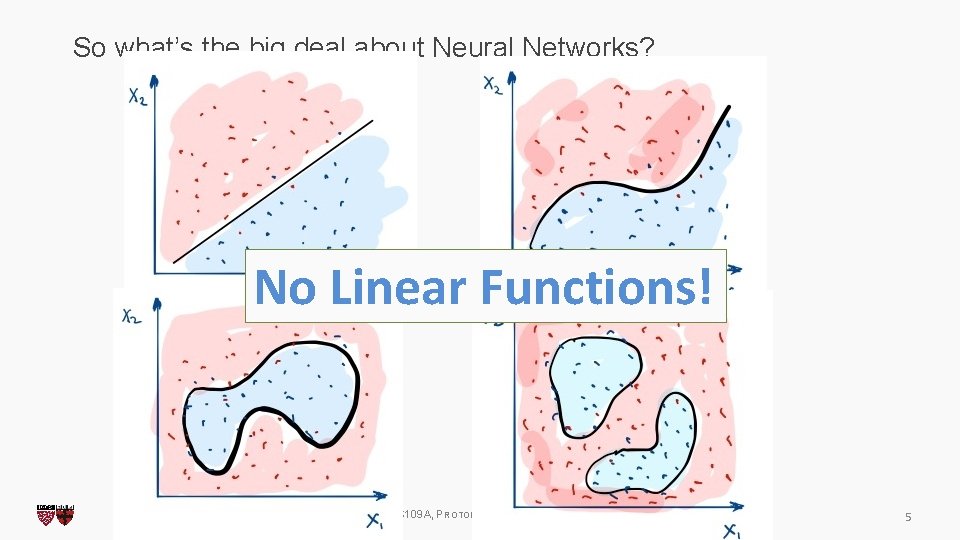

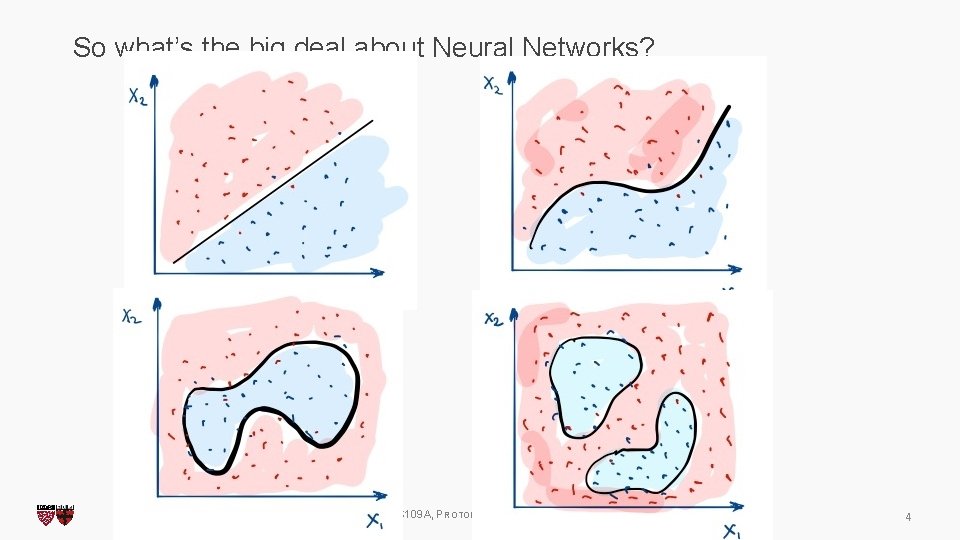

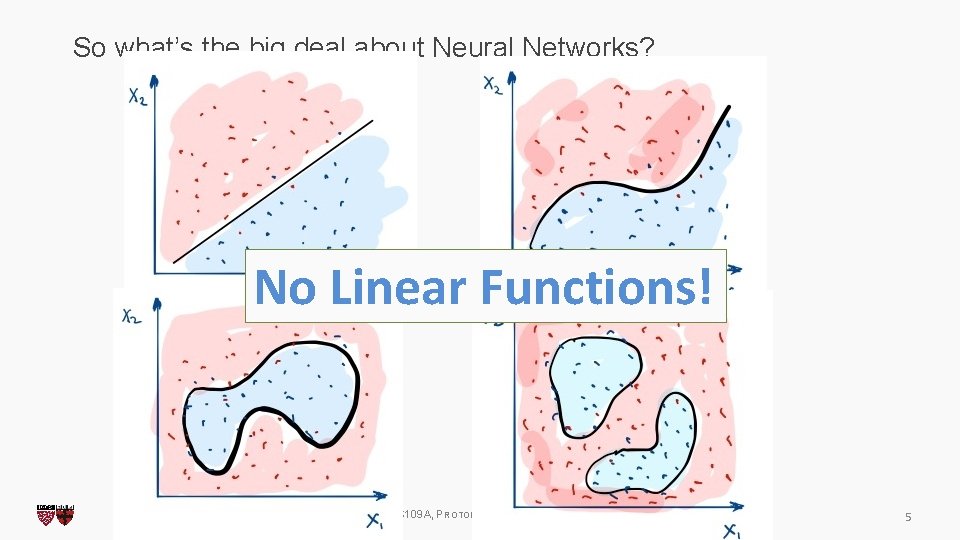


So what’s the big deal about Neural Networks? Non-linear functions!
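The XOR pattern is one way to make "non-linear" concrete. It is offered here as an illustration, not as the lecture's own example. No single logistic neuron can separate XOR, because no straight line splits the two classes. Two hidden sigmoid neurons plus one output neuron can handle it. The weights below are hand-picked (hypothetical), not learned.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z)).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def xor_net(x1, x2):
    # Hidden neuron 1 approximates OR(x1, x2).
    h1 = sigmoid(20 * x1 + 20 * x2 - 10)
    # Hidden neuron 2 approximates NAND(x1, x2).
    h2 = sigmoid(-20 * x1 - 20 * x2 + 30)
    # Output neuron approximates AND(h1, h2), which equals XOR(x1, x2).
    return sigmoid(20 * h1 + 20 * h2 - 30)


for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x1, x2, round(xor_net(x1, x2), 3))
```

The printed outputs are close to 0, 1, 1, 0. Each hidden neuron draws one line, and the output neuron combines the two half-planes.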

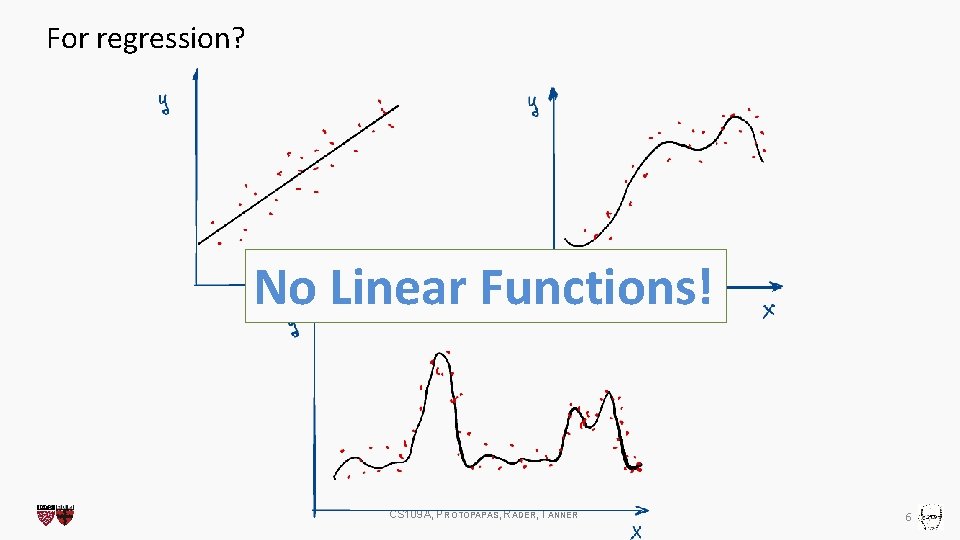

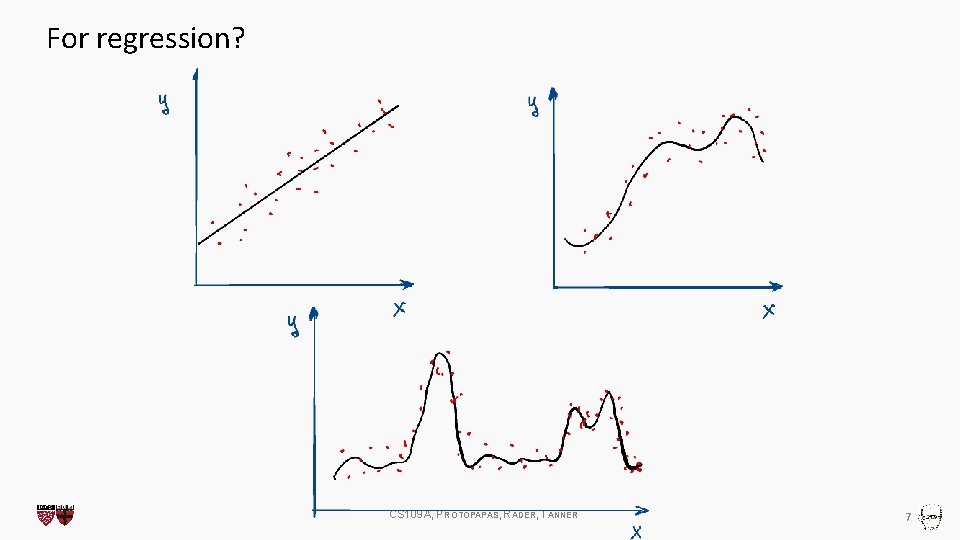

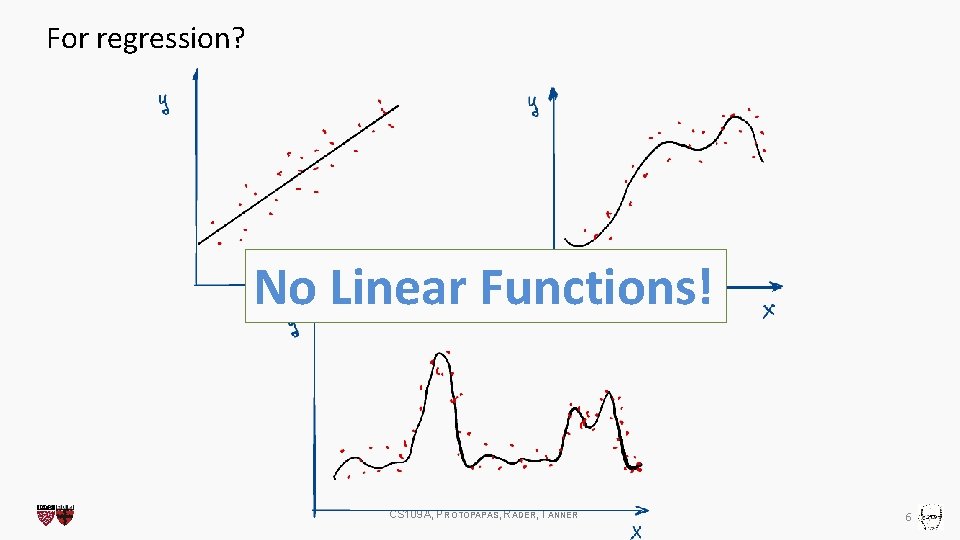

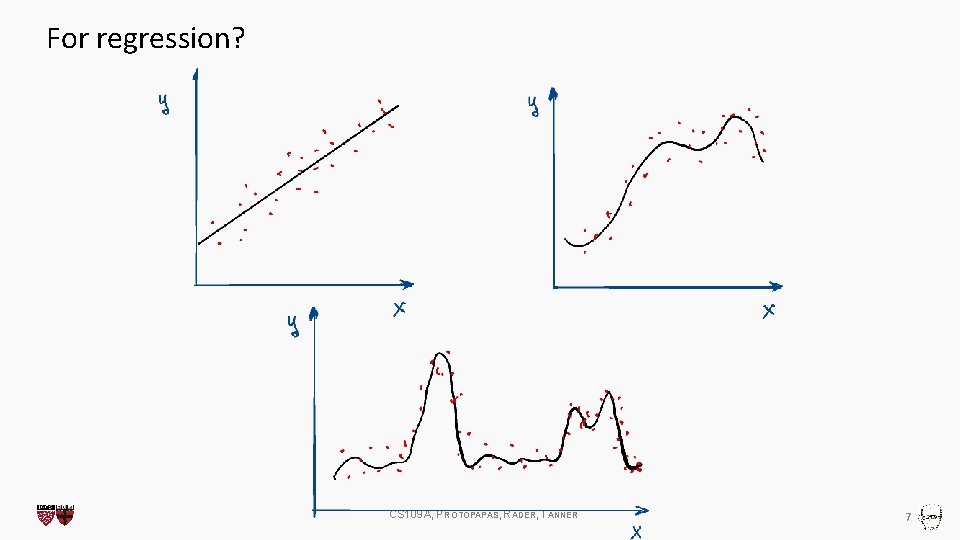

For regression? Again, non-linear functions!


Outline
1. Introduction to Artificial Neural Networks
2. Review of Classification and Logistic Regression
3. Single Neuron Network (‘Perceptron’)
4. Multi-Layer Perceptron (MLP)

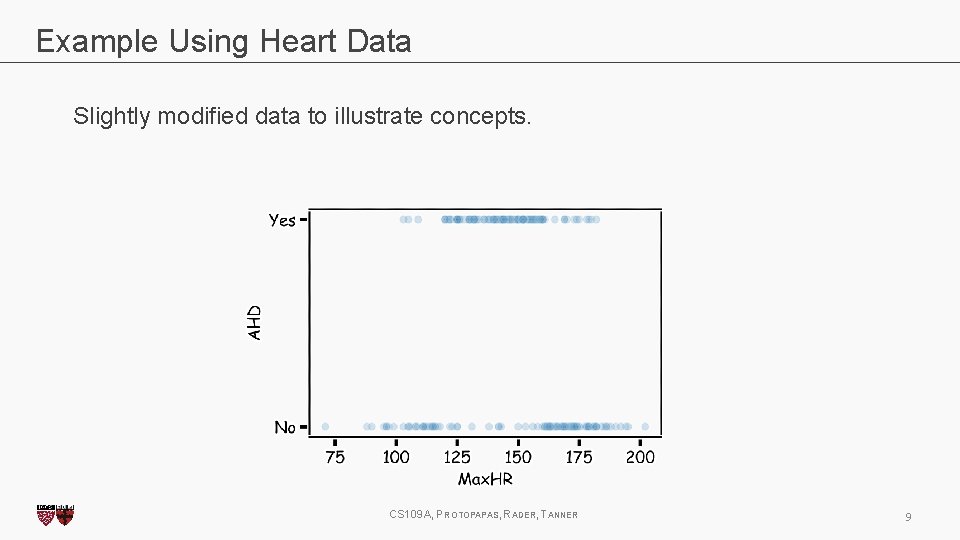

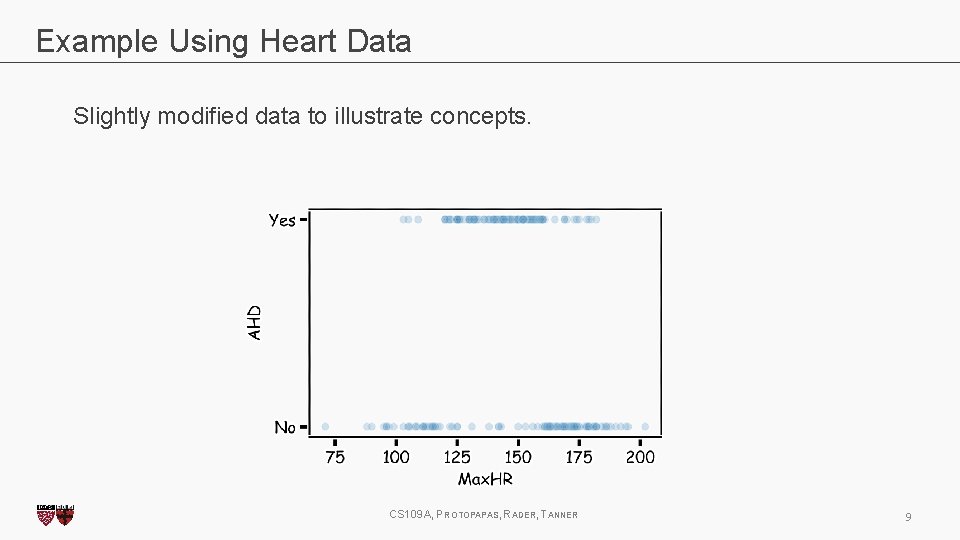

Example Using Heart Data (slightly modified to illustrate the concepts)

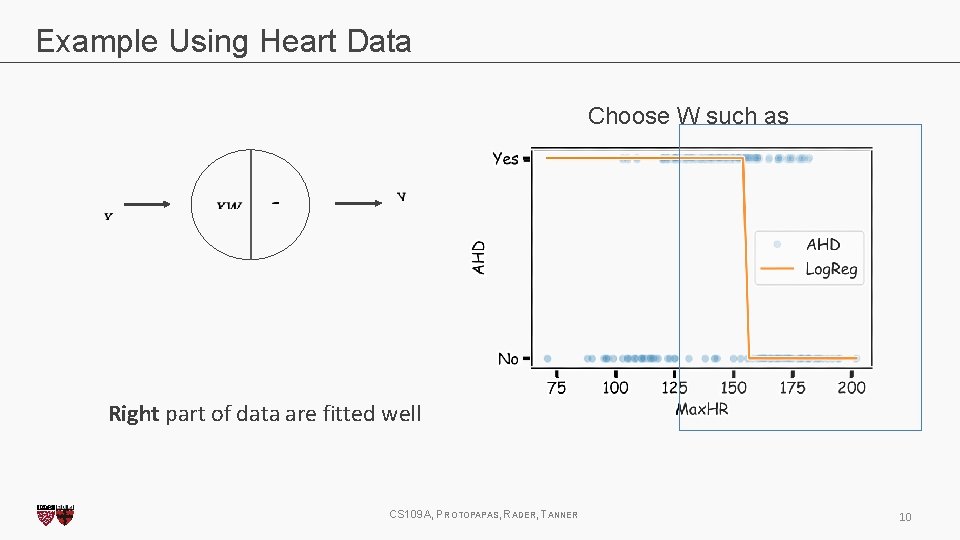

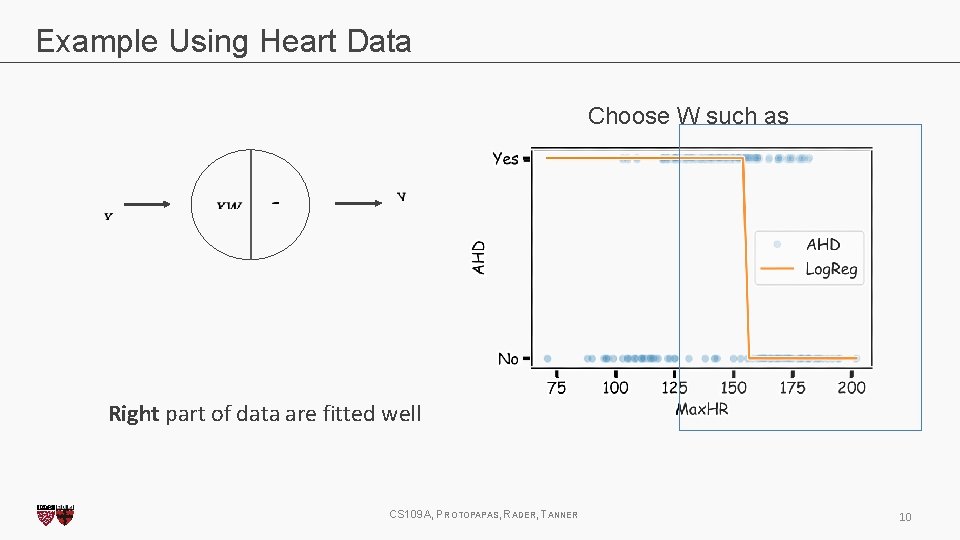

Example Using Heart Data: choose W such that the right part of the data is fitted well.

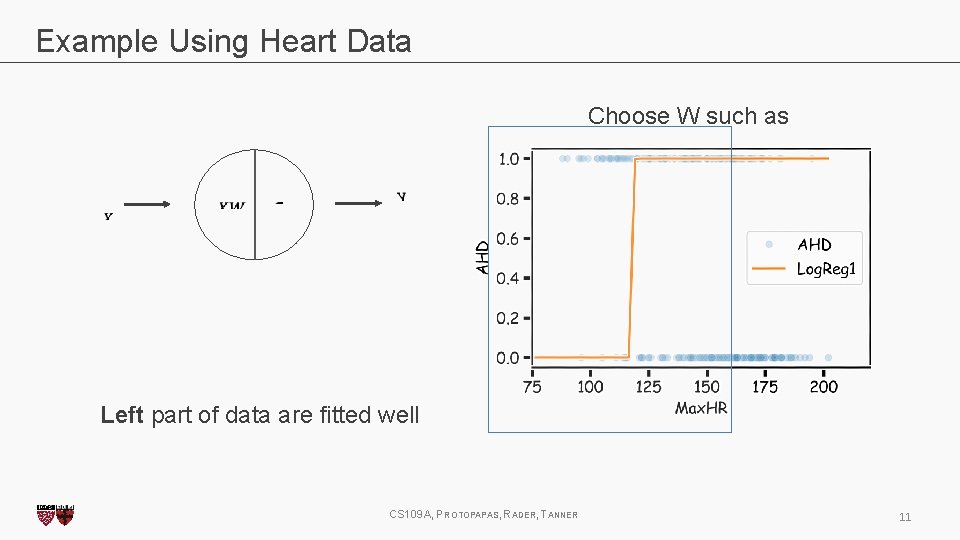

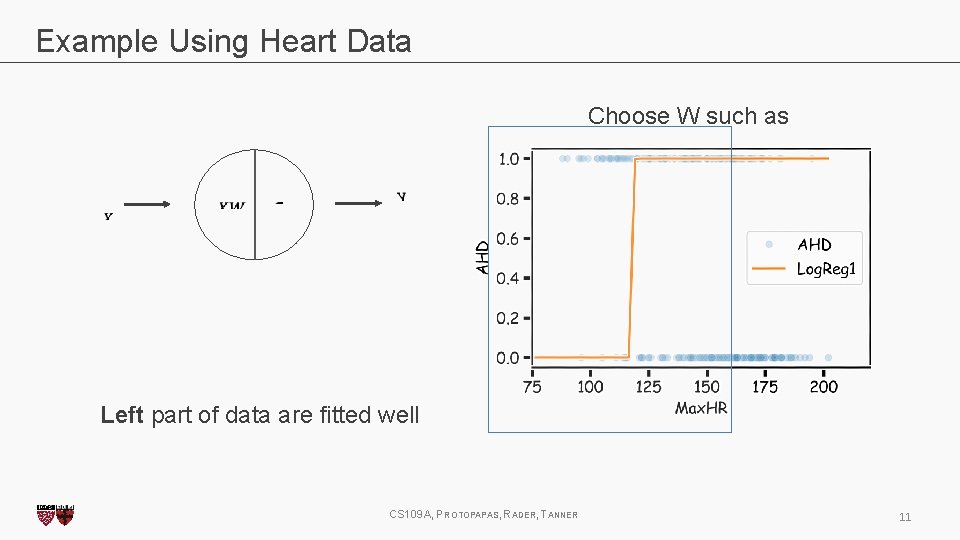

Example Using Heart Data: choose W such that the left part of the data is fitted well.

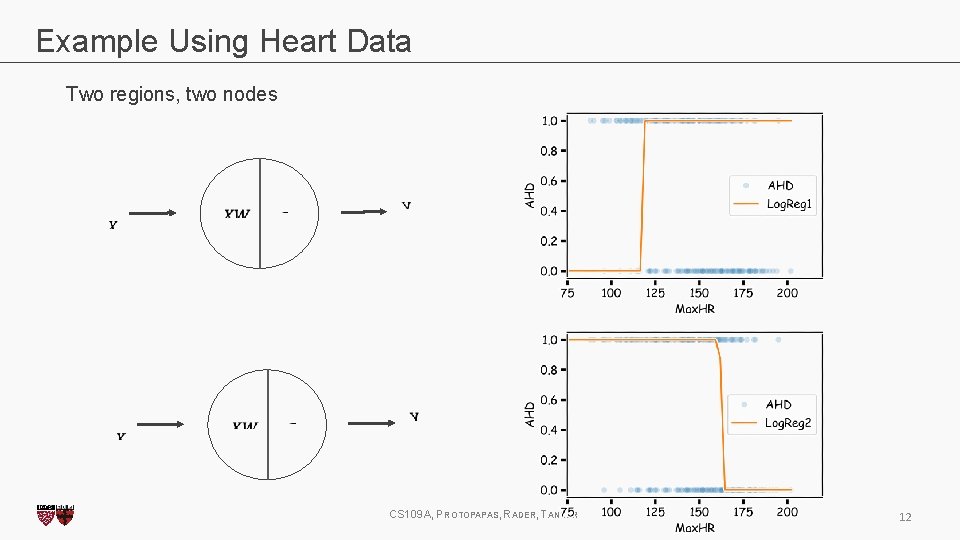

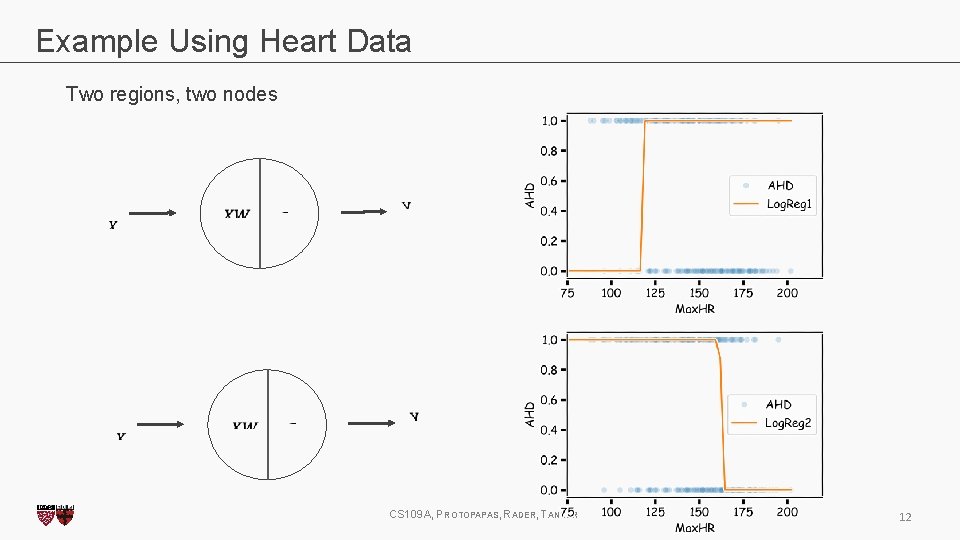

Example Using Heart Data: two regions, two nodes (one neuron per region).
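Here is a sketch of "two regions, two nodes" on a single standardized feature x. Purely as an illustrative assumption, suppose the outcome is likely at both ends of x. One neuron with a negative weight then covers the left region, and one with a positive weight covers the right region. The feature and the weights are hypothetical; they are not fitted to the heart data.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z)).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def left_neuron(x):
    # Negative weight: output near 1 for small x (left region), near 0 for large x.
    return sigmoid(-5.0 * x - 5.0)   # same as sigmoid(-5 (x + 1))


def right_neuron(x):
    # Positive weight: output near 1 for large x (right region), near 0 for small x.
    return sigmoid(5.0 * x - 5.0)    # same as sigmoid(5 (x - 1))


for x in [-3.0, 0.0, 3.0]:
    print(x, round(left_neuron(x), 3), round(right_neuron(x), 3))
```

Each neuron fits one region well on its own. Neither one describes both regions.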

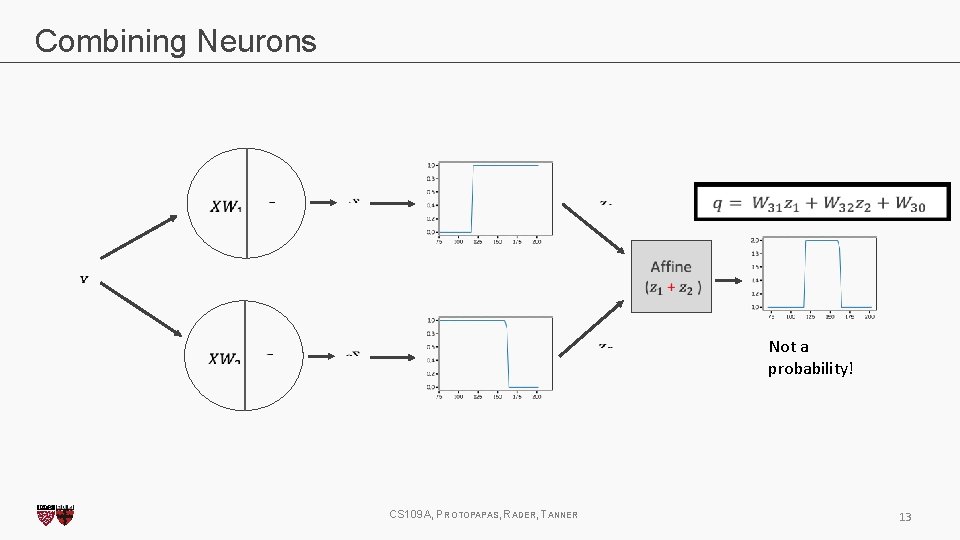

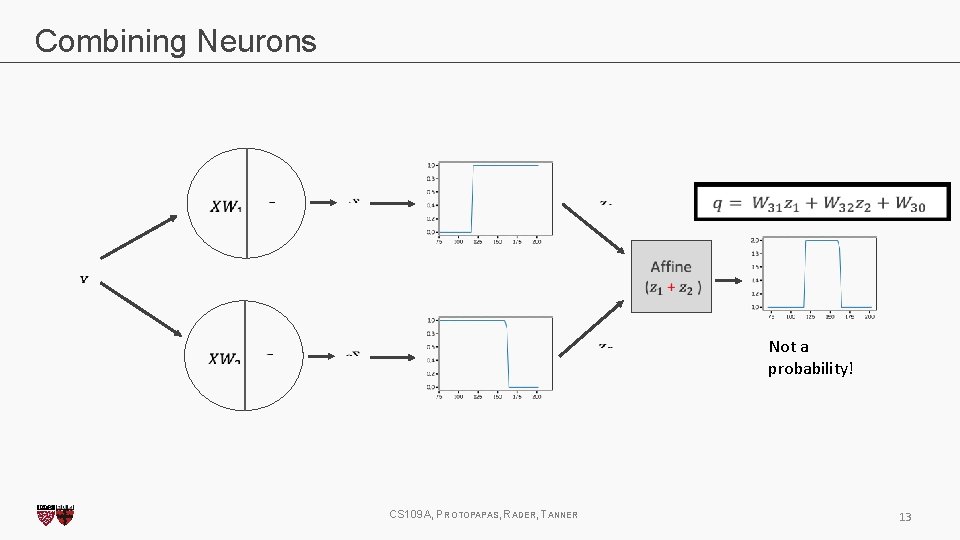

Combining Neurons: the combined output of the two neurons is not a probability!

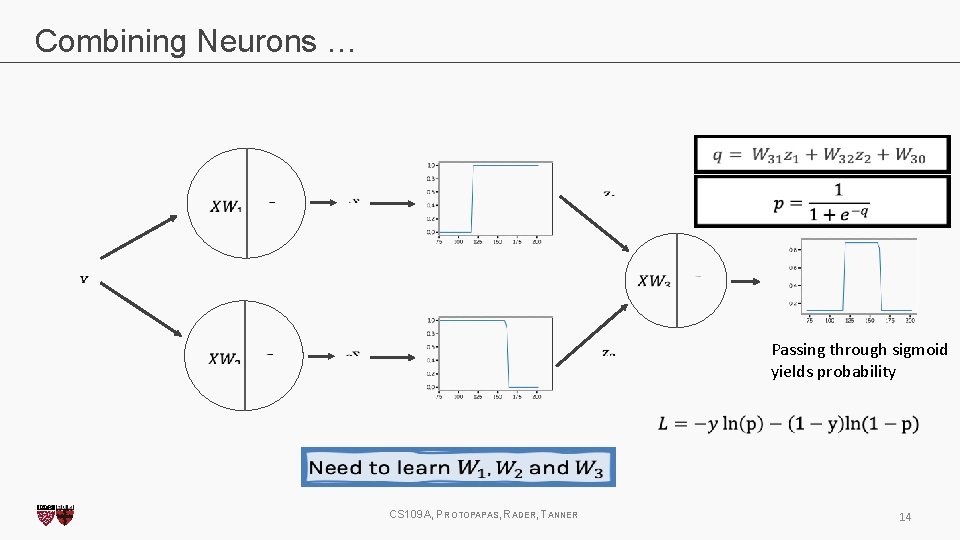

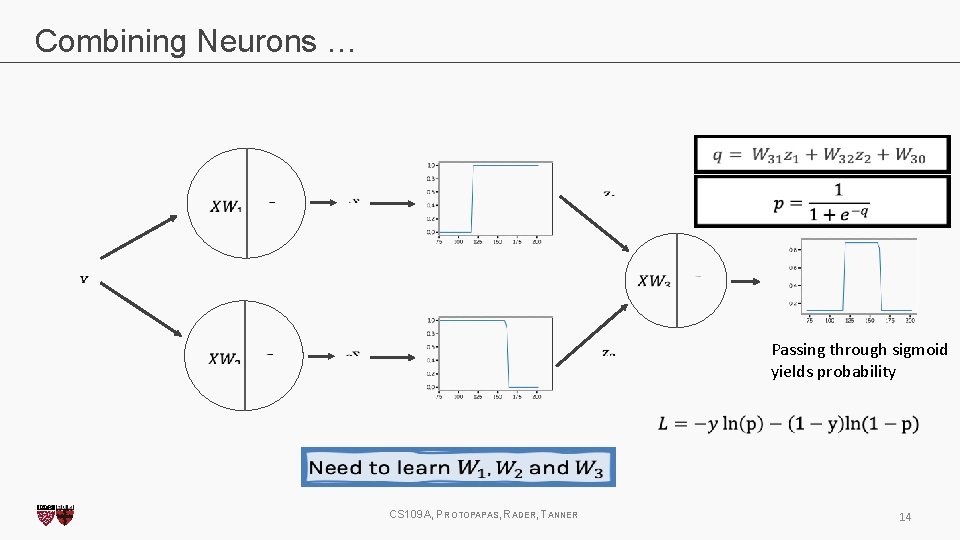

Combining Neurons: passing the combined output through a sigmoid yields a probability.
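The next sketch shows both combining steps, using two hypothetical neurons whose active regions overlap. Their plain sum q = h1 + h2 can approach 2, so it is not a probability. Passing a weighted combination of h1 and h2 through a sigmoid always lands in (0, 1). The output weights v1 = v2 = 1 and bias c = 0 are assumed defaults; with them, the output is exactly sigmoid(h1 + h2).

```python
import math


def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z)).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def hidden(x):
    # Two hypothetical hidden neurons that are both active for large x.
    h1 = sigmoid(2.0 * x)
    h2 = sigmoid(3.0 * x + 1.0)
    return h1, h2


def combined_sum(x):
    # Plain sum of the neuron outputs: lies in (0, 2), so it is NOT a probability.
    h1, h2 = hidden(x)
    return h1 + h2


def output_probability(x, v1=1.0, v2=1.0, c=0.0):
    # Output neuron: weighted combination of h1 and h2 passed through a sigmoid.
    h1, h2 = hidden(x)
    return sigmoid(v1 * h1 + v2 * h2 + c)


for x in [-3.0, 0.0, 2.0]:
    print(x, round(combined_sum(x), 3), round(output_probability(x), 3))
```

In a trained network, v1, v2 and c would be learned along with the hidden weights rather than fixed.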

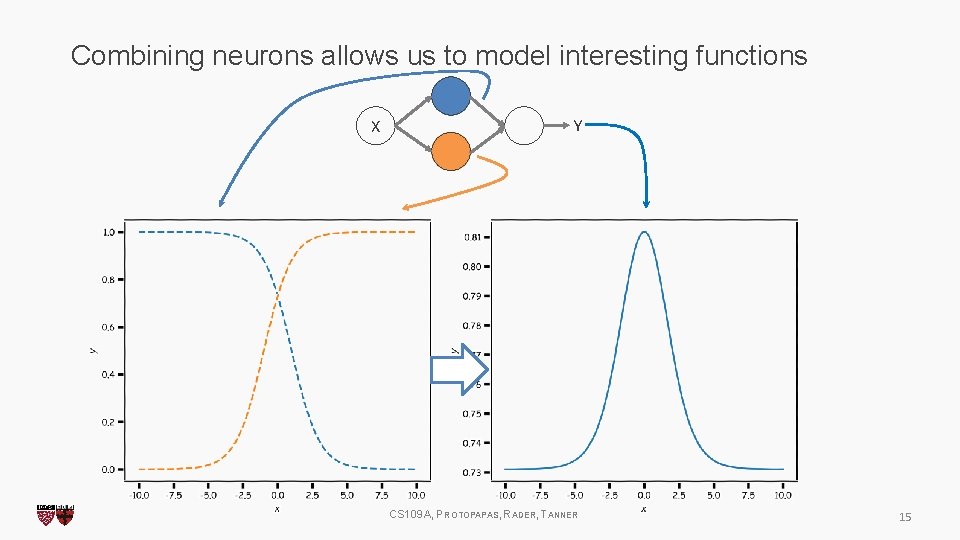

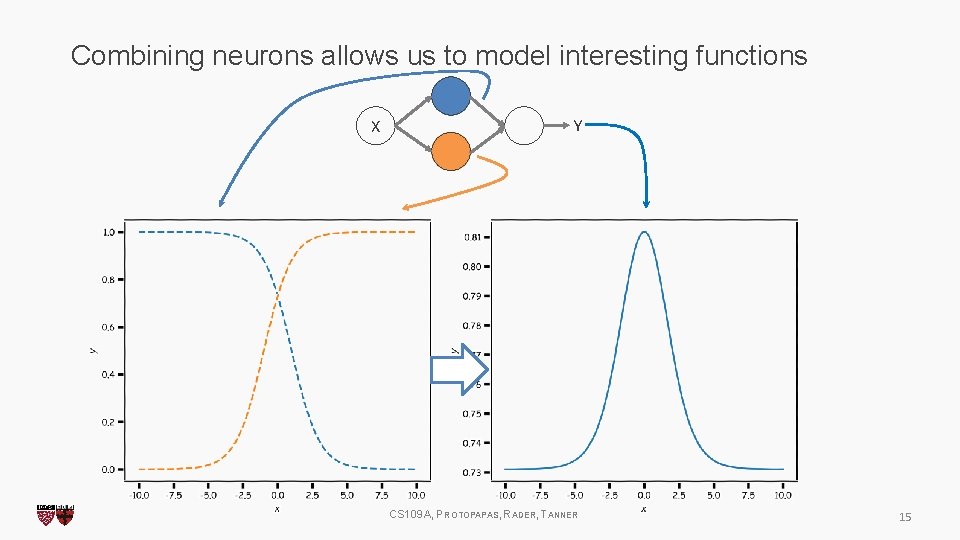

Combining neurons allows us to model interesting functions.
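Here is a minimal sketch of one such combination for regression. Two sigmoid neurons switch on at different points, and a linear output neuron subtracts one from the other to produce a "bump". The parameter names (center, width, steepness, height) are illustrative assumptions. Adding several bumps with different parameters gives more interesting shapes.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z)).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def bump(x, center=0.0, width=2.0, steepness=10.0, height=1.0):
    left_edge = center - width / 2
    right_edge = center + width / 2
    h1 = sigmoid(steepness * (x - left_edge))   # hidden unit that switches on at the left edge
    h2 = sigmoid(steepness * (x - right_edge))  # hidden unit that switches on at the right edge
    # Linear output neuron with weights +height and -height and no output activation.
    return height * h1 - height * h2


def two_bumps(x):
    # Hypothetical sum of a tall bump at x = -2 and a shorter one at x = 3.
    return bump(x, center=-2.0, height=1.0) + bump(x, center=3.0, height=0.5)


for x in [-4.0, -2.0, 0.5, 3.0, 5.0]:
    print(x, round(two_bumps(x), 3))
```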

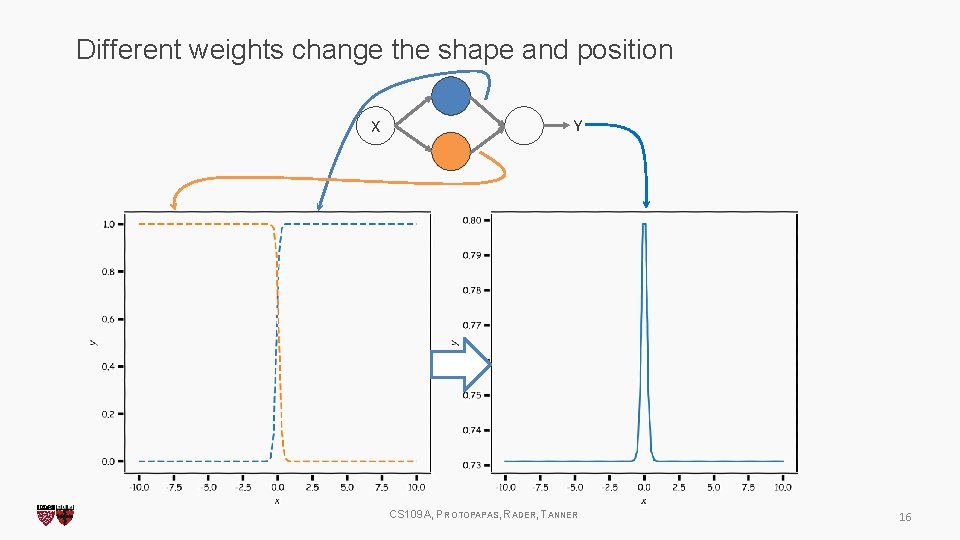

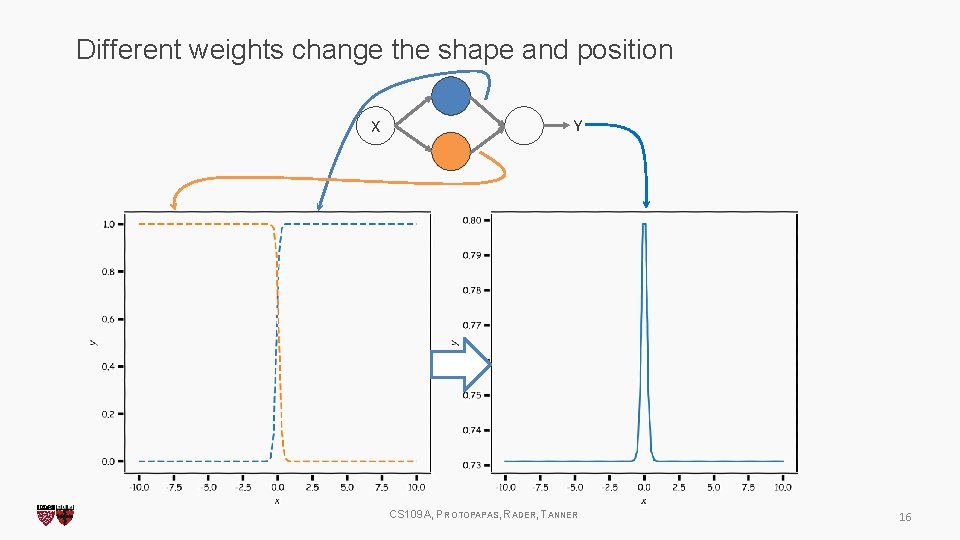

Different weights change the shape and position of the function.
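This small sketch, in the same hypothetical plain-Python setting, shows how a single sigmoid neuron's weight w and bias b move and reshape its curve. The curve crosses 0.5 at x = -b/w, and its slope there is w/4. Flipping the sign of w flips its direction. These facts follow from the logistic function itself; the particular (w, b) pairs are illustrative.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z)).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def neuron(x, w, b):
    # Single sigmoid neuron on a 1-D input.
    return sigmoid(w * x + b)


def midpoint(w, b):
    # sigmoid(w x + b) equals 0.5 exactly where w x + b = 0.
    return -b / w


def slope_at_midpoint(w):
    # d/dx sigmoid(w x + b) = w * s * (1 - s); with s = 0.5 this is w / 4.
    return w / 4.0


for w, b in [(1.0, -1.0), (5.0, -5.0), (5.0, -10.0), (-5.0, 10.0)]:
    print(f"w={w:+.0f}, b={b:+.0f}: midpoint {midpoint(w, b):+.1f}, slope {slope_at_midpoint(w):+.2f}")
```

The first two pairs share a midpoint but differ in steepness. The third moves the curve, and the fourth mirrors it.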

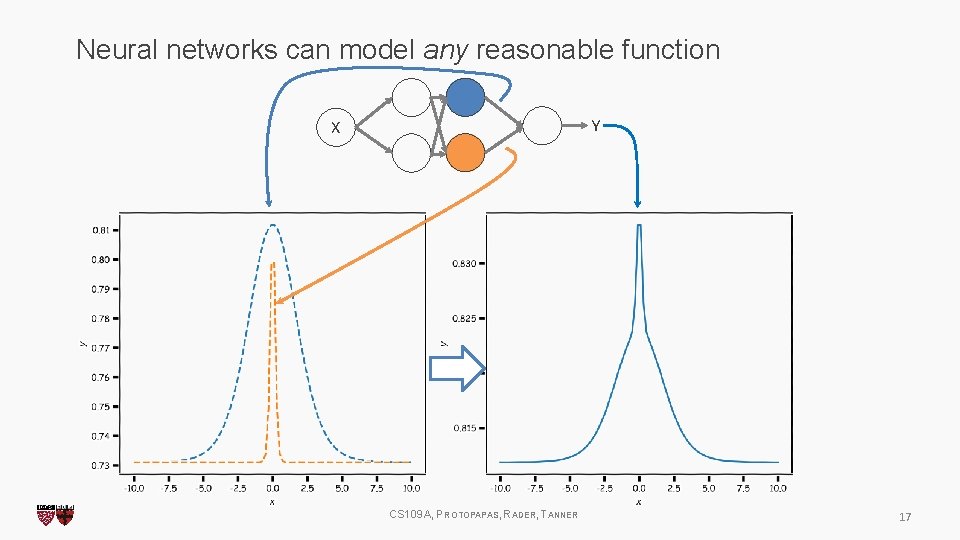

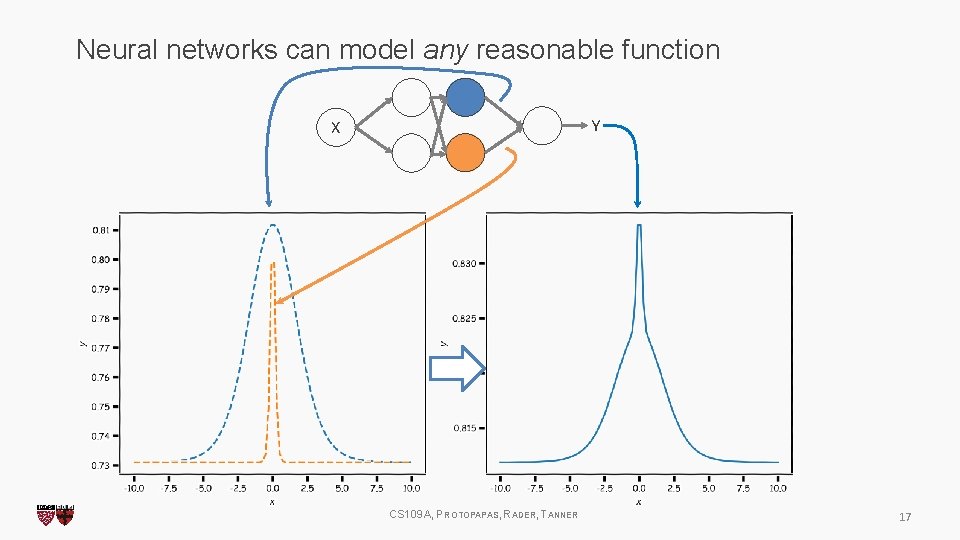

With enough hidden neurons, a neural network can approximate any reasonable (for example, continuous) function.
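This is a rough constructive sketch of the idea, not a proof of the universal approximation theorem. A single hidden layer of steep sigmoid units acts as a set of smoothed steps, and a linear output neuron adds them into a staircase that tracks a target function. The worst-case error shrinks as units are added. The staircase construction, the steepness rule k = 10/h, and the target sin on [0, π] are all assumptions for illustration.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z)).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def build_staircase(f, a, b, n):
    # Parameters of n hidden sigmoid units whose weighted sum approximates f on [a, b].
    h = (b - a) / n
    k = 10.0 / h                                  # steepness grows with the number of units
    grid = [a + i * h for i in range(n + 1)]
    centers = [(grid[i - 1] + grid[i]) / 2 for i in range(1, n + 1)]
    heights = [f(grid[i]) - f(grid[i - 1]) for i in range(1, n + 1)]
    return f(a), k, centers, heights              # output bias, steepness, unit centers, output weights


def network(x, bias, k, centers, heights):
    # One hidden layer of sigmoid units; linear output whose weights are the step heights.
    return bias + sum(hgt * sigmoid(k * (x - c)) for c, hgt in zip(centers, heights))


def max_error(f, a, b, n, samples=1001):
    # Worst absolute error of the n-unit network on an evenly spaced grid.
    params = build_staircase(f, a, b, n)
    xs = [a + (b - a) * j / (samples - 1) for j in range(samples)]
    return max(abs(f(x) - network(x, *params)) for x in xs)


for n in (5, 50, 500):
    print(n, "hidden units -> max error", round(max_error(math.sin, 0.0, math.pi, n), 4))
```

This construction uses roughly one unit per step. Practical networks learn their weights instead of placing them by hand.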

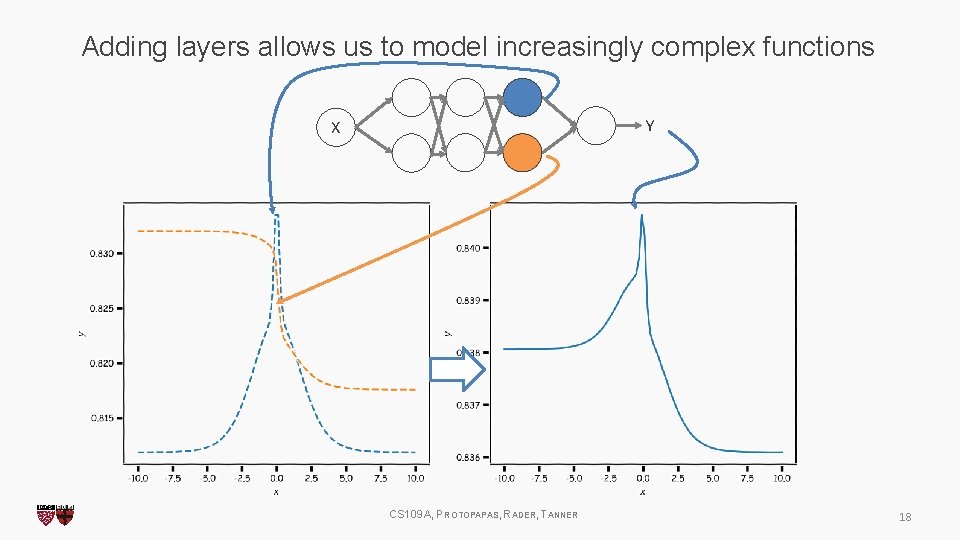

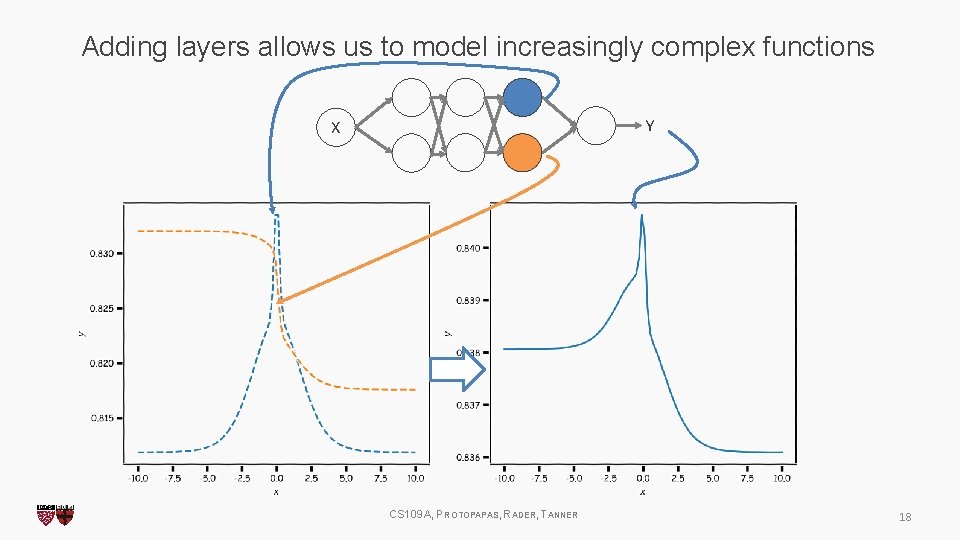

Adding layers allows us to model increasingly complex functions.
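Here is one way to see why depth helps, as a sketch under stated assumptions. A tiny block with two hidden units and a linear output computes a "tent" on [0, 1]. Stacking the same block `depth` times doubles the number of peaks each time: 1, 2, 4, 8, .... In 1-D, a single hidden layer of m such units produces at most m + 1 linear pieces. The block uses ReLU, max(0, z), an activation these slides have not introduced yet. It is swapped in only because it makes the peak count exact.

```python
def relu(z):
    # Rectified linear unit: max(0, z). Swapped in here for exact piecewise-linear behavior.
    return max(0.0, z)


def tent(x):
    # Two hidden ReLU units and a linear output: rises on [0, 0.5], falls on [0.5, 1].
    return 2.0 * relu(x) - 4.0 * relu(x - 0.5)


def deep_tent(x, depth):
    # Stack the same one-hidden-layer block `depth` times.
    for _ in range(depth):
        x = tent(x)
    return x


def count_peaks(depth, n=1024):
    # Count strict local maxima of the stacked network on an evenly spaced grid over [0, 1].
    ys = [deep_tent(i / n, depth) for i in range(n + 1)]
    return sum(1 for i in range(1, n) if ys[i] > ys[i - 1] and ys[i] > ys[i + 1])


for depth in range(1, 6):
    print(depth, "layers ->", count_peaks(depth), "peaks")
```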

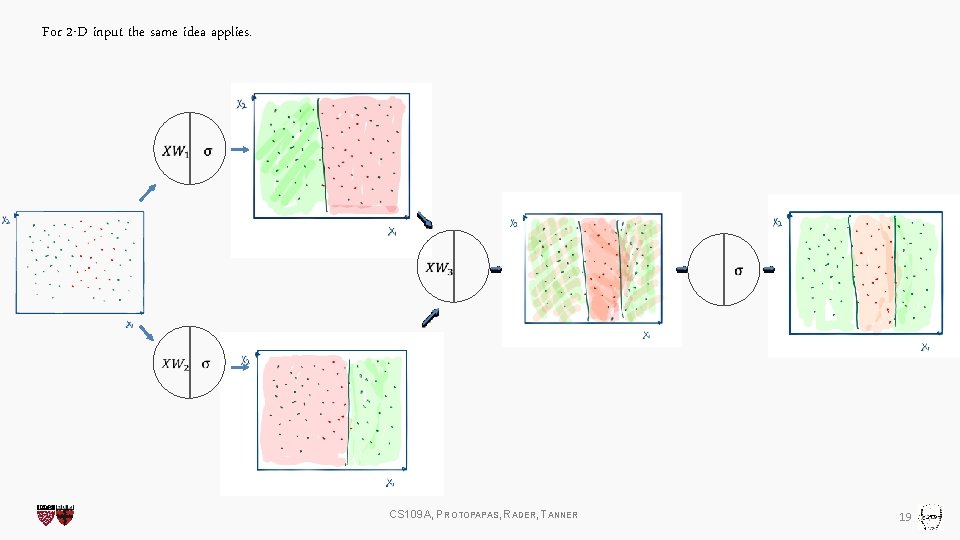

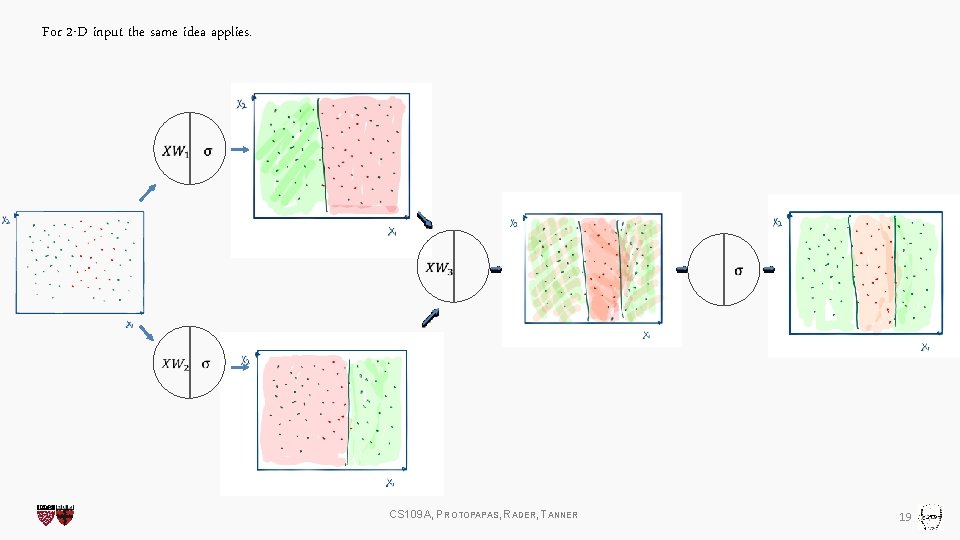

For 2-D input, the same idea applies.
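Here is a sketch of the 2-D case with hypothetical parameters. Four hidden sigmoid units (two per input, each pair forming a 1-D bump) are summed. A sigmoid output neuron then fires only where both bumps are on. The result is a "tower" over the square 0.3 ≤ x1, x2 ≤ 0.7. The threshold 1.5 and the steepness values are illustrative choices.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z)).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def bump_1d(t, lo, hi, k):
    # Difference of two sigmoid units: about 1 for lo < t < hi, about 0 outside.
    return sigmoid(k * (t - lo)) - sigmoid(k * (t - hi))


def tower(x1, x2, lo=0.3, hi=0.7, k=50.0, k_out=20.0):
    # Hidden layer: four sigmoid units, combined linearly into s.
    s = bump_1d(x1, lo, hi, k) + bump_1d(x2, lo, hi, k)
    # s is about 2 inside the square, about 1 on the "cross" arms, about 0 elsewhere;
    # the sigmoid output neuron keeps only the region where s > 1.5.
    return sigmoid(k_out * (s - 1.5))


for x2 in [0.9, 0.7, 0.5, 0.3, 0.1]:
    print(" ".join(f"{tower(x1, x2):.2f}" for x1 in [0.1, 0.3, 0.5, 0.7, 0.9]))
```

Adding more towers in the same way, each with its own output weight, builds up more complex 2-D surfaces.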

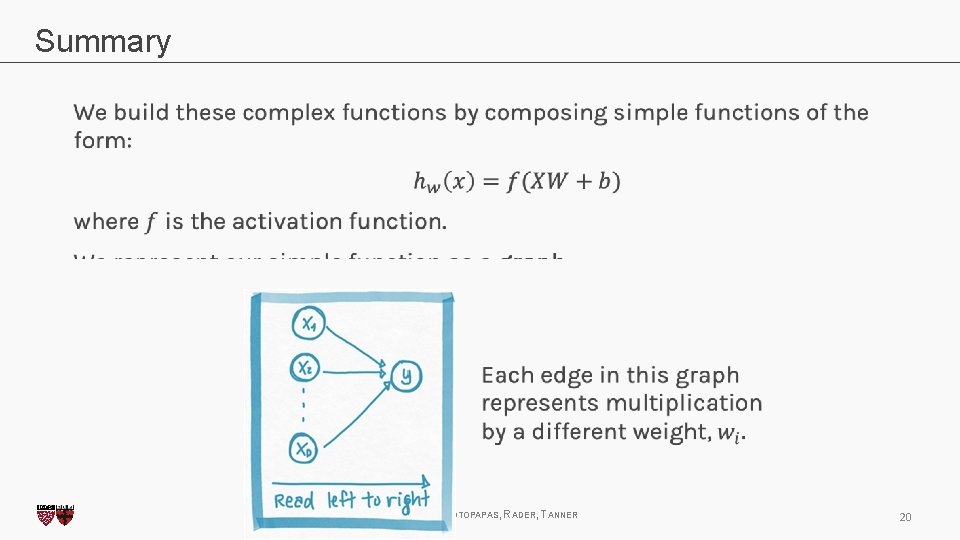

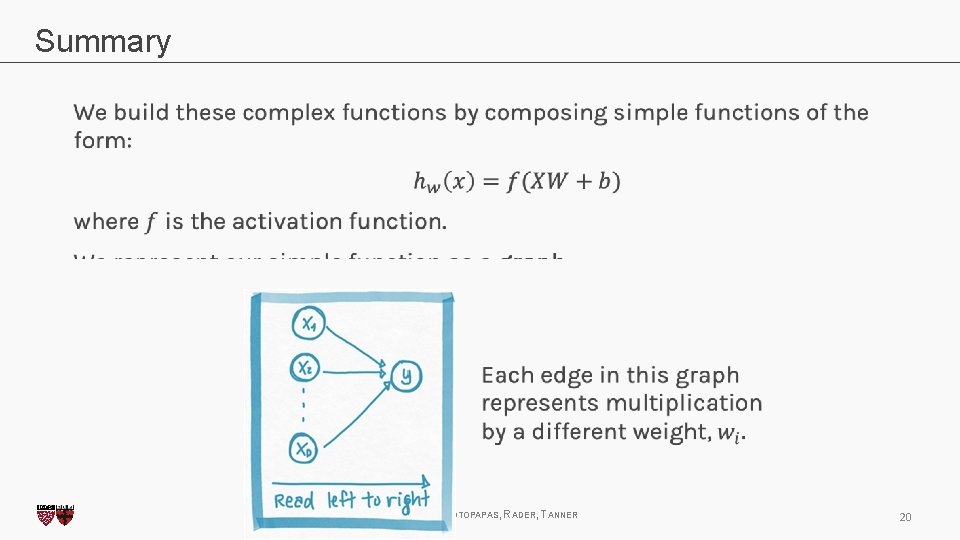

Summary

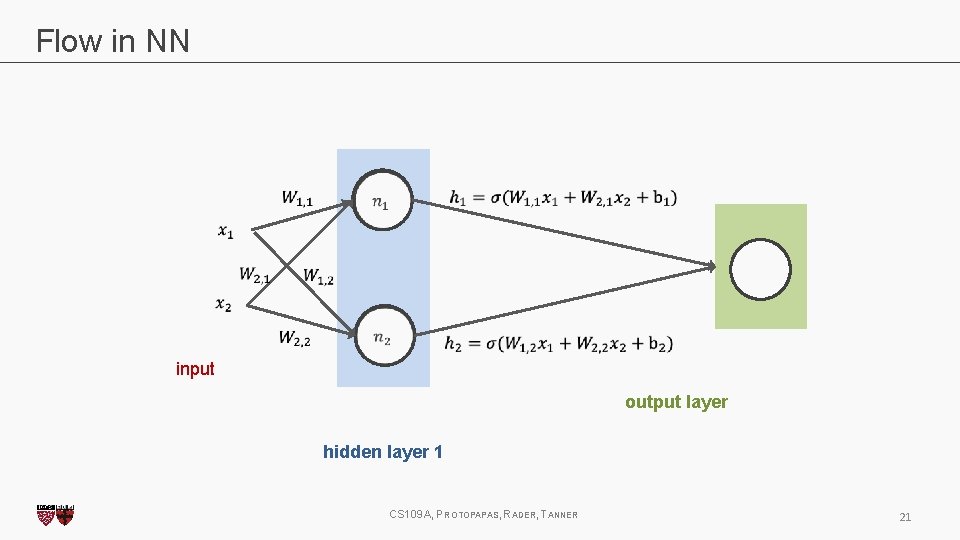

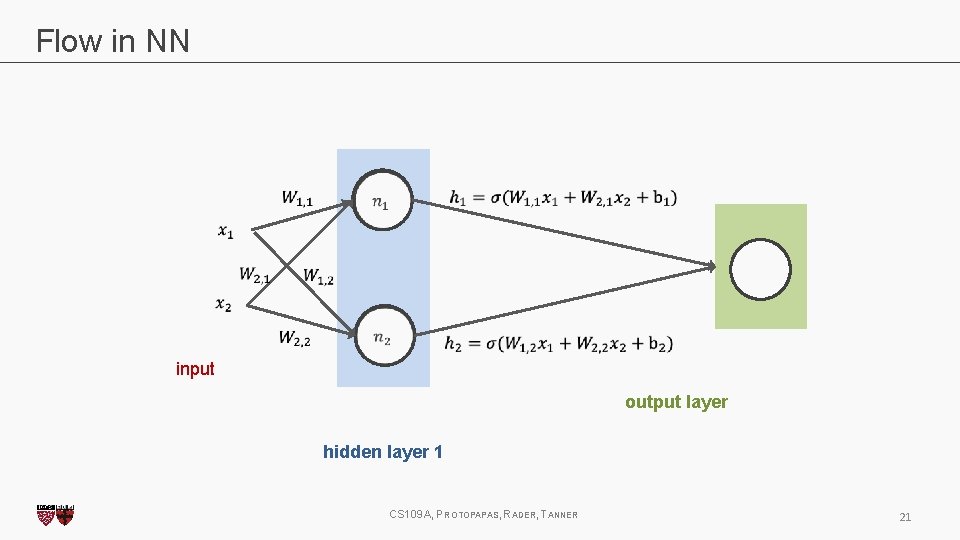

Flow in a NN: input layer → hidden layer 1 → output layer.
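Here is a minimal plain-Python sketch of this forward flow, assuming dense (fully connected) layers stored as lists. Each layer takes the previous layer's outputs, applies an affine step and an activation, and passes the result on. The sizes (2 inputs, 3 units in hidden layer 1, 1 output) and all weights are hypothetical.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function 1 / (1 + exp(-z)).
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def dense(inputs, weights, biases, activation):
    # weights[j] holds the incoming weights of unit j; one output per unit.
    return [activation(sum(w * x for w, x in zip(w_row, inputs)) + b)
            for w_row, b in zip(weights, biases)]


def forward(x, layers):
    # layers: list of (weights, biases, activation), applied from input to output.
    a = x
    for weights, biases, activation in layers:
        a = dense(a, weights, biases, activation)
    return a


# Hypothetical network: 2 inputs -> hidden layer 1 (3 sigmoid units) -> 1 sigmoid output.
hidden_layer_1 = ([[0.5, -1.0], [1.5, 0.2], [-0.3, 0.8]], [0.0, -0.5, 0.1], sigmoid)
output_layer = ([[1.0, -2.0, 0.5]], [0.2], sigmoid)
y = forward([1.0, 2.0], [hidden_layer_1, output_layer])
print(y)
```

Adding a second hidden layer only means appending another (weights, biases, activation) entry before the output layer.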

Summary so far:
• A single neuron can be a logistic regression unit or a linear unit. We will soon see other choices of activation function.
• A neural network is a combination of logistic regression units (or units of other types).
• A neural network can approximate non-linear functions, for either regression or classification.


Next:
• What kinds of activation functions, how many neurons, and how many layers should we use; how should we construct the output unit; and which loss functions are appropriate?
Following two lectures on NNs:
• How do we estimate the weights and biases?
• How do we regularize neural networks?
