Chapter 4. Classification: Basic Concepts, Decision Trees & Model Evaluation

Part 2: Model Overfitting & Classifier Evaluation (Chapter 4.2, slide 1/57)

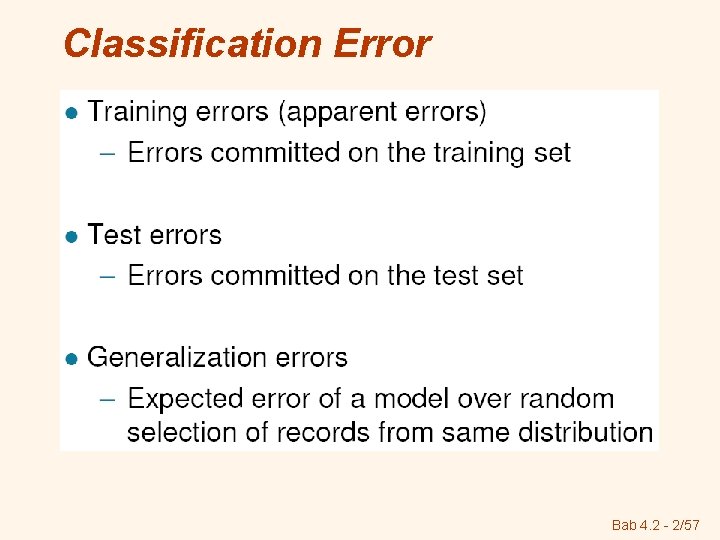

Classification Error Bab 4. 2 - 2/57

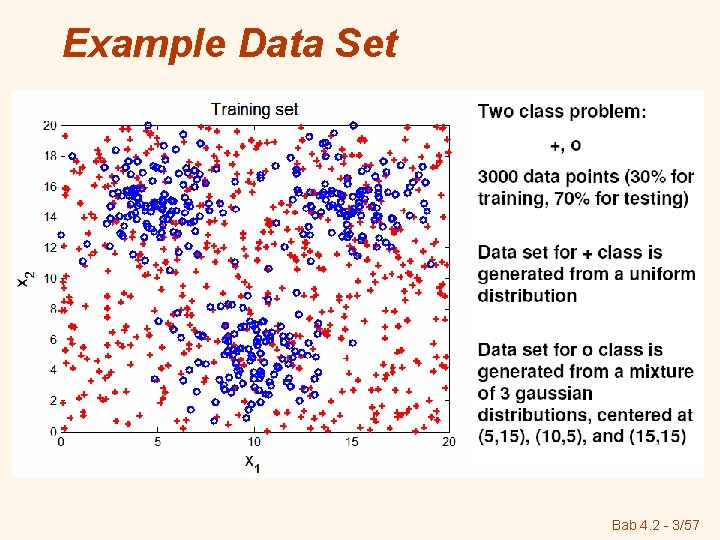

Example Data Set Bab 4. 2 - 3/57

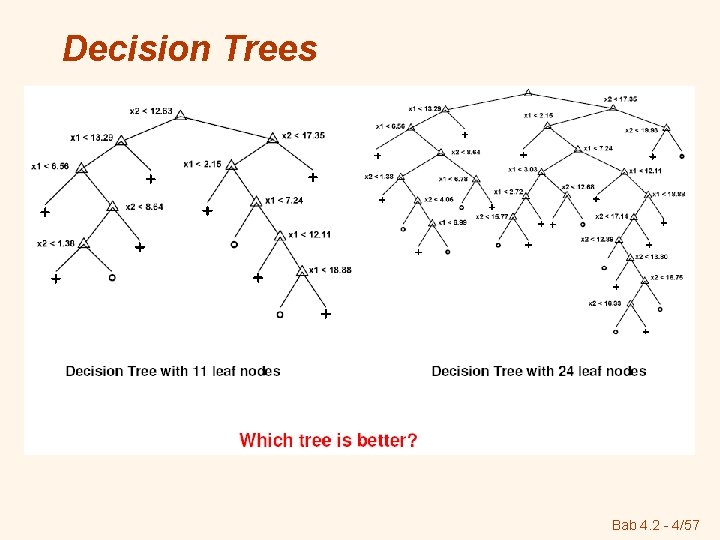

Decision Trees Bab 4. 2 - 4/57

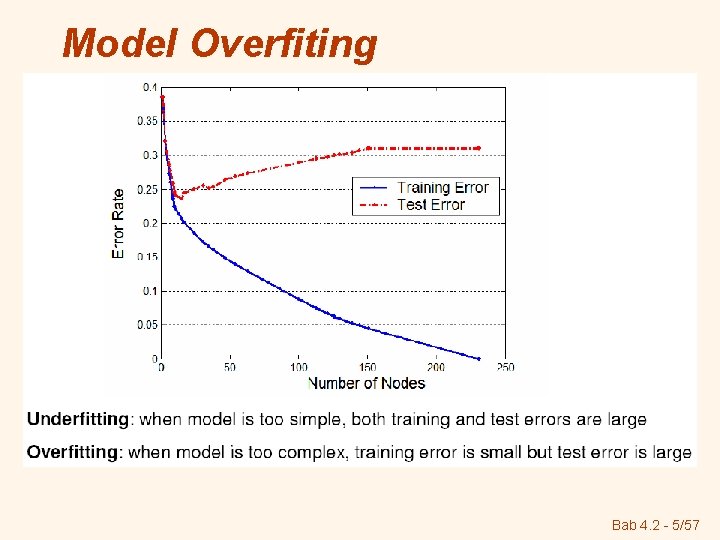

Model Overfitting

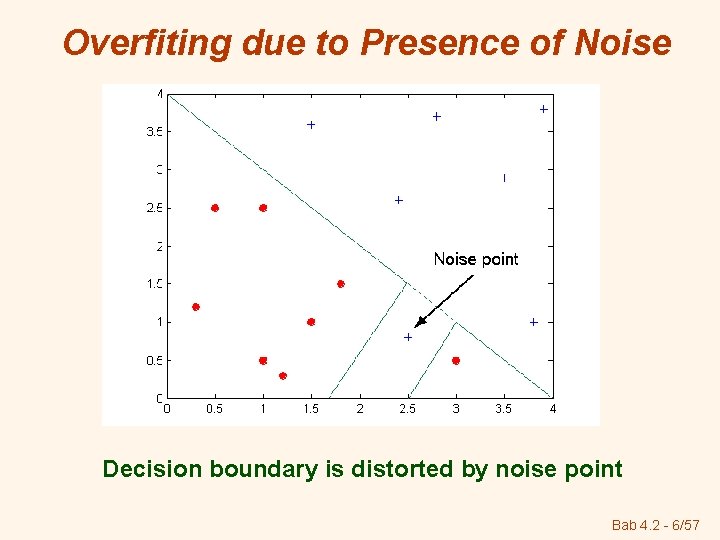

Overfitting due to Presence of Noise

The decision boundary is distorted by a noise point.

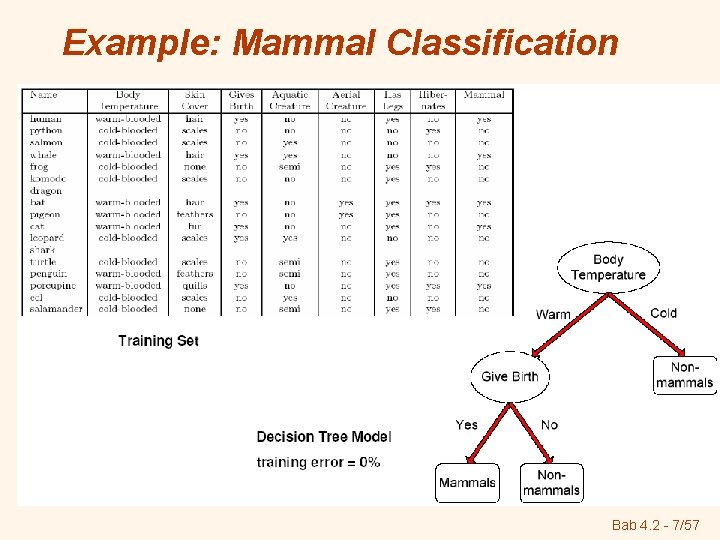

Example: Mammal Classification Bab 4. 2 - 7/57

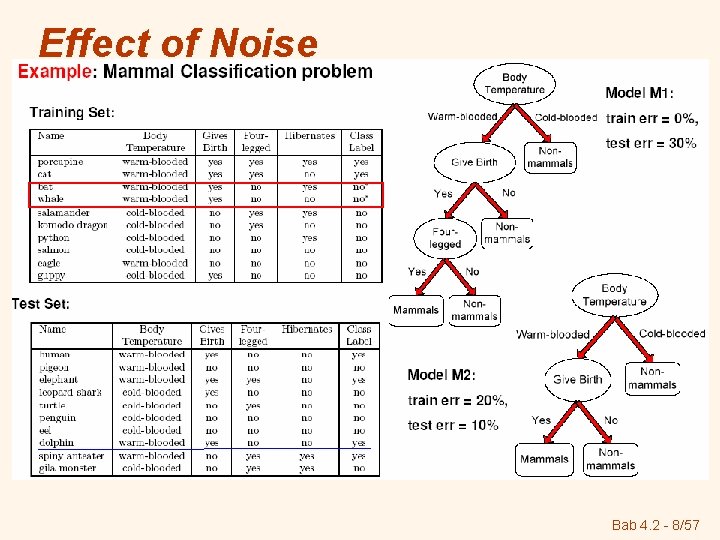

Effect of Noise Bab 4. 2 - 8/57

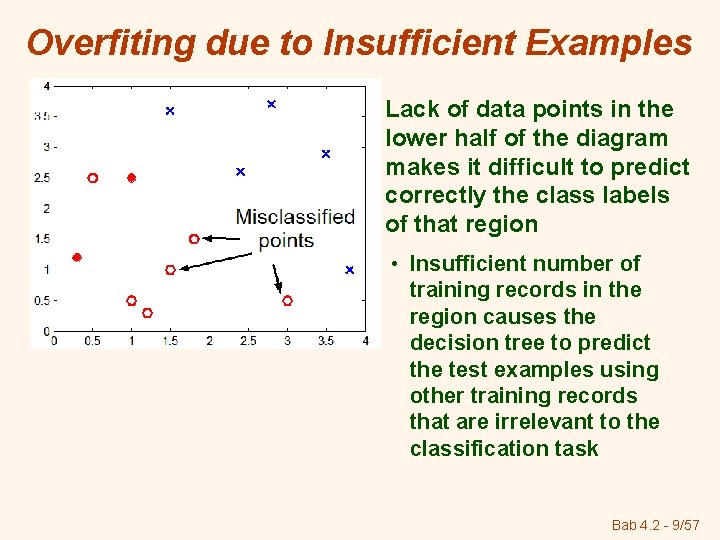

Overfitting due to Insufficient Examples

- The lack of data points in the lower half of the diagram makes it difficult to correctly predict the class labels in that region.
- With too few training records in the region, the decision tree predicts the test examples using other training records that are irrelevant to the classification task.

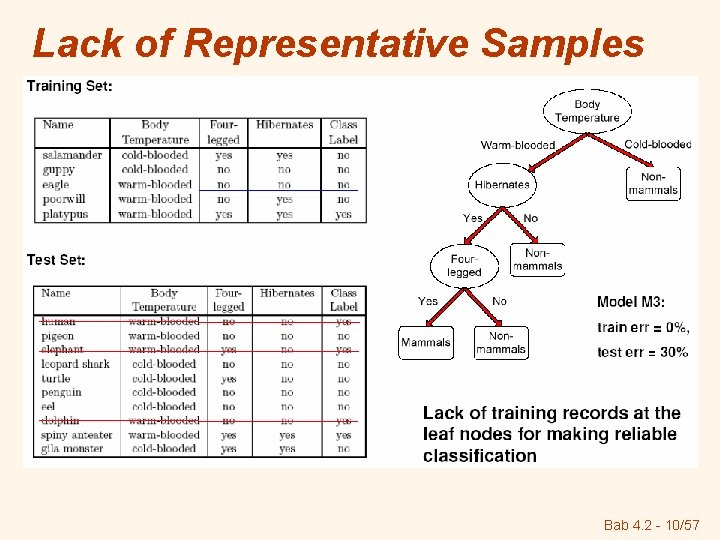

Lack of Representative Samples

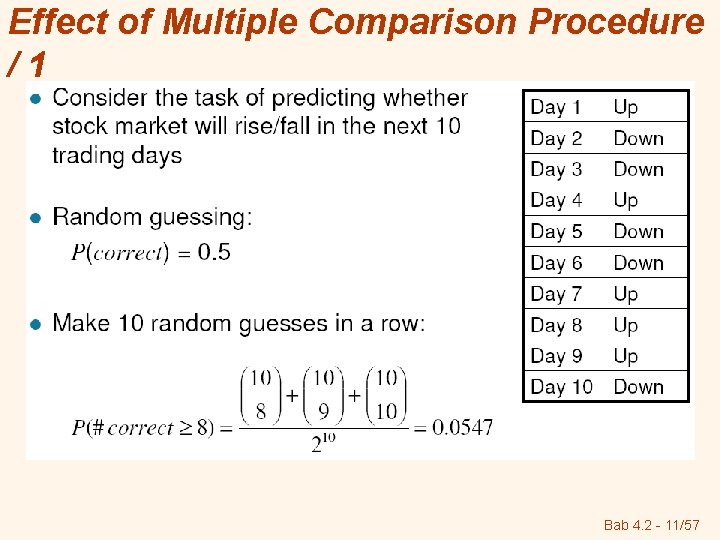

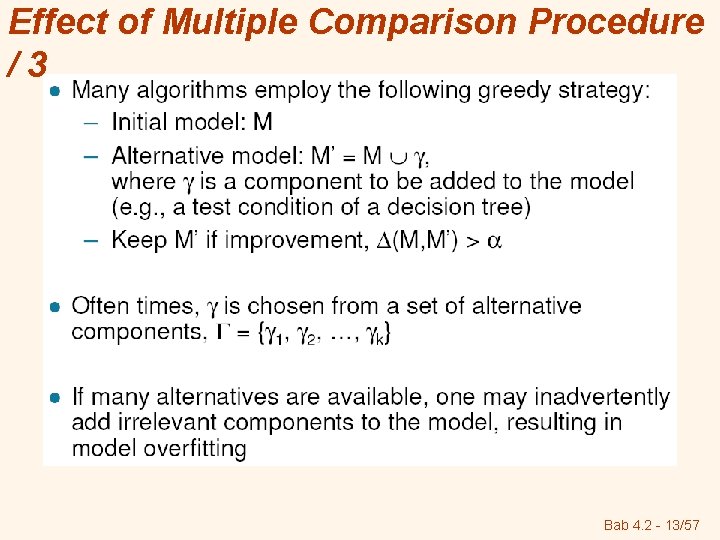

Effect of Multiple Comparison Procedure /1 Bab 4. 2 - 11/57

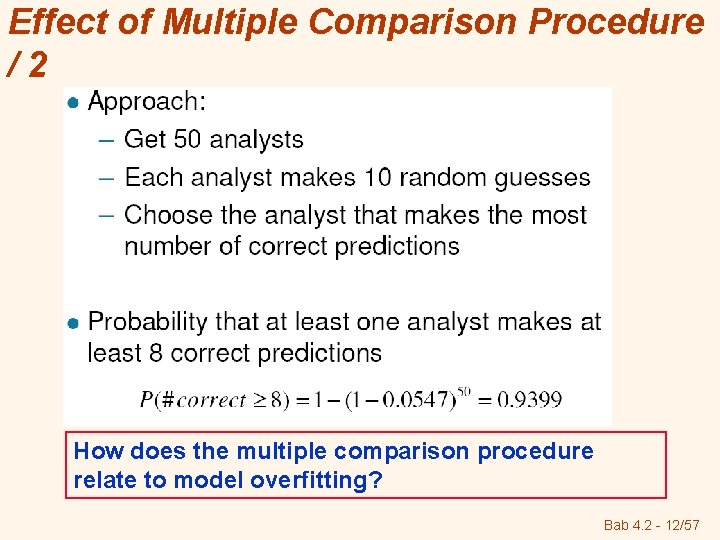

Effect of Multiple Comparison Procedure /2 How does the multiple comparison procedure relate to model overfitting? Bab 4. 2 - 12/57

Effect of Multiple Comparison Procedure /3 Bab 4. 2 - 13/57

Notes on Overfitting Bab 4. 2 - 14/57

Estimating Generalization Errors Bab 4. 2 - 15/57

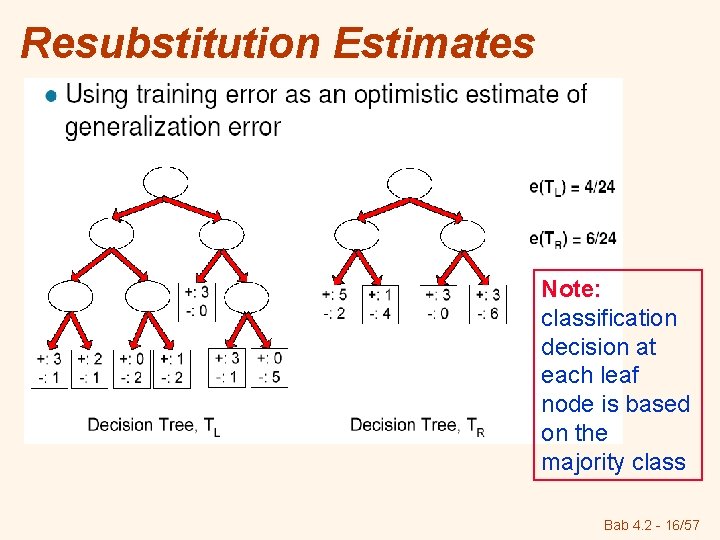

Resubstitution Estimates

Note: the classification decision at each leaf node is based on the majority class.

Incorporating Model Complexity (IMC) Bab 4. 2 - 17/57

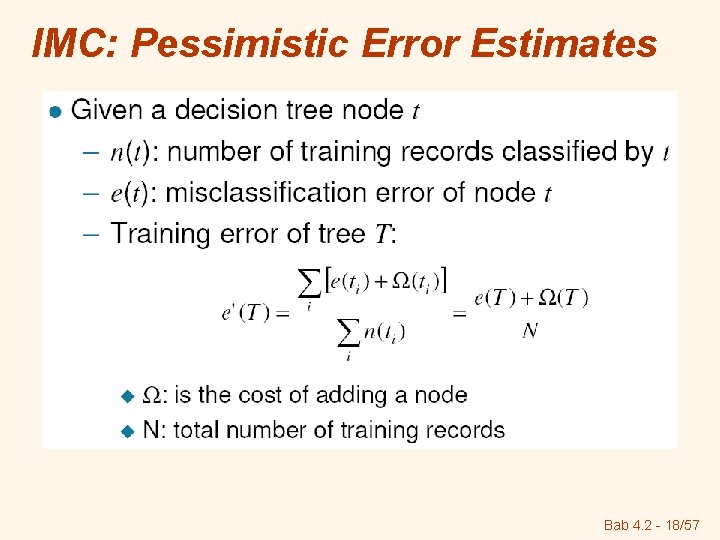

IMC: Pessimistic Error Estimates Bab 4. 2 - 18/57

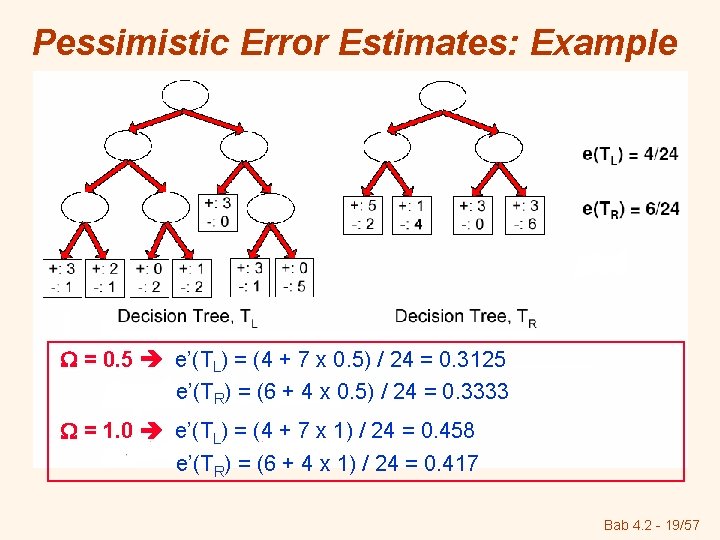

Pessimistic Error Estimates: Example

With a penalty of $\Omega = 0.5$ per leaf node:

- $e'(T_L) = (4 + 7 \times 0.5) / 24 = 0.3125$
- $e'(T_R) = (6 + 4 \times 0.5) / 24 = 0.3333$

With a penalty of $\Omega = 1.0$ per leaf node:

- $e'(T_L) = (4 + 7 \times 1) / 24 = 0.458$
- $e'(T_R) = (6 + 4 \times 1) / 24 = 0.417$

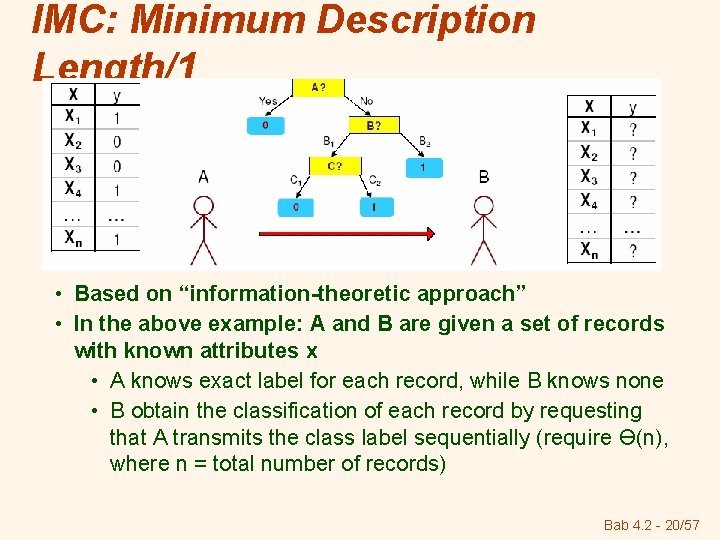

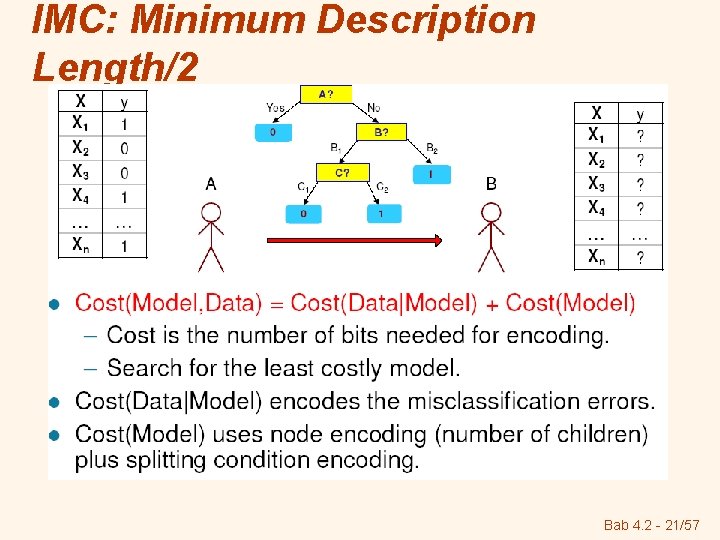
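The arithmetic in the pessimistic error example can be checked with a short Python sketch. The function name and parameter names are hypothetical; the counts (4 errors and 7 leaves for the left tree, 6 errors and 4 leaves for the right tree, 24 training records) are taken from the example.

```python
def pessimistic_error(training_errors: int, num_leaves: int,
                      num_records: int, penalty: float = 0.5) -> float:
    """Training errors plus a per-leaf penalty, divided by the record count."""
    return (training_errors + penalty * num_leaves) / num_records


# Left tree T_L: 4 errors, 7 leaves. Right tree T_R: 6 errors, 4 leaves.
for penalty in (0.5, 1.0):
    e_left = pessimistic_error(4, 7, 24, penalty)
    e_right = pessimistic_error(6, 4, 24, penalty)
    print(f"penalty={penalty}: e'(T_L)={e_left:.4f}, e'(T_R)={e_right:.4f}")
```

Note how the preferred tree flips: with a penalty of 0.5 the larger left tree has the lower estimate, while with a penalty of 1.0 the smaller right tree does.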

IMC: Minimum Description Length / 1

- Based on an information-theoretic approach.
- In the example shown, A and B are both given a set of records with known attribute values x.
- A knows the exact class label of each record, while B knows none of them.
- B can obtain the classification of each record by asking A to transmit the class labels sequentially, which costs $\Theta(n)$, where n is the total number of records.

IMC: Minimum Description Length / 2

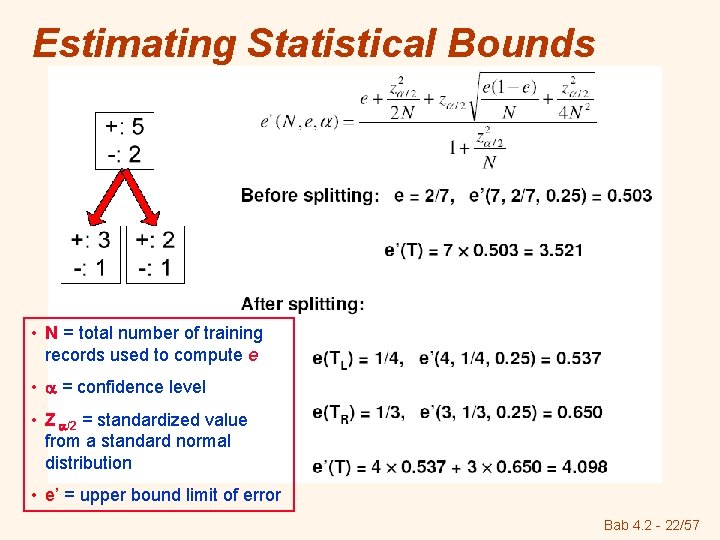

Estimating Statistical Bounds

- N = total number of training records used to compute e
- $\alpha$ = confidence level
- $z_{\alpha/2}$ = standardized value from a standard normal distribution
- e' = upper bound on the error

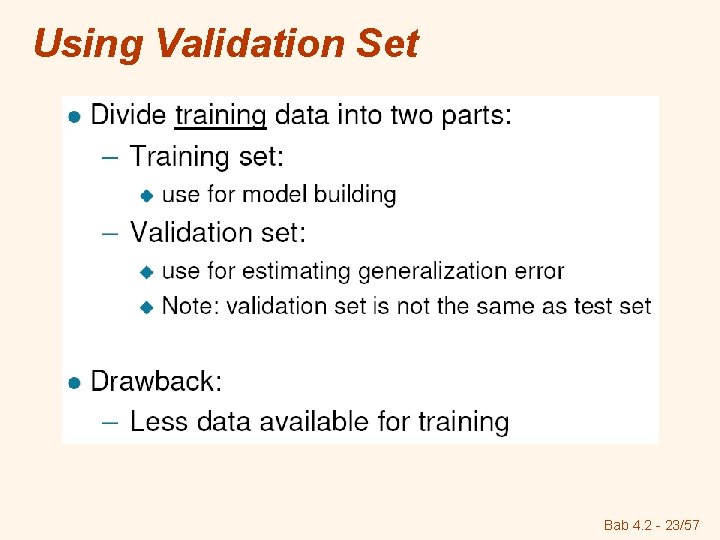
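The bound itself appears only in the slide image, so the following Python sketch rests on an assumption: it uses the usual normal-approximation upper bound for a binomial error rate, with the symbols e, N and z from the list above. The function name and the default value of alpha are illustrative choices, not taken from the slides.

```python
import math
from statistics import NormalDist


def error_upper_bound(e: float, n: int, alpha: float = 0.25) -> float:
    """Upper bound on the error rate (assumed normal-approximation form).

    e     : observed training error rate
    n     : number of training records used to compute e
    alpha : confidence level; z is the standard normal value with
            upper-tail probability alpha / 2
    """
    z = NormalDist().inv_cdf(1 - alpha / 2)
    numerator = (e + z * z / (2 * n)
                 + z * math.sqrt(e * (1 - e) / n + z * z / (4 * n * n)))
    return numerator / (1 + z * z / n)


# A node with 2 errors out of 7 records: the bound is well above 2/7.
print(round(error_upper_bound(2 / 7, 7), 3))
```

The fewer records a node has, the further the bound sits above the observed error, which is what makes it usable as a pruning criterion.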

Using Validation Set Bab 4. 2 - 23/57

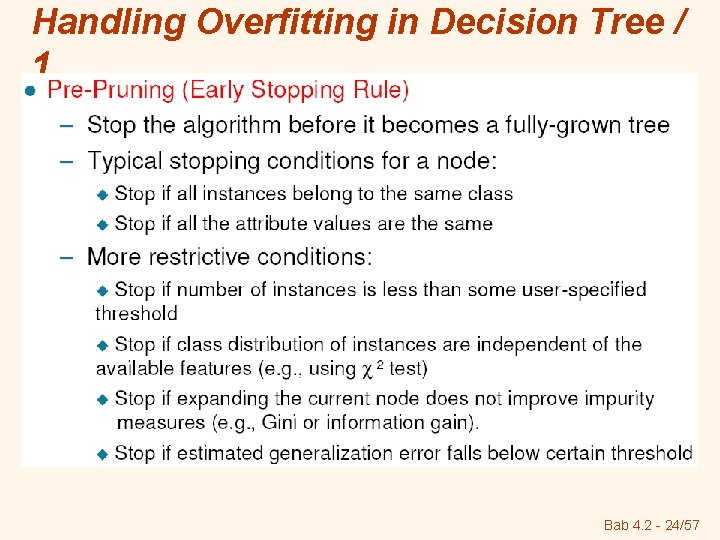

Handling Overfitting in Decision Tree / 1 Bab 4. 2 - 24/57

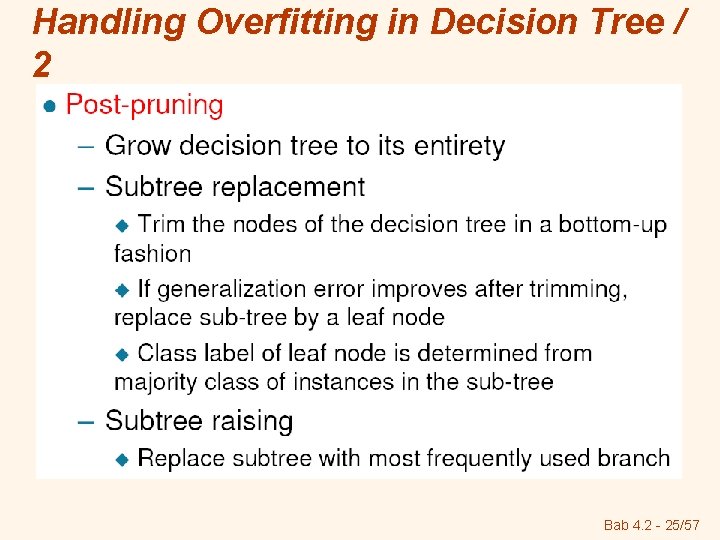

Handling Overfitting in Decision Tree / 2 Bab 4. 2 - 25/57

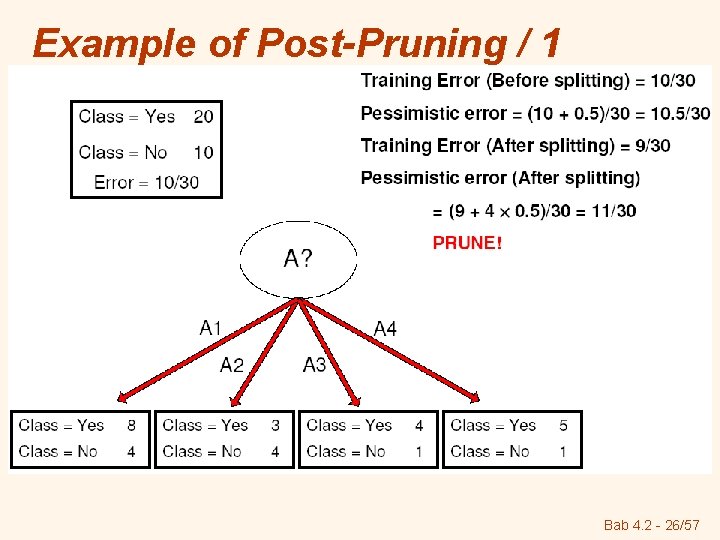

Example of Post-Pruning / 1 Bab 4. 2 - 26/57

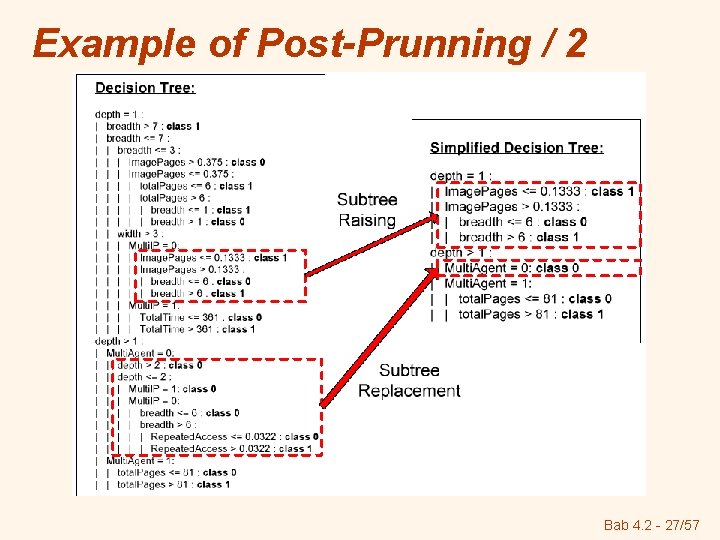

Example of Post-Pruning / 2

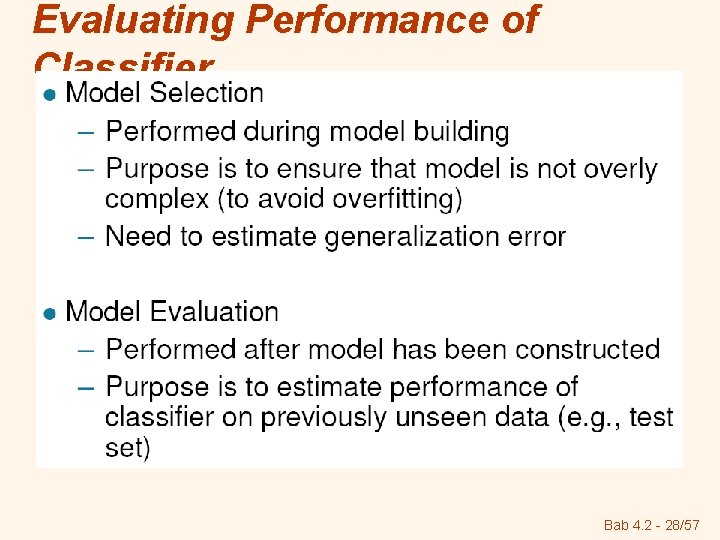

Evaluating Performance of Classifier

Model Evaluation

- Metrics for Performance Evaluation
  - How to evaluate the performance of a model?
- Methods for Performance Evaluation
  - How to obtain reliable estimates?
- Methods for Model Comparison
  - How to compare the relative performance among competing models?

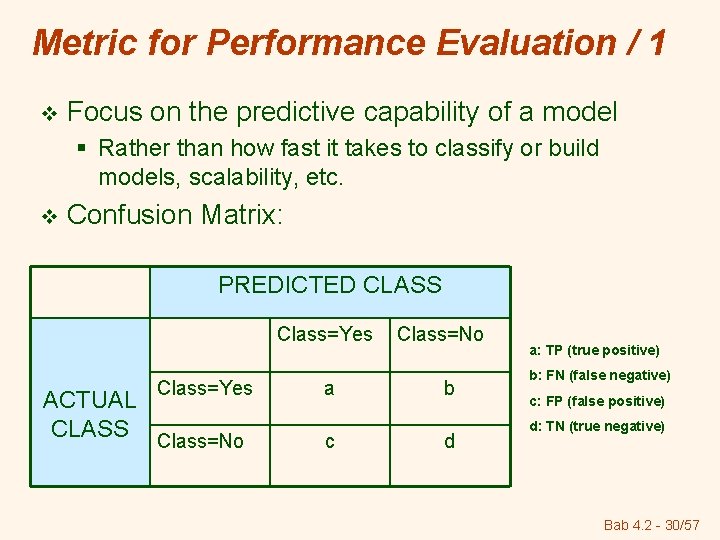

Metric for Performance Evaluation / 1

- Focus on the predictive capability of a model
  - Rather than how long it takes to classify or build models, scalability, etc.
- Confusion Matrix:

| | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | a | b |
| ACTUAL Class=No | c | d |

a: TP (true positive), b: FN (false negative), c: FP (false positive), d: TN (true negative)

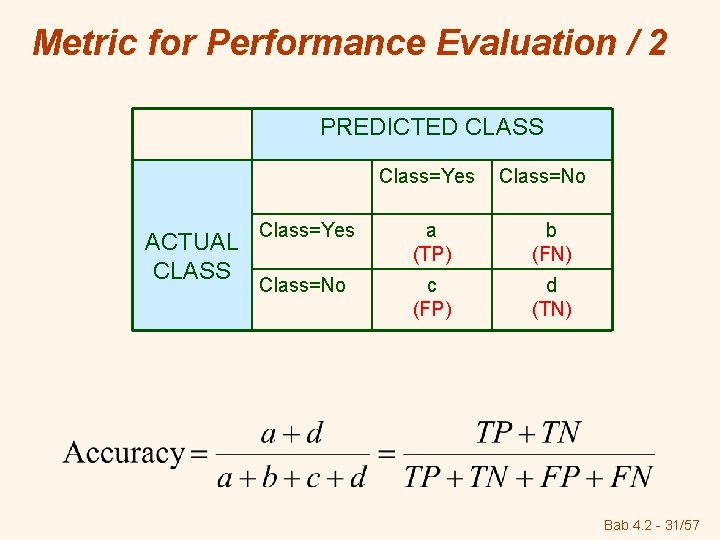

Metric for Performance Evaluation / 2

| | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | a (TP) | b (FN) |
| ACTUAL Class=No | c (FP) | d (TN) |

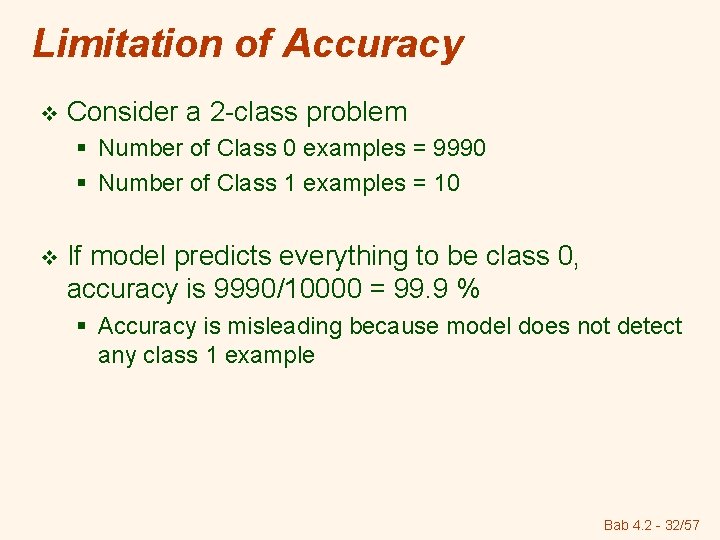

Limitation of Accuracy

- Consider a 2-class problem
  - Number of Class 0 examples = 9990
  - Number of Class 1 examples = 10
- If the model predicts everything to be class 0, accuracy is 9990/10000 = 99.9%
  - Accuracy is misleading because the model does not detect any class 1 example

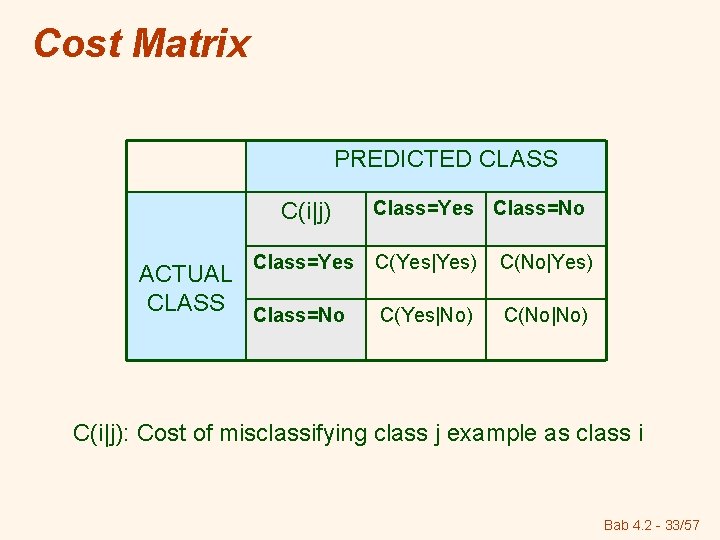
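The imbalance example can be made concrete with a small Python sketch. Treating class 1 as the positive class is an assumption made here for illustration, and the function names are hypothetical.

```python
def accuracy(tp: int, fn: int, fp: int, tn: int) -> float:
    """Fraction of all records that are classified correctly."""
    return (tp + tn) / (tp + fn + fp + tn)


def recall(tp: int, fn: int) -> float:
    """Fraction of actual positives that the model detects."""
    return tp / (tp + fn) if (tp + fn) else 0.0


# Model that predicts class 0 for everything, with class 1 as "positive":
# no true positives, all 10 class 1 records become false negatives.
tp, fn, fp, tn = 0, 10, 0, 9990
print(f"accuracy = {accuracy(tp, fn, fp, tn):.3f}")  # high
print(f"recall   = {recall(tp, fn):.3f}")            # zero
```

The accuracy looks excellent while the detection rate for class 1 is zero, which is the limitation the slide points out.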

Cost Matrix

| C(i\|j) | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | C(Yes\|Yes) | C(No\|Yes) |
| ACTUAL Class=No | C(Yes\|No) | C(No\|No) |

C(i|j): cost of misclassifying a class j example as class i

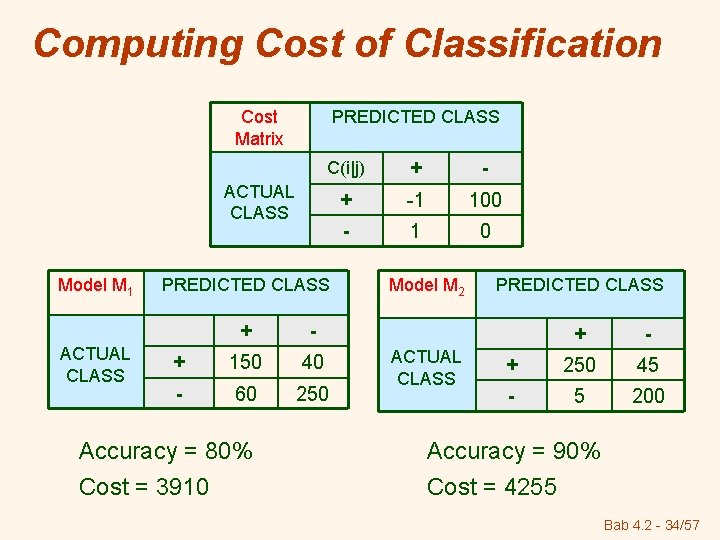

Computing Cost of Classification

Cost Matrix:

| C(i\|j) | PREDICTED + | PREDICTED - |
|---|---|---|
| ACTUAL + | -1 | 100 |
| ACTUAL - | 1 | 0 |

Model M1 (Accuracy = 80%, Cost = 3910):

| | PREDICTED + | PREDICTED - |
|---|---|---|
| ACTUAL + | 150 | 40 |
| ACTUAL - | 60 | 250 |

Model M2 (Accuracy = 90%, Cost = 4255):

| | PREDICTED + | PREDICTED - |
|---|---|---|
| ACTUAL + | 250 | 45 |
| ACTUAL - | 5 | 200 |

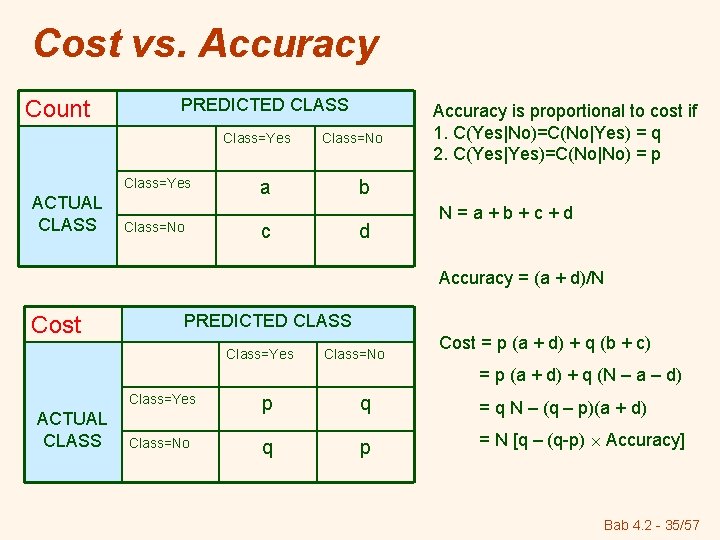
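The accuracy and cost figures for M1 and M2 can be reproduced with a few lines of Python. The names `COST`, `M1`, `M2`, `total_cost` and `accuracy_of` are chosen here for illustration; rows are actual classes (+, -) and columns are predicted classes (+, -).

```python
# Cost matrix C(i|j): rows = actual class (+, -), columns = predicted (+, -).
COST = [[-1, 100],
        [1, 0]]

# Confusion matrices with the same row/column layout.
M1 = [[150, 40],
      [60, 250]]
M2 = [[250, 45],
      [5, 200]]


def total_cost(counts, costs):
    """Sum of count * cost over every cell of the confusion matrix."""
    return sum(n * c
               for count_row, cost_row in zip(counts, costs)
               for n, c in zip(count_row, cost_row))


def accuracy_of(counts):
    """Diagonal (correct) counts divided by all counts."""
    correct = counts[0][0] + counts[1][1]
    return correct / sum(sum(row) for row in counts)


for name, model in (("M1", M1), ("M2", M2)):
    print(name, f"accuracy={accuracy_of(model):.0%}", f"cost={total_cost(model, COST)}")
```

M2 is the more accurate model but also the more expensive one, because it makes more of the costly false negatives.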

Cost vs. Accuracy

Count:

| | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | a | b |
| ACTUAL Class=No | c | d |

N = a + b + c + d

Accuracy = (a + d) / N

Cost:

| | PREDICTED Class=Yes | PREDICTED Class=No |
|---|---|---|
| ACTUAL Class=Yes | p | q |
| ACTUAL Class=No | q | p |

Accuracy is proportional to cost if

1. C(Yes|No) = C(No|Yes) = q
2. C(Yes|Yes) = C(No|No) = p

$$
\begin{aligned}
\text{Cost} &= p(a + d) + q(b + c) \\
&= p(a + d) + q(N - a - d) \\
&= qN - (q - p)(a + d) \\
&= N\,[\,q - (q - p) \times \text{Accuracy}\,]
\end{aligned}
$$

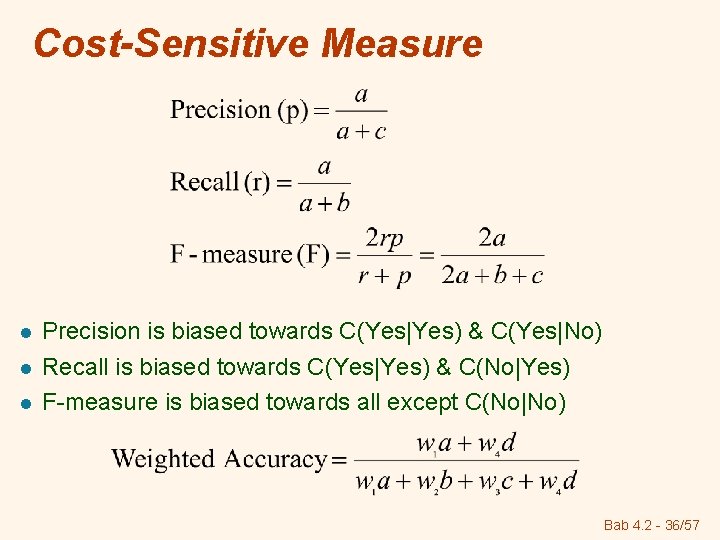

Cost-Sensitive Measure

- Precision is biased towards C(Yes|Yes) & C(Yes|No)
- Recall is biased towards C(Yes|Yes) & C(No|Yes)
- F-measure is biased towards all except C(No|No)
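The formulas for these measures are not in the extracted text, so the sketch below assumes the standard definitions in terms of the confusion-matrix cells a (TP), b (FN), c (FP) and d (TN). It shows which cells each measure depends on; the counts in the usage lines are illustrative only.

```python
def precision(a: int, c: int) -> float:
    """a / (a + c): uses only the Predicted=Yes column (TP and FP)."""
    return a / (a + c)


def recall(a: int, b: int) -> float:
    """a / (a + b): uses only the Actual=Yes row (TP and FN)."""
    return a / (a + b)


def f_measure(a: int, b: int, c: int) -> float:
    """2a / (2a + b + c): harmonic mean of precision and recall; d is unused."""
    return 2 * a / (2 * a + b + c)


# Illustrative counts: a=150 (TP), b=40 (FN), c=60 (FP), d=250 (TN).
a, b, c, d = 150, 40, 60, 250
print(f"precision={precision(a, c):.3f}")
print(f"recall={recall(a, b):.3f}")
print(f"F-measure={f_measure(a, b, c):.3f}")
```

None of the three functions takes d as an argument, which matches the statement that the F-measure is biased towards every cell except C(No|No).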

Methods for Performance Evaluation

- How to obtain a reliable estimate of performance?
- Performance of a model may depend on other factors besides the learning algorithm:
  - Class distribution
  - Cost of misclassification
  - Size of training and test sets

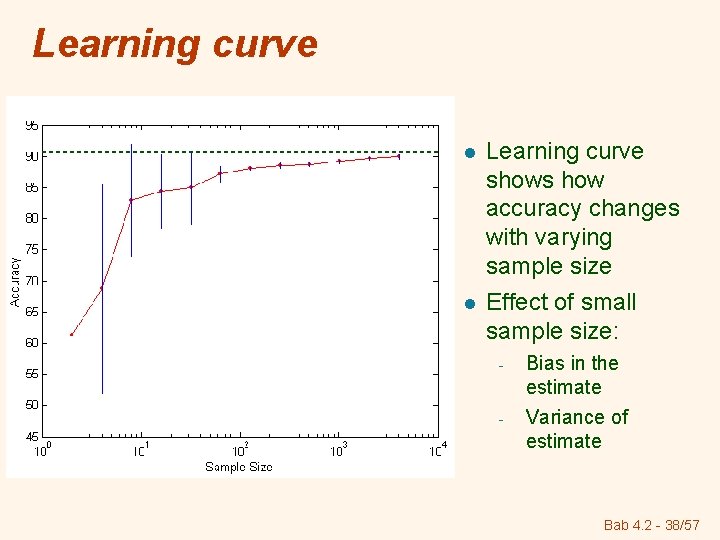

Learning curve

- A learning curve shows how accuracy changes with varying sample size
- Effect of small sample size:
  - Bias in the estimate
  - Variance of the estimate

Methods for Estimation

- Holdout Method
- Random Subsampling
- Cross-Validation
- Bootstrap

Holdout Method

The original data is partitioned into 2 disjoint sets: training and test sets.

- Reserve k% as the training set and (100 - k)% as the test set
- The accuracy of the classifier is estimated from the accuracy of the induced model on the test set

Limitations:

- The induced model may not be as good as when all the labeled examples are used for training (i.e., the smaller the training set, the larger the variance of the model)
- If the training set is too large, the accuracy estimated from the smaller test set is less reliable (i.e., it has a wide confidence interval)
- The training and test sets are no longer independent of each other, because both are subsets of the original data

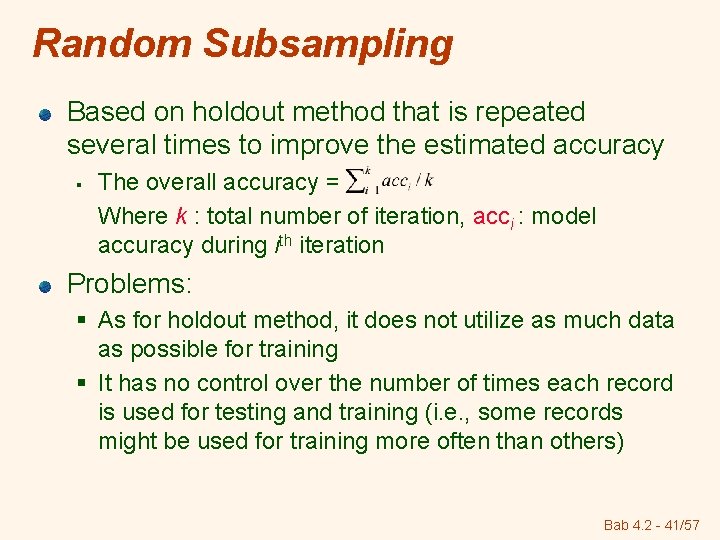
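A minimal sketch of the holdout partition in plain Python follows. The function name, the default training fraction of 2/3 and the fixed seed are assumptions made for illustration; the slides only state that k% is reserved for training and the rest for testing.

```python
import random


def holdout_split(records, train_fraction=2 / 3, seed=0):
    """Shuffle the records and cut them into disjoint training and test sets."""
    rng = random.Random(seed)          # fixed seed for a repeatable split
    shuffled = list(records)
    rng.shuffle(shuffled)
    cut = int(round(train_fraction * len(shuffled)))
    return shuffled[:cut], shuffled[cut:]


train, test = holdout_split(range(30))
print(len(train), len(test))           # sizes of the two partitions
print(set(train) & set(test))          # empty set: the partitions are disjoint
```

Changing `train_fraction` exposes the trade-off listed under the limitations: a larger training set leaves fewer test records for estimating accuracy.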

Random Subsampling

Based on the holdout method, repeated several times to improve the estimated accuracy.

- The overall accuracy is $acc_{sub} = \frac{1}{k} \sum_{i=1}^{k} acc_i$, where k is the total number of iterations and $acc_i$ is the model accuracy during the i-th iteration

Problems:

- As with the holdout method, it does not utilize as much data as possible for training
- It has no control over the number of times each record is used for testing and training (i.e., some records might be used for training more often than others)

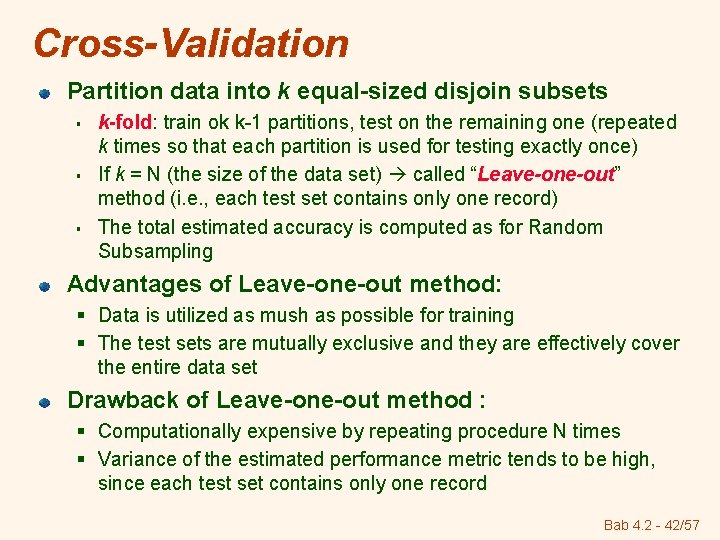

Cross-Validation

Partition the data into k equal-sized disjoint subsets.

- k-fold: train on k-1 partitions and test on the remaining one (repeated k times so that each partition is used for testing exactly once)
- If k = N (the size of the data set), the method is called "leave-one-out" (i.e., each test set contains only one record)
- The total estimated accuracy is computed as for Random Subsampling

Advantages of the leave-one-out method:

- Data is utilized as much as possible for training
- The test sets are mutually exclusive and effectively cover the entire data set

Drawbacks of the leave-one-out method:

- Computationally expensive, since the procedure is repeated N times
- The variance of the estimated performance metric tends to be high, since each test set contains only one record

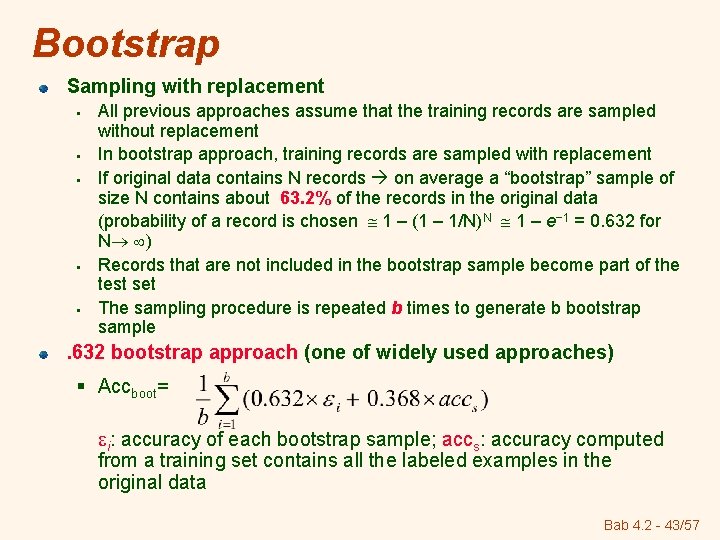
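The k-fold procedure can be sketched as an index generator in plain Python. This is a simplified illustration: the function names are hypothetical, records are not shuffled before partitioning, and `evaluate` stands for any user-supplied function that trains on one index list and returns the accuracy on the other.

```python
def k_fold_indices(n: int, k: int):
    """Yield (train, test) index lists; each index is tested exactly once."""
    indices = list(range(n))
    # Spread any remainder over the first n % k folds so sizes differ by at most 1.
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size


def cross_val_accuracy(n: int, k: int, evaluate) -> float:
    """Average the per-fold accuracies, as in random subsampling."""
    accs = [evaluate(train, test) for train, test in k_fold_indices(n, k)]
    return sum(accs) / len(accs)


for train, test in k_fold_indices(10, 5):
    print("test:", test, "train size:", len(train))
```

Calling `k_fold_indices(n, n)` gives the leave-one-out case, where every test set holds a single record.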

Bootstrap

Sampling with replacement:

- All previous approaches assume that the training records are sampled without replacement
- In the bootstrap approach, training records are sampled with replacement
- If the original data contains N records, a bootstrap sample of size N contains on average about 63.2% of the records in the original data (the probability that a record is chosen is $1 - (1 - 1/N)^N \to 1 - e^{-1} = 0.632$ as $N \to \infty$)
- Records that are not included in the bootstrap sample become part of the test set
- The sampling procedure is repeated b times to generate b bootstrap samples

.632 bootstrap approach (one of the widely used approaches):

- $acc_{boot} = \frac{1}{b} \sum_{i=1}^{b} (0.632 \times \epsilon_i + 0.368 \times acc_s)$, where $\epsilon_i$ is the accuracy of each bootstrap sample and $acc_s$ is the accuracy computed from a training set that contains all the labeled examples in the original data

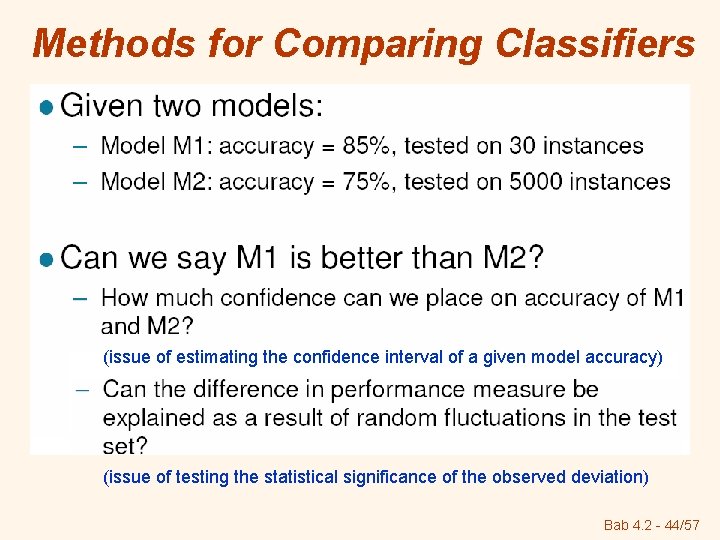
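The 63.2% figure and the .632 bootstrap combination can be checked numerically. The sketch below assumes the usual form of the .632 estimate, a weighted average with weights 0.632 and 0.368; all function names are hypothetical.

```python
import math
import random


def prob_in_bootstrap(n: int) -> float:
    """Probability that a given record appears in a bootstrap sample of size n."""
    return 1 - (1 - 1 / n) ** n


def bootstrap_sample(records, rng):
    """Draw len(records) items with replacement; unused records form the test set."""
    sample = [rng.choice(records) for _ in records]
    chosen = set(sample)
    test = [r for r in records if r not in chosen]
    return sample, test


def acc_632(bootstrap_accs, acc_s: float) -> float:
    """Average of 0.632 * (bootstrap accuracy) + 0.368 * (full-data accuracy)."""
    return sum(0.632 * e + 0.368 * acc_s for e in bootstrap_accs) / len(bootstrap_accs)


print(prob_in_bootstrap(1000), 1 - math.exp(-1))   # both close to 0.632

sample, test = bootstrap_sample(list(range(1000)), random.Random(0))
print(len(set(sample)) / 1000)                      # observed fraction of distinct records
```

The observed fraction of distinct records varies from run to run but stays near the limit value for large N.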

Methods for Comparing Classifiers

- The issue of estimating the confidence interval of a given model accuracy
- The issue of testing the statistical significance of the observed deviation

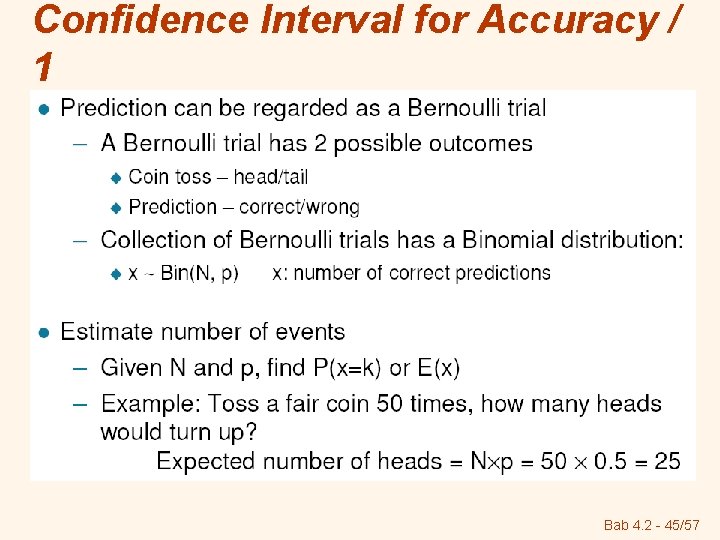

Confidence Interval for Accuracy / 1 Bab 4. 2 - 45/57

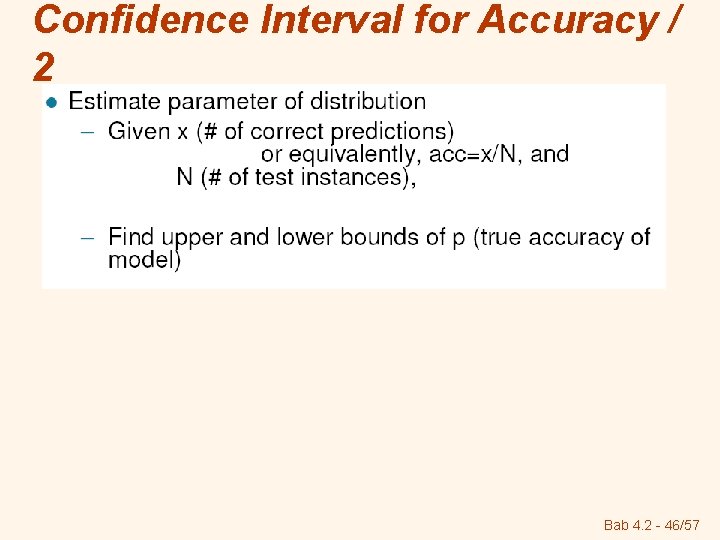

Confidence Interval for Accuracy / 2 Bab 4. 2 - 46/57

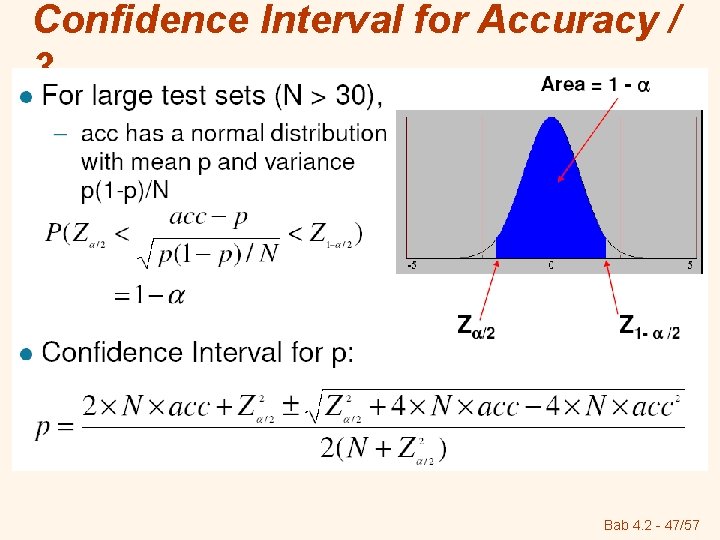

Confidence Interval for Accuracy / 3 Bab 4. 2 - 47/57

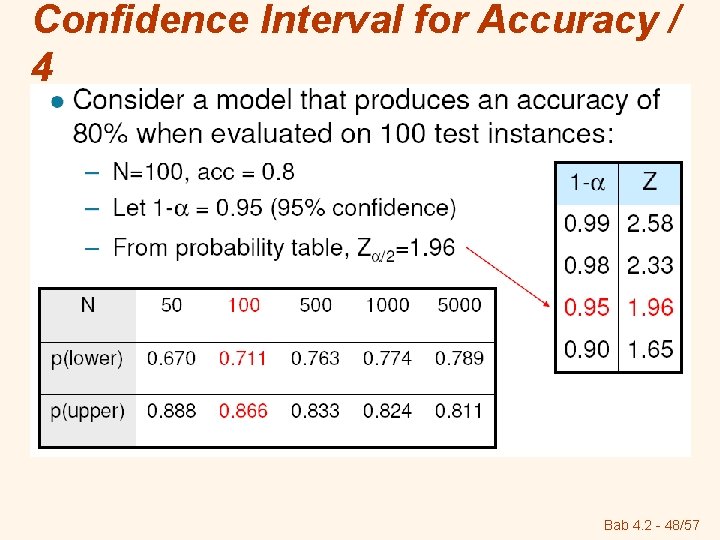

Confidence Interval for Accuracy / 4 Bab 4. 2 - 48/57

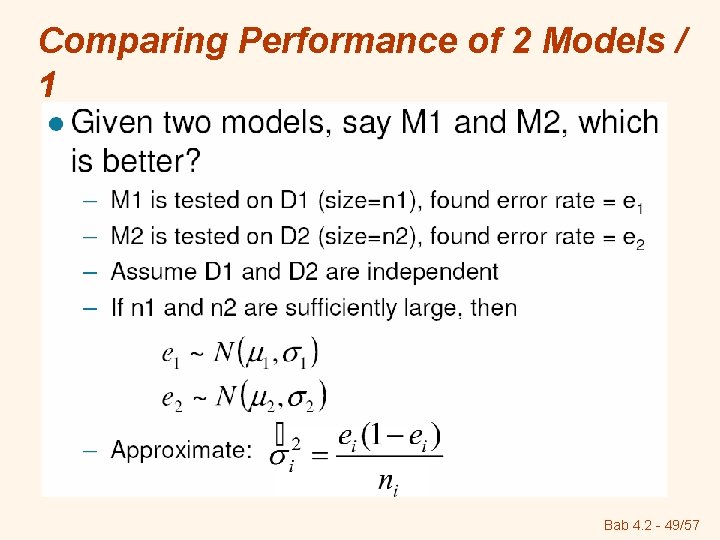

Comparing Performance of 2 Models / 1 Bab 4. 2 - 49/57

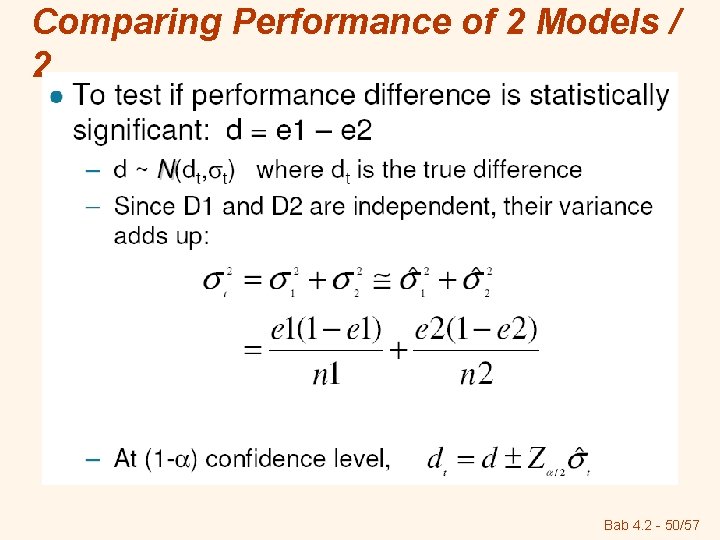

Comparing Performance of 2 Models / 2 Bab 4. 2 - 50/57

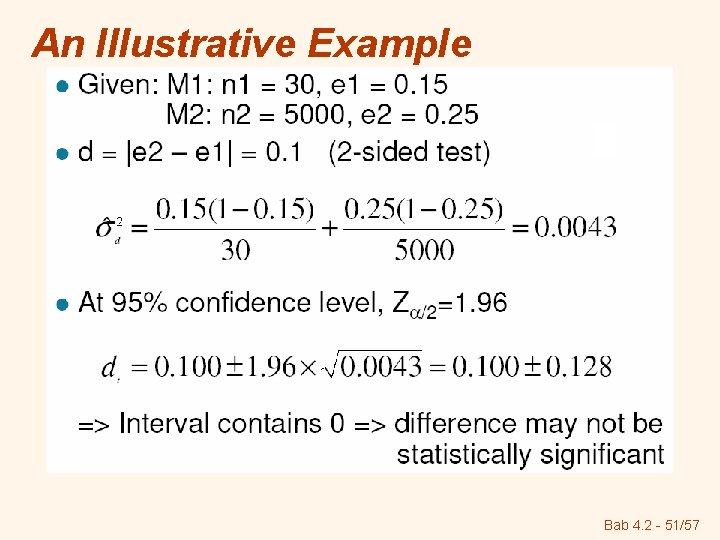

An Illustrative Example Bab 4. 2 - 51/57

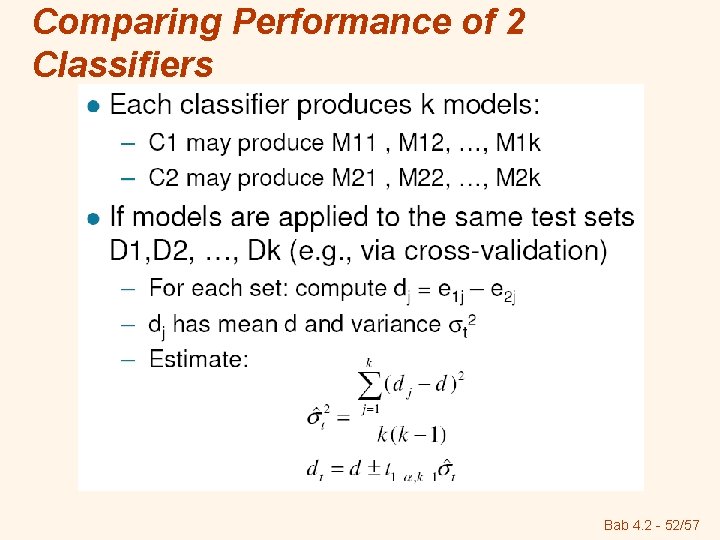

Comparing Performance of 2 Classifiers Bab 4. 2 - 52/57

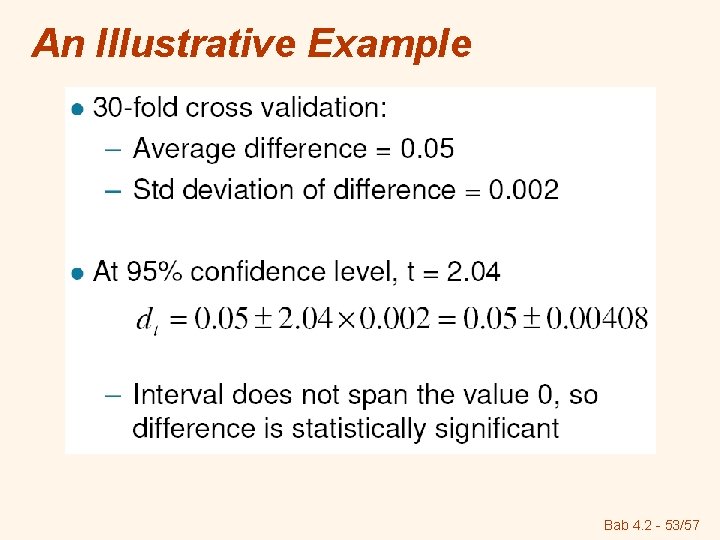

An Illustrative Example Bab 4. 2 - 53/57

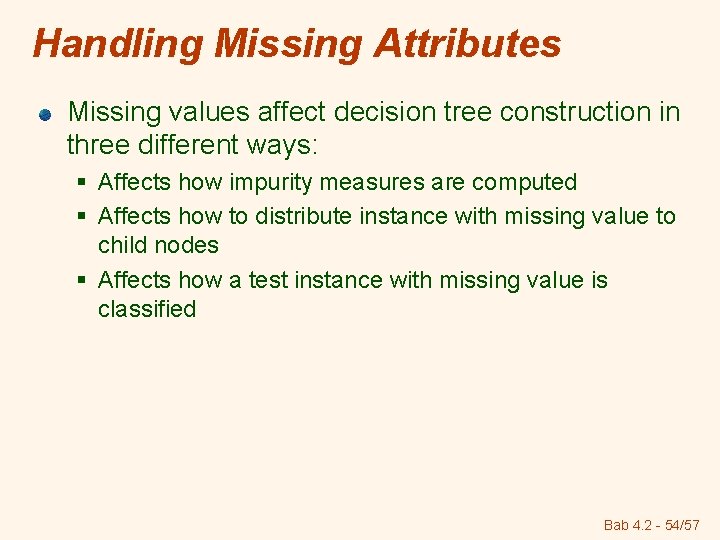

Handling Missing Attributes

Missing values affect decision tree construction in three different ways:

- They affect how impurity measures are computed
- They affect how an instance with a missing value is distributed to child nodes
- They affect how a test instance with a missing value is classified

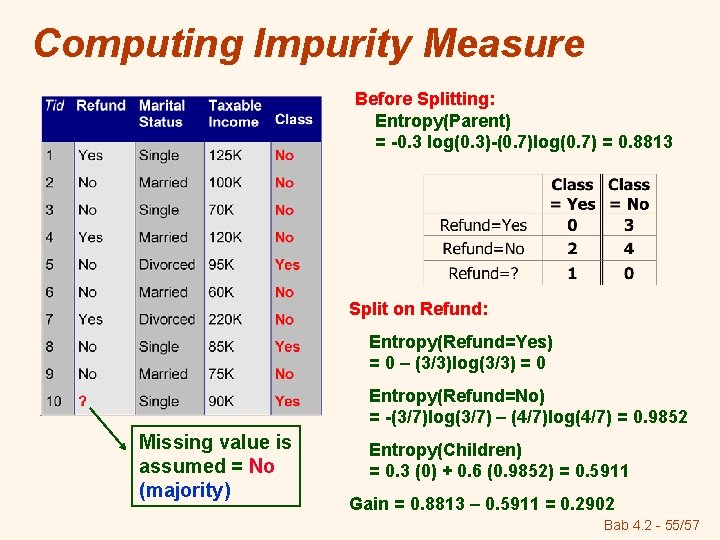

Computing Impurity Measure

Before splitting:

- Entropy(Parent) = $-0.3 \log_2(0.3) - 0.7 \log_2(0.7) = 0.8813$

Split on Refund (the missing value is assumed to be No, the majority value):

- Entropy(Refund=Yes) = $0 - (3/3) \log_2(3/3) = 0$
- Entropy(Refund=No) = $-(3/7) \log_2(3/7) - (4/7) \log_2(4/7) = 0.9852$
- Entropy(Children) = 0.3 (0) + 0.6 (0.9852) = 0.5911
- Gain = 0.8813 - 0.5911 = 0.2902

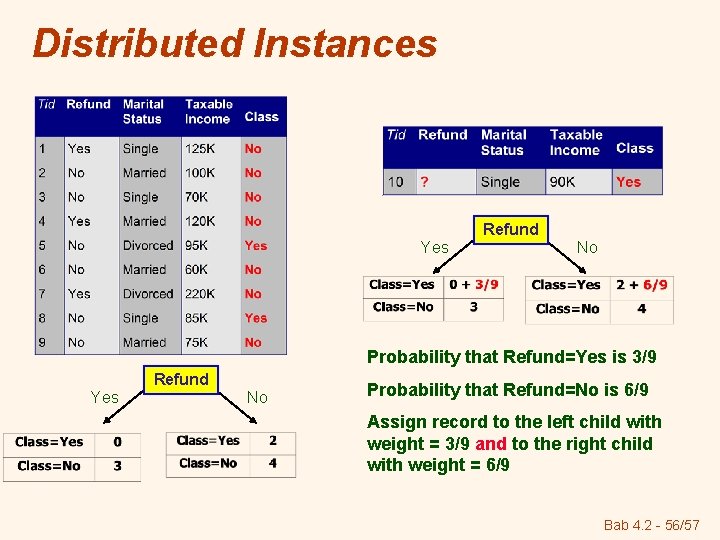
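The entropy values in this example can be reproduced with a short Python sketch. The class counts passed to `entropy` are inferred from the fractions in the example (3 vs. 7, 0 vs. 3, 3 vs. 4), and the child weights 0.3 and 0.6 are used exactly as stated there; the function name is hypothetical.

```python
import math


def entropy(counts) -> float:
    """Base-2 entropy of a class distribution given as a list of counts."""
    total = sum(counts)
    return sum(-(c / total) * math.log2(c / total) for c in counts if c > 0)


parent = entropy([3, 7])        # class distribution before splitting
refund_yes = entropy([0, 3])    # pure node
refund_no = entropy([3, 4])     # missing value counted as Refund=No

# Child weights 0.3 and 0.6 as stated in the example.
children = 0.3 * refund_yes + 0.6 * refund_no
gain = parent - children

print(f"parent={parent:.4f} refund_no={refund_no:.4f}")
print(f"children={children:.4f} gain={gain:.4f}")
```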

Distributed Instances

- Probability that Refund=Yes is 3/9
- Probability that Refund=No is 6/9

Assign the record to the left child (Refund=Yes) with weight 3/9 and to the right child (Refund=No) with weight 6/9.

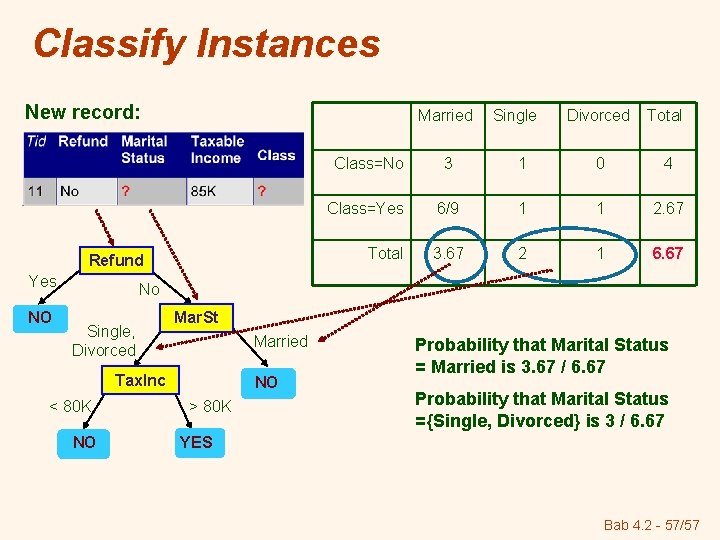

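Classify Instances

Decision tree used to classify the new record: Refund = Yes leads to NO; Refund = No leads to a test on Marital Status; Married leads to NO; Single or Divorced leads to a test on Taxable Income, where < 80K gives NO and > 80K gives YES.

| | Married | Single | Divorced | Total |
|---|---|---|---|---|
| Class=No | 3 | 1 | 0 | 4 |
| Class=Yes | 6/9 | 1 | 1 | 2.67 |
| Total | 3.67 | 2 | 1 | 6.67 |

- Probability that Marital Status = Married is 3.67 / 6.67
- Probability that Marital Status = {Single, Divorced} is 3 / 6.67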
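The fractional totals in the table follow from the 6/9 weight carried by the record whose Refund value was missing. A small Python sketch with exact fractions reproduces them; the dictionary layout and variable names are chosen here for illustration.

```python
from fractions import Fraction

# Weighted record counts per Marital Status value and class.
counts = {
    "Married":  {"No": Fraction(3), "Yes": Fraction(6, 9)},
    "Single":   {"No": Fraction(1), "Yes": Fraction(1)},
    "Divorced": {"No": Fraction(0), "Yes": Fraction(1)},
}

column_totals = {status: sum(by_class.values()) for status, by_class in counts.items()}
grand_total = sum(column_totals.values())

p_married = column_totals["Married"] / grand_total
p_single_or_divorced = (column_totals["Single"] + column_totals["Divorced"]) / grand_total

print(float(column_totals["Married"]), float(grand_total))   # 3.67 and 6.67 after rounding
print(float(p_married), float(p_single_or_divorced))
```

The two probabilities sum to 1, so a record with a missing Marital Status can be sent down both branches with these weights.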

Group Assignment, Chapter 4

Problems: 5, 6, 7, 9, 10, 11

In addition to the hardcopy, prepare a softcopy for discussion.

Deadline: Tuesday (17 March 2008)
