


Automated Fitness Guided Fault Localization

Josh Wilkerson, Ph.D. candidate, Natural Computation Laboratory

Technical Background

Software testing
- Essential phase in software design
- Subject software to test cases to expose errors
- Locate errors in code
  - Fault localization
  - Most expensive component of software debugging [1]
- Tools and techniques available to assist
  - Automation of this process is a very active research area

Technical Background

Fitness Function (FF)
- Quantifies program performance
  - Sensitive to all objectives of the program
  - Graduated
- Can be generated from:
  - Formal/informal specifications
  - Oracle (i.e., software developer)
- Correct execution: high/maximum fitness
- Incorrect execution: quantifies how close the execution was to being correct
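As a rough illustration of what "graduated" and "sensitive to all objectives" can mean, the sketch below scores the output of a sorting program. The function name `sort_fitness`, the two objectives, and the choice to multiply the two scores are assumptions made for this example; they are not taken from the FGFL system.

```python
from collections import Counter


def sort_fitness(original, output):
    """Hypothetical graduated fitness in [0, 1] for a sorting program."""
    if not original and not output:
        return 1.0  # nothing to sort, nothing returned: treat as correct

    # Objective 1: the output should hold the same elements as the input.
    shared = sum((Counter(original) & Counter(output)).values())
    preserved = shared / max(len(original), len(output))

    # Objective 2: adjacent output elements should be in non-decreasing order.
    pairs = len(output) - 1
    if pairs > 0:
        in_order = sum(output[i] <= output[i + 1] for i in range(pairs))
        ordered = in_order / pairs
    else:
        ordered = 1.0

    # Maximum fitness only when both objectives are fully met.
    return preserved * ordered
```

A correct run scores 1.0, while an incorrect run lands somewhere below that depending on how many elements survived and how many adjacent pairs are in order, which is the "how close to correct" signal the slide describes.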

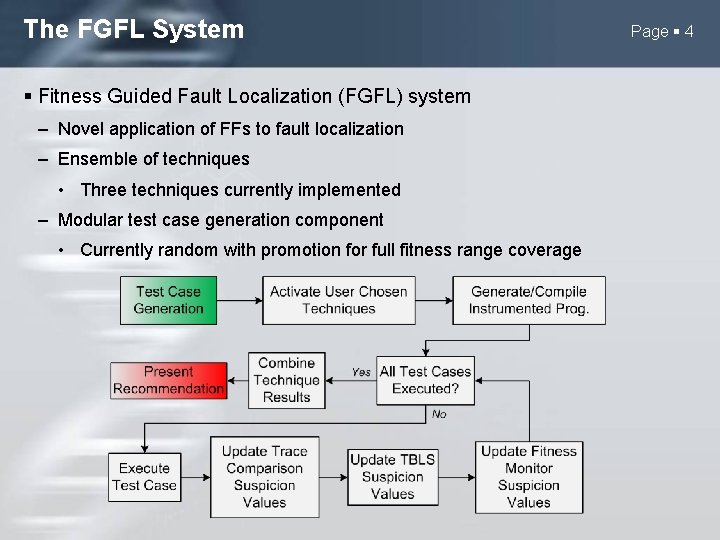

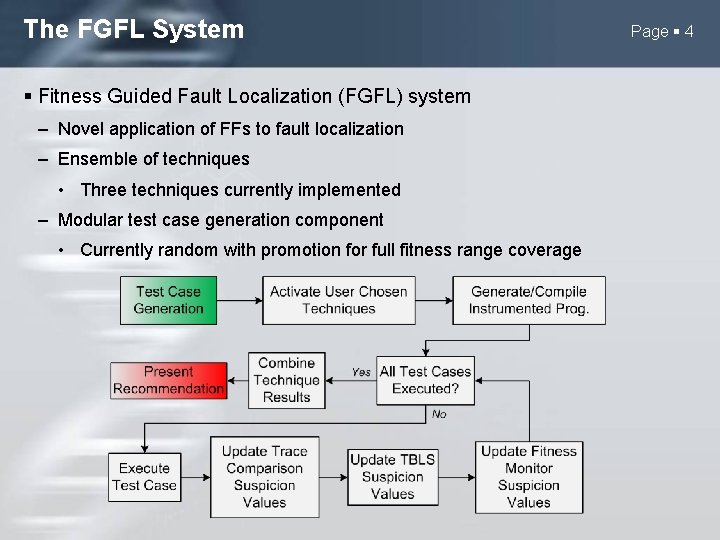

The FGFL System

Fitness Guided Fault Localization (FGFL) system
- Novel application of FFs to fault localization
- Ensemble of techniques
  - Three techniques currently implemented
- Modular test case generation component
  - Currently random with promotion for full fitness range coverage

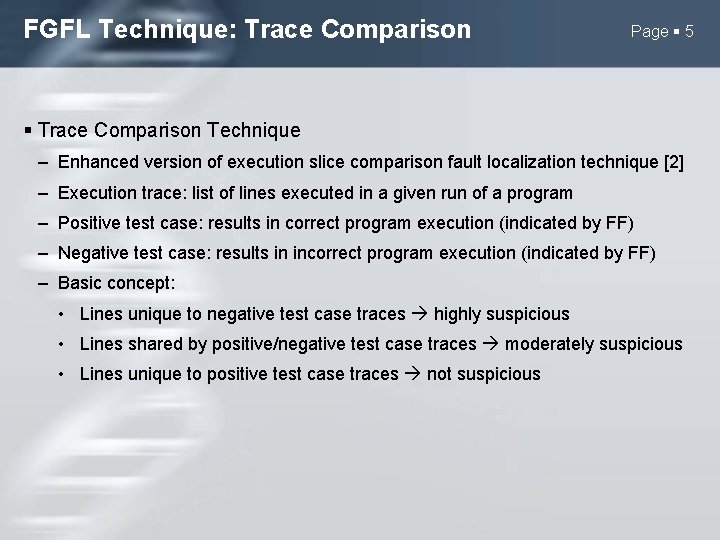

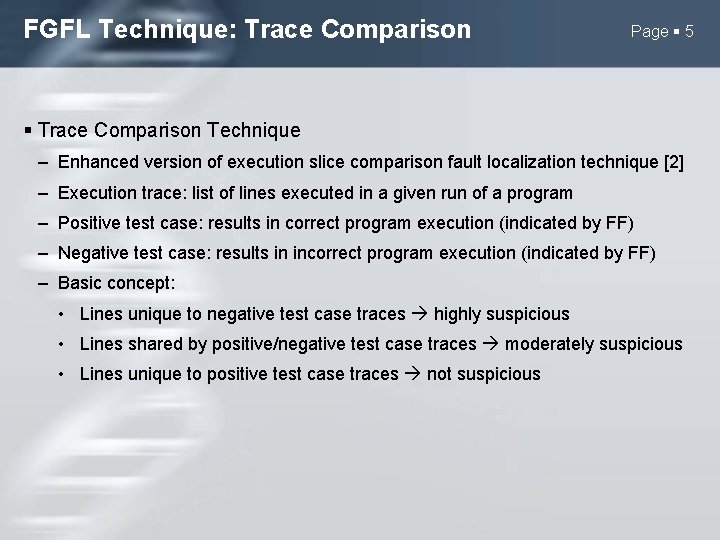

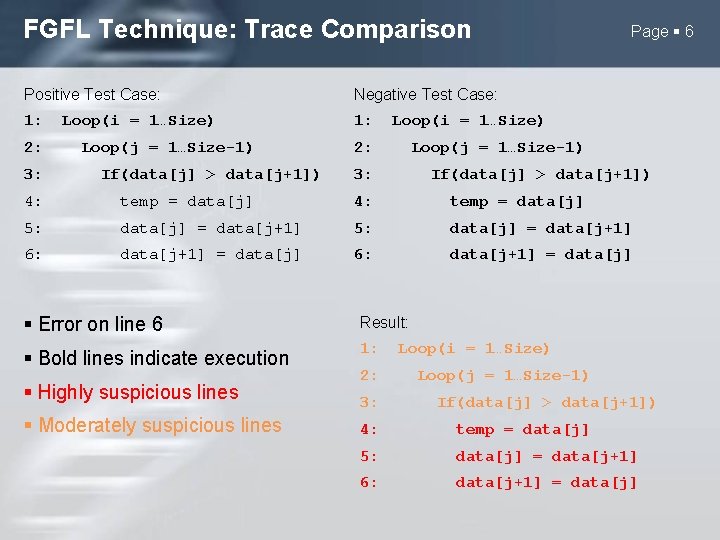

FGFL Technique: Trace Comparison

Trace Comparison Technique
- Enhanced version of the execution slice comparison fault localization technique [2]
- Execution trace: list of lines executed in a given run of a program
- Positive test case: results in correct program execution (indicated by FF)
- Negative test case: results in incorrect program execution (indicated by FF)
- Basic concept:
  - Lines unique to negative test case traces are highly suspicious
  - Lines shared by positive and negative test case traces are moderately suspicious
  - Lines unique to positive test case traces are not suspicious

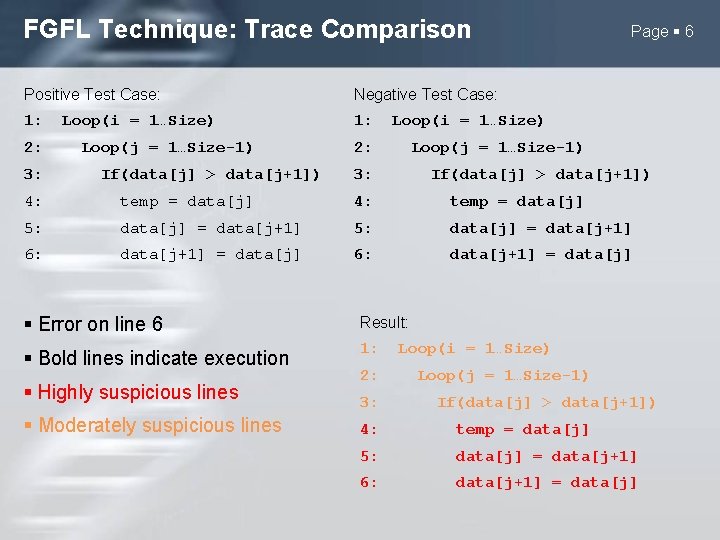

FGFL Technique: Trace Comparison

Positive test case (lines executed):

    1: Loop(i = 1…Size)
    2:   Loop(j = 1…Size-1)
    3:     If(data[j] > data[j+1])

Negative test case (lines executed; error on line 6):

    1: Loop(i = 1…Size)
    2:   Loop(j = 1…Size-1)
    3:     If(data[j] > data[j+1])
    4:       temp = data[j]
    5:       data[j] = data[j+1]
    6:       data[j+1] = data[j]

Result:
- Highly suspicious lines: 4, 5, 6 (executed only by the negative test case)
- Moderately suspicious lines: 1, 2, 3 (executed by both test cases)
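The basic concept of trace comparison can be sketched with set operations. This covers only the three-way classification stated on the slides, not the enhancements FGFL adds on top of execution slice comparison. The function name `classify_lines` and the representation of a trace as a list of line numbers are assumptions for illustration.

```python
def classify_lines(positive_traces, negative_traces):
    """Classify lines by which kind of test case executed them.

    Each trace is an iterable of executed line numbers (hypothetical format).
    """
    positive = set().union(*positive_traces)
    negative = set().union(*negative_traces)
    return {
        "high": sorted(negative - positive),      # unique to negative traces
        "moderate": sorted(negative & positive),  # shared by both
        "none": sorted(positive - negative),      # unique to positive traces
    }


# Traces for the six-line sorting example: the positive run never enters the If body.
result = classify_lines([[1, 2, 3]], [[1, 2, 3, 4, 5, 6]])
print(result)
```

For these two traces the sketch marks lines 4 to 6 as highly suspicious and lines 1 to 3 as moderately suspicious.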

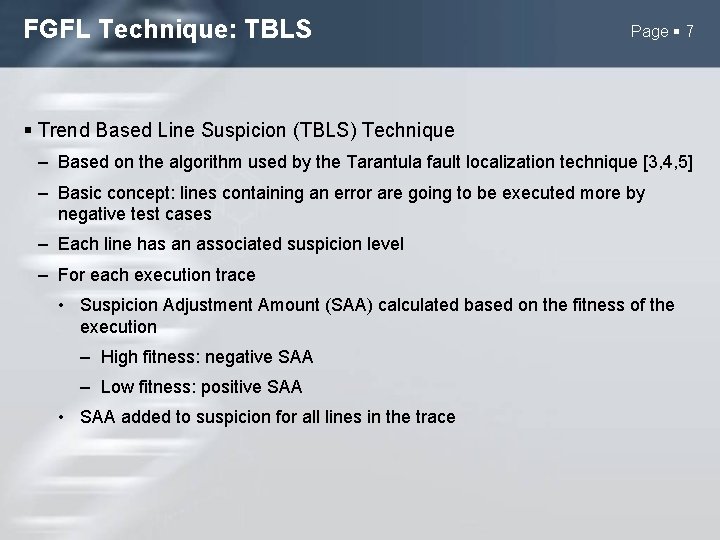

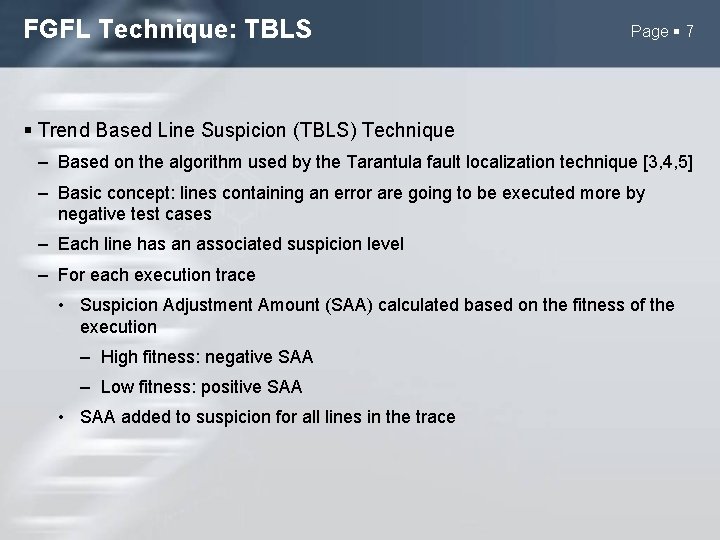

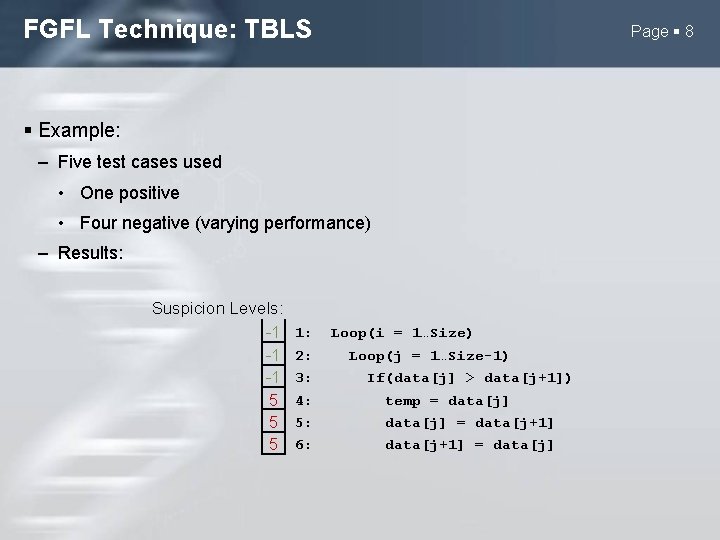

FGFL Technique: TBLS

Trend Based Line Suspicion (TBLS) Technique
- Based on the algorithm used by the Tarantula fault localization technique [3, 4, 5]
- Basic concept: lines containing an error are executed more often by negative test cases
- Each line has an associated suspicion level
- For each execution trace:
  - Suspicion Adjustment Amount (SAA) calculated based on the fitness of the execution
    - High fitness: negative SAA
    - Low fitness: positive SAA
  - SAA added to suspicion for all lines in the trace

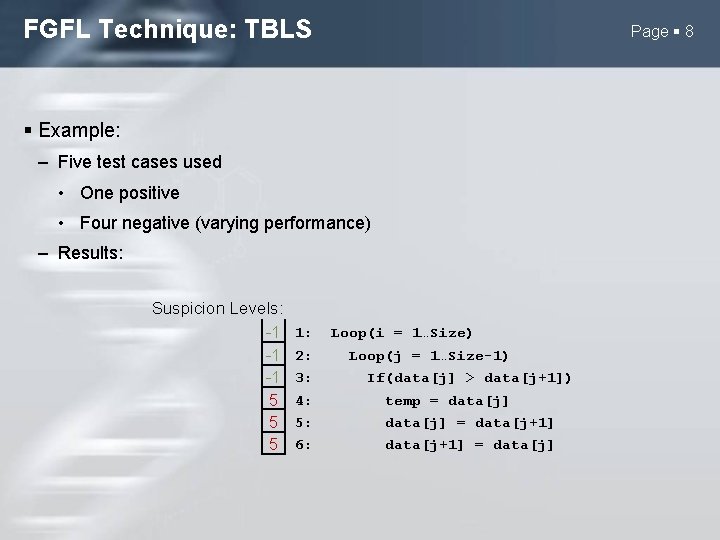

FGFL Technique: TBLS

Example:
- Five test cases used
  - One positive
  - Four negative (varying performance)
- Results (suspicion level, then program line):

    -1   1: Loop(i = 1…Size)
    -1   2:   Loop(j = 1…Size-1)
    -1   3:     If(data[j] > data[j+1])
     5   4:       temp = data[j]
     5   5:       data[j] = data[j+1]
     5   6:       data[j+1] = data[j]
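The TBLS update loop might look like the following sketch. The slides do not give the SAA formula, so the linear mapping in `suspicion_adjustment` (from +1 at zero fitness to -1 at maximum fitness) is purely an assumption, as are the function names and the choice to count each line once per trace. It is not expected to reproduce the suspicion levels shown in the example.

```python
def suspicion_adjustment(fitness, max_fitness=1.0):
    """Assumed SAA: +1 at zero fitness, falling linearly to -1 at max fitness."""
    return 1.0 - 2.0 * (fitness / max_fitness)


def tbls(traces, num_lines):
    """Accumulate suspicion per line.

    traces: list of (executed_lines, fitness) pairs (hypothetical format).
    """
    suspicion = {line: 0.0 for line in range(1, num_lines + 1)}
    for executed_lines, fitness in traces:
        saa = suspicion_adjustment(fitness)
        # Assumption: a line is adjusted once per trace, however often it ran.
        for line in set(executed_lines):
            suspicion[line] += saa
    return suspicion


traces = [
    ([1, 2, 3], 1.0),           # positive test case: SAA = -1
    ([1, 2, 3, 4, 5, 6], 0.0),  # badly failing test case: SAA = +1
    ([1, 2, 3, 4, 5, 6], 0.5),  # partially correct test case: SAA = 0
]
print(tbls(traces, 6))
```

Lines that run in every trace have their positive and negative adjustments offset each other, while lines that run mostly in low-fitness executions accumulate suspicion.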

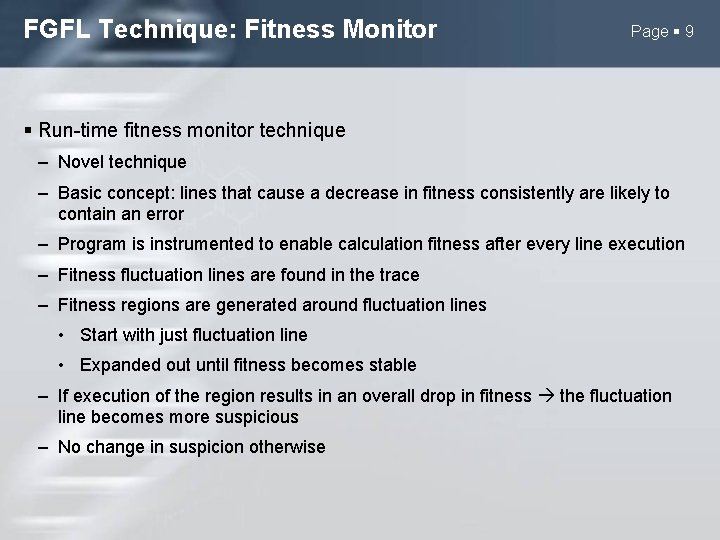

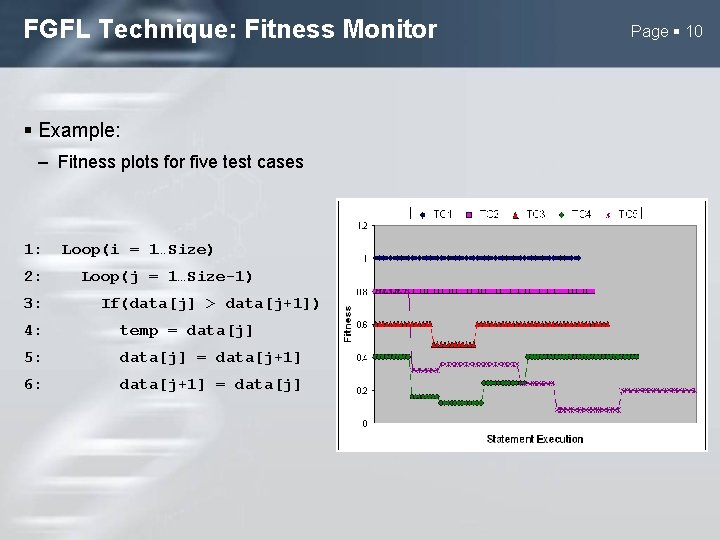

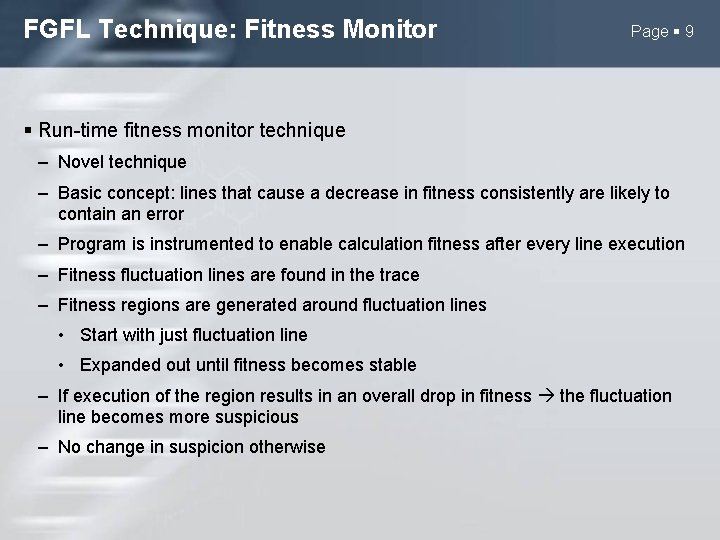

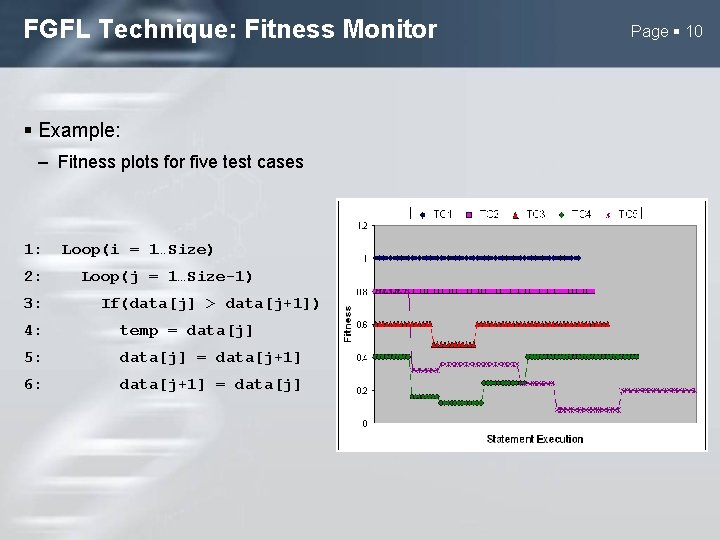

FGFL Technique: Fitness Monitor

Run-time fitness monitor technique
- Novel technique
- Basic concept: lines that consistently cause a decrease in fitness are likely to contain an error
- Program is instrumented to enable calculation of fitness after every line execution
- Fitness fluctuation lines are found in the trace
- Fitness regions are generated around fluctuation lines
  - Start with just the fluctuation line
  - Expand outward until fitness becomes stable
- If execution of the region results in an overall drop in fitness, the fluctuation line becomes more suspicious
- No change in suspicion otherwise

FGFL Technique: Fitness Monitor

Example:
- Fitness plots for five test cases

    1: Loop(i = 1…Size)
    2:   Loop(j = 1…Size-1)
    3:     If(data[j] > data[j+1])
    4:       temp = data[j]
    5:       data[j] = data[j+1]
    6:       data[j+1] = data[j]
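One possible reading of the region-growing step is sketched below. The trace format (a list of `(line, fitness_after_line)` pairs), the meaning of "stable" (the neighbouring step leaves fitness unchanged), and the fixed +1 suspicion increment are all assumptions for illustration; the slides do not specify them.

```python
def fitness_monitor(trace, initial_fitness):
    """Raise suspicion for lines whose fitness region ends in a net drop.

    trace: list of (line, fitness_after_line) pairs (hypothetical format).
    """
    # fitness[i] is the fitness before step i; fitness[i + 1] is the fitness after it.
    fitness = [initial_fitness] + [f for _, f in trace]
    changed = [fitness[i + 1] != fitness[i] for i in range(len(trace))]

    suspicion = {}
    for i, (line, _) in enumerate(trace):
        if not changed[i]:
            continue  # not a fluctuation line
        # Start with just the fluctuation step, then expand while fitness keeps moving.
        start = end = i
        while start > 0 and changed[start - 1]:
            start -= 1
        while end + 1 < len(trace) and changed[end + 1]:
            end += 1
        # An overall drop across the region makes the fluctuation line more suspicious.
        if fitness[end + 1] < fitness[start]:
            suspicion[line] = suspicion.get(line, 0) + 1
    return suspicion


# A dip that recovers (as a correct swap might produce) adds no suspicion.
print(fitness_monitor([(4, 0.8), (5, 0.6), (6, 0.8)], 0.8))
# A drop that persists adds suspicion to the line that caused it.
print(fitness_monitor([(3, 0.8), (4, 0.8), (5, 0.8), (6, 0.6), (2, 0.6)], 0.8))
```

Growing the region before judging it keeps a temporary dip inside a multi-line operation from being blamed on the line where the dip began.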

FGFL: Result Combination

- Technique results are combined using a voting system
- Each technique is given an equal number of votes
  - Number of votes equal to the number of lines in the program
  - Techniques do not have to use all votes
- Suspicion values are adjusted to reflect confidence in the result
  - Suspicion values scaled relative to the maximum suspicion possible for the technique
- Votes are applied proportional to the confidence of the result
- Method chosen to help reduce misleading results
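A minimal sketch of confidence-weighted voting follows. It assumes each technique may cast at most one vote per line, so its budget equals the number of lines, and that the vote it casts is the suspicion value divided by the technique's maximum possible suspicion. The slides do not state how votes are allotted, so this allocation rule, the function name `combine_votes`, and the input format are assumptions.

```python
def combine_votes(results, num_lines):
    """Combine per-technique suspicion into vote totals and a ranking.

    results: list of (suspicion_by_line, max_possible_suspicion) pairs,
    one per technique (hypothetical format).
    """
    totals = {line: 0.0 for line in range(1, num_lines + 1)}
    for suspicion, max_suspicion in results:
        if max_suspicion <= 0:
            continue  # technique cannot express confidence; casts no votes
        for line, value in suspicion.items():
            # Confidence in [0, 1]; non-suspicious lines receive no vote.
            confidence = max(0.0, min(1.0, value / max_suspicion))
            totals[line] += confidence
    ranking = sorted(totals, key=lambda line: totals[line], reverse=True)
    return totals, ranking


totals, ranking = combine_votes(
    [
        ({4: 1, 5: 1, 6: 1}, 1),  # technique that is fully confident in lines 4 to 6
        ({6: 2}, 4),              # technique that is half confident in line 6
    ],
    num_lines=6,
)
print(ranking[0], totals[6])
```

Scaling by the maximum possible suspicion puts techniques with different raw ranges on a common footing before their votes are summed, so a technique with a weak result contributes less.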

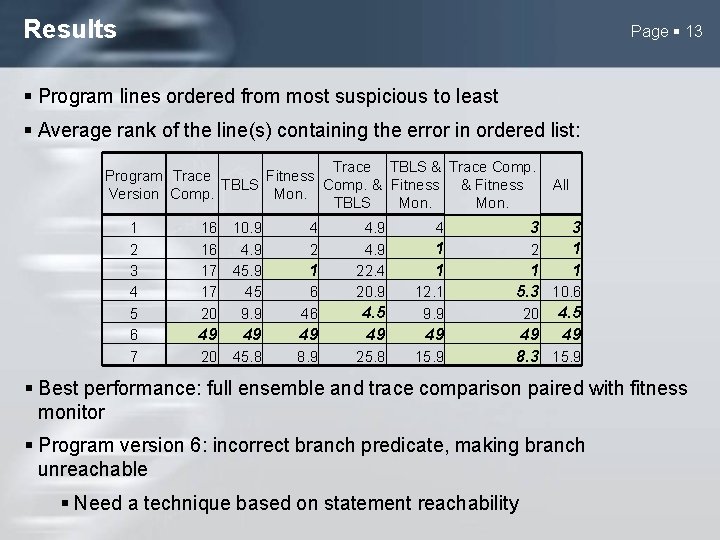

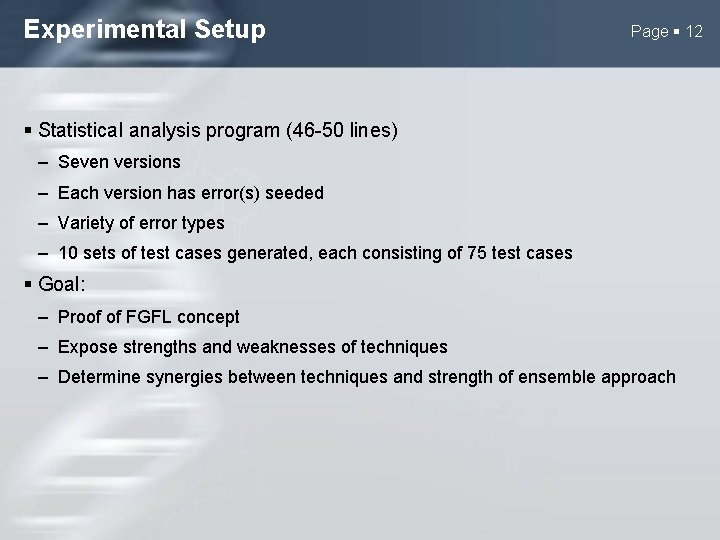

Experimental Setup

Statistical analysis program (46–50 lines)
- Seven versions
- Each version has error(s) seeded
- Variety of error types
- 10 sets of test cases generated, each consisting of 75 test cases

Goal:
- Proof of FGFL concept
- Expose strengths and weaknesses of techniques
- Determine synergies between techniques and strength of ensemble approach

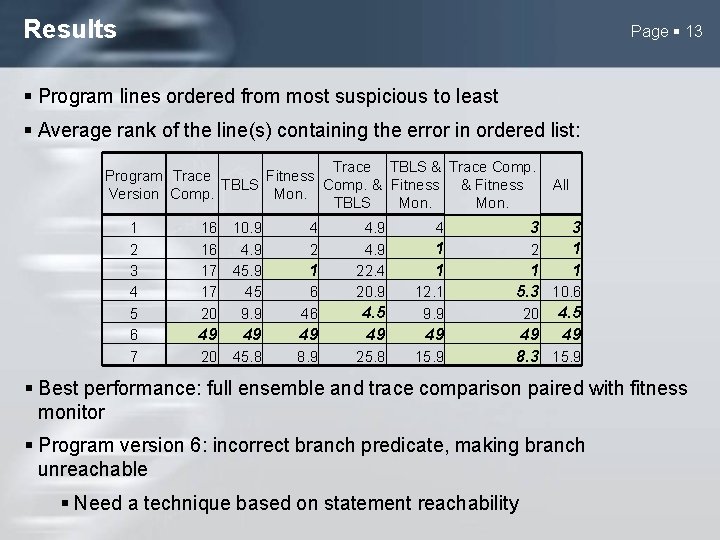

Results

Program lines are ordered from most suspicious to least. The table reports the average rank of the line(s) containing the error in the ordered list, for program versions 1 through 7.

Columns (techniques alone and in combination): Trace Comp.; TBLS; Fitness Mon.; Trace Comp. & TBLS; Trace Comp. & Fitness Mon.; TBLS & Fitness Mon.; All 3.

Table values in extraction order (row and column alignment not recoverable): 16 16 17 17 20 10.9 45 9.9 4 2 6 46 49 49 49 4.5 49 20 45.8 8.9 25.8 1 4.9 22.4 20.9 4 3 1 1 2 12.1 9.9 49 15.9 All 3 1 1 1 5.3 10.6 20 4.5 49 49 8.3 15.9

- Best performance: full ensemble, and trace comparison paired with fitness monitor
- Program version 6: incorrect branch predicate, making the branch unreachable
  - Need a technique based on statement reachability
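The evaluation metric, the average rank of the faulty line(s) in the suspicion-ordered list, could be computed roughly as follows. Tie handling is not described on the slide; this sketch breaks ties by the order in which lines appear in the input dictionary, which is an assumption, as are the function names.

```python
def error_rank(suspicion, faulty_lines):
    """Mean 1-based rank of the faulty lines, most suspicious line first."""
    # sorted() is stable, so tied lines keep their dictionary order (assumed tie rule).
    ordered = sorted(suspicion, key=lambda line: suspicion[line], reverse=True)
    ranks = [ordered.index(line) + 1 for line in faulty_lines]
    return sum(ranks) / len(ranks)


def average_rank(runs, faulty_lines):
    """Average the rank over several test-case sets (one suspicion dict per set)."""
    return sum(error_rank(s, faulty_lines) for s in runs) / len(runs)


run_a = {1: -1, 2: -1, 3: -1, 4: 5, 5: 5, 6: 5}  # faulty line 6 tied with lines 4 and 5
run_b = {1: 0, 2: 0, 3: 0, 4: 1, 5: 1, 6: 9}     # faulty line 6 ranked first
print(average_rank([run_a, run_b], faulty_lines=[6]))
```

A lower average rank means a developer inspecting lines in suspicion order would reach the error sooner; averaging over several test-case sets is one way non-integer ranks can arise.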

Ongoing Work

- Techniques are still under active development
- Investigating the enhancement of other state-of-the-art fault localization techniques through an FF
- Development of new techniques exploiting the FF
- Use of multi-objective FF with FGFL
- Testing FGFL on well-known benchmark problems


References

[1] I. Vessey, “Expertise in Debugging Computer Programs: An Analysis of the Content of Verbal Protocols,” IEEE Transactions on Systems, Man and Cybernetics, vol. 16, no. 5, pp. 621–637, September 1986.

[2] H. Agrawal, J. R. Horgan, S. London, and W. E. Wong, “Fault localization using execution slices and dataflow sets,” in Proceedings of the 6th IEEE International Symposium on Software Reliability Engineering, 1995, pp. 143–151.

[3] J. A. Jones, M. J. Harrold, and J. Stasko, “Visualization of test information to assist fault localization,” in Proceedings of the 24th International Conference on Software Engineering. New York, NY, USA: ACM, 2002, pp. 467–477.

[4] J. A. Jones and M. J. Harrold, “Empirical evaluation of the Tarantula automatic fault-localization technique,” in Proceedings of the 20th IEEE/ACM International Conference on Automated Software Engineering. New York, NY, USA: ACM, 2005, pp. 273–282.

[5] J. A. Jones, “Semi-automatic fault localization,” Ph.D. dissertation, Georgia Institute of Technology, 2008.

Questions?