
- Slides: 34

Workshop CAT Technology & Localization

Localization

The term LOCALIZATION “refers to the process of customizing or adapting a product for a target language and culture”. According to Thibodeau (2000), the main reason for localizing a product is economic. Why?

Localization

- A product that is not making a profit in the domestic market may perform better in another market, especially in markets that support higher prices.
- A non-localized product is less likely to survive over the long run: localization can extend a product’s life cycle.

Localization & CAT Technology

Many companies now aim to launch a product or a web site and its accompanying documentation at the same time. And time is money. Therefore, the translator is sometimes required to work very quickly (and cheaply). This is where technology comes in handy!

CAT vs MT

- Computer-Aided Translation (CAT): a type of translation carried out by a human translator using computerized tools.
- Machine Translation (MT): a process whereby a computer program translates texts from a source language (SL) into a target language (TL).

The major distinction between CAT and MT lies with who is primarily responsible for the actual task of translation:

- CAT: the translator
- MT: the computer

CAT Technology

CAT stands for “Computer-Aided Translation”, and familiarity with CAT technology is becoming a prerequisite for professional translators. And despite what some might think, the development of CAT tools has not reduced the need for language professionals… On the contrary, it has created jobs for translators who are skilled at using technology!

CAT Technology

Bowker (2002) underlines that “CAT technology can be understood to include any type of computerized tool that translators use to help them do their job” (in its broadest definition, word processors, the WWW, grammar checkers, etc. can all be considered CAT technology).

- Optical character recognition (OCR)
- Voice recognition (VR)
- Corpus-analysis tools
- Translation memories
- Terminology management systems
- Localization and web-page translation tools
- Machine translation systems

OCR and VR

To take advantage of translation technology, the text needs to be in electronic form. Optical character recognition (OCR) and voice recognition (VR) are used to turn material that is not machine-readable into electronic documents: OCR converts hard-copy documents, and VR converts dictated speech. Can you guess the PROS & CONS of using OCR and VR?

Corpora and Corpus-analysis tools

What is a CORPUS? “In its broadest sense, a corpus is a collection of texts or utterances that is used as a basis for conducting some type of linguistic investigation”.

Translators usually:

- Compile and analyse corpora for terminological research
- Consult corpora of parallel texts to produce a target text (TT) with the appropriate style, format, terminology and phraseology

Electronic corpora

A corpus in electronic form is an electronic corpus, and its advantage is that it can be manipulated by a computer (and quickly scanned and analysed!). It must be noted that a corpus is not a random collection of texts. The texts are selected according to explicit criteria: genre, topic, time frame, etc. Representativeness is a defining feature of a corpus!

You can find some corpora at: http://www.accademiadellacrusca.it/it/link-utili/banche-dati-dellitalianoscritto-parlato

Types of corpora

- General/reference vs. specialized corpora
- Written vs. spoken corpora
- Synchronic vs. diachronic corpora
- Monolingual vs. multilingual corpora
- Comparable vs. parallel corpora
- Native vs. learner corpora
- Etc.

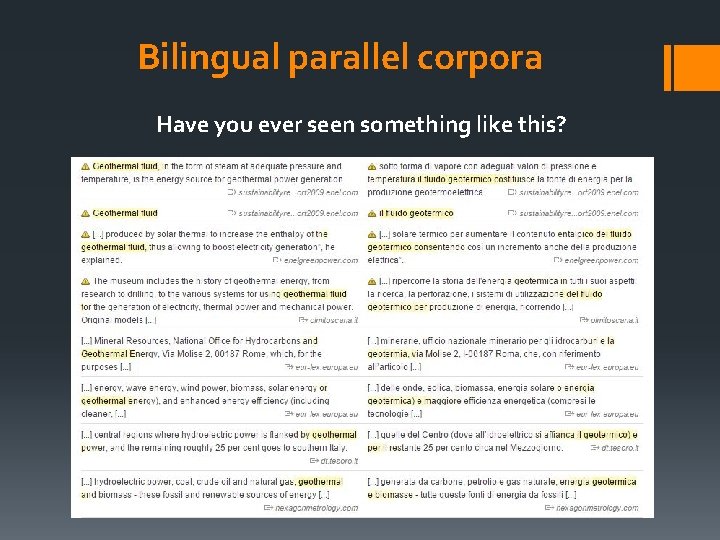

Bilingual parallel corpora

A bilingual parallel corpus (also called “bitext”) can be a very powerful tool for a translator: it consists of source texts aligned with their translations. Do you know any examples of bitexts?

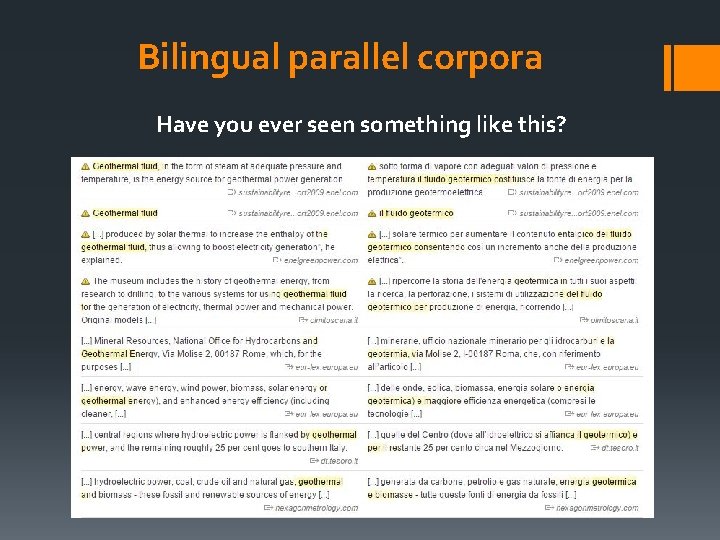

Bilingual parallel corpora

Have you ever seen something like this?

Corpus-analysis tools

A corpus-analysis tool is a piece of software used to access and display the information contained in a corpus. It typically contains features that allow the user to generate and manipulate:

- Word-frequency lists
- Concordances
- Collocations

Word-frequency lists

The most basic feature of a corpus-analysis tool. A word-frequency list allows the user to discover how many different words are in a corpus and how often they appear, and it can be manipulated in many ways:

- Lemmatized lists
- Stop lists

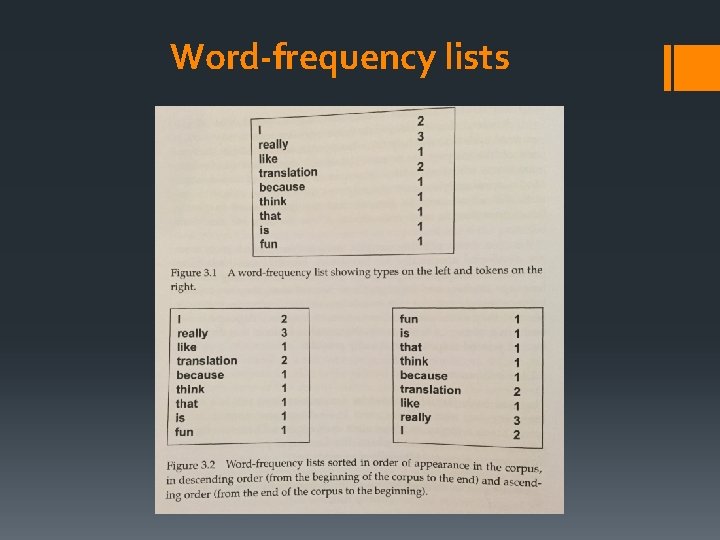

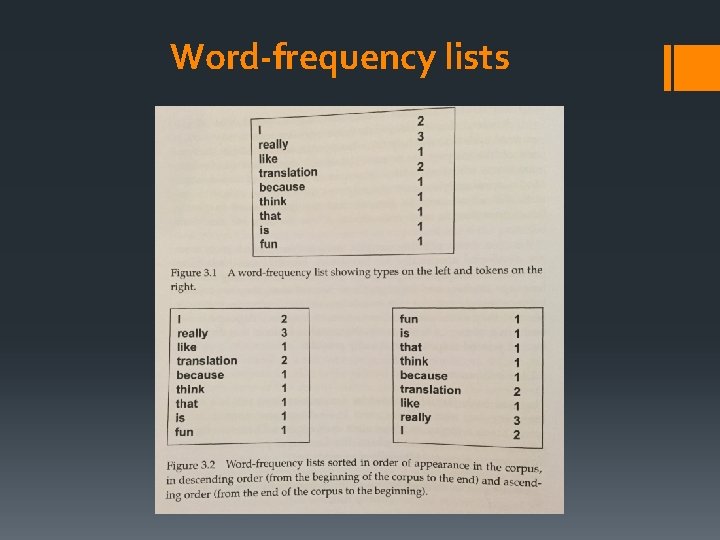

Word-frequency lists

Let’s take this example (from Bowker, 2002): “I really like translation because I think that translation is really, really fun”.

The sentence has 13 words, so the corpus contains 13 tokens. But some words appear more than once (I, translation, really), and therefore this corpus contains only 9 different words, or types. In a word-frequency list, the number of tokens is shown beside each type.
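The token and type counts for this sentence can be checked with a few lines of Python. This is only a minimal sketch, not how any particular corpus-analysis tool works: the tokenizer (a regular expression that treats runs of letters and apostrophes as words) and the names `tokenize`, `sentence` and `frequency_list` are assumptions made for the illustration. Counting is case-sensitive here.

```python
import re
from collections import Counter

def tokenize(text):
    # Assumed, very simple tokenizer: runs of letters/apostrophes are words.
    return re.findall(r"[A-Za-z']+", text)

sentence = ("I really like translation because I think that "
            "translation is really, really fun")

tokens = tokenize(sentence)
frequency_list = Counter(tokens)  # maps each type to its number of tokens

print(len(tokens))          # number of tokens
print(len(frequency_list))  # number of types
for word_type, count in frequency_list.most_common():
    print(f"{count:>2}  {word_type}")
```

The loop prints the list sorted by frequency, which is one of the usual ways a frequency list is displayed.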

Word-frequency lists

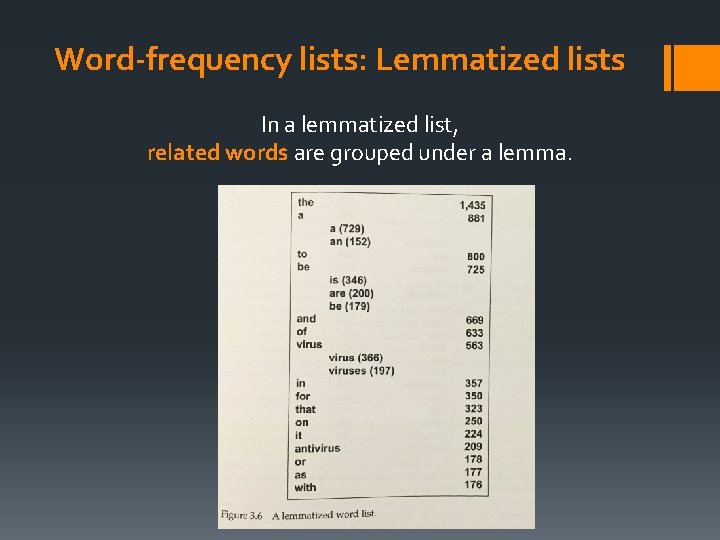

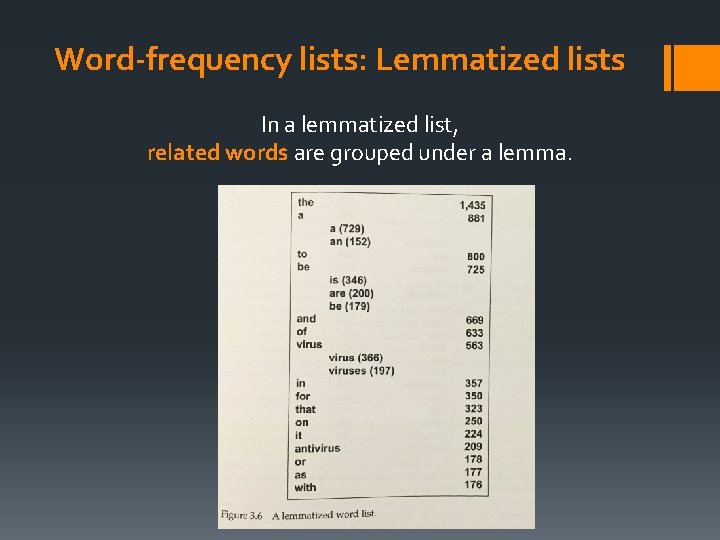

Word-frequency lists: Lemmatized lists

In a lemmatized list, related words are grouped under a lemma.
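As a rough illustration of grouping related forms under a lemma, the sketch below counts tokens by lemma instead of by surface form. The lemma table `LEMMAS` is hypothetical and written by hand for this example only; real tools rely on full morphological dictionaries or lemmatizers.

```python
from collections import Counter

# Hypothetical lemma table, written by hand for this example only.
LEMMAS = {
    "translate": "translate", "translates": "translate",
    "translated": "translate", "translating": "translate",
    "go": "go", "goes": "go", "went": "go", "gone": "go",
}

def lemmatized_list(tokens):
    # Count each token under its lemma; unknown words count as themselves.
    return Counter(LEMMAS.get(t.lower(), t.lower()) for t in tokens)

tokens = "She translates what he translated and went where they go".split()
counts = lemmatized_list(tokens)

for lemma, count in counts.most_common():
    print(f"{count:>2}  {lemma}")
```

Here "translates" and "translated" are both counted under "translate", and "went" and "go" under "go".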

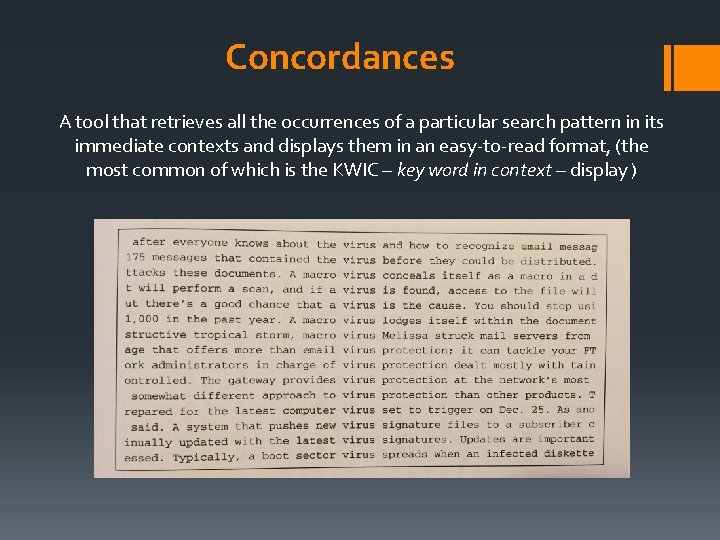

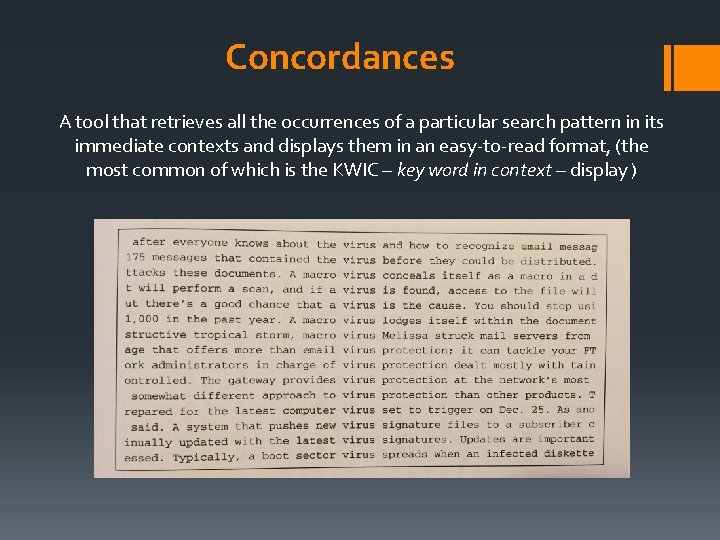

Concordances

A concordancer is a tool that retrieves all the occurrences of a particular search pattern in their immediate contexts and displays them in an easy-to-read format, the most common of which is the KWIC (key word in context) display.
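A KWIC display can be approximated in a few lines. The sketch below is only an illustration under simple assumptions: the function name `kwic`, the context width of 30 characters and the three-sentence sample `corpus` are all invented for the example, and the search is a plain whole-word match.

```python
import re

def kwic(text, keyword, width=30):
    """Return one line per occurrence of keyword, with its left and right context."""
    lines = []
    pattern = r"\b" + re.escape(keyword) + r"\b"
    for match in re.finditer(pattern, text, flags=re.IGNORECASE):
        left = text[max(0, match.start() - width):match.start()]
        right = text[match.end():match.end() + width]
        # Right-align the left context so the keywords line up in one column.
        lines.append(f"{left:>{width}} [{match.group()}] {right}")
    return lines

corpus = ("A translation memory stores source texts and their translations. "
          "The translator can reuse a stored translation when a new segment matches. "
          "Each translation is aligned with its source segment.")

for line in kwic(corpus, "translation"):
    print(line)
```

Because the pattern matches whole words only, "translations" is not retrieved; a real concordancer would normally let the user choose between exact and wildcard searches.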

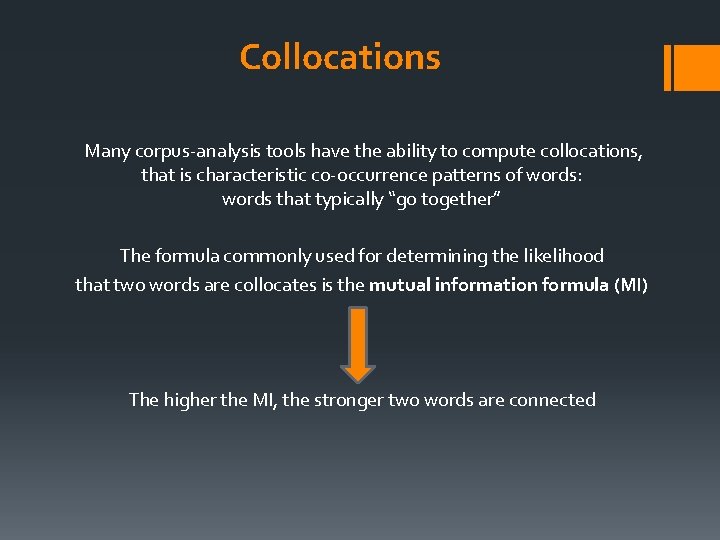

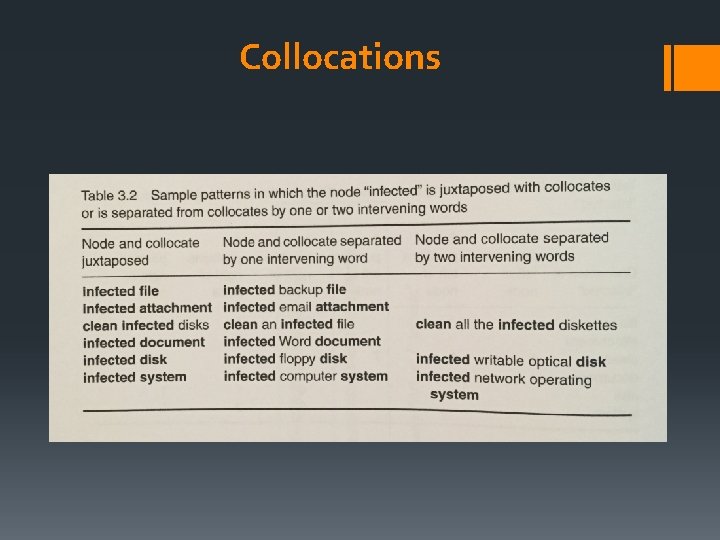

Collocations

Many corpus-analysis tools have the ability to compute collocations, that is, characteristic co-occurrence patterns of words: words that typically “go together”. The formula commonly used for determining the likelihood that two words are collocates is the mutual information (MI) formula. The higher the MI, the more strongly the two words are connected.
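The slides do not spell the formula out. A common formulation in corpus linguistics, assumed here, compares how often two words actually co-occur with how often they would co-occur by chance: MI = log2(f(x,y) · N / (f(x) · f(y))), where N is the corpus size. The sketch below uses invented frequency counts, and individual tools may count co-occurrences differently (for example within a fixed window of words).

```python
import math

def mutual_information(pair_freq, freq_x, freq_y, corpus_size):
    # MI = log2(observed co-occurrences / co-occurrences expected by chance)
    expected = freq_x * freq_y / corpus_size
    return math.log2(pair_freq / expected)

# Invented counts for a corpus of 1,000,000 tokens.
N = 1_000_000
strong = mutual_information(pair_freq=80, freq_x=200, freq_y=400, corpus_size=N)
weak = mutual_information(pair_freq=80, freq_x=20_000, freq_y=40_000, corpus_size=N)

print(round(strong, 2), round(weak, 2))
```

Both pairs co-occur 80 times, but the first pair is made of rare words, so 80 co-occurrences is far more than chance would predict and its MI is much higher.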

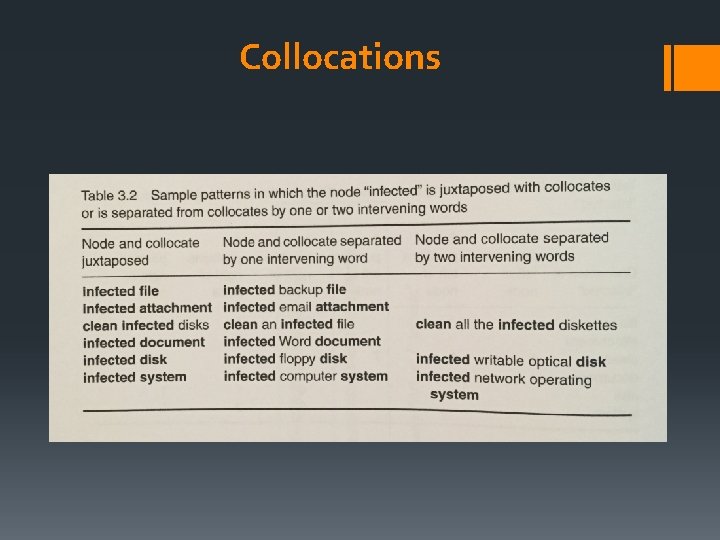

Collocations

Translation-Memory Systems

A TM is a type of linguistic database that is used to store source texts and their translations, explicitly aligned. Does it ring a bell? The resulting structure of a Translation Memory is a bilingual parallel corpus (or bitext).

Translation-Memory Systems

Using a TM system, the translator will be able to re-use and “recycle” previously translated segments. These systems work by automatically comparing new source segments against a database of translations. If a matching segment is found, the system will propose the “old” translation to the user, who will then decide whether to use or discard it.

SEGMENT: the basic unit in a TM system. But deciding what constitutes a segment isn’t easy!
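Why segmentation is hard can be seen with a naive rule. The sketch below assumes, purely for illustration, that a segment ends at a full stop, exclamation mark or question mark followed by whitespace; the function name `naive_segments` and the sample text are invented.

```python
import re

def naive_segments(text):
    # Assumed rule: a segment ends at ., ! or ? followed by whitespace.
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

text = "Contact Dr. Smith before 5 p.m. on Friday. Delivery takes 3 days."

for segment in naive_segments(text):
    print(repr(segment))
```

A human reader sees two sentences, but the rule also cuts after the abbreviations "Dr." and "p.m.", which is why TM systems usually let the user adjust segmentation rules and abbreviation lists.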

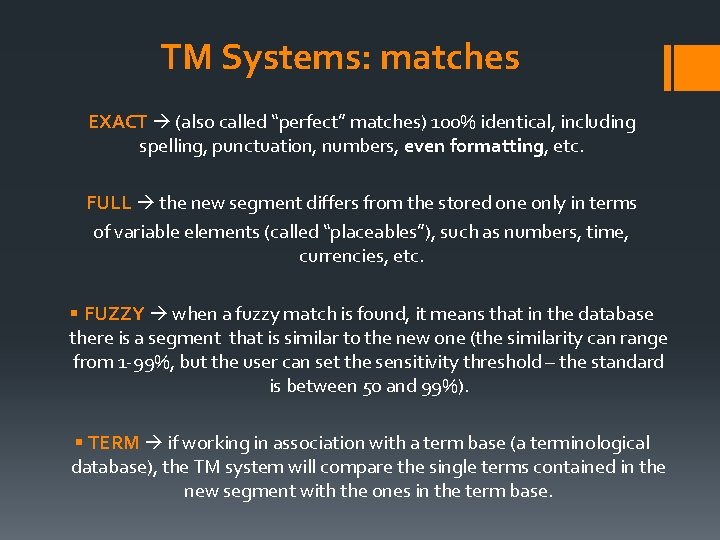

TM Systems: matches

Matches: correspondences between the new SL segment and one or more “old” segments (with their translations) contained in the database. The most common types are:

- EXACT matches
- FULL matches
- FUZZY matches
- TERM matches

TM Systems: matches

- EXACT (also called “perfect” matches): 100% identical, including spelling, punctuation, numbers and even formatting.
- FULL: the new segment differs from the stored one only in terms of variable elements (called “placeables”), such as numbers, times, currencies, etc.
- FUZZY: the database contains a segment that is similar, but not identical, to the new one (the similarity can range from 1 to 99%, but the user can set the sensitivity threshold; the standard is between 50 and 99%).
- TERM: if working in association with a term base (a terminological database), the TM system will compare the individual terms contained in the new segment with the ones in the term base.
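The first three match types can be sketched as a small classifier. This is a simplified illustration, not the algorithm of any commercial TM system: the similarity score comes from Python's `difflib.SequenceMatcher` as a stand-in for a vendor's own measure, only numbers are treated as placeables, the 70% threshold is an arbitrary choice within the range mentioned above, and term matches are left out.

```python
import re
from difflib import SequenceMatcher

# Assumption: only numbers count as placeables in this sketch.
PLACEABLE = re.compile(r"\d+(?:[.,:]\d+)*")

def classify(new, stored, threshold=0.70):
    """Return (match type, similarity) for a new segment and a stored one."""
    if new == stored:
        return "exact", 1.0
    # Mask numbers so segments that differ only in placeables compare equal.
    if PLACEABLE.sub("<n>", new) == PLACEABLE.sub("<n>", stored):
        return "full", 1.0
    score = SequenceMatcher(None, new, stored).ratio()
    if score >= threshold:
        return "fuzzy", score
    return "none", score

stored = "Press the button for 5 seconds."
for new in ["Press the button for 5 seconds.",
            "Press the button for 10 seconds.",
            "Press the red button for 5 seconds.",
            "Switch off the device."]:
    print(classify(new, stored), "<-", new)
```

Raising `threshold` makes the system propose fewer but closer matches; lowering it retrieves more candidates that need heavier editing.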

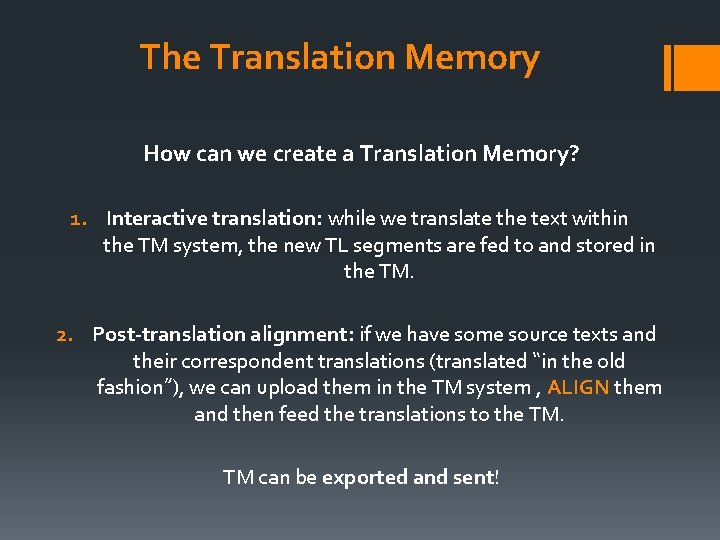

The Translation Memory

How can we create a Translation Memory?

1. Interactive translation: while we translate the text within the TM system, the new TL segments are fed to and stored in the TM.
2. Post-translation alignment: if we have some source texts and their corresponding translations (translated “the old-fashioned way”), we can upload them into the TM system, ALIGN them and then feed the translations to the TM.

A TM can be exported and sent!
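Post-translation alignment can be pictured as pairing each source segment with its translation and storing the pair. The sketch below assumes the simplest possible case, where both texts have the same number of segments in the same order; the names `align` and `translation_memory` and the English-Italian sample segments are invented for the illustration.

```python
def align(source_segments, target_segments):
    """Pair segments by position; real aligners also handle 1:2 and 2:1 cases."""
    if len(source_segments) != len(target_segments):
        raise ValueError("segment counts differ: manual alignment needed")
    return list(zip(source_segments, target_segments))

translation_memory = {}  # source segment -> stored translation

source = ["Open the file.", "Save your changes."]
target = ["Apri il file.", "Salva le modifiche."]

for src, tgt in align(source, target):
    translation_memory[src] = tgt  # feed each aligned pair to the TM

print(translation_memory.get("Save your changes."))
```

In practice translators merge and split sentences, so alignment tools propose pairings and ask the user to check and correct them before the pairs are stored.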

Examples of TM systems

- SDL TRADOS STUDIO (http://www.sdl.com/cxc/language/translation-productivity/trados-studio)
- WORDFAST PRO (http://www.wordfast.com/products_wordfast_pro_3)
- MEMOQ (https://www.memoq.com/)

Freeware:

- OMEGA T (http://www.omegat.org/it/omegat.html)
- WORDFAST ANYWHERE (https://www.freetm.com/)

Suitability

Given that a TM system allows the user to re-use previously translated work, in your opinion, which kinds of texts are more suitable for inclusion in a TM?

Suitability

The most suitable texts for a TM are repetitive and highly specialized texts, and texts that will be updated or revised:

- Texts with internal repetitions
- Revisions
- Recycled texts
- Updates

Pros & cons

According to Bowker (2002), the first thing to take into consideration is that an empty TM is of NO use. The performance of the TM system is dependent on the scope and quality of the existing database, and the quality of the translations stored in the DB is dependent on the translator’s skills!

Pros & cons

- PROS: it saves you time… but… CONS: if you can’t use the software properly, it’s time-consuming! You will need a few weeks’ training to be able to use a TM system in a way that saves you time, instead of making you lose time!
- PROS: it improves consistency (internal and external)… but… CONS: the rigidity of maintaining the ST’s segment order in the TT may affect the naturalness of the translation.

Further considerations

- TM systems are often quite expensive (even though prices have been dropping and a few free systems are emerging)
- They tend to have “high” minimum requirements to work properly on a PC (a lot of RAM and a good CPU)
- Different systems work with different formats (even though some standards are emerging, e.g. .TMX for TMs)
- Some languages are easier to process than others (especially when it comes to handling segmentation)
- Using TM systems affects payments, as clients may want to pay less for exact and fuzzy matches (but isn’t it fair, in a way?)
- A “full” TM is an asset, and issues of ownership may arise
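To give an idea of what a TMX exchange file looks like, the sketch below writes one translation unit with Python's standard `xml.etree.ElementTree` module. It is a rough illustration rather than a validated exporter: the header attributes follow the TMX 1.4 layout as far as I recall it and should be checked against the specification, and the function name `to_tmx`, the tool name `example-script` and the sample segments are invented.

```python
import xml.etree.ElementTree as ET

# ElementTree serializes this qualified name as the attribute xml:lang.
XML_LANG = "{http://www.w3.org/XML/1998/namespace}lang"

def to_tmx(pairs, src_lang="en", tgt_lang="it"):
    tmx = ET.Element("tmx", version="1.4")
    ET.SubElement(tmx, "header", {
        "creationtool": "example-script",  # hypothetical tool name
        "creationtoolversion": "0.1",
        "segtype": "sentence",
        "o-tmf": "none",
        "adminlang": "en",
        "srclang": src_lang,
        "datatype": "plaintext",
    })
    body = ET.SubElement(tmx, "body")
    for source, target in pairs:
        tu = ET.SubElement(body, "tu")  # one translation unit per aligned pair
        for lang, text in ((src_lang, source), (tgt_lang, target)):
            tuv = ET.SubElement(tu, "tuv", {XML_LANG: lang})
            ET.SubElement(tuv, "seg").text = text
    return ET.tostring(tmx, encoding="unicode")

tmx_text = to_tmx([("Open the file.", "Apri il file.")])
print(tmx_text)
```

Because the file is plain XML, a memory built in one system can in principle be imported into another, which is the point of having a shared format.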

Bibliography

BOWKER, L. (2002). Computer-Aided Translation Technology: A Practical Introduction. Ottawa: University of Ottawa Press.

THANKS FOR YOUR ATTENTION… and good luck!

Prof. Laura Liucci – laura.liucci@gmail.com