
AppInsight: Mobile App Performance Monitoring in the Wild
Microsoft Research
Chiraphat Chaiphet, Hsin-Yu Cheng
UCL Computer Science, CS GZ06
11th March 2016

Motivation
● Mobile apps face:
  ○ A wide variety of environmental conditions in the wild
  ○ Different hardware and OS versions
  ○ A wide range of user interactions
  → Difficult to simulate in the lab
● User-perceived delay
Developers therefore need data about how their apps perform in the wild in order to maintain and improve app quality.

AppInsight Design Principles
● Low overhead
  ○ Does not slow down app performance
● Zero effort
  ○ Developers don't need to write any code
  ○ Done by rewriting app binaries
  ○ No source code required
● Immediately deployable
  ○ No changes to the mobile OS or runtime

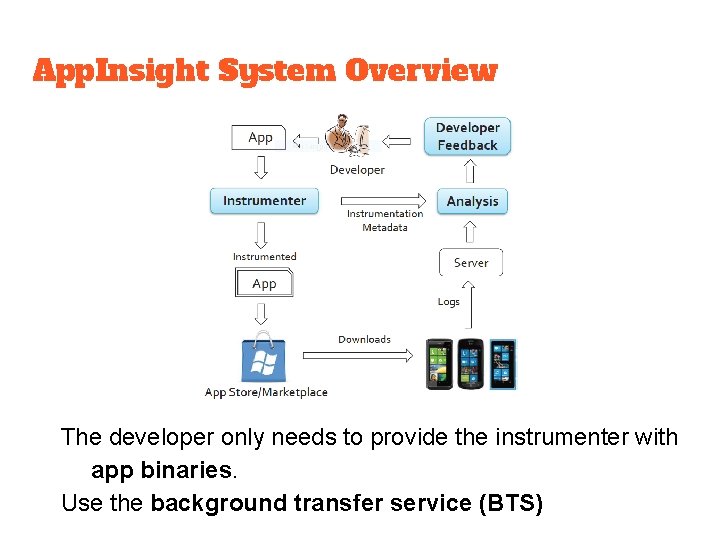

AppInsight System Overview
The developer only needs to provide the instrumenter with the app binaries. Instrumented apps upload their traces using the Background Transfer Service (BTS).

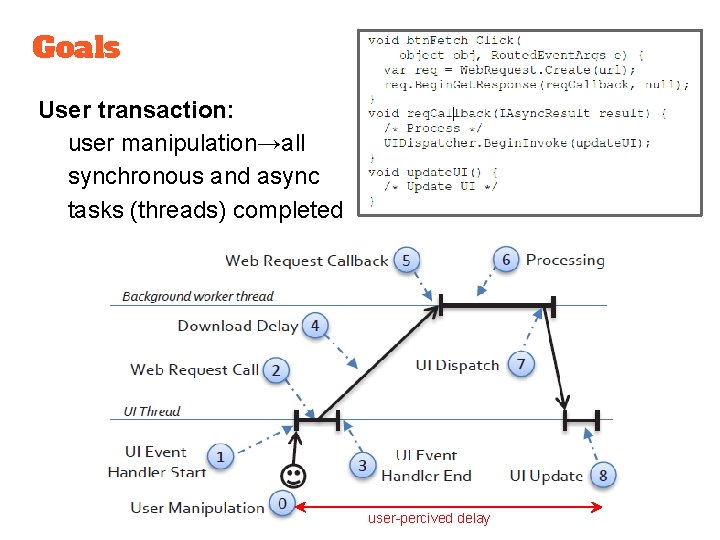

Goals: User Transaction
A user transaction runs from a user manipulation until all the synchronous and asynchronous tasks (threads) it triggers have completed. Its duration is the user-perceived delay.

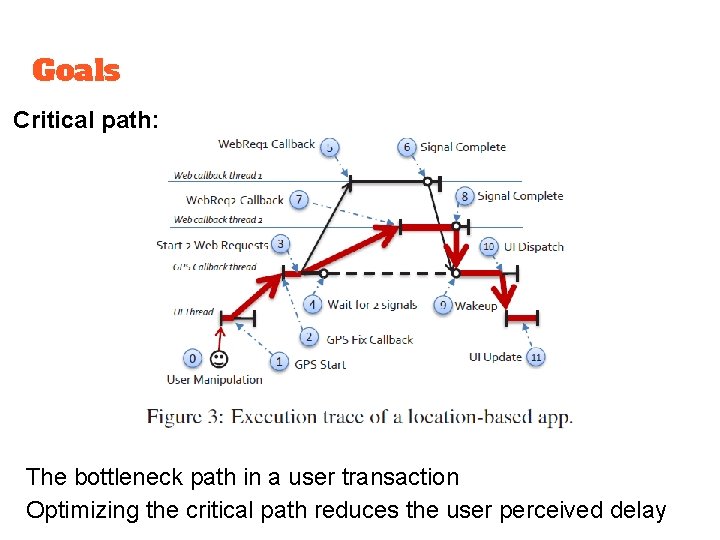
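Following the slide's definition, a transaction ends only when the last task it triggered finishes, so the user-perceived delay is measured from the manipulation to that point. A minimal Python sketch, assuming each task is already known to belong to the transaction; the `Task` type, the task names and the millisecond timestamps are hypothetical:

```python
from dataclasses import dataclass


@dataclass
class Task:
    """One synchronous or asynchronous piece of work triggered by a user manipulation."""
    name: str
    thread: str
    start_ms: float
    end_ms: float


def user_perceived_delay(manipulation_ms: float, tasks: list[Task]) -> float:
    """Delay from the user manipulation until the last triggered task completes."""
    if not tasks:
        return 0.0
    return max(task.end_ms for task in tasks) - manipulation_ms


# Hypothetical transaction: the click handler returns quickly, but the
# transaction lasts until the background download and the UI update finish.
tasks = [
    Task("ClickHandler", "UI", 0.0, 5.0),
    Task("DownloadCallback", "background", 400.0, 520.0),
    Task("DisplayScore", "UI", 525.0, 530.0),
]
print(user_perceived_delay(0.0, tasks))  # 530.0
```

Timing only `ClickHandler` would report 5 ms in this example, which is why asynchronous work has to be followed across threads.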

Goals: Critical Path
The critical path is the bottleneck path in a user transaction. Optimizing the critical path reduces the user-perceived delay.

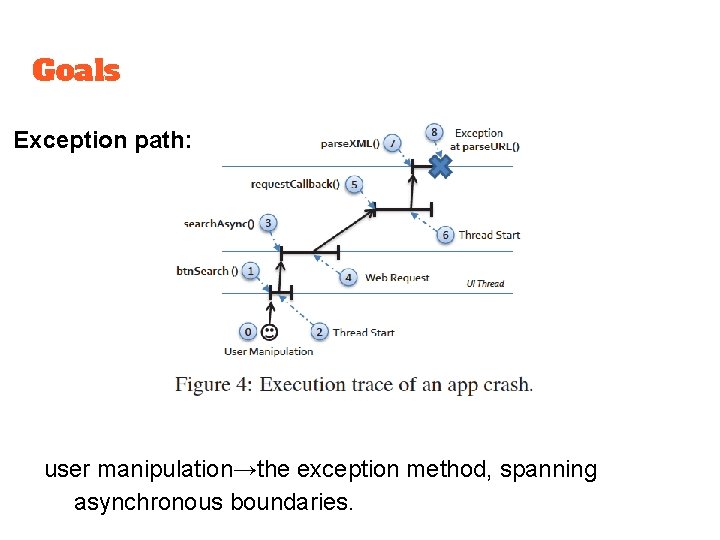

Goals: Exception Path
The exception path runs from the user manipulation to the method that threw the exception, spanning asynchronous boundaries.

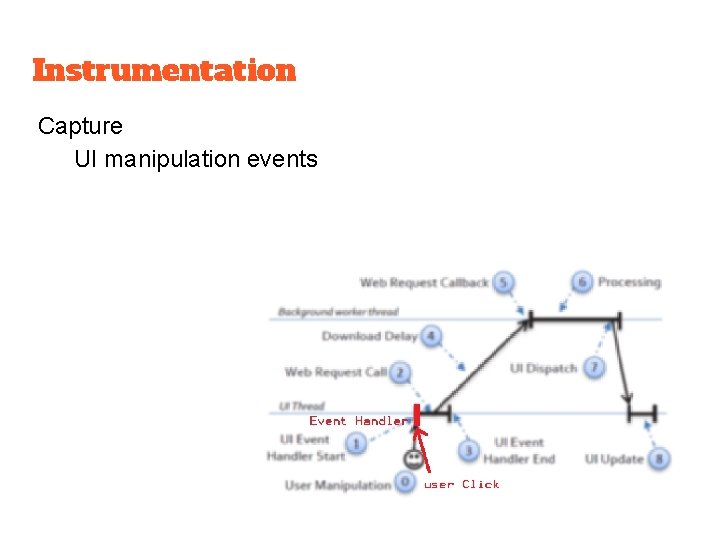

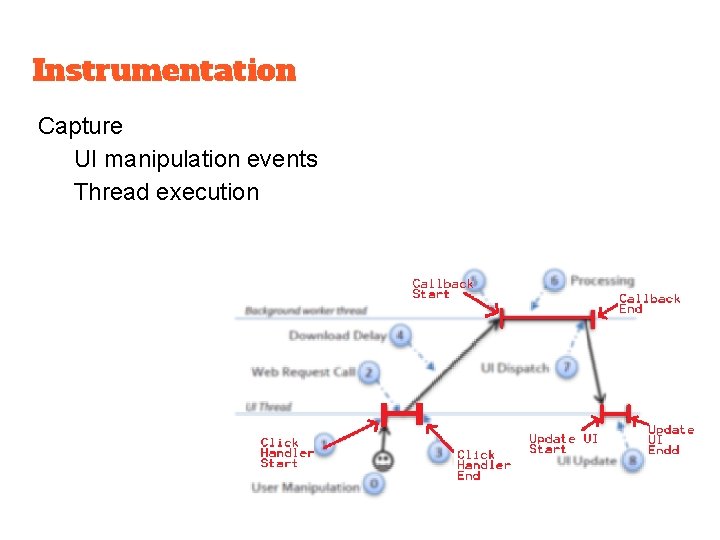

Instrumentation: capture UI manipulation events

Instrumentation: thread execution

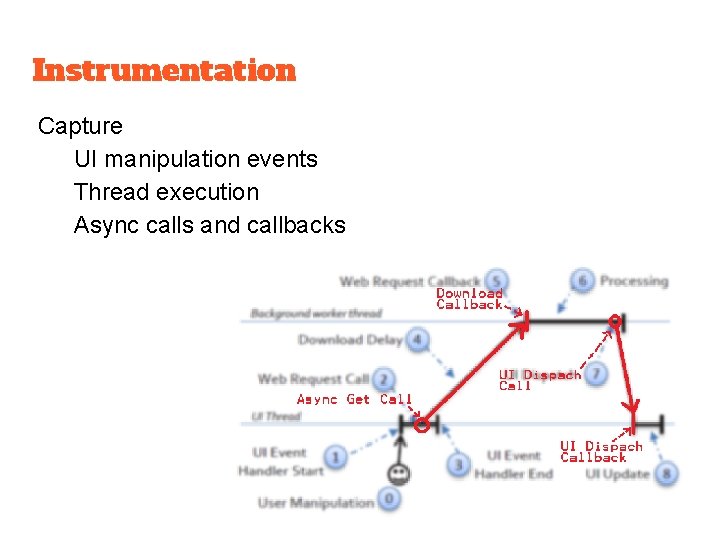

Instrumentation: async calls and callbacks

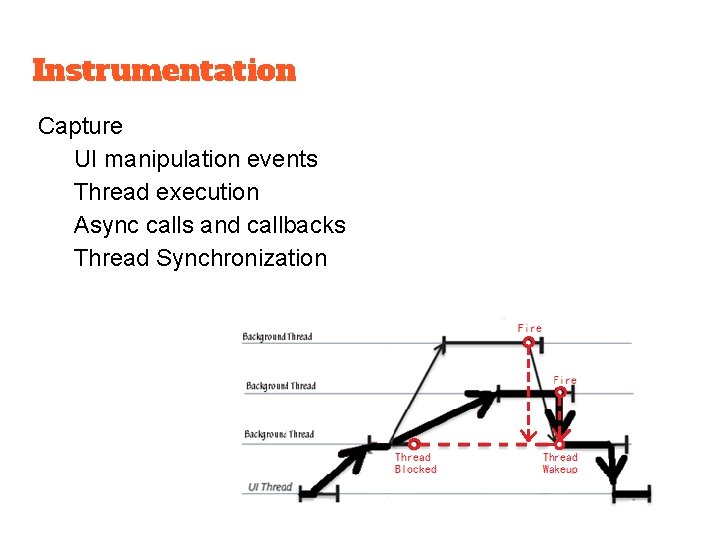

Instrumentation: thread synchronization

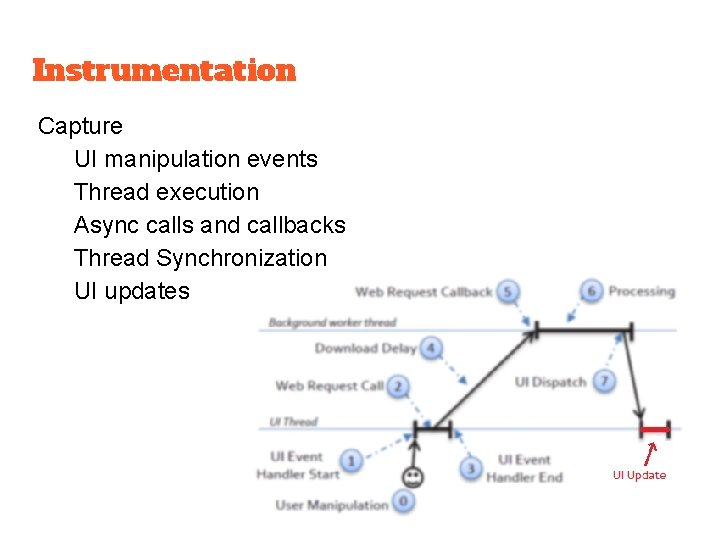

Instrumentation: UI updates

Instrumentation: what AppInsight captures
● UI manipulation events
● Thread execution
● Async calls and callbacks
● Thread synchronization
● UI updates
● Unhandled exceptions
● Additional information: URLs, network state, GPS

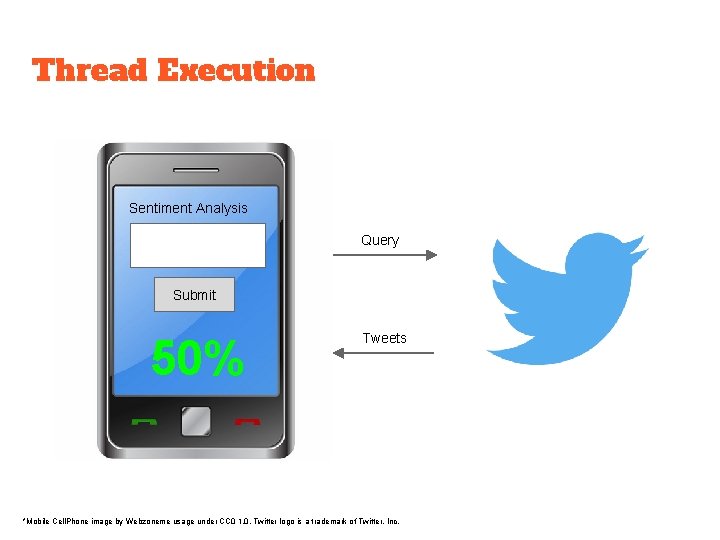

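Thread Execution
Running example: a sentiment-analysis app. The user types a query (e.g., "Donald Trump"), taps Submit, and the app displays the sentiment score of the matching tweets (e.g., 50%).
*Mobile cell phone image by Webzoneme, used under CC0 1.0; the Twitter logo is a trademark of Twitter, Inc.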

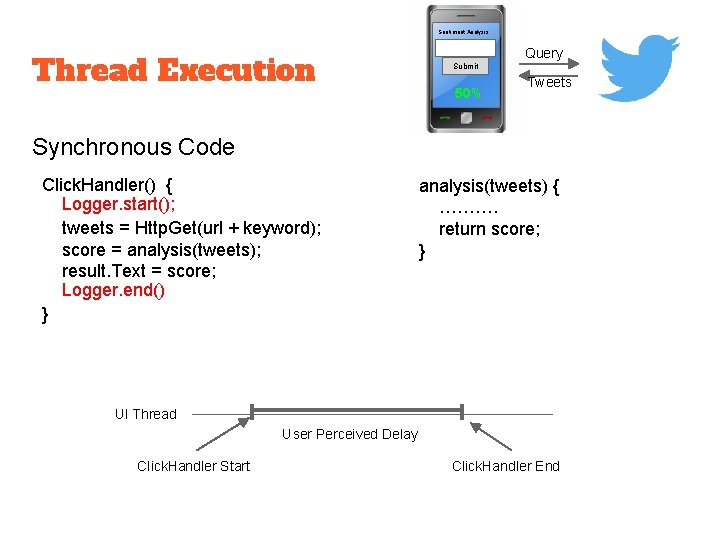

Thread Execution: Synchronous Code
In the synchronous version, the click handler does all the work on the UI thread:

```
ClickHandler() {
    Logger.start();
    tweets = HttpGet(url + keyword);
    score = analysis(tweets);
    result.Text = score;
    Logger.end();
}

analysis(tweets) {
    ...
    return score;
}
```

[Figure: UI thread timeline; the user-perceived delay spans from ClickHandler start to ClickHandler end.]

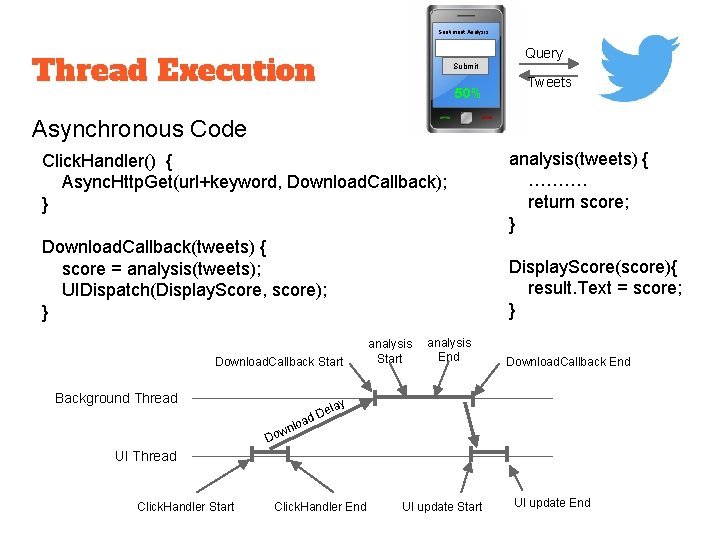

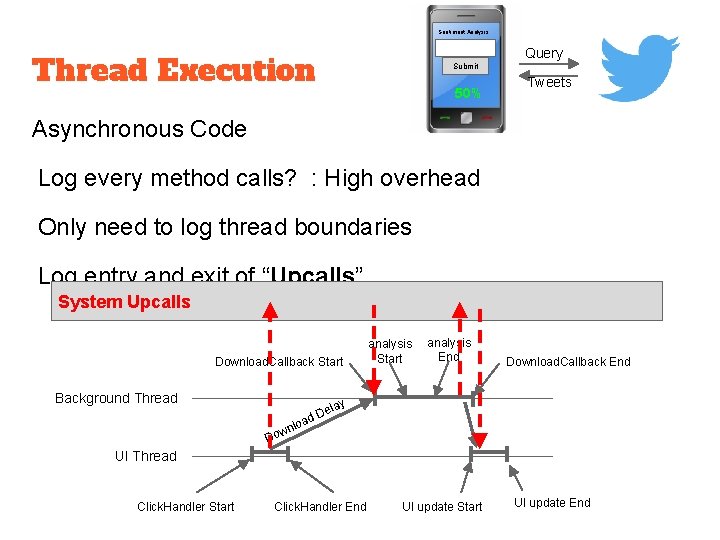

Thread Execution: Asynchronous Code
In the asynchronous version, the click handler only starts the download; the work continues in a callback on a background thread, which dispatches the UI update back to the UI thread:

```
ClickHandler() {
    AsyncHttpGet(url + keyword, DownloadCallback);
}

analysis(tweets) {
    ...
    return score;
}

DownloadCallback(tweets) {
    score = analysis(tweets);
    UIDispatch(DisplayScore, score);
}

DisplayScore(score) {
    result.Text = score;
}
```

[Figure: timeline. UI thread: ClickHandler start/end, then UI update start/end. Background thread: DownloadCallback start/end, with analysis start/end inside it. The download delay separates ClickHandler end from DownloadCallback start.]
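The asynchronous version can be mimicked in Python to show why timing `ClickHandler` alone is misleading: the handler returns almost immediately, while the result appears only after the background callback runs and dispatches the UI update. In this sketch `async_http_get`, the queue standing in for `UIDispatch`, and the 50 ms sleep that simulates the download are all hypothetical:

```python
import queue
import threading
import time

log = []                  # (event, method, timestamp) records
ui_queue = queue.Queue()  # stands in for UIDispatch / the UI thread's message queue


def record(event, method):
    log.append((event, method, time.perf_counter()))


def async_http_get(url, callback):
    """Hypothetical async API: 'downloads' on a background thread, then calls back."""
    def worker():
        time.sleep(0.05)  # simulated network delay
        callback(["great phone", "terrible battery"])
    threading.Thread(target=worker).start()


def analysis(tweets):
    # Toy sentiment score: percentage of tweets containing "great".
    return 100 * sum("great" in t for t in tweets) // len(tweets)


def click_handler():
    record("start", "ClickHandler")
    async_http_get("https://example.invalid/search?q=keyword", download_callback)
    record("end", "ClickHandler")


def download_callback(tweets):
    record("start", "DownloadCallback")
    score = analysis(tweets)
    ui_queue.put(lambda: display_score(score))  # UIDispatch(DisplayScore, score)
    record("end", "DownloadCallback")


def display_score(score):
    record("start", "DisplayScore")
    print(f"score: {score}%")
    record("end", "DisplayScore")


click_handler()
ui_queue.get(timeout=5)()  # the "UI thread" runs the dispatched update

t = {(event, method): ts for event, method, ts in log}
handler_ms = (t["end", "ClickHandler"] - t["start", "ClickHandler"]) * 1000
transaction_ms = (t["end", "DisplayScore"] - t["start", "ClickHandler"]) * 1000
print(f"ClickHandler: {handler_ms:.1f} ms, whole transaction: {transaction_ms:.1f} ms")
```

The transaction time includes the simulated download, while the handler time does not.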

Thread Execution: Asynchronous Code (continued)
● Log every method call? High overhead
● Only thread boundaries need to be logged
● Log the entry and exit of "upcalls", the methods that the system calls into
[Figure: the same timeline, with the system's upcalls into the app marked.]

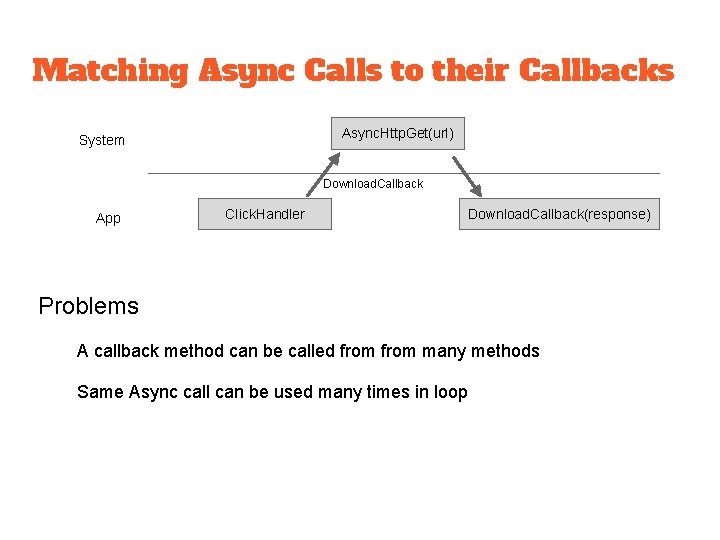
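Logging only at thread boundaries means wrapping the upcalls and leaving ordinary methods such as `analysis` untouched. AppInsight does this by rewriting app binaries; the Python decorator below is only an analogue of that idea, and the `upcall` decorator and `trace` list are hypothetical:

```python
import functools
import threading

trace = []  # (event, upcall name, thread name)


def upcall(fn):
    """Log entry and exit of a method the system calls into (an upcall)."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        thread = threading.current_thread().name
        trace.append(("start", fn.__name__, thread))
        try:
            return fn(*args, **kwargs)
        finally:
            trace.append(("end", fn.__name__, thread))
    return wrapper


def analysis(tweets):  # ordinary method: not instrumented, so not logged
    return len(tweets)


@upcall
def download_callback(tweets):
    return analysis(tweets)


worker = threading.Thread(target=download_callback, args=(["a", "b"],), name="background")
worker.start()
worker.join()
print(trace)
# [('start', 'download_callback', 'background'), ('end', 'download_callback', 'background')]
```

Two records per upcall are enough to place the work on a thread timeline; the call to `analysis` happens inside that interval without being logged.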

Matching Async Calls to Their Callbacks
[Figure: in the app, ClickHandler calls the system's AsyncHttpGet(url); the system later invokes the app's DownloadCallback(response).]
Problems
● A callback method can be called from many methods
● The same async call can be used many times in a loop

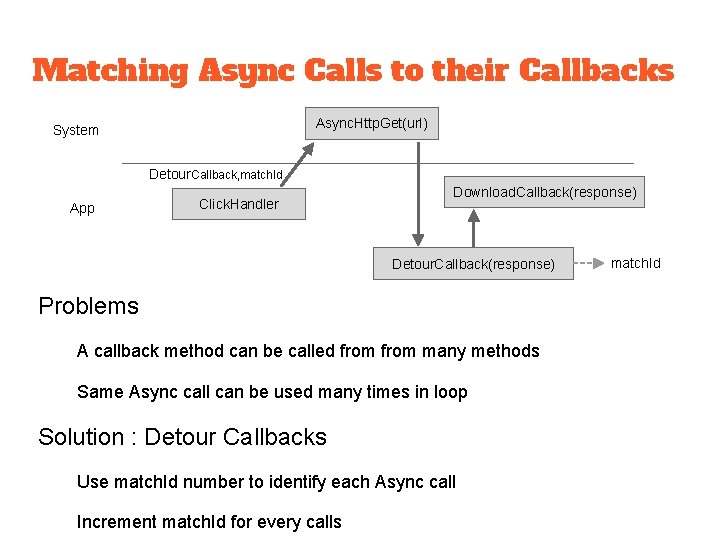

Matching Async Calls to Their Callbacks: Solution
[Figure: the system now invokes DetourCallback(response), which carries a matchId and forwards to the app's DownloadCallback(response).]
Solution: detour callbacks
● Use a matchId number to identify each async call
● Increment matchId for every call

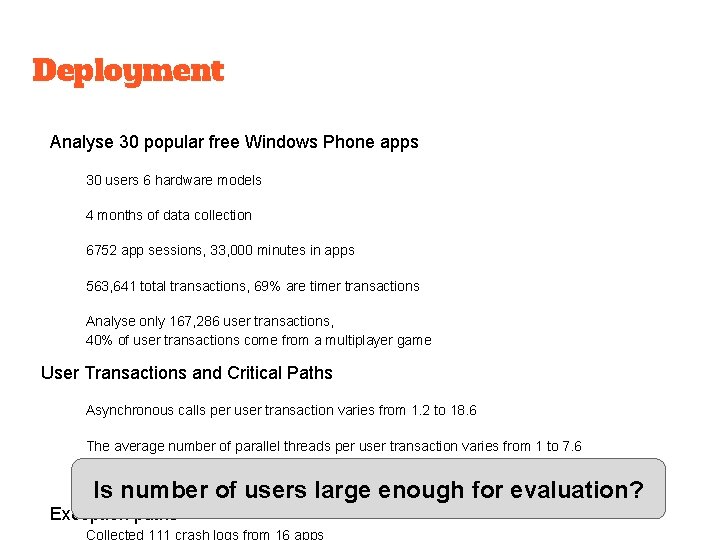
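The detour idea can be sketched as follows: at each async call site the callback is replaced by a detour that carries a fresh `matchId`, and the same `matchId` is logged when the call is issued and when the system invokes the callback. This is a Python illustration only; `detour`, `trace` and the record format are hypothetical, not AppInsight's actual instrumentation:

```python
import itertools
import threading

trace = []                       # (event, callback name, matchId)
_match_ids = itertools.count(1)  # incremented for every async call
_lock = threading.Lock()


def detour(callback):
    """Wrap `callback` for one async call and log the call with a new matchId."""
    with _lock:
        match_id = next(_match_ids)
    trace.append(("async_call", callback.__name__, match_id))

    def detour_callback(*args):
        # Logged when the system invokes the callback, with the same matchId.
        trace.append(("callback", callback.__name__, match_id))
        return callback(*args)

    return detour_callback


def download_callback(response):
    return len(response)


# The same async call issued three times in a loop...
pending = [detour(download_callback) for _ in range(3)]
# ...may complete in any order; matchIds still pair each callback with its call.
for cb in reversed(pending):
    cb("response")

print([entry for entry in trace if entry[0] == "callback"])
# [('callback', 'download_callback', 3), ('callback', 'download_callback', 2), ('callback', 'download_callback', 1)]
```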

Deployment
● Analysed 30 popular free Windows Phone apps
● 30 users, 6 hardware models
● 4 months of data collection
● 6,752 app sessions, 33,000 minutes in apps
● 563,641 total transactions, 69% of which are timer transactions
● Only the 167,286 user transactions are analysed; 40% of user transactions come from a multiplayer game
User Transactions and Critical Paths
● The number of asynchronous calls per user transaction varies from 1.2 to 18.6
● The average number of parallel threads per user transaction varies from 1 to 7.6
● In critical paths, only a few edges are responsible for most of the transaction time
Is the number of users large enough for the evaluation?
Exception Paths

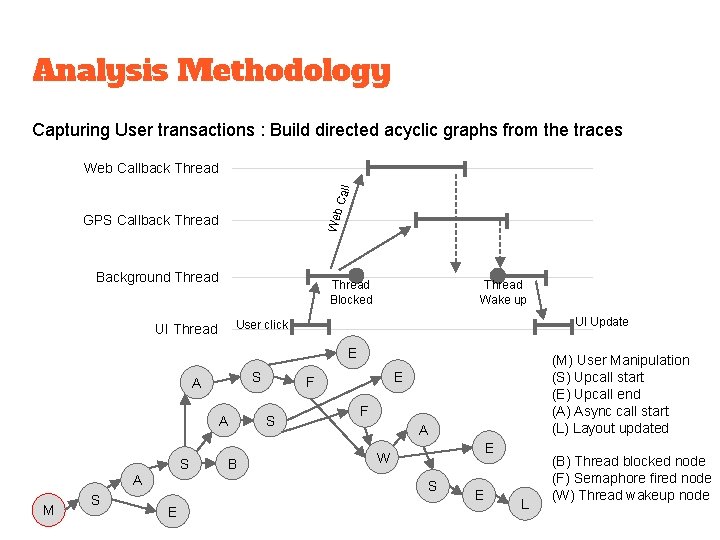

Analysis Methodology
Capturing user transactions: build directed acyclic graphs from the traces.
[Figure: a user transaction graph spanning the UI thread, a background thread, a web callback thread and a GPS callback thread, from the user click to the UI update; one thread blocks and is later woken up.]
Node types:
● (M) User manipulation
● (S) Upcall start
● (E) Upcall end
● (A) Async call start
● (L) Layout updated
● (B) Thread blocked
● (F) Semaphore fired
● (W) Thread wakeup

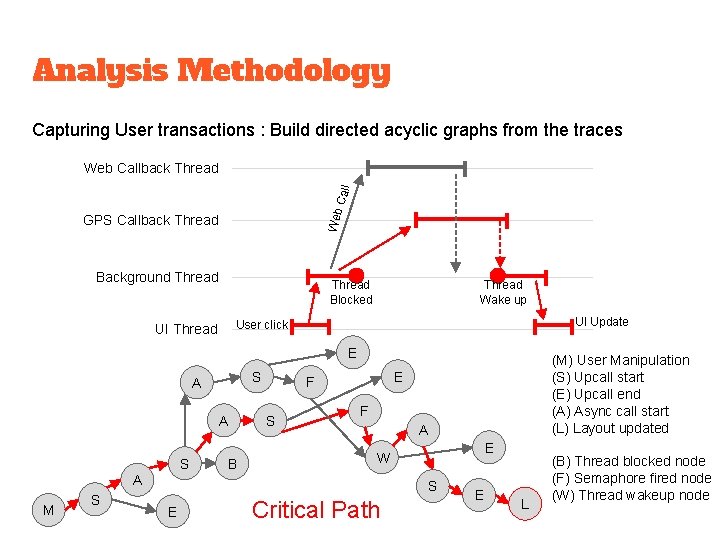
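A much-simplified sketch of turning a trace into graph edges, assuming each record is `(node, kind, thread, match_id)` in timestamp order and using the M, S, A and E node kinds from the legend. Blocking and semaphore nodes (B, F, W) are omitted, and the record format and `build_dag` are hypothetical:

```python
def build_dag(records):
    """Return causal edges (u, v) for trace records given in timestamp order.

    - consecutive records on one thread are linked while an upcall is running;
    - an upcall start (S) carrying a matchId is linked to the async call (A)
      with the same matchId, which may be on a different thread.
    """
    edges = []
    last_on_thread = {}  # thread -> most recent node of the running causal chain
    async_calls = {}     # matchId -> node of the async call that issued it
    for node, kind, thread, match_id in records:
        if kind == "S" and match_id is not None:
            edges.append((async_calls[match_id], node))
        elif thread in last_on_thread:
            edges.append((last_on_thread[thread], node))
        if kind == "A":
            async_calls[match_id] = node
        if kind == "E":
            last_on_thread.pop(thread, None)  # upcall ended: chain on this thread stops
        else:
            last_on_thread[thread] = node
    return edges


trace = [
    ("M1", "M", "UI", None),          # user click
    ("S1", "S", "UI", None),          # ClickHandler starts
    ("A1", "A", "UI", 7),             # AsyncHttpGet issued with matchId 7
    ("E1", "E", "UI", None),          # ClickHandler ends
    ("S2", "S", "background", 7),     # DownloadCallback starts (matchId 7)
    ("E2", "E", "background", None),  # DownloadCallback ends
]
print(build_dag(trace))
# [('M1', 'S1'), ('S1', 'A1'), ('A1', 'E1'), ('A1', 'S2'), ('S2', 'E2')]
```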

Analysis Methodology: Critical Path
Capturing user transactions: build directed acyclic graphs from the traces.
[Figure: the same transaction graph with the critical path highlighted, from the user click on the UI thread to the final UI update.]

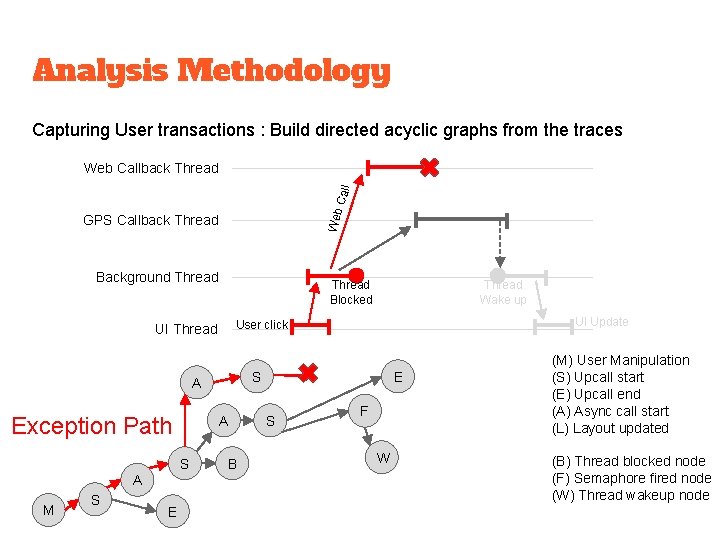
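Given such a graph with each edge weighted by elapsed time, one standard way to find the bottleneck path is a longest-path computation in topological order. This is a generic sketch (Python 3.9+ for `graphlib`), not necessarily the exact procedure AppInsight uses; the edge list and durations in the example are made up:

```python
from collections import defaultdict
from graphlib import TopologicalSorter


def critical_path(edges, source, sink):
    """Longest path from source to sink in a DAG.

    edges: iterable of (u, v, duration_ms). Returns (total_ms, nodes on the path).
    Assumes sink is reachable from source.
    """
    preds = defaultdict(set)  # node -> predecessors, as graphlib expects
    succs = defaultdict(list)
    for u, v, w in edges:
        preds[v].add(u)
        preds.setdefault(u, set())  # include nodes with no predecessors
        succs[u].append((v, w))

    best = {source: 0.0}  # longest known distance from source
    parent = {}
    for u in TopologicalSorter(preds).static_order():
        if u not in best:
            continue  # not reachable from source
        for v, w in succs[u]:
            if best[u] + w > best.get(v, float("-inf")):
                best[v] = best[u] + w
                parent[v] = u

    path = [sink]
    while path[-1] != source:
        path.append(parent[path[-1]])
    return best[sink], path[::-1]


edges = [
    ("M", "ClickHandler", 5),
    ("ClickHandler", "GPSCallback", 800),
    ("ClickHandler", "WebCallback", 400),
    ("GPSCallback", "UIUpdate", 10),
    ("WebCallback", "UIUpdate", 10),
]
print(critical_path(edges, "M", "UIUpdate"))
# (815.0, ['M', 'ClickHandler', 'GPSCallback', 'UIUpdate'])
```

In this example the GPS callback dominates: shortening the web request would not reduce the delay, whereas shortening the location query would.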

Analysis Methodology: Exception Path
Capturing user transactions: build directed acyclic graphs from the traces.
[Figure: the same transaction graph with the exception path highlighted, leading from the user click on the UI thread across asynchronous calls to the point where the exception occurred.]

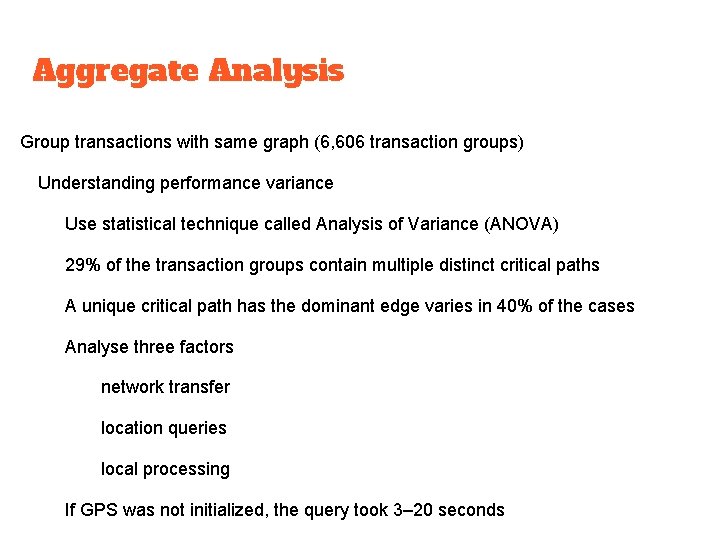
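The exception path can be recovered by walking backwards along causal edges from the point where the unhandled exception was logged until the user manipulation is reached. A minimal sketch, assuming each node has a single causal parent (as when every callback is matched to one async call via its matchId); the node names are hypothetical:

```python
def exception_path(causal_parent, exception_node):
    """Follow parent links from the exception back to the root (the user manipulation)
    and return the path in forward order."""
    path = [exception_node]
    seen = {exception_node}
    while path[-1] in causal_parent:
        prev = causal_parent[path[-1]]
        if prev in seen:
            raise ValueError("cycle in causal links")
        seen.add(prev)
        path.append(prev)
    return path[::-1]


causal_parent = {
    "ClickHandler": "UserClick",
    "AsyncHttpGet": "ClickHandler",
    "DownloadCallback": "AsyncHttpGet",  # matched via matchId
    "Exception": "DownloadCallback",     # unhandled exception thrown here
}
print(exception_path(causal_parent, "Exception"))
# ['UserClick', 'ClickHandler', 'AsyncHttpGet', 'DownloadCallback', 'Exception']
```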

Aggregate Analysis
● Group transactions with the same graph (6,606 transaction groups)
● Understanding performance variance: use a statistical technique called Analysis of Variance (ANOVA)
  ○ 29% of the transaction groups contain multiple distinct critical paths
  ○ Even in groups with a unique critical path, the dominant edge varies in 40% of the cases
● Analyse three factors: network transfer, location queries, local processing
  ○ If GPS was not initialized, the location query took 3–20 seconds

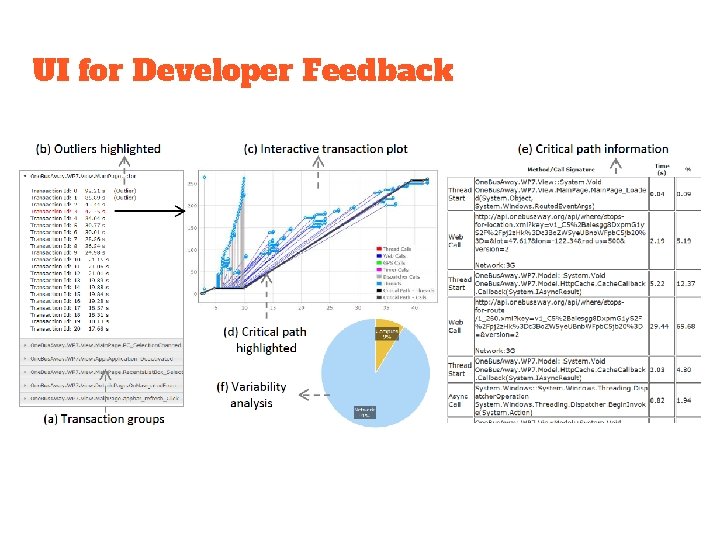
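Grouping transactions by graph structure and then asking how much of a group's delay variance one factor explains can be sketched with a one-way ANOVA sum-of-squares decomposition. This is a simplified illustration, not the paper's exact statistical procedure; the transaction fields (`edges`, `delay_ms`, `network`) and the sample values are invented for the example:

```python
from collections import defaultdict
from statistics import fmean


def group_by_graph(transactions):
    """Group transactions whose graphs have the same edge set (a simple signature)."""
    groups = defaultdict(list)
    for tx in transactions:
        groups[tuple(sorted(tx["edges"]))].append(tx)
    return groups


def variance_explained(transactions, factor):
    """One-way ANOVA: fraction of delay variance explained by `factor`
    (between-level sum of squares divided by total sum of squares)."""
    delays = [tx["delay_ms"] for tx in transactions]
    grand_mean = fmean(delays)
    ss_total = sum((d - grand_mean) ** 2 for d in delays)
    by_level = defaultdict(list)
    for tx in transactions:
        by_level[tx[factor]].append(tx["delay_ms"])
    ss_between = sum(len(v) * (fmean(v) - grand_mean) ** 2 for v in by_level.values())
    return ss_between / ss_total if ss_total else 0.0


edges = [("M", "S1"), ("S1", "A1"), ("A1", "S2")]
transactions = [
    {"edges": edges, "delay_ms": 100, "network": "wifi"},
    {"edges": edges, "delay_ms": 120, "network": "wifi"},
    {"edges": edges, "delay_ms": 900, "network": "3g"},
    {"edges": edges, "delay_ms": 1100, "network": "3g"},
]
groups = group_by_graph(transactions)
for signature, txs in groups.items():
    print(len(txs), round(variance_explained(txs, "network"), 3))  # 4 0.975
```

A value close to 1 indicates that, within this group, the network type accounts for most of the variation in delay.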

UI for Developer Feedback

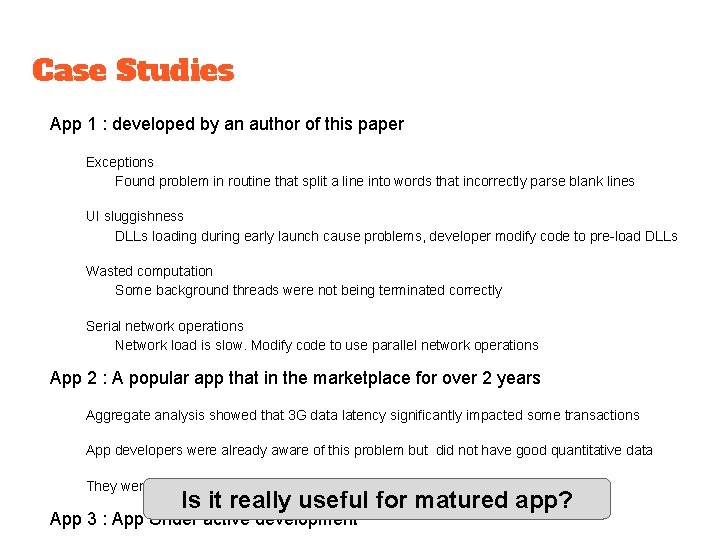

Case Studies
App 1: developed by an author of the paper
● Exceptions: found a bug in a routine that splits a line into words; it parsed blank lines incorrectly
● UI sluggishness: loading DLLs during early launch caused problems; the developer modified the code to pre-load the DLLs
● Wasted computation: some background threads were not being terminated correctly
● Serial network operations: network loading was slow; the code was modified to perform network operations in parallel
App 2: a popular app that has been in the marketplace for over 2 years
● Aggregate analysis showed that 3G data latency significantly impacted some transactions
● The developers were already aware of this problem but did not have good quantitative data
● They were impressed that AppInsight highlighted the problem so easily
Is it really useful for a mature app?
App 3: an app under active development

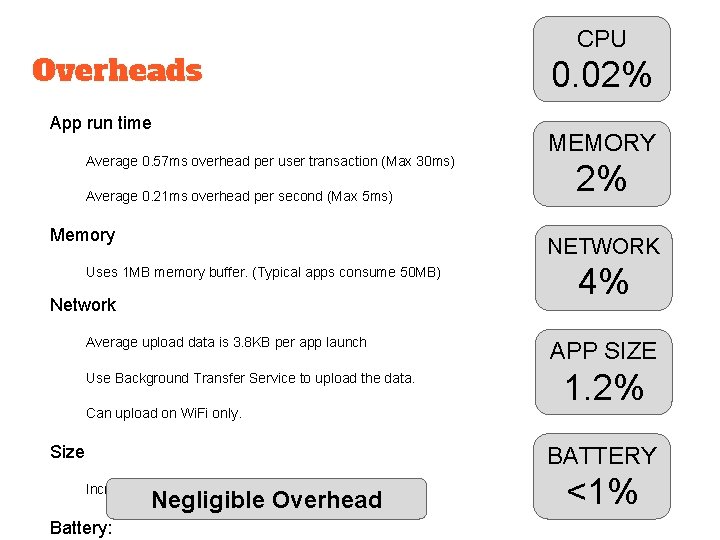

Overheads
● App run time
  ○ Average 0.57 ms overhead per user transaction (max 30 ms)
  ○ Average 0.21 ms overhead per second (max 5 ms)
● Memory: uses a 1 MB memory buffer (typical apps consume 50 MB)
● Network: average upload is 3.8 KB per app launch; the data is uploaded with the Background Transfer Service, which can upload over Wi-Fi only
● Size: app binaries increase by 1.2%
● Battery: <1%
Overhead summary: CPU 0.02%, memory 2%, network 4%, app size 1.2%, battery <1%. Negligible overhead.

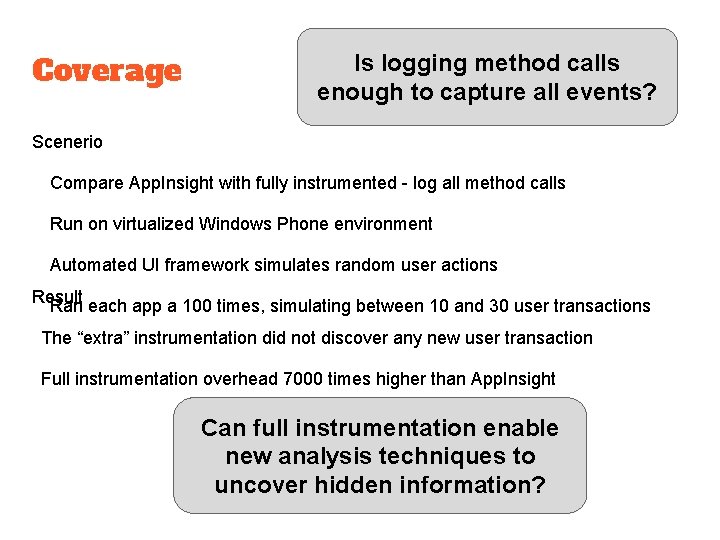
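The CPU and memory percentages in the overhead summary can be checked against the raw figures, assuming the CPU figure is the average per-second overhead taken as a fraction of one second and the memory figure is the 1 MB buffer relative to a typical 50 MB app:

$$\frac{0.21\ \text{ms}}{1000\ \text{ms}} = 0.021\% \approx 0.02\%, \qquad \frac{1\ \text{MB}}{50\ \text{MB}} = 2\%$$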

Coverage
Is logging only upcalls, rather than every method call, enough to capture all events?
Scenario
● Compare AppInsight with full instrumentation (logging all method calls)
● Run on a virtualized Windows Phone environment
● An automated UI framework simulates random user actions
Result
● Ran each app 100 times, each run simulating between 10 and 30 user transactions
● The "extra" instrumentation did not discover any new user transactions
● The overhead of full instrumentation was 7,000 times higher than AppInsight's
Can full instrumentation enable new analysis techniques that uncover hidden information?

Related Work
Correlating event traces
● LagHunter: focuses on synchronous rendering time; the developer needs to supply a list of methods
● Magpie: extracts transactions from event logs for server workloads; on Windows Phone, such logs are not available to apps
● X-Trace and Pinpoint: trace the path using a special identifier attached to each request; they can trace across processes and apps, whereas AppInsight does not trace across apps
● Aguilera et al.: use timing analysis to correlate trace logs collected from a black-box system
Finding the critical path of a transaction
● Yang and Miller, "Critical Path Analysis for the Execution of Parallel and Distributed Programs": finding the critical path of parallel and distributed programs
● Barford and Crovella, "Critical Path Analysis of TCP Transactions": critical paths in TCP transactions; a similar idea of building a graph, but a different design

Limitations and Future Work
Causal relationships between threads
● Data dependencies are not tracked; threads may communicate through shared variables
● Implicit causal relationships introduced by resource contention, such as disk reads/writes, are missed
● Complex dependencies introduced by counting semaphores cannot be untangled
● Any state that a user transaction may leave behind is not tracked
Definition of user transaction and critical path
● Some user interactions involve multiple user inputs; the current implementation breaks them into multiple transactions
Privacy issues
● An anonymous hash value is currently used instead of the phone ID to protect user privacy
● Collecting URLs still risks exposing user data
Applicability to other platforms

Conclusion
AppInsight helps developers easily understand the performance problems of their apps as deployed on real user devices (in the wild).
● Automatically traces user transactions
● Identifies critical paths and exception paths
● Aggregate analysis shows the factors that affect performance
❏ Extremely low overhead; does not slow down apps
❏ Zero developer effort; no code changes
❏ Readily deployable on the Windows Phone platform
