Ranking – Use and Usability
Bettina Berendt / K.U. Leuven, www.cs.kuleuven.be/~berendt

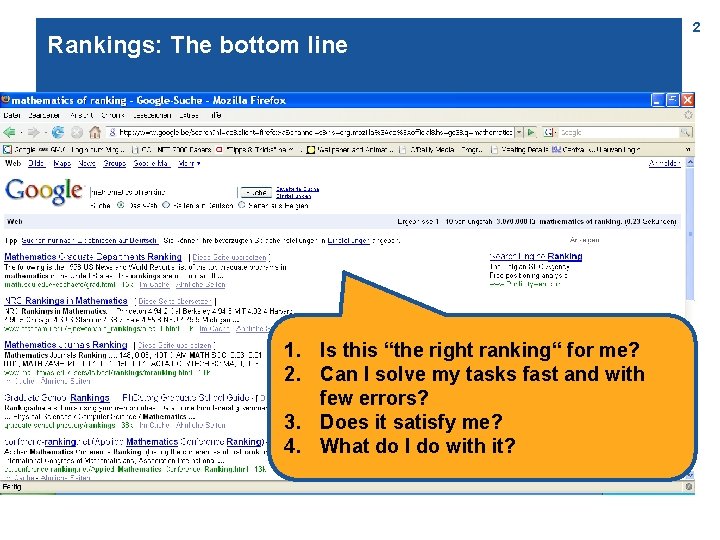

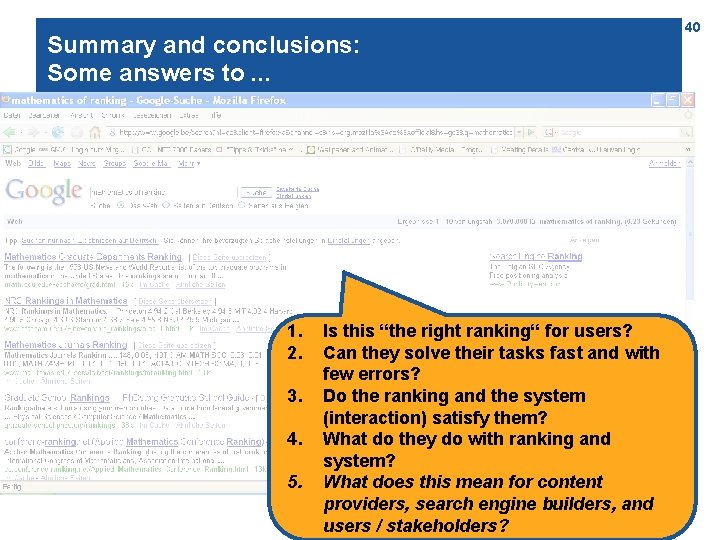

Rankings: The bottom line
1. Is this “the right ranking” for me?
2. Can I solve my tasks fast and with few errors?
3. Does it satisfy me?
4. What do I do with it?

Agenda
- System and user: A simple model
- 8 challenges – some solution approaches
- Outlook

Operationalizing question 1: Is this the right ranking for users?
[Figure: two ranked lists to be compared (1., 2., 3., ? and 1., 2., 3., 4., ...)]

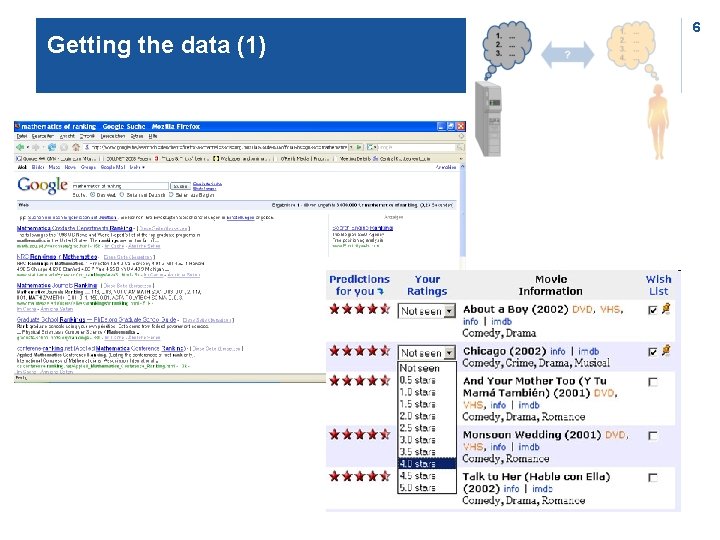

Getting the data (1)

Getting the data (2)
- `203.30.5.145 - - [01/Jun/1999:03:09:21 -0600] "GET /Calls/OWOM.html HTTP/1.0" 200 3942 "http://www.lycos.com/cgibin/pursuit?query=advertising+psychology&maxhits=20&cat=dir" "Mozilla/4.5 [en] (Win 98; I)"`
- `203.30.5.145 - - [01/Jun/1999:03:09:23 -0600] "GET /Calls/Images/earthani.gif HTTP/1.0" 200 10689 "http://www.acr-news.org/Calls/OWOM.html" "Mozilla/4.5 [en] (Win 98; I)"`
- `203.30.5.145 - - [01/Jun/1999:03:09:24 -0600] "GET /Calls/Images/line.gif HTTP/1.0" 200 190 "http://www.acr-news.org/Calls/OWOM.html" "Mozilla/4.5 [en] (Win 98; I)"`
- `203.252.234.33 - - [01/Jun/1999:03:12:31 -0600] "GET / HTTP/1.0" 200 4980 "" "Mozilla/4.06 [en] (Win 95; I)"`
- `203.252.234.33 - - [01/Jun/1999:03:12:35 -0600] "GET /Images/line.gif HTTP/1.0" 200 190 "http://www.acr-news.org/" "Mozilla/4.06 [en] (Win 95; I)"`
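The log lines above follow the Apache "combined" log format (host, identity, user, timestamp, request, status, size, referrer, user agent). A minimal Python sketch, assuming exactly that format, that parses one line and recovers the user's search terms from a search-engine referrer. The function names are illustrative, and the parameter name `query` is taken from the Lycos URL in the log; other engines use other parameter names.

```python
import re
from urllib.parse import parse_qs, urlparse

# Apache "combined" log format:
# host ident user [time] "request" status size "referrer" "user-agent"
LOG_PATTERN = re.compile(
    r'(?P<host>\S+) (?P<ident>\S+) (?P<user>\S+) \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


def parse_line(line):
    """Return the fields of one log line as a dict, or None if it does not match."""
    match = LOG_PATTERN.match(line)
    return match.groupdict() if match else None


def search_terms(referrer, param="query"):
    """Return the search terms encoded in a referrer URL's query string.

    The parameter name is engine-specific; "query" (as in the Lycos URL)
    is an assumption that would have to be adapted per search engine.
    """
    values = parse_qs(urlparse(referrer).query).get(param)
    return values[0].split() if values else []


line = ('203.30.5.145 - - [01/Jun/1999:03:09:21 -0600] '
        '"GET /Calls/OWOM.html HTTP/1.0" 200 3942 '
        '"http://www.lycos.com/cgibin/pursuit?query=advertising+psychology&maxhits=20&cat=dir" '
        '"Mozilla/4.5 [en] (Win 98; I)"')
record = parse_line(line)
print(record["host"], record["status"])   # 203.30.5.145 200
print(search_terms(record["referrer"]))   # ['advertising', 'psychology']
```

Grouping such records by host and time yields the sessions from which a user's list U can be estimated.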

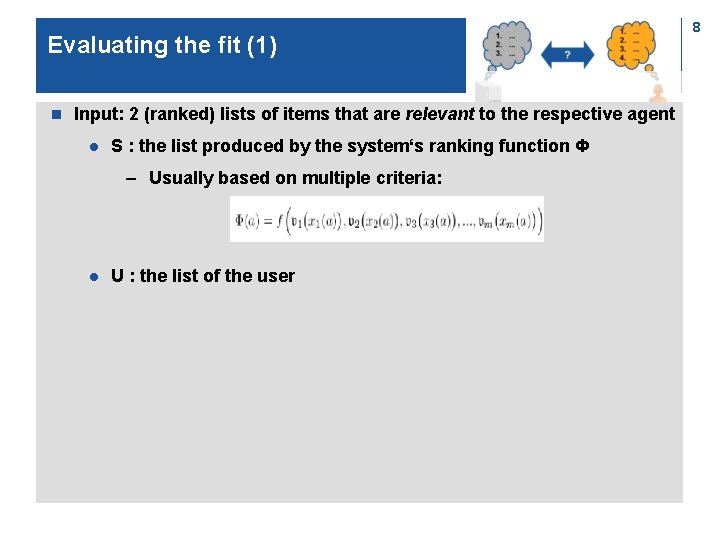

Evaluating the fit (1)
- Input: 2 (ranked) lists of items that are relevant to the respective agent
  - S: the list produced by the system's ranking function Φ
    - Usually based on multiple criteria
  - U: the list of the user

Evaluating the fit (2): Measures
- Information retrieval classics:
  - Precision = $|S \cap U| / |S|$
  - Recall = $|S \cap U| / |U|$
  - Combinations and variations:
    - $F_1 = \frac{2 \cdot \text{precision} \cdot \text{recall}}{\text{precision} + \text{recall}}$
    - Precision@n = $|\{\text{first } n \text{ items of } S\} \cap U| / n$
    - ...
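The four measures translate directly into code. A minimal sketch, treating S as a ranked Python list and U as a collection of relevant items; the item names are hypothetical.

```python
def precision(S, U):
    """|S ∩ U| / |S|: share of the system's items that the user considers relevant."""
    return len(set(S) & set(U)) / len(S) if S else 0.0


def recall(S, U):
    """|S ∩ U| / |U|: share of the user's items that the system returned."""
    return len(set(S) & set(U)) / len(U) if U else 0.0


def f1(S, U):
    """Harmonic mean of precision and recall."""
    p, r = precision(S, U), recall(S, U)
    return 2 * p * r / (p + r) if p + r > 0 else 0.0


def precision_at_n(S, U, n):
    """|first n items of S ∩ U| / n: only the top of the ranking counts."""
    return len(set(S[:n]) & set(U)) / n


S = ["a", "b", "c", "d", "e"]   # system ranking (hypothetical)
U = ["b", "e", "f", "g"]        # user's relevant items (hypothetical)

print(precision(S, U))          # 0.4
print(recall(S, U))             # 0.5
print(round(f1(S, U), 3))       # 0.444
print(precision_at_n(S, U, 2))  # 0.5
```

Precision, recall and F1 ignore the order within S; precision@n is the first of these measures that rewards placing relevant items near the top.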

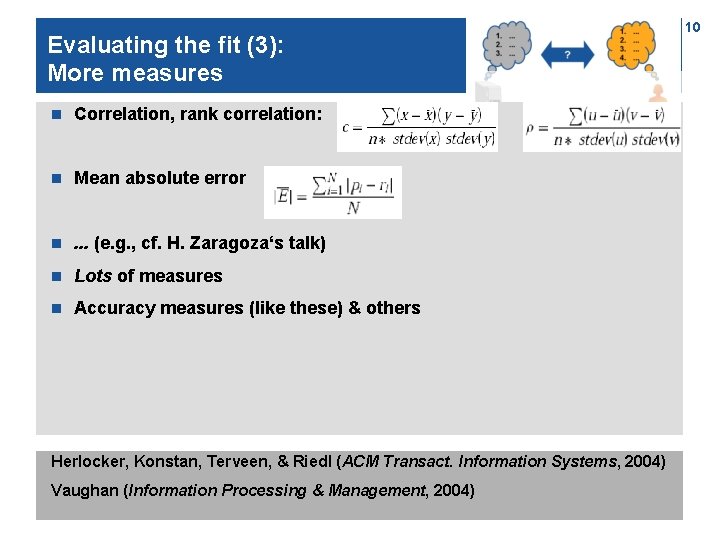

Evaluating the fit (3): More measures
- Correlation, rank correlation
- Mean absolute error
- ... (e.g., cf. H. Zaragoza's talk)
- Lots of measures: accuracy measures (like these) & others

Herlocker, Konstan, Terveen, & Riedl (ACM Transact. Information Systems, 2004); Vaughan (Information Processing & Management, 2004)
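The slide does not fix a particular correlation formula. As one common choice, a sketch of Spearman's rank correlation for two complete rankings of the same items without ties, $\rho = 1 - 6\sum d_i^2 / (n(n^2-1))$, together with the mean absolute error between predicted and actual ratings, the accuracy measure discussed by Herlocker et al. All items and ratings below are hypothetical.

```python
def spearman_rho(rank_s, rank_u):
    """Spearman's rank correlation for two rankings of the same items, no ties.

    rank_s, rank_u: dicts mapping item -> rank position (1 = top).
    rho = 1 - 6 * sum(d^2) / (n * (n^2 - 1)), d = rank difference per item.
    """
    if set(rank_s) != set(rank_u):
        raise ValueError("both rankings must cover the same items")
    n = len(rank_s)
    if n < 2:
        raise ValueError("need at least two items")
    d_squared = sum((rank_s[i] - rank_u[i]) ** 2 for i in rank_s)
    return 1 - 6 * d_squared / (n * (n * n - 1))


def mean_absolute_error(predicted, actual):
    """Average |predicted - actual| over the items that have both values."""
    common = predicted.keys() & actual.keys()
    if not common:
        raise ValueError("no common items")
    return sum(abs(predicted[i] - actual[i]) for i in common) / len(common)


system = {"a": 1, "b": 2, "c": 3, "d": 4}
user = {"a": 2, "b": 1, "c": 3, "d": 4}      # top two items swapped
print(round(spearman_rho(system, user), 3))  # 0.8

pred = {"a": 4.0, "b": 3.5, "c": 2.0}        # predicted ratings (1-5 scale)
actual = {"a": 5.0, "b": 3.0, "c": 2.0}      # ratings given by the user
print(mean_absolute_error(pred, actual))     # 0.5
```

Note that Spearman's ρ penalizes a swap at the top exactly as much as a swap at the bottom, which is one reason why top-heavy measures are of interest.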

Evaluating the fit (4)
- Measures fall into a small number of “equivalence classes” (Herlocker et al., 2004; Webber, W., Moffat, A., Zobel, J., and Sakai, T., Proc. SIGIR 2008)
- Going further: optimization of performance measures (Zaragoza)

Challenge 1: U depends on the context (task, user characteristics, ...)

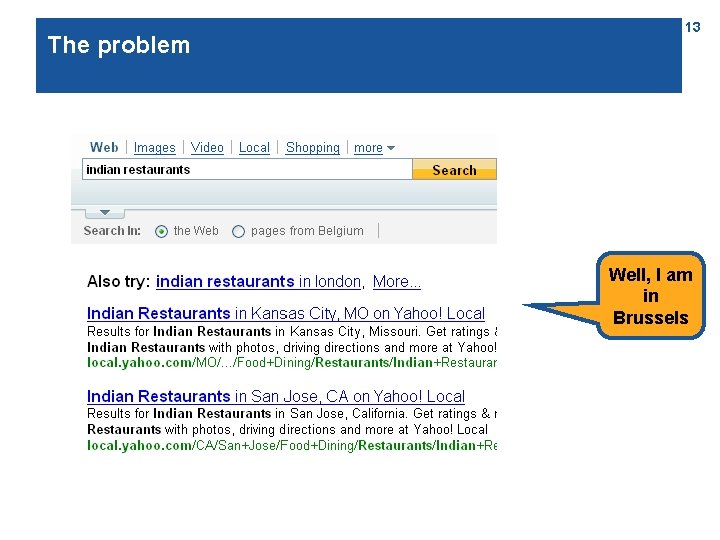

The problem
“Well, I am in Brussels.”

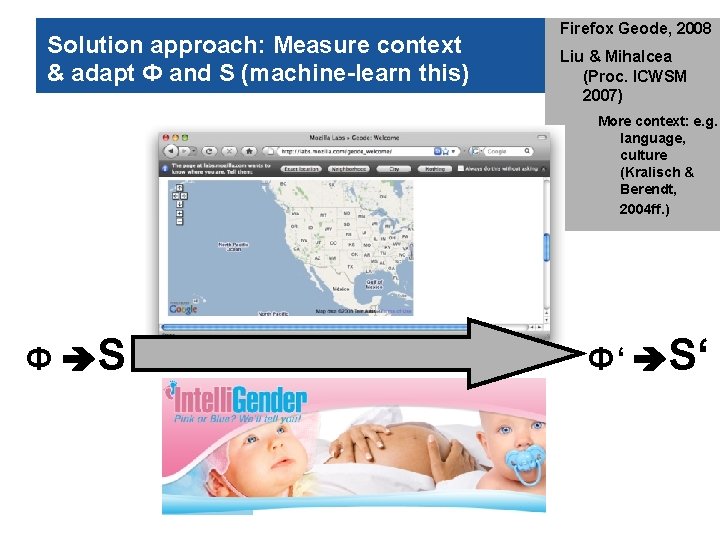

Solution approach: Measure context & adapt Φ and S (machine-learn this): Φ, S → Φ′, S′
- Examples: Firefox Geode, 2008; Liu & Mihalcea (Proc. ICWSM 2007)
- More context: e.g. language, culture (Kralisch & Berendt, 2004 ff.)
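To illustrate the step from Φ to Φ′, a minimal sketch in which a context-free score Φ is adjusted by a bonus for items that match the user's location (the “I am in Brussels” case). The `Item` class, the scores, the `location` attribute and the fixed `weight` are all hypothetical; the slide's point is that such an adaptation would be learned from data rather than set by hand.

```python
from dataclasses import dataclass


@dataclass
class Item:
    name: str
    base_score: float  # score assigned by the context-free ranking function Phi
    location: str      # hypothetical context attribute of the item


def rerank_with_context(items, user_location, weight=0.5):
    """Phi'(d) = Phi(d) + weight * [location(d) == user_location].

    'weight' is a hypothetical parameter; in practice it would be
    learned (e.g. from click data) instead of fixed by hand.
    """
    def phi_prime(item):
        bonus = weight if item.location == user_location else 0.0
        return item.base_score + bonus
    return sorted(items, key=phi_prime, reverse=True)


items = [
    Item("Restaurant guide Leuven", 0.9, "Leuven"),
    Item("Restaurant guide Brussels", 0.7, "Brussels"),
    Item("Restaurant guide Antwerp", 0.6, "Antwerp"),
]
S = sorted(items, key=lambda it: it.base_score, reverse=True)
S_prime = rerank_with_context(items, user_location="Brussels")
print([it.location for it in S])        # ['Leuven', 'Brussels', 'Antwerp']
print([it.location for it in S_prime])  # ['Brussels', 'Leuven', 'Antwerp']
```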

Challenge 2: U depends on the purpose of using the ranking

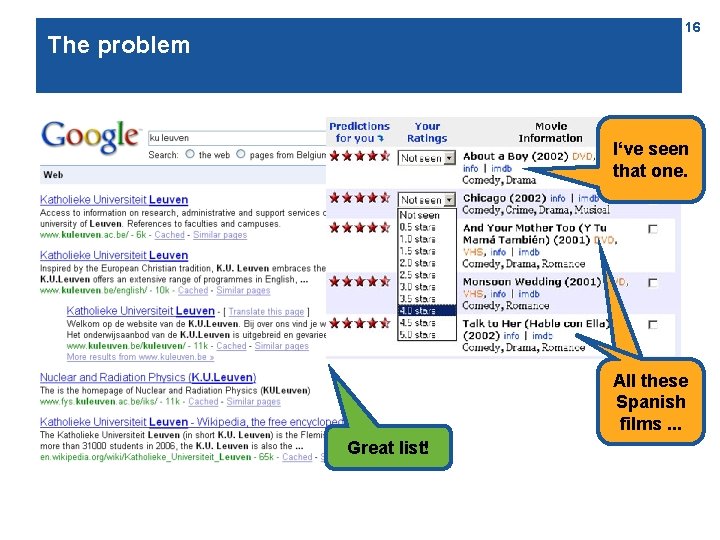

The problem
[Figure: a recommended list of films, annotated by the user: “Great list!”, “I've seen that one.”, “All these Spanish ones...”]

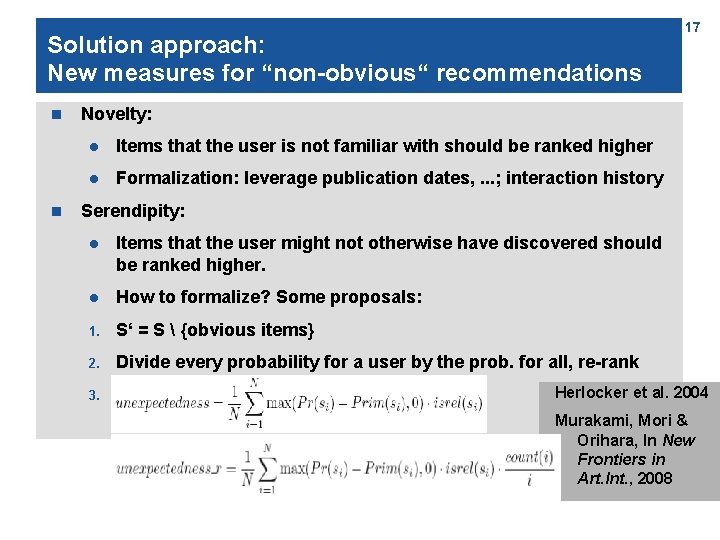

Solution approach: New measures for “non-obvious” recommendations
- Novelty:
  - Items that the user is not familiar with should be ranked higher.
  - Formalization: leverage publication dates, ...; interaction history
- Serendipity:
  - Items that the user might not otherwise have discovered should be ranked higher.
  - How to formalize? Some proposals:
    1. $S' = S \setminus \{\text{obvious items}\}$
    2. Divide every probability for a user by the probability for all users, re-rank
    3. X

Herlocker et al. 2004; Murakami, Mori & Orihara, In New Frontiers in Art. Int., 2008
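Serendipity proposals 1 and 2 can be sketched in a few lines. The sketch assumes that the recommender supplies, per item, a probability that this user likes it (`p_user`) and a probability that users in general like it (`p_all`); the film items and numbers are hypothetical.

```python
def remove_obvious(S, obvious):
    """Proposal 1: S' = S minus the obvious items, keeping the original order."""
    return [item for item in S if item not in obvious]


def rerank_by_ratio(p_user, p_all):
    """Proposal 2: rank items by p_user(i) / p_all(i).

    Items the user is predicted to like mainly because everybody likes them
    are demoted relative to items predicted specifically for this user.
    Items with p_all == 0 are skipped to avoid division by zero.
    """
    ratio = {i: p_user[i] / p_all[i] for i in p_user if p_all.get(i, 0) > 0}
    return sorted(ratio, key=ratio.get, reverse=True)


# Hypothetical film recommendations and probabilities, for illustration only.
p_user = {"blockbuster": 0.9, "arthouse": 0.6, "documentary": 0.3}
p_all = {"blockbuster": 0.85, "arthouse": 0.2, "documentary": 0.25}

S = sorted(p_user, key=p_user.get, reverse=True)
print(S)                                   # ['blockbuster', 'arthouse', 'documentary']
print(remove_obvious(S, {"blockbuster"}))  # ['arthouse', 'documentary']
print(rerank_by_ratio(p_user, p_all))      # ['arthouse', 'documentary', 'blockbuster']
```

Proposal 1 needs an explicit definition of “obvious”; proposal 2 derives it implicitly from the population-wide probabilities.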

Challenge 3: Querying and ranking is an iterative process

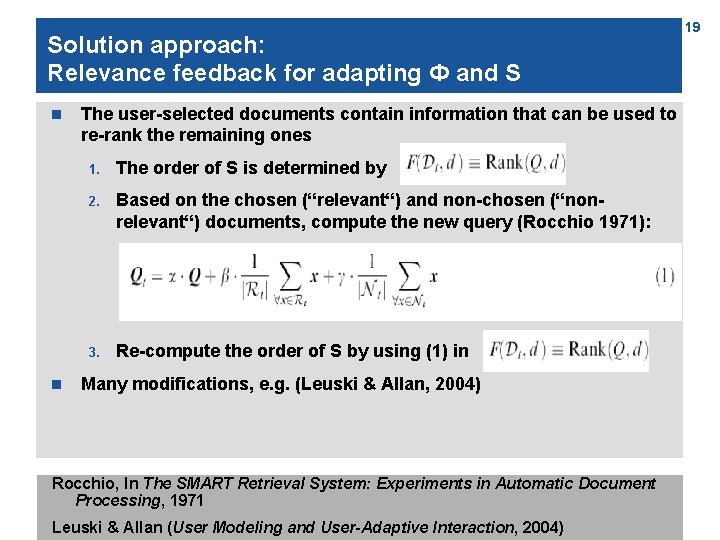

Solution approach: Relevance feedback for adapting Φ and S
- The user-selected documents contain information that can be used to re-rank the remaining ones:
  1. The order of S is determined by the similarity $\mathrm{sim}(q, d)$ between the query $q$ and each document $d$.
  2. Based on the chosen (“relevant”, $D_r$) and non-chosen (“non-relevant”, $D_{nr}$) documents, compute the new query (Rocchio, 1971):
     $$q' = \alpha\, q + \frac{\beta}{|D_r|} \sum_{d_j \in D_r} d_j - \frac{\gamma}{|D_{nr}|} \sum_{d_k \in D_{nr}} d_k$$
  3. Re-compute the order of S by using (1) with $q'$ in place of $q$.
- Many modifications exist, e.g. (Leuski & Allan, 2004).

Rocchio, In: The SMART Retrieval System: Experiments in Automatic Document Processing, 1971; Leuski & Allan (User Modeling and User-Adapted Interaction, 2004)
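A minimal sketch of the three steps, assuming documents and queries are term-weight vectors over a shared vocabulary and using cosine similarity as $\mathrm{sim}$. The weights $\alpha = 1$, $\beta = 0.75$, $\gamma = 0.15$ are commonly used textbook defaults, not values from the slide; the vocabulary and documents are hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity of two term-weight vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def centroid(vectors, dim):
    """Component-wise mean of a list of vectors (zero vector if the list is empty)."""
    if not vectors:
        return [0.0] * dim
    return [sum(v[k] for v in vectors) / len(vectors) for k in range(dim)]


def rocchio(q, relevant, nonrelevant, alpha=1.0, beta=0.75, gamma=0.15):
    """Step 2: q' = alpha*q + beta*centroid(relevant) - gamma*centroid(nonrelevant).

    Negative term weights are clipped to 0, a frequent practical choice.
    """
    dim = len(q)
    rel = centroid(relevant, dim)
    non = centroid(nonrelevant, dim)
    return [max(0.0, alpha * q[k] + beta * rel[k] - gamma * non[k]) for k in range(dim)]


def rank(q, docs):
    """Steps 1 and 3: order document ids by cosine similarity to the query."""
    return sorted(docs, key=lambda d: cosine(q, docs[d]), reverse=True)


# Hypothetical term space: ["jaguar", "car", "animal"]
docs = {
    "d1": [2.0, 1.0, 0.0],
    "d2": [1.0, 0.0, 1.0],
    "d3": [0.0, 0.0, 1.0],
    "d4": [1.0, 0.0, 2.0],
}
q = [1.0, 0.0, 0.0]                     # query "jaguar"
print(rank(q, docs))                    # ['d1', 'd2', 'd4', 'd3']

# The user picks d2 (relevant) and skips d1 (non-relevant).
q2 = rocchio(q, [docs["d2"]], [docs["d1"]])
print(rank(q2, docs))                   # ['d2', 'd4', 'd1', 'd3']
```

The feedback pulls the query towards the “animal” sense, so d4, which shares that term with the chosen d2, overtakes d1.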

Challenge 4: The external ranking is only partially perceived

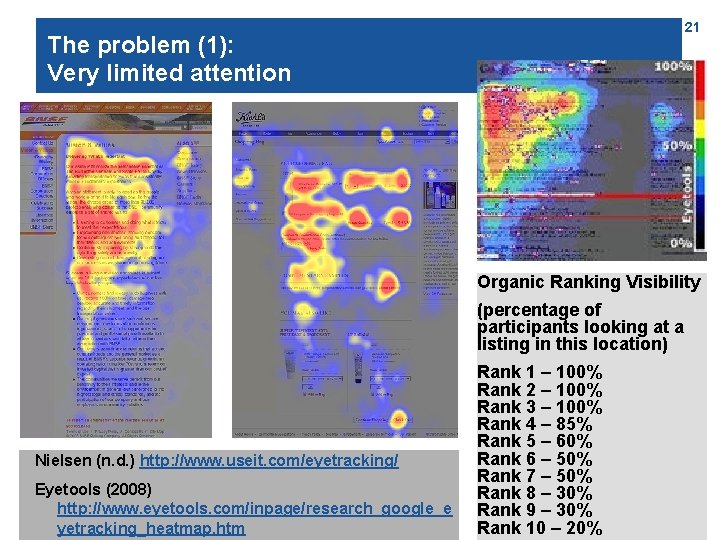

The problem (1): Very limited attention

Organic ranking visibility (percentage of participants looking at a listing in this location):

| Rank | Visibility |
|---:|---:|
| 1 | 100% |
| 2 | 100% |
| 3 | 100% |
| 4 | 85% |
| 5 | 60% |
| 6 | 50% |
| 7 | 50% |
| 8 | 30% |
| 9 | 30% |
| 10 | 20% |

Nielsen (n.d.) http://www.useit.com/eyetracking/; Eyetools (2008) http://www.eyetools.com/inpage/research_google_eyetracking_heatmap.htm
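The visibility percentages can be read as the probability that a result at a given rank is looked at at all. A small illustration, not taken from the cited studies: the expected number of relevant results a user actually looks at, for two hypothetical rankings that both have precision@10 = 0.3.

```python
# Visibility of organic results by rank 1..10, as listed in the table.
VISIBILITY = [1.00, 1.00, 1.00, 0.85, 0.60, 0.50, 0.50, 0.30, 0.30, 0.20]


def expected_relevant_seen(relevance, visibility=VISIBILITY):
    """Sum over ranks of P(result is looked at) * relevance (0 or 1).

    By linearity of expectation this is the expected number of relevant
    results that are looked at, whatever the dependencies between ranks.
    """
    return sum(v * r for v, r in zip(visibility, relevance))


top_heavy = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]     # relevant results at ranks 1-3
bottom_heavy = [0, 0, 0, 0, 0, 0, 0, 1, 1, 1]  # relevant results at ranks 8-10
print(expected_relevant_seen(top_heavy))               # 3.0
print(round(expected_relevant_seen(bottom_heavy), 2))  # 0.8
```

Identical precision thus hides a difference of more than a factor of three in what users are likely to see.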

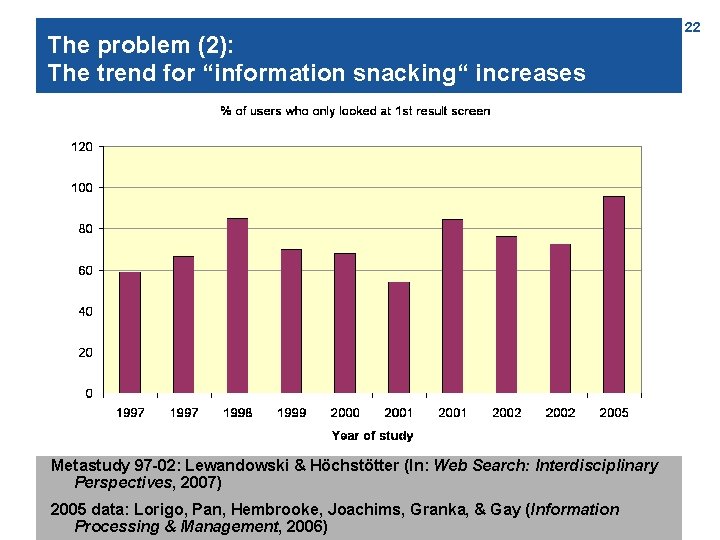

The problem (2): The trend towards “information snacking” is growing
- Meta-study 1997–2002: Lewandowski & Höchstötter (In: Web Search: Interdisciplinary Perspectives, 2007)
- 2005 data: Lorigo, Pan, Hembrooke, Joachims, Granka, & Gay (Information Processing & Management, 2006)

The problem (3): Interactions with task

Three types of search tasks (Broder, 2002):
- Navigational
  - Find the homepage of Michael Jordan, the statistician.
  - Find the homepage for graduate housing at CMU.
- Informational
  - Who discovered the first modern antibiotic?
  - Where is the tallest mountain in NY located?
  - “You are searching for information about loans for a renovation. Select the website on which you would like to search information.”
- Transactional
  - “You would like to contract a loan for a renovation. Select the website on which you would like to contract the loan.”

Broder (SIGIR Forum, 2002). Examples from Lorigo et al. (2006) and de Vos & Jansen (2007): http://www.checkit.nl/pdf/eyetracking_research.pdf

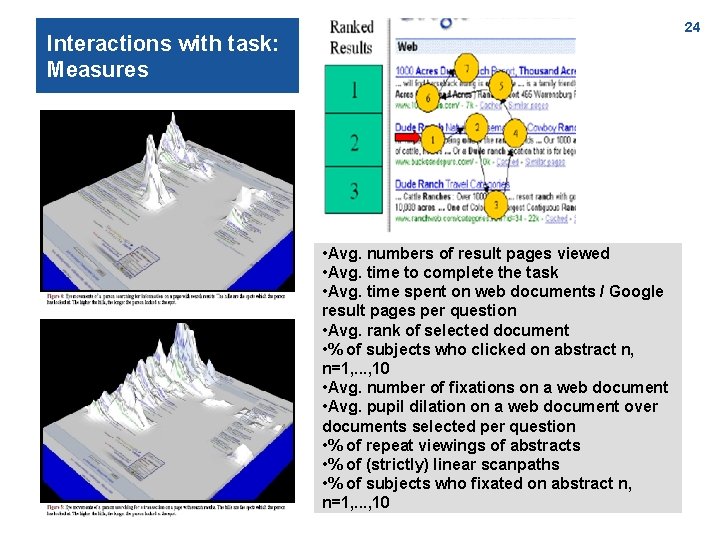

Interactions with task: Measures
- Avg. number of result pages viewed
- Avg. time to complete the task
- Avg. time spent on web documents / Google result pages per question
- Avg. rank of selected document
- % of subjects who clicked on abstract n, n = 1, ..., 10
- Avg. number of fixations on a web document
- Avg. pupil dilation on a web document over documents selected per question
- % of repeat viewings of abstracts
- % of (strictly) linear scanpaths
- % of subjects who fixated on abstract n, n = 1, ..., 10

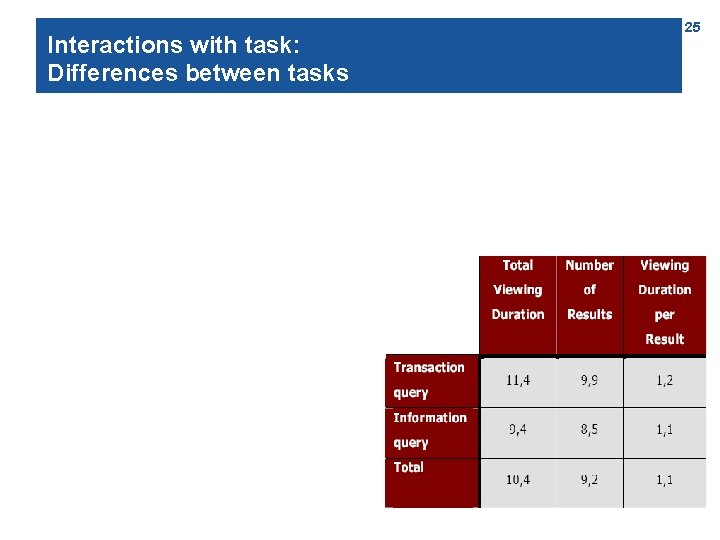

Interactions with task: Differences between tasks

Solution approaches
- Evaluation:
  - Precision@3?!
  - Or a more sophisticated “top-heavy” measure (H. Zaragoza's talk)
- Ranking:
  - Integrate query type into Φ (Hugo: How is that done?)
- Commercial:
  - Search engine optimization
- An educational task?
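One widely used top-heavy measure is normalized discounted cumulative gain (nDCG), which discounts the gain of a result at rank $k$ by $\log_2(k+1)$ and divides by the best achievable value. It is shown here only as an example of the idea, not necessarily the measure discussed in Zaragoza's talk; the relevance vectors are hypothetical and both have precision@10 = 0.3.

```python
import math


def dcg(relevance, n=None):
    """Discounted cumulative gain: sum of rel_k / log2(k + 1) over ranks k = 1..n."""
    return sum(rel / math.log2(k + 1) for k, rel in enumerate(relevance[:n], start=1))


def ndcg(relevance, n=None):
    """DCG divided by the DCG of the ideal (descending) ordering; 1.0 is best."""
    ideal = dcg(sorted(relevance, reverse=True), n)
    return dcg(relevance, n) / ideal if ideal > 0 else 0.0


top_heavy = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
bottom_heavy = [0, 0, 0, 0, 0, 0, 0, 1, 1, 1]
print(ndcg(top_heavy))               # 1.0
print(round(ndcg(bottom_heavy), 3))  # 0.425
```

Unlike precision@3, which gives these rankings 1.0 and 0.0, nDCG still distinguishes rankings that differ only below rank 3.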

Challenge 5: The platonic S and U don't exist – information systems do!

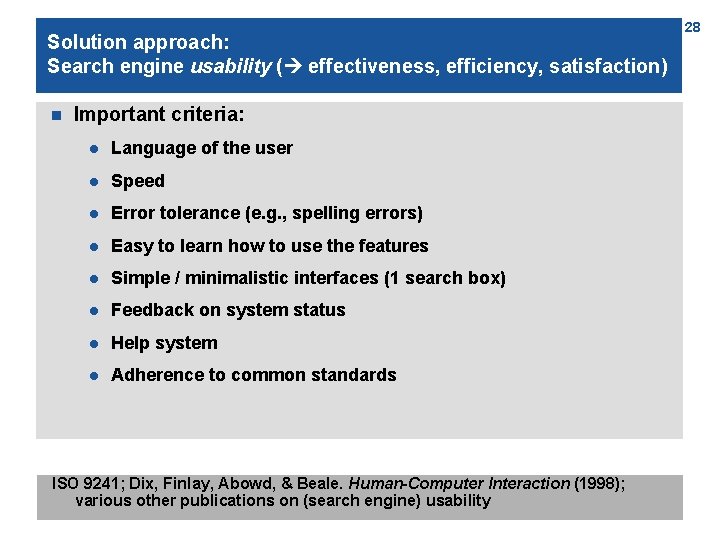

Solution approach: Search engine usability (effectiveness, efficiency, satisfaction)
- Important criteria:
  - Language of the user
  - Speed
  - Error tolerance (e.g., spelling errors)
  - Easy to learn how to use the features
  - Simple / minimalistic interfaces (1 search box)
  - Feedback on system status
  - Help system
  - Adherence to common standards

ISO 9241; Dix, Finlay, Abowd, & Beale. Human-Computer Interaction (1998); various other publications on (search engine) usability

Challenge 6: U depends on how the items are “thought of”

The problem: Question 1

Imagine that the U.S. is preparing for the outbreak of an unusual Asian disease, which is expected to kill 600 people. Two alternative programs to combat the disease have been proposed. Assume that the exact scientific estimates of the consequences of the programs are as follows:
- If program A is adopted, 200 people will be saved.
- If program B is adopted, there is a one-third probability that 600 people will be saved and a two-thirds probability that no people will be saved.

Which of the two programs would you favor?

(Tversky & Kahneman, Science 1981; Nobel Prize 2002): questions asked to a representative sample of physicians

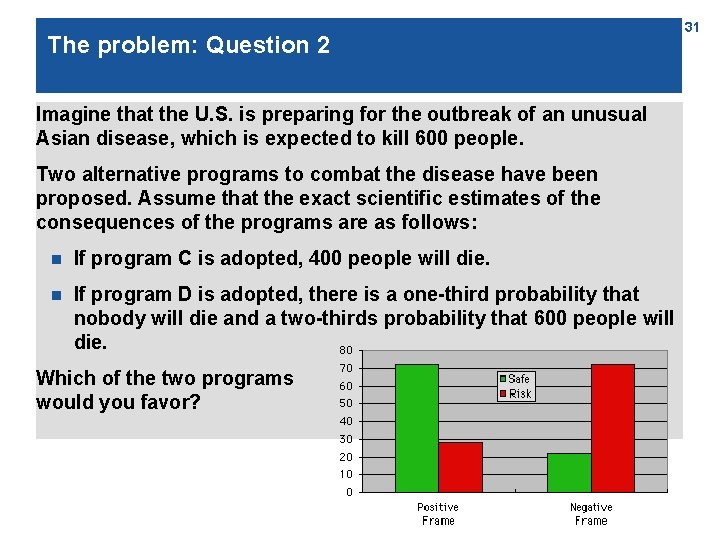

The problem: Question 2

Imagine that the U.S. is preparing for the outbreak of an unusual Asian disease, which is expected to kill 600 people. Two alternative programs to combat the disease have been proposed. Assume that the exact scientific estimates of the consequences of the programs are as follows:
- If program C is adopted, 400 people will die.
- If program D is adopted, there is a one-third probability that nobody will die and a two-thirds probability that 600 people will die.

Which of the two programs would you favor?
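Questions 1 and 2 describe the same pair of options, as a short calculation of the number of people saved (out of the 600 at risk) shows:

$$
\begin{aligned}
\text{A:}\;& 200 \text{ saved (certain)} \\
\text{B:}\;& E[\text{saved}] = \tfrac{1}{3} \cdot 600 + \tfrac{2}{3} \cdot 0 = 200 \\
\text{C:}\;& 400 \text{ die} \;\Rightarrow\; 600 - 400 = 200 \text{ saved (certain)} \\
\text{D:}\;& E[\text{saved}] = \tfrac{1}{3} \cdot 600 + \tfrac{2}{3} \cdot 0 = 200
\end{aligned}
$$

A and C are the same certain outcome, B and D the same gamble; only the wording (lives saved vs. lives lost) differs.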

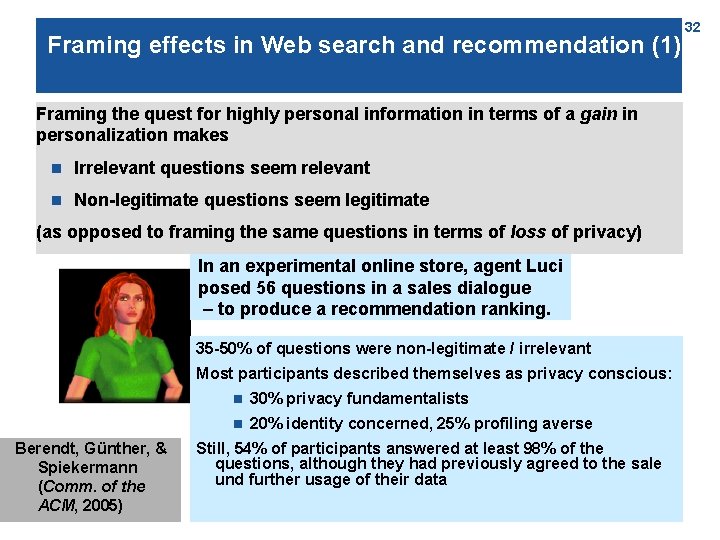

Framing effects in Web search and recommendation (1)

Framing the quest for highly personal information in terms of a gain in personalization (as opposed to framing the same questions in terms of a loss of privacy) makes
- irrelevant questions seem relevant
- non-legitimate questions seem legitimate.

In an experimental online store, the agent Luci posed 56 questions in a sales dialogue in order to produce a recommendation ranking; 35–50% of the questions were non-legitimate or irrelevant. Most participants described themselves as privacy-conscious:
- 30% privacy fundamentalists
- 20% identity-concerned, 25% profiling-averse

Still, 54% of participants answered at least 98% of the questions, although they had previously agreed to the sale and further use of their data.

Berendt, Günther, & Spiekermann (Comm. of the ACM, 2005)

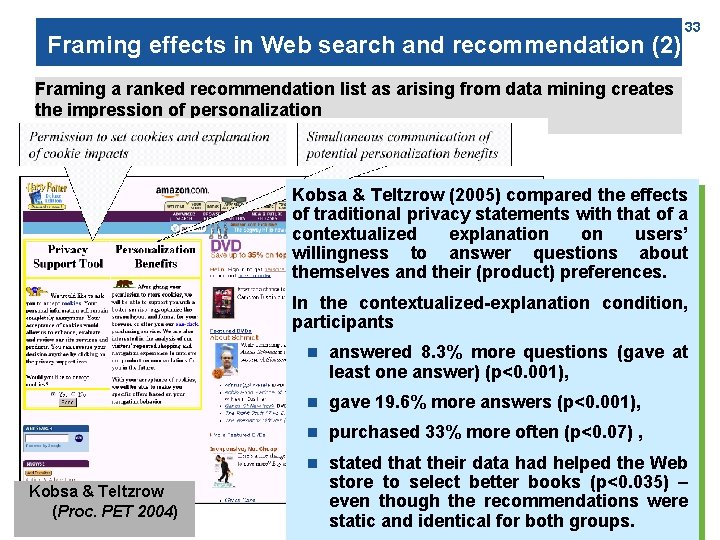

Framing effects in Web search and recommendation (2)

Framing a ranked recommendation list as arising from data mining creates the impression of personalization.

Kobsa & Teltzrow (2005) compared the effects of traditional privacy statements with those of a contextualized explanation on users' willingness to answer questions about themselves and their (product) preferences. In the contextualized-explanation condition, participants
- answered 8.3% more questions (gave at least one answer) (p < 0.001),
- gave 19.6% more answers (p < 0.001),
- purchased 33% more often (p < 0.07),
- stated that their data had helped the Web store to select better books (p < 0.035),

even though the recommendations were static and identical for both groups.

Kobsa & Teltzrow (Proc. PET 2004)

Solution approaches
- ?
- (Automatically detecting) semantics: not a solution!
- Of course, there's sentiment detection etc.
- But even the problem still needs to be defined!

Challenge 7: System-use dynamics and attitudes

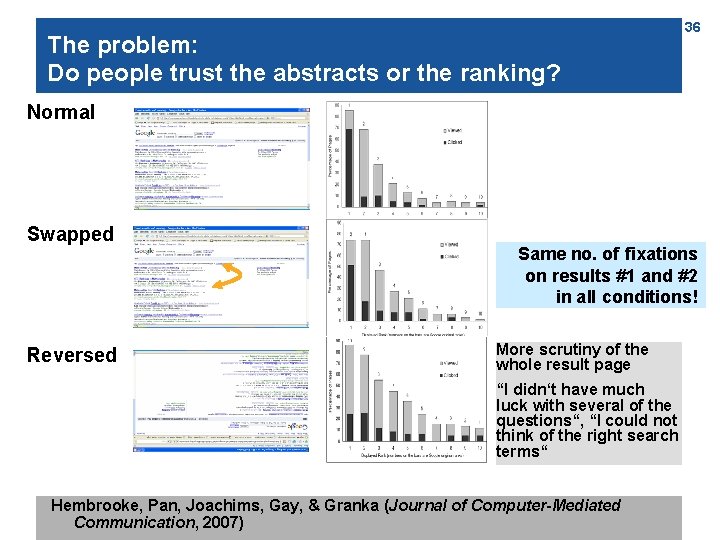

The problem: Do people trust the abstracts or the ranking?
[Figure: fixations on the result list in the normal, swapped, and reversed conditions]
- Same number of fixations on results #1 and #2 in all conditions!
- More scrutiny of the whole result page
- “I didn't have much luck with several of the questions”, “I could not think of the right search terms”

Pan, Hembrooke, Joachims, Lorigo, Gay, & Granka (Journal of Computer-Mediated Communication, 2007)

Challenge 8: People have mistaken beliefs about algorithms

Introducing a third sample application area

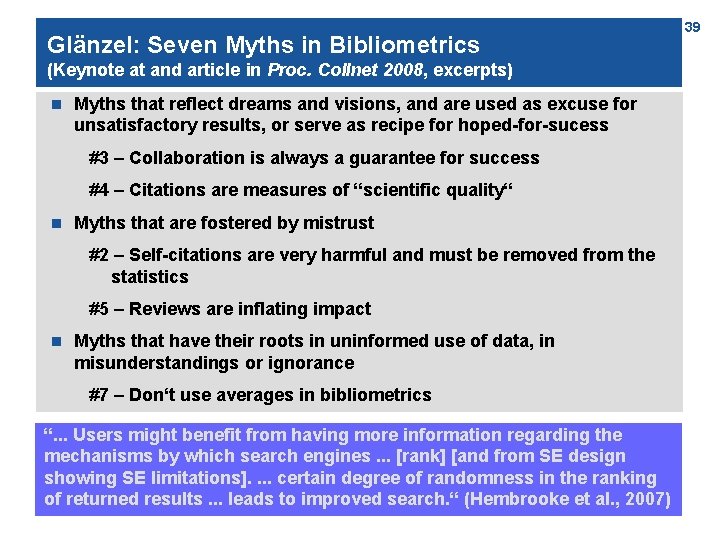

Glänzel: Seven Myths in Bibliometrics (keynote at and article in Proc. Collnet 2008, excerpts)
- Myths that reflect dreams and visions, and are used as an excuse for unsatisfactory results, or serve as a recipe for hoped-for success:
  - #3 – Collaboration is always a guarantee for success
  - #4 – Citations are measures of “scientific quality”
- Myths that are fostered by mistrust:
  - #2 – Self-citations are very harmful and must be removed from the statistics
  - #5 – Reviews are inflating impact
- Myths that have their roots in uninformed use of data, in misunderstandings or ignorance:
  - #7 – Don't use averages in bibliometrics

“The history of these myths reaches back in a time when bibliometrics did not yet exist, but due to policy use and misuse of publication and citation statistics, bibliometrics ... might act as catalyst in the process of fostering, disseminating and extending these myths.” (Glänzel, 2008)

“... Users might benefit from having more information regarding the mechanisms by which search engines ... [rank] [and from SE design, showing SE limitations]. ... certain degree of randomness in the ranking of returned results ... leads to improved search.” (Pan et al., 2007)

Summary and conclusions: Some answers to ...
1. Is this “the right ranking” for users?
2. Can they solve their tasks fast and with few errors?
3. Do the ranking and the system (interaction) satisfy them?
4. What do they do with ranking and system?
5. What does this mean for content providers, search engine builders, and users / stakeholders?

Thank you!
www.cs.kuleuven.be/~berendt

References (1)

Picture credits: can be found in the "Notes" sections of the respective slides.

Literature:
- Berendt, B., Günther, O., & Spiekermann, S. (2005). Privacy in e-commerce: Stated preferences vs. actual behavior. Communications of the ACM, 48(4), 101–106.
- Broder, A. (2002). A taxonomy of web search. SIGIR Forum, 36(2), 3–10.
- de Vos & Jansen (2007). Visual attention to online search engine results. http://www.checkit.nl/pdf/eyetracking_research.pdf
- Dix, A., Finlay, J., Abowd, G., & Beale, R. (1998). Human-Computer Interaction. Prentice Hall Europe.
- Eyetools (2008). Eyetools Research and Reports: Eyetools, Enquiro, and Did-it uncover Search's Golden Triangle. http://www.eyetools.com/inpage/research_google_eyetracking_heatmap.htm
- Glänzel, W. (2008). Seven myths in bibliometrics. Proc. Collnet 2008. http://www.collnet.de/Berlin-2008/Glanzel.WIS2008smb.pdf; also: Glänzel, W. (2008). Seven myths in bibliometrics – About facts and fiction in quantitative science studies. ISSI Newsletter, 4(2), 24–32.
- Herlocker, J. L., Konstan, J. A., Terveen, L. G., & Riedl, J. T. (2004). Evaluating collaborative filtering recommender systems. ACM Transactions on Information Systems, 22(1), 5–53.
- Tversky, A., & Kahneman, D. (1981). The framing of decisions and the psychology of choice. Science, 211, 453–458.
- Kobsa, A., & Teltzrow, M. (2005). Impacts of contextualized communication of privacy practices and personalization benefits on purchase behavior and perceived quality of recommendation. In: Beyond Personalization 2005: A Workshop on the Next Stage of Recommender Systems Research (IUI 2005), San Diego, CA, 48–53.
- Kralisch & Berendt publications: see http://www.cs.kuleuven.be/~berendt

References (2)
- Leuski & Allan (2004). Interactive information retrieval using clustering and spatial proximity. User Modeling and User-Adapted Interaction, 14, 259–288.
- Lewandowski, D., & Höchstötter, N. (2007). Web searching: A quality measurement perspective. In M. Zimmer & A. Spink (Eds.), Web Search: Interdisciplinary Perspectives. Dordrecht: Springer.
- Liu, H., & Mihalcea, R. (2007). Of men, women, and computers: Data-driven gender modeling for improved user interfaces. In Proc. of the International Conference on Weblogs and Social Media. http://www.icwsm.org/papers/paper3.html
- Lorigo, L., Pan, B., Hembrooke, H., Joachims, T., Granka, L., & Gay, G. (2006). The influence of task and gender on search and evaluation behavior using Google. Information Processing & Management, 42(4), 1123–1131.
- Murakami, T., Mori, K., & Orihara, R. (2008). Metrics for evaluating the serendipity of recommendation lists. In K. Satoh et al. (Eds.), JSAI 2007 (pp. 40–46). Berlin Heidelberg: Springer-Verlag, LNAI 4914.
- Nielsen, J. (n.d.). Eyetracking research. http://www.useit.com/eyetracking
- Pan, B., Hembrooke, H., Joachims, T., Lorigo, L., Gay, G., & Granka, L. (2007). In Google we trust: Users' decisions on rank, position, and relevance. Journal of Computer-Mediated Communication, 12(3), 801–823. http://jcmc.indiana.edu/vol12/issue3/pan.html
- Rocchio, J. J., Jr. (1971). Relevance feedback in information retrieval. In G. Salton (Ed.), The SMART Retrieval System: Experiments in Automatic Document Processing (pp. 313–323). Prentice-Hall.
- Vaughan, L. (2004). New measurements for search engine evaluation proposed and tested. Information Processing & Management, 40(4), 677–691.
- Webber, W., Moffat, A., Zobel, J., & Sakai, T. (2008). Precision-at-ten considered redundant. In Proc. SIGIR '08 (pp. 695–696). New York: ACM.
- ... and references to Hugo Zaragoza's talk at the Mathematics of Ranking Symposium, Brussels, 15 Oct 2008. http://www.cs.kuleuven.be/conference/ranking/programme.shtml
