Document ranking: Text-based Ranking (1st generation)

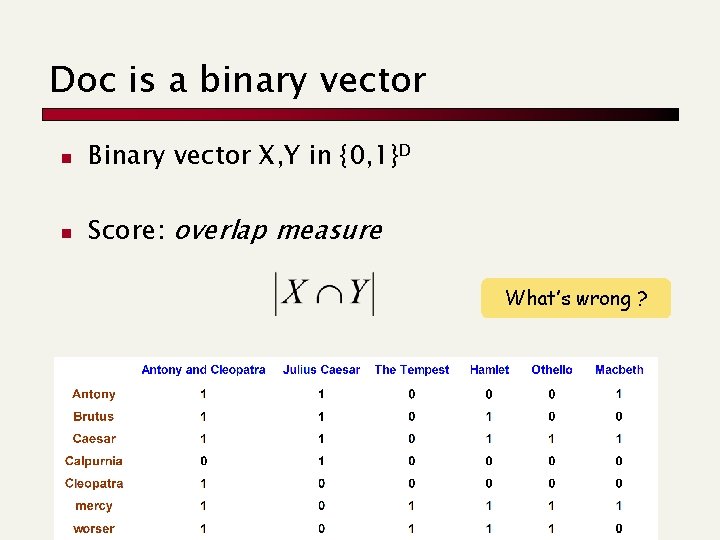

Doc is a binary vector

- Binary vectors X, Y in $\{0, 1\}^D$
- Score: overlap measure, $|X \cap Y|$

What's wrong?

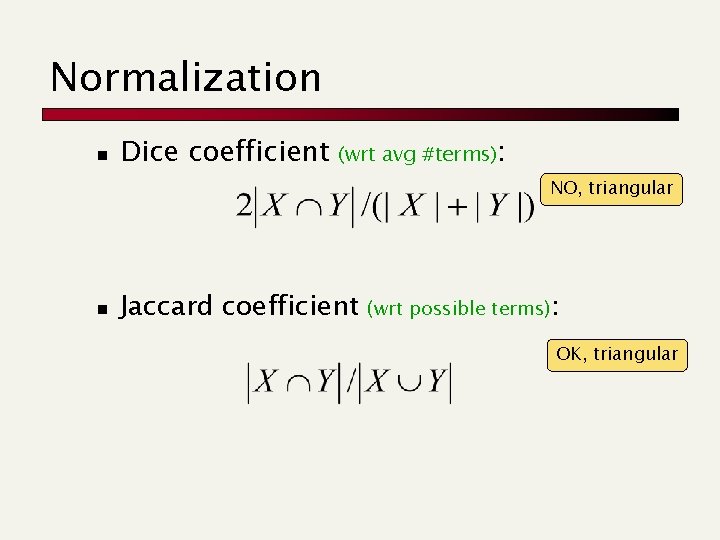

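Normalization

- Dice coefficient (wrt avg #terms), $2|X \cap Y| / (|X| + |Y|)$: the distance 1 - Dice does NOT satisfy the triangle inequality
- Jaccard coefficient (wrt possible terms), $|X \cap Y| / |X \cup Y|$: the distance 1 - Jaccard does satisfy the triangle inequality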
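A minimal sketch of the three measures on documents represented as term sets (the set view of a binary vector). The function names and the toy documents are assumptions made for illustration; the final check shows one case where the Dice distance breaks the triangle inequality while the Jaccard distance does not.

```python
def overlap(x: set, y: set) -> int:
    """Raw overlap: number of terms the two documents share."""
    return len(x & y)


def dice(x: set, y: set) -> float:
    """Overlap normalized by the average number of terms, (|X| + |Y|) / 2."""
    return 2 * len(x & y) / (len(x) + len(y)) if (x or y) else 0.0


def jaccard(x: set, y: set) -> float:
    """Overlap normalized by the number of distinct terms in either document."""
    return len(x & y) / len(x | y) if (x or y) else 0.0


# Hypothetical documents as sets of terms.
a, b, c = {"baby"}, {"bed"}, {"baby", "bed"}

# Triangle inequality on the distances 1 - similarity:
# d(a, b) <= d(a, c) + d(c, b) must hold for a metric.
dice_ok = (1 - dice(a, b)) <= (1 - dice(a, c)) + (1 - dice(c, b))
jaccard_ok = (1 - jaccard(a, b)) <= (1 - jaccard(a, c)) + (1 - jaccard(c, b))
print(dice_ok, jaccard_ok)  # prints: False True
```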

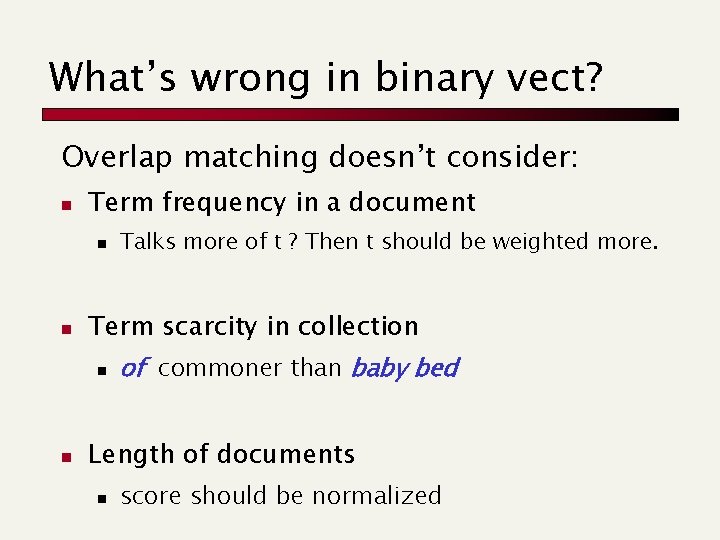

What's wrong with binary vectors?

Overlap matching doesn't consider:

- Term frequency in a document: if a doc talks more of t, then t should be weighted more.
- Term scarcity in the collection: "of" is commoner than "baby bed".
- Length of documents: the score should be normalized.

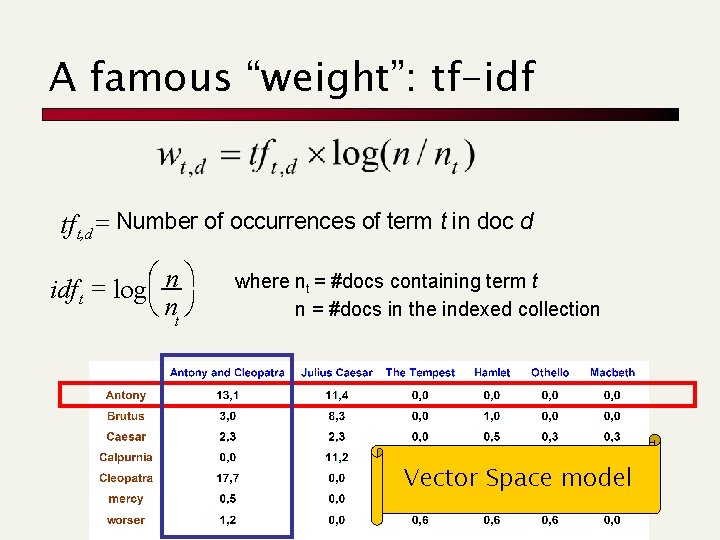

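A famous "weight": tf-idf

$$\mathrm{tf}_{t,d} = \text{number of occurrences of term } t \text{ in doc } d$$

$$\mathrm{idf}_t = \log\left(\frac{n}{n_t}\right)$$

where $n_t$ = #docs containing term t and $n$ = #docs in the indexed collection. The weight of term t in doc d is the product $\mathrm{tf}_{t,d} \times \mathrm{idf}_t$.

Vector Space model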
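The definitions above can be sketched in a few lines. This is only an illustration: the function name `tf_idf`, the toy collection, and the choice of the natural logarithm (the slide does not fix the base) are assumptions.

```python
import math
from collections import Counter


def tf_idf(docs):
    """Return one {term: weight} dict per document, weight = tf * log(n / n_t)."""
    n = len(docs)
    # n_t: number of documents containing term t (each doc counted once).
    n_t = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        tf = Counter(doc)  # occurrences of each term in this doc
        weights.append({t: tf[t] * math.log(n / n_t[t]) for t in tf})
    return weights


# Hypothetical toy collection, already tokenized.
docs = [["baby", "bed", "of"], ["of", "of", "wood"], ["bed", "of"]]
weights = tf_idf(docs)
print(weights[1])
```

Note that "of" occurs in every document, so its idf is log(1) = 0 and it gets weight 0 however often it is repeated.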

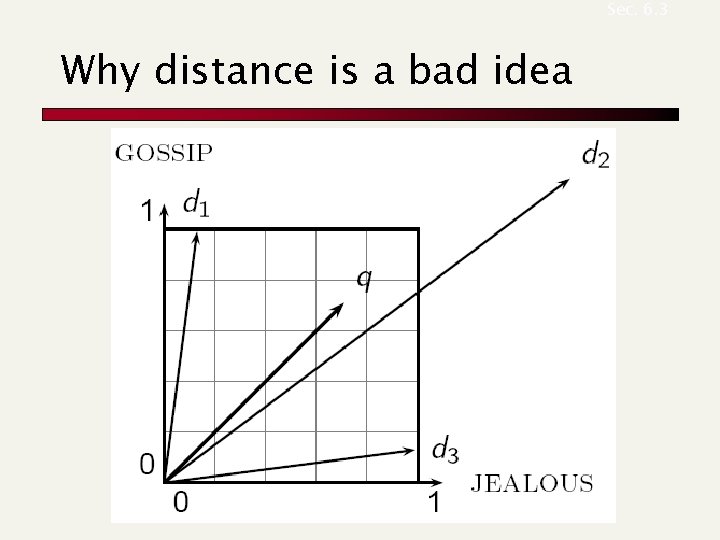

Sec. 6.3 Why distance is a bad idea

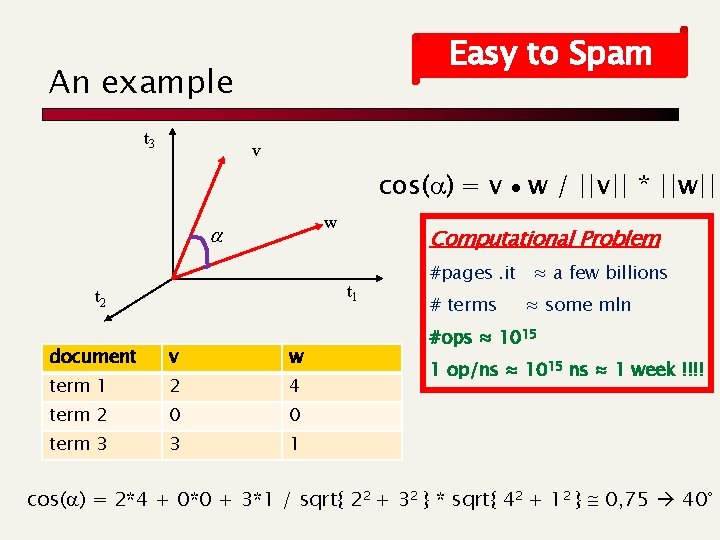

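Easy to Spam

An example

(Figure: vectors v and w in the term space t1, t2, t3, with angle α between them.)

$$\cos(\alpha) = \frac{v \cdot w}{\|v\| \, \|w\|}$$

| document | v | w |
|---|---|---|
| term 1 | 2 | 4 |
| term 2 | 0 | 0 |
| term 3 | 3 | 1 |

$$\cos(\alpha) = \frac{2 \cdot 4 + 0 \cdot 0 + 3 \cdot 1}{\sqrt{2^2 + 3^2} \cdot \sqrt{4^2 + 1^2}} = \frac{11}{\sqrt{13} \cdot \sqrt{17}} \approx 0.74, \qquad \alpha \approx 42°$$

Computational Problem

- #pages in .it ≈ a few billion
- #terms ≈ some millions
- #ops ≈ $10^{15}$
- At 1 op/ns: ≈ $10^{15}$ ns ≈ $10^6$ s, i.e. more than 1 week!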
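The worked example can be checked numerically. The sketch below assumes the two vectors read off the example, v = (2, 0, 3) and w = (4, 0, 1); the function name `cosine` is illustrative.

```python
import math


def cosine(v, w):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(v, w))
    norm_v = math.sqrt(sum(a * a for a in v))
    norm_w = math.sqrt(sum(b * b for b in w))
    return dot / (norm_v * norm_w)


v = [2, 0, 3]
w = [4, 0, 1]
c = cosine(v, w)                   # 11 / (sqrt(13) * sqrt(17))
angle = math.degrees(math.acos(c))
print(round(c, 2), round(angle))   # prints: 0.74 42
```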

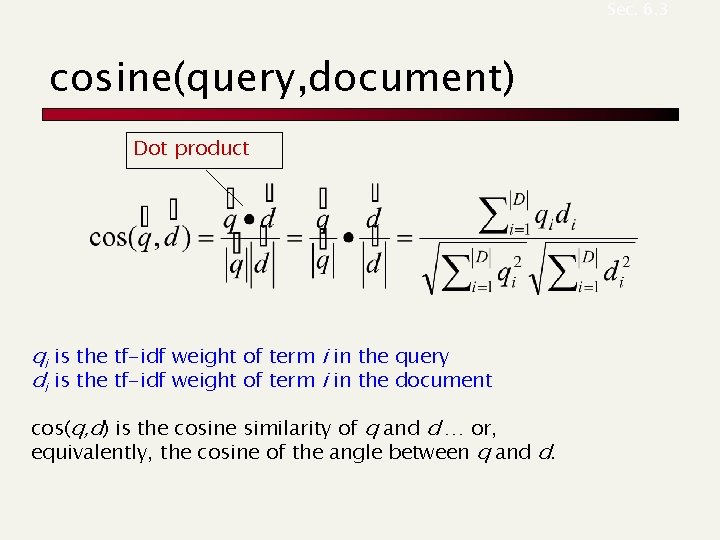

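Sec. 6.3 cosine(query, document)

$$\cos(q, d) = \frac{q \cdot d}{\|q\| \, \|d\|} = \frac{\sum_i q_i d_i}{\sqrt{\sum_i q_i^2} \, \sqrt{\sum_i d_i^2}}$$

The numerator is the dot product.

- $q_i$ is the tf-idf weight of term i in the query
- $d_i$ is the tf-idf weight of term i in the document
- cos(q, d) is the cosine similarity of q and d or, equivalently, the cosine of the angle between q and d.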

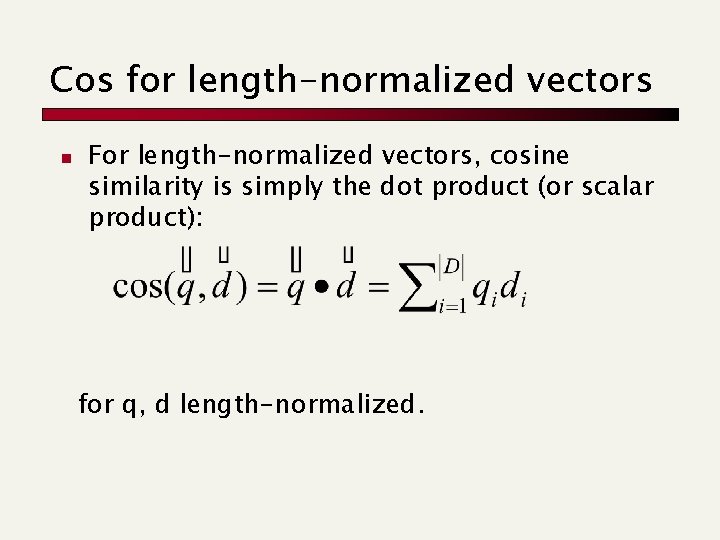

Cos for length-normalized vectors

- For length-normalized vectors, cosine similarity is simply the dot product (or scalar product):

$$\cos(q, d) = q \cdot d = \sum_i q_i d_i$$

for q, d length-normalized.
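A small sketch of why normalizing once pays off: after dividing each vector by its length, the cosine is a plain dot product. Sparse vectors are stored as `{term: weight}` dicts; the helper names and the sample weights are assumptions.

```python
import math


def normalize(vec):
    """Scale a sparse vector {term: weight} to unit Euclidean length."""
    norm = math.sqrt(sum(x * x for x in vec.values()))
    return {t: x / norm for t, x in vec.items()}


def dot(q, d):
    """Dot product of two sparse vectors; missing terms count as 0."""
    return sum(x * d.get(t, 0.0) for t, x in q.items())


# Hypothetical weights; normalization is done once, e.g. at indexing time.
q = normalize({"term1": 2.0, "term3": 3.0})
d = normalize({"term1": 4.0, "term3": 1.0})
similarity = dot(q, d)  # equals cos(q, d), no further division needed
print(round(similarity, 2))
```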

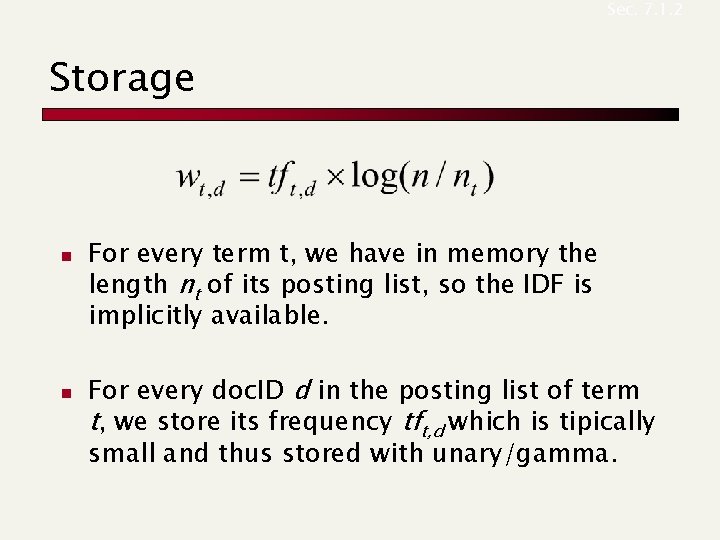

Sec. 7.1.2 Storage

- For every term t, we have in memory the length $n_t$ of its posting list, so the IDF is implicitly available.
- For every docID d in the posting list of term t, we store its frequency $\mathrm{tf}_{t,d}$, which is typically small and thus stored with unary/gamma codes.
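To make the "stored with gamma" remark concrete, here is a sketch of gamma coding for positive integers such as term frequencies. It assumes the convention in which the unary code of k is k ones followed by a zero; other texts swap the roles of 0 and 1. Bits are kept as a Python string for readability, which a real index would not do.

```python
def gamma_encode(x: int) -> str:
    """Gamma code of x >= 1: unary(length of offset) followed by the offset."""
    if x < 1:
        raise ValueError("gamma codes are defined for integers >= 1")
    offset = bin(x)[3:]  # binary representation without '0b' and the leading 1
    return "1" * len(offset) + "0" + offset


def gamma_decode(bits: str) -> list:
    """Decode a concatenation of gamma codes back into integers."""
    values, i = [], 0
    while i < len(bits):
        length = 0
        while bits[i] == "1":  # read the unary length
            length += 1
            i += 1
        i += 1                 # skip the terminating 0
        values.append(int("1" + bits[i:i + length], 2))
        i += length
    return values


tfs = [1, 3, 2, 13]  # hypothetical term frequencies of one posting list
encoded = "".join(gamma_encode(tf) for tf in tfs)
print(encoded, gamma_decode(encoded))
```

Small values get short codes (1 is a single bit), which is why the scheme suits typically small frequencies.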

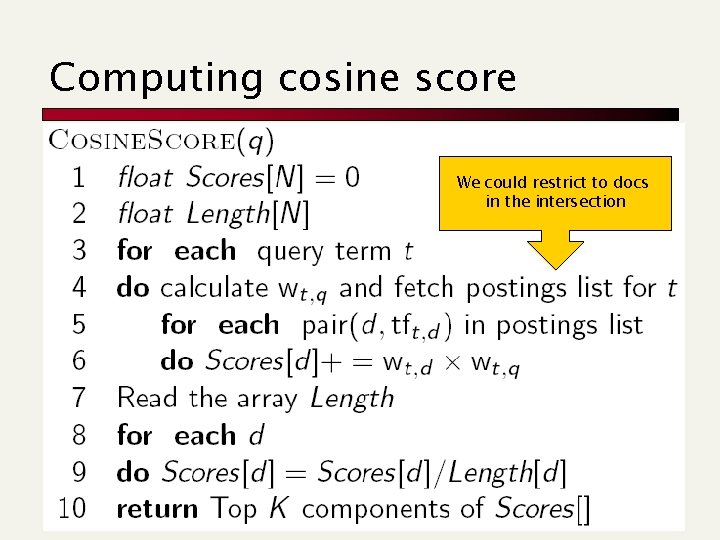

Computing cosine score

We could restrict to docs in the intersection of the query terms' posting lists.
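One common way to compute the scores is term-at-a-time, with one accumulator per docID. The sketch below is an assumption-laden illustration, not the slide's exact algorithm: the index layout (term to list of `(docID, tf)` pairs), the query weight (idf only, i.e. query tf = 1), and the function names are all hypothetical. It scores every doc containing at least one query term; restricting to the intersection would be an extra filter.

```python
import math
from collections import defaultdict


def doc_norms(index, n):
    """Euclidean length of each doc's tf-idf vector, computed from the index."""
    squares = defaultdict(float)
    for postings in index.values():
        idf = math.log(n / len(postings))
        for doc_id, tf in postings:
            squares[doc_id] += (tf * idf) ** 2
    return {d: math.sqrt(s) for d, s in squares.items()}


def cosine_scores(query_terms, index, norms, n):
    """Accumulate q_i * d_i term by term, then divide by the doc length."""
    scores = defaultdict(float)  # one accumulator per docID
    for t in set(query_terms):
        postings = index.get(t, [])
        if not postings:
            continue
        idf = math.log(n / len(postings))  # n_t is the posting-list length
        for doc_id, tf in postings:
            scores[doc_id] += idf * (tf * idf)  # query weight * doc weight
    # The query length is the same for every doc, so it is skipped for ranking.
    return {d: s / norms[d] for d, s in scores.items() if norms.get(d, 0) > 0}


# Hypothetical index over n = 4 docs: term -> [(docID, tf), ...]
index = {"brutus": [(1, 2), (3, 1)], "caesar": [(1, 1), (2, 3)]}
norms = doc_norms(index, n=4)
scores = cosine_scores(["brutus", "caesar"], index, norms, n=4)
print(sorted(scores, key=scores.get, reverse=True))
```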

Vector spaces and other operators

- Vector space OK for bag-of-words queries
- Clean metaphor for similar-document queries
- Not a good combination with operators: Boolean, wild-card, positional, proximity
- First generation of search engines
  - Invented before "spamming" web search

Top-K documents: Approximate retrieval

Sec. 7.1.1 Speed-up top-k retrieval

- The costly step is the computation of cos()
- Find a set A of contenders, with k < |A| << N
  - Set A does not necessarily contain all top-k, but has many docs from among the top-k
  - Return the top-k docs in A, according to the score
- The same approach is also used for other (non-cosine) scoring functions
- Will look at several schemes following this
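Whatever scheme produces the contender set A, the final step is the same: score only the docs in A and keep the k best. A minimal sketch using a heap-based selection; the scores and the contender set are made-up values.

```python
import heapq


def top_k(contenders, score, k):
    """Return the k highest-scoring docIDs among the contenders only."""
    return heapq.nlargest(k, contenders, key=score)


# Hypothetical scores; in practice score(d) would compute cos(q, d) on demand.
scores = {1: 0.10, 4: 0.72, 7: 0.35, 9: 0.91, 12: 0.05}
A = [4, 7, 9, 12]                    # contender set, k < |A| << N
best = top_k(A, scores.get, k=2)
print(best)  # prints: [9, 4]
```

The score function is called once per contender, so the saving comes entirely from keeping |A| much smaller than N.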

Sec. 7.1.2 How to select A's docs

- Consider docs containing at least one query term (obvious… as done before!).
- Take this further:
  1. Only consider docs containing many query terms
  2. Only consider high-idf query terms
  3. Champion lists: top scores
  4. Fancy hits: for complex ranking functions
  5. Clustering

Approach #1: Docs containing many query terms

- For multi-term queries, compute scores for docs containing several of the query terms
  - Say, at least q-1 out of q terms of the query
  - Imposes a "soft conjunction" on queries seen on web search engines (early Google)
- Easy to implement in postings traversal

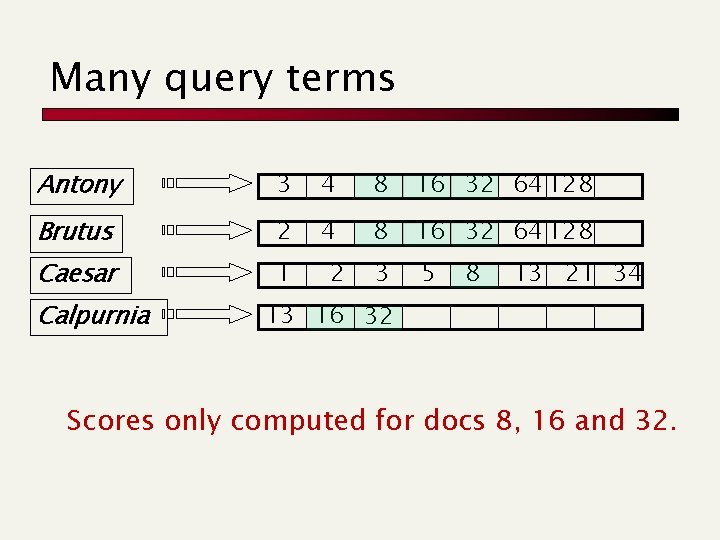

Many query terms

- Antony: 3, 4, 8, 16, 32, 64, 128
- Brutus: 2, 4, 8, 16, 32, 64, 128
- Caesar: 1, 2, 3, 5, 8, 13, 21, 34
- Calpurnia: 13, 16, 32

Scores only computed for docs 8, 16 and 32, the only ones containing at least 3 of the 4 query terms.
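The "at least q-1 out of q terms" filter for the example above can be sketched by counting, for each docID, how many query posting lists contain it. The posting lists are reconstructed from the example and should be read as an assumption; the function name is illustrative.

```python
from collections import Counter

# Posting lists of the four query terms (reconstructed from the example).
postings = {
    "antony": [3, 4, 8, 16, 32, 64, 128],
    "brutus": [2, 4, 8, 16, 32, 64, 128],
    "caesar": [1, 2, 3, 5, 8, 13, 21, 34],
    "calpurnia": [13, 16, 32],
}


def docs_with_many_terms(query_terms, postings, min_terms):
    """DocIDs that appear in at least min_terms of the query posting lists."""
    hits = Counter()
    for t in query_terms:
        hits.update(postings.get(t, []))  # each list holds a docID at most once
    return sorted(d for d, c in hits.items() if c >= min_terms)


query = ["antony", "brutus", "caesar", "calpurnia"]
selected = docs_with_many_terms(query, postings, min_terms=len(query) - 1)
print(selected)  # prints: [8, 16, 32]
```

A production traversal would merge the sorted lists instead of building a counter, but the selected set is the same.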

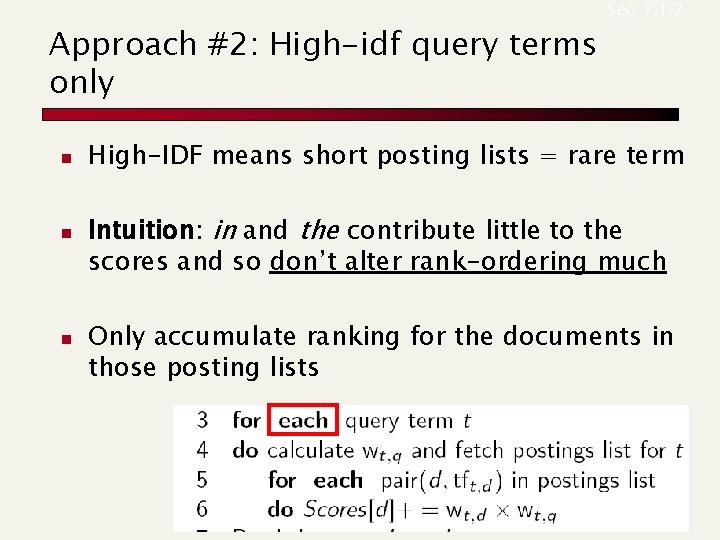

Sec. 7.1.2 Approach #2: High-idf query terms only

- High-IDF means short posting lists = rare term
- Intuition: "in" and "the" contribute little to the scores and so don't alter rank-ordering much
- Only accumulate ranking for the documents in those posting lists
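A sketch of the term filter: keep only the query terms whose idf reaches a threshold, and traverse just their (short) posting lists. The document frequencies, the collection size, and the threshold `min_idf` are invented values chosen for illustration.

```python
import math


def high_idf_terms(query_terms, doc_freq, n, min_idf):
    """Query terms whose idf = log(n / n_t) is at least min_idf."""
    return [
        t for t in query_terms
        if t in doc_freq and math.log(n / doc_freq[t]) >= min_idf
    ]


# Hypothetical document frequencies n_t in a collection of n = 1000 docs.
doc_freq = {"catcher": 12, "in": 900, "the": 990, "rye": 5}
kept = high_idf_terms(["catcher", "in", "the", "rye"], doc_freq, n=1000, min_idf=1.0)
print(kept)  # prints: ['catcher', 'rye']
```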

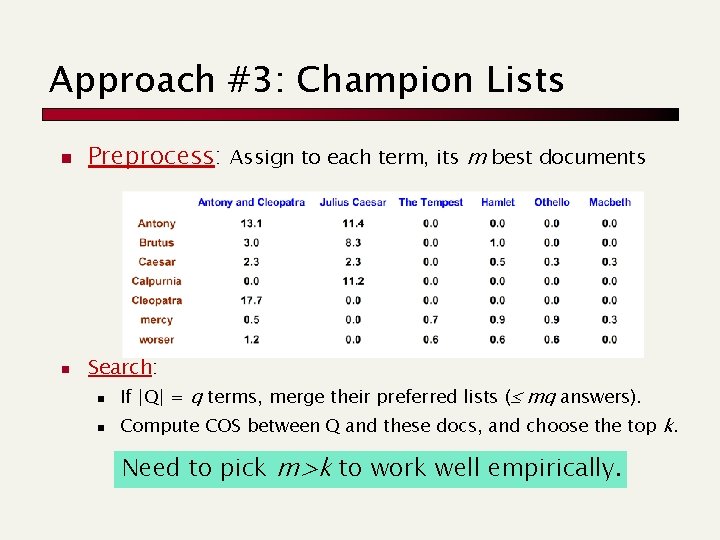

Approach #3: Champion Lists

- Preprocess: assign to each term its m best documents
- Search:
  - If |Q| = q terms, merge their preferred lists (at most mq answers).
  - Compute COS between Q and these docs, and choose the top k.
- Need to pick m > k to work well empirically.
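The two phases can be sketched as follows, assuming "best" means highest tf-idf weight for the term. The data layout (term to `{docID: weight}`), the function names, and the weights are hypothetical.

```python
import heapq


def build_champion_lists(weights, m):
    """Preprocess: for each term, keep the m docIDs with the highest weight."""
    return {
        term: heapq.nlargest(m, docs, key=docs.get)
        for term, docs in weights.items()
    }


def candidates(query_terms, champions):
    """Search: union of the query terms' champion lists (at most m*q docs)."""
    merged = set()
    for t in query_terms:
        merged.update(champions.get(t, []))
    return merged


# Hypothetical tf-idf weights: term -> {docID: weight}
weights = {
    "brutus": {1: 0.9, 2: 0.1, 3: 0.5},
    "caesar": {2: 0.8, 4: 0.7, 5: 0.2},
}
champions = build_champion_lists(weights, m=2)
A = candidates(["brutus", "caesar"], champions)
print(champions, A)
```

The cosine is then computed only for the docs in `A`, and the top k among them are returned.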

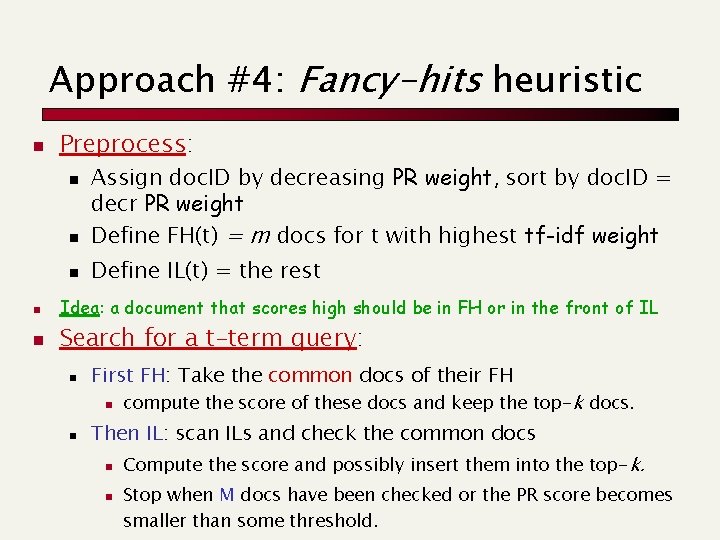

Approach #4: Fancy-hits heuristic

- Preprocess:
  - Assign docIDs by decreasing PR (PageRank) weight, so that sorting by docID = sorting by decreasing PR weight
  - Define FH(t) = m docs for t with highest tf-idf weight
  - Define IL(t) = the rest
- Idea: a document that scores high should be in FH or in the front of IL
- Search for a t-term query:
  - First FH: take the common docs of their FH
    - Compute the score of these docs and keep the top-k docs.
  - Then IL: scan ILs and check the common docs
    - Compute the score and possibly insert them into the top-k.
    - Stop when M docs have been checked or the PR score becomes smaller than some threshold.

Sec. 7.1.4 Modeling authority

- Assign to each document d a query-independent quality score in [0, 1]
  - Denote this by g(d)
- Thus, a quantity like the number of citations (?) is scaled into [0, 1]

Sec. 7.1.4 Champion lists in g(d)-ordering

- Can combine champion lists with g(d)-ordering
- Or, maintain for each term a champion list of the r > k docs with highest $g(d) + \text{tf-idf}_{t,d}$
- g(d) may be the PageRank
- Seek top-k results from only the docs in these champion lists
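A sketch of a champion list ordered by g(d) + tf-idf. The scaling of a raw quantity into [0, 1] by dividing by the maximum is an assumption (the slides do not say how the scaling is done), as are the function names and all numbers.

```python
import heapq


def scale_to_unit(raw):
    """Scale non-negative raw scores (e.g. citation counts) into [0, 1]."""
    top = max(raw.values())
    return {d: (v / top if top else 0.0) for d, v in raw.items()}


def champion_list(tfidf_for_term, g, r):
    """The r docIDs with the highest g(d) + tf-idf weight for this term."""
    return heapq.nlargest(r, tfidf_for_term, key=lambda d: g[d] + tfidf_for_term[d])


# Hypothetical data for one term.
citations = {1: 90, 2: 10, 3: 40}
g = scale_to_unit(citations)         # docID -> quality score in [0, 1]
tfidf = {1: 0.2, 2: 0.6, 3: 0.5}     # docID -> tf-idf weight for the term
print(champion_list(tfidf, g, r=2))  # prints: [1, 3]
```

Doc 2 has the highest tf-idf for the term but is dropped because its authority score is low.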

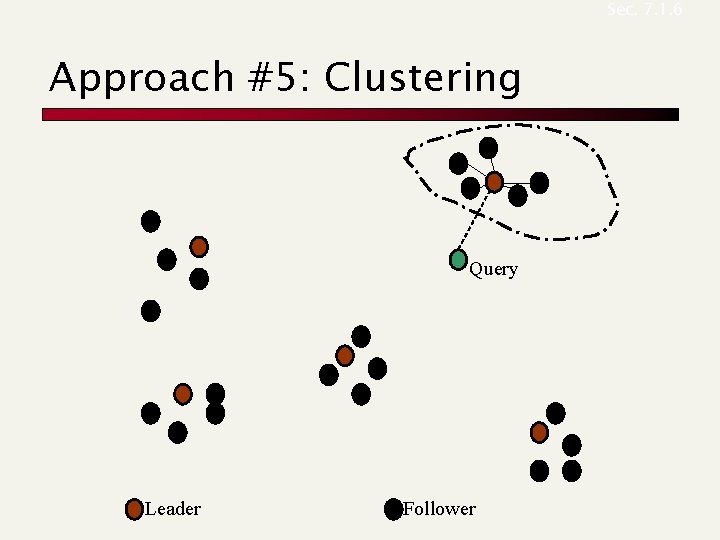

Sec. 7.1.6 Approach #5: Clustering

(Figure labels: Query, Leader, Follower)

Sec. 7.1.6 Cluster pruning: preprocessing

- Pick √N docs at random: call these leaders
- For every other doc, precompute its nearest leader
  - Docs attached to a leader: its followers
  - Likely: each leader has ~ √N followers.

Sec. 7.1.6 Cluster pruning: query processing

- Process a query as follows:
  - Given query Q, find its nearest leader L.
  - Seek K nearest docs from among L's followers.
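Preprocessing and query processing can be sketched together. This illustration assumes about √N random leaders (the standard formulation of cluster pruning), dense non-zero vectors, cosine as the nearness measure, and that the leader itself counts as a candidate; all names and data are hypothetical.

```python
import heapq
import math
import random


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def preprocess(docs, seed=0):
    """Pick ~sqrt(N) random leaders; attach every other doc to its nearest leader."""
    rng = random.Random(seed)
    n = len(docs)
    leaders = rng.sample(range(n), max(1, round(math.sqrt(n))))
    clusters = {leader: [] for leader in leaders}  # leader -> followers
    for i in range(n):
        if i in clusters:
            continue  # leaders are not followers
        nearest = max(leaders, key=lambda l: cosine(docs[i], docs[l]))
        clusters[nearest].append(i)
    return clusters


def search(q, docs, clusters, k):
    """Find the nearest leader, then the k nearest docs in its cluster."""
    leader = max(clusters, key=lambda l: cosine(q, docs[l]))
    group = [leader] + clusters[leader]  # assumption: the leader is a candidate too
    return heapq.nlargest(k, group, key=lambda d: cosine(q, docs[d]))


# Hypothetical 2-dimensional document vectors (N = 9, so 3 leaders).
docs = [(1, 0.1), (1, 0.2), (0.9, 0.1), (0.1, 1), (0.2, 1), (0.1, 0.9),
        (1, 1), (0.9, 1), (1, 0.9)]
clusters = preprocess(docs)
result = search((1, 0), docs, clusters, k=2)
print(clusters, result)
```

Only √N leader comparisons plus one cluster's worth of docs are scored per query, at the price of possibly missing true top-k docs that ended up under another leader.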

Sec. 7.1.6 Why use random sampling

- Fast
- Leaders reflect data distribution

Sec. 7.1.6 General variants

- Have each follower attached to b1 = 3 (say) nearest leaders.
- From query, find b2 = 4 (say) nearest leaders and their followers.
- Can recur on leader/follower construction.

- Slides: 27