Thoughts on Big Data and ethics Bettina Berendt

Thoughts on “Big Data” and ethics
Bettina Berendt
Department of Computer Science, KU Leuven, Belgium
http://people.cs.kuleuven.be/~bettina.berendt/
23 November 2016

• What does ethics mean for you?
• Why do you care?

Agenda
• Ethical dilemmas
• Ethics and law – a bird’s-eye view
• Ethical theories – a bird’s-eye view
• Big Data (BD) and responsibilities towards humans – esp. concerning personal data
• BD and responsibilities beyond personal data
• Ethical questions that may (or may not) change with BD

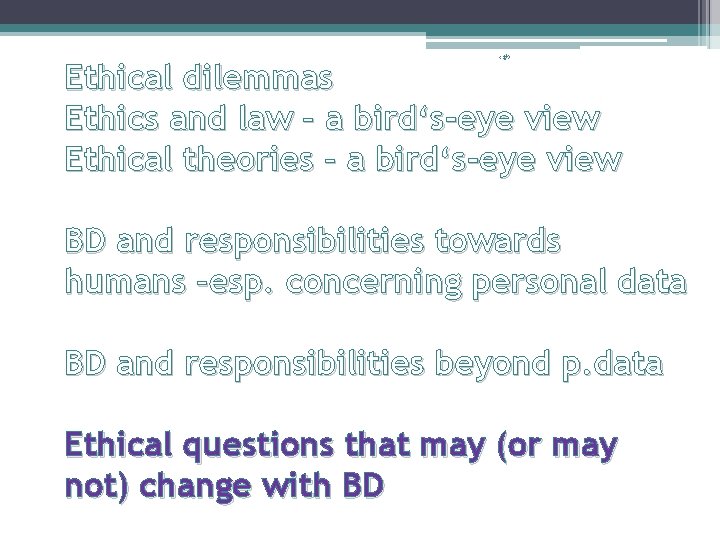


What is ethics?
• About right and wrong of human behaviour
• Assessing/judging/steering behaviour (actions, intentions, consequences) of individuals, groups, societies
• Accounting for behaviour and consequences: coherence and rationality, justification
From (Vedder, 2015)

GamerGate

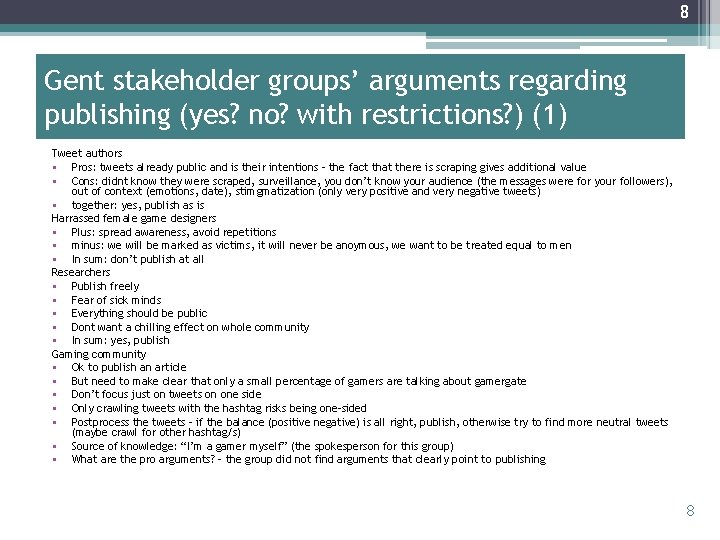

Your task
Background: A research group wants to crawl #gamergate tweets, archive them, and make the data available to other researchers. The question is whether and, if so, how to do this in an ethical way – and why (for details, see Rockwell & Berendt, 2016, in progress).
1. Please identify stakeholder groups.
2. Please form student groups, one for each stakeholder, and discuss whether and why you would advocate publication, no publication, or publication under restrictions (which ones?).
3. Please present your results in class.

Gent stakeholder groups’ arguments regarding publishing (yes? no? with restrictions?) (1)
Tweet authors
• Pros: the tweets are already public, as their authors intended; the fact that they are scraped gives additional value
• Cons: authors didn’t know they were scraped; surveillance; you don’t know your audience (the messages were for your followers); tweets are taken out of context (emotions, date); stigmatization (only very positive and very negative tweets)
• In sum: yes, publish as is
Harassed female game designers
• Pros: spread awareness, avoid repetitions
• Cons: we will be marked as victims; it will never be anonymous; we want to be treated equal to men
• In sum: don’t publish at all
Researchers
• Publish freely
• Fear of sick minds
• Everything should be public
• Don’t want a chilling effect on the whole community
• In sum: yes, publish
Gaming community
• OK to publish an article
• But need to make clear that only a small percentage of gamers are talking about GamerGate
• Don’t focus just on tweets on one side
• Only crawling tweets with the hashtag risks being one-sided
• Postprocess the tweets: if the balance (positive/negative) is all right, publish; otherwise try to find more neutral tweets (maybe crawl for other hashtags)
• Source of knowledge: “I’m a gamer myself” (the spokesperson for this group)
• What are the pro arguments? The group did not find arguments that clearly point to publishing

Gent stakeholder groups’ arguments regarding publishing (yes? no? with restrictions?) (2)
Society at large
• This stakeholder group is too big to discuss
• Therefore we restricted ourselves to the stakeholder group “students”
• Students’ opinions are more important
• Strong opinions, they are the future, importance of discussion is bigger for them
• Freedom of speech is very important for them
• More important than privacy
• Because they are against censorship
• In sum: yes
• Maybe without the names of the tweeters
Journalists
• Watchdogs of society
• Want to inform our audience
• Have to be aware of privacy laws
• We want to appear trustworthy
• Information has to be open, the more info the better
• In sum: publish
• If we think of ourselves as the stakeholder group “game journalists”, this doesn’t change our opinion
• Take themselves out of the picture
Gaming companies
• Not a nice position, we were already targeted (no matter what stand we take)
• We want to put an end to it
• Want to have it published, as anonymised as possible
• Purpose should not be fingerpointing, but to provide clarity
• We support equality but not unfairness – also in reporting – with this research, everyone could see what happened


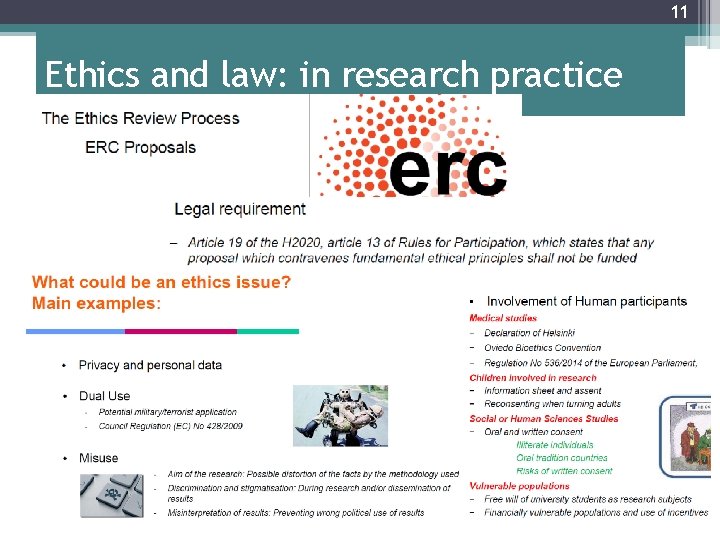

Ethics and law: in research practice

Ethics and law: similarities
• Note: similarities and differences are matters of degree, not black and white
• Law and ethics both
▫ Are about (steering) human behaviour
▫ Sanction deviant behaviour
▫ Consist primarily of prescriptive propositions (commands, prohibitions – normative language)
From (Vedder, 2015)

Ethics and law: differences (1)
• Law mostly enforced by the state; ethics enforced by members of society: praise, contempt, ostracism
• Law largely codified, ethics not
• Large parts of law ethically neutral (e.g. substance of traffic rules)
• Not everything ethically desirable incorporated in law
• Law: system requirements; rapidity & flexibility of response often lacking
• Law partially based on and “criticizable” by ethics (sometimes vice versa). Ethics often claimed to prevail.
From (Vedder, 2015)

Ethics and law: differences (2)
• Ethical concepts may not even exist in the law
▫ Example: “good hacker” – “bad hacker” (Gonzalez Fuster & Gutwirth, 2014)
• The law provides (in most cases) for a way back into society; this is not generally the case for ethical failures

Side note
• Cultural understandings and legal framings of privacy are diverse
• Here: an EU perspective


Three mainstream lines of thinking
• Intentions/Acts > Deontologist > Kantianism, Rawlsianism, Moral Rights theories, Cosmopolitanism [“liberal”]
• Consequences > Consequentialist > Utilitarianism; Need consequentialism
• Community > Communitarianism, Republicanisms, Virtue theories, anti-theorist theory et cetera
From (Vedder, 2015)

BD and responsibilities towards humans – esp. concerning personal data
1. The case study
2. The living and the dead

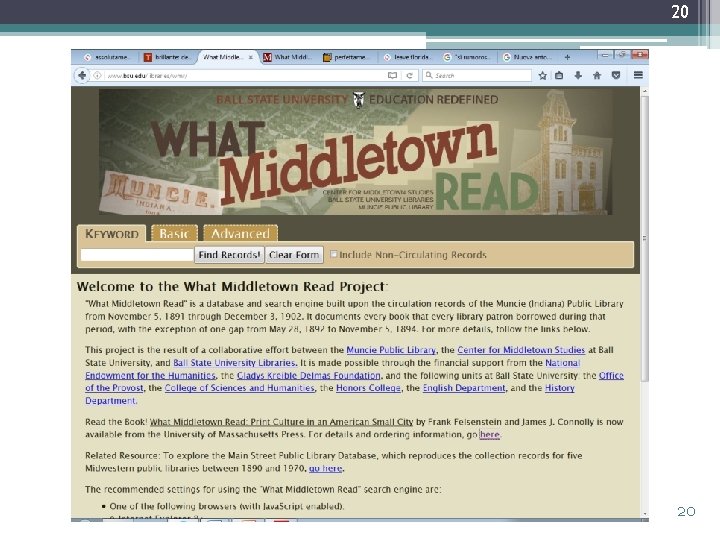


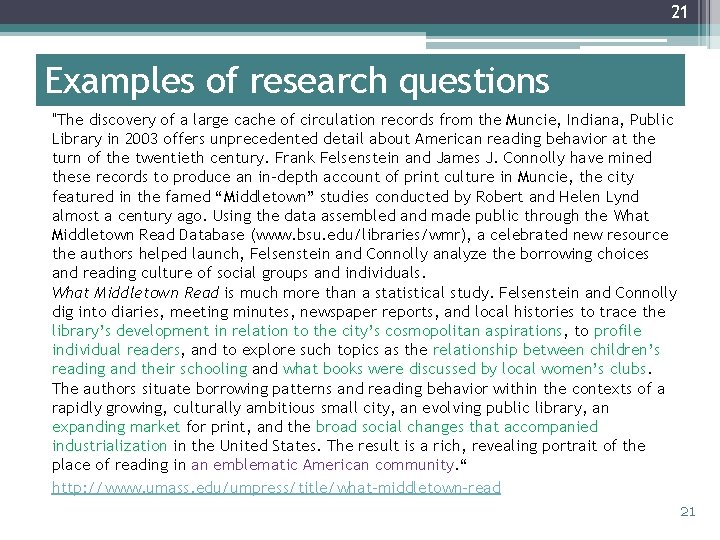

Examples of research questions
“The discovery of a large cache of circulation records from the Muncie, Indiana, Public Library in 2003 offers unprecedented detail about American reading behavior at the turn of the twentieth century. Frank Felsenstein and James J. Connolly have mined these records to produce an in-depth account of print culture in Muncie, the city featured in the famed “Middletown” studies conducted by Robert and Helen Lynd almost a century ago. Using the data assembled and made public through the What Middletown Read Database (www.bsu.edu/libraries/wmr), a celebrated new resource the authors helped launch, Felsenstein and Connolly analyze the borrowing choices and reading culture of social groups and individuals. What Middletown Read is much more than a statistical study. Felsenstein and Connolly dig into diaries, meeting minutes, newspaper reports, and local histories to trace the library’s development in relation to the city’s cosmopolitan aspirations, to profile individual readers, and to explore such topics as the relationship between children’s reading and their schooling and what books were discussed by local women’s clubs. The authors situate borrowing patterns and reading behavior within the contexts of a rapidly growing, culturally ambitious small city, an evolving public library, an expanding market for print, and the broad social changes that accompanied industrialization in the United States. The result is a rich, revealing portrait of the place of reading in an emblematic American community.”
http://www.umass.edu/umpress/title/what-middletown-read

BD and responsibilities beyond personal data
1. Animals, nature, ...
2. The truth?!

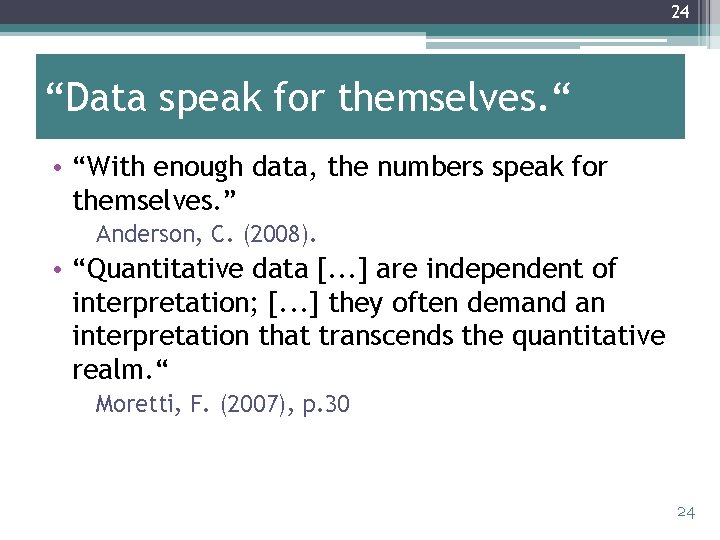

“Data speak for themselves.”
• “With enough data, the numbers speak for themselves.” Anderson, C. (2008)
• “Quantitative data [...] are independent of interpretation; [...] they often demand an interpretation that transcends the quantitative realm.” Moretti, F. (2007), p. 30

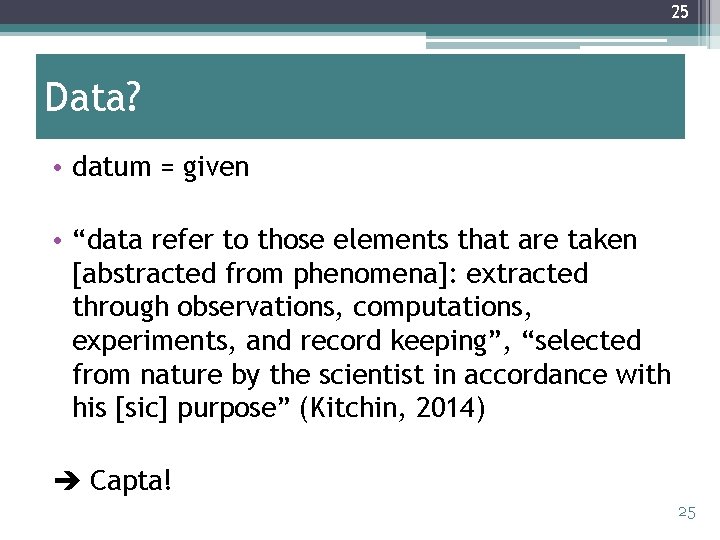

Data?
• datum = given
• “data refer to those elements that are taken [abstracted from phenomena]: extracted through observations, computations, experiments, and record keeping”, “selected from nature by the scientist in accordance with his [sic] purpose” (Kitchin, 2014)
Capta!

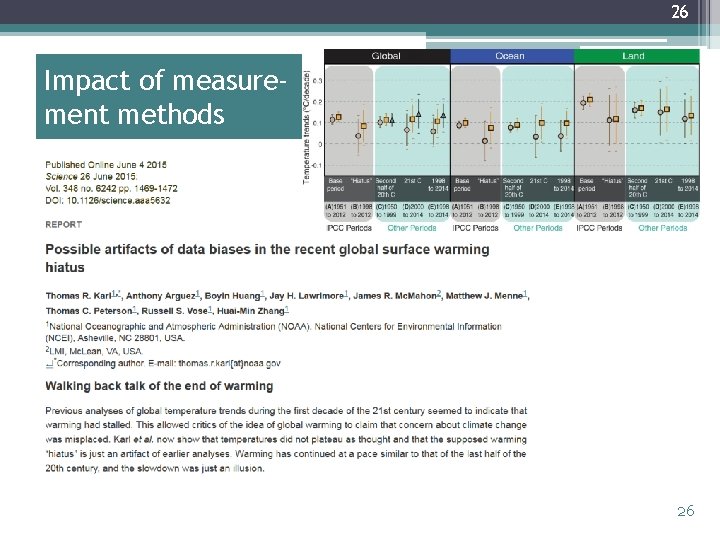

Impact of measurement methods

Name ≥ 2 aspects of the data we should question! Moretti, F. (2007)

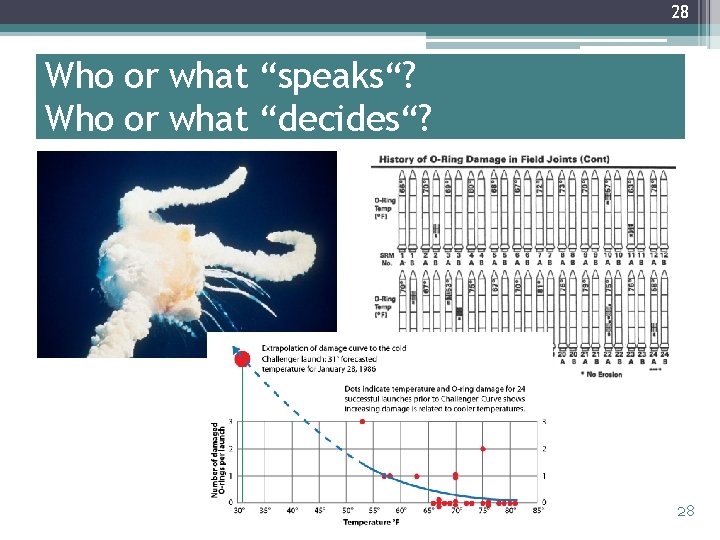

Who or what “speaks”? Who or what “decides”?

Summary: Can data speak for themselves?
• “all researchers are interpreters of data” (boyd & Crawford, 2012)
• This starts with the design decisions that determine what will be measured
• ... goes on with decisions of what is cleaned (What is a stopword? What is an outlier? ...)
• ... continues with the decision of models (e.g. inductive bias of data-mining methods)
• ... and of course carries over into the interpretation of the results
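The point about cleaning decisions can be made concrete with a minimal Python sketch. The toy text, the two stopword lists, and the helper name `top_terms` are assumptions made up for this illustration; they do not come from the slides or from any particular text-mining library.

```python
from collections import Counter


def top_terms(text, stopwords, k=2):
    """Return the k most frequent tokens that are not in the stopword list."""
    tokens = [t for t in text.lower().split() if t not in stopwords]
    return [word for word, _ in Counter(tokens).most_common(k)]


# Hypothetical toy corpus: three short judgments run together.
text = "not good not fair not good"

# Cleaning decision A: nothing is removed.
print(top_terms(text, stopwords=set()))    # ['not', 'good']

# Cleaning decision B: "not" is treated as a stopword and removed.
print(top_terms(text, stopwords={"not"}))  # ['good', 'fair']
```

The same text yields a negative or a positive reading depending on one cleaning choice, so the interpretation is already built into the preprocessing.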

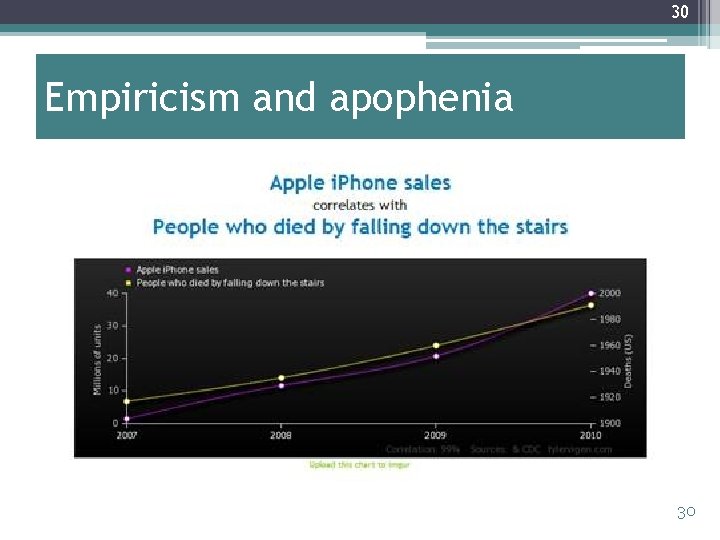

Empiricism and apophenia

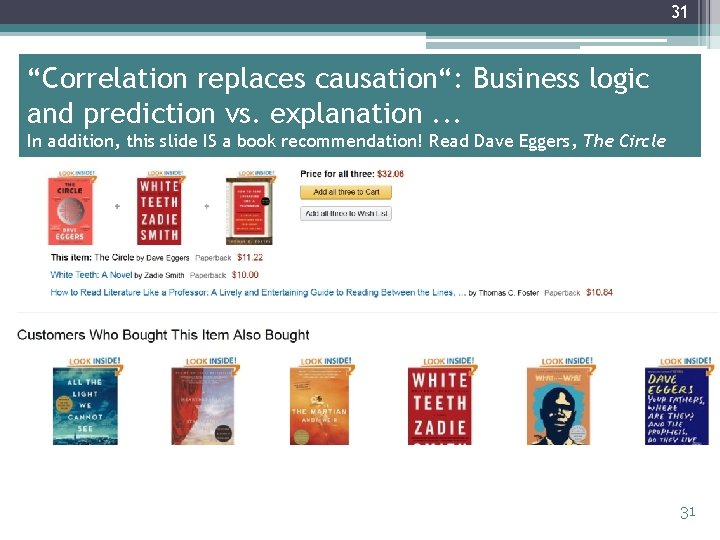

“Correlation replaces causation”: Business logic and prediction vs. explanation ...
In addition, this slide IS a book recommendation! Read Dave Eggers, The Circle.

... but can we explain German history like this? (Thanks to Christiane Fellbaum for leading me up to this example)

Bigger data are not always better data
• All data (big or not) are representations and samples
▫ E.g. “Twitter users” ≠ (all) people
• Social scientists know this and have methods to correct for it (or: to assess the impact)
• The Big Data assumption that “more data compensates for these factors” is wrong!
• Commercial interests behind data provision, privacy concerns, ...: we don’t even know what the sampling method and the biases are!
• Combining datasets makes the problem worse
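Why more data does not compensate for a biased sample can be sketched in a few lines of Python. All numbers are invented for illustration: a population in which 50% support some position, and a platform on which supporters are three times as likely to be observed as non-supporters. The function names `share_in_sample` and `simulate` are hypothetical helpers, not part of any library.

```python
import random


def share_in_sample(p_true, rate_pos, rate_neg):
    """Expected share of supporters among the observed people when supporters
    are observed with probability rate_pos and non-supporters with rate_neg."""
    observed_pos = p_true * rate_pos
    observed_neg = (1 - p_true) * rate_neg
    return observed_pos / (observed_pos + observed_neg)


def simulate(n_people, p_true, rate_pos, rate_neg, seed=0):
    """Draw n_people from the population and keep only those who are observed."""
    rng = random.Random(seed)
    pos = neg = 0
    for _ in range(n_people):
        supporter = rng.random() < p_true
        rate = rate_pos if supporter else rate_neg
        if rng.random() < rate:
            if supporter:
                pos += 1
            else:
                neg += 1
    return pos / (pos + neg)


# Assumed values: true support 50%, observation rates 30% vs. 10%.
print("expected share in sample:", share_in_sample(0.5, 0.3, 0.1))
for n in (1_000, 10_000, 100_000):
    print(n, round(simulate(n, 0.5, 0.3, 0.1), 3))
```

Under these assumptions the estimate settles near 75% as the sample grows, not near the true 50%: a larger sample reduces noise but leaves the bias untouched.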

Statistics doesn’t change just because of computers (or big datasets)
• Let’s say you predict “politician X will win” because you found, with sentiment analysis, that 60% rate her positively and 40% negatively.
• What if the sentiment-analysis method has ~70% accuracy?
• What if it builds on a prior
▫ language detection
▫ named-entity recognition
▫ ...
with x < 100% accuracy?
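The two questions above can be worked through with a small Python sketch. It is only an illustration under stated assumptions: a binary positive/negative classifier whose error rates are known, and pipeline steps whose errors are independent. The function names and all error rates other than the 60% and 70% from the slide are hypothetical. The correction used is the standard misclassification adjustment for an observed proportion (often called the Rogan-Gladen estimator).

```python
def corrected_share(observed_pos, sensitivity, specificity):
    """Estimate the true positive share from the observed share.

    sensitivity: probability that a truly positive tweet is labelled positive
    specificity: probability that a truly negative tweet is labelled negative
    Derived from: observed = sens * p + (1 - spec) * (1 - p).
    """
    denom = sensitivity + specificity - 1
    if denom <= 0:
        raise ValueError("classifier is no better than chance")
    estimate = (observed_pos - (1 - specificity)) / denom
    return min(1.0, max(0.0, estimate))  # clip to a valid proportion


def pipeline_accuracy(step_accuracies):
    """Share of items for which every step is right, assuming independent errors."""
    acc = 1.0
    for a in step_accuracies:
        acc *= a
    return acc


# Same observed 60% positive, two different (assumed) error profiles:
print(corrected_share(0.6, sensitivity=0.7, specificity=0.7))  # about 0.75
print(corrected_share(0.6, sensitivity=0.9, specificity=0.5))  # about 0.25

# Assumed accuracies: language detection 95%, entity recognition 90%, sentiment 70%.
print(pipeline_accuracy([0.95, 0.90, 0.70]))  # about 0.60
```

Depending on how the errors are distributed, the same observed 60% is compatible with a clear majority or a clear minority, which is why the headline accuracy alone does not license the prediction.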

Parking lot science

Parking lot science: examples?!
• Restrictions on search in Twitter, ...
▫ Research focus on current and recent events?!
• “Trending topics” algorithm in Twitter based on burstiness
▫ Suppression of persistent topics?!
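How a burstiness-based ranking can push persistent topics out of view is easy to sketch. The following Python example is a deliberately simplified stand-in and not Twitter’s actual algorithm, which is not described in these slides; the scoring function, the topic names, and the daily counts are all assumptions for illustration.

```python
def burstiness(counts):
    """Ratio of the latest count to the mean of the earlier counts."""
    *history, latest = counts
    baseline = max(sum(history) / len(history), 1e-9)  # avoid division by zero
    return latest / baseline


# Hypothetical daily tweet counts over one week.
topics = {
    "persistent_topic": [1000, 1000, 1000, 1000, 1000, 1000, 1000],
    "sudden_topic": [10, 10, 10, 10, 10, 10, 200],
}

ranking = sorted(topics, key=lambda name: burstiness(topics[name]), reverse=True)
for name in ranking:
    print(name, burstiness(topics[name]))
```

In this sketch the topic with five times more tweets on the last day ranks below the small but sudden one, because only the change relative to the baseline is scored.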


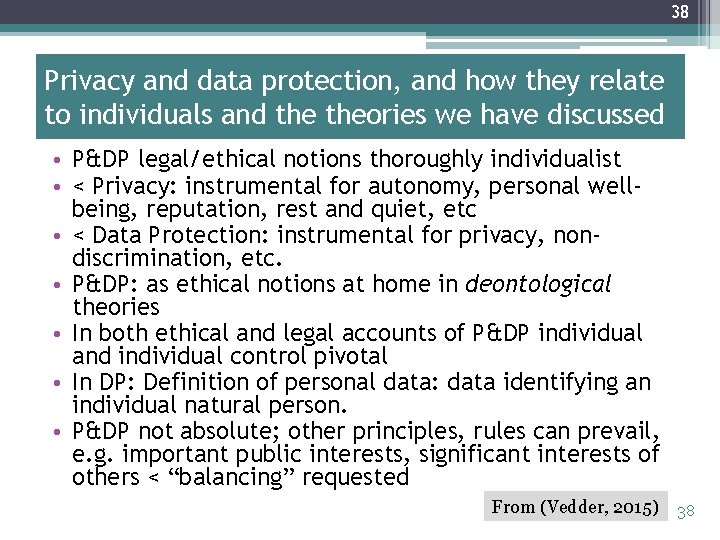

Privacy and data protection, and how they relate to individuals and theories we have discussed
• P&DP legal/ethical notions thoroughly individualist
• < Privacy: instrumental for autonomy, personal wellbeing, reputation, rest and quiet, etc.
• < Data Protection: instrumental for privacy, nondiscrimination, etc.
• P&DP: as ethical notions at home in deontological theories
• In both ethical and legal accounts of P&DP, the individual and individual control are pivotal
• In DP: definition of personal data: data identifying an individual natural person
• P&DP not absolute; other principles and rules can prevail, e.g. important public interests, significant interests of others < “balancing” requested
From (Vedder, 2015)

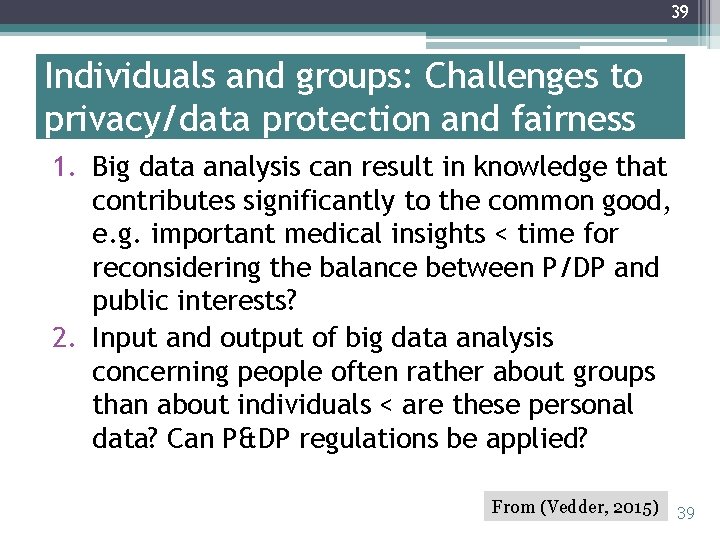

Individuals and groups: Challenges to privacy/data protection and fairness
1. Big data analysis can result in knowledge that contributes significantly to the common good, e.g. important medical insights < time for reconsidering the balance between P/DP and public interests?
2. Input and output of big data analysis concerning people are often about groups rather than about individuals < are these personal data? Can P&DP regulations be applied?
From (Vedder, 2015)

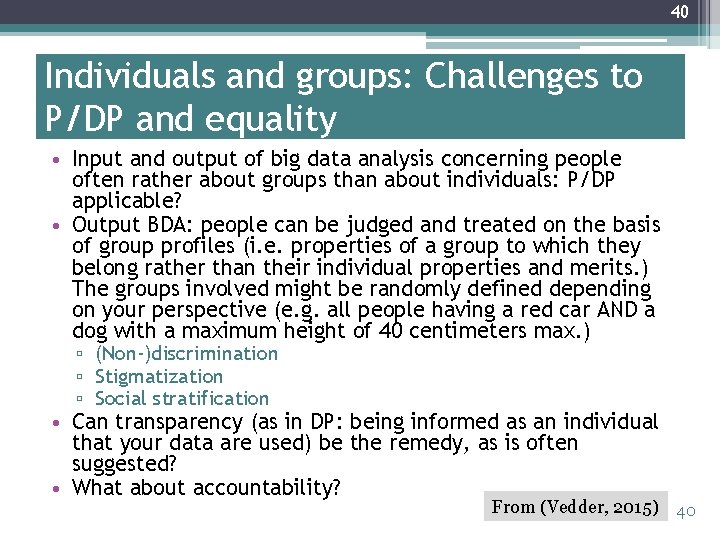

Individuals and groups: Challenges to P/DP and equality
• Input and output of big data analysis (BDA) concerning people are often about groups rather than about individuals: P/DP applicable?
• Output of BDA: people can be judged and treated on the basis of group profiles (i.e. properties of a group to which they belong rather than their individual properties and merits). The groups involved might be randomly defined depending on your perspective (e.g. all people having a red car AND a dog with a maximum height of 40 centimeters).
▫ (Non-)discrimination
▫ Stigmatization
▫ Social stratification
• Can transparency (as in DP: being informed as an individual that your data are used) be the remedy, as is often suggested?
• What about accountability?
From (Vedder, 2015)

Data: collection vs. observation – challenges to autonomy
• Data used to be primarily collected
• Our model of consent works relatively well for situations in which there is a clear moment of collection, which involves the data subject
• BD involves much more observed data
• How can consent be obtained?
• What does this mean for autonomy?

BD ideology itself: “You never know what it’s good for”
• A basic principle of European data protection: purpose specification and purpose limitation
• BD’s exploratory spirit
• Shifts in emphasis from DP Directive towards GDPR
▫ Compatible use
▫ Legitimate interest
• Towards a use-based approach? Moving (further) towards utilitarianism?
• Who gets to define “harms” and “benefits”?

Respect for context
• Respect for diverse social contexts and norms
• A move towards communitarian ideas?

Challenges concerning the redistribution of knowledge/expertise
Big Data collection and analysis about people, but also about traffic, machinery, farming, seeds, etc. can change the locus of knowledge. Some issues (list not exhaustive):
• Changes in the distribution of knowledge can cause shifts in power relationships, e.g. relocating agricultural expertise from farmers to engineers in corporations. What about the intellectual rights of the traditional professionals?
• Producing information that is significant for individuals but which they might not want to know: right to ignorance?
From (Vedder, 2015)

Some challenges arising from the economics of digital media and research

1. Access and new digital divides?
• Who gets to do research?
▫ Social-media companies?
▫ Rich top-tier universities?
▫ Computer scientists?
▫ ... What about further demographics?
• “Digital Humanities work is only getting funded these days if it involves big infrastructure projects.”
▫ (from a conversation with a critical data scientist who has big infrastructure projects)

2. What if the researcher is also the service provider?
• “The Facebook experiment” (Kramer et al., 2014) manipulated the contents of nearly 700,000 users’ News Feeds to induce changes in their emotions.
• Basic question: If you hear happy stories from your friends, does this
▫ make you happy? (“emotional contagion”)
▫ make you miserable? (“social comparison”)
• This experiment was widely criticized on ethical grounds regarding informed consent.
• But it also has severe methodological flaws.

3. What if the service provider is also the news medium?
• Ex. Twitter’s Trending topics, Facebook’s EdgeRank
“But keep in mind, Ferguson is also a net neutrality issue. It’s also an algorithmic filtering issue. How the internet is run, governed and filtered is a human rights issue.” (Zeynep Tufekci, 2014)
• Ex. Trump election: Facebook – news selection or advertising or …?

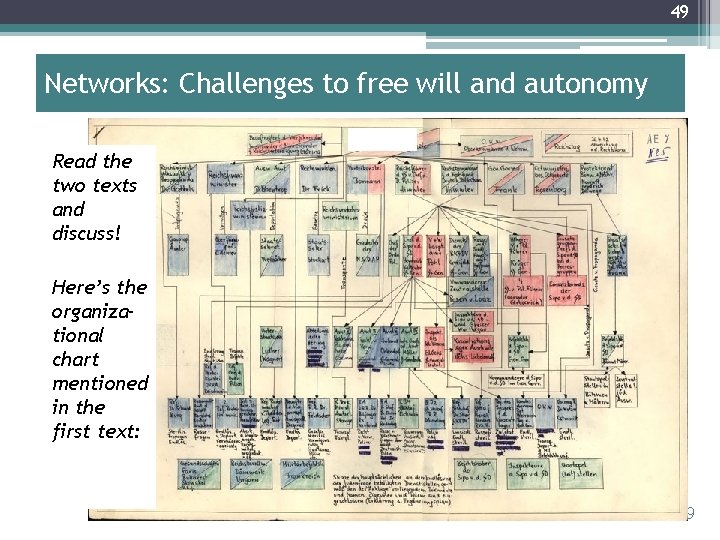

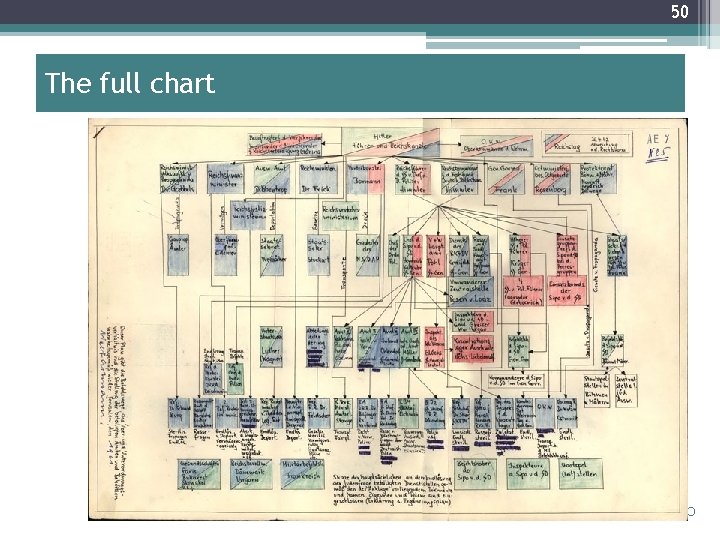

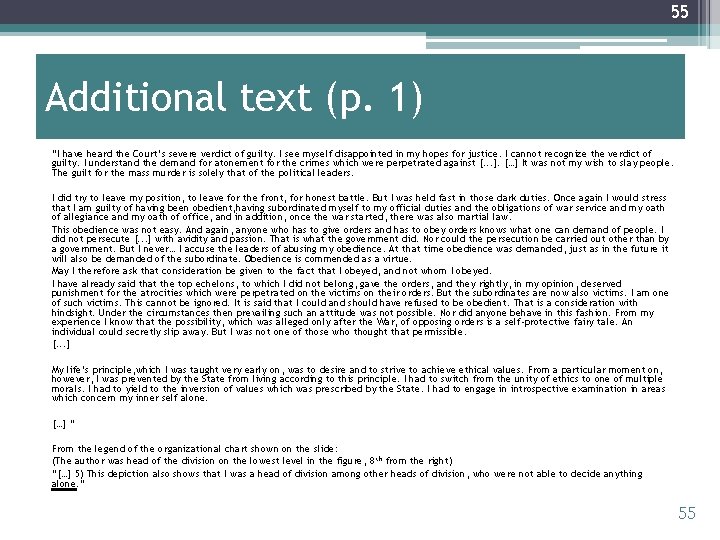

Networks: Challenges to free will and autonomy
Read the two texts and discuss! Here’s the organizational chart mentioned in the first text:

The full chart

“I was one of the many horses pulling the wagon and couldn’t escape left or right”?!
• Arendt (1963):
▫ ~ But he had a choice.
▫ There are always choices.
▫ And many people did exercise them.
• Note: Even if she was wrong about the individual case (Eichmann was an avid antisemite), the philosophical-political analysis remains valid.

The "narrative of inevitability"
• BD is not a “law of nature”
• BD is a (set of) social decision(s)

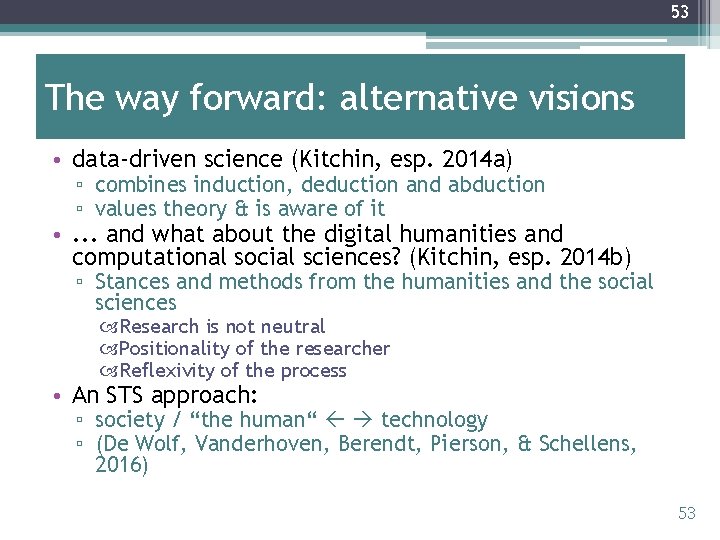

The way forward: alternative visions
• data-driven science (Kitchin, esp. 2014a)
▫ combines induction, deduction and abduction
▫ values theory & is aware of it
• ... and what about the digital humanities and computational social sciences? (Kitchin, esp. 2014b)
▫ Stances and methods from the humanities and the social sciences
▫ Research is not neutral
▫ Positionality of the researcher
▫ Reflexivity of the process
• An STS approach:
▫ society / “the human” technology
▫ (De Wolf, Vanderhoven, Berendt, Pierson, & Schellens, 2016)

Your task
• Read the following two texts and comment.

Additional text (p. 1)
“I have heard the Court’s severe verdict of guilty. I see myself disappointed in my hopes for justice. I cannot recognize the verdict of guilty. I understand the demand for atonement for the crimes which were perpetrated against […]. […] It was not my wish to slay people. The guilt for the mass murder is solely that of the political leaders. I did try to leave my position, to leave for the front, for honest battle. But I was held fast in those dark duties. Once again I would stress that I am guilty of having been obedient, having subordinated myself to my official duties and the obligations of war service and my oath of allegiance and my oath of office, and in addition, once the war started, there was also martial law. This obedience was not easy. And again, anyone who has to give orders and has to obey orders knows what one can demand of people. I did not persecute […] with avidity and passion. That is what the government did. Nor could the persecution be carried out other than by a government. But I never… I accuse the leaders of abusing my obedience. At that time obedience was demanded, just as in the future it will also be demanded of the subordinate. Obedience is commended as a virtue. May I therefore ask that consideration be given to the fact that I obeyed, and not whom I obeyed. I have already said that the top echelons, to which I did not belong, gave the orders, and they rightly, in my opinion, deserved punishment for the atrocities which were perpetrated on the victims on their orders. But the subordinates are now also victims. I am one of such victims. This cannot be ignored. It is said that I could and should have refused to be obedient. That is a consideration with hindsight. Under the circumstances then prevailing such an attitude was not possible. Nor did anyone behave in this fashion. From my experience I know that the possibility, which was alleged only after the War, of opposing orders is a self-protective fairy tale. An individual could secretly slip away. But I was not one of those who thought that permissible. […] My life’s principle, which I was taught very early on, was to desire and to strive to achieve ethical values. From a particular moment on, however, I was prevented by the State from living according to this principle. I had to switch from the unity of ethics to one of multiple morals. I had to yield to the inversion of values which was prescribed by the State. I had to engage in introspective examination in areas which concern my inner self alone. […]”
From the legend of the organizational chart shown on the slide (the author was head of the division on the lowest level in the figure, 8th from the right):
“[…] 5) This depiction also shows that I was a head of division among other heads of division, who were not able to decide anything alone.”

Additional text (p. 2)
“Already, simply the absence of knowledge about which data is in fact collected or what it can be used for puts the “data generator” (e.g. online consumers, cellphone owning people, etc.) at an ethical disadvantage qua knowledge and free will.”
“Big Data might induce certain changes to traditional assumptions of ethics regarding individuality, free will, and power.”
“Big Data does have strong effects on assumptions about individual responsibility and power distributions.”
“[…] Big Data stakeholders, this could mean that we find these new stakeholders wielding a lot of power:
1. Big Data collectors determine which data is collected, which is stored and for how long. They govern the collection, and implicitly the utility, of Big Data.
2. Big Data utilizers: They are on the utility production side. While (a) might collect data with or without a certain purpose, (b) (re-)defines the purpose for which data is used, for example regarding:
▫ Determining behavior by imposing new rules on audiences or manipulating social processes;
▫ Creating innovation and knowledge through bringing together new datasets, thereby achieving a competitive advantage.
3. Big Data generators:
▫ Natural actors that by input or any recording voluntarily, involuntarily, knowingly, or unknowingly generate massive amounts of data.
▫ Artificial actors that create data as a direct or indirect result of their task or functioning.
▫ Physical phenomena, which generate massive amounts of data by their nature or which are measured in such detail that it amounts to massive data flows.
The interaction between these three stakeholders illustrates power relationships and gives us already an entirely different view on individual agency, namely an agency that is, for its capability of morally relevant action, entirely dependent on other actors. One could call this agency ‘dependent agency’, for its capability to act is depending on other actors.
[…] The network nature of society, however, means that this dependent agency is always a factor when judging the moral responsibility of the agent. In contrast to traditional ethics, where knock-on effects (that is, effects on third mostly unrelated parties, as for example in collateral damage scenarios) in a social or cause–effect network do play a minor role, Big Data-induced hypernetworked ethics exacerbate the effect of network knock-on effects. In other words, the nature of hyper-networked societies exacerbates the collateral damage caused by actions within this network. This changes foundational assumptions about ethical responsibility by changing what power is and the extent we can talk of free will by reducing knowable outcomes of actions, while increasing unintended consequences.”

Homework ;-)
Read “ethics guidelines” of your discipline (e.g. media, journalism, marketing) and think about
• Which stakeholders have been thought of?
• Which ones haven’t?
• Which ethical stance(s) does the document reflect?
• Can you agree?
• Does the document say anything about special challenges of “Big Data”?

Thank you! I’ll be more than happy to hear your ?s

References
• Anderson, C. (2008). The end of theory: The data deluge makes the scientific method obsolete. Wired 16.07. Available at http://edge.org/3rd_culture/anderson08_index.html
• Arendt, H. (1963). Eichmann in Jerusalem: A Report on the Banality of Evil. Viking Press.
• Berendt, B. (2015). Big Capta, Bad Science? On two recent books on “Big Data” and its revolutionary potential. http://people.cs.kuleuven.be/~bettina.berendt/Reviews/BigData.pdf
• Berendt, B., Büchler, M., & Rockwell, G. (2015). Is it research or is it spying? Thinking-through ethics in Big Data AI and other knowledge sciences. Künstliche Intelligenz, 29(2), 223-232.
• boyd, d. & Crawford, K. (2012). Critical questions for Big Data. Information, Communication & Society, 15(5), 662-679. DOI: 10.1080/1369118X.2012.678878.
• De Wolf, R., Vanderhoven, E., Berendt, B., Pierson, J., & Schellens, T. (2016). Self-reflection in privacy research on social network sites. Behaviour & Information Technology. DOI: 10.1080/0144929X.2016.1242653. http://www.tandfonline.com/eprint/UEWXwbFZxB2AW6NpStMe/full
• Gonzalez Fuster, G. & Gutwirth, S. (2014). Ethics, Law and Privacy: Disentangling Law from Ethics in Privacy Discourse. In Proceedings of the 2014 IEEE International Symposium on Ethics in Science, Technology and Engineering. IEEE, pp. 50-55.
• Kitchin, R. (2014a). The Data Revolution. Big Data, Open Data, Data Infrastructures & Their Consequences. London: Sage.
• Kitchin, R. (2014b). Big Data, new epistemologies and paradigm shifts. Big Data & Society, April-June 2014, 1-12.
• Kramer, A., Guillory, J., & Hancock, J. (2014). Experimental evidence of massive-scale emotional contagion through social networks. Proceedings of the National Academy of Sciences 111, 8788-8790. http://www.pnas.org/content/111/24/8788.full.pdf+html
• Moretti, F. (2005). Graphs, Maps, Trees. Abstract Models for Literary History. London: Verso (cited from the paperback published in 2007, p. 30).
• Pauen, M. & Welzer, H. (2015). Autonomie: Eine Verteidigung [Autonomy: A Defence]. Frankfurt am Main: S. Fischer Verlag.
• Rockwell, G. & Berendt, B. (2016). Information wants to be free: Thinking-through Respect by Design. Göttingen Dialog for Digital Humanities. University of Göttingen. 9 May 2016. http://people.cs.kuleuven.be/~bettina.berendt/Talks/rockwell_berendt_2016_05_09.pdf (in progress: a text version)
• Tufekci, Z. (2014). What Happens to #Ferguson Affects Ferguson: Net Neutrality, Algorithmic Filtering and Ferguson. https://medium.com/message/ferguson-is-also-a-net-neutrality-issue-6d2f3db51eb0
• Vedder, A. (2015). BDA and Ethics. Presentation in the KU Leuven course “Privacy and Big Data”, Dec. 2015.
• Additional text (p. 1): Eichmann’s final plea. Cited from http://remember.org/eichmann/ownwords
• Additional text (p. 2): Zwitter, A. (2014). Big Data ethics. Big Data & Society, 1(2). DOI: 10.1177/2053951714559253. http://bds.sagepub.com/content/1/2/2053951714559253

More sources
• Please find the URLs of pictures and screenshots in the PowerPoint “comment” box
• Thanks to the Internet for them!
