
ADB 2011 – Text Mining

Bettina Berendt, K. U. Leuven

Agenda (slide 2)
- A basic concept: Texts as feature vectors (so we can apply the algorithms we know)
- Some notes about text preprocessing
- Text classification
- Other approaches to opinion mining
- Further examples from mining news, blogs and other social media


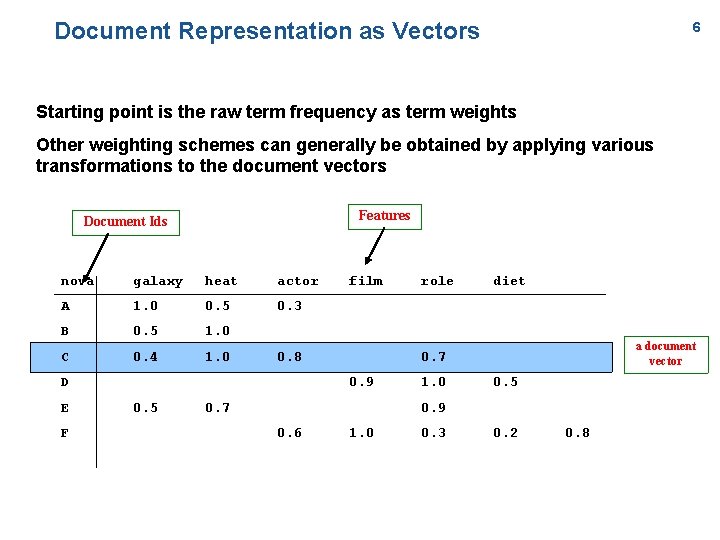

The goal: text representation in the usual “feature” model (slide 4)
- Basic idea:
  - Keywords are extracted from texts.
  - These keywords describe the (usually) topical content of Web pages and other text contributions.
- Based on the vector space model of document collections:
  - Each unique word in a corpus of Web pages = one dimension
  - Each page(view) is a vector with non-zero weight for each word in that page(view), zero weight for other words
- Words become “features” (in a data-mining sense)

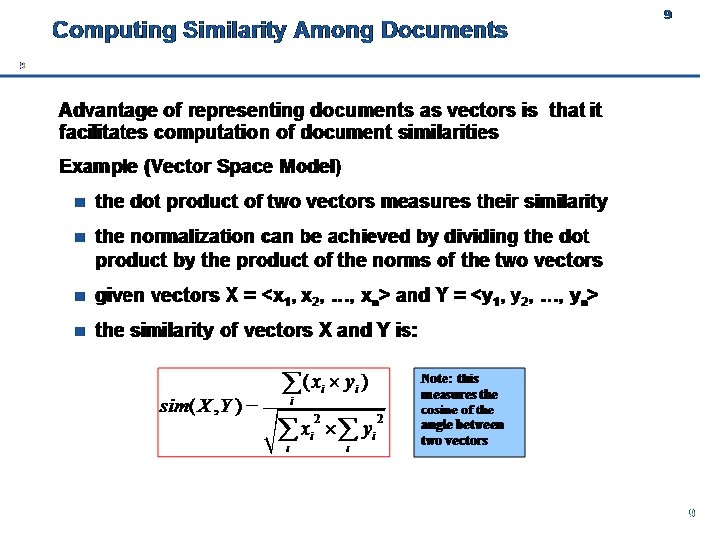

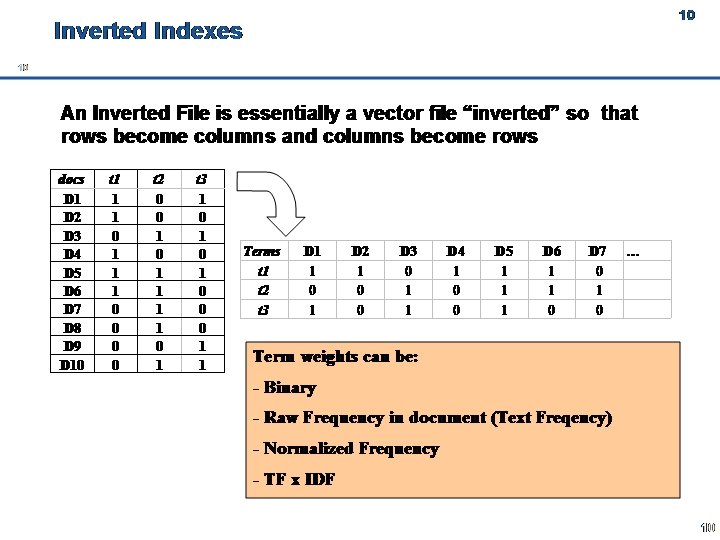

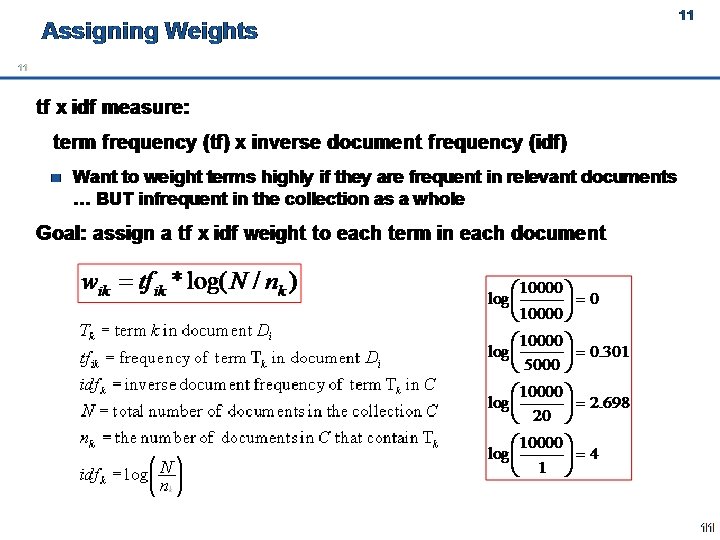

How to get there: feature representation for texts (slide 5)
- Each text p is represented as a k-dimensional feature vector, where k is the total number of features extracted from the site into a global dictionary.
- The feature vectors obtained are organized into an inverted file structure containing a dictionary of all extracted features and posting files for pageviews.

Conceptually, the inverted file structure represents a document-feature matrix, where each row is the feature vector for a page and each column is a feature.
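The relationship between the global dictionary, the posting files and the document-feature matrix can be sketched as follows. This is a minimal illustration only: the three toy pages and the names `pages`, `postings` and `feature_vector` are assumptions made for this example, and raw term frequency is used as the weight.

```python
from collections import defaultdict

# Hypothetical toy corpus (page id -> text); not taken from the slides.
pages = {
    "p1": "nova galaxy heat nova",
    "p2": "film actor role film",
    "p3": "galaxy film diet",
}

# Global dictionary: every unique word in the corpus is one dimension.
dictionary = sorted({w for text in pages.values() for w in text.split()})

# Inverted file: feature -> posting list of (page id, raw term frequency).
postings = defaultdict(list)
for page_id, text in pages.items():
    words = text.split()
    for word in sorted(set(words)):
        postings[word].append((page_id, words.count(word)))


def feature_vector(page_id):
    """Rebuild the k-dimensional vector of one page from the postings."""
    weights = dict.fromkeys(dictionary, 0)
    for word, posting_list in postings.items():
        for pid, tf in posting_list:
            if pid == page_id:
                weights[word] = tf
    return [weights[w] for w in dictionary]


# Document-feature matrix: one row per page, one column per feature.
matrix = {page_id: feature_vector(page_id) for page_id in pages}
```

Storing postings rather than full rows avoids materialising the many zero weights; the matrix view is only reconstructed when an algorithm needs it.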

Document Representation as Vectors (slide 6)
- Starting point is the raw term frequency as term weights.
- Other weighting schemes can generally be obtained by applying various transformations to the document vectors.

Table: rows are document IDs (A, B, C, D, E, F), columns are features (nova, galaxy, heat, actor, film, role, diet); each row is a document vector. Weights in extraction order (cell positions are not recoverable from the transcript): A 1.0 0.5 0.3; B 0.5 1.0; C 0.4 1.0 0.8; D, E, F; 0.7 0.9 0.5; 0.7 1.0 0.5 0.9 0.6 1.0 0.3 0.2 0.8
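Two common transformations of raw term-frequency vectors are sketched below: scaling by the largest count in the document, and TF-IDF. The slide does not say which scheme produced its weights, so both choices, the toy counts and the function names are assumptions for illustration.

```python
import math

# Hypothetical raw term-frequency rows (documents x features).
features = ["nova", "galaxy", "film"]
raw_tf = {
    "A": [2, 1, 0],
    "B": [1, 2, 0],
    "C": [0, 0, 3],
}


def max_normalise(vec):
    """Scale a vector so that its largest weight becomes 1.0."""
    largest = max(vec)
    return [x / largest if largest else 0.0 for x in vec]


def tf_idf(tf_rows):
    """Multiply each term frequency by log(N / document frequency)."""
    n_docs = len(tf_rows)
    n_features = len(next(iter(tf_rows.values())))
    df = [sum(1 for row in tf_rows.values() if row[j] > 0)
          for j in range(n_features)]
    idf = [math.log(n_docs / d) if d else 0.0 for d in df]
    return {doc: [tf * w for tf, w in zip(row, idf)]
            for doc, row in tf_rows.items()}


normalised = {doc: max_normalise(row) for doc, row in raw_tf.items()}
weighted = tf_idf(raw_tf)
```

Both functions take the same input rows, which reflects the point of the slide: the weighting scheme is a transformation applied on top of the raw counts.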



The idea of text mining ... (slide 11)
- ... is to go beyond frequency-counting
- ... is to go beyond the search-for-documents framework
- ... is to find patterns (of meaning) within and across documents

(Yes, there is text mining behind some of the things the above tools do!)

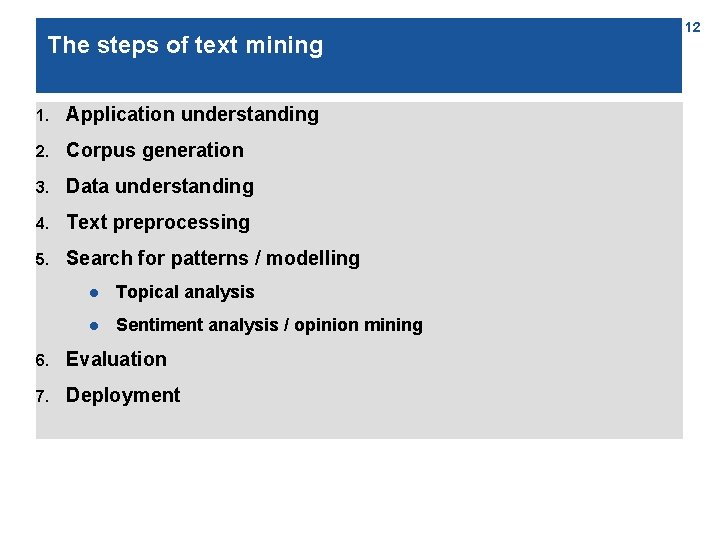

The steps of text mining (slide 12)
1. Application understanding
2. Corpus generation
3. Data understanding
4. Text preprocessing
5. Search for patterns / modelling
   - Topical analysis
   - Sentiment analysis / opinion mining
6. Evaluation
7. Deployment

Application understanding; Corpus generation (slide 13)
- What is the question?
- What is the context?
- What could be interesting sources, and where can they be found?
- Crawl
- Use a search engine and/or archive
  - Google blogs search
  - Technorati
  - Blogdigger
  - ...

Preprocessing (1): Data cleaning (slide 14)
- Goal: get clean ASCII text
- Remove HTML markup (*), pictures, advertisements, ...
- Automate this: wrapper induction

(*) Note: HTML markup may carry information too (e.g., `<b>` or `<h1>` marks something important), which can be extracted! (Depends on the application)

Preprocessing (2): Further text preprocessing (slide 15)
- Goal: get processable lexical / syntactical units
- Tokenize (find word boundaries)
- Lemmatize / stem
  - e.g., buyers, buyer → buyer / buyer, buying, ... → buy
- Remove stopwords
- Find named entities (people, places, companies, ...); filtering
- Resolve polysemy and homonymy: word sense disambiguation; “synonym unification”
- Part-of-speech tagging; filtering of nouns, verbs, adjectives, ...
- Most steps are optional and application-dependent!
- Many steps are language-dependent; coverage of non-English varies
- Free and/or open-source tools or Web APIs exist for most steps
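A minimal sketch of three of these steps (tokenizing, stopword removal, stemming) is given below. The stopword list and the suffix-stripping rule are toy assumptions made for this example; a real application would use an established stemmer or lemmatizer for the language at hand.

```python
import re

# Toy resources: illustrative assumptions, far smaller than real ones.
STOPWORDS = {"the", "a", "an", "and", "of", "to", "is", "are", "in"}
SUFFIXES = ("ing", "ers", "er", "s")  # checked in this order


def tokenize(text):
    """Find word boundaries: lower-case runs of letters."""
    return re.findall(r"[a-z]+", text.lower())


def stem(token):
    """Strip the first matching suffix, keeping a stem of at least 3 letters."""
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(text):
    """Tokenize, drop stopwords, then stem what is left."""
    return [stem(t) for t in tokenize(text) if t not in STOPWORDS]


tokens = preprocess("The buyers are buying a camera")
```

With these toy rules, "buyers" and "buying" are both mapped to "buy", which is the conflation effect the slide's example describes.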

Preprocessing (3): Creation of text representation (slide 16)
- Goal: a representation that the modelling algorithm can work on
- Most common forms: a text as
  - a set or (more usually) bag of words / vector-space representation: term-document matrix with weights reflecting occurrence, importance, ...
  - a sequence of words
  - a tree (parse trees)
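The difference between the set, bag and sequence forms can be seen on a single short text. The token list below is a hypothetical example; parse trees are left out because they need a parser.

```python
from collections import Counter

# Hypothetical preprocessed text.
tokens = ["good", "camera", "good", "picture"]

as_sequence = list(tokens)   # order and repetition are kept
as_bag = Counter(tokens)     # repetition is kept, order is dropped
as_set = set(tokens)         # only presence or absence is kept

# One row of a term-document matrix, using raw counts as weights.
vocabulary = sorted(as_set)
vector = [as_bag[word] for word in vocabulary]
```

Which form is appropriate depends on the modelling algorithm: most classifiers work on the vector, while sequence models need the word order.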

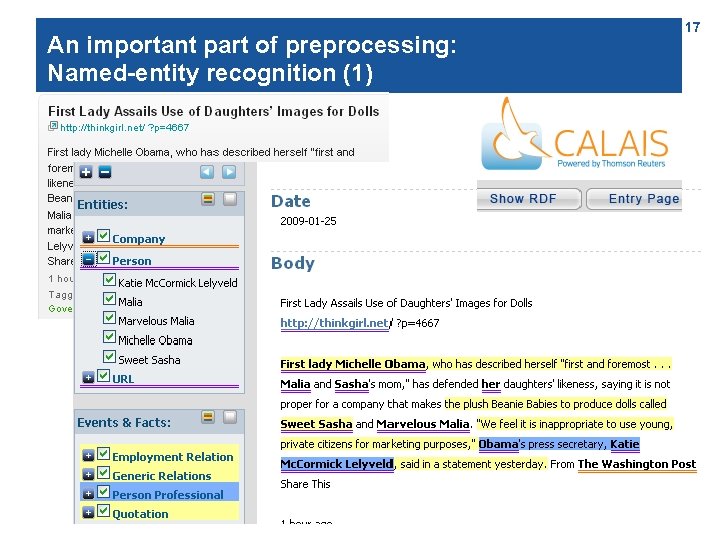

An important part of preprocessing: Named-entity recognition (1) 17

An important part of preprocessing: Named-entity recognition (2) (slide 18)
- Technique: lexica, heuristic rules, syntax parsing
- Re-use lexica and/or develop your own
  - configurable tools such as GATE
- A challenge: multi-document named-entity recognition
  - See proposal in Subašić & Berendt (Proc. ICDM 2008)
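The combination of a lexicon with a heuristic rule can be sketched in a few lines. The lexicon entries, the labels and the title-based rule are assumptions chosen for this example; this is not how GATE or any other specific tool works internally.

```python
import re

# Hypothetical lexicon: surface form -> entity type.
LEXICON = {
    "Michelle Obama": "PERSON",
    "Leuven": "PLACE",
    "Nikon": "COMPANY",
}

# Heuristic rule (an assumption): a title followed by a capitalised word
# names a person.
TITLE_RULE = re.compile(r"\b(?:Mr|Mrs|Ms|Dr)\. ([A-Z][a-z]+)")


def find_entities(text):
    """Return (name, type) pairs in order of appearance."""
    found = []
    for name, label in LEXICON.items():
        for match in re.finditer(r"\b" + re.escape(name) + r"\b", text):
            found.append((match.start(), name, label))
    for match in TITLE_RULE.finditer(text):
        found.append((match.start(1), match.group(1), "PERSON"))
    return [(name, label) for _, name, label in sorted(found)]


entities = find_entities("Dr. Smith met Michelle Obama in Leuven.")
```

The lexicon gives precision on known names, while the rule catches names that are not listed; syntax parsing, the third technique on the slide, is not covered here.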

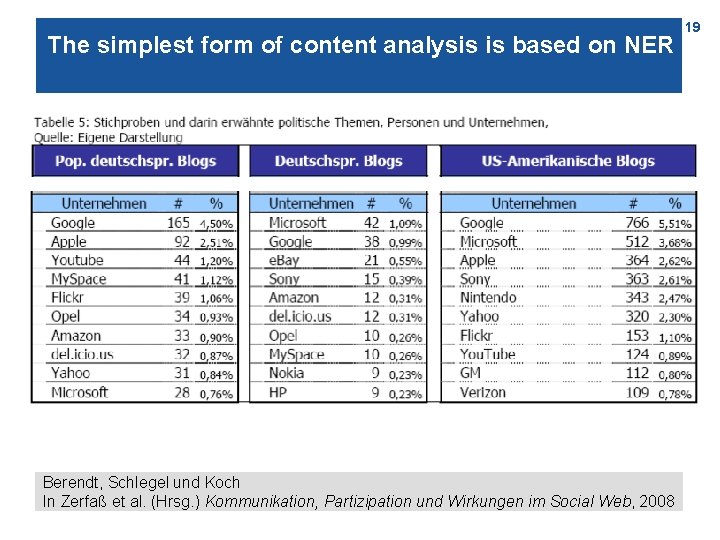

The simplest form of content analysis is based on NER. Berendt, Schlegel and Koch. In Zerfaß et al. (Eds.), Kommunikation, Partizipation und Wirkungen im Social Web, 2008. 19


Note: Text classification was first done by topic (you'll do this in the exercise session), but the class could be anything. In the following example, we'll use a sentiment class and thereby enter the area of sentiment/opinion mining (at the document level). 21

What makes people happy? 22

Happiness in blogosphere 23

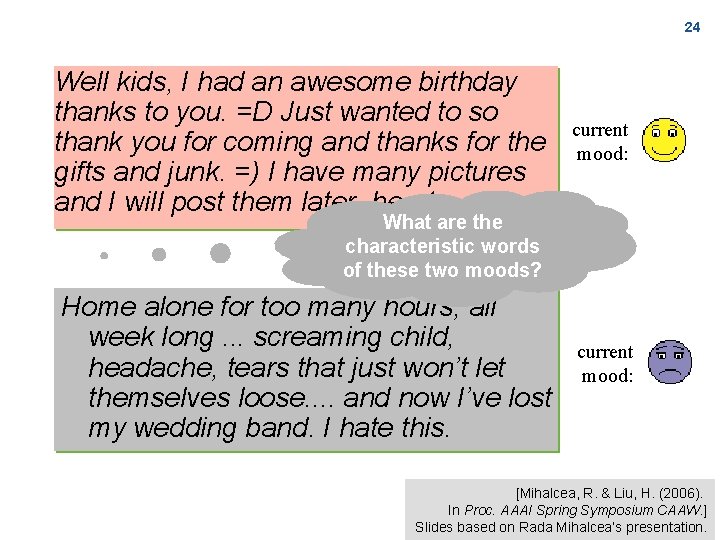

What are the characteristic words of these two moods? (slide 24)

“Well kids, I had an awesome birthday thanks to you. =D Just wanted to so thank you for coming and thanks for the gifts and junk. =) I have many pictures and I will post them later. hearts” current mood:

“Home alone for too many hours, all week long ... screaming child, headache, tears that just won’t let themselves loose.. and now I’ve lost my wedding band. I hate this.” current mood:

[Mihalcea, R. & Liu, H. (2006). In Proc. AAAI Spring Symposium CAAW.] Slides based on Rada Mihalcea's presentation.

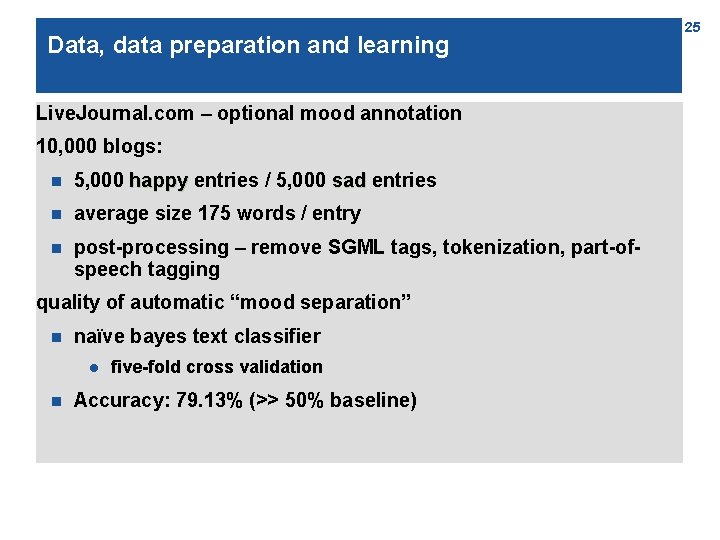

Data, data preparation and learning (slide 25)
- LiveJournal.com – optional mood annotation
- 10,000 blogs:
  - 5,000 happy entries / 5,000 sad entries
  - average size 175 words / entry
  - post-processing – remove SGML tags, tokenization, part-of-speech tagging
- quality of automatic “mood separation”
  - naïve Bayes text classifier
    - five-fold cross validation
  - Accuracy: 79.13% (>> 50% baseline)
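The learning setup named on this slide, a naïve Bayes text classifier evaluated by five-fold cross validation, can be sketched with the standard library alone. This is a generic multinomial naïve Bayes with add-one smoothing; the smoothing, the fold assignment and the ten toy entries are assumptions for illustration and do not reproduce the original experiment or its accuracy.

```python
import math
from collections import Counter


def train(docs):
    """docs: list of (tokens, label). Returns class and word counts."""
    labels = Counter(label for _, label in docs)
    counts = {label: Counter() for label in labels}
    for tokens, label in docs:
        counts[label].update(tokens)
    vocab = {w for c in counts.values() for w in c}
    return labels, counts, vocab


def predict(model, tokens):
    """Pick the label with the highest log posterior (add-one smoothing)."""
    labels, counts, vocab = model
    total_docs = sum(labels.values())
    best_label, best_score = None, -math.inf
    for label in sorted(labels):
        total_words = sum(counts[label].values())
        score = math.log(labels[label] / total_docs)
        for w in tokens:
            if w in vocab:  # words never seen in training are ignored
                score += math.log((counts[label][w] + 1)
                                  / (total_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label


def cross_validate(docs, k=5):
    """k-fold cross validation; fold i holds out documents i, i+k, ..."""
    correct = 0
    for fold in range(k):
        held_out = docs[fold::k]
        training = [d for i, d in enumerate(docs) if i % k != fold]
        model = train(training)
        correct += sum(predict(model, t) == label for t, label in held_out)
    return correct / len(docs)


# Hypothetical toy entries, interleaved so every fold sees both classes.
docs = [
    (["awesome", "birthday"], "happy"), (["tears", "lonely"], "sad"),
    (["yay", "shopping"], "happy"), (["cried", "hurt"], "sad"),
    (["lovely", "concert"], "happy"), (["sad", "upset"], "sad"),
    (["cool", "birthday"], "happy"), (["lonely", "cry"], "sad"),
    (["awesome", "lunch"], "happy"), (["hurt", "tears"], "sad"),
]
model = train(docs)
accuracy = cross_validate(docs, k=5)
```

With a balanced two-class corpus the majority baseline is 50%, which is why that figure is the reference point for the reported accuracy.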

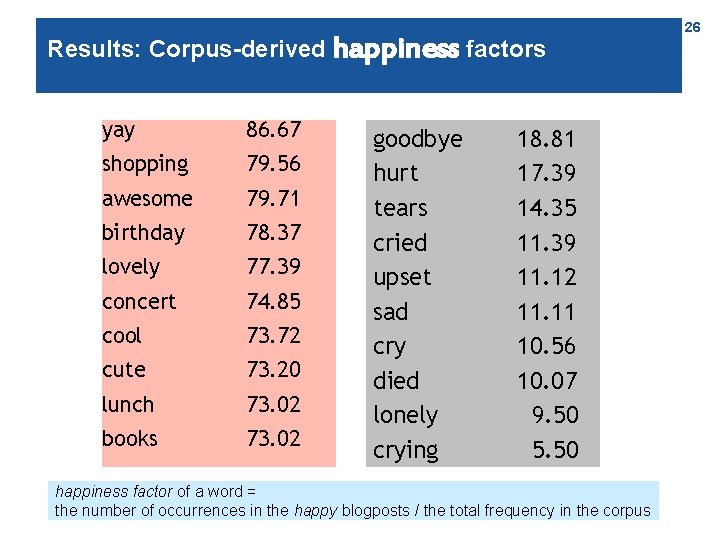

Results: Corpus-derived happiness factors (slide 26)

| Word | Happiness factor | Word | Happiness factor |
|---|---|---|---|
| yay | 86.67 | goodbye | 18.81 |
| shopping | 79.56 | hurt | 17.39 |
| awesome | 79.71 | tears | 14.35 |
| birthday | 78.37 | cried | 11.39 |
| lovely | 77.39 | upset | 11.12 |
| concert | 74.85 | sad | 11.11 |
| cool | 73.72 | cry | 10.56 |
| cute | 73.20 | died | 10.07 |
| lunch | 73.02 | lonely | 9.50 |
| books | 73.02 | crying | 5.50 |

happiness factor of a word = the number of occurrences in the happy blogposts / the total frequency in the corpus
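The happiness factor defined above is a simple ratio of counts and can be computed as follows. The factor of 100 is an assumption, made because the listed values read as percentages; the function name, the `min_count` parameter and the toy posts are likewise illustrative.

```python
from collections import Counter


def happiness_factors(happy_posts, sad_posts, min_count=1):
    """Occurrences in happy posts / total occurrences, as a percentage.

    Each post is a list of tokens. Scaling by 100 is an assumption.
    """
    happy = Counter(w for post in happy_posts for w in post)
    total = happy + Counter(w for post in sad_posts for w in post)
    return {w: 100.0 * happy[w] / n
            for w, n in total.items() if n >= min_count}


# Hypothetical toy corpus.
happy_posts = [["yay", "birthday"], ["birthday", "tears"]]
sad_posts = [["tears", "tears", "lonely"]]
factors = happiness_factors(happy_posts, sad_posts)
```

A minimum frequency threshold such as `min_count` keeps very rare words, whose ratios are unreliable, out of the ranking.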


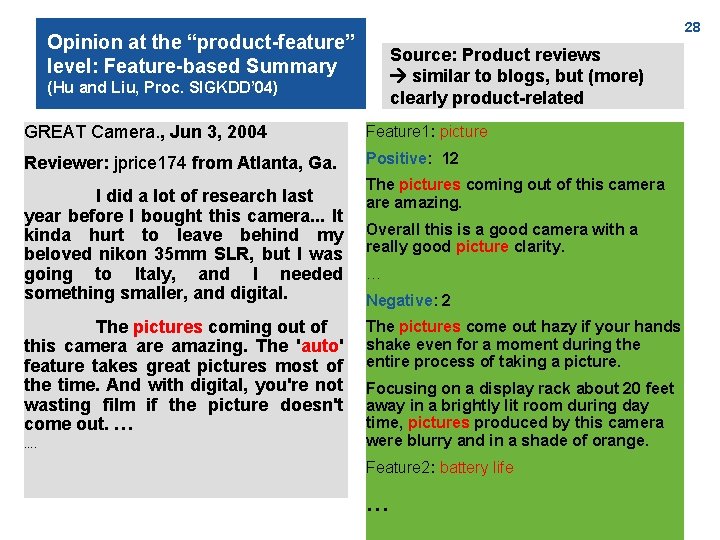

Opinion at the “product-feature” level: Feature-based Summary (slide 28)

Source: product reviews, similar to blogs but (more) clearly product-related (Hu and Liu, Proc. SIGKDD'04)

Review: GREAT Camera., Jun 3, 2004. Reviewer: jprice174 from Atlanta, Ga.

I did a lot of research last year before I bought this camera ... It kinda hurt to leave behind my beloved nikon 35 mm SLR, but I was going to Italy, and I needed something smaller, and digital. The pictures coming out of this camera are amazing. The 'auto' feature takes great pictures most of the time. And with digital, you're not wasting film if the picture doesn't come out. … ….

Feature-based summary:
- Feature 1: picture
  - Positive: 12
    - The pictures coming out of this camera are amazing.
    - Overall this is a good camera with a really good picture clarity.
    - …
  - Negative: 2
    - The pictures come out hazy if your hands shake even for a moment during the entire process of taking a picture.
    - Focusing on a display rack about 20 feet away in a brightly lit room during day time, pictures produced by this camera were blurry and in a shade of orange.
- Feature 2: battery life
  - …
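The shape of such a feature-based summary can be illustrated with a very small sketch that groups review sentences by feature and polarity. It is not the method of Hu and Liu: the opinion lexicon, the plural matching and the given feature list are simplifying assumptions for this example.

```python
import re

# Toy opinion lexicon: an assumption for illustration only.
POSITIVE = {"amazing", "great", "good"}
NEGATIVE = {"hazy", "blurry"}


def summarise(sentences, features):
    """Group sentences by product feature and by polarity."""
    summary = {f: {"positive": [], "negative": []} for f in features}
    for sentence in sentences:
        words = re.findall(r"[a-z]+", sentence.lower())
        polarity = (sum(w in POSITIVE for w in words)
                    - sum(w in NEGATIVE for w in words))
        if polarity == 0:
            continue  # no opinion word, or mixed evidence
        for feature in features:
            # Crude match: the feature word or its plural occurs.
            if feature in words or feature + "s" in words:
                key = "positive" if polarity > 0 else "negative"
                summary[feature][key].append(sentence)
    return summary


reviews = [
    "The pictures coming out of this camera are amazing.",
    "The pictures come out hazy if your hands shake even for a moment.",
    "I was going to Italy.",
]
summary = summarise(reviews, ["picture", "battery"])
counts = {f: (len(s["positive"]), len(s["negative"]))
          for f, s in summary.items()}
```

The `counts` dictionary corresponds to the "Positive: 12 / Negative: 2" figures in the summary, and the stored sentences correspond to the quoted evidence.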

SentiStrength http://gplsi.dlsi.ua.es/congresos/wassa2010/authors/6%20Emotion%20detection.ppt 29


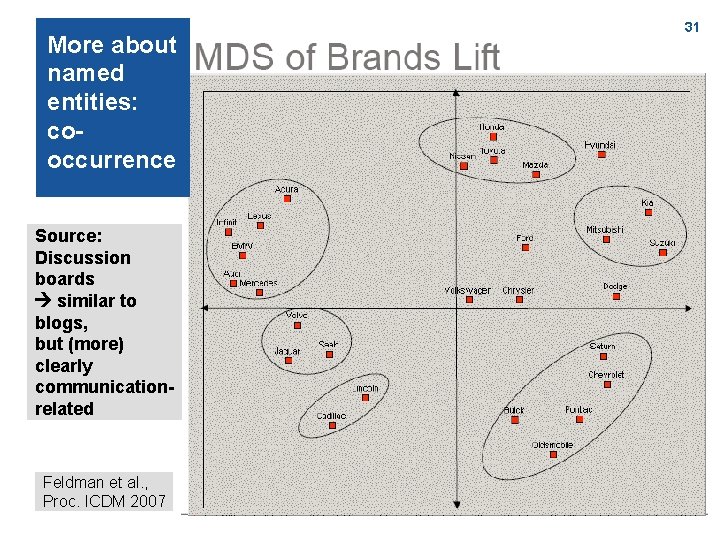

More about named entities: cooccurrence. Source: discussion boards, similar to blogs but (more) clearly communication-related. Feldman et al., Proc. ICDM 2007. 31

32 Cooccurrence of brands and attributes. Feldman et al., Proc. ICDM 2007
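Counting how often a brand and an attribute are mentioned in the same message is the basic operation behind such a cooccurrence analysis. The sketch below uses made-up brand names ("alpha", "beta"), attributes and messages, and treats a whole message as the cooccurrence window; all of these are assumptions.

```python
import re
from collections import Counter
from itertools import product


def cooccurrence(messages, brands, attributes):
    """Count messages in which a brand and an attribute both occur."""
    counts = Counter()
    for message in messages:
        words = set(re.findall(r"[a-z]+", message.lower()))
        for brand, attribute in product(brands, attributes):
            if brand in words and attribute in words:
                counts[(brand, attribute)] += 1
    return counts


# Hypothetical discussion-board messages.
messages = [
    "The alpha is really reliable.",
    "I find the alpha reliable but the beta is sporty.",
    "Beta again: sporty and cheap.",
]
table = cooccurrence(messages, ["alpha", "beta"],
                     ["reliable", "sporty", "cheap"])
```

The second message shows the limitation of a message-level window: it also links "alpha" with "sporty", although the sentence attributes "sporty" to the other brand.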

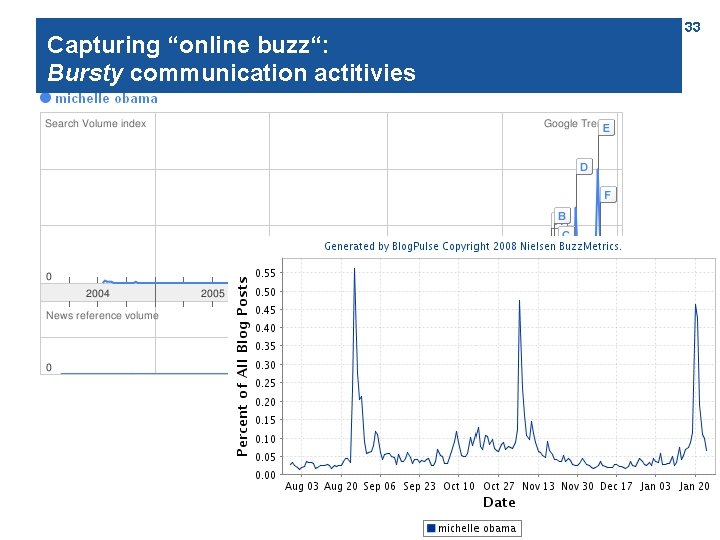

Capturing “online buzz”: Bursty communication activities 33

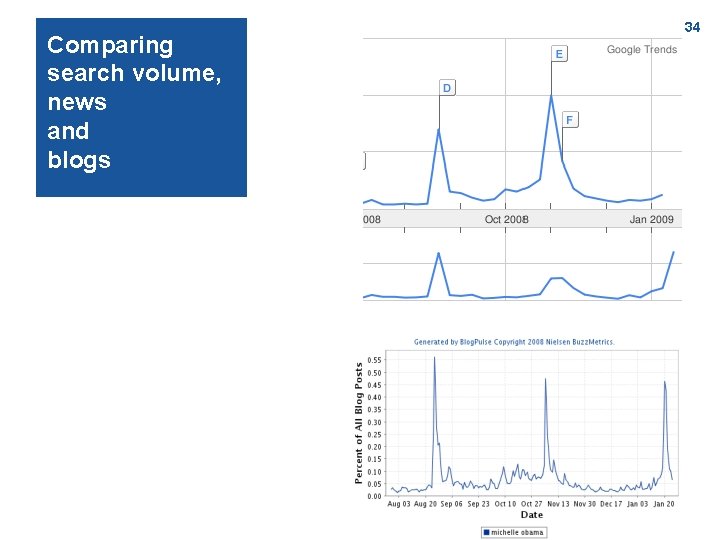

Comparing search volume, news and blogs 34

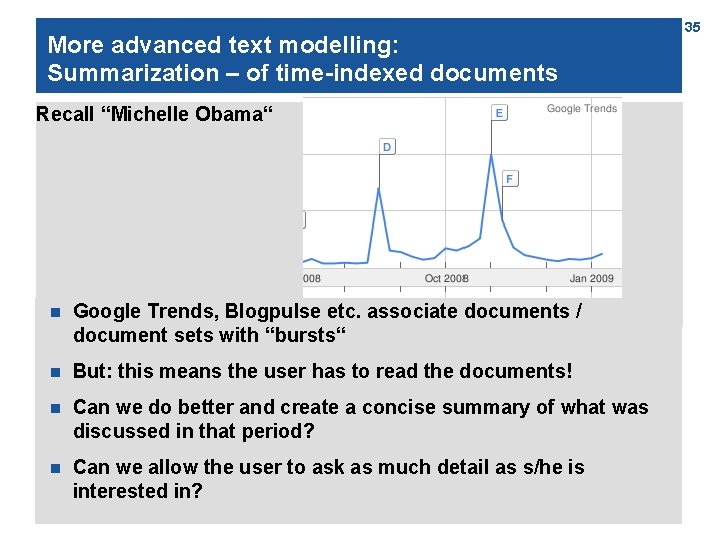

More advanced text modelling: Summarization of time-indexed documents (slide 35)
- Recall “Michelle Obama”
- Google Trends, Blogpulse etc. associate documents / document sets with “bursts”
- But: this means the user has to read the documents!
- Can we do better and create a concise summary of what was discussed in that period?
- Can we allow the user to ask for as much detail as s/he is interested in?

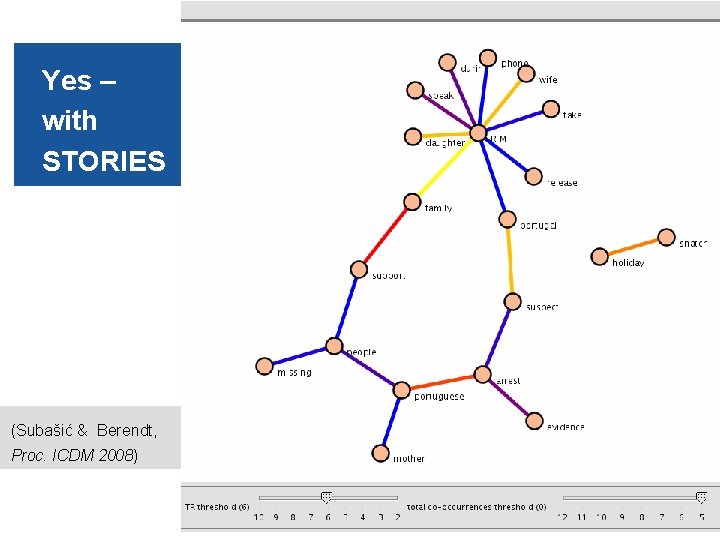

36 Yes – with STORIES (Subašić & Berendt, Proc. ICDM 2008)

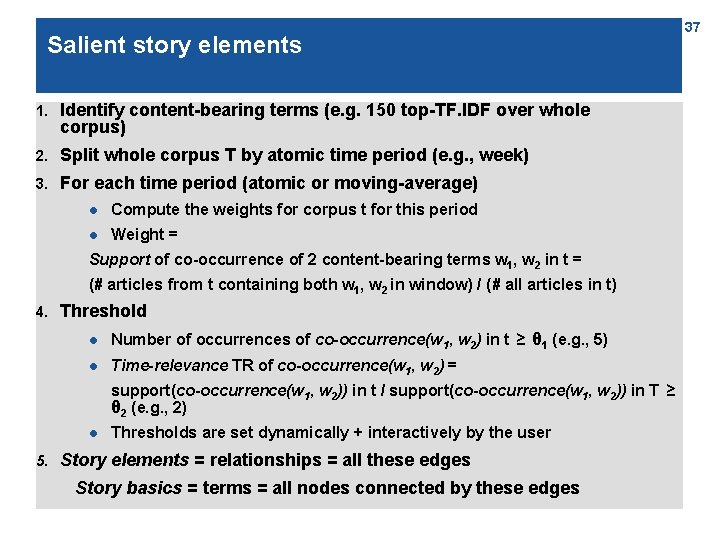

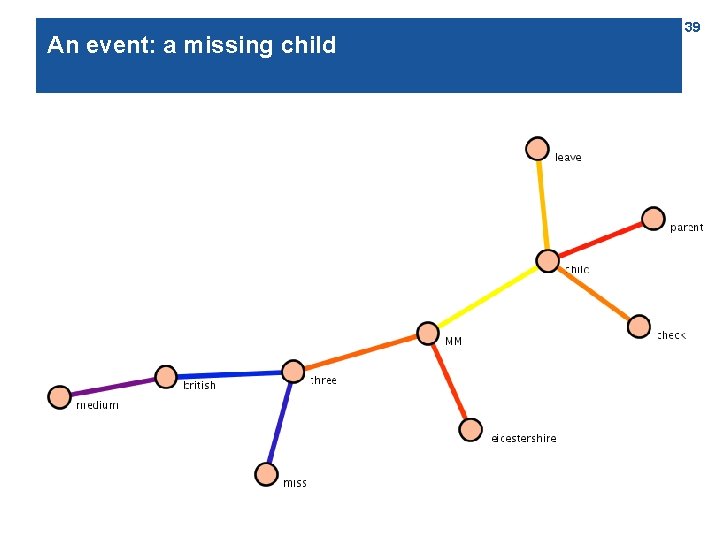

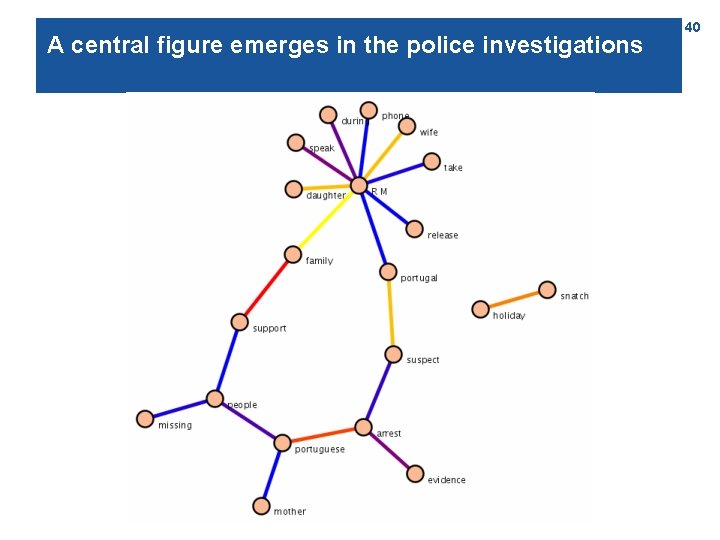

Salient story elements (slide 37)

1. Identify content-bearing terms (e.g., 150 top-TF.IDF over whole corpus)
2. Split whole corpus T by atomic time period (e.g., week)
3. For each time period (atomic or moving-average)
   - Compute the weights for corpus t for this period
   - Weight = support of co-occurrence of 2 content-bearing terms w1, w2 in t = (# articles from t containing both w1, w2 in window) / (# all articles in t)
4. Threshold
   - Number of occurrences of co-occurrence(w1, w2) in t ≥ θ1 (e.g., 5)
   - Time-relevance TR of co-occurrence(w1, w2) = support(co-occurrence(w1, w2)) in t / support(co-occurrence(w1, w2)) in T ≥ θ2 (e.g., 2)
   - Thresholds are set dynamically + interactively by the user
5. Story elements = relationships = all these edges; story basics = terms = all nodes connected by these edges
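The support and time-relevance computations in steps 3 and 4 can be sketched as follows. Two simplifications are assumptions of this example: the whole article is used as the co-occurrence window, and the period corpus t is taken to be a subset of the whole corpus T (so the support in T is never zero for a pair seen in t). Function names and toy articles are illustrative.

```python
from collections import Counter
from itertools import combinations


def pair_counts(articles, terms):
    """Count articles containing both terms of each pair.

    articles: list of token lists; terms: set of content-bearing terms.
    """
    counts = Counter()
    for words in articles:
        present = sorted(terms & set(words))
        counts.update(combinations(present, 2))
    return counts


def story_elements(period_articles, all_articles, terms,
                   theta1=5, theta2=2.0):
    """Return {(w1, w2): support in t} for pairs passing both thresholds."""
    in_t = pair_counts(period_articles, terms)
    in_T = pair_counts(all_articles, terms)
    edges = {}
    for pair, n in in_t.items():
        support_t = n / len(period_articles)
        support_T = in_T[pair] / len(all_articles)
        time_relevance = support_t / support_T
        if n >= theta1 and time_relevance >= theta2:
            edges[pair] = support_t
    return edges


# Hypothetical corpus: 2 articles in period t, 10 in the whole corpus T.
period = [["child", "missing"], ["child", "missing", "police"]]
rest = [["police"]] * 6 + [["child"], ["missing"]]
terms = {"child", "missing", "police"}
edges = story_elements(period, period + rest, terms, theta1=2, theta2=2.0)
basics = {term for pair in edges for term in pair}
```

Here `edges` plays the role of the story elements and `basics` that of the story basics; lowering `theta1` or `theta2` adds detail to the graph, which is how the interactive thresholds let a user control granularity.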

Salient story stages, and story evolution (slide 38)

6. Story stage = the story graph made of basics and elements in t
7. Story evolution = how story stages evolve over the t in T
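One simple way to make story evolution concrete is to compare the edge sets of consecutive story stages. The diff-based view below is an assumption for illustration, not necessarily how the STORIES tool presents evolution, and the stage contents are made up.

```python
def evolution(stages):
    """Compare consecutive story stages.

    stages: list of edge sets, one per time period, in chronological order.
    Returns one dict per transition with added, removed and kept edges.
    """
    changes = []
    for previous, current in zip(stages, stages[1:]):
        changes.append({
            "added": current - previous,
            "removed": previous - current,
            "kept": previous & current,
        })
    return changes


# Hypothetical story stages for three consecutive periods.
stages = [
    {("child", "missing")},
    {("child", "missing"), ("police", "suspect")},
    {("police", "suspect")},
]
changes = evolution(stages)
```

A period in which nothing is added and little is kept would correspond to an eventless time in the story.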

An event: a missing child 39

A central figure emerges in the police investigations 40

Uncovering more details 41

Uncovering more details 42

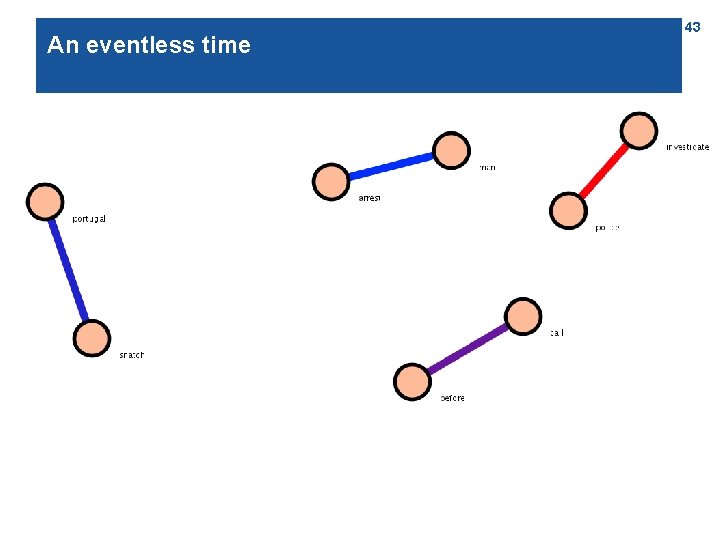

An eventless time 43

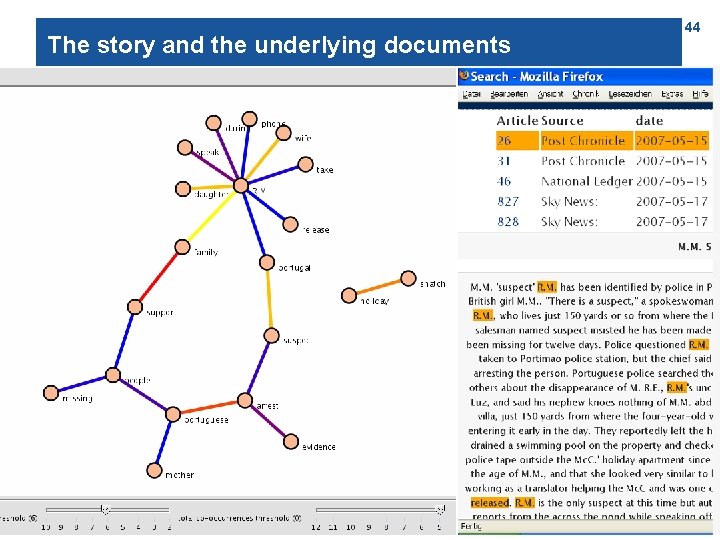

The story and the underlying documents 44

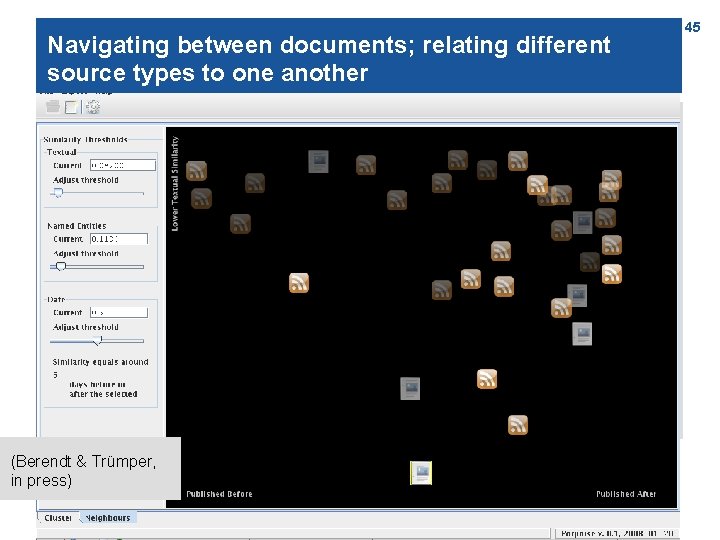

Navigating between documents; relating different source types to one another (Berendt & Trümper, in press) 45

Literature and other sources (slide 46)

A good textbook on Text Mining: Feldman, R. & Sanger, J. (2007). The Text Mining Handbook. Advanced Approaches in Analyzing Unstructured Data. Cambridge University Press.

A good introduction (even if a bit old), including a good overview of preprocessing issues: Baldi, P., Frasconi, P., & Smyth, P. (2003). Modeling the Internet and the Web. Probabilistic Methods and Algorithms. Wiley. Chapter 4: http://media.wiley.com/product_data/excerpt/61/0470849061.pdf

p. 29: Thelwall, M., Buckley, K., Paltoglou, G., Cai, D., & Kappas, A. (2010). Sentiment strength detection in short informal text. Journal of the American Society for Information Science and Technology, 61(12), 2544–2558. http://www.scit.wlv.ac.uk/~cm1993/papers/SentiStrengthPreprint.doc More papers and materials are here: http://sentistrength.wlv.ac.uk/

Individual references:

pp. 36 ff.: Subašić, I. & Berendt, B. (2008). Web mining for understanding stories through graph visualisation. In Proc. of the 2008 Eighth IEEE International Conference on Data Mining (pp. 570–579). Los Alamitos, CA: IEEE Computer Society Press.

p. 19: Berendt, B., Schlegel, M., & Koch, R. (2008). Die deutschsprachige Blogosphäre: Reifegrad, Politisierung, Themen und Bezug zu Nachrichtenmedien. In A. Zerfaß, M. Welker, & J. Schmidt (Eds.), Kommunikation, Partizipation und Wirkungen im Social Web (Band 2: Strategien und Anwendungen: Perspektiven für Wirtschaft, Politik, Publizistik) (pp. 72–96). Köln, Germany: Herbert von Halem Verlag.

pp. 31 f.: Feldman, R., Fresko, M., Goldenberg, J., Netzer, O., & Ungar, L. H. (2007). Extracting product comparisons from discussion boards. In Proc. ICDM 2007 (pp. 469–474). IEEE Computer Society. http://ieeexplore.ieee.org/iel5/4470209/4470210/04470275.pdf?arnumber=4470275

p. 45: Berendt, B. & Trümper, D. (2009). Semantics-based analysis and navigation of heterogeneous text corpora: the porpoise news and blogs engine. In I.-H. Ting & H.-J. Wu (Eds.), Web Mining Applications in E-commerce and E-services (pp. 45–64). Berlin etc.: Springer, Studies in Computational Intelligence, Vol. 172. http://www.cs.kuleuven.be/~berendt/Papers/berendt_truemper_2009.pdf

p. 28: Hu, M. & Liu, B. (2004). Mining and summarizing customer reviews. In Proc. SIGKDD'04 (pp. 168–177). http://portal.acm.org/citation.cfm?doid=1014052.1014073

pp. 22 ff.: Mihalcea, R. & Liu, H. (2006). A corpus-based approach to finding happiness. In Proc. AAAI Spring Symposium on Computational Approaches to Analyzing Weblogs. http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.79.6759

See http://wiki.esi.ac.uk/Current_Approaches_to_Data_Mining_Blogs for more articles on the subject.
