An Introduction to Text Mining
Bettina Berendt
Department of Computer Science, KU Leuven, Belgium
http://people.cs.kuleuven.be/~bettina.berendt/
Vienna Summer School on Digital Humanities, July 7th, 2015, Vienna, Austria

2 Starting questions for today • What is a text? • What questions can we ask of a text? • What kind of answers "make us happy"?

3 Some answers that would make you happy, and how (semi-)automatic text analysis could help
• Author
 ▫ Usually a metadatum that is extracted from the metadata set
 ▫ Can also be an inference: “Can you find out who is the author of this text?” This is a text-mining task that has been studied, for example, in online texts (one subtype of the de-anonymization problem)
• Genre
 ▫ A text-mining classification task (given a text, classify it into one from a list of genres)
• Style
 ▫ Same (stylometry classification)
• Statement / summary
 ▫ Text mining task “summarization” (e.g. of news texts)
• Content
 ▫ The most typical text mining task: identify topics, classify into a content class, ...
• Function, intention
 ▫ (I'm still not quite sure what this... So this is for a future summer school ;-) )
• Sequence of signs
 1. Sequential analysis of texts is common (e.g. in co-occurrence and collocation analysis)
 2. Signs (in the sense of arbitrary words standing for concepts), a key element of theories of semantics, e.g. in the Semantic Web and Linked Open Data (e.g. DBpedia: a concept network version of Wikipedia), are used in text mining, for example for improving topic modelling and classification
July 9th 2015: I'll add references to these yet, but wanted to get the slides out to you already!

4 Motivation (1)

5 Motivation (2)

6 Goals and non-goals
• Goals
 ▫ Understand the basic ideas of data mining
 ▫ Understand how computer-scientist text miners approach texts
 ▫ Compare it with your own approaches
 ▫ Learn about some pitfalls and encourage a critical view
 ▫ Get your hands on some tools and real data
 ▫ Have an overview of other necessary steps (such as pre-processing) that take too much time to be included in this course
 ▫ Have pointers for inquiring and going further
• Non-goals (selection)
 ▫ The statistical background of methods
 ▫ A comprehensive overview of the state-of-the-art of text mining applications in the digital humanities or social or behavioural sciences
 ▫ An introduction to big data computing or big DH infrastructures

7 A more modest goal than revolutionising knowledge as such?!
“As long as there have been books there have been more books than you could read. … Knowing how to "not-read" is just as important as knowing how to read” (Mueller, 2007).
“data mining and machine learning are best understood in terms of “provocation” – the potential for outlier results to surprise a reader into attending to some aspect of a text not previously deemed significant – as well as “not-reading” or “distant reading,” the automated search for patterns across a much wider corpus than could be read and assimilated via traditional humanistic methods of “close reading.”” (Kirschenbaum, 2007)

• You use text mining every day
• Texts as strings and feature vectors
• Text mining: steps and basic tasks
• Evaluation
• About today's dataset

You use text mining every day and/or its older brother: information retrieval

10 Origins of text mining. Or: What is a text for information retrieval? Let‘s do some reverse engineering. . . 10

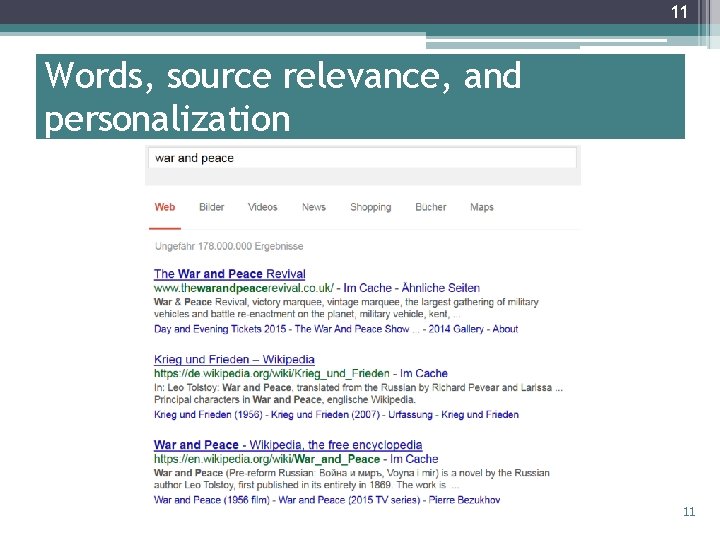

11 Words, source relevance, and personalization 11

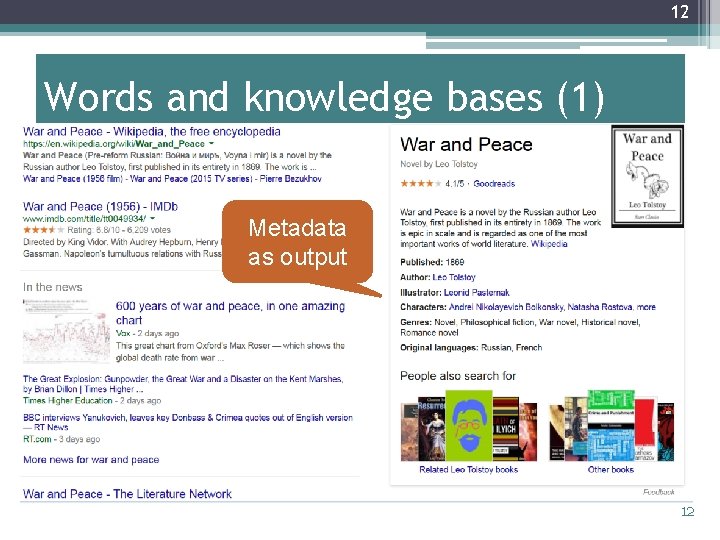

12 Words and knowledge bases (1) Metadata as output 12

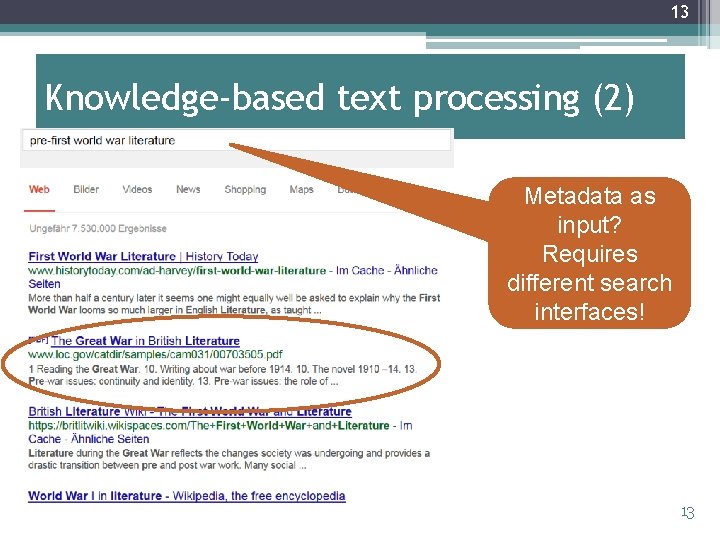

13 Knowledge-based text processing (2) Metadata as input? Requires different search interfaces! 13

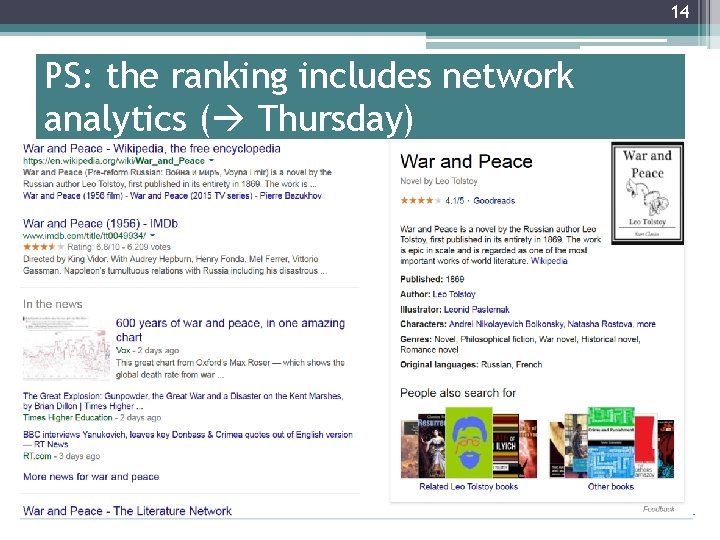

14 PS: the ranking includes network analytics ( Thursday) 14

15 PS: the ranking also includes adaptation; here: relevance feedback 15

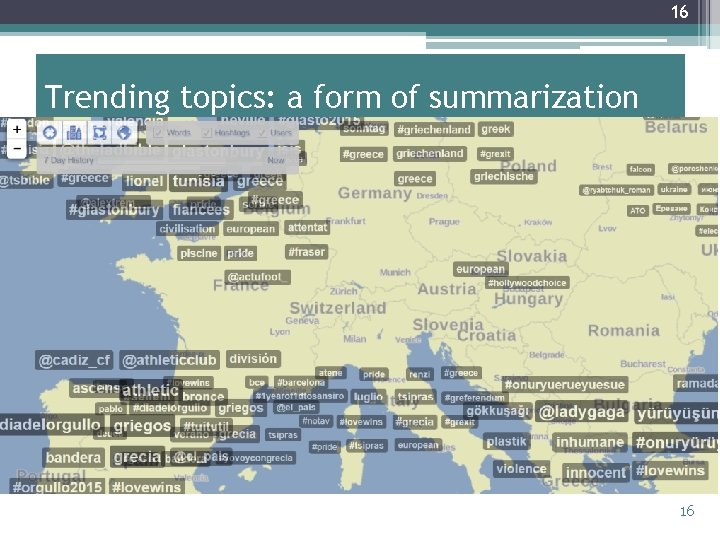

16 Trending topics: a form of summarization 16

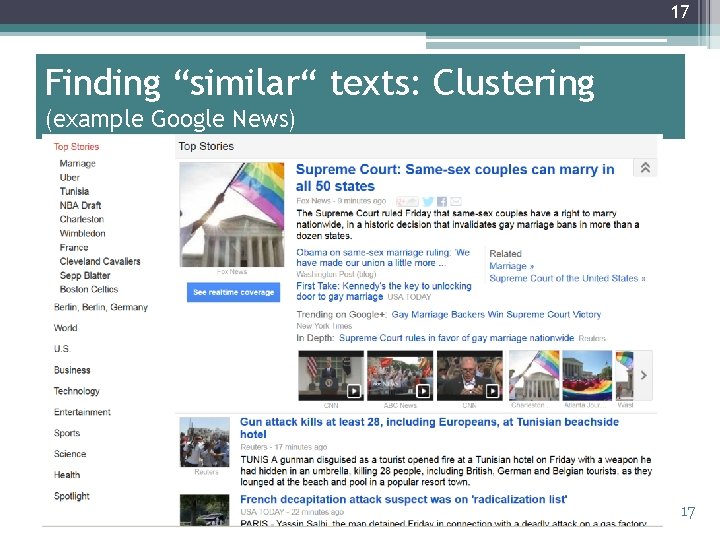

17 Finding “similar“ texts: Clustering (example Google News) 17

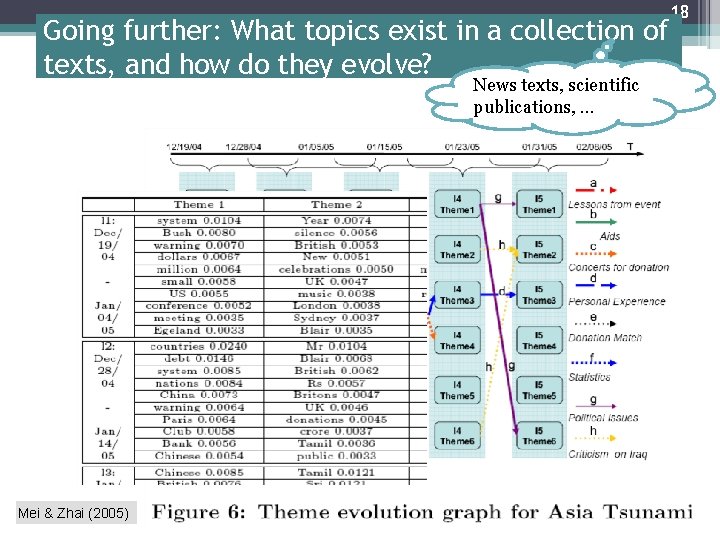

Going further: What topics exist in a collection of texts, and how do they evolve? News texts, scientific publications, … Mei & Zhai (2005) 18

19 Guiding questions
• Information retrieval:
 ▫ Given the current user's information need, which are the most relevant documents?
• Text mining:
 ▫ What do the documents tell us? What's in the texts? What can we learn about the texts, their authors, ...?
 ▫ Many different subquestions
 ▫ Summarization (of one text, of many texts) is just one of them
• Cf.
 ▫ “Distant reading” (Moretti): understanding literature not by studying particular texts, but by aggregating and analyzing massive amounts of data.
 ▫ “Machine reading” (UCL Machine Reading Group): machines that can read and "understand" this textual information, converting it into interpretable structured knowledge to be leveraged by humans and other machines alike

Texts as strings and feature vectors

21 Speed-reading (Woody Allen)
“I took a course in speed reading and was able to read War and Peace in twenty minutes. It's about Russia.”
Also quoted differently: “I took a speed reading course and read 'War and Peace' in twenty minutes. It involves Russia.”

22 A personal “experiment“ - deliberately a bit silly, more a gentle introduction to a great tool and to some pitfalls of “distant reading“ (I haven‘t read War and Peace yet. ) 22

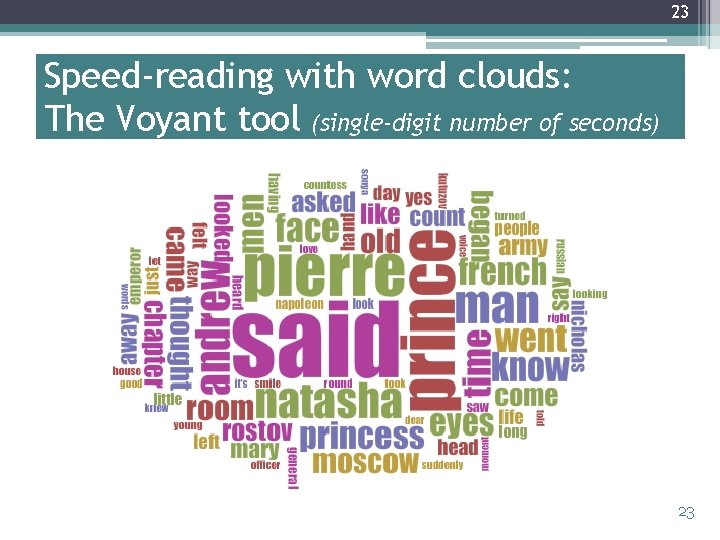

23 Speed-reading with word clouds: The Voyant tool (single-digit number of seconds) 23

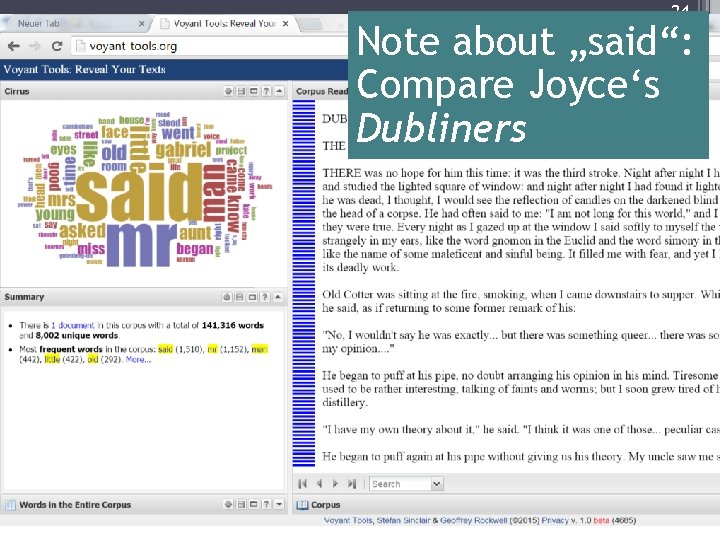

24 Note about „said“: Compare Joyce‘s Dubliners 24

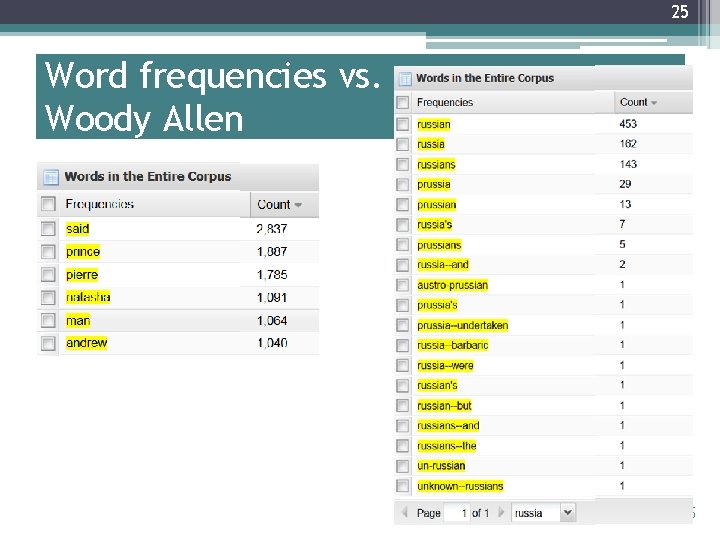

25 Word frequencies vs. Woody Allen 25

26 Can we find out more about the 3? 26

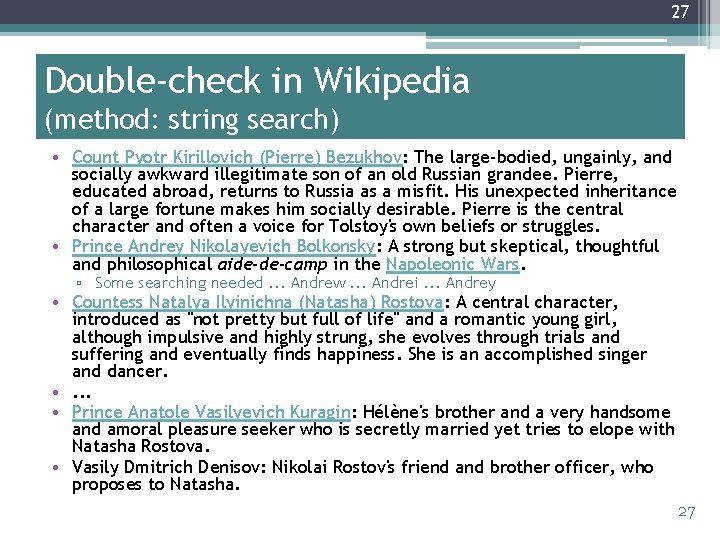

27 Double-check in Wikipedia (method: string search)
• Count Pyotr Kirillovich (Pierre) Bezukhov: The large-bodied, ungainly, and socially awkward illegitimate son of an old Russian grandee. Pierre, educated abroad, returns to Russia as a misfit. His unexpected inheritance of a large fortune makes him socially desirable. Pierre is the central character and often a voice for Tolstoy's own beliefs or struggles.
• Prince Andrey Nikolayevich Bolkonsky: A strong but skeptical, thoughtful and philosophical aide-de-camp in the Napoleonic Wars.
 ▫ Some searching needed ... Andrew ... Andrei ... Andrey
• Countess Natalya Ilyinichna (Natasha) Rostova: A central character, introduced as "not pretty but full of life" and a romantic young girl, although impulsive and highly strung, she evolves through trials and suffering and eventually finds happiness. She is an accomplished singer and dancer.
• ...
• Prince Anatole Vasilyevich Kuragin: Hélène's brother and a very handsome and amoral pleasure seeker who is secretly married yet tries to elope with Natasha Rostova.
• Vasily Dmitrich Denisov: Nikolai Rostov's friend and brother officer, who proposes to Natasha.
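The "some searching needed" remark on the slide above can be illustrated with a small sketch: a plain string search misses a character when translations spell the name differently, so one pattern has to cover all variants. The pattern and the sample sentence are assumptions made for illustration; they are not taken from the slides.

```python
import re
from collections import Counter

# One pattern for the three spellings mentioned on the slide.
# \b keeps the match from firing inside longer words such as "Andrea".
NAME_VARIANTS = re.compile(r"\bAndre(?:w|i|y)\b")

def count_variants(text):
    """Count how often each spelling variant occurs in the text."""
    return Counter(NAME_VARIANTS.findall(text))

# Hypothetical sample sentence, only for demonstration.
sample = "Prince Andrew met Andrei; Andrey and Andrea left."
variant_counts = count_variants(sample)
total_mentions = sum(variant_counts.values())
```

Summing over the variants gives one count per character rather than one per spelling, which is what a frequency comparison across characters would need.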

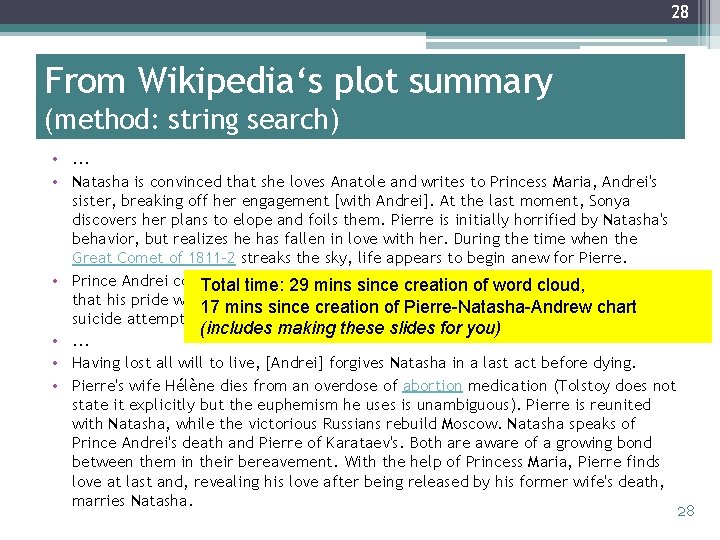

28 From Wikipedia's plot summary (method: string search)
• ...
• Natasha is convinced that she loves Anatole and writes to Princess Maria, Andrei's sister, breaking off her engagement [with Andrei]. At the last moment, Sonya discovers her plans to elope and foils them. Pierre is initially horrified by Natasha's behavior, but realizes he has fallen in love with her. During the time when the Great Comet of 1811–2 streaks the sky, life appears to begin anew for Pierre.
• Prince Andrei coldly accepts Natasha's breaking of the engagement. He tells Pierre that his pride will not allow him to renew his proposal. Ashamed, Natasha makes a suicide attempt and is left seriously ill.
• ...
• Having lost all will to live, [Andrei] forgives Natasha in a last act before dying.
• Pierre's wife Hélène dies from an overdose of abortion medication (Tolstoy does not state it explicitly but the euphemism he uses is unambiguous). Pierre is reunited with Natasha, while the victorious Russians rebuild Moscow. Natasha speaks of Prince Andrei's death and Pierre of Karataev's. Both are aware of a growing bond between them in their bereavement. With the help of Princess Maria, Pierre finds love at last and, revealing his love after being released by his former wife's death, marries Natasha.
Total time: 29 mins since creation of word cloud, 17 mins since creation of Pierre-Natasha-Andrew chart (includes making these slides for you)

29 Questions
• How much of this was “really automatic”?
• What existing knowledge (in my head and in others') went into this analysis, and how?
• Can you think of another reason why this (deliberately) turned out silly?

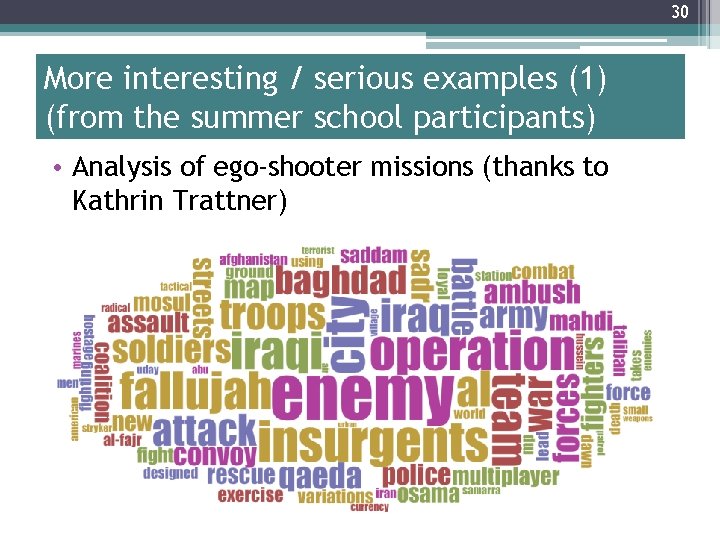

30 More interesting / serious examples (1) (from the summer school participants)
• Analysis of ego-shooter missions (thanks to Kathrin Trattner)

31 Comment B. Berendt: compare this with an earlier text-mining analysis of reporting on the same events by CNN in comparison with Al Jazeera
• See next slide

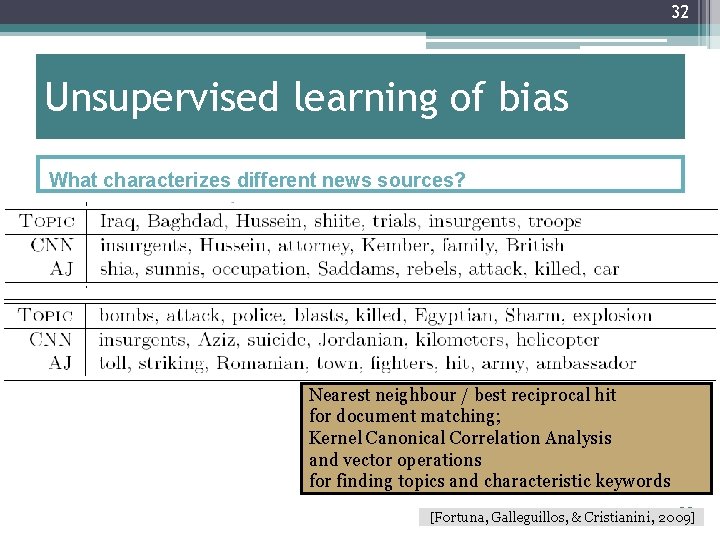

32 Unsupervised learning of bias What characterizes different news sources? Nearest neighbour / best reciprocal hit for document matching; Kernel Canonical Correlation Analysis and vector operations for finding topics and characteristic keywords 32 [Fortuna, Galleguillos, & Cristianini, 2009]

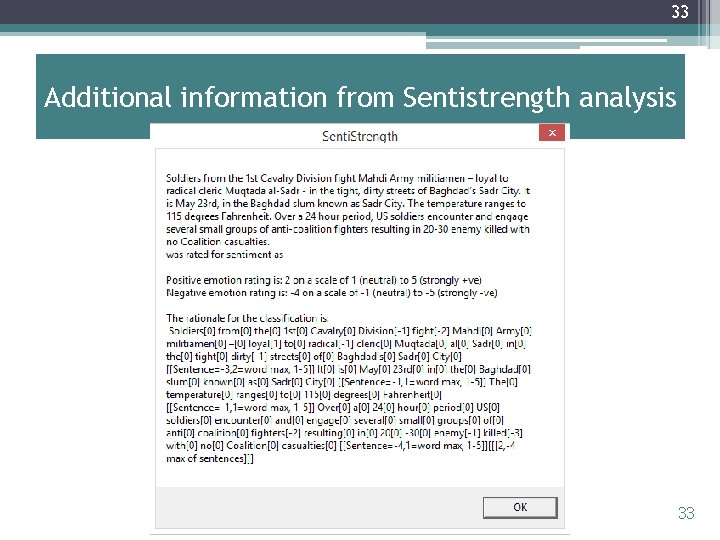

33 Additional information from Sentistrength analysis 33

34 More interesting / serious examples (2) (from the summer school participants)
• Analysis of ideological documents: Charter of Hamas (thanks to Alexandra Preitschopf)
• Analyses word usage and, interestingly, also the absence of specific words
 ▫ Note: this shows clearly why we need domain knowledge to interpret frequencies!
• It also shows the difficulties of using sentiment analysis when the real object of analysis is opinion/bias.
• For more details, see her presentation (also linked on my Summer School Web page)

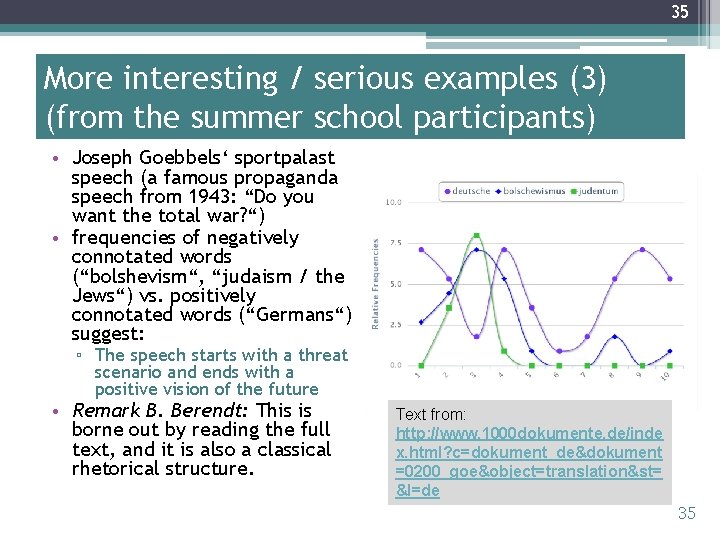

35 More interesting / serious examples (3) (from the summer school participants)
• Joseph Goebbels' Sportpalast speech (a famous propaganda speech from 1943: “Do you want the total war?”)
• Frequencies of negatively connoted words (“bolshevism”, “judaism / the Jews”) vs. positively connoted words (“Germans”) suggest:
 ▫ The speech starts with a threat scenario and ends with a positive vision of the future
• Remark B. Berendt: This is borne out by reading the full text, and it is also a classical rhetorical structure.
Text from: http://www.1000dokumente.de/index.html?c=dokument_de&dokument=0200_goe&object=translation&st=&l=de

36 More interesting / serious examples (4) (from others): Examples of Voyant in Research: http://docs.voyant-tools.org/about/examples-gallery/

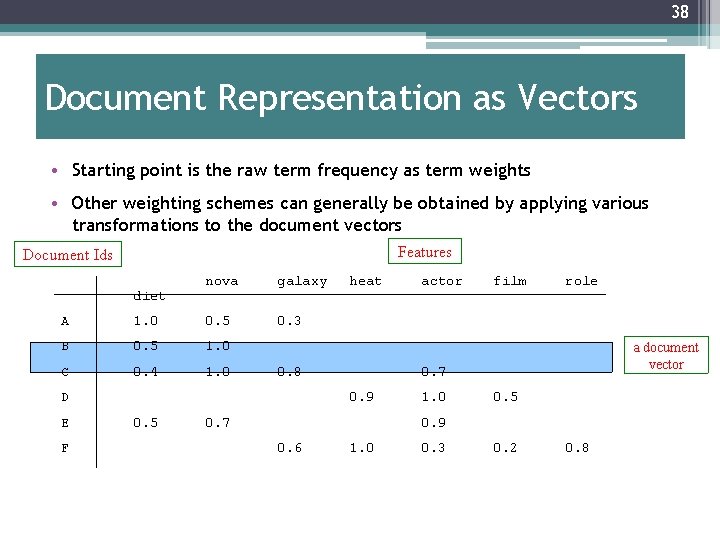

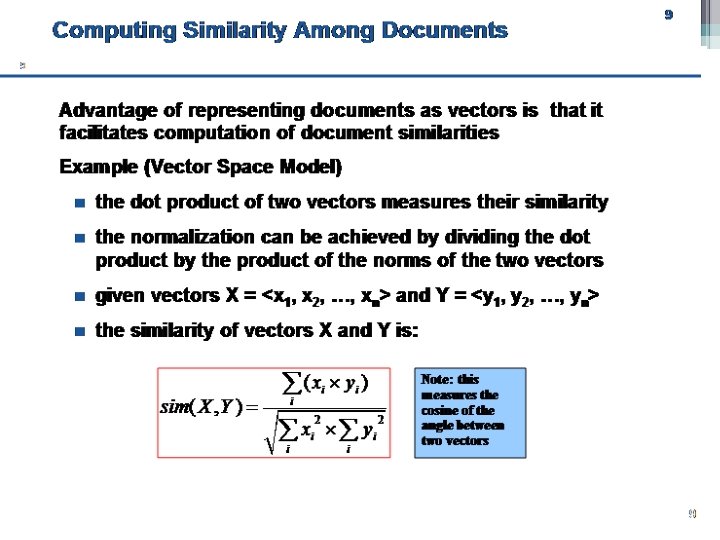

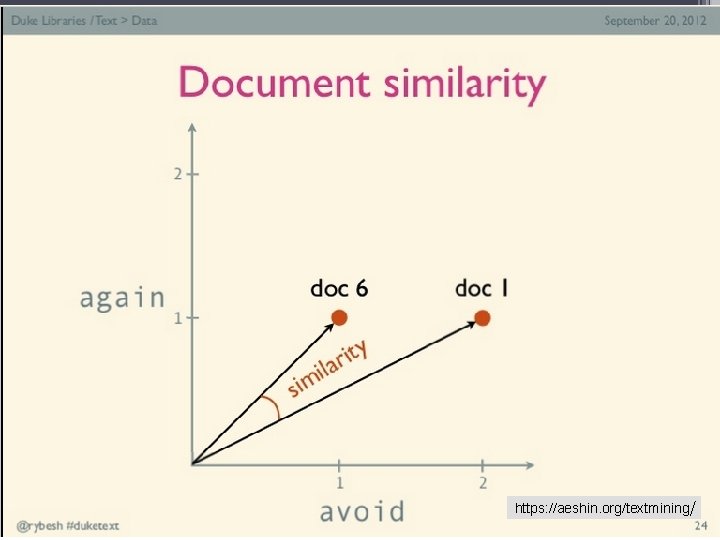

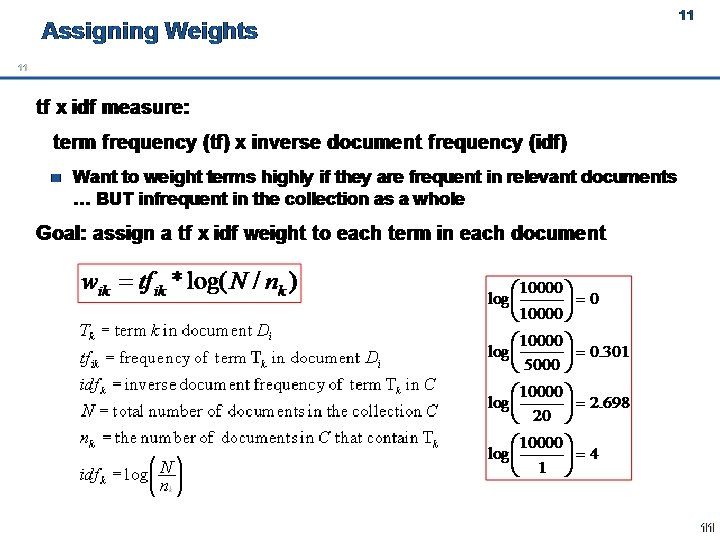

37 Some formalism: the vector-space model of text (basic model used in information retrieval and text mining)
▫ Basic idea:
 - Keywords are extracted from texts.
 - These keywords describe the (usually) topical content of Web pages and other text contributions.
▫ Based on the vector space model of document collections:
 - Each unique word in a corpus of Web pages = one dimension
 - Each page(view) is a vector with non-zero weight for each word in that page(view), zero weight for other words
Words become “features” (in a data-mining sense)
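A minimal sketch of the vector-space idea, assuming whitespace tokenization and raw counts as weights. The three toy documents are invented for illustration; the function names are not from any particular tool.

```python
from collections import Counter

def build_vocabulary(docs):
    """Each unique word in the corpus becomes one dimension (sorted for a stable order)."""
    return sorted({word for doc in docs for word in doc.lower().split()})

def to_vector(doc, vocabulary):
    """Map one document to a vector: word count where the word occurs, zero elsewhere."""
    counts = Counter(doc.lower().split())
    return [counts[word] for word in vocabulary]

# Hypothetical toy corpus.
docs = ["nova galaxy heat", "film role actor film", "diet heat"]
vocabulary = build_vocabulary(docs)
vectors = [to_vector(doc, vocabulary) for doc in docs]
```

Every document ends up with the same number of dimensions, which is what lets standard data-mining algorithms treat words as features.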

38 Document Representation as Vectors
• Starting point is the raw term frequency as term weights
• Other weighting schemes can generally be obtained by applying various transformations to the document vectors
[Table: document IDs A to F as rows; the features diet, nova, galaxy, heat, film, role and actor as columns; each row is a document vector with non-zero term weights between 0.2 and 1.0]
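One common transformation of raw term-frequency vectors is TF-IDF. The slide does not name a specific scheme, so TF-IDF is an assumption chosen here as an example, using the plain variant idf = log(N / df). The toy vectors are invented.

```python
import math

def tf_idf(term_freq_vectors):
    """Re-weight raw term frequencies by inverse document frequency."""
    n_docs = len(term_freq_vectors)
    n_terms = len(term_freq_vectors[0])
    # Document frequency: in how many documents does each term occur?
    doc_freq = [sum(1 for vec in term_freq_vectors if vec[j] > 0) for j in range(n_terms)]
    idf = [math.log(n_docs / df) if df else 0.0 for df in doc_freq]
    return [[tf * weight for tf, weight in zip(vec, idf)] for vec in term_freq_vectors]

# Hypothetical example: term 0 occurs in both documents, term 1 only in the first.
raw = [[1, 1], [1, 0]]
weighted = tf_idf(raw)
```

A term that occurs in every document gets weight zero under this variant, which reflects the intuition that it does not help to tell documents apart.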

39 Other features (usually metadata of different sorts) can be added
• Tags or other categories
• Special content (e.g. URLs, images, Twitter mentions)
• Source
• Number of followers of source
• ...

41 https://aeshin.org/textmining/

Text mining: steps and basic tasks

44 The idea of text mining. . . • . . . is to go beyond frequency-counting • . . . is to go beyond the search-for-documents framework • . . . is to find patterns (of meaning) within and especially across documents • (but boundaries are not fixed)

45 Data mining (aka Knowledge Discovery) The non-trivial process of identifying valid, novel, potentially useful, and ultimately understandable patterns in data (Fayyad, Platetsky-Shapiro, Smyth, 1996) 45

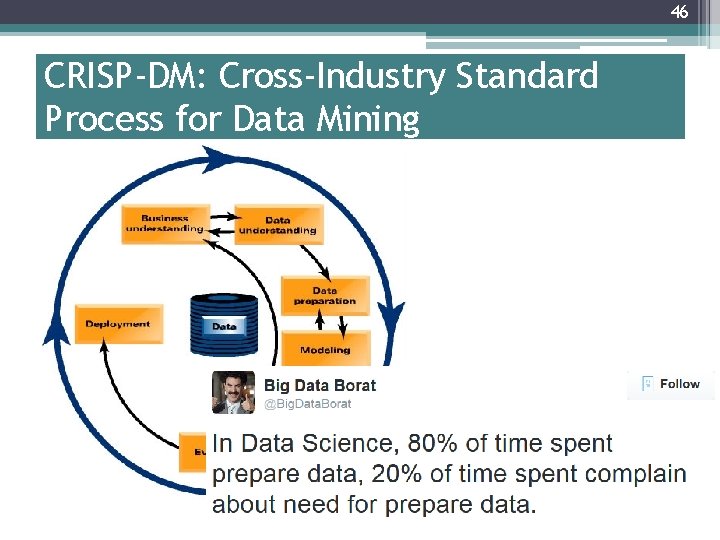

46 CRISP-DM: Cross-Industry Standard Process for Data Mining 46

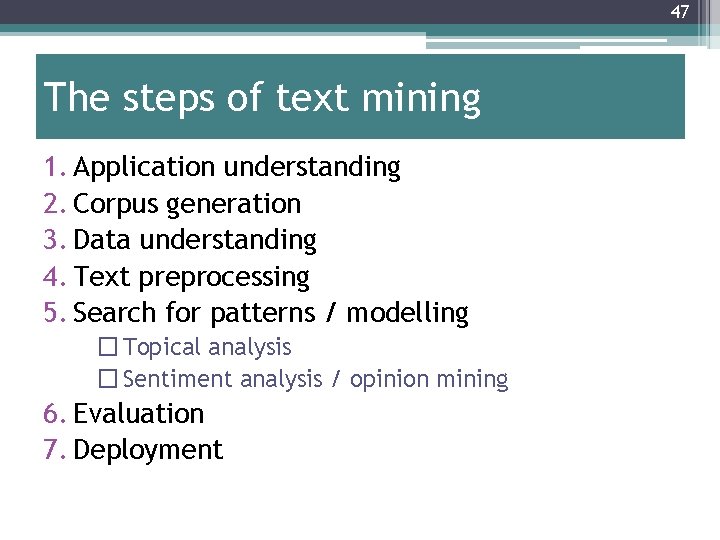

47 The steps of text mining
1. Application understanding
2. Corpus generation
3. Data understanding
4. Text preprocessing
5. Search for patterns / modelling
 - Topical analysis
 - Sentiment analysis / opinion mining
6. Evaluation
7. Deployment

48 Application understanding; Corpus generation ▫ What is the question? ▫ What is the context? ▫ What could be interesting sources, and where can they be found? ▫ Use an existing corpus ▫ Crawl ▫ Use a search engine and/or archive and/or API ▫ Get help!

49 Preprocessing (1)
• Data cleaning
 ▫ Goal: get clean ASCII text
 ▫ Remove HTML markup*, pictures, advertisements, ...
 ▫ Automate this: wrapper induction
* Note: HTML markup may carry information too (e.g., <b> or <h1> marks something important), which can be extracted! (Depends on the application)
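A minimal sketch of the data-cleaning step using Python's standard `html.parser`. It strips markup but, following the note above, also remembers which text stood inside `<b>` or `<h1>`. The class name, the choice of tags and the sample page are assumptions for illustration; real pages usually need more care (and this is not wrapper induction).

```python
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collect visible text; skip <script>/<style>; note text inside <b> or <h1>."""

    def __init__(self):
        super().__init__()
        self.parts = []        # all visible text fragments
        self.emphasised = []   # fragments that stood inside <b> or <h1>
        self._skip_depth = 0
        self._emph_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag in ("b", "h1"):
            self._emph_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1
        elif tag in ("b", "h1") and self._emph_depth:
            self._emph_depth -= 1

    def handle_data(self, data):
        if self._skip_depth:
            return
        text = data.strip()
        if text:
            self.parts.append(text)
            if self._emph_depth:
                self.emphasised.append(text)

def clean_html(html):
    """Return (plain text, list of emphasised fragments)."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts), parser.emphasised

# Hypothetical sample page.
page = "<h1>War</h1><p>and <b>Peace</b> text</p><script>var x=1;</script>"
plain_text, important = clean_html(page)
```

Whether the emphasised fragments are kept as an extra feature or thrown away depends on the application, as the slide notes.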

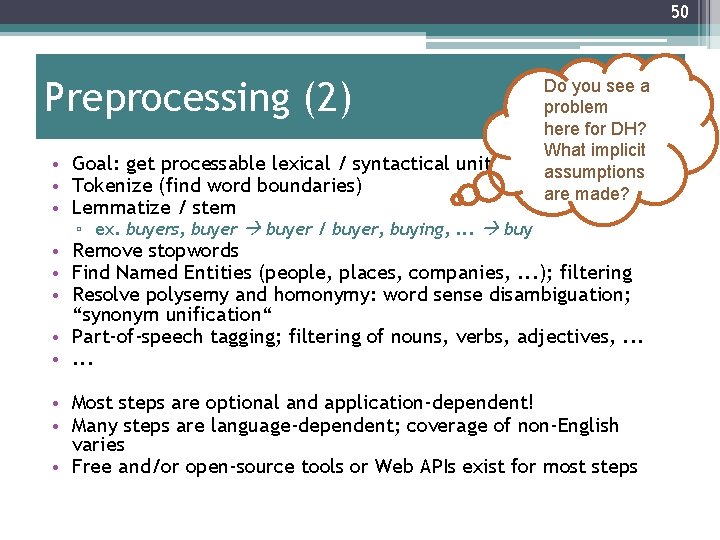

50 Preprocessing (2)
• Goal: get processable lexical / syntactical units
• Tokenize (find word boundaries)
• Lemmatize / stem
 ▫ ex. buyers, buyer → buyer / buyer, buying, ... → buy
• Remove stopwords
• Find Named Entities (people, places, companies, ...); filtering
• Resolve polysemy and homonymy: word sense disambiguation; “synonym unification”
• Part-of-speech tagging; filtering of nouns, verbs, adjectives, ...
• Most steps are optional and application-dependent!
• Many steps are language-dependent; coverage of non-English varies
• Free and/or open-source tools or Web APIs exist for most steps
Do you see a problem here for DH? What implicit assumptions are made?
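The first steps of this list (tokenize, remove stopwords, stem) can be sketched in a few lines. The stopword list is a tiny illustrative sample and `crude_stem` is a deliberately naive stand-in for a real stemmer; both are assumptions, not what any of the tools mentioned in this course do.

```python
import re

# Tiny illustrative stopword list; real lists are much longer and language-specific.
STOPWORDS = {"the", "a", "an", "and", "of", "is", "are", "to", "in"}

def tokenize(text):
    """Lower-case the text and keep runs of letters and apostrophes as tokens."""
    return re.findall(r"[a-z']+", text.lower())

def crude_stem(token):
    """Strip a few English suffixes, keeping at least three characters."""
    for suffix in ("ers", "er", "ing", "s"):
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token

def preprocess(text):
    """Tokenize, drop stopwords, then stem what is left."""
    return [crude_stem(tok) for tok in tokenize(text) if tok not in STOPWORDS]

stems = preprocess("The buyers are buying; a buyer buys.")
```

All four content words collapse to the same stem here, which is exactly the kind of implicit assumption the slide's question about DH points at: the distinctions between "buyer" and "buying" are gone, and the rules only make sense for English.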

51 Preprocessing (3)
• Creation of text representation
 ▫ Goal: a representation that the modelling algorithm can work on
 ▫ Most common forms: A text as
  - a set or (more usually) bag of words / vector-space representation: term-document matrix with weights reflecting occurrence, importance, ...
  - a sequence of words
  - a tree (parse trees)

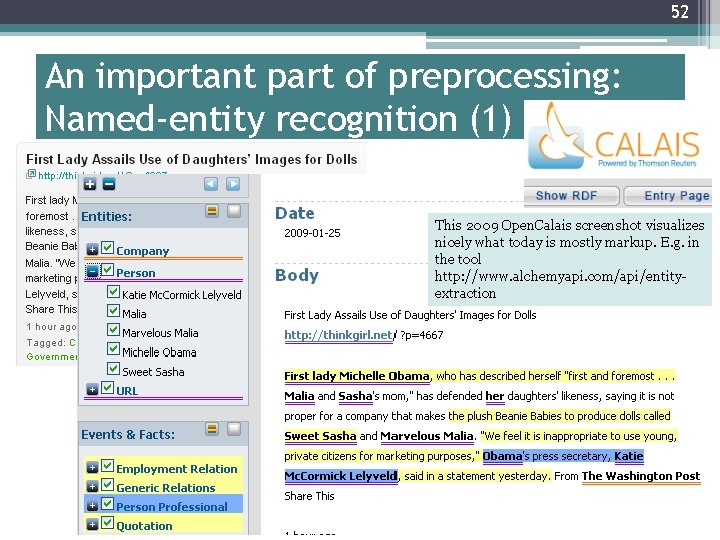

52 An important part of preprocessing: Named-entity recognition (1)
This 2009 OpenCalais screenshot visualizes nicely what today is mostly markup. E.g. in the tool http://www.alchemyapi.com/api/entityextraction

53 An important part of preprocessing: Named-entity recognition (2)
• Technique: Lexica, heuristic rules, syntax parsing
• Re-use lexica and/or develop your own
 ▫ configurable tools such as GATE
• An example challenge: multi-document named-entity recognition
 ▫ Several solution proposals
• A more difficult problem: Anaphora resolution
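To make "lexica and heuristic rules" concrete, here is a toy sketch: a lexicon lookup plus one rule (a title followed by a capitalised word is a person). The lexicon entries, the title list and the sample sentence are hypothetical; tools such as GATE work with far richer resources and are not reproduced here.

```python
import re

# Hypothetical mini-lexicon: surface form -> (canonical name, entity type).
LEXICON = {
    "pierre": ("Pierre Bezukhov", "PERSON"),
    "natasha": ("Natasha Rostova", "PERSON"),
    "moscow": ("Moscow", "PLACE"),
}

# Heuristic rule: a capitalised word directly after one of these titles is a person.
TITLES = {"Prince", "Princess", "Count", "Countess"}

def find_entities(text):
    """Return (name, type) pairs found by lexicon lookup or by the title rule."""
    entities = []
    tokens = re.findall(r"[A-Za-z]+", text)
    for i, token in enumerate(tokens):
        if token.lower() in LEXICON:
            entities.append(LEXICON[token.lower()])
        elif i > 0 and tokens[i - 1] in TITLES and token[0].isupper():
            entities.append((tokens[i - 1] + " " + token, "PERSON"))
    return entities

found = find_entities("Pierre met Prince Andrei in Moscow.")
```

The rule finds "Prince Andrei" even though he is not in the lexicon, but nothing here would link "Andrei" to "Andrew" or resolve a later "he", which is where the harder problems named on the slide begin.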

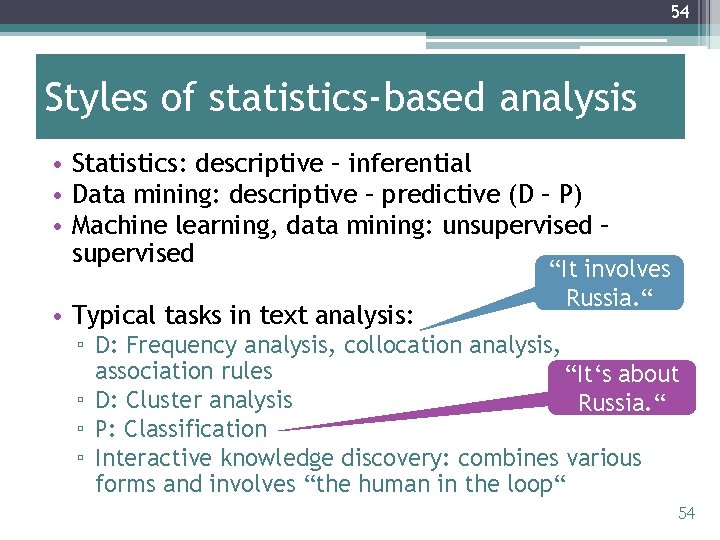

54 Styles of statistics-based analysis
• Statistics: descriptive – inferential
• Data mining: descriptive – predictive (D – P)
• Machine learning, data mining: unsupervised – supervised
• Typical tasks in text analysis:
 ▫ D: Frequency analysis, collocation analysis, association rules (“It involves Russia.”)
 ▫ D: Cluster analysis (“It's about Russia.”)
 ▫ P: Classification
 ▫ Interactive knowledge discovery: combines various forms and involves “the human in the loop”
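The two simplest descriptive tasks, frequency analysis and collocation analysis, can be sketched with a counter. The window-based definition of a collocation used here (unordered pairs of distinct words within a fixed distance) is one simple assumption among several possible ones; the token list is invented.

```python
from collections import Counter

def word_frequencies(tokens):
    """Frequency analysis: how often does each word occur?"""
    return Counter(tokens)

def collocations(tokens, window=2):
    """Collocation analysis: count unordered pairs of distinct words
    whose positions are fewer than `window` tokens apart."""
    pairs = Counter()
    for i, left in enumerate(tokens):
        for right in tokens[i + 1 : i + window]:
            if left != right:
                pairs[tuple(sorted((left, right)))] += 1
    return pairs

# Hypothetical token sequence.
tokens = "prince andrew said prince andrew".split()
frequencies = word_frequencies(tokens)
pair_counts = collocations(tokens)
```

With `window=2` only directly adjacent words are paired; a larger window trades precision for recall.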

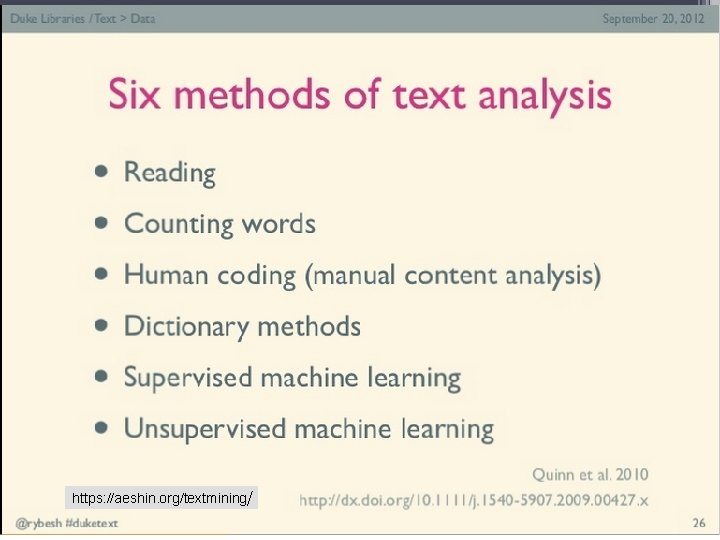

55 https: //aeshin. org/textmining/ 55

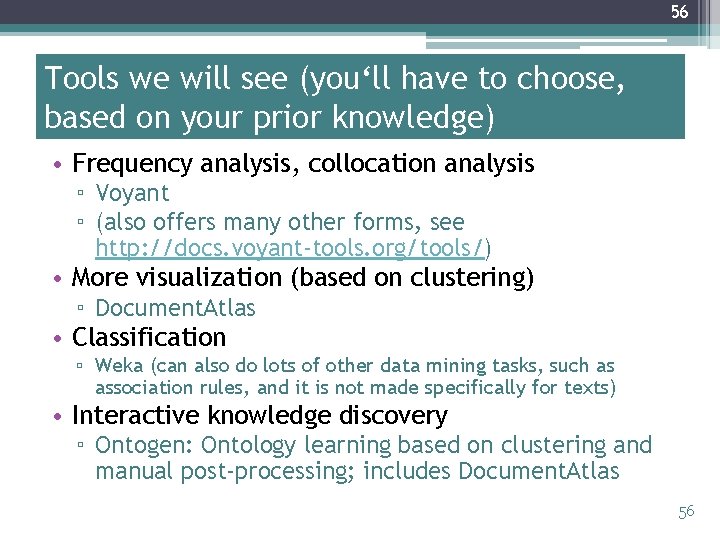

56 Tools we will see (you‘ll have to choose, based on your prior knowledge) • Frequency analysis, collocation analysis ▫ Voyant ▫ (also offers many other forms, see http: //docs. voyant-tools. org/tools/) • More visualization (based on clustering) ▫ Document. Atlas • Classification ▫ Weka (can also do lots of other data mining tasks, such as association rules, and it is not made specifically for texts) • Interactive knowledge discovery ▫ Ontogen: Ontology learning based on clustering and manual post-processing; includes Document. Atlas 56

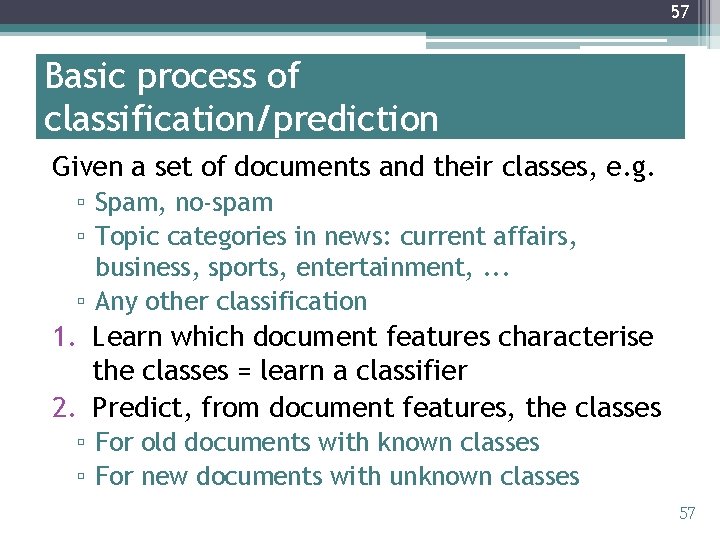

57 Basic process of classification/prediction
Given a set of documents and their classes, e.g.
 ▫ Spam, no-spam
 ▫ Topic categories in news: current affairs, business, sports, entertainment, ...
 ▫ Any other classification
1. Learn which document features characterise the classes = learn a classifier
2. Predict, from document features, the classes
 ▫ For old documents with known classes
 ▫ For new documents with unknown classes
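The two steps (learn, then predict) can be sketched with a small multinomial Naive Bayes classifier with add-one smoothing. This is a from-scratch illustration under simplifying assumptions, not Weka's implementation; the four training documents and their labels are invented.

```python
import math
from collections import Counter, defaultdict

def train(labelled_docs):
    """Step 1: learn per-class word counts from (tokens, label) pairs."""
    class_counts = Counter()
    word_counts = defaultdict(Counter)
    vocabulary = set()
    for tokens, label in labelled_docs:
        class_counts[label] += 1
        word_counts[label].update(tokens)
        vocabulary.update(tokens)
    return class_counts, word_counts, vocabulary

def predict(tokens, model):
    """Step 2: pick the class with the highest log-probability for the tokens."""
    class_counts, word_counts, vocabulary = model
    total_docs = sum(class_counts.values())
    best_label, best_score = None, -math.inf
    for label in class_counts:
        score = math.log(class_counts[label] / total_docs)
        # Add-one (Laplace) smoothing so unseen class/word pairs do not zero out the score.
        denominator = sum(word_counts[label].values()) + len(vocabulary)
        for token in tokens:
            if token in vocabulary:  # words never seen in training are ignored
                score += math.log((word_counts[label][token] + 1) / denominator)
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical training data.
training = [
    ("yay awesome birthday".split(), "happy"),
    ("lovely concert yay".split(), "happy"),
    ("tears lonely hurt".split(), "sad"),
    ("cried upset tears".split(), "sad"),
]
model = train(training)
label_new = predict("awesome concert".split(), model)
label_other = predict("tears hurt".split(), model)
```

Predicting for the training documents themselves ("old documents with known classes") only shows how well the classifier memorised them; the interesting case is documents it has not seen.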

58 What makes people happy?

59 Happiness in blogosphere

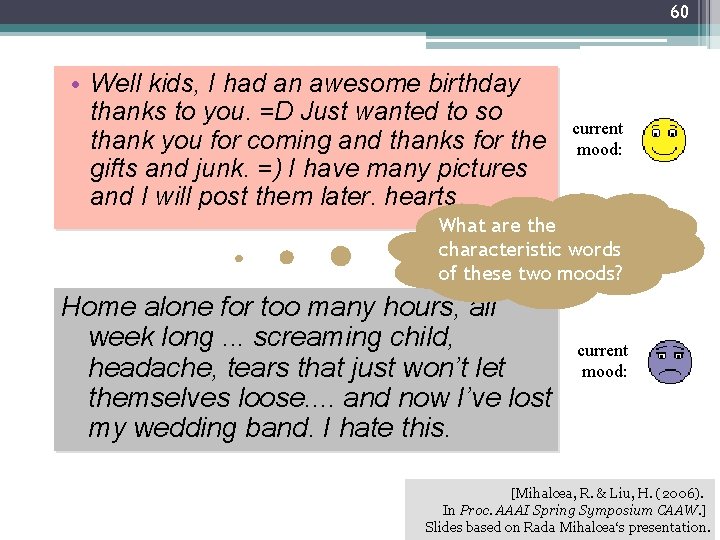

60 What are the characteristic words of these two moods?
• Well kids, I had an awesome birthday thanks to you. =D Just wanted to so thank you for coming and thanks for the gifts and junk. =) I have many pictures and I will post them later. hearts
 current mood:
• Home alone for too many hours, all week long ... screaming child, headache, tears that just won't let themselves loose ... and now I've lost my wedding band. I hate this.
 current mood:
[Mihalcea, R. & Liu, H. (2006). In Proc. AAAI Spring Symposium CAAW.] Slides based on Rada Mihalcea's presentation.

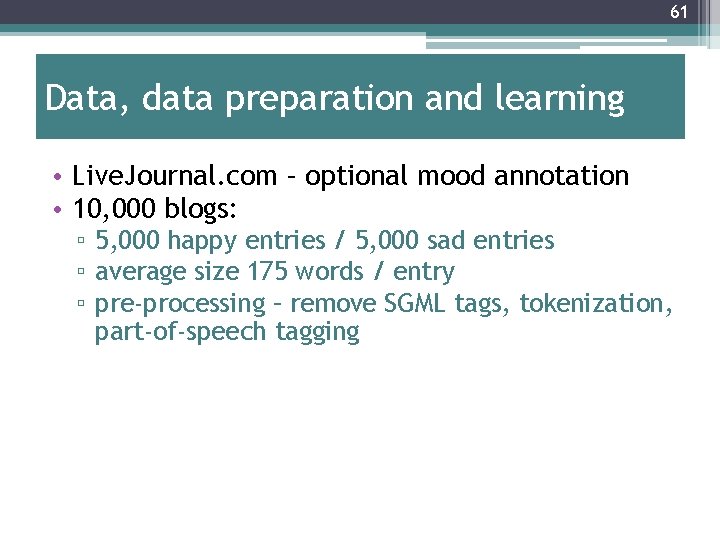

61 Data, data preparation and learning
• LiveJournal.com – optional mood annotation
• 10,000 blogs:
 ▫ 5,000 happy entries / 5,000 sad entries
 ▫ average size 175 words / entry
 ▫ pre-processing – remove SGML tags, tokenization, part-of-speech tagging

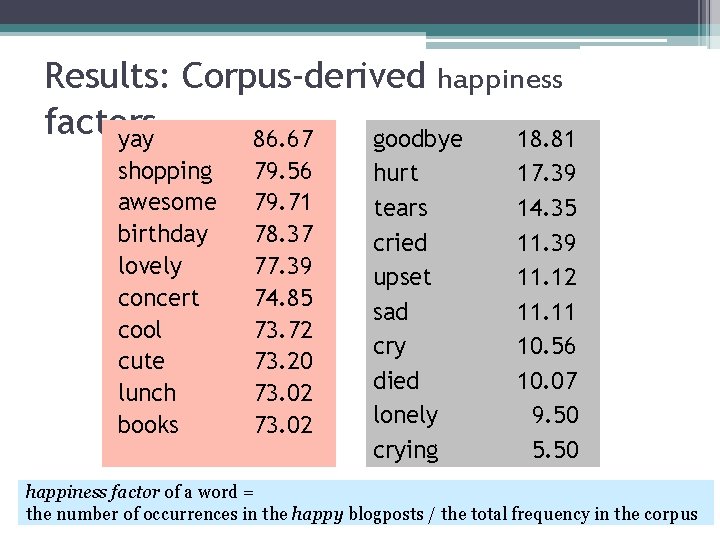

Results: Corpus-derived happiness factors

| word | happiness factor | word | happiness factor |
|---|---|---|---|
| yay | 86.67 | goodbye | 18.81 |
| shopping | 79.56 | hurt | 17.39 |
| awesome | 79.71 | tears | 14.35 |
| birthday | 78.37 | cried | 11.39 |
| lovely | 77.39 | upset | 11.12 |
| concert | 74.85 | sad | 11.11 |
| cool | 73.72 | cry | 10.56 |
| cute | 73.20 | died | 10.07 |
| lunch | 73.02 | lonely | 9.50 |
| books | – | crying | 5.50 |

happiness factor of a word = the number of occurrences in the happy blogposts / the total frequency in the corpus
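The happiness-factor formula above can be sketched as follows. Two assumptions are made: the "corpus" is the union of the happy and sad posts, and the ratio is scaled to a percentage because the values on the slide lie between 0 and 100. The toy posts are invented.

```python
from collections import Counter

def happiness_factors(happy_posts, sad_posts, min_count=1):
    """Per word: 100 * occurrences in happy posts / occurrences in the whole corpus."""
    happy = Counter(word for post in happy_posts for word in post)
    sad = Counter(word for post in sad_posts for word in post)
    factors = {}
    for word in set(happy) | set(sad):
        total = happy[word] + sad[word]
        if total >= min_count:  # optionally ignore very rare words
            factors[word] = 100.0 * happy[word] / total
    return factors

# Hypothetical tokenised posts.
happy_posts = [["yay", "birthday"], ["yay", "tears"]]
sad_posts = [["tears", "tears"], ["yay"]]
factors = happiness_factors(happy_posts, sad_posts)
```

Raising `min_count` guards against words that look extremely happy or sad only because they occur once or twice.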

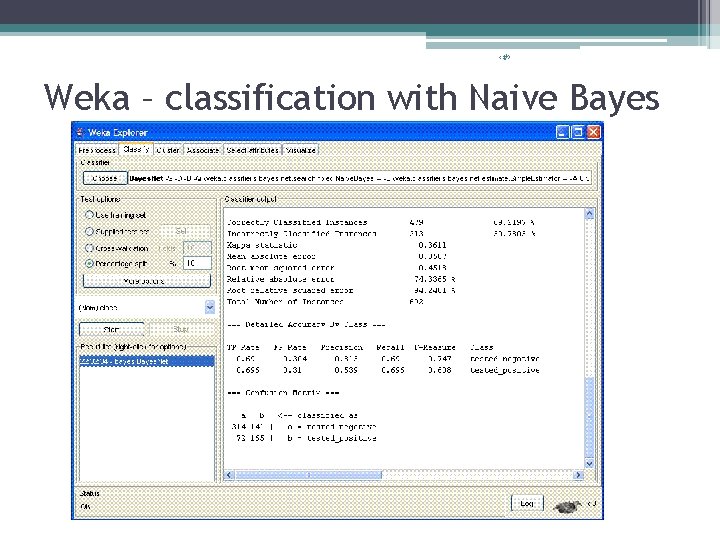

‹#› Weka – classification with Naive Bayes

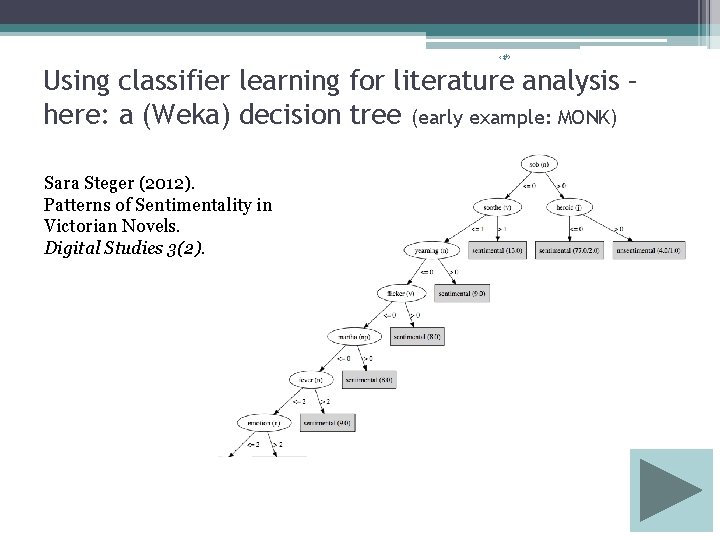

‹#› Using classifier learning for literature analysis – here: a (Weka) decision tree (early example: MONK) Sara Steger (2012). Patterns of Sentimentality in Victorian Novels. Digital Studies 3(2).

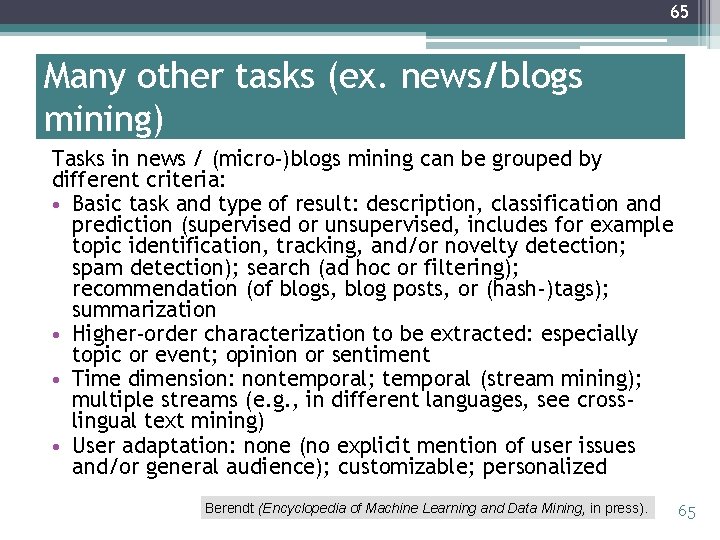

65 Many other tasks (ex. news/blogs mining)
Tasks in news / (micro-)blogs mining can be grouped by different criteria:
• Basic task and type of result: description, classification and prediction (supervised or unsupervised, includes for example topic identification, tracking, and/or novelty detection; spam detection); search (ad hoc or filtering); recommendation (of blogs, blog posts, or (hash-)tags); summarization
• Higher-order characterization to be extracted: especially topic or event; opinion or sentiment
• Time dimension: nontemporal; temporal (stream mining); multiple streams (e.g., in different languages, see cross-lingual text mining)
• User adaptation: none (no explicit mention of user issues and/or general audience); customizable; personalized
Berendt (Encyclopedia of Machine Learning and Data Mining, in press).

66 Real-world applications of news/blogs mining
Real-world applications increasingly employ selections or, more often, combinations of these tasks by their intended users and use cases, in particular:
• News aggregators allow laypeople and professional users (e.g. journalists) to see “what's in the news” and to compare different sources' texts on one story. Reflecting the presumption that news (especially mainstream news – sources for news aggregators are usually whitelisted) are mostly objective/neutral, these aggregators focus on topics and events. News aggregators are now provided by all major search engines.
• Social-media monitoring tools allow laypeople and professional users to track not only topical mentions of a keyword or named entity (e.g. person, brand), but also aggregate sentiment towards it. The focus on sentiment reflects the perceptions that even when news-related, social media content tends to be subjective and that studying the blogosphere is therefore an inexpensive way of doing market research or public-opinion research. The whitelist here is usually the platforms (e.g. Twitter, Tumblr, LiveJournal, Facebook) rather than the sources themselves, reflecting the huge size and dynamic structure of the blogosphere / the Social Web. The landscape of commercial and free social-media monitoring tools is wide and changes frequently; up-to-date overviews and comparisons can easily be found on the Web.
• Emerging application types include text mining not of, but for journalistic texts, in particular natural language generation in domains with highly schematized event structures and reporting, such as sports and finance reporting (e.g. Allen et al., 2010; narrativescience.com) and social-media monitoring tools for helping journalists find sources (Diakopoulos et al., 2012).
Berendt (Encyclopedia of Machine Learning and Data Mining, in press).

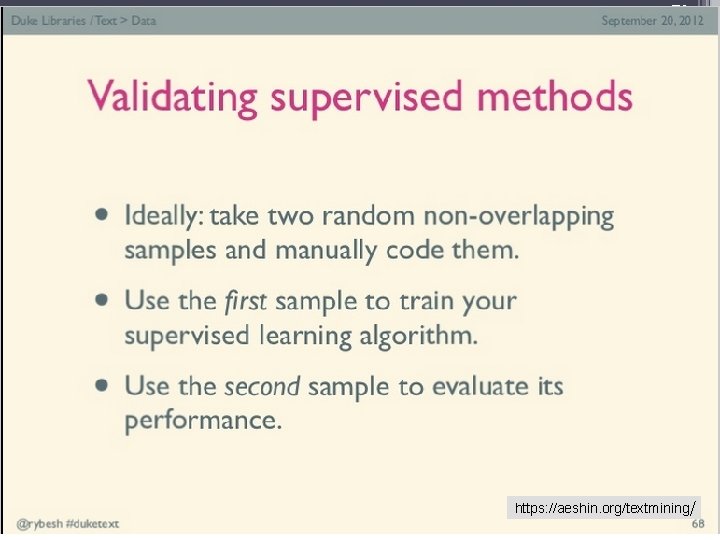

Evaluation

68 Evaluation of unsupervised learning: e.g. clustering
1. Do the clusters make sense?
2. Are the instances within one cluster similar to one another?
3. Are the instances in different clusters dissimilar to one another?
(There are quantitative metrics of #2 and #3)
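One simple way to quantify the second and third question is to compare the average similarity of document pairs inside the same cluster with that of pairs from different clusters. The sketch below uses cosine similarity on document vectors; this particular pair of averages is an illustrative assumption, not a metric named on the slide, and the vectors and labels are invented.

```python
import math
from itertools import combinations

def cosine(u, v):
    """Cosine similarity of two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def mean(values):
    return sum(values) / len(values) if values else 0.0

def cohesion_and_separation(vectors, labels):
    """Return (mean similarity within clusters, mean similarity between clusters)."""
    within, between = [], []
    for i, j in combinations(range(len(vectors)), 2):
        similarity = cosine(vectors[i], vectors[j])
        if labels[i] == labels[j]:
            within.append(similarity)
        else:
            between.append(similarity)
    return mean(within), mean(between)

# Hypothetical document vectors and cluster assignments.
vectors = [[1, 0], [2, 0], [0, 1], [0, 3]]
labels = [0, 0, 1, 1]
within_sim, between_sim = cohesion_and_separation(vectors, labels)
```

A good clustering shows high within-cluster and low between-cluster similarity; whether the clusters "make sense" (question 1) still needs a human reader.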

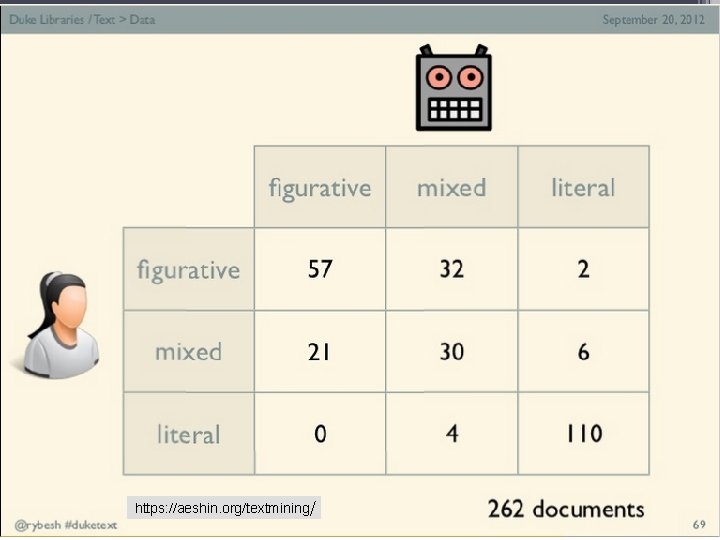

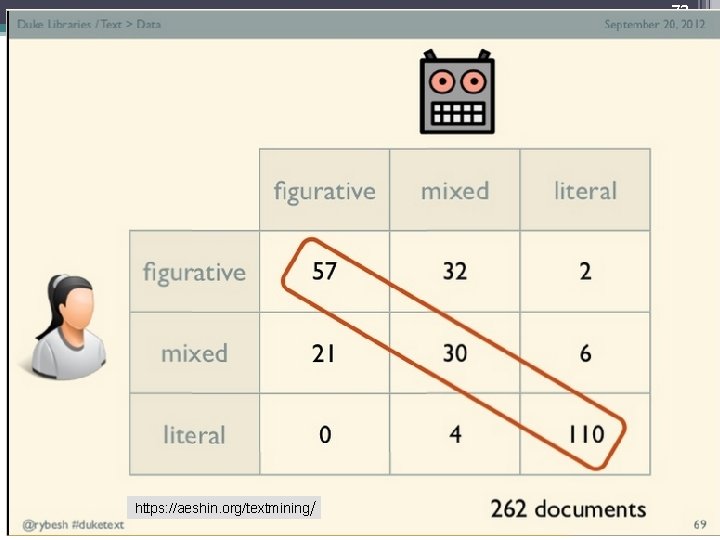

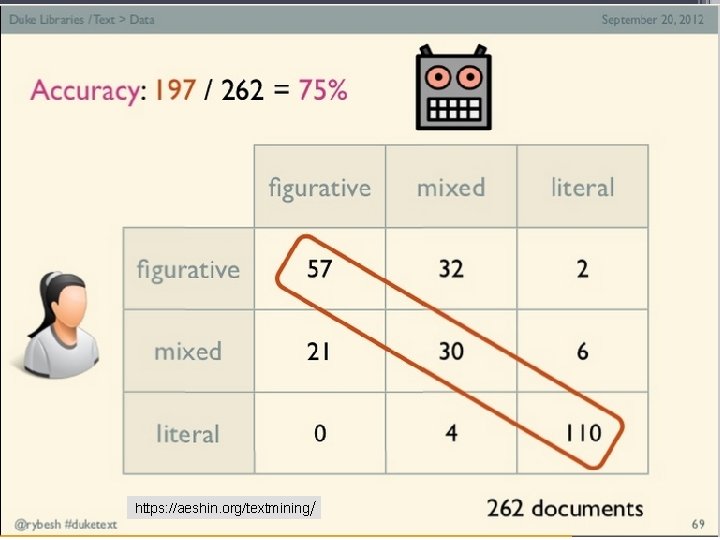

69 Quality of automatic “mood separation”
• Naive Bayes text classifier
 ▫ five-fold cross validation
• Accuracy: 79.13% (>> 50% baseline)
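Five-fold cross-validation means: split the data into five parts, train on four, test on the fifth, rotate, and report the overall share of correct predictions. The sketch below shows the mechanics with a majority-class baseline as a stand-in classifier. The helper names and toy data are assumptions; a real experiment would also shuffle (and ideally stratify) the data first.

```python
from collections import Counter

def k_fold_indices(n_items, k=5):
    """Yield (train_indices, test_indices) for k consecutive folds."""
    fold_sizes = [n_items // k + (1 if i < n_items % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_items))
        yield train, test
        start += size

def cross_validated_accuracy(items, labels, train_fn, predict_fn, k=5):
    """Train on k-1 folds, test on the held-out fold, return overall accuracy."""
    correct = 0
    for train_idx, test_idx in k_fold_indices(len(items), k):
        model = train_fn([(items[i], labels[i]) for i in train_idx])
        correct += sum(predict_fn(items[i], model) == labels[i] for i in test_idx)
    return correct / len(items)

# Stand-in classifier: always predict the most frequent training label.
def train_majority(pairs):
    return Counter(label for _, label in pairs).most_common(1)[0][0]

def predict_majority(item, model):
    return model

# Hypothetical balanced toy data (5 happy, 5 sad).
posts = ["post %d" % i for i in range(10)]
moods = ["happy", "sad"] * 5
baseline = cross_validated_accuracy(posts, moods, train_majority, predict_majority)
folds = list(k_fold_indices(10, 5))
```

On balanced two-class data the majority baseline lands at 50%, which is the reference point the slide compares the classifier's accuracy against.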

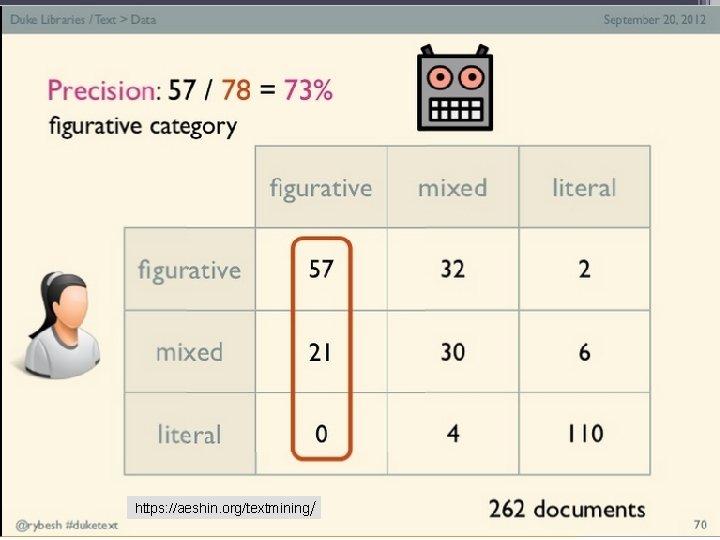

70 https: //aeshin. org/textmining/ 70

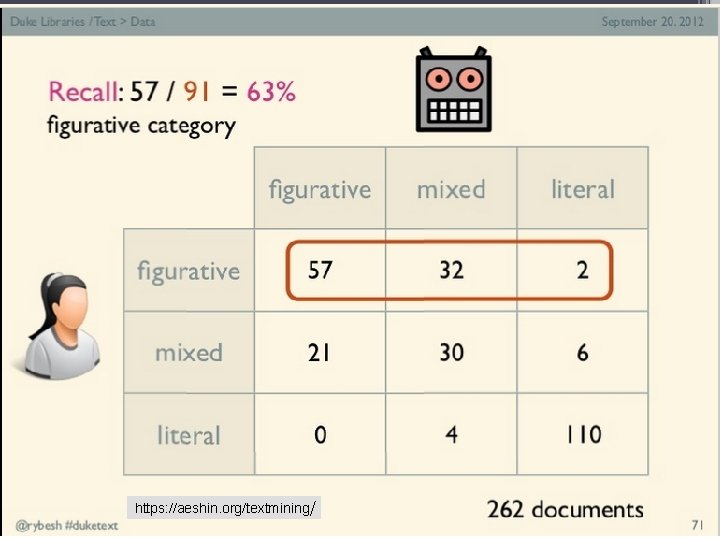

71 https: //aeshin. org/textmining/ 71

72 https: //aeshin. org/textmining/ 72

73 https: //aeshin. org/textmining/ 73

74 https: //aeshin. org/textmining/ 74

75 https: //aeshin. org/textmining/ 75

78 Who defines which class a document belongs to?
• The researcher?
• The author?
• The reader?
• Someone paid to do exactly this (e.g. a worker on MTurk)?
• Several of them?
• Someone else?

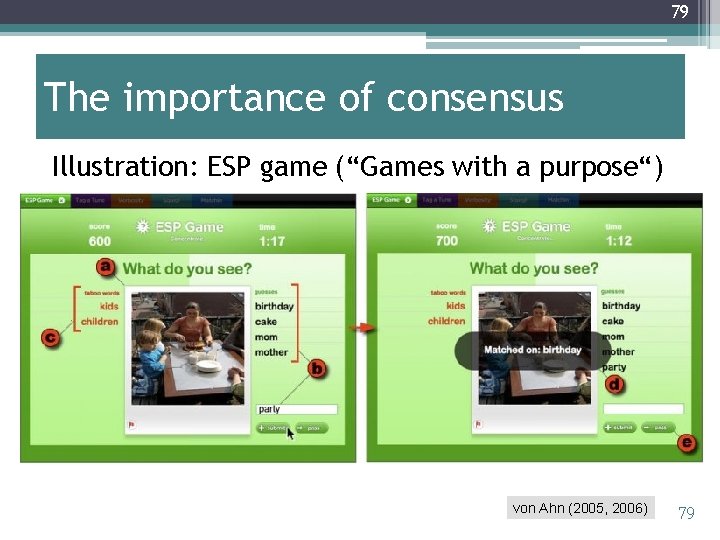

79 The importance of consensus Illustration: ESP game (“Games with a purpose“) von Ahn (2005, 2006) 79

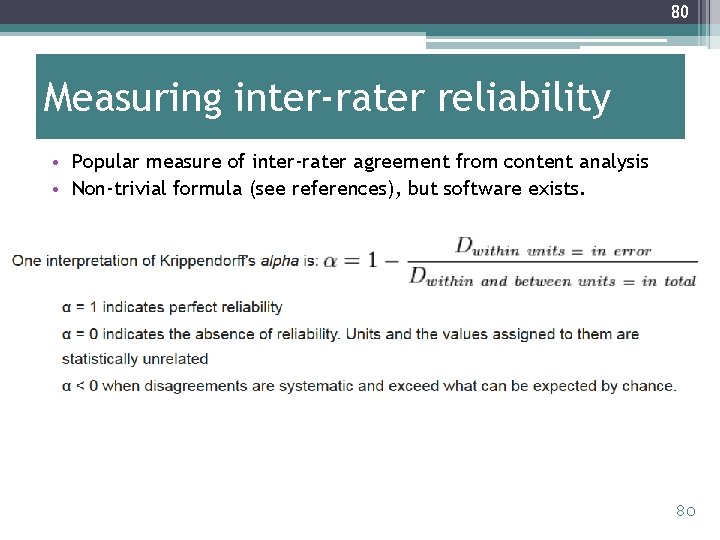

80 Measuring inter-rater reliability • Popular measure of inter-rater agreement from content analysis • Non-trivial formula (see references), but software exists. 80

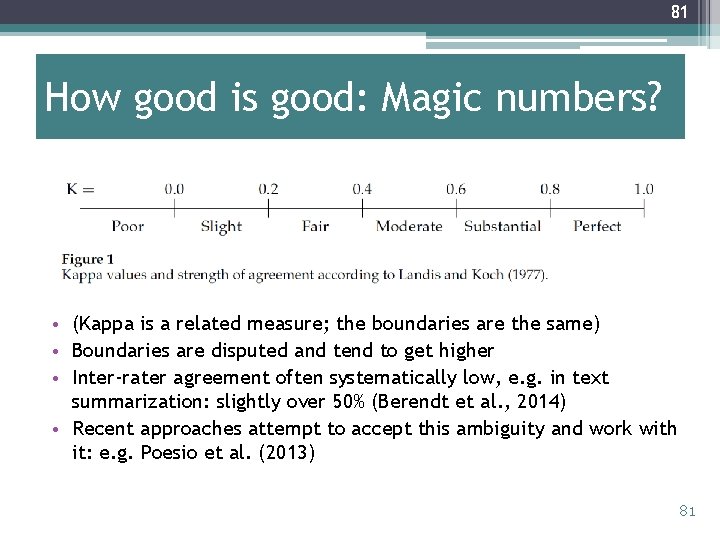

81 How good is good: Magic numbers? • (Kappa is a related measure; the boundaries are the same) • Boundaries are disputed and tend to get higher • Inter-rater agreement often systematically low, e. g. in text summarization: slightly over 50% (Berendt et al. , 2014) • Recent approaches attempt to accept this ambiguity and work with it: e. g. Poesio et al. (2013) 81
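For the kappa measure mentioned above, a short sketch of Cohen's kappa for two raters may help: it corrects the observed agreement for the agreement expected by chance. This covers only the two-rater kappa; the measure shown on the previous slide is not named in the text and is not reproduced here. The ratings are invented.

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: (observed - expected agreement) / (1 - expected agreement)."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: probability that both raters pick the same class independently.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one and the same class throughout
    return (observed - expected) / (1 - expected)

# Hypothetical labels from two raters for four documents.
rater_a = ["happy", "happy", "sad", "sad"]
rater_b = ["happy", "sad", "sad", "sad"]
kappa = cohens_kappa(rater_a, rater_b)
```

Here the raters agree on three of four documents (75%), but half of that agreement is expected by chance, so kappa is noticeably lower than the raw agreement.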

82 In what sense is this an alternative?
• “Given that there is no ground truth in a discipline like literary criticism, it is difficult to know how influential these results will prove.
• A scholar would have to write them up in traditional article or monograph form, wait for the article or monograph to move through the peer-review process (this can take months or years) and then other scholars in the field will have to read it, be influenced by its arguments, and adjust their own interpretations of Dickinson – in turn publishing these in their own articles and monographs.
• Nonetheless, we believe that the Nora system has suggested that classification and prediction can be useful agents of provocation in humanistic study.” (Kirschenbaum, 2007)

About today's dataset

84 #gamergate
“GamerGate is a grassroots movement with the goal of supporting ethics in game journalism. Some feminists have claimed it is a hateful, misogynistic movement, but they haven't been able to meet the burden of proof on that.” http://drunken-peasants-podcast.wikia.com/wiki/GamerGate

85 (Only) one reason this is interesting for text analysis
“Ethics aren't the only thing #Gamergate is concerned with. As the movement made the shift from ad hominem attacks to insisting that its only interest in Quinn was as an example of nepotism and corruption in the gaming industry, it also began co-opting the language of social justice movements and of journalism to legitimize its complaints. Although their movement targets women specifically, #Gamergaters insist they speak for a victimized "demographic," and that anyone who opposes misogyny while making generalizations about gamers must be a hypocrite.” http://gawker.com/what-is-gamergate-and-why-an-explainer-for-non-geeks-1642909080

86 Gamergate tweets
• Based on the work of Budac, A., Chartier, R., Suomela, T., Gouglas, S., & Rockwell, G. (see sources at the end of this slideset)
• I received the data for the purposes of this summer school (i.e. also for you)
 ▫ Condition: we all respect the associated ethics code
 ▫ This is an interesting document in itself, and we will use it for part 3
• Data post-processed for you: “most retweeted tweets” Oct '14 – Mar '15, in 4 versions (each version assembled into one ZIP file)
 ▫ 1 document per month, tweet texts ordered by count of retweets (desc.) (for Voyant)
 ▫ 1 document per tweet, sorted into 1 folder per month (for DocumentAtlas/Ontogen)
 ▫ 1 document overall (for Weka), with fields
  - anonymized user ID
  - Month
  - Count in that month's dataset
  - Tweet text
 ▫ The same, but with some post-processing that will make your analysis easier

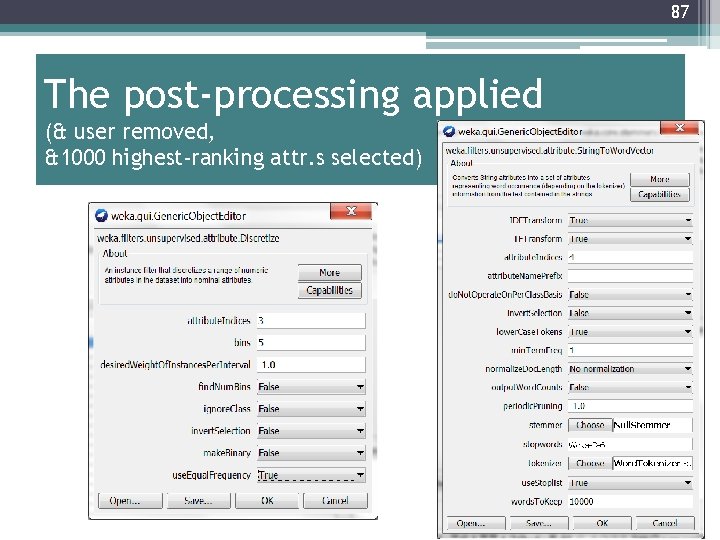

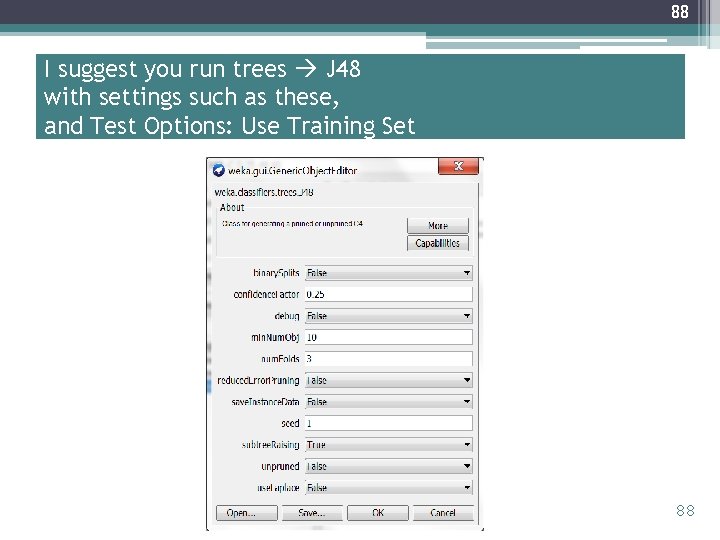

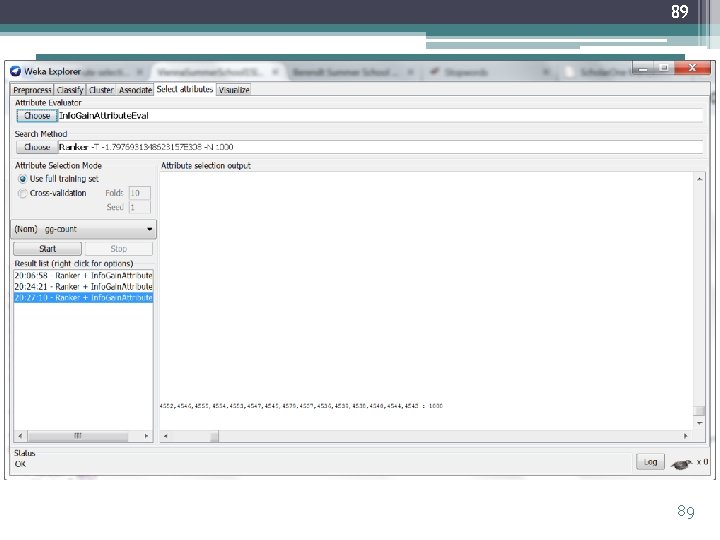

87 The post-processing applied (& user removed, & 1000 highest-ranking attributes selected)

88 I suggest you run trees > J48 with settings such as these, and Test Options: Use training set

90 Thank you! I'll be more than happy to hear your ?s

91 References
A good textbook on Text Mining:
• Feldman, R. & Sanger, J. (2007). The Text Mining Handbook. Advanced Approaches in Analyzing Unstructured Data. Cambridge University Press.
An introduction similar to this one, but also covering unsupervised learning in some detail, and with lots of pointers to books, materials, etc.:
• Shaw, R. (2012). Text-mining as a Research Tool in the Humanities and Social Sciences. Presentation at the Duke Libraries, September 20, 2012. https://aeshin.org/textmining/
An overview of news and (micro-)blogs mining:
• Berendt, B. (in press). Text mining for news and blogs analysis. To appear in C. Sammut & G. I. Webb (Eds.), Encyclopedia of Machine Learning and Data Mining. Berlin etc.: Springer. http://people.cs.kuleuven.be/~bettina.berendt/Papers/berendt_encyclopedia_2015_with_publication_info.pdf
See http://wiki.esi.ac.uk/Current_Approaches_to_Data_Mining_Blogs for more articles on the subject.
Individual sources cited on the slides:
• Fortuna, B., Galleguillos, C., & Cristianini, N. (2009). Detecting the bias in media with statistical learning methods. In Text Mining: Classification, Clustering, and Applications, Chapman & Hall/CRC, 2009.
• Qiaozhu Mei, ChengXiang Zhai: Discovering evolutionary theme patterns from text: an exploration of temporal text mining. KDD 2005: 198-207
• Mihalcea, R. & Liu, H. (2006). A corpus-based approach to finding happiness. In Proc. AAAI Spring Symposium on Computational Approaches to Analyzing Weblogs. http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.79.6759
• Kirschenbaum, M. "The Remaking of Reading: Data Mining and the Digital Humanities." In NGDM 07: National Science Foundation Symposium on Next Generation of Data Mining and Cyber-Enabled Discovery for Innovation. http://www.cs.umbc.edu/~hillol/NGDM07/abstracts/talks/MKirschenbaum.pdf
• Mueller, M. “Notes towards a user manual of MONK.” https://apps.lis.uiuc.edu/wiki/display/MONK/Notes+towards+a+user+manual+of+Monk, 2007.
• Massimo Poesio, Jon Chamberlain, Udo Kruschwitz, Livio Robaldo and Luca Ducceschi, 2013. Phrase Detectives: Utilizing Collective Intelligence for Internet-Scale Language Resource Creation. ACM Transactions on Intelligent Interactive Systems, 3(1). http://csee.essex.ac.uk/poesio/publications/poesio_et_al_ACM_TIIS_13.pdf
• Luis von Ahn (2005). Human Computation. PhD Dissertation. Computer Science Department, Carnegie Mellon University. http://reports-archive.adm.cs.cmu.edu/anon/usr0/ftp/usr/ftp/2005/abstracts/05-193.html
• Luis von Ahn: Games with a Purpose. IEEE Computer 39(6): 92-94 (2006)

92 More DH-specific tools
Overviews of 71 tools for Digital Humanists
• Simpson, J., Rockwell, G., Chartier, R., Sinclair, S., Brown, S., Dyrbye, A., & Uszkalo, K. (2013). Text Mining Tools in the Humanities: An Analysis Framework. Journal of Digital Humanities, 2(3), http://journalofdigitalhumanities.org/2-3/text-mining-tools-in-the-humanities-an-analysis-framework/
• See also the link collection on the Voyant documentation Web page

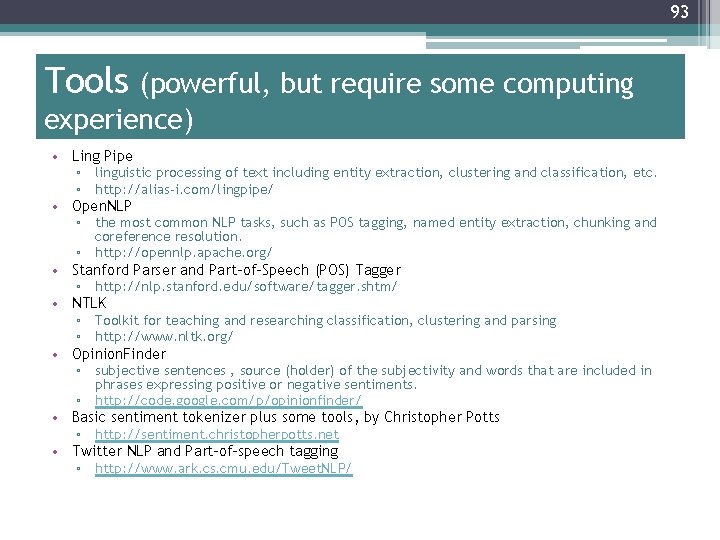

93 Tools (powerful, but require some computing experience)
• LingPipe
 ▫ linguistic processing of text including entity extraction, clustering and classification, etc.
 ▫ http://alias-i.com/lingpipe/
• OpenNLP
 ▫ the most common NLP tasks, such as POS tagging, named entity extraction, chunking and coreference resolution.
 ▫ http://opennlp.apache.org/
• Stanford Parser and Part-of-Speech (POS) Tagger
 ▫ http://nlp.stanford.edu/software/tagger.shtml
• NLTK
 ▫ Toolkit for teaching and researching classification, clustering and parsing
 ▫ http://www.nltk.org/
• OpinionFinder
 ▫ subjective sentences, source (holder) of the subjectivity and words that are included in phrases expressing positive or negative sentiments.
 ▫ http://code.google.com/p/opinionfinder/
• Basic sentiment tokenizer plus some tools, by Christopher Potts
 ▫ http://sentiment.christopherpotts.net
• Twitter NLP and Part-of-speech tagging
 ▫ http://www.ark.cs.cmu.edu/TweetNLP/

94 Further tools (thanks for your suggestions!)
• Atlas TI: “Qualitative data analysis”
 ▫ http://atlasti.com/
 ▫ Commercial product, has free trial version

95 Gamergate sources
• Budac, A., Chartier, R., Suomela, T., Gouglas, S., & Rockwell, G. (2015). #GamerGate: Distant Reading Games Discourse. Paper presented at the CGSA 2015 conference at the HSSFC Congress at University of Ottawa, Ontario, June 2015.
• Rockwell, G. (2015). Appendix 1: Ethics of Twitter Gamergate Research.
• Rockwell, Geoffrey; Suomela, Todd, 2015, "Gamergate Reactions", http://dx.doi.org/10.7939/DVN/10253 V5 [Version].

96 More sources
• Please find the URLs of pictures and screenshots in the PowerPoint “comment” box
• Thanks to the Internet for them!