
1 Advanced databases – Inferring implicit/new knowledge from data(bases): Text mining
Bettina Berendt
Katholieke Universiteit Leuven, Department of Computer Science
http://www.cs.kuleuven.be/~berendt/teaching/2007w/adb/
Last update: 5 December 2007
Berendt: Advanced databases, winter term 2007/08, http://www.cs.kuleuven.be/~berendt/teaching/2007w/adb/

2 Agenda
- Motivation
- Brief overview of text mining
- Preprocessing text: word level
- Preprocessing text: document level
- Application: Happiness (& intro to Naïve Bayes class.)
- More on preprocessing text: changing representation

3 Classification (1)

4 Classification (2): spam detection (Note: Typically done based not only on text!)

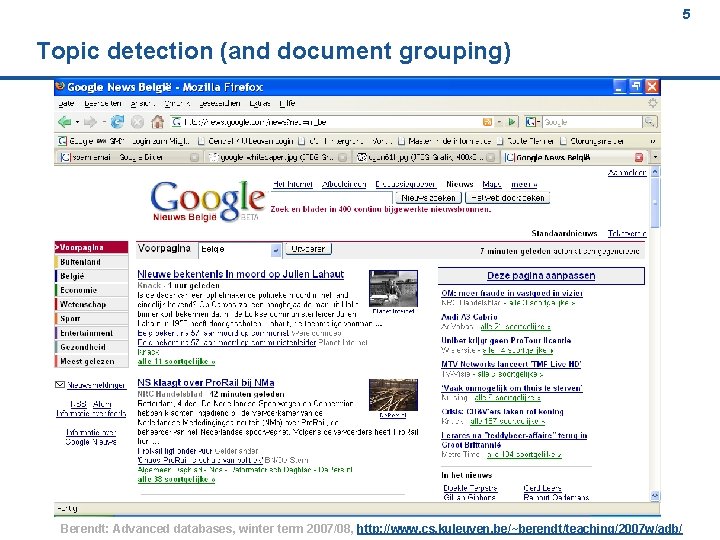

5 Topic detection (and document grouping)

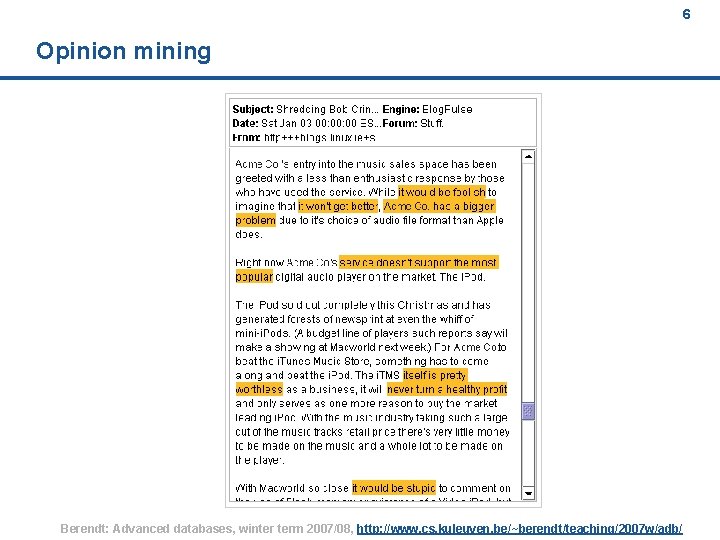

6 Opinion mining

7 What characterizes / differentiates news sources? (1)

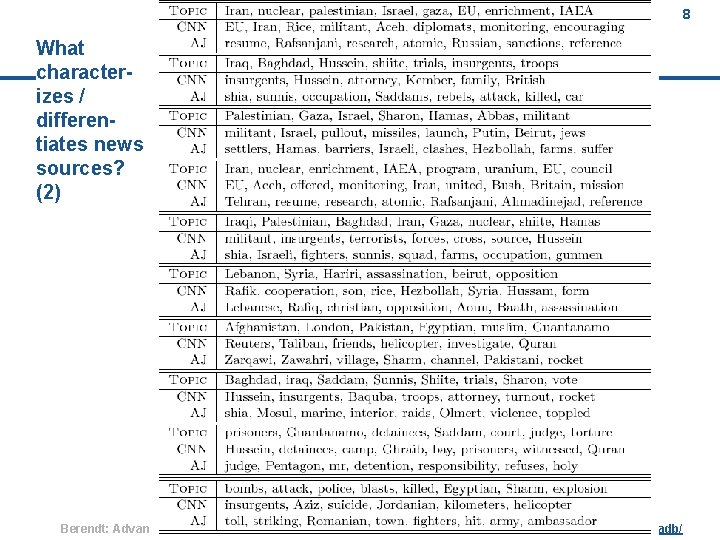

8 What characterizes / differentiates news sources? (2)

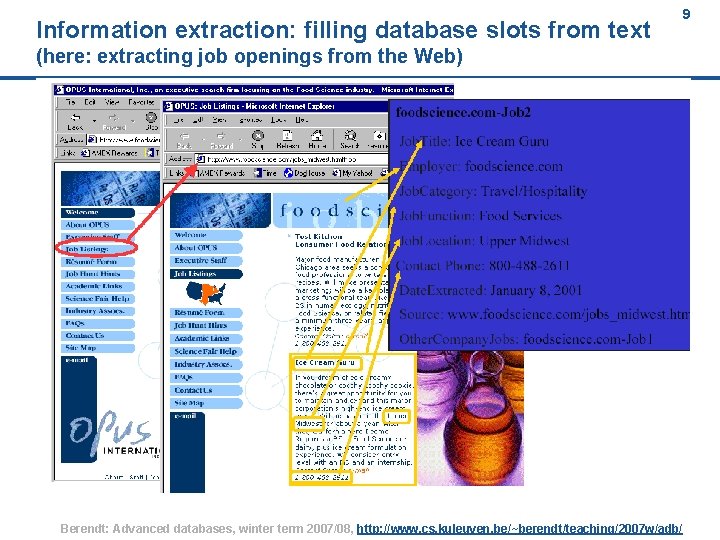

9 Information extraction: filling database slots from text (here: extracting job openings from the Web)

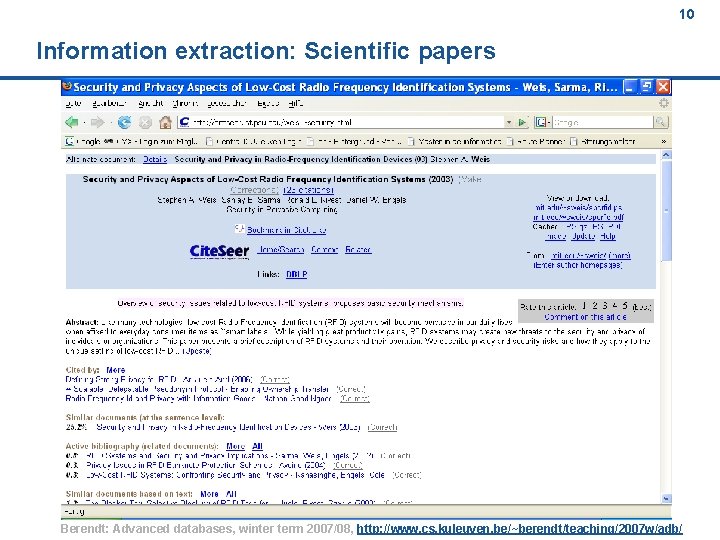

10 Information extraction: Scientific papers

11 Agenda
- Motivation
- Brief overview of text mining
- Preprocessing text: word level
- Preprocessing text: document level
- Application: Happiness (& intro to Naïve Bayes class.)
- More on preprocessing text: changing representation

12 (slides 2-6 from the Text Mining Tutorial by Grobelnik & Mladenic)

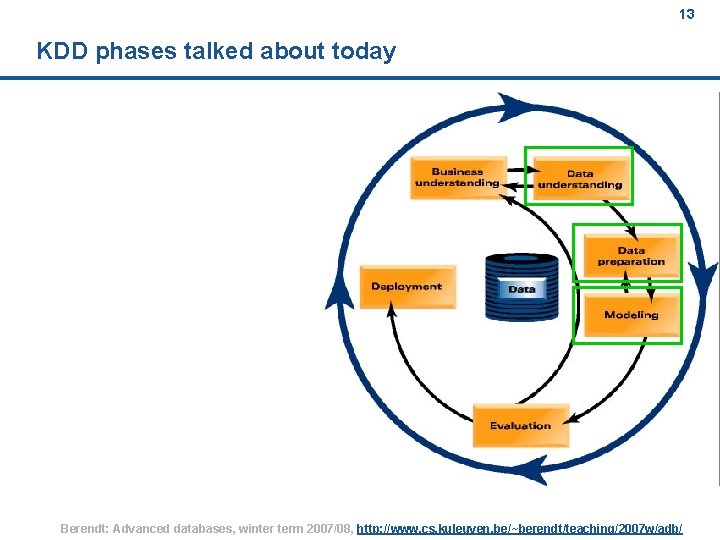

13 KDD phases talked about today

14 Agenda
- Motivation
- Brief overview of text mining
- Preprocessing text: word level
- Preprocessing text: document level
- Application: Happiness (& intro to Naïve Bayes class.)
- More on preprocessing text: changing representation

15 (One) goal
- Convert a "text instance" (usually a document) into a representation amenable to mining / machine learning algorithms
- Most common form: vector-space representation / bag-of-words
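The conversion named on this slide can be sketched in a few lines of Python. This is a minimal illustration, not the preprocessing used in the lecture: the tokenizer (lowercasing, alphabetic tokens only), the function names `tokenize` and `bag_of_words`, and the two example sentences are all assumptions made for the example.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens (a simplifying assumption)."""
    return re.findall(r"[a-z]+", text.lower())


def bag_of_words(documents):
    """Return (vocabulary, vectors): one term-count vector per document."""
    counts = [Counter(tokenize(doc)) for doc in documents]
    # The vocabulary is the sorted set of all words seen in any document.
    vocabulary = sorted(set().union(*counts))
    # Each document becomes a vector of word counts, in vocabulary order.
    vectors = [[c[word] for word in vocabulary] for c in counts]
    return vocabulary, vectors


# Hypothetical toy documents, only for illustration.
docs = ["The cat sat on the mat", "The dog sat"]
vocabulary, vectors = bag_of_words(docs)
print(vocabulary)
print(vectors)
```

Word order is discarded on purpose: each document is reduced to how often each vocabulary word occurs, which is what makes the representation usable by standard learning algorithms.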

16 (slides 7-19 from the Text Mining Tutorial by Grobelnik & Mladenic)

17 Agenda
- Motivation
- Brief overview of text mining
- Preprocessing text: word level
- Preprocessing text: document level
- Application: Happiness (& intro to Naïve Bayes class.)
- More on preprocessing text: changing representation

18 (slides 36-45 from the Text Mining Tutorial by Grobelnik & Mladenic)

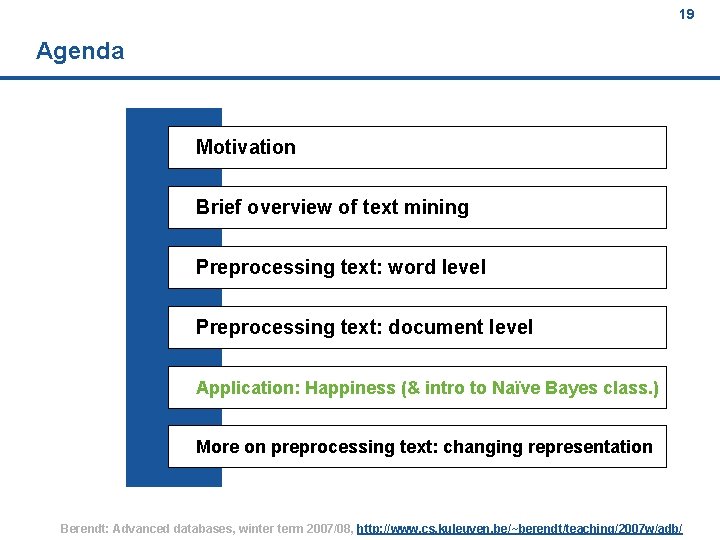

19 Agenda
- Motivation
- Brief overview of text mining
- Preprocessing text: word level
- Preprocessing text: document level
- Application: Happiness (& intro to Naïve Bayes class.)
- More on preprocessing text: changing representation

20 "What makes people happy?" – a corpus-based approach to finding happiness

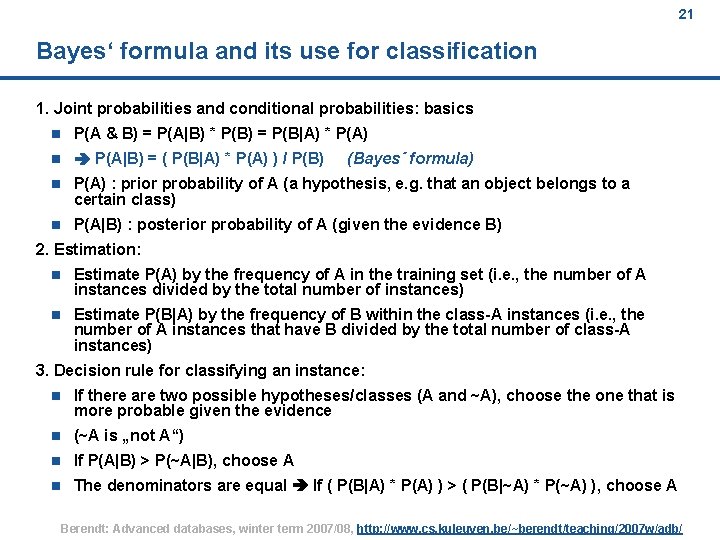

21 Bayes' formula and its use for classification

1. Joint probabilities and conditional probabilities: basics
   - $P(A \,\&\, B) = P(A \mid B) \cdot P(B) = P(B \mid A) \cdot P(A)$
   - $P(A \mid B) = \dfrac{P(B \mid A) \cdot P(A)}{P(B)}$ (Bayes' formula)
   - $P(A)$: prior probability of $A$ (a hypothesis, e.g. that an object belongs to a certain class)
   - $P(A \mid B)$: posterior probability of $A$ (given the evidence $B$)
2. Estimation:
   - Estimate $P(A)$ by the frequency of $A$ in the training set (i.e., the number of $A$ instances divided by the total number of instances)
   - Estimate $P(B \mid A)$ by the frequency of $B$ within the class-$A$ instances (i.e., the number of $A$ instances that have $B$ divided by the total number of class-$A$ instances)
3. Decision rule for classifying an instance:
   - If there are two possible hypotheses/classes ($A$ and ${\sim}A$), choose the one that is more probable given the evidence (${\sim}A$ is "not A")
   - If $P(A \mid B) > P({\sim}A \mid B)$, choose $A$
   - The denominators are equal, so: if $P(B \mid A) \cdot P(A) > P(B \mid {\sim}A) \cdot P({\sim}A)$, choose $A$
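The three steps on this slide (basics, estimation, decision rule) can be traced on a toy training set. The sketch below is only an illustration: the ten training instances, the encoding of an instance as a pair `(is_a, has_b)`, and the function names are assumptions, not part of the lecture material.

```python
def estimate(instances):
    """Estimate P(A), P(B|A) and P(B|~A) by relative frequencies.

    instances: list of (is_a, has_b) boolean pairs (hypothetical encoding).
    """
    n = len(instances)
    n_a = sum(1 for is_a, _ in instances if is_a)
    n_not_a = n - n_a
    n_a_with_b = sum(1 for is_a, has_b in instances if is_a and has_b)
    n_not_a_with_b = sum(1 for is_a, has_b in instances if not is_a and has_b)
    return n_a / n, n_a_with_b / n_a, n_not_a_with_b / n_not_a


def posterior(p_a, p_b_given_a, p_b_given_not_a):
    """Bayes' formula: P(A|B) = P(B|A) * P(A) / P(B)."""
    p_b = p_b_given_a * p_a + p_b_given_not_a * (1 - p_a)
    return p_b_given_a * p_a / p_b


def choose_a(p_a, p_b_given_a, p_b_given_not_a):
    """Decision rule: the denominators are equal, so compare the numerators only."""
    return p_b_given_a * p_a > p_b_given_not_a * (1 - p_a)


# Toy training set: 4 instances of class A (3 of them show evidence B),
# 6 instances of class ~A (1 of them shows evidence B).
training = ([(True, True)] * 3 + [(True, False)]
            + [(False, True)] + [(False, False)] * 5)

p_a, p_b_given_a, p_b_given_not_a = estimate(training)
print(p_a, p_b_given_a, p_b_given_not_a)
print(posterior(p_a, p_b_given_a, p_b_given_not_a))
print(choose_a(p_a, p_b_given_a, p_b_given_not_a))
```

Note that `choose_a` never computes $P(B)$: comparing numerators gives the same decision as comparing the two posteriors.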


22 Simplifications and Naive Bayes

[Repeated from previous slide:] If $P(B \mid A) \cdot P(A) > P(B \mid {\sim}A) \cdot P({\sim}A)$, choose $A$

4. Simplify by setting the priors equal (i.e., by using as many instances of class $A$ as of class ${\sim}A$)
   - If $P(B \mid A) > P(B \mid {\sim}A)$, choose $A$
5. More than one kind of evidence
   - General formula: $P(A \mid B_1 \,\&\, B_2) = \dfrac{P(A \,\&\, B_1 \,\&\, B_2)}{P(B_1 \,\&\, B_2)} = \dfrac{P(B_1 \,\&\, B_2 \mid A) \cdot P(A)}{P(B_1 \,\&\, B_2)} = \dfrac{P(B_1 \mid B_2 \,\&\, A) \cdot P(B_2 \mid A) \cdot P(A)}{P(B_1 \,\&\, B_2)}$
   - Enter the "naive" assumption: $B_1$ and $B_2$ are independent given $A$
   - $P(A \mid B_1 \,\&\, B_2) = \dfrac{P(B_1 \mid A) \cdot P(B_2 \mid A) \cdot P(A)}{P(B_1 \,\&\, B_2)}$
   - By reasoning as in 3. and 4. above, the last two terms can be omitted
   - If $P(B_1 \mid A) \cdot P(B_2 \mid A) > P(B_1 \mid {\sim}A) \cdot P(B_2 \mid {\sim}A)$, choose $A$
   - The generalization to $n$ kinds of evidence is straightforward.
   - These kinds of evidence are often called features in machine learning.
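The generalization to $n$ kinds of evidence mentioned on this slide can be written out as follows. This is a sketch under the same two assumptions the slide uses (equal priors, and all $B_i$ independent given the class). The logarithmic form in the last line is an addition for illustration: it yields the same decision whenever all probabilities are positive, and is commonly used because products of many small probabilities underflow in floating-point arithmetic.

```latex
% Naive Bayes with n kinds of evidence (features) B_1, ..., B_n
P(A \mid B_1 \,\&\, \dots \,\&\, B_n)
  = \frac{P(A) \cdot \prod_{i=1}^{n} P(B_i \mid A)}{P(B_1 \,\&\, \dots \,\&\, B_n)}

% Decision rule with equal priors: choose A if
\prod_{i=1}^{n} P(B_i \mid A) \;>\; \prod_{i=1}^{n} P(B_i \mid {\sim}A)

% Equivalent rule in log space (all probabilities assumed > 0): choose A if
\sum_{i=1}^{n} \log P(B_i \mid A) \;>\; \sum_{i=1}^{n} \log P(B_i \mid {\sim}A)
```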

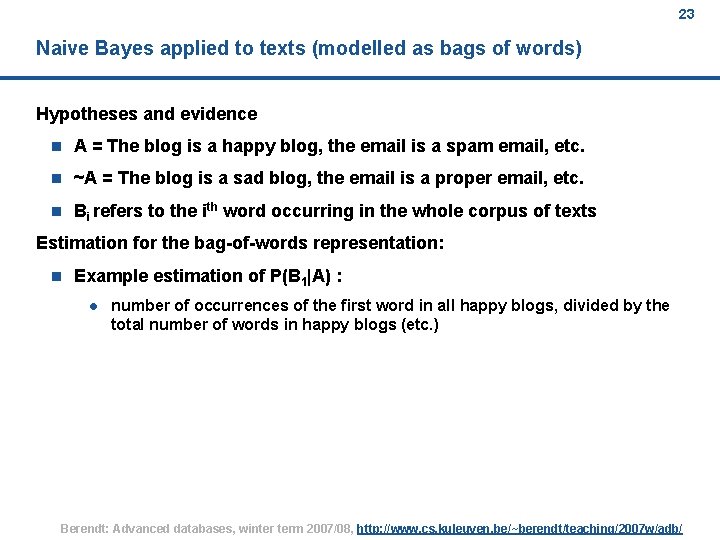

23 Naive Bayes applied to texts (modelled as bags of words)

Hypotheses and evidence
- $A$ = The blog is a happy blog, the email is a spam email, etc.
- ${\sim}A$ = The blog is a sad blog, the email is a proper email, etc.
- $B_i$ refers to the $i$th word occurring in the whole corpus of texts

Estimation for the bag-of-words representation:
- Example estimation of $P(B_1 \mid A)$:
  - number of occurrences of the first word in all happy blogs, divided by the total number of words in happy blogs (etc.)
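The estimation rule on this slide can be turned into a small classifier. The sketch below is illustrative only: the tiny "happy"/"sad" corpus, the function names, and the smoothing parameter `alpha` are assumptions. With `alpha=0` the estimate is exactly the one on the slide (word occurrences in the class divided by total words in the class). The add-one smoothing used for classification is not on the slide; it is added here so that a word unseen in one class does not force a probability of zero. Priors are taken to be equal, as in simplification 4.

```python
import math
from collections import Counter


def train(docs_by_class):
    """Count word occurrences per class. docs_by_class: {label: [list of tokens, ...]}."""
    counts = {label: Counter(tok for doc in docs for tok in doc)
              for label, docs in docs_by_class.items()}
    vocabulary = set().union(*counts.values())
    return counts, vocabulary


def word_probability(word, word_counts, vocabulary, alpha=1.0):
    """Estimate P(word | class); alpha=0 gives the unsmoothed estimate from the slide."""
    total = sum(word_counts.values())
    return (word_counts[word] + alpha) / (total + alpha * len(vocabulary))


def classify(tokens, counts, vocabulary):
    """Choose the class with the largest summed log-probability (equal priors assumed)."""
    def score(label):
        return sum(math.log(word_probability(t, counts[label], vocabulary))
                   for t in tokens if t in vocabulary)  # words never seen in training are skipped
    return max(counts, key=score)


# Hypothetical toy corpus, already tokenized.
corpus = {
    "happy": [["love", "great", "day"], ["great", "fun"]],
    "sad": [["bad", "day"], ["sad", "bad", "alone"]],
}
counts, vocabulary = train(corpus)
print(word_probability("great", counts["happy"], vocabulary, alpha=0))
print(classify(["great", "day"], counts, vocabulary))
print(classify(["bad", "alone"], counts, vocabulary))
```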

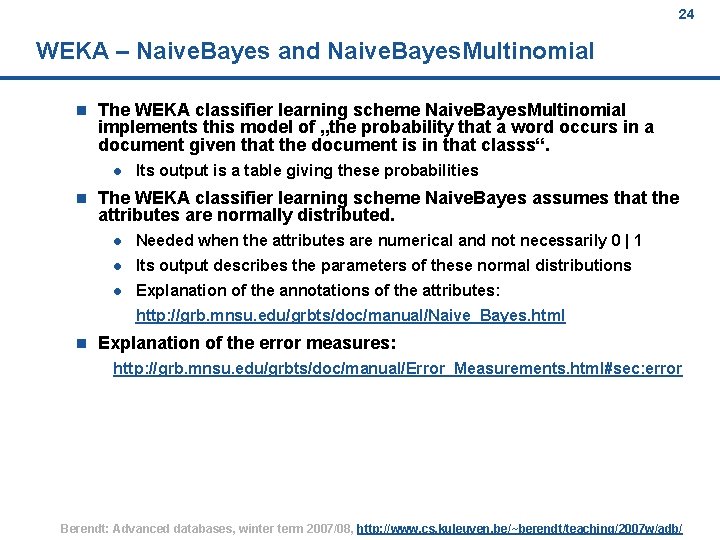

24 WEKA – NaiveBayes and NaiveBayesMultinomial
- The WEKA classifier learning scheme NaiveBayesMultinomial implements this model of "the probability that a word occurs in a document given that the document is in that class".
  - Its output is a table giving these probabilities
- The WEKA classifier learning scheme NaiveBayes assumes that the attributes are normally distributed.
  - Needed when the attributes are numerical and not necessarily 0 | 1
  - Its output describes the parameters of these normal distributions
  - Explanation of the annotations of the attributes: http://grb.mnsu.edu/grbts/doc/manual/Naive_Bayes.html
- Explanation of the error measures: http://grb.mnsu.edu/grbts/doc/manual/Error_Measurements.html#sec:error
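To illustrate what "assumes that the attributes are normally distributed" means for a numerical attribute, the following Python sketch fits one normal distribution per class and classifies by comparing densities. It is not WEKA code and does not reproduce WEKA's implementation or output format; the single attribute, its values, and the function names are hypothetical, and equal priors are assumed.

```python
from statistics import NormalDist


def fit(values_by_class):
    """Estimate mean and standard deviation of the attribute for each class."""
    return {label: NormalDist.from_samples(values)
            for label, values in values_by_class.items()}


def classify(x, models):
    """Choose the class whose fitted normal density is highest at x (equal priors assumed)."""
    return max(models, key=lambda label: models[label].pdf(x))


# Hypothetical numeric attribute values observed per class.
training = {"happy": [10.0, 12.0, 14.0], "sad": [20.0, 22.0, 24.0]}
models = fit(training)

for label, dist in models.items():
    # These two numbers per class are the "parameters of the normal distributions".
    print(label, dist.mean, dist.stdev)
print(classify(13.0, models))
```

With several numerical attributes, the per-attribute densities would be multiplied (or their logarithms summed), following the naive independence assumption.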

25 So what is happiness? (Rada Mihalcea's presentation at AAAI Spring Symposium 2006)

26 Agenda
- Motivation
- Brief overview of text mining
- Preprocessing text: word level
- Preprocessing text: document level
- Application: Happiness (& intro to Naïve Bayes class.)
- More on preprocessing text: changing representation

27 (slides 46-48 from the Text Mining Tutorial by Grobelnik & Mladenic)

28 Next lecture
- Motivation
- Brief overview of text mining
- Preprocessing text: word level
- Preprocessing text: document level
- Application: Happiness (& intro to Naïve Bayes class.)
- More on preprocessing text: changing representation
- Coming full circle: Induction in Sem. Web & other DBs

29 References and background reading; acknowledgements

- Grobelnik, M. & Mladenic, D. (2004). Text-Mining Tutorial. In: Learning Methods for Text Understanding and Mining, 26-29 January 2004, Grenoble, France. http://eprints.pascal-network.org/archive/00000017/01/Tutorial_Marko.pdf
- Mihalcea, R. & Liu, H. (2006). A corpus-based approach to finding happiness. In: Proceedings of the AAAI Spring Symposium on Computational Approaches to Analyzing Weblogs. http://www.cs.unt.edu/%7Erada/papers/mihalcea.aaaiss06.pdf

Picture credits:
- p. 4: http://www.cartoonstock.com/newscartoons/cartoonists/mba/lowres/mban763l.jpg
- p. 6:
- p. 8: Fortuna, B., Galleguillos, C., & Cristianini, N. (in press). Detecting the bias in media with statistical learning methods.
- p. 9: from Grobelnik & Mladenic (2004), see above

http://www.nielsenbuzzmetrics.com/images/uploaded/img_tech_sentimentmining.gif
