
Last update: 18 October 2011
Advanced databases: Core ideas of federated databases; schema and ontology matching
Bettina Berendt, Katholieke Universiteit Leuven, Department of Computer Science
http://www.cs.kuleuven.ac.be/~berendt/teaching/2011-12-1stsemester/adb/
Berendt: Advanced databases, 2011, http://www.cs.kuleuven.be/~berendt/teaching

Until now...
- ... we have looked into modelling
- ... we have seen how the languages RDF and OWL allow us to combine different schemas and data
- ... we have seen how Linked Data on the Web uses HTTP as a connecting protocol/architecture
- ... we have assumed that such combinations can be done effortlessly (unique names etc.)
- ... we have looked at some interpretation problems associated with these procedures
- Now we need to ask:
  - What are (further) challenges of such combinations?
  - What approaches have been proposed to solve them?
    - from the databases and the Semantic Web / ontologies fields
    - from architectural and logical points of view

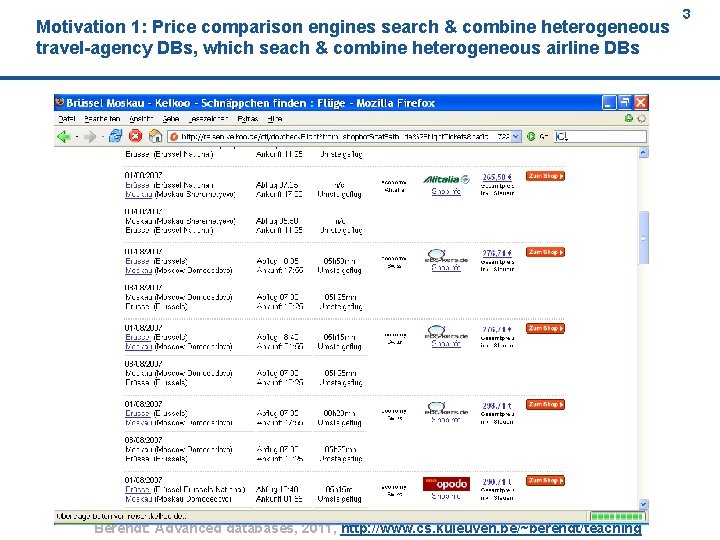

Motivation 1: Price comparison engines search and combine heterogeneous travel-agency DBs, which in turn search and combine heterogeneous airline DBs

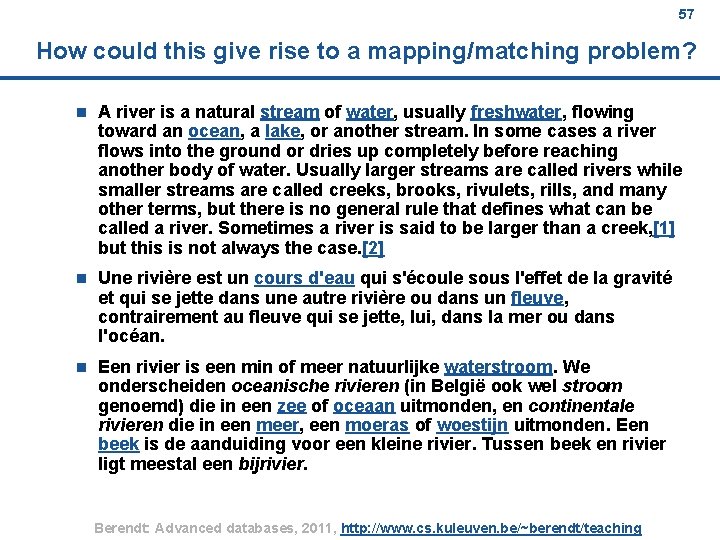

Motivation 2: Schemas coming from different languages
- English: A river is a natural stream of water, usually freshwater, flowing toward an ocean, a lake, or another stream. In some cases a river flows into the ground or dries up completely before reaching another body of water. Usually larger streams are called rivers while smaller streams are called creeks, brooks, rivulets, rills, and many other terms, but there is no general rule that defines what can be called a river. Sometimes a river is said to be larger than a creek, but this is not always the case.
- French: Une rivière est un cours d'eau qui s'écoule sous l'effet de la gravité et qui se jette dans une autre rivière ou dans un fleuve, contrairement au fleuve qui se jette, lui, dans la mer ou dans l'océan. (English: A rivière is a watercourse that flows under the effect of gravity and empties into another rivière or into a fleuve, in contrast to a fleuve, which empties into the sea or the ocean.)
- Dutch: Een rivier is een min of meer natuurlijke waterstroom. We onderscheiden oceanische rivieren (in België ook wel stroom genoemd) die in een zee of oceaan uitmonden, en continentale rivieren die in een meer, een moeras of woestijn uitmonden. Een beek is de aanduiding voor een kleine rivier. Tussen beek en rivier ligt meestal een bijrivier. (English: A rivier is a more or less natural stream of water. We distinguish oceanic rivers (in Belgium also called stroom), which flow into a sea or ocean, and continental rivers, which flow into a lake, a marsh, or a desert. A beek is the term for a small river. Between beek and rivier there is usually a bijrivier, i.e. a tributary.)

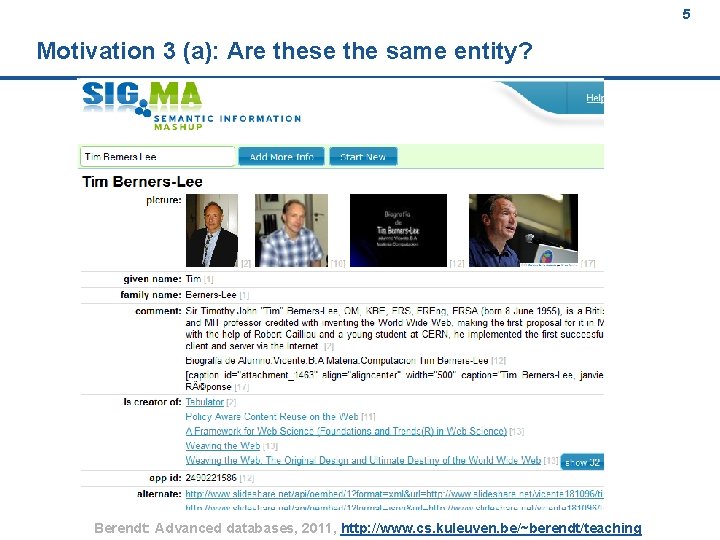

Motivation 3 (a): Are these the same entity?

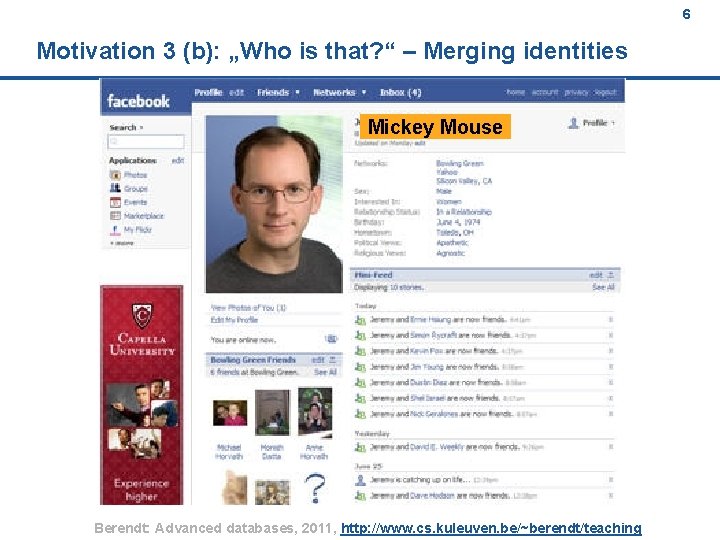

Motivation 3 (b): "Who is that?" (merging identities)
Mickey Mouse

Motivation 3 (c): "Who was that?" (re-identification)

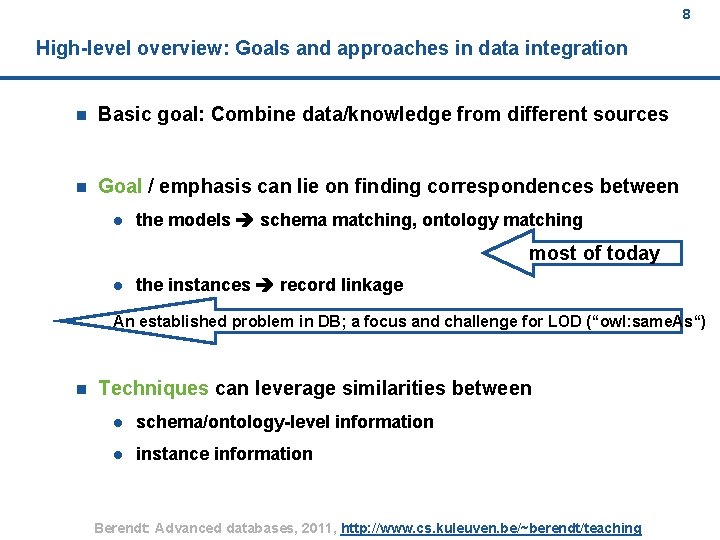

High-level overview: Goals and approaches in data integration
- Basic goal: Combine data/knowledge from different sources
- Goal / emphasis can lie on finding correspondences between
  - the models → schema matching, ontology matching (most of today)
  - the instances → record linkage. An established problem in DB; a focus and challenge for LOD ("owl:sameAs")
- Techniques can leverage similarities between
  - schema/ontology-level information
  - instance information

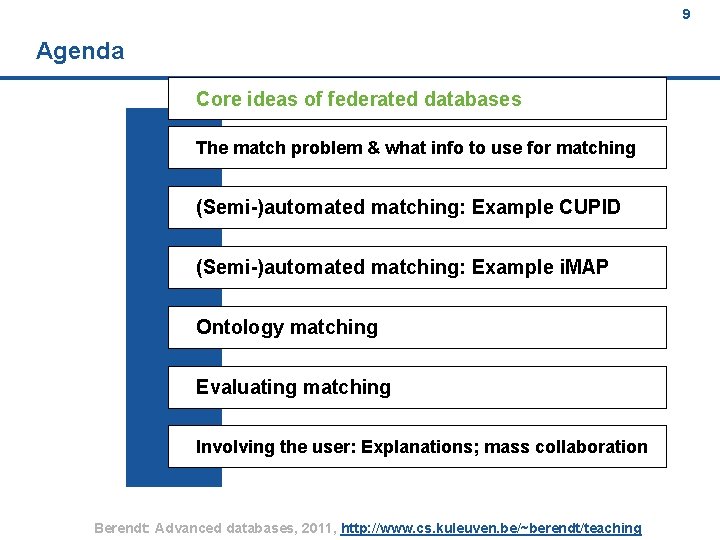

Agenda
- Core ideas of federated databases
- The match problem & what info to use for matching
- (Semi-)automated matching: Example CUPID
- (Semi-)automated matching: Example iMAP
- Ontology matching
- Evaluating matching
- Involving the user: Explanations; mass collaboration

Overview
- Goal: interoperability through data integration:
  - combining heterogeneous data sources under a single query interface
- A federated database system is a type of meta-database management system (DBMS) that transparently integrates multiple autonomous database systems into a single federated database.
- The constituent databases are interconnected via a computer network and may be geographically decentralized.
- Since the constituent database systems remain autonomous, a federated database system is an alternative to the (sometimes daunting) task of merging several disparate databases.
- A federated database (or virtual database) is the fully integrated, logical composite of all constituent databases in a federated database system.

Issues in federating data sources
Interconnection and cooperation of autonomous and heterogeneous databases must address
- Distribution
- Autonomy
- Heterogeneity

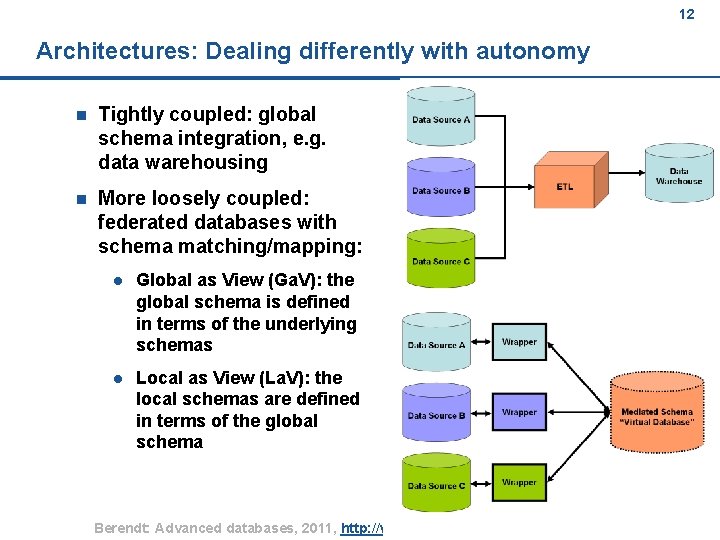

Architectures: Dealing differently with autonomy
- Tightly coupled: global schema integration, e.g. data warehousing
- More loosely coupled: federated databases with schema matching/mapping:
  - Global as View (GaV): the global schema is defined in terms of the underlying schemas
  - Local as View (LaV): the local schemas are defined in terms of the global schema
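
A minimal GaV sketch, not taken from the original slides: the source tables `airline_a` and `airline_b`, their columns, and the sample fares are made up for illustration. The global relation `global_flights` is defined as a view over the two local schemas, so a query against the global schema is answered by unfolding the view (here left to SQLite).

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- Two autonomous sources with different local schemas (hypothetical).
    CREATE TABLE airline_a (flight_no TEXT, fare_eur REAL);
    CREATE TABLE airline_b (code TEXT, price_cents INTEGER);
    INSERT INTO airline_a VALUES ('A100', 120.0);
    INSERT INTO airline_b VALUES ('B200', 9950);

    -- GaV: the global relation is defined in terms of the source relations.
    CREATE VIEW global_flights AS
        SELECT flight_no AS flight, fare_eur AS price FROM airline_a
        UNION ALL
        SELECT code AS flight, price_cents / 100.0 AS price FROM airline_b;
""")

# The user only sees the global schema; the view definition does the integration.
cheap = conn.execute(
    "SELECT flight, price FROM global_flights WHERE price < 110 ORDER BY flight"
).fetchall()
print(cheap)
```

In LaV the direction is reversed: each source would be described as a view over the global schema, and answering a query requires rewriting it in terms of those views.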

Issues in query processing
- In both GaV and LaV systems, a user poses conjunctive queries over a virtual schema represented by a set of views, or "materialized" conjunctive queries.
- Integration seeks to rewrite the queries represented by the views so that their results are equivalent to, or maximally contained in, the user's query.
- This corresponds to the problem of answering queries using views.

An example developed in-house: SQI - PLQL
- Purpose: For federated search in learning-object repositories
- An approach with conceptual-level abstraction from data sources
- Integratable data source types:
  - Relational, XML, IR systems, (search engine) Web services, search APIs
- Full abstraction of user from data sources:
  - Depends on application
- User-specific data modeling for integration:
  - Yes
- User-specific data source selection for integration:
  - No
- Explicit, queryable semantics:
  - (delegated to the sources: LOM etc.)

Heterogeneity
- Heterogeneity is independent of location of data
- When is an information system homogeneous?
  - Software that creates and manipulates data is the same
  - All data follows the same structure and data model and is part of a single universe of discourse
- Different levels of heterogeneity
  - Different languages to write applications
  - Different query languages
  - Different models
  - Different DBMSs
  - Different file systems
  - Semantic heterogeneity etc.

Agenda (next part): The match problem & what info to use for matching

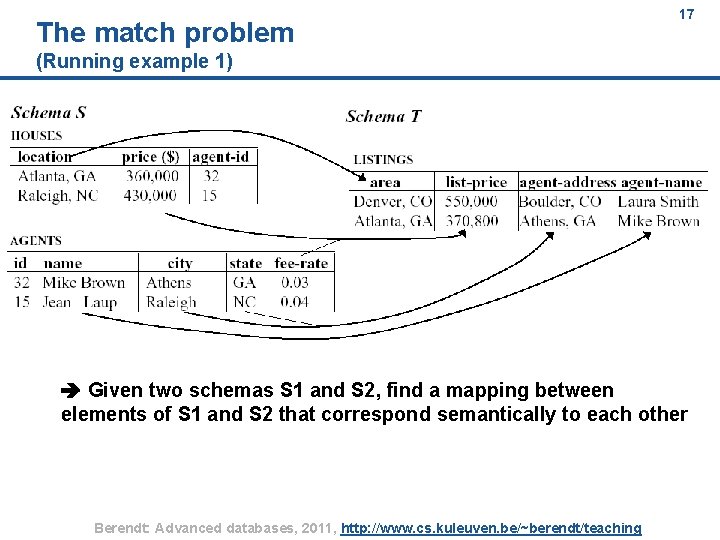

The match problem (Running example 1)
Given two schemas S1 and S2, find a mapping between elements of S1 and S2 that correspond semantically to each other

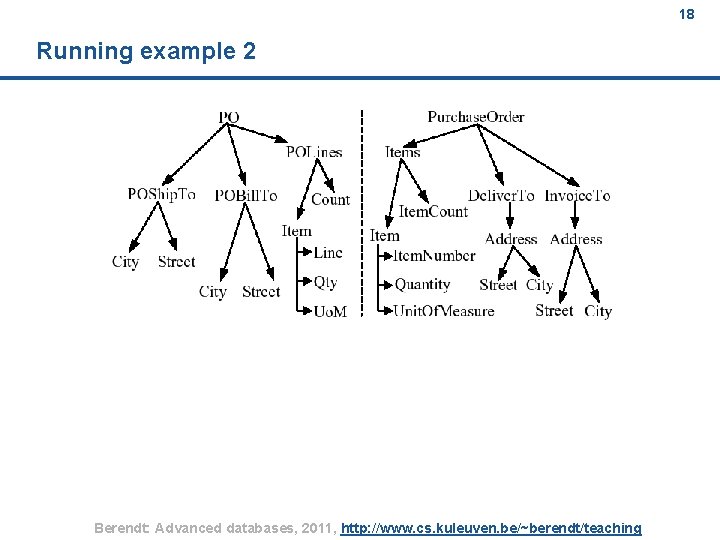

Running example 2

Motivation: application areas
- Schema integration in multi-database systems
- Data integration systems on the Web
- Translating data (e.g., for data warehousing)
- E-commerce message translation
- P2P data management
- Model management (tools for easily manipulating models of data)

Based on what information can the matchings/mappings be found? (work on the two running examples)

The match operator
- Match operator: f(S1, S2) = mapping between S1 and S2
  - for schemas S1, S2
- Mapping
  - a set of mapping elements
- Mapping elements
  - elements of S1, elements of S2, mapping expression
- Mapping expression
  - different functions and relationships

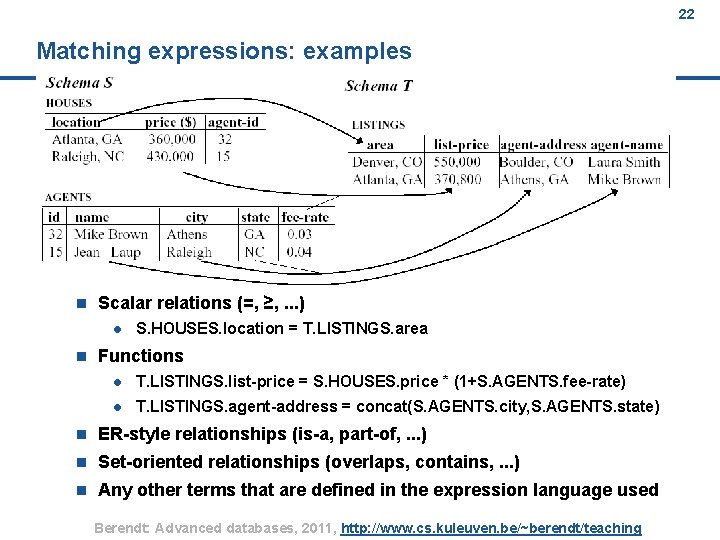

Matching expressions: examples
- Scalar relations (=, ≥, ...)
  - S.HOUSES.location = T.LISTINGS.area
- Functions
  - T.LISTINGS.list-price = S.HOUSES.price * (1+S.AGENTS.fee-rate)
  - T.LISTINGS.agent-address = concat(S.AGENTS.city, S.AGENTS.state)
- ER-style relationships (is-a, part-of, ...)
- Set-oriented relationships (overlaps, contains, ...)
- Any other terms that are defined in the expression language used
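
One way to picture a mapping as "a set of mapping elements" is the following sketch. It is an illustration only: the class `MappingElement`, its field names, the sample row, and the ", " separator used for `concat` are assumptions, not part of any matching system discussed here.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass(frozen=True)
class MappingElement:
    """One mapping element: source elements, a target element, a mapping expression."""
    sources: Tuple[str, ...]
    target: str
    expression: Callable[..., object]

    def apply(self, row: dict) -> object:
        # Evaluate the mapping expression on the source values of one row.
        return self.expression(*(row[s] for s in self.sources))


# A mapping between S and T is then a collection of mapping elements.
mapping = [
    MappingElement(("S.HOUSES.location",), "T.LISTINGS.area", lambda loc: loc),
    MappingElement(
        ("S.HOUSES.price", "S.AGENTS.fee-rate"),
        "T.LISTINGS.list-price",
        lambda price, fee_rate: price * (1 + fee_rate),
    ),
    MappingElement(
        ("S.AGENTS.city", "S.AGENTS.state"),
        "T.LISTINGS.agent-address",
        lambda city, state: f"{city}, {state}",  # separator is an assumption
    ),
]

# Hypothetical source row (already joined on the agent).
row = {
    "S.HOUSES.location": "Atlanta, GA",
    "S.HOUSES.price": 200000.0,
    "S.AGENTS.fee-rate": 0.03,
    "S.AGENTS.city": "Athens",
    "S.AGENTS.state": "GA",
}
listing = {m.target: m.apply(row) for m in mapping}
print(listing)
```

Relationships such as is-a or overlaps would need a richer expression type than a plain function; the sketch covers only the scalar and functional cases.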

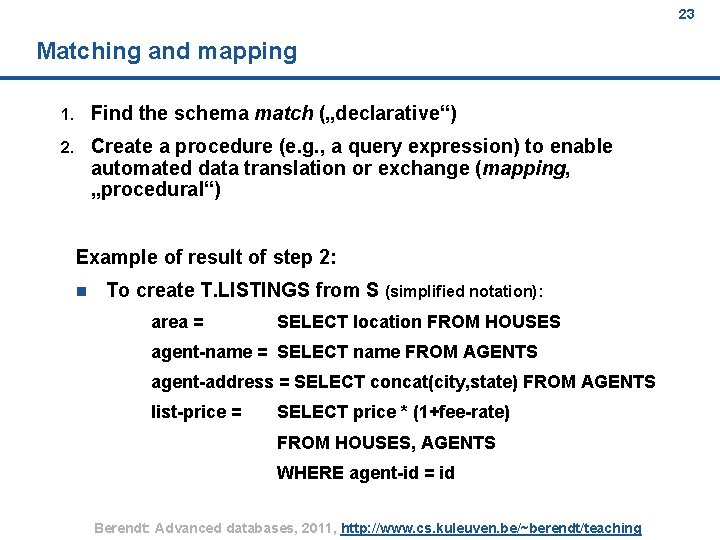

Matching and mapping
1. Find the schema match ("declarative")
2. Create a procedure (e.g., a query expression) to enable automated data translation or exchange (mapping, "procedural")

Example of result of step 2:
- To create T.LISTINGS from S (simplified notation):

    area = SELECT location FROM HOUSES
    agent-name = SELECT name FROM AGENTS
    agent-address = SELECT concat(city, state) FROM AGENTS
    list-price = SELECT price * (1+fee-rate) FROM HOUSES, AGENTS WHERE agent-id = id
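
The simplified notation above can be turned into one executable query. The following is a sketch under assumptions: the sample rows are invented, hyphens in attribute names are replaced by underscores to obtain valid SQL identifiers, and `concat` is written with SQLite's `||` operator and a ", " separator.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE AGENTS (id INTEGER PRIMARY KEY, name TEXT, city TEXT,
                         state TEXT, fee_rate REAL);
    CREATE TABLE HOUSES (location TEXT, price REAL, agent_id INTEGER);
    INSERT INTO AGENTS VALUES (1, 'Mike Brown', 'Athens', 'GA', 0.03);
    INSERT INTO HOUSES VALUES ('Atlanta, GA', 200000.0, 1);
""")

# Step 2 ("procedural"): a query that produces T.LISTINGS rows from S.
listings = conn.execute("""
    SELECT h.location                 AS area,
           a.name                     AS agent_name,
           a.city || ', ' || a.state  AS agent_address,
           h.price * (1 + a.fee_rate) AS list_price
    FROM HOUSES AS h
    JOIN AGENTS AS a ON h.agent_id = a.id
""").fetchall()
print(listings)
```

Writing the four per-attribute queries as a single join makes the join condition `agent-id = id` explicit for every target attribute that draws on AGENTS.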

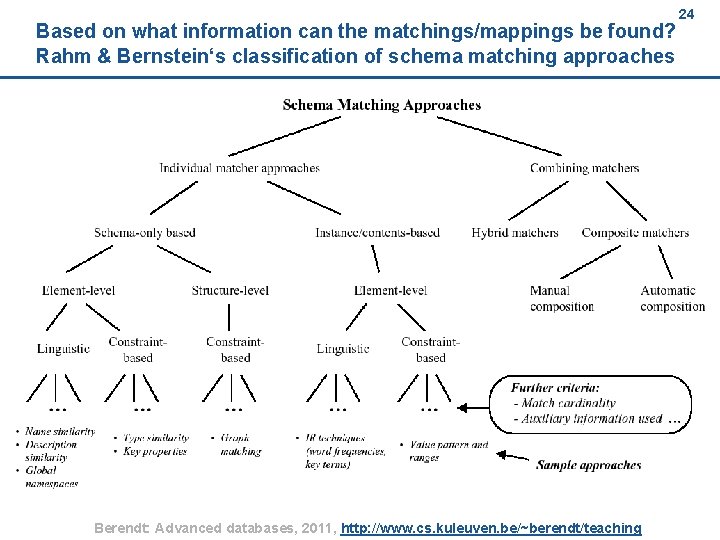

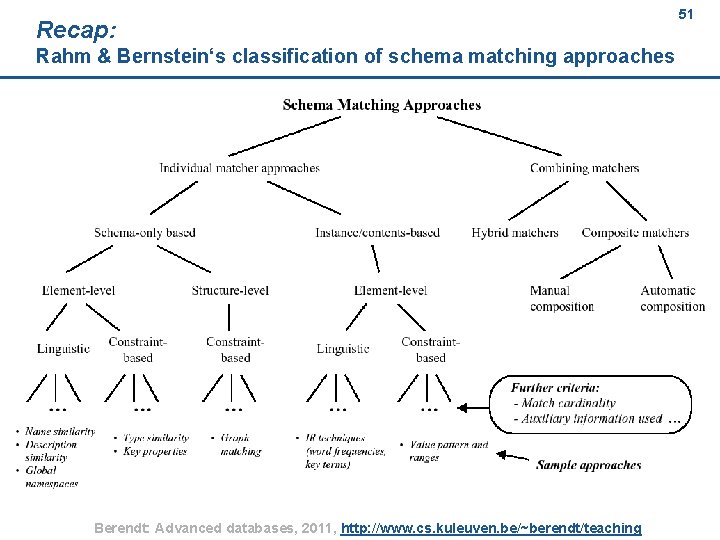

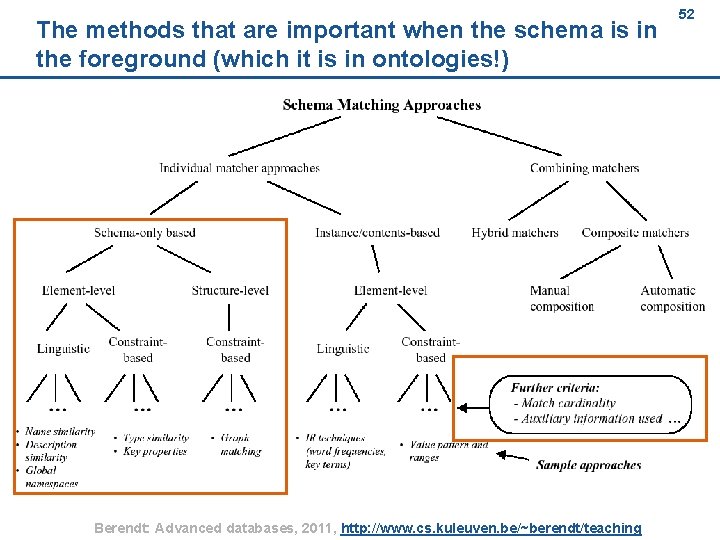

Based on what information can the matchings/mappings be found?
Rahm & Bernstein's classification of schema matching approaches

Challenges
- Semantics of the involved elements often need to be inferred → often need to base (heuristic) solutions on cues in schema and data, which are unreliable
  - e.g., homonyms (area), synonyms (area, location)
- Schema and data clues are often incomplete
  - e.g., date: date of what?
- Global nature of matching: to choose one matching possibility, must typically exclude all others as worse
- Matching is often subjective and/or context-dependent
  - e.g., does house-style match house-description or not?
- Extremely laborious and error-prone process
  - e.g., Li & Clifton 2000: project at GTE telecommunications: 40 databases, 27K elements, no access to the original developers of the DB → estimated time for just finding and documenting the matches: 12 person years

Semi-automated schema matching (1): Rule-based solutions
- Hand-crafted rules
- Exploit schema information

Advantages:
- relatively inexpensive
- do not require training
- fast (operate only on schema, not data)
- can work very well in certain types of applications & domains
- rules can provide a quick & concise method of capturing user knowledge about the domain

Disadvantages:
- cannot exploit data instances effectively
- cannot exploit previous matching efforts (other than by re-use)

Semi-automated schema matching (2): Learning-based solutions
- Rules/mappings learned from attribute specifications and statistics of data content (Rahm & Bernstein: "instance-level matching")
- Exploit schema information and data
- Some approaches: external evidence
  - Past matches
  - Corpus of schemas and matches ("matchings in real-estate applications will tend to be alike")
  - Corpus of users (more details later in this slide set)

Advantages:
- can exploit data instances effectively
- can exploit previous matching efforts

Disadvantages:
- relatively expensive
- require training
- slower (operate on data)
- results may be opaque (e.g., neural network output) → explanation components! (more details later)

Agenda (next part): (Semi-)automated matching: Example CUPID

Overview (1)
- Rule-based approach
- Schema types:
  - Relational, XML
- Metadata representation:
  - Extended ER
- Match granularity:
  - Element, structure
- Match cardinality:
  - 1:1, n:1

Overview (2)
- Schema-level match:
  - Name-based: name equality, synonyms, hypernyms, homonyms, abbreviations
  - Constraint-based: data type and domain compatibility, referential constraints
  - Structure matching: matching subtrees, weighted by leaves
- Re-use, auxiliary information used:
  - Thesauri, glossaries
- Combination of matchers:
  - Hybrid
- Manual work / user input:
  - User can adjust threshold weights

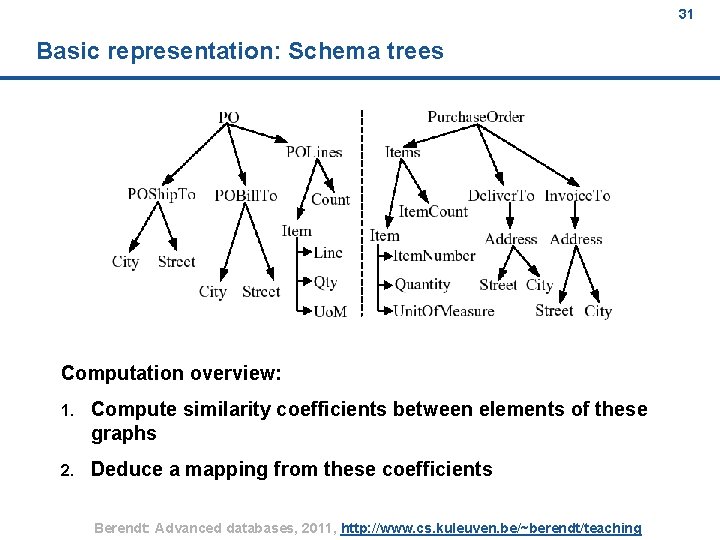

Basic representation: Schema trees
Computation overview:
1. Compute similarity coefficients between elements of these graphs
2. Deduce a mapping from these coefficients

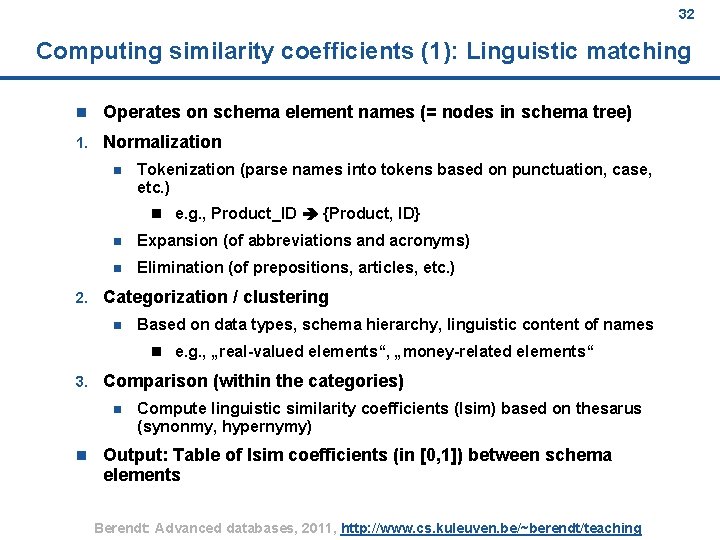

Computing similarity coefficients (1): Linguistic matching
- Operates on schema element names (= nodes in schema tree)

1. Normalization
   - Tokenization (parse names into tokens based on punctuation, case, etc.)
     - e.g., Product_ID → {Product, ID}
   - Expansion (of abbreviations and acronyms)
   - Elimination (of prepositions, articles, etc.)
2. Categorization / clustering
   - Based on data types, schema hierarchy, linguistic content of names
   - e.g., "real-valued elements", "money-related elements"
3. Comparison (within the categories)
   - Compute linguistic similarity coefficients (lsim) based on thesaurus (synonymy, hypernymy)
   - Output: Table of lsim coefficients (in [0, 1]) between schema elements
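
The normalization and comparison steps can be sketched as follows. This is an illustration, not CUPID's actual code: the abbreviation table, stopword list, thesaurus entry with its strength 0.8, and the averaging formula in `lsim` are all assumptions, and the categorization step is skipped.

```python
import re

# Hypothetical auxiliary resources; real systems would use glossaries and a thesaurus.
ABBREVIATIONS = {"id": "identifier", "qty": "quantity", "no": "number"}
STOPWORDS = {"of", "the", "a", "an", "in", "for"}
THESAURUS = {frozenset(("price", "cost")): 0.8}  # synonym strength, assumed


def normalize(name):
    """Tokenize on punctuation and case changes, expand abbreviations, drop stopwords."""
    tokens = []
    for part in re.split(r"[^A-Za-z0-9]+", name):
        tokens += re.findall(r"[A-Z]+(?![a-z])|[A-Z]?[a-z]+|[0-9]+", part)
    tokens = [ABBREVIATIONS.get(t.lower(), t.lower()) for t in tokens]
    return [t for t in tokens if t not in STOPWORDS]


def token_sim(a, b):
    """Similarity of two tokens: identical, related in the thesaurus, or unrelated."""
    if a == b:
        return 1.0
    return THESAURUS.get(frozenset((a, b)), 0.0)


def lsim(name1, name2):
    """Average best-match token similarity in both directions (one simple choice)."""
    t1, t2 = normalize(name1), normalize(name2)
    if not t1 or not t2:
        return 0.0
    best1 = sum(max(token_sim(a, b) for b in t2) for a in t1)
    best2 = sum(max(token_sim(a, b) for a in t1) for b in t2)
    return (best1 + best2) / (len(t1) + len(t2))


print(normalize("Product_ID"))
print(lsim("unitPrice", "Unit_Cost"))
```

The result stays in [0, 1] by construction, which matches the stated output range of the lsim table.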

How to identify synonyms and homonyms: Example WordNet

How to identify hypernyms: Example WordNet

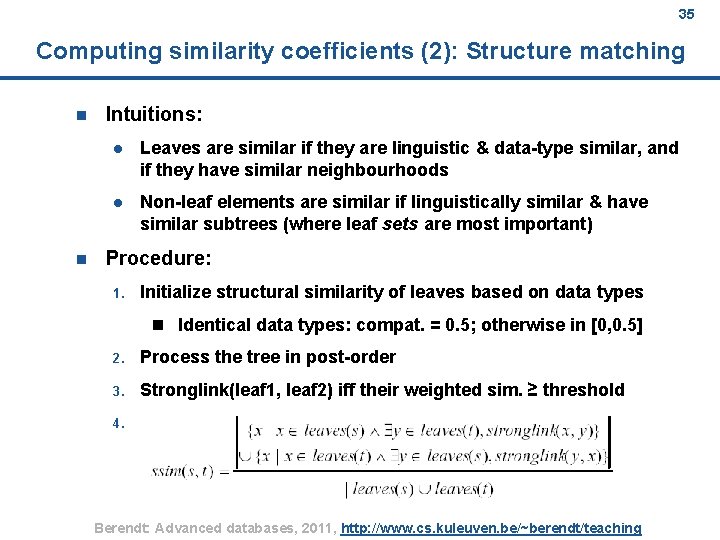

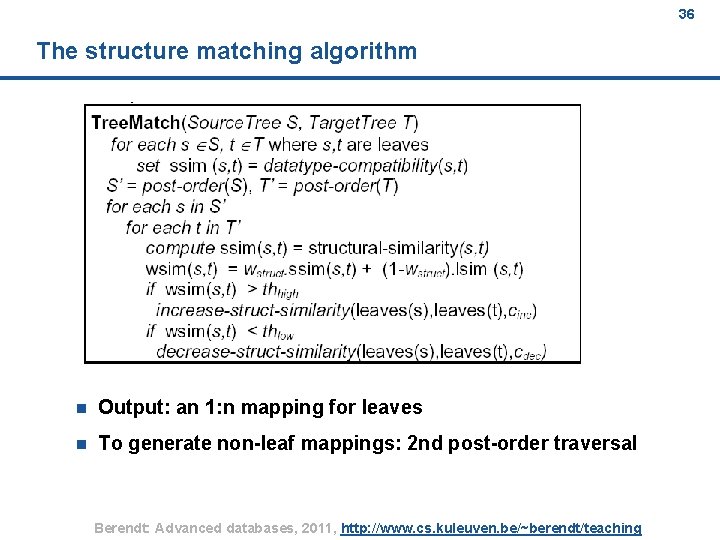

Computing similarity coefficients (2): Structure matching
- Intuitions:
  - Leaves are similar if they are linguistically and data-type similar, and if they have similar neighbourhoods
  - Non-leaf elements are similar if they are linguistically similar and have similar subtrees (where leaf sets are most important)
- Procedure:
  1. Initialize structural similarity of leaves based on data types
     - Identical data types: compat. = 0.5; otherwise in [0, 0.5]
  2. Process the tree in post-order
  3. Stronglink(leaf1, leaf2) iff their weighted sim. ≥ threshold
  4. ...
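
A rough sketch of steps 1 to 3, for illustration only. The slide does not give the formulas, so the following are assumptions: the weighted similarity is taken as a linear combination of structural and linguistic similarity with weight `W_STRUCT`, the threshold `TH_ACCEPT` is arbitrary, and the structural similarity of two non-leaf nodes is taken as the share of their leaves that have a strong link into the other subtree. The linguistic part of non-leaf similarity is left out.

```python
from dataclasses import dataclass, field

W_STRUCT = 0.5   # assumed weight of structural vs. linguistic similarity
TH_ACCEPT = 0.5  # assumed strong-link threshold


@dataclass
class Node:
    name: str
    dtype: str = ""  # data type, used for leaves only
    children: list = field(default_factory=list)


def post_order(node):
    """Yield all nodes of the schema tree, children before parents."""
    for child in node.children:
        yield from post_order(child)
    yield node


def leaves(node):
    return [n for n in post_order(node) if not n.children]


def leaf_ssim(a, b):
    # Step 1: initial structural similarity of leaves from their data types.
    return 0.5 if a.dtype == b.dtype else 0.25  # 0.25: arbitrary value in [0, 0.5]


def strong_link(a, b, lsim):
    # Step 3: weighted similarity of two leaves compared with the threshold.
    wsim = W_STRUCT * leaf_ssim(a, b) + (1 - W_STRUCT) * lsim(a.name, b.name)
    return wsim >= TH_ACCEPT


def subtree_ssim(s, t, lsim):
    """Structural similarity of two non-leaf nodes: share of strongly linked leaves."""
    ls, lt = leaves(s), leaves(t)
    linked_s = sum(any(strong_link(x, y, lsim) for y in lt) for x in ls)
    linked_t = sum(any(strong_link(x, y, lsim) for x in ls) for y in lt)
    return (linked_s + linked_t) / (len(ls) + len(lt))


def exact_name_lsim(a, b):
    # Stand-in for the linguistic matcher: 1.0 for equal names, else 0.0.
    return 1.0 if a.lower() == b.lower() else 0.0


po = Node("PO", children=[Node("City", "string"), Node("Street", "string")])
order = Node("Order", children=[Node("City", "string"), Node("Qty", "int")])
ssim = subtree_ssim(po, order, exact_name_lsim)
print([n.name for n in post_order(po)], ssim)
```

In the toy trees only the two City leaves are strongly linked, so half of all leaves are linked.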

The structure matching algorithm
- Output: a 1:n mapping for leaves
- To generate non-leaf mappings: 2nd post-order traversal

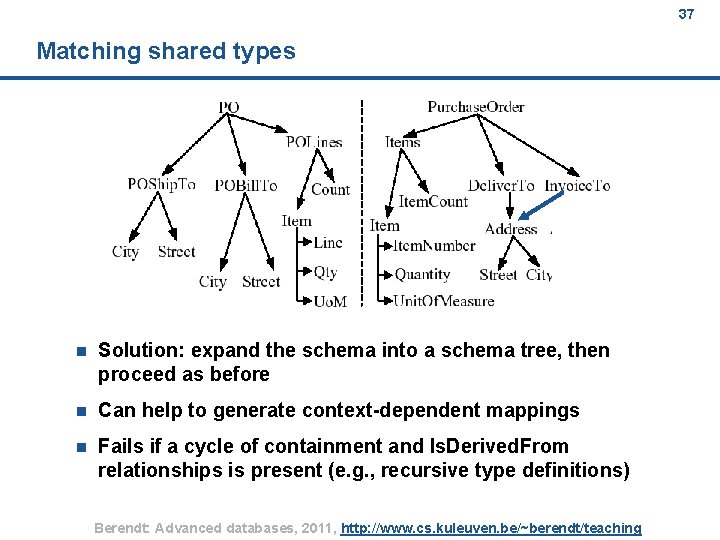

Matching shared types
- Solution: expand the schema into a schema tree, then proceed as before
- Can help to generate context-dependent mappings
- Fails if a cycle of containment and IsDerivedFrom relationships is present (e.g., recursive type definitions)

Agenda (next part): (Semi-)automated matching: Example iMAP

Main ideas
- A learning-based approach
- Main goal: discover complex matches
  - In particular: functions such as
    - T.LISTINGS.list-price = S.HOUSES.price * (1+S.AGENTS.fee-rate)
    - T.LISTINGS.agent-address = concat(S.AGENTS.city, S.AGENTS.state)
- Works on relational schemas
- Basic idea: reformulate schema matching as search

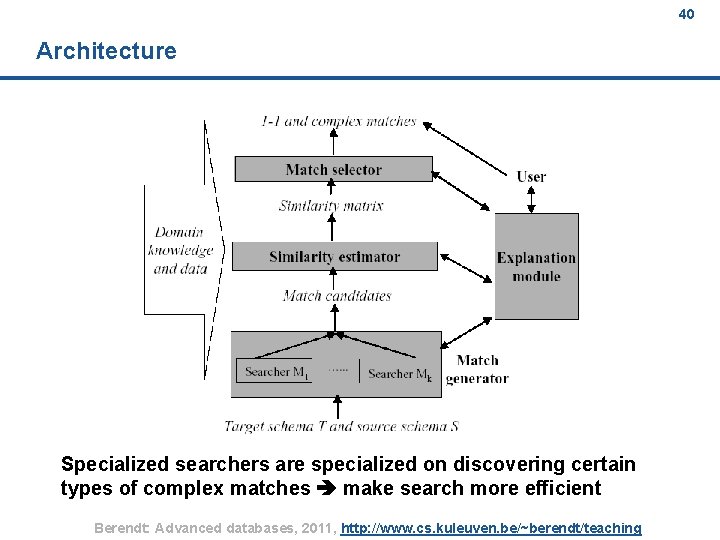

Architecture
Specialized searchers each focus on discovering certain types of complex matches → this makes the search more efficient

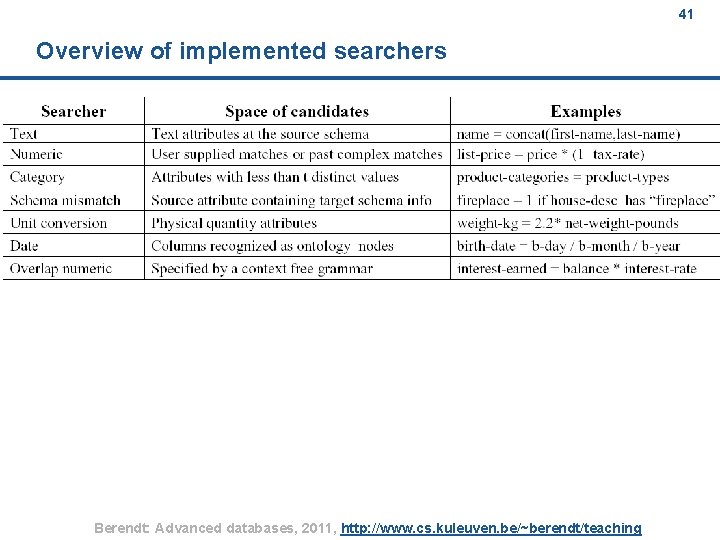

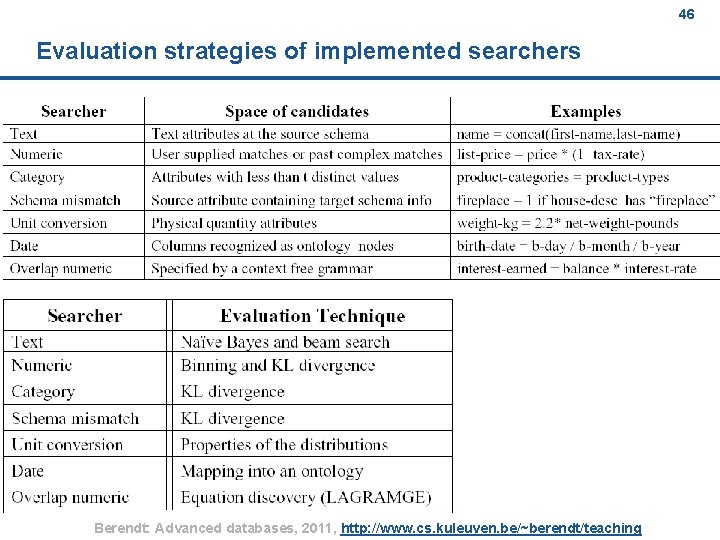

Overview of implemented searchers

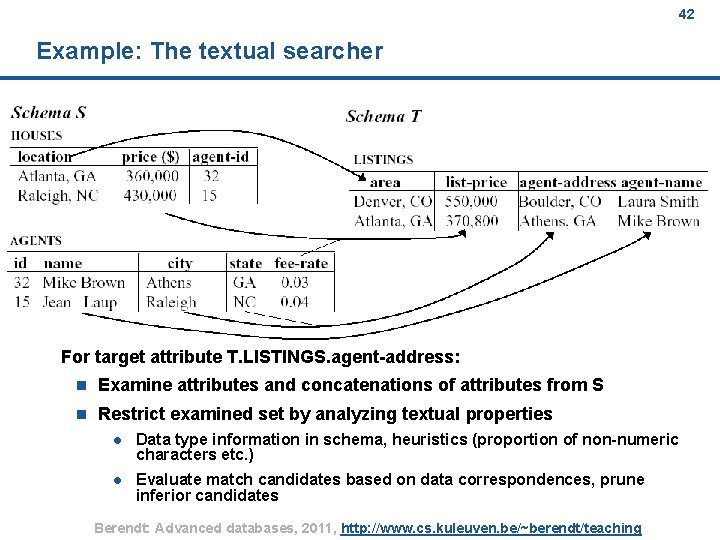

Example: The textual searcher
For target attribute T.LISTINGS.agent-address:
- Examine attributes and concatenations of attributes from S
- Restrict examined set by analyzing textual properties
  - Data type information in schema, heuristics (proportion of non-numeric characters etc.)
  - Evaluate match candidates based on data correspondences, prune inferior candidates

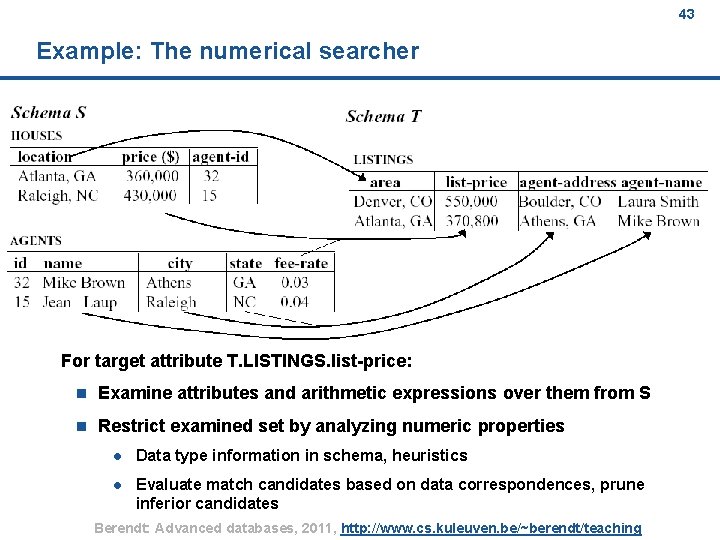

Example: The numerical searcher
For target attribute T.LISTINGS.list-price:
- Examine attributes and arithmetic expressions over them from S
- Restrict examined set by analyzing numeric properties
  - Data type information in schema, heuristics
  - Evaluate match candidates based on data correspondences, prune inferior candidates

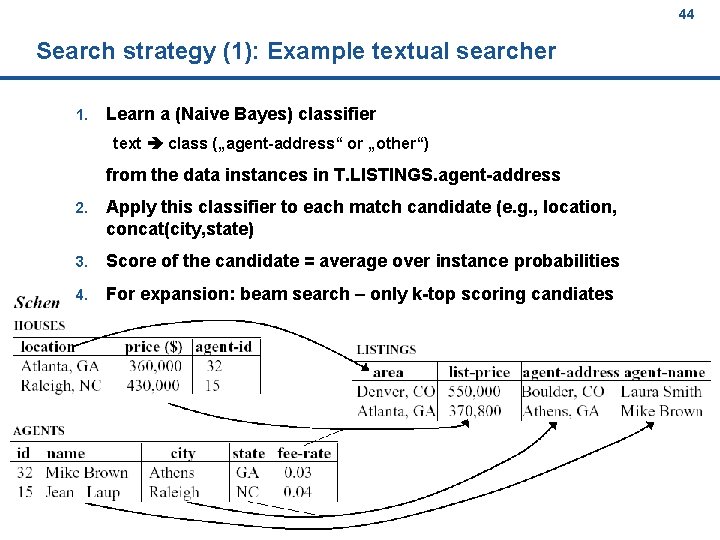

Search strategy (1): Example textual searcher
1. Learn a (Naive Bayes) classifier text → class ("agent-address" or "other") from the data instances in T.LISTINGS.agent-address
2. Apply this classifier to each match candidate (e.g., location, concat(city, state))
3. Score of the candidate = average over instance probabilities
4. For expansion: beam search, keeping only the k top-scoring candidates
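
The four steps can be sketched with a tiny hand-written Naive Bayes classifier. This is an illustration under assumptions, not iMAP's implementation: the "other" training examples are assumed to come from other target columns, the smoothing scheme is a simple add-one variant, all data values are invented, and only the pruning part of one beam-search level is shown (not the generation of new concatenations).

```python
import math
from collections import Counter


def tokens(text):
    return text.lower().replace(",", " ").split()


class NaiveBayes:
    """Two-class word-level Naive Bayes: target attribute (True) vs. other (False)."""

    def __init__(self, positives, negatives):
        self.docs = {True: len(positives), False: len(negatives)}
        self.counts = {True: Counter(), False: Counter()}
        for label, docs in ((True, positives), (False, negatives)):
            for doc in docs:
                self.counts[label].update(tokens(doc))
        self.vocab = set(self.counts[True]) | set(self.counts[False])

    def prob_positive(self, text):
        log_p = {}
        for label in (True, False):
            total = sum(self.counts[label].values())
            lp = math.log(self.docs[label] / (self.docs[True] + self.docs[False]))
            for tok in tokens(text):
                # Add-one smoothing, with one extra slot for unseen words.
                lp += math.log((self.counts[label][tok] + 1) / (total + len(self.vocab) + 1))
            log_p[label] = lp
        top = max(log_p.values())
        pos, neg = math.exp(log_p[True] - top), math.exp(log_p[False] - top)
        return pos / (pos + neg)


def score(classifier, instances):
    # Step 3: average probability over the candidate's data instances.
    return sum(classifier.prob_positive(x) for x in instances) / len(instances)


def beam(candidates, classifier, k):
    # Step 4: keep only the k top-scoring candidates for further expansion.
    ranked = sorted(candidates, key=lambda c: score(classifier, candidates[c]), reverse=True)
    return ranked[:k]


nb = NaiveBayes(
    positives=["Athens, GA", "Raleigh, NC", "Seattle, WA"],              # T.LISTINGS.agent-address
    negatives=["3 beds 2 baths", "large fenced yard", "new roof"],  # other columns (assumed)
)
candidates = {
    "concat(city, state)": ["Athens GA", "Seattle WA"],
    "location": ["Raleigh, NC", "Boston, MA"],
    "comments": ["fenced yard", "2 baths"],
}
survivors = beam(candidates, nb, k=2)
print(survivors)
```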

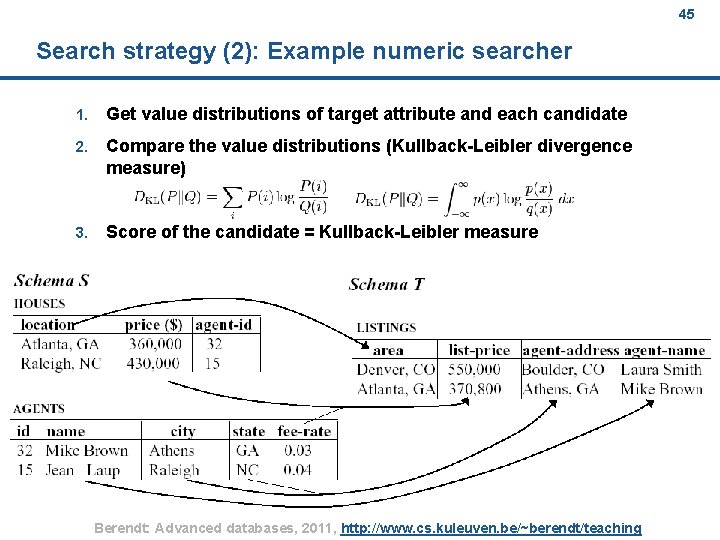

Search strategy (2): Example numeric searcher
1. Get value distributions of target attribute and each candidate
2. Compare the value distributions (Kullback-Leibler divergence measure)
3. Score of the candidate = Kullback-Leibler measure
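
A small sketch of the comparison step. The bin edges, the add-one smoothing (used to avoid zero probabilities), and the sample values are assumptions made for illustration; the slides do not say how the distributions are estimated. Note that a lower Kullback-Leibler divergence means more similar distributions, with 0 for identical ones.

```python
import math
from collections import Counter


def histogram(values, edges):
    """Smoothed relative frequencies of values over the bins [edges[i], edges[i+1])."""
    n_bins = len(edges) - 1
    counts = Counter()
    for v in values:
        for i in range(n_bins):
            if edges[i] <= v < edges[i + 1]:
                counts[i] += 1
                break  # values outside all bins are ignored
    total = sum(counts.values()) + n_bins  # add-one smoothing
    return [(counts[i] + 1) / total for i in range(n_bins)]


def kl_divergence(p, q):
    """Kullback-Leibler divergence D(p || q) for two discrete distributions."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


edges = [0, 100_000, 200_000, 300_000]
list_price = [103_000, 206_000, 154_500, 257_500]  # target attribute values
prices = [100_000, 200_000, 150_000, 250_000]
fee_rate = 0.03
rooms = [3, 4, 5, 6]

target = histogram(list_price, edges)
good = histogram([p * (1 + fee_rate) for p in prices], edges)  # price * (1 + fee-rate)
bad = histogram(rooms, edges)                                  # an unrelated attribute

print(kl_divergence(target, good), kl_divergence(target, bad))
```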

Evaluation strategies of implemented searchers

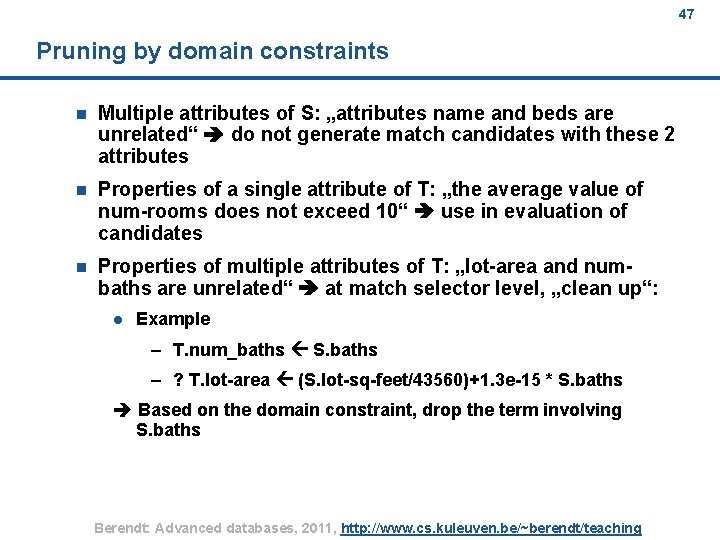

Pruning by domain constraints
- Multiple attributes of S: "attributes name and beds are unrelated" → do not generate match candidates with these 2 attributes
- Properties of a single attribute of T: "the average value of num-rooms does not exceed 10" → use in evaluation of candidates
- Properties of multiple attributes of T: "lot-area and num-baths are unrelated" → at match selector level, "clean up":
  - Example
    - T.num_baths = S.baths
    - ? T.lot-area = (S.lot-sq-feet/43560) + 1.3e-15 * S.baths
    - Based on the domain constraint, drop the term involving S.baths
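
The clean-up in the example can be sketched as follows. The representation of a candidate as a dictionary of linear terms and the rule "drop the shared attribute where its coefficient is smaller" are assumptions made for this illustration; the slide only states that the term involving S.baths is dropped.

```python
def clean_up(matches, unrelated_targets):
    """Remove source attributes shared by matches of target attributes declared unrelated.

    matches: target attribute -> {source attribute: coefficient}
    unrelated_targets: pairs of target attributes that must not share source attributes
    """
    cleaned = {target: dict(terms) for target, terms in matches.items()}
    for t1, t2 in unrelated_targets:
        shared = set(cleaned[t1]) & set(cleaned[t2])
        for attr in shared:
            # Assumed rule: the attribute stays where it carries more weight.
            loser = t1 if abs(cleaned[t1][attr]) < abs(cleaned[t2][attr]) else t2
            del cleaned[loser][attr]
    return cleaned


matches = {
    "T.num_baths": {"S.baths": 1.0},
    "T.lot-area": {"S.lot-sq-feet": 1 / 43560, "S.baths": 1.3e-15},
}
cleaned = clean_up(matches, [("T.lot-area", "T.num_baths")])
print(cleaned)
```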

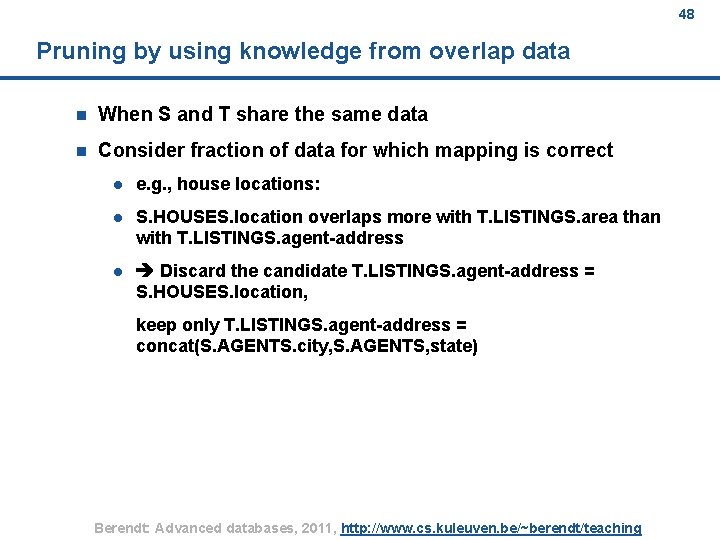

Pruning by using knowledge from overlap data
- When S and T share the same data
- Consider fraction of data for which mapping is correct
  - e.g., house locations:
  - S.HOUSES.location overlaps more with T.LISTINGS.area than with T.LISTINGS.agent-address
  - Discard the candidate T.LISTINGS.agent-address = S.HOUSES.location, keep only T.LISTINGS.agent-address = concat(S.AGENTS.city, S.AGENTS.state)
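
A minimal sketch of the overlap test, assuming that the entities present in both S and T have already been identified and joined into rows; the attribute names in the rows and all values are invented for illustration.

```python
def overlap_fraction(shared_rows, candidate, target_attr):
    """Fraction of shared entities for which the candidate reproduces the target value."""
    hits = sum(1 for row in shared_rows if candidate(row) == row[target_attr])
    return hits / len(shared_rows)


# Hypothetical rows for houses that occur in both S and T.
shared_rows = [
    {"S.location": "Atlanta, GA", "S.city": "Athens", "S.state": "GA",
     "T.area": "Atlanta, GA", "T.agent-address": "Athens, GA"},
    {"S.location": "Raleigh, NC", "S.city": "Raleigh", "S.state": "NC",
     "T.area": "Raleigh, NC", "T.agent-address": "Raleigh, NC"},
    {"S.location": "Seattle, WA", "S.city": "Tacoma", "S.state": "WA",
     "T.area": "Seattle, WA", "T.agent-address": "Tacoma, WA"},
]

by_location = overlap_fraction(shared_rows, lambda r: r["S.location"], "T.agent-address")
by_concat = overlap_fraction(
    shared_rows, lambda r: f'{r["S.city"]}, {r["S.state"]}', "T.agent-address"
)
print(by_location, by_concat)
```

The candidate with the clearly lower fraction (here the one based on S.location) would be discarded.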

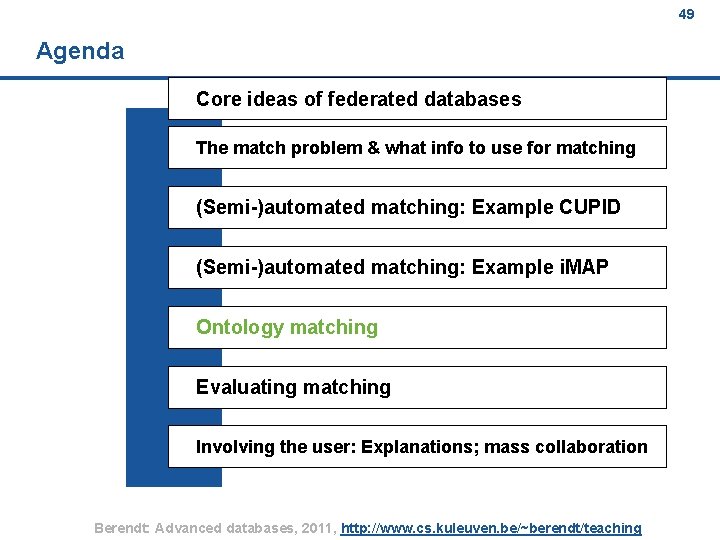

Agenda (next part): Ontology matching

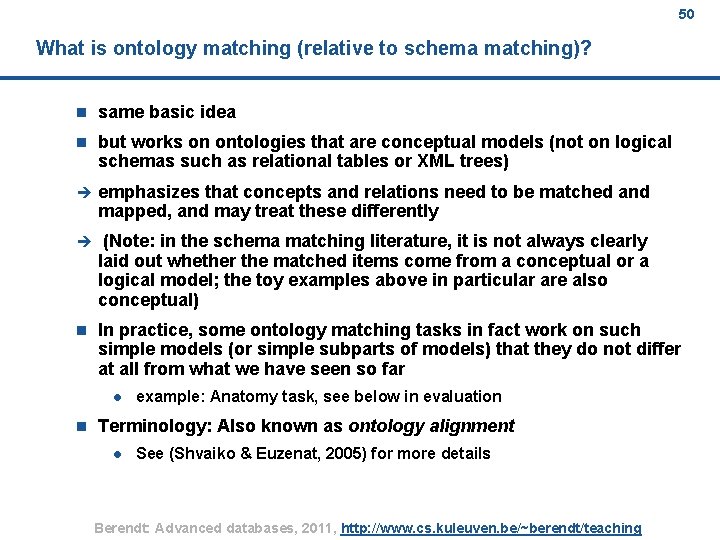

What is ontology matching (relative to schema matching)?
- same basic idea
- but works on ontologies that are conceptual models (not on logical schemas such as relational tables or XML trees)
  - → emphasizes that concepts and relations need to be matched and mapped, and may treat these differently
  - → (Note: in the schema matching literature, it is not always clearly laid out whether the matched items come from a conceptual or a logical model; the toy examples above in particular are also conceptual)
- In practice, some ontology matching tasks in fact work on such simple models (or simple subparts of models) that they do not differ at all from what we have seen so far
  - example: Anatomy task, see below in evaluation
- Terminology: Also known as ontology alignment
  - See (Shvaiko & Euzenat, 2005) for more details

Recap: Rahm & Bernstein's classification of schema matching approaches

The methods that are important when the schema is in the foreground (which it is in ontologies!)


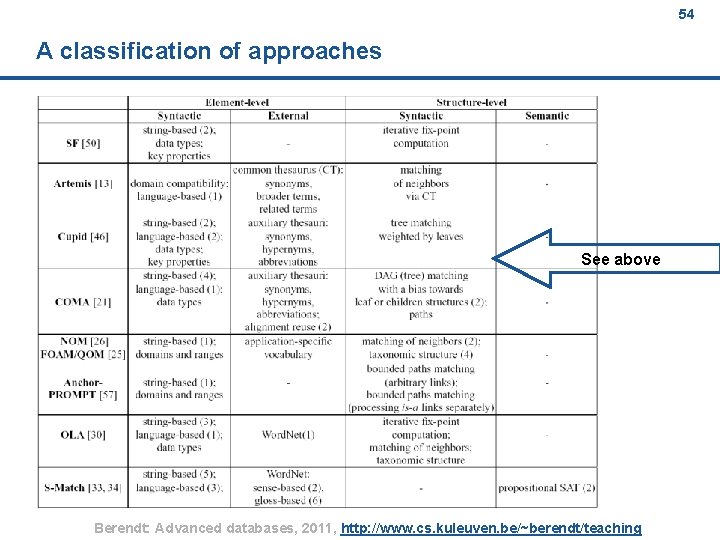

The extension by Shvaiko & Euzenat (2005) [Partial view]

A classification of approaches
See above

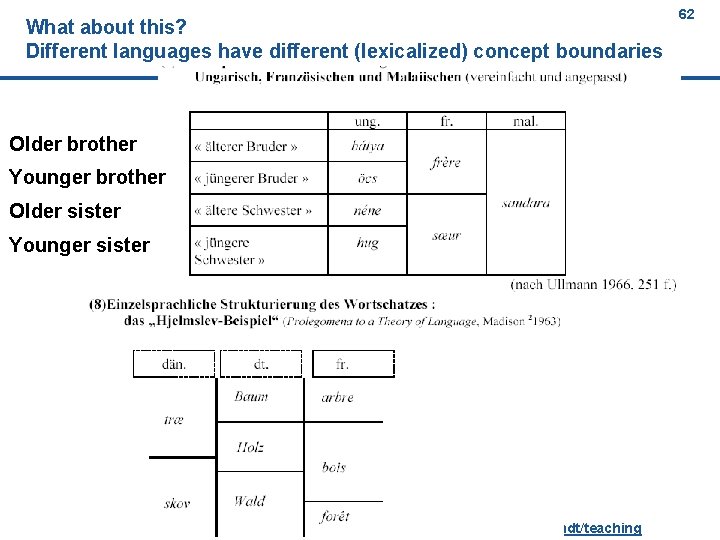

One area in which ontology alignment becomes particularly interesting: Natural language and cross-lingual integration
(because this shows very nicely that concepts are not always neatly aligned)

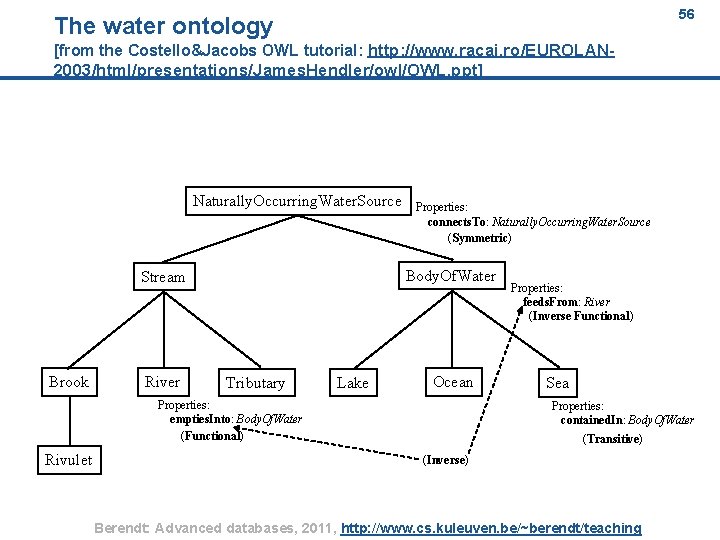

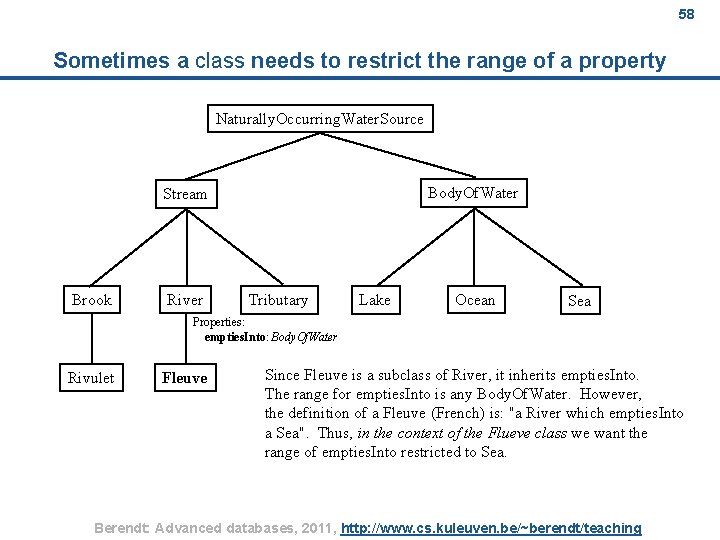

The water ontology
[from the Costello & Jacobs OWL tutorial: http://www.racai.ro/EUROLAN2003/html/presentations/JamesHendler/owl/OWL.ppt]
Classes (diagram labels): NaturallyOccurringWaterSource, BodyOfWater, Stream, Brook, River, Tributary, Rivulet, Lake, Ocean, Sea
Properties (diagram labels):
- connectsTo: NaturallyOccurringWaterSource (Symmetric)
- emptiesInto: BodyOfWater (Functional)
- feedsFrom: River (Inverse Functional)
- containedIn: BodyOfWater (Transitive)
- (Inverse)

How could this give rise to a mapping/matching problem?
- English: A river is a natural stream of water, usually freshwater, flowing toward an ocean, a lake, or another stream. In some cases a river flows into the ground or dries up completely before reaching another body of water. Usually larger streams are called rivers while smaller streams are called creeks, brooks, rivulets, rills, and many other terms, but there is no general rule that defines what can be called a river. Sometimes a river is said to be larger than a creek, but this is not always the case.
- French: Une rivière est un cours d'eau qui s'écoule sous l'effet de la gravité et qui se jette dans une autre rivière ou dans un fleuve, contrairement au fleuve qui se jette, lui, dans la mer ou dans l'océan. (English: A rivière is a watercourse that flows under the effect of gravity and empties into another rivière or into a fleuve, in contrast to a fleuve, which empties into the sea or the ocean.)
- Dutch: Een rivier is een min of meer natuurlijke waterstroom. We onderscheiden oceanische rivieren (in België ook wel stroom genoemd) die in een zee of oceaan uitmonden, en continentale rivieren die in een meer, een moeras of woestijn uitmonden. Een beek is de aanduiding voor een kleine rivier. Tussen beek en rivier ligt meestal een bijrivier. (English: A rivier is a more or less natural stream of water. We distinguish oceanic rivers (in Belgium also called stroom), which flow into a sea or ocean, and continental rivers, which flow into a lake, a marsh, or a desert. A beek is the term for a small river. Between beek and rivier there is usually a bijrivier, i.e. a tributary.)

Sometimes a class needs to restrict the range of a property
Diagram labels: NaturallyOccurringWaterSource, BodyOfWater, Stream, Brook, River, Tributary, Rivulet, Fleuve, Lake, Ocean, Sea; property emptiesInto: BodyOfWater
Since Fleuve is a subclass of River, it inherits emptiesInto. The range for emptiesInto is any BodyOfWater. However, the definition of a Fleuve (French) is: "a River which emptiesInto a Sea". Thus, in the context of the Fleuve class we want the range of emptiesInto restricted to Sea.

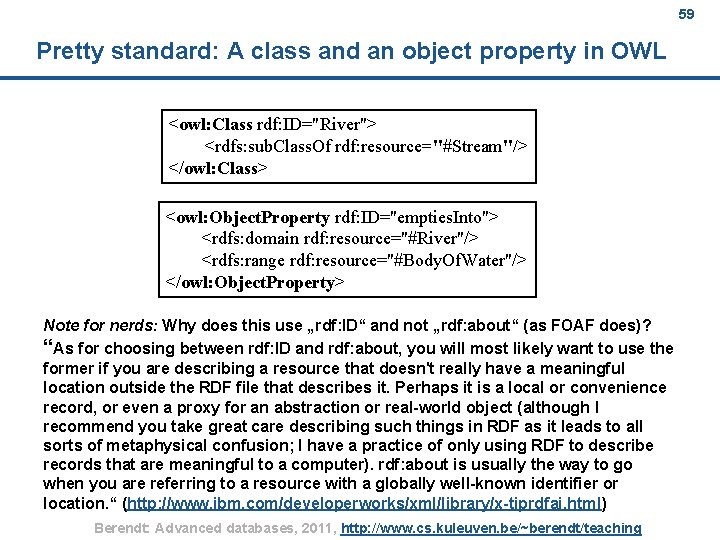

Pretty standard: A class and an object property in OWL

    <owl:Class rdf:ID="River">
      <rdfs:subClassOf rdf:resource="#Stream"/>
    </owl:Class>

    <owl:ObjectProperty rdf:ID="emptiesInto">
      <rdfs:domain rdf:resource="#River"/>
      <rdfs:range rdf:resource="#BodyOfWater"/>
    </owl:ObjectProperty>

Note for nerds: Why does this use "rdf:ID" and not "rdf:about" (as FOAF does)?
"As for choosing between rdf:ID and rdf:about, you will most likely want to use the former if you are describing a resource that doesn't really have a meaningful location outside the RDF file that describes it. Perhaps it is a local or convenience record, or even a proxy for an abstraction or real-world object (although I recommend you take great care describing such things in RDF as it leads to all sorts of metaphysical confusion; I have a practice of only using RDF to describe records that are meaningful to a computer). rdf:about is usually the way to go when you are referring to a resource with a globally well-known identifier or location." (http://www.ibm.com/developerworks/xml/library/x-tiprdfai.html)

Global vs Local Properties
rdfs:range imposes a global restriction on the emptiesInto property, i.e., the rdfs:range value applies to River and all subclasses of River. As we have seen, in the context of the Fleuve class, we would like the emptiesInto property to have its range restricted to just the Sea class. Thus, for the Fleuve class we want a local definition of emptiesInto.

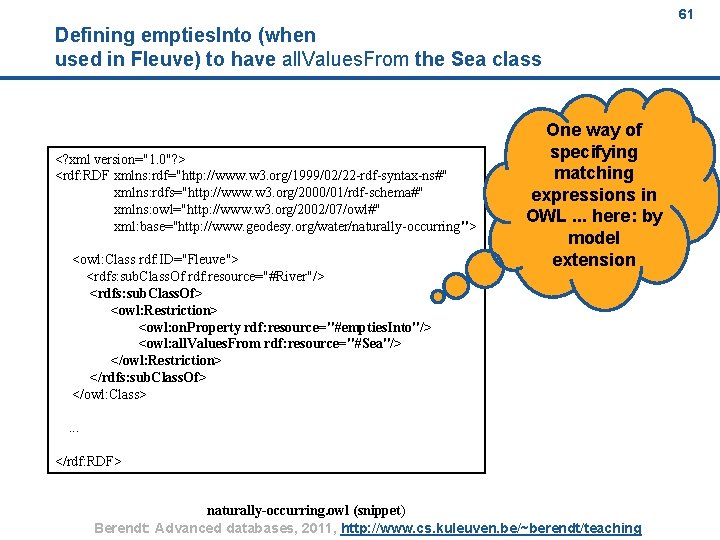

Defining emptiesInto (when used in Fleuve) to have allValuesFrom the Sea class

    <?xml version="1.0"?>
    <rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#"
             xmlns:rdfs="http://www.w3.org/2000/01/rdf-schema#"
             xmlns:owl="http://www.w3.org/2002/07/owl#"
             xml:base="http://www.geodesy.org/water/naturally-occurring">

      <owl:Class rdf:ID="Fleuve">
        <rdfs:subClassOf rdf:resource="#River"/>
        <rdfs:subClassOf>
          <owl:Restriction>
            <owl:onProperty rdf:resource="#emptiesInto"/>
            <owl:allValuesFrom rdf:resource="#Sea"/>
          </owl:Restriction>
        </rdfs:subClassOf>
      </owl:Class>
      ...
    </rdf:RDF>

naturally-occurring.owl (snippet)
One way of specifying matching expressions in OWL... here: by model extension

What about this? Different languages have different (lexicalized) concept boundaries
Older brother / Younger brother / Older sister / Younger sister

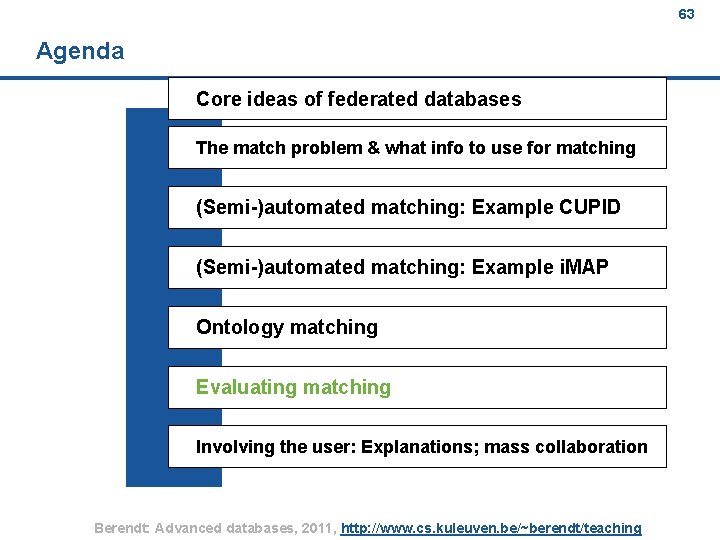

Agenda (next part): Evaluating matching

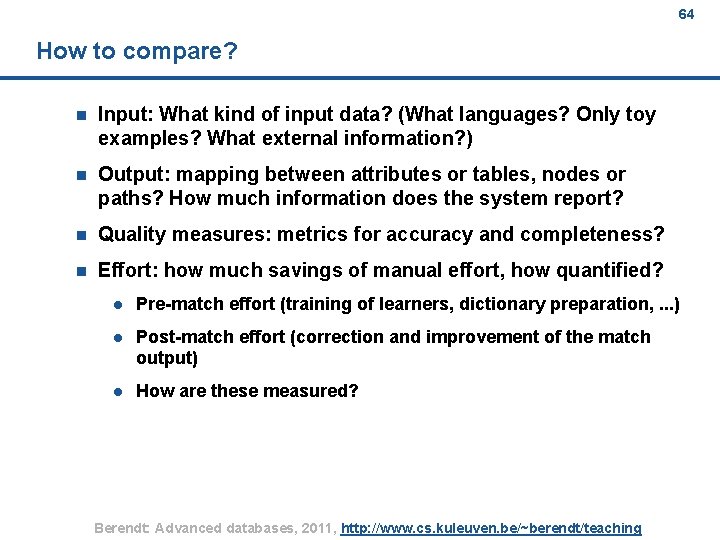

How to compare?
- Input: What kind of input data? (What languages? Only toy examples? What external information?)
- Output: mapping between attributes or tables, nodes or paths? How much information does the system report?
- Quality measures: metrics for accuracy and completeness?
- Effort: how much savings of manual effort, how quantified?
  - Pre-match effort (training of learners, dictionary preparation, ...)
  - Post-match effort (correction and improvement of the match output)
  - How are these measured?

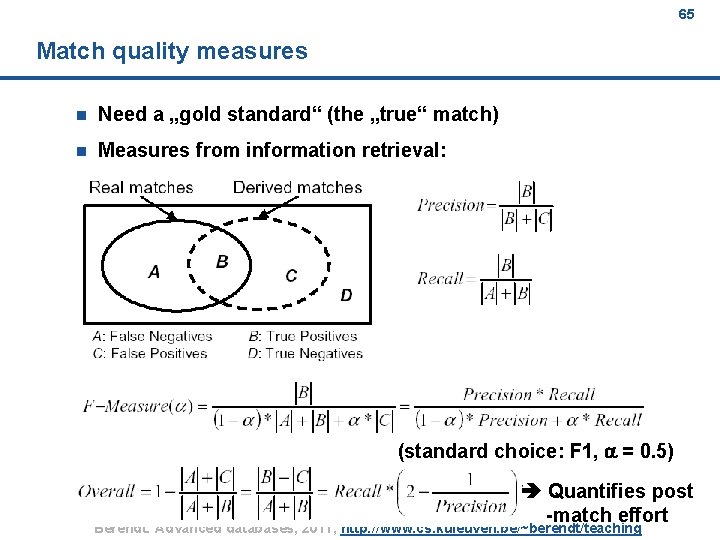

Match quality measures
- Need a "gold standard" (the "true" match)
- Measures from information retrieval: (standard choice: F1, α = 0.5)
- Quantifies post-match effort
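
The information-retrieval measures meant here are presumably precision, recall, and the F-measure; the formulas did not survive on this slide, so the following sketch uses their standard definitions, with the weighted F-measure written so that α = 0.5 gives F1. The function name and the example correspondences are made up.

```python
def match_quality(found, gold, alpha=0.5):
    """Precision, recall and F-measure of a found match against the gold standard."""
    found, gold = set(found), set(gold)
    true_positives = len(found & gold)
    precision = true_positives / len(found) if found else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision == 0.0 or recall == 0.0:
        return precision, recall, 0.0
    # Weighted harmonic mean; alpha = 0.5 yields F1 = 2PR / (P + R).
    f_measure = 1.0 / (alpha / precision + (1 - alpha) / recall)
    return precision, recall, f_measure


gold = {("location", "area"), ("price", "list-price"), ("name", "agent-name")}
found = {
    ("location", "area"),
    ("price", "list-price"),
    ("location", "agent-address"),  # false positive
    ("comments", "description"),    # false positive
}
precision, recall, f1 = match_quality(found, gold)
print(precision, recall, f1)
```

Low precision means many wrong correspondences to remove, low recall means many missing ones to add; both translate into manual work after the match.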

Benchmarking
- Do, Melnik, and Rahm (2003) found that evaluation studies were not comparable → need more standardized conditions (benchmarks)
- Now a tradition of competitions in ontology matching (more in the next session):
  - Test cases and contests at http://www.ontologymatching.org/evaluation.html

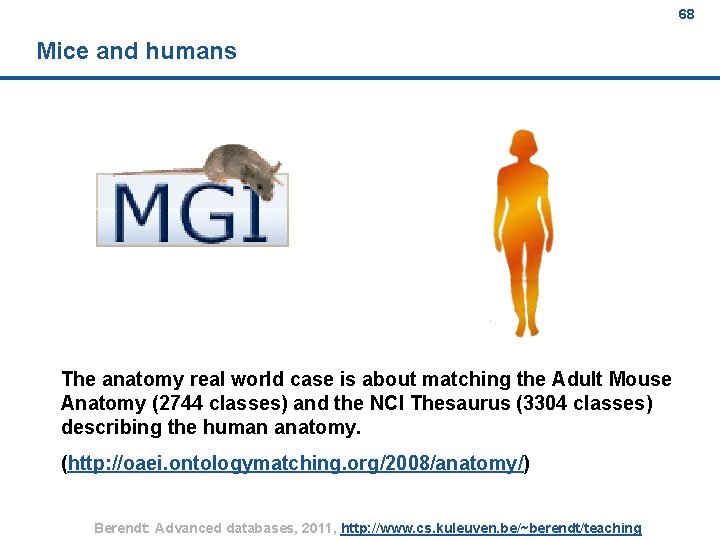

Example: Tasks 2009 (various are re-used; 2011 is currently running)
(excerpt; from http://oaei.ontologymatching.org/2009/)

Expressive ontologies
- anatomy
  - The anatomy real world case is about matching the Adult Mouse Anatomy (2744 classes) and the NCI Thesaurus (3304 classes) describing the human anatomy.
- conference
  - Participants will be asked to find all correct correspondences (equivalence and/or subsumption correspondences) and/or 'interesting correspondences' within a collection of ontologies describing the domain of organising conferences (the domain being well understandable for every researcher). Results will be evaluated a posteriori in part manually and in part by datamining techniques and logical reasoning techniques. There will also be evaluation against reference mapping based on subset of the whole collection.

Directories and thesauri
- fishery gears
  - features four different classification schemes, expressed in OWL, adopted by different fishery information systems in FIM division of FAO. An alignment performed on this 4 schemes should be able to spot out equivalence, or a degree of similarity between the fishing gear types and the groups of gears, such to enable a future exercise of data aggregation cross systems.

Oriented matching
- This track focuses on the evaluation of alignments that contain other mapping relations than equivalences.

Instance matching
- very large crosslingual resources
  - The purpose of this task (vlcr) is to match the Thesaurus of the Netherlands Institute for Sound and Vision (called GTAA, see below for more information) to two other resources: the English WordNet from Princeton University and DBpedia.

68 Mice and humans
The anatomy real world case is about matching the Adult Mouse Anatomy (2744 classes) and the NCI Thesaurus (3304 classes) describing the human anatomy. (http://oaei.ontologymatching.org/2008/anatomy/)

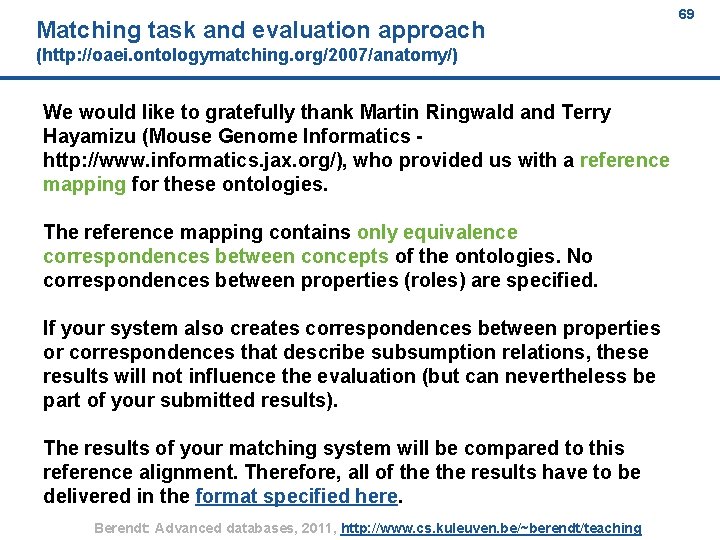

69 Matching task and evaluation approach
(http://oaei.ontologymatching.org/2007/anatomy/)
We would like to gratefully thank Martin Ringwald and Terry Hayamizu (Mouse Genome Informatics, http://www.informatics.jax.org/), who provided us with a reference mapping for these ontologies. The reference mapping contains only equivalence correspondences between concepts of the ontologies. No correspondences between properties (roles) are specified. If your system also creates correspondences between properties or correspondences that describe subsumption relations, these results will not influence the evaluation (but can nevertheless be part of your submitted results). The results of your matching system will be compared to this reference alignment. Therefore, all of the results have to be delivered in the format specified here.
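The comparison against a reference alignment described above can be sketched as follows. This is only an illustration: it assumes precision, recall and F-measure as the comparison measures (the slide does not name them), and the `Correspondence` type, the `evaluate` function and all entity names are hypothetical, not part of the OAEI tooling or format.

```python
from typing import NamedTuple


class Correspondence(NamedTuple):
    source: str    # entity in the first ontology (hypothetical names below)
    target: str    # entity in the second ontology
    relation: str  # "=" for equivalence, "<" for subsumption


def evaluate(submitted, reference):
    """Return (precision, recall, f_measure) over equivalence correspondences."""
    # Only equivalences are compared; other relations are ignored, not penalized.
    found = {(c.source, c.target) for c in submitted if c.relation == "="}
    gold = {(c.source, c.target) for c in reference if c.relation == "="}
    correct = found & gold
    precision = len(correct) / len(found) if found else 0.0
    recall = len(correct) / len(gold) if gold else 0.0
    total = precision + recall
    f_measure = 2 * precision * recall / total if total else 0.0
    return precision, recall, f_measure


# Made-up toy data, not taken from the real anatomy track.
reference = [
    Correspondence("mouse:heart", "nci:Heart", "="),
    Correspondence("mouse:lung", "nci:Lung", "="),
    Correspondence("mouse:liver", "nci:Liver", "="),
]
submitted = [
    Correspondence("mouse:heart", "nci:Heart", "="),  # correct
    Correspondence("mouse:lung", "nci:Liver", "="),   # wrong
    Correspondence("mouse:paw", "nci:Limb", "<"),     # subsumption: ignored
]
precision, recall, f_measure = evaluate(submitted, reference)
```

The subsumption correspondence neither helps nor hurts the score, which mirrors the statement that such results "will not influence the evaluation".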

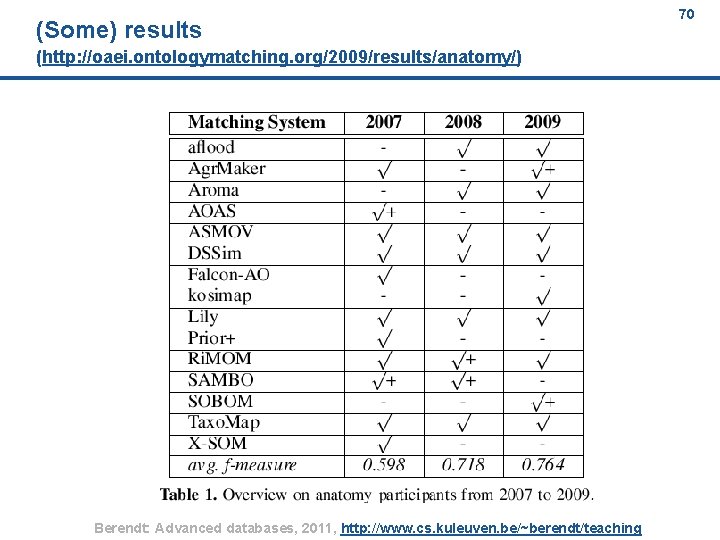

70 (Some) results
(http://oaei.ontologymatching.org/2009/results/anatomy/)

71 Agenda
- Core ideas of federated databases
- The match problem & what info to use for matching
- (Semi-)automated matching: Example CUPID
- (Semi-)automated matching: Example iMAP
- Ontology matching
- Evaluating matching
- Involving the user: Explanations; mass collaboration

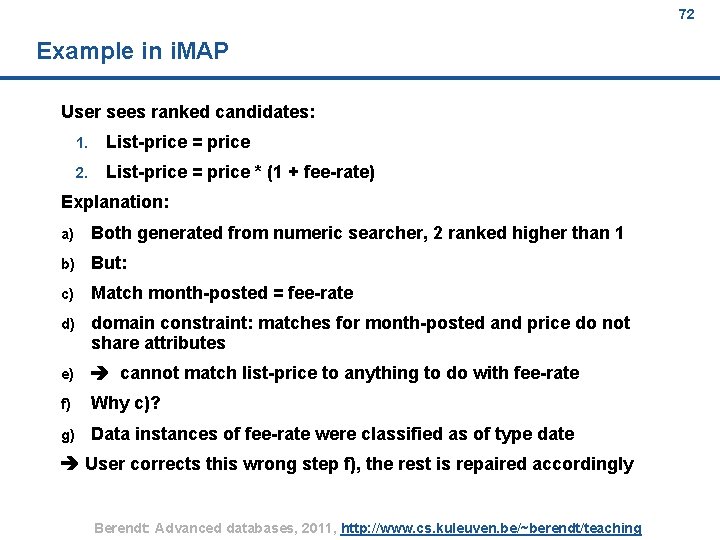

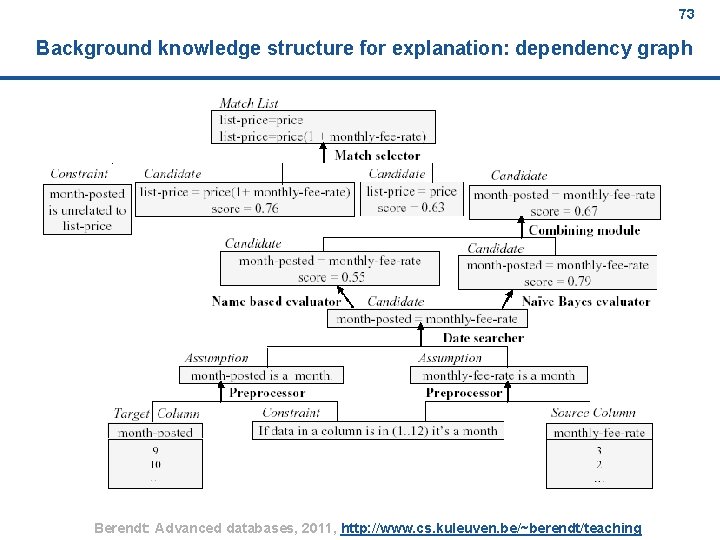

72 Example in iMAP
User sees ranked candidates:
1. List-price = price
2. List-price = price * (1 + fee-rate)
Explanation:
a) Both were generated by the numeric searcher; 2 was ranked higher than 1
b) But:
c) Match month-posted = fee-rate
d) Domain constraint: matches for month-posted and price do not share attributes
e) So list-price cannot be matched to anything involving fee-rate
f) Why c)?
g) Data instances of fee-rate were classified as being of type date
The user corrects this wrong classification step; the rest is repaired accordingly.

73 Background knowledge structure for explanation: dependency graph
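As a rough illustration of how a dependency graph can support such explanations, the sketch below traces a rejected candidate from the iMAP example back to the facts it depends on. It is not iMAP's actual data structure: the `DEPENDS_ON` dictionary, the node labels (paraphrased from the previous slide) and both functions are assumptions made for this example.

```python
# Hypothetical dependency graph: each decision maps to the decisions or
# facts it was derived from. Node labels paraphrase the iMAP example.
DEPENDS_ON = {
    "reject: list-price = price * (1 + fee-rate)": [
        "match: month-posted = fee-rate",
        "constraint: matches for month-posted and price share no attributes",
    ],
    "match: month-posted = fee-rate": [
        "classified: fee-rate instances are dates",
    ],
}


def explain(node, graph, depth=0):
    """Return indented lines tracing a decision back through its causes."""
    lines = ["  " * depth + node]
    for cause in graph.get(node, []):
        lines.extend(explain(cause, graph, depth + 1))
    return lines


def root_causes(node, graph):
    """Return the leaf facts a decision ultimately rests on, without duplicates."""
    causes = graph.get(node, [])
    if not causes:
        return [node]
    roots = []
    for cause in causes:
        for root in root_causes(cause, graph):
            if root not in roots:
                roots.append(root)
    return roots


decision = "reject: list-price = price * (1 + fee-rate)"
trace = explain(decision, DEPENDS_ON)
roots = root_causes(decision, DEPENDS_ON)
```

Printing `trace` line by line gives an answer to "Why?" at each level; `roots` lists the facts a user would inspect, here the wrong date classification and the domain constraint.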

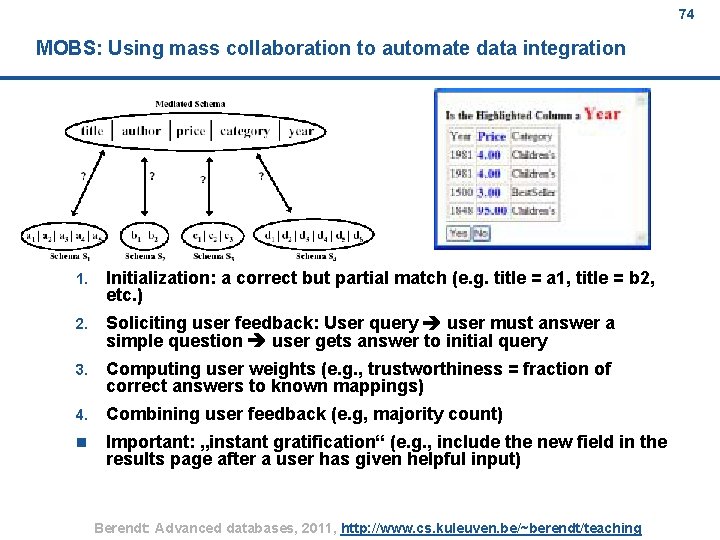

74 MOBS: Using mass collaboration to automate data integration
1. Initialization: a correct but partial match (e.g., title = a1, title = b2, etc.)
2. Soliciting user feedback: user query → user must answer a simple question → user gets answer to initial query
3. Computing user weights (e.g., trustworthiness = fraction of correct answers to known mappings)
4. Combining user feedback (e.g., majority count)
- Important: "instant gratification" (e.g., include the new field in the results page after a user has given helpful input)
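Steps 3 and 4 above can be sketched as follows. This is a minimal illustration, not the MOBS implementation: it assumes yes/no answers, combines steps 3 and 4 into a weighted majority vote (the slide only mentions a majority count as one option), and the function names, user names and the question `price=a3` are hypothetical.

```python
from collections import defaultdict


def user_weight(answers, known):
    """Trustworthiness: fraction of correct answers on already-known mappings."""
    graded = [q for q in answers if q in known]
    if not graded:
        return 0.0  # no evidence about this user yet
    return sum(answers[q] == known[q] for q in graded) / len(graded)


def combine(question, all_answers, known):
    """Weighted majority vote over all users' answers to one open question."""
    votes = defaultdict(float)
    for answers in all_answers.values():
        if question in answers:
            votes[answers[question]] += user_weight(answers, known)
    return max(votes, key=votes.get) if votes else None


# Known mappings from the initialization step (True = the mapping holds).
known = {"title=a1": True, "title=b2": True}

# Made-up user feedback; "price=a3" is the open question.
all_answers = {
    "ann": {"title=a1": True, "title=b2": True, "price=a3": True},
    "bob": {"title=a1": True, "title=b2": False, "price=a3": False},
    "cat": {"title=a1": False, "title=b2": False, "price=a3": False},
}
decision = combine("price=a3", all_answers, known)
```

With these toy answers an unweighted majority would reject `price=a3` (two votes against one), while the weighted vote accepts it because the only fully trustworthy user voted for it.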

75 Some issues of matching – (not only) when it comes to individuals
"Is this the same entity?":
- What does "the same" mean anyway?
- (When) do we want these inferences?

76 Outlook
- Core ideas of federated databases
- The match problem & what info to use for matching
- (Semi-)automated matching: Example CUPID
- (Semi-)automated matching: Example iMAP
- Ontology matching
- Evaluating matching
- Involving the user: Explanations; mass collaboration
- KDD (1): Visualizations for exploratory data analysis

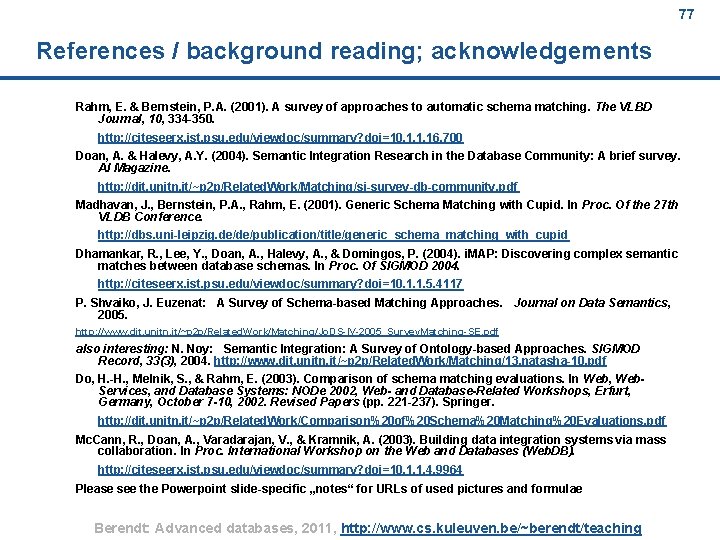

77 References / background reading; acknowledgements
- Rahm, E. & Bernstein, P. A. (2001). A survey of approaches to automatic schema matching. The VLDB Journal, 10, 334-350. http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.16.700
- Doan, A. & Halevy, A. Y. (2004). Semantic integration research in the database community: A brief survey. AI Magazine. http://dit.unitn.it/~p2p/RelatedWork/Matching/si-survey-db-community.pdf
- Madhavan, J., Bernstein, P. A., & Rahm, E. (2001). Generic schema matching with Cupid. In Proc. of the 27th VLDB Conference. http://dbs.uni-leipzig.de/de/publication/title/generic_schema_matching_with_cupid
- Dhamankar, R., Lee, Y., Doan, A., Halevy, A., & Domingos, P. (2004). iMAP: Discovering complex semantic matches between database schemas. In Proc. of SIGMOD 2004. http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.5.4117
- Shvaiko, P. & Euzenat, J. (2005). A survey of schema-based matching approaches. Journal on Data Semantics. http://www.dit.unitn.it/~p2p/RelatedWork/Matching/JoDS-IV-2005_SurveyMatching-SE.pdf
- Also interesting: Noy, N. (2004). Semantic integration: A survey of ontology-based approaches. SIGMOD Record, 33(3). http://www.dit.unitn.it/~p2p/RelatedWork/Matching/13.natasha-10.pdf
- Do, H.-H., Melnik, S., & Rahm, E. (2003). Comparison of schema matching evaluations. In Web, Web-Services, and Database Systems: NODe 2002, Web- and Database-Related Workshops, Erfurt, Germany, October 7-10, 2002. Revised Papers (pp. 221-237). Springer. http://dit.unitn.it/~p2p/RelatedWork/Comparison%20of%20Schema%20Matching%20Evaluations.pdf
- McCann, R., Doan, A., Varadarajan, V., & Kramnik, A. (2003). Building data integration systems via mass collaboration. In Proc. International Workshop on the Web and Databases (WebDB). http://citeseerx.ist.psu.edu/viewdoc/summary?doi=10.1.1.4.9964

Please see the PowerPoint slide-specific "notes" for URLs of used pictures and formulae.
