1 Advanced databases – Inferring new knowledge from data(bases): Knowledge Discovery in Databases

Bettina Berendt, Katholieke Universiteit Leuven, Department of Computer Science, http://www.cs.kuleuven.be/~berendt/teaching

Last update: 2 November 2010

Berendt: Advanced databases, 1st semester 2010/2011, http://www.cs.kuleuven.be/~berendt/teaching/

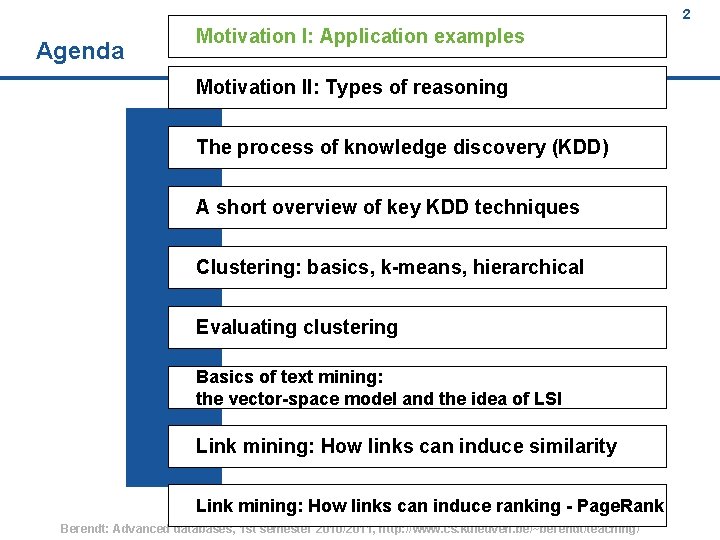

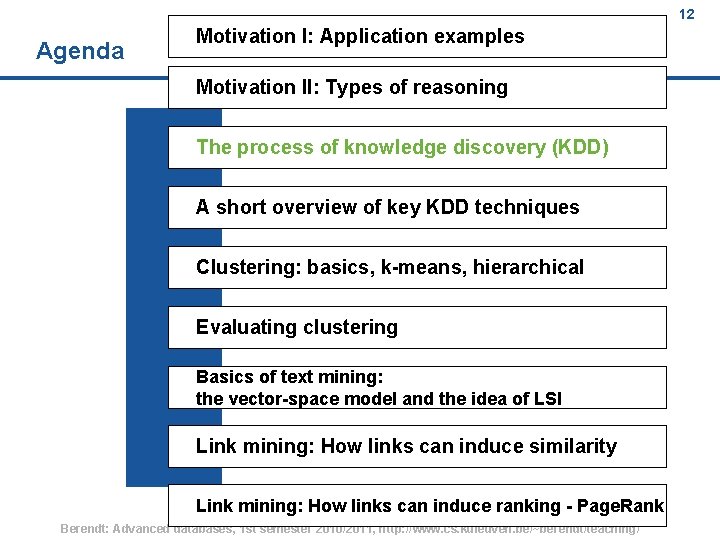

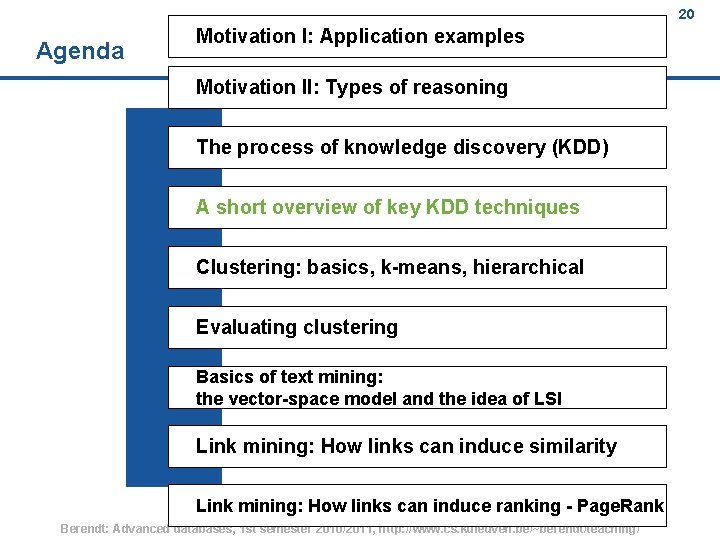

2 Agenda

- Motivation I: Application examples
- Motivation II: Types of reasoning
- The process of knowledge discovery (KDD)
- A short overview of key KDD techniques
- Clustering: basics, k-means, hierarchical
- Evaluating clustering
- Basics of text mining: the vector-space model and the idea of LSI
- Link mining: How links can induce similarity
- Link mining: How links can induce ranking: PageRank

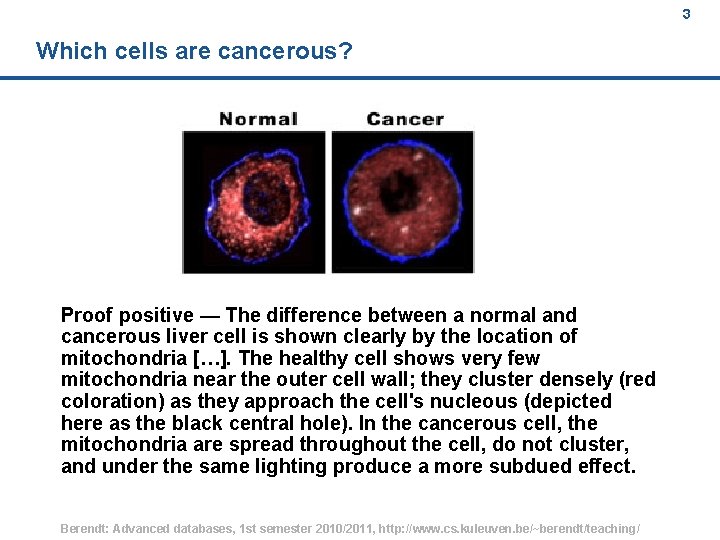

3 Which cells are cancerous?

Proof positive: The difference between a normal and a cancerous liver cell is shown clearly by the location of mitochondria […]. The healthy cell shows very few mitochondria near the outer cell wall; they cluster densely (red coloration) as they approach the cell's nucleus (depicted here as the black central hole). In the cancerous cell, the mitochondria are spread throughout the cell, do not cluster, and under the same lighting produce a more subdued effect.

4 What is the impact of genetically modified organisms?

5 What makes people happy?

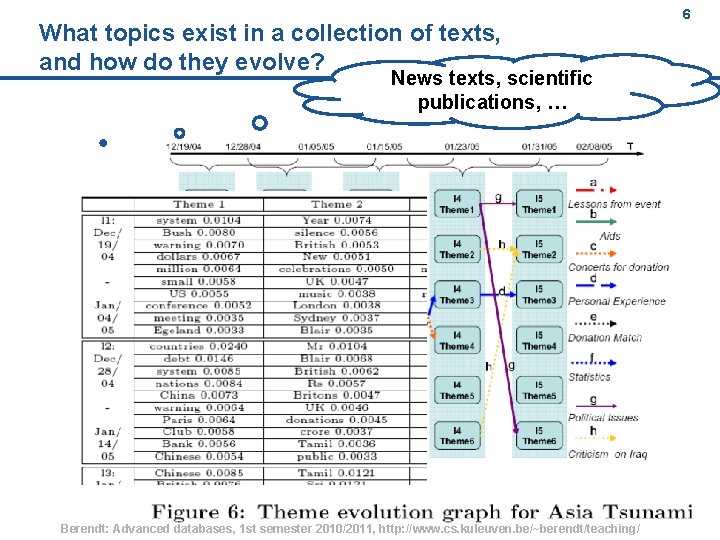

6 What topics exist in a collection of texts, and how do they evolve?

News texts, scientific publications, …


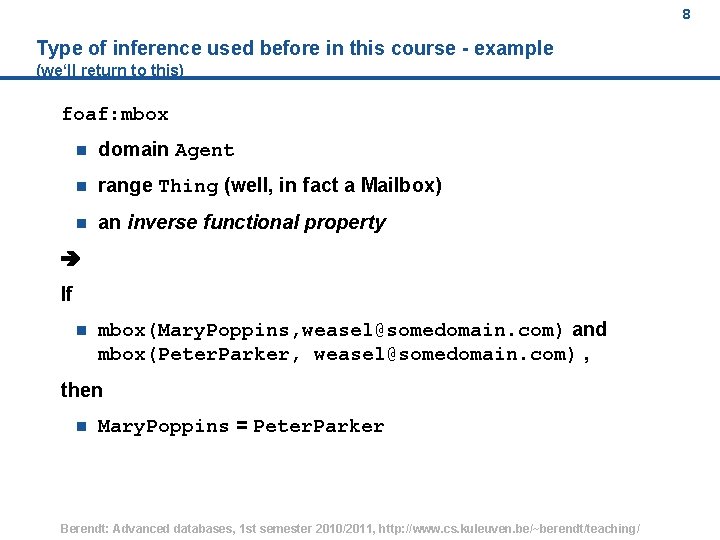

8 Type of inference used before in this course: an example (we'll return to this)

foaf:mbox
- domain: Agent
- range: Thing (well, in fact a Mailbox)
- an inverse functional property

If
- mbox(MaryPoppins, weasel@somedomain.com) and
- mbox(PeterParker, weasel@somedomain.com),

then
- MaryPoppins = PeterParker
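The inference above can be mimicked in a few lines. This is a minimal sketch, not an OWL reasoner: triples are plain Python tuples, and the set `INVERSE_FUNCTIONAL` and the function `infer_same_as` are hypothetical names chosen for illustration. Because foaf:mbox is inverse functional, any two subjects sharing an mbox value are concluded to be the same individual.

```python
from collections import defaultdict
from itertools import combinations
from typing import List, Set, Tuple

Triple = Tuple[str, str, str]  # (subject, property, object)

# Assumption: the properties declared inverse functional in our toy ontology.
INVERSE_FUNCTIONAL = {"foaf:mbox"}

def infer_same_as(triples: List[Triple]) -> Set[Tuple[str, str]]:
    """Pairs of subjects that must denote the same individual because they
    share a value of an inverse functional property."""
    holders = defaultdict(set)  # (property, value) -> subjects having that value
    for s, p, o in triples:
        if p in INVERSE_FUNCTIONAL:
            holders[(p, o)].add(s)
    same: Set[Tuple[str, str]] = set()
    for subjects in holders.values():
        # Every pair of distinct subjects sharing the value is identified.
        same.update(combinations(sorted(subjects), 2))
    return same

triples = [
    ("MaryPoppins", "foaf:mbox", "weasel@somedomain.com"),
    ("PeterParker", "foaf:mbox", "weasel@somedomain.com"),
]
print(infer_same_as(triples))
```

Note that this is deduction: given the property declaration, the conclusion follows necessarily.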

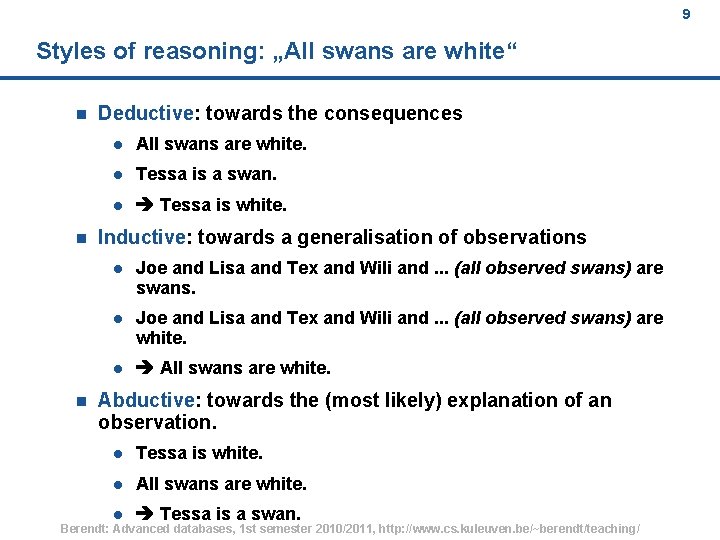

9 Styles of reasoning: "All swans are white"

- Deductive: towards the consequences
  - All swans are white.
  - Tessa is a swan.
  - Hence: Tessa is white.
- Inductive: towards a generalisation of observations
  - Joe and Lisa and Tex and Wili and ... (all observed swans) are swans.
  - Joe and Lisa and Tex and Wili and ... (all observed swans) are white.
  - Hence: All swans are white.
- Abductive: towards the (most likely) explanation of an observation
  - Tessa is white.
  - All swans are white.
  - Hence: Tessa is a swan.

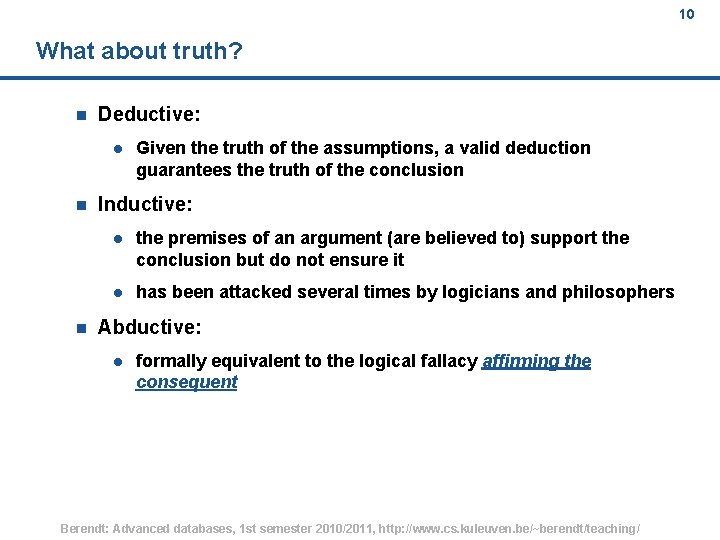

10 What about truth?

- Deductive:
  - Given the truth of the assumptions, a valid deduction guarantees the truth of the conclusion.
- Inductive:
  - The premises of an argument (are believed to) support the conclusion but do not ensure it.
  - Induction has been attacked several times by logicians and philosophers.
- Abductive:
  - Formally equivalent to the logical fallacy of affirming the consequent.

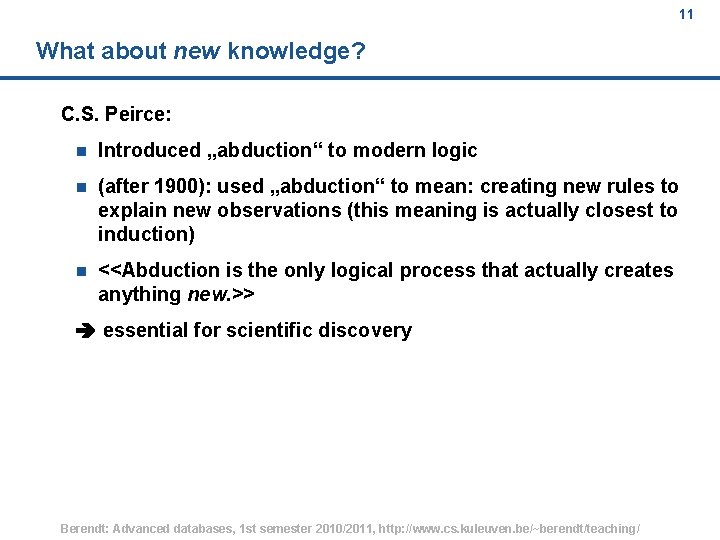

11 What about new knowledge?

C. S. Peirce:
- introduced "abduction" to modern logic;
- (after 1900) used "abduction" to mean creating new rules to explain new observations (this meaning is actually closest to induction);
- "Abduction is the only logical process that actually creates anything new."
  - essential for scientific discovery


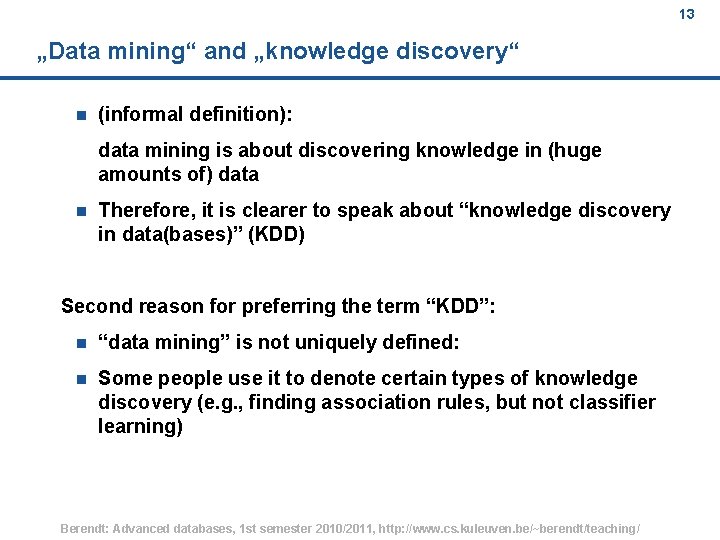

13 "Data mining" and "knowledge discovery"

- (Informal definition): data mining is about discovering knowledge in (huge amounts of) data.
- Therefore, it is clearer to speak about "knowledge discovery in data(bases)" (KDD).

Second reason for preferring the term "KDD":
- "data mining" is not uniquely defined:
  - Some people use it to denote certain types of knowledge discovery (e.g., finding association rules, but not classifier learning).

14 "Data mining" is generally inductive

- (Informal definition): data mining is about discovering knowledge in (huge amounts of) data:
  - looking at all the empirically observed swans,
  - finding they are white,
  - concluding that swans are white.

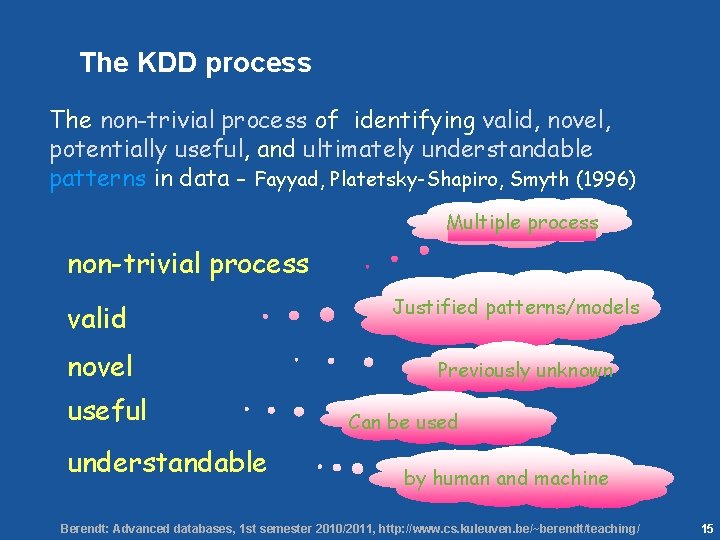

15 The KDD process

"The non-trivial process of identifying valid, novel, potentially useful, and ultimately understandable patterns in data." (Fayyad, Piatetsky-Shapiro, Smyth, 1996)

- non-trivial process: a multi-step process
- valid: justified patterns/models
- novel: previously unknown
- useful: can be used
- understandable: by human and machine

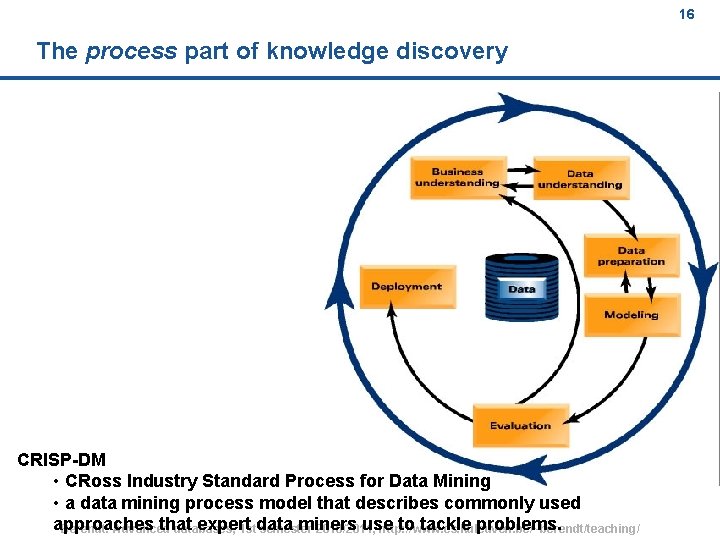

16 The process part of knowledge discovery

CRISP-DM
- CRoss Industry Standard Process for Data Mining
- a data mining process model that describes commonly used approaches that data mining experts use to tackle problems.

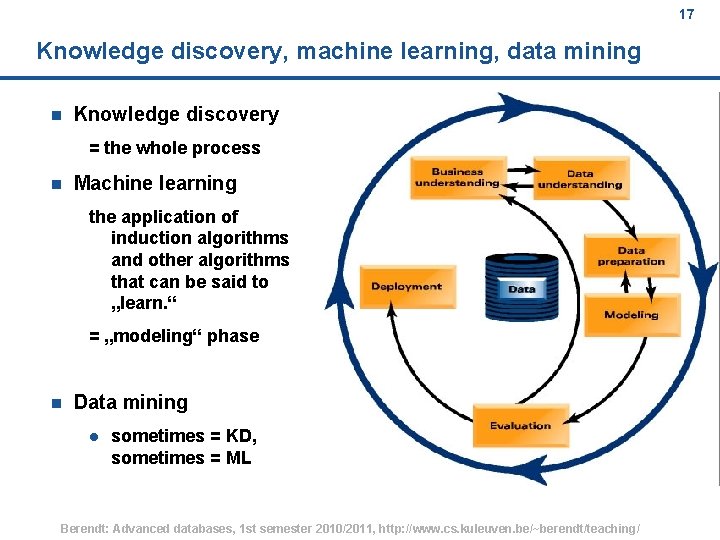

17 Knowledge discovery, machine learning, data mining

- Knowledge discovery = the whole process
- Machine learning = the application of induction algorithms and other algorithms that can be said to "learn" = the "modeling" phase
- Data mining:
  - sometimes = KD, sometimes = ML

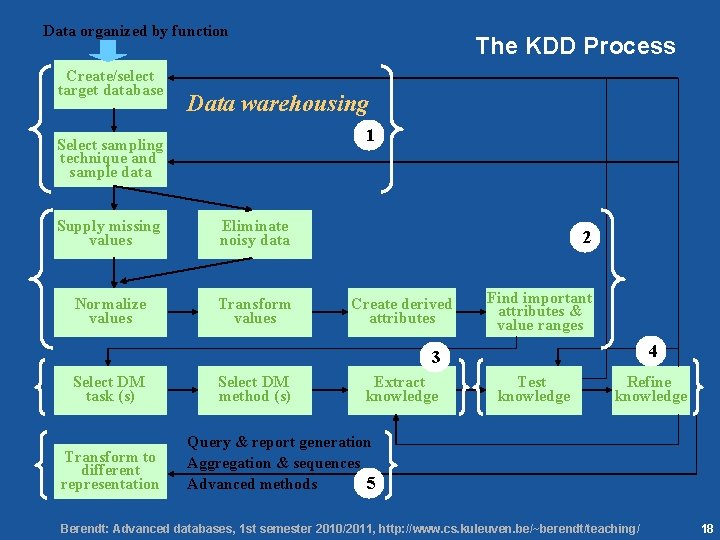

18 The KDD Process

(Figure: the KDD process, from data warehousing to knowledge extraction. Elements shown:)
- Data warehousing: data organized by function; create/select target database
- Select sampling technique and sample data; supply missing values; eliminate noisy data
- Normalize values; transform values; create derived attributes; find important attributes & value ranges
- Select DM task(s); transform to different representation; select DM method(s)
- Extract knowledge; test knowledge; refine knowledge
- Query & report generation; aggregation & sequences; advanced methods

![Main contributing areas of KDD](http://slidetodoc.com/presentation_image/e1b0a85c27e2b5e9a283a69de29961cd/image-19.jpg)

19 Main Contributing Areas of KDD

- Databases: store, access, search, update data (deduction)
  - [data warehouses: integrated data]
  - [OLAP: On-Line Analytical Processing]
- Statistics: infer information from data (deduction & induction, mainly numeric data)
- Machine learning: computer algorithms that improve automatically through experience (mainly induction, symbolic data)

KDD draws on all three areas.


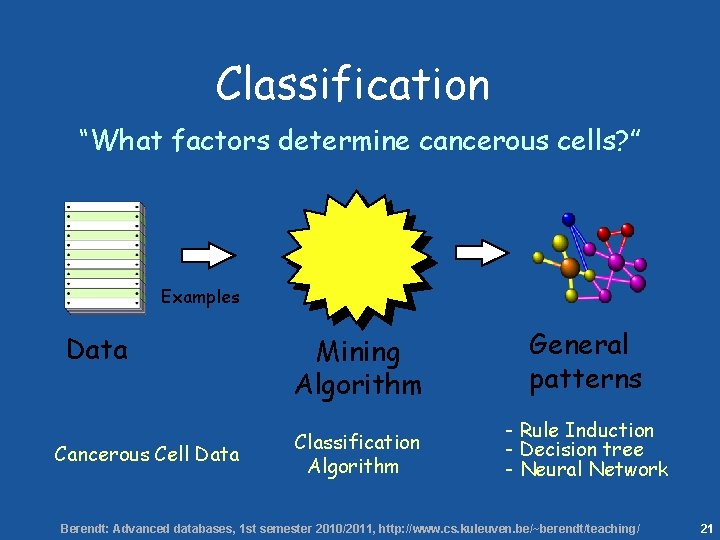

21 Classification

"What factors determine cancerous cells?"

Examples (cancerous cell data) → data mining algorithm → general patterns

Classification algorithms:
- Rule induction
- Decision tree
- Neural network

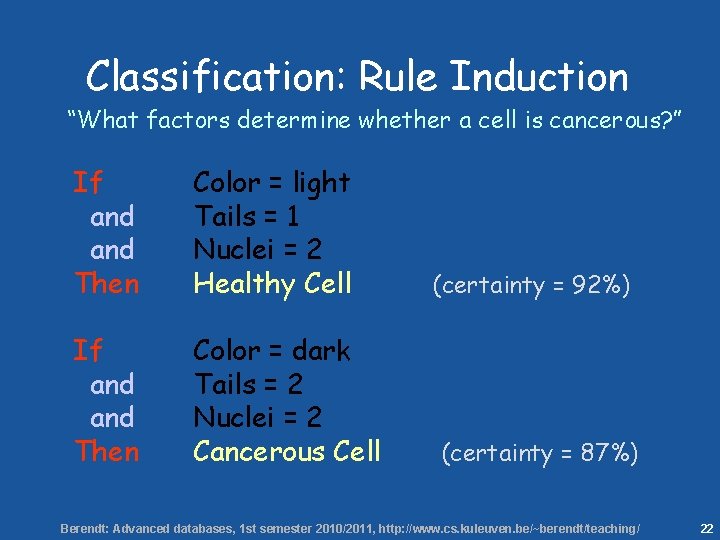

22 Classification: Rule Induction

"What factors determine whether a cell is cancerous?"

- If Color = light and Tails = 1 and Nuclei = 2, then Healthy Cell (certainty = 92%)
- If Color = dark and Tails = 2 and Nuclei = 2, then Cancerous Cell (certainty = 87%)

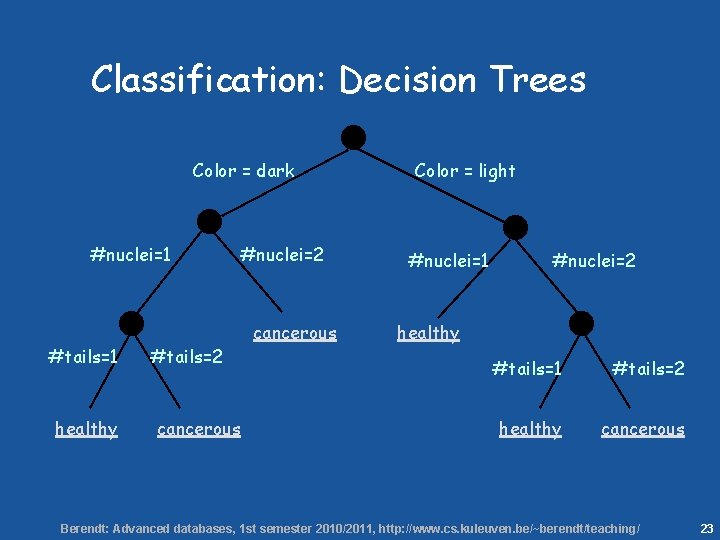

23 Classification: Decision Trees

- Color = dark
  - #nuclei = 1
    - #tails = 1: healthy
    - #tails = 2: cancerous
  - #nuclei = 2: cancerous
- Color = light
  - #nuclei = 1: healthy
  - #nuclei = 2
    - #tails = 1: healthy
    - #tails = 2: cancerous
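A decision tree is just a set of nested tests, one per internal node. The sketch below encodes one reading of the flattened tree on this slide; the function name `classify_cell` is hypothetical, and it assumes only the attribute values that occur in the tree (dark/light, 1 or 2 nuclei, 1 or 2 tails).

```python
def classify_cell(color: str, nuclei: int, tails: int) -> str:
    """Walk the tree from the root (Color) down to a leaf label."""
    if color == "dark":
        if nuclei == 1:
            # Dark cell with one nucleus: the number of tails decides.
            return "healthy" if tails == 1 else "cancerous"
        return "cancerous"  # dark cell with two nuclei
    # Assumption: any other color is treated as "light".
    if nuclei == 1:
        return "healthy"
    # Light cell with two nuclei: the number of tails decides.
    return "healthy" if tails == 1 else "cancerous"

print(classify_cell("light", 2, 2))
```

Each root-to-leaf path corresponds to one classification rule of the form shown on the previous slide.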

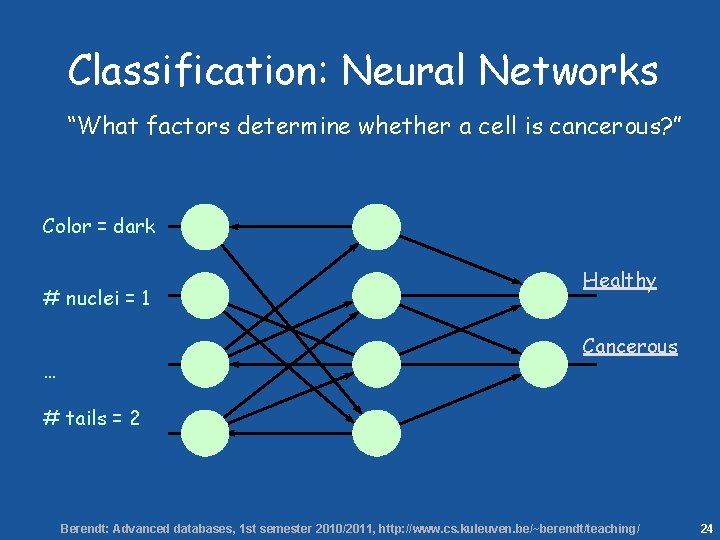

24 Classification: Neural Networks

"What factors determine whether a cell is cancerous?"

(Figure: a neural network with inputs such as Color = dark, # nuclei = 1, …, # tails = 2, and outputs Healthy and Cancerous.)

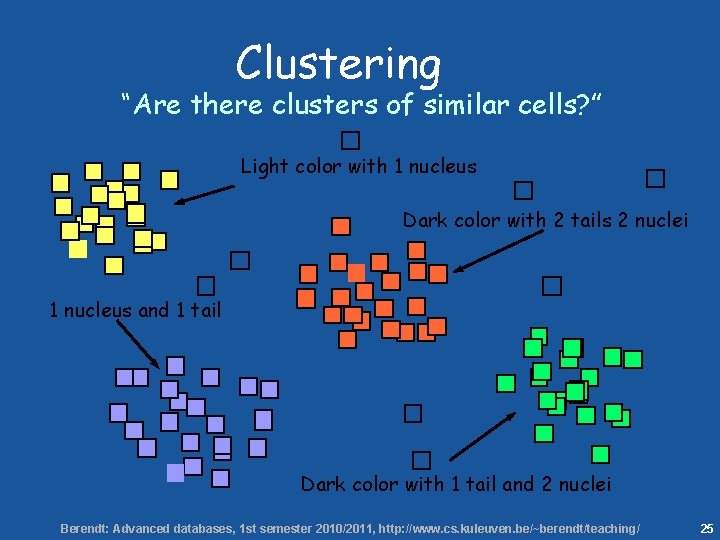

25 Clustering

"Are there clusters of similar cells?"

(Figure: cells grouped into clusters labelled:)
- Light color with 1 nucleus
- Dark color with 2 tails, 2 nuclei
- 1 nucleus and 1 tail
- Dark color with 1 tail and 2 nuclei

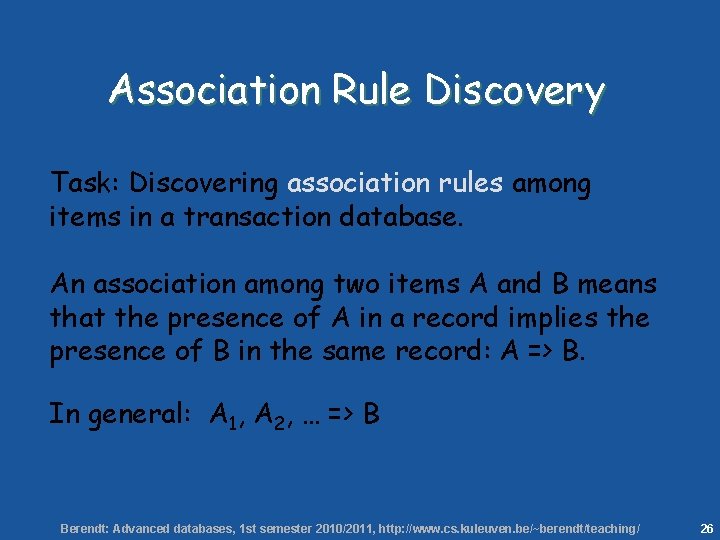

26 Association Rule Discovery

Task: Discovering association rules among items in a transaction database.

An association between two items A and B means that the presence of A in a record implies (with a certain confidence) the presence of B in the same record: $A \Rightarrow B$.

In general: $A_1, A_2, \ldots \Rightarrow B$

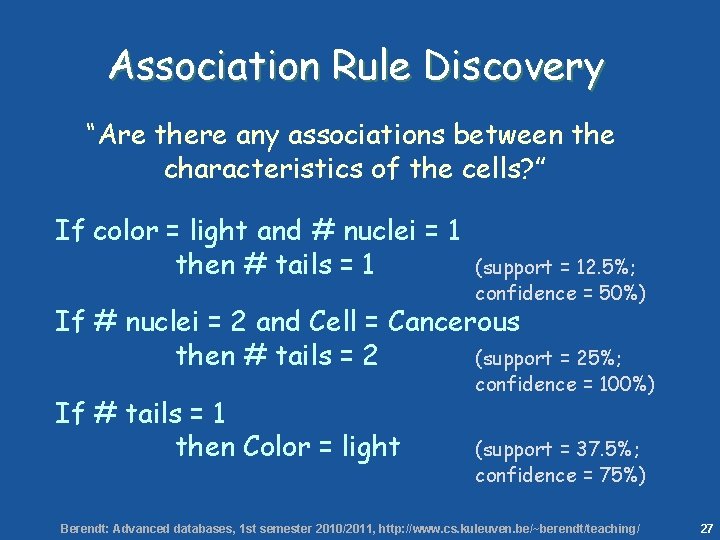

27 Association Rule Discovery

"Are there any associations between the characteristics of the cells?"

- If color = light and # nuclei = 1 then # tails = 1 (support = 12.5%; confidence = 50%)
- If # nuclei = 2 and Cell = Cancerous then # tails = 2 (support = 25%; confidence = 100%)
- If # tails = 1 then Color = light (support = 37.5%; confidence = 75%)
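Support and confidence can be computed directly by counting records. The sketch below uses the standard definitions: the support of a rule X ⇒ Y is the fraction of records that contain both X and Y, and its confidence is support(X ∪ Y) / support(X). The helper names and the four toy records are hypothetical; they are not the data behind the percentages on this slide.

```python
from typing import Dict, List

Record = Dict[str, object]

def matches(record: Record, conditions: Record) -> bool:
    """True if the record has every attribute=value pair in `conditions`."""
    return all(record.get(k) == v for k, v in conditions.items())

def support(records: List[Record], items: Record) -> float:
    """Fraction of records that contain all the given attribute=value items."""
    return sum(matches(r, items) for r in records) / len(records)

def confidence(records: List[Record], lhs: Record, rhs: Record) -> float:
    """Among the records matching `lhs`, the fraction that also match `rhs`."""
    covered = [r for r in records if matches(r, lhs)]
    if not covered:
        return 0.0
    return sum(matches(r, rhs) for r in covered) / len(covered)

# Hypothetical toy records.
cells = [
    {"color": "light", "tails": 1, "nuclei": 1},
    {"color": "light", "tails": 1, "nuclei": 2},
    {"color": "dark", "tails": 1, "nuclei": 2},
    {"color": "dark", "tails": 2, "nuclei": 2},
]
lhs, rhs = {"tails": 1}, {"color": "light"}
print(support(cells, {**lhs, **rhs}), confidence(cells, lhs, rhs))
```

Here the rule "if # tails = 1 then color = light" holds in 2 of 4 records (support 50%) and in 2 of the 3 records with one tail (confidence about 67%).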

28 Many Other Data Mining Techniques

- Genetic algorithms
- Rough sets
- Bayesian networks
- Text mining
- Statistics
- Time series


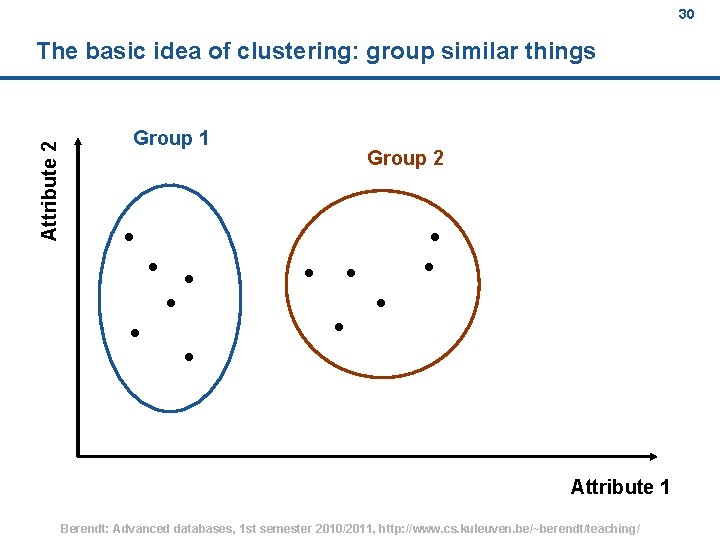

30 The basic idea of clustering: group similar things

(Figure: objects plotted by Attribute 1 and Attribute 2, forming Group 1 and Group 2.)

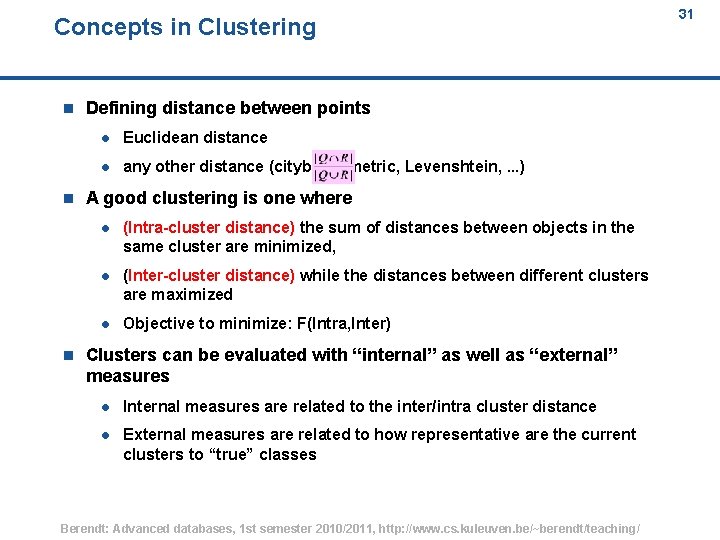

31 Concepts in Clustering

- Defining distance between points
  - Euclidean distance
  - any other distance (city-block metric, Levenshtein, ...)
- A good clustering is one where
  - (intra-cluster distance) the sum of distances between objects in the same cluster is minimized,
  - (inter-cluster distance) while the distances between different clusters are maximized.
  - Objective to minimize: F(Intra, Inter)
- Clusters can be evaluated with "internal" as well as "external" measures
  - Internal measures are related to the inter-/intra-cluster distance.
  - External measures are related to how representative the current clusters are of the "true" classes.
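The three distances named above can be written as small functions. This is an illustrative sketch; the function names are hypothetical, and the Levenshtein version is the usual dynamic-programming formulation that keeps one row of the edit-distance table at a time.

```python
import math
from typing import Sequence

def euclidean(x: Sequence[float], y: Sequence[float]) -> float:
    """Straight-line distance: sqrt(sum_i (x_i - y_i)^2)."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))

def cityblock(x: Sequence[float], y: Sequence[float]) -> float:
    """City-block (Manhattan) distance: sum_i |x_i - y_i|."""
    return sum(abs(a - b) for a, b in zip(x, y))

def levenshtein(s: str, t: str) -> int:
    """Minimum number of single-character insertions, deletions and
    substitutions that turn s into t."""
    prev = list(range(len(t) + 1))  # distances from "" to prefixes of t
    for i, cs in enumerate(s, start=1):
        cur = [i]
        for j, ct in enumerate(t, start=1):
            cur.append(min(prev[j] + 1,                # delete cs
                           cur[j - 1] + 1,             # insert ct
                           prev[j - 1] + (cs != ct)))  # substitute or match
        prev = cur
    return prev[-1]

print(euclidean((0, 0), (3, 4)), cityblock((0, 0), (3, 4)), levenshtein("kitten", "sitting"))
```

Euclidean and city-block distances need numeric attributes; Levenshtein works directly on strings, which is why it appears in text-oriented clustering.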

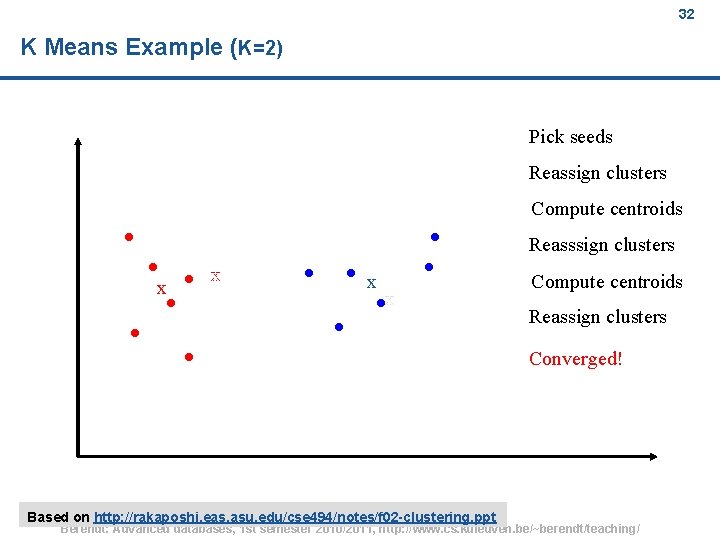

32 K Means Example (K = 2)

Pick seeds → reassign clusters → compute centroids → reassign clusters → compute centroids → reassign clusters → converged!

Based on http://rakaposhi.eas.asu.edu/cse494/notes/f02-clustering.ppt
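The loop in the example (pick seeds, reassign, recompute centroids, until nothing changes) can be sketched as follows. Assumptions: Euclidean distance, seeds drawn at random from the data points, and an empty cluster simply keeps its old centroid. The names `kmeans` and `nearest` and the six points are hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]

def nearest(p: Point, centroids: Sequence[Point]) -> int:
    """Index of the centroid closest to p."""
    return min(range(len(centroids)), key=lambda c: math.dist(p, centroids[c]))

def kmeans(points: Sequence[Point], k: int, seed: int = 0,
           max_iter: int = 100) -> Tuple[List[Point], List[int]]:
    """Lloyd-style k-means; returns (centroids, cluster index of each point)."""
    rng = random.Random(seed)
    # Pick seeds: k distinct data points chosen at random.
    centroids: List[Point] = [tuple(p) for p in rng.sample(list(points), k)]
    assignment: List[int] = []
    for _ in range(max_iter):
        # Reassign clusters: each point goes to its nearest centroid.
        new_assignment = [nearest(p, centroids) for p in points]
        if new_assignment == assignment:
            break  # converged: no point changed cluster
        assignment = new_assignment
        # Compute centroids: mean of the points assigned to each cluster.
        for c in range(k):
            members = [p for p, a in zip(points, assignment) if a == c]
            if members:
                centroids[c] = tuple(sum(xs) / len(members) for xs in zip(*members))
    return centroids, assignment

points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (8.5, 9.0), (9.0, 8.0)]
centroids, assignment = kmeans(points, k=2)
print(assignment)
```

Because the result depends on the seeds, k-means is often restarted with several seeds and the run with the lowest within-cluster dispersion is kept.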

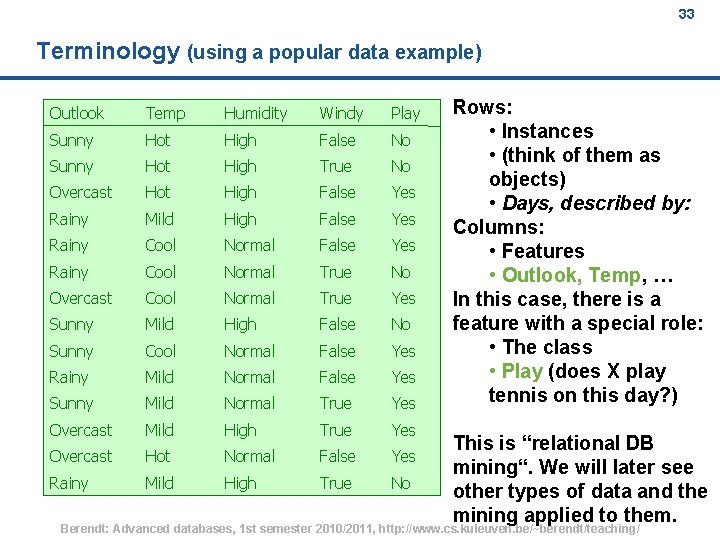

33 Terminology (using a popular data example)

| Outlook | Temp | Humidity | Windy | Play |
|---|---|---|---|---|
| Sunny | Hot | High | False | No |
| Sunny | Hot | High | True | No |
| Overcast | Hot | High | False | Yes |
| Rainy | Mild | High | False | Yes |
| Rainy | Cool | Normal | True | No |
| Overcast | Cool | Normal | True | Yes |
| Sunny | Mild | High | False | No |
| Sunny | Cool | Normal | False | Yes |
| Rainy | Mild | Normal | False | Yes |
| Sunny | Mild | Normal | True | Yes |
| Overcast | Mild | High | True | Yes |
| Overcast | Hot | Normal | False | Yes |
| Rainy | Mild | High | True | No |

- Rows: instances (think of them as objects); here: days, described by
- Columns: features (Outlook, Temp, …)
- In this case, there is a feature with a special role: the class, Play (does X play tennis on this day?)

This is "relational DB mining". We will later see other types of data and the mining applied to them.

34 Forms of clustering

- Flat vs. hierarchical:
  - divide into (disjoint or overlapping) clusters, or divide into clusters which each contain further clusters?
- Hard vs. soft:
  - Are the clusters mutually disjoint, or can they overlap?

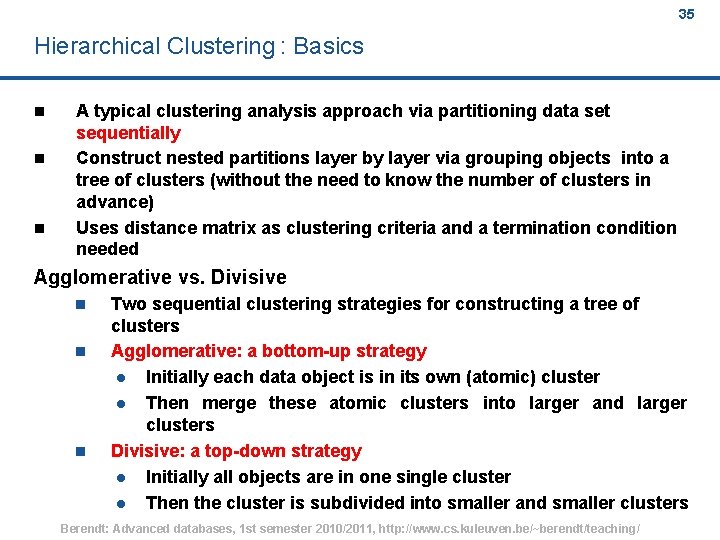

35 Hierarchical Clustering: Basics

- A typical clustering analysis approach that partitions the data set sequentially
- Constructs nested partitions layer by layer by grouping objects into a tree of clusters (without the need to know the number of clusters in advance)
- Uses a distance matrix as the clustering criterion; a termination condition is needed

Agglomerative vs. divisive
- Two sequential clustering strategies for constructing a tree of clusters
- Agglomerative: a bottom-up strategy
  - Initially each data object is in its own (atomic) cluster.
  - Then these atomic clusters are merged into larger and larger clusters.
- Divisive: a top-down strategy
  - Initially all objects are in one single cluster.
  - Then the cluster is subdivided into smaller and smaller clusters.

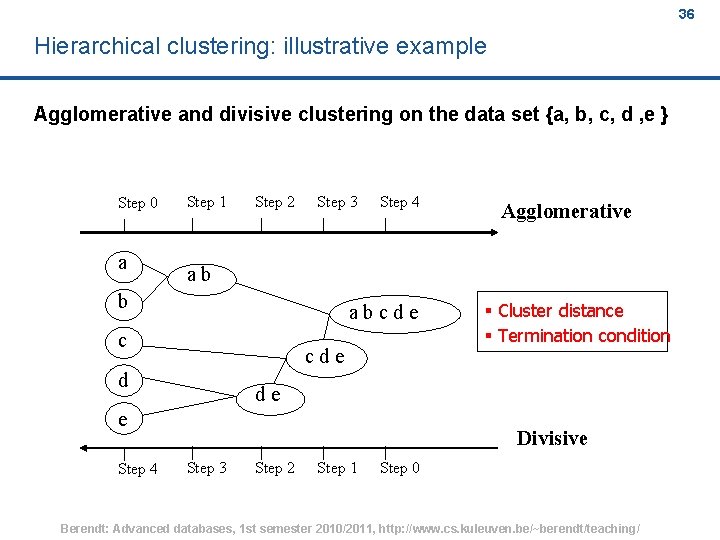

36 Hierarchical clustering: illustrative example

Agglomerative and divisive clustering on the data set {a, b, c, d, e}:

- Step 0: {a}, {b}, {c}, {d}, {e}
- Step 1: {a, b}, {c}, {d}, {e}
- Step 2: {a, b}, {c}, {d, e}
- Step 3: {a, b}, {c, d, e}
- Step 4: {a, b, c, d, e}

Agglomerative clustering runs through these steps from 0 to 4 (merging); divisive clustering runs from 4 back to 0 (splitting). Both need:
- a cluster distance
- a termination condition

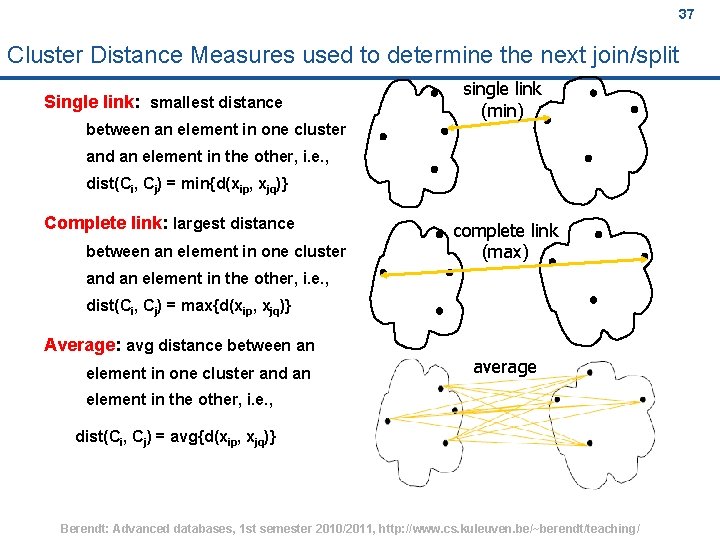

37 Cluster Distance Measures (used to determine the next join/split)

- Single link (min): smallest distance between an element in one cluster and an element in the other, i.e., $\mathrm{dist}(C_i, C_j) = \min_{p,q} d(x_{ip}, x_{jq})$
- Complete link (max): largest distance between an element in one cluster and an element in the other, i.e., $\mathrm{dist}(C_i, C_j) = \max_{p,q} d(x_{ip}, x_{jq})$
- Average: average distance between an element in one cluster and an element in the other, i.e., $\mathrm{dist}(C_i, C_j) = \mathrm{avg}_{p,q}\, d(x_{ip}, x_{jq})$
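All three measures are aggregates (min, max, mean) over the same set of cross-cluster distances $d(x_{ip}, x_{jq})$. A minimal sketch, assuming Euclidean distance between points; the function names and the two toy clusters are hypothetical.

```python
import math
from itertools import product
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]

def pairwise(ci: Sequence[Point], cj: Sequence[Point]) -> List[float]:
    """All distances d(x_ip, x_jq) between a point of C_i and a point of C_j."""
    return [math.dist(p, q) for p, q in product(ci, cj)]

def single_link(ci: Sequence[Point], cj: Sequence[Point]) -> float:
    return min(pairwise(ci, cj))    # closest pair

def complete_link(ci: Sequence[Point], cj: Sequence[Point]) -> float:
    return max(pairwise(ci, cj))    # farthest pair

def average_link(ci: Sequence[Point], cj: Sequence[Point]) -> float:
    d = pairwise(ci, cj)
    return sum(d) / len(d)          # mean over all pairs

ci = [(0.0, 0.0), (0.0, 1.0)]
cj = [(3.0, 0.0), (4.0, 0.0)]
print(single_link(ci, cj), complete_link(ci, cj), average_link(ci, cj))
```

Single link tends to produce long, chained clusters; complete link favours compact ones; average link lies in between.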

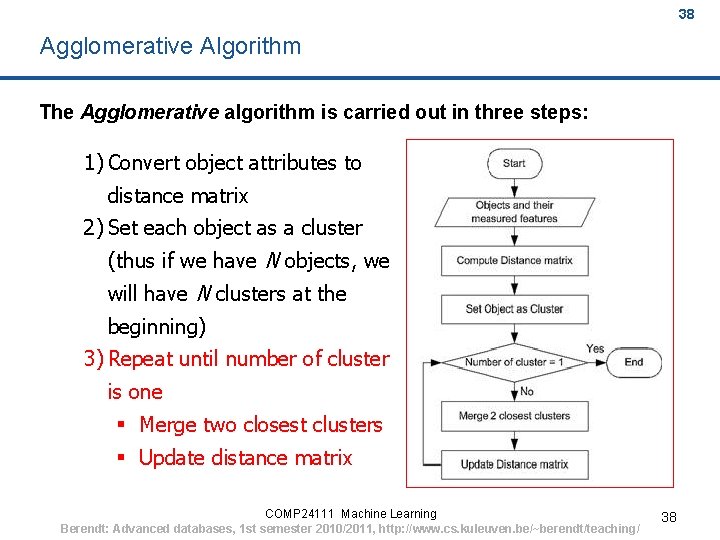

38 Agglomerative Algorithm

The agglomerative algorithm is carried out in three steps:
1. Convert object attributes to a distance matrix.
2. Set each object as a cluster (thus if we have N objects, we will have N clusters at the beginning).
3. Repeat until the number of clusters is one:
   - Merge the two closest clusters.
   - Update the distance matrix.

(Source: COMP24111 Machine Learning)
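The three steps translate into a short loop. This sketch uses single link and, instead of updating a cluster-level distance matrix, recomputes single-link distances from the object-level matrix on every pass, which is simpler but slower. The function name is hypothetical, and so are the coordinates of A to F in the usage example: they are not given on the slides, but were chosen so that the merge distances come out as 0.50, 0.71, 1.00, 1.41 and 2.50, as in the dendrogram example on slide 39.

```python
import math
from typing import Dict, FrozenSet, List, Tuple

Point = Tuple[float, float]
Merge = Tuple[FrozenSet[str], FrozenSet[str], float]

def agglomerative_single_link(points: Dict[str, Point]) -> List[Merge]:
    """Merge history (cluster_a, cluster_b, distance), until one cluster remains."""
    names = sorted(points)
    # Step 1: distance matrix between objects.
    d = {(a, b): math.dist(points[a], points[b]) for a in names for b in names}
    # Step 2: each object starts as its own cluster.
    clusters: List[FrozenSet[str]] = [frozenset([n]) for n in names]
    merges: List[Merge] = []
    # Step 3: repeat until the number of clusters is one.
    while len(clusters) > 1:
        best = None  # (distance, i, j) of the closest pair of clusters so far
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                # Single link: smallest object distance across the two clusters.
                dist = min(d[(a, b)] for a in clusters[i] for b in clusters[j])
                if best is None or dist < best[0]:
                    best = (dist, i, j)
        dist, i, j = best
        merges.append((clusters[i], clusters[j], dist))
        # Merge the two closest clusters.
        merged = clusters[i] | clusters[j]
        clusters = [c for k, c in enumerate(clusters) if k not in (i, j)] + [merged]
    return merges

# Hypothetical coordinates (not from the slides).
pts = {"A": (1.0, 1.0), "B": (1.5, 1.5), "C": (5.0, 5.0),
       "D": (3.0, 4.0), "E": (4.0, 4.0), "F": (3.0, 3.5)}
merges = agglomerative_single_link(pts)
for a, b, dist in merges:
    print(sorted(a), sorted(b), round(dist, 2))
```

The list of merges, read with their distances, is exactly the information a dendrogram draws.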

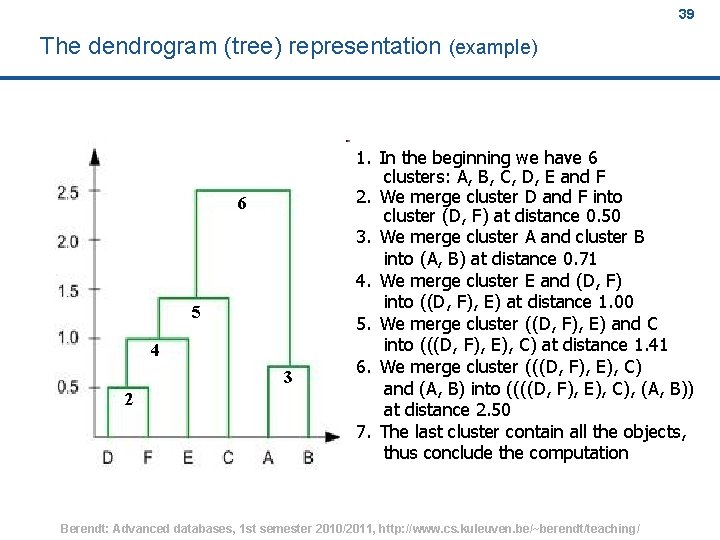

39 The dendrogram (tree) representation (example)

1. In the beginning we have 6 clusters: A, B, C, D, E and F.
2. We merge clusters D and F into cluster (D, F) at distance 0.50.
3. We merge cluster A and cluster B into (A, B) at distance 0.71.
4. We merge cluster E and (D, F) into ((D, F), E) at distance 1.00.
5. We merge cluster ((D, F), E) and C into (((D, F), E), C) at distance 1.41.
6. We merge cluster (((D, F), E), C) and (A, B) into ((((D, F), E), C), (A, B)) at distance 2.50.
7. The last cluster contains all the objects, which concludes the computation.


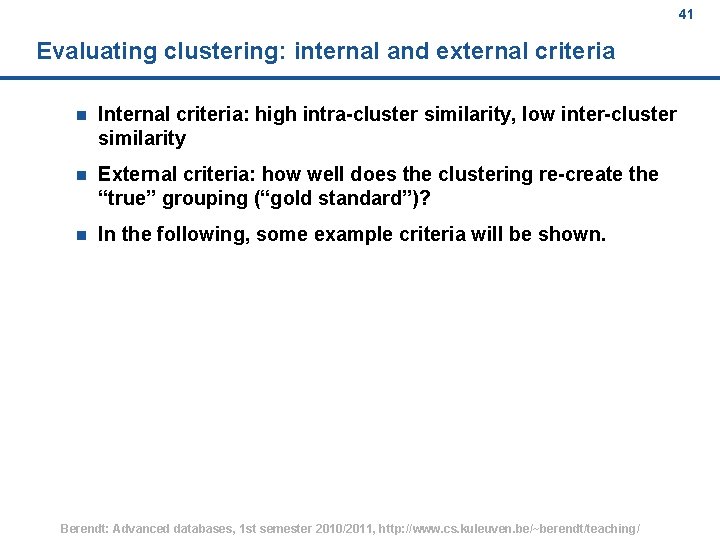

41 Evaluating clustering: internal and external criteria

- Internal criteria: high intra-cluster similarity, low inter-cluster similarity
- External criteria: how well does the clustering re-create the "true" grouping ("gold standard")?
- In the following, some example criteria are shown.

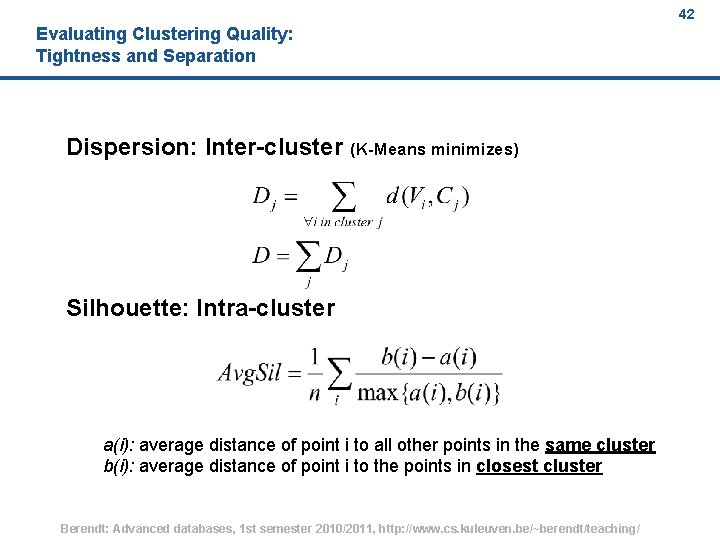

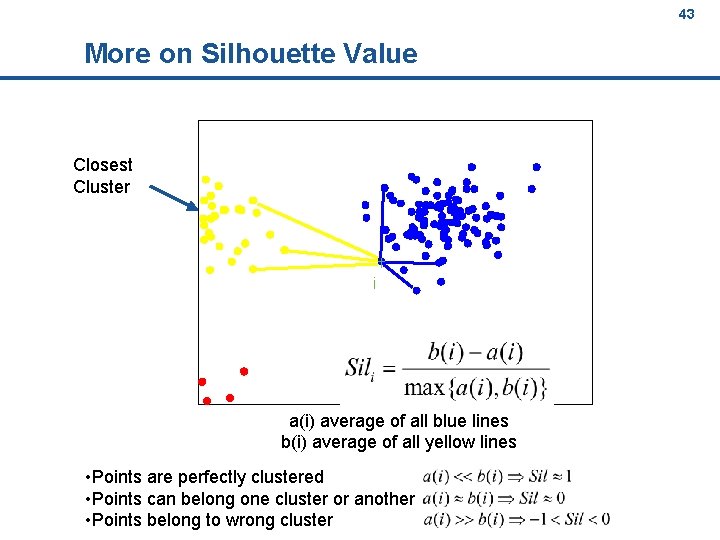

42 Evaluating Clustering Quality: Tightness and Separation

- Dispersion: intra-cluster tightness (this is what k-means minimizes)
- Silhouette: combines intra-cluster tightness with separation from the other clusters
  - a(i): average distance of point i to all other points in the same cluster
  - b(i): average distance of point i to the points in the closest other cluster
  - $s(i) = \dfrac{b(i) - a(i)}{\max\{a(i), b(i)\}}$

43 More on Silhouette Value

(Figure: point i, its own cluster and the closest cluster. a(i) is the average of all blue lines, i.e., distances from i to the other points of its own cluster; b(i) is the average of all yellow lines, i.e., distances from i to the points of the closest cluster.)

- s(i) close to 1: points are perfectly clustered
- s(i) close to 0: points could belong to one cluster or the other
- s(i) close to -1: points belong to the wrong cluster
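The silhouette formula can be computed point by point. A minimal sketch, assuming Euclidean distance and at least two clusters. Giving a point that is alone in its cluster s(i) = 0 is a common convention, not something the slides specify. The function name and toy points are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]

def silhouette(points: Sequence[Point], labels: Sequence[int]) -> List[float]:
    """Per-point s(i) = (b(i) - a(i)) / max(a(i), b(i))."""
    clusters = set(labels)
    scores: List[float] = []
    for i, (p, own_label) in enumerate(zip(points, labels)):
        own = [math.dist(p, q) for j, (q, lab) in enumerate(zip(points, labels))
               if lab == own_label and j != i]
        if not own:
            scores.append(0.0)  # convention for singleton clusters
            continue
        a = sum(own) / len(own)  # a(i): mean distance within the own cluster
        # b(i): mean distance to the points of the closest other cluster.
        b = min(
            sum(math.dist(p, q) for q, lab in zip(points, labels) if lab == c)
            / sum(1 for lab in labels if lab == c)
            for c in clusters if c != own_label
        )
        m = max(a, b)
        scores.append((b - a) / m if m > 0 else 0.0)
    return scores

pts = [(0.0, 0.0), (0.0, 1.0), (5.0, 0.0), (5.0, 1.0)]
good = silhouette(pts, [0, 0, 1, 1])  # compact, well-separated clusters
bad = silhouette(pts, [0, 1, 0, 1])   # each cluster mixes far-apart points
print(good, bad)
```

Averaging s(i) over all points gives a single internal quality score for the whole clustering.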

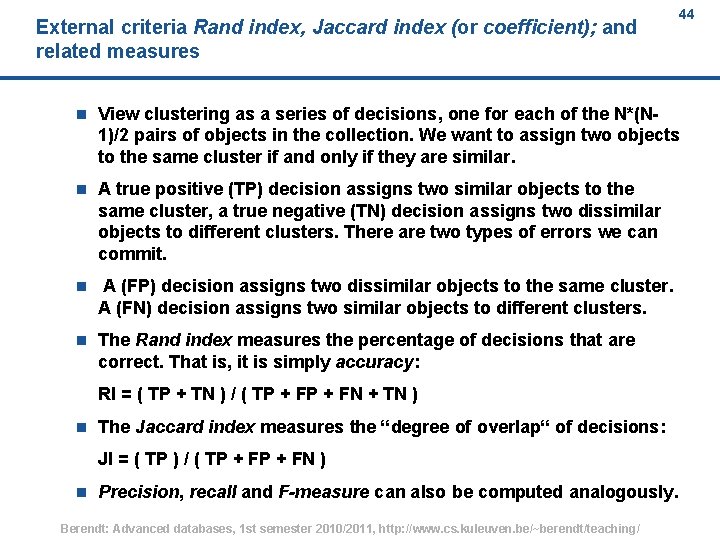

44 External criteria: Rand index, Jaccard index (or coefficient), and related measures

- View clustering as a series of decisions, one for each of the N(N-1)/2 pairs of objects in the collection. We want to assign two objects to the same cluster if and only if they are similar.
- A true positive (TP) decision assigns two similar objects to the same cluster; a true negative (TN) decision assigns two dissimilar objects to different clusters. There are two types of errors we can commit:
- A false positive (FP) decision assigns two dissimilar objects to the same cluster. A false negative (FN) decision assigns two similar objects to different clusters.
- The Rand index measures the percentage of decisions that are correct. That is, it is simply accuracy: $RI = \dfrac{TP + TN}{TP + FP + FN + TN}$
- The Jaccard index measures the "degree of overlap" of decisions: $JI = \dfrac{TP}{TP + FP + FN}$
- Precision, recall and F-measure can also be computed analogously.
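The pair-counting view translates directly into code: loop over all N(N-1)/2 pairs, compare "same cluster?" with "same gold class?", and tally the four outcomes. The function names and labels below are hypothetical, and returning 1.0 for the Jaccard index when no pair is placed together by either grouping is an assumed convention.

```python
from itertools import combinations
from typing import Hashable, Sequence, Tuple

def pair_counts(pred: Sequence[Hashable], gold: Sequence[Hashable]) -> Tuple[int, int, int, int]:
    """Count (TP, FP, FN, TN) over all N(N-1)/2 pairs of objects."""
    tp = fp = fn = tn = 0
    for i, j in combinations(range(len(pred)), 2):
        same_pred = pred[i] == pred[j]  # the clustering put the pair together
        same_gold = gold[i] == gold[j]  # the gold standard puts them together
        if same_pred and same_gold:
            tp += 1
        elif same_pred:
            fp += 1
        elif same_gold:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn

def rand_index(pred, gold) -> float:
    tp, fp, fn, tn = pair_counts(pred, gold)
    return (tp + tn) / (tp + fp + fn + tn)

def jaccard_index(pred, gold) -> float:
    tp, fp, fn, _ = pair_counts(pred, gold)
    return tp / (tp + fp + fn) if tp + fp + fn else 1.0

pred = [0, 0, 0, 1, 1, 1]
gold = ["x", "x", "y", "y", "y", "y"]
print(pair_counts(pred, gold), rand_index(pred, gold), jaccard_index(pred, gold))
```

Because TN pairs usually dominate, the Rand index tends to look high; the Jaccard index ignores TN and is therefore stricter.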

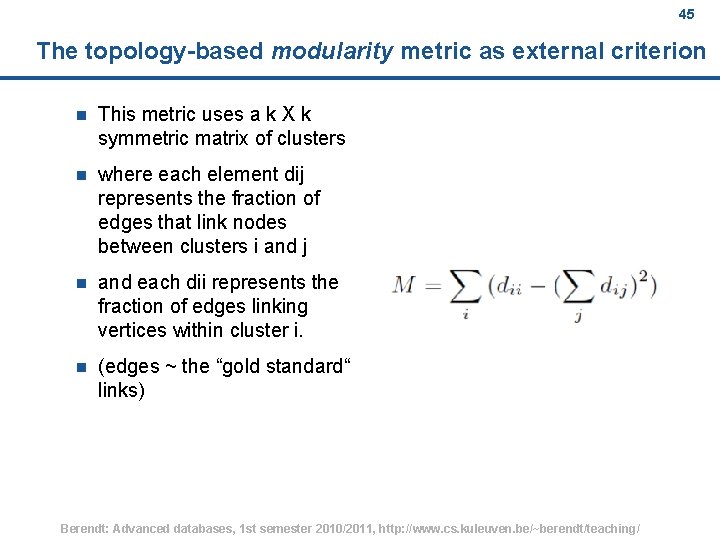

45 The topology-based modularity metric as external criterion

- This metric uses a $k \times k$ symmetric matrix of clusters,
- where each element $d_{ij}$ represents the fraction of edges that link nodes between clusters i and j,
- and each $d_{ii}$ represents the fraction of edges linking vertices within cluster i.
- (edges ~ the "gold standard" links)
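The slide defines the matrix but not the score computed from it. Assuming the standard modularity of Newman and Girvan, $Q = \sum_i \big(d_{ii} - a_i^2\big)$ with $a_i = \sum_j d_{ij}$, a sketch looks as follows. Two further assumptions: each edge between clusters i and j contributes half to $d_{ij}$ and half to $d_{ji}$, so that the symmetric matrix sums to 1, and clusters are numbered 0 to k-1. The function name and toy graph are hypothetical.

```python
from typing import Dict, Hashable, Iterable, List, Tuple

def modularity(edges: Iterable[Tuple[Hashable, Hashable]], cluster: Dict[Hashable, int]) -> float:
    """Q = sum_i (d_ii - a_i^2), a_i = sum_j d_ij; clusters numbered 0..k-1."""
    edge_list = list(edges)
    m = len(edge_list)
    k = max(cluster.values()) + 1
    d: List[List[float]] = [[0.0] * k for _ in range(k)]
    for u, v in edge_list:
        cu, cv = cluster[u], cluster[v]
        if cu == cv:
            d[cu][cu] += 1.0 / m  # edge inside cluster cu
        else:
            d[cu][cv] += 0.5 / m  # edge between two clusters,
            d[cv][cu] += 0.5 / m  # split symmetrically
    return sum(d[i][i] - sum(d[i]) ** 2 for i in range(k))

# Hypothetical toy graph: two triangles joined by a single edge.
edges = [(0, 1), (1, 2), (0, 2), (3, 4), (4, 5), (3, 5), (2, 3)]
print(modularity(edges, {0: 0, 1: 0, 2: 0, 3: 1, 4: 1, 5: 1}))
```

Q compares the fraction of within-cluster edges with what would be expected at random given the cluster sizes; a clustering that puts everything in one cluster scores 0.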


47 The steps of text mining

1. Application understanding
2. Corpus generation
3. Data understanding
4. Text preprocessing
5. Search for patterns / modelling
   - Topical analysis
   - Sentiment analysis / opinion mining
6. Evaluation
7. Deployment

48 Application understanding; Corpus generation

- What is the question?
- What is the context?
- What could be interesting sources, and where can they be found?
- Crawl
- Use a search engine and/or archive
  - Google blogs search
  - Technorati
  - Blogdigger
  - ...

49 The goal: text representation

- Basic idea:
  - Keywords are extracted from texts.
  - These keywords describe the (usually) topical content of Web pages and other text contributions.
- Based on the vector space model of document collections:
  - Each unique word in a corpus of Web pages = one dimension
  - Each page(view) is a vector with non-zero weight for each word in that page(view) and zero weight for other words.
- Words become "features" (in a data-mining sense).

50 Data Preparation Tasks for Mining Text Data

Feature representation for texts
- Each text p is represented as a k-dimensional feature vector, where k is the total number of features extracted from the site into a global dictionary.
- The feature vectors obtained are organized into an inverted file structure containing a dictionary of all extracted features and posting files for pageviews.

Conceptually, the inverted file structure represents a document-feature matrix, where each row is the feature vector for a page and each column is a feature.

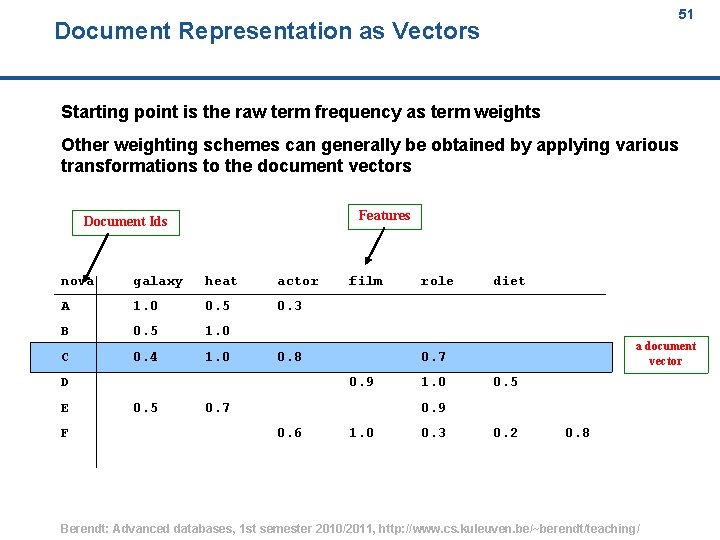

51 Document Representation as Vectors

- The starting point is the raw term frequency as term weights.
- Other weighting schemes can generally be obtained by applying various transformations to the document vectors.

(Table: a document-feature matrix with document IDs A to F as rows and the features nova, galaxy, heat, actor, film, role, diet as columns; each row is a document vector, and most entries are zero.)
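Building raw term-frequency vectors takes one pass for the vocabulary and one pass per document. A minimal sketch, assuming a deliberately crude tokenizer (lower-case letters only, no stemming, no stop-word removal); the function name and the two toy texts are hypothetical.

```python
import re
from collections import Counter
from typing import Dict, List, Tuple

def tf_matrix(docs: Dict[str, str]) -> Tuple[List[str], Dict[str, List[int]]]:
    """Raw term-frequency vectors: one dimension per unique word in the corpus."""
    tokenized = {doc_id: re.findall(r"[a-z]+", text.lower()) for doc_id, text in docs.items()}
    vocabulary = sorted({w for words in tokenized.values() for w in words})
    vectors: Dict[str, List[int]] = {}
    for doc_id, words in tokenized.items():
        counts = Counter(words)
        # Counter returns 0 for absent words, giving the zero entries of the vector.
        vectors[doc_id] = [counts[w] for w in vocabulary]
    return vocabulary, vectors

docs = {"A": "nova galaxy nova heat", "B": "film actor role film"}
vocab, vecs = tf_matrix(docs)
print(vocab)
print(vecs)
```

In a real corpus most entries are zero, which is why such matrices are stored sparsely, for example as an inverted file.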

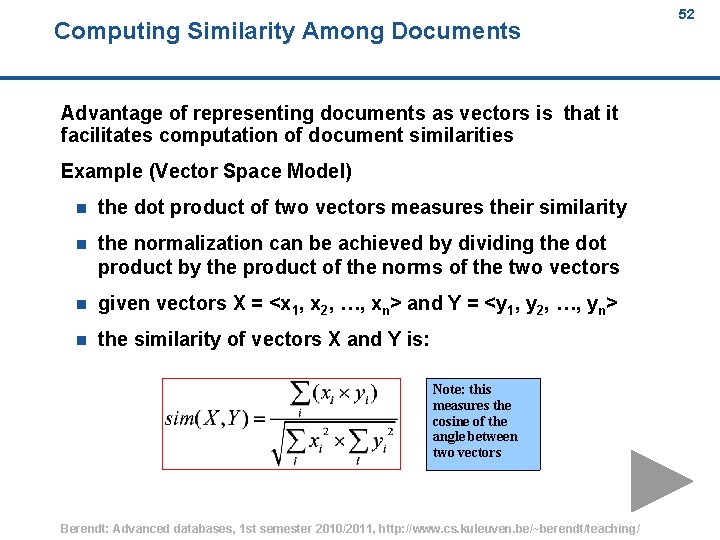

52 Computing Similarity Among Documents

An advantage of representing documents as vectors is that it facilitates the computation of document similarities.

Example (vector space model):
- The dot product of two vectors measures their similarity.
- Normalization can be achieved by dividing the dot product by the product of the norms of the two vectors.
- Given vectors $X = \langle x_1, x_2, \ldots, x_n \rangle$ and $Y = \langle y_1, y_2, \ldots, y_n \rangle$, the similarity of vectors X and Y is:

$$\mathrm{sim}(X, Y) = \frac{\sum_{i=1}^{n} x_i y_i}{\sqrt{\sum_{i=1}^{n} x_i^2}\,\sqrt{\sum_{i=1}^{n} y_i^2}}$$

Note: this measures the cosine of the angle between the two vectors.
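The cosine measure is a normalized dot product, so it can be coded in a few lines. In this sketch the function name is hypothetical, and returning 0.0 when one vector is all zeros (an empty document) is an assumed convention, since the formula is undefined in that case.

```python
import math
from typing import Sequence

def cosine_similarity(x: Sequence[float], y: Sequence[float]) -> float:
    """Dot product of x and y divided by the product of their Euclidean norms."""
    if len(x) != len(y):
        raise ValueError("vectors must have the same dimension")
    dot = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    if norm_x == 0 or norm_y == 0:
        return 0.0  # convention for an all-zero vector
    return dot / (norm_x * norm_y)

print(cosine_similarity([1.0, 0.5, 0.0], [2.0, 1.0, 0.0]))  # same direction
print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))            # no shared terms
```

Because of the normalization, a long document and a short one with the same relative term mix get similarity close to 1.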

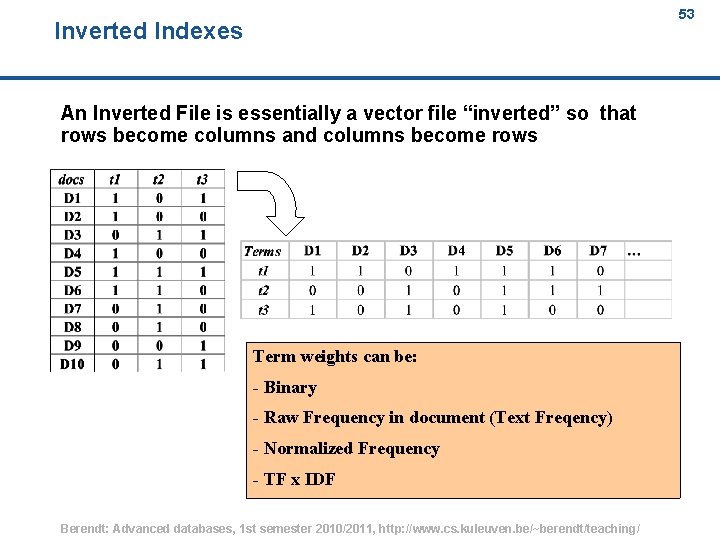

53 Inverted Indexes

An inverted file is essentially a vector file "inverted" so that rows become columns and columns become rows.

Term weights can be:
- binary
- raw frequency in document (term frequency)
- normalized frequency
- TF × IDF
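"Inverting" the document vectors means turning each document row into entries of per-term posting lists. A minimal sketch, assuming sparse document vectors stored as {term: weight} dictionaries; the function name and the weights are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Posting = Tuple[str, float]  # (document id, term weight)

def build_inverted_index(vectors: Dict[str, Dict[str, float]]) -> Dict[str, List[Posting]]:
    """Turn document -> {term: weight} rows into term -> posting-list columns."""
    index: Dict[str, List[Posting]] = defaultdict(list)
    for doc_id in sorted(vectors):  # sorted, so posting lists are ordered by doc id
        for term, weight in vectors[doc_id].items():
            if weight != 0:  # only non-zero weights are stored
                index[term].append((doc_id, weight))
    return dict(index)

vectors = {"A": {"nova": 1.0, "galaxy": 0.5}, "B": {"galaxy": 1.0, "film": 0.4}}
index = build_inverted_index(vectors)
print(index["galaxy"])
```

A query term then leads straight to the documents containing it, without scanning every document vector.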

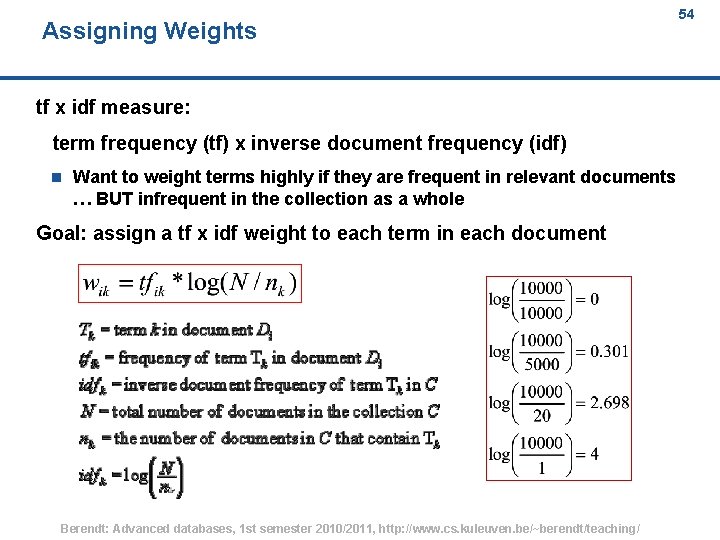

54 Assigning Weights

tf × idf measure: term frequency (tf) × inverse document frequency (idf)
- We want to weight terms highly if they are frequent in relevant documents … BUT infrequent in the collection as a whole.

Goal: assign a tf × idf weight to each term in each document.
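Many tf × idf variants exist, and the slides do not fix one. The sketch below assumes a common one: $w_{t,d} = tf_{t,d} \cdot \log(N / df_t)$, with raw counts for tf, the natural logarithm, N the number of documents, and $df_t$ the number of documents containing t. The function name and token lists are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List

def tf_idf(docs: Dict[str, List[str]]) -> Dict[str, Dict[str, float]]:
    """w(t, d) = tf(t, d) * log(N / df(t)) for every term t in every document d."""
    n_docs = len(docs)
    # Document frequency: in how many documents each term occurs at least once.
    df = Counter(term for words in docs.values() for term in set(words))
    weights: Dict[str, Dict[str, float]] = {}
    for doc_id, words in docs.items():
        tf = Counter(words)
        weights[doc_id] = {t: tf[t] * math.log(n_docs / df[t]) for t in tf}
    return weights

docs = {
    "A": ["nova", "galaxy", "nova", "the"],
    "B": ["film", "actor", "the"],
    "C": ["galaxy", "heat", "the"],
}
w = tf_idf(docs)
print(w["A"])
```

The term "the" occurs in every document, so its idf is log(1) = 0 and its weight vanishes, while "nova" is both frequent in A and rare in the collection and gets the highest weight.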

55 Latent Semantic Analysis (LSA) / Latent Semantic Indexing (LSI)

Two problems that arise when using the vector space model:
- synonymy: many ways to refer to the same object, e.g., car and automobile
  - leads to poor recall
- polysemy: most words have more than one distinct meaning, e.g., model, python, chip
  - leads to poor precision

Solution approach of LSA / LSI:
- Represent words and passages as vectors in the same (low-dimensional) semantic space.
- Similarity in word meaning is defined by similarity of their contexts.

Terminological note: what is the difference between LSI and LSA?
- LSI refers to using the technique for indexing or information retrieval.
- LSA refers to everything else.

56 LSA Steps

1. Document-term co-occurrence matrix (e.g., 1151 documents × 5793 terms)
2. Compute the SVD
3. Reduce the dimension by taking the k largest singular values
4. Compute the new vector representations for documents
5. [a frequently applied subsequent step] Cluster the new context vectors
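Steps 2 to 4 can be sketched with NumPy's SVD. Representing each document by the rows of $U_k \Sigma_k$ is one common convention (some presentations use $U_k$ alone). The function names and the tiny count matrix are hypothetical; the matrix is built so that "car" and "automobile" never co-occur but share the context word "engine".

```python
import numpy as np

def lsa_document_vectors(doc_term: np.ndarray, k: int) -> np.ndarray:
    """Map a documents x terms matrix to documents x k latent vectors."""
    # Step 2: thin SVD, doc_term = U @ np.diag(s) @ Vt, s sorted in descending order.
    U, s, _ = np.linalg.svd(doc_term, full_matrices=False)
    # Steps 3-4: keep the k largest singular values; documents become rows of U_k * s_k.
    return U[:, :k] * s[:k]

def cosine(u: np.ndarray, v: np.ndarray) -> float:
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

# Hypothetical counts. Columns: car, automobile, engine, python, snake.
X = np.array([
    [2, 0, 1, 0, 0],  # doc 0 says "car"
    [0, 2, 1, 0, 0],  # doc 1 says "automobile"
    [0, 0, 0, 2, 1],  # doc 2 is about snakes
], dtype=float)
docs_k = lsa_document_vectors(X, k=2)
print(cosine(X[0], X[1]), cosine(docs_k[0], docs_k[1]))
```

In the original term space, documents 0 and 1 are only weakly similar (cosine 0.2). In the 2-dimensional latent space they become nearly identical, which illustrates how LSA addresses synonymy.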


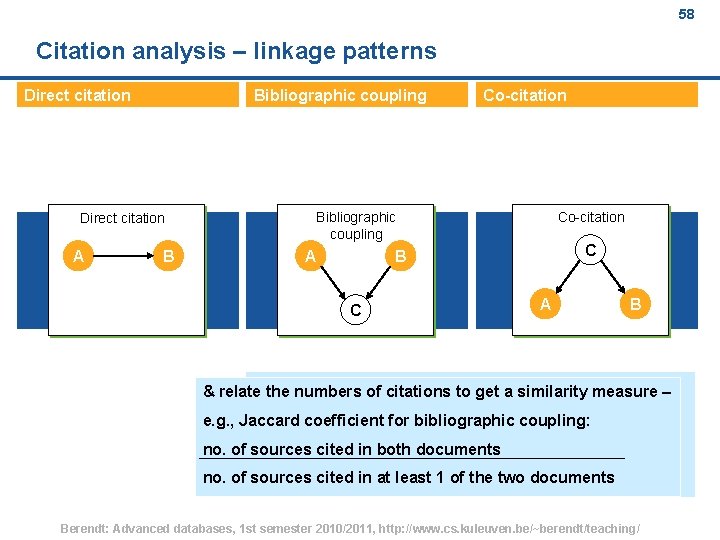

58 Citation analysis: linkage patterns

- Direct citation: A cites B.
- Bibliographic coupling: A and B both cite C.
- Co-citation: A and B are both cited by C.

Relate the numbers of citations to get a similarity measure, e.g., the Jaccard coefficient for bibliographic coupling:

$$J(A, B) = \frac{\text{no. of sources cited in both documents}}{\text{no. of sources cited in at least one of the two documents}}$$
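Both linkage-based similarities can be read off a citation map. A minimal sketch, assuming citations are stored as {document: set of documents it cites}; the function names and the toy citation map are hypothetical, and returning 0.0 when neither document cites anything is an assumed convention.

```python
from typing import Dict, Set

def bibliographic_coupling_jaccard(cites: Dict[str, Set[str]], a: str, b: str) -> float:
    """Sources cited by both a and b, divided by sources cited by at least one of them."""
    refs_a, refs_b = cites.get(a, set()), cites.get(b, set())
    union = refs_a | refs_b
    return len(refs_a & refs_b) / len(union) if union else 0.0

def co_citation_count(cites: Dict[str, Set[str]], a: str, b: str) -> int:
    """Number of documents that cite both a and b."""
    return sum(1 for refs in cites.values() if a in refs and b in refs)

cites = {
    "A": {"C", "D", "E"},
    "B": {"C", "E", "F"},
    "G": {"A", "B"},
}
print(bibliographic_coupling_jaccard(cites, "A", "B"))
print(co_citation_count(cites, "A", "B"))
```

A and B share two of the four sources they cite between them (coupling similarity 0.5), and one document, G, cites both (co-citation count 1).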


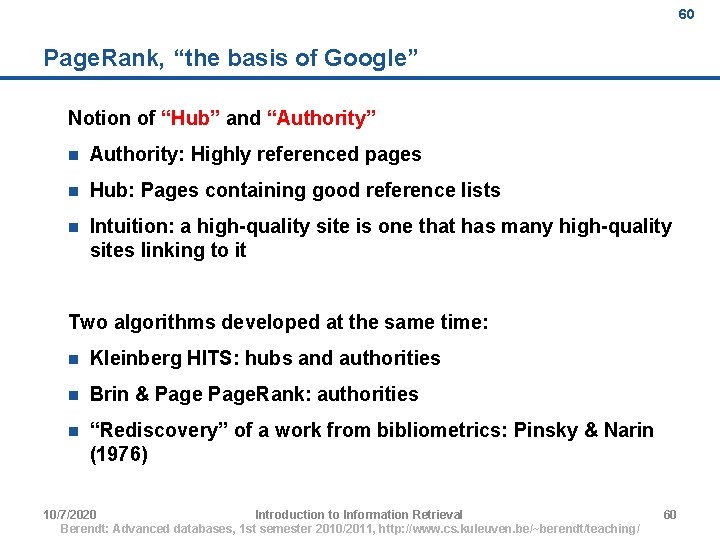

60 PageRank, "the basis of Google"

Notion of "hub" and "authority":
- Authority: highly referenced pages
- Hub: pages containing good reference lists
- Intuition: a high-quality site is one that has many high-quality sites linking to it

Two algorithms developed at the same time:
- Kleinberg's HITS: hubs and authorities
- Brin & Page's PageRank: authorities
- A "rediscovery" of work from bibliometrics: Pinski & Narin (1976)

10/7/2020 Introduction to Information Retrieval
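The hub/authority idea behind HITS is mutual reinforcement: good authorities are pointed to by good hubs, and good hubs point to good authorities. The sketch below is simplified and is not Kleinberg's full method. It skips building a query-specific base set, runs a fixed number of iterations, and normalizes with the L2 norm. The function name and the link graph are hypothetical.

```python
import math
from typing import Dict, List, Tuple

Scores = Dict[str, float]

def hits(links: Dict[str, List[str]], iterations: int = 50) -> Tuple[Scores, Scores]:
    """Hub and authority scores by repeated mutual reinforcement."""
    nodes = set(links) | {v for targets in links.values() for v in targets}
    hub = {n: 1.0 for n in nodes}
    auth = {n: 1.0 for n in nodes}
    for _ in range(iterations):
        # Authority: sum of the hub scores of the pages linking to it.
        auth = {n: sum(hub[u] for u, targets in links.items() if n in targets) for n in nodes}
        norm = math.sqrt(sum(a * a for a in auth.values())) or 1.0
        auth = {n: a / norm for n, a in auth.items()}
        # Hub: sum of the authority scores of the pages it links to.
        hub = {n: sum(auth[v] for v in links.get(n, [])) for n in nodes}
        norm = math.sqrt(sum(h * h for h in hub.values())) or 1.0
        hub = {n: h / norm for n, h in hub.items()}
    return hub, auth

# Hypothetical graph: three pages with link lists pointing at two target pages.
links = {"h1": ["a1", "a2"], "h2": ["a1", "a2"], "h3": ["a1"]}
hub, auth = hits(links)
print(sorted(auth.items(), key=lambda kv: -kv[1]))
```

PageRank, in contrast, assigns a single authority-like score per page, independent of any query.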

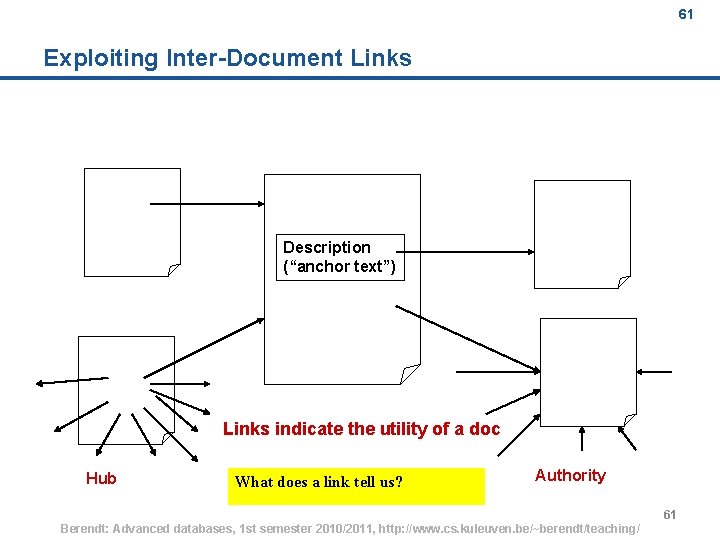

61 Exploiting Inter-Document Links

What does a link tell us?
- Description ("anchor text")
- Links indicate the utility of a doc
- (Figure: a hub page linking to an authority page.)

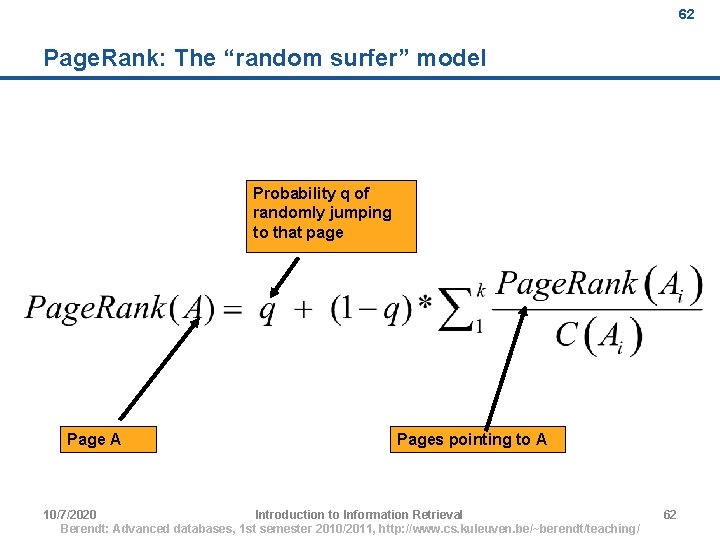

62 PageRank: The "random surfer" model

(Figure: page A, the pages pointing to A, and the probability q of randomly jumping to that page.)

63 PageRank and relevance

The "random surfer" selects a page, keeps clicking links until "bored", then randomly selects another page.
- PageRank(A) is the probability that such a user visits A.
- q is the probability of getting bored at a page.

The PageRank matrix can be computed offline.

Google takes into account both the relevance of the page and its PageRank. Relevance is computed from the text and other features (of course, a proprietary and evolving scheme).
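The random-surfer model can be simulated by power iteration. At each step, with probability q the surfer gets bored and jumps to a uniformly random page, and otherwise follows one of the current page's out-links at random. This gives $PR(A) = q/N + (1-q)\sum_{T \to A} PR(T)/\mathrm{outdeg}(T)$. Several details are assumptions, since the slides do not state them: the value q = 0.15, the division by N (so that the ranks form a probability distribution), and sending the surfer to a random page from pages without out-links. The function name and link graph are hypothetical.

```python
from typing import Dict, List

def pagerank(links: Dict[str, List[str]], q: float = 0.15, iterations: int = 100) -> Dict[str, float]:
    """Random-surfer PageRank by power iteration; the ranks sum to 1."""
    nodes = sorted(set(links) | {v for targets in links.values() for v in targets})
    n = len(nodes)
    rank = {p: 1.0 / n for p in nodes}
    for _ in range(iterations):
        new = {p: q / n for p in nodes}  # bored surfer: random jump
        for p in nodes:
            out = links.get(p, [])
            if out:
                share = (1 - q) * rank[p] / len(out)  # follow a random out-link
                for t in out:
                    new[t] += share
            else:
                # Assumption: a page without out-links sends the surfer anywhere.
                for t in nodes:
                    new[t] += (1 - q) * rank[p] / n
        rank = new
    return rank

links = {"A": ["B"], "B": ["C"], "C": ["A"], "D": ["C"]}
ranks = pagerank(links)
print(max(ranks, key=ranks.get), ranks)
```

C, the only page with two in-links, gets the highest rank. D, which nobody links to, keeps only the random-jump share q/N. Since the computation does not depend on any query, it can be done offline, as the slide notes.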

64 Next lecture (tomorrow) … an application example of all these concepts!

65 References / background reading

- Knowledge discovery is now an established area with some excellent general textbooks. I recommend the following as examples of the 3 main perspectives:
  - a databases / data warehouses perspective: Han, J. & Kamber, M. (2001). Data Mining: Concepts and Techniques. San Francisco, CA: Morgan Kaufmann. http://www.cs.sfu.ca/%7Ehan/dmbook
  - a machine learning perspective: Witten, I. H., & Frank, E. (2005). Data Mining: Practical Machine Learning Tools and Techniques with Java Implementations. 2nd ed. Morgan Kaufmann. http://www.cs.waikato.ac.nz/%7Eml/weka/book.html
  - a statistics perspective: Hand, D. J., Mannila, H., & Smyth, P. (2001). Principles of Data Mining. Cambridge, MA: MIT Press. http://mitpress.mit.edu/catalog/item/default.asp?tid=3520&ttype=2
- The CRISP-DM phase model can be found at http://www.crisp-dm.org

66 Acknowledgements (to be revised!, see also comment field)

- pp. 14, 15: http://en.wikipedia.org/wiki/Abductive_reasoning
- pp. 19-26 taken from (with minor modifications):
  - Tzacheva, A. A. (2006). SIMS 422. Knowledge Inference Systems & Applications. http://faculty.uscupstate.edu/atzacheva/SIMS422/OverviewI.ppt
- pp. 33, 36, 38, 43-50 were taken from (with minor modifications):
  - Ramakrishnan, R. & Gehrke, J. (2002?). Database Management Systems, 3rd Edition 2002. Instructor Slides. Ch. 25: Deductive Databases. http://pages.cs.wisc.edu/~dbbook/openAccess/thirdEdition/slides/slides3ed-english/Ch25_DedDB-95.pdf
- pp. 47-50 were taken from:
  - Tzacheva, A. A. (2006). Knowledge Discovery and Data Mining. http://faculty.uscupstate.edu/atzacheva/SIMS422/OverviewII.ppt
- pp. 30, 31, 39-41 were taken from:
  - Han, J. & Kamber, M. (2006). Data Mining: Concepts and Techniques, Chapter 1: Introduction. http://www.cs.sfu.ca/%7Ehan/bk/1intro.ppt
