
When Choosing Plausible Alternatives, Clever Hans can be Clever
Pride Kavumba, Keshav Singh, Naoya Inoue, Paul Reisert, Benjamin Heinzerling, Kentaro Inui
https://balanced-copa.github.io

Clever Hans appeared to perform arithmetic, but was actually exploiting cues from his handlers

Clever Hans Effect in NLP
• NLI: models perform well with incomplete input [Gururangan+18; Poliak+18; Dasgupta+18]
• Machine Reading Comprehension: superficial cues make questions easier [Sugawara+18]
• Argument Reasoning Comprehension: BERT exploits superficial cues (e.g., "not") and performs nearly at random without them [Niven+19]


COPA: Choice Of Plausible Alternatives [Roemmele+11]
• Benchmark for causal reasoning
• Part of SuperGLUE [Wang+19]
Example:
Premise: The woman hummed to herself.
Question: What was the cause of this?
Alternative 1: She was in a good mood.
Alternative 2: She was nervous.

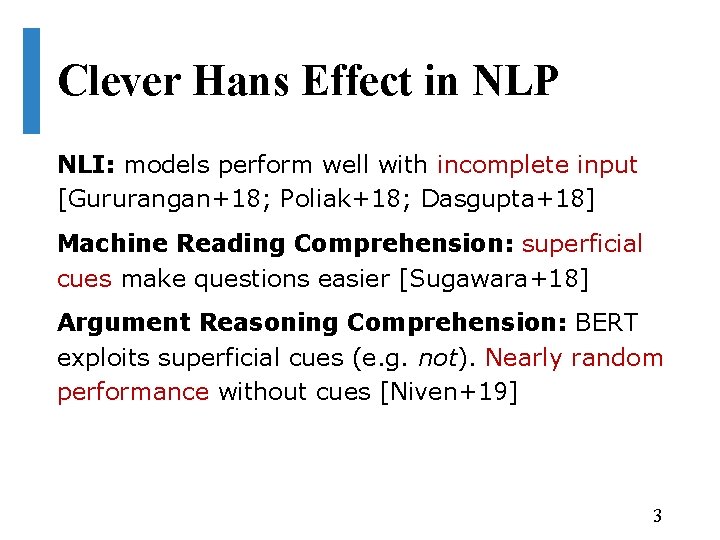

Rise of the Muppets on COPA
[Figure: COPA accuracy (axis from 65 to 95) for Gordon+2011, Luo+2016, Sasaki+2017, Sap+2019, and Liu+2019, with BERT and RoBERTa labeled]
Is this the Clever Hans effect?

Research Questions
1. Does COPA have superficial cues?
2. If so, do pre-trained language models exploit these cues?
3. If they do, how do LMs perform without cues?

Superficial Cues in COPA
Superficial cues:
• Uneven token distributions across classes
• Allow models to solve the task with simple heuristics
We found cues in COPA:
• Some tokens appear more often in one alternative
• Most informative cues: in, was, to, the, a
These cues are predictive of the correct choice
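One way to make "uneven token distributions" concrete is to count, for each token, how often it occurs in exactly one of the two alternatives and how often that alternative is the correct one. The sketch below is only an illustration under assumptions: the names `tokenize` and `token_cue_stats`, the regex tokenizer, and the three toy instances are hypothetical and are not taken from the slides or from COPA, and the authors' exact statistic may differ.

```python
import re
from collections import Counter

def tokenize(text):
    # Simplistic lowercase word tokenizer (an assumption, not the authors' tokenizer).
    return set(re.findall(r"[a-z']+", text.lower()))

def token_cue_stats(instances):
    """Return {token: (applicable, productivity)}.

    instances: iterable of (alternative_1, alternative_2, label), where
    label (0 or 1) is the index of the correct alternative.
    applicable: number of instances in which the token occurs in exactly
    one alternative.
    productivity: share of those instances in which that alternative is correct.
    """
    applicable = Counter()
    productive = Counter()
    for alt1, alt2, label in instances:
        tokens = (tokenize(alt1), tokenize(alt2))
        for i in (0, 1):
            # Tokens that occur in alternative i but not in the other one.
            for tok in tokens[i] - tokens[1 - i]:
                applicable[tok] += 1
                if i == label:
                    productive[tok] += 1
    return {tok: (n, productive[tok] / n) for tok, n in applicable.items()}

# Toy data, invented for illustration only.
toy = [
    ("She was in a good mood.", "She was nervous.", 0),
    ("He was in a hurry.", "He felt calm.", 0),
    ("The dog barked.", "The dog was in the yard.", 1),
]
stats = token_cue_stats(toy)
for tok in ("in", "was", "nervous"):
    print(tok, stats[tok])
```

A token with productivity far from 0.5 and high applicability is a candidate cue: a model could pick the alternative that contains it without reading the premise.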

Research Questions
1. Does COPA have superficial cues? Yes!
2. Do pre-trained language models exploit these cues?


RoBERTa [Liu+19] exploits superficial cues
Experiment: provide only incomplete input (the alternatives without the premise), which should make the task impossible
Question: What was the cause of this?
A1: She was in a good mood.
A2: She was nervous.
• RoBERTa still performs better (59.6%) than random chance
• Problematic: COPA is designed as a choice between alternatives given the premise

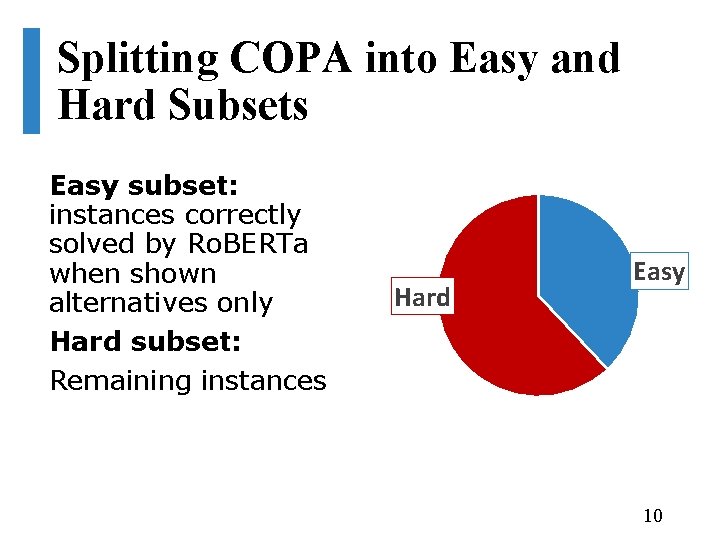

Splitting COPA into Easy and Hard Subsets
• Easy subset: instances that RoBERTa solves correctly when shown the alternatives only
• Hard subset: the remaining instances
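The split described above can be sketched as a partition driven by any predictor that sees only the two alternatives. In this sketch, `split_easy_hard` and `cue_baseline` are hypothetical names; `cue_baseline` is a trivial stand-in for the premise-blind RoBERTa (it simply prefers the alternative containing the token "in"), and the two instances reuse the example sentences from these slides.

```python
def split_easy_hard(instances, predict_from_alternatives):
    """Partition instances by whether a premise-blind predictor gets them right."""
    easy, hard = [], []
    for inst in instances:
        # The predictor never sees inst["premise"].
        guess = predict_from_alternatives(inst["alt1"], inst["alt2"])
        (easy if guess == inst["label"] else hard).append(inst)
    return easy, hard

def cue_baseline(alt1, alt2):
    # Hypothetical stand-in for a model trained on alternatives only:
    # choose the alternative that contains the token "in", else default to 0.
    has_cue = [" in " in f" {alt.lower()} " for alt in (alt1, alt2)]
    if has_cue[1] and not has_cue[0]:
        return 1
    return 0

instances = [
    {"premise": "The woman hummed to herself.",
     "alt1": "She was in a good mood.", "alt2": "She was nervous.", "label": 0},
    {"premise": "The woman trembled.",
     "alt1": "She was in a good mood.", "alt2": "She was nervous.", "label": 1},
]
easy, hard = split_easy_hard(instances, cue_baseline)
print(len(easy), "easy;", len(hard), "hard")
```

Replacing `cue_baseline` with a fine-tuned model's alternatives-only prediction would give the kind of split the slide describes; the partition logic itself does not change.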

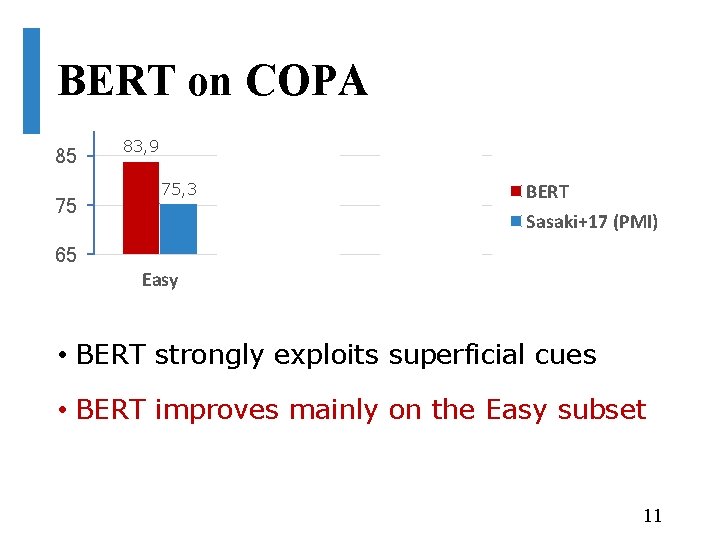

BERT on COPA
[Figure: accuracy (%) on Easy / Hard / Overall. BERT: 83.9 / 71.9 / 76.5. Sasaki+17 (PMI): 75.3 / 69 / 71.4]
• BERT strongly exploits superficial cues
• BERT improves mainly on the Easy subset

RoBERTa on COPA
[Figure: accuracy (%) on Easy / Hard / Overall. RoBERTa: 91.6 / 85.3 / 87.7. Sasaki+17 (PMI) shown for comparison: Hard 69, Overall 71.4]
• RoBERTa also exploits superficial cues
• But: RoBERTa seems to rely less on superficial cues than BERT

Research Questions
1. Does COPA have superficial cues? Yes!
2. Do pre-trained language models exploit these cues? Yes!
3. How do LMs perform without cues?

Let’s fix COPA!

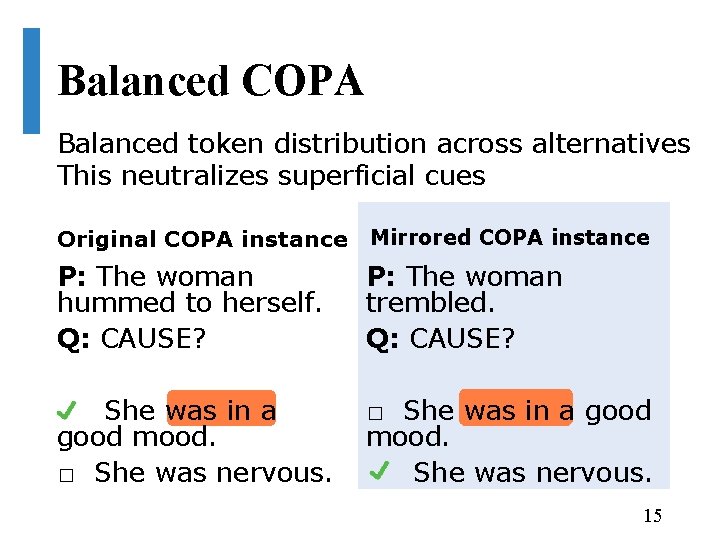

Balanced COPA
• Balanced token distribution across alternatives
• This neutralizes superficial cues
Original COPA instance
P: The woman hummed to herself.
Q: CAUSE?
She was in a good mood. ✓
She was nervous.
Mirrored COPA instance
P: The woman trembled.
Q: CAUSE?
She was in a good mood.
She was nervous. ✓
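The mirroring idea can be checked with a few lines of code: once the same two alternatives are paired with a second premise for which the other alternative is correct, each alternative (and so each of its tokens) is correct exactly half the time. This is a sketch under assumptions: `mirror` and `correct_rate` are hypothetical helper names, and the mirrored premise still has to be written by a person; the code only assembles the pair.

```python
from collections import Counter

# The original instance from the slide; label is the index of the correct alternative.
original = {
    "premise": "The woman hummed to herself.",
    "alternatives": ("She was in a good mood.", "She was nervous."),
    "label": 0,
}

def mirror(instance, new_premise):
    """Pair the same alternatives with a premise that makes the other one correct."""
    return {
        "premise": new_premise,
        "alternatives": instance["alternatives"],
        "label": 1 - instance["label"],
    }

def correct_rate(instances):
    """For each alternative, the fraction of its occurrences in which it is correct."""
    correct, total = Counter(), Counter()
    for inst in instances:
        for i, alt in enumerate(inst["alternatives"]):
            total[alt] += 1
            correct[alt] += int(i == inst["label"])
    return {alt: correct[alt] / total[alt] for alt in total}

mirrored = mirror(original, "The woman trembled.")
before = correct_rate([original])
after = correct_rate([original, mirrored])
print(before)
print(after)
```

With only the original instance, "She was in a good mood." is always correct; with the mirrored instance added, both alternatives sit at 0.5, so no token in them can predict the label by itself.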

Balanced COPA
• Available at https://balanced-copa.github.io
• Makes superficial cues ineffective
• Human evaluation shows it is comparable in quality to the original COPA

Training without Superficial Cues
• Train BERT and RoBERTa on Balanced COPA
• Test on original COPA

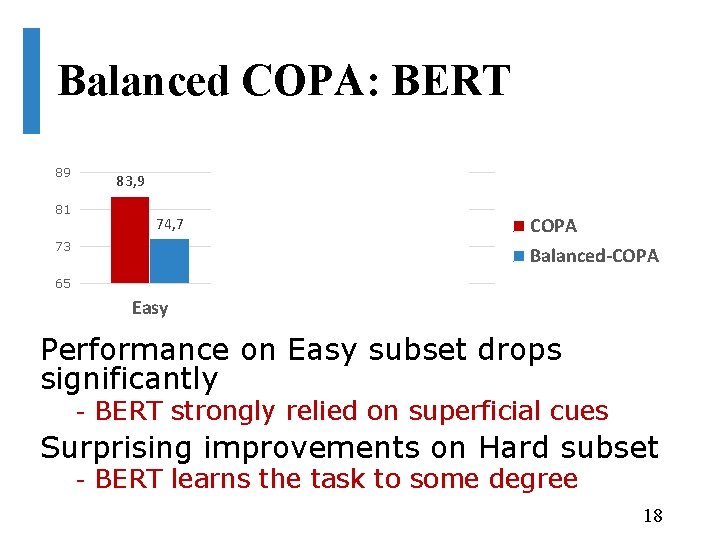

Balanced COPA: BERT
[Figure: accuracy (%) on Easy / Hard / Overall by training set. COPA: 83.9 / 71.9 / 76.5. Balanced-COPA: 74.7 / 74.4 / 74.5]
• Performance on the Easy subset drops significantly: BERT strongly relied on superficial cues
• Surprising improvement on the Hard subset: BERT learns the task to some degree

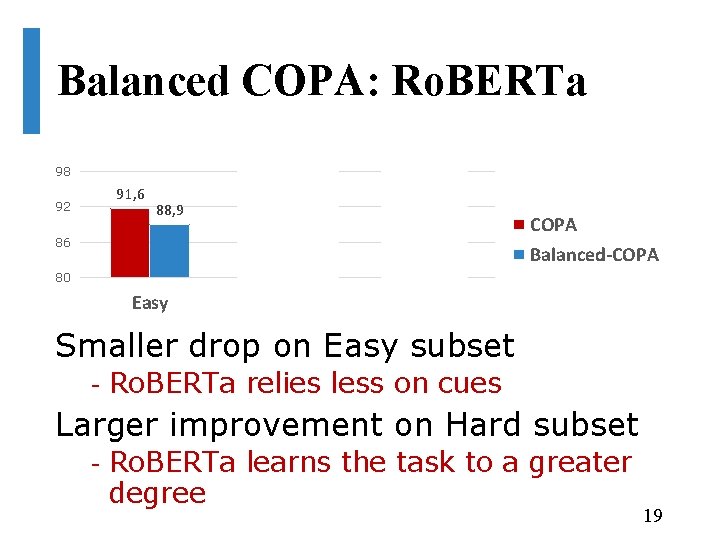

Balanced COPA: RoBERTa
[Figure: accuracy (%) on Easy / Hard / Overall by training set. COPA: 91.6 / 85.3 / 87.7. Balanced-COPA: 88.9 / 89 / 89]
• Smaller drop on the Easy subset: RoBERTa relies less on cues
• Larger improvement on the Hard subset: RoBERTa learns the task to a greater degree
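The "smaller drop" and "larger improvement" claims can be checked with simple arithmetic on the chart values. The numbers below are read from the Balanced COPA charts for BERT and RoBERTa; the assignment of each value to a bar is an assumption based on reading order, and the dictionary layout and the name `change` are illustrative only.

```python
# Accuracy (%) on the original COPA test set, by training set.
# Values as read from the slide charts; bar assignment is an assumption.
results = {
    "BERT": {
        "COPA": {"Easy": 83.9, "Hard": 71.9, "Overall": 76.5},
        "Balanced-COPA": {"Easy": 74.7, "Hard": 74.4, "Overall": 74.5},
    },
    "RoBERTa": {
        "COPA": {"Easy": 91.6, "Hard": 85.3, "Overall": 87.7},
        "Balanced-COPA": {"Easy": 88.9, "Hard": 89.0, "Overall": 89.0},
    },
}

def change(model):
    """Percentage-point change when training on Balanced COPA instead of COPA."""
    base = results[model]["COPA"]
    balanced = results[model]["Balanced-COPA"]
    return {subset: round(balanced[subset] - base[subset], 1) for subset in base}

changes = {model: change(model) for model in results}
for model, delta in changes.items():
    print(model, delta)
```

Under these readings, BERT loses 9.2 points on Easy and gains 2.5 on Hard, while RoBERTa loses 2.7 on Easy and gains 3.7 on Hard, which matches the two bullet points on each slide.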

Research Questions
1. Does COPA have superficial cues? Yes!
2. Do pre-trained language models exploit these cues? Yes!
3. How do LMs perform without cues? They do well!

Conclusions
• COPA contains superficial cues
• BERT exploits these cues
• RoBERTa relies less on cues
• Balanced COPA does not contain superficial cues (hopefully): https://balanced-copa.github.io
• Trained on Balanced COPA, BERT and RoBERTa perform well


Superficial cues

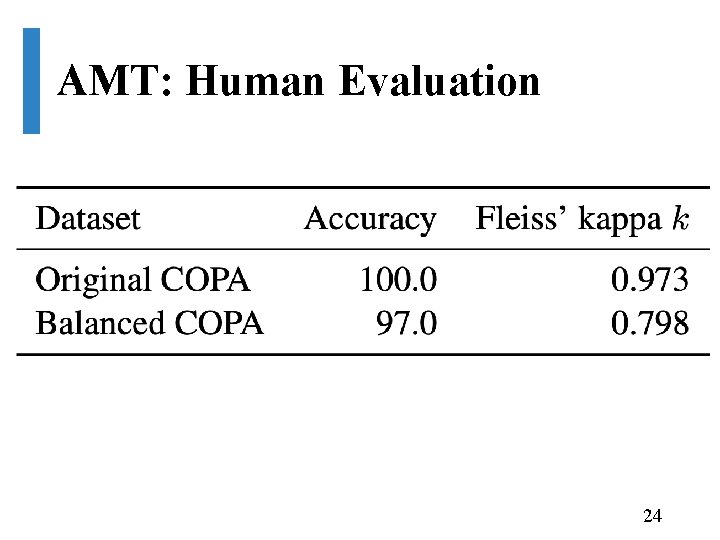

AMT: Human Evaluation

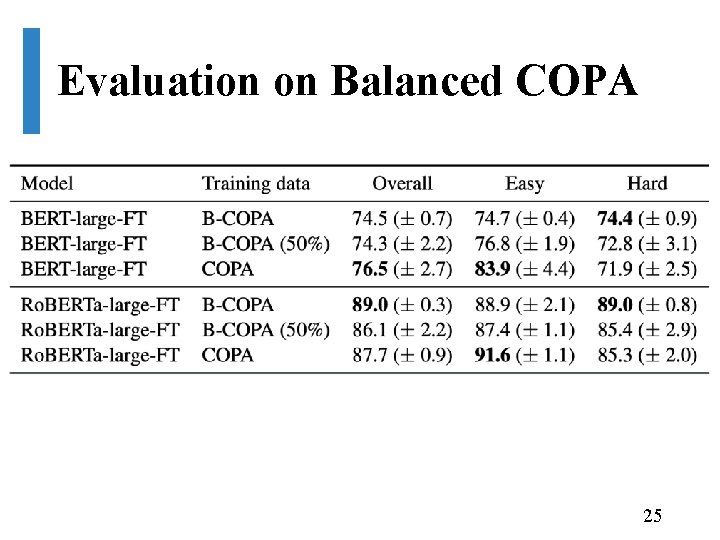

Evaluation on Balanced COPA

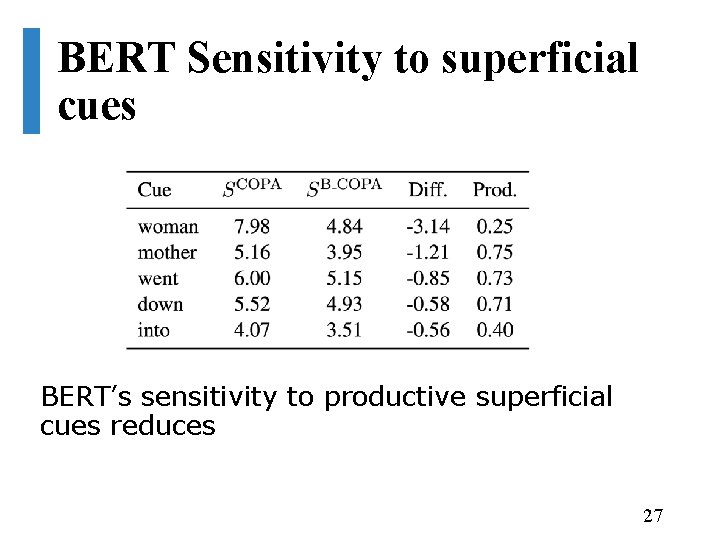

Gradient Sensitivity
• BERT’s sensitivity to productive cues decreases
• The change in RoBERTa’s sensitivity is less clear

BERT Sensitivity to Superficial Cues
• BERT’s sensitivity to productive superficial cues decreases
