Week 1: Data Representation and Computer Arithmetic (Number Bases)

Lesson outline: students should be able to explain number bases; convert from one base to another; explain data representation codes; and state the various units of storage (bit, nibble, byte and word).

The decimal system of counting and keeping track of items was first created by Hindu mathematicians in India in A.D. 400. Since it involved the use of fingers and thumbs, it was natural that this system would have 10 digits. The system found its way to all the Arab countries by A.D. 800, where it was named the Arabic number system, and from there it was eventually adopted by nearly all the European countries by A.D. 1200, where it was called the decimal number system. The key feature that distinguishes one number system from another is the number system's base, or radix. The base indicates how many distinct digits the system uses. The decimal number system, for example, is a base 10 number system, which means that it uses 10 digits (0 to 9) to communicate information about an amount. When several number systems are in use, a subscript written after a number indicates its base. For example, $532_7$ represents a number in the base 7 number system (which uses the digits 0 to 6). $1100_{10}$ and $1100_2$ are two numbers that contain the same digits but are written in different bases. The first we recognize as one thousand one hundred in the decimal (base 10) number system. The second is a number in the base 2 number system, called the binary number system.
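As a quick illustration of why the subscript matters, the sketch below reads the same digit strings in different bases. It relies on Python's built-in `int(text, base)`; the helper name `read_in_base` is only illustrative and does not come from the lesson.

```python
def read_in_base(digits, base):
    """Return the value of a digit string written in the given base."""
    return int(digits, base)  # built-in int() accepts bases 2 to 36

# The three numbers mentioned in the text: 532 (base 7), 1100 (base 10), 1100 (base 2).
for digits, base in [("532", 7), ("1100", 10), ("1100", 2)]:
    print(f"{digits} in base {base} = {read_in_base(digits, base)} in decimal")
```

The two strings "1100" print different decimal values because only the base differs.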

The Decimal System

This uses ten digits (0, 1, 2, 3, 4, 5, 6, 7, 8, 9) to represent numbers. Consider the decimal number 847. This means eight hundreds, four tens, plus seven:

$847 = 8 \times 100 + 4 \times 10 + 7$

The system has a base, or radix, of ten. This means that each digit in a decimal number is multiplied by ten raised to a power corresponding to its position. Thus

$847 = 8 \times 10^2 + 4 \times 10^1 + 7 \times 10^0$

Fractional values can be represented in the same way:

$472.83 = 4 \times 10^2 + 7 \times 10^1 + 2 \times 10^0 + 8 \times 10^{-1} + 3 \times 10^{-2}$

The Binary System

The binary system uses just two digits, 0 and 1, as it is easier for a computer to distinguish between two different voltage levels than ten. This means that it has a base of two. Each digit in a binary number is multiplied by two raised to a power corresponding to its position:

$1011 = 1 \times 2^3 + 0 \times 2^2 + 1 \times 2^1 + 1 \times 2^0 = 1 \times 8 + 0 \times 4 + 1 \times 2 + 1 = 11$

When confusion is possible, we can show the base of a number by using a subscript. For example, $1011_2 = 11_{10}$.
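The positional rule is the same for both systems, so it can be sketched as one small function. This is a minimal sketch, assuming bases from 2 to 10 and input digits that are valid for the chosen base (it does not check them); the name `positional_value` is hypothetical. `Fraction` is used so the fractional example stays exact.

```python
from fractions import Fraction

def positional_value(text, base):
    """Evaluate a digit string such as '472.83' in the given base (2 to 10)."""
    whole, _, frac = text.partition(".")
    total = Fraction(0)
    # Whole-part digits carry powers base**0, base**1, ... from right to left.
    for power, digit in enumerate(reversed(whole)):
        total += int(digit) * Fraction(base) ** power
    # Fractional digits carry powers base**-1, base**-2, ... from left to right.
    for power, digit in enumerate(frac, start=1):
        total += int(digit) * Fraction(base) ** -power
    return total

print(positional_value("847", 10))     # whole decimal number
print(positional_value("472.83", 10))  # decimal number with a fractional part
print(positional_value("1011", 2))     # binary number
```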

Number Bases

Number bases are different ways of writing and using the same number, just as Roman numerals or tally charts are. We use a system called base 10, or denary, for our everyday arithmetic, but many other number bases are possible. We can work in any number base (except 1, which wouldn't really make sense). In computing, however, the most common bases are:

- Base 2 (binary): 0, 1
- Base 8 (octal): 0, 1, 2, 3, 4, 5, 6, 7
- Base 10 (decimal): 0, 1, 2, 3, 4, 5, 6, 7, 8, 9
- Base 16 (hexadecimal): 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, A, B, C, D, E, F

In hexadecimal, the digit values above 9 are written as letters: A = 10, B = 11, C = 12, D = 13, E = 14, F = 15.
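To see one value written in each of these four bases, the sketch below uses Python's built-in `format()` with the standard `b`, `o`, `d` and `X` format codes. The names `HEX_DIGITS` and `show_in_common_bases` are illustrative only.

```python
# Position in this string equals the digit's value, so HEX_DIGITS[13] is "D".
HEX_DIGITS = "0123456789ABCDEF"

def show_in_common_bases(n):
    """Return the same non-negative integer written in bases 2, 8, 10 and 16."""
    return {
        "binary": format(n, "b"),
        "octal": format(n, "o"),
        "decimal": format(n, "d"),
        "hexadecimal": format(n, "X"),
    }

for name, text in show_in_common_bases(255).items():
    print(f"{name:12} {text}")
```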

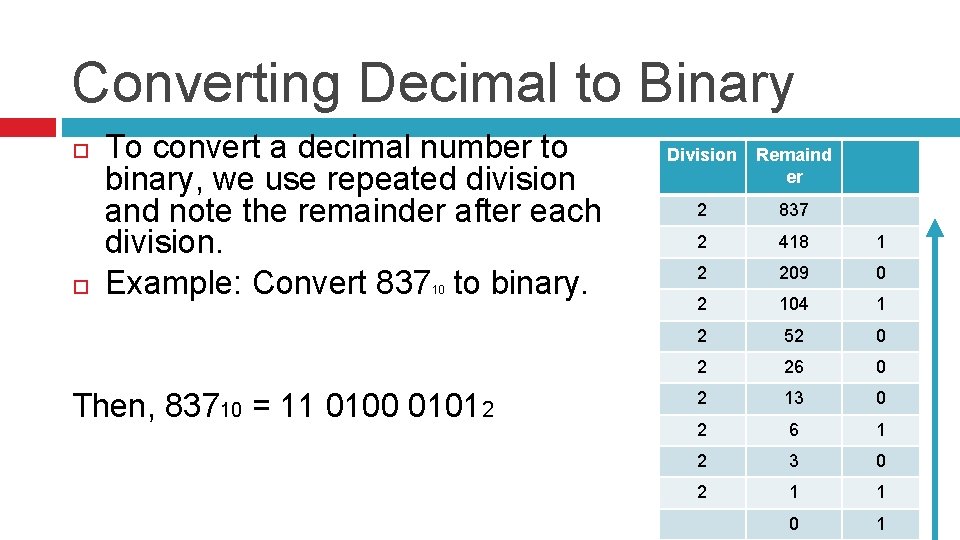

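Converting Decimal to Binary

To convert a decimal number to binary, we use repeated division by 2 and note the remainder after each division.

Example: Convert $837_{10}$ to binary.

| Division | Quotient | Remainder |
|----------|----------|-----------|
| 837 ÷ 2  | 418      | 1         |
| 418 ÷ 2  | 209      | 0         |
| 209 ÷ 2  | 104      | 1         |
| 104 ÷ 2  | 52       | 0         |
| 52 ÷ 2   | 26       | 0         |
| 26 ÷ 2   | 13       | 0         |
| 13 ÷ 2   | 6        | 1         |
| 6 ÷ 2    | 3        | 0         |
| 3 ÷ 2    | 1        | 1         |
| 1 ÷ 2    | 0        | 1         |

Reading the remainders from the last division up to the first gives $837_{10} = 11\,0100\,0101_2$.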
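The repeated-division procedure can be sketched in a few lines. This is a minimal sketch for non-negative integers; the function name `decimal_to_binary` and the shape of the returned step list are assumptions made for illustration.

```python
def decimal_to_binary(n):
    """Convert a non-negative integer to a binary string by repeated division by 2.

    Returns the binary string and the list of (dividend, quotient, remainder) steps.
    """
    if n == 0:
        return "0", []
    steps = []
    while n > 0:
        quotient, remainder = divmod(n, 2)
        steps.append((n, quotient, remainder))
        n = quotient
    # The last remainder produced is the most significant bit, so read the steps in reverse.
    bits = "".join(str(remainder) for _, _, remainder in reversed(steps))
    return bits, steps

bits, steps = decimal_to_binary(837)
for dividend, quotient, remainder in steps:
    print(f"{dividend} / 2 = {quotient} remainder {remainder}")
print(bits)
```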

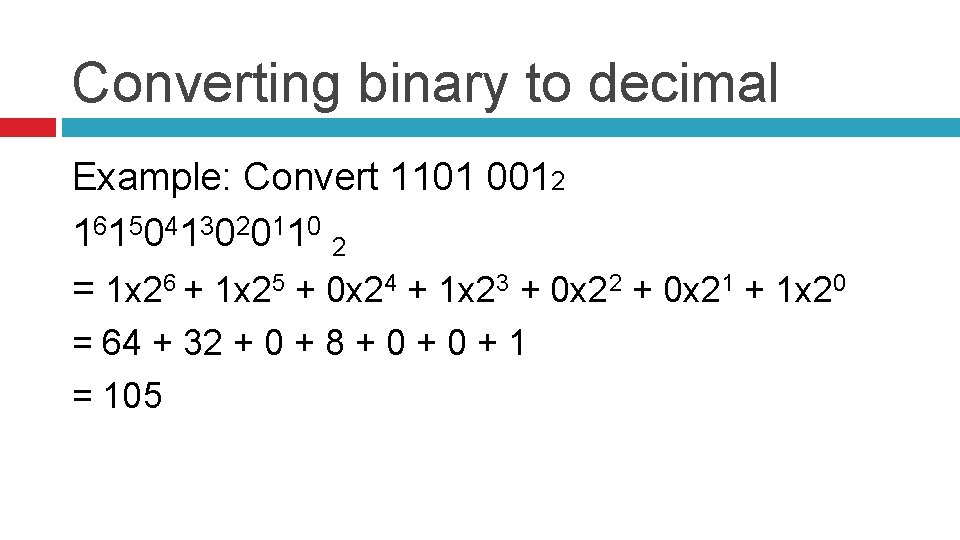

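Converting Binary to Decimal

Example: Convert $1101001_2$ to decimal. Number the bit positions from 0 on the right to 6 on the left, then multiply each bit by 2 raised to its position:

$1101001_2 = 1 \times 2^6 + 1 \times 2^5 + 0 \times 2^4 + 1 \times 2^3 + 0 \times 2^2 + 0 \times 2^1 + 1 \times 2^0 = 64 + 32 + 0 + 8 + 0 + 0 + 1 = 105_{10}$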
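The same result can be computed without writing out the powers of 2, by reading the bits left to right and doubling as you go. This is Horner's method, a different technique from the expansion shown in the example; the function name `binary_to_decimal` is illustrative.

```python
def binary_to_decimal(bits):
    """Convert a binary string (spaces allowed as group separators) to an integer."""
    value = 0
    for bit in bits.replace(" ", ""):
        if bit not in "01":
            raise ValueError(f"not a binary digit: {bit!r}")
        # Shifting the running value one place left doubles it; then add the new bit.
        value = value * 2 + int(bit)
    return value

print(binary_to_decimal("1101001"))
```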

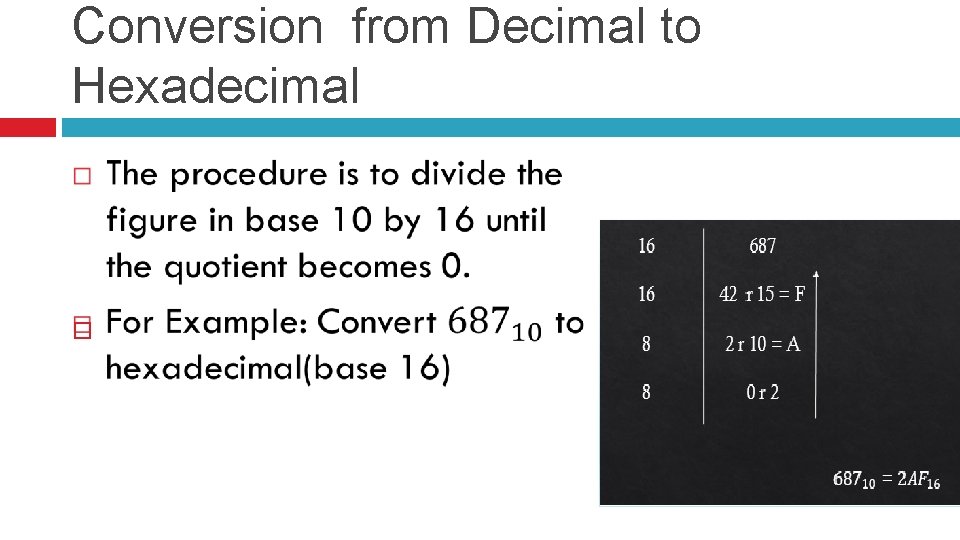

Conversion from Decimal to Hexadecimal
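One way to carry out this conversion, assuming the same repeated-division method used for binary but dividing by 16, is sketched below. Remainders from 10 to 15 are written as the letters A to F. The names `HEX_SYMBOLS` and `decimal_to_hex` are hypothetical.

```python
HEX_SYMBOLS = "0123456789ABCDEF"

def decimal_to_hex(n):
    """Convert a non-negative integer to a hexadecimal string by repeated division by 16."""
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        n, remainder = divmod(n, 16)
        digits.append(HEX_SYMBOLS[remainder])  # remainders 10 to 15 become A to F
    # The first remainder is the least significant digit, so reverse before joining.
    return "".join(reversed(digits))

print(decimal_to_hex(837))
print(decimal_to_hex(255))
```

For 837 the divisions are 837 ÷ 16 = 52 remainder 5, 52 ÷ 16 = 3 remainder 4, and 3 ÷ 16 = 0 remainder 3, so reading the remainders from last to first gives $345_{16}$.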

Data Representation and Computer Arithmetic (Number Bases), continued

Lesson outline: students should be able to explain data representation codes and state the various units of storage (bit, nibble, byte and word).

Data Representation Codes/Schemes

Any text-based data is stored by the computer in the form of bits (a series of 0s and 1s) and follows a specified coding scheme. The coding scheme is a standard that tells the user's machine which set of bytes represents which character. This is important because, without it, the machine could interpret the given bytes as a different character from the one intended. For example, the byte 0x6B is interpreted as the character 'k' in ASCII, but as the character ',' in the less commonly used EBCDIC coding scheme.
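The 0x6B example can be checked directly by decoding the same byte under two schemes. The sketch below assumes Python's `cp037` codec as the EBCDIC variant; EBCDIC has several code pages, and the lesson does not say which one it has in mind.

```python
raw = bytes([0x6B])  # a single byte with the value 0x6B

as_ascii = raw.decode("ascii")   # interpret the byte as ASCII
as_ebcdic = raw.decode("cp037")  # interpret the byte as EBCDIC (code page 037, an assumption)

print(f"0x6B as ASCII:  {as_ascii!r}")
print(f"0x6B as EBCDIC: {as_ebcdic!r}")
```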

Some of the coding schemes are:

1. ASCII (American Standard Code for Information Interchange), the most common
2. UTF-32 (Unicode Transformation Format 32-bit)
3. UTF-16 (Unicode Transformation Format 16-bit)
4. UTF-8 (Unicode Transformation Format 8-bit)
5. ISCII (Indian Script Code for Information Interchange)

Others are:

1. BCD (Binary Coded Decimal)
2. EBCDIC (Extended Binary Coded Decimal Interchange Code)
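To get a feel for how the three UTF schemes differ, the sketch below counts how many bytes each one uses for a single character. It uses Python's standard `utf-8`, `utf-16-be` and `utf-32-be` codecs (the `-be` variants are chosen here so that no byte-order mark is added); the function name `encoded_sizes` is illustrative.

```python
def encoded_sizes(ch):
    """Return the number of bytes each UTF scheme uses to store the character ch."""
    return {name: len(ch.encode(name)) for name in ("utf-8", "utf-16-be", "utf-32-be")}

for ch in ("A", "€"):
    print(ch, encoded_sizes(ch))
```

UTF-8 and UTF-16 use a variable number of bytes per character, while UTF-32 always uses four.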

Assignment

Open the attached file for the assignment. Send your answers to dikebestbrain@googlemail.com
