Very Large Scale Computing In Accelerator Physics Robert D. Ryne Los Alamos National Laboratory

…with contributions from members of:
- Grand Challenge in Computational Accelerator Physics
- Advanced Computing for 21st Century Accelerator Science and Technology project

Outline
- Importance of Accelerators
- Future of Accelerators
- Importance of Accelerator Simulation
- Past Accomplishments:
  - Grand Challenge in Computational Accelerator Physics
    - electromagnetics
    - beam dynamics
    - applications beyond accelerator physics
- Future Plans
  - Advanced Computing for 21st Century Accelerator S&T

Accelerators have enabled some of the greatest discoveries of the 20th century
- “Extraordinary tools for extraordinary science”
  - high energy physics
  - nuclear physics
  - materials science
  - biological science

Accelerator Technology Benefits Science, Technology, and Society
- electron microscopy
- beam lithography
- ion implantation
- accelerator mass spectrometry
- medical isotope production
- medical irradiation therapy

Accelerators have been proposed to address issues of international importance
- Accelerator transmutation of waste
- Accelerator production of tritium
- Accelerators for proton radiography
- Accelerator-driven energy production

Accelerators are key tools for solving problems related to energy, national security, and quality of the environment

Future of Accelerators: Two Questions
- What will be the next major machine beyond LHC?
  - linear collider
  - ν-factory/μ-collider
  - rare isotope accelerator
  - 4th generation light source
- Can we develop a new path to the high-energy frontier?
  - Plasma/Laser systems may hold the key

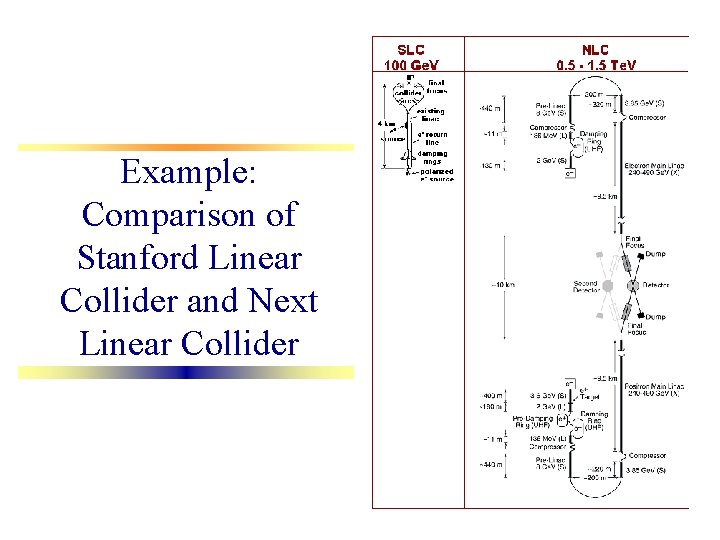

Example: Comparison of Stanford Linear Collider and Next Linear Collider

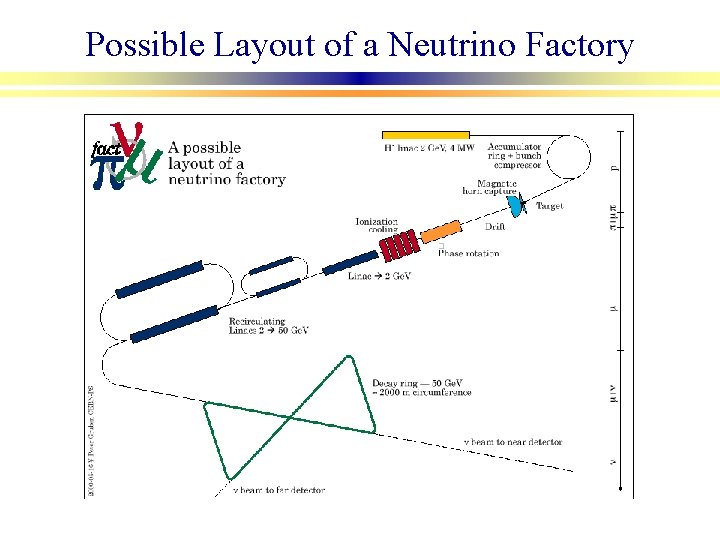

Possible Layout of a Neutrino Factory

Importance of Accelerator Simulation
- Next generation of accelerators will involve:
  - higher intensity, higher energy
  - greater complexity
  - increased collective effects
- Large-scale simulations essential for:
  - design decisions & feasibility studies:
    - evaluate/reduce risk, reduce cost, optimize performance
  - accelerator science and technology advancement

Cost Impacts
- Without large-scale simulation: cost escalation
  - SSC: 1 cm increase in aperture due to lack of confidence in design resulted in $1B cost increase
- With large-scale simulation: cost savings
  - NLC: Large-scale electromagnetic simulations have led to $100M cost reduction

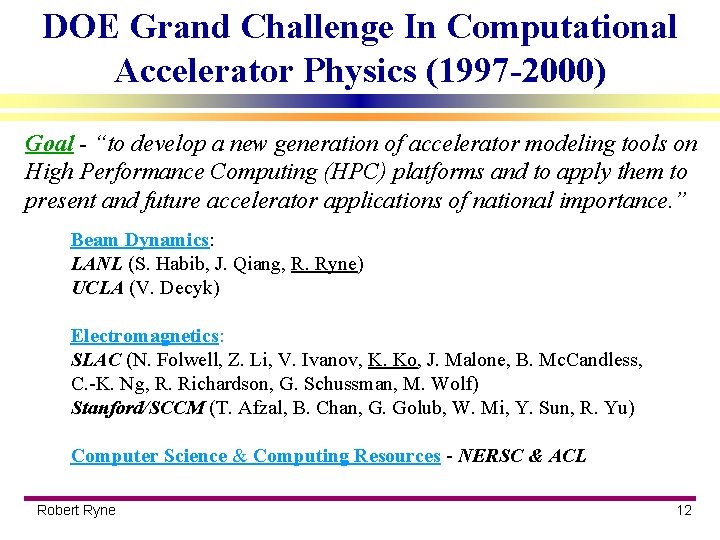

DOE Grand Challenge In Computational Accelerator Physics (1997-2000)
Goal: “to develop a new generation of accelerator modeling tools on High Performance Computing (HPC) platforms and to apply them to present and future accelerator applications of national importance.”
- Beam Dynamics:
  - LANL (S. Habib, J. Qiang, R. Ryne)
  - UCLA (V. Decyk)
- Electromagnetics:
  - SLAC (N. Folwell, Z. Li, V. Ivanov, K. Ko, J. Malone, B. McCandless, C.-K. Ng, R. Richardson, G. Schussman, M. Wolf)
  - Stanford/SCCM (T. Afzal, B. Chan, G. Golub, W. Mi, Y. Sun, R. Yu)
- Computer Science & Computing Resources: NERSC & ACL

New parallel applications codes have been applied to several major accelerator projects
- Main deliverables: 4 parallel applications codes
- Electromagnetics:
  - 3D parallel eigenmode code Omega3P
  - 3D parallel time-domain EM code Tau3P
- Beam Dynamics:
  - 3D parallel Poisson/Vlasov code, IMPACT
  - 3D parallel Fokker/Planck code, LANGEVIN3D
- Applied to SNS, NLC, PEP-II, APT, ALS, CERN/SPL
- New capability has enabled simulations 3-4 orders of magnitude greater than previously possible

Parallel Electromagnetic Field Solvers: Features
- C++ implementation w/ MPI
- Reuse of existing parallel libraries (ParMetis, AZTEC)
- Unstructured grids for conformal meshes
- New solvers for fast convergence and scalability
- Adaptive refinement to improve accuracy & performance
- Omega3P: 3D finite element w/ linear & quadratic basis functions
- Tau3P: unstructured Yee grid

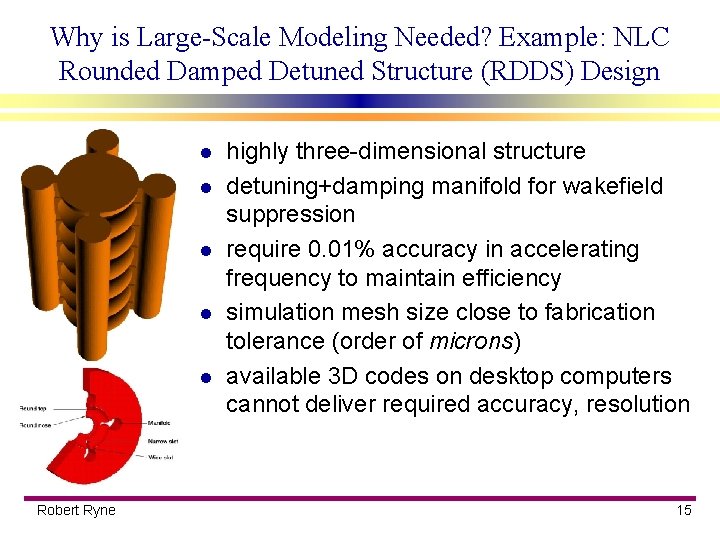

Why is Large-Scale Modeling Needed? Example: NLC Rounded Damped Detuned Structure (RDDS) Design
- highly three-dimensional structure
- detuning + damping manifold for wakefield suppression
- require 0.01% accuracy in accelerating frequency to maintain efficiency
- simulation mesh size close to fabrication tolerance (order of microns)
- available 3D codes on desktop computers cannot deliver required accuracy, resolution

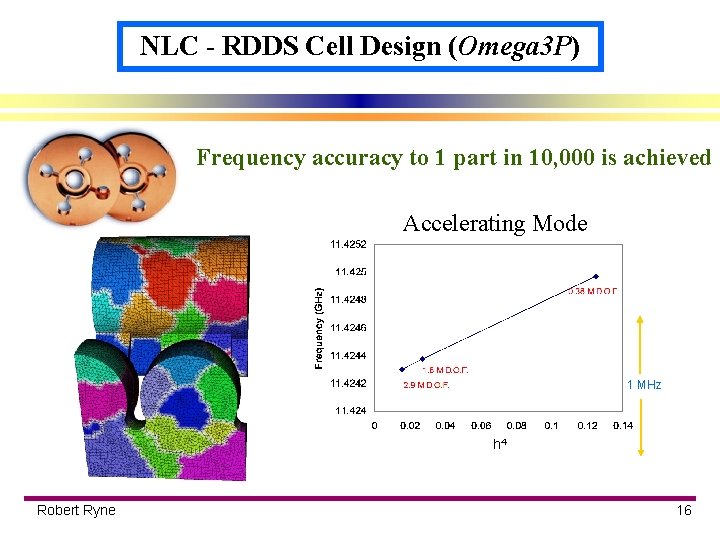

NLC - RDDS Cell Design (Omega3P)
Frequency accuracy to 1 part in 10,000 is achieved
Figure labels: Accelerating Mode; 1 MHz; h 4

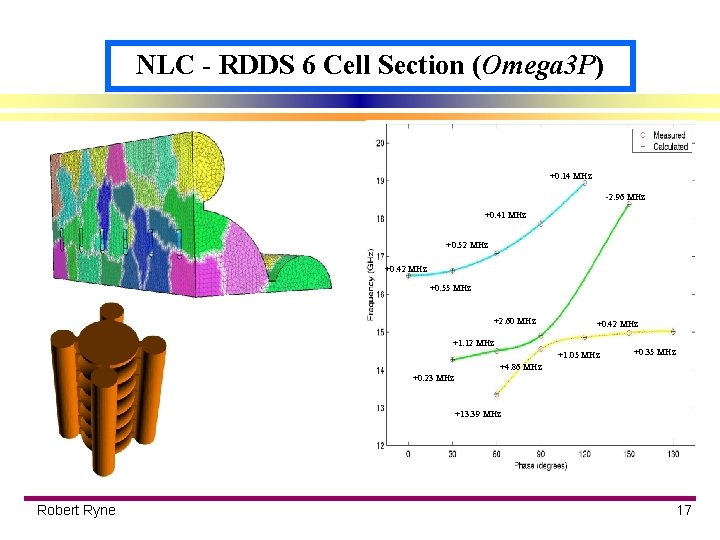

NLC - RDDS 6 Cell Section (Omega3P)
Figure labels: +0.14 MHz, -2.96 MHz, +0.41 MHz, +0.52 MHz, +0.42 MHz, +0.55 MHz, +2.60 MHz, +0.42 MHz, +1.12 MHz, +1.05 MHz, +0.35 MHz, +4.86 MHz, +0.23 MHz, +13.39 MHz

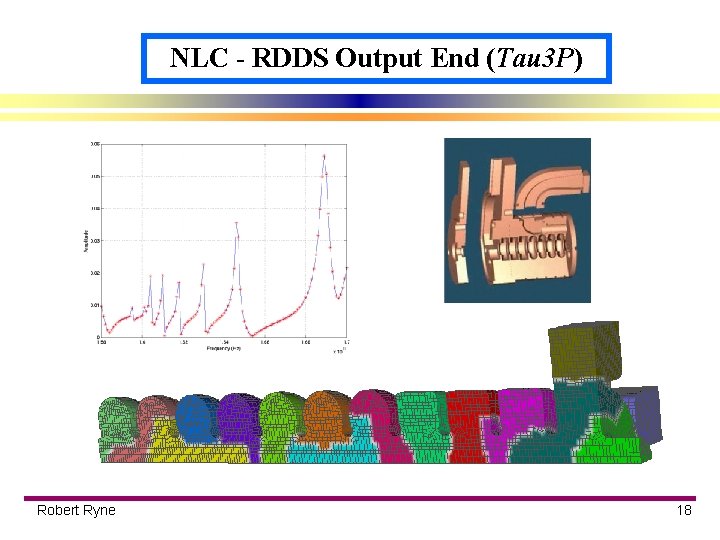

NLC - RDDS Output End (Tau3P)

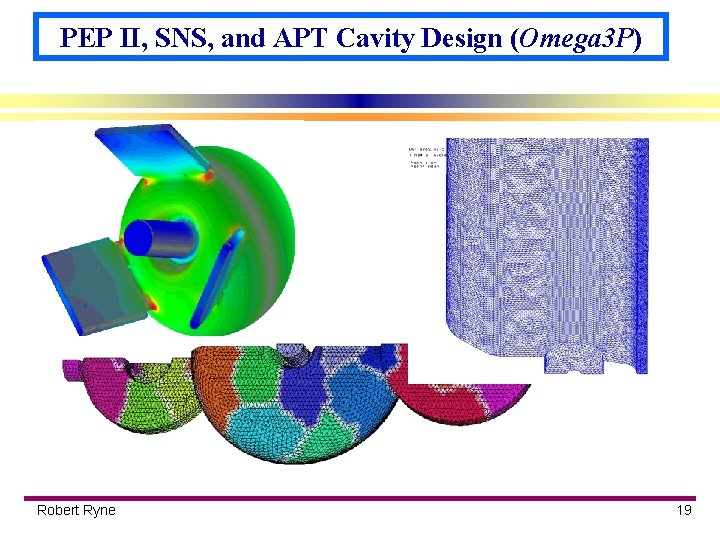

PEP-II, SNS, and APT Cavity Design (Omega3P)

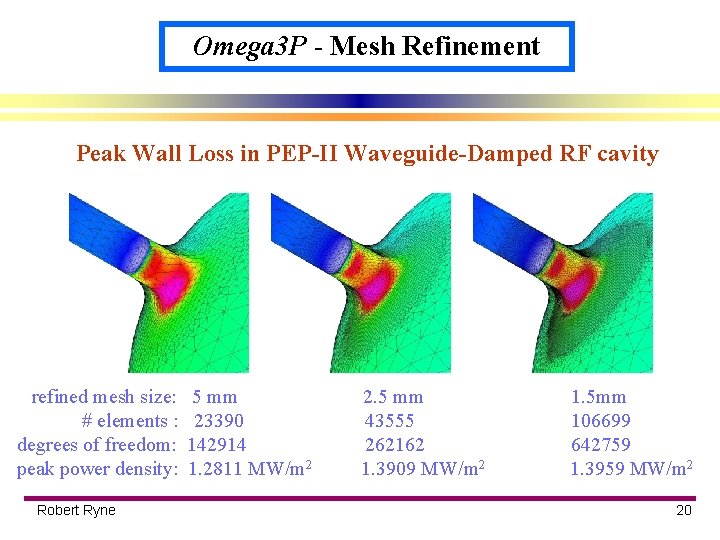

Omega3P - Mesh Refinement
Peak Wall Loss in PEP-II Waveguide-Damped RF cavity

| refined mesh size | # elements | degrees of freedom | peak power density |
|---|---|---|---|
| 5 mm | 23390 | 142914 | 1.2811 MW/m² |
| 2.5 mm | 43555 | 262162 | 1.3909 MW/m² |
| 1.5 mm | 106699 | 642759 | 1.3959 MW/m² |
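One way to read the mesh-refinement figures quoted above is to look at how much the peak power density changes from one refinement to the next. The short sketch below does only that arithmetic on the three quoted values; the function name `relative_changes` is illustrative and is not part of Omega3P.

```python
# Values transcribed from the PEP-II mesh-refinement figures quoted above.
mesh_mm = [5.0, 2.5, 1.5]
peak_mw_per_m2 = [1.2811, 1.3909, 1.3959]


def relative_changes(values):
    """Relative change between each pair of successive refinements."""
    return [(b - a) / a for a, b in zip(values, values[1:])]


changes = relative_changes(peak_mw_per_m2)
for (h_old, h_new), c in zip(zip(mesh_mm, mesh_mm[1:]), changes):
    print(f"{h_old} mm -> {h_new} mm: {100 * c:.2f}% change in peak power density")
```

The change drops from several percent on the first refinement to well under one percent on the second, which is the sense in which the quoted result appears to be converging.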

Parallel Beam Dynamics Codes: Features
- split-operator-based 3D parallel particle-in-cell
- canonical variables
- variety of implementations (F90/MPI, C++, POOMA, HPF)
- particle manager, field manager, dynamic load balancing
- 6 types of boundary conditions for field solvers:
  - open/circular/rectangular transverse; open/periodic longitudinal
- reference trajectory + transfer maps computed “on the fly”
- philosophy:
  - do not take tiny steps to push particles
  - do take tiny steps to compute maps; then push particles w/ maps
- LANGEVIN3D: self-consistent damping/diffusion coefficients
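The "tiny steps for maps, not for particles" philosophy can be illustrated with a toy linear example. The sketch below is not taken from IMPACT: it assumes a 1D linear focusing element with equations of motion x' = p, p' = -k(s) x, integrates the 2x2 transfer matrix with many small RK4 steps, and then applies the finished map once to each particle. All function names and the element strength are hypothetical.

```python
import math


def mat_mul(a, b):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(a[i][n] * b[n][j] for n in range(2)) for j in range(2)]
            for i in range(2)]


def mat_add_scaled(a, b, c):
    """Return a + c*b for 2x2 matrices."""
    return [[a[i][j] + c * b[i][j] for j in range(2)] for i in range(2)]


def transfer_map(k_of_s, length, n_steps):
    """Integrate dM/ds = A(s) M, A = [[0, 1], [-k(s), 0]], with small RK4 steps."""
    h = length / n_steps
    m = [[1.0, 0.0], [0.0, 1.0]]

    def rhs(s, mat):
        return mat_mul([[0.0, 1.0], [-k_of_s(s), 0.0]], mat)

    for i in range(n_steps):
        s = i * h
        k1 = rhs(s, m)
        k2 = rhs(s + h / 2, mat_add_scaled(m, k1, h / 2))
        k3 = rhs(s + h / 2, mat_add_scaled(m, k2, h / 2))
        k4 = rhs(s + h, mat_add_scaled(m, k3, h))
        m = [[m[r][c] + h / 6 * (k1[r][c] + 2 * k2[r][c] + 2 * k3[r][c] + k4[r][c])
              for c in range(2)] for r in range(2)]
    return m


def push(particles, m):
    """Apply the precomputed map once to every (x, p) pair."""
    return [(m[0][0] * x + m[0][1] * p, m[1][0] * x + m[1][1] * p)
            for x, p in particles]


# Hypothetical element: uniform focusing strength k = 4 over unit length.
M = transfer_map(lambda s: 4.0, 1.0, 1000)
beam = push([(1e-3, 0.0), (0.0, 1e-3)], M)
print(M, beam)
```

The cost of the 1000 small steps is paid once per element, while each particle sees a single matrix multiply, which is why the approach scales to very large particle counts.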

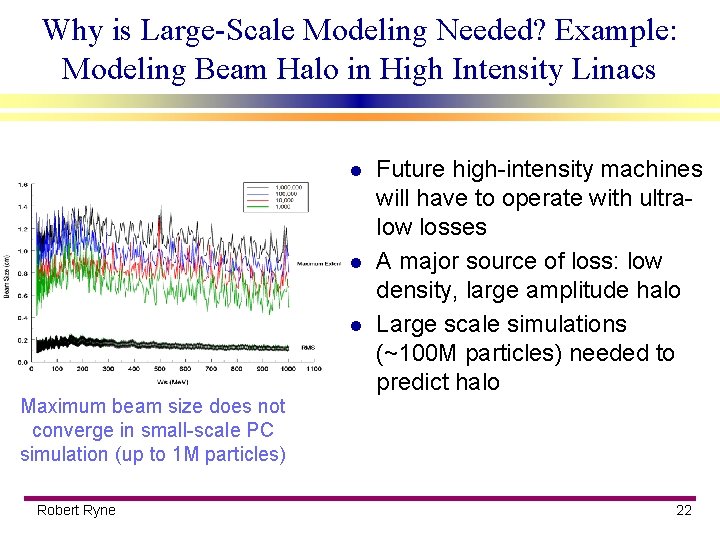

Why is Large-Scale Modeling Needed? Example: Modeling Beam Halo in High Intensity Linacs
- Future high-intensity machines will have to operate with ultralow losses
- A major source of loss: low density, large amplitude halo
- Large scale simulations (~100M particles) needed to predict halo

Figure: Maximum beam size does not converge in small-scale PC simulation (up to 1M particles)
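A rough way to see why particle count matters for halo is to count how many macroparticles would sit far out in the tail. The sketch below assumes a round 2D Gaussian beam purely for illustration (a real beam with halo is not Gaussian, which is the point of the simulations); for that distribution the fraction beyond radius r·σ is exp(-r²/2). The function name is hypothetical.

```python
import math


def expected_tail_count(n_particles, r_sigma):
    """Expected number of macroparticles beyond r_sigma in a round 2D Gaussian.

    For a round Gaussian in (x, y) the fraction with radius > r*sigma
    is exp(-r^2 / 2).
    """
    return n_particles * math.exp(-0.5 * r_sigma ** 2)


for n in (1_000_000, 100_000_000):
    print(f"{n:>11,d} particles: about {expected_tail_count(n, 5.0):.1f} beyond 5 sigma")
```

Under this assumption a 1M-particle run places only a handful of macroparticles beyond 5σ, so any statistic of the outermost particles is dominated by sampling noise, whereas a 100M-particle run places a few hundred there.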

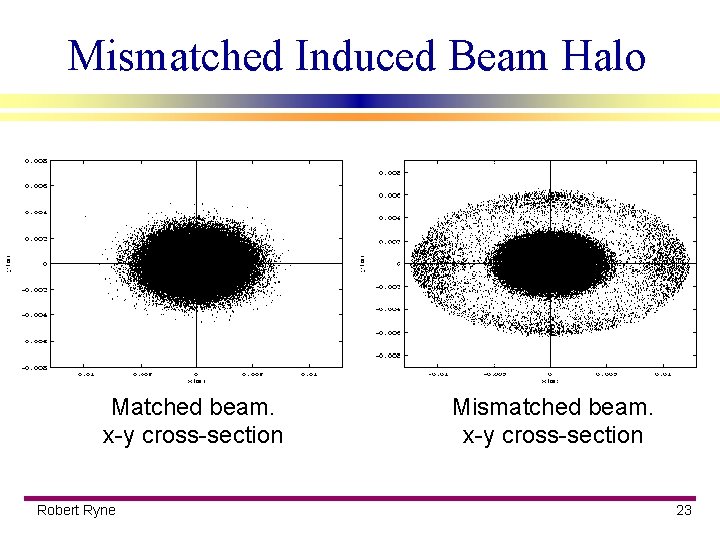

Mismatch-Induced Beam Halo
Figure panels: Matched beam, x-y cross-section; Mismatched beam, x-y cross-section

Vlasov Code or PIC code?
- Direct Vlasov:
  - bad: very large memory
  - bad: subgrid scale effects
  - good: no sampling noise
  - good: no collisionality
- Particle-based:
  - good: low memory
  - good: subgrid resolution OK
  - bad: statistical fluctuations
  - bad: numerical collisionality
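The memory contrast between the two approaches can be made concrete with a back-of-the-envelope estimate. The sketch below assumes one double-precision value per cell of a 6D phase-space grid for the direct Vlasov case and six double-precision coordinates per macroparticle for the particle case; the grid size of 64 points per dimension is an arbitrary illustrative choice, not a figure from the talk.

```python
BYTES_PER_DOUBLE = 8


def vlasov_grid_bytes(points_per_dim, dims=6):
    """Storage for one double per cell of a dims-dimensional phase-space grid."""
    return points_per_dim ** dims * BYTES_PER_DOUBLE


def particle_bytes(n_particles, coords=6):
    """Storage for coords doubles per macroparticle."""
    return n_particles * coords * BYTES_PER_DOUBLE


grid = vlasov_grid_bytes(64)          # assumed, fairly coarse 64^6 grid
parts = particle_bytes(100_000_000)   # 100M particles, as quoted for routine runs
print(f"6D grid, 64 points/dim: {grid / 2**30:.0f} GiB")
print(f"100M particles:         {parts / 2**30:.1f} GiB")
```

Even at this coarse resolution the 6D grid needs roughly a hundred times more memory than the 100M-particle ensemble, and the grid cost grows as the sixth power of the resolution.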

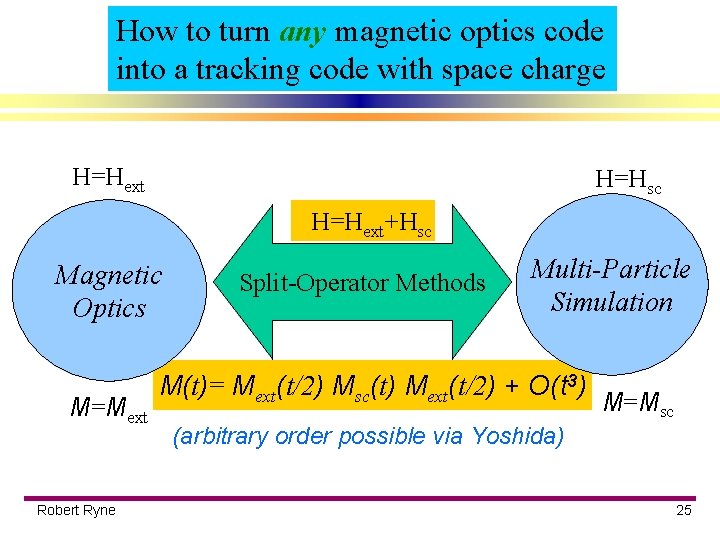

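How to turn any magnetic optics code into a tracking code with space charge

Split-Operator Methods
- Magnetic Optics: $H = H_{\mathrm{ext}}$, $M = M_{\mathrm{ext}}$
- Multi-Particle Simulation: $H = H_{\mathrm{sc}}$, $M = M_{\mathrm{sc}}$
- Combined: $H = H_{\mathrm{ext}} + H_{\mathrm{sc}}$

$$M(t) = M_{\mathrm{ext}}(t/2)\, M_{\mathrm{sc}}(t)\, M_{\mathrm{ext}}(t/2) + O(t^3)$$

(arbitrary order possible via Yoshida)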
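The split-operator composition above can be sketched for a single particle in one degree of freedom. This is a toy under stated assumptions, not the IMPACT implementation: `m_ext` is the exact map of a linear focusing Hamiltonian H_ext = p²/2 + k x²/2, and `m_sc` is the exact kick from a made-up smooth defocusing potential H_sc = -(eps/2) ln(1 + x²) standing in for space charge. A real code would obtain the space-charge kick from a field solve over the whole particle distribution.

```python
import math


def m_ext(x, p, t, k=1.0):
    """Exact map for H_ext = p^2/2 + k*x^2/2 (a rotation in phase space)."""
    w = math.sqrt(k)
    c, s = math.cos(w * t), math.sin(w * t)
    return c * x + s * p / w, -w * s * x + c * p


def m_sc(x, p, t, eps=0.1):
    """Exact map for the toy H_sc = -(eps/2)*ln(1 + x^2): a pure momentum kick."""
    return x, p + t * eps * x / (1.0 + x * x)


def split_step(x, p, t, k=1.0, eps=0.1):
    """One step M(t) = M_ext(t/2) M_sc(t) M_ext(t/2), accurate to O(t^3) locally."""
    x, p = m_ext(x, p, t / 2, k)
    x, p = m_sc(x, p, t, eps)
    x, p = m_ext(x, p, t / 2, k)
    return x, p


def track(x, p, total_time, n_steps, k=1.0, eps=0.1):
    """Apply n_steps split-operator steps of equal size."""
    t = total_time / n_steps
    for _ in range(n_steps):
        x, p = split_step(x, p, t, k, eps)
    return x, p


# Setting eps=0 recovers the pure magnetic-optics map: the "switch" for space charge.
print(track(1.0, 0.0, 1.0, 32))
print(track(1.0, 0.0, 1.0, 32, eps=0.0))
```

Because each sub-map is itself exact and symplectic, the composed step is symplectic as well, and halving the step size should reduce the accumulated error by about a factor of four.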

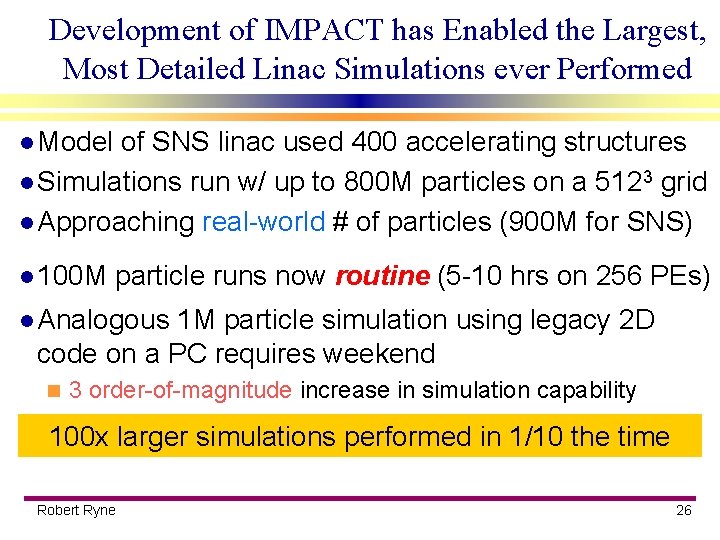

Development of IMPACT has Enabled the Largest, Most Detailed Linac Simulations ever Performed
- Model of SNS linac used 400 accelerating structures
- Simulations run w/ up to 800M particles on a 512³ grid
- Approaching real-world # of particles (900M for SNS)
- 100M particle runs now routine (5-10 hrs on 256 PEs)
- Analogous 1M particle simulation using legacy 2D code on a PC requires a weekend
- 3 order-of-magnitude increase in simulation capability: 100x larger simulations performed in 1/10 the time

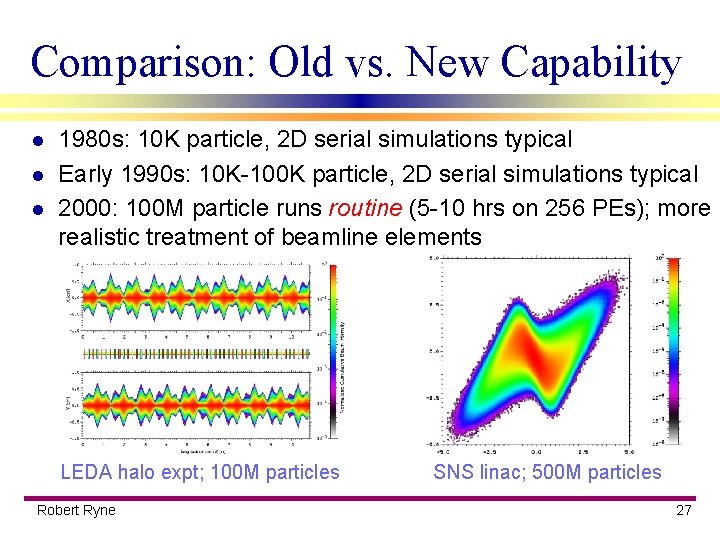

Comparison: Old vs. New Capability
- 1980s: 10K particle, 2D serial simulations typical
- Early 1990s: 10K-100K particle, 2D serial simulations typical
- 2000: 100M particle runs routine (5-10 hrs on 256 PEs); more realistic treatment of beamline elements

Figure panels: LEDA halo expt, 100M particles; SNS linac, 500M particles

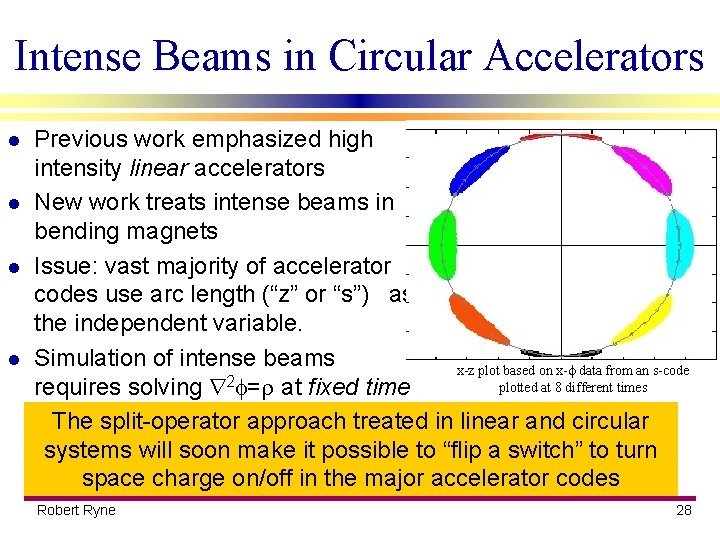

Intense Beams in Circular Accelerators
- Previous work emphasized high intensity linear accelerators
- New work treats intense beams in bending magnets
- Issue: vast majority of accelerator codes use arc length (“z” or “s”) as the independent variable. Simulation of intense beams requires solving Poisson's equation at fixed time.

Figure: x-z plot based on x- data from an s-code, plotted at 8 different times

The split-operator approach, treated in linear and circular systems, will soon make it possible to “flip a switch” to turn space charge on/off in the major accelerator codes

Collaboration/impact beyond accelerator physics
- Modeling collisions in plasmas
  - new Fokker/Planck code
- Modeling astrophysical systems
  - starting w/ IMPACT, developing astrophysical PIC code
  - also a testbed for testing scripting ideas
- Modeling stochastic dynamical systems
  - new leap-frog integrator for systems w/ multiplicative noise
- Simulations requiring solution of large eigensystems
  - new eigensolver developed by SLAC/NMG & Stanford SCCM
- Modeling quantum systems
  - Spectral and De Raedt-style codes to solve the Schrödinger, density matrix, and Wigner-function equations

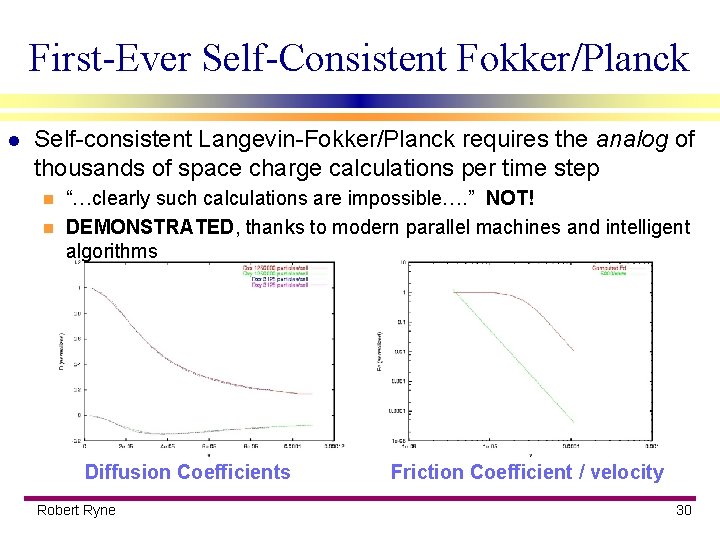

First-Ever Self-Consistent Fokker/Planck
- Self-consistent Langevin-Fokker/Planck requires the analog of thousands of space charge calculations per time step
  - “…clearly such calculations are impossible….” NOT!
  - DEMONSTRATED, thanks to modern parallel machines and intelligent algorithms

Figure panels: Diffusion Coefficients; Friction Coefficient / velocity

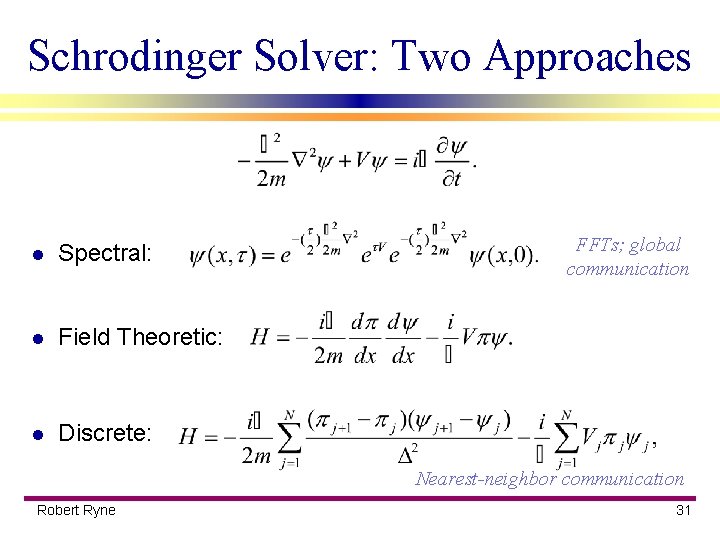

Schrödinger Solver: Two Approaches
- Spectral: FFTs; global communication
- Field Theoretic:
  - Discrete: nearest-neighbor communication

Conclusion: “Advanced Computing for 21st Century Accelerator Sci. & Tech.”
- Builds on foundation laid by Accelerator Grand Challenge
- Larger collaboration:
  - presently LANL, SLAC, FNAL, LBNL, JLab, Stanford, UCLA
- Project Goal: develop a comprehensive, coherent accelerator simulation environment
- Focus Areas:
  - Beam Systems Simulation, Electromagnetic Systems Simulation, Beam/Electromagnetic Systems Integration
- View toward near-term impact on:
  - NLC, ν-factory (driver, muon cooling), laser/plasma accelerators

Acknowledgement
- Work supported by the DOE Office of Science
  - Office of Advanced Scientific Computing Research, Division of Mathematical, Information, and Computational Sciences
  - Office of High Energy and Nuclear Physics
  - Division of High Energy Physics, Los Alamos Accelerator Code Group