
VAE for RL state encoding Bren, Francesco, Rebecca, Simon, Verena

Objectives
• RL agents need a state space (based on observations), an action space (actions change the state) and a reward
• As an alternative to explicit feature extraction for the state, here we try using a VAE to give the RL agent an implicit state description
• This should give us automatic, unsupervised feature extraction
• Could be relevant where explicit state feature extraction is difficult or impossible

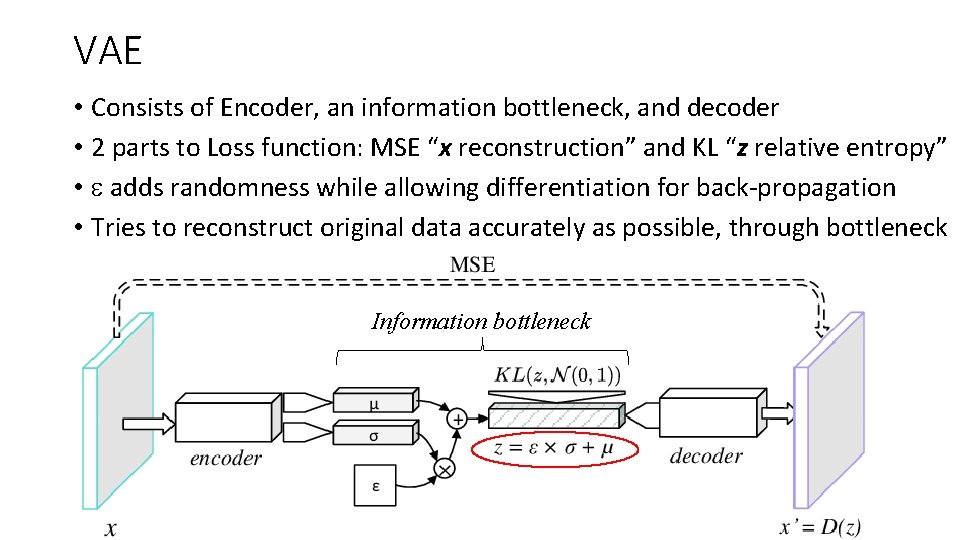

VAE
• Consists of an encoder, an information bottleneck and a decoder
• Two parts to the loss function: MSE “x reconstruction” and KL “z relative entropy”
• ε adds randomness while still allowing differentiation for back-propagation
• Tries to reconstruct the original data as accurately as possible through the information bottleneck
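The role of ε can be made concrete with a minimal sketch of the reparameterisation step. It assumes the encoder outputs a mean and a log-variance per latent dimension (a common convention, not stated on the slide); the function name `reparameterise` and the numbers are illustrative only.

```python
import math
import random

def reparameterise(mu, log_var, eps=None, rng=random):
    """Return z = mu + sigma * eps element-wise, with sigma = exp(0.5 * log_var)."""
    if eps is None:
        # The noise is drawn outside the network, so z stays a differentiable
        # function of mu and log_var and gradients can flow back to the encoder.
        eps = [rng.gauss(0.0, 1.0) for _ in mu]
    return [m + math.exp(0.5 * lv) * e for m, lv, e in zip(mu, log_var, eps)]

# Fixed eps so the result is reproducible: sigma = exp(0) = 1 in both dimensions.
z = reparameterise([0.5, -1.0], [0.0, 0.0], eps=[1.0, -2.0])
print(z)  # [1.5, -3.0]
```

Setting every ε to zero returns the mean unchanged, which is the deterministic encoding one would want when no randomness is added.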

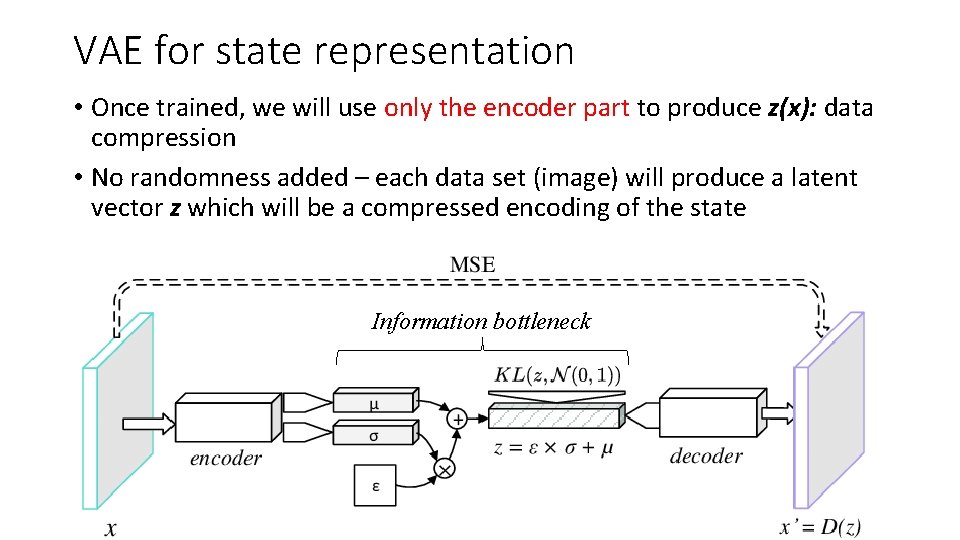

VAE for state representation
• Once trained, we will use only the encoder part to produce z(x): data compression
• No randomness added: each data sample (image) will produce a latent vector z, which is a compressed encoding of the state

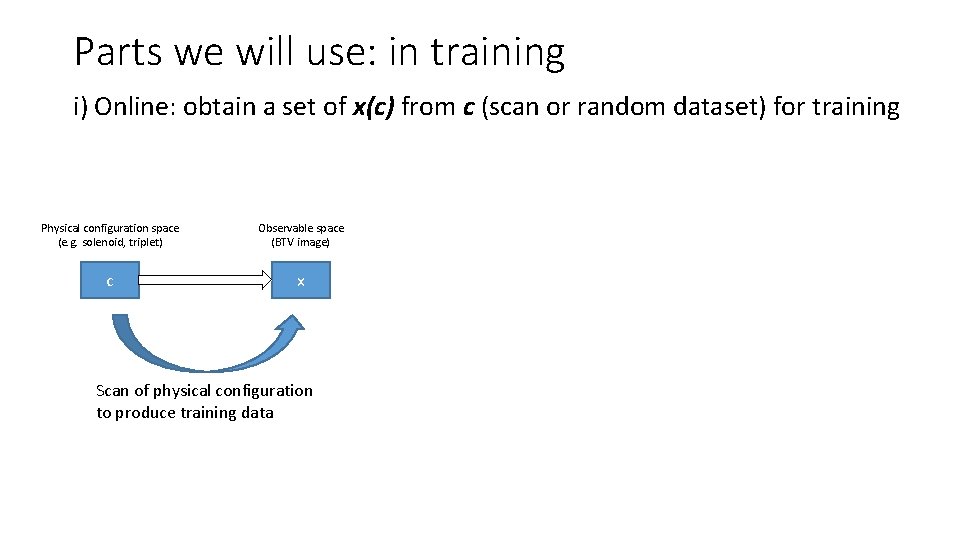

Parts we will use: in training
i) Online: obtain a set of x(c) from c (scan or random dataset) for training
Diagram: physical configuration space c (e.g. solenoid, triplet) → observable space x (BTV image); a scan of the physical configuration produces the training data

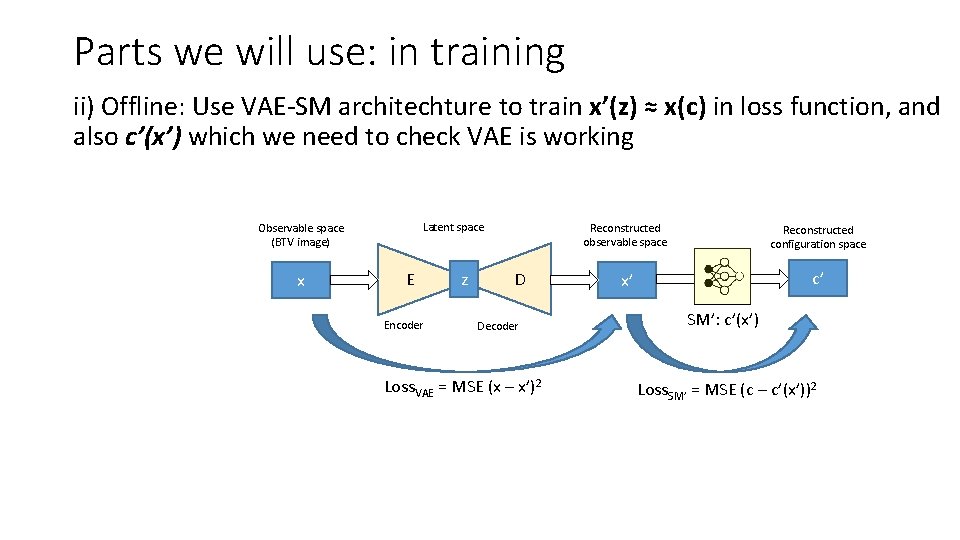

Parts we will use: in training
ii) Offline: use the VAE-SM architecture to train x’(z) ≈ x(c) in the loss function, and also c’(x’), which we need to check that the VAE is working
Diagram: observable space x (BTV image) → encoder E → latent space z → decoder D → reconstructed observable space x’ → SM’: c’(x’) → reconstructed configuration space c’
Loss_VAE = MSE(x, x’) = mean((x - x’)²)
Loss_SM’ = MSE(c, c’(x’)) = mean((c - c’(x’))²)
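The two mean squared errors named on this slide can be sketched in a few lines. This is a toy illustration on flat lists, not the training code: the helper `mse` and all numerical values are made up for the example.

```python
def mse(a, b):
    """Mean squared error between two equal-length flat sequences."""
    if len(a) != len(b):
        raise ValueError("inputs must have the same length")
    return sum((p - q) ** 2 for p, q in zip(a, b)) / len(a)

# Toy values, purely illustrative (not measured data).
x = [0.0, 1.0, 0.5, 0.25]      # flattened image pixels x
x_rec = [0.0, 0.5, 0.5, 0.25]  # decoder output x'
c = [1.0, 2.0]                 # true configuration c
c_rec = [1.0, 1.0]             # SM' output c'(x')

loss_vae = mse(x, x_rec)  # reconstruction term: 0.0625
loss_sm = mse(c, c_rec)   # configuration check: 0.5
print(loss_vae, loss_sm)
```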

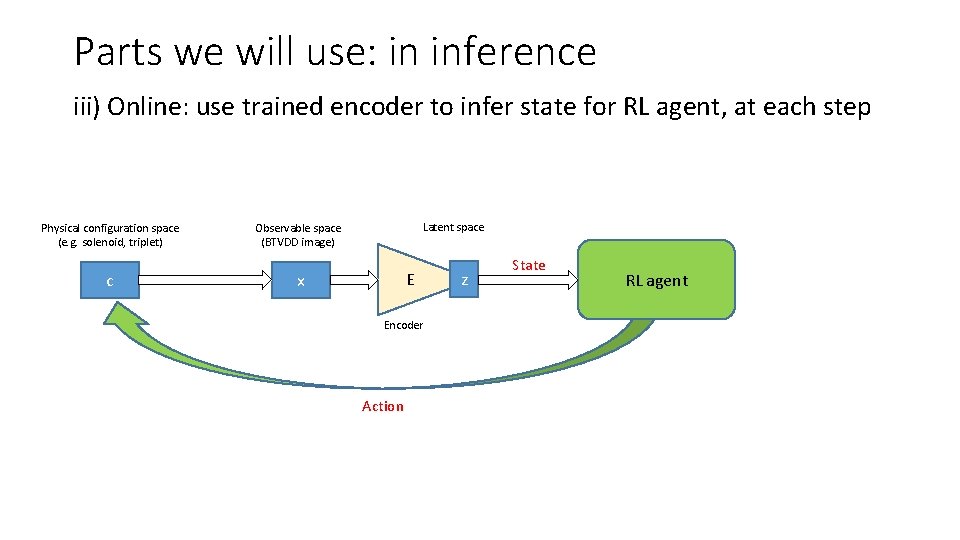

Parts we will use: in inference
iii) Online: use the trained encoder to infer the state for the RL agent at each step
Diagram: physical configuration space c (e.g. solenoid, triplet) → observable space x (BTVDD image) → encoder E → latent space z = state → RL agent → action

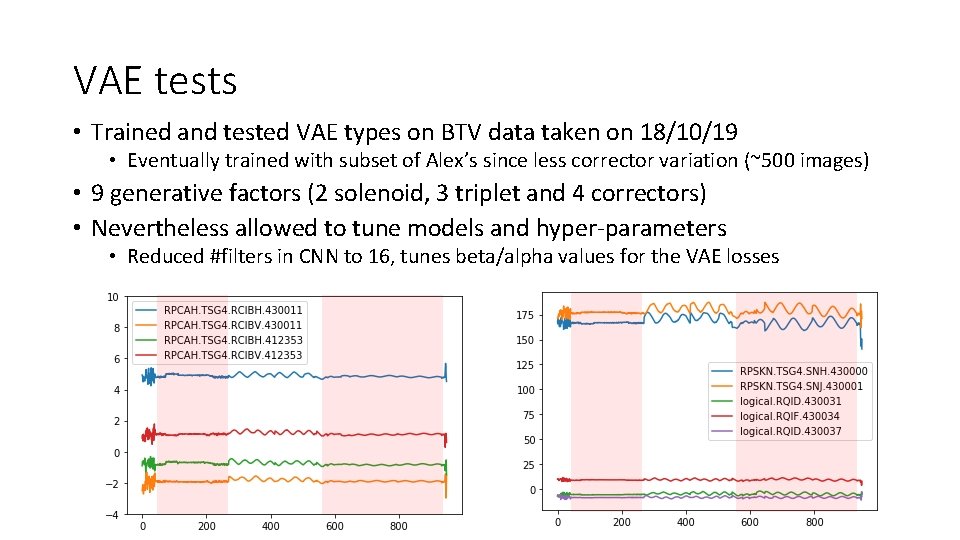

VAE tests
• Trained and tested VAE types on BTV data taken on 18/10/19
• Eventually trained with a subset of Alex’s data (~500 images), since it has less corrector variation
• 9 generative factors (2 solenoid, 3 triplet and 4 correctors)
• Nevertheless, this allowed us to tune models and hyper-parameters
• Reduced the number of filters in the CNN to 16 and tuned the beta/alpha values for the VAE losses

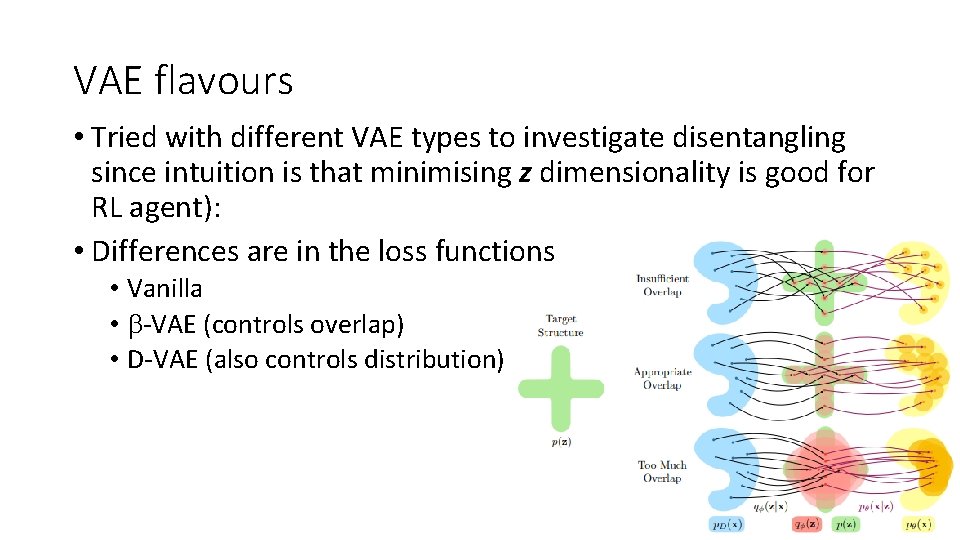

VAE flavours
• Tried different VAE types to investigate disentangling, since the intuition is that minimising the z dimensionality is good for the RL agent
• Differences are in the loss functions:
  • Vanilla
  • β-VAE (controls overlap)
  • D-VAE (also controls distribution)

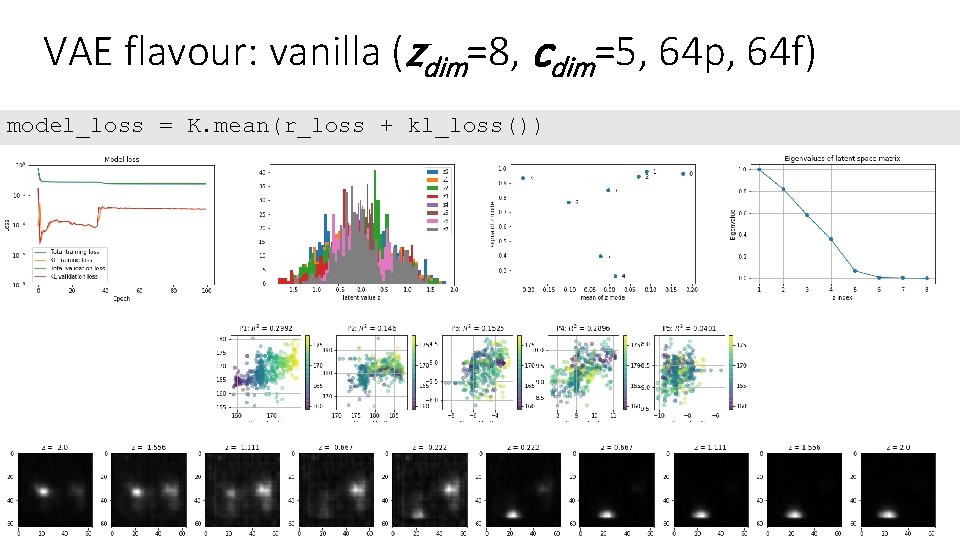

VAE flavour: vanilla (zdim=8, cdim=5, 64 p, 64 f)
`model_loss = K.mean(r_loss + kl_loss())`

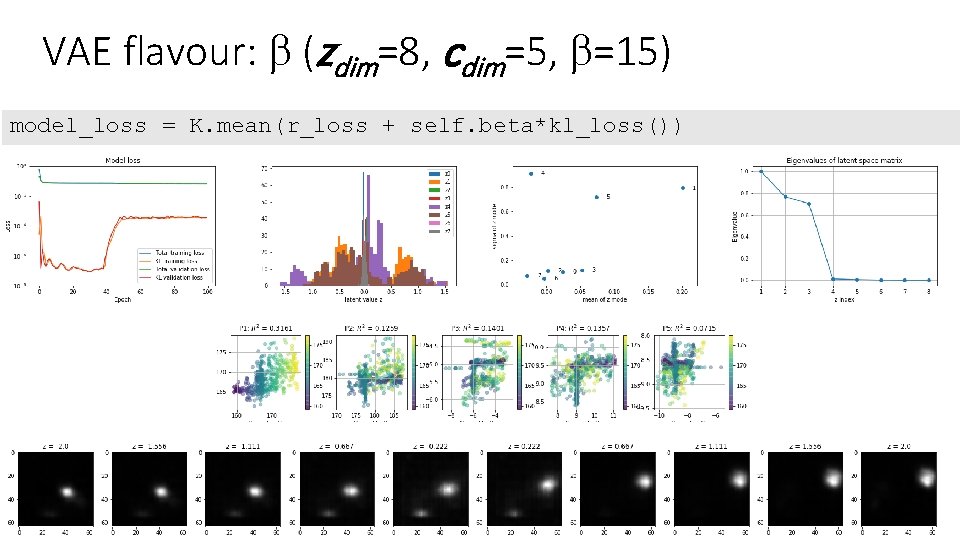

VAE flavour: β (zdim=8, cdim=5, β=15)
`model_loss = K.mean(r_loss + self.beta * kl_loss())`

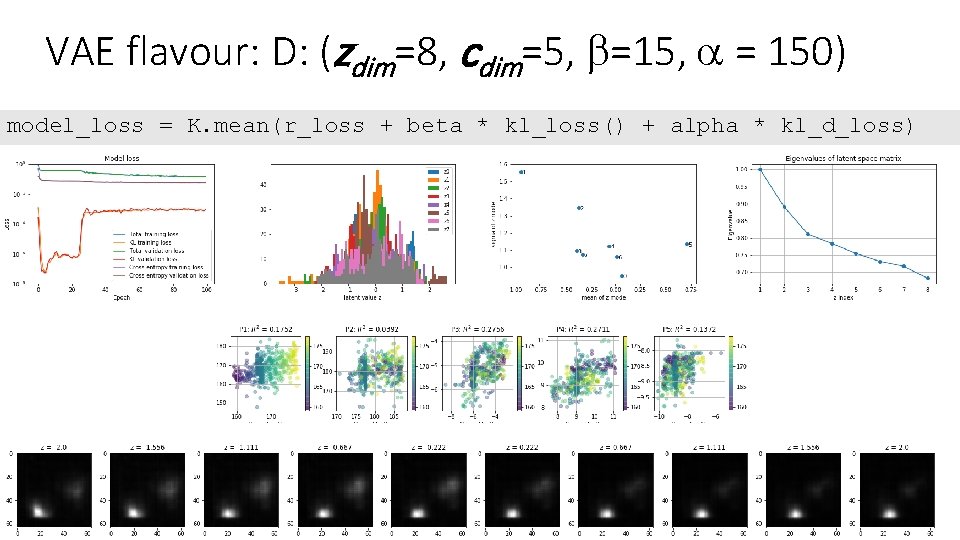

VAE flavour: D (zdim=8, cdim=5, β=15, α=150)
`model_loss = K.mean(r_loss + beta * kl_loss() + alpha * kl_d_loss)`
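The three loss lines differ only in their weights, which a small sketch makes explicit. It works on scalar loss values rather than Keras tensors (so the batch mean is left out), the function `combined_loss` is a hypothetical helper, and the input numbers are invented. How `kl_d_loss` is computed is not given on the slides, so it is treated here as an opaque input.

```python
def combined_loss(r_loss, kl_loss, kl_d_loss=0.0, beta=1.0, alpha=0.0):
    """Weighted sum of the loss terms; beta=1 and alpha=0 give the vanilla VAE."""
    return r_loss + beta * kl_loss + alpha * kl_d_loss

# Illustrative scalar loss values (not results from the BTV data).
r, kl, kl_d = 2.0, 0.5, 0.01

vanilla = combined_loss(r, kl)                          # r + kl
beta_vae = combined_loss(r, kl, beta=15.0)              # KL term weighted by beta
d_vae = combined_loss(r, kl, kl_d, beta=15.0, alpha=150.0)  # extra alpha-weighted term
print(vanilla, beta_vae, d_vae)
```

With β = 15 the KL term dominates the sum far more than in the vanilla case, which is the lever used to trade reconstruction quality against closeness of z to the prior.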

VAE loss function: comes in many parts
• “x reconstruction loss” measures how well the reconstructed data x’ compares to the original data x. For an image this could be a pixel-wise comparison.
• “z KL loss” (Kullback-Leibler divergence, or relative entropy) measures the difference between the actual z distribution and an ideal Gaussian with μ of 0 and σ of 1. A low value means the z distribution is close to this ideal.
• The two losses fight each other in the network: a perfect reconstruction means a finite relative entropy between z and N(0, 1), while perfect (zero) relative entropy means there can be no information content in the reconstruction
• A VAE is an example of Bayesian inference, i.e. it tries to model the underlying probability distribution of the data, so that it can sample new data from this distribution
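For reference, the KL term has a closed form when the encoder output is taken to be a diagonal Gaussian with mean μ_j and standard deviation σ_j in each latent dimension j. That is the usual VAE assumption; the slides do not state which form was used.

$$
D_{\mathrm{KL}}\big(\mathcal{N}(\mu, \operatorname{diag}\sigma^2)\,\|\,\mathcal{N}(0, I)\big)
= \frac{1}{2} \sum_{j} \left( \mu_j^2 + \sigma_j^2 - \ln \sigma_j^2 - 1 \right)
$$

Each summand is non-negative and vanishes only for μ_j = 0 and σ_j = 1, which matches the statement that a low value means z is close to the ideal Gaussian.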

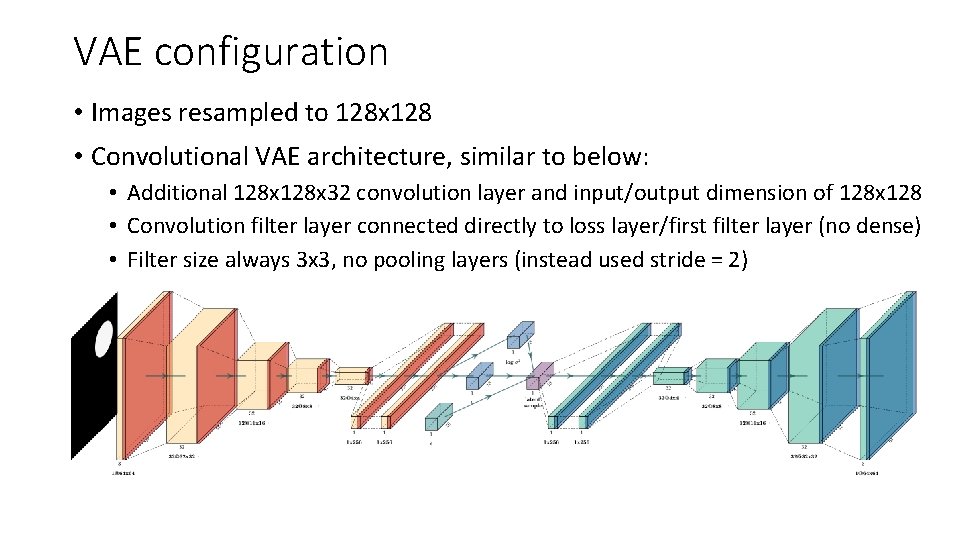

VAE configuration
• Images resampled to 128 x 128
• Convolutional VAE architecture, similar to below:
  • Additional 128 x 32 convolution layer and input/output dimension of 128 x 128
  • Convolution filter layer connected directly to loss layer/first filter layer (no dense)
  • Filter size always 3 x 3, no pooling layers (instead used stride = 2)
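Using stride 2 instead of pooling halves the feature-map side length at every convolution. The sketch below shows the arithmetic, assuming "same" padding (so the output side is the input side divided by the stride, rounded up) and a hypothetical depth of four layers; neither the padding mode nor the layer count is given on the slide.

```python
import math

def feature_map_sizes(size, n_layers, stride=2):
    """Side length after each strided convolution, assuming 'same' padding."""
    sizes = [size]
    for _ in range(n_layers):
        size = math.ceil(size / stride)  # 'same' padding: out = ceil(in / stride)
        sizes.append(size)
    return sizes

print(feature_map_sizes(128, 4))  # [128, 64, 32, 16, 8]
```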

- Slides: 15