
- Slides: 45

UNIX Internals – The New Frontiers: Device Drivers and I/O

16.2 Overview

- Device driver
  - An object that controls one or more devices and interacts with the kernel
  - Written by a third-party vendor
  - Isolates device-specific code in a module
  - Easy to add without kernel source code
  - The kernel has a consistent view of all devices

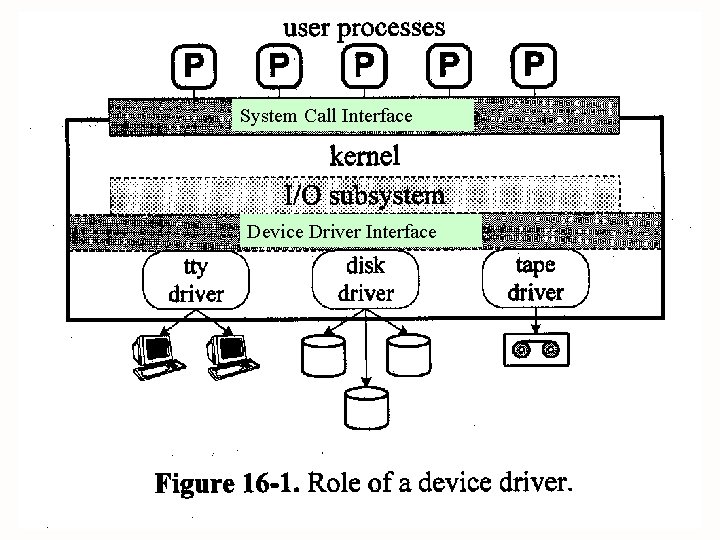

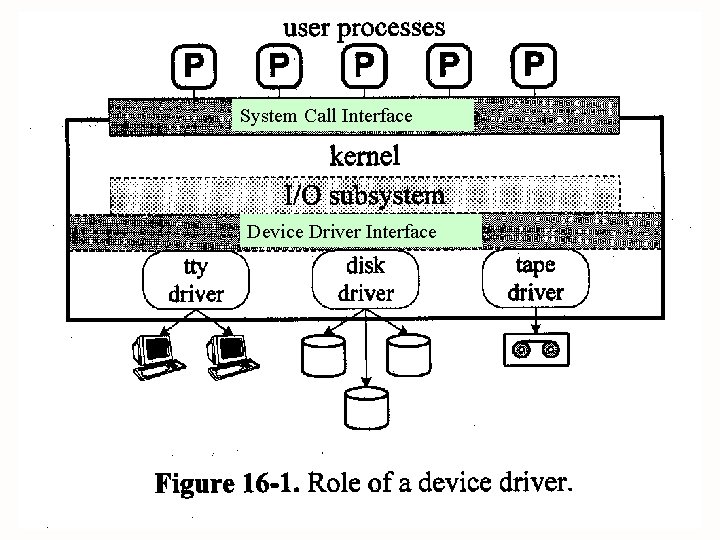

System Call Interface, Device Driver Interface

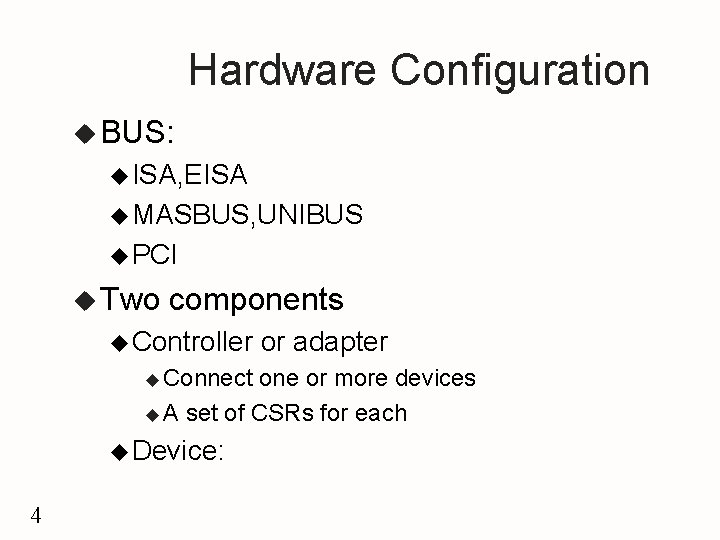

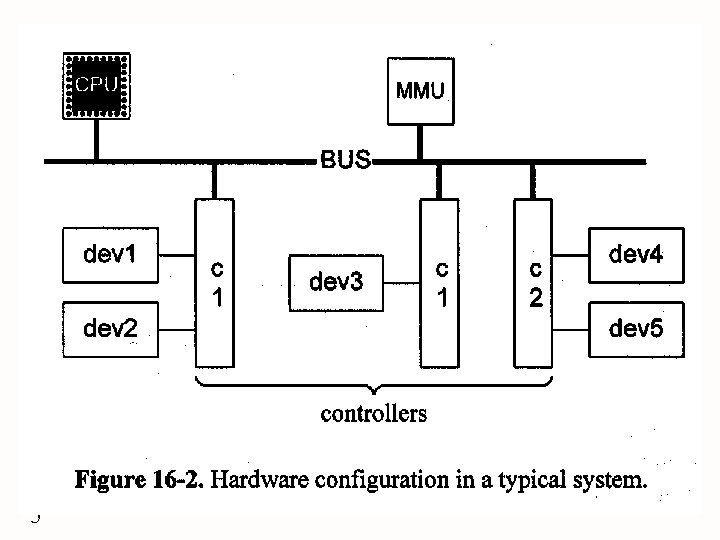

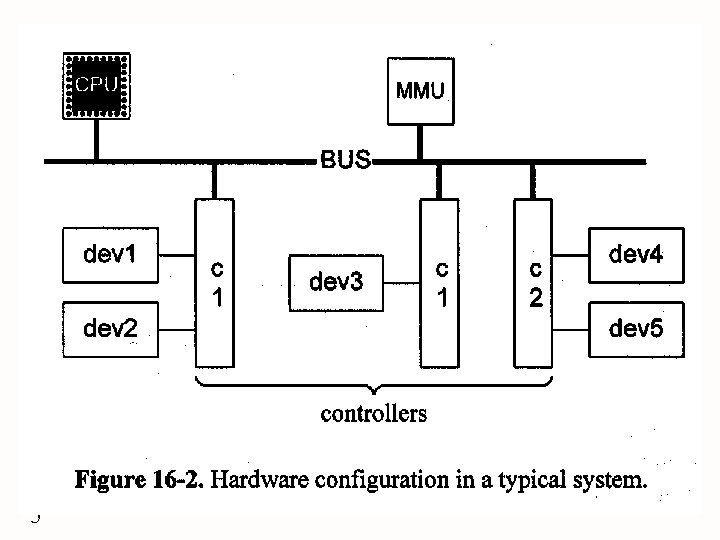

Hardware Configuration

- Bus:
  - ISA, EISA
  - MASSBUS, UNIBUS
  - PCI
- Two components
  - Controller or adapter
    - Connects one or more devices
    - A set of CSRs (control and status registers) for each
  - Device

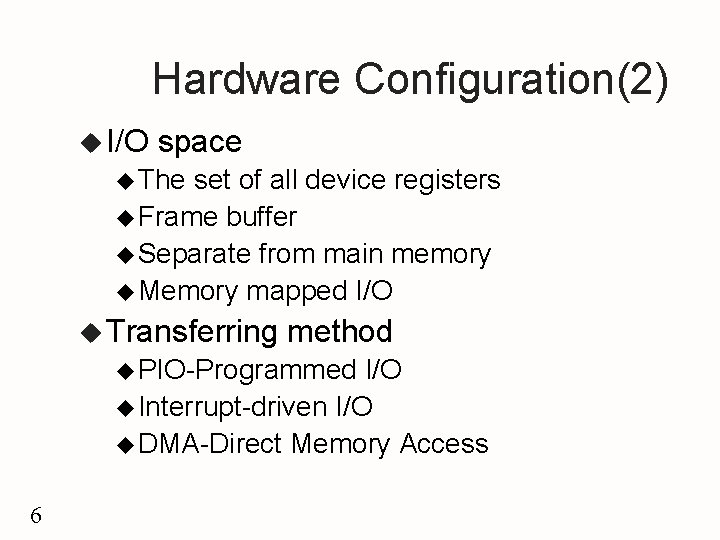

Hardware Configuration (2)

- I/O space
  - The set of all device registers
  - Frame buffer
  - Separate from main memory
  - Memory-mapped I/O
- Transfer methods
  - PIO: programmed I/O
  - Interrupt-driven I/O
  - DMA: direct memory access

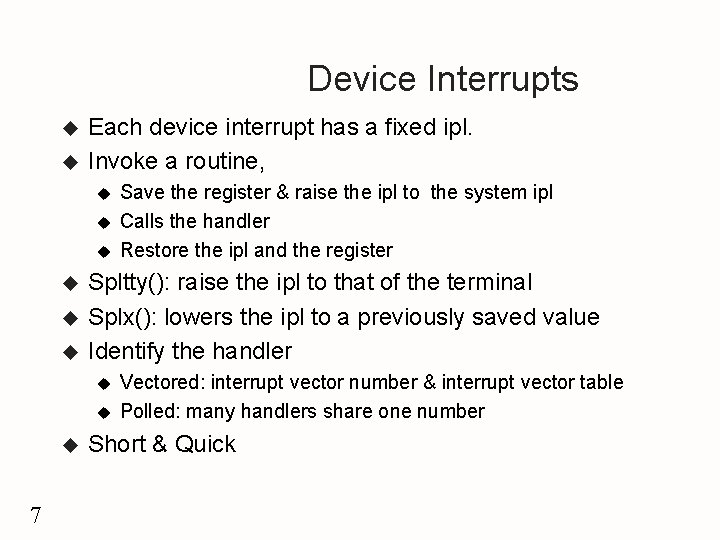

Device Interrupts

- Each device interrupt has a fixed ipl (interrupt priority level)
  - spltty(): raises the ipl to that of the terminal
  - splx(): lowers the ipl to a previously saved value
- On an interrupt, the system invokes a routine that:
  - Saves the registers and raises the ipl to the system ipl
  - Calls the handler
  - Restores the ipl and the registers
- Identifying the handler
  - Vectored: interrupt vector number and interrupt vector table
  - Polled: many handlers share one number
- Handlers should be short and quick

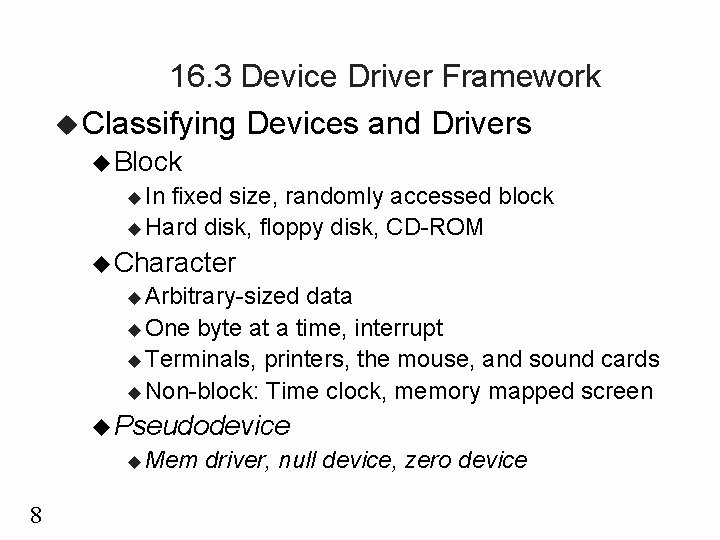

16.3 Device Driver Framework

- Classifying devices and drivers
  - Block
    - Data in fixed-size, randomly accessed blocks
    - Hard disk, floppy disk, CD-ROM
  - Character
    - Arbitrary-sized data
    - One byte at a time, interrupt-driven
    - Terminals, printers, the mouse, and sound cards
    - Non-block devices: time clock, memory-mapped screen
  - Pseudodevice
    - mem driver, null device, zero device

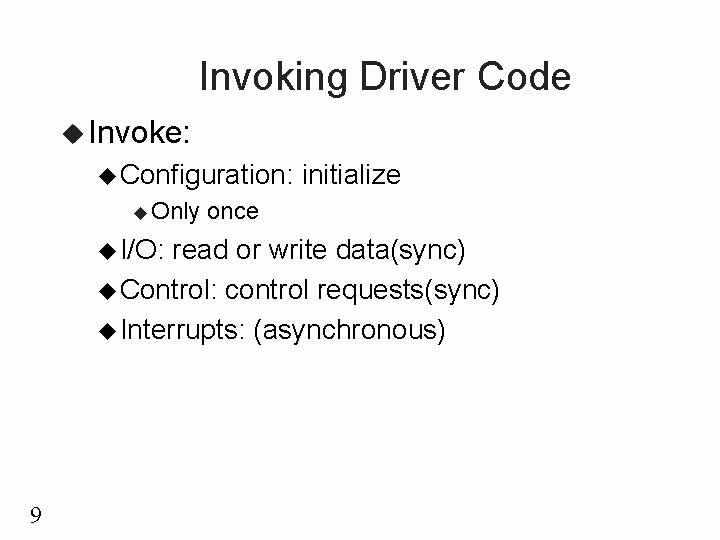

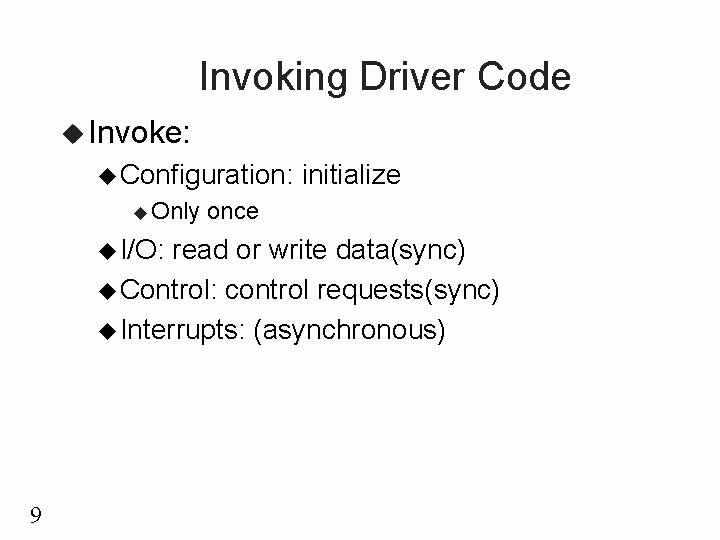

Invoking Driver Code

- The kernel invokes driver code for:
  - Configuration: initialization, only once
  - I/O: read or write data (synchronous)
  - Control: control requests (synchronous)
  - Interrupts (asynchronous)

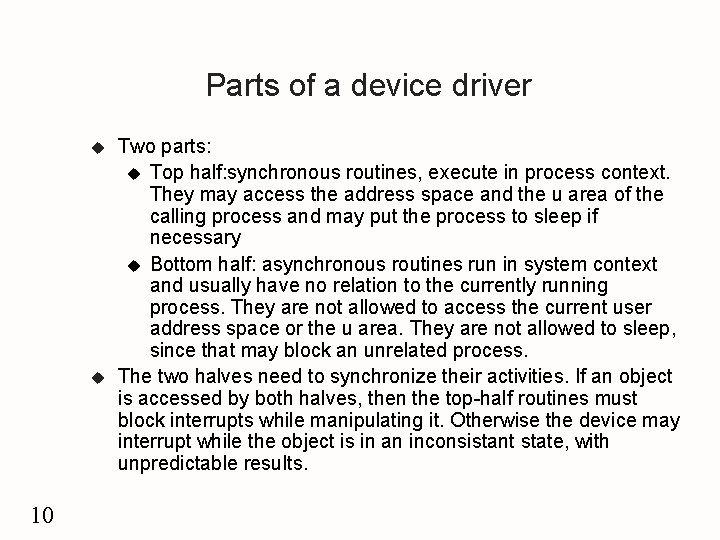

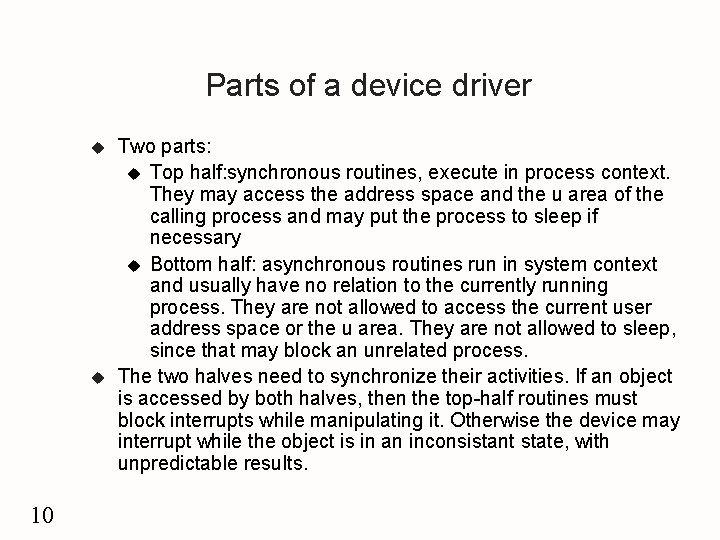

Parts of a device driver

- Two parts:
  - Top half: synchronous routines that execute in process context. They may access the address space and the u area of the calling process, and may put the process to sleep if necessary.
  - Bottom half: asynchronous routines that run in system context and usually have no relation to the currently running process. They are not allowed to access the current user address space or the u area. They are not allowed to sleep, since that may block an unrelated process.
- The two halves need to synchronize their activities. If an object is accessed by both halves, the top-half routines must block interrupts while manipulating it. Otherwise the device may interrupt while the object is in an inconsistent state, with unpredictable results.
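
The synchronization rule between the two halves can be illustrated with a small user-space simulation. This is only a sketch under stated assumptions: sim_spltty(), sim_splx(), sim_interrupt(), top_half_enqueue(), and the ipl values are hypothetical stand-ins invented for illustration, not the kernel's real routines.

```c
#define IPL_NONE 0
#define IPL_TTY  5               /* assumed value, for illustration only */

static int cur_ipl = IPL_NONE;   /* simulated processor priority level */
static int pending;              /* interrupts held off while blocked */
static int queue_len;            /* object shared by both halves */

/* Bottom half: consumes one queued item when the interrupt is delivered. */
static void sim_handler(void)
{
    if (queue_len > 0)
        queue_len--;
}

/* Deliver a terminal interrupt now, or hold it if the current ipl blocks it. */
void sim_interrupt(void)
{
    if (cur_ipl >= IPL_TTY)
        pending++;
    else
        sim_handler();
}

/* Raise the ipl to the terminal level and return the previous level. */
int sim_spltty(void)
{
    int old = cur_ipl;
    if (cur_ipl < IPL_TTY)
        cur_ipl = IPL_TTY;
    return old;
}

/* Restore a saved ipl and deliver any interrupts that were held off. */
void sim_splx(int old)
{
    cur_ipl = old;
    while (cur_ipl < IPL_TTY && pending > 0) {
        pending--;
        sim_handler();
    }
}

/* Top half: add n items. An interrupt arriving mid-update is held back. */
int top_half_enqueue(int n)
{
    int s = sim_spltty();
    queue_len += n;
    sim_interrupt();             /* simulated interrupt during the update */
    int seen = queue_len;        /* handler has not run yet */
    sim_splx(s);                 /* held interrupt is delivered here */
    return seen;
}

int sim_queue_len(void) { return queue_len; }
```

The handler runs only after sim_splx() restores the saved level, so the top half never observes the shared counter in a half-updated state.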

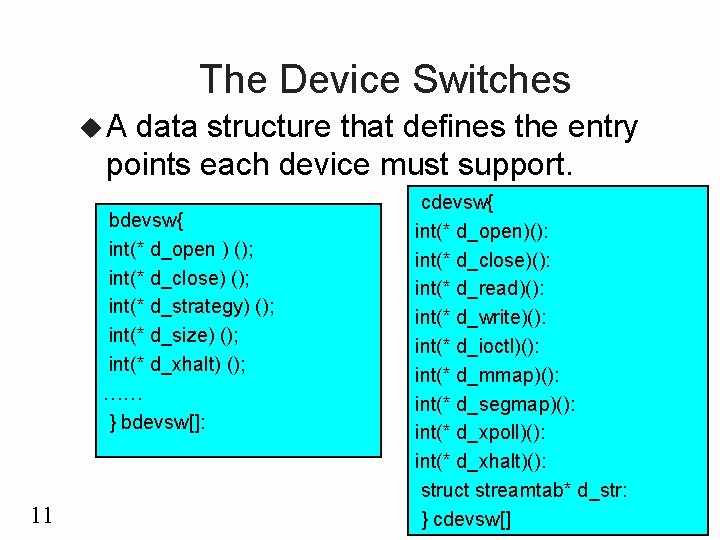

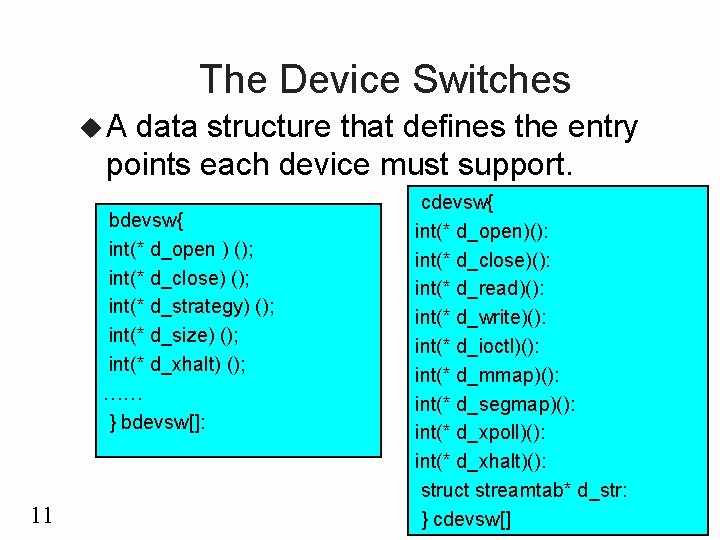

The Device Switches

A data structure that defines the entry points each device must support.

    struct bdevsw {
        int (*d_open)();
        int (*d_close)();
        int (*d_strategy)();
        int (*d_size)();
        int (*d_xhalt)();
        ……
    } bdevsw[];

    struct cdevsw {
        int (*d_open)();
        int (*d_close)();
        int (*d_read)();
        int (*d_write)();
        int (*d_ioctl)();
        int (*d_mmap)();
        int (*d_segmap)();
        int (*d_xpoll)();
        int (*d_xhalt)();
        struct streamtab *d_str;
    } cdevsw[];

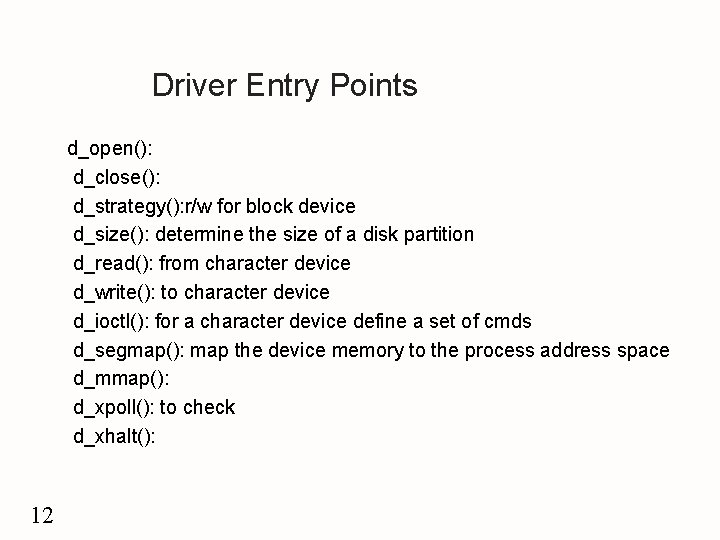

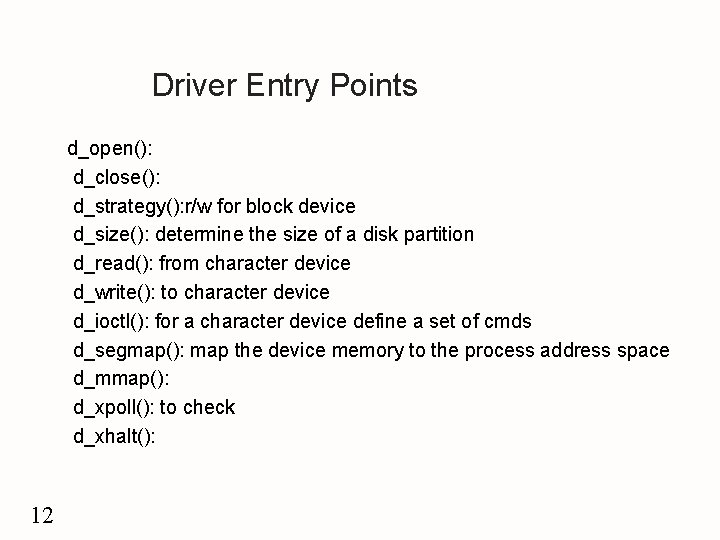

Driver Entry Points

- d_open():
- d_close():
- d_strategy(): read/write for a block device
- d_size(): determines the size of a disk partition
- d_read(): reads from a character device
- d_write(): writes to a character device
- d_ioctl(): defines a set of commands for a character device
- d_segmap(): maps the device memory into the process address space
- d_mmap():
- d_xpoll(): checks for events
- d_xhalt():
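
To make the switch idea concrete, here is a minimal user-space sketch of a character device switch with two pseudodevice drivers. It is not SVR4 code: struct cdevsw_sketch, the simplified entry-point signatures, the major numbers 0 and 1, and cdev_read() are all assumptions chosen for illustration.

```c
#include <stddef.h>
#include <string.h>

/* Simplified switch entry; the real cdevsw has more entry points. */
struct cdevsw_sketch {
    int  (*d_open)(int minor);
    int  (*d_close)(int minor);
    long (*d_read)(int minor, char *buf, size_t n);
    long (*d_write)(int minor, const char *buf, size_t n);
};

/* null device: reads return end-of-file, writes discard the data. */
static int  null_open(int minor)  { (void)minor; return 0; }
static int  null_close(int minor) { (void)minor; return 0; }
static long null_read(int minor, char *buf, size_t n)
{
    (void)minor; (void)buf; (void)n;
    return 0;
}
static long null_write(int minor, const char *buf, size_t n)
{
    (void)minor; (void)buf;
    return (long)n;
}

/* zero device: reads fill the caller's buffer with zero bytes. */
static long zero_read(int minor, char *buf, size_t n)
{
    (void)minor;
    memset(buf, 0, n);
    return (long)n;
}

/* The table is indexed by major number (0 and 1 are assumed here). */
static struct cdevsw_sketch cdevsw_sketch[] = {
    { null_open, null_close, null_read, null_write },   /* major 0 */
    { null_open, null_close, zero_read, null_write },   /* major 1 */
};
#define NCDEV (sizeof cdevsw_sketch / sizeof cdevsw_sketch[0])

/* Device-independent code reaches a driver only through the switch. */
long cdev_read(unsigned major, int minor, char *buf, size_t n)
{
    if (major >= NCDEV)
        return -1;
    return cdevsw_sketch[major].d_read(minor, buf, n);
}
```

The caller never names a driver function directly, which is what lets a driver be added by filling in one table entry.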

16.4 The I/O Subsystem

- A portion of the kernel that controls the device-independent part of I/O
- Major and minor numbers
  - Major number: device type
  - Minor number: device instance
  - (*bdevsw[getmajor(dev)].d_open)(dev, …)
- dev_t
  - Earlier releases: 16 bits, 8 each for major and minor
  - SVR4: 32 bits, 14 for major, 18 for minor
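
The 14/18-bit split of a 32-bit device number can be sketched with a few bit operations. The names sk_makedev(), sk_getmajor(), and sk_getminor() are hypothetical, and placing the major number in the high-order bits is an assumption made for this illustration.

```c
#include <stdint.h>

#define SK_MINOR_BITS 18u
#define SK_MAJOR_BITS 14u
#define SK_MINOR_MASK ((1u << SK_MINOR_BITS) - 1u)
#define SK_MAJOR_MASK ((1u << SK_MAJOR_BITS) - 1u)

typedef uint32_t sk_dev_t;       /* stand-in for a 32-bit dev_t */

/* Pack a <major, minor> pair; major is assumed to occupy the high bits. */
static inline sk_dev_t sk_makedev(uint32_t major, uint32_t minor)
{
    return ((major & SK_MAJOR_MASK) << SK_MINOR_BITS) |
           (minor & SK_MINOR_MASK);
}

/* Extract the device type (index into the device switch). */
static inline uint32_t sk_getmajor(sk_dev_t dev)
{
    return (dev >> SK_MINOR_BITS) & SK_MAJOR_MASK;
}

/* Extract the device instance, interpreted by the driver itself. */
static inline uint32_t sk_getminor(sk_dev_t dev)
{
    return dev & SK_MINOR_MASK;
}
```

With this layout the kernel uses only the major number to pick a switch entry and passes the whole device number through to the driver.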

Device Files

- A special file located in the file system and associated with a specific device
- Users can use the device file like an ordinary file
- inode
  - di_mode: IFBLK, IFCHR
  - di_rdev: <major, minor>
- mknod(path, mode, dev)
  - Creates a device file
- Access control and protection
  - r/w/e permissions for owner, group, and others

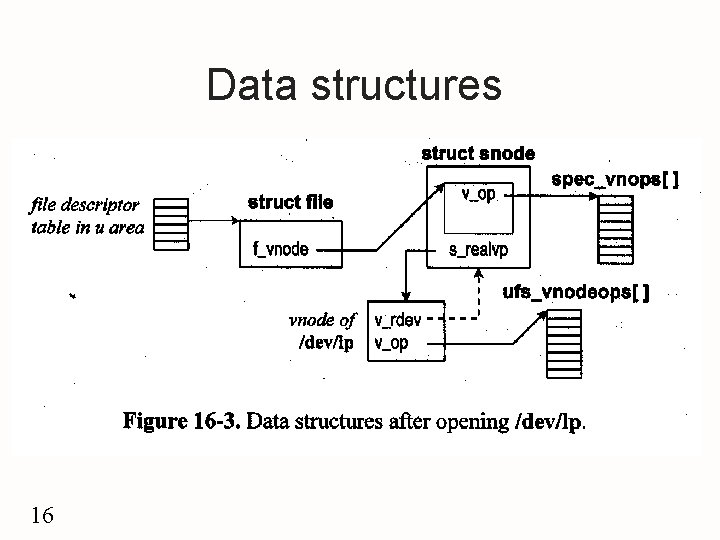

The specfs File System

- A special file system type
- specfs vnode
  - All operations on the file are routed to it
- snode
- E.g., /dev/lp
  - ufs_lookup() -> vnode of dev -> vnode of lp -> file type = IFCHR -> <major, minor> -> specvp() -> search the snode hash table by <major, minor>
  - If none is found, create an snode and a specfs vnode, and store the pointer to the vnode of /dev/lp in s_realvp
  - Return the pointer to the specfs vnode to ufs_lookup(), and then to open()

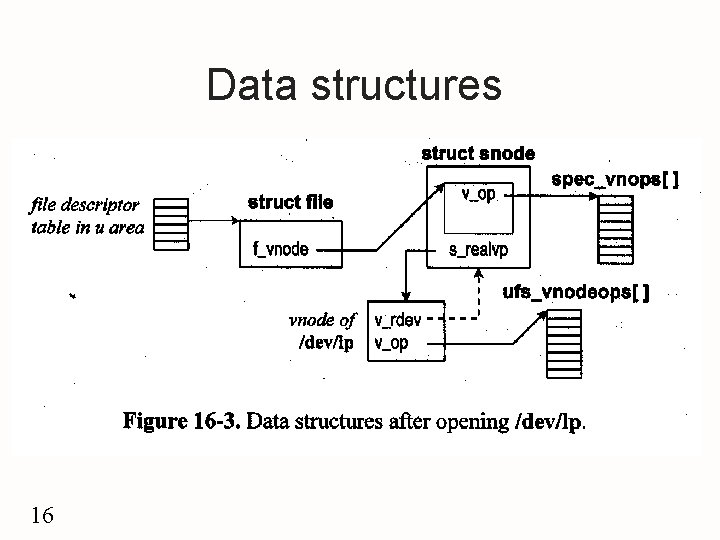

Data structures

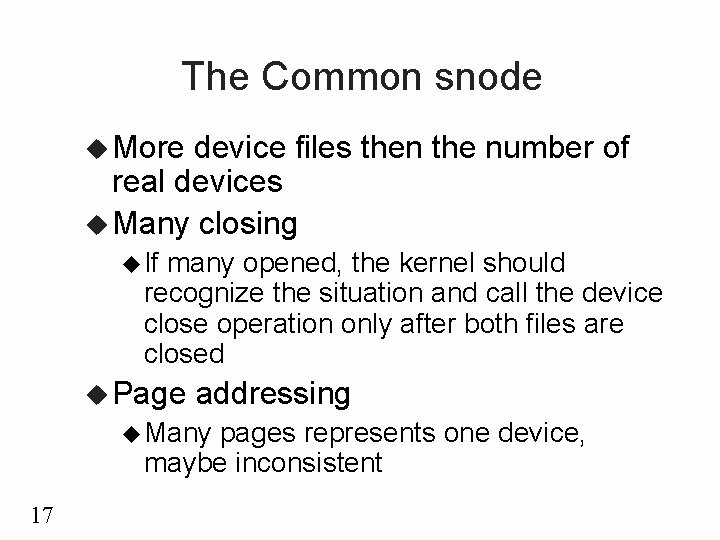

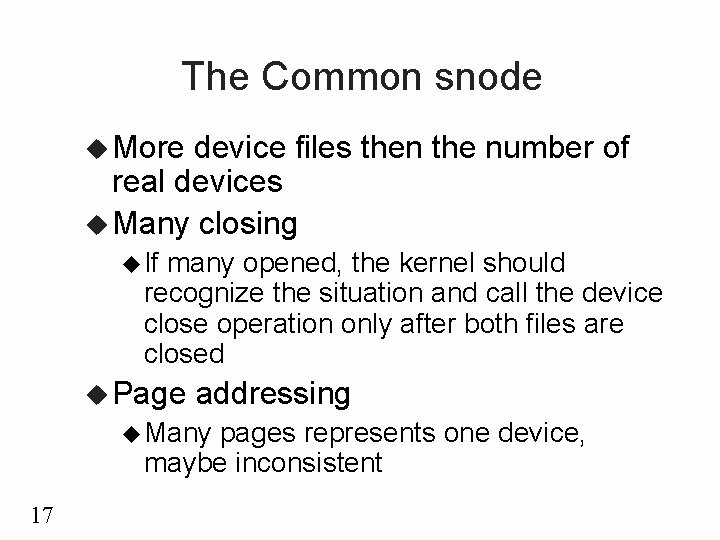

The Common snode

- There can be more device files than real devices
- Multiple closes
  - If a device is opened through several device files, the kernel should recognize the situation and call the device close operation only after all of those files are closed
- Page addressing
  - Many pages may represent one device and may become inconsistent
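
The close rule can be sketched as a reference count kept in one shared object. This is a simplified user-space model: the structure and field names below (common_snode_sketch, snode_sketch, open_count, device_closes) are hypothetical, and the counter increment merely stands in for the driver's close operation.

```c
/* Shared by every device file that names the same <major, minor>. */
struct common_snode_sketch {
    int open_count;      /* opens outstanding across all device files */
    int device_closes;   /* how often the device close would have run */
};

/* One per device file; points at the common object for its device. */
struct snode_sketch {
    struct common_snode_sketch *common;
    int is_open;
};

/* Record an open through this device file. */
void sk_snode_open(struct snode_sketch *sp)
{
    if (!sp->is_open) {
        sp->is_open = 1;
        sp->common->open_count++;
    }
}

/* Close this device file; only the last close reaches the device. */
void sk_snode_close(struct snode_sketch *sp)
{
    if (!sp->is_open)
        return;
    sp->is_open = 0;
    if (--sp->common->open_count == 0)
        sp->common->device_closes++;   /* stands in for d_close() */
}
```

Two device files for the same device share one common object, so closing the first leaves the device open and closing the second triggers the single device close.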

Device cloning

- Used when a user does not care which instance of a device is used, e.g., for network access
- Multiple active connections can be created, each with a different minor device number
- Cloning is supported by dedicated clone drivers: major device number = number of the clone device, minor device number = major device number of the real device
- E.g., clone driver number = 63 (major number), TCP driver major number = 31; /dev/tcp has major number 63 and minor number 31; tcpopen() generates an unused minor device number
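
The /dev/tcp example can be traced with a short sketch. Everything here is a simplified assumption for illustration: the cl_* helpers, the 18-bit minor field, the four-entry minor table, and tcp_open_sketch() standing in for tcpopen().

```c
#include <stdint.h>

#define CL_MINOR_BITS 18u
#define CL_MINOR_MASK ((1u << CL_MINOR_BITS) - 1u)
#define CL_NODEV      0xFFFFFFFFu   /* hypothetical failure value */

#define CLONE_MAJOR 63u             /* from the slide's example */
#define TCP_MAJOR   31u             /* from the slide's example */
#define TCP_NMINOR  4u              /* assumed table size */

static uint32_t cl_makedev(uint32_t major, uint32_t minor)
{
    return (major << CL_MINOR_BITS) | (minor & CL_MINOR_MASK);
}
static uint32_t cl_getmajor(uint32_t dev) { return dev >> CL_MINOR_BITS; }
static uint32_t cl_getminor(uint32_t dev) { return dev & CL_MINOR_MASK; }

static int tcp_minor_used[TCP_NMINOR];

/* Stand-in for tcpopen(): claim an unused minor number. */
static uint32_t tcp_open_sketch(void)
{
    for (uint32_t i = 0; i < TCP_NMINOR; i++) {
        if (!tcp_minor_used[i]) {
            tcp_minor_used[i] = 1;
            return cl_makedev(TCP_MAJOR, i);
        }
    }
    return CL_NODEV;
}

/* Clone open: the minor number of the clone device names the real driver. */
uint32_t clone_open_sketch(uint32_t dev)
{
    if (cl_getmajor(dev) != CLONE_MAJOR)
        return CL_NODEV;
    uint32_t real_major = cl_getminor(dev);
    if (real_major != TCP_MAJOR)     /* only one real driver in this sketch */
        return CL_NODEV;
    return tcp_open_sketch();        /* new <31, unused minor> device */
}
```

Each open of the <63, 31> device returns a distinct device number under major 31, which is how every connection ends up with its own minor number.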

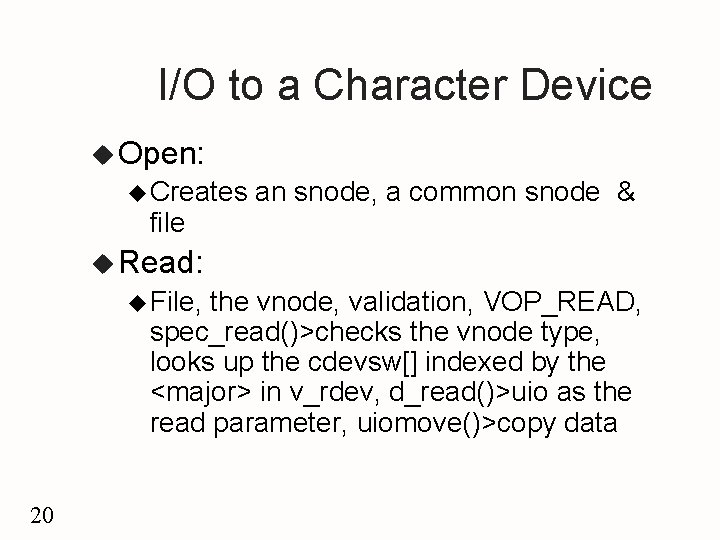

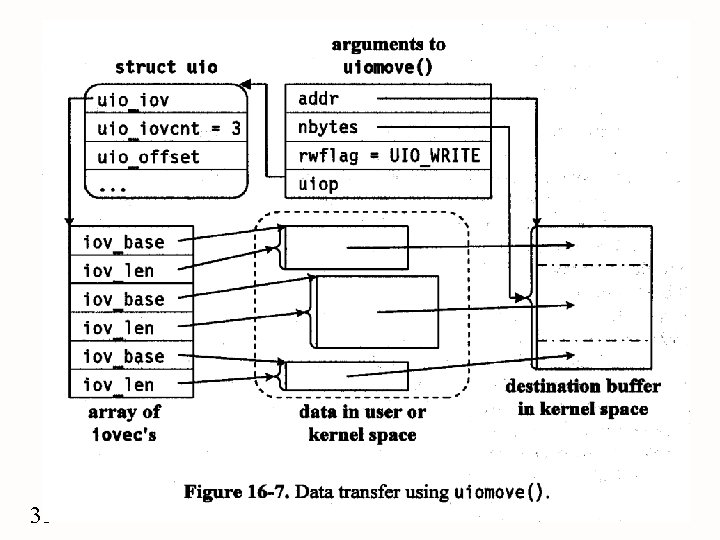

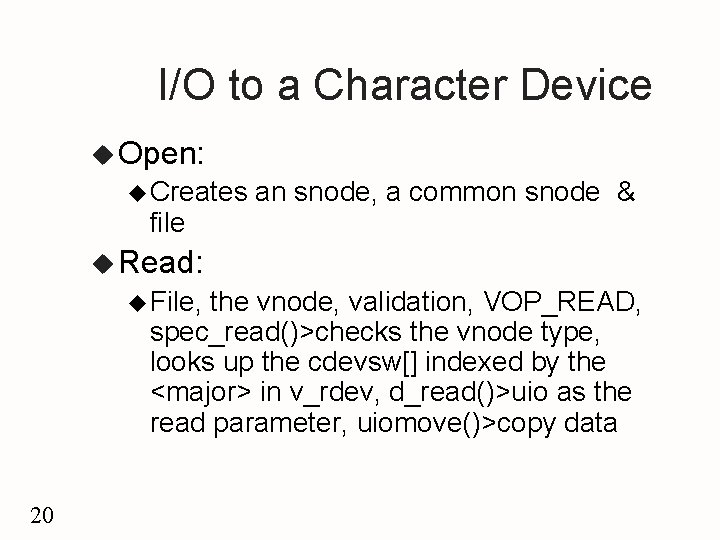

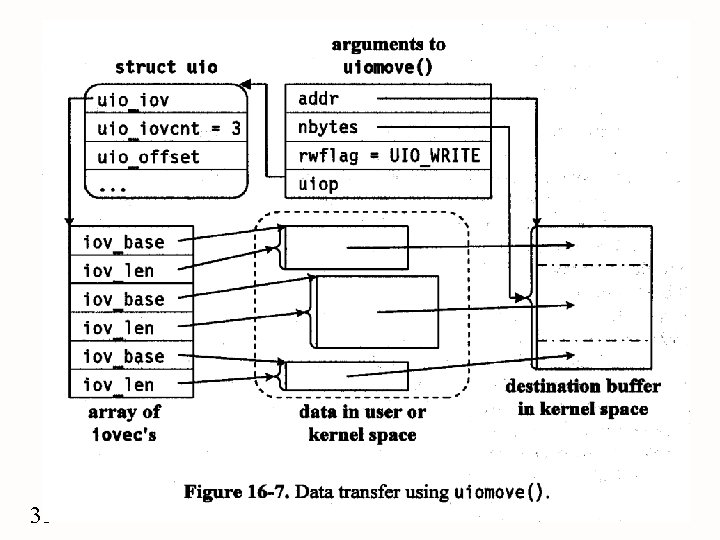

I/O to a Character Device

- Open:
  - Creates an snode, a common snode, and a file
- Read:
  - File -> vnode -> validation -> VOP_READ -> spec_read(): checks the vnode type and looks up cdevsw[] indexed by the <major> in v_rdev -> d_read(): takes a uio as the read parameter -> uiomove(): copies the data

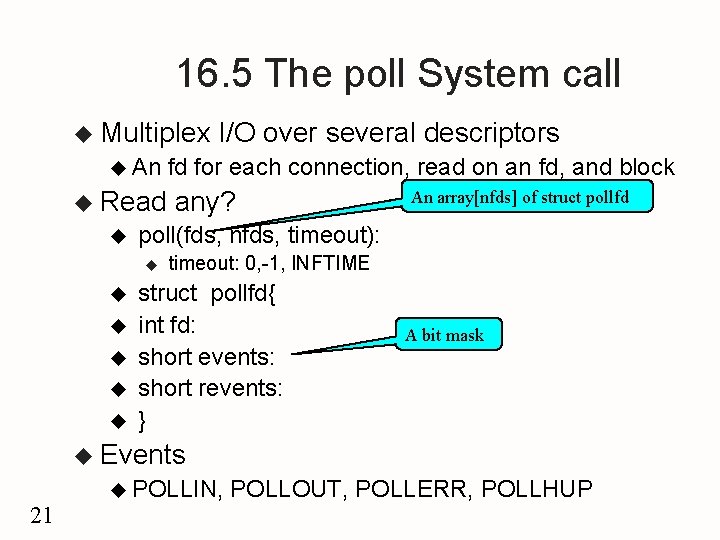

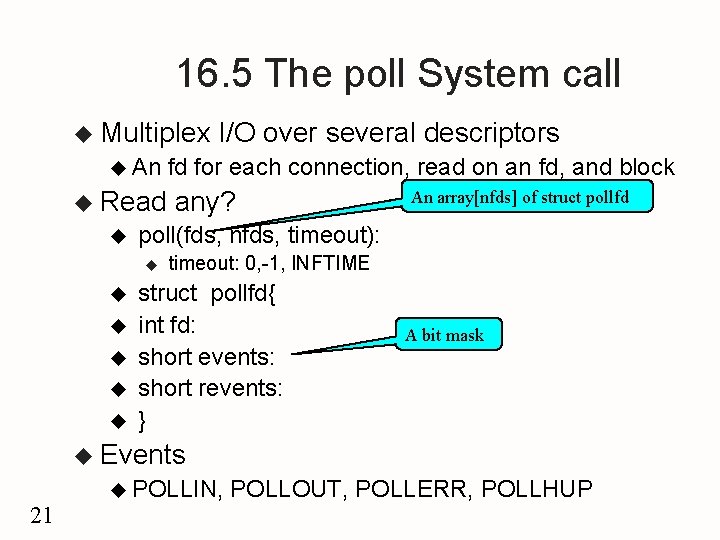

16.5 The poll System Call

- Multiplex I/O over several descriptors
  - An fd for each connection
  - Read on one fd, and block on any?
- poll(fds, nfds, timeout):
  - fds: an array[nfds] of struct pollfd
  - timeout: 0, -1, INFTIM
- struct pollfd { int fd; short events; short revents; }
  - events and revents are bit masks
- Events
  - POLLIN, POLLOUT, POLLERR, POLLHUP
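
As a usage illustration, the following sketch calls the standard POSIX poll() to wait on several descriptors at once. The helper first_readable() and the MAX_FDS limit are hypothetical names introduced here; only poll(), struct pollfd, and the POLL* flags come from the system interface.

```c
#include <poll.h>

#define MAX_FDS 16   /* arbitrary limit for this sketch */

/*
 * Wait up to timeout_ms for any descriptor in fds[] to become readable.
 * Returns the index of the first ready descriptor, or -1 on timeout,
 * error, or a bad argument.
 */
int first_readable(const int *fds, int nfds, int timeout_ms)
{
    struct pollfd pfds[MAX_FDS];

    if (nfds < 1 || nfds > MAX_FDS)
        return -1;

    for (int i = 0; i < nfds; i++) {
        pfds[i].fd = fds[i];
        pfds[i].events = POLLIN;    /* events we are interested in */
        pfds[i].revents = 0;        /* filled in by poll() */
    }

    int n = poll(pfds, (nfds_t)nfds, timeout_ms);
    if (n <= 0)
        return -1;                  /* 0 = timeout, < 0 = error */

    for (int i = 0; i < nfds; i++) {
        if (pfds[i].revents & (POLLIN | POLLHUP | POLLERR))
            return i;
    }
    return -1;
}
```

A timeout of 0 makes the call return immediately, and -1 blocks until some descriptor is ready, so one process can service many connections without blocking on any single read.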

poll Implementation

- Structures
  - pollhead: associated with a device file; maintains a queue of polldat
  - polldat:
    - a blocked process (proc)
    - the events
    - link

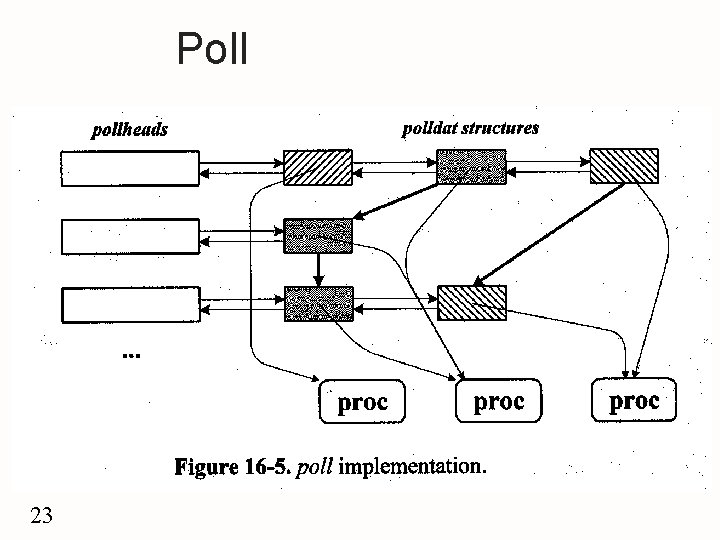

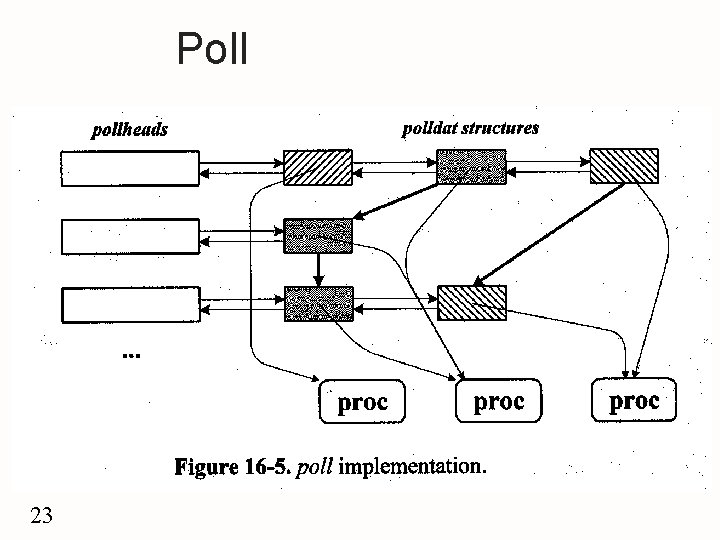

Poll

![VOP_POLL](https://slidetodoc.com/presentation_image_h/c4a346fca3ac59fd005a80bac506c0c2/image-24.jpg)

VOP_POLL

- error = VOP_POLL(vp, events, anyyet, &revents, &php)
- spec_poll() indexes cdevsw[] -> d_xpoll(): checks the events, updates revents, and returns; if anyyet = 0, it also returns a pointer to the pollhead
- Control returns to poll(), which checks revents and anyyet
  - Both = 0: get the pollhead php, allocate a polldat, add it to the queue, set the pointer to the proc and the event mask, link it to the others, and block
  - revents != 0: remove all the polldat from the queue, free them, and add the number of ready descriptors to anyyet
- While the process is blocked, the driver maintains the events; when one occurs, it calls pollwakeup() with the event and the php
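
The pollhead and polldat bookkeeping can be modeled in user space as a linked list that the driver side walks when an event occurs. This is a rough sketch, not the SVR4 implementation: the _sk structures, their fields, poll_enqueue(), and pollwakeup_sk() are hypothetical, and an awake flag replaces actually waking a process.

```c
#include <poll.h>
#include <stdlib.h>

struct polldat_sk {
    struct polldat_sk *next;   /* link to the next waiter */
    int   proc_id;             /* stands in for the blocked proc */
    short events;              /* events this waiter asked for */
    int   awake;               /* set when the waiter is woken */
};

struct pollhead_sk {
    struct polldat_sk *head;   /* queue of waiters for one device */
};

/* poll() found nothing ready: queue a polldat before blocking. */
struct polldat_sk *poll_enqueue(struct pollhead_sk *php, int proc_id,
                                short events)
{
    struct polldat_sk *pd = malloc(sizeof *pd);
    if (pd == NULL)
        return NULL;
    pd->proc_id = proc_id;
    pd->events = events;
    pd->awake = 0;
    pd->next = php->head;
    php->head = pd;
    return pd;
}

/*
 * Driver side: an event occurred. Unlink and mark every waiter that
 * asked for it, and return how many were woken. The woken entries
 * remain owned by the caller, which frees them.
 */
int pollwakeup_sk(struct pollhead_sk *php, short event)
{
    int woken = 0;
    struct polldat_sk **pp = &php->head;

    while (*pp != NULL) {
        struct polldat_sk *pd = *pp;
        if (pd->events & event) {
            *pp = pd->next;
            pd->next = NULL;
            pd->awake = 1;
            woken++;
        } else {
            pp = &pd->next;
        }
    }
    return woken;
}
```

Waiters that did not ask for the event stay queued, which matches the idea that the driver keeps track of events per pollhead until they occur.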

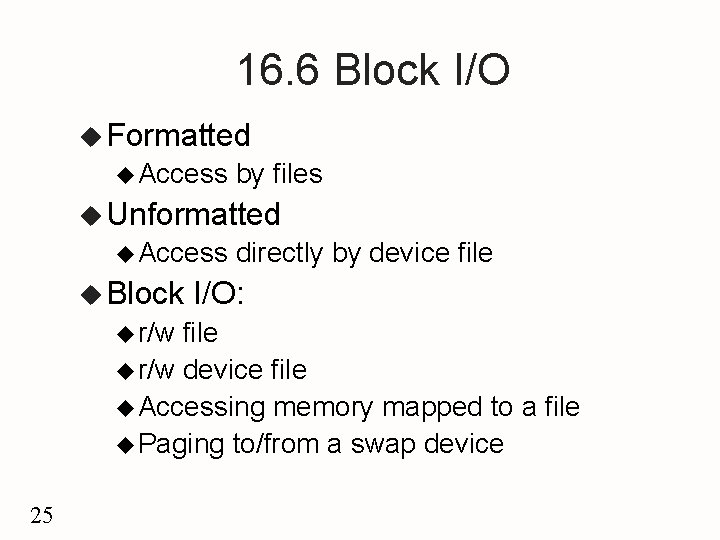

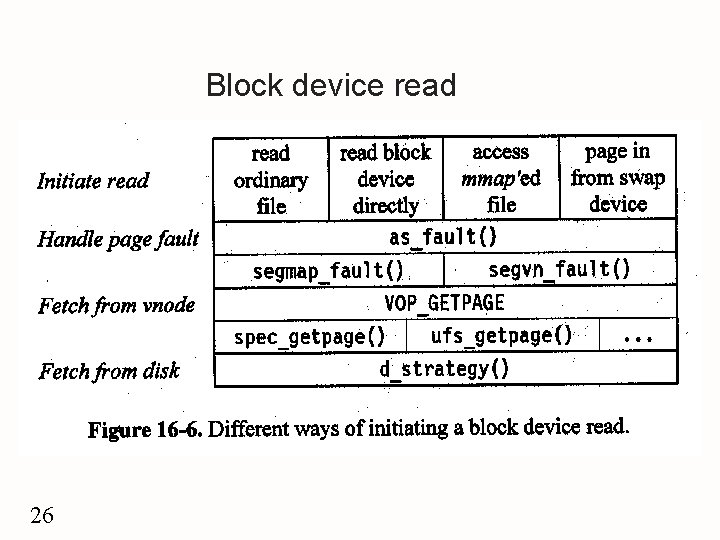

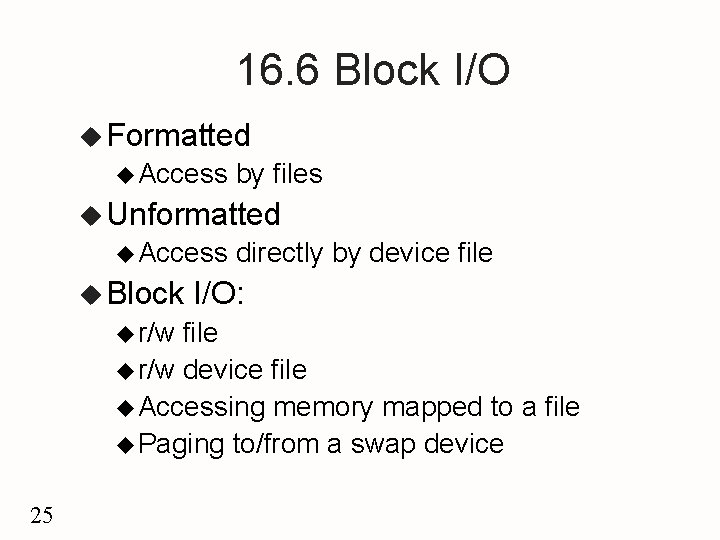

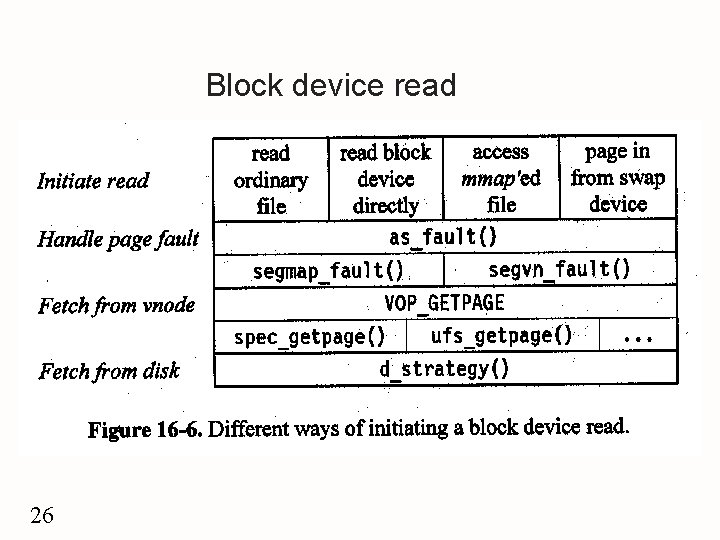

16.6 Block I/O

- Formatted
  - Access by files
- Unformatted
  - Access directly by device file
- Block I/O:
  - r/w file
  - r/w device file
  - Accessing memory mapped to a file
  - Paging to/from a swap device

Block device read

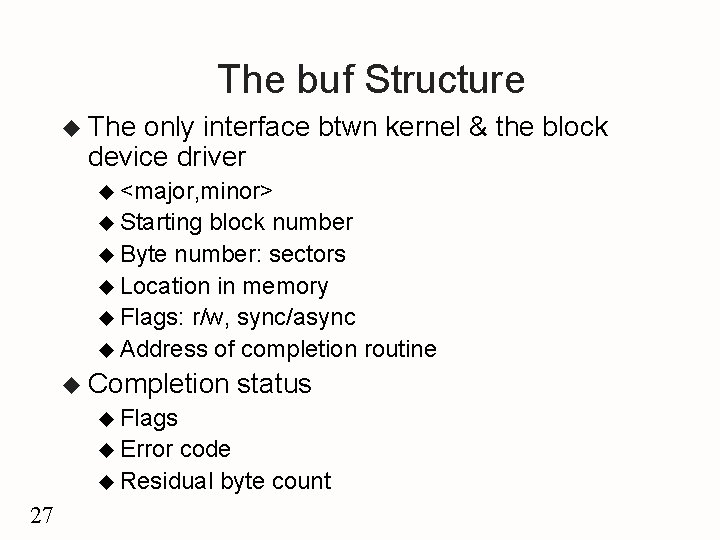

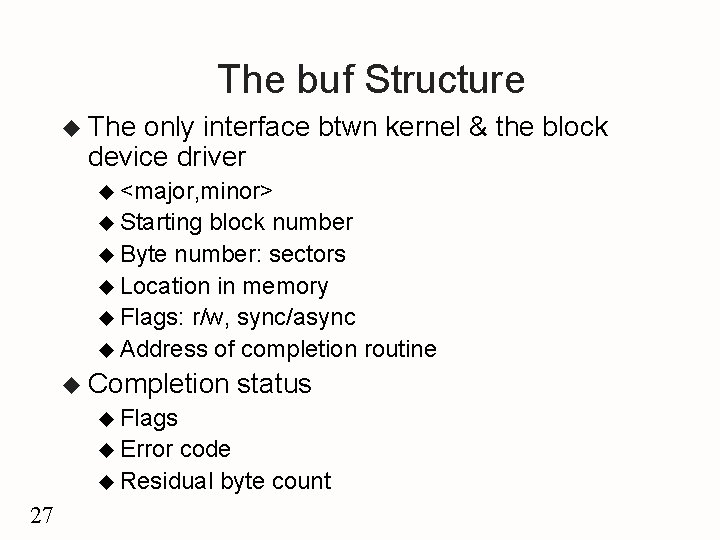

The buf Structure

- The only interface between the kernel and the block device driver
- <major, minor>
- Starting block number
- Byte count: a number of sectors
- Location in memory
- Flags: r/w, sync/async
- Address of completion routine
- Completion status
  - Flags
  - Error code
  - Residual byte count
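
The fields listed above can be sketched as a C structure handed to a strategy routine. This is a user-space model backed by an in-memory array: struct buf_sk, its field names, the SK_* constants, and ramdisk_strategy() are hypothetical and do not reproduce the real struct buf layout.

```c
#include <stddef.h>
#include <string.h>

#define SK_SECTOR  512u
#define SK_NBLOCKS 8u
#define SK_READ    0x1   /* transfer direction: device to memory */
#define SK_ERROR   0x2   /* completion status: request failed */
#define SK_DONE    0x4   /* completion status: request finished */

struct buf_sk {
    unsigned major, minor;            /* target device */
    unsigned blkno;                   /* starting block number */
    size_t   bcount;                  /* bytes to transfer */
    char    *addr;                    /* location in memory */
    int      flags;                   /* SK_READ plus status bits */
    void   (*iodone)(struct buf_sk *);/* completion routine, may be NULL */
    int      error;                   /* error code, 0 on success */
    size_t   resid;                   /* bytes not transferred */
};

static char ramdisk[SK_NBLOCKS * SK_SECTOR];

/* Strategy routine for the model device: perform the whole request. */
void ramdisk_strategy(struct buf_sk *bp)
{
    size_t off = (size_t)bp->blkno * SK_SECTOR;

    bp->resid = bp->bcount;
    bp->error = 0;

    if (bp->bcount % SK_SECTOR != 0 || off > sizeof ramdisk ||
        bp->bcount > sizeof ramdisk - off) {
        bp->flags |= SK_ERROR;        /* misaligned or out of range */
        bp->error = 1;
    } else {
        if (bp->flags & SK_READ)
            memcpy(bp->addr, ramdisk + off, bp->bcount);
        else
            memcpy(ramdisk + off, bp->addr, bp->bcount);
        bp->resid = 0;
    }

    bp->flags |= SK_DONE;
    if (bp->iodone != NULL)
        bp->iodone(bp);               /* notify the requester */
}
```

Everything the driver needs arrives in the one structure, and everything the kernel learns about the outcome comes back in its status fields.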

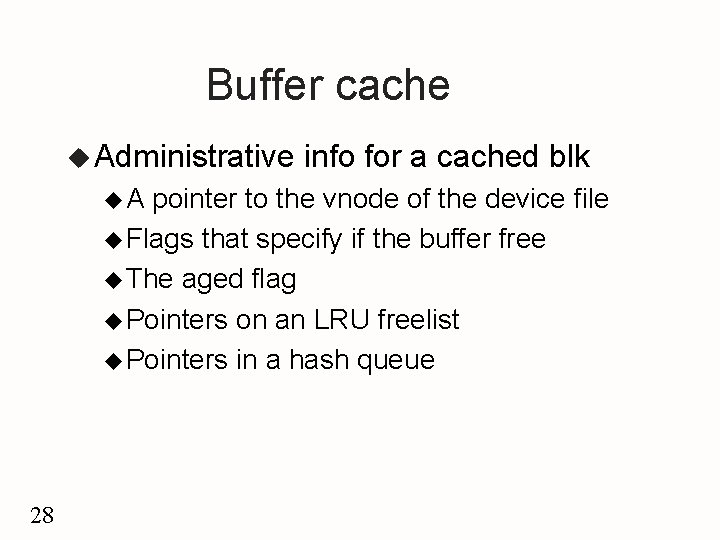

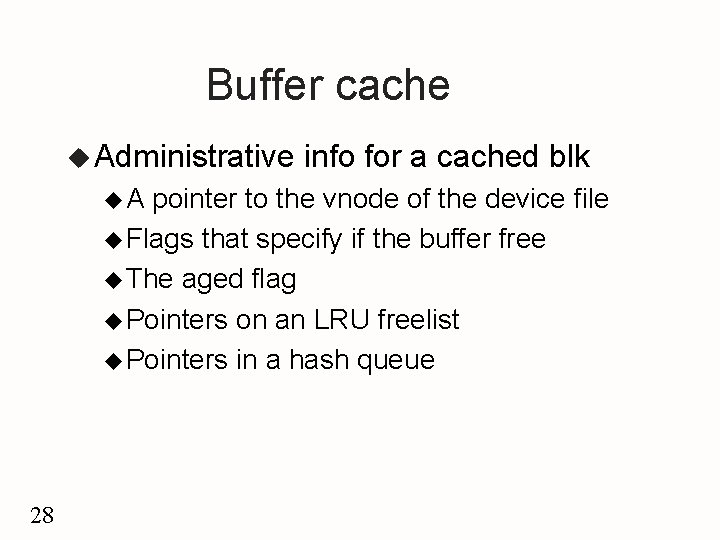

Buffer cache

- Administrative info for a cached block
  - A pointer to the vnode of the device file
  - Flags that specify whether the buffer is free
  - The aged flag
  - Pointers on an LRU freelist
  - Pointers in a hash queue

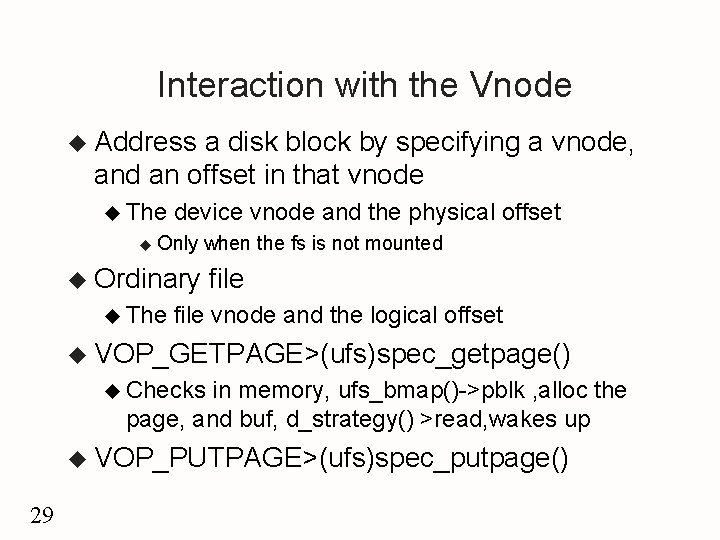

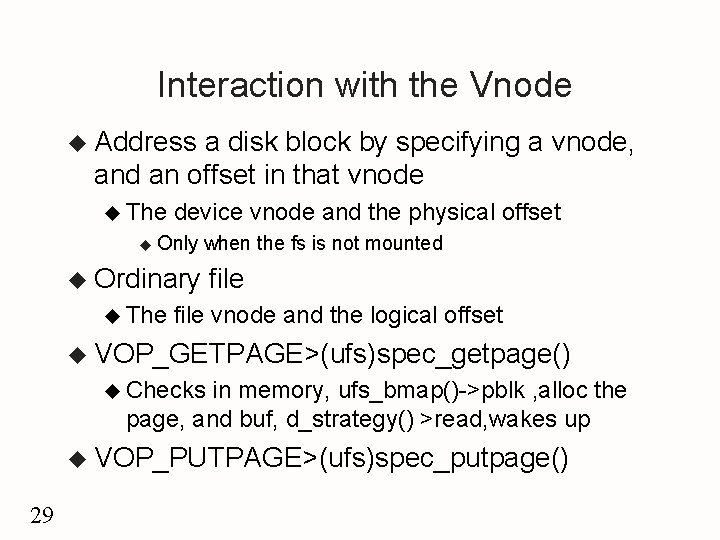

Interaction with the Vnode

- Address a disk block by specifying a vnode and an offset in that vnode
  - The device vnode and the physical offset
    - Only when the file system is not mounted
  - Ordinary file: the file vnode and the logical offset
- VOP_GETPAGE -> ufs_getpage() or spec_getpage()
  - Checks whether the page is in memory; ufs_bmap() -> physical block; allocates the page and a buf; d_strategy() -> read; wakes up the process
- VOP_PUTPAGE -> ufs_putpage() or spec_putpage()

Device Access Methods

- Pageout operations
  - Vnode, VOP_PUTPAGE
  - spec_putpage(), d_strategy()
  - ufs_putpage(), ufs_bmap()
- Mapped I/O to a file
  - exec: page fault, segvn_fault(), VOP_GETPAGE
- Ordinary file I/O
  - ufs_read: segmap_getmap(), uiomove(), segmap_release()
- Direct I/O to a block device
  - spec_read: segmap_getmap(), uiomove(), segmap_release()

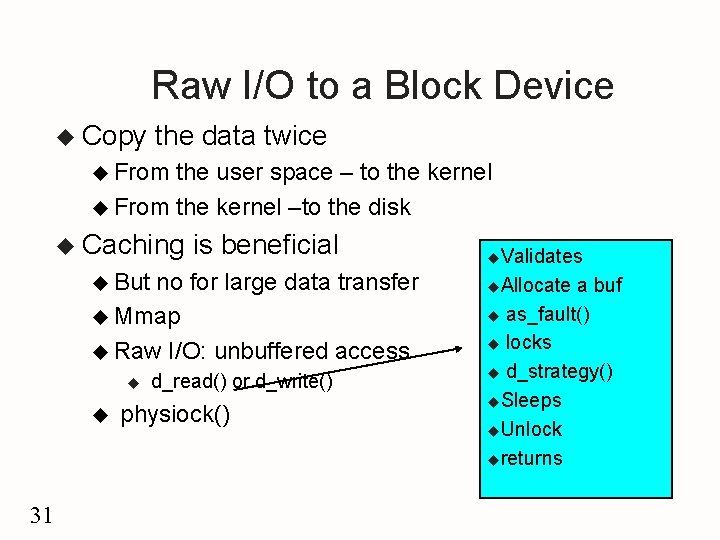

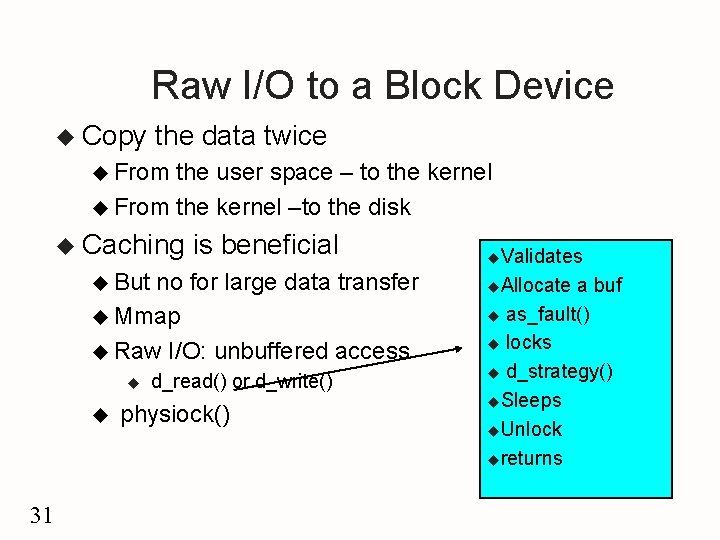

Raw I/O to a Block Device

- Ordinary I/O copies the data twice
  - From user space to the kernel
  - From the kernel to the disk
- Caching is beneficial
  - But not for large data transfers
  - mmap
- Raw I/O: unbuffered access
  - d_read() or d_write() calls physiock(), which:
    - Validates the request
    - Allocates a buf
    - Calls as_fault()
    - Locks the user pages
    - Calls d_strategy()
    - Sleeps until the I/O completes
    - Unlocks the pages
    - Returns

16.7 The DDI/DKI Specification

- DDI/DKI: Device-Driver Interface & Driver-Kernel Interface
- 5 sections:
  - S1: data definitions
  - S2: driver entry point routines
  - S3: kernel routines
  - S4: kernel data structures
  - S5: kernel #define statements
- 3 parts:
  - Driver-kernel: the driver entry points and the kernel support routines
  - Driver-hardware: machine-dependent
  - Driver-boot: incorporating a driver into the kernel

General Recommendations

- Drivers should not directly access system data structures
- Only access the fields described in S4
- Do not define arrays of the structures defined in S4
- Only set or clear flags with masks, and never assign directly to the field
- Some structures are opaque and can be accessed only by the routines provided
- Use the functions in S3 to read or modify the structures in S4
- Include ddi.h
- Declare any private routines or global variables as static

Section 3 Functions

- Synchronization and timing
- Memory management
- Buffer management
- Device number operations
- Direct memory access
- Data transfers
- Device polling
- STREAMS
- Utility routines

Other sections

- S1: specifies prefix and prefixdevflag (e.g., disk -> dk)
  - D_DMA, D_TAPE, D_NOBRKUP
- S2: specifies the driver entry points
- S4: describes data structures shared by the kernel and the devices
- S5: the relevant kernel #define values

16.8 Newer SVR4 Releases

- MP-safe drivers
  - Protect most global data by using multiprocessor synchronization primitives
  - SVR4/MP adds a set of functions that allow drivers to use its new synchronization facilities
  - Three locks: basic, read/write, and sleep locks
  - Adds functions to allocate and manipulate the different synchronization objects
  - Adds a D_MP flag to the prefixdevflag of the driver

Dynamic Loading & Unloading

- SVR4.2 supports dynamic operation for:
  - Device drivers
  - Host bus adapter and controller drivers
  - STREAMS modules
  - File systems
  - Miscellaneous modules
- Dynamic loading:
  - Relocation and binding of the driver's symbols
  - Driver and device initialization
  - Adding the driver to the device switch tables, so that the kernel can access the switch routines
  - Installing the interrupt handler

SVR4.2 routines

- prefix_load()
- prefix_unload()
- mod_drvattach()
- mod_drvdetach()
- Wrapper macros
  - MOD_DRV_WRAPPER
  - MOD_HDRV_WRAPPER
  - MOD_STR_WRAPPER
  - MOD_FS_WRAPPER
  - MOD_MISC_WRAPPER

Future directions

- Divide the code into a device-dependent and a controller-dependent part
- PDI standard
  - A set of S2 functions that each host bus adapter must implement
  - A set of S3 functions that perform common tasks required by SCSI devices
  - A set of S4 data structures that are used in S3 functions

Linux I/O

- Elevator scheduler
  - Maintains a single queue for disk read and write requests
  - Keeps the list of requests sorted by block number
  - The drive moves in a single direction to satisfy each request
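
The sorted single queue can be sketched as an ordered linked list. This is a deliberately reduced model and not the Linux implementation: struct req_sk, elevator_add(), and elevator_next() are hypothetical names, and request merging is left out.

```c
#include <stddef.h>

struct req_sk {
    unsigned long  block;   /* starting block number of the request */
    struct req_sk *next;
};

/* Insert a request so the queue stays sorted by ascending block number. */
void elevator_add(struct req_sk **queue, struct req_sk *rq)
{
    struct req_sk **pp = queue;

    while (*pp != NULL && (*pp)->block <= rq->block)
        pp = &(*pp)->next;
    rq->next = *pp;
    *pp = rq;
}

/* Remove and return the request with the lowest block number. */
struct req_sk *elevator_next(struct req_sk **queue)
{
    struct req_sk *rq = *queue;

    if (rq != NULL) {
        *queue = rq->next;
        rq->next = NULL;
    }
    return rq;
}
```

Requests submitted for blocks 50, 10, and 30 are served as 10, 30, 50, so the head sweeps in one direction instead of seeking back and forth.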

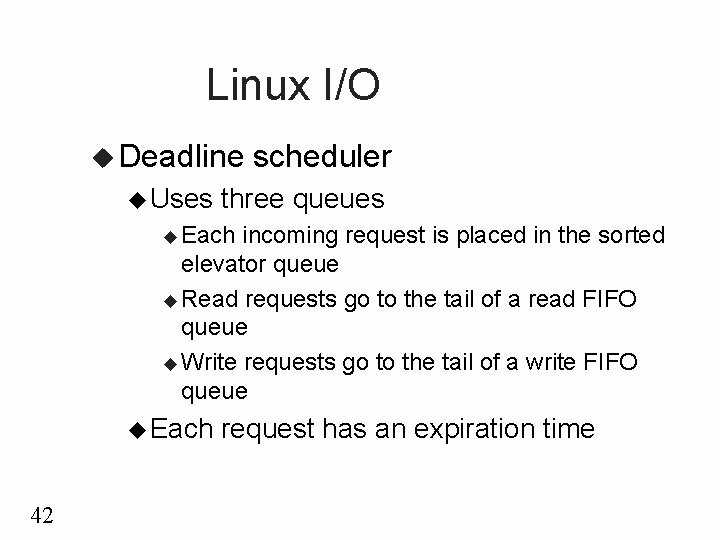

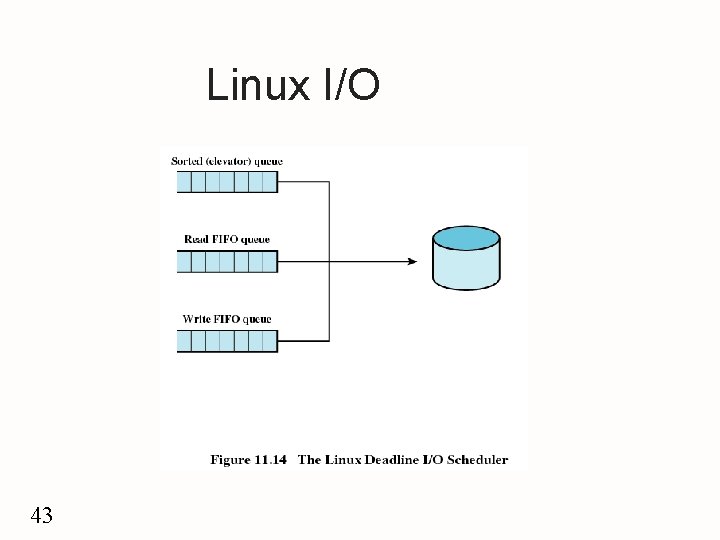

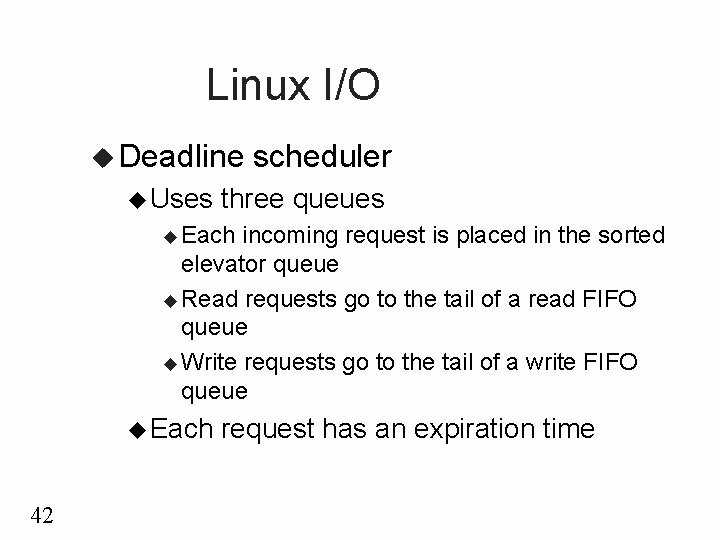

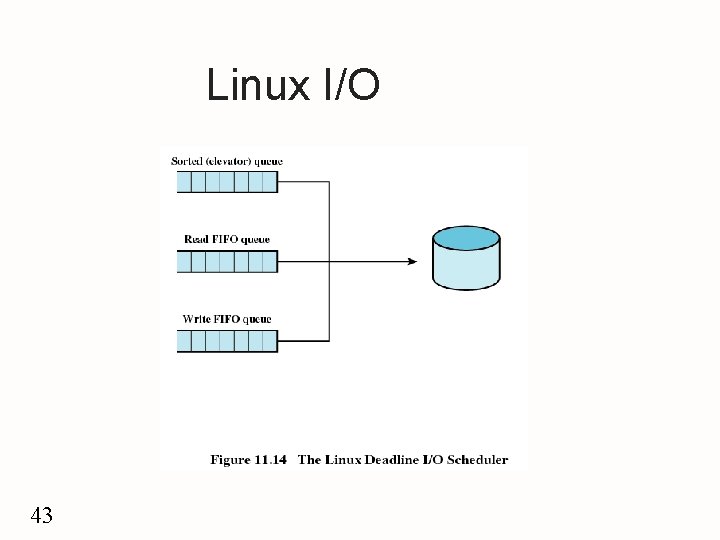

Linux I/O

- Deadline scheduler
  - Uses three queues
  - Each incoming request is placed in the sorted elevator queue
  - Read requests go to the tail of a read FIFO queue
  - Write requests go to the tail of a write FIFO queue
  - Each request has an expiration time

Linux I/O
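
One way to picture the three queues is a request that sits on the sorted list and on one FIFO at the same time. The sketch below is a simplified model under stated assumptions, not kernel code: the dl_* names are hypothetical, the expiration interval is passed in by the caller because the slide gives no values, and the dispatch policy (expired FIFO head first, otherwise the head of the sorted queue) is an assumed reading of the scheme.

```c
#include <stddef.h>

struct dl_req {
    unsigned long  block;
    int            is_write;    /* 0 = read, 1 = write */
    long           deadline;    /* absolute expiration time */
    struct dl_req *sort_next;   /* link in the sorted elevator queue */
    struct dl_req *fifo_next;   /* link in the read or write FIFO */
};

struct dl_sched {
    struct dl_req *sorted;      /* ascending block number */
    struct dl_req *fifo[2];     /* [0] reads, [1] writes; head is oldest */
};

/* Queue a request on the sorted list and at the tail of its FIFO. */
void dl_add(struct dl_sched *s, struct dl_req *rq, long now, long expire)
{
    struct dl_req **pp = &s->sorted;

    rq->deadline = now + expire;
    while (*pp != NULL && (*pp)->block <= rq->block)
        pp = &(*pp)->sort_next;
    rq->sort_next = *pp;
    *pp = rq;

    pp = &s->fifo[rq->is_write ? 1 : 0];
    while (*pp != NULL)
        pp = &(*pp)->fifo_next;
    rq->fifo_next = NULL;
    *pp = rq;
}

/* Unlink rq from both of the queues it is on. */
static void dl_unlink(struct dl_sched *s, struct dl_req *rq)
{
    struct dl_req **pp = &s->sorted;

    while (*pp != NULL && *pp != rq)
        pp = &(*pp)->sort_next;
    if (*pp != NULL)
        *pp = rq->sort_next;

    pp = &s->fifo[rq->is_write ? 1 : 0];
    while (*pp != NULL && *pp != rq)
        pp = &(*pp)->fifo_next;
    if (*pp != NULL)
        *pp = rq->fifo_next;
}

/* Pick an expired FIFO head if there is one, else the sorted head. */
struct dl_req *dl_dispatch(struct dl_sched *s, long now)
{
    struct dl_req *rq = NULL;

    for (int i = 0; i < 2 && rq == NULL; i++) {
        if (s->fifo[i] != NULL && s->fifo[i]->deadline <= now)
            rq = s->fifo[i];
    }
    if (rq == NULL)
        rq = s->sorted;
    if (rq != NULL)
        dl_unlink(s, rq);
    return rq;
}
```

While nothing has expired the scheduler behaves like the elevator, and the FIFOs only take over to keep an old request from waiting indefinitely.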

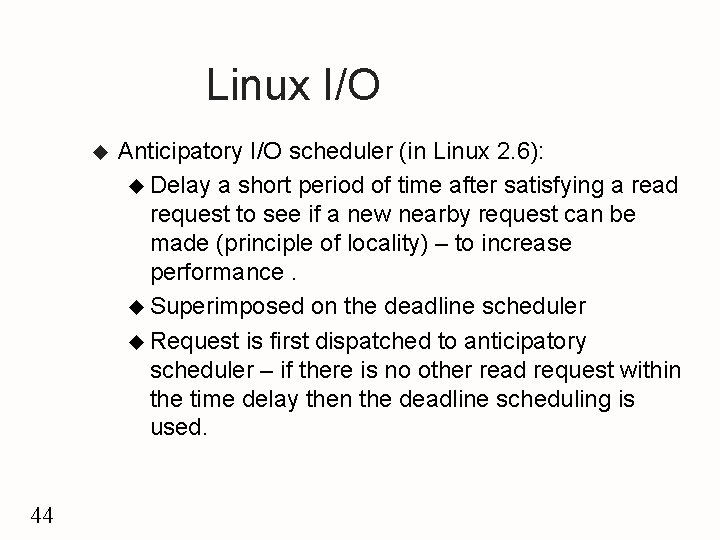

Linux I/O

- Anticipatory I/O scheduler (in Linux 2.6):
  - Delays a short period of time after satisfying a read request to see whether a new nearby request is made (principle of locality), to increase performance
  - Superimposed on the deadline scheduler
  - A request is first dispatched to the anticipatory scheduler; if there is no other read request within the time delay, deadline scheduling is used

Linux page cache (in Linux 2.4 and later)

- A single unified page cache is involved in all traffic between disk and main memory
- Benefits:
  - When it is time to write back dirty pages to disk, a collection of them can be ordered properly and written out efficiently
  - Pages in the page cache are likely to be referenced again before they are flushed from the cache, thus saving a disk I/O operation