University of Paderborn, Software Engineering Group, Prof. Dr. Wilhelm Schäfer

Design of Self-Managing Dependable Systems with UML and Fault Tolerance Patterns

Matthias Tichy, Daniela Schilling, Holger Giese

Application Example: RailCab

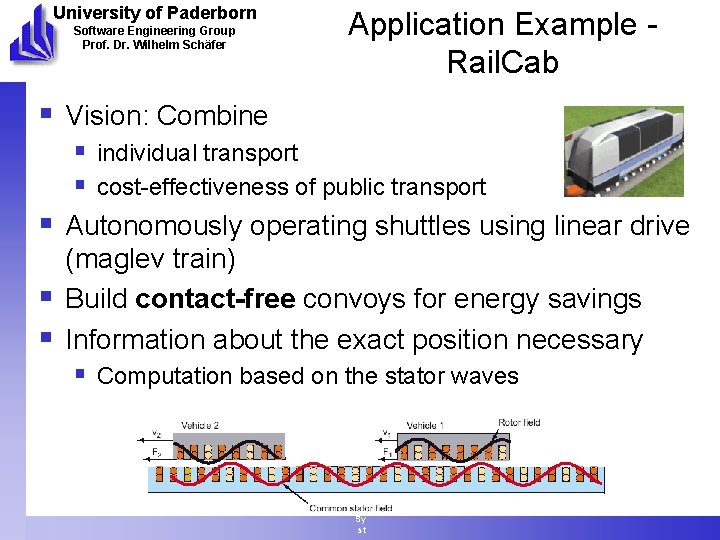

Application Example: RailCab

- Vision: combine
  - individual transport
  - cost-effectiveness of public transport
- Autonomously operating shuttles using linear drive (maglev train)
- Build contact-free convoys for energy savings
- Information about the exact position necessary
- Computation based on the stator waves

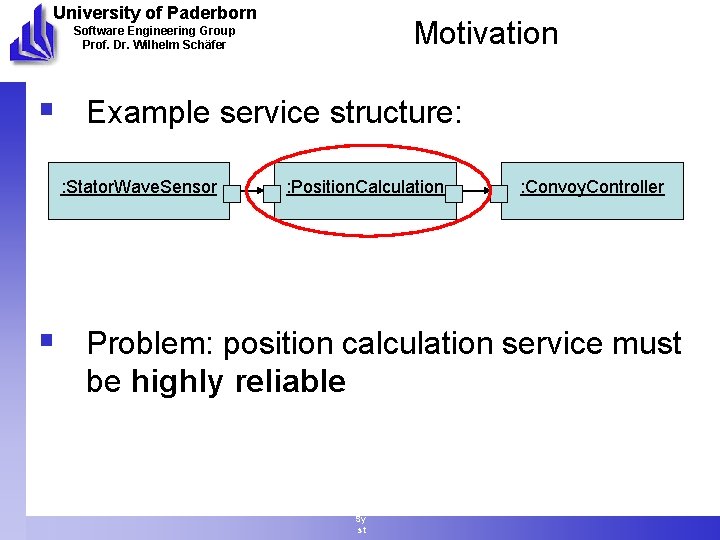

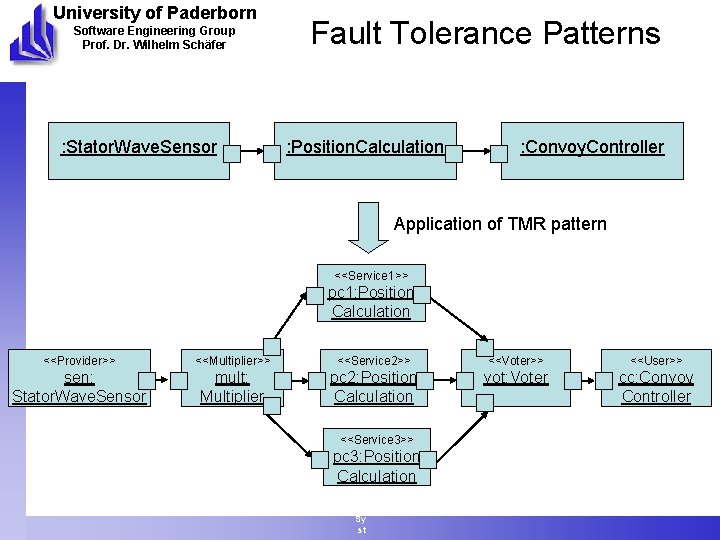

Motivation

- Example service structure: :StatorWaveSensor, :PositionCalculation, :ConvoyController
- Problem: position calculation service must be highly reliable

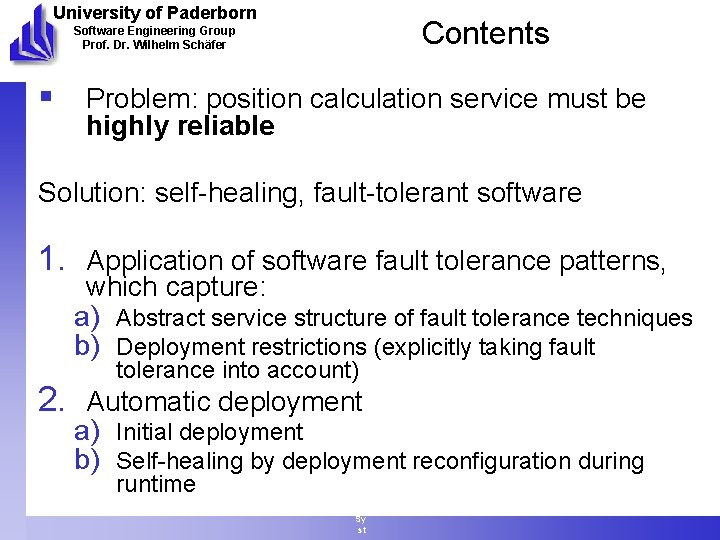

Contents

- Problem: position calculation service must be highly reliable
- Solution: self-healing, fault-tolerant software

1. Application of software fault tolerance patterns, which capture:
   a) Abstract service structure of fault tolerance techniques
   b) Deployment restrictions (explicitly taking fault tolerance into account)
2. Automatic deployment
   a) Initial deployment
   b) Self-healing by deployment reconfiguration during runtime

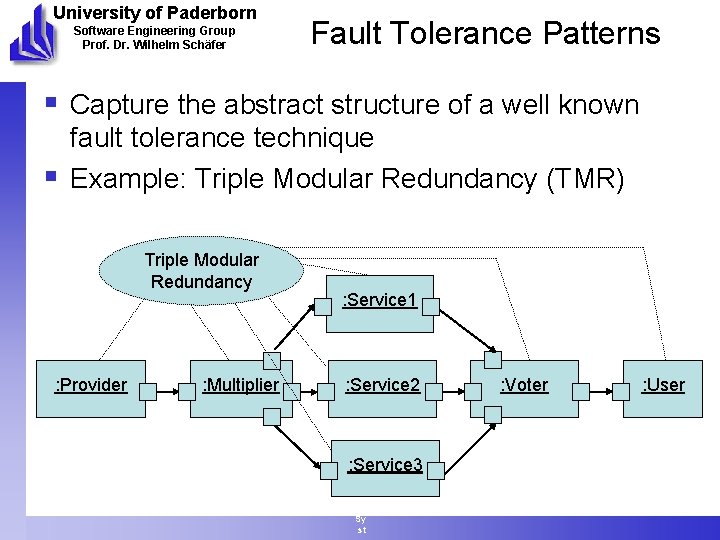

Fault Tolerance Patterns

- Capture the abstract structure of a well-known fault tolerance technique
- Example: Triple Modular Redundancy (TMR)
- Roles in the Triple Modular Redundancy pattern: :Provider, :Multiplier, :Service1, :Service2, :Service3, :Voter, :User
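The roles of the TMR pattern can be illustrated with a minimal Python sketch. It is not the voter or multiplier behavior used by the authors: the functions `multiplier` and `voter` and the stand-in services `good` and `crashed` are hypothetical names, and a crash is modeled as a raised exception.

```python
from collections import Counter

def multiplier(value, services):
    """Forward the provider's value to every redundant service."""
    results = []
    for service in services:
        try:
            results.append(service(value))
        except Exception:
            results.append(None)  # a crashed service delivers no result
    return results

def voter(results):
    """Return the value delivered by at least two services."""
    answers = [r for r in results if r is not None]
    if answers:
        value, count = Counter(answers).most_common(1)[0]
        if count >= 2:
            return value
    raise RuntimeError("no majority among the redundant services")

def good(x):      # hypothetical stand-in for a working service
    return x * 2

def crashed(x):   # hypothetical stand-in for a crashed service
    raise RuntimeError("crash")

print(voter(multiplier(21, [good, good, crashed])))  # prints 42
```

With one crashed service the user still receives a result; with two crashed services `voter` raises, which matches the pattern tolerating exactly one failed replica.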

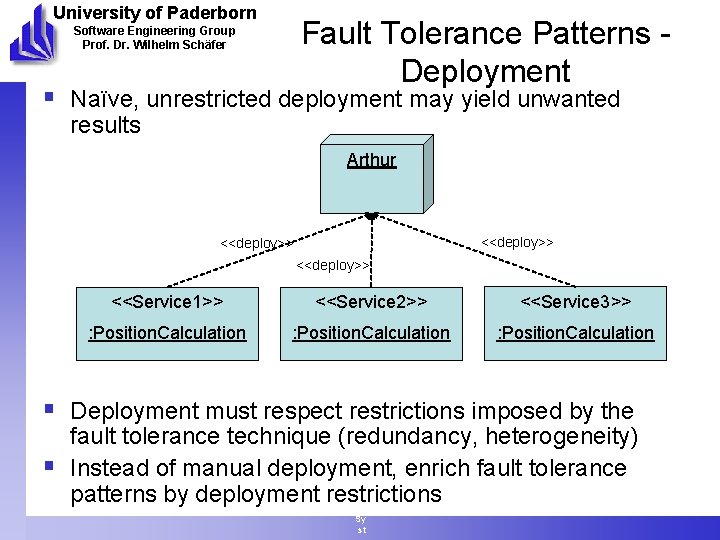

Fault Tolerance Patterns: Deployment

- Naïve, unrestricted deployment may yield unwanted results
- Diagram: node Arthur with <<deploy>> links to the redundant :PositionCalculation services <<Service1>>, <<Service2>>, <<Service3>>
- Deployment must respect restrictions imposed by the fault tolerance technique (redundancy, heterogeneity)
- Instead of manual deployment, enrich fault tolerance patterns by deployment restrictions

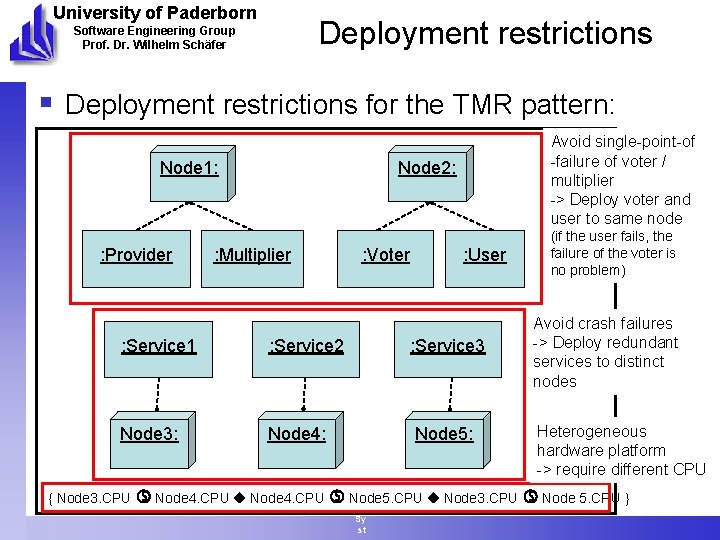

Deployment restrictions

Deployment restrictions for the TMR pattern:

- Avoid single point of failure of voter / multiplier -> deploy voter and user to the same node (if the user fails, the failure of the voter is no problem)
- Avoid crash failures -> deploy redundant services to distinct nodes
- Heterogeneous hardware platform -> require different CPUs: Node3.CPU, Node4.CPU, and Node5.CPU must differ from each other
- Diagram: the roles :Provider, :Multiplier, :Service1, :Service2, :Service3, :Voter, :User placed on Node1 to Node5, with :Provider on Node1 and the redundant services on Node3, Node4, and Node5

Fault Tolerance Patterns: Application of TMR pattern

- Original structure: :StatorWaveSensor, :PositionCalculation, :ConvoyController
- After applying the TMR pattern:
  - <<Provider>> sen: StatorWaveSensor
  - <<Multiplier>> mult: Multiplier
  - <<Service1>> pc1: PositionCalculation
  - <<Service2>> pc2: PositionCalculation
  - <<Service3>> pc3: PositionCalculation
  - <<Voter>> vot: Voter
  - <<User>> cc: ConvoyController

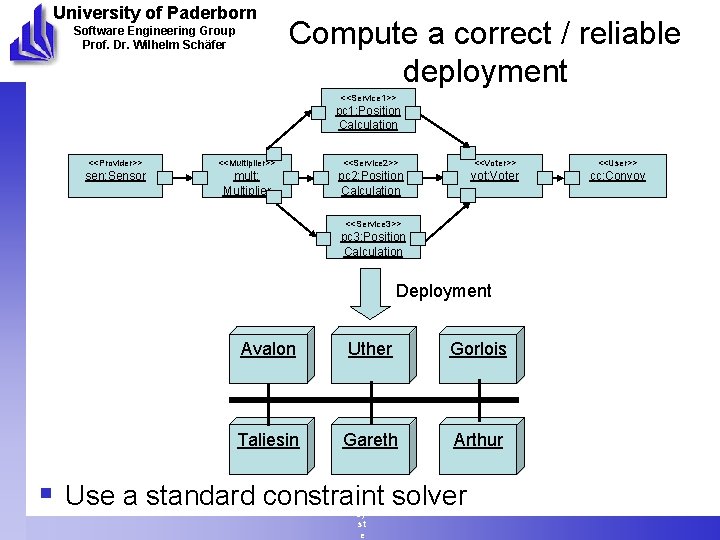

Compute a correct / reliable deployment

- Services to deploy: <<Provider>> sen: Sensor, <<Multiplier>> mult: Multiplier, <<Service1>> pc1: PositionCalculation, <<Service2>> pc2: PositionCalculation, <<Service3>> pc3: PositionCalculation, <<Voter>> vot: Voter, <<User>> cc: Convoy
- Available nodes: Avalon, Uther, Gorlois, Taliesin, Gareth, Arthur
- Use a standard constraint solver

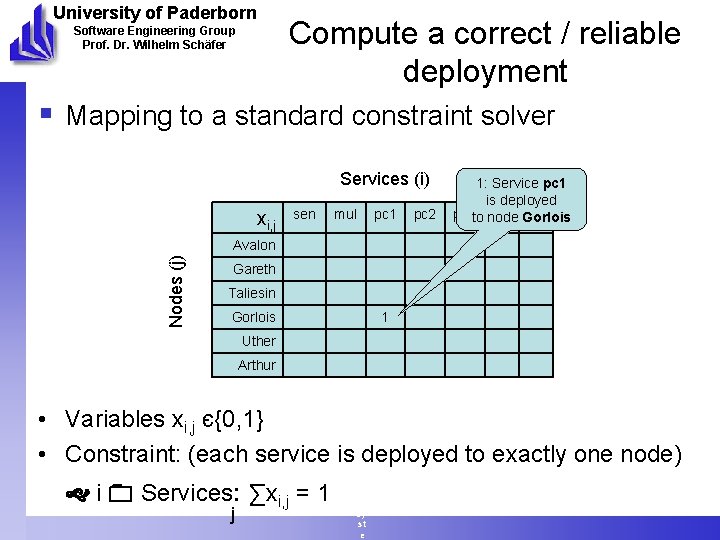

Compute a correct / reliable deployment

- Mapping to a standard constraint solver
- Matrix $x_{i,j}$ over services $i$ (sen, mul, pc1, pc2, pc3, vot, cc) and nodes $j$ (Avalon, Gareth, Taliesin, Gorlois, Uther, Arthur)
- Example: $x_{\text{pc1},\text{Gorlois}} = 1$ means service pc1 is deployed to node Gorlois
- Variables: $x_{i,j} \in \{0, 1\}$
- Constraint (each service is deployed to exactly one node): $\forall i \in \text{Services}: \sum_{j} x_{i,j} = 1$
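The 0/1 variables and the exactly-one-node constraint can be written down directly. The following Python sketch uses the service and node names from the slide; the nested-dictionary representation and the helper names `empty_matrix` and `each_service_on_exactly_one_node` are assumptions made for illustration, not the encoding passed to the actual solver.

```python
SERVICES = ["sen", "mul", "pc1", "pc2", "pc3", "vot", "cc"]
NODES = ["Avalon", "Gareth", "Taliesin", "Gorlois", "Uther", "Arthur"]

def empty_matrix():
    """x[service][node] = 0 for every service/node combination."""
    return {s: {n: 0 for n in NODES} for s in SERVICES}

def each_service_on_exactly_one_node(x):
    """Check: for every service i, the sum over nodes j of x[i][j] is 1."""
    return all(sum(x[s].values()) == 1 for s in SERVICES)

x = empty_matrix()
x["pc1"]["Gorlois"] = 1  # service pc1 is deployed to node Gorlois
# False here, because the other six services are not deployed yet
print(each_service_on_exactly_one_node(x))
```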

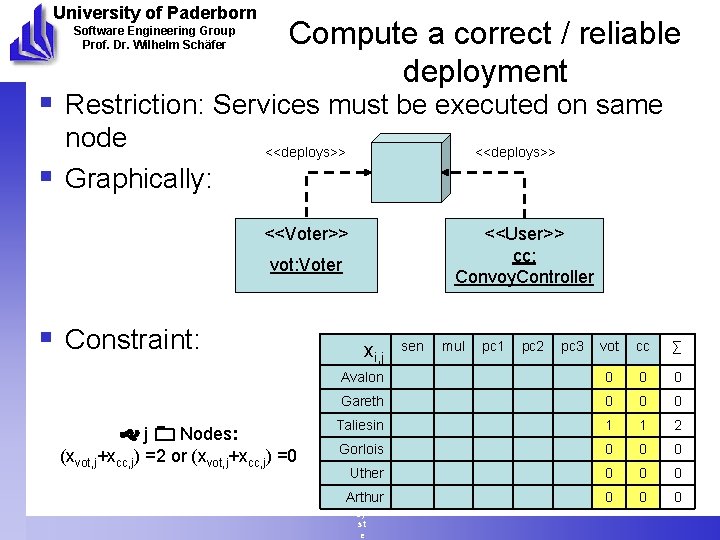

Compute a correct / reliable deployment

- Restriction: services must be executed on the same node
- Graphically: <<Voter>> vot: Voter and <<User>> cc: ConvoyController are attached to one node by <<deploys>>
- Constraint: $\forall j \in \text{Nodes}: (x_{\text{vot},j} + x_{\text{cc},j}) = 2 \ \text{or}\ (x_{\text{vot},j} + x_{\text{cc},j}) = 0$
- Example values of $x_{\text{vot},j}$, $x_{\text{cc},j}$ and their sum ∑: 1, 1, 2 on the node hosting both services; 0, 0, 0 on every other node
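The same-node constraint can be checked per node as a sum that must be 2 or 0. A small Python sketch, with the hypothetical helpers `place` and `same_node` and two hand-made example placements:

```python
NODES = ["Avalon", "Gareth", "Taliesin", "Gorlois", "Uther", "Arthur"]

def place(service_node):
    """Build the 0/1 row x[service][j] for a service on one node."""
    return {n: int(n == service_node) for n in NODES}

def same_node(x, a, b):
    """For every node j: x[a][j] + x[b][j] must be 2 or 0."""
    return all(x[a][n] + x[b][n] in (0, 2) for n in NODES)

ok = {"vot": place("Taliesin"), "cc": place("Taliesin")}
bad = {"vot": place("Taliesin"), "cc": place("Arthur")}
print(same_node(ok, "vot", "cc"), same_node(bad, "vot", "cc"))  # prints True False
```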

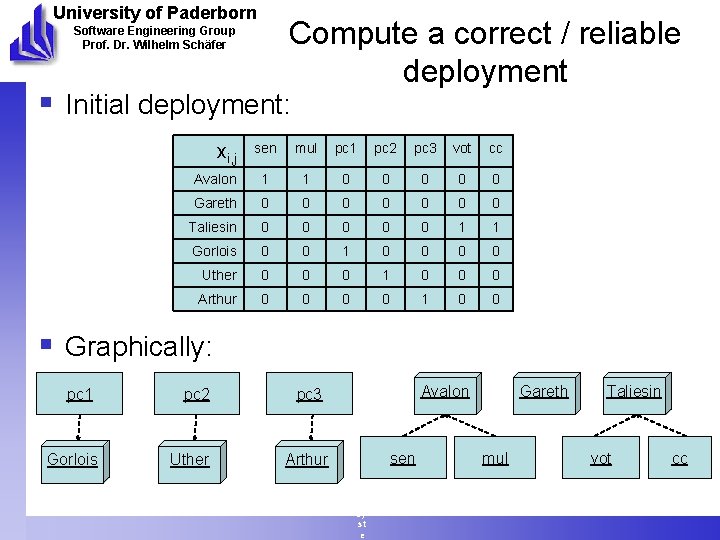

Compute a correct / reliable deployment

- Initial deployment $x_{i,j}$:

| Node | sen | mul | pc1 | pc2 | pc3 | vot | cc |
|---|---|---|---|---|---|---|---|
| Avalon | 1 | 1 | 0 | 0 | 0 | 0 | 0 |
| Gareth | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| Taliesin | 0 | 0 | 0 | 0 | 0 | 1 | 1 |
| Gorlois | 0 | 0 | 1 | 0 | 0 | 0 | 0 |
| Uther | 0 | 0 | 0 | 1 | 0 | 0 | 0 |
| Arthur | 0 | 0 | 0 | 0 | 1 | 0 | 0 |

- Graphically: pc1 on Gorlois, pc2 on Uther, pc3 on Arthur, vot and cc on Taliesin, sen and mul on the remaining nodes Avalon and Gareth as drawn in the figure
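On a problem of this size, a brute-force search can stand in for the standard constraint solver. The sketch below is an illustration under assumptions, not the authors' solver mapping: it stores one node per service (which enforces "exactly one node" by construction), checks only the same-node and distinct-node restrictions, and takes `SLIDE_DEPLOYMENT` from the matrix of the initial deployment slide as far as it can be read.

```python
from itertools import product

SERVICES = ["sen", "mul", "pc1", "pc2", "pc3", "vot", "cc"]
NODES = ["Avalon", "Gareth", "Taliesin", "Gorlois", "Uther", "Arthur"]

def satisfies(deploy):
    """Voter and user share a node; redundant services use distinct nodes."""
    replicas = {deploy["pc1"], deploy["pc2"], deploy["pc3"]}
    return deploy["vot"] == deploy["cc"] and len(replicas) == 3

def initial_deployment():
    """Return the first service -> node assignment that satisfies all checks."""
    for nodes in product(NODES, repeat=len(SERVICES)):
        deploy = dict(zip(SERVICES, nodes))
        if satisfies(deploy):
            return deploy
    return None

# Deployment as read from the slide's matrix (an assumption about the garbled table)
SLIDE_DEPLOYMENT = {"sen": "Avalon", "mul": "Avalon", "pc1": "Gorlois",
                    "pc2": "Uther", "pc3": "Arthur",
                    "vot": "Taliesin", "cc": "Taliesin"}

print(satisfies(SLIDE_DEPLOYMENT))  # prints True
print(initial_deployment())
```

The search returns some valid deployment, not necessarily the one on the slide; a real solver would additionally handle the CPU restriction and any optimization goals.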

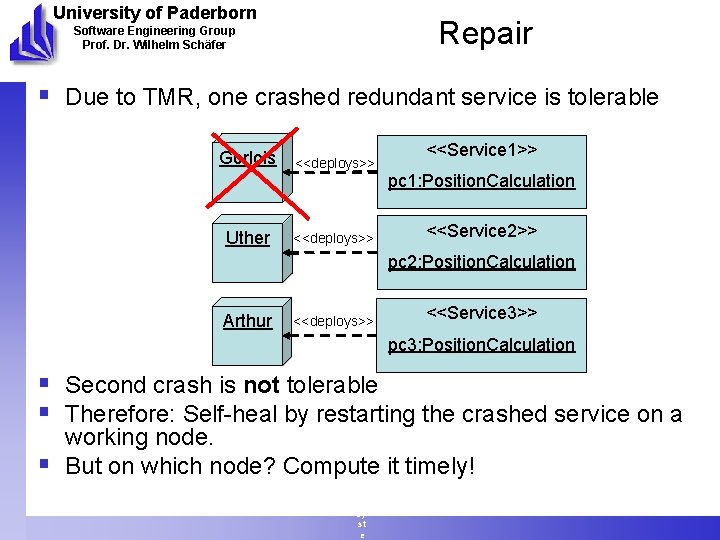

Repair

- Due to TMR, one crashed redundant service is tolerable
- Deployment: Gorlois <<deploys>> <<Service1>> pc1: PositionCalculation; Uther <<deploys>> <<Service2>> pc2: PositionCalculation; Arthur <<deploys>> <<Service3>> pc3: PositionCalculation
- A second crash is not tolerable
- Therefore: self-heal by restarting the crashed service on a working node
- But on which node? Compute it in time!

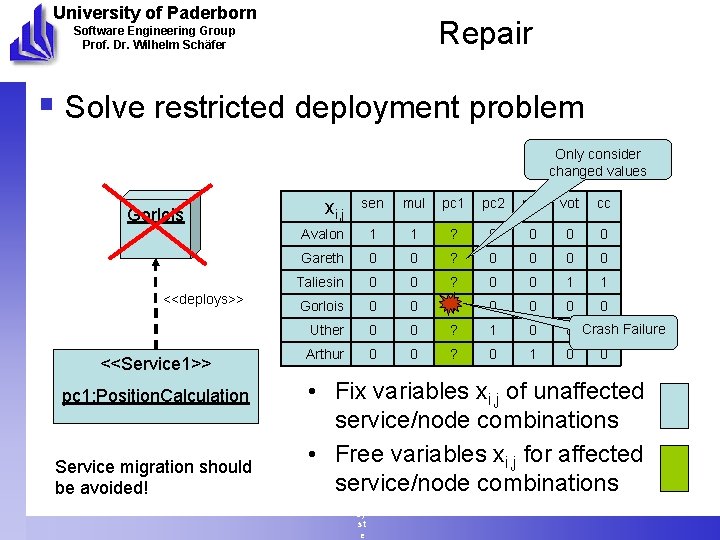

Repair

- Solve restricted deployment problem
  - Only consider changed values
  - Service migration should be avoided!
- Example: crash failure of node Gorlois, which <<deploys>> <<Service1>> pc1: PositionCalculation
- Fix variables $x_{i,j}$ of unaffected service/node combinations
- Free variables $x_{i,j}$ (marked ? in the matrix) for affected service/node combinations: the pc1 column on the working nodes
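Fixing the unaffected variables and freeing only the affected ones can be sketched as a search over the crashed services alone. This Python example is a simplified illustration: it uses brute force instead of a constraint solver, checks only the same-node and distinct-node restrictions, and the function name `repair` and the `initial` mapping (read from the initial deployment matrix) are assumptions.

```python
from itertools import product

NODES = ["Avalon", "Gareth", "Taliesin", "Gorlois", "Uther", "Arthur"]

def satisfies(deploy):
    """Voter and user share a node; redundant services use distinct nodes."""
    replicas = {deploy["pc1"], deploy["pc2"], deploy["pc3"]}
    return deploy["vot"] == deploy["cc"] and len(replicas) == 3

def repair(deploy, crashed_node):
    """Re-place only the services of the crashed node; keep all others fixed."""
    working = [n for n in NODES if n != crashed_node]
    affected = [s for s, n in deploy.items() if n == crashed_node]
    fixed = {s: n for s, n in deploy.items() if n != crashed_node}
    for choice in product(working, repeat=len(affected)):
        candidate = {**fixed, **dict(zip(affected, choice))}
        if satisfies(candidate):
            return candidate
    return None  # no solution without migrating other services

initial = {"sen": "Avalon", "mul": "Avalon", "pc1": "Gorlois",
           "pc2": "Uther", "pc3": "Arthur", "vot": "Taliesin", "cc": "Taliesin"}
repaired = repair(initial, "Gorlois")
print(repaired["pc1"])
```

Because only the affected services are free, no running service is migrated, and the search space shrinks from all service/node combinations to the placements of the crashed service.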

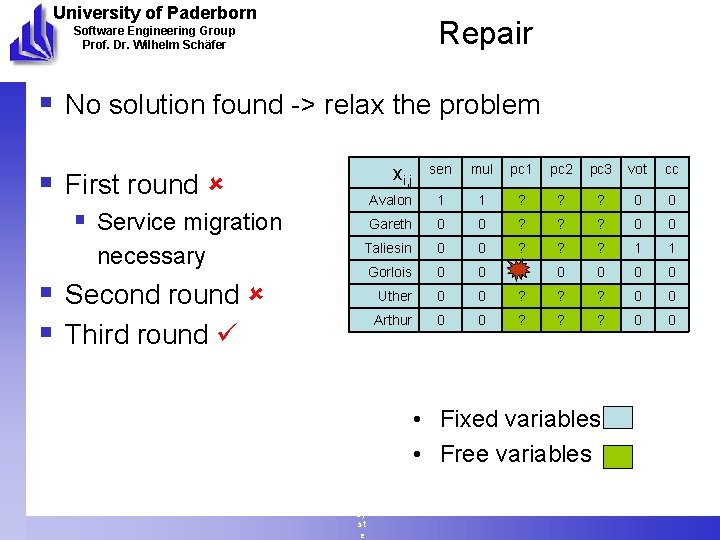

Repair

- No solution found -> relax the problem
  - First round
  - Second round
  - Third round
- Service migration necessary
- Matrix $x_{i,j}$ (services sen, mul, pc1, pc2, pc3, vot, cc; nodes Avalon, Gareth, Taliesin, Gorlois, Uther, Arthur): fixed variables keep their values, free variables are marked ?
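The round-based relaxation can be sketched as a loop that frees more variables whenever the restricted problem has no solution. Everything specific in this Python example is an assumption for illustration: the nodes A to E, the `CAPACITY` limit of two services per node (added only so that the first round fails), and the choice of which services each extra round frees. The slides only show that the set of free variables grows from round to round.

```python
from itertools import product

CAPACITY = 2  # hypothetical limit of services per node, not from the slides

def satisfies(deploy):
    """Same node for vot/cc, distinct nodes for pc1..pc3, capacity respected."""
    load = {}
    for node in deploy.values():
        load[node] = load.get(node, 0) + 1
    replicas = {deploy["pc1"], deploy["pc2"], deploy["pc3"]}
    return (deploy["vot"] == deploy["cc"] and len(replicas) == 3
            and max(load.values()) <= CAPACITY)

def solve(deploy, free, working):
    """Search placements for the free services; all others stay fixed."""
    free = sorted(free)
    fixed = {s: n for s, n in deploy.items() if s not in free}
    for choice in product(working, repeat=len(free)):
        candidate = {**fixed, **dict(zip(free, choice))}
        if satisfies(candidate):
            return candidate
    return None

def repair_with_relaxation(deploy, crashed, working, extra_rounds):
    """Round 1 frees the crashed services; each later round frees more."""
    free = {s for s, n in deploy.items() if n == crashed}
    for extra in [set()] + extra_rounds:
        free = free | extra
        result = solve(deploy, free, working)
        if result is not None:
            return result, sorted(free)
    return None, sorted(free)

deploy = {"sen": "A", "mul": "A", "pc1": "B", "pc2": "C", "pc3": "D",
          "vot": "E", "cc": "E"}
result, freed = repair_with_relaxation(
    deploy, "E", ["A", "B", "C", "D"],
    [{"sen", "mul"}, {"pc1", "pc2", "pc3"}])
print(freed)   # services that had to be freed before a solution was found
print(result)
```

In this example the first round fails because no working node has room for both vot and cc; the second round succeeds only by migrating sen and mul, which is the kind of migration the restricted problem tries to avoid.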

Tool Support

- Current work

Conclusions / Future Work

- Fault tolerance patterns capture fault tolerance techniques
  - Contain: structure + deployment restrictions
  - Graphical specification
  - Easy application
- Automatic deployment
  1. Initial deployment
  2. Self-healing by deployment reconfiguration during runtime
- Future work
  - Heterogeneous service restrictions in pattern application
  - Synthesize behavior of voter and multiplier services
  - Use fault tolerance application knowledge for automatic fault tree analysis

Questions?

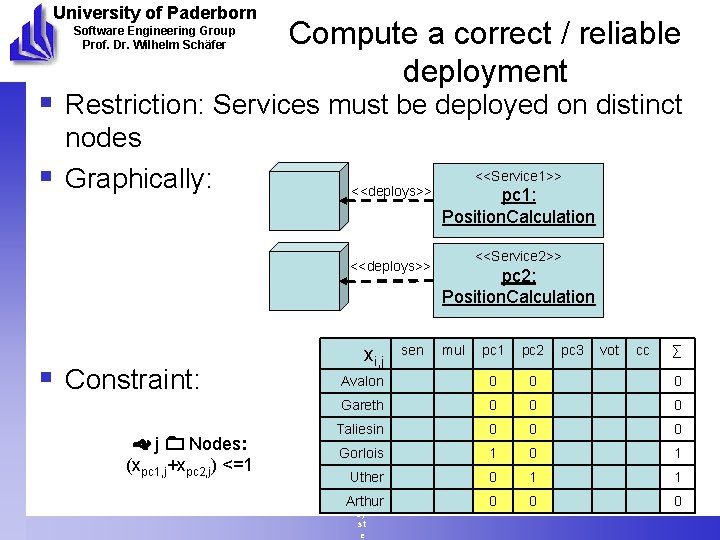

Compute a correct / reliable deployment

- Restriction: services must be deployed on distinct nodes
- Graphically: <<Service1>> pc1: PositionCalculation and <<Service2>> pc2: PositionCalculation are attached to different nodes by <<deploys>>
- Constraint: $\forall j \in \text{Nodes}: (x_{\text{pc1},j} + x_{\text{pc2},j}) \le 1$
- Example values of the sum ∑ of $x_{\text{pc1},j}$ and $x_{\text{pc2},j}$: 1 on a node hosting pc1 or pc2; 0 on every other node
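The distinct-node constraint is a per-node sum that must not exceed 1. A small Python sketch, with the hypothetical helpers `place` and `distinct_nodes` and two hand-made example placements:

```python
NODES = ["Avalon", "Gareth", "Taliesin", "Gorlois", "Uther", "Arthur"]

def place(service_node):
    """Build the 0/1 row x[service][j] for a service on one node."""
    return {n: int(n == service_node) for n in NODES}

def distinct_nodes(x, a, b):
    """For every node j: x[a][j] + x[b][j] must be at most 1."""
    return all(x[a][n] + x[b][n] <= 1 for n in NODES)

spread = {"pc1": place("Gorlois"), "pc2": place("Uther")}
stacked = {"pc1": place("Arthur"), "pc2": place("Arthur")}
print(distinct_nodes(spread, "pc1", "pc2"),
      distinct_nodes(stacked, "pc1", "pc2"))  # prints True False
```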

Compute a correct / reliable deployment

- Restriction: the CPUs of the nodes hosting redundant services must be distinct
- Constraint: $x_{s_1,j_1} = 1 \wedge x_{s_2,j_2} = 1 \wedge x_{s_3,j_3} = 1 \Rightarrow j_1.\text{cpu} \neq j_2.\text{cpu} \wedge j_2.\text{cpu} \neq j_3.\text{cpu} \wedge j_1.\text{cpu} \neq j_3.\text{cpu}$
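The CPU restriction can be checked by looking up the CPU of each hosting node and requiring all of them to differ. In this Python sketch the CPU types in `CPU` are invented for illustration (the slides do not list the real hardware), and `distinct_cpus` is a hypothetical helper name.

```python
# Hypothetical CPU type per node; not taken from the slides
CPU = {"Avalon": "ppc", "Gareth": "arm", "Taliesin": "x86",
       "Gorlois": "x86", "Uther": "arm", "Arthur": "ppc"}

def distinct_cpus(deploy, services, cpu=CPU):
    """True if the nodes hosting the given services all have different CPUs."""
    used = [cpu[deploy[s]] for s in services]
    return len(set(used)) == len(used)

deploy = {"pc1": "Gorlois", "pc2": "Uther", "pc3": "Arthur"}
print(distinct_cpus(deploy, ["pc1", "pc2", "pc3"]))  # prints True

# Gareth and Uther share the hypothetical "arm" CPU, so this placement fails
moved = dict(deploy, pc1="Gareth")
print(distinct_cpus(moved, ["pc1", "pc2", "pc3"]))   # prints False
```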

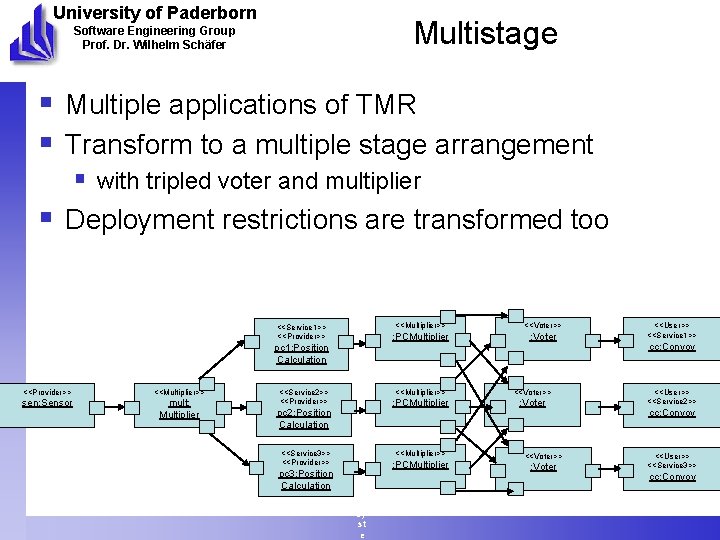

Multistage

- Multiple applications of TMR
- Transform to a multiple-stage arrangement with tripled voter and multiplier
- Deployment restrictions are transformed too
- Diagram:
  - <<Provider>> sen: Sensor
  - <<Multiplier>> mult: Multiplier
  - <<Service1>> <<Provider>> pc1: PositionCalculation
  - <<Service2>> <<Provider>> pc2: PositionCalculation
  - <<Service3>> <<Provider>> pc3: PositionCalculation
  - <<Multiplier>> :PCMultiplier and <<Voter>> :Voter instances between the stages
  - <<User>> <<Service1>> cc: Convoy
  - <<User>> <<Service2>> cc: Convoy
  - <<User>> <<Service3>> cc: Convoy

Tool Support

- Already working:
  - Graphical specification of fault tolerance patterns and deployment restrictions
  - Automatic mapping to ILOG solver software
- Next:
  - Runtime support

Motivation

- System without fault tolerance, node crash
  - (1) fault tolerance pattern
- Introduce redundancy (TMR), but deploy two redundant services on one node; crash of that node
  - Better: deploy redundant services to distinct nodes
  - (2) deployment restrictions for fault tolerance pattern
- Example with distinct nodes, crash failure, everything OK
  - (3) which nodes?
- Now self-heal the system by restarting a crashed service on a new node

Motivation

- Goals:
  - Easy application of fault tolerance techniques
  - Deployment issues taken into account
  - Easy deployment specification
  - Reusable deployment specification

- Slides: 25