Towards Holographic "Brain" Memory Based on Randomization and Walsh-Hadamard Transformation
Ariel Hanemann
Joint work with Shlomi Dolev* and Sergey Frenkel**
*Department of Computer Science, Ben-Gurion University, Israel
**Institute of Informatics Problems, Russia
Sept. 2012

Holographic brain theory
• In a hologram, each part of the recording medium contains information about the whole image
• Reconstructing the image from a piece of the recording medium results in a noisy version of the whole image

Holographic brain theory
• Karl Pribram’s holographic brain theory suggests that the brain holds memories in a holographic manner (Pribram 1971)
• Experiments on animals show that specific memory traces cannot be removed by removing parts of the brain (Lashley 1929)

Holographic brain theory
• The current belief in neuroscience is that the brain performs some sort of Fourier transform, which is a holographic transform, as part of visual processing

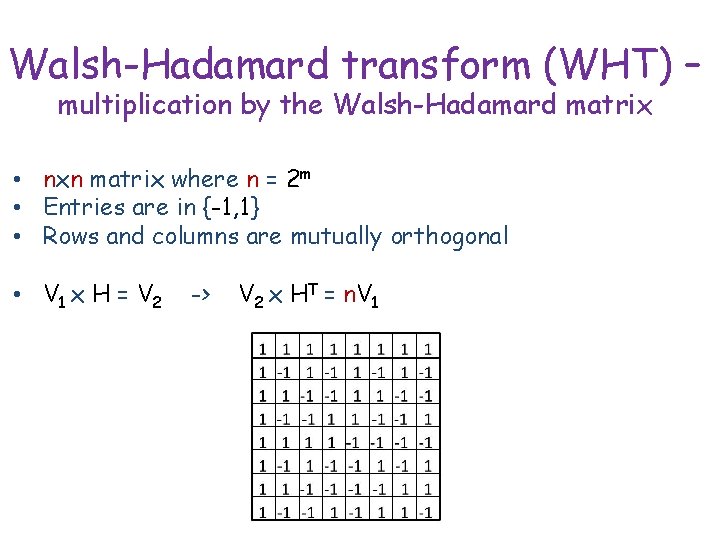

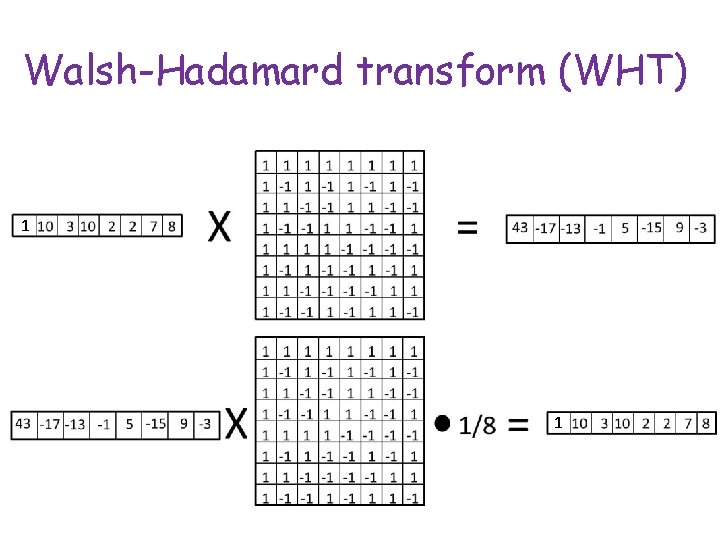

Walsh-Hadamard transform (WHT): multiplication by the Walsh-Hadamard matrix
• $n \times n$ matrix, where $n = 2^m$
• Entries are in $\{-1, 1\}$
• Rows and columns are mutually orthogonal
• $V_1 H = V_2 \Rightarrow V_2 H^T = n V_1$
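
The properties listed above can be checked with a minimal sketch. It assumes the Sylvester construction for the matrix (the slides do not say which construction is used), and the helper names `hadamard`, `transform` and `transpose` are made up for this illustration.

```python
def hadamard(n):
    """Sylvester construction of an n x n Hadamard matrix (n must be a power of 2)."""
    h = [[1]]
    while len(h) < n:
        # Double the size: [[H, H], [H, -H]]
        h = [row + row for row in h] + [row + [-x for x in row] for row in h]
    return h


def transform(v, h):
    """Row vector times matrix: returns v x h."""
    return [sum(v[i] * h[i][j] for i in range(len(v))) for j in range(len(h[0]))]


def transpose(h):
    return [list(col) for col in zip(*h)]


n = 8
H = hadamard(n)
v1 = [1, -1, 1, 1, -1, -1, 1, -1]    # arbitrary example input in {-1, 1}^8
v2 = transform(v1, H)                # forward transform: V1 x H = V2
back = transform(v2, transpose(H))   # V2 x H^T, expected to equal n * V1
```

Dividing `back` by `n` recovers the input, which is the inverse transform used on the later slides.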

Walsh-Hadamard transform (WHT)

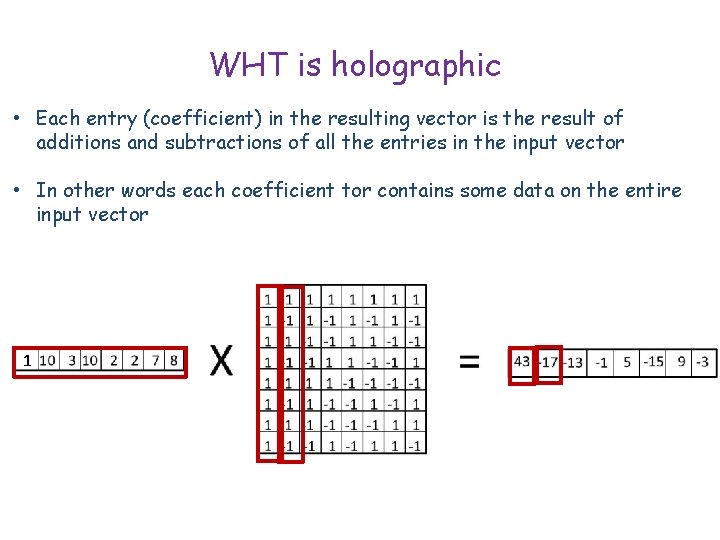

WHT is holographic
• Each entry (coefficient) in the resulting vector is the result of additions and subtractions of all the entries in the input vector
• In other words, each coefficient contains some data on the entire input vector

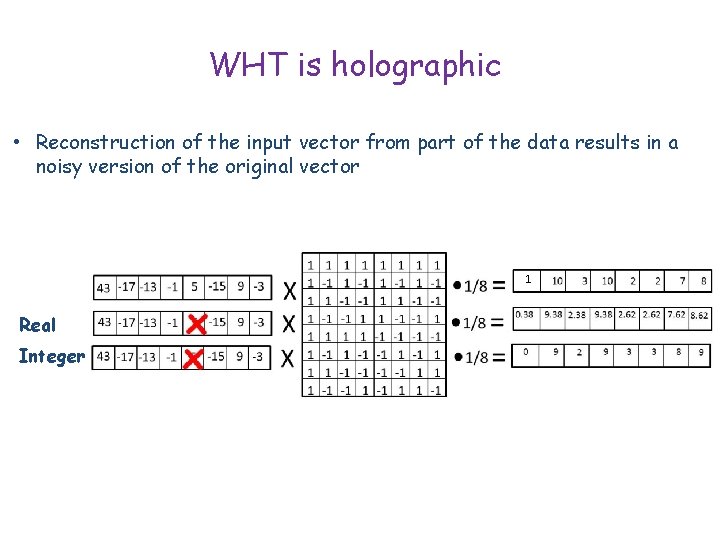

WHT is holographic
• Reconstruction of the input vector from part of the data results in a noisy version of the original vector
Figure labels: Real, Integer

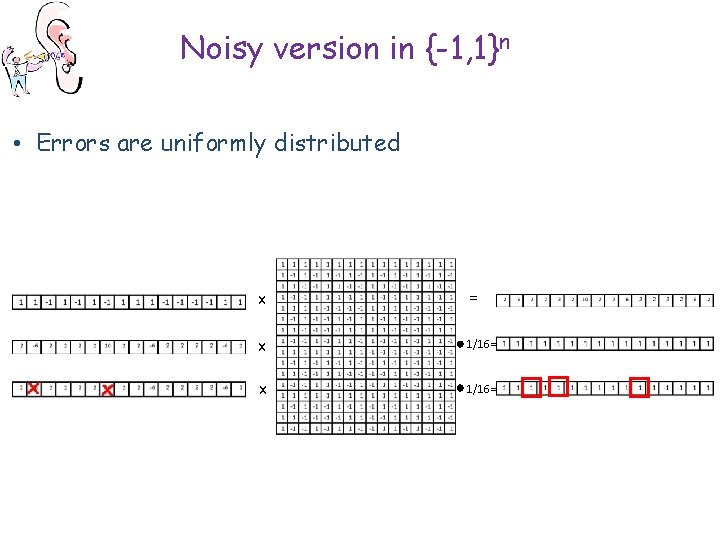
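
A small sketch of reconstruction from part of the coefficients, under the assumption of a Sylvester-type matrix (which is symmetric, so multiplying by it again also performs the transposed multiplication). The helper names and the example vector are hypothetical, chosen only for illustration.

```python
def hadamard(n):
    """Sylvester construction of an n x n Hadamard matrix (n a power of 2)."""
    h = [[1]]
    while len(h) < n:
        h = [row + row for row in h] + [row + [-x for x in row] for row in h]
    return h


def wht(v, h):
    """Row vector times matrix."""
    return [sum(v[i] * h[i][j] for i in range(len(v))) for j in range(len(h[0]))]


n = 8
H = hadamard(n)
v = [1, -1, 1, 1, -1, -1, 1, -1]   # example input in {-1, 1}^8
coeffs = wht(v, H)


def reconstruct(coeffs, kept):
    """Inverse transform that uses only the positions in `kept`; the rest count as 0."""
    partial = [c if j in kept else 0 for j, c in enumerate(coeffs)]
    # H is symmetric here, so multiplying by H equals multiplying by H^T.
    return [x / n for x in wht(partial, H)]


full = reconstruct(coeffs, kept=range(n))    # all coefficients: exact input
noisy = reconstruct(coeffs, kept=range(6))   # last two coefficients missing
rounded = [-1 if x < 0 else 1 for x in noisy]
```

For this particular input the noisy real-valued result still rounds back to the original vector; with more missing coefficients that is no longer guaranteed.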

Noisy version in $\{-1, 1\}^n$
• Errors are uniformly distributed

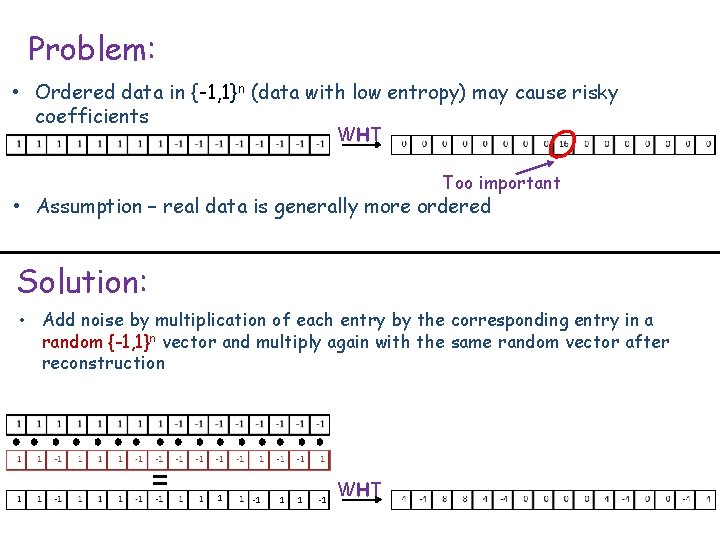

Problem:
• Ordered data in $\{-1, 1\}^n$ (data with low entropy) may produce risky coefficients, i.e. single WHT coefficients that are too important
• Assumption: real data is generally more ordered than random data
Solution:
• Add noise by multiplying each entry by the corresponding entry of a random $\{-1, 1\}^n$ vector before the WHT, and multiply by the same random vector again after reconstruction

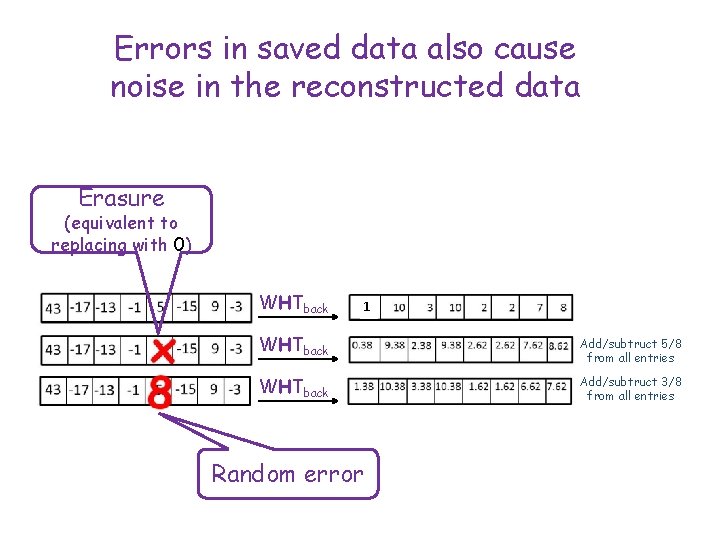
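
The randomization step can be sketched as follows. This is an illustration under stated assumptions: the matrix is of Sylvester type, the helper names are hypothetical, and `mask` is a fixed vector standing in for the random $\{-1, 1\}^n$ vector so that the run is repeatable.

```python
def hadamard(n):
    """Sylvester construction of an n x n Hadamard matrix (n a power of 2)."""
    h = [[1]]
    while len(h) < n:
        h = [row + row for row in h] + [row + [-x for x in row] for row in h]
    return h


def wht(v, h):
    """Row vector times matrix."""
    return [sum(v[i] * h[i][j] for i in range(len(v))) for j in range(len(h[0]))]


n = 8
H = hadamard(n)

ordered = [1] * n            # highly ordered, low-entropy input
plain = wht(ordered, H)      # all the weight lands in a single coefficient

# Stand-in for a random {-1, 1}^n vector (fixed here, an assumption for repeatability).
mask = [1, -1, 1, 1, -1, -1, 1, -1]
masked = [x * r for x, r in zip(ordered, mask)]
spread = wht(masked, H)      # the weight is now shared by several coefficients

# Reconstruction: inverse transform (H is symmetric), then multiply by the mask again.
restored_masked = [c // n for c in wht(spread, H)]
restored = [x * r for x, r in zip(restored_masked, mask)]
```

Multiplying by the mask a second time undoes it because every mask entry squares to 1.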

Errors in saved data also cause noise in the reconstructed data
• Erasure (equivalent to replacing with 0)
• Random error
Figure labels: WHTback; add/subtract 5/8 from all entries; add/subtract 3/8 from all entries

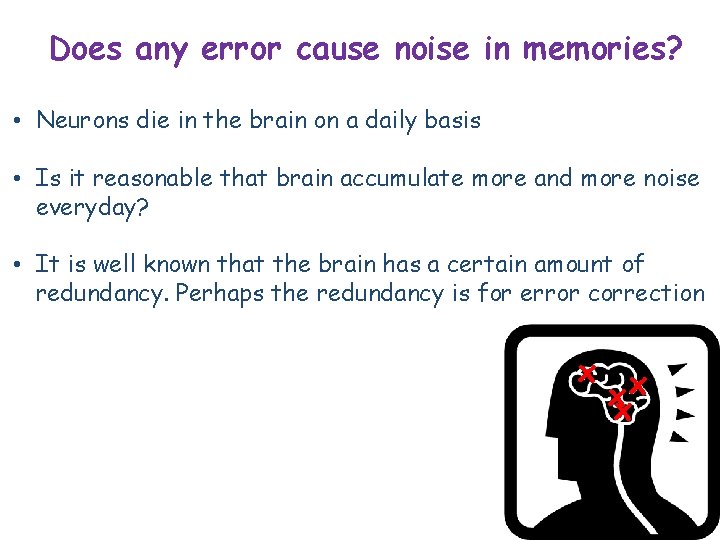
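
The effect of a single erasure follows directly from the identity $V_2 H^T = n V_1$. In the sketch below, $c_j$ (the erased coefficient) and $e_j$ (the unit row vector selecting position $j$) are notation introduced here for illustration.

$$
\frac{(V_2 - c_j e_j)\, H^T}{n} = V_1 - \frac{c_j}{n}\, e_j H^T
$$

Every entry of $e_j H^T$ is $\pm 1$, so each entry of the reconstructed vector moves by exactly $|c_j|/n$. For example, with $n = 8$ an erased coefficient of magnitude 4 shifts every entry by $4/8$.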

Does any error cause noise in memories?
• Neurons die in the brain on a daily basis
• Is it reasonable that the brain accumulates more and more noise every day?
• It is well known that the brain has a certain amount of redundancy. Perhaps the redundancy is for error correction

Error correction
• Adding redundant data to be able to correct errors

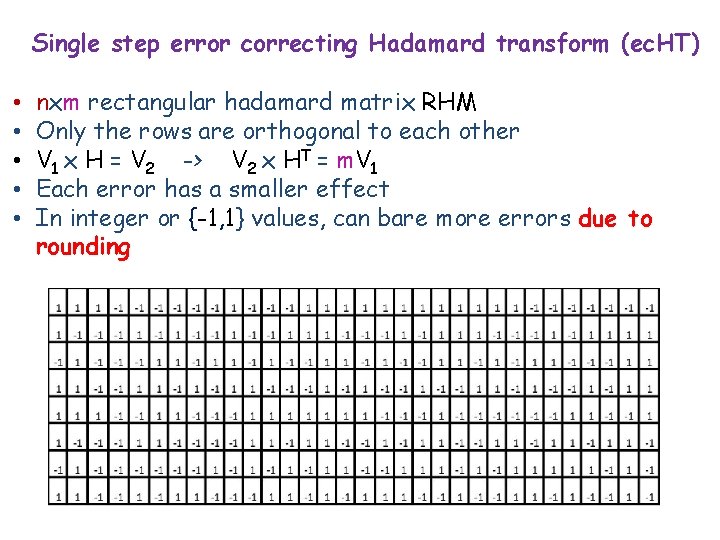

Single step error correcting Hadamard transform (ecHT)
• $n \times m$ rectangular Hadamard matrix (RHM)
• Only the rows are orthogonal to each other
• $V_1 H = V_2 \Rightarrow V_2 H^T = m V_1$
• Each error has a smaller effect
• With integer or $\{-1, 1\}$ values, it can bear more errors thanks to rounding

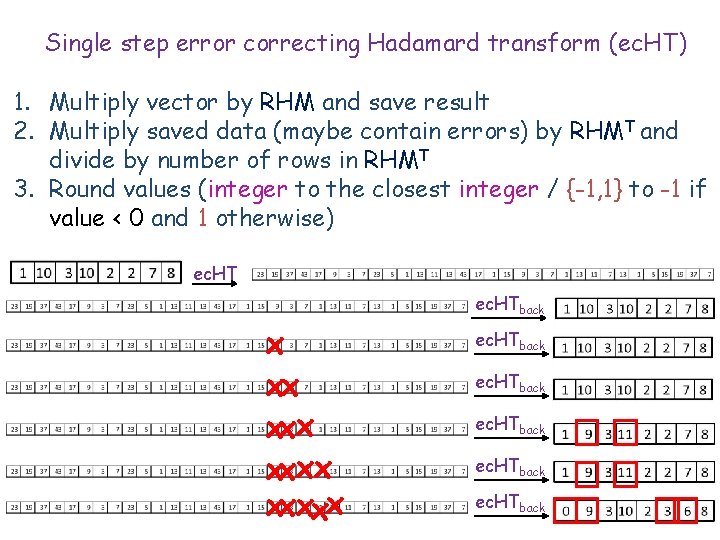

Single step error correcting Hadamard transform (ecHT)
1. Multiply the vector by the RHM and save the result
2. Multiply the saved data (which may contain errors) by $\mathrm{RHM}^T$ and divide by the number of rows in $\mathrm{RHM}^T$
3. Round the values (integers to the closest integer; $\{-1, 1\}$ values to -1 if the value is < 0 and to 1 otherwise)
Figure labels: ecHTback

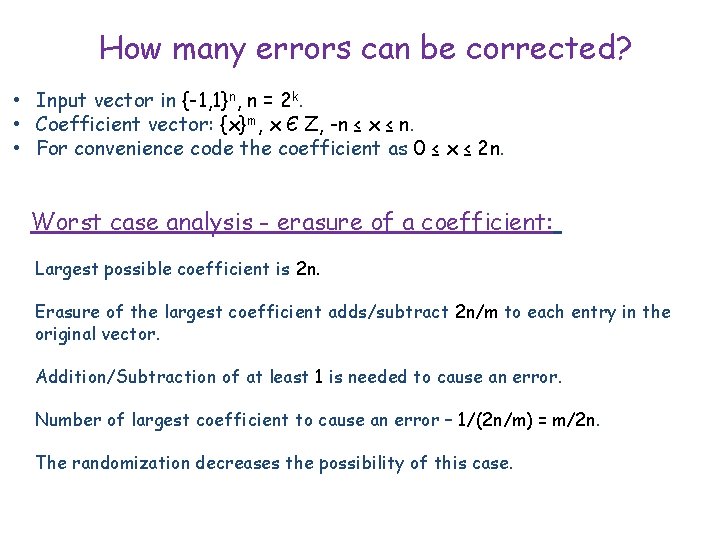
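
The three steps can be sketched for $\{-1, 1\}$ data as below. The slides do not say how the RHM is built; taking the first 8 rows of a 32 x 32 Sylvester Hadamard matrix is an assumption made here, and the names `hadamard`, `ec_encode`, `ec_decode` and `RHM` are hypothetical.

```python
def hadamard(size):
    """Sylvester construction of a size x size Hadamard matrix (size a power of 2)."""
    h = [[1]]
    while len(h) < size:
        h = [row + row for row in h] + [row + [-x for x in row] for row in h]
    return h


def ec_encode(v, rhm):
    """Step 1: multiply the row vector v (length n) by the n x m matrix."""
    n, m = len(rhm), len(rhm[0])
    return [sum(v[i] * rhm[i][j] for i in range(n)) for j in range(m)]


def ec_decode(coeffs, rhm):
    """Steps 2 and 3: multiply by the transpose, divide by m, round to {-1, 1}."""
    n, m = len(rhm), len(rhm[0])
    raw = [sum(coeffs[j] * rhm[i][j] for j in range(m)) / m for i in range(n)]
    return [-1 if x < 0 else 1 for x in raw]


# Assumed RHM: 8 mutually orthogonal rows of length 32.
RHM = hadamard(32)[:8]

v = [1, -1, -1, 1, 1, 1, -1, 1]
stored = ec_encode(v, RHM)

damaged = list(stored)
for j in (0, 5, 17):      # erase three stored coefficients (replace with 0)
    damaged[j] = 0

recovered = ec_decode(damaged, RHM)
```

With these sizes every coefficient has magnitude at most 8, so one erasure moves an entry by at most 8/32 and three erasures cannot flip a sign, which is why `recovered` matches `v` here.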

How many errors can be corrected?
• Input vector in $\{-1, 1\}^n$, $n = 2^k$.
• Coefficient vector: $\{x\}^m$, $x \in \mathbb{Z}$, $-n \le x \le n$.
• For convenience, code the coefficients as $0 \le x \le 2n$.
Worst case analysis, erasure of a coefficient:
• The largest possible coefficient is $2n$.
• Erasure of the largest coefficient adds/subtracts $2n/m$ to/from each entry in the original vector.
• An addition/subtraction of at least 1 is needed to cause an error.
• Number of erased largest coefficients needed to cause an error: $1/(2n/m) = m/(2n)$.
• The randomization decreases the likelihood of this case.

How many errors can be corrected?
Worst case analysis, bit flip in binary representation:
• With $n = 2^k$, the most significant bit (MSB) has value $2n$.
• An MSB flip adds/subtracts $2n/m$ to/from each entry in the original vector.
• An addition/subtraction of at least 1 is needed to cause an error.
• Number of MSB flips needed to cause an error: $1/(2n/m) = m/(2n)$.
• The randomization does not help in this case: a bit flip does not depend on the coefficient size (0 is as likely to become $2n$ as $2n$ is to become 0).

How many errors can be corrected?
Worst case analysis, bit flip in unary representation:
• Each bit flip adds/subtracts $1/m$ to/from each entry in the original vector.
• An addition/subtraction of at least 1 is needed to cause an error.
• Number of bit flips needed to cause an error: $1/(1/m) = m$.
• The randomization does not help in this case: a bit flip does not depend on the coefficient size (0 is as likely to become $0 + 1$ as $2n$ is to become $2n - 1$).
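
As a worked instance of the three worst-case counts, take the sizes used in the neural implementation example, $n = 8$ and $m = 32$. Plugging these values in is an illustration, not a result reported on the slides.

$$
\frac{2n}{m} = \frac{16}{32} = \frac{1}{2}
\;\Rightarrow\;
\frac{m}{2n} = 2 \ \text{(largest-coefficient erasures or MSB flips)},
\qquad
\frac{1}{m} = \frac{1}{32}
\;\Rightarrow\;
m = 32 \ \text{(unary bit flips)}
$$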

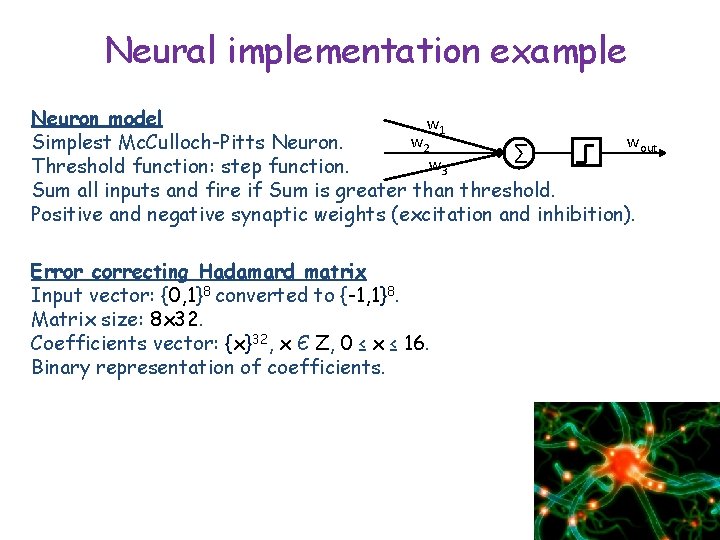

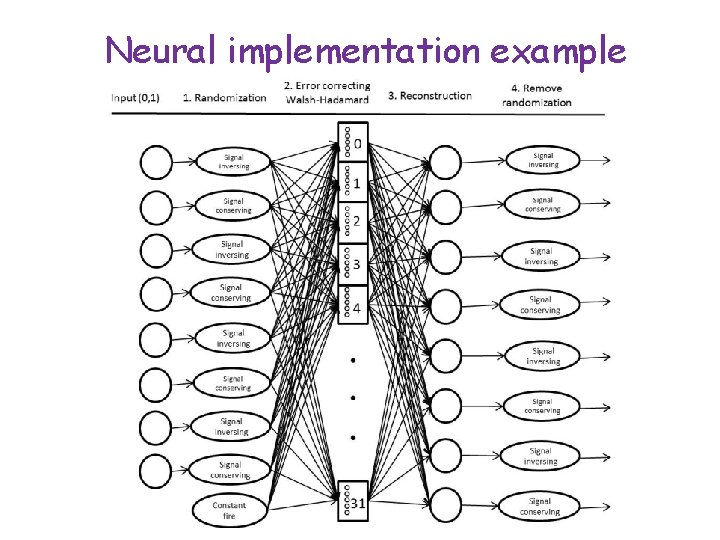

Neural implementation example
Neuron model
• Simplest McCulloch-Pitts neuron (figure labels: w1, w2, w3, ∑, wout)
• Threshold function: step function
• Sum all inputs and fire if the sum is greater than the threshold
• Positive and negative synaptic weights (excitation and inhibition)
Error correcting Hadamard matrix
• Input vector: $\{0, 1\}^8$ converted to $\{-1, 1\}^8$
• Matrix size: 8 x 32
• Coefficients vector: $\{x\}^{32}$, $x \in \mathbb{Z}$, $0 \le x \le 16$
• Binary representation of coefficients
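
The neuron model described above can be sketched in a few lines. The function name `mp_neuron` and the example weights and thresholds are hypothetical and only illustrate the step-function rule; they are not the network shown in the figures.

```python
def mp_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted input sum exceeds the threshold, else return 0."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total > threshold else 0


# Two excitatory synapses: fires only when both inputs are active.
both_active = mp_neuron([1, 1], weights=[1, 1], threshold=1)
one_active = mp_neuron([1, 0], weights=[1, 1], threshold=1)

# One excitatory and one inhibitory synapse: the second input vetoes the first.
uninhibited = mp_neuron([1, 0], weights=[1, -1], threshold=0)
inhibited = mp_neuron([1, 1], weights=[1, -1], threshold=0)
```

Additions and subtractions of the kind the Hadamard transform needs map onto the positive and negative weights, with the threshold providing the rounding step.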

Neural implementation example

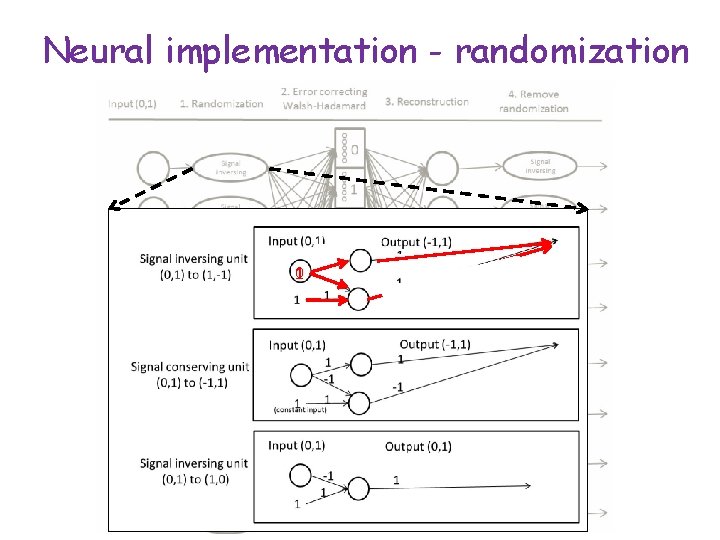

Neural implementation - randomization

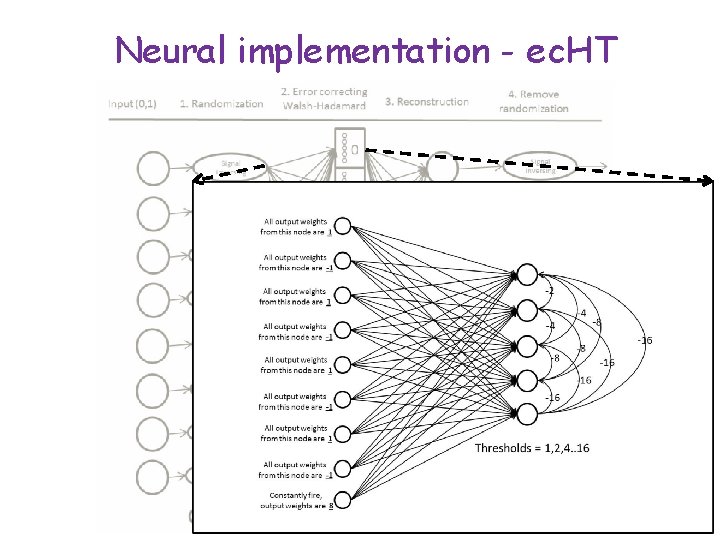

Neural implementation - ecHT

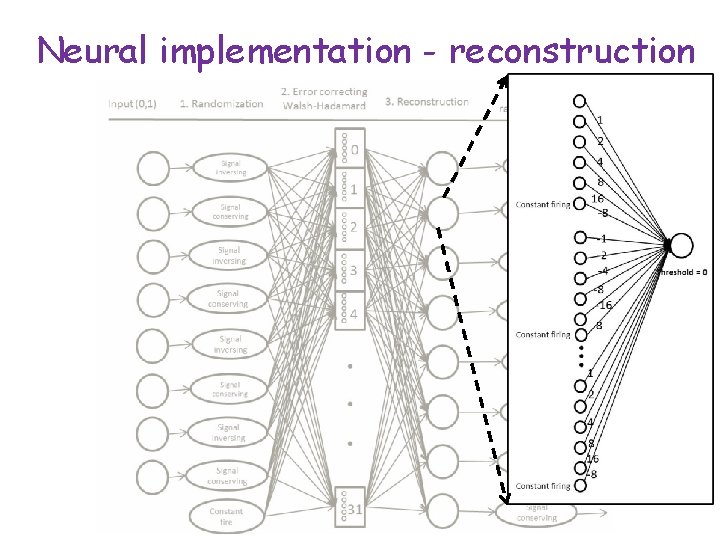

Neural implementation - reconstruction

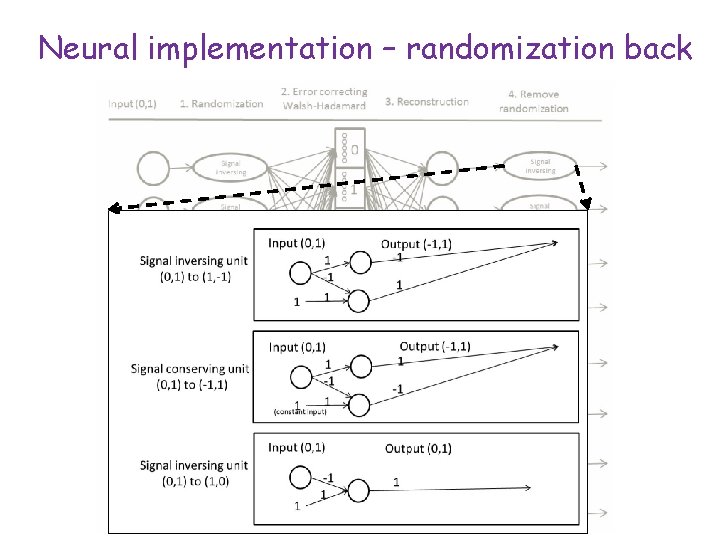

Neural implementation – randomization back

Summary
• A holographic error correcting memory using Hadamard matrices was presented
• Initial randomization increases entropy and avoids risky coefficients (good for coefficient erasure)
• A neural implementation using the simplest neuron model was presented as a proof of feasibility
• More complicated neurons should be considered and may simplify the net
• The binary representation and the well ordered form of the net serve only as an example. The reality is probably more complicated…

Applications
• Communication (sending images)
• Data storage (saving images)

Future research
• Actual storage part of the system
• Different representations
• A less ordered network

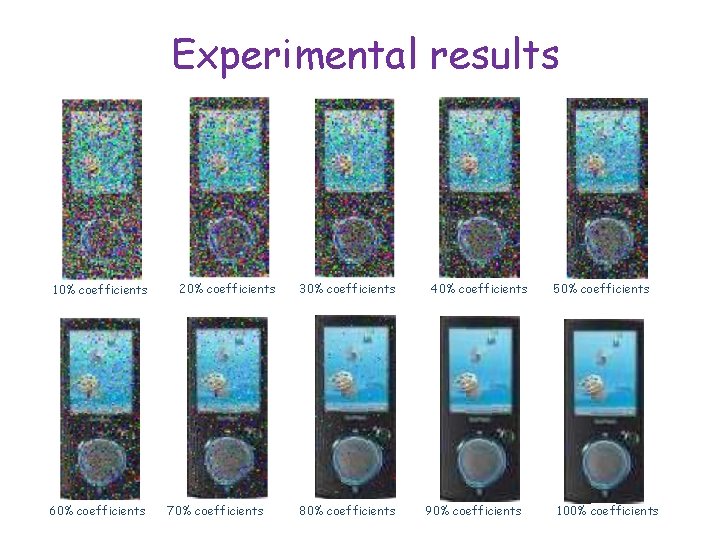

Experimental results
Figure panels: 10%, 20%, 30%, 40%, 50%, 60%, 70%, 80%, 90% and 100% of the coefficients

Thank you!
